
Physics I
Entropy: Reversibility, Disorder, and Information
Prof. WAN, Xin
xinwan@zju.edu.cn
http://zimp.zju.edu.cn/~xinwan/

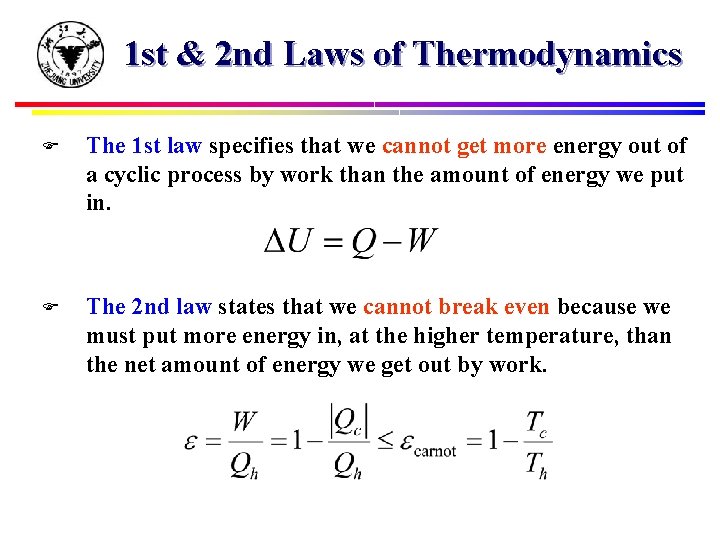

1st & 2nd Laws of Thermodynamics
- The 1st law specifies that we cannot get more energy out of a cyclic process by work than the amount of energy we put in.
- The 2nd law states that we cannot break even, because we must put more energy in, at the higher temperature, than the net amount of energy we get out by work.

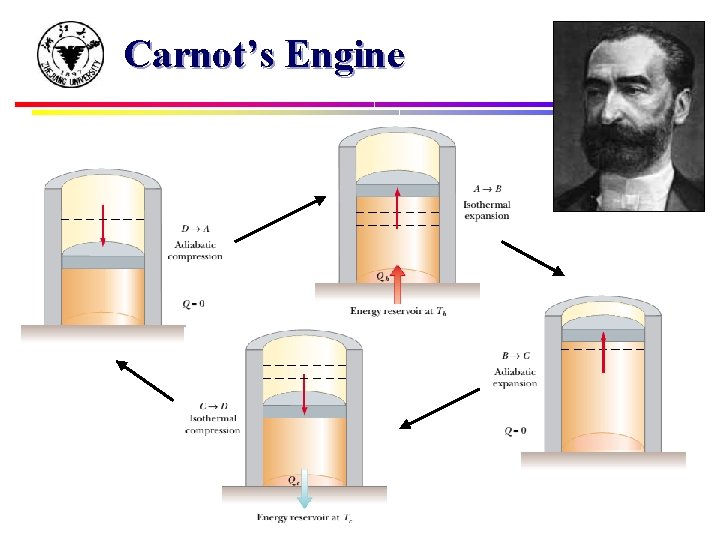

Carnot’s Engine

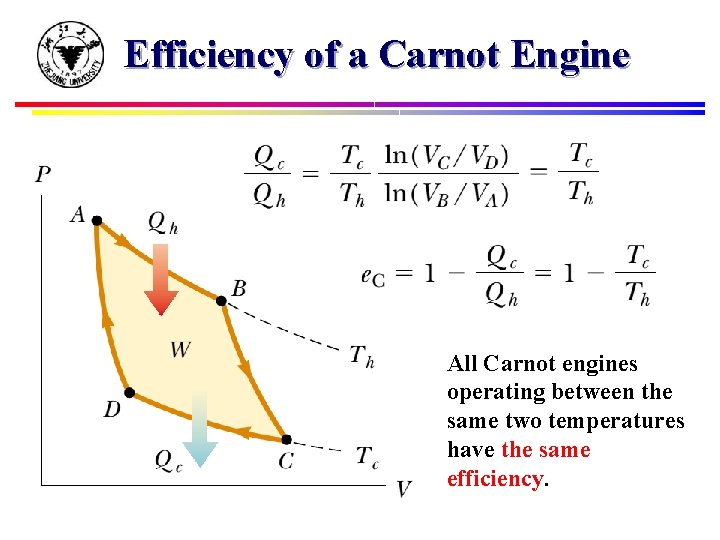

Efficiency of a Carnot Engine
All Carnot engines operating between the same two temperatures have the same efficiency.
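A minimal sketch of why only the two temperatures matter, assuming the standard Carnot result $e = 1 - T_c/T_h$ with absolute temperatures in kelvin. The function name `carnot_efficiency` is hypothetical, chosen for illustration.

```python
def carnot_efficiency(t_hot: float, t_cold: float) -> float:
    """Efficiency e = 1 - Tc/Th of a Carnot engine (temperatures in kelvin)."""
    if not 0 < t_cold < t_hot:
        raise ValueError("need 0 < t_cold < t_hot, in kelvin")
    return 1.0 - t_cold / t_hot

# Nothing about the working substance enters: every Carnot engine
# running between 500 K and 300 K has the same efficiency.
print(carnot_efficiency(500.0, 300.0))
```

Raising the hot-reservoir temperature (or lowering the cold one) is the only way to raise this ceiling.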

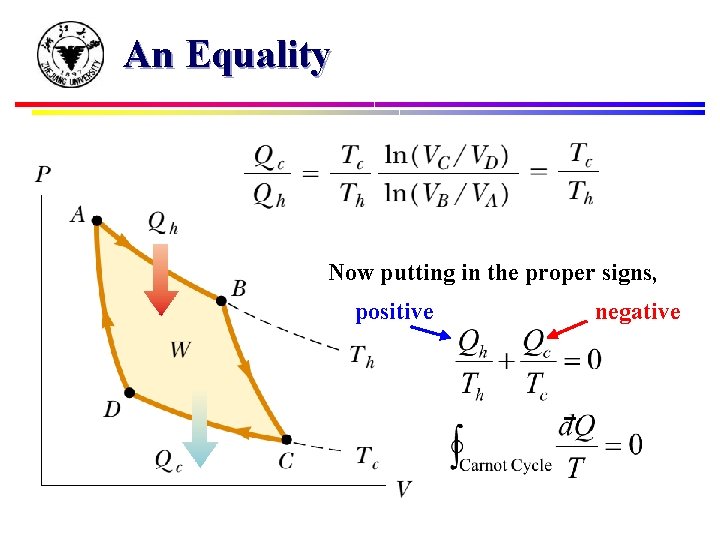

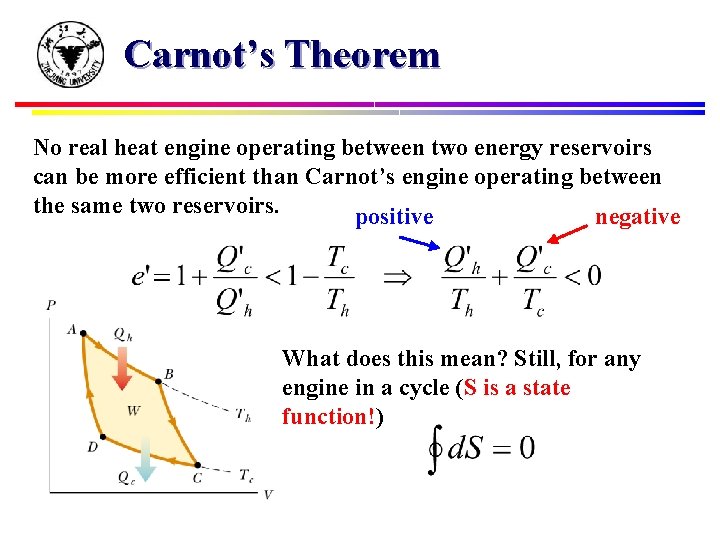

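An Equality
Now, putting in the proper signs ($Q_h$ positive for heat absorbed from the hot reservoir, $Q_c$ negative for heat rejected to the cold reservoir), the Carnot relation $|Q_c|/Q_h = T_c/T_h$ becomes
$$\frac{Q_h}{T_h} + \frac{Q_c}{T_c} = 0.$$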
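As a numerical check of this equality, the sketch below runs one Carnot cycle of an ideal gas. Assumptions: a monatomic ideal gas ($\gamma = 5/3$), illustrative temperatures and volumes, and a hypothetical helper `carnot_heats`; the adiabats are handled with $TV^{\gamma-1} = \text{const}$.

```python
import math

R = 8.314  # gas constant, J/(mol K)

def carnot_heats(n, t_hot, t_cold, v_a, v_b, gamma=5 / 3):
    """Return (Q_h, Q_c) for an ideal-gas Carnot cycle a -> b -> c -> d -> a.

    Sign convention of the slide: heat flowing into the gas is positive.
    """
    # Along an adiabat T V^(gamma-1) is constant, so both cold-side volumes
    # are the hot-side volumes scaled by the same factor.
    scale = (t_hot / t_cold) ** (1.0 / (gamma - 1.0))
    v_c, v_d = v_b * scale, v_a * scale
    q_h = n * R * t_hot * math.log(v_b / v_a)   # isothermal expansion at T_h (> 0)
    q_c = n * R * t_cold * math.log(v_d / v_c)  # isothermal compression at T_c (< 0)
    return q_h, q_c

q_h, q_c = carnot_heats(n=1.0, t_hot=500.0, t_cold=300.0, v_a=0.01, v_b=0.02)
print(q_h / 500.0 + q_c / 300.0)  # zero up to rounding error
```

Because $V_d/V_c = V_a/V_b$, the two logarithms cancel exactly, which is the equality above.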

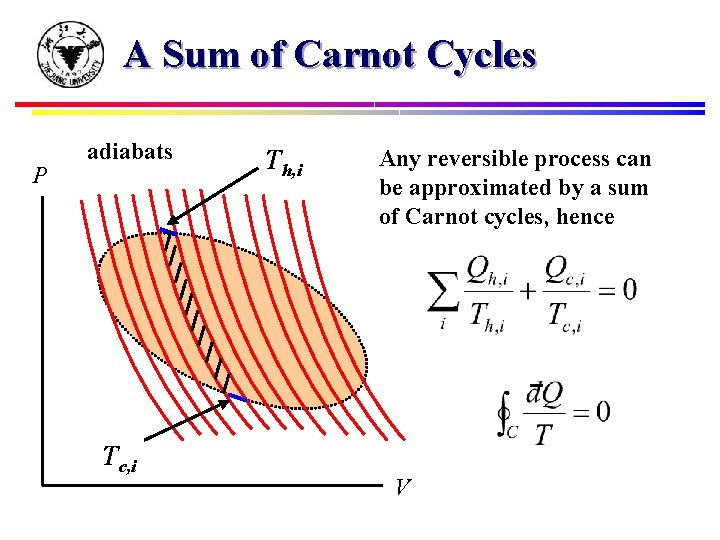

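A Sum of Carnot Cycles
[Figure: p-V diagram in which a reversible cycle is approximated by many small Carnot cycles, each bounded by two adiabats and by isotherms at $T_{h,i}$ and $T_{c,i}$.]
Any reversible cycle can be approximated by a sum of Carnot cycles, hence
$$\sum_i \left(\frac{Q_{h,i}}{T_{h,i}} + \frac{Q_{c,i}}{T_{c,i}}\right) = 0 \quad\longrightarrow\quad \oint \frac{dQ}{T} = 0.$$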
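To see this hold on a reversible cycle that is not itself a Carnot cycle, the sketch below integrates numerically around a rectangle in the p-V plane. Assumptions: 1 mol of monatomic ideal gas, illustrative corner pressures and volumes, midpoint discretization, and a hypothetical helper `leg_integrals`; each step uses the first law $dQ = dE_{int} + p\,dV$ with $E_{int} = nC_VT$.

```python
R = 8.314        # gas constant, J/(mol K)
N_MOL = 1.0      # amount of gas (assumption)
CV = 1.5 * R     # molar C_V of a monatomic ideal gas (assumption)

def leg_integrals(p0, v0, p1, v1, steps=20000):
    """Integrate dQ and dQ/T along a straight reversible leg in the p-V plane."""
    q = q_over_t = 0.0
    dp, dv = (p1 - p0) / steps, (v1 - v0) / steps
    for i in range(steps):
        pa, va = p0 + i * dp, v0 + i * dv   # start of this small step
        pb, vb = pa + dp, va + dv           # end of this small step
        ta, tb = pa * va / (N_MOL * R), pb * vb / (N_MOL * R)  # ideal-gas law
        dq = N_MOL * CV * (tb - ta) + 0.5 * (pa + pb) * dv     # dQ = dE_int + p dV
        q += dq
        q_over_t += dq / (0.5 * (ta + tb))
    return q, q_over_t

# Rectangular cycle traversed clockwise (an engine); p in Pa, V in m^3
corners = [(2e5, 0.01), (2e5, 0.02), (1e5, 0.02), (1e5, 0.01)]
q_cycle = s_cycle = 0.0
for (pa, va), (pb, vb) in zip(corners, corners[1:] + corners[:1]):
    q, s = leg_integrals(pa, va, pb, vb)
    q_cycle += q
    s_cycle += s
print(f"net heat = {q_cycle:.3f} J")     # equals the net work done by the gas
print(f"sum dQ/T = {s_cycle:.2e} J/K")   # zero up to discretization error
```

The net heat is nonzero (it equals the enclosed area, $(p_2 - p_1)(V_2 - V_1)$), while $\oint dQ/T$ vanishes.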

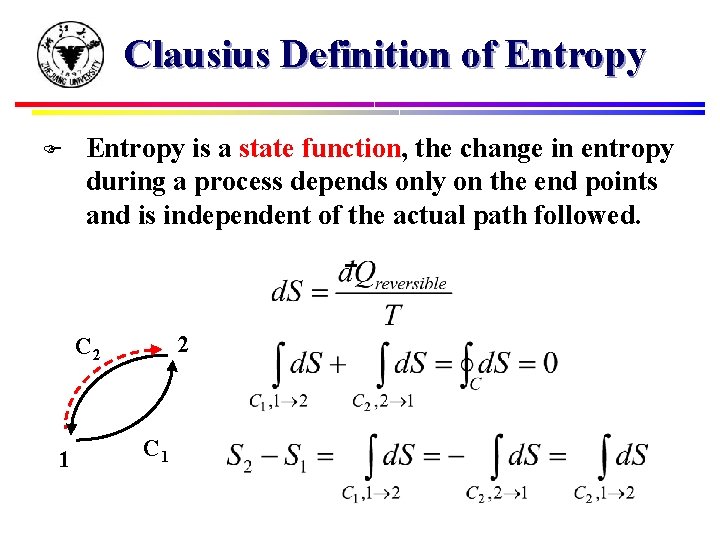

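Clausius Definition of Entropy
$$dS = \frac{dQ_r}{T}, \qquad \Delta S = S_2 - S_1 = \int_1^2 \frac{dQ_r}{T},$$
where $dQ_r$ is the heat exchanged along a reversible path.
- Entropy is a state function: the change in entropy during a process depends only on the end points and is independent of the actual path followed.
[Figure: two reversible paths, $C_1$ and $C_2$, from state 1 to state 2; the integral of $dQ_r/T$ is the same along both.]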
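A small worked example of path independence, assuming 1 mol of monatomic ideal gas and two hypothetical two-leg reversible paths between the same end states: $C_1$ expands isothermally at $T_1$ and then heats at constant $V_2$; $C_2$ heats at constant $V_1$ and then expands isothermally at $T_2$. The helper names are illustrative only.

```python
import math

R = 8.314              # gas constant, J/(mol K)
n, cv = 1.0, 1.5 * R   # 1 mol of monatomic ideal gas (assumption)

def isothermal(t, v_from, v_to):
    """Reversible isothermal leg: returns (Q, integral of dQ/T)."""
    q = n * R * t * math.log(v_to / v_from)
    return q, q / t

def isochoric(t_from, t_to):
    """Reversible constant-volume leg: returns (Q, integral of dQ/T)."""
    return n * cv * (t_to - t_from), n * cv * math.log(t_to / t_from)

T1, V1, T2, V2 = 300.0, 0.010, 600.0, 0.020
c1 = [isothermal(T1, V1, V2), isochoric(T1, T2)]   # path C1
c2 = [isochoric(T1, T2), isothermal(T2, V1, V2)]   # path C2
q1, s1 = map(sum, zip(*c1))
q2, s2 = map(sum, zip(*c2))
print(f"Q:  C1 = {q1:.1f} J, C2 = {q2:.1f} J")          # path dependent
print(f"dS: C1 = {s1:.4f} J/K, C2 = {s2:.4f} J/K")      # the same
```

The heat differs by $nR(T_2 - T_1)\ln(V_2/V_1)$, yet both paths give the same $\Delta S$.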

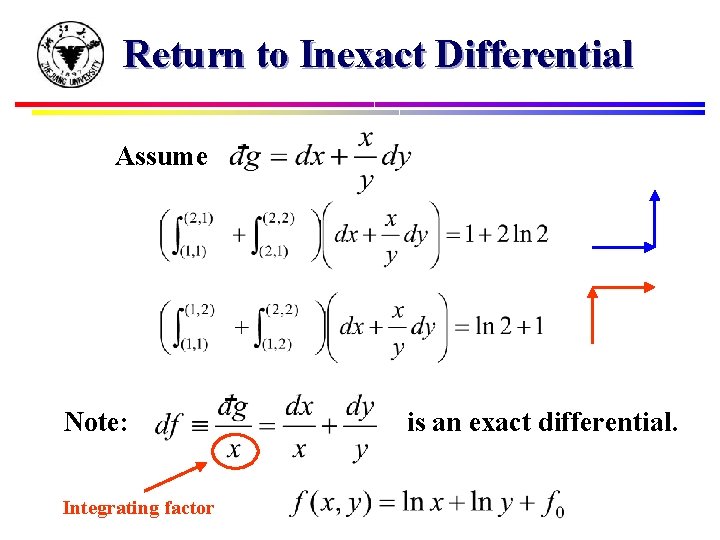

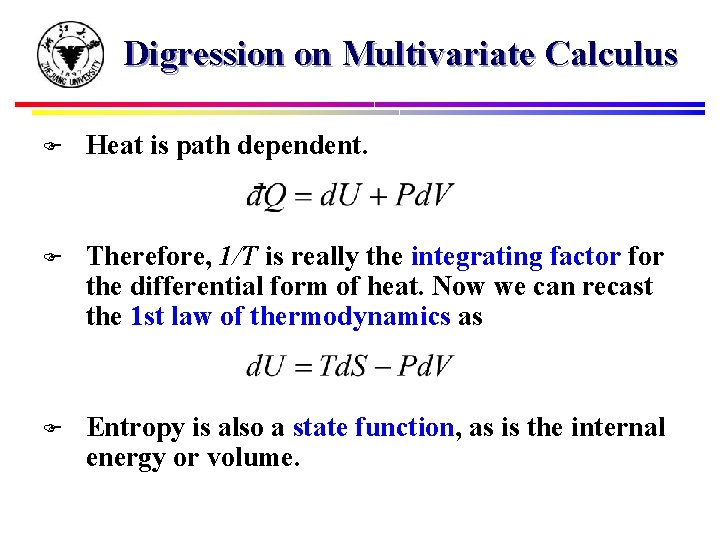

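Return to Inexact Differential
Assume an inexact differential, i.e., one that is not the differential of any function, so its integral depends on the path. Note: multiplying it by a suitable integrating factor turns it into an exact differential.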

Digression on Multivariate Calculus
- Heat is path dependent: $dQ$ is an inexact differential.
- Therefore, $1/T$ is really the integrating factor for the differential form of heat: $dS = dQ/T$ is exact. Now we can recast the 1st law of thermodynamics as
$$dE_{int} = T\,dS - p\,dV.$$
- Entropy is also a state function, as are the internal energy and the volume.
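The integrating-factor claim can be checked with the standard exactness test: a form $M\,dT + N\,dV$ is exact iff $\partial M/\partial V = \partial N/\partial T$. For 1 mol of ideal gas, $dQ = C_V\,dT + (RT/V)\,dV$. The sketch below estimates the mixed partials by central differences; the monatomic $C_V$, the sample point, and the function names are assumptions for illustration.

```python
R = 8.314
CV = 1.5 * R  # molar C_V of a monatomic ideal gas (assumption)

def heat_form(t, v):
    """Coefficients (M, N) of dQ = M dT + N dV for 1 mol of ideal gas."""
    return CV, R * t / v

def entropy_form(t, v):
    """The same form multiplied by the integrating factor 1/T."""
    m, n = heat_form(t, v)
    return m / t, n / t

def exactness_gap(form, t, v, h=1e-6):
    """Central-difference estimate of dM/dV - dN/dT at (t, v); zero if exact."""
    dm_dv = (form(t, v + h)[0] - form(t, v - h)[0]) / (2 * h)
    dn_dt = (form(t + h, v)[1] - form(t - h, v)[1]) / (2 * h)
    return dm_dv - dn_dt

print(exactness_gap(heat_form, 300.0, 0.02))     # about -R/V: dQ is inexact
print(exactness_gap(entropy_form, 300.0, 0.02))  # about 0: dQ/T = dS is exact
```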

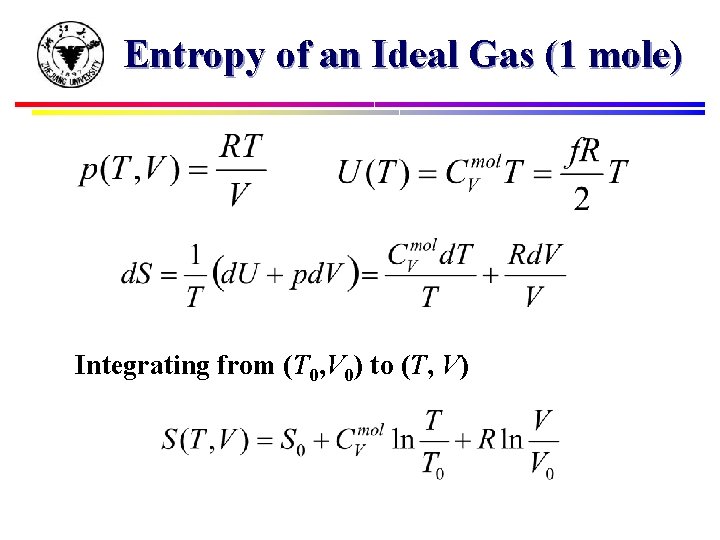

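Entropy of an Ideal Gas (1 mole)
For one mole with constant $C_V$, $dS = \dfrac{dE_{int} + p\,dV}{T} = C_V\dfrac{dT}{T} + R\dfrac{dV}{V}$. Integrating from $(T_0, V_0)$ to $(T, V)$:
$$S(T, V) = S(T_0, V_0) + C_V\ln\frac{T}{T_0} + R\ln\frac{V}{V_0}.$$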
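A direct transcription of the integrated formula, assuming constant molar $C_V$ (defaulting to the monatomic value $\tfrac{3}{2}R$); the function name is hypothetical.

```python
import math

R = 8.314  # gas constant, J/(mol K)

def delta_s_ideal_gas(t0, v0, t, v, cv=1.5 * R):
    """S(T, V) - S(T0, V0) for 1 mol of ideal gas with constant molar C_V."""
    return cv * math.log(t / t0) + R * math.log(v / v0)

# Doubling both T and V of 1 mol of monatomic gas: (3/2 + 1) R ln 2
print(delta_s_ideal_gas(300.0, 0.010, 600.0, 0.020))
# Along a reversible adiabat (T V^(2/3) = const) the two terms cancel
print(delta_s_ideal_gas(300.0, 0.010, 300.0 * 0.5 ** (2 / 3), 0.020))
```

The second call illustrates that a reversible adiabatic process is isentropic.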

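Carnot’s Theorem
No real heat engine operating between two energy reservoirs can be more efficient than Carnot’s engine operating between the same two reservoirs:
$$e = 1 + \frac{Q_c}{Q_h} \le 1 - \frac{T_c}{T_h} \quad\Longrightarrow\quad \frac{Q_h}{T_h} + \frac{Q_c}{T_c} \le 0,$$
with $Q_h$ positive and $Q_c$ negative. What does this mean? Still, for any engine in a cycle, $\oint dS = 0$ ($S$ is a state function!).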

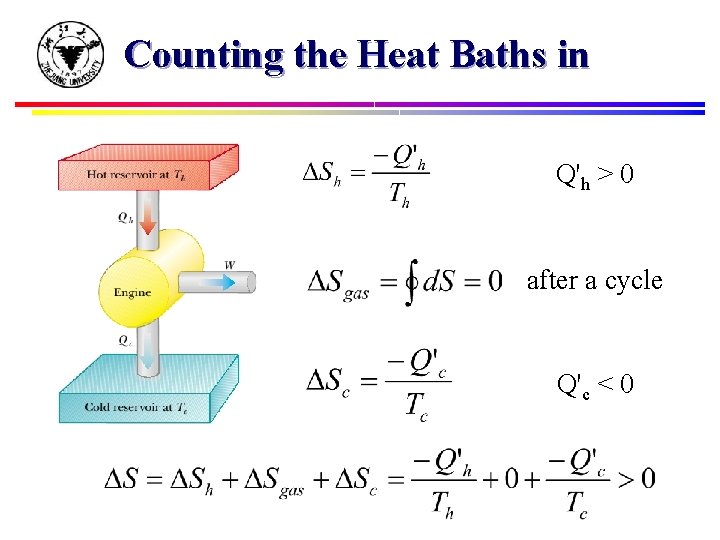

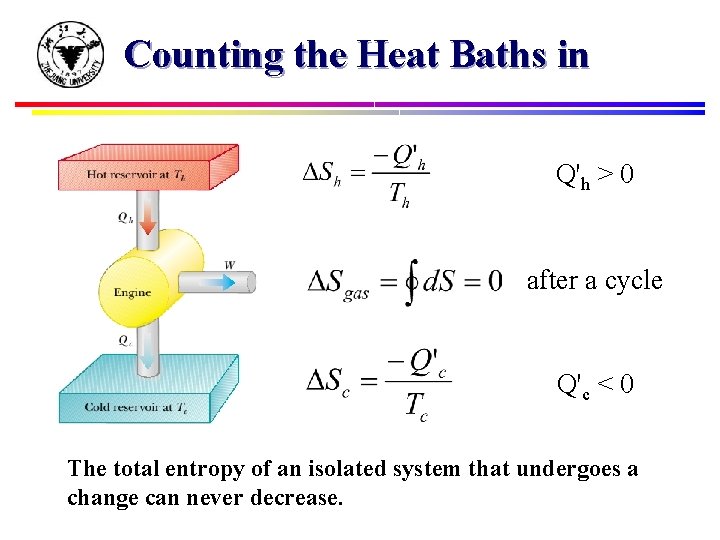


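Counting the Heat Baths in
For a real engine that absorbs $Q'_h > 0$ from the hot bath and exchanges $Q'_c < 0$ with the cold bath, after a cycle
$$\Delta S_{total} = \Delta S_{engine} + \Delta S_h + \Delta S_c = 0 - \frac{Q'_h}{T_h} - \frac{Q'_c}{T_c} \ge 0.$$
The total entropy of an isolated system that undergoes a change can never decrease.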
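A sketch of this bookkeeping, assuming illustrative numbers: 1000 J taken from a 500 K bath, with either a Carnot engine or a hypothetical real engine that delivers only 300 J of work. The function name is made up for illustration.

```python
def universe_entropy_change(q_h, q_c, t_h, t_c):
    """Entropy change of engine + both baths after one full cycle.

    q_h > 0: heat the engine absorbs from the hot bath at t_h.
    q_c < 0: heat the engine exchanges with the cold bath at t_c.
    """
    ds_engine = 0.0        # S is a state function and the cycle closes
    ds_hot = -q_h / t_h    # the hot bath loses q_h
    ds_cold = -q_c / t_c   # the cold bath gains |q_c|
    return ds_engine + ds_hot + ds_cold

# Carnot engine between 500 K and 300 K: Q_c = -(T_c / T_h) Q_h
print(universe_entropy_change(1000.0, -600.0, 500.0, 300.0))  # 0: reversible
# Real engine: only 300 J of work, so 700 J is rejected
print(universe_entropy_change(1000.0, -700.0, 500.0, 300.0))  # > 0: irreversible
```

The engine's own entropy never changes over a cycle; any irreversibility shows up in the baths.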

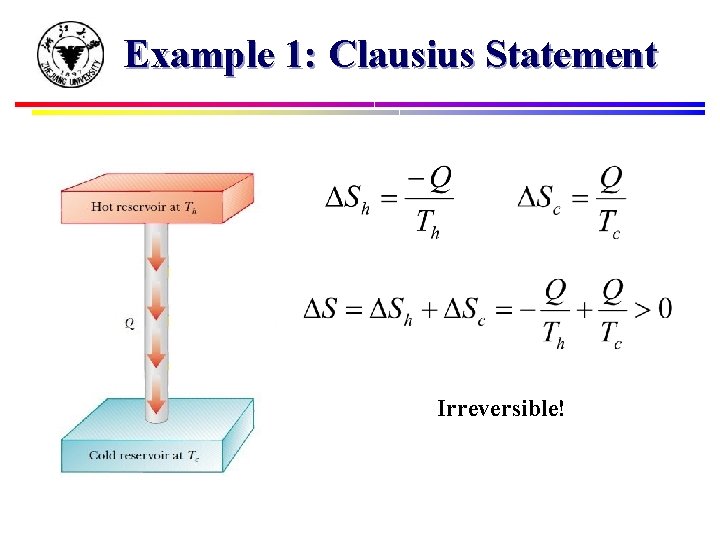

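Example 1: Clausius Statement
Heat $Q$ flows from a hot reservoir at $T_h$ directly into a cold reservoir at $T_c$:
$$\Delta S = -\frac{Q}{T_h} + \frac{Q}{T_c} > 0.$$
The reverse flow, from cold to hot with no other change, would give $\Delta S < 0$. Irreversible!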
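A one-line worked example of the two directions of heat flow, assuming illustrative values $Q = 100$ J between reservoirs at 400 K and 300 K; the function name is hypothetical.

```python
def heat_flow_entropy(q, t_from, t_to):
    """Total entropy change of two reservoirs when heat q > 0 leaves the one
    at t_from and enters the one at t_to (kelvin), with no work done."""
    return -q / t_from + q / t_to

print(heat_flow_entropy(100.0, 400.0, 300.0))  # hot -> cold: > 0, happens spontaneously
print(heat_flow_entropy(100.0, 300.0, 400.0))  # cold -> hot alone: < 0, forbidden
```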

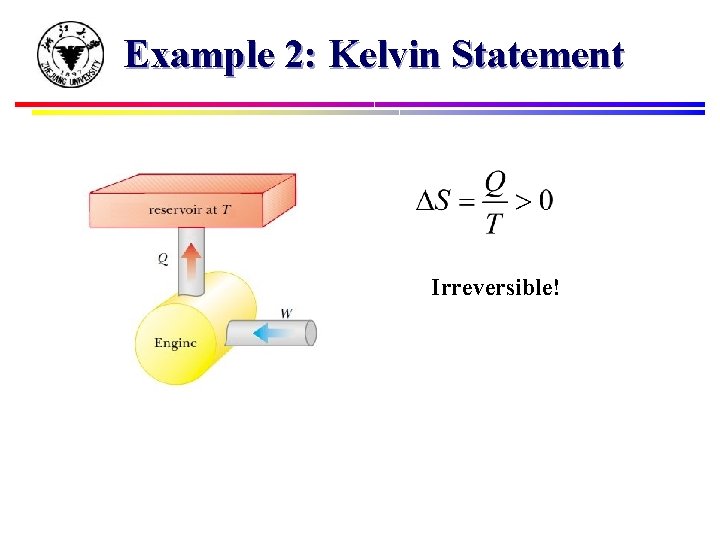

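Example 2: Kelvin Statement
Work $W$ is converted entirely into heat $Q = W$ delivered to a single reservoir at temperature $T$ (e.g., by friction): $\Delta S = Q/T > 0$. The reverse, a cycle that absorbs heat $Q$ from a single reservoir and converts it entirely into work, would give $\Delta S = -Q/T < 0$. Irreversible!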
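The same arithmetic for a single reservoir, assuming illustrative values (500 J at 300 K) and a hypothetical function name; the cyclic device returns to its initial state, so only the reservoir's entropy changes.

```python
def universe_entropy_single_reservoir(q_into_reservoir, t):
    """Entropy change of the universe for a cyclic device that exchanges heat
    with one reservoir at temperature t (K); the device contributes nothing."""
    return q_into_reservoir / t

# Friction: 500 J of work dumped as heat into a 300 K reservoir
print(universe_entropy_single_reservoir(500.0, 300.0))   # > 0, allowed
# A "perfect" engine: 500 J drawn from the reservoir, all turned into work
print(universe_entropy_single_reservoir(-500.0, 300.0))  # < 0, forbidden
```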

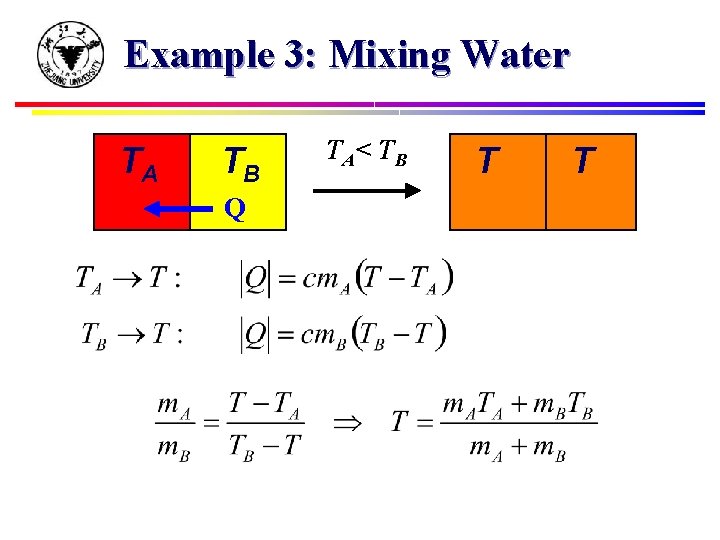

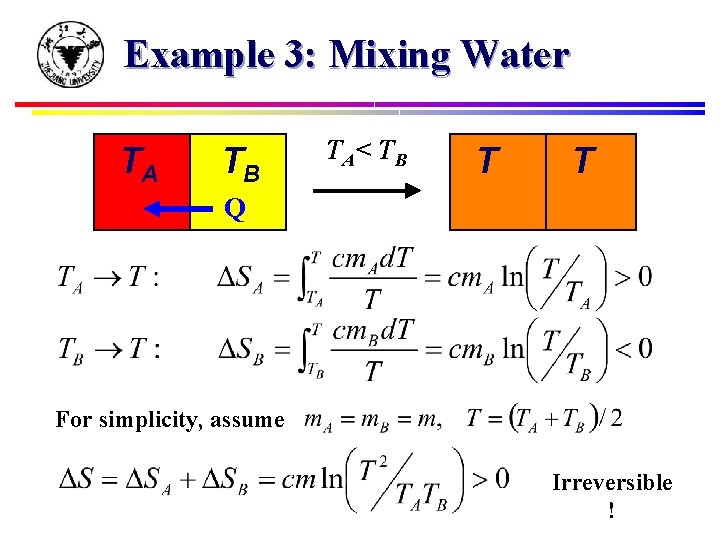


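Example 3: Mixing Water
[Figure: water samples A at $T_A$ and B at $T_B$, with $T_A < T_B$; heat $Q$ flows from B to A.]
For simplicity, assume equal masses $m$ of water with specific heat $c$, so the final temperature is $T_f = (T_A + T_B)/2$ and
$$\Delta S = mc\ln\frac{T_f}{T_A} + mc\ln\frac{T_f}{T_B} = mc\ln\frac{(T_A + T_B)^2}{4T_AT_B} > 0.$$
Irreversible!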
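A worked number for this example, assuming equal masses of 1 kg, water at 20 °C and 80 °C, and $c = 4186$ J/(kg K); the function name is hypothetical. Each sample's term is computed along a reversible constant-pressure heating or cooling path.

```python
import math

C_WATER = 4186.0  # specific heat of water, J/(kg K) (assumed value)

def mixing_entropy(m, t_a, t_b, c=C_WATER):
    """Entropy change when equal masses m of water at t_a and t_b (kelvin)
    reach the common temperature (t_a + t_b) / 2."""
    t_f = 0.5 * (t_a + t_b)
    ds_a = m * c * math.log(t_f / t_a)  # colder sample warms up: > 0
    ds_b = m * c * math.log(t_f / t_b)  # warmer sample cools down: < 0
    return ds_a + ds_b

print(mixing_entropy(1.0, 293.15, 353.15))  # 1 kg at 20 C mixed with 1 kg at 80 C
```

The gain of the colder sample always outweighs the loss of the warmer one, since $(T_A + T_B)^2 \ge 4T_AT_B$.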

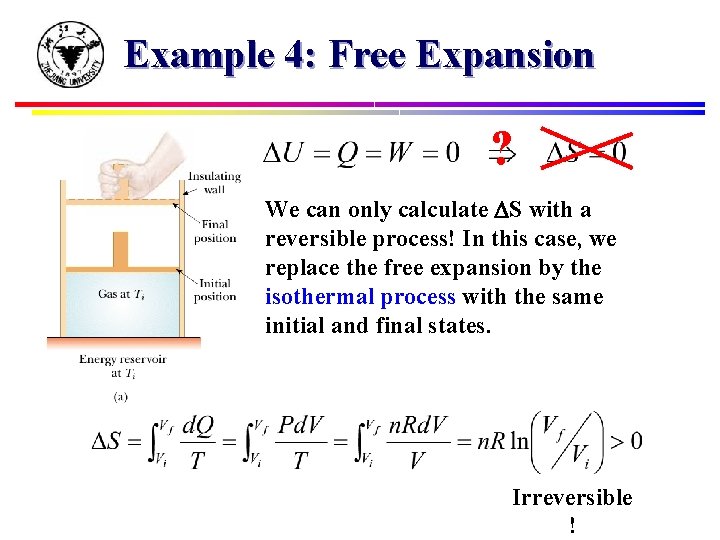

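Example 4: Free Expansion
$\Delta S = ?$ We can only calculate $\Delta S$ with a reversible process! In this case, we replace the free expansion by the isothermal process with the same initial and final states. Along that path $dE_{int} = 0$, so $dQ = p\,dV$ and
$$\Delta S = \int_i^f \frac{dQ}{T} = \frac{1}{T}\int_{V_i}^{V_f} p\,dV = nR\ln\frac{V_f}{V_i} > 0.$$
Irreversible!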
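A minimal sketch of the replacement-path calculation, assuming an ideal gas (so $T$ is unchanged in the free expansion); the function name is hypothetical.

```python
import math

R = 8.314  # gas constant, J/(mol K)

def free_expansion_entropy(n, v_i, v_f):
    """Delta S of n mol of ideal gas expanding freely from v_i to v_f.

    Evaluated along the reversible isothermal path with the same end states:
    Delta S = Q / T = n R ln(v_f / v_i).
    """
    return n * R * math.log(v_f / v_i)

print(free_expansion_entropy(1.0, 1.0, 2.0))  # doubling the volume: R ln 2
```

No heat actually flows in the free expansion; the reversible path is only a device for evaluating the state function $S$.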

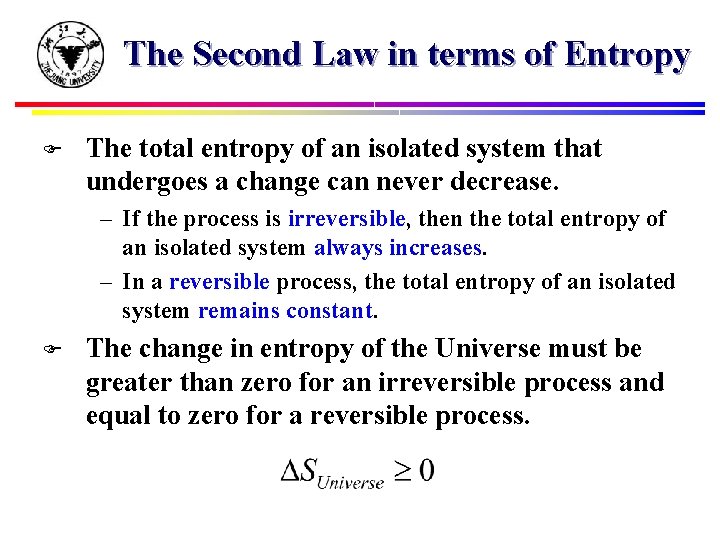

The Second Law in terms of Entropy
- The total entropy of an isolated system that undergoes a change can never decrease.
  - If the process is irreversible, then the total entropy of an isolated system always increases.
  - In a reversible process, the total entropy of an isolated system remains constant.
- The change in entropy of the Universe must be greater than zero for an irreversible process and equal to zero for a reversible process.

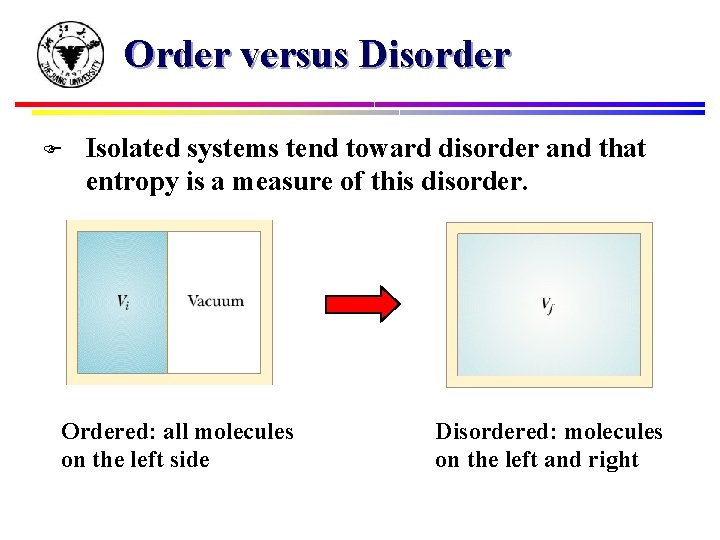

Order versus Disorder
- Isolated systems tend toward disorder, and entropy is a measure of this disorder.
  - Ordered: all molecules on the left side.
  - Disordered: molecules on the left and right.

Macrostate versus Microstate
- Each of the microstates is equally probable.
- We expect ordered macrostates to be very unlikely, because random motions tend to distribute molecules uniformly. There are many more disordered microstates than ordered microstates.
- A macrostate corresponding to a large number of equivalent disordered microstates is much more probable than a macrostate corresponding to a small number of equivalent ordered microstates. How much more probable?
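One way to answer "how much more probable?", assuming a toy model in which each of $N$ molecules is independently in the left or right half, so all $2^N$ microstates are equally likely and the macrostate "$n$ on the left" has $\binom{N}{n}$ microstates. The function name is hypothetical.

```python
from math import comb

def macrostate_probability(n_total, n_left):
    """Probability that exactly n_left of n_total molecules are in the left half,
    with all 2**n_total microstates equally probable."""
    return comb(n_total, n_left) / 2 ** n_total

for n in (4, 10, 100):
    ordered = macrostate_probability(n, n)     # all molecules on the left
    mixed = macrostate_probability(n, n // 2)  # half on each side
    print(n, ordered, mixed, mixed / ordered)  # the ratio is comb(n, n // 2)
```

Already for 100 molecules the evenly mixed macrostate is about $10^{29}$ times more probable than the fully ordered one; for a mole of gas the ratio is beyond astronomical.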

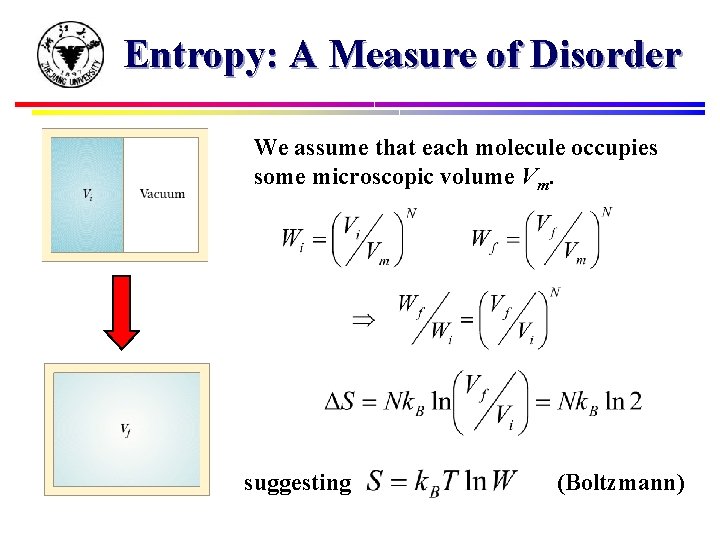

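Entropy: A Measure of Disorder
We assume that each molecule occupies some microscopic volume $V_m$, so the number of ways to place $N$ molecules in a volume $V$ is $W = (V/V_m)^N$. For a free expansion from $V_i$ to $V_f$,
$$\Delta S = nR\ln\frac{V_f}{V_i} = Nk_B\ln\frac{V_f}{V_i} = k_B\ln\frac{W_f}{W_i},$$
suggesting (Boltzmann)
$$S = k_B\ln W.$$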
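A numerical check that $S = k_B\ln W$ with $W = (V/V_m)^N$ reproduces the thermodynamic free-expansion result $nR\ln(V_f/V_i)$ for one mole, and that the unknown cell volume $V_m$ drops out of the difference. The value of `v_m` below is a hypothetical placeholder; $\ln W$ is computed as $N\ln(V/V_m)$ because $W$ itself would overflow any float.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant, J/K (exact SI value)
N_A = 6.02214076e23  # Avogadro constant, 1/mol (exact SI value)

def boltzmann_entropy(n_molecules, volume, v_m):
    """S = k ln W with W = (V / V_m)**N, using ln W = N ln(V / V_m)."""
    return K_B * n_molecules * math.log(volume / v_m)

v_m = 1e-30  # hypothetical microscopic cell volume per molecule, m^3
ds = boltzmann_entropy(N_A, 2.0, v_m) - boltzmann_entropy(N_A, 1.0, v_m)
print(ds, K_B * N_A * math.log(2))  # both equal R ln 2, about 5.76 J/K
```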

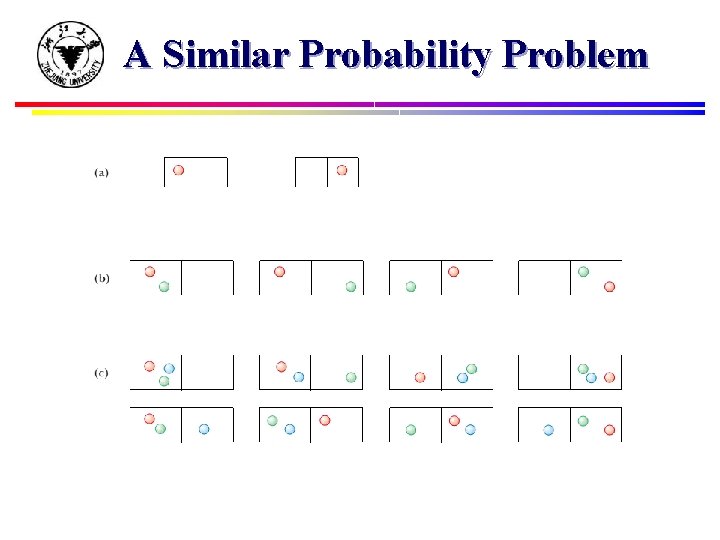

A Similar Probability Problem

Let’s Play Cards
- Imagine shuffling a deck of playing cards:
  - Systems have a natural tendency to become more and more disordered.
- Disorder almost always increases because disordered states hugely outnumber highly ordered states, so the system inevitably settles down in one of the more disordered states.
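To put a number on "hugely outnumber": a fair shuffle picks one of $52!$ orderings with equal probability, so any one particular ordering, such as the factory order, has probability $1/52!$. The sketch below assumes cards are labeled 0 to 51 in factory order; the helper name is hypothetical.

```python
import math
import random

def ordered_fraction(n_cards):
    """Probability that a uniformly random shuffle of n_cards distinct cards
    comes out in one particular ('ordered') sequence."""
    return 1 / math.factorial(n_cards)

deck = list(range(52))       # 0..51 stand for the cards of a new, ordered deck
random.shuffle(deck)         # a fair shuffle picks one of 52! orderings
print(deck == sorted(deck))  # almost surely False
print(ordered_fraction(52))  # about 1.2e-68
```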

Computers are useless. They can only give us answers. ---- Pablo Picasso

Information and Entropy F (1927) Bell Labs, Ralph Hartley – Measure for information in a message – Logarithm: 8 bit = 28 = 256 different numbers F (1940) Bell Labs, Claude Shannon – “A mathematical theory of communication” – Probability of a particular message But there is no information. You are not winning the lottery.

Information and Entropy F (1927) Bell Labs, Ralph Hartley – Measure for information in a message – Logarithm: 8 bit = 28 = 256 different numbers F (1940) Bell Labs, Claude Shannon – “A mathematical theory of communication” – Probability of a particular message Now that’s something. Okay, you are going to win the lottery.

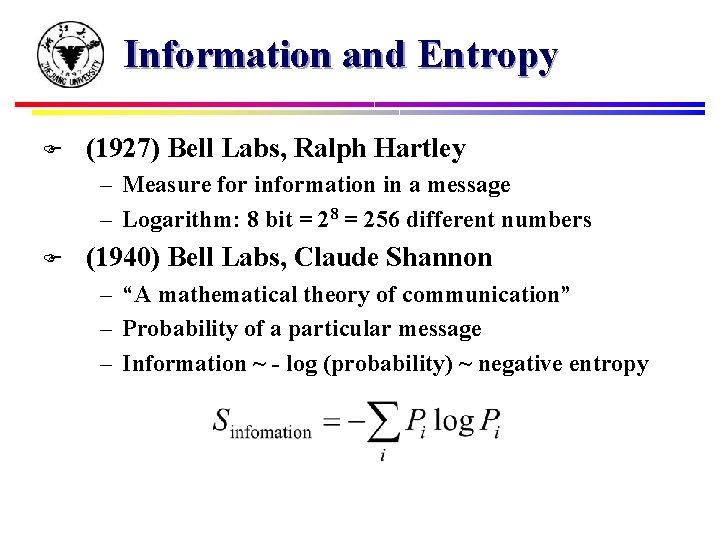

Information and Entropy F (1927) Bell Labs, Ralph Hartley – Measure for information in a message – Logarithm: 8 bit = 28 = 256 different numbers F (1940) Bell Labs, Claude Shannon – “A mathematical theory of communication” – Probability of a particular message – Information ~ - log (probability) ~ negative entropy
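A small sketch of the two measures on this slide: Hartley's $\log_2$ of the number of equally likely messages, and Shannon's information content $-\log_2 p$ of a message with probability $p$, together with its average $H = -\sum p\log_2 p$. The lottery odds `p_win` are a made-up illustrative value, and the function names are hypothetical.

```python
import math

def hartley_bits(n_messages):
    """Hartley: bits needed to pick one of n equally likely messages."""
    return math.log2(n_messages)

def surprisal_bits(p):
    """Shannon information content of a message with probability p."""
    return -math.log2(p)

def shannon_entropy_bits(probs):
    """Average information H = -sum p log2 p (terms with p = 0 contribute 0)."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

p_win = 1e-7  # hypothetical odds of winning the lottery
print(hartley_bits(256))          # 8 bits <-> 2**8 = 256 different numbers
print(surprisal_bits(1 - p_win))  # "you are not winning": almost 0 bits
print(surprisal_bits(p_win))      # "you are going to win": about 23 bits
print(shannon_entropy_bits([0.5, 0.5]))  # a fair coin: 1 bit
```

The expected message carries almost no information; the improbable one carries a lot.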

It is already in use under that name. … and besides, it will give you great edge in debates because nobody really knows what entropy is anyway. ---- John von Neumann

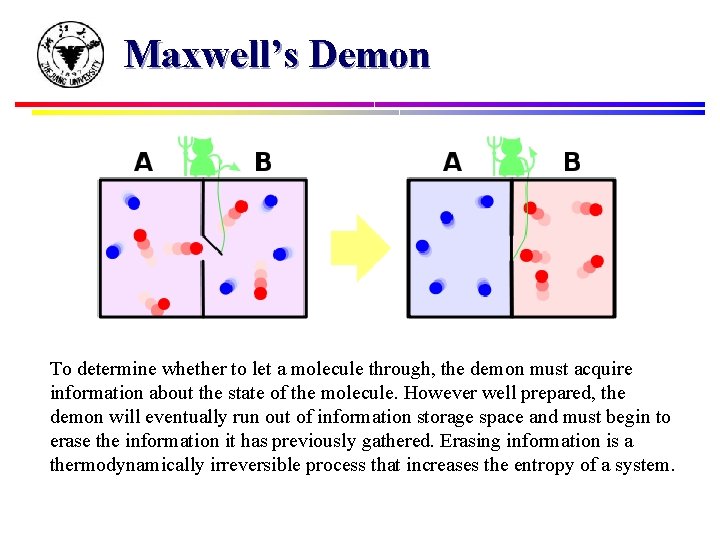

Maxwell’s Demon
To determine whether to let a molecule through, the demon must acquire information about the state of the molecule. However well prepared, the demon will eventually run out of information storage space and must begin to erase the information it has previously gathered. Erasing information is a thermodynamically irreversible process that increases the entropy of a system.

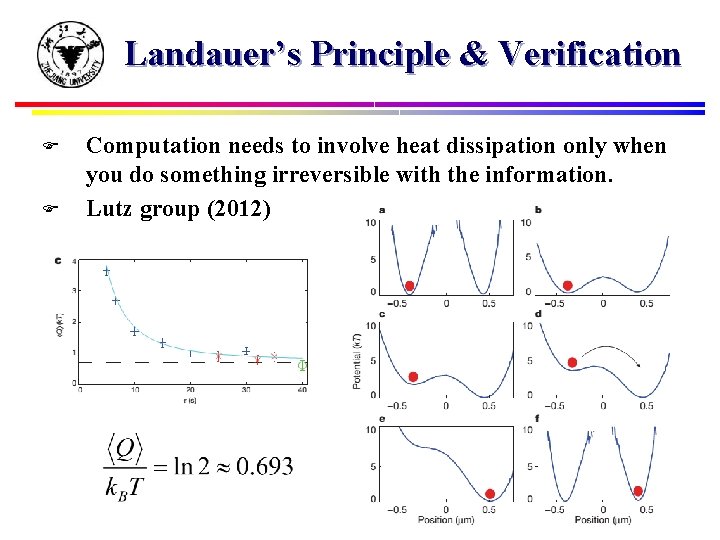

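Landauer’s Principle & Verification
- Erasing one bit of information dissipates at least $k_B T\ln 2$ of heat into the environment.
- Computation needs to involve heat dissipation only when you do something irreversible with the information.
- Experimental verification: Lutz group (2012).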
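To get a feel for the size of the Landauer bound $k_B T\ln 2$, the sketch below evaluates it at an assumed room temperature of 300 K; the function name is hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K (exact SI value)

def landauer_limit(temperature, bits=1):
    """Minimum heat (J) dissipated when erasing `bits` bits at `temperature` (K)."""
    return bits * K_B * temperature * math.log(2)

print(landauer_limit(300.0))            # about 2.9e-21 J per bit at room temperature
print(landauer_limit(300.0, bits=8e9))  # erasing one gigabyte (8e9 bits)
```

Even a gigabyte costs only about $2\times10^{-11}$ J at this limit, far below what present-day hardware dissipates per operation.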

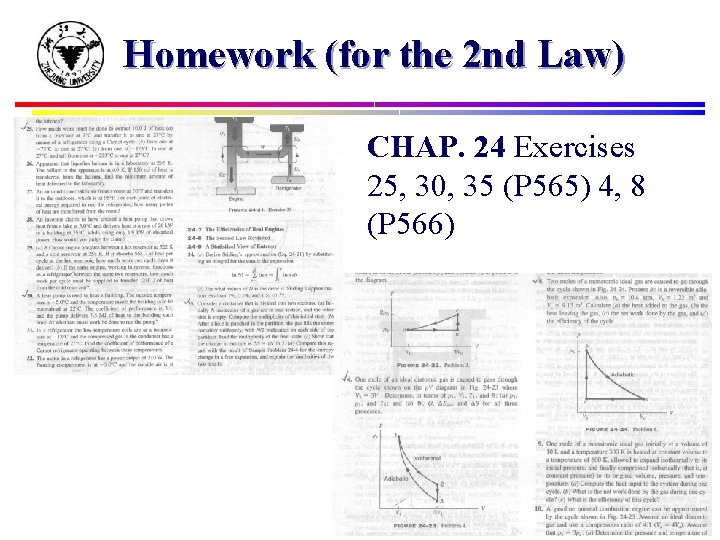

Homework (for the 2nd Law)
CHAP. 24: Exercises 25, 30, 35 (p. 565); 4, 8 (p. 566)

Homework
- Reading (downloadable from my website):
  - Charles Bennett and Rolf Landauer, The fundamental physical limits of computation.
  - Antoine Bérut et al., Experimental verification of Landauer’s principle linking information and thermodynamics, Nature (2012).
  - Seth Lloyd, Ultimate physical limits to computation, Nature (2000).

Dare to adventure where you have not been!
