
PGM: Tirgul 11. Naïve Bayesian Classifier + Tree Augmented Naïve Bayes (adapted from a tutorial by Nir Friedman and Moises Goldszmidt)

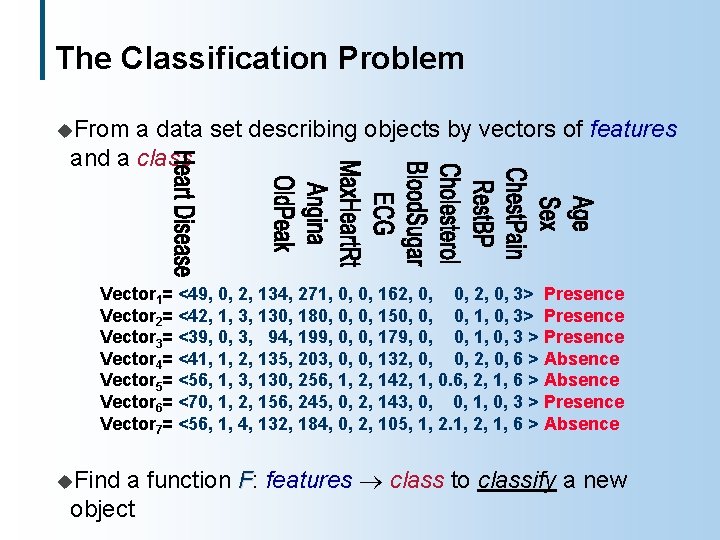

The Classification Problem
- From a data set describing objects by vectors of features and a class:
  - Vector 1 = <49, 0, 2, 134, 271, 0, 0, 162, 0, 0, 2, 0, 3> Presence
  - Vector 2 = <42, 1, 3, 130, 180, 0, 0, 150, 0, 0, 1, 0, 3> Presence
  - Vector 3 = <39, 0, 3, 94, 199, 0, 0, 179, 0, 0, 1, 0, 3> Presence
  - Vector 4 = <41, 1, 2, 135, 203, 0, 0, 132, 0, 0, 2, 0, 6> Absence
  - Vector 5 = <56, 1, 3, 130, 256, 1, 2, 142, 1, 0.6, 2, 1, 6> Absence
  - Vector 6 = <70, 1, 2, 156, 245, 0, 2, 143, 0, 0, 1, 0, 3> Presence
  - Vector 7 = <56, 1, 4, 132, 184, 0, 2, 105, 1, 2, 1, 6> Absence
- Find a function F: features → class to classify a new object.

Examples
- Predicting heart disease
  - Features: cholesterol, chest pain, angina, age, etc.
  - Class: {present, absent}
- Finding lemons in cars
  - Features: make, brand, miles per gallon, acceleration, etc.
  - Class: {normal, lemon}
- Digit recognition
  - Features: matrix of pixel descriptors
  - Class: {1, 2, 3, 4, 5, 6, 7, 8, 9, 0}
- Speech recognition
  - Features: signal characteristics, language model
  - Class: {pause/hesitation, retraction}

Approaches
- Memory based
  - Define a distance between samples
  - Nearest neighbor, support vector machines
- Decision surface
  - Find the best partition of the space
  - CART, decision trees
- Generative models
  - Induce a model and impose a decision rule
  - Bayesian networks

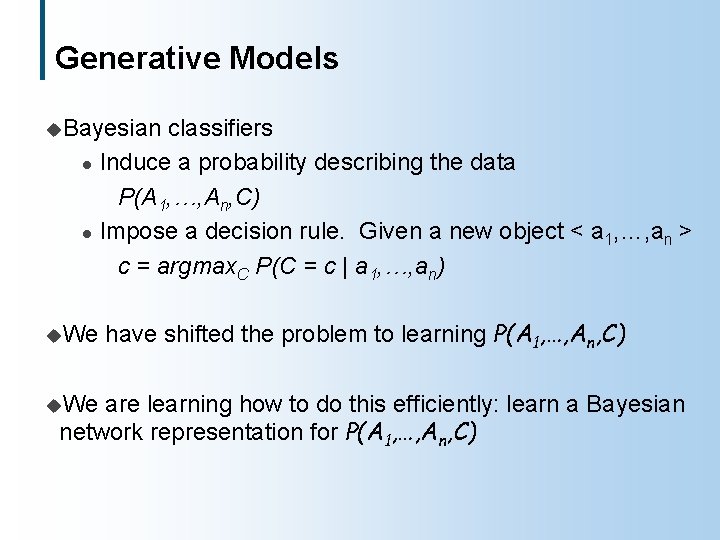

Generative Models
- Bayesian classifiers:
  - Induce a probability distribution describing the data, $P(A_1, \ldots, A_n, C)$.
  - Impose a decision rule. Given a new object $\langle a_1, \ldots, a_n \rangle$, output
    $$c = \arg\max_{c} P(C = c \mid a_1, \ldots, a_n).$$
- We have shifted the problem to learning $P(A_1, \ldots, A_n, C)$.
- We are learning how to do this efficiently: learn a Bayesian network representation for $P(A_1, \ldots, A_n, C)$.

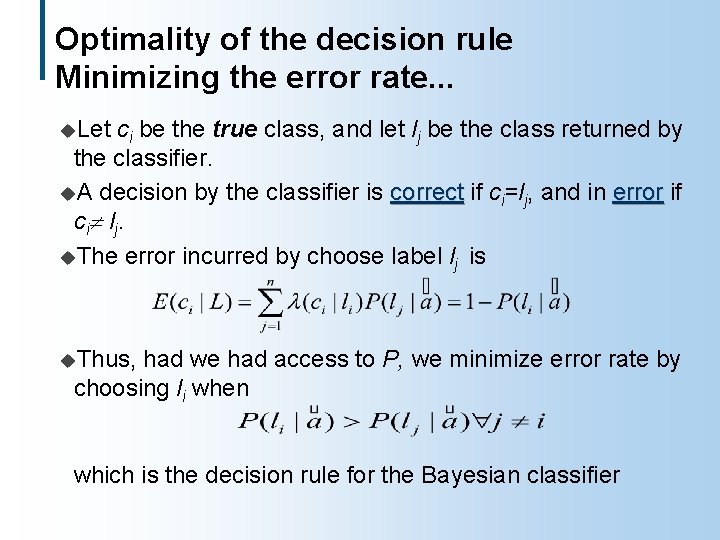

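Optimality of the Decision Rule: Minimizing the Error Rate
- Let $c_i$ be the true class, and let $l_j$ be the class returned by the classifier.
- A decision by the classifier is correct if $c_i = l_j$, and in error if $c_i \neq l_j$.
- The error incurred by choosing label $l_j$ is
  $$E(l_j \mid a_1, \ldots, a_n) = \sum_{i \neq j} P(c_i \mid a_1, \ldots, a_n) = 1 - P(c_j \mid a_1, \ldots, a_n).$$
- Thus, had we had access to $P$, we would minimize the error rate by choosing $l_i$ when
  $$P(c_i \mid a_1, \ldots, a_n) > P(c_j \mid a_1, \ldots, a_n) \quad \text{for all } j \neq i,$$
  which is the decision rule for the Bayesian classifier.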
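A minimal sketch of this decision rule, assuming the posterior $P(C \mid a_1, \ldots, a_n)$ is already available as a Python dict (the class names and probabilities below are hypothetical). The expected error of each label is the total posterior mass of the other labels, and picking the posterior argmax minimizes it.

```python
def expected_error(posterior, label):
    """Error incurred by outputting `label`: total posterior mass of all other classes."""
    return sum(p for c, p in posterior.items() if c != label)

def bayes_decision(posterior):
    """Bayes decision rule: output the class with the largest posterior P(c | a_1..a_n)."""
    return max(posterior, key=posterior.get)

# Hypothetical posterior for one patient (numbers made up for illustration).
posterior = {"present": 0.7, "absent": 0.3}
errors = {label: expected_error(posterior, label) for label in posterior}
best = bayes_decision(posterior)
print(best, errors)  # the label with the highest posterior has the lowest expected error
```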

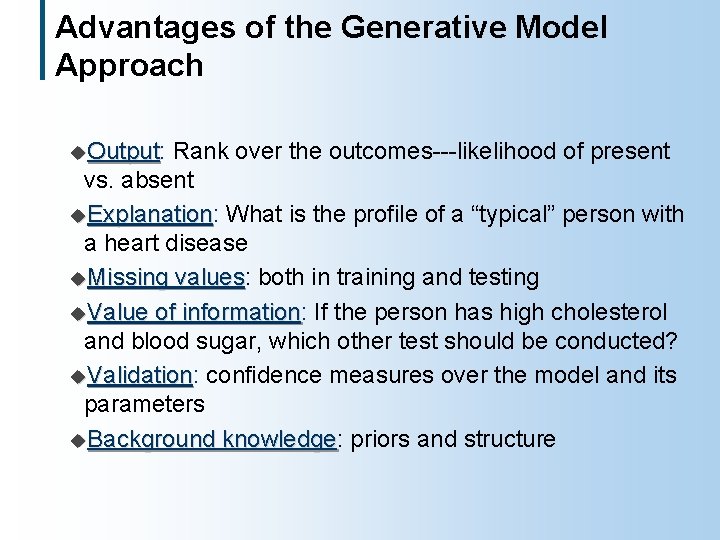

Advantages of the Generative Model Approach
- Output: a rank over the outcomes (likelihood of present vs. absent)
- Explanation: what is the profile of a "typical" person with heart disease?
- Missing values: both in training and in testing
- Value of information: if the person has high cholesterol and blood sugar, which other test should be conducted?
- Validation: confidence measures over the model and its parameters
- Background knowledge: priors and structure

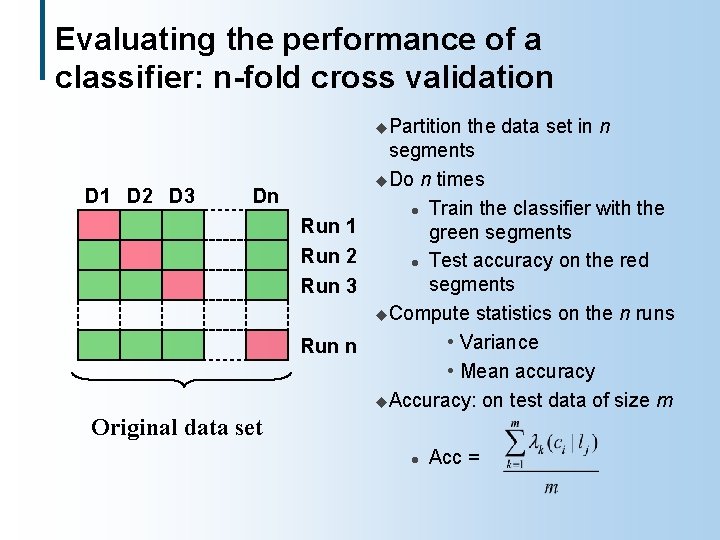

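Evaluating the Performance of a Classifier: n-Fold Cross-Validation
- Partition the original data set into n segments $D_1, D_2, \ldots, D_n$.
- Do n times (Run 1, Run 2, ..., Run n):
  - Train the classifier on the green segments (the n − 1 training segments).
  - Test accuracy on the red segment (the held-out segment).
- Compute statistics on the n runs:
  - Mean accuracy
  - Variance
- Accuracy on test data of size m:
  $$\mathrm{Acc} = \frac{1}{m} \sum_{k=1}^{m} \mathbb{1}[c_k = l_k],$$
  where $c_k$ is the true class of the k-th test instance and $l_k$ is the class returned by the classifier.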
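A minimal sketch of n-fold cross-validation in Python. The helper names (`n_fold_cross_validation`, `train_majority`, `predict_majority`) and the toy data are hypothetical; the folds are formed round-robin without shuffling, and a majority-class baseline stands in for a real classifier.

```python
from collections import Counter
from statistics import mean, pvariance

def n_fold_cross_validation(data, n, train, predict):
    """data: list of (features, label). Returns the test accuracy of each of the n runs."""
    folds = [data[k::n] for k in range(n)]  # D_1..D_n (round-robin split, no shuffling)
    accuracies = []
    for k in range(n):
        test = folds[k]  # held-out segment
        training = [x for j, fold in enumerate(folds) if j != k for x in fold]
        model = train(training)
        correct = sum(1 for features, label in test if predict(model, features) == label)
        accuracies.append(correct / len(test))  # Acc = (# correct) / m
    return accuracies

# Hypothetical baseline: always predict the majority class of the training segments.
def train_majority(training):
    return Counter(label for _, label in training).most_common(1)[0][0]

def predict_majority(model, features):
    return model

data = [((i,), "present" if i % 3 else "absent") for i in range(30)]
accs = n_fold_cross_validation(data, 5, train_majority, predict_majority)
print(mean(accs), pvariance(accs))  # statistics over the n runs
```

Any classifier can be plugged in by passing its own `train` and `predict` functions; in practice the data are usually shuffled (or stratified by class) before partitioning.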

Advantages of Using a Bayesian Network
- Efficiency in learning and query answering
  - Combine knowledge engineering and statistical induction
  - Algorithms for decision making, value of information, diagnosis and repair

Figure: a Bayesian network for heart disease (data source: UCI repository; accuracy = 85%) over the variables Outcome, Age, Sex, Chest Pain, Angina, Cholesterol, Blood Sugar, Rest BP, ECG, Max Heart Rate, ST Slope, Old Peak, Vessels, Thal.

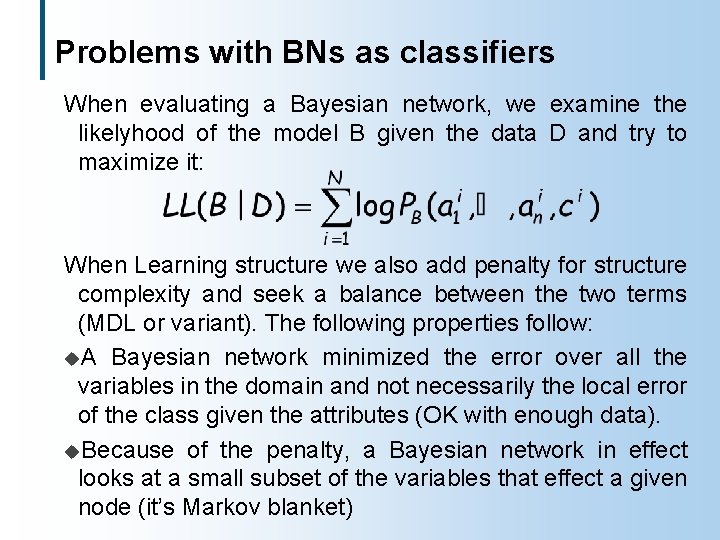

Problems with BNs as Classifiers
When evaluating a Bayesian network, we examine the likelihood of the model B given the data D and try to maximize it:
$$LL(B \mid D) = \sum_{i=1}^{N} \log P_B(a_1^i, \ldots, a_n^i, c^i).$$
When learning structure, we also add a penalty for structure complexity and seek a balance between the two terms (MDL or a variant). The following properties follow:
- A Bayesian network minimizes the error over all the variables in the domain, and not necessarily the local error of the class given the attributes (this is OK with enough data).
- Because of the penalty, a Bayesian network in effect looks at a small subset of the variables that affect a given node (its Markov blanket).

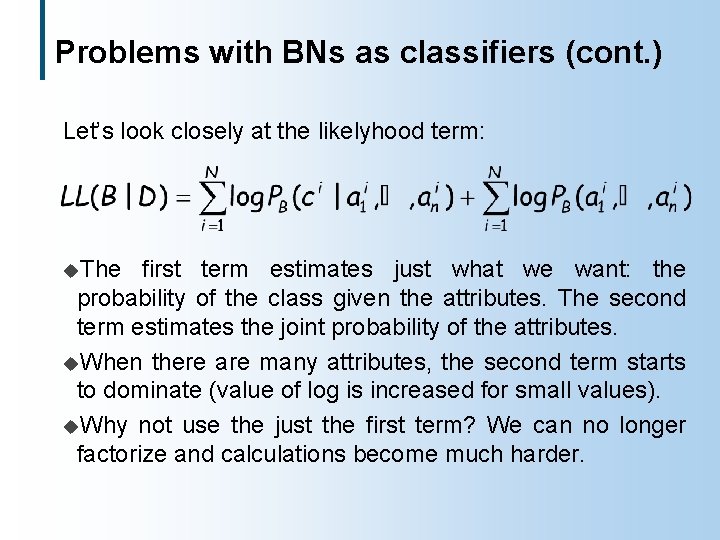

Problems with BNs as Classifiers (cont.)
Let's look closely at the likelihood term:
$$LL(B \mid D) = \sum_{i=1}^{N} \log P_B(c^i \mid a_1^i, \ldots, a_n^i) + \sum_{i=1}^{N} \log P_B(a_1^i, \ldots, a_n^i).$$
- The first term estimates just what we want: the probability of the class given the attributes. The second term estimates the joint probability of the attributes.
- When there are many attributes, the second term starts to dominate: the joint probability of many attributes is very small, and the magnitude of the log grows as its argument gets smaller.
- Why not use just the first term? We can no longer factorize, and calculations become much harder.
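To see the decomposition numerically, the sketch below assumes a hypothetical model B (a naïve Bayes network over a binary class and three binary attributes, with made-up parameters) and four made-up instances. It checks that the two terms add up to $LL(B \mid D)$ and shows that, for this toy data, the attribute term is much larger in magnitude than the conditional term.

```python
import math

# Hypothetical model B: naive Bayes over a binary class C and 3 binary attributes.
p_c = {0: 0.6, 1: 0.4}
p_a_given_c = {0: [0.2, 0.3, 0.1], 1: [0.7, 0.8, 0.6]}  # P(A_i = 1 | C = c), made up

def joint(a, c):
    """P_B(a_1, ..., a_n, c) = P(c) * prod_i P(a_i | c)."""
    p = p_c[c]
    for ai, q in zip(a, p_a_given_c[c]):
        p *= q if ai == 1 else 1 - q
    return p

def marginal(a):
    """P_B(a_1, ..., a_n) = sum over c of P_B(a, c)."""
    return sum(joint(a, c) for c in p_c)

def log_likelihood_terms(data):
    total = sum(math.log(joint(a, c)) for a, c in data)               # LL(B | D)
    cond = sum(math.log(joint(a, c) / marginal(a)) for a, c in data)  # sum log P_B(c | a)
    attr = sum(math.log(marginal(a)) for a, _ in data)                # sum log P_B(a)
    return total, cond, attr

data = [((1, 1, 1), 1), ((0, 0, 0), 0), ((1, 0, 1), 1), ((0, 1, 0), 0)]
total, cond, attr = log_likelihood_terms(data)
print(total, cond, attr)  # here attr is about -8.1 while cond is about -0.5
```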

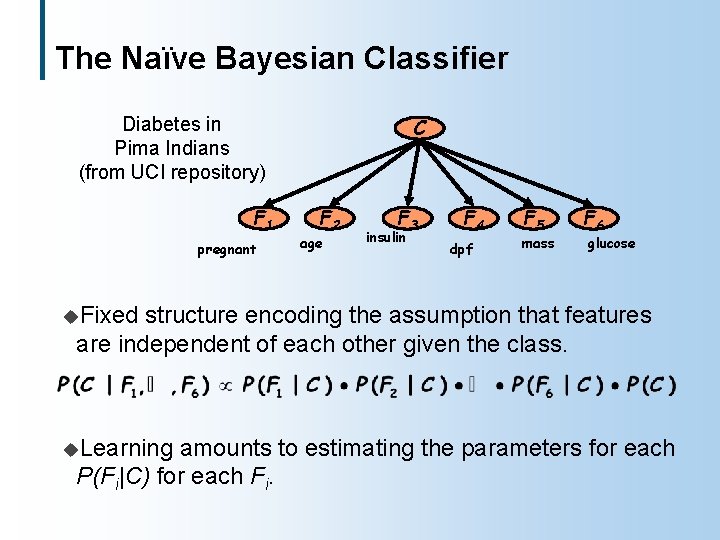

The Naïve Bayesian Classifier
Figure: naïve Bayes network for diabetes in Pima Indians (from the UCI repository): the class C is the sole parent of each feature F1 pregnant, F2 age, F3 insulin, F4 dpf, F5 mass, F6 glucose.
- Fixed structure encoding the assumption that features are independent of each other given the class:
  $$P(C, F_1, \ldots, F_n) = P(C) \prod_{i=1}^{n} P(F_i \mid C).$$
- Learning amounts to estimating the parameters $P(F_i \mid C)$ for each $F_i$.

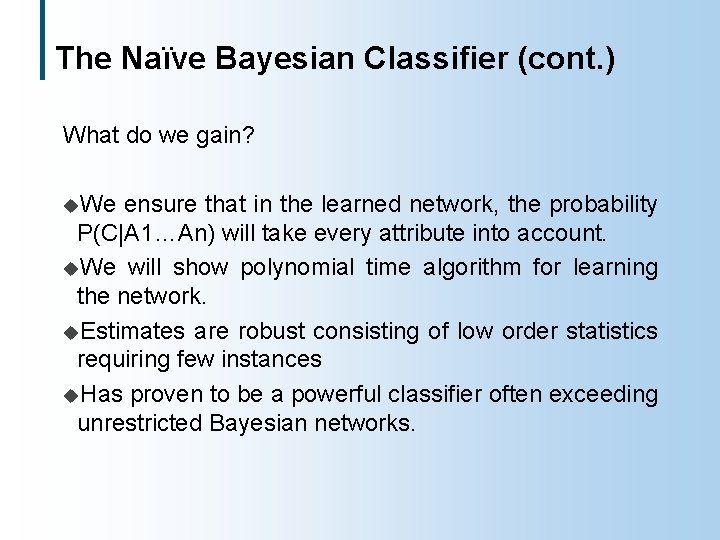

The Naïve Bayesian Classifier (cont.)
What do we gain?
- We ensure that in the learned network, the probability $P(C \mid A_1, \ldots, A_n)$ will take every attribute into account.
- We will show a polynomial-time algorithm for learning the network.
- Estimates are robust: they consist of low-order statistics requiring few instances.
- It has proven to be a powerful classifier, often exceeding unrestricted Bayesian networks.

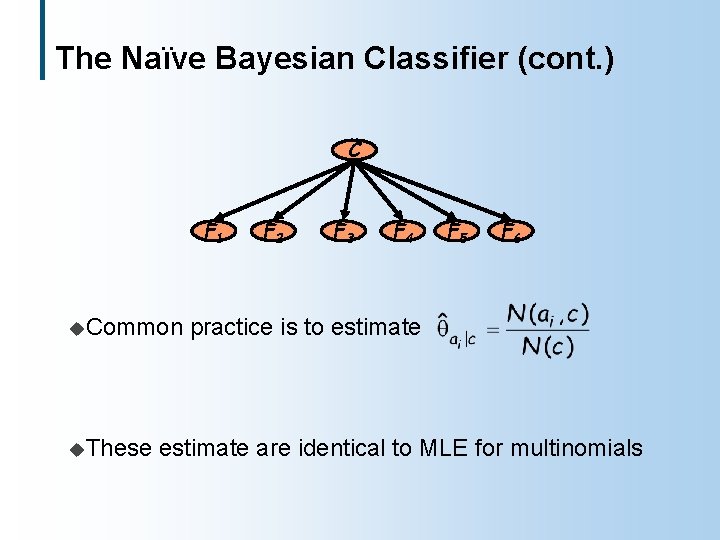

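The Naïve Bayesian Classifier (cont.)
Figure: the same network, with the class C as parent of F1, ..., F6.
- Common practice is to estimate
  $$\hat{\theta}_{a_i \mid c} = \frac{N(a_i, c)}{N(c)},$$
  where $N(\cdot)$ counts the training instances that match the given values.
- These are identical to the MLE for multinomials.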
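A minimal sketch of naïve Bayes learning by counting, for discrete features. The function names and toy data are hypothetical; the estimates are the unsmoothed MLE $N(a_i, c)/N(c)$ and $N(c)/N$, so a feature value never seen with a class gets zero probability.

```python
import math
from collections import Counter, defaultdict

def fit_naive_bayes(data):
    """data: list of (features_tuple, label). Returns MLE estimates of P(C) and P(F_i | C)."""
    class_counts = Counter(label for _, label in data)
    feature_counts = defaultdict(Counter)  # (i, c) -> counts of the values of F_i within class c
    for features, label in data:
        for i, value in enumerate(features):
            feature_counts[(i, label)][value] += 1
    n = len(data)
    prior = {c: class_counts[c] / n for c in class_counts}              # N(c) / N
    cond = {key: {v: cnt / class_counts[key[1]] for v, cnt in counter.items()}
            for key, counter in feature_counts.items()}                 # N(f_i, c) / N(c)
    return prior, cond

def predict(model, features):
    """argmax_c of log P(c) + sum_i log P(f_i | c)."""
    prior, cond = model
    def score(c):
        s = math.log(prior[c])
        for i, value in enumerate(features):
            p = cond[(i, c)].get(value, 0.0)
            if p == 0.0:
                return float("-inf")  # unseen value: zero probability under the MLE
            s += math.log(p)
        return s
    return max(prior, key=score)

# Hypothetical discretized features: (glucose level, age group) -> diabetes yes/no.
data = [(("high", "old"), "yes"), (("high", "young"), "yes"), (("low", "old"), "no"),
        (("low", "young"), "no"), (("high", "old"), "no"), (("low", "young"), "no")]
model = fit_naive_bayes(data)
print(predict(model, ("high", "old")), predict(model, ("low", "young")))
```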

Improving Naïve Bayes
- Naïve Bayes encodes assumptions of independence that may be unreasonable: are pregnancy and age independent given diabetes?
  - Problem: the same evidence may be incorporated multiple times (a rare glucose level and a rare insulin level over-penalize the class variable).
- The success of naïve Bayes is attributed to:
  - Robust estimation
  - The decision may be correct even if the probabilities are inaccurate
- Idea: improve on naïve Bayes by weakening the independence assumptions.
  - Bayesian networks provide the appropriate mathematical language for this task.
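A small numeric illustration of the double-counting problem, assuming (hypothetically) that the insulin reading is so strongly tied to glucose that it behaves like an exact copy of it. Naïve Bayes then multiplies the same likelihood ratio in twice; all probabilities below are made up.

```python
def nb_posterior(prior, likelihoods):
    """Naive Bayes posterior: prior[c] times the product of likelihoods[c], normalized."""
    scores = {}
    for c, p in prior.items():
        s = p
        for lik in likelihoods[c]:
            s *= lik
        scores[c] = s
    z = sum(scores.values())
    return {c: s / z for c, s in scores.items()}

prior = {"diabetes": 0.5, "healthy": 0.5}
glucose = {"diabetes": 0.2, "healthy": 0.05}  # hypothetical P(rare glucose level | class)

once = nb_posterior(prior, {c: [glucose[c]] for c in prior})
# Hypothetical redundant insulin feature carrying the same evidence as glucose:
twice = nb_posterior(prior, {c: [glucose[c], glucose[c]] for c in prior})
print(once["diabetes"], twice["diabetes"])
```

With one copy of the evidence the posterior of diabetes is 0.8; with two copies it rises to about 0.94, although no new information was added.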

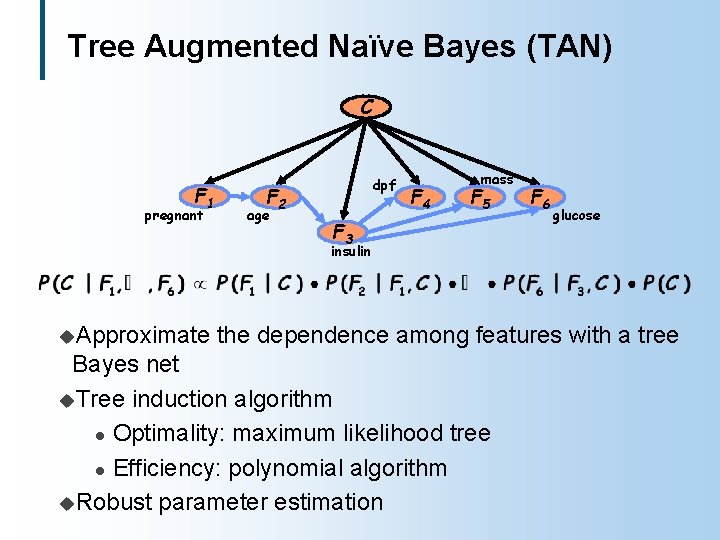

Tree Augmented Naïve Bayes (TAN)
Figure: TAN for the Pima Indians diabetes data. The class C is a parent of every feature (F1 pregnant, F2 age, F3 insulin, F4 dpf, F5 mass, F6 glucose), and the features are additionally linked by a tree.
- Approximate the dependence among features with a tree Bayes net.
- Tree induction algorithm:
  - Optimality: maximum likelihood tree
  - Efficiency: polynomial algorithm
- Robust parameter estimation

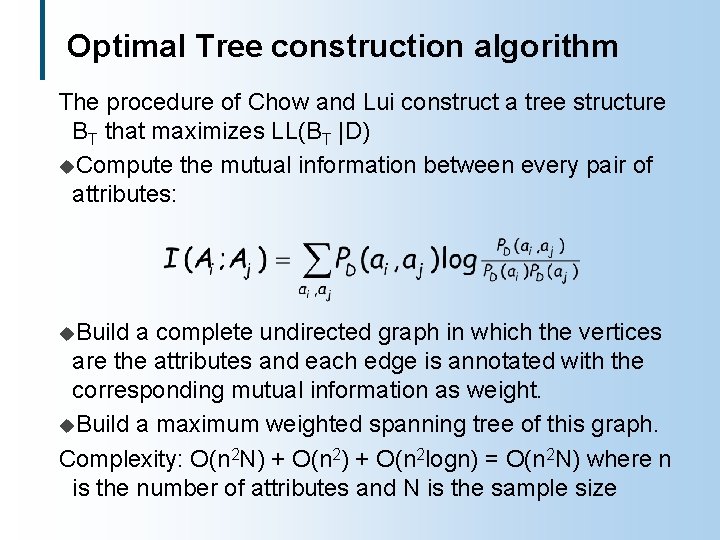

Optimal Tree Construction Algorithm
The procedure of Chow and Liu constructs a tree structure $B_T$ that maximizes $LL(B_T \mid D)$:
- Compute the mutual information between every pair of attributes:
  $$I(A_i; A_j) = \sum_{a_i, a_j} P(a_i, a_j) \log \frac{P(a_i, a_j)}{P(a_i) P(a_j)}.$$
- Build a complete undirected graph in which the vertices are the attributes and each edge is annotated with the corresponding mutual information as its weight.
- Build a maximum weighted spanning tree of this graph.

Complexity: $O(n^2 N) + O(n^2 \log n) = O(n^2 N)$, where n is the number of attributes and N is the sample size.
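A compact sketch of the Chow and Liu procedure for discrete attributes, using empirical frequencies as $P$ and Kruskal's algorithm (one standard choice; Prim's works equally well) for the maximum weighted spanning tree. The function names and toy data are hypothetical. The result is undirected; a directed tree is obtained by picking any root and orienting the edges away from it.

```python
import math
from collections import Counter
from itertools import combinations

def mutual_information(data, i, j):
    """Empirical I(A_i; A_j) = sum P(a_i, a_j) log [P(a_i, a_j) / (P(a_i) P(a_j))]."""
    n = len(data)
    pij = Counter((row[i], row[j]) for row in data)
    pi = Counter(row[i] for row in data)
    pj = Counter(row[j] for row in data)
    return sum((c / n) * math.log((c / n) / ((pi[a] / n) * (pj[b] / n)))
               for (a, b), c in pij.items())

def maximum_spanning_tree(num_nodes, weighted_edges):
    """Kruskal's algorithm with union-find, taking the heaviest edges first."""
    parent = list(range(num_nodes))
    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x
    tree = []
    for _, u, v in sorted(weighted_edges, reverse=True):
        ru, rv = find(u), find(v)
        if ru != rv:  # edge joins two components: keep it
            parent[ru] = rv
            tree.append((u, v))
    return tree

def chow_liu(data):
    """data: list of equal-length tuples of discrete attribute values."""
    n_attrs = len(data[0])
    edges = [(mutual_information(data, i, j), i, j)   # complete graph, MI weights
             for i, j in combinations(range(n_attrs), 2)]
    return maximum_spanning_tree(n_attrs, edges)

# Hypothetical data: A_0 and A_1 always agree, A_2 is only weakly related.
data = [(0, 0, 0), (0, 0, 1), (1, 1, 0), (1, 1, 1), (0, 0, 0), (1, 1, 1)]
tree = chow_liu(data)
print(tree)
```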

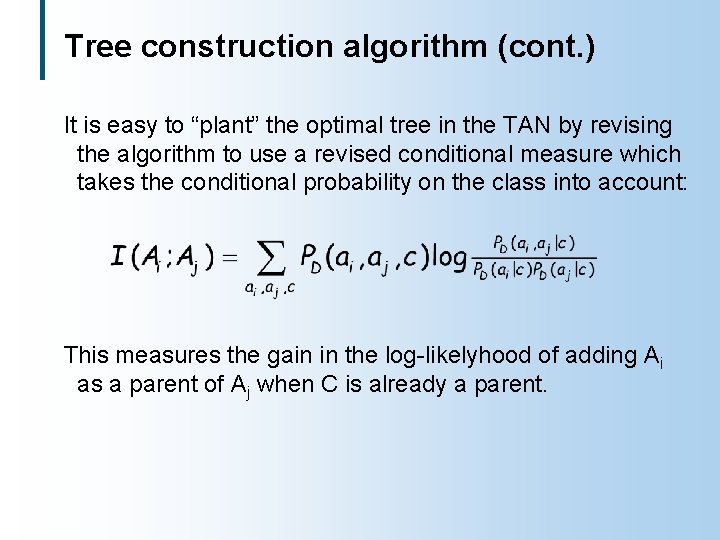

Tree Construction Algorithm (cont.)
It is easy to "plant" the optimal tree in the TAN by revising the algorithm to use a conditional measure that takes the conditioning on the class into account:
$$I(A_i; A_j \mid C) = \sum_{a_i, a_j, c} P(a_i, a_j, c) \log \frac{P(a_i, a_j \mid c)}{P(a_i \mid c) P(a_j \mid c)}.$$
This measures the gain in log-likelihood of adding $A_i$ as a parent of $A_j$ when C is already a parent.
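A sketch of the TAN variant, assuming discrete attributes given as tuples with a parallel list of class labels (function names and toy data are hypothetical). It swaps in the conditional mutual information as the edge weight and uses a simple Prim's algorithm, which also orients every tree edge away from the chosen root; the class C is then added as a parent of every attribute.

```python
import math
from collections import Counter
from itertools import combinations

def conditional_mutual_information(rows, labels, i, j):
    """Empirical I(A_i; A_j | C) = sum P(a_i,a_j,c) log [P(a_i,a_j|c) / (P(a_i|c) P(a_j|c))]."""
    n = len(rows)
    pijc = Counter((r[i], r[j], c) for r, c in zip(rows, labels))
    pic = Counter((r[i], c) for r, c in zip(rows, labels))
    pjc = Counter((r[j], c) for r, c in zip(rows, labels))
    pc = Counter(labels)
    total = 0.0
    for (a, b, c), cnt in pijc.items():
        # P(a,b|c) / (P(a|c) P(b|c)) expressed with counts: N(a,b,c) N(c) / (N(a,c) N(b,c))
        total += (cnt / n) * math.log(cnt * pc[c] / (pic[(a, c)] * pjc[(b, c)]))
    return total

def tan_structure(rows, labels, root=0):
    """Maximum spanning tree under I(A_i; A_j | C) via a simple O(n^3) Prim's algorithm.
    Returns {attribute: tree parent or None}; C is an extra parent of every attribute."""
    n_attrs = len(rows[0])
    w = {}
    for i, j in combinations(range(n_attrs), 2):
        w[(i, j)] = w[(j, i)] = conditional_mutual_information(rows, labels, i, j)
    parent = {root: None}
    while len(parent) < n_attrs:
        # heaviest edge from the current tree to an attribute not yet in it
        _, u, v = max((w[(u, v)], u, v)
                      for u in parent for v in range(n_attrs) if v not in parent)
        parent[v] = u  # edge directed away from the root: u -> v
    return parent

# Hypothetical data: within each class, A_0 and A_1 agree; A_2 is independent of both.
rows = [(0, 0, 0), (0, 0, 1), (1, 1, 0), (1, 1, 1),
        (0, 0, 0), (0, 0, 1), (1, 1, 0), (1, 1, 1)]
labels = [0, 0, 0, 0, 1, 1, 1, 1]
parent = tan_structure(rows, labels)
print(parent)
```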

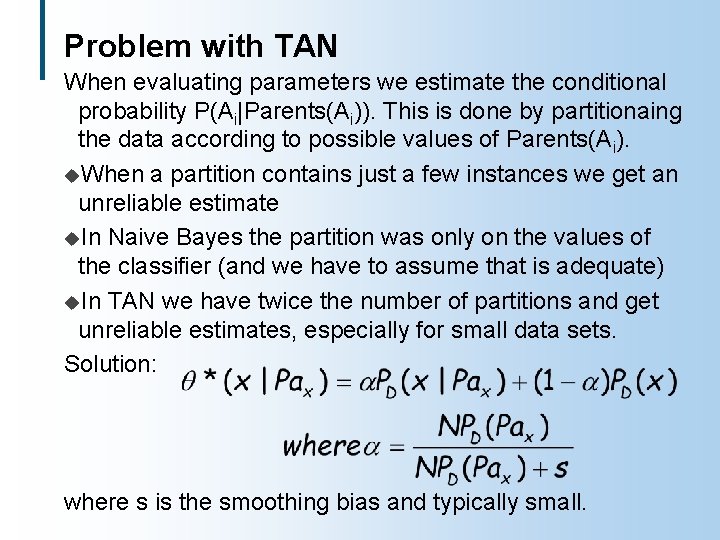

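Problem with TAN
When estimating parameters, we estimate the conditional probability $P(A_i \mid \mathrm{Parents}(A_i))$. This is done by partitioning the data according to the possible values of $\mathrm{Parents}(A_i)$.
- When a partition contains just a few instances, we get an unreliable estimate.
- In naïve Bayes the partition was only on the values of the class variable (and we have to assume that is adequate).
- In TAN each attribute has an additional parent, which multiplies the number of partitions (for a binary parent, twice as many), so we get unreliable estimates, especially for small data sets.

Solution: smooth the estimate toward the marginal,
$$\theta^s(x \mid \Pi_x) = \alpha \, \hat{P}_D(x \mid \Pi_x) + (1 - \alpha) \, \hat{P}_D(x), \qquad \alpha = \frac{N \hat{P}_D(\Pi_x)}{N \hat{P}_D(\Pi_x) + s},$$
where $\Pi_x$ is the parent configuration of $x$, and s is the smoothing bias and is typically small.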
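A sketch of this smoothed estimate, assuming discrete data given as tuples and a hypothetical helper `smoothed_conditional`; the default s = 5.0 is an arbitrary illustrative value, not one taken from the slides. In counts, $\alpha = N\hat{P}_D(\Pi_x) / (N\hat{P}_D(\Pi_x) + s)$ reduces to $N(\Pi_x)/(N(\Pi_x) + s)$, so sparse partitions lean on the marginal $\hat{P}_D(x)$.

```python
from collections import Counter

def smoothed_conditional(rows, child, parents, s=5.0):
    """Return theta(x, pa) = alpha * P_hat(x | pa) + (1 - alpha) * P_hat(x),
    with alpha = N(pa) / (N(pa) + s). `s` = 5.0 is only an illustrative default."""
    n = len(rows)
    n_x = Counter(r[child] for r in rows)
    n_pa = Counter(tuple(r[p] for p in parents) for r in rows)
    n_x_pa = Counter((r[child], tuple(r[p] for p in parents)) for r in rows)

    def theta(x, pa):
        pa = tuple(pa)
        marginal = n_x[x] / n                      # P_hat(x)
        if n_pa[pa] == 0:
            return marginal                        # empty partition: alpha = 0
        alpha = n_pa[pa] / (n_pa[pa] + s)
        return alpha * n_x_pa[(x, pa)] / n_pa[pa] + (1 - alpha) * marginal
    return theta

# Hypothetical data (A_0, A_1); estimate P(A_0 | A_1). The partition A_1 = 1 has one instance.
rows = [(1, 0), (1, 0), (0, 0), (0, 0), (1, 1)]
theta = smoothed_conditional(rows, child=0, parents=[1])
print(theta(0, (1,)), theta(1, (1,)))
```

With these toy rows, the MLE would give $P(A_0 = 0 \mid A_1 = 1) = 0$ from a single instance; the smoothed estimate is 1/3, and the smoothed values still sum to 1 over $x$.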

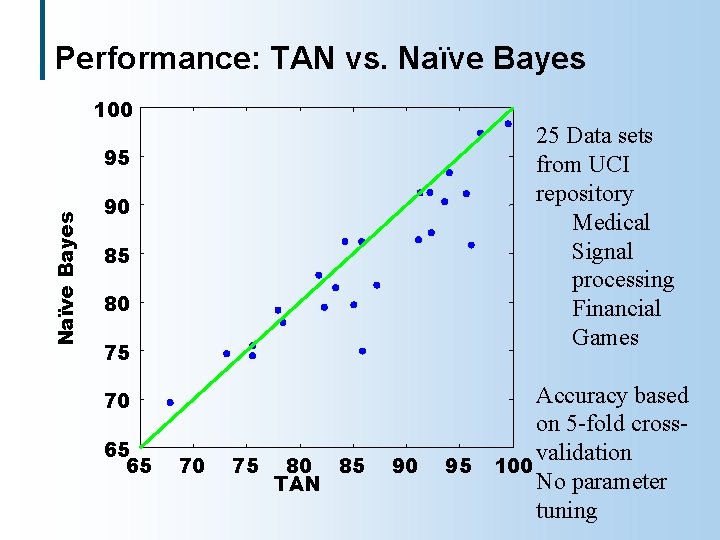

Performance: TAN vs. Naïve Bayes
Figure: scatter plot of accuracy (65 to 100%) of TAN (x-axis) against naïve Bayes (y-axis) on 25 data sets from the UCI repository (medical, signal processing, financial, games). Accuracy based on 5-fold cross-validation; no parameter tuning.

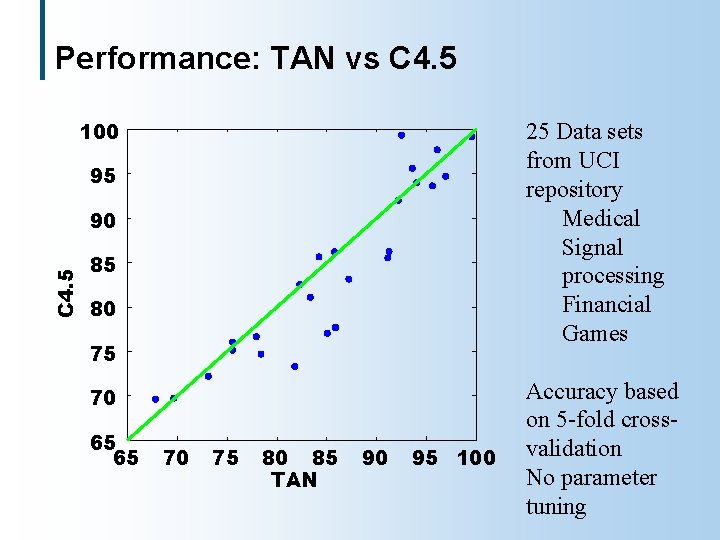

Performance: TAN vs. C4.5
Figure: scatter plot of accuracy (65 to 100%) of TAN (x-axis) against C4.5 (y-axis) on 25 data sets from the UCI repository (medical, signal processing, financial, games). Accuracy based on 5-fold cross-validation; no parameter tuning.

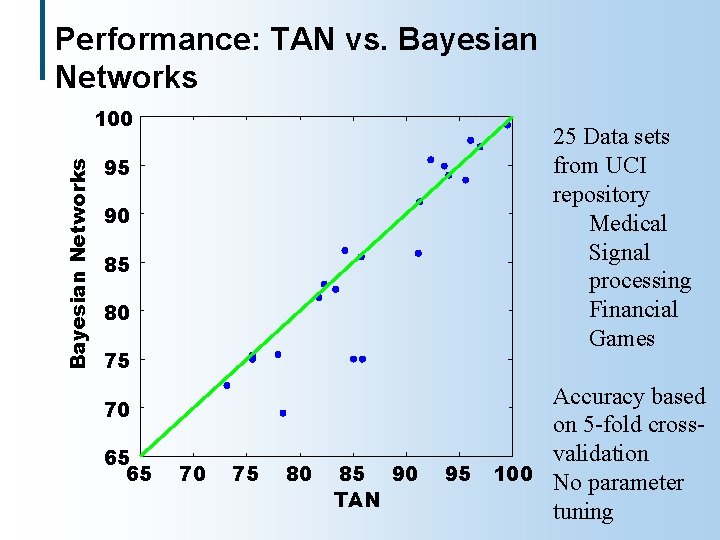

Beyond TAN
- Can we do better by learning a more flexible structure?
- Experiment: learn a Bayesian network without restrictions on the structure.

Performance: TAN vs. Bayesian Networks
Figure: scatter plot of accuracy (65 to 100%) of TAN (x-axis) against unrestricted Bayesian networks on 25 data sets from the UCI repository (medical, signal processing, financial, games). Accuracy based on 5-fold cross-validation; no parameter tuning.

Classification: Summary
- Bayesian networks provide a useful language to improve Bayesian classifiers.
  - Lesson: we need to be aware of the task at hand, the amount of training data vs. the dimensionality of the problem, etc.
- Additional benefits:
  - Missing values
  - Compute the tradeoffs involved in finding out feature values
  - Compute misclassification costs
- Recent progress:
  - Combine generative probabilistic models, such as Bayesian networks, with decision surface approaches such as Support Vector Machines.
