Performance Observation

Sameer Shende and Allen D. Malony
{sameer, malony}@cs.uoregon.edu

Outline

- Motivation
- Introduction to TAU
- Optimizing instrumentation: approaches
- Perturbation compensation
- Conclusion

Performance Measurement, Advanced Operating Systems, U. Oregon

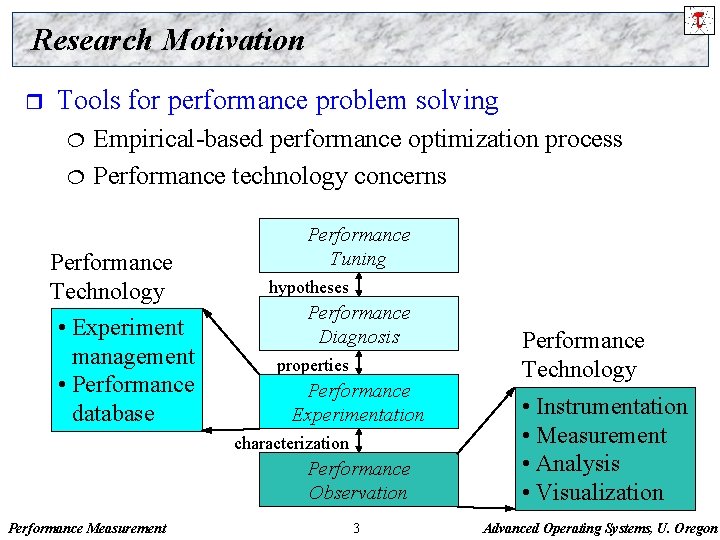

Research Motivation

- Tools for performance problem solving
  - Empirical-based performance optimization process
  - Performance technology concerns

Figure: the empirical optimization process, with stages Performance Tuning (hypotheses), Performance Diagnosis (properties), Performance Experimentation (characterization), and Performance Observation. Two groups of performance technology support it:

- Performance Technology: experiment management, performance database
- Performance Technology: instrumentation, measurement, analysis, visualization

TAU Performance System

- Tuning and Analysis Utilities (11+ year project effort)
- Performance system framework for scalable parallel and distributed high-performance computing
- Targets a general complex system computation model
  - nodes / contexts / threads
  - Multi-level: system / software / parallelism
  - Measurement and analysis abstraction
- Integrated toolkit for performance instrumentation, measurement, analysis, and visualization
  - Portable performance profiling and tracing facility
  - Open software approach with technology integration
- University of Oregon, Forschungszentrum Jülich, LANL

Definitions – Profiling

- Profiling
  - Recording of summary information during execution
    - inclusive time, exclusive time, # calls, hardware statistics, …
  - Reflects performance behavior of program entities
    - functions, loops, basic blocks
    - user-defined “semantic” entities
  - Very good for low-cost performance assessment
  - Helps to expose performance bottlenecks and hotspots
  - Implemented through
    - sampling: periodic OS interrupts or hardware counter traps
    - instrumentation: direct insertion of measurement code

Definitions – Tracing

- Tracing
  - Recording of information about significant points (events) during program execution
    - entering/exiting code region (function, loop, block, …)
    - thread/process interactions (e.g., send/receive message)
  - Save information in event record
    - timestamp
    - CPU identifier, thread identifier
    - event type and event-specific information
  - Event trace is a time-sequenced stream of event records
  - Can be used to reconstruct dynamic program behavior
  - Typically requires code instrumentation

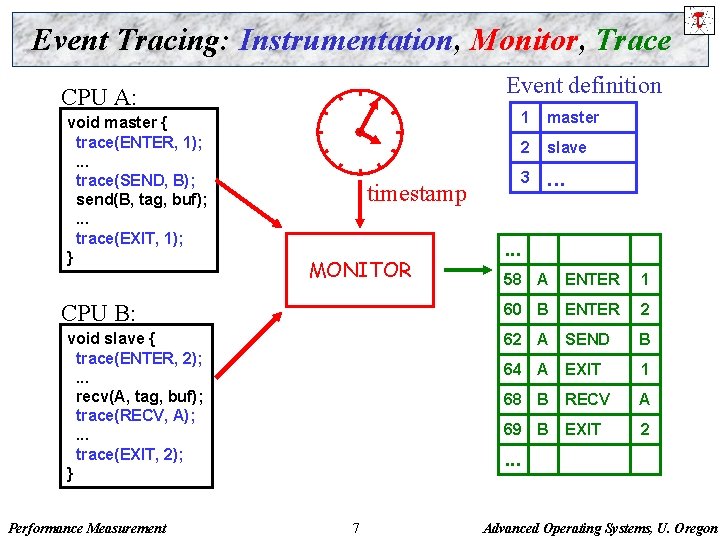

Event Tracing: Instrumentation, Monitor, Trace

Instrumented code on CPU A:

    void master {
        trace(ENTER, 1);
        ...
        trace(SEND, B);
        send(B, tag, buf);
        ...
        trace(EXIT, 1);
    }

Instrumented code on CPU B:

    void slave {
        trace(ENTER, 2);
        ...
        recv(A, tag, buf);
        trace(RECV, A);
        ...
        trace(EXIT, 2);
    }

Event definition:

| ID | Region |
|----|--------|
| 1  | master |
| 2  | slave  |
| 3  | ...    |

Trace collected by the MONITOR:

| Timestamp | CPU | Event | Data |
|-----------|-----|-------|------|
| ...       |     |       |      |
| 58        | A   | ENTER | 1    |
| 60        | B   | ENTER | 2    |
| 62        | A   | SEND  | B    |
| 64        | A   | EXIT  | 1    |
| 68        | B   | RECV  | A    |
| 69        | B   | EXIT  | 2    |
| ...       |     |       |      |
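
The master/slave example above can be mimicked with a small sketch. It is not TAU's tracing API: the `Monitor` class, its `trace` method, and the scripted clock are hypothetical names chosen for illustration, and the timestamps are simply replayed from the slide.

```python
# Hypothetical event-definition table, mirroring the slide: id -> region name.
EVENT_DEFS = {1: "master", 2: "slave"}


class Monitor:
    """Collects event records from all processes into one trace."""

    def __init__(self, clock):
        self._clock = clock          # callable returning the current timestamp
        self.records = []

    def trace(self, cpu, event_type, data):
        # An event record: timestamp, CPU identifier, event type, event data.
        self.records.append((self._clock(), cpu, event_type, data))

    def merged(self):
        # Sort by timestamp to obtain the time-sequenced event stream.
        return sorted(self.records, key=lambda r: r[0])


# A scripted clock that replays the timestamps shown on the slide.
ticks = iter([58, 60, 62, 64, 68, 69])
monitor = Monitor(clock=lambda: next(ticks))

monitor.trace("A", "ENTER", 1)   # master enters
monitor.trace("B", "ENTER", 2)   # slave enters
monitor.trace("A", "SEND", "B")  # master sends to B
monitor.trace("A", "EXIT", 1)
monitor.trace("B", "RECV", "A")  # slave receives from A
monitor.trace("B", "EXIT", 2)

for ts, cpu, kind, data in monitor.merged():
    print(ts, cpu, kind, data)
```

In a real system each process would write its own trace buffer with its own clock, which is why trace merging and clock adjustment appear later as separate analysis steps.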

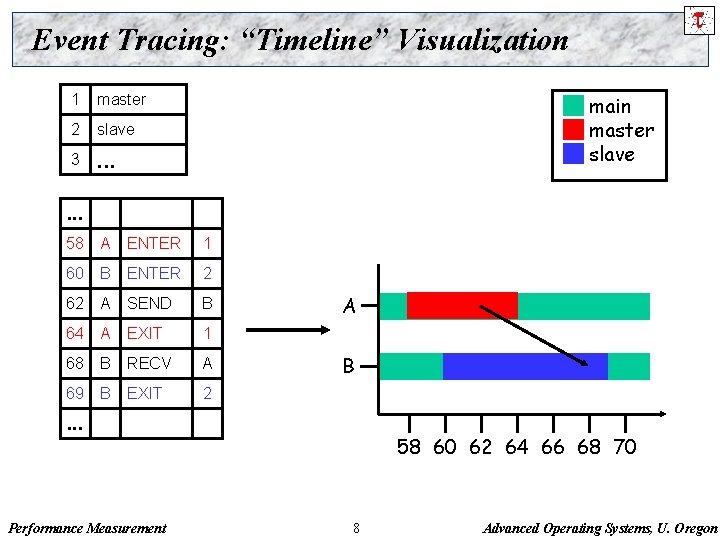

Event Tracing: “Timeline” Visualization

Figure: the trace records above (58 A ENTER 1 through 69 B EXIT 2) drawn as a timeline with one row per process (A, B), regions main, master, and slave, and a time axis running from 58 to 70.

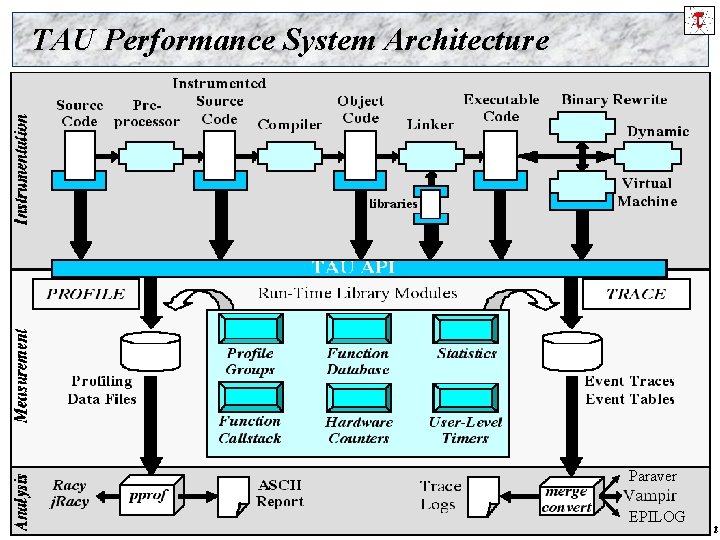

TAU Performance System Architecture

Figure: architecture diagram (labels include Paraver and EPILOG).

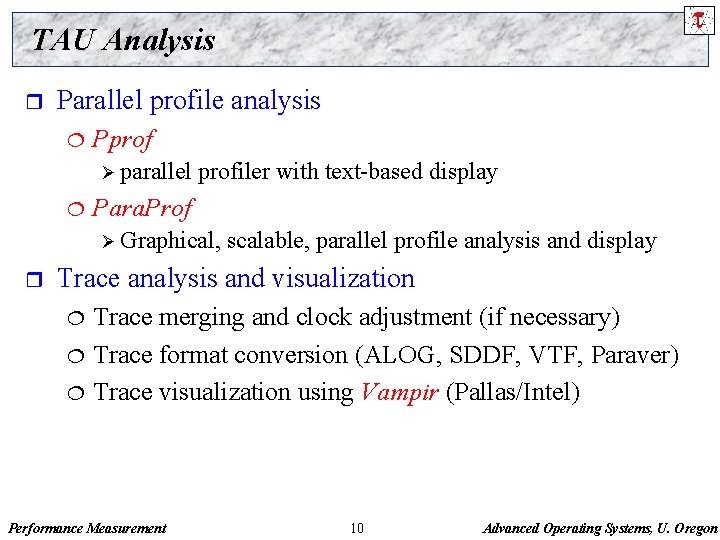

TAU Analysis

- Parallel profile analysis
  - Pprof
    - parallel profiler with text-based display
  - ParaProf
    - graphical, scalable, parallel profile analysis and display
- Trace analysis and visualization
  - Trace merging and clock adjustment (if necessary)
  - Trace format conversion (ALOG, SDDF, VTF, Paraver)
  - Trace visualization using Vampir (Pallas/Intel)

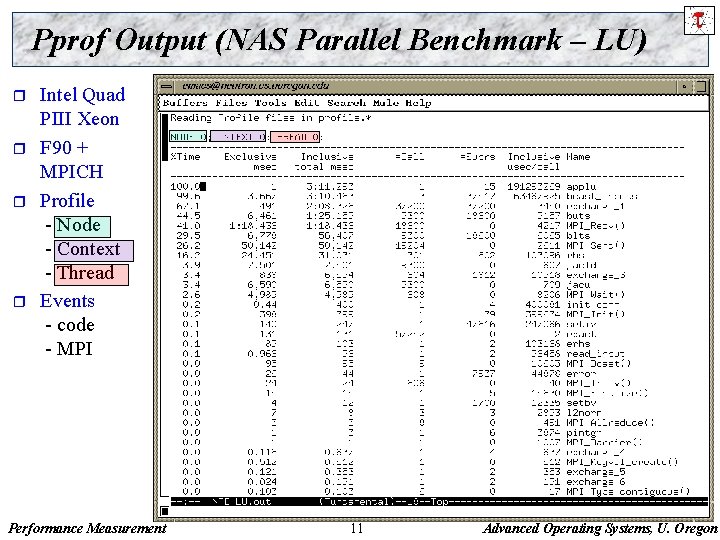

Pprof Output (NAS Parallel Benchmark – LU)

- Intel Quad PIII Xeon
- F90 + MPICH
- Profile: node, context, thread
- Events: code, MPI

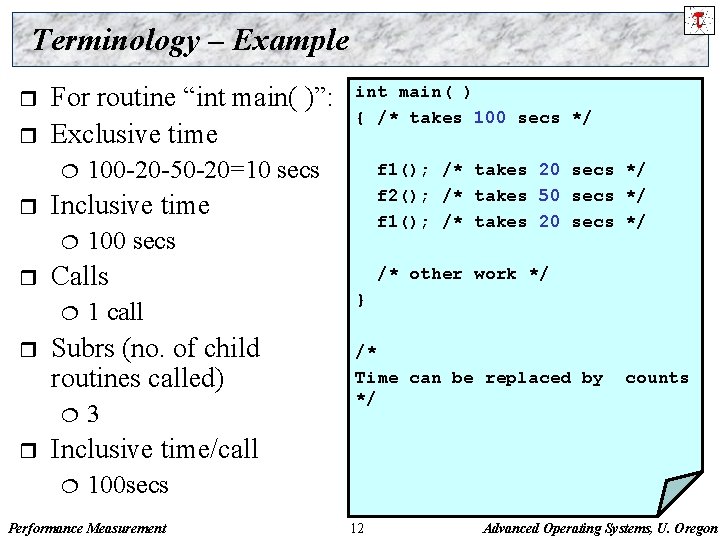

Terminology – Example

    int main( )
    { /* takes 100 secs */
        f1(); /* takes 20 secs */
        f2(); /* takes 50 secs */
        f1(); /* takes 20 secs */
        /* other work */
    }
    /* Time can be replaced by counts */

For routine “int main( )”:

- Exclusive time
  - 100 - 20 - 50 - 20 = 10 secs
- Inclusive time
  - 100 secs
- Calls
  - 1 call
- Subrs (no. of child routines called)
  - 3
- Inclusive time/call
  - 100 secs
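
The arithmetic in this example can be checked with a short sketch. The `Call` class and its field names are illustrative assumptions, not part of TAU; the timings are the ones given on the slide.

```python
from dataclasses import dataclass, field


@dataclass
class Call:
    name: str
    inclusive: float                      # seconds, including children
    children: list = field(default_factory=list)

    @property
    def exclusive(self):
        # Exclusive time = inclusive time minus the inclusive time
        # of the routines called directly from this one.
        return self.inclusive - sum(c.inclusive for c in self.children)

    @property
    def subrs(self):
        # Number of child routine invocations made directly from this call.
        return len(self.children)


main = Call("main", 100, [Call("f1", 20), Call("f2", 50), Call("f1", 20)])
print(main.exclusive, main.inclusive, main.subrs)   # prints: 10 100 3
```

The same bookkeeping applies when the metric is a hardware counter value instead of seconds.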

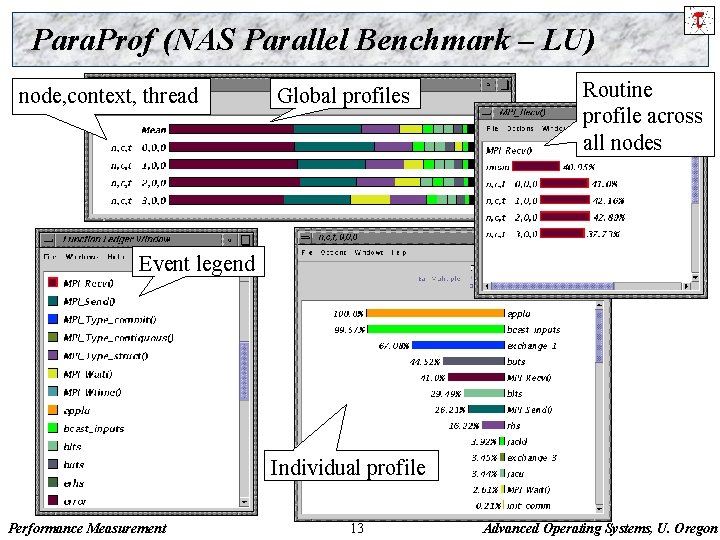

ParaProf (NAS Parallel Benchmark – LU)

Figure callouts: node, context, thread; global profiles; routine profile across all nodes; event legend; individual profile.

![Trace Visualization using Vampir (Intel/Pallas)](http://slidetodoc.com/presentation_image_h2/f94849c0f689914fe52b047425b5012a/image-14.jpg)

Trace Visualization using Vampir [Intel/Pallas]

- Timeline display
- Callgraph display
- Parallelism display
- Communications display

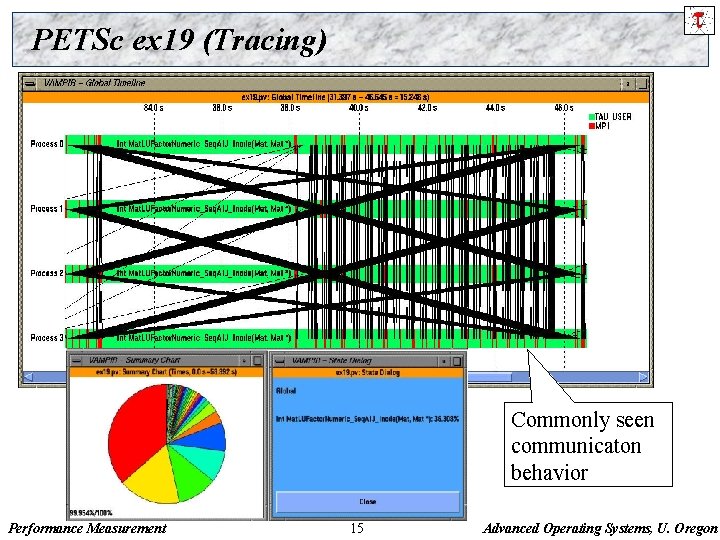

PETSc ex19 (Tracing)

Commonly seen communication behavior

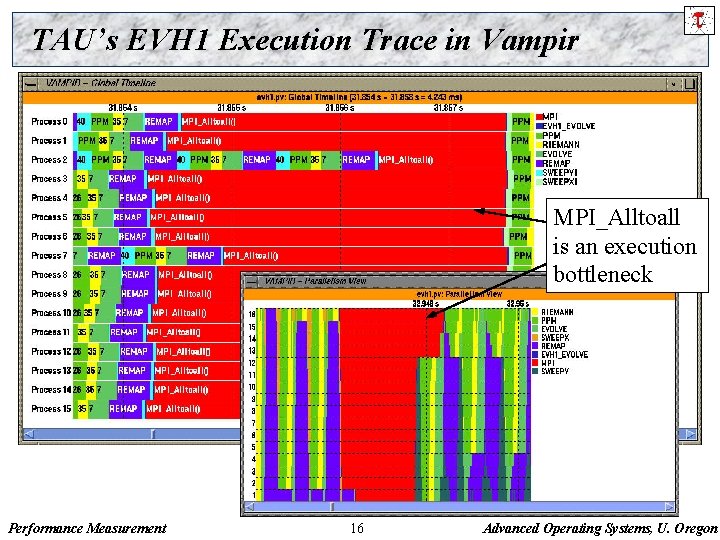

TAU’s EVH1 Execution Trace in Vampir

MPI_Alltoall is an execution bottleneck

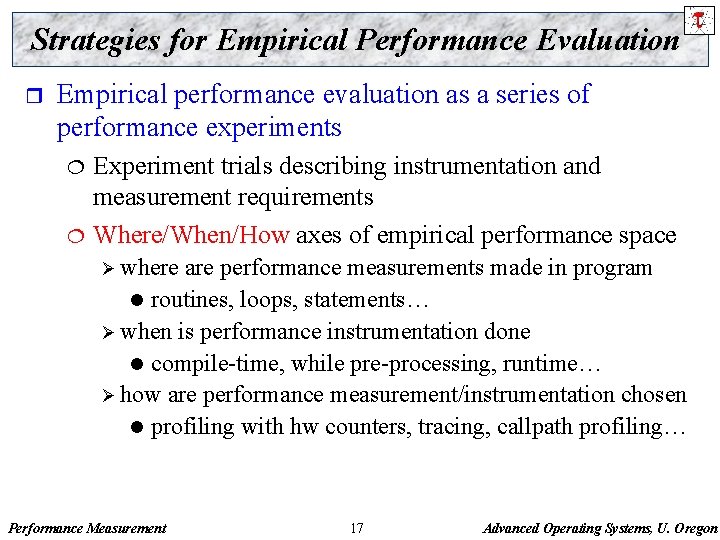

Strategies for Empirical Performance Evaluation

- Empirical performance evaluation as a series of performance experiments
  - Experiment trials describing instrumentation and measurement requirements
  - Where/When/How axes of empirical performance space
    - where are performance measurements made in the program
      - routines, loops, statements…
    - when is performance instrumentation done
      - compile-time, while pre-processing, runtime…
    - how are performance measurement/instrumentation options chosen
      - profiling with hw counters, tracing, callpath profiling…

TAU Instrumentation Approach

- Support for standard program events
  - Routines
  - Classes and templates
  - Statement-level blocks
- Support for user-defined events
  - Begin/End events (“user-defined timers”)
  - Atomic events
  - Selection of event statistics
- Support definition of “semantic” entities for mapping
- Support for event groups
- Instrumentation optimization

TAU Instrumentation

- Flexible instrumentation mechanisms at multiple levels
  - Source code
    - manual
    - automatic
      - C, C++, F77/90/95 (Program Database Toolkit (PDT))
      - OpenMP (directive rewriting (Opari), POMP spec)
  - Object code
    - pre-instrumented libraries (e.g., MPI using PMPI)
    - statically-linked and dynamically-linked
  - Executable code
    - dynamic instrumentation (pre-execution) (DynInstAPI)
    - virtual machine instrumentation (e.g., Java using JVMPI)

Multi-Level Instrumentation

- Targets common measurement interface
  - TAU API
- Multiple instrumentation interfaces
  - Simultaneously active
- Information sharing between interfaces
  - Utilizes instrumentation knowledge between levels
- Selective instrumentation
  - Available at each level
  - Cross-level selection
- Targets a common performance model
- Presents a unified view of execution
  - Consistent performance events

TAU Measurement Options

- Parallel profiling
  - Function-level, block-level, statement-level
  - Supports user-defined events
  - TAU parallel profile data stored during execution
  - Hardware counter values
  - Support for multiple counters
  - Support for callgraph and callpath profiling
- Tracing
  - All profile-level events
  - Inter-process communication events
  - Trace merging and format conversion

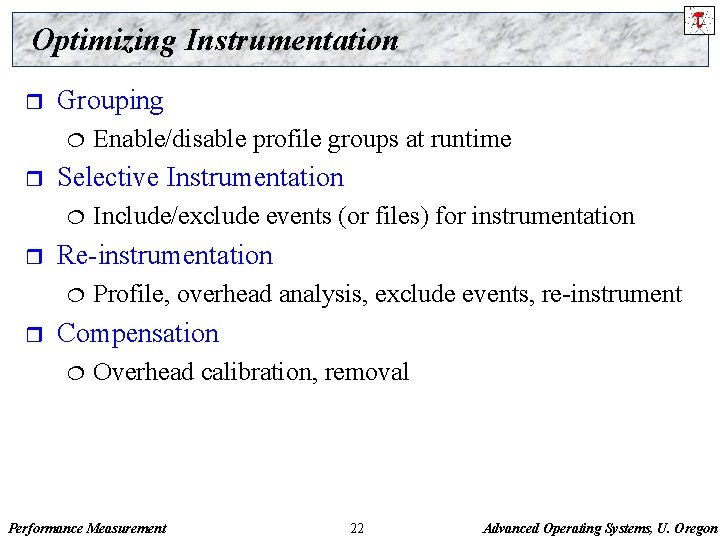

Optimizing Instrumentation

- Grouping
  - Enable/disable profile groups at runtime
- Selective Instrumentation
  - Include/exclude events (or files) for instrumentation
- Re-instrumentation
  - Profile, overhead analysis, exclude events, re-instrument
- Compensation
  - Overhead calibration, removal

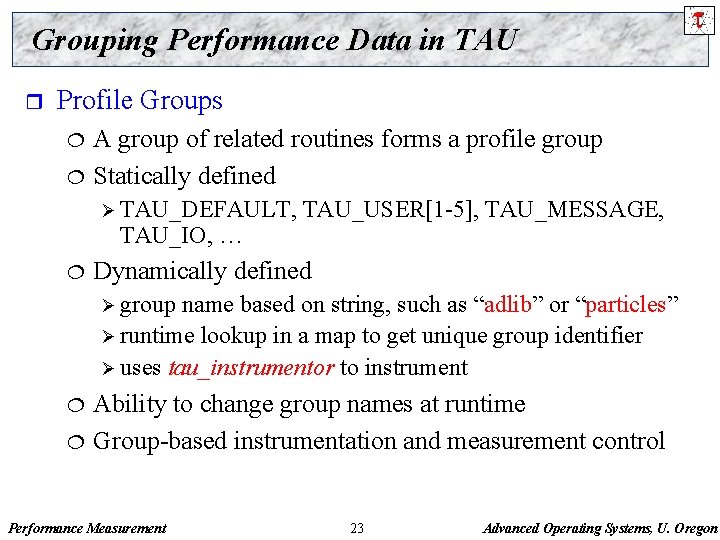

Grouping Performance Data in TAU

- Profile Groups
  - A group of related routines forms a profile group
  - Statically defined
    - TAU_DEFAULT, TAU_USER[1-5], TAU_MESSAGE, TAU_IO, …
  - Dynamically defined
    - group name based on string, such as “adlib” or “particles”
    - runtime lookup in a map to get unique group identifier
    - uses tau_instrumentor to instrument
  - Ability to change group names at runtime
  - Group-based instrumentation and measurement control
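
The dynamic-group idea (a string name resolved at runtime through a map to a unique identifier, with groups switched on and off) can be sketched as follows. This is only an illustration: the `GroupRegistry` class and the use of one bit per group are assumptions, not TAU's actual data structures.

```python
class GroupRegistry:
    """Maps group names to unique identifiers and tracks which are enabled."""

    def __init__(self):
        self._ids = {}        # group name -> bit mask (assumed representation)
        self._enabled = 0     # bitwise OR of the masks of enabled groups

    def group_id(self, name):
        # Runtime lookup in a map; an unseen name gets the next free bit.
        if name not in self._ids:
            self._ids[name] = 1 << len(self._ids)
        return self._ids[name]

    def enable(self, name):
        self._enabled |= self.group_id(name)

    def disable(self, name):
        self._enabled &= ~self.group_id(name)

    def is_enabled(self, name):
        # Measurement code would check this before starting a timer.
        return bool(self._enabled & self.group_id(name))


registry = GroupRegistry()
registry.enable("particles")
registry.enable("adlib")
registry.disable("adlib")      # switch one group off at runtime
print(registry.is_enabled("particles"), registry.is_enabled("adlib"))
```

Checking a single mask keeps the cost of a disabled timer small, which matters because the point of grouping is to reduce measurement overhead.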

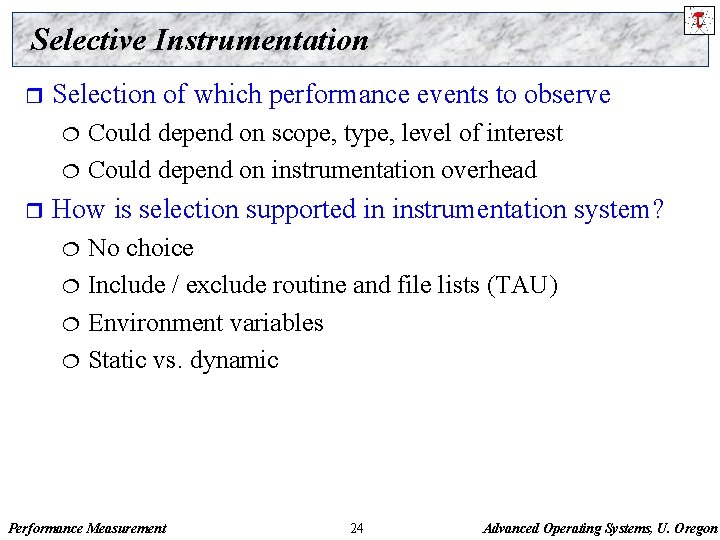

Selective Instrumentation

- Selection of which performance events to observe
  - Could depend on scope, type, level of interest
  - Could depend on instrumentation overhead
- How is selection supported in the instrumentation system?
  - No choice
  - Include / exclude routine and file lists (TAU)
  - Environment variables
  - Static vs. dynamic

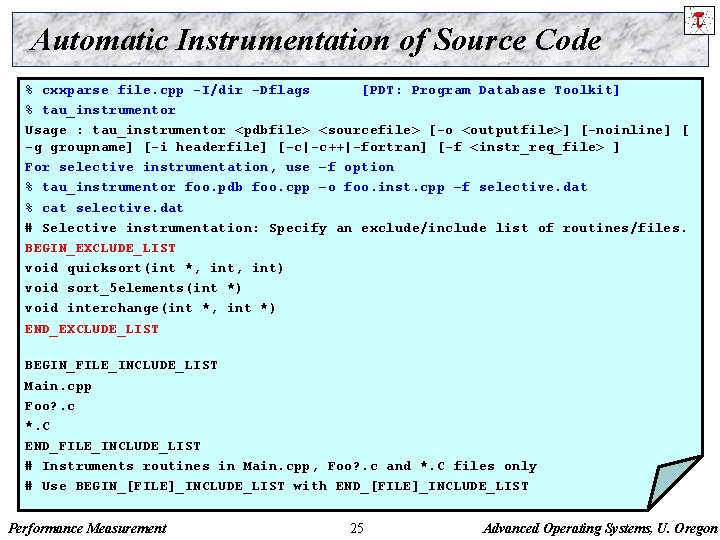

Automatic Instrumentation of Source Code

Parse the source with PDT (Program Database Toolkit), then run the instrumentor:

    % cxxparse file.cpp -I/dir -Dflags
    % tau_instrumentor
    Usage : tau_instrumentor <pdbfile> <sourcefile> [-o <outputfile>] [-noinline]
            [-g groupname] [-i headerfile] [-c|-c++|-fortran] [-f <instr_req_file>]

For selective instrumentation, use the -f option:

    % tau_instrumentor foo.pdb foo.cpp -o foo.inst.cpp -f selective.dat
    % cat selective.dat
    # Selective instrumentation: Specify an exclude/include list of routines/files.
    BEGIN_EXCLUDE_LIST
    void quicksort(int *, int)
    void sort_5elements(int *)
    void interchange(int *, int *)
    END_EXCLUDE_LIST
    BEGIN_FILE_INCLUDE_LIST
    Main.cpp
    Foo?.c
    *.C
    END_FILE_INCLUDE_LIST
    # Instruments routines in Main.cpp, Foo?.c and *.C files only
    # Use BEGIN_[FILE]_INCLUDE_LIST with END_[FILE]_INCLUDE_LIST
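
To make the semantics of the selective instrumentation file concrete, here is a simplified reader for the two list types shown above. It is a sketch, not tau_instrumentor's parser: `parse_selective` and `should_instrument` are hypothetical helpers, and treating `?` and `*` as shell-style wildcards via Python's `fnmatch` is an assumption.

```python
from fnmatch import fnmatchcase


def parse_selective(text):
    """Collect the entries of each BEGIN_x ... END_x block, keyed by x."""
    lists = {"EXCLUDE_LIST": [], "FILE_INCLUDE_LIST": []}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue                          # skip blank lines and comments
        if line.startswith("BEGIN_"):
            current = line[len("BEGIN_"):]
            lists.setdefault(current, [])
        elif line.startswith("END_"):
            current = None
        elif current is not None:
            lists[current].append(line)
    return lists


def should_instrument(routine, filename, lists):
    # A routine is skipped if it is on the exclude list, or if a file
    # include list exists and the file matches none of its patterns.
    if routine in lists["EXCLUDE_LIST"]:
        return False
    patterns = lists["FILE_INCLUDE_LIST"]
    return not patterns or any(fnmatchcase(filename, p) for p in patterns)


SELECTIVE = """\
# Selective instrumentation
BEGIN_EXCLUDE_LIST
void quicksort(int *, int)
void interchange(int *, int *)
END_EXCLUDE_LIST
BEGIN_FILE_INCLUDE_LIST
Main.cpp
Foo?.c
*.C
END_FILE_INCLUDE_LIST
"""

lists = parse_selective(SELECTIVE)
print(should_instrument("void quicksort(int *, int)", "Main.cpp", lists))  # excluded routine
print(should_instrument("int main(int, char **)", "Main.cpp", lists))      # included file
print(should_instrument("void helper()", "util.cpp", lists))               # file not listed
```

Matching routines by their full signature string mirrors how the entries appear in the file; a real tool would have to normalize whitespace and handle overloads.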

Distortion of Performance Data

- Problem: Controlling instrumentation of small routines
  - High relative measurement overhead
  - Significant intrusion and possible perturbation
- Solution: Re-instrument the application!
  - Weed out frequently executing lightweight routines
  - Feedback to instrumentation system

Re-instrumentation

Tau_reduce: rule-based overhead analysis

- Analyze the performance data to determine events with high (relative) overhead performance measurements
- Create a select list for excluding those events
- Rule grammar (used in tau_reduce tool [N. Trebon, UO])

      [GroupName:] Field Operator Number

  - GroupName indicates rule applies to events in group
  - Field is an event metric attribute (from profile statistics)
    - numcalls, numsubs, percent, usec, cumusec, count [PAPI], totalcount, stdev, usecs/call, counts/call
  - Operator is one of >, <, or =
  - Number is any number
  - Compound rules possible using & between simple rules

Example Rules

    # Exclude all events that are members of TAU_USER
    # and use less than 1000 microseconds
    TAU_USER: usec < 1000

    # Exclude all events that have less than 1000
    # microseconds and are called only once
    usec < 1000 & numcalls = 1

    # Exclude all events that have less than 1000 usecs per
    # call OR have a (total inclusive) percent less than 5
    usecs/call < 1000
    percent < 5

Scientific notation can be used. A compound rule with several conditions:

    usec>1000 & numcalls>400000 & usecs/call<30 & percent>25
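
A small evaluator shows how rules of this shape could select events for exclusion. It is a sketch of the grammar as described on the slides, not the tau_reduce implementation: the function names, the event dictionaries, and the single `group` field per event are assumptions.

```python
import operator

OPS = {"<": operator.lt, ">": operator.gt, "=": operator.eq}


def parse_rule(text):
    """Parse '[GroupName:] Field Op Number [& Field Op Number ...]'."""
    group = None
    if ":" in text:                       # optional group prefix
        group, text = (part.strip() for part in text.split(":", 1))
    clauses = []
    for simple in text.split("&"):        # '&' joins simple rules
        for symbol in OPS:
            if symbol in simple:
                field, number = simple.split(symbol, 1)
                # float() also accepts scientific notation such as 4E5.
                clauses.append((field.strip(), symbol, float(number)))
                break
        else:
            raise ValueError(f"no operator in rule clause: {simple!r}")
    return group, clauses


def matches(rule, event):
    """True if the event (a dict of metrics plus 'group') satisfies the rule."""
    group, clauses = rule
    if group is not None and event.get("group") != group:
        return False
    return all(OPS[op](event[field], number) for field, op, number in clauses)


def exclude_list(rule_lines, events):
    # Separate rule lines act as alternatives (OR): any match excludes the event.
    rules = [parse_rule(line) for line in rule_lines]
    return [e["name"] for e in events if any(matches(r, e) for r in rules)]


# Hypothetical profile data for two events.
events = [
    {"name": "tiny()", "group": "TAU_USER", "usec": 400, "numcalls": 1, "percent": 0.1},
    {"name": "solve()", "group": "TAU_DEFAULT", "usec": 9e6, "numcalls": 20, "percent": 80},
]
print(exclude_list(["TAU_USER: usec < 1000", "usec < 1e3 & numcalls = 1"], events))
```

The resulting names are what would go between BEGIN_EXCLUDE_LIST and END_EXCLUDE_LIST in a selective instrumentation file.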

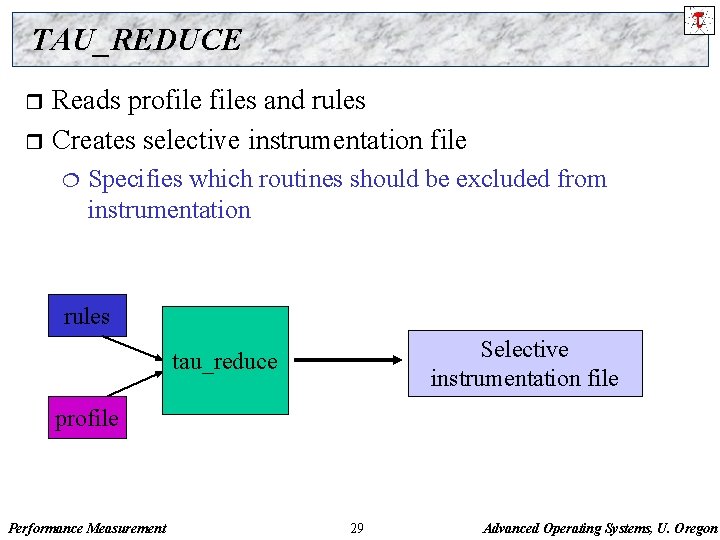

TAU_REDUCE

- Reads profiles and rules
- Creates selective instrumentation file
  - Specifies which routines should be excluded from instrumentation

Figure: profile and rules are inputs to tau_reduce, which outputs the selective instrumentation file.

Compensation of Overhead

- Runtime estimation of a single timer overhead
- Evaluation of number of timer calls along a calling path
- Compensation by subtracting timer overhead
- Recalculation of performance metrics

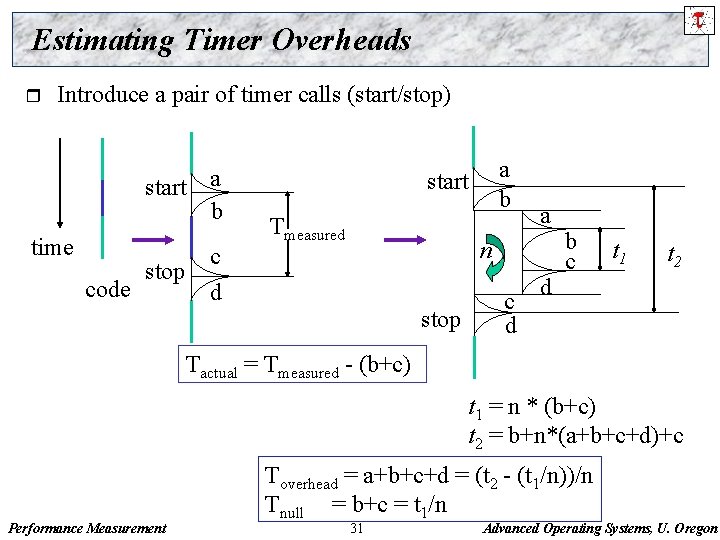

Estimating Timer Overheads

- Introduce a pair of timer calls (start/stop)

In the figure, a start call costs $a + b$ and a stop call costs $c + d$. Only $b$ and $c$ fall inside the measured interval, so

$$T_{actual} = T_{measured} - (b + c)$$

Two calibration measurements are taken, each involving $n$ start/stop pairs:

$$t_1 = n\,(b + c)$$

$$t_2 = b + n\,(a + b + c + d) + c$$

From these:

$$T_{null} = b + c = \frac{t_1}{n}$$

$$T_{overhead} = a + b + c + d = \frac{t_2 - t_1/n}{n}$$
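
The two formulas can be exercised numerically. In the sketch below the per-call costs `a`, `b`, `c`, `d` are made-up values used only to synthesize `t1` and `t2`; the function name `timer_overheads` is likewise an assumption for illustration.

```python
def timer_overheads(t1, t2, n):
    """Return (T_null, T_overhead) from the two calibration measurements."""
    t_null = t1 / n                      # b + c
    t_overhead = (t2 - t_null) / n       # a + b + c + d
    return t_null, t_overhead


# Hypothetical per-call costs in microseconds.
a, b, c, d = 0.5, 0.25, 0.25, 1.0
n = 1000
t1 = n * (b + c)                         # 500.0
t2 = b + n * (a + b + c + d) + c         # 2000.5

t_null, t_overhead = timer_overheads(t1, t2, n)
print(t_null, t_overhead)                # prints: 0.5 2.0
```

The recovered values equal `b + c` and `a + b + c + d`, which confirms that the two expressions are consistent with the definitions of `t1` and `t2`.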

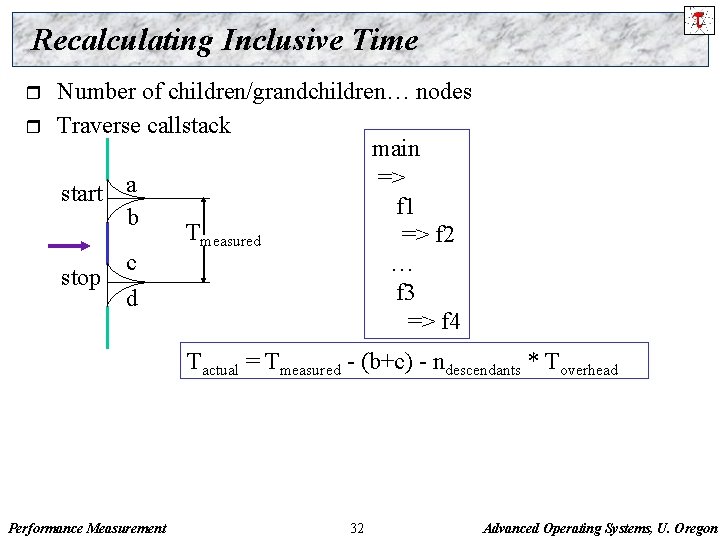

Recalculating Inclusive Time

- Number of children/grandchildren… nodes
- Traverse callstack

Figure: a call chain (main => f1 => f2 … f3 => f4) beside a timer interval with segments a, b before and after start and c, d around stop, spanning $T_{measured}$.

$$T_{actual} = T_{measured} - (b + c) - n_{descendants} \cdot T_{overhead}$$
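
The inclusive-time correction can be sketched as a descendant count followed by one subtraction. The call tree below is hypothetical, and counting one timed invocation per tree node is a simplifying assumption; a real profile would count every dynamic call.

```python
def count_descendants(tree, name):
    """Count all timed calls below `name` (children, grandchildren, ...)."""
    return sum(1 + count_descendants(tree, child) for child in tree.get(name, []))


def compensated_inclusive(t_measured, t_null, t_overhead, n_descendants):
    # T_actual = T_measured - (b + c) - n_descendants * T_overhead
    return t_measured - t_null - n_descendants * t_overhead


# Hypothetical call tree: main calls f1 and f3; f1 calls f2; f3 calls f4.
tree = {"main": ["f1", "f3"], "f1": ["f2"], "f3": ["f4"]}
n = count_descendants(tree, "main")
print(n, compensated_inclusive(1000.0, 0.5, 2.0, n))   # prints: 4 991.5
```

Each descendant contributes a full start/stop cost because both of its timer calls execute inside the parent's measured interval.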

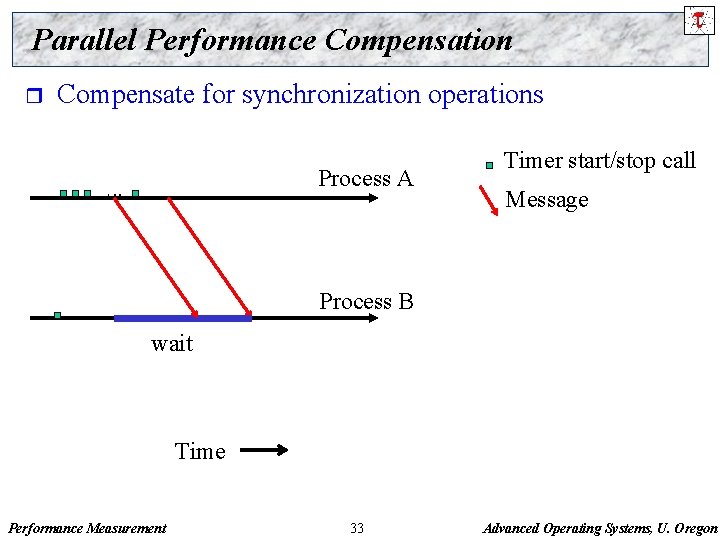

Parallel Performance Compensation

- Compensate for synchronization operations

Figure: timelines for Process A and Process B showing timer start/stop calls, a message from A to B, and B's wait time.

![Lamport’s Logical Time (Lamport 1978)](http://slidetodoc.com/presentation_image_h2/f94849c0f689914fe52b047425b5012a/image-34.jpg)

Lamport’s Logical Time [Lamport 1978]

- Logical time incremented by timer start/stop
- Accumulate timer overhead on local process
- Send local timer overhead with message

Figure: Process A accumulates overhead $t_A^{overhead}$ from its timer start/stop calls and sends it with the message to Process B, which has been waiting.

On receipt, if $t_A^{overhead} > t_B^{overhead}$:

$$t_B^{overhead} = t_A^{overhead}$$

$$t_{wait}' = t_{wait} - (t_A^{overhead} - t_B^{overhead}), \quad t_{wait}' = 0 \text{ if negative}$$
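
The receive-side rule can be sketched as below. The `Process` class is hypothetical, and two details are assumptions the slide does not spell out: the adjusted wait is computed from the overheads as they were before the receiver's value is updated, and the wait is left unchanged when the sender's overhead is not larger.

```python
class Process:
    def __init__(self, name):
        self.name = name
        self.overhead = 0.0       # accumulated timer overhead (logical time)

    def timer_call(self, t_overhead):
        # Every timer start/stop pair advances the local overhead.
        self.overhead += t_overhead

    def send(self):
        # The local overhead travels with the message.
        return self.overhead

    def receive(self, sender_overhead, t_wait):
        """Return the compensated wait time and update the local overhead."""
        if sender_overhead > self.overhead:
            # The sender was delayed more than the receiver, so part of the
            # observed wait is an artifact of the sender's instrumentation.
            adjusted = max(0.0, t_wait - (sender_overhead - self.overhead))
            self.overhead = sender_overhead
            return adjusted
        return t_wait             # assumed: no adjustment in this case


a, b = Process("A"), Process("B")
for _ in range(5):
    a.timer_call(2.0)             # A accumulates 10.0 of overhead
b.timer_call(2.0)                 # B accumulates 2.0
print(b.receive(a.send(), t_wait=30.0), b.overhead)   # prints: 22.0 10.0
```

Taking the maximum of the two overheads is the same move Lamport clocks make with timestamps, which is why the slide cites logical time.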

Compensation (contd.)

- Message passing programs
  - Adjust wait times (MPI_Recv, MPI_Wait…)
  - Adjust barrier wait times (MPI_Barrier)
    - Each process sends its timer overheads to all other tasks
    - Each task compares its overhead with max overhead
- Shared memory multi-threaded programs
  - Adjust barrier synchronization wait times
    - Each task compares its overhead to max overhead from all participating threads
  - Adjust semaphore/condition variable wait times
    - Each task compares its overhead with other thread’s overhead
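
For barriers, the comparison against the maximum overhead might look like the following sketch. The adjustment formula is an assumption that extends the pairwise message rule to a group of tasks; the slides only state that each task compares its overhead with the maximum. The function name and the sample numbers are illustrative.

```python
def compensate_barrier(overheads, waits):
    """Adjust per-task barrier wait times using the maximum overhead."""
    max_overhead = max(overheads.values())
    adjusted = {}
    for task, wait in waits.items():
        # Assumed rule: remove the part of the wait caused by the extra
        # overhead of the most-delayed task, clamping at zero.
        adjusted[task] = max(0.0, wait - (max_overhead - overheads[task]))
    # After the barrier every task carries the maximum overhead forward.
    return adjusted, {task: max_overhead for task in overheads}


# Hypothetical accumulated overheads and observed barrier waits per task.
overheads = {"t0": 4.0, "t1": 10.0, "t2": 7.0}
waits = {"t0": 9.0, "t1": 0.0, "t2": 2.0}
new_waits, new_overheads = compensate_barrier(overheads, waits)
print(new_waits)
print(new_overheads)
```

In a message passing program the maximum would come from an exchange of overheads among processes; in a threaded program it could be read from shared memory.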

Conclusions

- Complex software and parallel computing systems pose challenging performance analysis problems that require robust methodologies and tools
- Optimizing instrumentation is a key step towards balancing the volume of performance data with accuracy of measurements
- Present new research in the area of performance perturbation compensation techniques for profiling
- http://www.cs.uoregon.edu/research/paracomp/tau

Support Acknowledgements

- Department of Energy (DOE)
  - Office of Science contracts
  - University of Utah DOE ASCI Level 1 sub-contract
  - DOE ASCI Level 3 (LANL, LLNL)
- NSF National Young Investigator (NYI) award
- Research Centre Juelich
  - John von Neumann Institute for Computing
  - Dr. Bernd Mohr
- Los Alamos National Laboratory
