Performance Assessment, Rubrics, & Rating Scales

- Trends
- Definitions
- Advantages & Disadvantages
- Elements for Planning
- Technical Concerns

Deborah Moore, Office of Planning & Institutional Effectiveness, Spring 2002

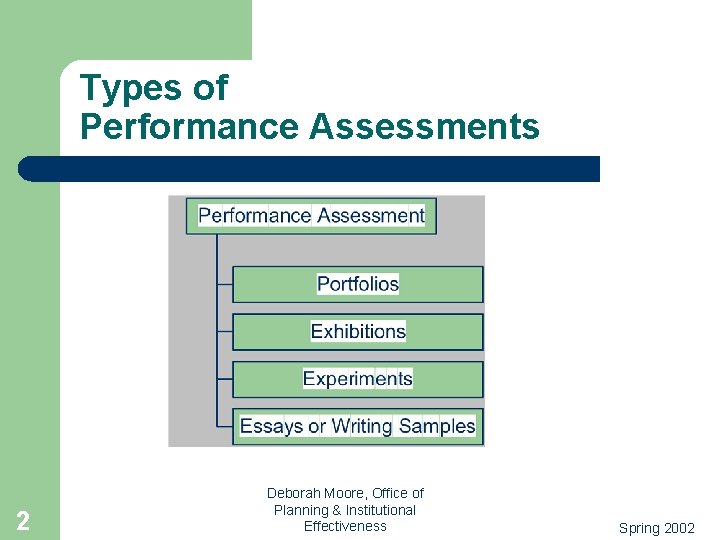

Types of Performance Assessments

Performance Assessment

- Who is currently using performance assessments in their courses or programs?
- What are some examples of these assessment tasks?

Primary Characteristics

- Constructed response
- Reviewed against criteria or a continuum (individual or program level)
- Design is driven by the assessment question or decision

Why Is Use on the Rise?

- Accountability pressures are increasing
- Educational reform has been underway
- Dissatisfaction with traditional multiple-choice (MC) tests is growing

Exercise 1

- Locate the sample rubrics in your packet.
- Working with a partner, review the different rubrics.
- Describe what you like and what you find difficult about each (BE KIND).

Advantages as Reported by Faculty

- Clarification of goals & objectives
- Narrows the gap between instruction & assessment
- May enrich insights into students' skills & abilities
- Useful for assessing complex learning

Advantages for Students

- Opportunity for detailed feedback
- Enhanced motivation for learning
- Opportunity to process information in different ways

Disadvantages

- Requires coordination: goals, administration, scoring, summary & reports
- Archival/retrieval: work must be kept accessible and maintained
- Costs: designing, scoring (training and monitoring raters), archiving

Steps in Developing Performance Assessments

1. Clarify the purpose of the assessment.
2. Clarify the performance to be assessed.
3. Design tasks.
4. Design the rating plan.
5. Pilot and revise.

Steps in Developing Rubrics

1. Identify the purpose of the rating scale.
2. Define clearly what is to be rated.
3. Decide which type you will use:
   a. Holistic or analytic
   b. Generic or task-specific
4. Draft the rating scale and have it reviewed.

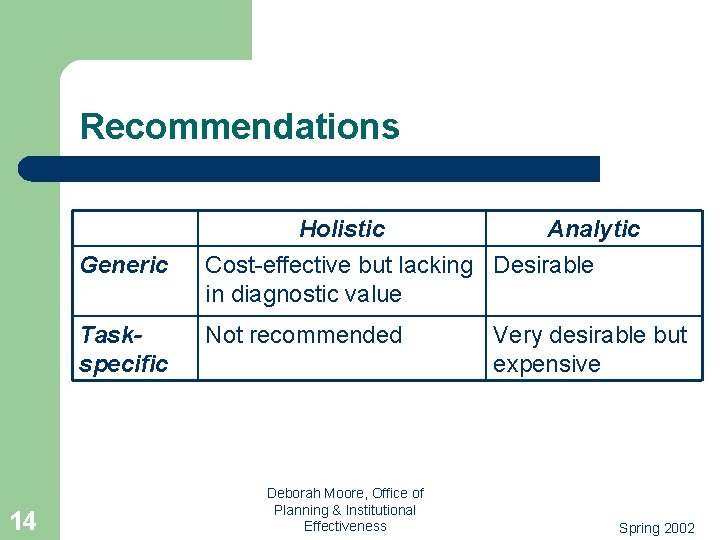

Recommendations

| | Holistic | Analytic |
|---|---|---|
| Generic | Cost-effective but lacking in diagnostic value | Desirable |
| Task-specific | Not recommended | Very desirable but expensive |

Steps in Developing Rubrics (continued)

5. Pilot your assessment tasks and review them.
6. Apply your rating scales.
7. Determine the reliability of the ratings.
8. Evaluate results and revise as needed.

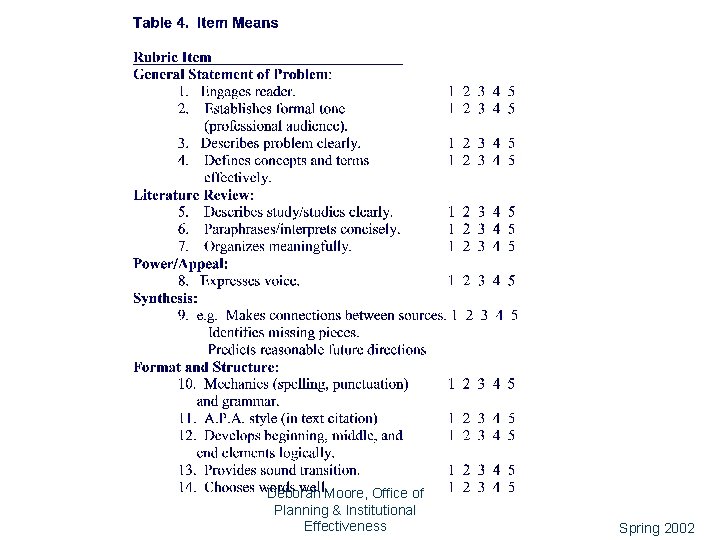


Descriptive Rating Scales

- Each point on the rating scale has a phrase, sentence, or even a paragraph describing what is being rated at that level.
- Generally recommended over graded-category rating scales.
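A descriptive scale can be represented as data: each trait maps every scale point to the words that define it, so a rating can be returned to a student together with its description. The sketch below is a minimal Python illustration of an analytic, descriptive rubric. The traits ("Thesis", "Evidence"), the 1-3 scale, the descriptor wording, and the helper names `Trait` and `feedback` are all hypothetical, not taken from the workshop packet.

```python
from dataclasses import dataclass


@dataclass
class Trait:
    """One trait of a hypothetical analytic rubric, with a descriptor for each scale point."""
    name: str
    descriptors: dict[int, str]  # scale point -> description of performance at that point

    def describe(self, score: int) -> str:
        # Reject scores that have no written descriptor on this scale.
        if score not in self.descriptors:
            raise ValueError(f"{score} is not a defined point for {self.name!r}")
        return self.descriptors[score]


# Illustrative traits and wording only; not taken from the workshop packet.
rubric = [
    Trait("Thesis", {
        1: "No identifiable claim, or the claim is unrelated to the task.",
        2: "A claim is stated but is vague or only partly supported.",
        3: "A clear, arguable claim organizes the whole paper.",
    }),
    Trait("Evidence", {
        1: "Little or no evidence; assertions stand alone.",
        2: "Some relevant evidence, unevenly connected to the claim.",
        3: "Relevant, well-chosen evidence explicitly tied to the claim.",
    }),
]


def feedback(ratings: dict[str, int]) -> list[str]:
    """Turn one paper's trait ratings into descriptor-based feedback lines."""
    return [f"{t.name} ({ratings[t.name]}): {t.describe(ratings[t.name])}" for t in rubric]


for line in feedback({"Thesis": 2, "Evidence": 3}):
    print(line)
```

Storing a description with every scale point is what separates this from a graded-category scale, which typically attaches only a label such as "poor" or "good" to each point.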

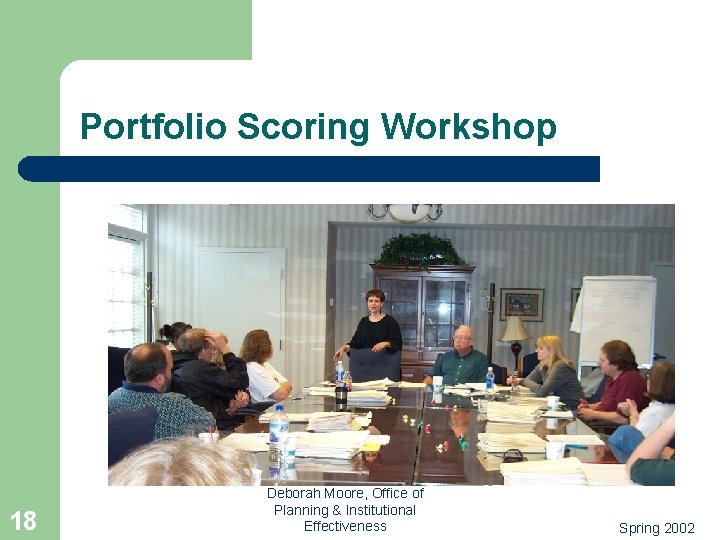

Portfolio Scoring Workshop

Subject Matter Expertise

Experts such as Dr. Edward White join faculty in refining scoring rubrics and monitoring the scoring process.

Exercise 2

- Locate the University of South Florida example.
- Identify the various rating strategies involved in using this form.
- Identify the strengths and weaknesses of this form.

Common Strategy Used

- The instructor assigns an individual grade for an assignment within a course.
- Assignments are forwarded to a program-level assessment team.
- The team randomly selects a set of assignments and rates them with a different rating scheme.
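The program-level draw is easier to document and defend if it is reproducible. A minimal Python sketch, assuming assignments are identified by simple IDs and the team fixes a random seed; the function name `select_for_program_review`, the ID format, and the sample size of 10 are hypothetical.

```python
import random


def select_for_program_review(assignment_ids, sample_size, seed=None):
    """Randomly pick forwarded assignments for program-level rating.

    A fixed seed makes the draw reproducible, so the team can record
    exactly how the sample was chosen.
    """
    if sample_size > len(assignment_ids):
        raise ValueError("sample_size exceeds the number of forwarded assignments")
    rng = random.Random(seed)  # private generator; does not disturb global random state
    return sorted(rng.sample(assignment_ids, sample_size))


# Hypothetical IDs for 40 forwarded assignments; the team rates 10 of them.
forwarded = [f"A{n:03d}" for n in range(1, 41)]
print(select_for_program_review(forwarded, 10, seed=2002))
```

Sampling without replacement (`sample`) guarantees no assignment is rated twice in the same draw.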

Exercise 3

- Locate the Rose-Hulman criteria.
- Select one of the criteria.
- In 1-2 sentences, describe an assessment task or scenario for that criterion.
- Develop rating scales for the criterion:
  - List traits.
  - Describe distinctions along the continuum of ratings.

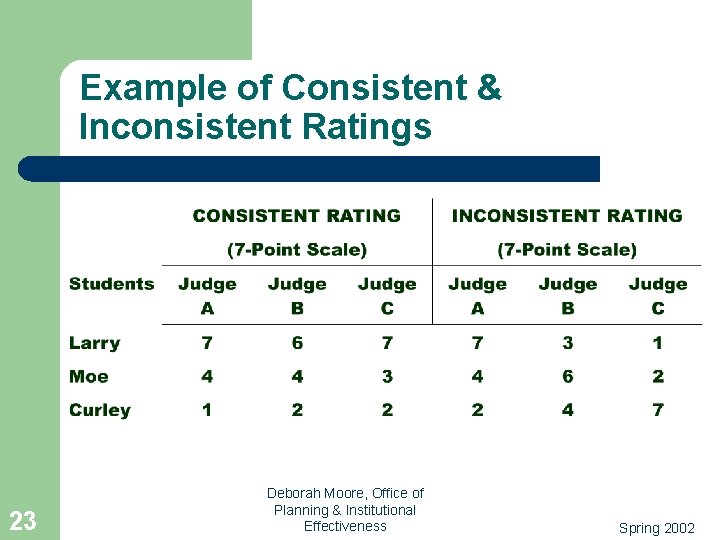

Example of Consistent & Inconsistent Ratings

Calculating Rater Agreement (3 Raters for 2 Papers)
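The slide's worked numbers are not reproduced in this text. One common summary for a small design like 3 raters and 2 papers is pooled pairwise percent agreement: for each paper, compare every pair of raters and count the pairs whose scores match exactly (or fall within one point). The Python sketch below assumes that definition, a 1-6 scale, and made-up scores; the original slide may have used a different calculation. Note that simple percent agreement does not correct for agreement expected by chance.

```python
from itertools import combinations


def pairwise_agreement(scores, tolerance=0):
    """Share of rater pairs, pooled over papers, whose scores differ by at most `tolerance`.

    scores: one list per paper, holding that paper's rating from each rater.
    tolerance=0 gives exact agreement; tolerance=1 counts adjacent scores as agreeing.
    """
    agree = total = 0
    for ratings in scores:
        for a, b in combinations(ratings, 2):  # every pair of raters on this paper
            total += 1
            if abs(a - b) <= tolerance:
                agree += 1
    return agree / total


# Hypothetical ratings on a 1-6 scale: 2 papers, 3 raters each.
papers = [
    [4, 4, 5],  # paper A: raters fairly consistent
    [2, 5, 3],  # paper B: raters inconsistent
]
print(pairwise_agreement(papers))               # exact agreement
print(pairwise_agreement(papers, tolerance=1))  # exact-plus-adjacent agreement
```

With these made-up scores, 1 of the 6 rater pairs agrees exactly and 4 of the 6 agree within one point, which mirrors the consistent (paper A) versus inconsistent (paper B) contrast.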

Rater Selection and Training

- Select raters carefully.
- Train raters on the purpose of the assessment and on using the rubrics appropriately.
- Study rating patterns, and do not retain raters who rate inconsistently.
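One hedged way to "study rating patterns" is to correlate each rater's scores with the average of the other raters across the same papers: a rater who orders papers very differently from colleagues shows a low or negative value. The Python sketch below assumes a fully crossed design (every rater scores every paper) and uses hypothetical scores; the helper names are illustrative, and what counts as "too low" is a local decision the slide does not specify.

```python
import math
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation of two equal-length score lists (nan if either is constant)."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return math.nan
    return sxy / math.sqrt(sxx * syy)


def rater_consistency(scores):
    """Correlate each rater with the average of the other raters across papers.

    scores[p][r] is rater r's score on paper p; every rater scores every paper.
    A low or negative value flags a rater whose ordering of papers departs from the group's.
    """
    n_raters = len(scores[0])
    result = {}
    for r in range(n_raters):
        own = [paper[r] for paper in scores]
        others = [mean(paper[:r] + paper[r + 1:]) for paper in scores]
        result[r] = pearson(own, others)
    return result


# Hypothetical scores: 5 papers x 3 raters; rater 2 does not track the others.
scores = [
    [5, 5, 2],
    [4, 4, 5],
    [3, 3, 3],
    [2, 3, 5],
    [1, 2, 4],
]
for rater, r in rater_consistency(scores).items():
    print(f"rater {rater}: r = {r:.2f}")
```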

Some Rating Problems

- Leniency/severity
- Response set
- Central tendency
- Idiosyncrasy
- Lack of interest
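Two of these problems leave simple numeric traces. Leniency or severity shows up as a rater mean above or below the group mean; central tendency often shows up as a rater whose scores are bunched together (a small spread) compared with everyone's scores. The Python sketch below computes both indicators under the assumption of a fully crossed design with hypothetical scores; `rater_profile` and its output keys are illustrative names, and the other problems listed (response set, idiosyncrasy, lack of interest) need other checks.

```python
from statistics import mean, pstdev


def rater_profile(scores):
    """Summarize each rater's scores against the whole group's.

    scores[p][r] is rater r's score on paper p (fully crossed design assumed).
    - bias: rater mean minus grand mean; positive suggests leniency, negative severity.
    - spread_ratio: rater SD divided by the SD of all scores; well below 1 can
      signal central tendency.
    """
    all_scores = [s for paper in scores for s in paper]
    grand, pooled_sd = mean(all_scores), pstdev(all_scores)
    profile = {}
    for r in range(len(scores[0])):
        own = [paper[r] for paper in scores]
        profile[r] = {
            "bias": mean(own) - grand,
            "spread_ratio": pstdev(own) / pooled_sd,
        }
    return profile


# Hypothetical 1-6 scores: 4 papers x 3 raters.
scores = [
    [2, 4, 3],
    [3, 5, 3],
    [5, 6, 4],
    [6, 6, 4],
]
for rater, stats in rater_profile(scores).items():
    print(f"rater {rater}: bias {stats['bias']:+.2f}, spread ratio {stats['spread_ratio']:.2f}")
```

In this made-up data, rater 1 scores one point above the grand mean (lenient), while rater 2 both scores low and uses the narrowest range of the scale.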

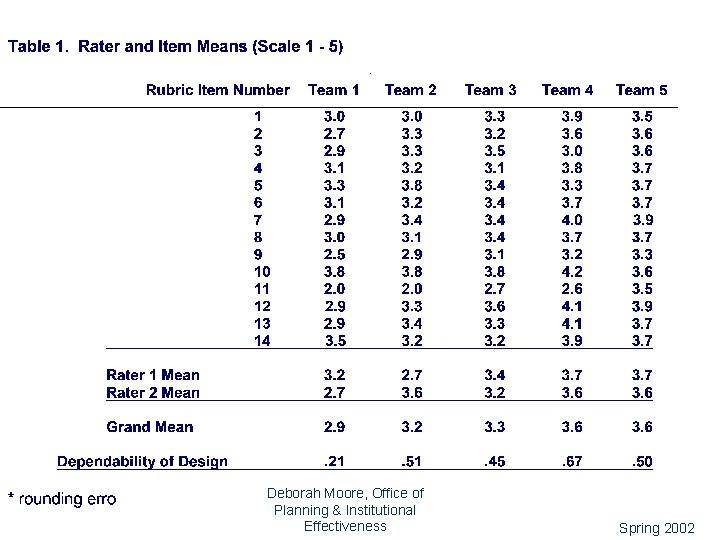

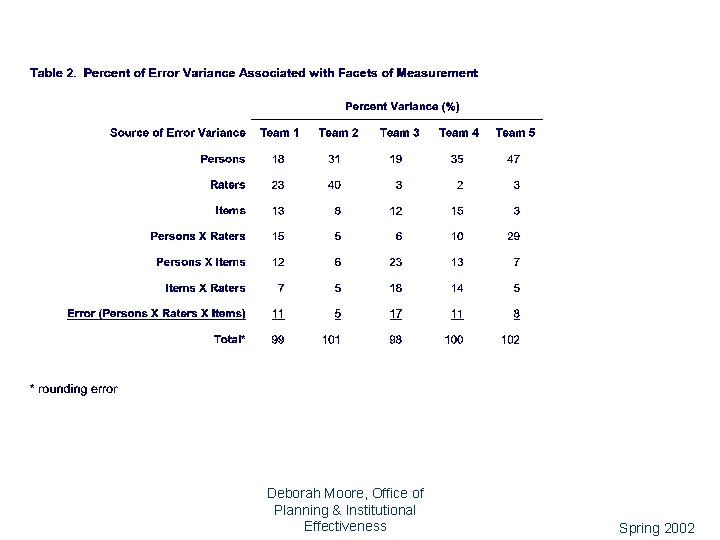

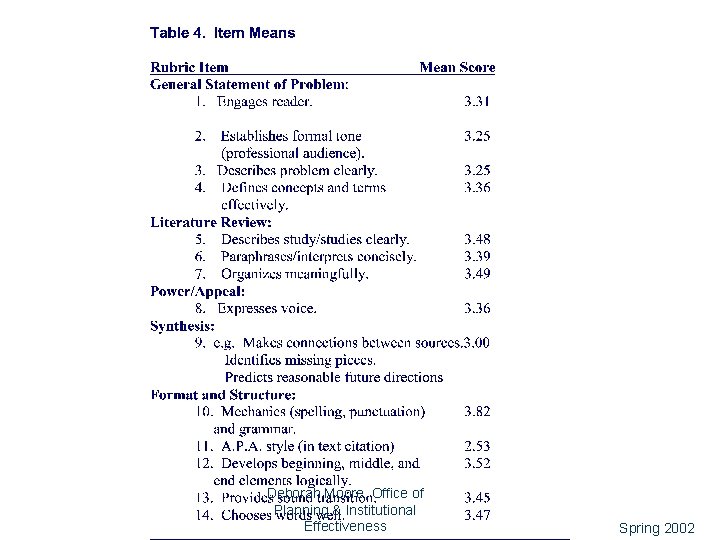

Exercise 4

- Locate the Generalizability Study tables (1-4).
- In reviewing Table 1, describe the plan for rating the performance. What kinds of rating problems do you see?
- In Table 2, what seems to be the biggest rating problem?
- In Table 3, which seems to have more impact: additional items or additional raters?
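The Table 3 question is the kind a decision (D) study answers: given variance components from a G study, it projects the phi (dependability) coefficient for different numbers of items and raters. The Python sketch below assumes a fully crossed persons × items × raters design with both facets random, and uses made-up variance components, not the values in the workshop tables. Each non-person component is divided by the number of items and/or raters it is averaged over.

```python
def phi_pxixr(vc, n_items, n_raters):
    """Phi coefficient for a fully crossed persons x items x raters D study.

    vc holds G-study variance components keyed by effect:
    'p', 'i', 'r', 'pi', 'pr', 'ir', 'pir,e'.
    Absolute error variance averages every non-person component over the
    numbers of items and raters that it involves.
    """
    abs_error = (
        vc["i"] / n_items
        + vc["r"] / n_raters
        + vc["pi"] / n_items
        + vc["pr"] / n_raters
        + vc["ir"] / (n_items * n_raters)
        + vc["pir,e"] / (n_items * n_raters)
    )
    return vc["p"] / (vc["p"] + abs_error)


# Hypothetical variance components (NOT from the workshop tables):
# rater-related components are large relative to item-related ones.
components = {"p": 0.30, "i": 0.02, "r": 0.10, "pi": 0.05, "pr": 0.20, "ir": 0.01, "pir,e": 0.25}

print(f"2 items, 2 raters: {phi_pxixr(components, 2, 2):.2f}")
print(f"4 items, 2 raters: {phi_pxixr(components, 4, 2):.2f}")
print(f"2 items, 4 raters: {phi_pxixr(components, 2, 4):.2f}")
```

Under these made-up numbers, doubling raters raises phi more than doubling items, because the rater and person-by-rater components dominate the error. With real components the answer can go the other way.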

Generalizability Study (GENOVA)

- G study: identifies the sources of error (facets) in the overall design and estimates the error variance for each facet of the measurement design.
- D study: estimates the reliability of ratings under the current design and projects the outcomes of alternative designs.
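The slide describes what a G study and a D study produce but not how. As a minimal sketch, assuming the simplest fully crossed design (every paper scored once by every rater, with raters as the only facet), the variance components can be estimated from ANOVA mean squares and then fed into a D-study projection of phi. This is a textbook expected-mean-squares calculation written for illustration, not GENOVA's own code; it ignores the item facet that the workshop tables include, and the function names and scores are hypothetical.

```python
from statistics import mean


def g_study_p_x_r(scores):
    """Estimate variance components for a crossed persons x raters design.

    scores[p][r]: rater r's score for paper p, one score per cell.
    Returns components for persons ('p'), raters ('r'), and the residual
    ('pr,e', the person-by-rater interaction confounded with error).
    Negative estimates are set to zero, a common convention.
    """
    n_p, n_r = len(scores), len(scores[0])
    grand = mean(s for row in scores for s in row)
    p_means = [mean(row) for row in scores]
    r_means = [mean(row[r] for row in scores) for r in range(n_r)]

    ms_p = n_r * sum((m - grand) ** 2 for m in p_means) / (n_p - 1)
    ms_r = n_p * sum((m - grand) ** 2 for m in r_means) / (n_r - 1)
    ss_res = sum(
        (scores[p][r] - p_means[p] - r_means[r] + grand) ** 2
        for p in range(n_p) for r in range(n_r)
    )
    ms_res = ss_res / ((n_p - 1) * (n_r - 1))

    return {
        "p": max((ms_p - ms_res) / n_r, 0.0),
        "r": max((ms_r - ms_res) / n_p, 0.0),
        "pr,e": ms_res,
    }


def d_study_phi(vc, n_raters):
    """D study: projected phi if each paper were scored by n_raters raters."""
    abs_error = (vc["r"] + vc["pr,e"]) / n_raters
    return vc["p"] / (vc["p"] + abs_error)


# Hypothetical scores: 4 papers, each rated by the same 3 raters.
scores = [[5, 4, 5], [3, 3, 4], [2, 3, 2], [4, 5, 5]]
vc = g_study_p_x_r(scores)
for n in (1, 3, 6):
    print(n, round(d_study_phi(vc, n), 2))
```

Phi counts rater leniency/severity (`vc["r"]`) as error because it reflects absolute decisions, such as whether a paper meets a standard, rather than only the ranking of papers.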


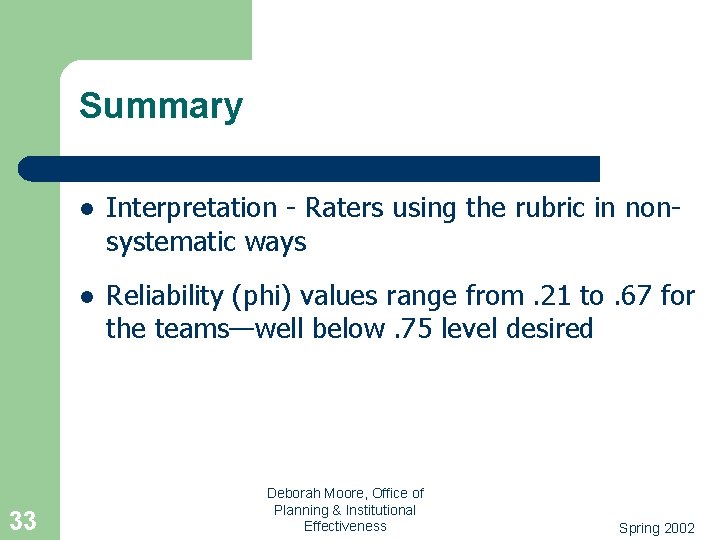

Summary

- Interpretation: raters are using the rubric in nonsystematic ways.
- Reliability (phi) values range from .21 to .67 for the teams, well below the desired .75 level.
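A rough sense of how far these teams are from .75 comes from a D-study projection. Assuming a single-facet design in which raters are the only source of error, phi after multiplying the number of raters by k follows the Spearman-Brown form, and that form can be solved for the k needed to reach a target. The Python sketch below applies this to the two endpoint values; the single-facet assumption is a simplification, since the workshop tables also involve items, and the function names are hypothetical.

```python
def projected_phi(phi, k):
    """Phi after multiplying the number of raters by k (single rater facet assumed)."""
    return k * phi / (1 + (k - 1) * phi)


def raters_multiplier_needed(phi, target):
    """Factor k by which the number of raters must grow for phi to reach target."""
    return target * (1 - phi) / (phi * (1 - target))


for phi in (0.21, 0.67):
    k = raters_multiplier_needed(phi, 0.75)
    print(f"phi={phi:.2f}: about {k:.1f}x as many raters to reach 0.75")
```

Under this assumption the .21 team would need roughly eleven times as many raters and the .67 team about one and a half times as many. In a design with an item facet, adding raters alone does not shrink every error term, so these figures are only indicative; improving rater training and rubric use may be the cheaper route.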

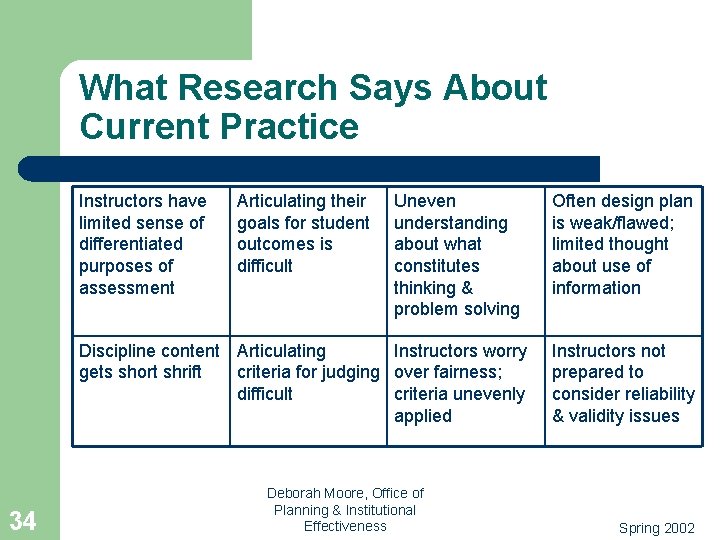

What Research Says About Current Practice

- Instructors have a limited sense of the differentiated purposes of assessment.
- Articulating their goals for student outcomes is difficult.
- Understanding of what constitutes thinking & problem solving is uneven.
- Discipline content gets short shrift.
- Articulating criteria for judging is difficult.
- Instructors worry over fairness; criteria are unevenly applied.
- The design plan is often weak or flawed, with limited thought about how the information will be used.
- Instructors are not prepared to consider reliability & validity issues.

Summary

- Use is on the rise
- Costly
- Psychometrically challenging

Thank you for your attention.

- Deborah Moore, Assessment Specialist
- Office of Planning & Institutional Effectiveness, 101 B Alumni Gym
- dlmoor2@email.uky.edu
- 859/257-7086
- http://www.uky.edu/Lex.Campus/; http://www.uky.edu/OPIE/
