Perception for Robot Detection 2011/12/08

Robot Detection
• Robot Detection
• Better Localization and Tracking
• No Collisions with Others

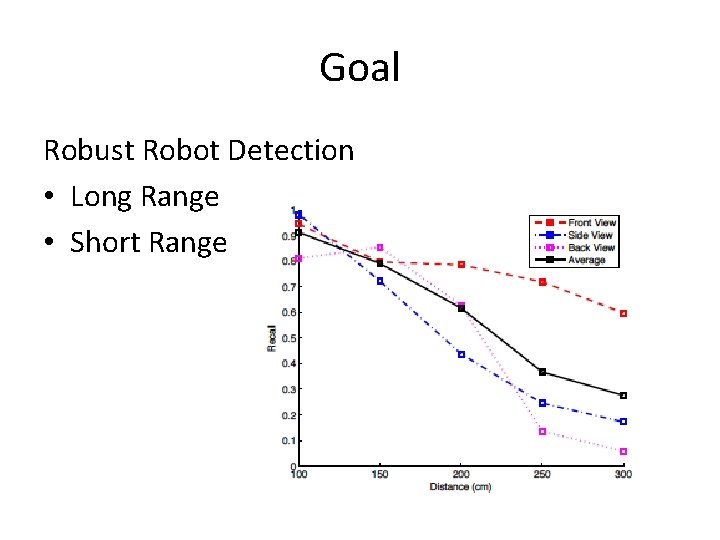

Goal
Robust Robot Detection
• Long Range
• Short Range

Long Range
Current Method: Heuristic Color-Based
Non-line white segments are clustered. An extracted cluster is classified as a Nao robot if it satisfies all three of the following criteria:
• The cluster contains more than 3 segments.
• Its width-to-height ratio is larger than 0.2.
• Its highest point lies within 10 pixels of the field border line, because an observed robot should intersect the field border in the camera view when both the observing and the observed robot are standing on the field.
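The three checks above can be sketched as a single predicate. This is only an illustration: the `WhiteCluster` struct, its field names, and the `fieldBorderY` parameter are hypothetical and are not taken from the B-Human code release, and "within 10 pixels" is assumed to be an inclusive bound.

```cpp
#include <cstdlib>

// Hypothetical summary of one cluster of non-line white segments
// (image coordinates, y grows downward).
struct WhiteCluster {
    int segmentCount;  // number of white segments in the cluster
    int width;         // bounding-box width in pixels
    int height;        // bounding-box height in pixels
    int topY;          // y coordinate of the highest point of the cluster
};

// Returns true if the cluster passes all three heuristic checks.
// fieldBorderY is the y coordinate of the field border line at the
// cluster's position (assumed to be provided by another module).
bool looksLikeNao(const WhiteCluster& c, int fieldBorderY) {
    if (c.segmentCount <= 3) return false;           // needs more than 3 segments
    if (c.height <= 0) return false;                  // guard against division by zero
    const double ratio = static_cast<double>(c.width) / c.height;
    if (ratio <= 0.2) return false;                   // width-to-height ratio above 0.2
    return std::abs(c.topY - fieldBorderY) <= 10;     // top close to the field border
}
```

For example, a cluster with 5 segments, a 30 x 90 bounding box, and its top 5 pixels away from the border passes, while the same cluster 20 pixels away from the border is rejected.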

Long Range
Improvement: Feature-Based
Considerations
• Scale Invariant
• Affine Invariant
• Complexity
Possible Solutions
1. SIFT (Scale-Invariant Feature Transform)
2. SURF (Speeded-Up Robust Features)
3. MSER (Maximally Stable Extremal Regions)

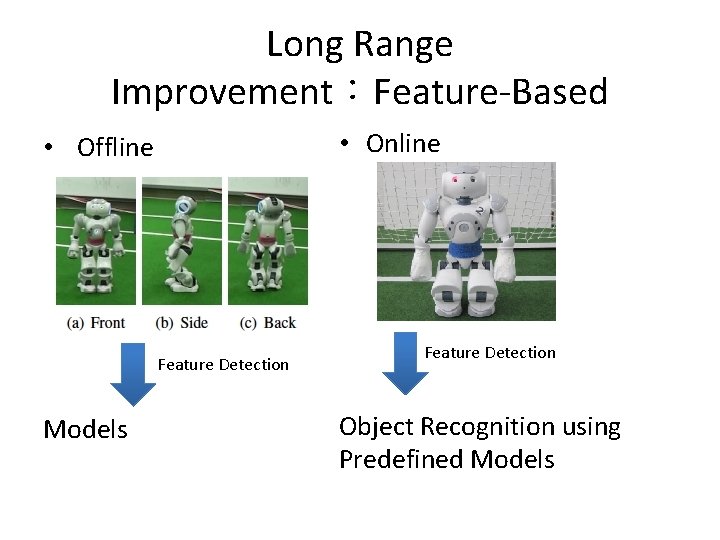

Long Range
Improvement: Feature-Based
• Offline: Feature Detection → Models
• Online: Feature Detection → Object Recognition using Predefined Models

Short Range
Sonar and Vision
• Two-Stage
  1. Sonar
  2. Active Vision
    – Feet Detection (sufficiently large white spot)
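A minimal sketch of the two-stage idea, assuming the sonar reading and the largest white blob area are already available from other modules. All names and both thresholds are hypothetical; the slides do not give concrete values.

```cpp
// Hypothetical inputs for one perception cycle.
struct ShortRangeInput {
    float sonarDistanceM;      // nearest sonar echo, in meters
    int largestWhiteSpotArea;  // area in pixels of the largest white blob seen by the camera
};

// Hypothetical tuning parameters; real values would need calibration.
struct ShortRangeParams {
    float sonarTriggerM;   // sonar readings at or below this distance trigger stage 2
    int minFeetSpotArea;   // "sufficiently large" white spot, in pixels
};

enum class ShortRangeResult { Nothing, ObstacleOnly, RobotFeet };

ShortRangeResult detectShortRange(const ShortRangeInput& in, const ShortRangeParams& p) {
    // Stage 1: the sonar decides whether anything is close at all.
    if (in.sonarDistanceM > p.sonarTriggerM) return ShortRangeResult::Nothing;
    // Stage 2: vision confirms robot feet via a sufficiently large white spot.
    if (in.largestWhiteSpotArea >= p.minFeetSpotArea) return ShortRangeResult::RobotFeet;
    return ShortRangeResult::ObstacleOnly;
}
```

Running the cheap sonar check first means the vision stage only has to look for feet when something is already known to be nearby.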

New NAO
Possible Improvement
• Using two cameras
  – One for ball, the other for localization
  – One for feet detection, the other for localization
  – ……
• Not Downsampling
  – 320 x 240 -> 640 x 480

References
• bhuman11_coderelease
• SIFT (http://www.cs.ubc.ca/~lowe/keypoints/)
• SURF paper: Speeded-Up Robust Features (SURF)
• MSER tracking paper: Efficient Maximally Stable Extremal Region (MSER) Tracking

Perception for Robot Detection 2011/12/22

COLOR-BASED SUCCESSFUL CASES

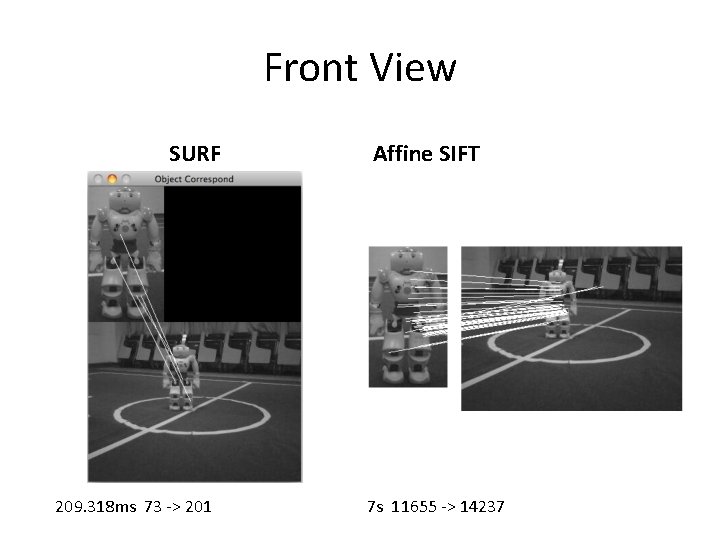

Front View
• SURF: 209.318 ms, 73 -> 201
• Affine SIFT (ASIFT): 7 s, 11655 -> 14237

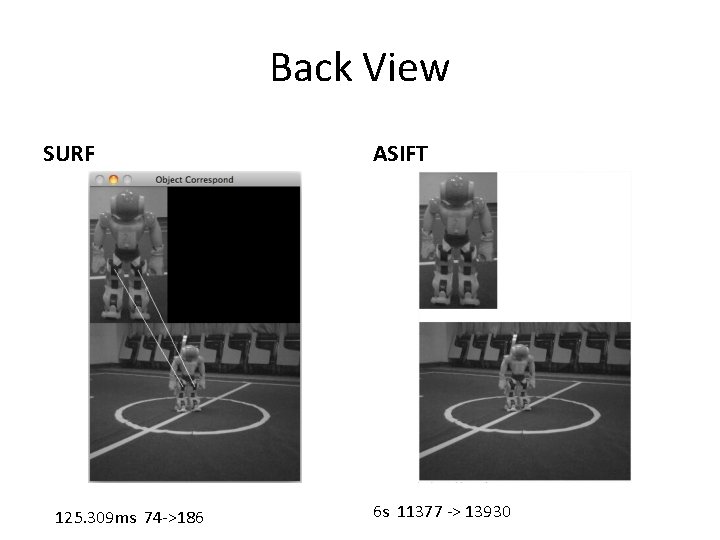

Back View
• SURF: 125.309 ms, 74 -> 186
• ASIFT: 6 s, 11377 -> 13930

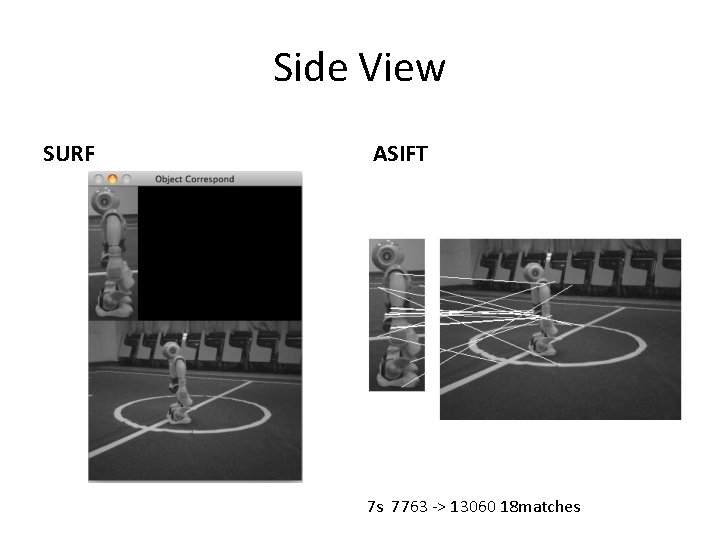

Side View
• SURF
• ASIFT: 7 s, 7763 -> 13060, 18 matches

COLOR-BASED FAILED CASES

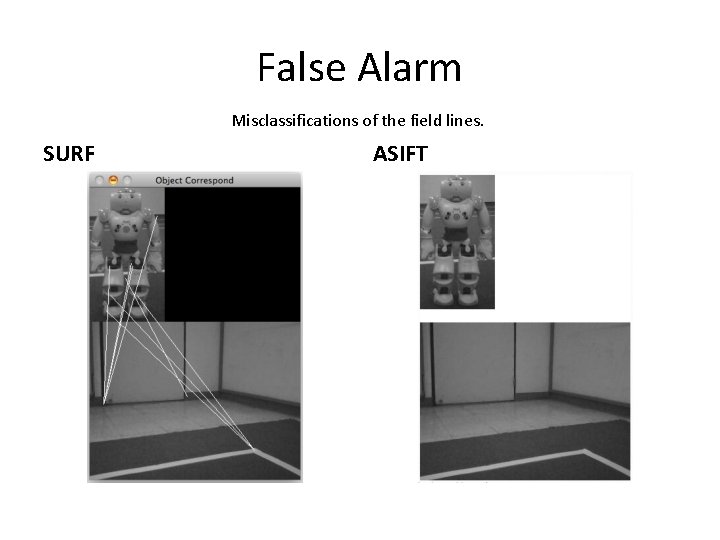

False Alarm
Field lines are misclassified as robots.
SURF ASIFT

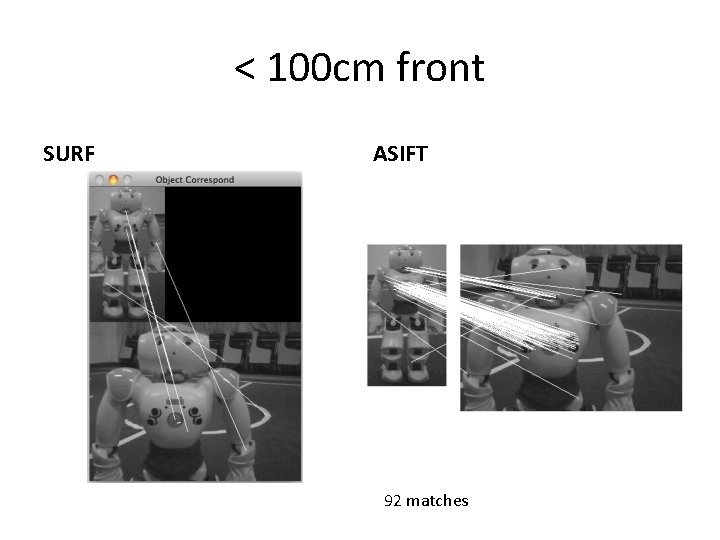

< 100 cm front SURF ASIFT 92 matches

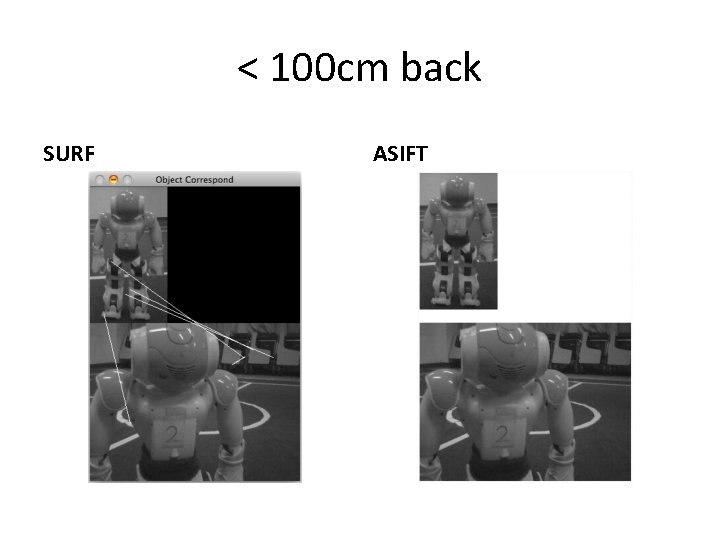

< 100 cm back SURF ASIFT

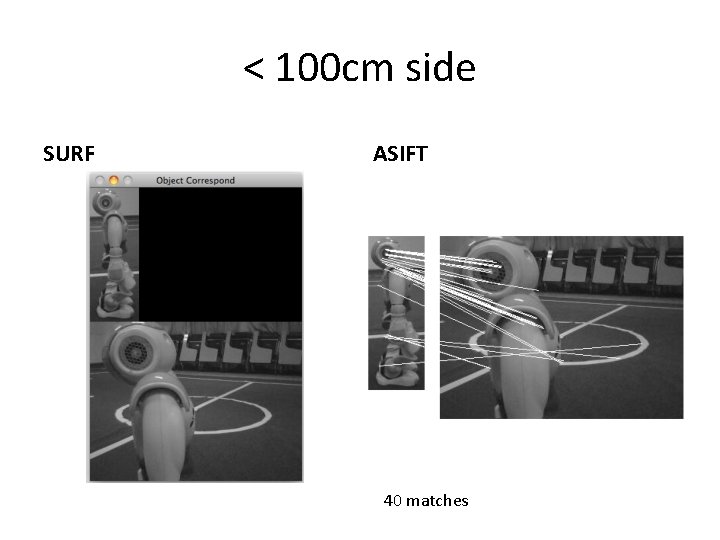

< 100 cm side SURF ASIFT 40 matches

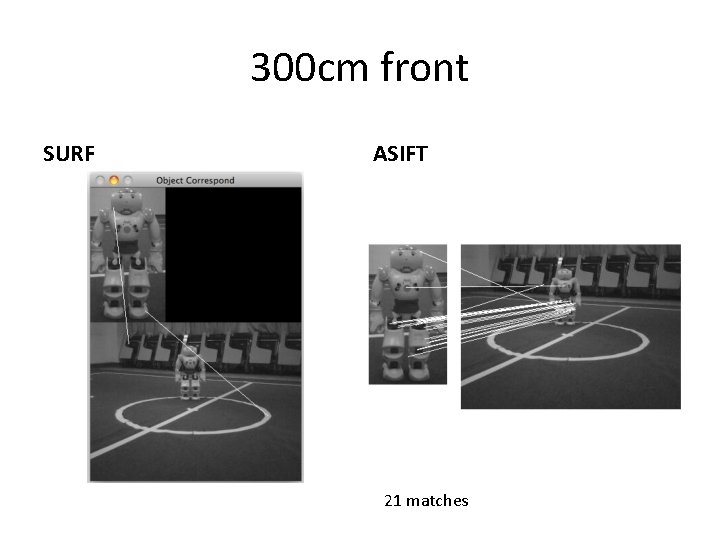

300 cm front SURF ASIFT 21 matches

300 cm side ASIFT

350 cm front ASIFT

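Conclusion
• Detection performance is not significantly better than with the color-based method
• Processing time is an issue (roughly 0.1 to 0.2 s for SURF and 6 to 7 s for ASIFT in the cases above)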

Perception for Robot Detection 2012/1/5

ROBOT DETECTION USING ADABOOST WITH SIFT

Multi-Class Training Stage: Using AdaBoost
• Classes = (different viewpoints of Nao robots) × (different scales of Nao robots) × (different illuminations)
• Input: for each class, training images (I_1, l_1), …, (I_n, l_n), where l_i = 0 or 1 for negative and positive examples, respectively.
• Output: a strong classifier (a set of weak classifiers) for each class.
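To make "strong classifier = set of weak classifiers" concrete, here is a sketch of how one trained strong classifier might be evaluated on the descriptors of an image. It assumes each weak classifier is a single reference descriptor with a distance threshold and a vote weight, and that the strong classifier accepts when the weighted vote reaches half of the total weight. Both are common AdaBoost conventions adopted here for illustration, not details confirmed by the slides, and every type and function name is hypothetical.

```cpp
#include <cstddef>
#include <vector>

using Descriptor = std::vector<float>;  // a real SIFT descriptor has 128 entries

// Hypothetical weak classifier: a reference descriptor, a distance
// threshold, and the weight (alpha) assigned to it during boosting.
struct WeakClassifier {
    Descriptor reference;
    float maxSquaredDistance;
    float alpha;
};

float squaredDistance(const Descriptor& a, const Descriptor& b) {
    float sum = 0.0f;
    for (std::size_t i = 0; i < a.size() && i < b.size(); ++i) {
        const float d = a[i] - b[i];
        sum += d * d;
    }
    return sum;
}

// A weak classifier returns 1 if any image descriptor is close enough
// to its reference descriptor, and 0 otherwise.
int weakResponse(const WeakClassifier& w, const std::vector<Descriptor>& image) {
    for (const Descriptor& d : image) {
        if (squaredDistance(w.reference, d) <= w.maxSquaredDistance) return 1;
    }
    return 0;
}

// Strong classifier: weighted vote of the weak classifiers, compared
// against half of the total weight. Returns 1 (positive) or 0 (negative).
int strongResponse(const std::vector<WeakClassifier>& weak,
                   const std::vector<Descriptor>& image) {
    float vote = 0.0f;
    float total = 0.0f;
    for (const WeakClassifier& w : weak) {
        vote += w.alpha * weakResponse(w, image);
        total += w.alpha;
    }
    return (total > 0.0f && vote >= 0.5f * total) ? 1 : 0;
}
```

With one such strong classifier per class, an image region would be tested against each class in turn; the outputs use the same 0/1 convention as the training labels l_i.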

Issues In Training Stage
• Number of Classes: depends on the limits of SIFT's invariance (the range of viewing angles, the range of scales, and the degree of illumination change that the features tolerate).

Detection Stage
• Input: image from the Nao camera
• Output:
  1. Number of robots in the image
  2. The class each robot belongs to => rough distance and facing direction of the detected robot
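Because each class is a combination of viewpoint, scale, and illumination, a detected class can be mapped back to a rough facing direction (viewpoint) and distance (scale). The sketch below assumes classes are numbered view-major, then by scale, then by illumination. That numbering, and all names, are hypothetical.

```cpp
// Hypothetical description of how many bins each factor has.
struct ClassLayout {
    int views;          // number of viewpoint bins
    int scales;         // number of scale bins
    int illuminations;  // number of illumination bins
};

// Decoded factors of one class index.
struct ClassLabel {
    int view;          // viewpoint bin -> rough facing direction
    int scale;         // scale bin -> rough distance
    int illumination;  // illumination bin
};

int totalClasses(const ClassLayout& l) {
    return l.views * l.scales * l.illuminations;
}

// Decodes an index in [0, totalClasses) under the assumed numbering:
// index = (view * scales + scale) * illuminations + illumination.
ClassLabel decodeClass(const ClassLayout& l, int index) {
    ClassLabel c;
    c.illumination = index % l.illuminations;
    c.scale = (index / l.illuminations) % l.scales;
    c.view = index / (l.illuminations * l.scales);
    return c;
}
```

The total class count grows multiplicatively with the number of bins per factor, which is why the number of classes is listed as a training issue.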

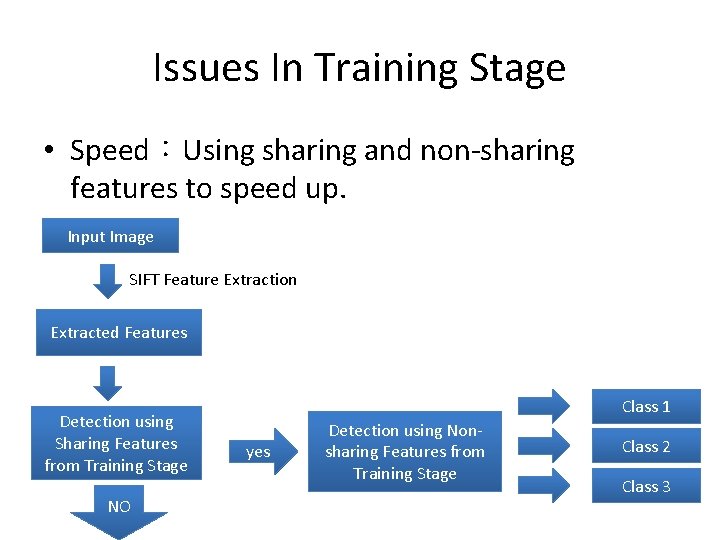

Issues In Training Stage
• Speed: use shared and non-shared features to speed up detection.
Flow: Input Image → SIFT Feature Extraction → Extracted Features → Detection using Shared Features from Training Stage → (no: stop; yes: Detection using Non-shared Features from Training Stage → Class 1 / Class 2 / Class 3)
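The flow above amounts to a two-stage cascade: a cheap test on features shared by all classes rejects most inputs early, and class-specific tests run only on the survivors. A sketch under that reading, with hypothetical type and function names and detectors passed in as callables:

```cpp
#include <cstddef>
#include <functional>
#include <vector>

using Features = std::vector<float>;  // placeholder for extracted SIFT features
using Detector = std::function<bool(const Features&)>;

// Returns the index of the first class-specific detector that accepts the
// features, or -1 if the shared-feature stage rejects them or no class matches.
int classifyTwoStage(const Features& f,
                     const Detector& sharedStage,
                     const std::vector<Detector>& perClassStage) {
    // Stage 1: shared features answer "is there a robot at all?"
    if (!sharedStage(f)) return -1;
    // Stage 2: non-shared features decide which class it is.
    for (std::size_t i = 0; i < perClassStage.size(); ++i) {
        if (perClassStage[i](f)) return static_cast<int>(i);
    }
    return -1;
}
```

The speed-up comes from stage 1: when most images contain no robot, the per-class detectors are rarely evaluated.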

References
• Hand Posture Recognition Using Adaboost with SIFT for Human Robot Interaction
• Sharing features: efficient boosting procedures for multiclass object detection

Aldebaran SDK 2012/3/16

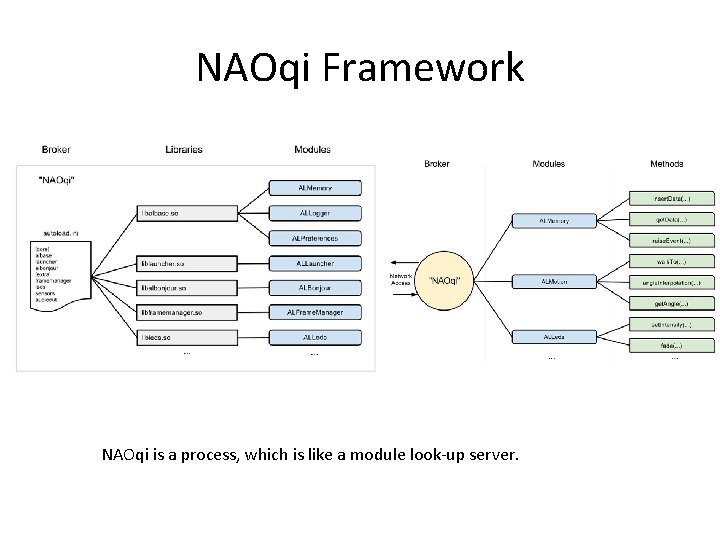

NAOqi Framework
NAOqi is a process that acts like a module look-up server.

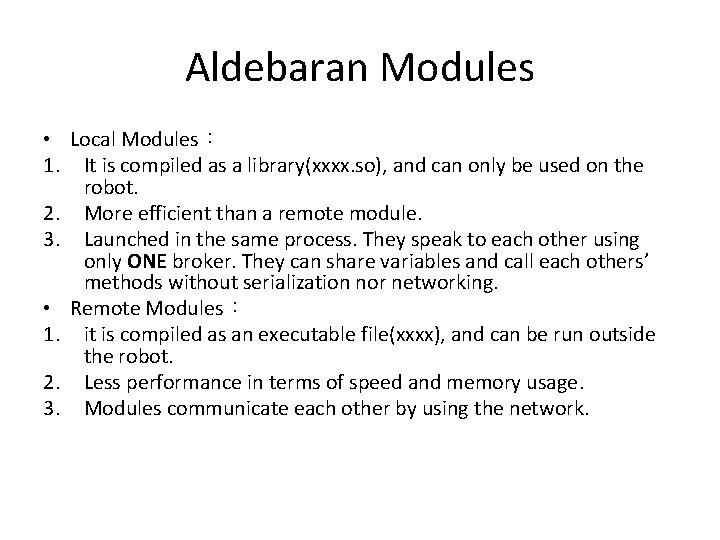

Aldebaran Modules
• Local Modules:
  1. Compiled as a library (xxxx.so); can only be used on the robot.
  2. More efficient than a remote module.
  3. Launched in the same process. They talk to each other through a single broker, and can share variables and call each other's methods without serialization or networking.
• Remote Modules:
  1. Compiled as an executable file (xxxx); can be run outside the robot.
  2. Slower and use more memory than local modules.
  3. Communicate with other modules over the network.

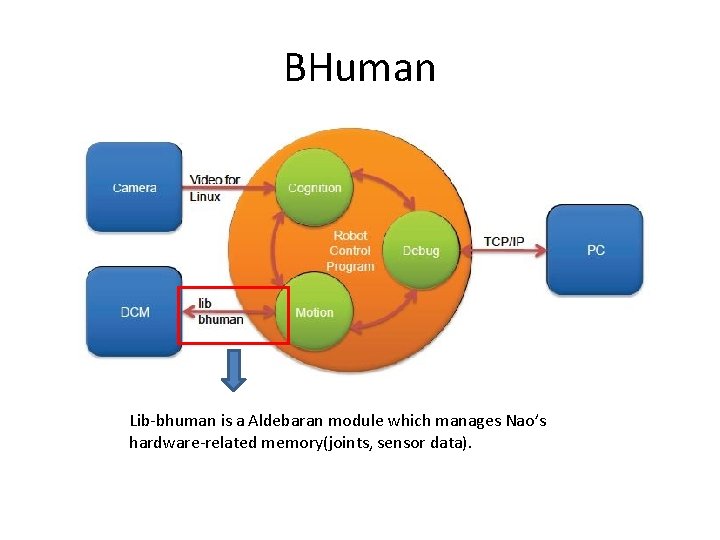

BHuman
Lib-bhuman is an Aldebaran module that manages Nao's hardware-related memory (joints, sensor data).

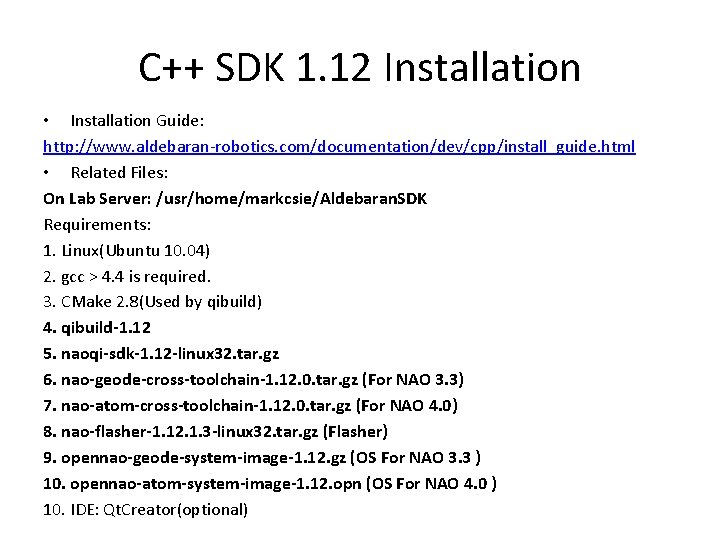

C++ SDK 1.12 Installation
• Installation Guide: http://www.aldebaran-robotics.com/documentation/dev/cpp/install_guide.html
• Related Files: On Lab Server: /usr/home/markcsie/Aldebaran. SDK
Requirements:
1. Linux (Ubuntu 10.04)
2. gcc > 4.4 is required.
3. CMake 2.8 (used by qibuild)
4. qibuild-1.12
5. naoqi-sdk-1.12-linux32.tar.gz
6. nao-geode-cross-toolchain-1.12.0.tar.gz (for NAO 3.3)
7. nao-atom-cross-toolchain-1.12.0.tar.gz (for NAO 4.0)
8. nao-flasher-1.12.1.3-linux32.tar.gz (flasher)
9. opennao-geode-system-image-1.12.gz (OS for NAO 3.3)
10. opennao-atom-system-image-1.12.opn (OS for NAO 4.0)
11. IDE: QtCreator (optional)

Installation
1. Edit ~/.bashrc:
    export LD_LIBRARY_PATH=[path to sdk]/lib
    export PATH=${PATH}:~/.local/bin:~/bin
2. $ [path to qibuild]/install-qibuild.sh
3. $ cd [Programming Workspace]
   $ qibuild init --interactive (choose UNIX Makefiles)
4. $ qitoolchain create [toolchain name] [path to sdk]/toolchain.xml --default

Create and Build a Project
1. $ qibuild create [project name]
2. $ qibuild configure [project name] -c [toolchain name] (--release)
3. $ qibuild make [project name] -c [toolchain name] (--release)
4. $ qibuild open [project name]
• Step 3 is equivalent to running the generated Makefile.

Cross Compile (Local Module)
$ qitoolchain create opennao-geode [path to cross toolchain]/toolchain.xml --default
$ qibuild configure [project name] -c opennao-geode
$ qibuild make [project name] -c opennao-geode

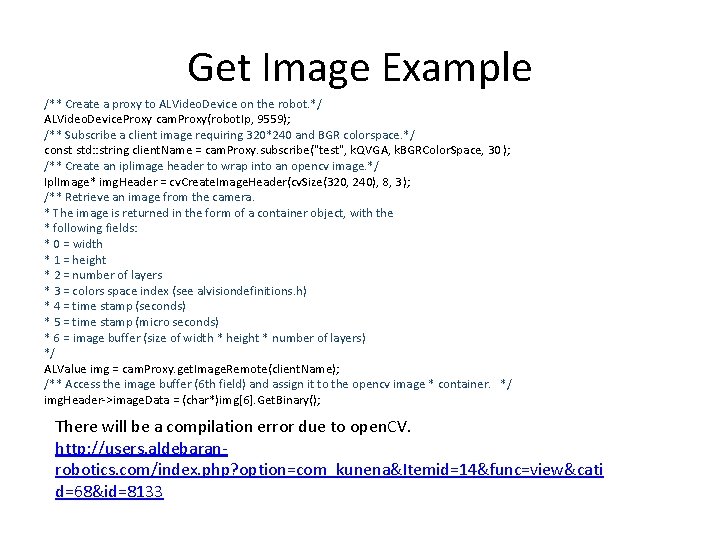

Get Image Example

    #include <alproxies/alvideodeviceproxy.h>
    #include <alvision/alvisiondefinitions.h>
    #include <opencv/cv.h>

    using namespace AL;

    /** Create a proxy to ALVideoDevice on the robot. */
    ALVideoDeviceProxy camProxy(robotIp, 9559);

    /** Subscribe a client image requiring 320*240 and BGR colorspace. */
    const std::string clientName = camProxy.subscribe("test", kQVGA, kBGRColorSpace, 30);

    /** Create an IplImage header to wrap into an OpenCV image. */
    IplImage* imgHeader = cvCreateImageHeader(cvSize(320, 240), 8, 3);

    /** Retrieve an image from the camera.
     * The image is returned in the form of a container object, with the
     * following fields:
     * 0 = width
     * 1 = height
     * 2 = number of layers
     * 3 = color space index (see alvisiondefinitions.h)
     * 4 = time stamp (seconds)
     * 5 = time stamp (microseconds)
     * 6 = image buffer (size of width * height * number of layers)
     */
    ALValue img = camProxy.getImageRemote(clientName);

    /** Access the image buffer (field 6) and assign it to the OpenCV image
     * container. */
    imgHeader->imageData = (char*)img[6].GetBinary();

Note: this example fails to compile because of an OpenCV issue; see http://users.aldebaran-robotics.com/index.php?option=com_kunena&Itemid=14&func=view&catid=68&id=8133

NAO OS
• NAO 3.X: http://www.aldebaran-robotics.com/documentation/software/naoflasher/rescue_nao_v3.html?highlight=flasher
• NAO 4.0: http://www.aldebaran-robotics.com/documentation/software/naoflasher/rescue_nao_v4.html

Connect to NAO
• Wired Connection (Windows only): Plug in the Ethernet cable, then press the chest button; NAO will say its IP address. Connect to NAO at that address using a web browser.
• Wireless Connection: http://www.aldebaran-robotics.com/documentation/naoconnecting.html

Software, Documentation and Forum
http://users.aldebaran-robotics.com/
Account: nturobotpal
Password: xxxxx
