Peer-to-Peer Networks: 14-740 Fundamentals of Computer Networks

- Slides: 32

Peer-to-Peer Networks. 14-740: Fundamentals of Computer Networks. Credit to Bill Nace, 14-740, Fall 2017. Material from Computer Networking: A Top-Down Approach, 6th edition, J. F. Kurose and K. W. Ross.

traceroute
- P2P Overview
- Architecture components
- Napster (Centralized)
- Gnutella (Distributed)
- Skype and KaZaA (Hybrid, Hierarchical)
- KaZaA Reverse Engineering Study

14-740: Spring 2018

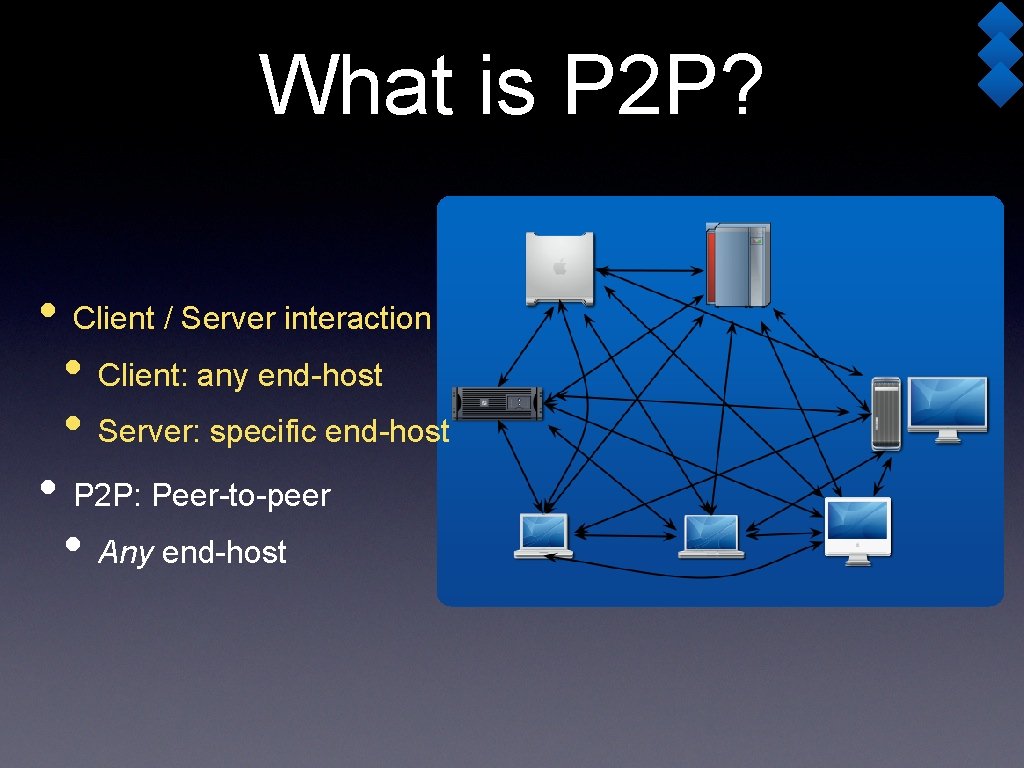

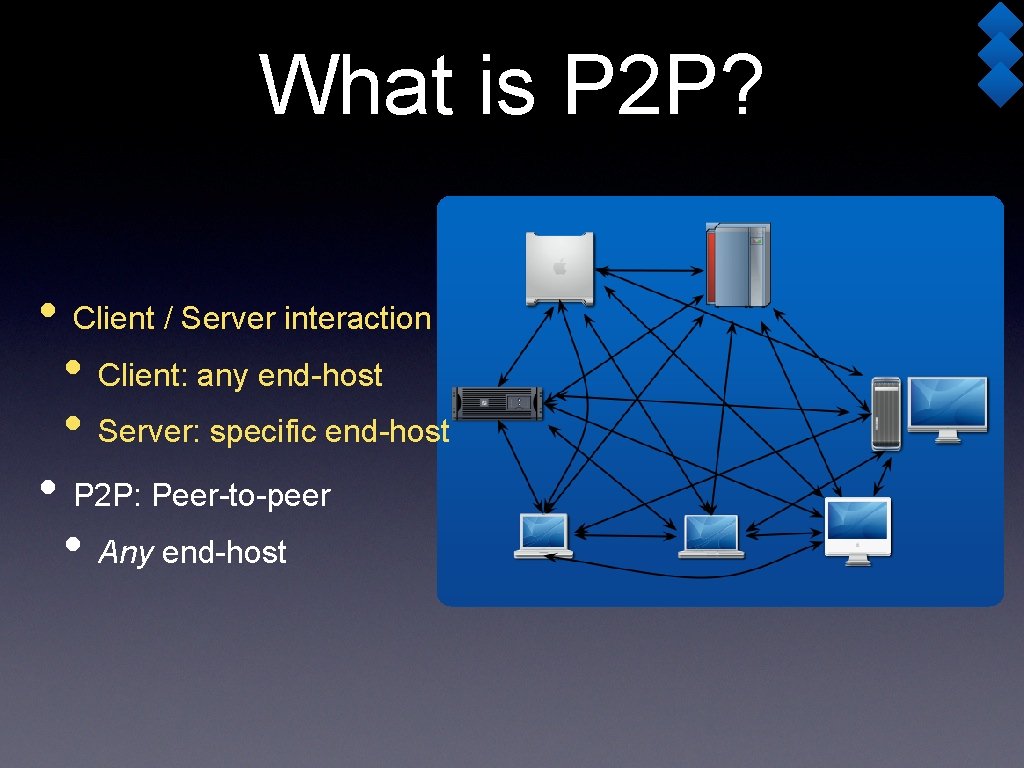

What is P2P?
- Client/server interaction
  - Client: any end-host
  - Server: a specific end-host
- P2P: peer-to-peer
  - Any end-host can act as a server

- Aims to leverage resources available on “clients” (peers):
  - Hard drive space
  - Bandwidth (especially upload)
  - Computational power
  - Anonymity (e.g., zombie botnets)
  - “Edge-ness” (i.e., being distributed at network edges)

- Clients are particularly fickle
  - Users have not agreed to provide any particular level of service
  - Users are not altruistic: the algorithm must force participation without allowing cheating
- Clients are not trusted
  - Client code may be modified
- And yet, availability of resources must be assured

P2P History
- Proto-P2P systems exist
  - DNS, Netnews/Usenet
  - Xerox Grapevine (~1982): name and mail delivery service
- Kicked into high gear in 1999
  - Many users had “always-on” broadband net connections
- 1st generation: Napster (music exchange)
- 2nd generation: Freenet, Gnutella, KaZaA, BitTorrent
  - More scalable, designed for anonymity, fault-tolerant
- 3rd generation: middleware such as Pastry and Chord
  - Provide overlay routing to place/find resources

P2P Architecture
- Content directory
  - “Database” of content
  - Structured? Unstructured?
  - Which peer has what files?
  - Metadata: other info about files
- Signaling protocol
  - How do peers exchange coordination messages?
  - Proprietary? Encrypted?

Architecture (2)
- File transfer
  - How does a peer retrieve a file from another peer?
  - HTTP or HTTP-like
  - Any peer must be able to send reply messages

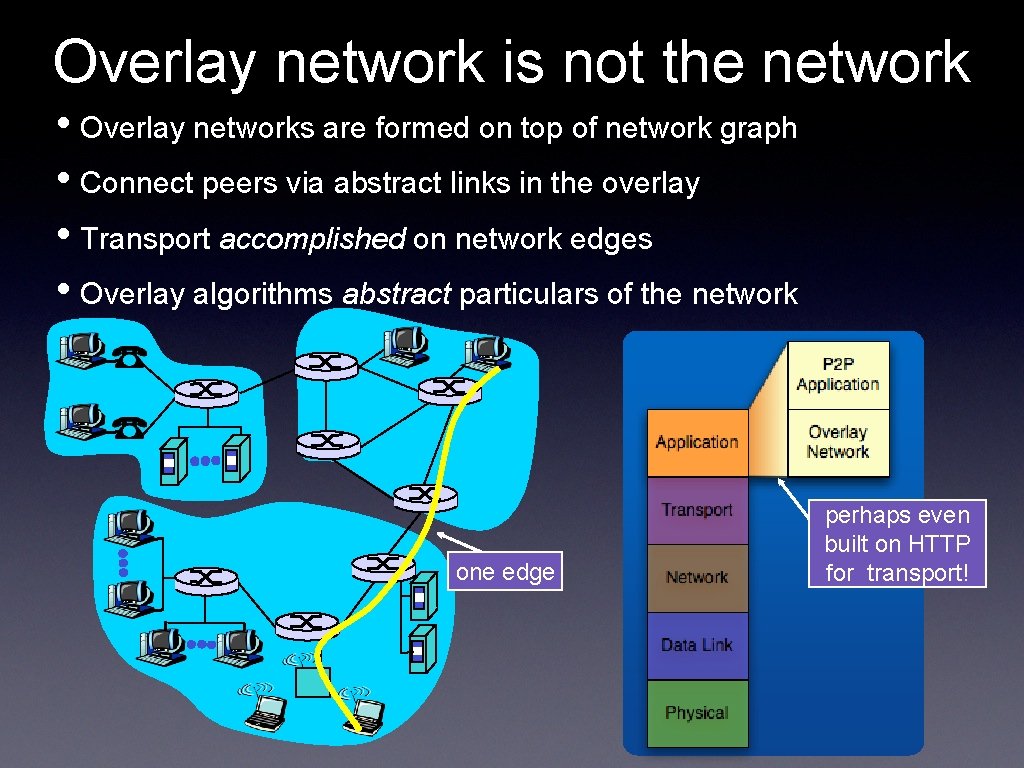

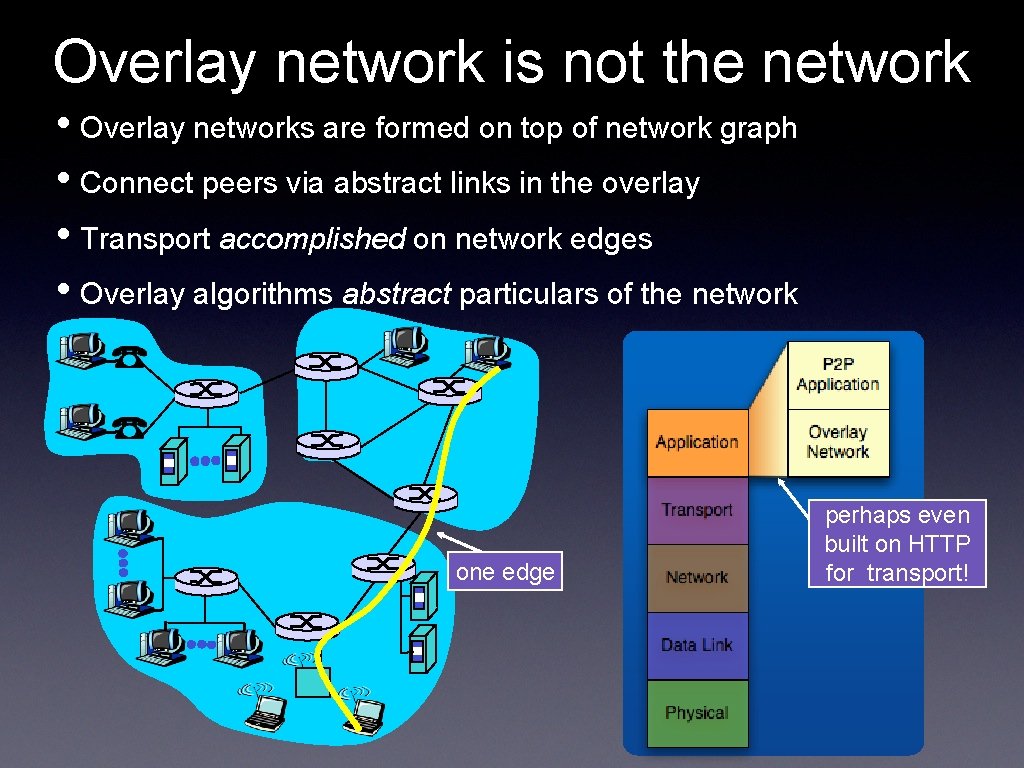

Overlay network is not the network
- Overlay networks are formed on top of the network graph
- Peers are connected via abstract links in the overlay
- Transport is accomplished on the network's edges
- Overlay algorithms abstract away the particulars of the network
- A single overlay edge may span many physical hops, and may even be built on HTTP for transport!
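To make the overlay/underlay distinction concrete, here is a minimal sketch that measures how many physical hops each overlay edge spans. The topology (peers `A`, `B`, `C` and routers `r1` to `r4`) and the function name `underlay_hops` are hypothetical, and shortest-path routing in the underlay is an assumption; real Internet routes need not be shortest in hop count.

```python
from collections import deque

# Hypothetical underlay: peers and routers joined by physical links.
UNDERLAY = {
    "A": ["r1"], "B": ["r3"], "C": ["r4"],
    "r1": ["A", "r2"], "r2": ["r1", "r3", "r4"],
    "r3": ["r2", "B"], "r4": ["r2", "C"],
}

# Hypothetical overlay: logical (e.g., TCP) links between peers only.
OVERLAY_EDGES = [("A", "B"), ("B", "C")]


def underlay_hops(graph, src, dst):
    """Breadth-first search: number of physical links on a shortest path."""
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for nbr in graph[node]:
            if nbr not in seen:
                seen.add(nbr)
                queue.append((nbr, dist + 1))
    return None  # dst is unreachable in the underlay


for a, b in OVERLAY_EDGES:
    print(f"overlay edge {a}-{b} spans {underlay_hops(UNDERLAY, a, b)} physical hops")
```

Each overlay edge looks like a single hop to the P2P algorithm, even though every one of them here crosses four physical links.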


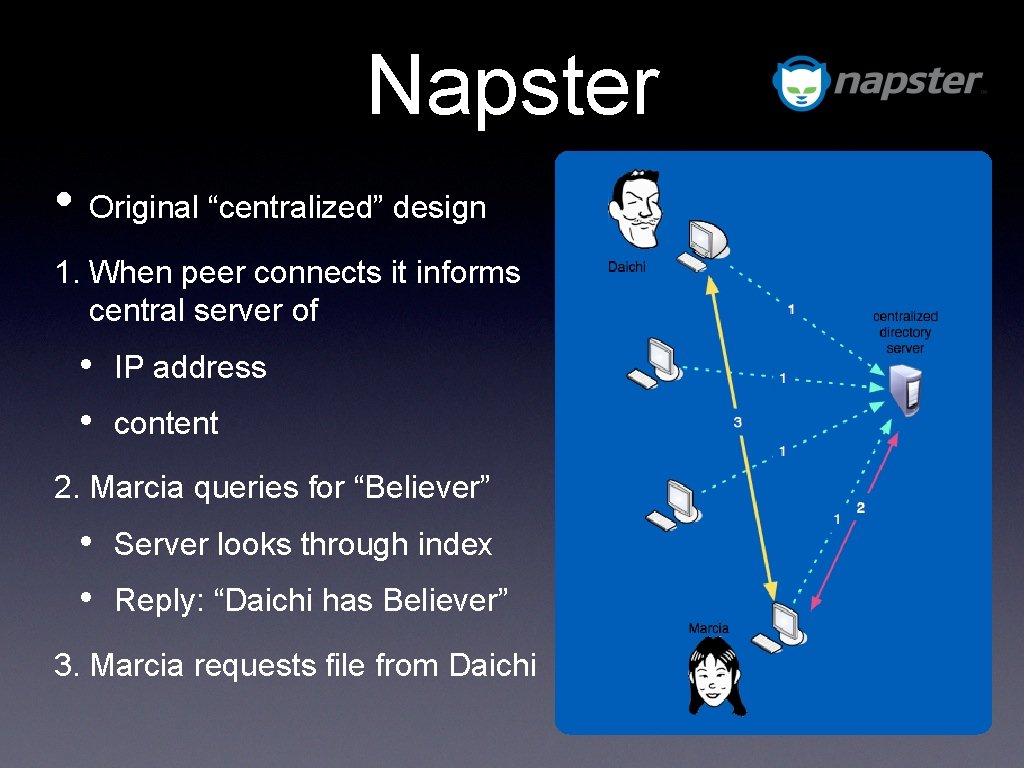

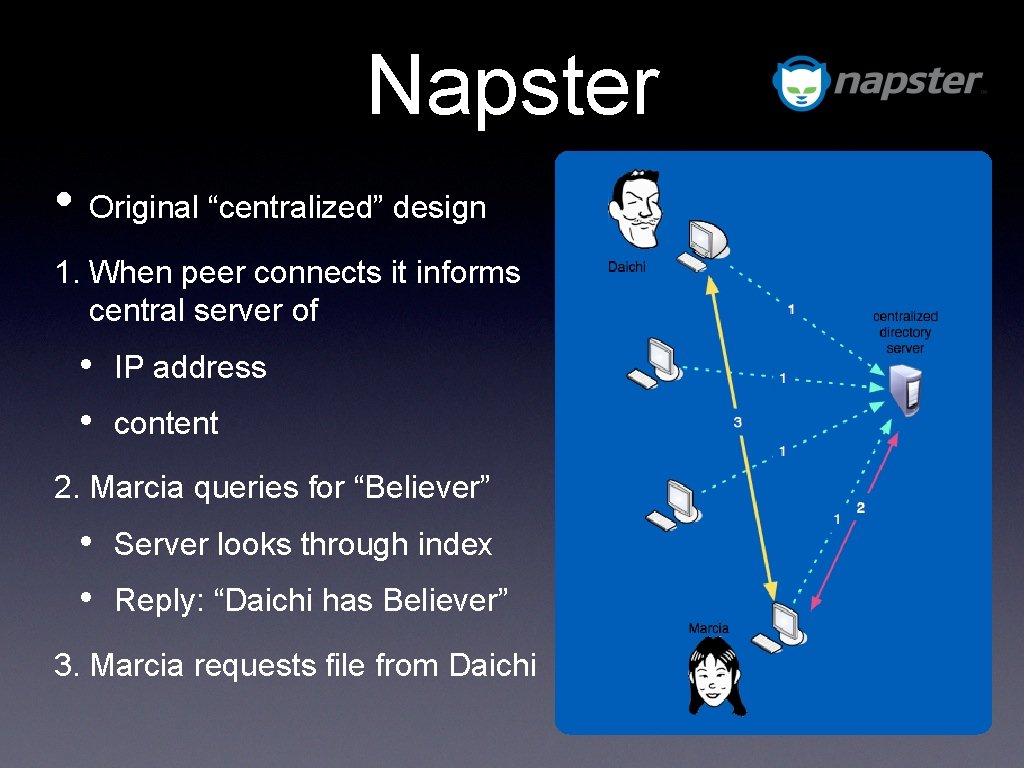

Napster
- Original “centralized” design

1. When a peer connects, it informs the central server of its:
   - IP address
   - content
2. Marcia queries for “Believer”
   - Server looks through its index
   - Reply: “Daichi has Believer”
3. Marcia requests the file from Daichi
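The three steps above can be sketched as a toy in-memory index. `CentralIndex` and its methods are hypothetical names and the IP addresses are placeholders; this is not Napster's actual protocol, only the shape of a centralized directory.

```python
from collections import defaultdict


class CentralIndex:
    """Toy model of a Napster-style central directory (hypothetical)."""

    def __init__(self):
        self.holders = defaultdict(set)  # title -> {(peer, ip), ...}

    def register(self, peer, ip, titles):
        # Step 1: a connecting peer reports its IP address and its content.
        for title in titles:
            self.holders[title].add((peer, ip))

    def unregister(self, peer):
        # A departing peer's entries must be purged, or queries return stale hits.
        for entries in self.holders.values():
            entries -= {e for e in entries if e[0] == peer}

    def query(self, title):
        # Step 2: the server looks through its index and replies with holders.
        return sorted(self.holders.get(title, set()))


index = CentralIndex()
index.register("Daichi", "10.0.0.7", ["Believer"])
index.register("Marcia", "10.0.0.9", [])
print(index.query("Believer"))
# Step 3 bypasses the server: Marcia fetches the file directly from 10.0.0.7.
```

Only the lookup goes through the server; the transfer itself is peer-to-peer, which is why the index alone becomes the single point of failure.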

Problems?
- File transfer is decentralized, but locating content is highly centralized
  - Single point of failure
  - Performance bottleneck
  - Single point of lawsuit
- Result: the original service was shut down after copyright litigation, and the Napster brand was later owned by Best Buy
  - Now it’s a rebranded Rhapsody music streaming service


Gnutella
- Created in response to Napster's problems
- Fully decentralized
  - Does not depend on a central directory
  - Participants arrange themselves in an overlay
  - Queries flood the network to find a file
- Fully anonymous
- Public-domain protocol
  - Various Gnutella clients

Bootstrapping
1. New peer X must find some member of the Gnutella network
   - Use a list of candidate peers
2. X sequentially attempts to make a TCP connection with peers on the list until it succeeds with peer Y
3. X sends a Ping message to Y; Y forwards the Ping message
4. All peers receiving the Ping message respond to X with a Pong message
5. X receives many Pong messages and can set up additional TCP connections
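A minimal sketch of the five bootstrapping steps, modeling the network as Python dicts and sets rather than real sockets. `bootstrap`, `candidates`, `online`, and `overlay` are hypothetical names. The sketch also assumes the Ping reaches every peer connected to Y, whereas Gnutella messages carry a TTL that limits how far a Ping travels.

```python
from collections import deque


def bootstrap(candidates, online, overlay):
    """Hypothetical model of a new peer X joining (not the wire protocol).

    candidates: ordered list of peers X knows about
    online:     set of peers currently accepting TCP connections
    overlay:    dict peer -> list of neighbors in the existing overlay
    Returns (Y, pongs): the peer X connected to, and the peers that sent Pongs.
    """
    # Steps 1-2: try candidates one at a time until a connection succeeds.
    entry = next((p for p in candidates if p in online), None)
    if entry is None:
        return None, []
    # Steps 3-4: the Ping spreads from Y; every peer it reaches answers with a Pong.
    pongs, seen, queue = [], {entry}, deque([entry])
    while queue:
        peer = queue.popleft()
        pongs.append(peer)
        for nbr in overlay.get(peer, []):
            if nbr not in seen and nbr in online:
                seen.add(nbr)
                queue.append(nbr)
    # Step 5: X may now open extra TCP connections to some of these peers.
    return entry, pongs


overlay = {"P1": ["P2"], "P2": ["P1", "P3"], "P3": ["P2"]}
print(bootstrap(["P0", "P2"], {"P1", "P2", "P3"}, overlay))  # P0 is offline
```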

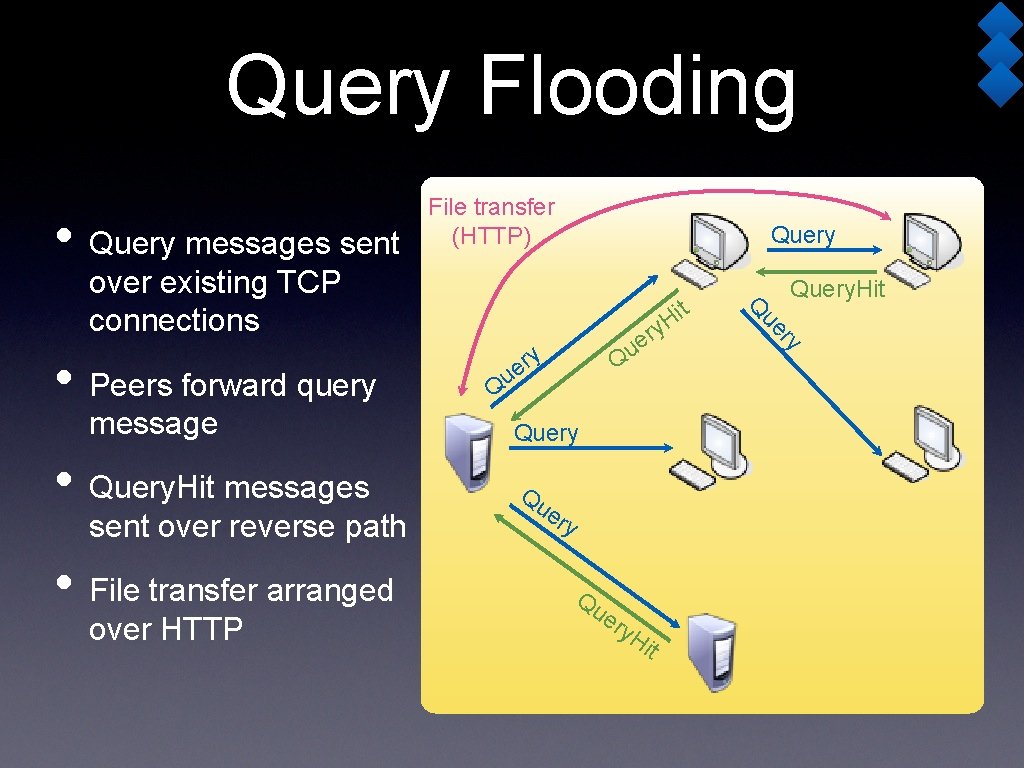

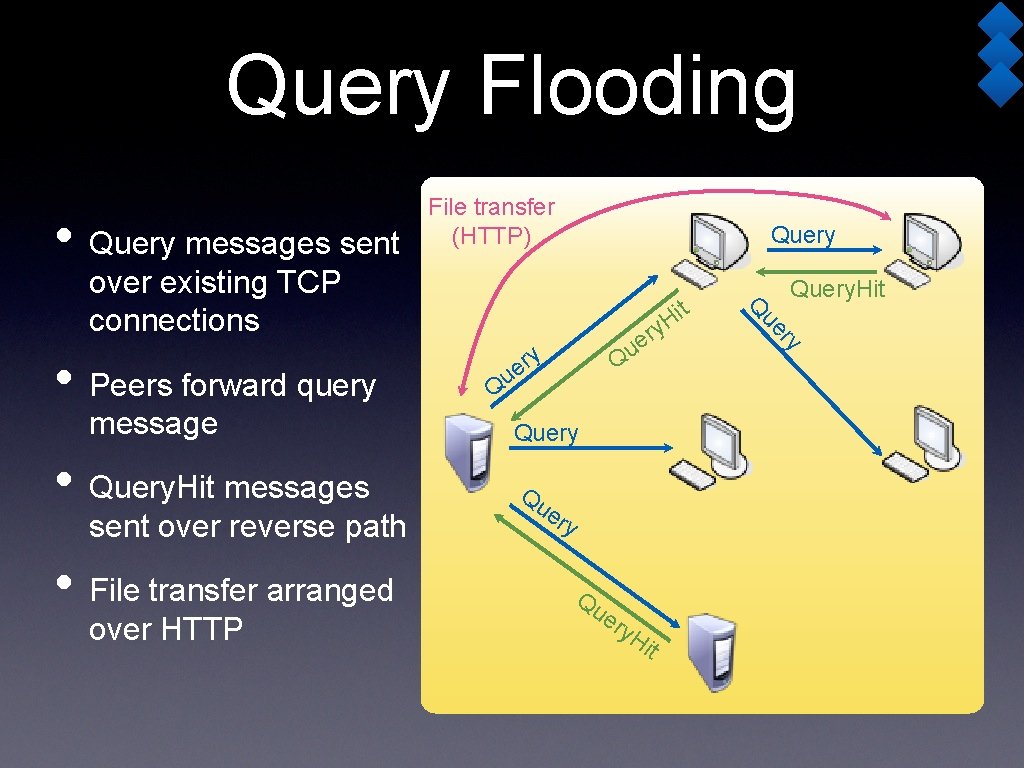

Query Flooding
- Query message sent over existing TCP connections
- Peers forward the Query message
- QueryHit messages sent over the reverse path
- File transfer arranged over HTTP
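A sketch of flooding with reverse-path routing of QueryHits, assuming each peer remembers which neighbor first delivered a given query (modeled by the hypothetical `came_from` map) and silently drops later duplicates. Function and parameter names are illustrative, not taken from the Gnutella specification.

```python
from collections import deque


def flood_query(overlay, files, origin, wanted):
    """Flood a query over existing overlay links and route each QueryHit back.

    overlay: dict peer -> neighbors (existing TCP connections)
    files:   dict peer -> set of file names that peer shares
    Returns dict hit_peer -> path the QueryHit takes back to the origin.
    """
    came_from = {origin: None}  # each peer records who forwarded the query to it
    queue = deque([origin])
    while queue:
        peer = queue.popleft()
        for nbr in overlay[peer]:
            if nbr not in came_from:  # duplicates of the same query are dropped
                came_from[nbr] = peer
                queue.append(nbr)
    hits = {}
    for peer in came_from:
        if peer != origin and wanted in files.get(peer, set()):
            path, hop = [], peer
            while hop is not None:  # follow the recorded links back to the origin
                path.append(hop)
                hop = came_from[hop]
            hits[peer] = path
    return hits


overlay = {"A": ["B", "C"], "B": ["A", "D"], "C": ["A", "D"], "D": ["B", "C"]}
print(flood_query(overlay, {"D": {"song.mp3"}}, "A", "song.mp3"))
```

The returned path is only the signaling route; the file itself is then fetched directly from the hit peer over HTTP.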

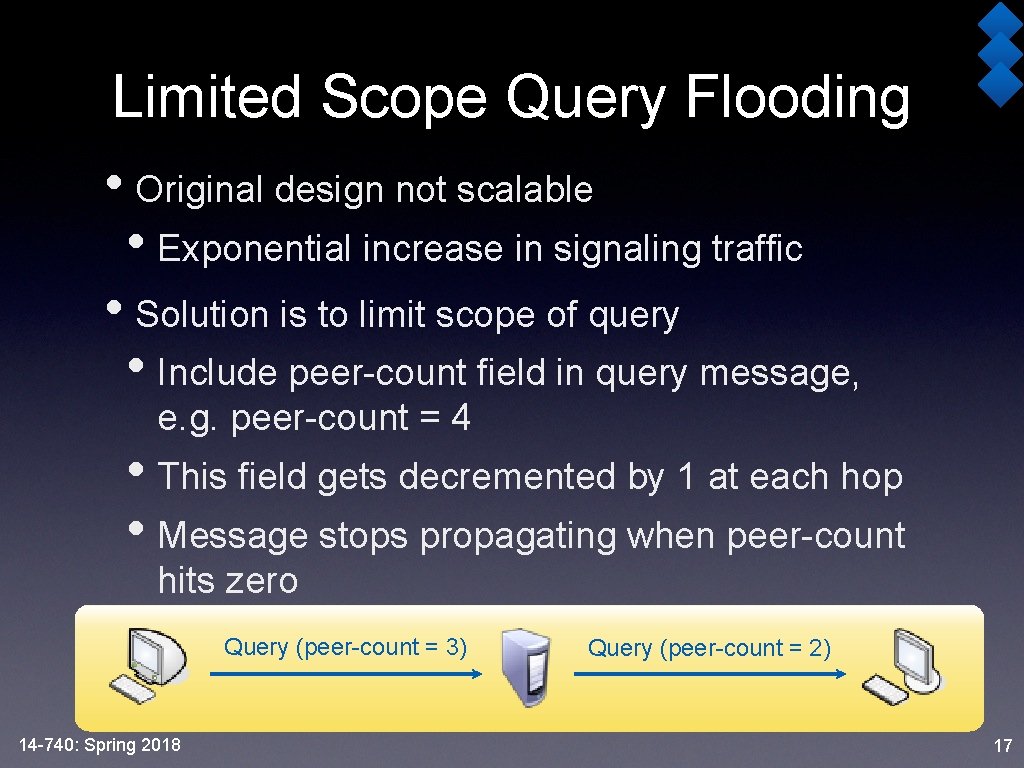

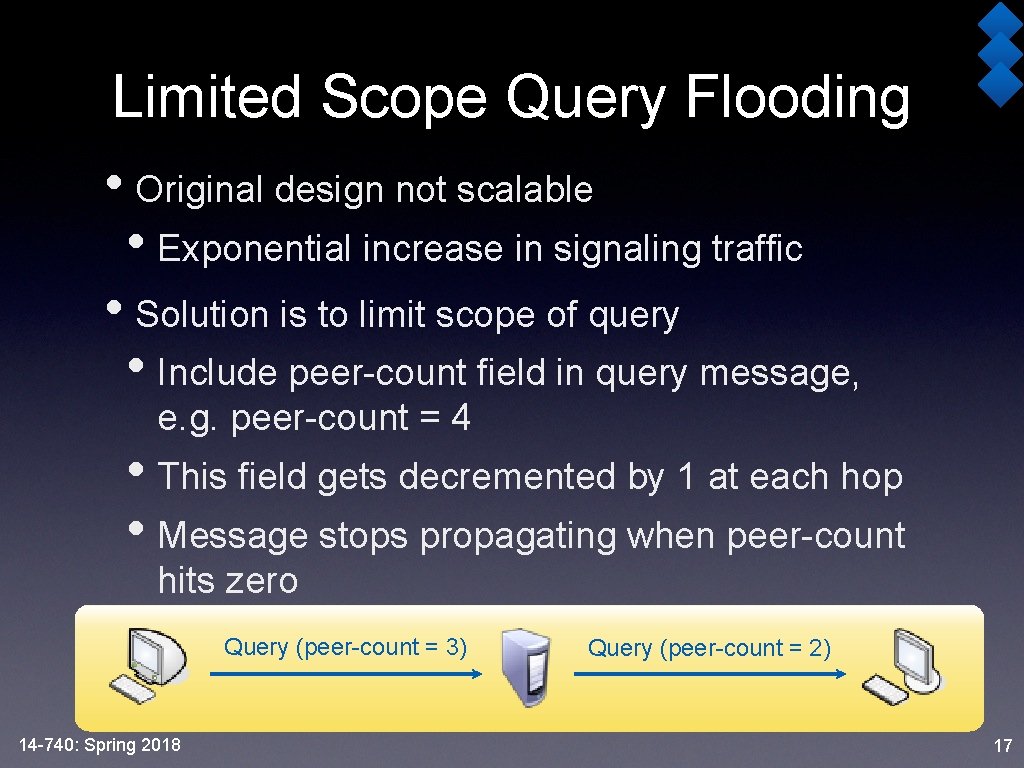

Limited Scope Query Flooding
- Original design not scalable
  - Exponential increase in signaling traffic
- Solution is to limit the scope of the query
  - Include a peer-count field in the query message, e.g., peer-count = 4
  - This field is decremented by 1 at each hop
  - The message stops propagating when peer-count hits zero

(Figure: the query is forwarded with peer-count = 3, then peer-count = 2, at successive hops.)
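The peer-count rule can be sketched as below. The model is an assumption: the origin sends the query with the initial peer-count, each receiver decrements it, and a new (non-duplicate) query is forwarded only while the count stays above zero, so the query travels at most `peer_count` hops. Duplicate copies are still counted as messages because they cost signaling traffic even though they are dropped. `limited_flood` is a hypothetical name.

```python
def limited_flood(overlay, origin, peer_count):
    """Return (peers reached, query messages sent) for a scope-limited flood."""
    seen = {origin}
    messages = 0
    # Messages in flight: (receiver, sender, peer-count carried by the message).
    in_flight = [(nbr, origin, peer_count) for nbr in overlay[origin]]
    while in_flight:
        next_round = []
        for peer, sender, count in in_flight:
            messages += 1
            if peer in seen:
                continue  # duplicate: dropped, not forwarded
            seen.add(peer)
            count -= 1  # decremented by 1 at each hop
            if count > 0:
                next_round += [(n, peer, count) for n in overlay[peer] if n != sender]
        in_flight = next_round
    return len(seen) - 1, messages  # do not count the origin itself


ring = {"A": ["B", "D"], "B": ["A", "C"], "C": ["B", "D"], "D": ["C", "A"]}
print(limited_flood(ring, "A", 3))
```

In the ring, all three other peers are reached but five messages are sent, since two copies arrive at peers that already saw the query.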

Question
- If peer-count = 4 at the start, how many peers would the query message eventually reach?
  - It depends on the number of neighbors each peer has!
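A rough answer under simplifying assumptions: every peer has exactly $d$ neighbors, the overlay contains no cycles within the query's scope, and the query travels $h$ hops. The origin reaches $d$ peers at the first hop, and each of those forwards to $d-1$ new peers, so the number of peers reached is at most

$$
N(h) = \sum_{i=1}^{h} d\,(d-1)^{i-1} = \frac{d\left[(d-1)^{h}-1\right]}{d-2}, \qquad d > 2
$$

For example, with $d = 4$ and $h = 4$: $4 + 12 + 36 + 108 = 160$ peers, while $d = 3$ gives $3 + 6 + 12 + 24 = 45$. Whether peer-count = 4 corresponds to $h = 4$ or $h = 3$ depends on exactly when the count is decremented, and cycles in a real overlay cause duplicate deliveries, so the actual count is lower.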

More Questions
- Is limited scope query flooding scalable? (i.e., how does the number of nodes affect message counts?)
  - Not scalable
  - Number of messages grows with the number of nodes
  - Desire: constant-time search

Even more questions
- Are we guaranteed to find an object? (Assume the object exists somewhere in the overlay network)
  - No guarantee
  - Query stops after peer-count hits zero
  - Gnutella uses an unstructured graph


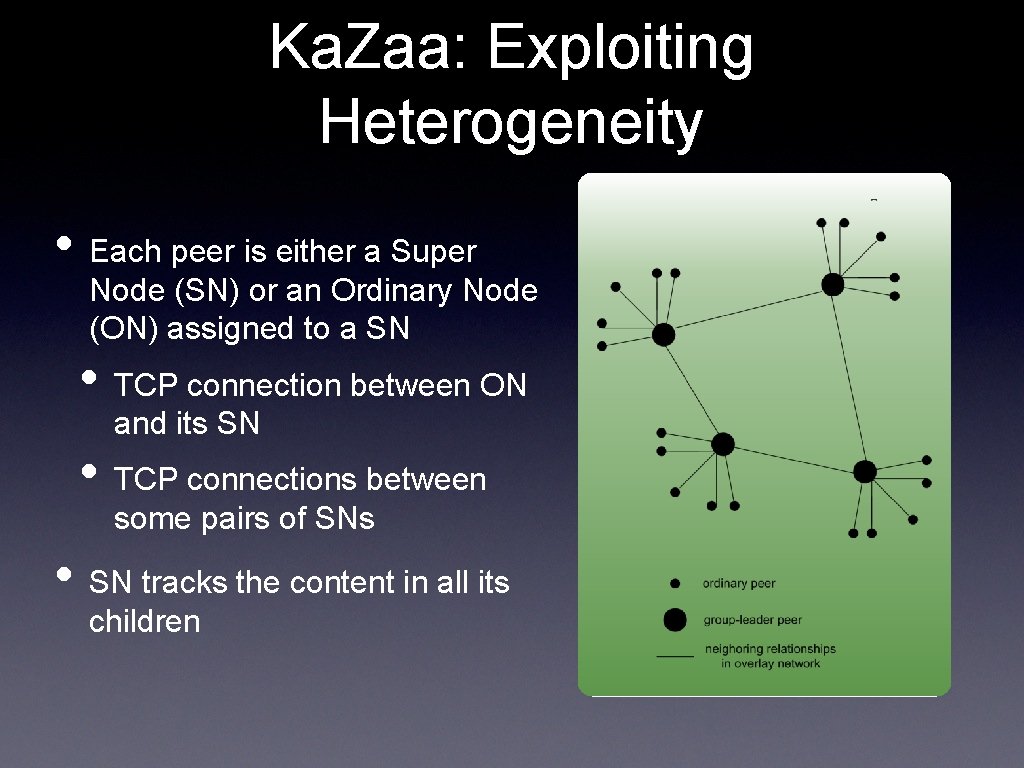

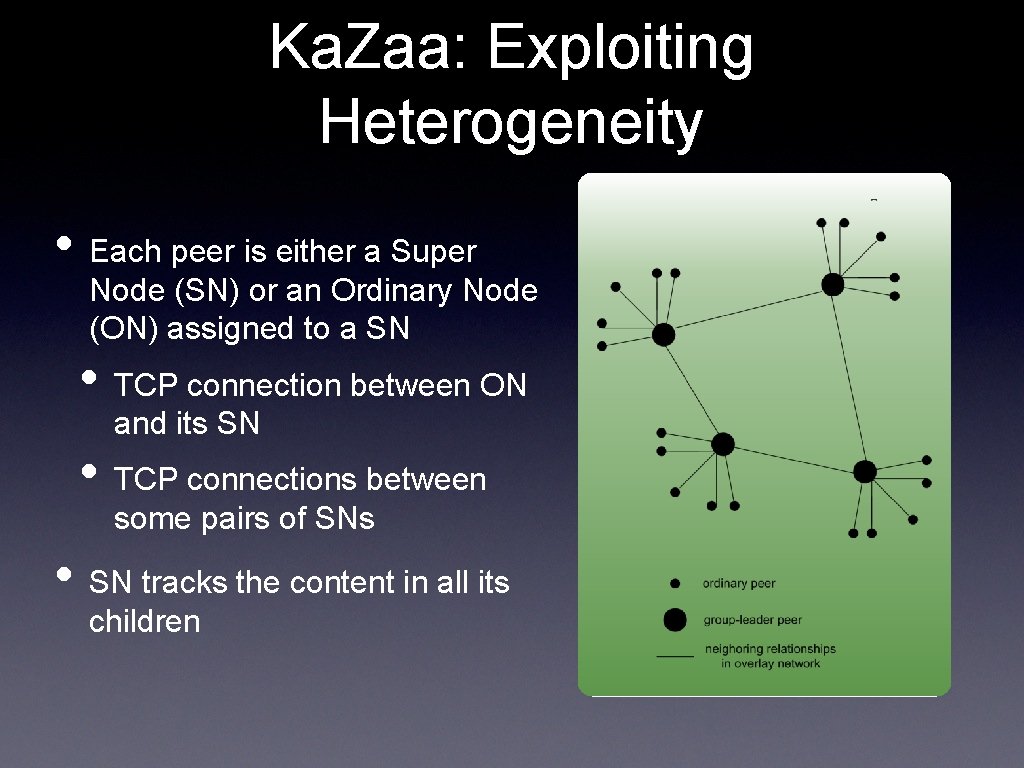

KaZaA: Exploiting Heterogeneity
- Each peer is either a Super Node (SN) or an Ordinary Node (ON) assigned to an SN
- TCP connection between each ON and its SN
- TCP connections between some pairs of SNs
- An SN tracks the content of all its children

KaZaA Queries
- Each file has a hash and a descriptor
- Client sends a keyword query to its SN
- SN responds with matches:
  - For each match: metadata, hash, IP address
- If the SN forwards the query to other SNs, they respond with matches
- Client then selects files for downloading
  - HTTP requests using the hash as identifier are sent to peers holding the desired file
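The two-tier structure and query flow can be sketched as follows. `SuperNode`, its fields, the use of SHA-1 as the content hash, and the `GET /<hash>` request line are all assumptions for illustration; KaZaA's FastTrack protocol was proprietary, and its actual hash function and message formats are not described on these slides.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass(eq=False)
class SuperNode:
    """Hypothetical super node that indexes its ordinary-node children's files."""
    name: str
    neighbors: list = field(default_factory=list)  # SN-SN TCP connections
    entries: list = field(default_factory=list)    # (descriptor, hash, child IP)

    def add_child_file(self, child_ip, descriptor, data):
        # Each file gets a content hash (SHA-1 is an assumption, not KaZaA's hash).
        digest = hashlib.sha1(data).hexdigest()
        self.entries.append((descriptor, digest, child_ip))
        return digest

    def query(self, keyword, forward=True):
        # Keyword match against the descriptors (metadata) of children's files.
        matches = [e for e in self.entries if keyword.lower() in e[0].lower()]
        if forward:  # optionally ask neighboring SNs, which do not forward again
            for sn in self.neighbors:
                matches += sn.query(keyword, forward=False)
        return matches


def download_request(match):
    # Hypothetical HTTP request line that names the file by its hash.
    _descriptor, digest, ip = match
    return ip, f"GET /{digest} HTTP/1.1"


sn1, sn2 = SuperNode("SN1"), SuperNode("SN2")
sn1.neighbors.append(sn2)
sn2.add_child_file("10.0.1.5", "Believer (live).mp3", b"example audio bytes")
for match in sn1.query("believer"):  # the client's keyword query goes to SN1
    print(download_request(match))
```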

Measurement Study
- Developed tools to reverse engineer KaZaA
- Attempt to answer the following questions:
  - What is the ratio of SNs to ONs?
  - What fraction of all nodes are SNs?
  - How are SNs connected, sparsely or densely?
  - How does an ON pick the best SN?
  - Random port numbers and NATs?

Structural Properties
- Deployed apparatus on the Polytechnic campus and in a broadband residential network
- An SN connects to 40–50 other SNs (dynamic)
- An SN has 100–160 ONs at Polytechnic, 55–70 in the residential access network
- Given 3 million peers, 25,000–40,000 SNs
- An SN is connected to ~0.1% of other SNs
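The figures above can be cross-checked with simple arithmetic that uses only the numbers on this slide:

$$
\frac{3\,000\,000}{40\,000} = 75 \;\text{to}\; \frac{3\,000\,000}{25\,000} = 120 \ \text{peers per SN},
\qquad
\frac{25\,000}{3\,000\,000} \approx 0.83\% \;\text{to}\; \frac{40\,000}{3\,000\,000} \approx 1.33\% \ \text{of peers are SNs},
$$

$$
\frac{40}{40\,000} = 0.1\% \;\text{to}\; \frac{50}{25\,000} = 0.2\% \ \text{of SNs are neighbors of a given SN}.
$$

The 75 to 120 peers per SN lies within the overall 55 to 160 range measured at the two sites, and the SN-to-SN fraction matches the ~0.1% figure at its low end.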

Unanswered Questions...
- Details about the residential access network?
  - Where is it? What is it?
  - What is the upload/download bandwidth?
- How long was the measurement study?
  - 6 hours on 2 days? (Aug 22, 2003 and Oct 24, 2003)
  - Are these time periods representative samples?
- Where did the 3 million peers number come from?
  - From KaZaA?

Overlay Dynamics
- Connection lifetimes are short
  - Average is 34 min for ON-SN connections and 11 min for SN-SN connections
  - 38% of ON-SN and 32% of SN-SN connections lasted < 30 s
- Why so short?
  - SN searching for other SNs with small workload
  - Long-term connection shuffling, so a larger set of SNs can be explored
  - Exchange of SN lists

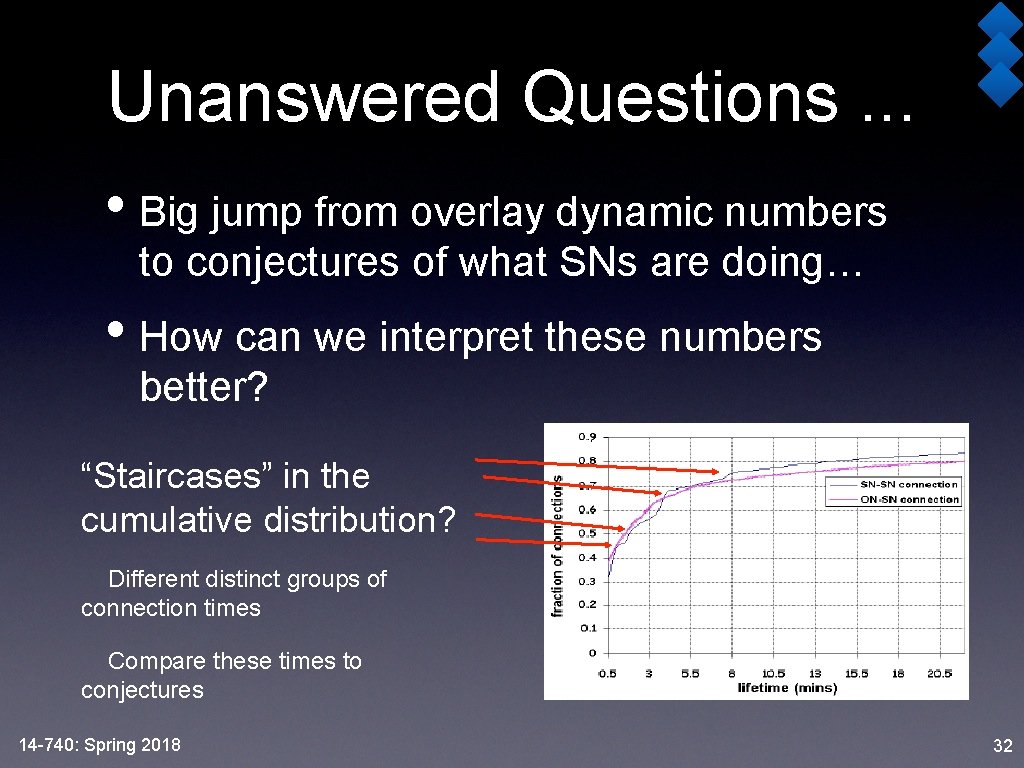

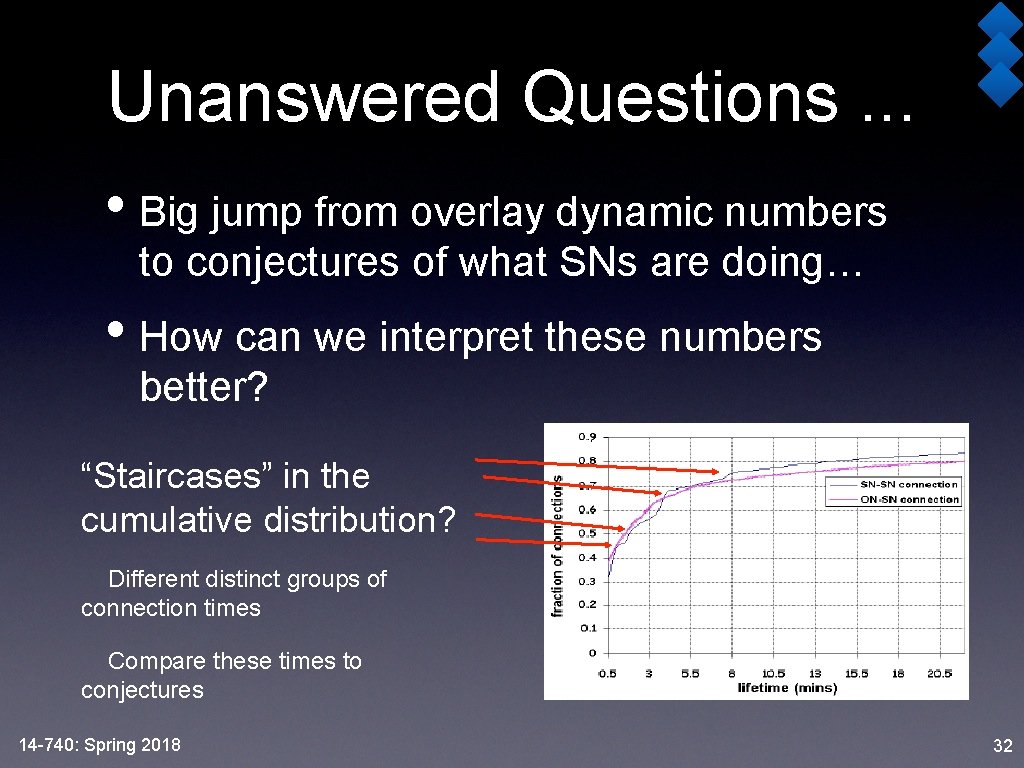

Unanswered Questions...
- Big jump from overlay dynamics numbers to conjectures of what SNs are doing…
- How can we interpret these numbers better?
  - “Staircases” in the cumulative distribution?
  - Distinct groups of connection times
  - Compare these times to conjectures
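One way to look for “staircases” is to compute the empirical CDF of measured lifetimes and to split the sorted values wherever the next value jumps by more than some factor, which shows up as a flat tread in the CDF. The lifetimes below are made-up placeholders, not data from the study, and `gap_ratio=2.0` is an arbitrary assumption.

```python
from bisect import bisect_right


def ecdf(samples):
    """Return F with F(x) = fraction of samples <= x."""
    data = sorted(samples)
    return lambda x: bisect_right(data, x) / len(data)


def group_lifetimes(samples, gap_ratio=2.0):
    """Split sorted positive lifetimes where a value exceeds gap_ratio times the previous one."""
    data = sorted(samples)
    if not data:
        return []
    groups = [[data[0]]]
    for prev, cur in zip(data, data[1:]):
        if cur > gap_ratio * prev:
            groups.append([cur])  # big gap: flat stretch of the CDF, new "stair"
        else:
            groups[-1].append(cur)
    return groups


# Placeholder lifetimes in seconds (illustrative only, not measurements).
lifetimes = [10, 12, 15, 600, 620, 700, 3600]
F = ecdf(lifetimes)
print(F(30), group_lifetimes(lifetimes))
```

Each group could then be compared against a conjectured behavior (for example, short probing connections versus long-lived parent links).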

Parent Selection
- Workload
  - Exact algorithm to calculate workload is unknown
  - Tied to the number of connections an SN is currently supporting
- Locality
  - RTT measurements
    - 60% of SN-SN connections < 50 ms
    - 40% of ON-SN connections < 5 ms
    - Transatlantic traffic ~100 ms
    - Transpacific traffic ~180 ms
  - Topological closeness (prefix matching)
    - SNs in the SN list are close to the ON
- Issues with this methodology?
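Since the exact selection algorithm is unknown, the following is only a hypothetical ranking that combines the listed factors: prefer the SN sharing the longest IPv4 prefix with the ON, then the lowest RTT, then the lowest workload. The order of the criteria, `pick_parent`, and the dictionary keys `ip`, `rtt_ms`, and `workload` are assumptions.

```python
import ipaddress


def common_prefix_len(a, b):
    """Number of leading bits two IPv4 addresses share (0-32)."""
    diff = int(ipaddress.IPv4Address(a)) ^ int(ipaddress.IPv4Address(b))
    return 32 - diff.bit_length()


def pick_parent(on_ip, candidates):
    """Hypothetical ranking: longest shared prefix, then lowest RTT, then lowest workload."""
    return min(
        candidates,
        key=lambda sn: (-common_prefix_len(on_ip, sn["ip"]), sn["rtt_ms"], sn["workload"]),
    )


candidates = [
    {"ip": "192.0.2.10", "rtt_ms": 40, "workload": 5},
    {"ip": "10.0.3.7", "rtt_ms": 90, "workload": 50},
    {"ip": "10.0.3.9", "rtt_ms": 20, "workload": 80},
]
print(pick_parent("10.0.3.200", candidates)["ip"])
```

Here the two `10.0.3.x` candidates tie on a 24-bit prefix, so RTT breaks the tie; a real client might weigh workload more heavily, which is exactly what the measurement study could not determine.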

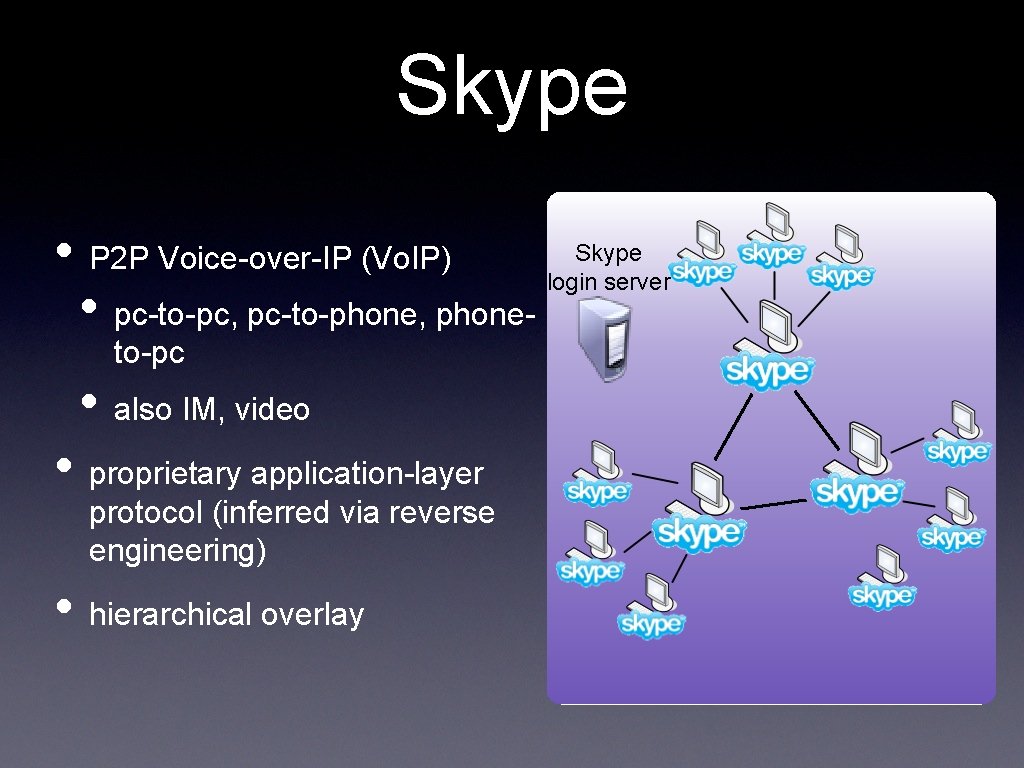

Skype
- P2P Voice-over-IP (VoIP)
  - PC-to-PC, PC-to-phone, phone-to-PC
  - Also IM, video
- Proprietary application-layer protocol (inferred via reverse engineering)
- Hierarchical overlay

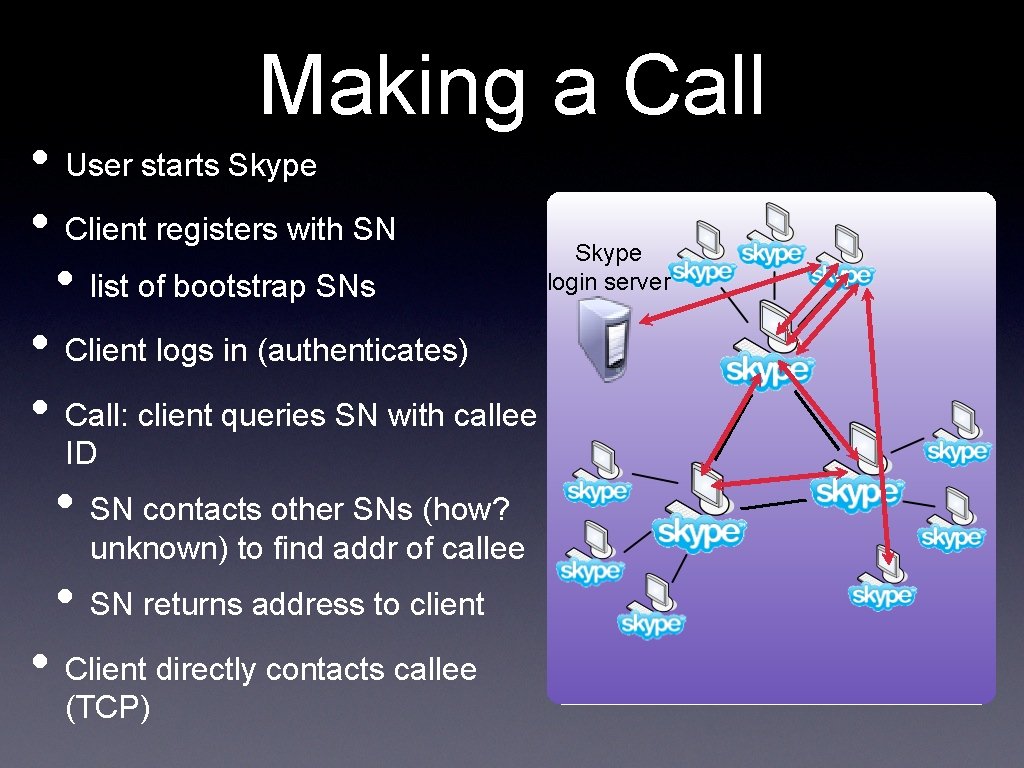

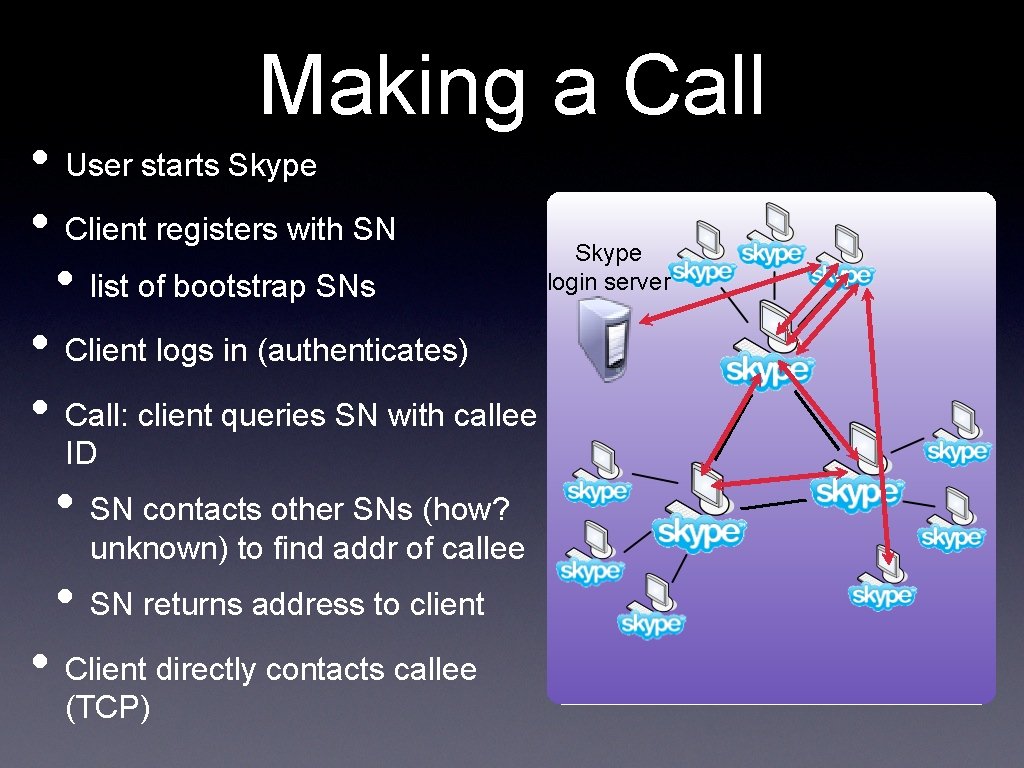

Making a Call
- User starts Skype
- Client registers with an SN
  - List of bootstrap SNs
- Client logs in (authenticates) with the Skype login server
- Call: client queries its SN with the callee ID
  - SN contacts other SNs (how? unknown) to find the callee's address
  - SN returns the address to the client
- Client directly contacts the callee (TCP)

Lesson Objectives
- Now, you should be able to:
  - list reasons that led to the creation of P2P networks
  - describe what an overlay network is and how it differs from the underlying Internet
  - use historical P2P networks to describe centralized, fully distributed, and hierarchical P2P networks
  - describe search techniques in the various P2P forms and analyze their search efficiency