
Peer-to-Peer Multimedia Streaming Using BitTorrent
Purvi Shah, Jehan-François Pâris
University of Houston, TX

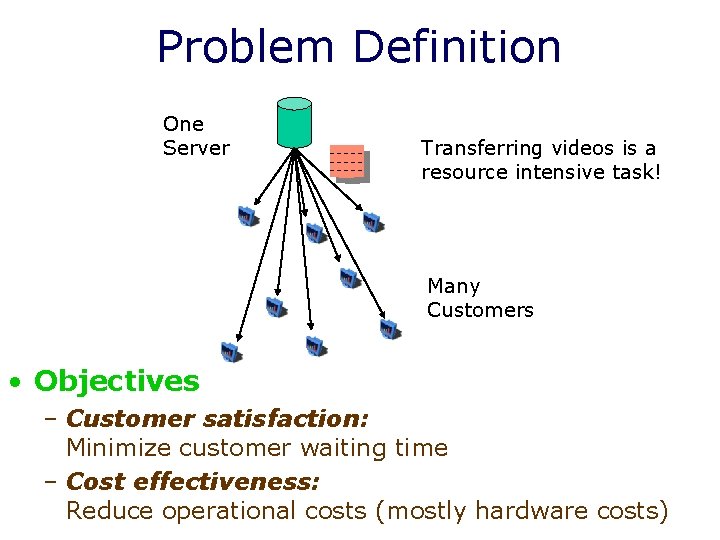

Problem Definition
• One server, many customers: transferring videos is a resource-intensive task!
• Objectives
  – Customer satisfaction: minimize customer waiting time
  – Cost effectiveness: reduce operational costs (mostly hardware costs)

Transferring Videos
• Video download
  – Just like any other file
  – Simplest case: file downloaded using a conventional protocol
  – Playback does not overlap with the transfer
• Video streaming from a server
  – Playback of the video starts while the video is being downloaded
  – No need to wait until the download is completed
  – New challenge: ensuring on-time delivery of data
    • Otherwise the client cannot keep playing the video

Why use P2P Architecture?
• Infrastructure-based approach (e.g., Akamai)
  – Most commonly used
  – Client-server architecture
  – Expensive: huge server farms
  – Best-effort delivery
  – Client upload capacity completely unutilized
  – Not suitable for flash crowds

Why use P2P Architecture?
• IP Multicast
  – Highly efficient bandwidth usage
  – Several drawbacks so far
    • Requires infrastructure-level changes, which makes most administrators reluctant to provide it
    • Security flaws
    • No effective and widely accepted transport protocol on the IP multicast layer

P2P Architecture
• Leverage power of P2P networks
  – Multiple solutions are possible
• Tree-based structured overlay networks
  – Leaf clients' bandwidth unutilized
  – Less reliable
  – Complex overlay construction
  – Content bottlenecks
  – Fairness issues

Our Solution
• Mesh-based unstructured overlay
  – Based on the widely used BitTorrent (BT) content distribution protocol
  – A P2P protocol started around 2002
  – Linux distributors such as Lindows offer software updates via BT
  – Blizzard uses BT to distribute game patches
  – Distribution of films through BT starts this year

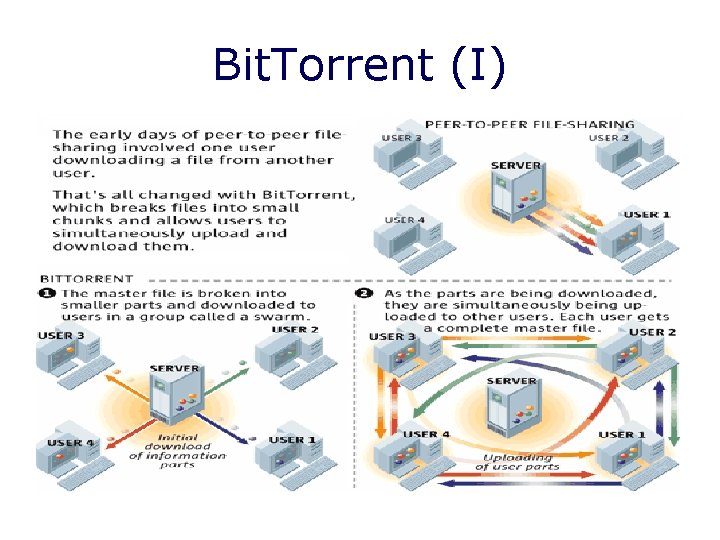

BitTorrent (I)

BitTorrent (II)
• Has a central tracker
  – Keeps information on peers
  – Responds to requests for that information
  – Service subscription
• Built-in incentives: rechoking
  – Give preference to cooperative peers: tit-for-tat exchange of content chunks
  – Random search: optimistic unchoke
• When all chunks are downloaded, peers can reconstruct the whole file
  – Not tailored to streaming applications
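The rechoking incentive described above can be sketched as follows. This is only an illustration, not the BitTorrent reference implementation: the function name `rechoke`, the `regular_slots` parameter, and the use of a per-peer download rate as the measure of cooperation are assumptions made for the example.

```python
import random

def rechoke(download_rates, interested, regular_slots=3, rng=random):
    """Pick the peers to unchoke for the next interval (hypothetical helper).

    download_rates: dict mapping peer id -> rate recently received from that peer
    interested: iterable of peer ids that currently want data from us
    """
    # Tit-for-tat: rank interested peers by how much they have given us.
    ranked = sorted(interested, key=lambda p: download_rates.get(p, 0.0), reverse=True)
    unchoked = set(ranked[:regular_slots])

    # Optimistic unchoke: give one randomly chosen remaining peer a chance,
    # so that newcomers with nothing to trade can still obtain chunks.
    remaining = ranked[regular_slots:]
    if remaining:
        unchoked.add(rng.choice(remaining))
    return unchoked
```

The regular slots reward cooperative peers, while the single random slot is the "random search" that lets a peer discover better partners.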

Evaluation Methodology
• Simulation-based
  – Answers depend on many parameters
  – Hard to control in measurements or to model
• Java-based discrete-event simulator
  – Models queuing delay and transmission delay
  – Remains faithful to BT specifications
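To make the two modeled delays concrete, here is a minimal discrete-event sketch of a single FIFO uplink. It is not the authors' Java simulator; the function name `simulate_uplink`, the single-link setup, and the request format are assumptions chosen for illustration.

```python
import heapq

def simulate_uplink(requests, link_bps):
    """Serve chunk requests over one FIFO link (hypothetical model).

    requests: list of (arrival_time_s, size_bytes)
    Returns (arrival_time, queuing_delay, transmission_delay) in service order.
    """
    events = list(requests)
    heapq.heapify(events)           # event queue ordered by arrival time
    link_free_at = 0.0              # time at which the link becomes idle
    results = []
    while events:
        arrival, size = heapq.heappop(events)
        start = max(arrival, link_free_at)   # wait if the link is busy
        tx = size * 8 / link_bps             # transmission delay in seconds
        link_free_at = start + tx
        results.append((arrival, start - arrival, tx))
    return results
```

A request that arrives while the link is still busy accumulates queuing delay in addition to its own transmission delay.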

BT Limitations
• BT does not account for the real-time needs of streaming applications
  – Chunk selection
    • Peers do not download chunks in sequence
  – Neighbor selection
    • Incentive mechanism makes too many peers wait too long before joining the swarm

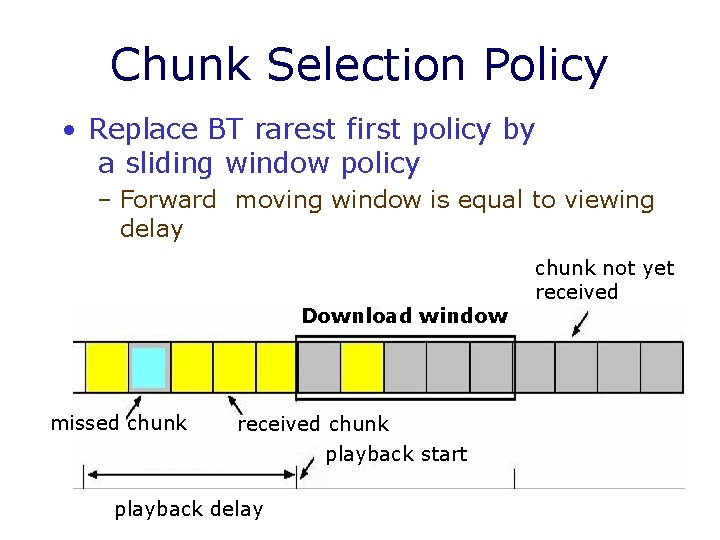

Chunk Selection Policy
• Replace the BT rarest-first policy by a sliding window policy
  – Forward-moving window whose size is equal to the viewing delay
[Figure: download window, showing playback start, playback delay, and received, missed, and not-yet-received chunks]

Two Options
• Sequential policy
  – Peers first download the chunks at the beginning of the window
  – Limits the opportunities to exchange chunks between peers
• Rarest-first policy
  – Peers first download the chunks within the window that are least replicated among their neighbors
  – Keeps swarming feasible by diversifying the chunks available among peers
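The two in-window options can be compared with a small sketch. The function `pick_chunk`, its parameters, and the tie-breaking rule (earliest chunk wins among equally rare chunks) are assumptions for illustration; the slides do not specify these details.

```python
from collections import Counter

def pick_chunk(have, window_start, window_size, neighbor_chunks, policy="rarest"):
    """Choose the next chunk to request inside the sliding window.

    have: set of chunk indices this peer already holds
    neighbor_chunks: dict mapping neighbor id -> set of chunk indices it holds
    Returns a chunk index, or None if no neighbor offers a missing in-window chunk.
    """
    window = range(window_start, window_start + window_size)

    # Count replicas of each missing in-window chunk among the neighbors.
    counts = Counter()
    for chunks in neighbor_chunks.values():
        counts.update(c for c in chunks if c in window and c not in have)
    if not counts:
        return None

    if policy == "sequential":
        return min(counts)  # earliest missing chunk that is available
    # Rarest first inside the window; the earlier index breaks ties (assumption).
    return min(counts, key=lambda c: (counts[c], c))
```

With neighbors holding `{0, 1, 2}`, `{1, 2, 3}`, and `{2, 9}`, a peer that already has chunk 0 and uses a window covering chunks 0 to 3 requests chunk 1 under the sequential policy and chunk 3 under rarest-first; chunk 9 is ignored because it lies outside the window.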

[Figure: plot annotated with "Best" and "Worst"]

Discussion
• Switching to a sliding window policy greatly increases quality of service
  – Must use a rarest-first policy inside the window
  – This change alone does not suffice to achieve a satisfactory quality of service

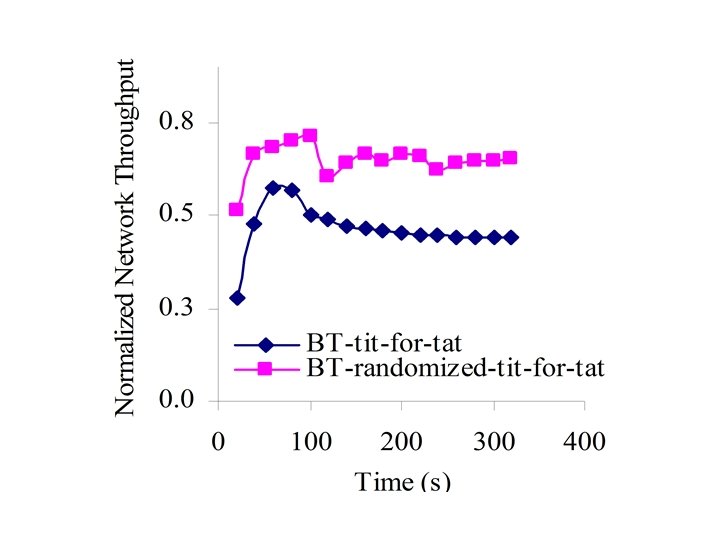

Neighbor Selection Policy
• BT tit-for-tat policy
  – Peers select other peers according to their observed behaviors
  – A significant number of peers suffer from slow start
• Randomized tit-for-tat policy
  – At the beginning of every playback each peer selects neighbors at random
  – Rapid diffusion of new chunks among peers
  – Gives more free tries to a larger number of peers in the swarm to download chunks
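One possible reading of the randomized policy is sketched below. The `in_startup` flag, the `slots` parameter, and the fallback to rate-based ranking outside the startup phase are assumptions; the slides only state that neighbors are selected at random at the beginning of playback.

```python
import random

def select_neighbors(interested, download_rates, slots=4, in_startup=False, rng=random):
    """Choose which peers to serve next (hypothetical helper).

    interested: iterable of peer ids requesting data
    download_rates: dict mapping peer id -> rate recently received from that peer
    in_startup: True while the random selection phase applies (assumption)
    """
    peers = list(interested)
    if in_startup:
        # Randomized phase: every peer has the same chance of a free try.
        return set(rng.sample(peers, min(slots, len(peers))))
    # Regular tit-for-tat: prefer the peers that have uploaded the most to us.
    ranked = sorted(peers, key=lambda p: download_rates.get(p, 0.0), reverse=True)
    return set(ranked[:slots])
```

Under plain tit-for-tat a newcomer with no upload history always ranks last, which is the slow start noted above; the random phase removes that bias.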

Discussion
• Should combine our neighbor selection policy with our sliding window chunk selection policy
• Can then achieve an excellent QoS with playback delays as short as 30 s, as long as the video consumption rate does not exceed 60 % of the network link bandwidth

Comparison with Client-Server Solutions

Chunk size selection
• Small chunks
  – Result in faster chunk downloads
  – Incur more processing overhead
• Larger chunks
  – Cause slow starts for every sliding window
• Our simulations indicate that 256 KB is a good compromise
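The trade-off can be made tangible with some back-of-the-envelope arithmetic. The helper names and the link and video rates used in the usage note are hypothetical values, not figures from the simulations.

```python
def chunk_transfer_seconds(chunk_bytes, link_bps):
    """Transmission time of one chunk over a link of the given bit rate."""
    return chunk_bytes * 8 / link_bps

def chunk_playback_seconds(chunk_bytes, video_bps):
    """Seconds of video carried by one chunk at the given consumption rate."""
    return chunk_bytes * 8 / video_bps

def chunks_per_window(window_seconds, chunk_bytes, video_bps):
    """Number of whole chunks that fit in a window of the given duration."""
    return int(window_seconds // chunk_playback_seconds(chunk_bytes, video_bps))
```

For instance, assuming a 1 Mb/s link and a 600 kb/s video, a 256 KB chunk (262,144 bytes) takes about 2.1 s to transfer and carries about 3.5 s of video, so a 30 s window holds 8 whole chunks. A 1 MB chunk would take four times as long to arrive, which illustrates the slow start of each window.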

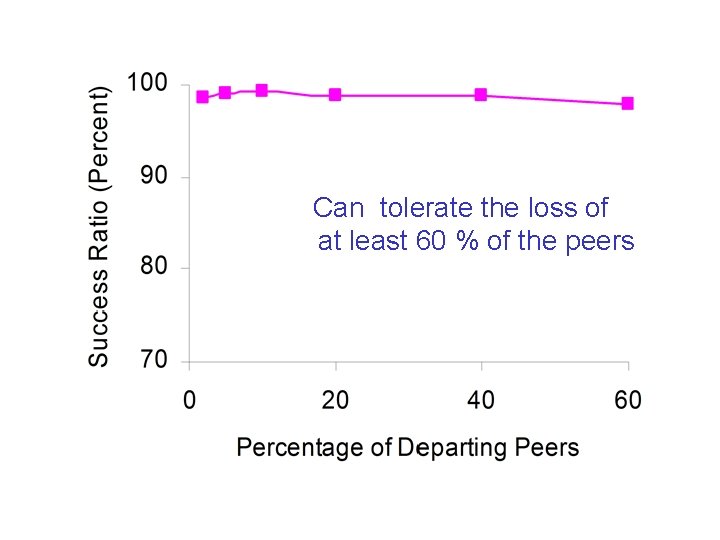

Premature Departures
• Peer departures before the end of the session
  – Can be voluntary or result from network failures
  – When a peer leaves the swarm, it tears down its connections to its neighbors
  – Each of its neighbors loses one of its active connections

Can tolerate the loss of at least 60 % of the peers

Future Work
• Current work
  – On-demand streaming
• Robustness
  – Detect malicious and selfish peers
  – Incorporate a trust management system into the protocol
• Performance evaluation
  – Conduct a comparison study

Thank You – Questions?
Contact: purvi@cs.uh.edu, paris@cs.uh.edu

Extra slides

nVoD
• Dynamics of client participation, i.e., churn
  – Clients do not synchronize their viewing times
• Serve many peers even if they arrive according to different patterns

Admission control policy (I)
• Determine if a new peer request can be accepted without violating the QoS requirements of the existing customers
• Based on a server-oriented staggered broadcast scheme
  – Combining P2P streaming and staggered broadcasting ensures high QoS
  – Beneficial for popular videos

Admission control policy (II)
• Use the tracker to batch clients arriving close in time to form a session
  – Closeness is determined by threshold θ
• Service latency, though server-oriented, is independent of the number of clients
  – Can handle flash crowds
• Dedicate η channels to each video, making the worst-case service latency w ≤ D/η
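The batching step and the latency bound can be sketched as follows. Two assumptions are made for illustration: closeness is measured from the first arrival of each session, and D denotes the video duration, so that with η staggered channels a new broadcast starts every D/η. The function names are hypothetical.

```python
def batch_sessions(arrival_times, theta):
    """Group arrivals into sessions (hypothetical tracker logic).

    A client joins the current session if it arrives within theta of that
    session's first client; otherwise it opens a new session.
    """
    sessions = []
    for t in sorted(arrival_times):
        if sessions and t - sessions[-1][0] <= theta:
            sessions[-1].append(t)
        else:
            sessions.append([t])
    return sessions

def worst_case_latency(duration, channels):
    """Longest wait for the next staggered broadcast: D / eta."""
    return duration / channels
```

With θ = 5, arrivals at times 0, 3, 4, 20, 22, and 50 form three sessions. For a 7200 s video on 12 channels, the worst-case wait is 600 s regardless of how many clients arrive, which is why the scheme can absorb flash crowds.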

Results
• We use the M/D/η queuing model to estimate the effect on the playback delay experienced by the peers
