Peer-to-Peer Filesystems

Slides originally created by Tom Roeder

Nature of P2P Systems

- P2P: communicating peers in the system
  - normally an overlay in the network
  - Hot topic because of Napster, Gnutella, etc.
- In some sense, P2P is older than the name
  - many protocols used symmetric interactions
  - not everything is client-server
- What’s the real definition?
  - no one has a good one, yet
  - depends on what you want to fit in the class

Nature of P2P Systems

- Standard definition
  - symmetric interactions between peers
  - no distinguished server
- Minimally: is the Web a P2P system?
  - We don’t want to say that it is
  - but it is, under this definition
  - I can always run a server if I want: no asymmetry
- There must be more structure than this
  - Let’s try again

Nature of P2P Systems

- Recent definition
  - No distinguished initial state
  - Each server has the same code
  - servers cooperate to handle requests
  - clients don’t matter: servers are the P2P system
- Try again: is the Web P2P?
  - No, not under this definition: servers don’t interact
- Is the Google server farm P2P?
  - Depends on how it’s set up? Probably not.

Overlays

- Two types of overlays
- Unstructured
  - No infrastructure set up for routing
  - Random walks, flood search
- Structured
  - Small World Phenomenon: Kleinberg
  - Set up enough structure to get fast routing
    - We will see O(log n)
    - For special tasks, can get O(1)

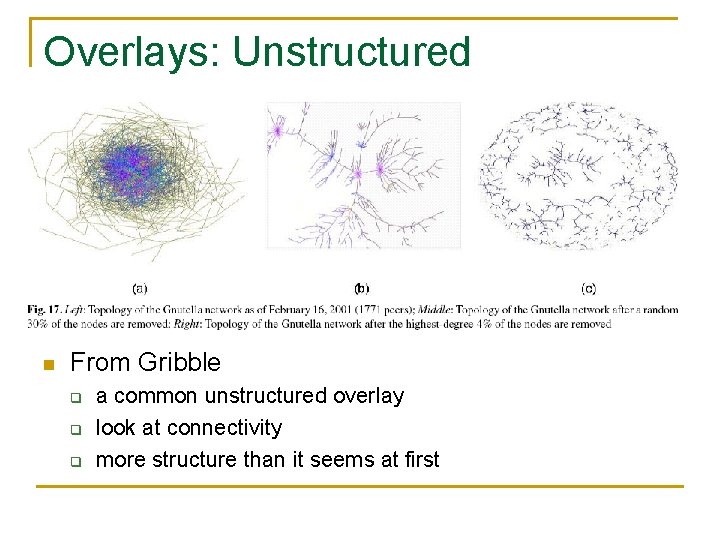

Overlays: Unstructured

- From Gribble
  - a common unstructured overlay
  - look at connectivity
  - more structure than it seems at first

Overlays: Unstructured

- Gossip: state synchronization technique
  - Instead of forced flooding, share state
  - Do so infrequently with one neighbor at a time
  - Original insight from epidemic theory
- Convergence of state is reasonably fast
  - with high probability for almost all nodes
  - good probabilistic guarantees
- Trivial to implement
  - Saves bandwidth and energy consumption
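To make the convergence claim concrete, here is a minimal simulation sketch of push-style gossip. It assumes every node can pick any other node as its gossip partner (a real overlay would pick among its neighbors only); the function name `gossip_rounds` and the seed parameter are illustrative, not from the slides.

```python
import random

def gossip_rounds(num_nodes, seed=0):
    """Count push-gossip rounds until every node holds the update.

    Each round, every informed node shares state with one peer chosen
    uniformly at random (an assumption of this sketch).
    """
    rng = random.Random(seed)
    informed = {0}  # node 0 starts with the new state
    rounds = 0
    while len(informed) < num_nodes:
        # Iterate over a snapshot so that nodes informed during this
        # round only start gossiping in the next round.
        for _node in list(informed):
            peer = rng.randrange(num_nodes)
            informed.add(peer)
        rounds += 1
    return rounds

if __name__ == "__main__":
    for n in (100, 1000, 10000):
        print(n, gossip_rounds(n))
```

Since the informed set can at most double per round, the round count is at least log2 of the node count; in this model it typically stays within a small multiple of that.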

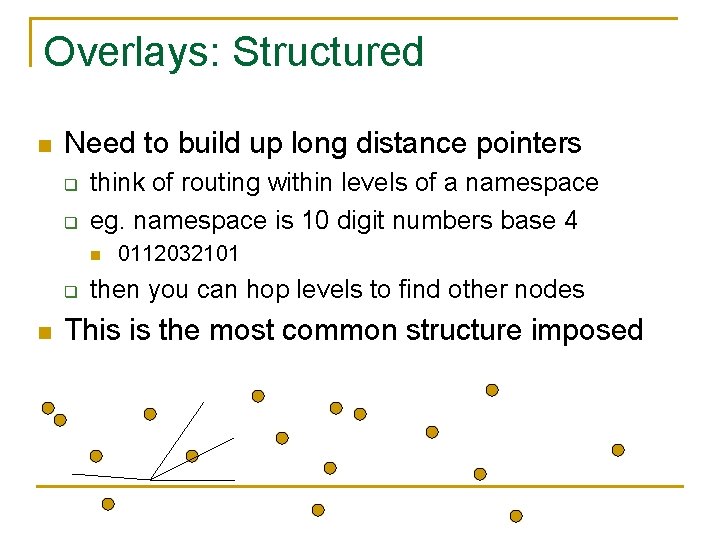

Overlays: Structured

- Need to build up long-distance pointers
  - think of routing within levels of a namespace
  - e.g. namespace is 10-digit numbers base 4
    - 0112032101
  - then you can hop levels to find other nodes
- This is the most common structure imposed
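A small sketch of the namespace idea, assuming ids are written as fixed-width base-4 digit strings so that each digit position is one "level". The helper names `to_base4` and `shared_prefix_len` are made up for illustration.

```python
def to_base4(value, digits=10):
    """Render a non-negative integer as a fixed-width base-4 string."""
    out = []
    for _ in range(digits):
        out.append(str(value % 4))
        value //= 4
    return "".join(reversed(out))

def shared_prefix_len(a, b):
    """Number of leading digits (levels) two ids have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n

if __name__ == "__main__":
    # The two ids agree on their first four levels.
    print(shared_prefix_len("0112032101", "0112300000"))
```

Two ids that share a longer prefix are "closer" in the namespace, so a pointer to any node that matches one more level than you do is a long-distance hop toward the target.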

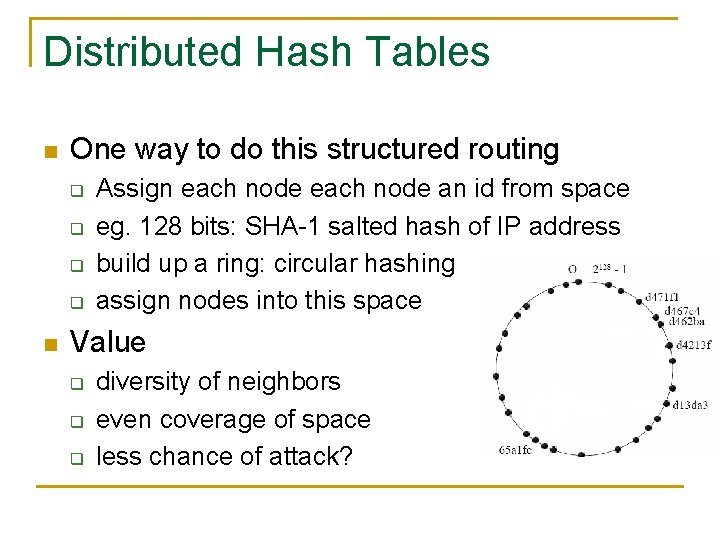

Distributed Hash Tables

- One way to do this structured routing
  - Assign each node an id from space
    - e.g. 128 bits: salted SHA-1 hash of IP address
  - build up a ring: circular hashing
  - assign nodes into this space
- Value
  - diversity of neighbors
  - even coverage of space
  - less chance of attack?
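A minimal sketch of id assignment, assuming the id is taken from a salted SHA-1 hash of the IP address string. SHA-1 produces 160 bits, so this sketch keeps the top 128; the salt value and the helper names are assumptions for illustration only.

```python
import hashlib

ID_BITS = 128
RING_SIZE = 1 << ID_BITS

def node_id(ip, salt=b"example-salt"):
    """Derive a 128-bit id from a salted SHA-1 hash of an IP address string."""
    digest = hashlib.sha1(salt + ip.encode("ascii")).digest()
    # SHA-1 yields 160 bits; keep the top 128 (an assumption of this sketch).
    return int.from_bytes(digest, "big") >> (160 - ID_BITS)

def ring_order(ips):
    """Place nodes on the ring: (id, ip) pairs sorted by id."""
    return sorted((node_id(ip), ip) for ip in ips)

if __name__ == "__main__":
    for nid, ip in ring_order(["10.0.0.1", "10.0.0.2", "10.0.0.3"]):
        print(f"{nid:032x}  {ip}")
```

Adjacent IP addresses land at unrelated points on the ring, which is where the "diversity of neighbors" on the slide comes from.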

Distributed Hash Tables

- Why “hash tables”?
  - Store named objects by hash code
  - Route the object to the nearest location in space
  - key idea: nodes and objects share id space
- How do you find an object without its name?
  - Close names don’t help because of hashing
- Cost of churn?
  - In most P2P apps, many joins and leaves
- Cost of freeloaders?
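The "nodes and objects share id space" idea can be sketched as follows. This toy version assumes every node is known globally and simply picks the node whose id is nearest to the object's hash; a real DHT reaches that node by routing hop by hop. `ToyDHT` and its methods are hypothetical names.

```python
import hashlib

RING = 1 << 128

def key_id(name):
    """Hash a name into a 128-bit id (first 16 bytes of SHA-1)."""
    return int.from_bytes(hashlib.sha1(name.encode("utf-8")).digest()[:16], "big")

def ring_distance(a, b):
    """Shortest distance between two ids on the circular id space."""
    d = (a - b) % RING
    return min(d, RING - d)

class ToyDHT:
    """All nodes are known globally here; a real DHT routes hop by hop."""

    def __init__(self, node_names):
        # Node ids and object ids come from the same hash function.
        self.stores = {key_id(n): {} for n in node_names}

    def owner(self, name):
        k = key_id(name)
        return min(self.stores, key=lambda nid: ring_distance(nid, k))

    def put(self, name, value):
        self.stores[self.owner(name)][name] = value

    def get(self, name):
        return self.stores[self.owner(name)].get(name)
```

Note that `key_id("report1")` and `key_id("report2")` are unrelated values, which is why similar names give no help in locating an object.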

Distributed Hash Tables

- Dangers
  - Sybil attacks: one node becomes many
  - id attacks: can place your node wherever
  - Solutions hard to come by
    - crypto puzzles / money for IDs?
    - Certification of routing and storage?
- Many routing frameworks in this spirit
  - Very popular in late 90s, early 00s
  - Pastry, Tapestry, CAN, Chord, Kademlia

Applications of DHTs

- Almost anything that involves routing
  - illegal file sharing: obvious application
  - backup/storage
  - filesystems
  - P2P DNS
- Good properties
  - O(log N) hops to find an id (but how good is this?)
  - Non-fate-sharing id neighbors
  - Random distribution of objects to nodes

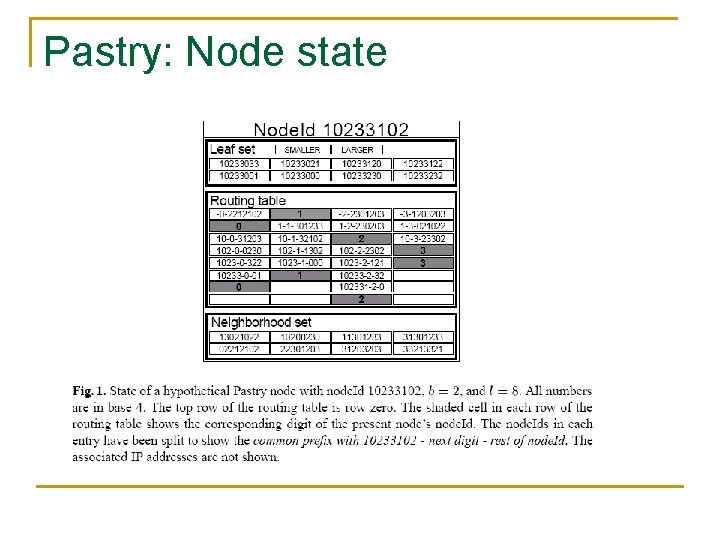

Pastry: Node state

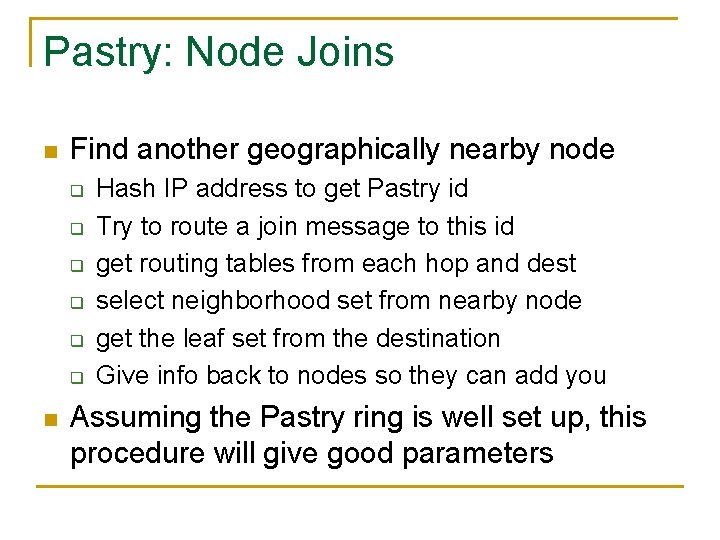

Pastry: Node Joins

- Find another geographically nearby node
- Hash IP address to get Pastry id
- Try to route a join message to this id
  - get routing tables from each hop and dest
  - select neighborhood set from nearby node
  - get the leaf set from the destination
- Give info back to nodes so they can add you
- Assuming the Pastry ring is well set up, this procedure will give good parameters

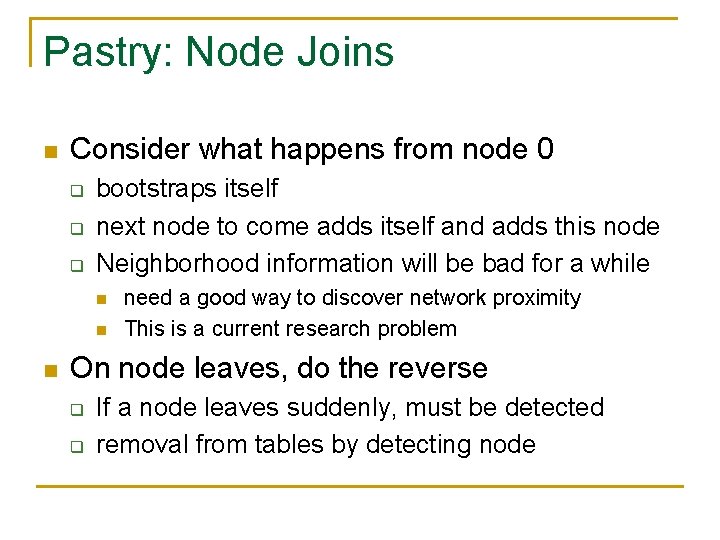

Pastry: Node Joins

- Consider what happens from node 0
  - bootstraps itself
  - next node to come adds itself and adds this node
  - Neighborhood information will be bad for a while
    - need a good way to discover network proximity
    - This is a current research problem
- On node leaves, do the reverse
  - If a node leaves suddenly, must be detected
  - removal from tables by detecting node

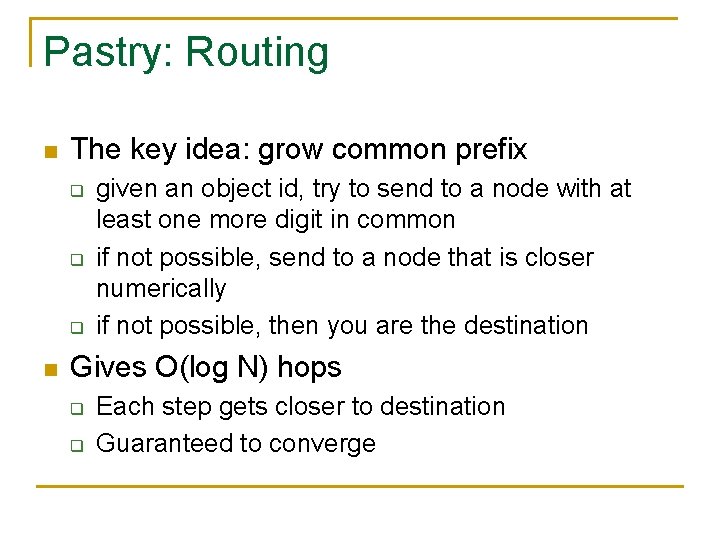

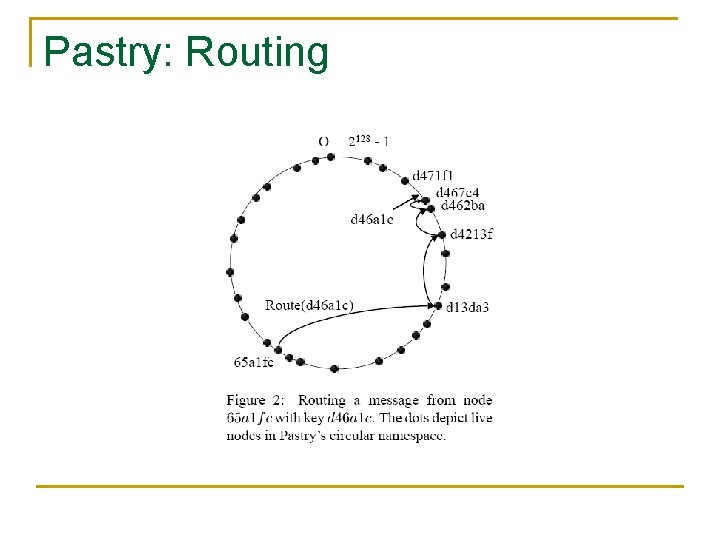

Pastry: Routing

- The key idea: grow common prefix
  - given an object id, try to send to a node with at least one more digit in common
  - if not possible, send to a node that is closer numerically
  - if not possible, then you are the destination
- Gives O(log N) hops
  - Each step gets closer to destination
  - Guaranteed to converge
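The three rules above can be sketched as a next-hop function. This is a simplification under stated assumptions, not Pastry's actual data structures: ids are equal-length base-4 digit strings, `known` stands in for whatever pointers a node holds (routing table plus leaf set), and numeric distance ignores wraparound on the ring.

```python
def shared_prefix_len(a, b):
    """Number of leading digits two ids have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n

def next_hop(current, key, known, base=4):
    """Pick the next node for `key`, or None if `current` is the destination."""
    here = shared_prefix_len(current, key)

    def dist(n):
        return abs(int(n, base) - int(key, base))

    # Rule 1: prefer a node sharing at least one more digit with the key.
    longer = [n for n in known if shared_prefix_len(n, key) > here]
    if longer:
        return max(longer, key=lambda n: shared_prefix_len(n, key))
    # Rule 2: otherwise a node with an equally long prefix, numerically closer.
    closer = [n for n in known
              if shared_prefix_len(n, key) >= here and dist(n) < dist(current)]
    if closer:
        return min(closer, key=dist)
    # Rule 3: nobody is better, so this node is the destination.
    return None

def route(start, key, pointers):
    """Follow next_hop until it returns None; `pointers` maps id -> known ids."""
    path = [start]
    while True:
        hop = next_hop(path[-1], key, pointers[path[-1]])
        if hop is None:
            return path
        path.append(hop)
```

Every hop either lengthens the shared prefix or keeps it and shrinks the numeric distance, so the walk cannot loop; that is the convergence argument on the slide in miniature.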

Pastry: Routing

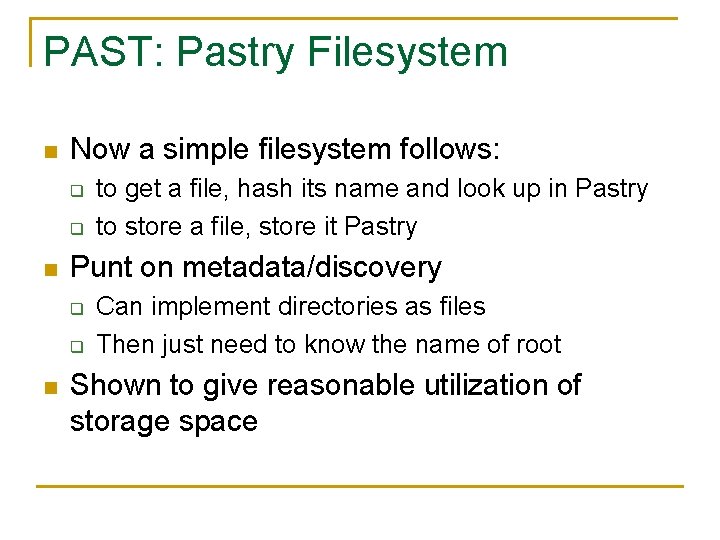

PAST: Pastry Filesystem

- Now a simple filesystem follows:
  - to get a file, hash its name and look up in Pastry
  - to store a file, store it in Pastry
- Punt on metadata/discovery
  - Can implement directories as files
  - Then just need to know the name of root
- Shown to give reasonable utilization of storage space
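A rough sketch of "directories as files", assuming a store that only offers put and get by hashed name. `ToyStore` is a local dict standing in for Pastry, and the JSON directory encoding is an assumption of this example, not PAST's format.

```python
import hashlib
import json

class ToyStore:
    """Stands in for Pastry: maps hash(name) -> bytes in one local dict."""

    def __init__(self):
        self.blobs = {}

    @staticmethod
    def _key(name):
        return hashlib.sha1(name.encode("utf-8")).hexdigest()

    def put(self, name, data):
        self.blobs[self._key(name)] = data

    def get(self, name):
        return self.blobs[self._key(name)]  # raises KeyError if absent

def link(store, path):
    """Record `path` in its parent directory file, up to the root."""
    if path == "/":
        return
    parent, _, leaf = path.rpartition("/")
    parent = parent or "/"
    try:
        entries = json.loads(store.get(parent))
    except KeyError:
        entries = []  # parent directory file does not exist yet
    if leaf not in entries:
        entries.append(leaf)
        store.put(parent, json.dumps(entries).encode("utf-8"))
    link(store, parent)

def write_file(store, path, data):
    """Store the file under its own name, then list it in its directory."""
    store.put(path, data)
    link(store, path)

def list_dir(store, path="/"):
    return json.loads(store.get(path))
```

Starting from the well-known name "/", a client can discover everything else by reading directory files, which is all the metadata support this design needs.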

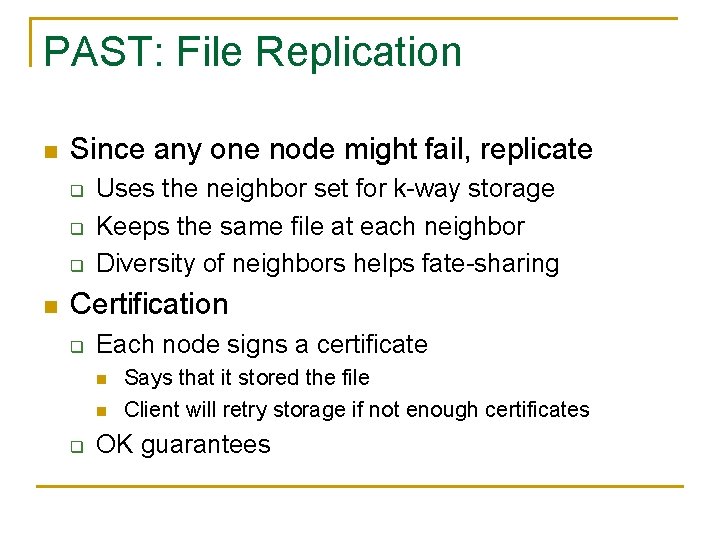

PAST: File Replication

- Since any one node might fail, replicate
  - Uses the neighbor set for k-way storage
  - Keeps the same file at each neighbor
  - Diversity of neighbors helps avoid fate-sharing
- Certification
  - Each node signs a certificate
    - Says that it stored the file
    - Client will retry storage if not enough certificates
  - OK guarantees
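The store-and-certify loop might look roughly like this. The sketch uses an HMAC as a stand-in for a real signature, which means the verifier must share each node's secret; an actual deployment would use public-key signatures. All class and function names here are hypothetical.

```python
import hashlib
import hmac

class StorageNode:
    def __init__(self, name, secret, healthy=True):
        self.name, self.secret, self.healthy = name, secret, healthy
        self.files = {}

    def store(self, file_id, data):
        """Keep a copy and return a receipt, or None if the node is down."""
        if not self.healthy:
            return None
        self.files[file_id] = data
        return hmac.new(self.secret, file_id.encode() + data, hashlib.sha256).digest()

def verify(node, file_id, data, receipt):
    """Check a receipt (HMAC stand-in for a signed storage certificate)."""
    if receipt is None:
        return False
    expected = hmac.new(node.secret, file_id.encode() + data, hashlib.sha256).digest()
    return hmac.compare_digest(expected, receipt)

def replicate(candidates, file_id, data, k):
    """Try candidates in order until k valid receipts are collected."""
    holders = []
    for node in candidates:
        if len(holders) == k:
            break
        receipt = node.store(file_id, data)
        if verify(node, file_id, data, receipt):
            holders.append(node.name)
    return holders  # fewer than k names means the client should retry
```

If `replicate` returns fewer than `k` names, the client knows it lacks enough certificates and can retry, matching the retry bullet above.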

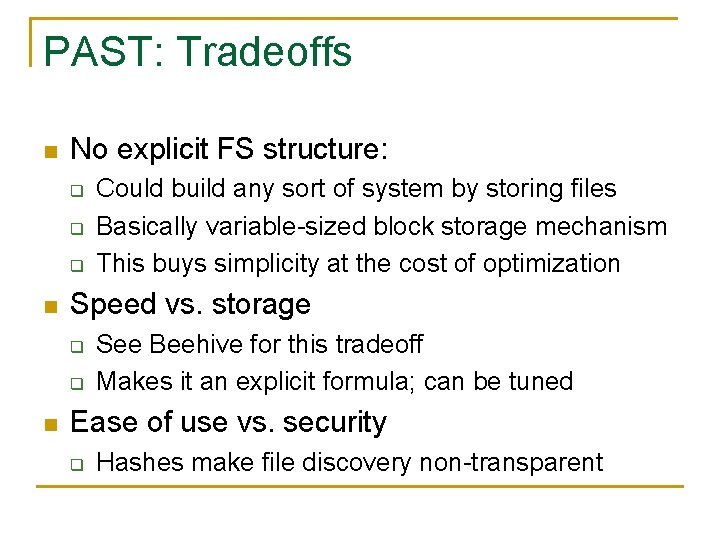

PAST: Tradeoffs

- No explicit FS structure:
  - Could build any sort of system by storing files
  - Basically variable-sized block storage mechanism
  - This buys simplicity at the cost of optimization
- Speed vs. storage
  - See Beehive for this tradeoff
  - Makes it an explicit formula; can be tuned
- Ease of use vs. security
  - Hashes make file discovery non-transparent

Rationale and Validation

- Backing up on other systems
  - no fate sharing
  - automatic backup by storing the file
- But
  - Cost much higher than regular filesystem
  - Incentives: why should I store your files?
  - How is this better than tape backup?
  - How is this affected by churn/freeloaders?
  - Will anyone ever use it?

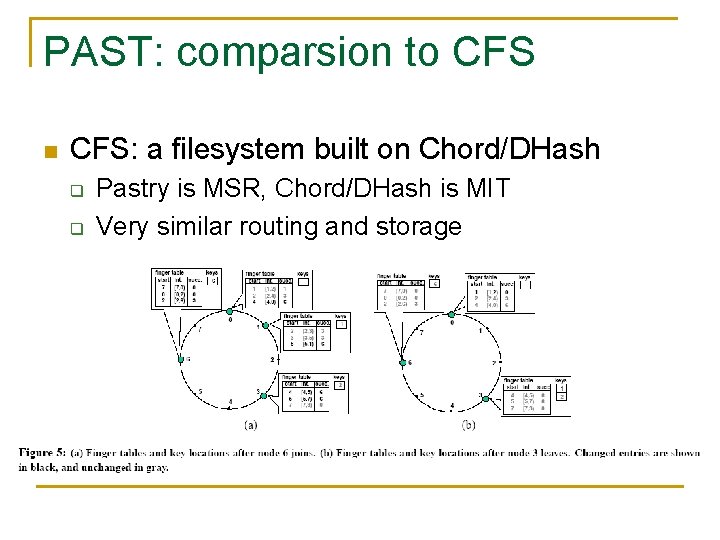

PAST: comparison to CFS

- CFS: a filesystem built on Chord/DHash
  - Pastry is MSR, Chord/DHash is MIT
  - Very similar routing and storage

PAST: comparison to CFS

- PAST stores files, CFS blocks
  - Thus CFS can use more fine-grained space
  - lookup could be much longer
    - get each block: must go through routing for each
  - CFS claims: ftp-like speed
    - Could imagine much faster: get blocks in parallel
    - thus routing is slowing them down
    - Remember: hops here are overlay, not internet, hops
- Load balancing in CFS
  - predictable storage requirements per file per node

Issues

- Faster lookup.
  - Cornell’s Kelips, Beehive DHTs achieve 1-hop lookups
- Churn
  - When nodes come and go, some algorithms perform repairs that involve disruptive overheads
  - For example, CFS and PAST are at risk of copying tons of data to maintain replication levels!
- Robustness to network partitioning
  - Chord, for example, can develop a split brain problem!
- Legality. Are these good for anything legal?

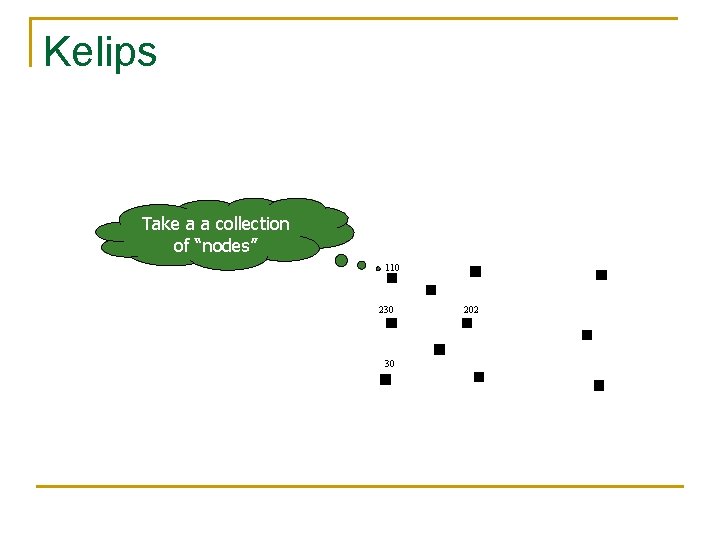

Kelips

Take a collection of “nodes”: 110, 230, 30, 202

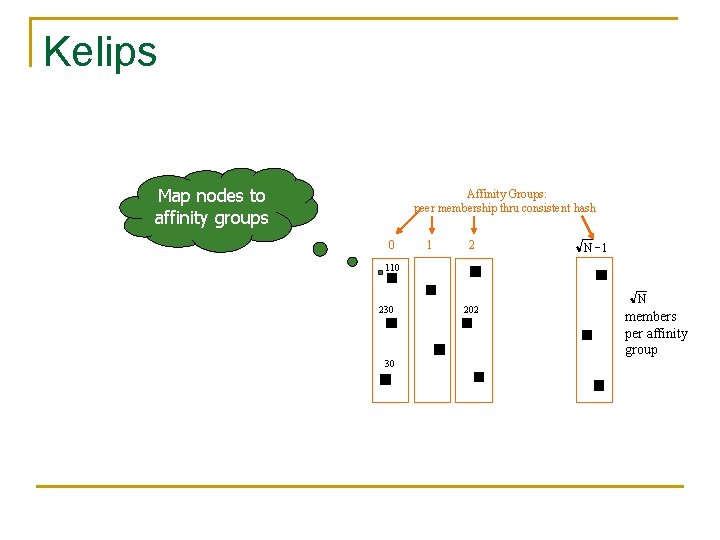

Kelips

Map nodes to affinity groups

- Affinity groups (0, 1, 2, …, √N - 1): peer membership through consistent hash
- Nodes shown: 110, 230, 30, 202
- √N members per affinity group

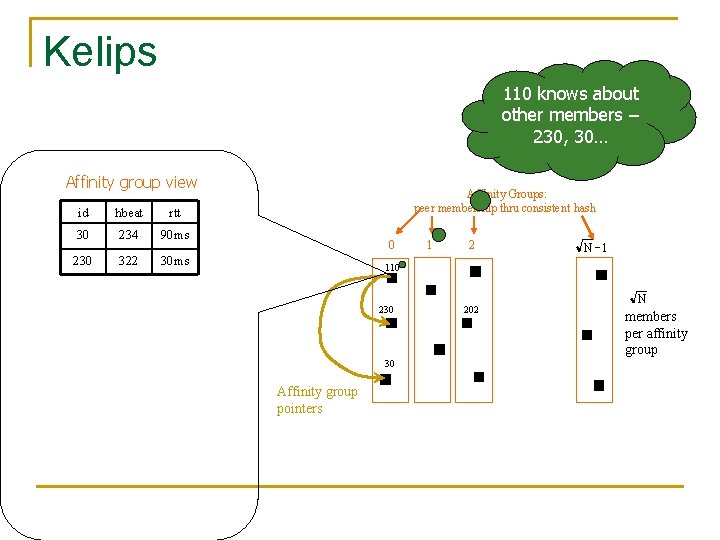

Kelips

110 knows about other members: 230, 30…

Affinity group view:

| id | hbeat | rtt |
|---|---|---|
| 30 | 234 | 90 ms |
| 230 | 322 | 30 ms |

- Affinity groups (0, 1, 2, …, √N - 1): peer membership through consistent hash
- Nodes shown: 110, 230, 30, 202
- Affinity group pointers
- √N members per affinity group

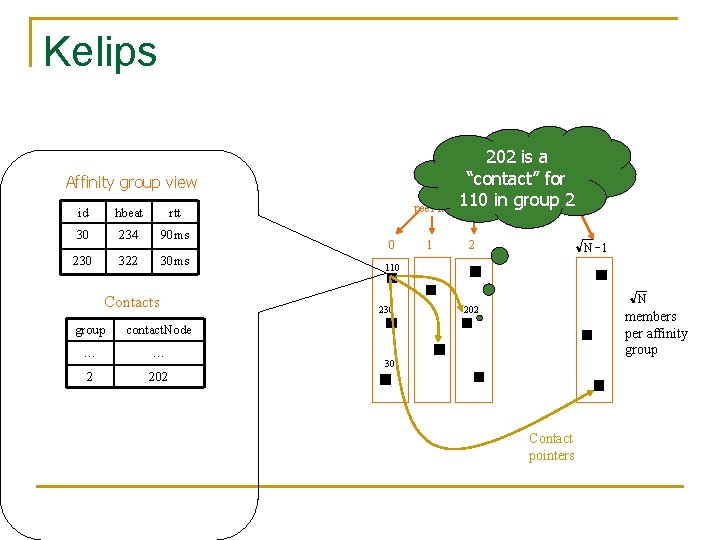

Kelips

202 is a “contact” for 110 in group 2

Affinity group view:

| id | hbeat | rtt |
|---|---|---|
| 30 | 234 | 90 ms |
| 230 | 322 | 30 ms |

Contacts:

| group | contactNode |
|---|---|
| … | … |
| 2 | 202 |

- Affinity groups (0, 1, 2, …, √N - 1): peer membership through consistent hash
- Nodes shown: 110, 230, 30, 202
- Contact pointers
- √N members per affinity group

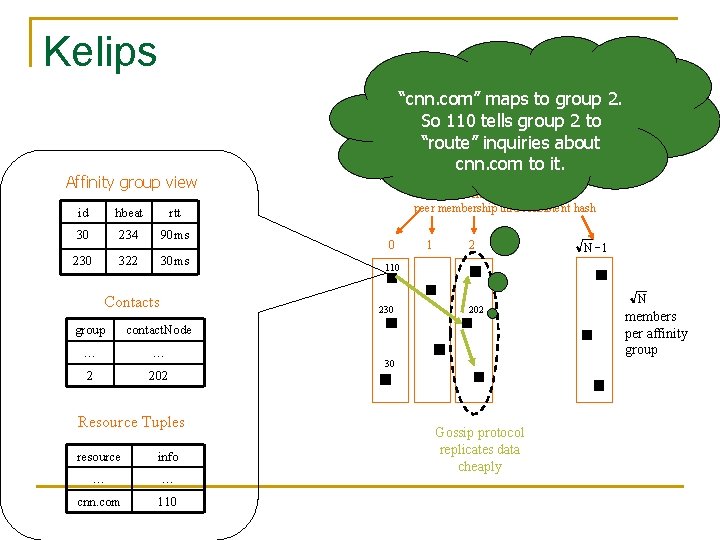

Kelips

“cnn.com” maps to group 2. So 110 tells group 2 to “route” inquiries about cnn.com to it.

Affinity group view:

| id | hbeat | rtt |
|---|---|---|
| 30 | 234 | 90 ms |
| 230 | 322 | 30 ms |

Contacts:

| group | contactNode |
|---|---|
| … | … |
| 2 | 202 |

Resource tuples:

| resource | info |
|---|---|
| … | … |
| cnn.com | 110 |

- Affinity groups (0, 1, 2, …, √N - 1): peer membership through consistent hash
- Nodes shown: 110, 230, 30, 202
- Gossip protocol replicates data cheaply
- √N members per affinity group

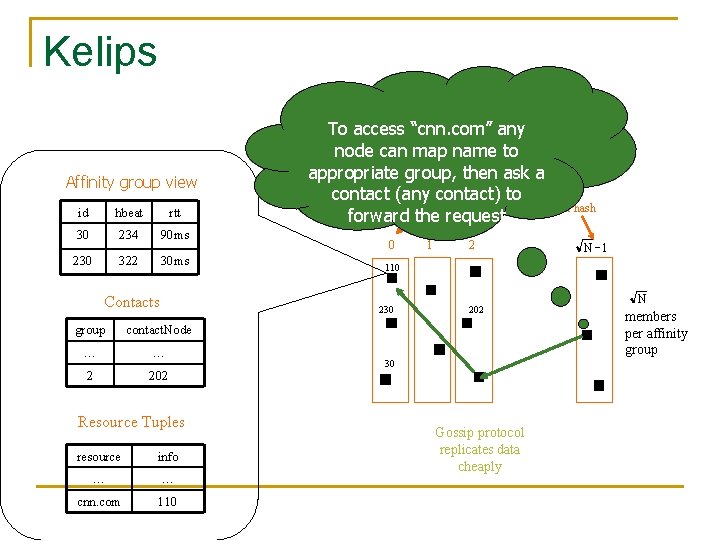

Kelips

To access “cnn.com”, any node can map the name to the appropriate group, then ask a contact (any contact) to forward the request.

Affinity group view:

| id | hbeat | rtt |
|---|---|---|
| 30 | 234 | 90 ms |
| 230 | 322 | 30 ms |

Contacts:

| group | contactNode |
|---|---|
| … | … |
| 2 | 202 |

Resource tuples:

| resource | info |
|---|---|
| … | … |
| cnn.com | 110 |

- Affinity groups (0, 1, 2, …, √N - 1): peer membership through consistent hash
- Nodes shown: 110, 230, 30, 202
- Gossip protocol replicates data cheaply
- √N members per affinity group
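The insert and lookup path can be sketched as below. This is a toy model under several assumptions: group assignment is a plain SHA-1 hash modulo the number of groups, each node keeps exactly one contact per foreign group, and gossip is replaced by an immediate copy to every member of the group. `KelipsNode`, `group_of`, and `build` are illustrative names, not the Kelips implementation.

```python
import hashlib

def group_of(name, num_groups):
    """Map a node or resource name to an affinity group by hashing."""
    digest = hashlib.sha1(name.encode("utf-8")).digest()
    return int.from_bytes(digest, "big") % num_groups

class KelipsNode:
    def __init__(self, name, num_groups):
        self.name = name
        self.num_groups = num_groups
        self.group = group_of(name, num_groups)
        self.view = []      # other members of this node's own affinity group
        self.contacts = {}  # foreign group number -> one node in that group
        self.tuples = {}    # resource name -> name of the node holding it

    def receive_tuple(self, resource, holder):
        """Accept a tuple and copy it to the group (gossip does this lazily)."""
        self.tuples[resource] = holder
        for peer in self.view:
            peer.tuples[resource] = holder

    def _entry_point(self, resource):
        g = group_of(resource, self.num_groups)
        return self if g == self.group else self.contacts[g]

    def insert(self, resource):
        """Tell the resource's group to route inquiries to this node."""
        self._entry_point(resource).receive_tuple(resource, self.name)

    def lookup(self, resource):
        """One hop: ask self or a contact in the resource's group."""
        return self._entry_point(resource).tuples.get(resource)

def build(names, num_groups):
    """Create nodes and wire up group views and one contact per foreign group."""
    nodes = [KelipsNode(n, num_groups) for n in names]
    for a in nodes:
        for b in nodes:
            if a is b:
                continue
            if a.group == b.group:
                a.view.append(b)
            else:
                a.contacts.setdefault(b.group, b)
    return nodes
```

Because every member of the target group holds the tuple, any contact can answer, which is how the design gets a lookup in one hop at the cost of replicating tuples across the group.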

Conclusions

- Tradeoffs are critical
  - Why are you using it?
  - What sort of security/anonymity guarantees?
- DHT applications
  - Think of a good one and become famous
- PAST
  - caches whole files
  - Save some routing overhead
  - Harder to implement true filesystem

References

- A. Rowstron and P. Druschel, "Pastry: Scalable, distributed object location and routing for large-scale peer-to-peer systems". IFIP/ACM International Conference on Distributed Systems Platforms (Middleware), Heidelberg, Germany, pages 329-350, November 2001.
- A. Rowstron and P. Druschel, "Storage management and caching in PAST, a large-scale, persistent peer-to-peer storage utility", ACM Symposium on Operating Systems Principles (SOSP'01), Banff, Canada, October 2001.
- Ion Stoica, Robert Morris, David Karger, M. Frans Kaashoek, and Hari Balakrishnan, Chord: A Scalable Peer-to-peer Lookup Service for Internet Applications, ACM SIGCOMM 2001, San Diego, CA, August 2001, pp. 149-160.

References

- Frank Dabek, M. Frans Kaashoek, David Karger, Robert Morris, and Ion Stoica, Wide-area cooperative storage with CFS, ACM SOSP 2001, Banff, October 2001.
- Stefan Saroiu, P. Krishna Gummadi, and Steven D. Gribble. A Measurement Study of Peer-to-Peer File Sharing Systems, Proceedings of Multimedia Computing and Networking 2002 (MMCN'02), San Jose, CA, January 2002.
- Kleinberg
- C. G. Plaxton, R. Rajaraman, and A. W. Richa. Accessing nearby copies of replicated objects in a distributed environment. In Proceedings of the 9th Annual ACM Symposium on Parallel Algorithms and Architectures, Newport, Rhode Island, pages 311-320, June 1997.

- Slides: 33