PEDIATRIC PEM FELLOW BIOSTATS FOR THE BOARDS Amy H Kaji, MD, PhD Harbor-UCLA Medical Center Part I - August 13, 2015 Part II – December 15, 2015 Modified by Kelly D. Young, MD, April 2016

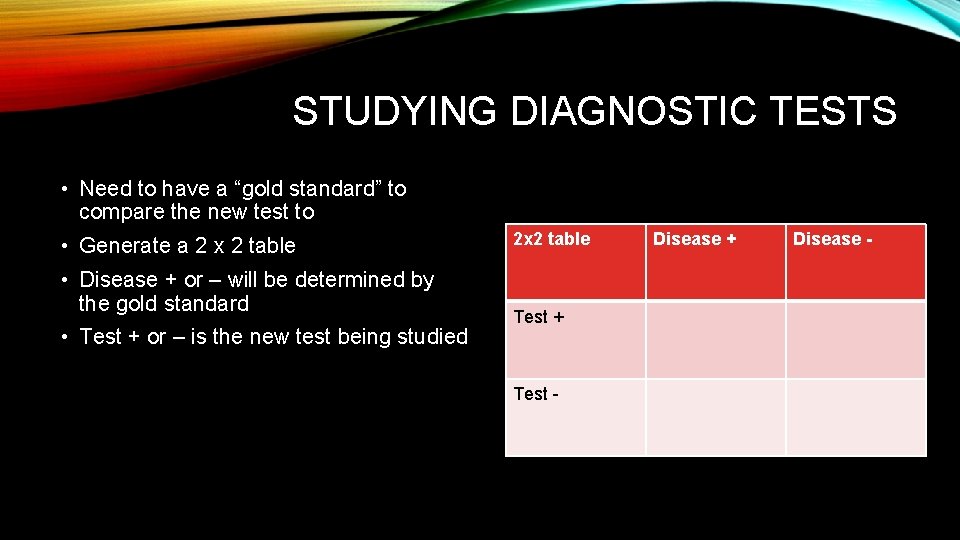

STUDYING DIAGNOSTIC TESTS • Need to have a “gold standard” to compare the new test to • Generate a 2 x 2 table • Disease + or – will be determined by the gold standard • Test + or – is the new test being studied

2 x 2 table:

| | Disease + | Disease - |
|---|---|---|
| Test + | | |
| Test - | | |

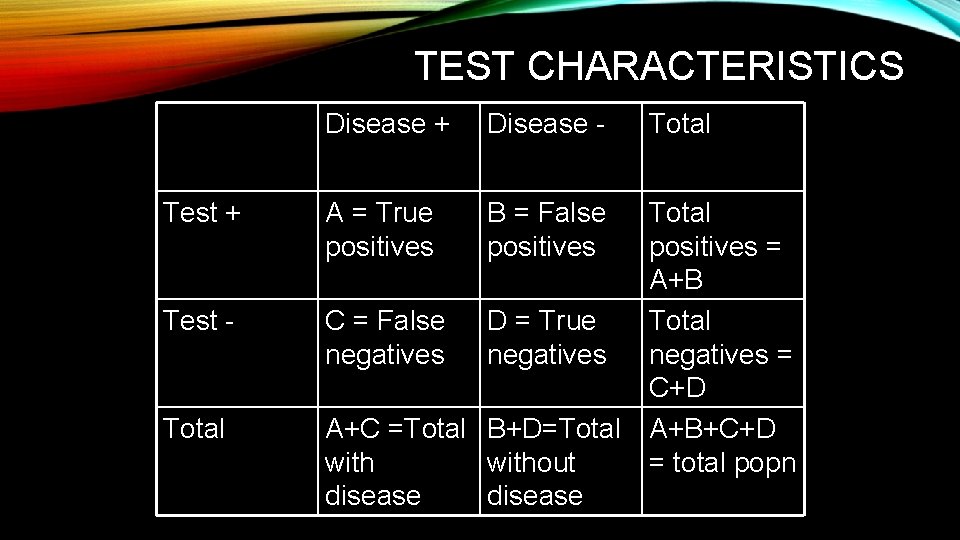

TEST CHARACTERISTICS

| | Disease + | Disease - | Total |
|---|---|---|---|
| Test + | A = True positives | B = False positives | A+B = Total positives |
| Test - | C = False negatives | D = True negatives | C+D = Total negatives |
| Total | A+C = Total with disease | B+D = Total without disease | A+B+C+D = Total population |

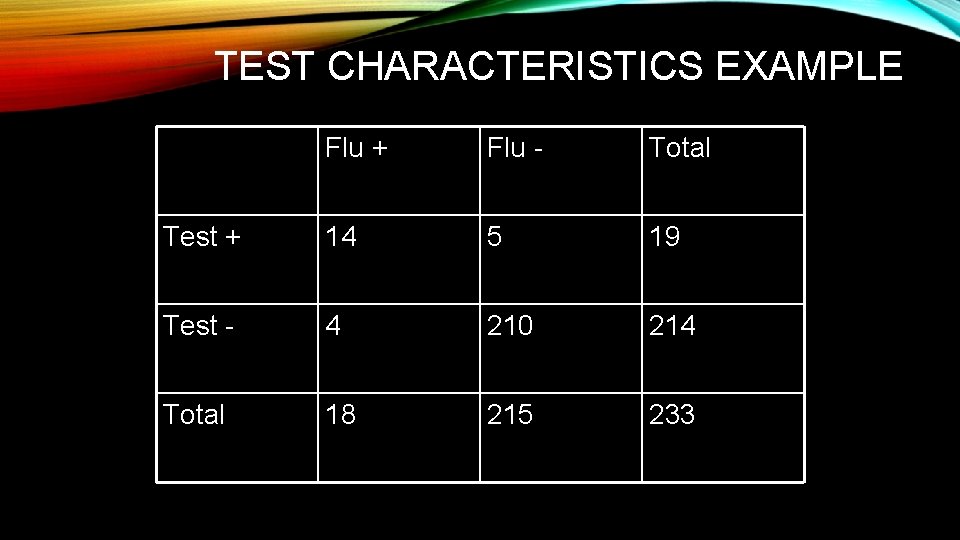

TEST CHARACTERISTICS EXAMPLE

| | Flu + | Flu - | Total |
|---|---|---|---|
| Test + | 14 | 5 | 19 |
| Test - | 4 | 210 | 214 |
| Total | 18 | 215 | 233 |

TEST CHARACTERISTICS-SENSITIVITY • Sensitivity = probability that a patient with the disease will have a positive test: A/(A+C) • If there are 18 patients with influenza, and 14 have a positive result on a rapid bedside test for influenza, the sensitivity = 14/18 or 78%. • Mnemonics for sensitivity • PID or Positive in Disease! • “A perfectly Sensitive test, when Negative, rules Out disease” = SnNOUT

TEST CHARACTERISTICS-SPECIFICITY • Specificity = probability that a patient without the disease will have a negative test: D/(B+D) • There were 215 patients without the disease, of whom 210 patients had a negative test, so the specificity = 210/215 or 98%. • Mnemonics for specificity • NIH or Negative in Health • “A perfectly Specific test, when Positive, rules disease IN” = SpPIN

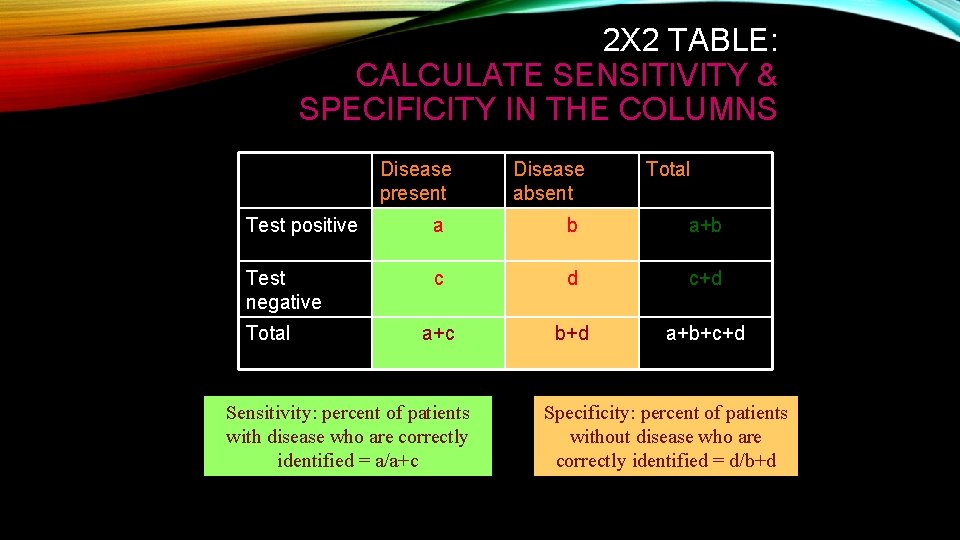

2 X 2 TABLE: CALCULATE SENSITIVITY & SPECIFICITY IN THE COLUMNS

| | Disease present | Disease absent | Total |
|---|---|---|---|
| Test positive | a | b | a+b |
| Test negative | c | d | c+d |
| Total | a+c | b+d | a+b+c+d |

Sensitivity: percent of patients with disease who are correctly identified = a/(a+c)

Specificity: percent of patients without disease who are correctly identified = d/(b+d)

TEST CHARACTERISTICS • False positive rate or alpha is B/(B+D) • False positive rate equals the FP divided by the sum of the FP and TN. It can be considered the “reverse” of the specificity (1 - specificity), and it is the probability of a positive test in an individual who does not have the condition. • False negative rate or beta is C/(A+C) • False negative rate equals the FN divided by the sum of the FN and TP. It can be considered the “reverse” of the sensitivity (1 - sensitivity), and it is the probability of a negative test in an individual who does have the condition.

TEST CHARACTERISTICS-PPV • Positive Predictive Value (PPV) = probability that a patient with a positive test has the disease. • A/(A+B) • Of the 19 patients with a positive flu test, 14 had the disease. • PPV = 14/19 or 74%

TEST CHARACTERISTICS-NPV • Negative Predictive Value (NPV) = the probability that a patient with a negative test does not have the disease. • D/(C+D) • Of the 214 patients with a negative test, there were 210 who did not have the flu. • NPV = 210/214 or 98%

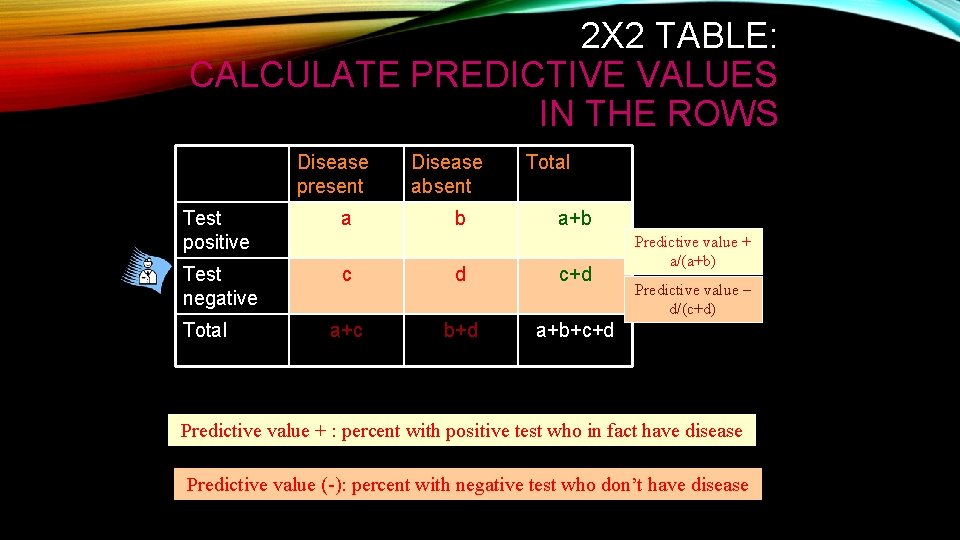

2 X 2 TABLE: CALCULATE PREDICTIVE VALUES IN THE ROWS

| | Disease present | Disease absent | Total | Predictive value |
|---|---|---|---|---|
| Test positive | a | b | a+b | Predictive value (+) = a/(a+b) |
| Test negative | c | d | c+d | Predictive value (-) = d/(c+d) |
| Total | a+c | b+d | a+b+c+d | |

Predictive value (+): percent with positive test who in fact have disease

Predictive value (-): percent with negative test who don’t have disease

TEST CHARACTERISTICS • Note that PPV and NPV depend upon the prevalence of disease or the pretest probability. • The probability of flu before obtaining the test result was already only 18/233 or 7.7% (or the probability that the patient did not have the flu = 100 - 7.7 = 92.3%). • NPV will always be high when the pre-test probability of the disease is low.

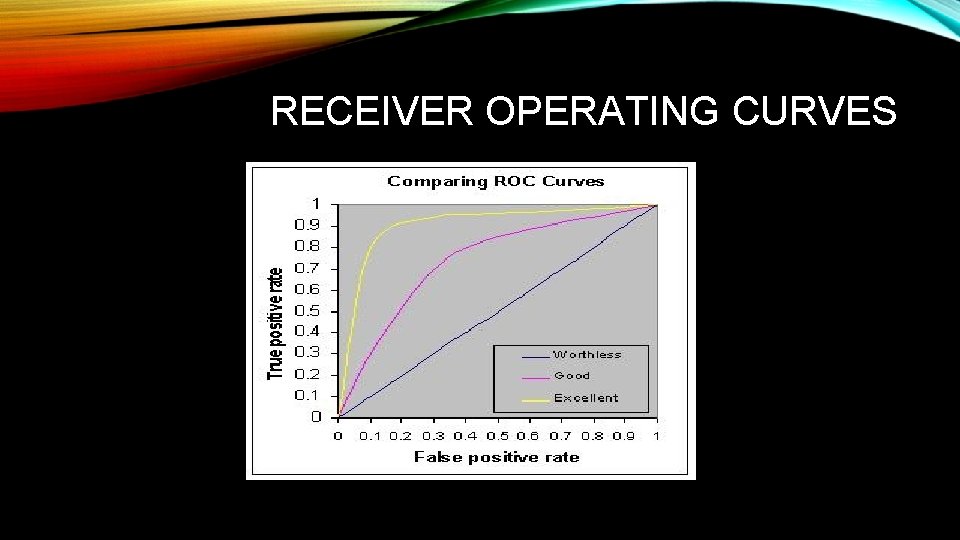

RECEIVER OPERATING CURVES • Illustrates performance of a binary classifier system as its discrimination threshold is varied. • That is, used to evaluate performance of a test that gives a positive or negative result. • Created by plotting the fraction of true positives out of all those with disease (TPR = true positive rate) vs. the fraction of false positives out of all those without disease (FPR = false positive rate), at various threshold settings.

RECEIVER OPERATING CURVES • TPR is also known as sensitivity, and FPR is one minus the specificity or true negative rate. • Accuracy of test represented by area under the ROC curve: greater area (the closer the line to upper left corner of graph) = increased accuracy. • Plot sensitivity on the Y axis and (1 -specificity) on the X axis

RECEIVER OPERATING CURVES

LIKELIHOOD RATIOS • Likelihood ratios (positive and negative) can be calculated from the sensitivity and specificity of the test • LR+ = sensitivity/(1 - specificity) • LR- = (1 - sensitivity)/specificity • Pre-test probability for an individual may be estimated by the prevalence of the disease • Pretest odds = pretest probability/(1 - pretest probability) • Post-test odds are calculated by multiplying pretest odds by the likelihood ratio • Post-test probability = posttest odds/(posttest odds + 1)

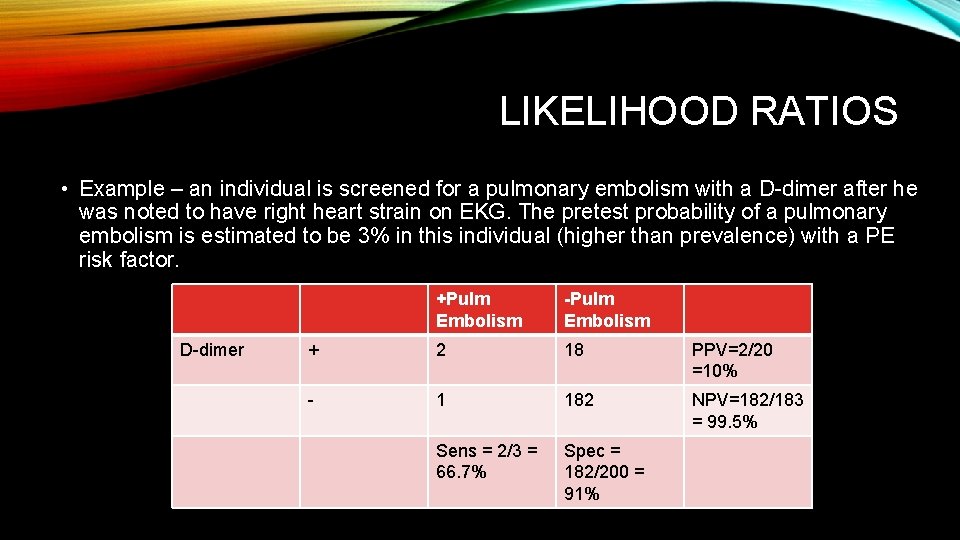

LIKELIHOOD RATIOS • Example – an individual is screened for a pulmonary embolism with a D-dimer after he was noted to have right heart strain on EKG. The pretest probability of a pulmonary embolism is estimated to be 3% in this individual (higher than prevalence) with a PE risk factor.

| D-dimer | + Pulm Embolism | - Pulm Embolism | Predictive value |
|---|---|---|---|
| + | 2 | 18 | PPV = 2/20 = 10% |
| - | 1 | 182 | NPV = 182/183 = 99.5% |
| | Sens = 2/3 = 66.7% | Spec = 182/200 = 91% | |

LIKELIHOOD RATIO • LR+ = sens/(1 - spec) = 0.667/(1 - 0.91) = 7.4 • LR- = (1 - sens)/spec = (1 - 0.667)/0.91 = 0.37 • Pretest probability = 0.03 • Pretest odds = 0.03/(1 - 0.03) = 0.0309 • Positive posttest odds (PPO) = pretest odds x LR+ = 0.0309 x 7.4 = 0.229 • Positive posttest probability = PPO/(PPO+1) = 0.229/(0.229+1) = 0.186 or 18.6% • A positive D-dimer in this individual yields a post-test probability of pulmonary embolism of 18.6%
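The odds conversion in this worked example can be sketched in a few lines of Python. The helper name `post_test_probability` is an assumption made for illustration; the inputs are the D-dimer counts and the 3% pretest probability from the slides. The same helper applied to LR- gives the probability after a negative test, which the slides do not work out.

```python
def post_test_probability(pretest_prob, likelihood_ratio):
    """Convert a pretest probability to a post-test probability via odds."""
    pretest_odds = pretest_prob / (1 - pretest_prob)
    post_test_odds = pretest_odds * likelihood_ratio
    return post_test_odds / (post_test_odds + 1)


# D-dimer example from the slides
sens = 2 / 3        # 2 of 3 patients with PE tested positive
spec = 182 / 200    # 182 of 200 patients without PE tested negative

lr_pos = sens / (1 - spec)   # LR+ = sensitivity / (1 - specificity)
lr_neg = (1 - sens) / spec   # LR- = (1 - sensitivity) / specificity

p_pos = post_test_probability(0.03, lr_pos)
p_neg = post_test_probability(0.03, lr_neg)

print(f"LR+ = {lr_pos:.1f}, LR- = {lr_neg:.2f}")
print(f"Post-test probability after a positive D-dimer: {p_pos:.1%}")
print(f"Post-test probability after a negative D-dimer: {p_neg:.1%}")
```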

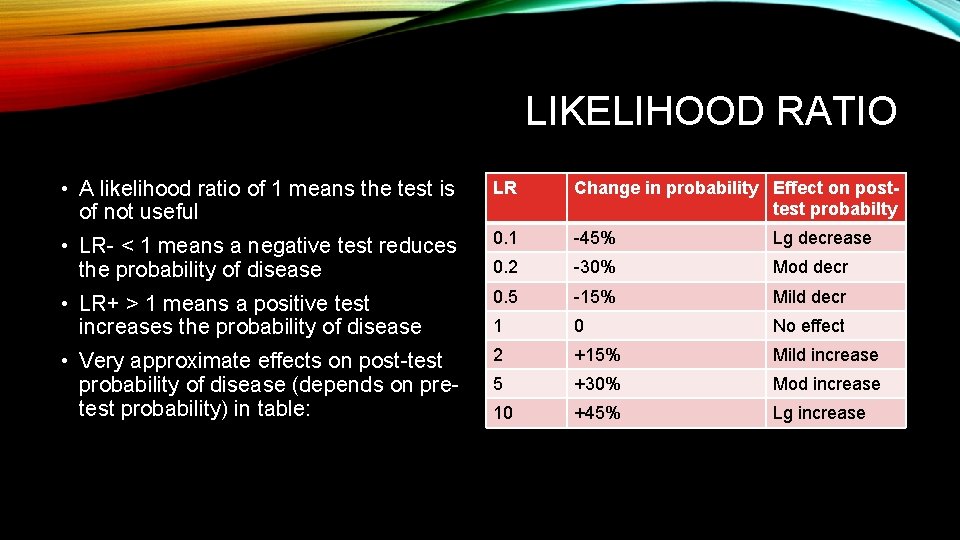

LIKELIHOOD RATIO • A likelihood ratio of 1 means the test is not useful • LR- < 1 means a negative test reduces the probability of disease • LR+ > 1 means a positive test increases the probability of disease • Very approximate effects on post-test probability of disease (depends on pretest probability) in table:

| LR | Change in probability | Effect on post-test probability |
|---|---|---|
| 0.1 | -45% | Large decrease |
| 0.2 | -30% | Moderate decrease |
| 0.5 | -15% | Mild decrease |
| 1 | 0 | No effect |
| 2 | +15% | Mild increase |
| 5 | +30% | Moderate increase |
| 10 | +45% | Large increase |

CHARACTERISTICS OF MEASUREMENTS • New methods of measuring something (eg a new asthma score) need to be tested to make sure they are reliable, accurate, valid measurements of what you are trying to measure • Reliable: when the measurement is made over and over on the same patient, it is about the same each time • Also when the measurement is made by different practitioners • Accurate: the measurement closely approximates the true value of what you are trying to measure • The asthma score accurately measures how severe the patient’s asthma is • Valid: the measurement is a valid measure by construct, content, face, criterion validity (more on these in a future slide)

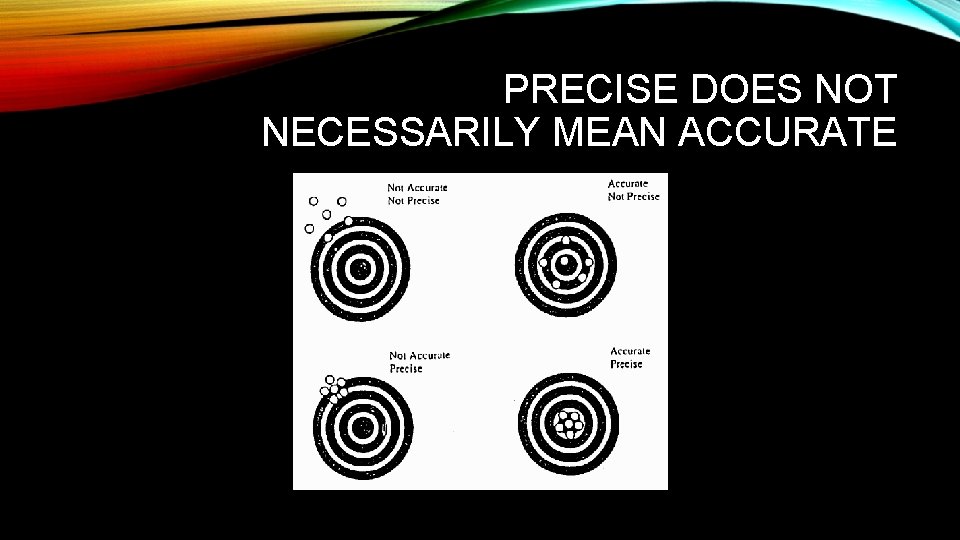

PRECISE DOES NOT NECESSARILY MEAN ACCURATE

TYPES OF VALIDITY • Construct validity: the measurement as constructed is measuring what you want, eg contains elements (RR, work of breathing) expected to change with asthma severity • Content validity: the content of the measurement covers all appropriate items (usually determined by an expert panel) • Face validity: a reasonable clinician would think this measurement (asthma score) is an appropriate and complete measure of asthma severity • Criterion validity: the measure performs as well as a gold standard / previously validated measure • Internal vs external validity: measure is valid on your data = internal; generalizable to other data sets = external

STUDIES: DEFINITIONS • Null hypothesis • Hypothesis that there is no difference between the two groups being compared, with respect to the outcome/variable of interest. • Must be defined a priori, prior to data collection. • The hypothesis that is in question must be clinically meaningful -- must pass the “so what” test.

DEFINITIONS • Alternative hypothesis • Hypothesis that there is a difference between the two groups being compared, with respect to the outcome/variable of interest. • The size of the difference that you are looking for should be defined a priori, before any data collection. • The size of the difference that you are looking for should be clinically meaningful – pass the “so what” test.

CLASSICAL HYPOTHESIS TESTING • After defining the null and alternative hypotheses, and selecting the outcome and clinically significant difference, an experiment is performed to determine whether there is, in fact, a difference • Looking for a “statistically significant” difference • The sample size to be recruited into the experiment is determined by the power of the study to find a difference (1 - beta error), alpha error, and the size of the difference the study will look for • Beta error (type II error) is when the alternative hypothesis is actually true, but the study doesn’t find the difference (false negative study) • Often arbitrarily set to 10-20%, corresponding to a power of 80-90% • Alpha error (type I error) is when the null hypothesis is actually true, but the study finds a difference by chance alone (false positive study) • Usually arbitrarily set to 5%

CLASSICAL HYPOTHESIS TESTING • After the study is conducted and the data are gathered, a statistical test is run to determine the likelihood of obtaining that set of data if the null hypothesis were actually true • This results in a “p value”, which is the probability of obtaining that set of data (or data more extreme) if the null hypothesis were actually true • We arbitrarily say that if p < 0.05, ie there is a < 5% chance of obtaining the data by chance alone, then we reject the null hypothesis and accept the alternative hypothesis • But note, we can be wrong 5% of the time by definition • The thinking is: the alpha error is set at 0.05, and the alpha error is the probability of finding a difference by chance alone • If p < the alpha error, then we conclude the findings are unlikely to be due to chance alone, and that there really is a difference

MEASUREMENTS AND SCALE TYPES • Categorical = unordered categories, such as sex, blood type • Ordinal = ordered categories with intervals that are not clearly quantifiable, such as degrees of pain (mild, moderate, severe) • Continuous = quantified intervals on an infinite scale of values, such as weight • Ordered discrete = ranked categories with discrete intervals, such as a Likert scale • Very satisfied, Satisfied, Neutral, Dissatisfied, Very Dissatisfied

STATISTICAL TESTS • Parametric tests can be used to describe and analyze normally distributed continuous data with equal variances. • Non-parametric tests do not rely on assumptions that the data are drawn from a given probability distribution (ie don’t have to be normally distributed). • Less powerful (more likely to make a Type II error) • More realistic and conservative

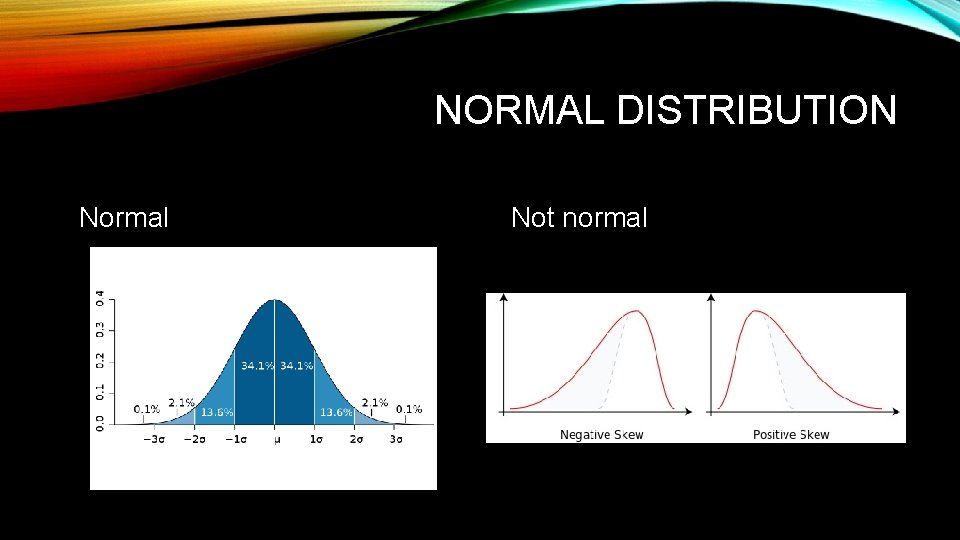

NORMAL DISTRIBUTION Normal Not normal

STATISTICAL TESTS • Student’s t-test • Parametric test • Compares the means of two groups • Assumes continuous variables, normally distributed, with equal variance • Wilcoxon rank sum or Mann-Whitney U test • Nonparametric test • Compares the medians of two groups • Assumes only that both are continuous variables

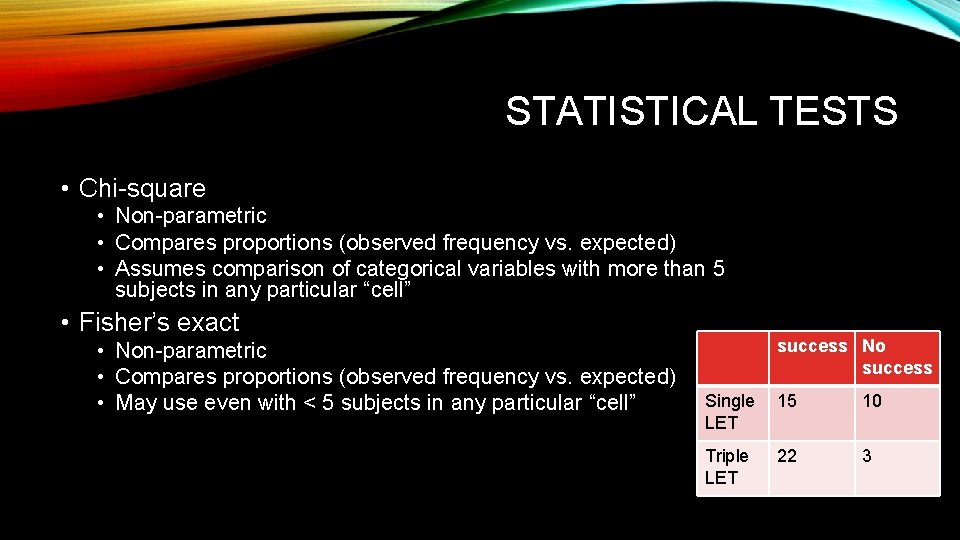

STATISTICAL TESTS • Chi-square • Non-parametric • Compares proportions (observed frequency vs. expected) • Assumes comparison of categorical variables with more than 5 subjects in any particular “cell” • Fisher’s exact • Non-parametric • Compares proportions (observed frequency vs. expected) • May use even with < 5 subjects in any particular “cell”

| | Success | No success |
|---|---|---|
| Single LET | 15 | 10 |
| Triple LET | 22 | 3 |
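To make Fisher's exact test concrete, the sketch below enumerates every 2 x 2 table that shares the margins of the single vs. triple LET counts on this slide and sums the probabilities of the tables that are no more likely than the observed one. This is one common definition of the two-sided p-value, used here as an assumption; the function name `fisher_exact_two_sided` is hypothetical, and the slide itself does not report a p-value for this table.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact p-value for the 2 x 2 table [[a, b], [c, d]]."""
    row1, row2 = a + b, c + d
    col1 = a + c
    # Number of ways to choose which subjects land in column 1
    total = comb(row1 + row2, col1)

    def ways(x):
        # Number of tables with x in the top-left cell, all margins fixed
        return comb(row1, x) * comb(row2, col1 - x)

    observed = ways(a)
    smallest = max(0, col1 - row2)
    largest = min(row1, col1)
    # Add up every table that is no more likely than the observed table
    extreme = sum(
        ways(x) for x in range(smallest, largest + 1) if ways(x) <= observed
    )
    return extreme / total


# Single LET: 15 success, 10 no success; triple LET: 22 success, 3 no success
p_value = fisher_exact_two_sided(15, 10, 22, 3)
print(f"Two-sided Fisher's exact p = {p_value:.3f}")
```

Working with integer counts of tables, rather than floating-point probabilities, keeps the "no more likely than observed" comparison exact.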

STATISTICAL TESTS • One-way ANOVA • Parametric test • Compares the means of three or more groups with one dependent variable • Assumes continuous variable, normally distributed, equal variance in all groups • Multivariate ANOVA or MANOVA when there is more than one dependent variable • Kruskal-Wallis • Non-parametric test • Compares the medians of three or more groups • Assumes only that the variables are continuous

STATISTICAL TESTS • Pearson’s product-moment correlation coefficient = r • Parametric • Measures the linear relationship between two continuous variables • Values range from -1 to +1 • Spearman rank correlation = rho • Non-parametric • Measures the monotonic statistical dependence between two continuous variables • Values range from -1 to +1

STATISTICAL TESTS • Linear Regression • Approach to modeling the relationship between a dependent variable that is continuous and one or more explanatory variables. • Simple vs. multiple linear regression • Example – length of hospital stay as dependent variable and laparoscopic vs. open appendectomy as the predictor variable.

STATISTICAL TESTS • Logistic Regression • Approach to modeling the relationship between a dependent variable that is categorical and one or more explanatory variables • Simple vs. multiple logistic regression • Odds ratio is primary measure of effect size • Compares the odds of the outcome in one group with the odds of the outcome in another group. • Example – Is increasing age or BMI (predictors) associated with diabetes (outcome)?

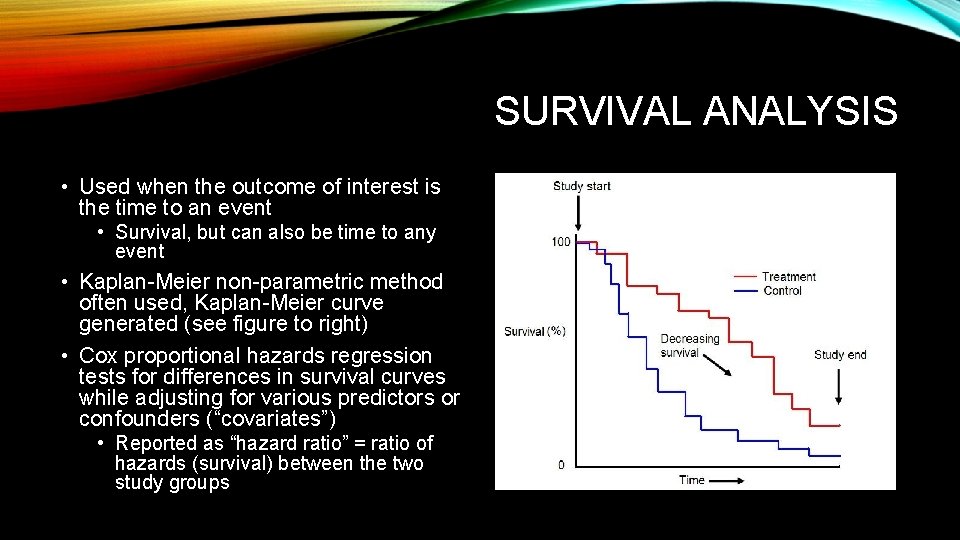

SURVIVAL ANALYSIS • Used when the outcome of interest is the time to an event • Survival, but can also be time to any event • Kaplan-Meier non-parametric method often used, Kaplan-Meier curve generated (see figure to right) • Cox proportional hazards regression tests for differences in survival curves while adjusting for various predictors or confounders (“covariates”) • Reported as “hazard ratio” = ratio of hazards (survival) between the two study groups

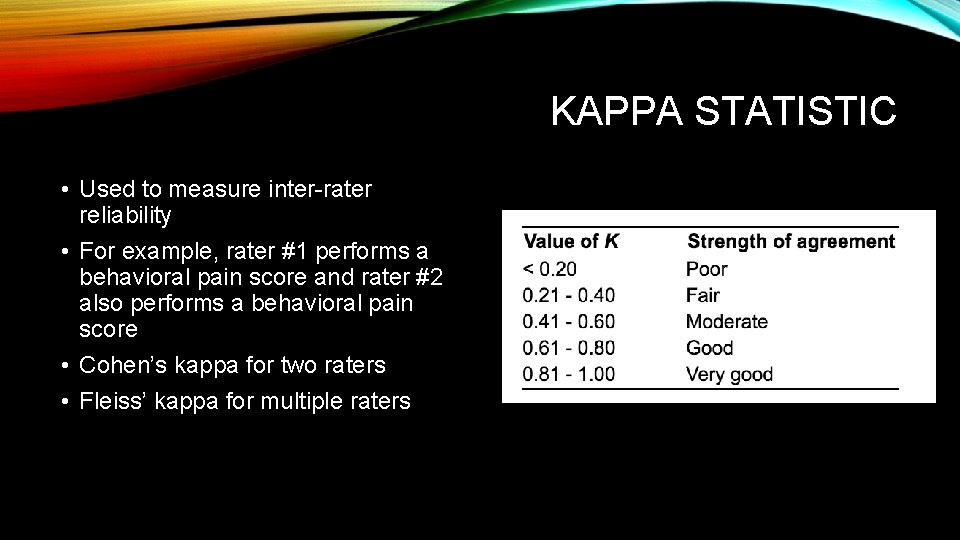

KAPPA STATISTIC • Used to measure inter-rater reliability • For example, rater #1 performs a behavioral pain score and rater #2 also performs a behavioral pain score • Cohen’s kappa for two raters • Fleiss’ kappa for multiple raters

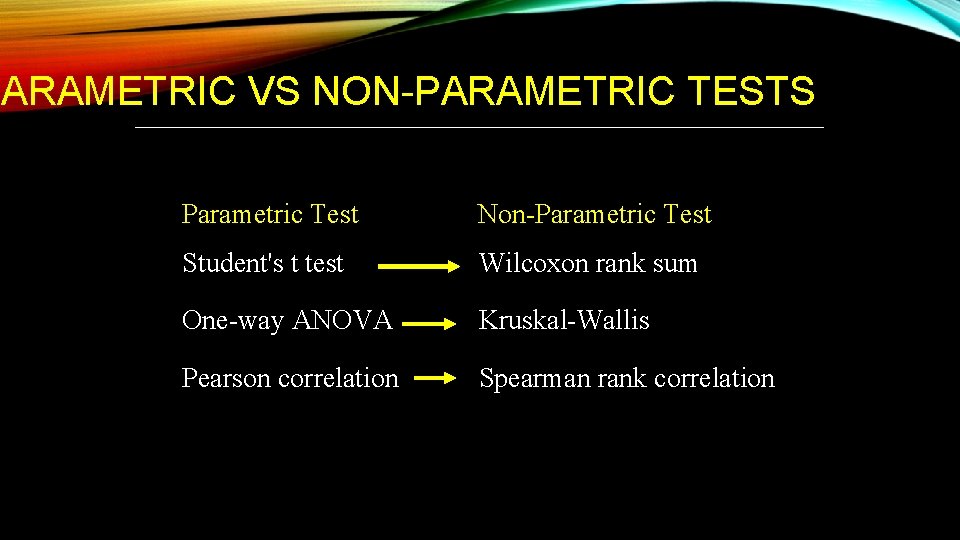

PARAMETRIC VS NON-PARAMETRIC TESTS

| Parametric Test | Non-Parametric Test |
|---|---|
| Student's t test | Wilcoxon rank sum |
| One-way ANOVA | Kruskal-Wallis |
| Pearson correlation | Spearman rank correlation |

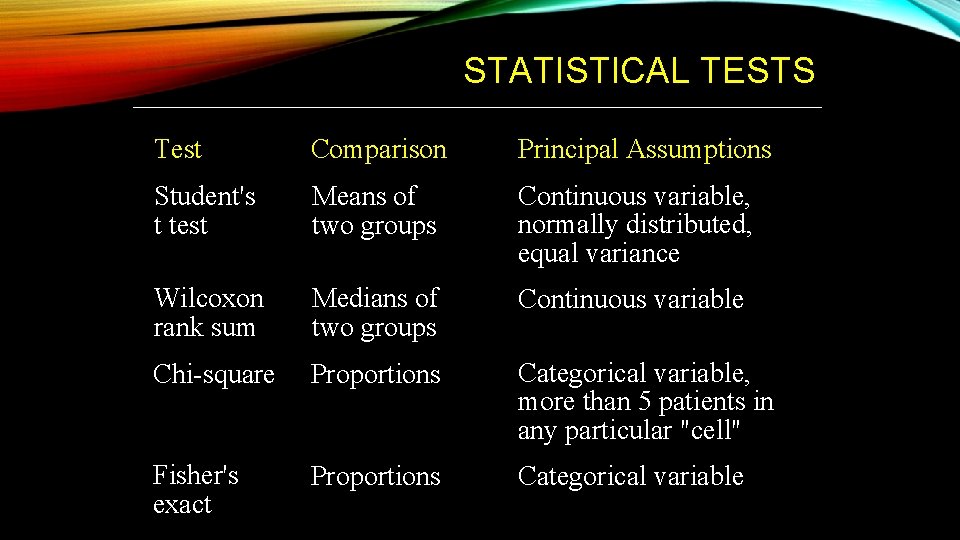

STATISTICAL TESTS

| Test | Comparison | Principal Assumptions |
|---|---|---|
| Student's t test | Means of two groups | Continuous variable, normally distributed, equal variance |
| Wilcoxon rank sum | Medians of two groups | Continuous variable |
| Chi-square | Proportions | Categorical variable, more than 5 patients in any particular "cell" |
| Fisher's exact | Proportions | Categorical variable |

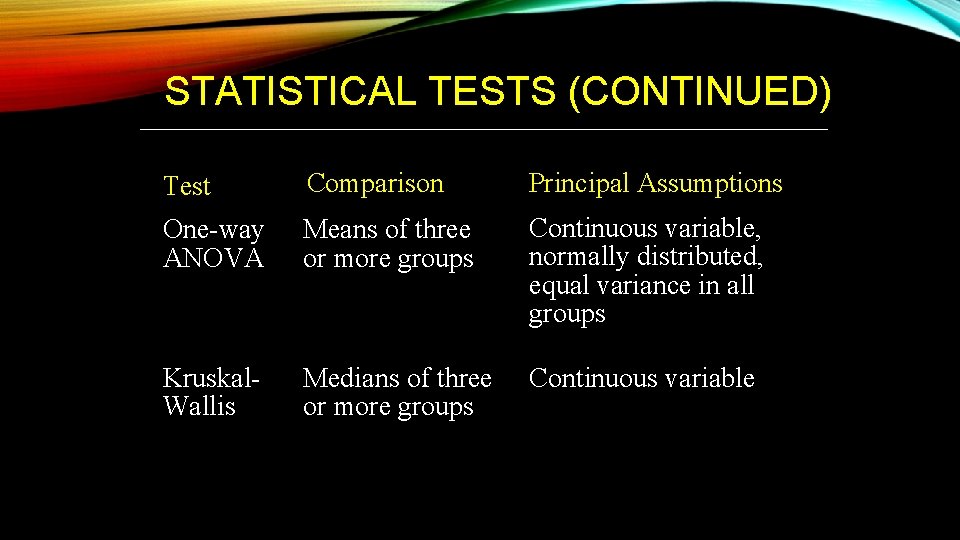

STATISTICAL TESTS (CONTINUED)

| Test | Comparison | Principal Assumptions |
|---|---|---|
| One-way ANOVA | Means of three or more groups | Continuous variable, normally distributed, equal variance in all groups |
| Kruskal-Wallis | Medians of three or more groups | Continuous variable |

EXAMPLE QUESTION • A study compares 2 techniques for RHS reduction. The mean for group 1 is 1.1 seconds ± 0.3 seconds and the median is 3 seconds. In group 2, the mean is 2.1 ± 2.0 seconds and the median is 3 seconds. The most appropriate statistical test to compare these two groups would be: • A. Chi-square • B. Wilcoxon Rank Sum test • C. Student’s t-test • D. Fisher’s Exact test

EXAMPLE QUESTION • A study of 2 groups (50 patients each) aims to compare their respective outcomes (proportion with yes, no or indeterminate). What is the best test to determine if there is a statistically significant difference? • A. Chi square • B. Wilcoxon Rank Sum • C. Student’s t-test

EXAMPLE QUESTION • You design a study in which two groups are assessed, and the primary outcome is positive, negative, or indeterminate. Each group has 8 patients. What is the best test to determine if there is a statistically significant difference? • A. Chi-square analysis • B. Wilcoxon rank sum test • C. Student’s t-test • D. Bonferroni’s correction • E. Fisher’s Exact test

EXAMPLE QUESTION • You design a study that measures the effect of TV on reported pain scales before and after watching the clip. You assume that the pain scores are normally distributed. Which test should be used to determine statistically significant differences? • A. Kolmogorov-Smirnov test • B. Paired t-test • C. ANOVA (analysis of variance) • D. Fisher’s exact test • E. Shapiro-Wilk test

EXAMPLE QUESTION • You design a study that involves comparing group IQs (non-normally distributed) of the football teams of the Trojans, Bruins, and Wolverines. You apply the Wilcoxon rank sum test, but there is an error, because you should be using: • A. ANOVA • B. Chi-square • C. Kruskal-Wallis • D. Independent t-test

EXAMPLE QUESTION • The IQ of defensive squad for Trojans: 75, 85, 100, 140, 141, 142, 143, 145, and 146. This is an example of data that is: • A. normally distributed • B. ideally analyzed by parametric tests • C. ideally analyzed by non-parametric tests • D. categorical • E. nominal

EXAMPLE QUESTION • You are reading a study to determine predictors of pneumonia. The authors collected the following: diminished breath sounds, wheeze, rales, fever, retraction, and cough. What statistical test would be appropriate to determine which variables are most likely to be independent predictors of pneumonia? • A. Logistic regression • B. Data mining • C. Variable Rules analysis • D. Kolmogorov-Smirnov test • E. Independent t-test

EXAMPLE QUESTION • You design a study in which you are measuring 5 yo, 10 yo, and 15 yo children and their incidence (which is normally distributed) of buckle fractures. What is the best test to determine differences? • A. ANOVA • B. MANOVA • C. Student’s t test • D. Mann-Whitney U test • E. Kruskal-Wallis test

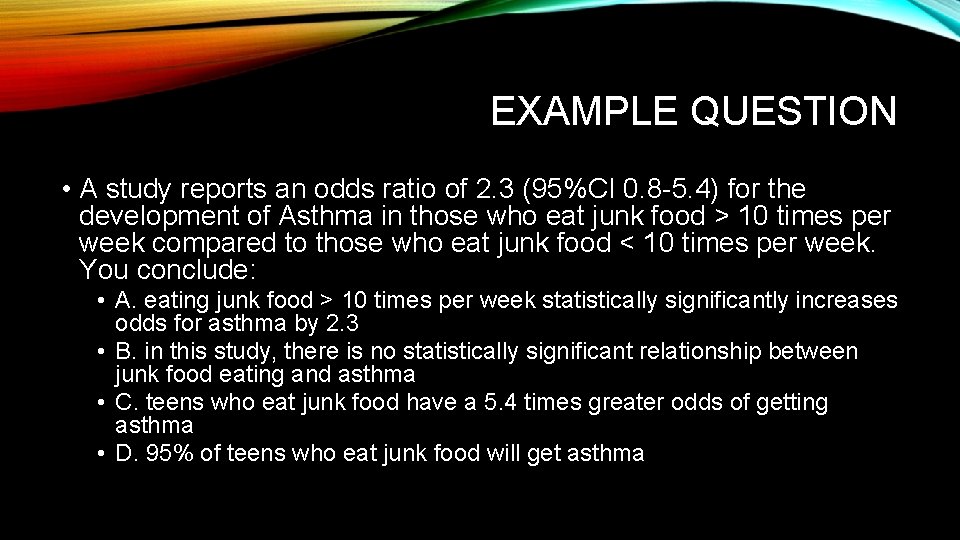

EXAMPLE QUESTION • A study reports an odds ratio of 2.3 (95% CI 0.8-5.4) for the development of asthma in those who eat junk food > 10 times per week compared to those who eat junk food < 10 times per week. You conclude: • A. eating junk food > 10 times per week statistically significantly increases odds for asthma by 2.3 • B. in this study, there is no statistically significant relationship between junk food eating and asthma • C. teens who eat junk food have a 5.4 times greater odds of getting asthma • D. 95% of teens who eat junk food will get asthma

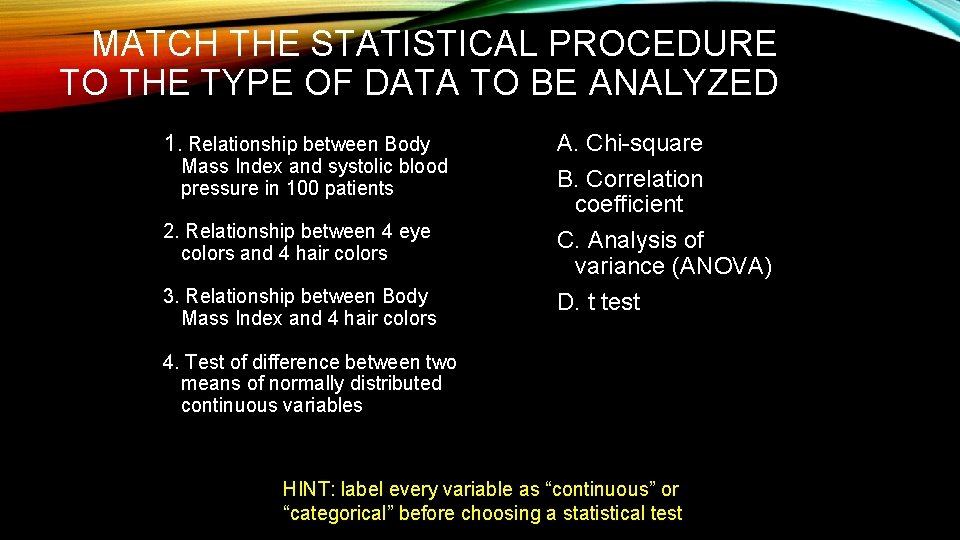

MATCH THE STATISTICAL PROCEDURE TO THE TYPE OF DATA TO BE ANALYZED

1. Relationship between Body Mass Index and systolic blood pressure in 100 patients
2. Relationship between 4 eye colors and 4 hair colors
3. Relationship between Body Mass Index and 4 hair colors
4. Test of difference between two means of normally distributed continuous variables

A. Chi-square
B. Correlation coefficient
C. Analysis of variance (ANOVA)
D. t test

HINT: label every variable as “continuous” or “categorical” before choosing a statistical test

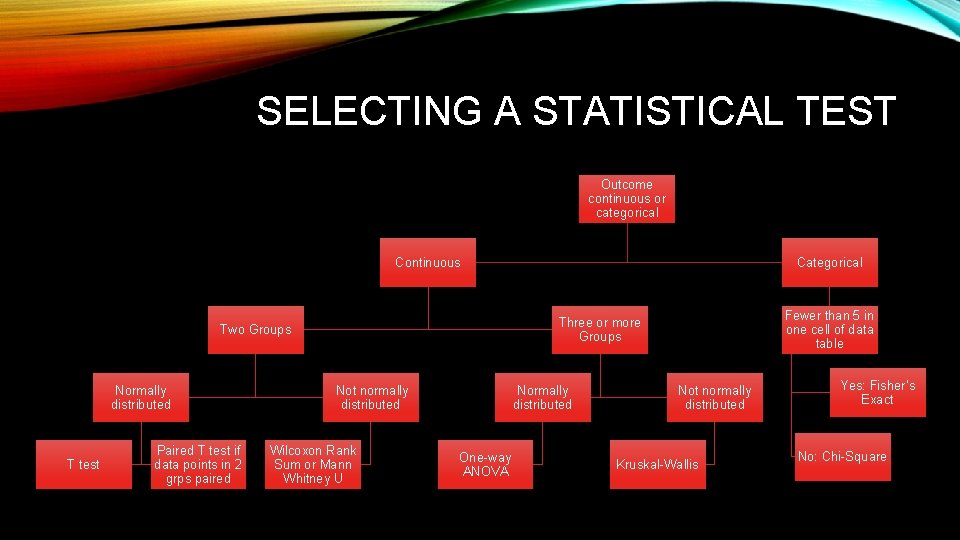

SELECTING A STATISTICAL TEST • Is the outcome continuous or categorical? • Continuous, two groups, normally distributed: t test (paired t test if data points in the 2 groups are paired) • Continuous, two groups, not normally distributed: Wilcoxon Rank Sum or Mann-Whitney U • Continuous, three or more groups, normally distributed: One-way ANOVA • Continuous, three or more groups, not normally distributed: Kruskal-Wallis • Categorical: fewer than 5 in one cell of data table? Yes: Fisher’s Exact. No: Chi-Square

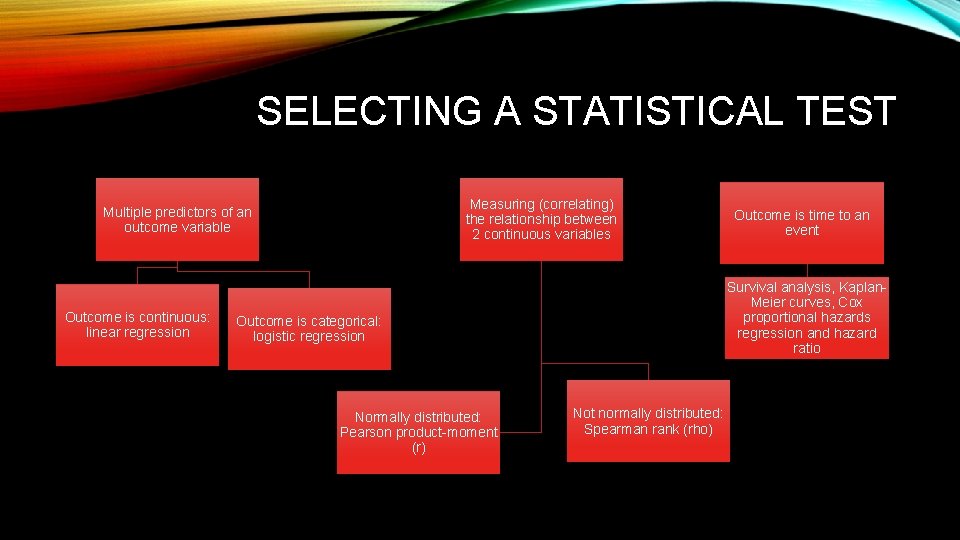

SELECTING A STATISTICAL TEST • Measuring (correlating) the relationship between 2 continuous variables • Normally distributed: Pearson product-moment (r) • Not normally distributed: Spearman rank (rho) • Multiple predictors of an outcome variable • Outcome is continuous: linear regression • Outcome is categorical: logistic regression • Outcome is time to an event • Survival analysis, Kaplan-Meier curves, Cox proportional hazards regression and hazard ratio

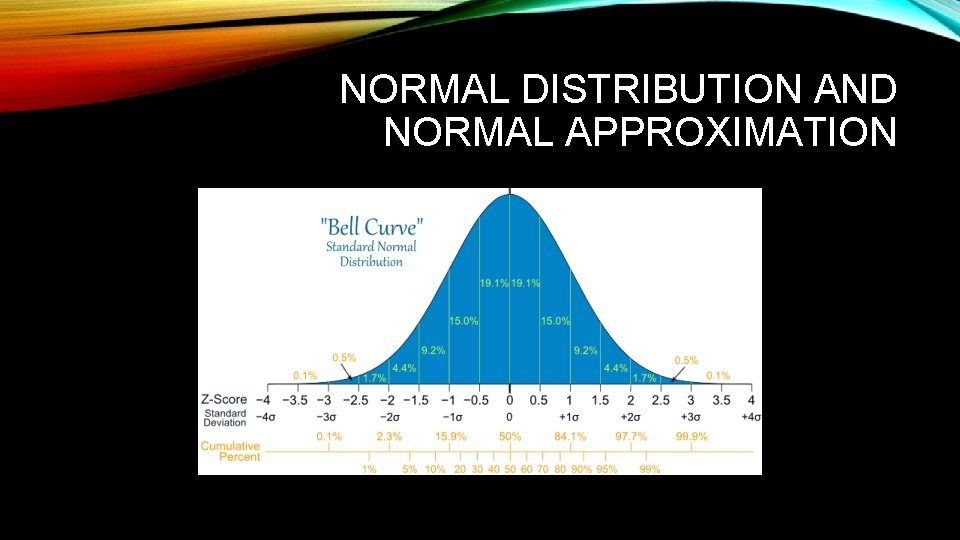

NORMAL DISTRIBUTION AND NORMAL APPROXIMATION

NORMAL DISTRIBUTION AND NORMAL APPROXIMATION • Normal distribution (or bell curve) is a continuous probability distribution • About 68% of values drawn from a normal distribution are within one standard deviation σ away from the mean; about 95% of the values lie within two standard deviations; and about 99.7% are within three standard deviations. This fact is known as the 68-95-99.7 (empirical) rule, or the 3-sigma rule.
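The 68-95-99.7 percentages can be reproduced from the normal distribution itself. For a normal variable, the probability of falling within k standard deviations of the mean equals erf(k/√2), so a short Python check needs only the standard library. The function name `fraction_within` is an illustrative assumption.

```python
from math import erf, sqrt


def fraction_within(k):
    """Fraction of a normal distribution within k standard deviations of the mean."""
    return erf(k / sqrt(2))


for k in (1, 2, 3):
    print(f"Within {k} SD of the mean: {fraction_within(k):.1%}")
```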

DEFINITIONS • P-value • The null hypothesis is tested to determine which hypothesis (null or alternative) will be accepted as true or rejected • The p-value is the probability of obtaining the results observed, or results more inconsistent with the null hypothesis, if the null hypothesis were true. • What are the limitations of the p-value?

P-VALUE • P<0.05 means that the observed treatment difference is “statistically significant” • P<0.05 does NOT tell us • Uncertainty in the size of the true treatment effect • The likelihood that the true treatment effect is clinically important

STATISTICAL SIGNIFICANCE: PITFALL • A problem with significance testing is that it takes a continuous measure (p-value) and forces us to make a yes/no decision to reject a hypothesis. • In declaring results to be statistically significant (or not) we are losing information contained in the p-value

CONFIDENCE INTERVAL • When using confidence intervals (CI), the point estimate and the limits of the CI surrounding the point estimate would be reported. • Example – the mean difference in temperature was 10 degrees (95% CI 8-12 degrees centigrade) • What is the advantage of the CI over the p-value?

STATISTICAL SIGNIFICANCE VS BIOLOGICALLY MEANINGFUL OR CLINICALLY SIGNIFICANT • A pre-post intervention study demonstrates that the total cholesterol decreased by 2 points, with a 95% CI of 1-3, p<0.05. • While this difference may be statistically significant, a difference of 2 points in the serum total cholesterol will not be clinically relevant.

CONFIDENCE INTERVAL BENEFITS • Even if data don’t demonstrate a statistically significant difference, the CI can tell us if: • There may actually be a clinically important difference between the treatments; or • There were not enough patients to reliably detect a clinically important difference even if it really exists

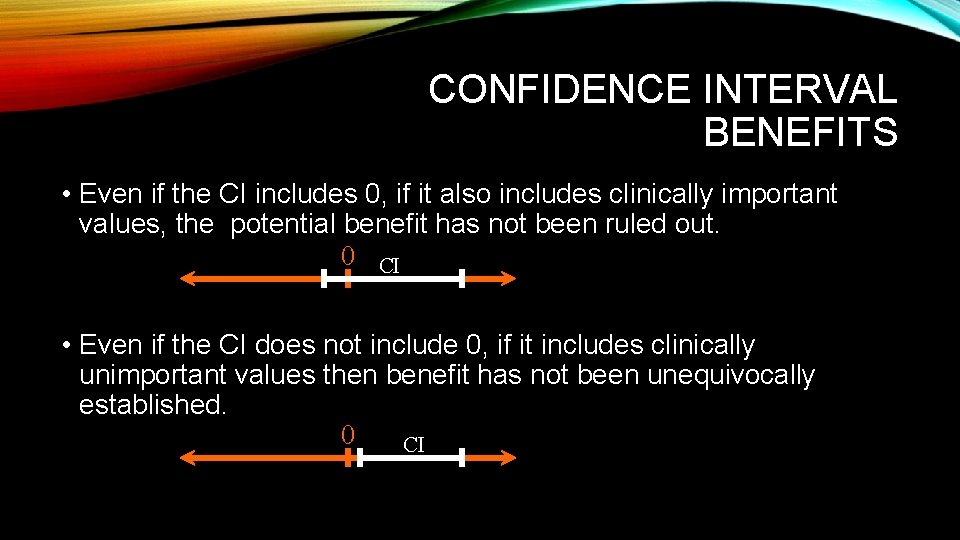

CONFIDENCE INTERVAL BENEFITS • Even if the CI includes 0, if it also includes clinically important values, the potential benefit has not been ruled out. • Even if the CI does not include 0, if it includes clinically unimportant values then benefit has not been unequivocally established.

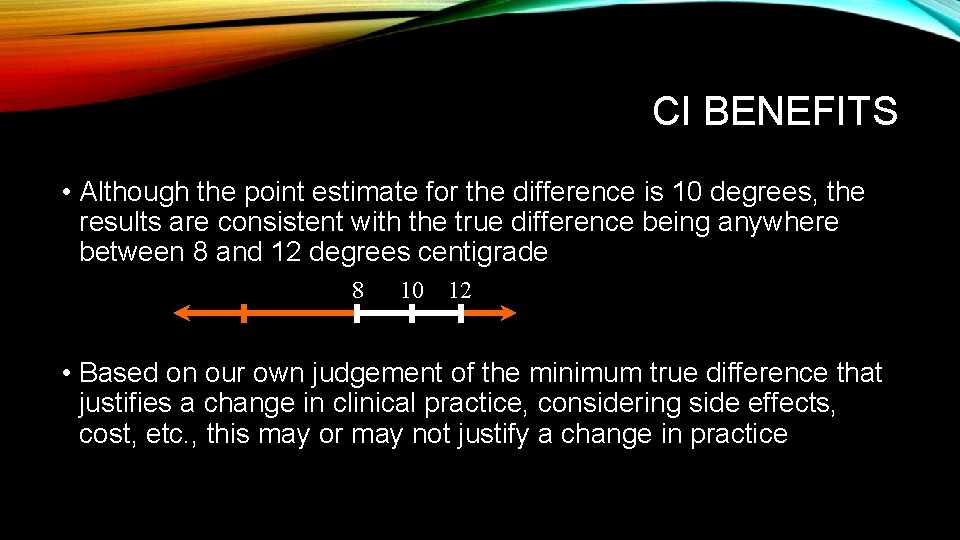

CI BENEFITS • Although the point estimate for the difference is 10 degrees, the results are consistent with the true difference being anywhere between 8 and 12 degrees centigrade • Based on our own judgement of the minimum true difference that justifies a change in clinical practice, considering side effects, cost, etc., this may or may not justify a change in practice

CI BENEFITS • Why a 95% CI? • The selection of 95% CIs (as opposed to 99% CIs, for example) is arbitrary, like the selection of 0.05 as the cutoff for a statistically significant p value

A NOTE ON CONFIDENCE INTERVALS • Report the point estimate and the limits of the CI surrounding the point estimate: difference in BP between two groups: 25 mm Hg (95% CI 5-44 mm Hg) • Interpretation: although the point estimate for the difference is 25, the results are consistent with the true difference being between 5 and 44. • One is 95% confident that the interval from 5 to 44 will cover the true value. • As sample size increases, a narrower CI results (greater precision). • Wide CIs are indicative of small sample sizes!

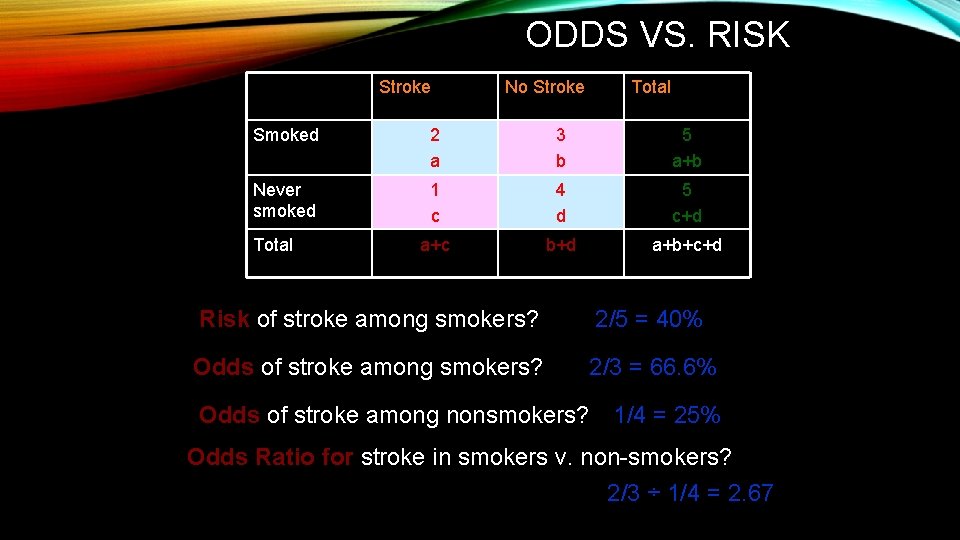

ODDS VS. RISK

| | Stroke | No Stroke | Total |
|---|---|---|---|
| Smoked | 2 (a) | 3 (b) | 5 (a+b) |
| Never smoked | 1 (c) | 4 (d) | 5 (c+d) |
| Total | a+c | b+d | a+b+c+d |

• Risk of stroke among smokers? 2/5 = 40% • Odds of stroke among smokers? 2/3 = 0.67 • Odds of stroke among nonsmokers? 1/4 = 0.25 • Odds Ratio for stroke in smokers v. non-smokers? 2/3 ÷ 1/4 = 2.67

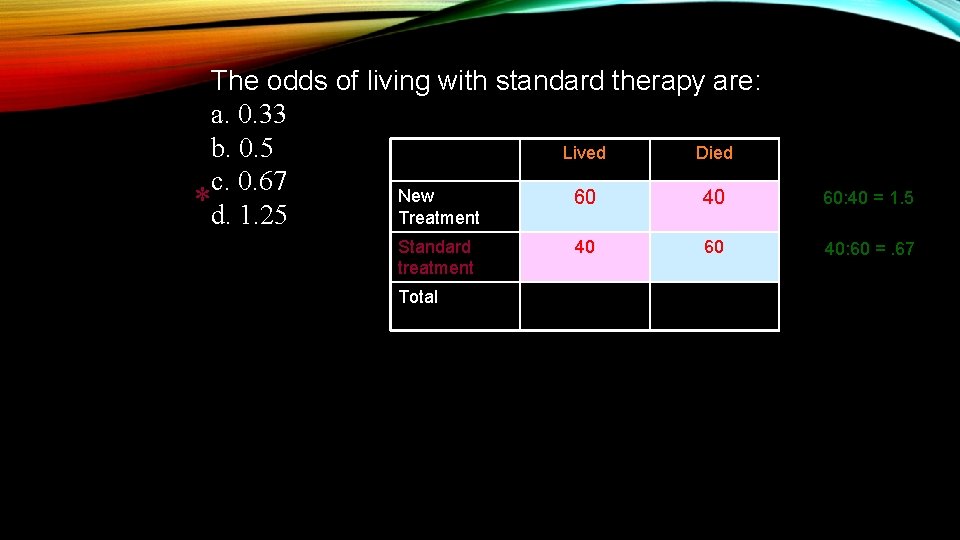

The odds of living with standard therapy are: a. 0.33 b. 0.5 c. 0.67 d. 1.25

| | Lived | Died | Odds of living |
|---|---|---|---|
| New treatment | 60 | 40 | 60:40 = 1.5 |
| Standard treatment | 40 | 60 | 40:60 = 0.67 |
| Total | 100 | 100 | |

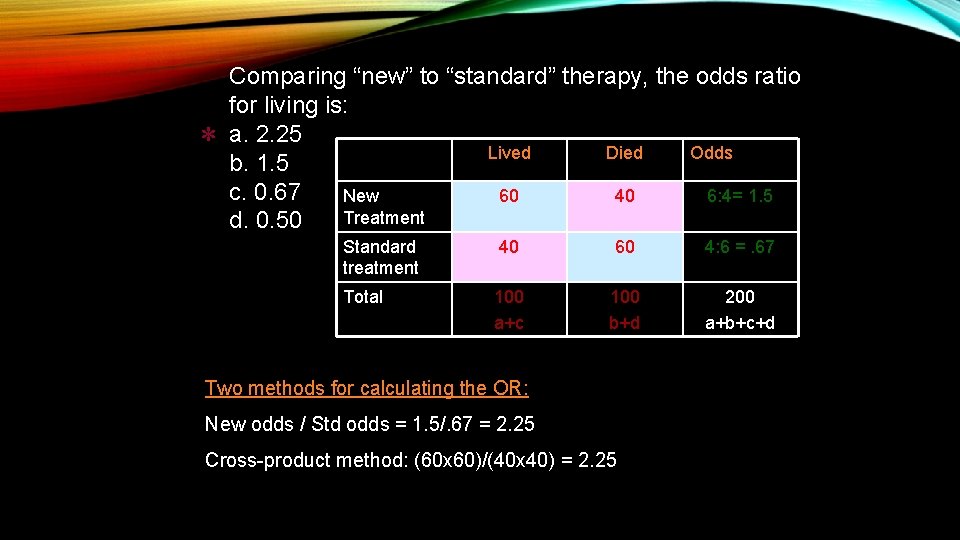

Comparing “new” to “standard” therapy, the odds ratio for living is: a. 2.25 b. 1.5 c. 0.67 d. 0.50

| | Lived | Died | Odds |
|---|---|---|---|
| New treatment | 60 | 40 | 6:4 = 1.5 |
| Standard treatment | 40 | 60 | 4:6 = 0.67 |
| Total | 100 (a+c) | 100 (b+d) | 200 (a+b+c+d) |

Two methods for calculating the OR: • New odds / Std odds = 1.5/0.67 = 2.25 • Cross-product method: (60 x 60)/(40 x 40) = 2.25

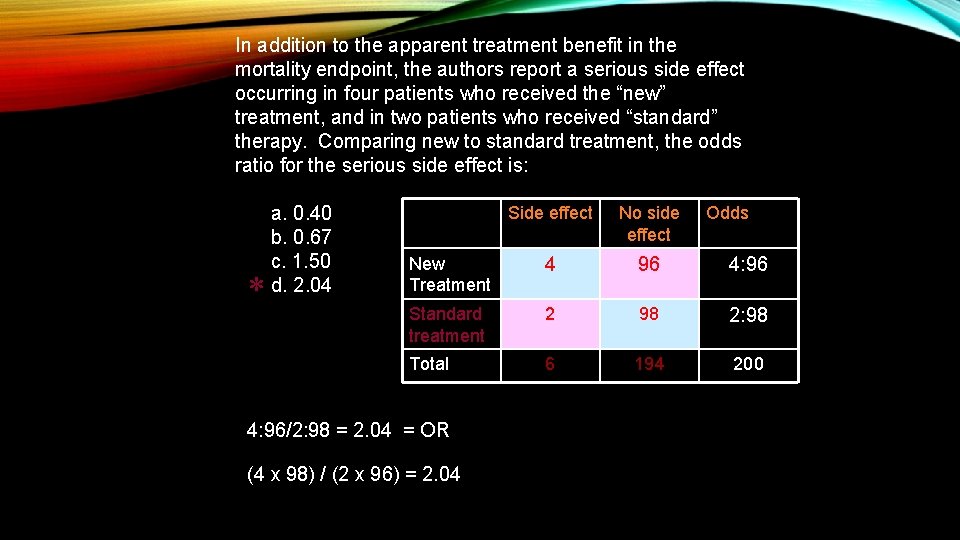

In addition to the apparent treatment benefit in the mortality endpoint, the authors report a serious side effect occurring in four patients who received the “new” treatment, and in two patients who received “standard” therapy. Comparing new to standard treatment, the odds ratio for the serious side effect is: a. 0.40 b. 0.67 c. 1.50 d. 2.04

| | Side effect | No side effect | Odds |
|---|---|---|---|
| New treatment | 4 | 96 | 4:96 |
| Standard treatment | 2 | 98 | 2:98 |
| Total | 6 | 194 | 200 |

OR = (4:96)/(2:98) = 2.04, or by the cross-product method (4 x 98)/(2 x 96) = 2.04
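The cross-product method used in the three odds ratio examples above can be written as a one-line function. This is a minimal Python sketch; the name `odds_ratio` and the cell labels follow the a, b, c, d layout of the slides and are otherwise an illustrative choice.

```python
def odds_ratio(a, b, c, d):
    """Odds ratio by the cross-product method for the 2 x 2 table [[a, b], [c, d]].

    Row 1 is the exposed/new-treatment group, row 2 the comparison group;
    column 1 is the outcome of interest.
    """
    return (a * d) / (b * c)


# Stroke in smokers vs. never smokers
print(f"Stroke example: {odds_ratio(2, 3, 1, 4):.2f}")
# Living with new vs. standard treatment
print(f"Survival example: {odds_ratio(60, 40, 40, 60):.2f}")
# Serious side effect with new vs. standard treatment
print(f"Side effect example: {odds_ratio(4, 96, 2, 98):.2f}")
```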

ABSOLUTE VS RELATIVE RISK REDUCTION • A study of a new drug reduces the risk of inadequate migraine control from 10% in the control group to 5% in the study group • The absolute risk reduction is 10% - 5% = 5% • The relative risk reduction is a 50% drop in risk, from 10% to 5% (5% is 50% of 10%) • Note that relative risk reduction sounds more impressive in this case
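The two measures in the migraine example differ only in the denominator, which a short Python sketch makes explicit. The function name `risk_reduction` is an assumption for illustration; the 10% and 5% risks are the figures from the slide.

```python
def risk_reduction(control_risk, treated_risk):
    """Return (absolute risk reduction, relative risk reduction)."""
    absolute = control_risk - treated_risk
    relative = absolute / control_risk  # reduction as a fraction of baseline risk
    return absolute, relative


arr, rrr = risk_reduction(control_risk=0.10, treated_risk=0.05)
print(f"Absolute risk reduction: {arr:.0%}")
print(f"Relative risk reduction: {rrr:.0%}")
```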

CONFOUNDING • Confounding occurs when the exposure being studied is associated with another factor, which is also a risk factor for the disease. • Coffee drinking was thought to be associated with lung cancer, but smoking is a confounder, because it is associated with coffee drinking, and it is a risk factor for the disease.

BIASES • Selection Bias • Recall Bias • Misclassification Bias • Interviewer Bias • Incorporation Bias • Lead-time Bias • Publication Bias • Spectrum Bias • Verification Bias

SELECTION BIAS • Selection bias – inequality in the study groups because the relationship between the exposure and the condition of interest in the study participants is not the same as for those subjects who would have been eligible for the study, but were not included. • Example - Subjects who volunteer to participate in a study may have higher literacy than those who are less likely to volunteer (because they don’t read the advertisements in the paper)

RECALL BIAS • Recall bias – inaccuracy that may result from study subjects remembering events of the past • Example – patients with a miscarriage may be more likely (than those without a miscarriage) to remember an exposure to a certain medication

MISCLASSIFICATION BIAS OR ERROR • Misclassification of exposure or outcome can occur • Can be differential or non-differential • Example – mothers of infants with congenital malformations recall radiation exposure to a greater extent than control mothers = differential • Ovarian cancer cases and controls may not remember age of menarche, but it is unlikely that the magnitude of error will depend on whether they are a case or a control = nondifferential

INTERVIEWER BIAS • Systematic error due to interviewer’s subconscious or conscious gathering of selective data

INCORPORATION BIAS • Classification of disease status partly depends on the results of the index test. • Criterion standard incorporates the index test and leads to falsely high estimates of sensitivity and specificity. • Example – you are assessing the sensitivity and specificity of D-dimer for pulmonary embolism, where the criterion standard is a CTA. However, a CTA is only ordered if the D-dimer is greater than 500. • If you are assessing a test’s ability to detect disease, and you define the disease partly by a positive test, the test is likely to look good!

LEAD-TIME BIAS • If starting point for measuring survival is different in screened and unscreened cases • Those whose illness is found via screening (at an earlier asymptomatic period) will appear to have a better survival rate, even in the absence of treatment that influences the disease’s natural history.

PUBLICATION BIAS • Occurs when studies that have “positive” results are published preferentially over those that are “negative.” • Applicable in systematic reviews • Particularly true for studies of prognostic markers – it is hard to get excited about a paper about factors that are worthless for predicting prognosis

EXAMPLE QUESTION • In ambulatory medicine clinic, you see a 53 year-old woman who meets diagnostic criteria for major depressive disorder. You consider recommending a trial of therapy with an SSRI. In a brief literature search, you find a recent systematic review, which reports moderate but clinically significant improvement in depression symptoms, using pooled data from randomized trials that enrolled patients like yours. If, in fact, no real treatment benefit exists from using SSRIs in this population of patients, this discrepancy is most likely due to: • A. lead-time bias • B. publication bias • C. selection bias • D. recall bias

SPECTRUM BIAS • Bias caused by a study population whose disease profile does not reflect that of the intended population • Example – patients with more severe disease may be more or less likely to respond to a particular intervention or a test may work better in this “sicker” population.

VERIFICATION BIAS • Also known as referral or work-up bias • Bias which occurs when people who are positive on the index test are more likely to get the gold standard, and only those who receive the gold standard are included in the study. • Example – is ankle swelling predictive of a fracture (disease)? X-rays are unlikely to be ordered in patients with no swelling, and the study includes only those with x-rays (decreases the number of subjects without swelling, both with and without fractures).

DEFINITIONS • Type 1 error • Concluding that a difference exists when it does not • A false positive • Occurs when a statistically significant p value (p < α) is obtained even though the two groups are not actually different • The risk of a type I error, assuming there is no underlying difference, is α • Probability of committing a type I error (rejecting null hypothesis when it is true) is called α (the level of statistical significance)

DEFINITIONS • Type 2 error • Concluding that a difference does not exist, when a difference equal to the alternative hypothesis does exist • A false negative • Occurs when a p value > α is obtained, yet the two groups are actually different • The probability of a type II error (failing to reject the null hypothesis when it is actually false), assuming there is a difference, is β • 1 - β is the power, or the probability of observing an effect in the sample when a true effect exists

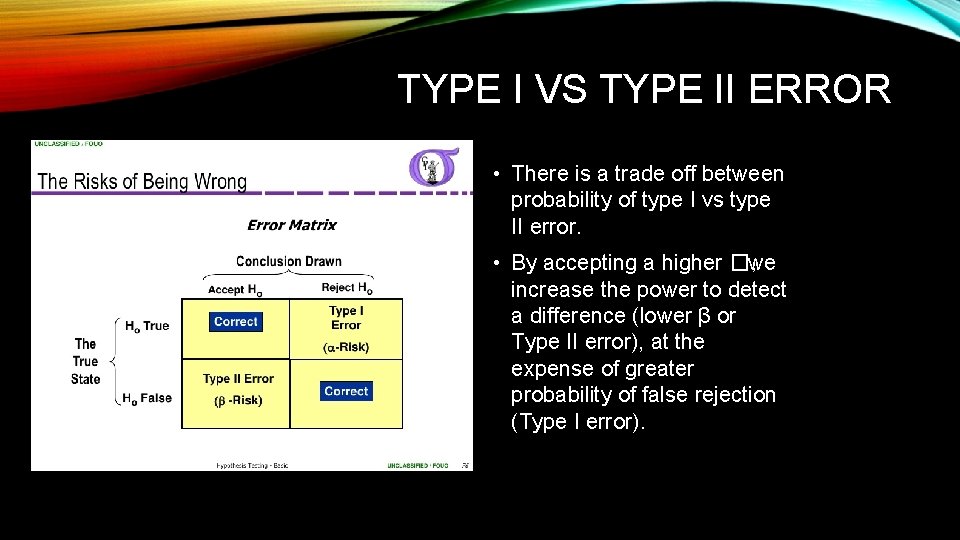

TYPE I VS TYPE II ERROR • There is a trade off between probability of type I vs type II error. • By accepting a higher α, we increase the power to detect a difference (lower β or Type II error), at the expense of greater probability of false rejection (Type I error).

EXAMPLE QUESTION • If in a study done to see if wearing baseball caps impacts team performance, you find the team plays better when fans wear baseball caps, but in reality there is no difference, what kind of error have you made? • A. Type I Error • B. Type II Error • C. Sampling Error • D. Margin of Error

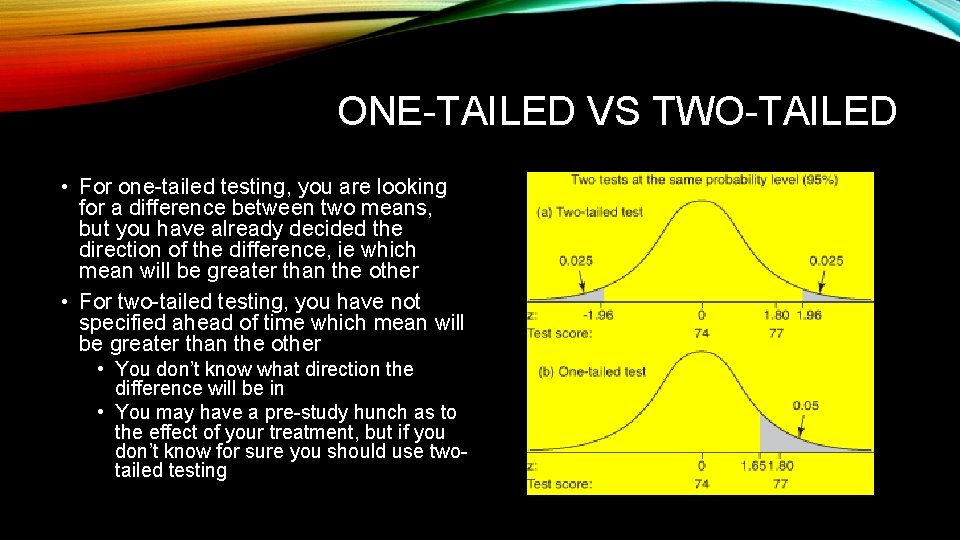

ONE-TAILED VS TWO-TAILED • For one-tailed testing, you are looking for a difference between two means, but you have already decided the direction of the difference, ie which mean will be greater than the other • For two-tailed testing, you have not specified ahead of time which mean will be greater than the other • You don’t know what direction the difference will be in • You may have a pre-study hunch as to the effect of your treatment, but if you don’t know for sure you should use two-tailed testing

MULTIPLE COMPARISONS • When multiple comparisons are performed, the risk of one or more false-positive p values is increased • Multiple comparisons include: • Pair-wise comparisons of more than two groups • Comparison of multiple characteristics between two groups • Comparison of two groups at multiple time points • If you data mine (or data dredge) by looking at a large dataset’s many predictors to see if one happens to be statistically significantly associated with an outcome, you are making multiple comparisons

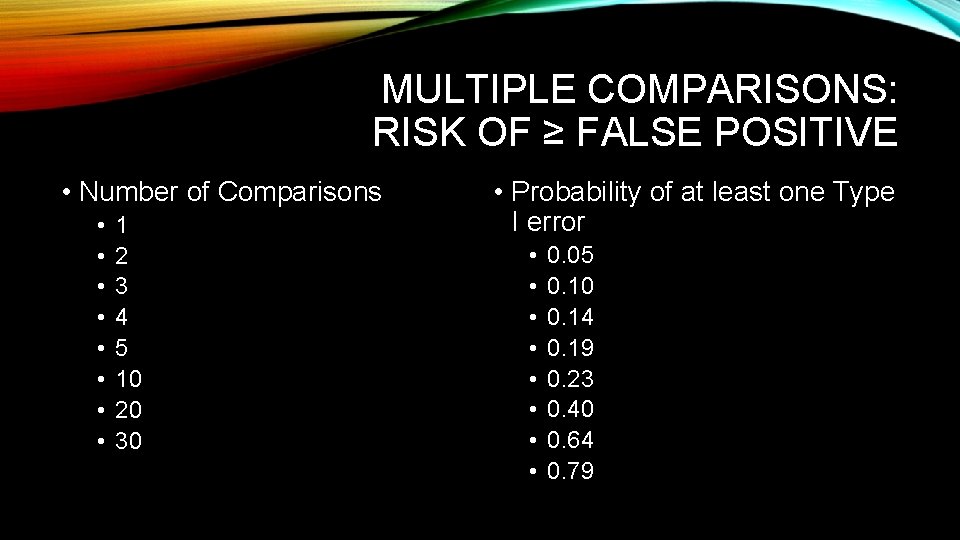

MULTIPLE COMPARISONS: RISK OF ≥ 1 FALSE POSITIVE

| Number of comparisons | Probability of at least one Type I error |
| --- | --- |
| 1 | 0.05 |
| 2 | 0.10 |
| 3 | 0.14 |
| 4 | 0.19 |
| 5 | 0.23 |
| 10 | 0.40 |
| 20 | 0.64 |
| 30 | 0.79 |
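The probabilities on this slide are consistent with the formula 1 - (1 - α)^n at α = 0.05. The sketch below assumes the comparisons are independent, which the slide does not state. The function name `familywise_error` is illustrative.

```python
def familywise_error(n_comparisons: int, alpha: float = 0.05) -> float:
    """Probability of at least one false positive across n independent
    comparisons, each tested at the given alpha (independence is assumed)."""
    return 1 - (1 - alpha) ** n_comparisons


for n in (1, 2, 3, 4, 5, 10, 20, 30):
    print(f"{n:>2} comparisons: {familywise_error(n):.2f}")
```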

MULTIPLE COMPARISONS: BONFERRONI CORRECTION • A method for reducing the overall risk of a type I error when making multiple comparisons • Overall (study-wise) desired type I error risk (e.g., 0.05) is divided by the number of tests, and this new value is used as the α for each individual test • Controls the type I error risk, but reduces the power (increased type II error risk)
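A minimal sketch of the correction described above. The p values are hypothetical and the helper name `bonferroni` is illustrative, not taken from the slides.

```python
def bonferroni(p_values, overall_alpha=0.05):
    """Divide the study-wise alpha by the number of tests and flag which
    p values remain significant at the per-test threshold."""
    per_test_alpha = overall_alpha / len(p_values)
    significant = [p <= per_test_alpha for p in p_values]
    return per_test_alpha, significant


# Hypothetical p values from four comparisons in one study
p_vals = [0.004, 0.020, 0.030, 0.300]
threshold, significant = bonferroni(p_vals)
print(f"per-test alpha = {threshold}")
for p, keep in zip(p_vals, significant):
    print(f"p = {p:.3f} -> {'significant' if keep else 'not significant'}")
```

Three of these hypothetical p values fall below 0.05, but only one survives the corrected threshold of 0.0125. This shows the loss of power that comes with the correction.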

STUDY DESIGNS • Investigator-initiated or industry-sponsored • Prospective • Cohort (epidemiologic) • Randomized clinical trial • Retrospective • Cohort (chart review) • Case-control (identify based on outcomes) • Secondary data analysis • Sometimes of large databases such as NHANES, KIDS, registries • Meta-analysis or systematic review

FDA TRIALS • Phase I: healthy human volunteers for safety • Phase II: small randomized blinded trials to identify an effective dose • Phase III: large randomized clinical trials to test efficacy (primarily) and assess safety • Phase IV: post-licensure studies, usually to assess rare, serious adverse effects

CAUSAL INFERENCE • An observed association can be: • True cause-effect relationship • Chance (random error): alpha or type I error • Bias (systematic error) • Effect-cause (esp. in case-control or cross-sectional studies, cohort if subclinical disease not identified at baseline) • Confounding • The more rigorous the study design, the higher the chance that any observed association is a true cause-effect relationship

SAMPLE RESEARCH QUESTION • Does phenytoin prophylaxis prevent early post-traumatic seizures in children after blunt head trauma? • Establish a single primary research question for study planning and sample size • Primary outcome will be early post-traumatic seizure (PTS) • Would like to look at outcomes other than seizure: survival, neurologic outcome = secondary outcomes • Need to define early PTS, blunt head trauma (severity? ), child

STUDY DESIGN RANDOMIZED CLINICAL TRIAL • PICO: Population/patients, Intervention, Comparison/control, Outcome • P: <16 yrs, BHT, GCS < 11, no previous seizure • I: Phenytoin • C: Placebo • O: Seizure within 48 hours of medication, secondary: survival, neurologic outcome • RCT is usually the most rigorous study design if done well • Important elements include blinding (double-blinding = neither patients nor researchers know whether patient is receiving the treatment at the time of measuring the outcome), true randomization (not manipulatable subconsciously)

STUDY DESIGN RETROSPECTIVE AND PROSPECTIVE COHORTS • P: Charts from children < 16 years, BHT, admitted to PICU or step-down • I: Phenytoin • C: No Phenytoin • O: Seizure during hospital stay, secondary: survival to hospital discharge • Retrospective cohort studies are often chart review studies • Subject to recall bias, missing information if not documented in the chart • Prospective cohort: follow a group of patients but don’t randomize intervention • More rigorous methodology since can define what data you would like to collect and systematically collect it, reducing recall bias, missing information • More time-consuming and expensive to conduct than retrospective

STUDY DESIGN CASE-CONTROL STUDY • P: Cases = patients < 16 years old with BHT who had a documented seizure in ED or PICU within 7 days of admission • Controls = same, no seizure • I: Phenytoin • C: No Phenytoin • O: The risk factor (Phenytoin) • Reverse order: patients chosen based on outcome, not risk factor • Case-control studies are particularly useful when cases are very rare • Sometimes have to look at a period of years to find a good number of cases to study

STUDY DESIGN CROSS-SECTIONAL SURVEY • P: Call PICUs of level III trauma centers, children admitted with BHT in last 7 days • I: Phenytoin – who is currently on it? • C: No Phenytoin • O: Seizure during admission so far • Which came first – seizure or phenytoin? • Cross-sectional studies cannot really tell you about cause-effect, only that the two variables studied are correlated; the question remains which was the cause and which the effect

STUDY DESIGN CASE SERIES • P: A collection of pediatric head trauma cases the investigator has seen • I: Investigator describes the characteristics of the cases, including whether or not they received phenytoin • C: As above • O: Investigator describes whether or not they had post-traumatic seizures • In a case series, the investigator may note an interesting observation – e.g., that all the patients in the series who received phenytoin did not have a PTS, and all those who didn’t did have a PTS • But these findings are only “hypothesis-generating” and warrant additional study • Lots of potential for bias in case series: one investigator, one institution, selection bias, etc.

STUDY DESIGN SYSTEMATIC REVIEW AND META-ANALYSIS • Systematic review collects previous studies on this topic and attempts to summarize them in a cohesive manner • Meta-analysis attempts to combine the data from multiple studies so that the treatment effect can be estimated more precisely (larger sample size) • To do a meta-analysis, the studies cannot be too heterogeneous • For example, if three studies looked at PTS within 2 days, and two studies looked at PTS within 7 days, and one study looked at phenytoin OR levetiracetam, it may be difficult to combine results • Meta-analyses should include a grading of the quality of studies included – the combined findings are only as good as the separate studies that went into them

OTHER STUDY TERMINOLOGY • Crossover study • Patients are given one treatment (or control), observed, then “crossed over” to the other study condition (other treatment or control), and observed. • Patients serve as their own controls (paired measurements) • Before-after study • Patients are observed before and after an intervention (e.g., instituting a clinical practice guideline) • Subject to temporal bias – did something else change before & after also? • Intention to treat analysis • Groups are compared based on the treatment they were intended to receive / randomized to, even if they did not take the medication or accidentally got the other group’s treatment
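To make the intention-to-treat idea concrete, the sketch below groups eight patients either by the arm they were randomized to or by the treatment they actually received. The records are entirely hypothetical and the helper `seizure_rate` is an illustrative name. The "as-treated" comparison is shown only as a contrast and is not described in the slides.

```python
# Each hypothetical patient: (arm randomized to, arm actually received, had early seizure)
patients = [
    ("phenytoin", "phenytoin", False),
    ("phenytoin", "phenytoin", False),
    ("phenytoin", "placebo", True),    # never received the drug
    ("phenytoin", "phenytoin", True),
    ("placebo", "placebo", True),
    ("placebo", "placebo", False),
    ("placebo", "phenytoin", False),   # accidentally got the other group's treatment
    ("placebo", "placebo", True),
]


def seizure_rate(records, arm, by_assignment=True):
    """Seizure rate in one arm, grouping patients by randomized assignment
    (intention to treat) or by the treatment actually received (as treated)."""
    index = 0 if by_assignment else 1
    outcomes = [r[2] for r in records if r[index] == arm]
    return sum(outcomes) / len(outcomes)


for arm in ("phenytoin", "placebo"):
    itt = seizure_rate(patients, arm, by_assignment=True)
    as_treated = seizure_rate(patients, arm, by_assignment=False)
    print(f"{arm}: intention-to-treat = {itt:.2f}, as-treated = {as_treated:.2f}")
```

In this made-up data the intention-to-treat rates are identical in the two arms, while regrouping by treatment received suggests a difference. Analyzing by randomized assignment preserves the balance that randomization created.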

OTHER STUDY TERMINOLOGY • Post-hoc analysis • Researchers see something they didn’t plan on studying in the data that looks interesting (phenytoin’s effect on blood pressure) and report on it • Sub-group analysis • Researchers don’t find a positive effect in the study as a whole, but notice that there is an effect in a subgroup and report on it (e.g., phenytoin reduces PTS in children < 2 years old) • Not OK if subgroups were not defined ahead of time when planning the study • May be OK if the subgroup analyses were planned (had some idea that phenytoin efficacy might differ by age group), but realize that sample size is much decreased for each subgroup compared to the overall study sample size
