
Pattern Programming
ITCS 4/5145 Parallel Programming, UNC-Charlotte, B. Wilkinson, 2012. Aug 30, 2012.

Acknowledgment
This work was initiated by Jeremy Villalobos and described in his Ph.D. thesis: “Running Parallel Applications on a Heterogeneous Environment with Accessible Development Practices and Automatic Scalability,” UNC-Charlotte, 2011.

Pattern Programming Research Group
• 2011
  – Jeremy Villalobos (Ph.D. awarded, continuing involvement)
  – Saurav Bhattara (MS thesis, graduated)
• Spring 2012
  – Yawo Adibolo (ITCS 6880 Individual Study)
  – Ayay Ramesh (ITCS 6880 Individual Study)
• Fall 2012
  – Haoqi Zhao (MS thesis)
  – Pohua Lee (BS senior project)
Openings!

Problem Addressed
• To make parallel programming more usable and scalable.
• Parallel programming (writing programs that solve problems using multiple computers, processors, and cores) has a very long history but is still a challenge.
• The traditional approach involves explicitly specifying message passing (for clusters and distributed computers) and threads (for shared memory) with low-level APIs.
• A better structured approach is needed.

Pattern Programming Concept
The programmer begins by constructing the program using established computational or algorithmic “patterns” that provide a structure.
What patterns are we talking about?
• Low-level algorithmic patterns that might be embedded into a program, such as fork-join and broadcast/scatter/gather.
• Higher-level algorithmic patterns forming a complete program, such as workpool, pipeline, stencil, and map-reduce.
We concentrate on the higher-level computational/algorithmic patterns rather than the lower-level patterns.

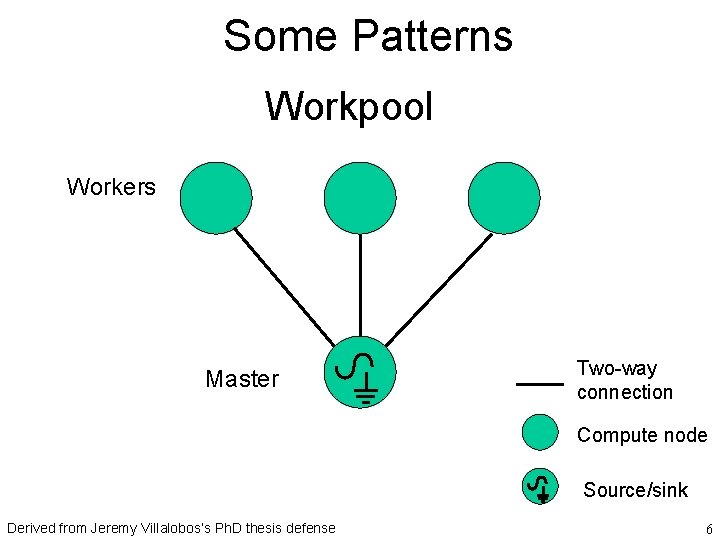

Some Patterns: Workpool
Figure labels: Master, Workers. Legend: two-way connection, compute node, source/sink.
Derived from Jeremy Villalobos’s Ph.D. thesis defense.

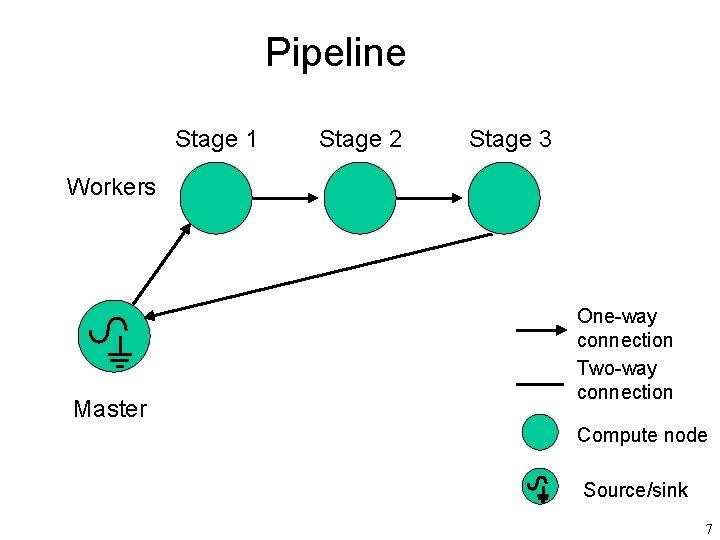

Pipeline
Figure labels: Master, Workers (Stage 1, Stage 2, Stage 3). Legend: one-way connection, two-way connection, compute node, source/sink.
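The pipeline structure can be imitated on one machine with plain Java threads. The following is a minimal sketch, not Seeds code: the class name `PipelineSketch`, the three arithmetic stages, and the `END` marker are illustrative assumptions. Each stage runs in its own thread and passes items to the next stage over a one-way queue.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.function.IntUnaryOperator;

// Hypothetical illustration of the pipeline pattern; not part of the Seeds framework.
class PipelineSketch {
    // Marker meaning "no more data"; real input must not contain this value.
    private static final int END = Integer.MIN_VALUE;

    // Starts one stage: read from 'in', apply 'op', write to 'out'.
    static Thread stage(BlockingQueue<Integer> in, BlockingQueue<Integer> out,
                        IntUnaryOperator op) {
        Thread t = new Thread(() -> {
            try {
                while (true) {
                    int item = in.take();
                    if (item == END) {
                        out.put(END); // pass the end marker downstream and stop
                        return;
                    }
                    out.put(op.applyAsInt(item));
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        t.start();
        return t;
    }

    // Source/sink: feeds the input into stage 1 and collects the output of stage 3.
    static List<Integer> run(int[] input) throws InterruptedException {
        BlockingQueue<Integer> q0 = new LinkedBlockingQueue<>();
        BlockingQueue<Integer> q1 = new LinkedBlockingQueue<>();
        BlockingQueue<Integer> q2 = new LinkedBlockingQueue<>();
        BlockingQueue<Integer> q3 = new LinkedBlockingQueue<>();
        stage(q0, q1, x -> x + 1); // stage 1
        stage(q1, q2, x -> x * 2); // stage 2
        stage(q2, q3, x -> x - 3); // stage 3
        for (int x : input) {
            q0.put(x);
        }
        q0.put(END);
        List<Integer> results = new ArrayList<>();
        while (true) {
            int item = q3.take();
            if (item == END) {
                break;
            }
            results.add(item);
        }
        return results;
    }
}
```

Because every stage is a single thread reading a FIFO queue, items leave the pipeline in the order they entered, while different items can occupy different stages at the same time.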

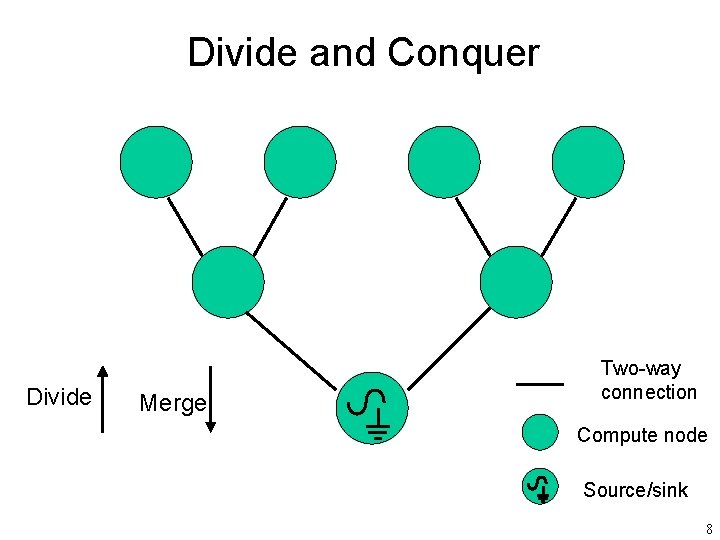

Divide and Conquer
Figure labels: Divide, Merge. Legend: two-way connection, compute node, source/sink.
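As a rough single-machine analogue of the divide and merge phases, the sketch below uses Java's standard fork/join classes to sum an array. It is an illustration only, not Seeds code; the class name `DivideAndConquerSketch` and the `THRESHOLD` value are assumptions chosen for the example.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

// Hypothetical illustration of divide and conquer; not part of the Seeds framework.
class DivideAndConquerSketch extends RecursiveTask<Long> {
    private static final int THRESHOLD = 1_000; // below this size, solve directly
    private final int[] data;
    private final int lo; // inclusive
    private final int hi; // exclusive

    DivideAndConquerSketch(int[] data, int lo, int hi) {
        this.data = data;
        this.lo = lo;
        this.hi = hi;
    }

    @Override
    protected Long compute() {
        if (hi - lo <= THRESHOLD) {
            long sum = 0;
            for (int i = lo; i < hi; i++) {
                sum += data[i];
            }
            return sum;
        }
        // Divide: split the range into two halves.
        int mid = lo + (hi - lo) / 2;
        DivideAndConquerSketch left = new DivideAndConquerSketch(data, lo, mid);
        DivideAndConquerSketch right = new DivideAndConquerSketch(data, mid, hi);
        left.fork();                     // left half runs asynchronously
        long rightSum = right.compute(); // right half runs in this thread
        // Merge: combine the two partial results.
        return left.join() + rightSum;
    }

    static long sum(int[] data) {
        return ForkJoinPool.commonPool().invoke(
                new DivideAndConquerSketch(data, 0, data.length));
    }
}
```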

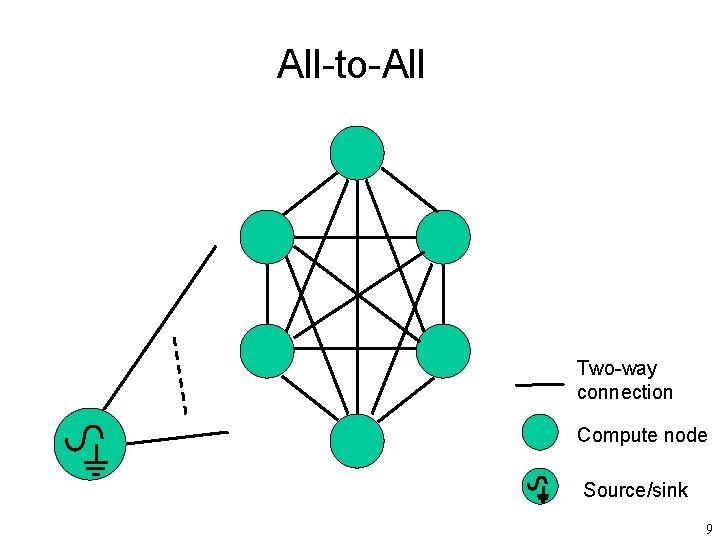

All-to-All
Figure legend: two-way connection, compute node, source/sink.

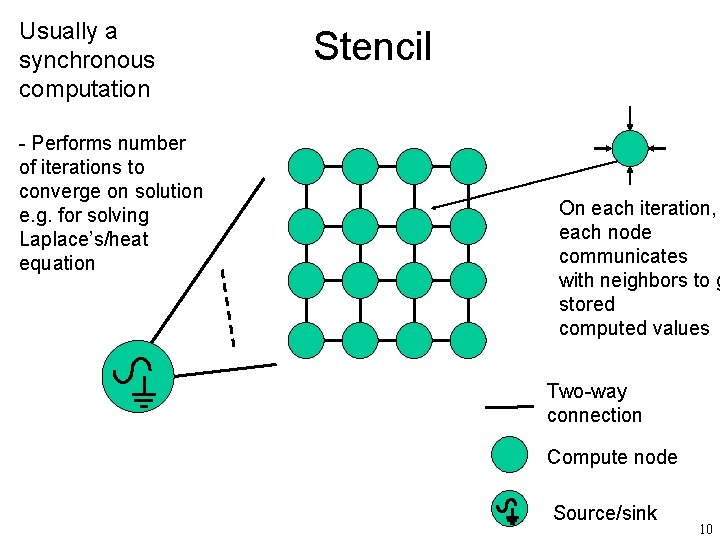

Stencil
Usually a synchronous computation that performs a number of iterations to converge on a solution, e.g. for solving Laplace’s/heat equation.
On each iteration, each node communicates with its neighbors to get stored computed values.
Figure legend: two-way connection, compute node, source/sink.
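A common concrete instance of this pattern is Jacobi iteration for Laplace's equation, where each interior grid point is replaced by the average of its four neighbors. The sketch below is a shared-memory illustration under that assumption, not Seeds code; the class and method names are hypothetical. In a distributed version, each node would own a block of the grid and exchange edge values with its neighbors on every iteration.

```java
import java.util.stream.IntStream;

// Hypothetical illustration of a stencil computation; not part of the Seeds framework.
class StencilSketch {

    // One synchronous iteration: every interior point is recomputed from the
    // previous grid, so all updates within a sweep are independent of each other.
    static double[][] sweep(double[][] grid) {
        int rows = grid.length;
        int cols = grid[0].length;
        double[][] next = new double[rows][];
        for (int i = 0; i < rows; i++) {
            next[i] = grid[i].clone(); // copies boundary values, which stay fixed
        }
        // Rows are processed in parallel; each task writes only its own row of 'next'.
        IntStream.range(1, rows - 1).parallel().forEach(i -> {
            for (int j = 1; j < cols - 1; j++) {
                next[i][j] = 0.25 * (grid[i - 1][j] + grid[i + 1][j]
                                   + grid[i][j - 1] + grid[i][j + 1]);
            }
        });
        return next;
    }

    // Runs a fixed number of sweeps; a convergence test could replace the fixed count.
    static double[][] solve(double[][] grid, int iterations) {
        double[][] current = grid;
        for (int k = 0; k < iterations; k++) {
            current = sweep(current);
        }
        return current;
    }
}
```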

Note on Terminology: “Skeletons”
The term “skeleton” is sometimes used to describe “patterns”, especially directed acyclic graphs with a source, a computation, and a sink. We do not make that distinction and use the term “pattern” whether the graph is directed or undirected, and whether it is acyclic or cyclic. This usage also appears elsewhere.

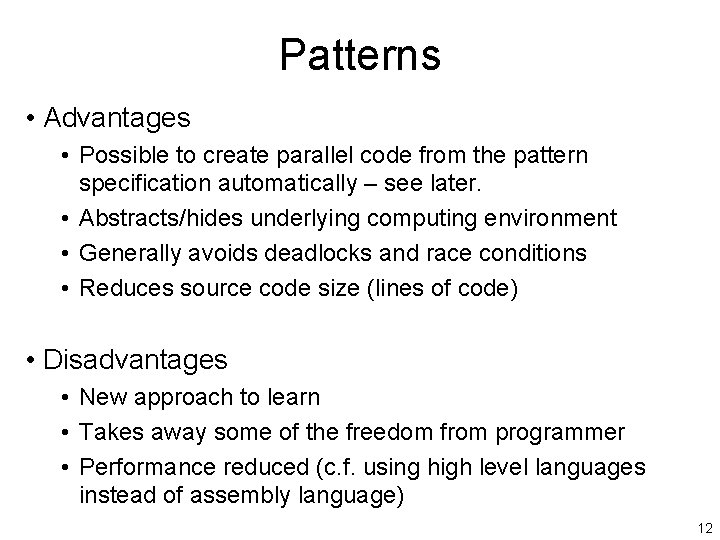

Patterns
• Advantages
  – Possible to create parallel code from the pattern specification automatically (see later).
  – Abstracts/hides the underlying computing environment.
  – Generally avoids deadlocks and race conditions.
  – Reduces source code size (lines of code).
• Disadvantages
  – New approach to learn.
  – Takes away some of the freedom from the programmer.
  – Performance is reduced (cf. using high-level languages instead of assembly language).

More Advantages/Notes
• “Design patterns” have been part of software engineering for many years:
  – Reusable solutions to commonly occurring problems *
  – Patterns provide a guide to “best practices”, not a final implementation
  – Provide a good scalable design structure for parallel programs
  – Make it easier to reason about programs
• Hierarchical designs, with patterns embedded into patterns and pattern operators to combine patterns.
• Leads to an automated conversion into parallel programs without the need to write low-level message-passing routines such as MPI.
* http://en.wikipedia.org/wiki/Design_pattern_(computer_science)

Previous/Existing Work
• Patterns/skeletons have been explored in several projects.
• Universities:
  – University of Illinois at Urbana-Champaign and University of California, Berkeley
  – University of Torino/Università di Pisa, Italy
  – ...
• Industrial efforts:
  – Intel
  – Microsoft
  – ...

Universal Parallel Computing Research Centers (UPCRC)
Established in 2008 at the University of Illinois at Urbana-Champaign and the University of California, Berkeley, with Microsoft and Intel (combined funding of at least $35 million).
Co-developed OPL (Our Pattern Language).
A group of twelve computational patterns was identified in seven general application areas, including:
• Finite State Machines
• Circuits
• Graph Algorithms
• Structured Grid
• Dense Matrix
• Sparse Matrix

Intel
Focused on very low-level patterns such as fork-join, and provides constructs for them in:
• Intel Threading Building Blocks (TBB)
  – Template library for C++ to support parallelism
• Intel Cilk Plus
  – Compiler extensions for C/C++ to support parallelism
• Intel Array Building Blocks (ArBB)
  – Pure C++ library-based solution for vector parallelism
The above are somewhat competing tools obtained through takeovers of small companies. Each is implemented differently.

New book (2012) from Intel authors
“Structured Parallel Programming: Patterns for Efficient Computation,” Michael McCool, James Reinders, Arch Robison, Morgan Kaufmann, 2012.
Focuses on Intel tools.

Using patterns with Microsoft C#
http://www.microsoft.com/download/en/details.aspx?displaylang=en&id=19222
Again very low-level, with patterns such as parallel for loops.

Closest to our work
University of Torino/Università di Pisa, Italy
http://calvados.di.unipi.it/dokuwiki/doku.php?id=ffnamespace:about

Our approach (Jeremy Villalobos’ UNC-C Ph.D. thesis)
Focuses on a few patterns of wide applicability (e.g. workpool, synchronous all-to-all, pipeline, stencil), but Jeremy took it much further than UPCRC and Intel.
He developed a higher-level framework called “Seeds”.
It uses the pattern approach to automatically distribute code across processor cores, computers, or geographically distributed computers, and to execute the parallel code.

“Seeds” Parallel Grid Application Framework
Some Key Features
• Pattern-programming (Java) user interface
• Self-deploys on computers, clusters, and geographically distributed computers
• Load balances
• Three levels of user interface
http://coit-grid01.uncc.edu/seeds/

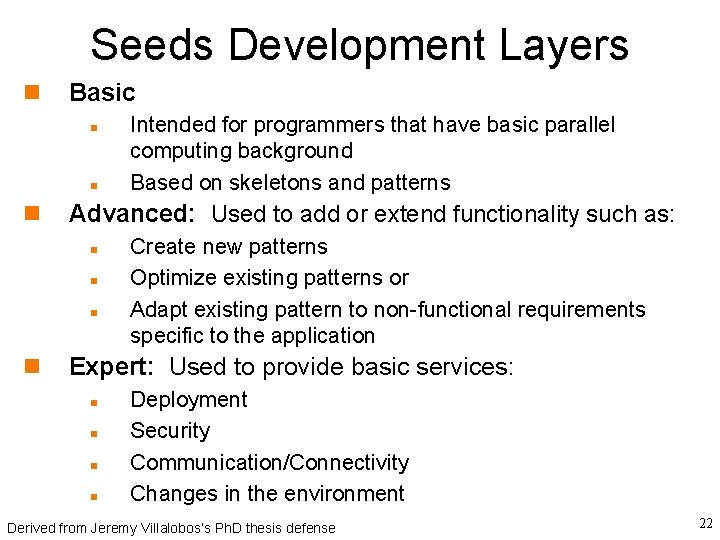

Seeds Development Layers
• Basic:
  – Intended for programmers that have a basic parallel computing background
  – Based on skeletons and patterns
• Advanced: used to add or extend functionality, such as:
  – Create new patterns
  – Optimize existing patterns
  – Adapt an existing pattern to non-functional requirements specific to the application
• Expert: used to provide basic services:
  – Deployment
  – Security
  – Communication/connectivity
  – Changes in the environment
Derived from Jeremy Villalobos’s Ph.D. thesis defense.

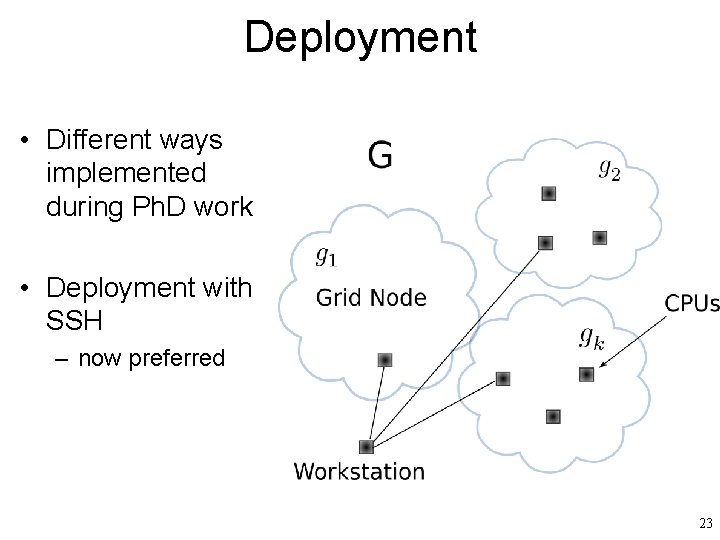

Deployment
• Several deployment methods were implemented during the Ph.D. work.
• Deployment with SSH is now preferred.

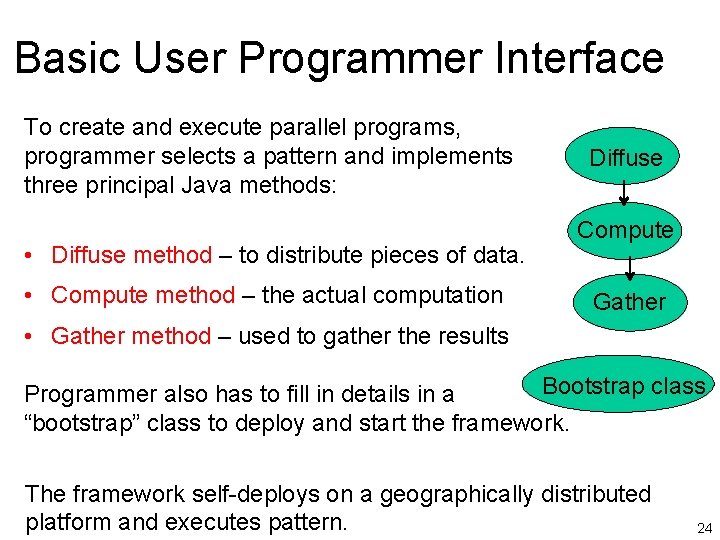

Basic User Programmer Interface
To create and execute parallel programs, the programmer selects a pattern and implements three principal Java methods:
• Diffuse method: distributes pieces of data.
• Compute method: performs the actual computation.
• Gather method: gathers the results.
Figure labels: Diffuse, Compute, Gather.
Bootstrap class
The programmer also has to fill in details in a “bootstrap” class to deploy and start the framework. The framework self-deploys on a geographically distributed platform and executes the pattern.
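To make the division of labor between the three methods concrete, here is a minimal single-machine sketch of a diffuse/compute/gather contract. It is not the Seeds API: the interface `MiniWorkpool`, the runner `MiniFramework`, and the example module `SumOfSquaresModule` are hypothetical names invented for illustration, and a local thread pool stands in for the distributed workers.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical stand-in for a diffuse/compute/gather contract; NOT the Seeds API.
interface MiniWorkpool<I, O> {
    int dataCount();                    // how many pieces of work exist
    I diffuse(int segment);             // master: produce the input for one piece
    O compute(I input);                 // worker: process one piece
    void gather(int segment, O output); // master: fold one result into the answer
}

// Hypothetical runner: the only place where threads appear.
class MiniFramework {
    static <I, O> void run(MiniWorkpool<I, O> module, int workers) throws Exception {
        ExecutorService pool = Executors.newFixedThreadPool(workers);
        try {
            List<Future<O>> pending = new ArrayList<>();
            for (int s = 0; s < module.dataCount(); s++) {
                final I piece = module.diffuse(s);
                Callable<O> task = () -> module.compute(piece);
                pending.add(pool.submit(task));
            }
            // Results are gathered on the calling thread, so gather needs no locking.
            for (int s = 0; s < pending.size(); s++) {
                module.gather(s, pending.get(s).get());
            }
        } finally {
            pool.shutdown();
        }
    }
}

// Example module: sums the squares of 1..10.
class SumOfSquaresModule implements MiniWorkpool<Integer, Long> {
    long total = 0;

    public int dataCount() { return 10; }

    public Integer diffuse(int segment) { return segment + 1; }

    public Long compute(Integer x) {
        long v = x.longValue();
        return v * v;
    }

    public void gather(int segment, Long partial) { total += partial; }
}
```

The module author writes only the three methods plus the piece count; scheduling and result collection live entirely in the runner, which is the separation the pattern approach aims for.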

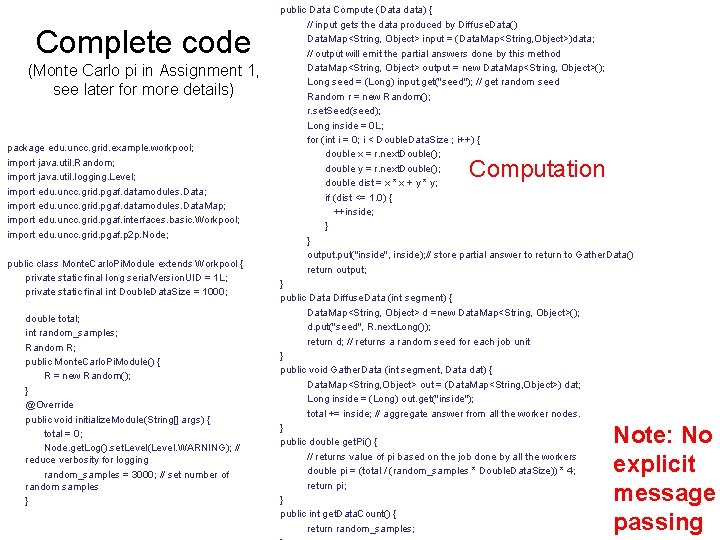

Complete code (Monte Carlo pi in Assignment 1, see later for more details)

    package edu.uncc.grid.example.workpool;

    import java.util.Random;
    import java.util.logging.Level;
    // Data is used below but its import is missing from the slide; it is
    // assumed here to live in the same package as DataMap.
    import edu.uncc.grid.pgaf.datamodules.Data;
    import edu.uncc.grid.pgaf.datamodules.DataMap;
    import edu.uncc.grid.pgaf.interfaces.basic.Workpool;
    import edu.uncc.grid.pgaf.p2p.Node;

    public class MonteCarloPiModule extends Workpool {
        private static final long serialVersionUID = 1L;
        private static final int DoubleDataSize = 1000;
        double total;
        int random_samples;
        Random R;

        public MonteCarloPiModule() {
            R = new Random();
        }

        @Override
        public void initializeModule(String[] args) {
            total = 0;
            Node.getLog().setLevel(Level.WARNING); // reduce verbosity for logging
            random_samples = 3000; // set number of random samples
        }

        public Data Compute(Data data) {
            // input gets the data produced by DiffuseData()
            DataMap<String, Object> input = (DataMap<String, Object>) data;
            // output will emit the partial answers done by this method
            DataMap<String, Object> output = new DataMap<String, Object>();
            Long seed = (Long) input.get("seed"); // get random seed
            Random r = new Random();
            r.setSeed(seed);
            Long inside = 0L;
            for (int i = 0; i < DoubleDataSize; i++) {
                double x = r.nextDouble();
                double y = r.nextDouble();
                double dist = x * x + y * y;
                if (dist <= 1.0) {
                    ++inside;
                }
            }
            output.put("inside", inside); // store partial answer to return to GatherData()
            return output;
        }

        public Data DiffuseData(int segment) {
            DataMap<String, Object> d = new DataMap<String, Object>();
            d.put("seed", R.nextLong());
            return d; // returns a random seed for each job unit
        }

        public void GatherData(int segment, Data dat) {
            DataMap<String, Object> out = (DataMap<String, Object>) dat;
            Long inside = (Long) out.get("inside");
            total += inside; // aggregate answer from all the worker nodes
        }

        public double getPi() {
            // returns value of pi based on the job done by all the workers
            double pi = (total / (random_samples * DoubleDataSize)) * 4;
            return pi;
        }

        public int getDataCount() {
            return random_samples;
        }
    }

Note: no explicit message passing appears in the computation.
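The factor of 4 in `getPi()` comes from an area ratio. Each sample (x, y) is uniform in the unit square, and the test x² + y² ≤ 1 succeeds when the point falls inside the quarter circle of radius 1. The symbols N_inside and N_total below are notation introduced here for the accumulated `total` and for the overall number of samples; they do not appear in the original code.

```latex
P\left(x^2 + y^2 \le 1\right)
  = \frac{\text{area of quarter circle}}{\text{area of unit square}}
  = \frac{\pi}{4}
\quad\Longrightarrow\quad
\pi \approx 4 \cdot \frac{N_{\text{inside}}}{N_{\text{total}}},
\qquad
N_{\text{total}} = \texttt{random\_samples} \times \texttt{DoubleDataSize}
  = 3000 \times 1000 = 3 \times 10^{6}

\sigma_{\pi} \approx 4 \sqrt{\frac{\tfrac{\pi}{4}\left(1 - \tfrac{\pi}{4}\right)}{N_{\text{total}}}}
  \approx \frac{1.64}{\sqrt{3 \times 10^{6}}}
  \approx 9.5 \times 10^{-4}
```

With these settings the estimate should therefore typically agree with pi to about two or three decimal places; quadrupling the number of samples halves the expected error.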

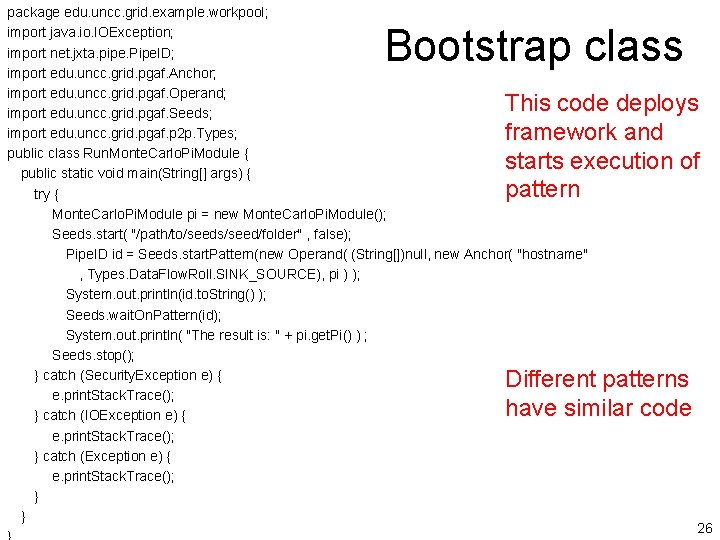

Bootstrap class

    package edu.uncc.grid.example.workpool;

    import java.io.IOException;
    import net.jxta.pipe.PipeID;
    import edu.uncc.grid.pgaf.Anchor;
    import edu.uncc.grid.pgaf.Operand;
    import edu.uncc.grid.pgaf.Seeds;
    import edu.uncc.grid.pgaf.p2p.Types;

    public class RunMonteCarloPiModule {
        public static void main(String[] args) {
            try {
                MonteCarloPiModule pi = new MonteCarloPiModule();
                // deploy the framework from the local Seeds folder
                Seeds.start("/path/to/seeds/seed/folder", false);
                // start the pattern, anchoring the source/sink on the named host
                PipeID id = Seeds.startPattern(new Operand(
                        (String[]) null,
                        new Anchor("hostname", Types.DataFlowRoll.SINK_SOURCE),
                        pi));
                System.out.println(id.toString());
                Seeds.waitOnPattern(id); // block until the pattern completes
                System.out.println("The result is: " + pi.getPi());
                Seeds.stop();
            } catch (SecurityException e) {
                e.printStackTrace();
            } catch (IOException e) {
                e.printStackTrace();
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

This code deploys the framework and starts execution of the pattern. Different patterns have similar code.

Compiling/executing
• Can be done on the command line (Ant script provided) or through an IDE (Eclipse).

Tutorial page
http://coit-grid01.uncc.edu/seeds/

Next step
• Assignment 1: using the Seeds framework

Questions
