
Path Analysis & Sequence Modelling: Regularization of RNN through Bayesian Neural Networks

Vish Hawa, Principal Scientist, Vanguard

April 18, 2019

PROBLEM SETUP

- Marketing leads are exposed to numerous channels, so it is difficult to isolate how much credit should be attributed to each channel. This can have a big impact on how much budget and effort is put into any particular channel.
- First-touch or last-touch attribution can overweight the first or last channel and lead to sub-optimal credit assignment.
- Are there sequences that are better at driving leads than others? Build a model, conduct path analysis, and produce channel-contribution weights for each path.
- RNNs have shown great promise in sequence modelling. Can they avoid memorizing sequences, especially when data size is limited?
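
To make the first-touch and last-touch baselines concrete, here is a minimal sketch. The journeys and the `attribute` helper are hypothetical illustrations, not data or code from the talk.

```python
from collections import Counter

def attribute(paths, rule="last"):
    """Give each converting path's full credit to a single touch.

    rule="first" credits the first channel, rule="last" the last one.
    """
    credit = Counter()
    for path, converted in paths:
        if not converted or not path:
            continue
        channel = path[0] if rule == "first" else path[-1]
        credit[channel] += 1.0
    return dict(credit)

# Hypothetical journeys: (ordered channel touches, converted?)
journeys = [
    (["ADV", "EM", "PHONE"], True),
    (["ADV", "WEB"], True),
    (["EM", "WEB", "PHONE"], False),
]

first_touch = attribute(journeys, rule="first")
last_touch = attribute(journeys, rule="last")
print(first_touch)  # {'ADV': 2.0}
print(last_touch)   # {'PHONE': 1.0, 'WEB': 1.0}
```

Both rules ignore every touch except one, which is the overweighting the slide warns about: EM never receives credit here even though it appears in a converting path.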

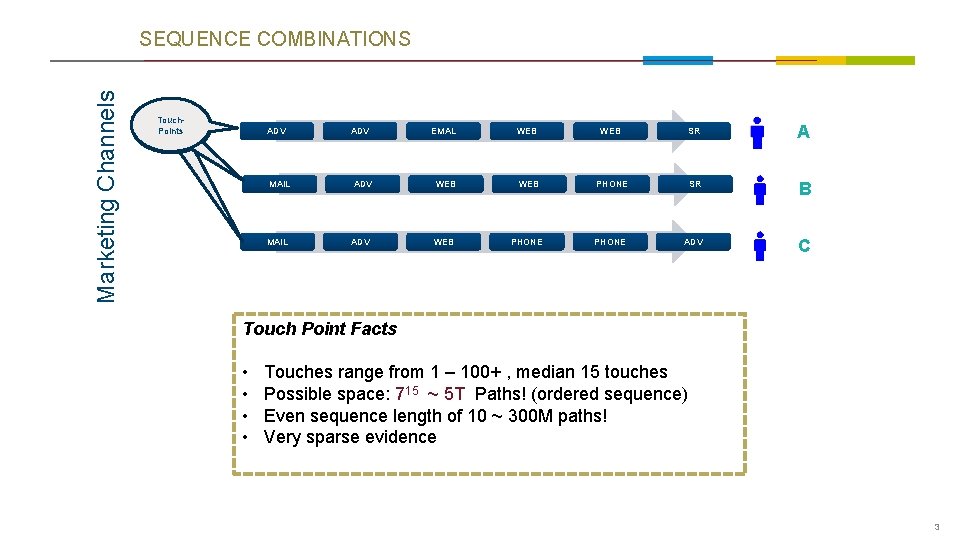

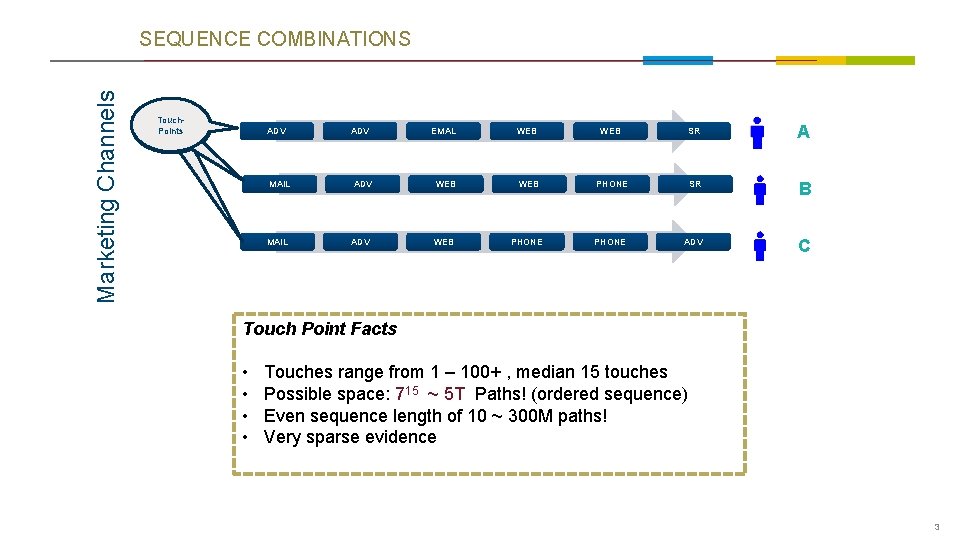

SEQUENCE COMBINATIONS

Marketing channels: example touch-point sequences (figure)

- A: ADV, EMAL, WEB, SR
- B: MAIL, ADV, WEB, PHONE, SR
- C: MAIL, ADV, WEB, PHONE, ADV

Touch Point Facts

- Touches range from 1 to 100+, with a median of 15 touches.
- Possible space: 7^15 ≈ 5 trillion paths (ordered sequences).
- Even a sequence length of 10 gives ≈ 300 million paths.
- Evidence is very sparse.
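
The path counts on this slide are consistent with 7 channels and ordered sequences of a fixed length, that is 7 raised to the sequence length. The short check below assumes that reading; `ordered_paths` is a hypothetical helper name.

```python
def ordered_paths(n_channels: int, length: int) -> int:
    """Number of ordered sequences of exactly `length` touches."""
    return n_channels ** length

print(f"{ordered_paths(7, 15):,}")  # 4,747,561,509,943  (about 5 trillion)
print(f"{ordered_paths(7, 10):,}")  # 282,475,249        (about 300 million)
```

With the observed data covering only a tiny fraction of these sequences, most paths have little or no direct evidence.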

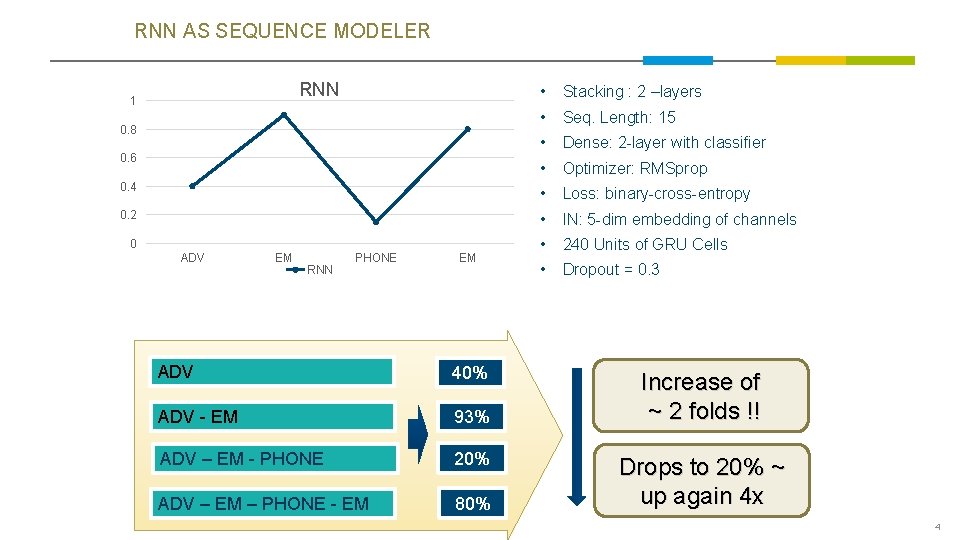

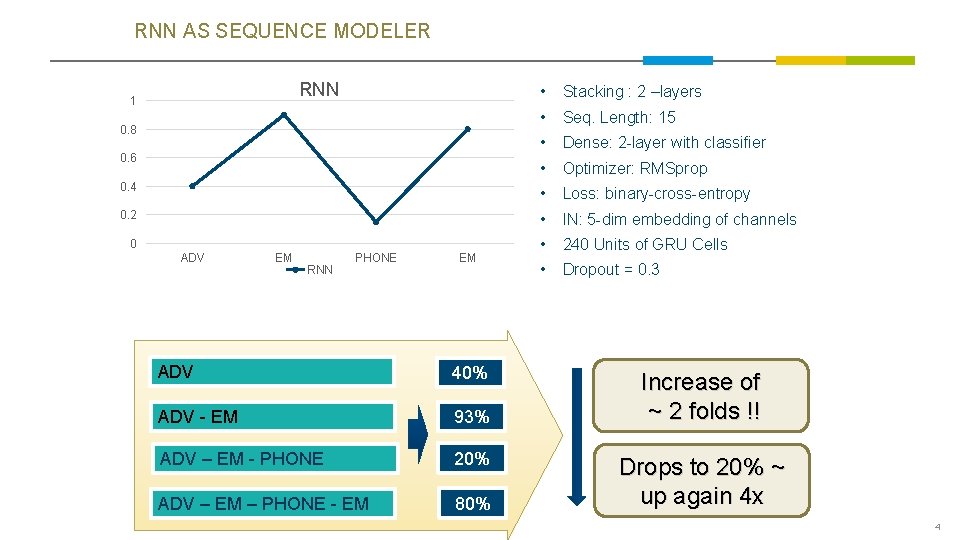

RNN AS SEQUENCE MODELER

- Stacking: 2 layers
- Sequence length: 15
- Dense: 2 layers with classifier
- Optimizer: RMSprop
- Loss: binary cross-entropy
- Input: 5-dimensional embedding of channels
- 240 units of GRU cells
- Dropout = 0.3

RNN score by path (chart, y-axis 0 to 1):

| Path | RNN score |
| --- | --- |
| ADV | 40% |
| ADV - EM | 93% |
| ADV - EM - PHONE | 20% |
| ADV - EM - PHONE - EM | 80% |

Adding EM after ADV increases the score about 2-fold. Adding PHONE drops it to 20%, and a further EM raises it again about 4x.
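
The data flow implied by this configuration (channel embedding, two stacked GRU layers, two dense layers ending in a sigmoid classifier) can be sketched as a forward pass in NumPy. This is an illustration under stated assumptions, not the talk's implementation: weights are random and untrained, the channel count of 7 and the hidden dense width of 32 are assumed, and dropout, RMSprop and the binary cross-entropy loss are omitted because they only matter during training.

```python
import numpy as np

rng = np.random.default_rng(0)
N_CHANNELS, EMB_DIM, UNITS, SEQ_LEN = 7, 5, 240, 15  # N_CHANNELS is assumed

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def init_gru(in_dim, units):
    """Random, untrained weights for the update (z), reset (r) and candidate (h) parts."""
    return {g: (rng.normal(0, 0.1, (in_dim, units)),   # input weights
                rng.normal(0, 0.1, (units, units)),    # recurrent weights
                np.zeros(units))                       # bias
            for g in "zrh"}

def gru_layer(xs, p):
    """Run one GRU layer over a (seq_len, in_dim) array; return all hidden states."""
    h = np.zeros(p["z"][2].shape[0])
    out = []
    for x in xs:
        z = sigmoid(x @ p["z"][0] + h @ p["z"][1] + p["z"][2])
        r = sigmoid(x @ p["r"][0] + h @ p["r"][1] + p["r"][2])
        cand = np.tanh(x @ p["h"][0] + (r * h) @ p["h"][1] + p["h"][2])
        h = (1.0 - z) * h + z * cand   # one common gate convention
        out.append(h)
    return np.stack(out)

embedding = rng.normal(0, 0.1, (N_CHANNELS, EMB_DIM))
gru1, gru2 = init_gru(EMB_DIM, UNITS), init_gru(UNITS, UNITS)
w_hidden = rng.normal(0, 0.1, (UNITS, 32))   # hidden dense width 32 is assumed
w_out = rng.normal(0, 0.1, (32, 1))

def predict(channel_ids):
    """Score one path of integer channel ids with a probability in (0, 1)."""
    xs = embedding[np.asarray(channel_ids)]
    h_last = gru_layer(gru_layer(xs, gru1), gru2)[-1]
    hidden = np.maximum(0.0, h_last @ w_hidden)   # ReLU dense layer
    return float(sigmoid(hidden @ w_out)[0])

path = rng.integers(0, N_CHANNELS, size=SEQ_LEN)  # hypothetical 15-touch path
score = predict(path)
```

Scoring successive prefixes of a path with `predict` is how a per-touch curve like the one on the slide would be produced.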

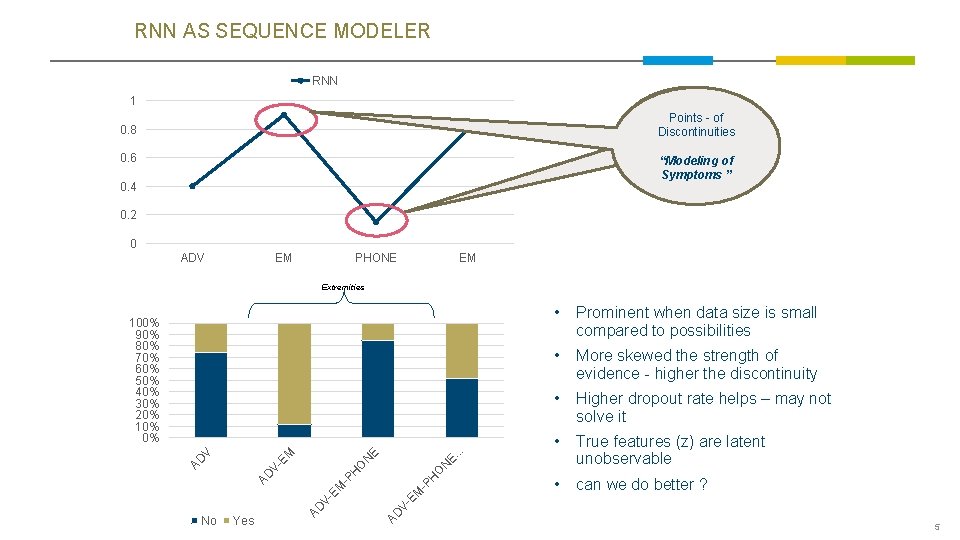

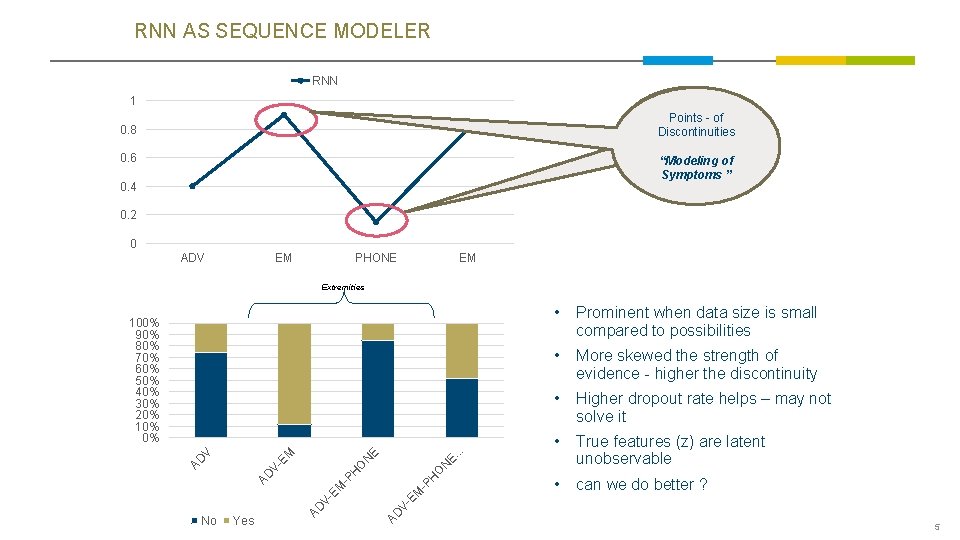

RNN AS SEQUENCE MODELER

Figure: RNN score (0 to 1) along the touch points ADV, EM, PHONE, EM, annotated "Points of discontinuities", "Extremities" and "Modeling of symptoms". A companion chart shows the Yes/No share (0% to 100%) for the paths ADV, ADV-EM, ADV-EM-PHONE and ADV-EM-PHONE-EM.

- The more skewed the strength of evidence, the higher the discontinuity.
- Discontinuities are prominent when data size is small compared to the space of possibilities.
- A higher dropout rate helps but may not solve it.
- The true features (z) are latent and unobservable.
- Can we do better?

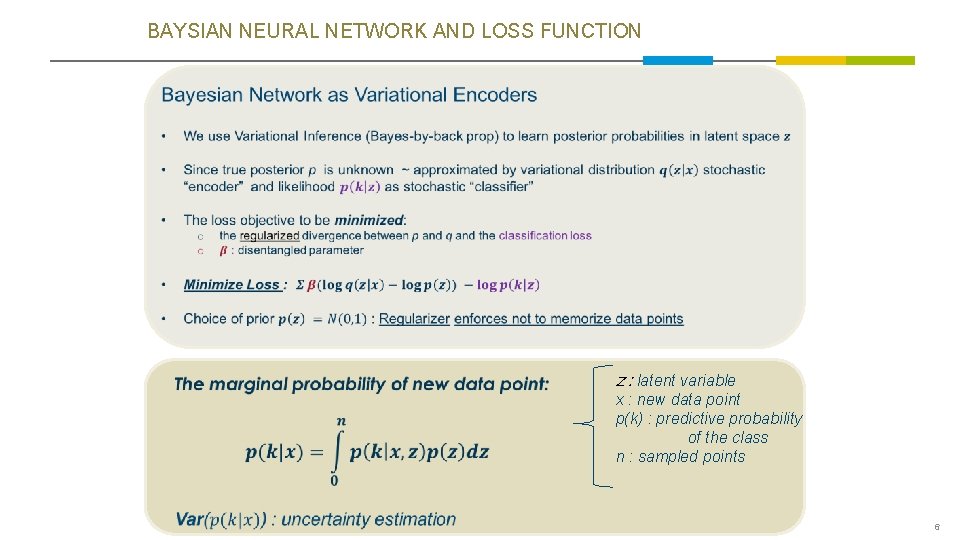

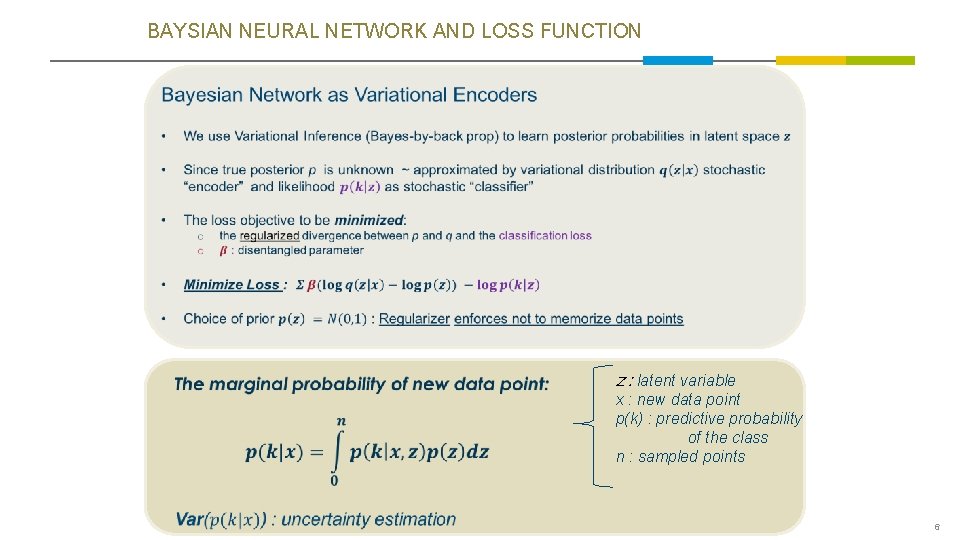

BAYESIAN NEURAL NETWORK AND LOSS FUNCTION

- z: latent variable
- x: new data point
- p(k): predictive probability of the class
- n: number of sampled points
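
The formula itself sits in the slide image and is not recoverable from the text. A common formulation that matches the listed symbols is sketched below; treat it as an assumption rather than the talk's exact loss. Here $q(z \mid x)$ is an assumed approximate posterior over the latent variable, $y$ is the observed label, $p(z)$ is an assumed prior, and $\beta$ is an assumed weight on the KL term.

```latex
% Monte Carlo estimate of the predictive probability from n sampled latents
p(k \mid x) \approx \frac{1}{n} \sum_{i=1}^{n} p(k \mid z_i),
\qquad z_i \sim q(z \mid x)

% Variational loss: expected negative log-likelihood plus a KL regularizer
\mathcal{L}(x, y) = -\,\mathbb{E}_{q(z \mid x)}\big[\log p(y \mid z)\big]
  + \beta \, \mathrm{KL}\big(q(z \mid x) \,\|\, p(z)\big)
```

The KL term is what acts as the regularizer: it discourages the encoder from memorizing individual sequences in the latent space.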

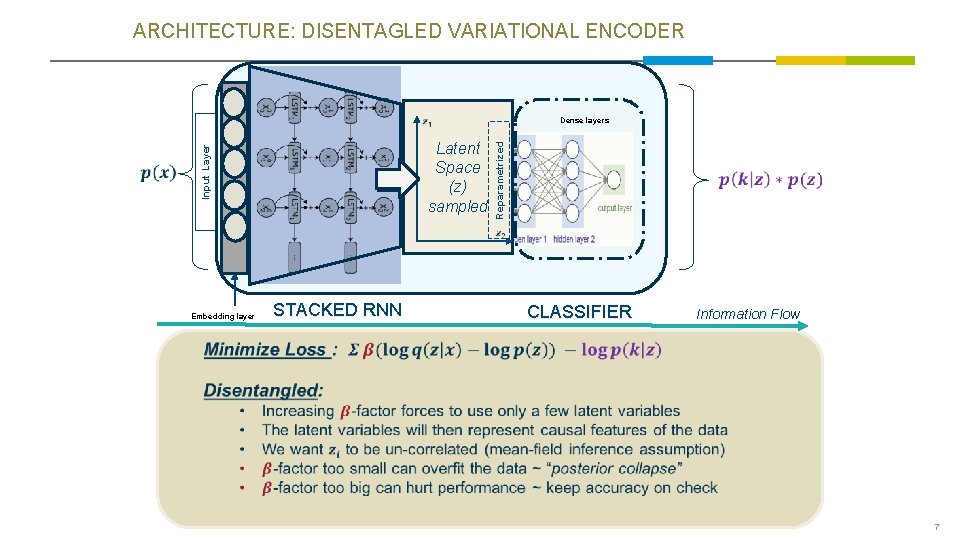

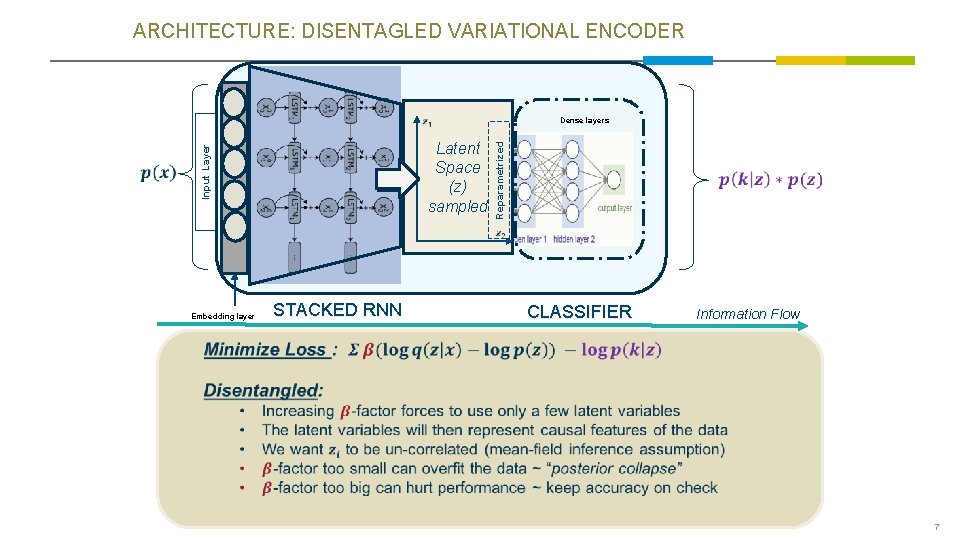

ARCHITECTURE: DISENTANGLED VARIATIONAL ENCODER

Diagram labels:

- Input layer
- Embedding layer
- Stacked RNN
- Dense layers
- Reparametrized latent space (z), sampled
- Classifier

An arrow marks the direction of information flow.
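
The "reparametrized latent space (z), sampled" step is usually implemented with the reparameterization trick from Auto-Encoding Variational Bayes: sample noise separately and shift and scale it by the encoder outputs. A minimal sketch, assuming a diagonal Gaussian latent and a standard normal prior (neither is stated on the slide); the encoder outputs below are hypothetical values.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, diag(sigma^2)) || N(0, I) ), summed over latent dimensions."""
    return 0.5 * np.sum(np.exp(log_var) + mu**2 - 1.0 - log_var)

rng = np.random.default_rng(0)
mu = np.array([0.5, -1.0])        # hypothetical encoder mean
log_var = np.array([0.0, -2.0])   # hypothetical encoder log-variance
z_samples = np.stack([reparameterize(mu, log_var, rng) for _ in range(5000)])
```

Because the randomness enters only through `eps`, gradients can flow through `mu` and `log_var` during training.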

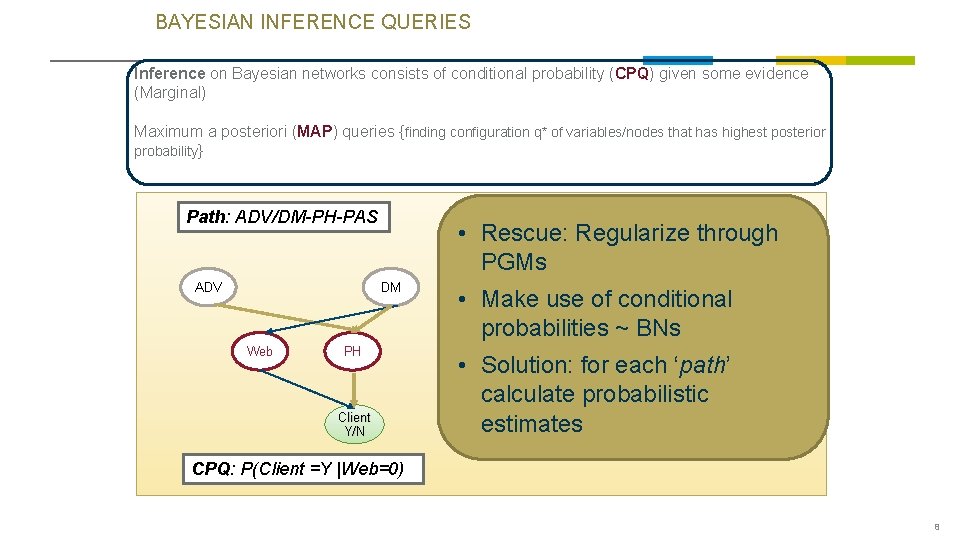

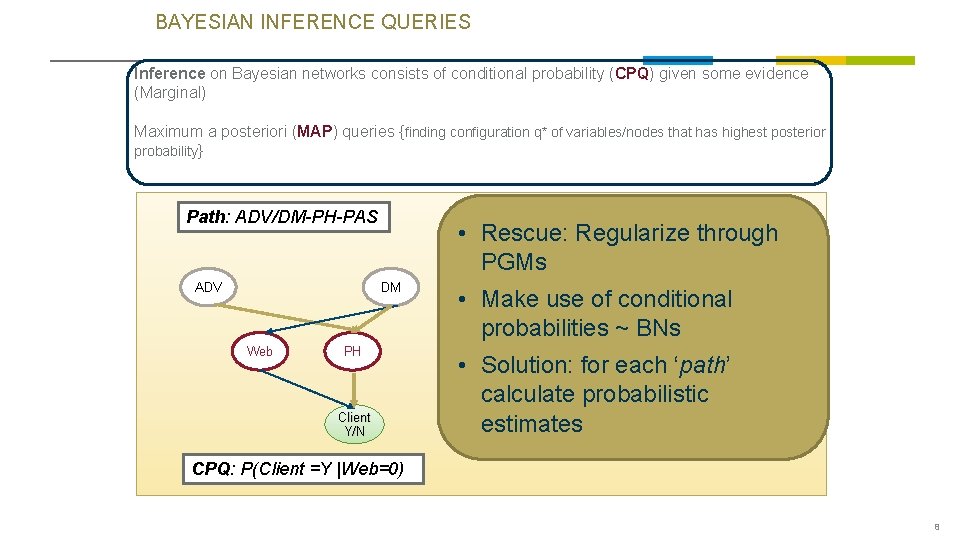

BAYESIAN INFERENCE QUERIES

Inference on Bayesian networks consists of:

- Conditional probability queries (CPQ): the probability of a variable given some evidence (marginal).
- Maximum a posteriori (MAP) queries: finding the configuration q* of variables/nodes that has the highest posterior probability.

Approach:

- Rescue: regularize through PGMs (probabilistic graphical models).
- Make use of conditional probabilities from Bayesian networks (BNs).
- Solution: for each path, calculate probabilistic estimates.

Figure: example network with nodes ADV, DM, Web, PH and Client Y/N. Example path: ADV/DM-PH-PAS.

Example CPQ: P(Client = Y | Web = 0)
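
A conditional probability query such as P(Client = Y | Web = 0) can be illustrated with plain frequency counting. This is a simplified stand-in: a real Bayesian network would factorize the joint distribution and run inference over it. The records and the `cpq` helper below are hypothetical.

```python
def cpq(records, target, evidence):
    """Estimate P(target | evidence) by counting matching records.

    `target` and `evidence` are dicts of variable -> required value.
    Returns None when no record matches the evidence.
    """
    matches = [r for r in records if all(r[k] == v for k, v in evidence.items())]
    if not matches:
        return None
    hits = sum(all(r[k] == v for k, v in target.items()) for r in matches)
    return hits / len(matches)

# Hypothetical observations: 1 = channel touched / became a client
records = [
    {"ADV": 1, "Web": 0, "PH": 1, "Client": 1},
    {"ADV": 1, "Web": 0, "PH": 0, "Client": 0},
    {"ADV": 0, "Web": 1, "PH": 1, "Client": 1},
    {"ADV": 1, "Web": 0, "PH": 1, "Client": 0},
    {"ADV": 0, "Web": 0, "PH": 0, "Client": 0},
]
p_client_given_no_web = cpq(records, {"Client": 1}, {"Web": 0})
print(p_client_given_no_web)  # 0.25
```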

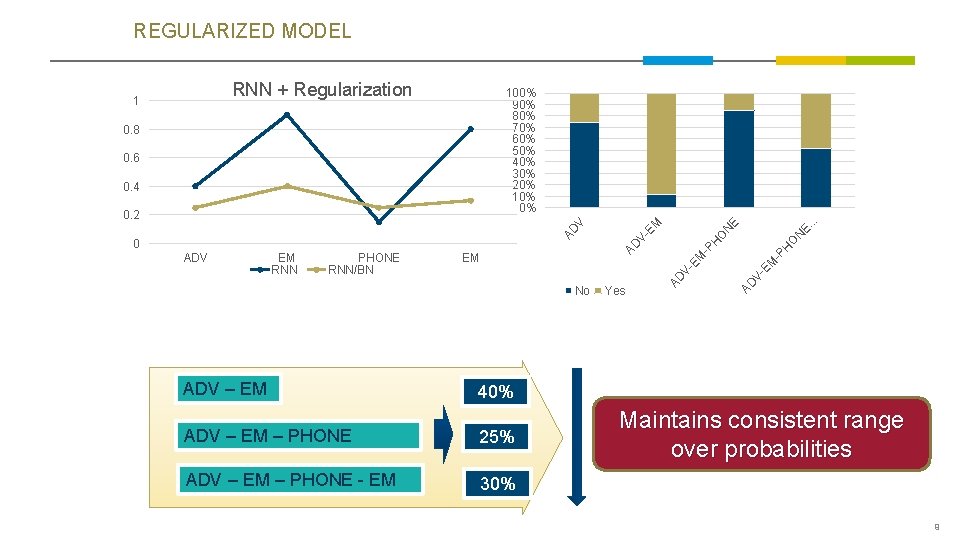

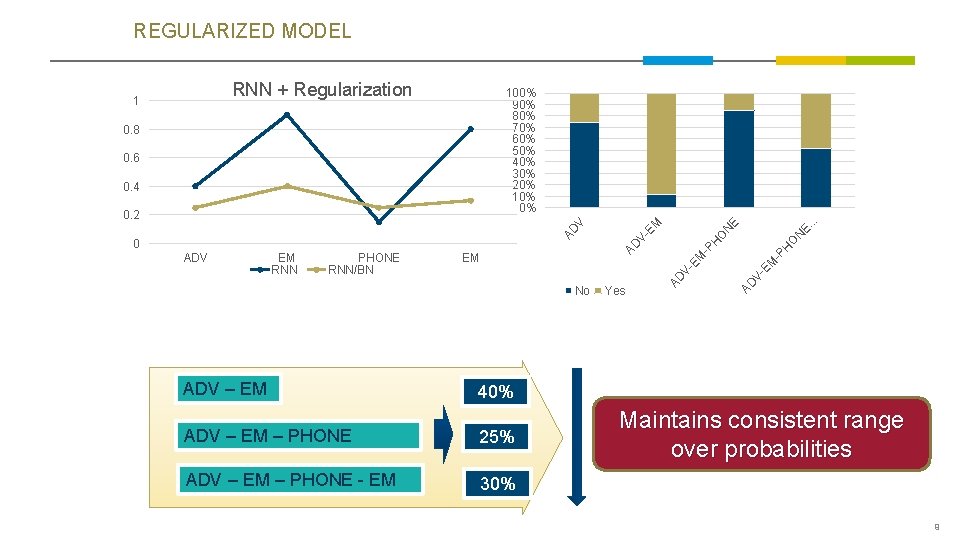

REGULARIZED MODEL

RNN + regularization score by path (chart compares RNN and RNN/BN, y-axis 0 to 1; a companion chart shows the Yes/No share, 0% to 100%):

| Path | RNN + regularization |
| --- | --- |
| ADV - EM | 40% |
| ADV - EM - PHONE | 25% |
| ADV - EM - PHONE - EM | 30% |

The regularized model maintains a consistent range over probabilities.

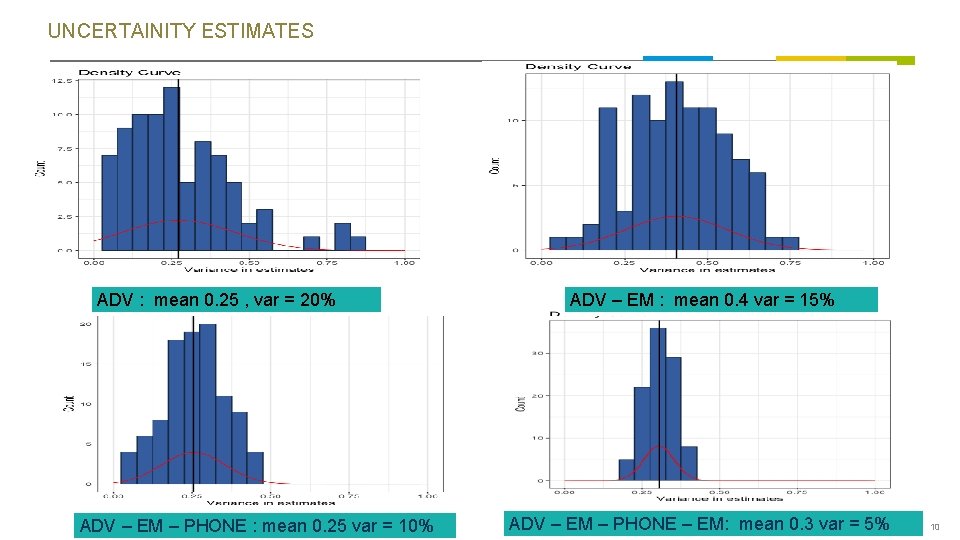

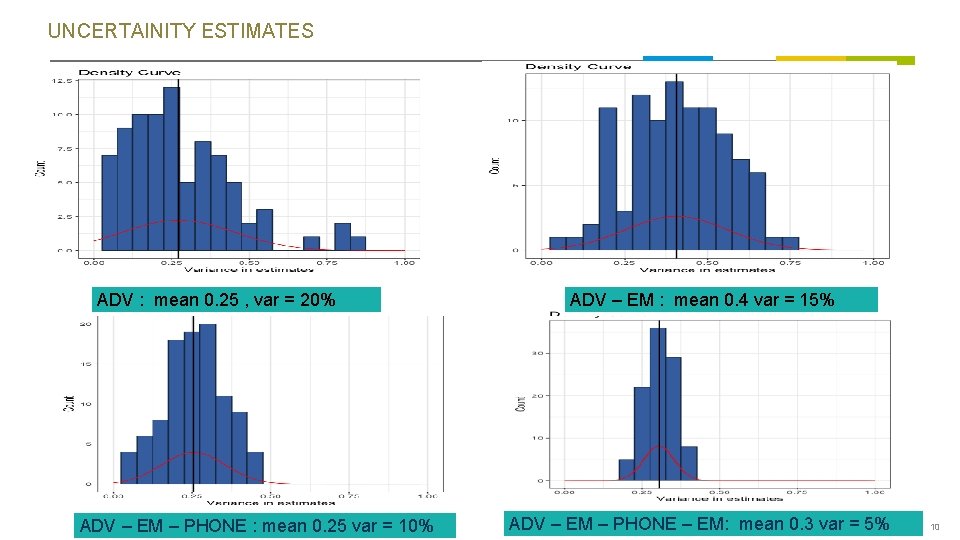

UNCERTAINTY ESTIMATES

| Path | Mean | Variance |
| --- | --- | --- |
| ADV | 0.25 | 20% |
| ADV - EM | 0.4 | 15% |
| ADV - EM - PHONE | 0.25 | 10% |
| ADV - EM - PHONE - EM | 0.3 | 5% |
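
Per-path means and variances like these are typically obtained by running a stochastic model many times on the same path and summarizing the draws. A minimal sketch, assuming that procedure; `noisy_model` is a hypothetical stand-in for one stochastic forward pass, not the talk's model.

```python
import numpy as np

def predictive_stats(sample_fn, n_samples=200):
    """Mean and variance of repeated stochastic predictions for one path."""
    draws = np.array([sample_fn() for _ in range(n_samples)])
    return draws.mean(), draws.var()

rng = np.random.default_rng(0)

def noisy_model():
    """Hypothetical stochastic score for a fixed path, clipped to [0, 1]."""
    return float(np.clip(rng.normal(0.4, 0.05), 0.0, 1.0))

mean, var = predictive_stats(noisy_model)
```

A smaller variance on longer paths, as in the slide's figures, would indicate that each additional touch makes the model more certain about the outcome.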

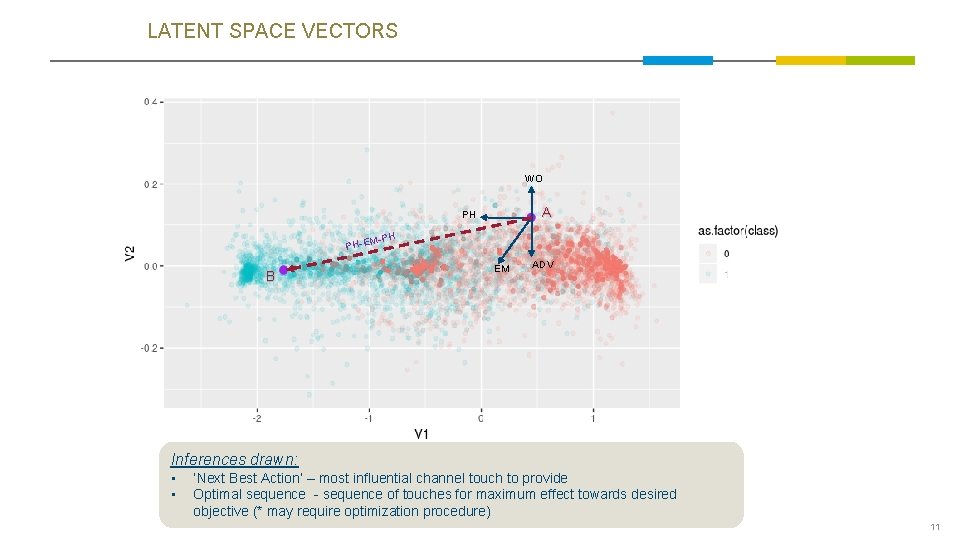

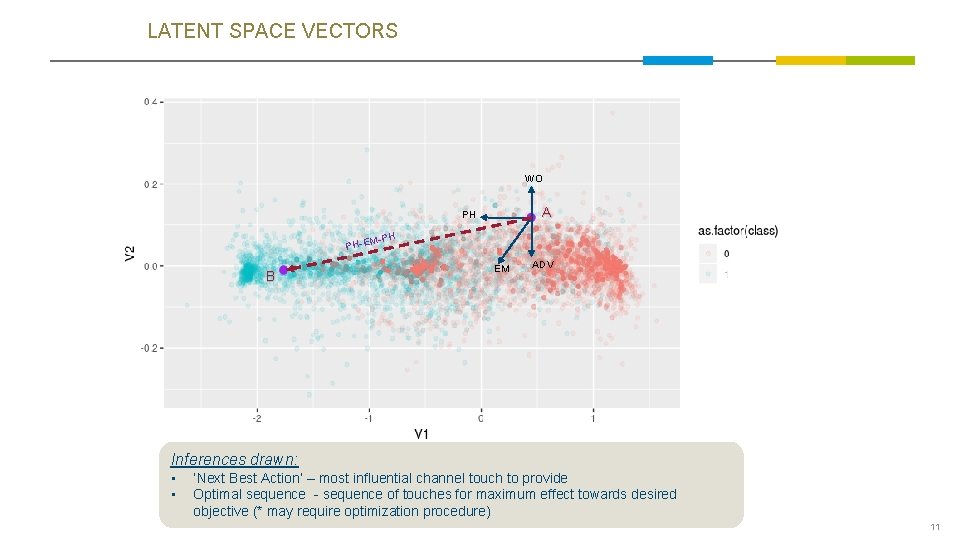

LATENT SPACE VECTORS

Figure: latent-space plot of channel vectors (labels include WO, PH, EM and ADV, with points marked A and B).

Inferences drawn:

- "Next best action": the most influential channel touch to provide next.
- Optimal sequence: the sequence of touches with maximum effect towards the desired objective (may require an optimization procedure).
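
One simple way to read off a "next best action" is to score every candidate next touch and keep the best. The sketch below assumes a greedy one-step search; `toy_score` is a hypothetical scoring function standing in for the trained model.

```python
def next_best_action(path, channels, score_fn):
    """Return the channel whose addition to `path` maximizes score_fn."""
    return max(channels, key=lambda c: score_fn(path + [c]))

def toy_score(path):
    """Hypothetical scorer: average channel weight, minus a penalty for an immediate repeat."""
    weights = {"ADV": 0.1, "EM": 0.2, "PHONE": 0.3}
    penalty = 0.25 if len(path) > 1 and path[-1] == path[-2] else 0.0
    return sum(weights[c] for c in path) / len(path) - penalty

best = next_best_action(["ADV", "PHONE"], ["ADV", "EM", "PHONE"], toy_score)
print(best)  # EM
```

Finding a whole optimal sequence is harder than this greedy step, which is why the slide notes that an optimization procedure may be required.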

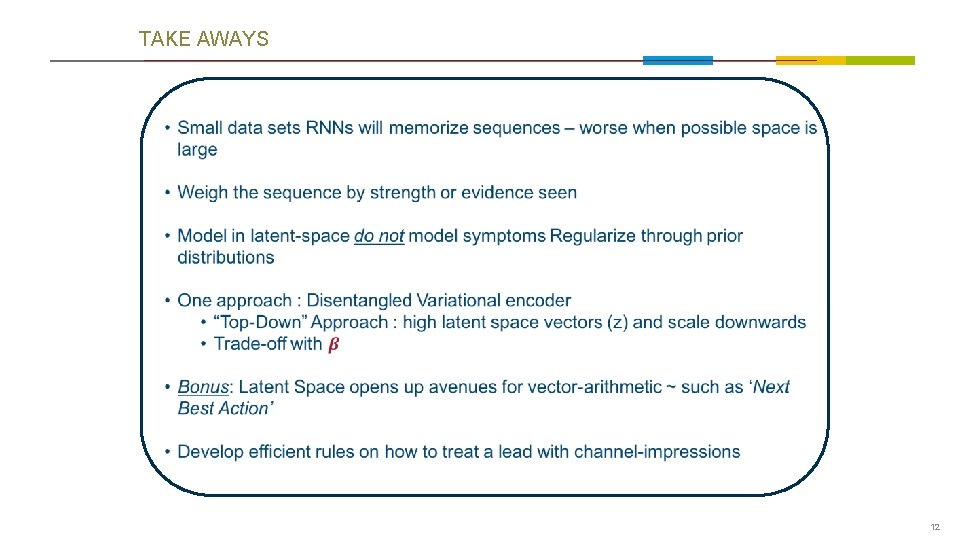

TAKEAWAYS


REFERENCES

- Bayesian Neural Nets: https://arxiv.org/abs/1505.05424
- InfoVAE: Information Maximizing Variational Autoencoders: https://arxiv.org/pdf/1706.02262.pdf
- Understanding disentangling in β-VAE: https://arxiv.org/abs/1804.03599
- Auto-Encoding Variational Bayes: https://arxiv.org/abs/1312.6114
- http://papers.nips.cc/paper/7141-what-uncertainties-do-we-need-in-bayesian-deep-learning-for-computer-vision.pdf
- ELBO Surgery: http://approximateinference.org/accepted/HoffmanJohnson2016.pdf

THANK YOU

APPENDIX

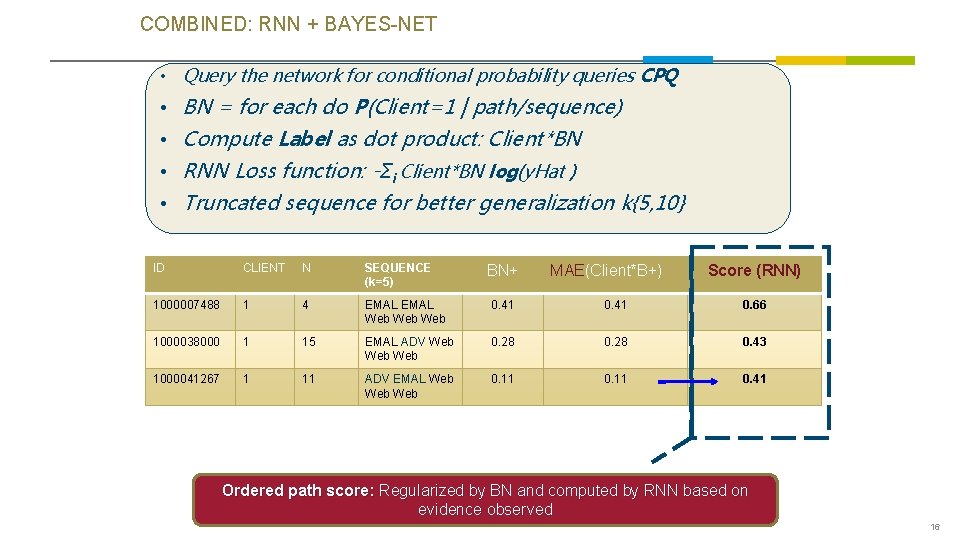

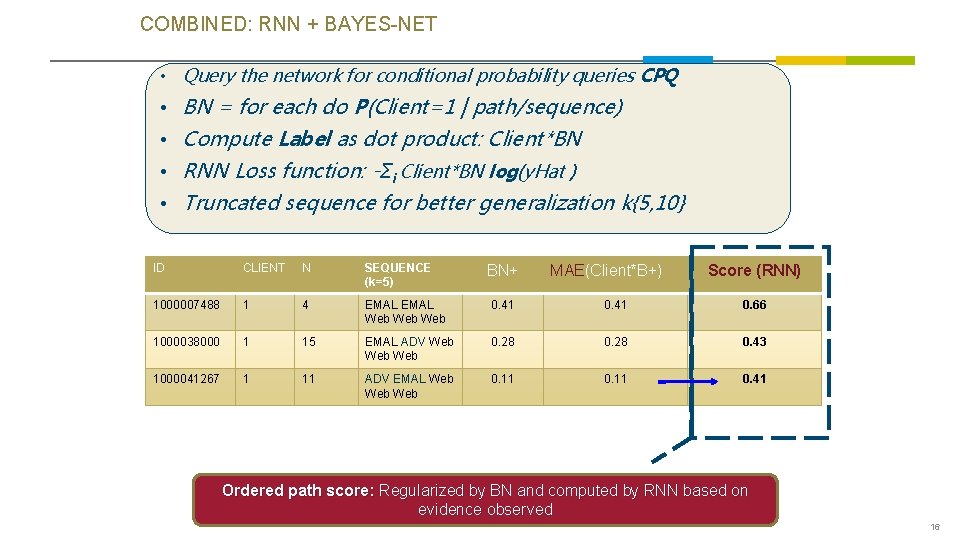

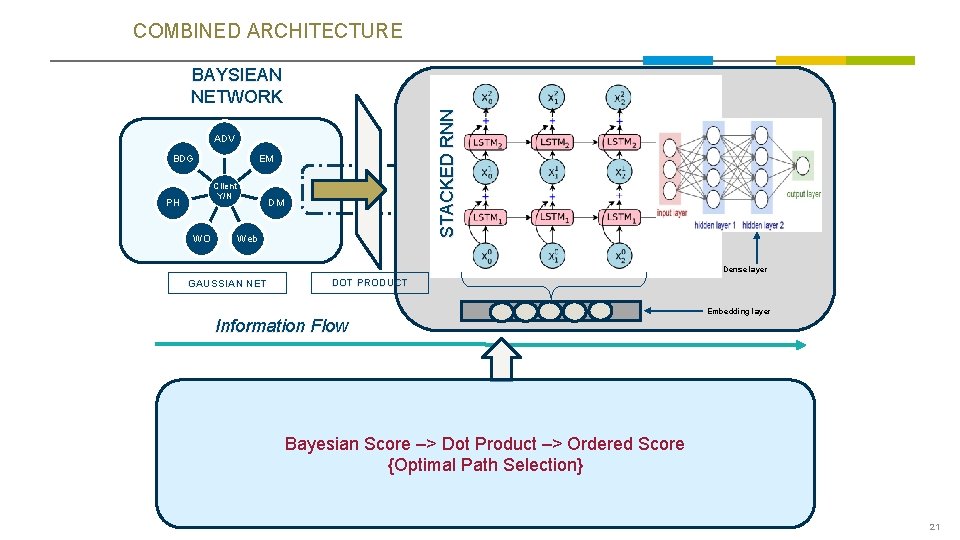

COMBINED: RNN + BAYES-NET

- Query the Bayesian network with conditional probability queries (CPQ).
- BN: for each path, compute P(Client = 1 | path/sequence).
- Compute the label as the dot product Client*BN.
- RNN loss function: -Σi Client*BN log(yHat)
- Truncate sequences for better generalization, k ∈ {5, 10}.

| ID | CLIENT | N | SEQUENCE (k=5) | BN+ | Score (RNN) |
| --- | --- | --- | --- | --- | --- |
| 1000007488 | 1 | 4 | EMAL Web Web | 0.41 | 0.66 |
| 1000038000 | 1 | 15 | EMAL ADV Web Web | 0.28 | 0.43 |
| 1000041267 | 1 | 11 | ADV EMAL Web Web | 0.11 | 0.41 |

The table header also carries the label MAE(Client*B+).

Ordered path score: regularized by the BN and computed by the RNN based on the evidence observed.
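
The label construction and loss on this slide can be sketched as follows. This is a reading of the slide's formula, not the talk's code: the product Client*BN is taken element-wise per record, the numbers reuse the slide's table under the assumption that the first numeric column is the BN probability and the second the RNN score, and keeping the last k touches when truncating is an assumption.

```python
import numpy as np

def truncate(seq, k):
    """Keep the last k touches of a path (which end is kept is an assumption)."""
    return seq[-k:]

def soft_labels(client, bn_prob):
    """Per-record product Client * BN, used as the training target."""
    return np.asarray(client, dtype=float) * np.asarray(bn_prob, dtype=float)

def weighted_log_loss(client, bn_prob, y_hat, eps=1e-12):
    """Compute -sum_i Client_i * BN_i * log(y_hat_i)."""
    target = soft_labels(client, bn_prob)
    return float(-np.sum(target * np.log(np.clip(y_hat, eps, 1.0))))

client = [1, 1, 1]
bn = [0.41, 0.28, 0.11]       # assumed to be the BN+ column
y_hat = [0.66, 0.43, 0.41]    # assumed to be the Score (RNN) column
loss = weighted_log_loss(client, bn, y_hat)
```

Because the target is scaled by the BN probability, a path the Bayesian network considers weak evidence contributes less to the loss, which is how the BN regularizes the RNN.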

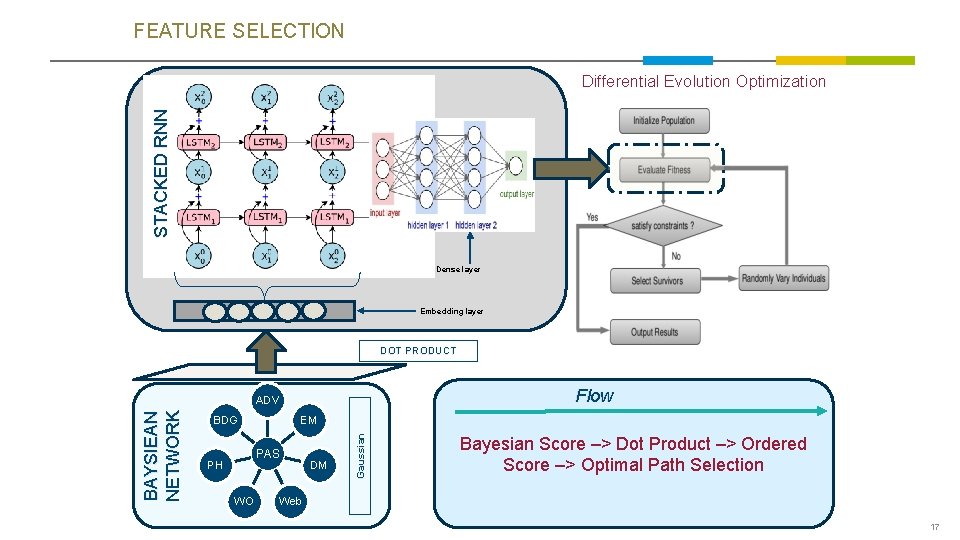

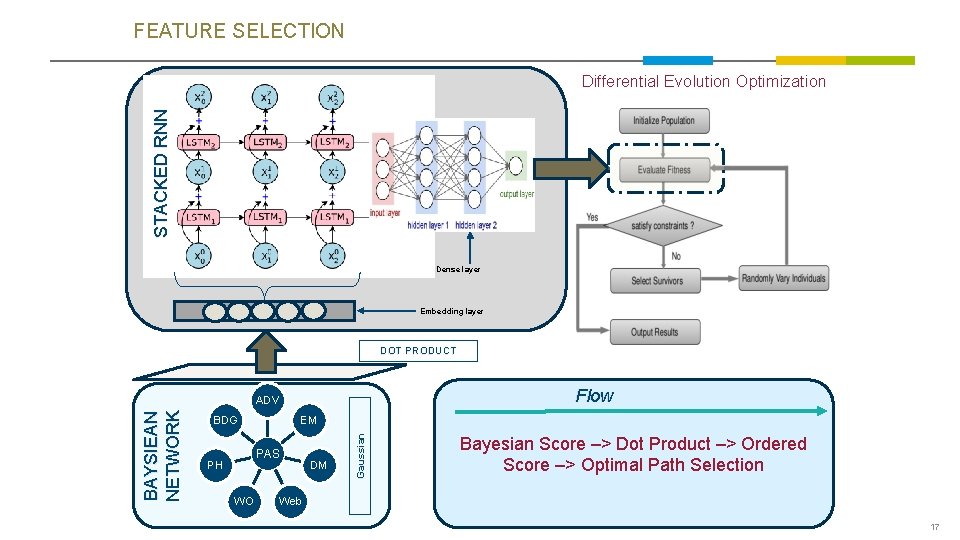

FEATURE SELECTION

Diagram labels:

- Stacked RNN: embedding layer, dense layer
- Gaussian Bayesian network over the channels ADV, BDG, EM, PAS, PH, WO, DM and Web
- Dot product
- Differential evolution optimization

Flow: Bayesian score -> dot product -> ordered score -> optimal path selection

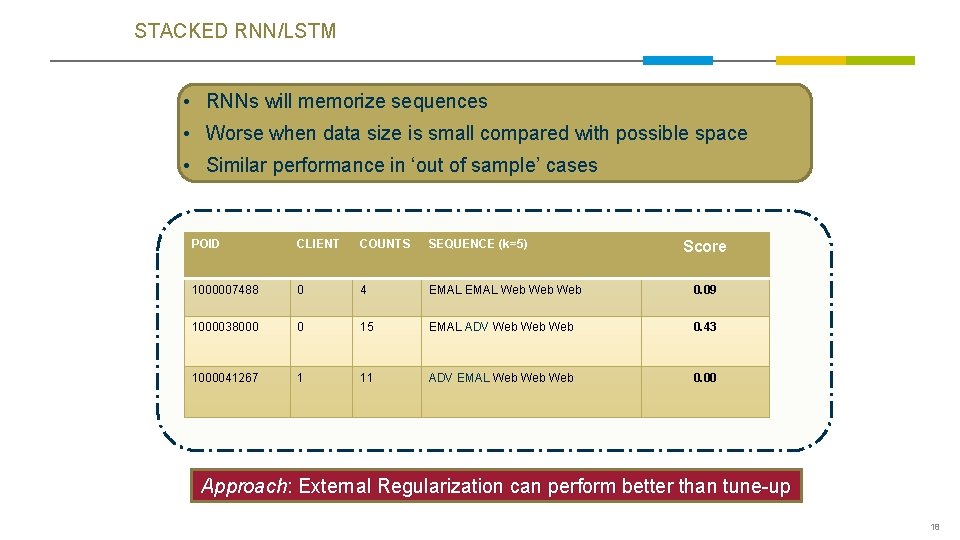

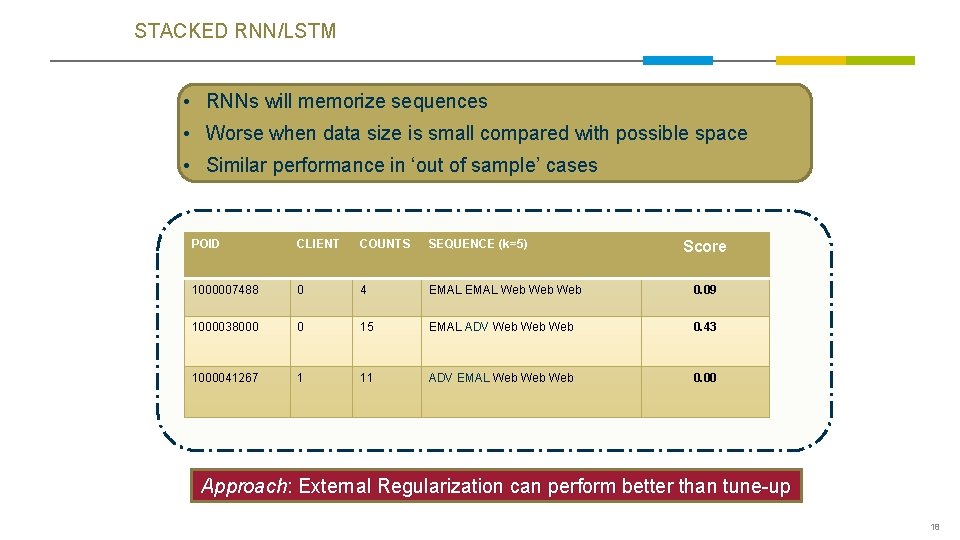

STACKED RNN/LSTM

- RNNs will memorize sequences.
- This is worse when data size is small compared with the possible space.
- Performance is similar in "out of sample" cases.

| POID | CLIENT | COUNTS | SEQUENCE (k=5) | Score |
| --- | --- | --- | --- | --- |
| 1000007488 | 0 | 4 | EMAL Web Web | 0.09 |
| 1000038000 | 0 | 15 | EMAL ADV Web Web | 0.43 |
| 1000041267 | 1 | 11 | ADV EMAL Web Web | 0.00 |

Approach: external regularization can perform better than tuning.

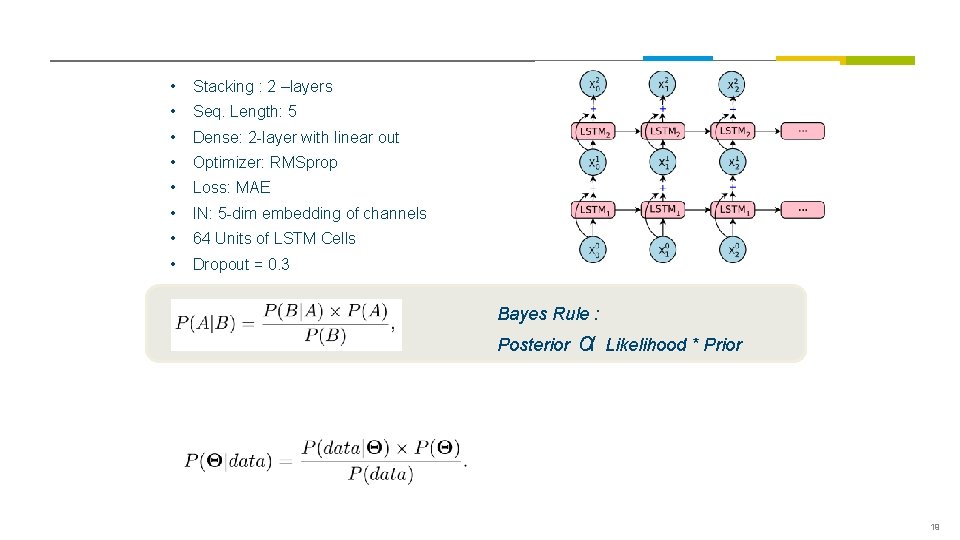

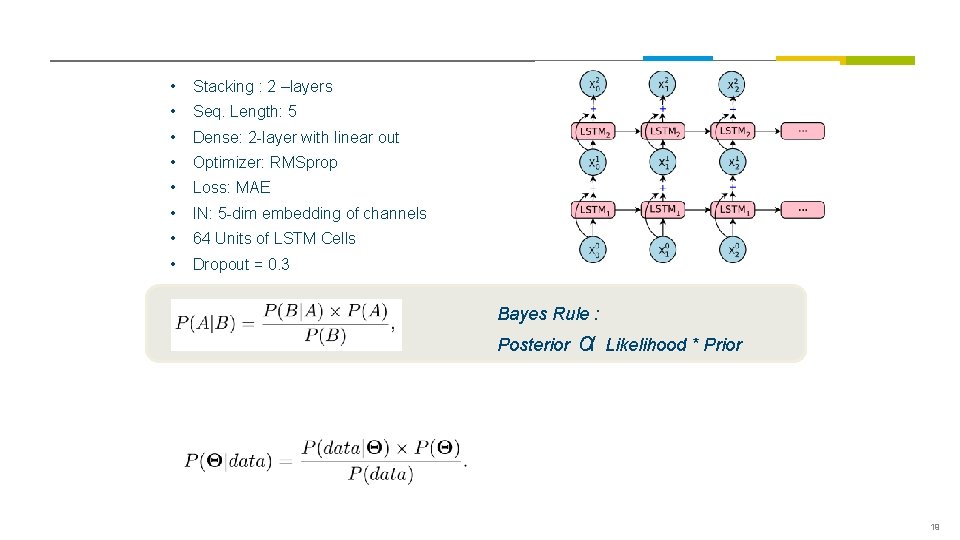

- Stacking: 2 layers
- Sequence length: 5
- Dense: 2 layers with linear output
- Optimizer: RMSprop
- Loss: MAE
- Input: 5-dimensional embedding of channels
- 64 units of LSTM cells
- Dropout = 0.3

Bayes rule: Posterior ∝ Likelihood × Prior
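
As a worked instance of the Bayes rule stated above, the sketch below multiplies a prior by a likelihood and normalizes over two hypotheses. The probabilities are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def posterior(prior, likelihood):
    """Normalize likelihood * prior over a discrete set of hypotheses."""
    unnorm = {h: likelihood[h] * prior[h] for h in prior}
    total = sum(unnorm.values())
    return {h: v / total for h, v in unnorm.items()}

prior = {"client": 0.2, "not_client": 0.8}        # hypothetical base rates
likelihood = {"client": 0.6, "not_client": 0.1}   # hypothetical P(path | hypothesis)
post = posterior(prior, likelihood)
```

Here the unnormalized products are 0.12 and 0.08, so observing the path raises the probability of "client" from 0.2 to 0.6.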

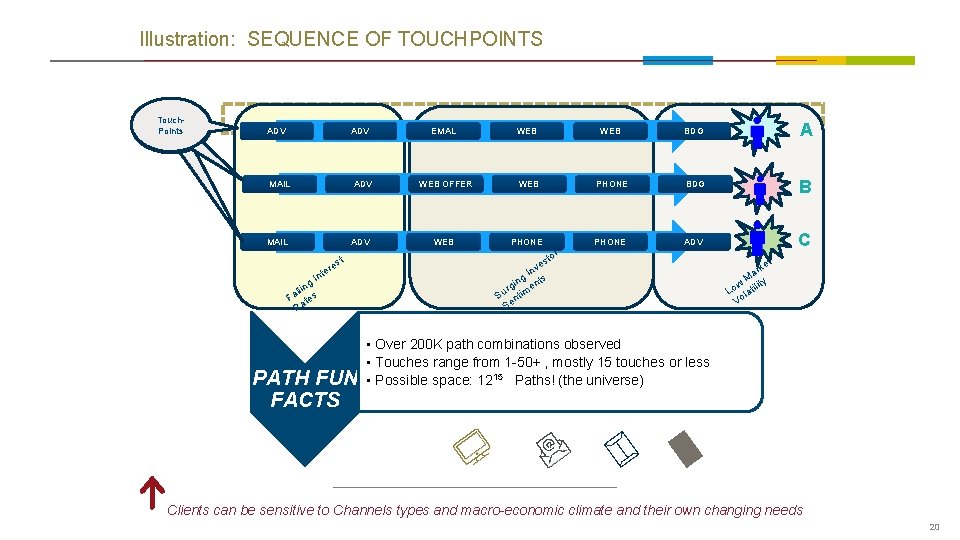

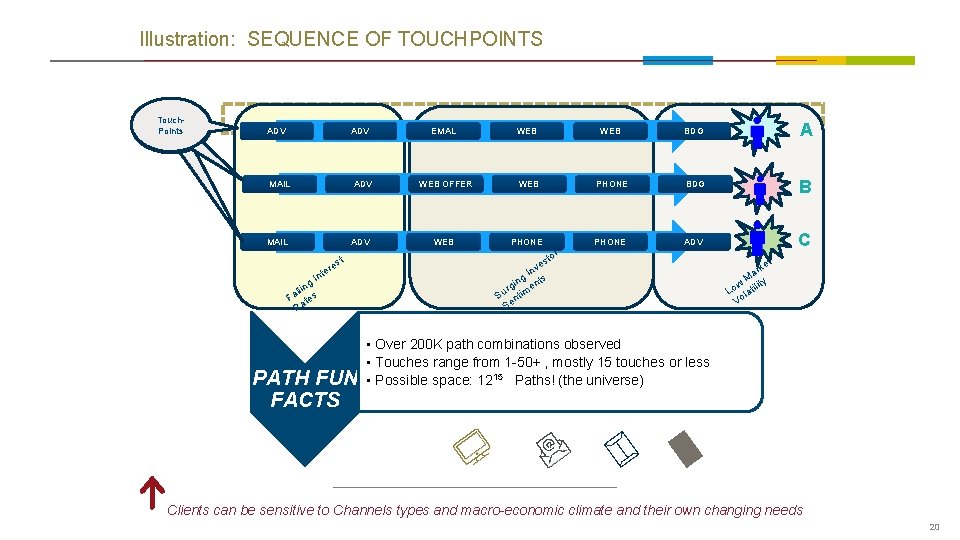

Illustration: SEQUENCE OF TOUCHPOINTS

Example touch-point sequences (figure):

- A: ADV, EMAL, WEB, BDG
- B: MAIL, ADV, WEB, OFFER, WEB, PHONE, BDG
- C: MAIL, ADV, WEB, PHONE, ADV

Macro-economic labels in the figure: Falling Interest Rates, Surging Investor Sentiments, Low Market Volatility.

PATH FUN FACTS

- Over 200 K path combinations observed.
- Touches range from 1 to 50+, mostly 15 touches or fewer.
- Possible space: 12^15 paths (the universe).

Clients can be sensitive to channel types, the macro-economic climate and their own changing needs.

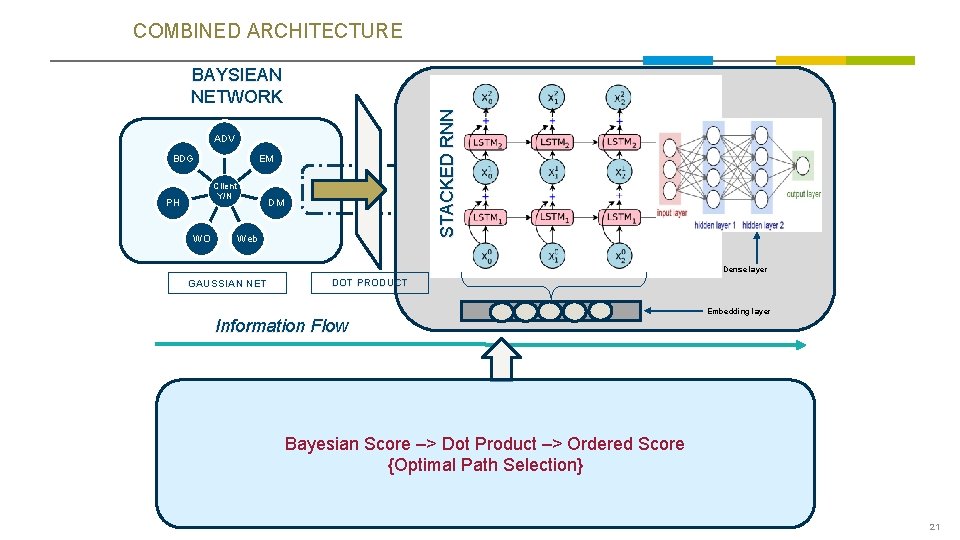

COMBINED ARCHITECTURE

Diagram labels:

- Stacked RNN: embedding layer, dense layer
- Bayesian network (Gaussian net) over ADV, BDG, EM, PH, WO, DM and Web, with outcome node Client Y/N
- Dot product

Information flow: Bayesian score -> dot product -> ordered score (optimal path selection)

SECTIONS