PART 4 Classification of Random Processes

Huseyin Bilgekul

EENG 571 Probability and Stochastic Processes

Department of Electrical and Electronic Engineering, Eastern Mediterranean University

EE 571

1.7 Statistics of Stochastic Processes

- n-th Order Distribution (Density)
- Expected Value
- Autocorrelation
- Cross-correlation
- Autocovariance
- Cross-covariance

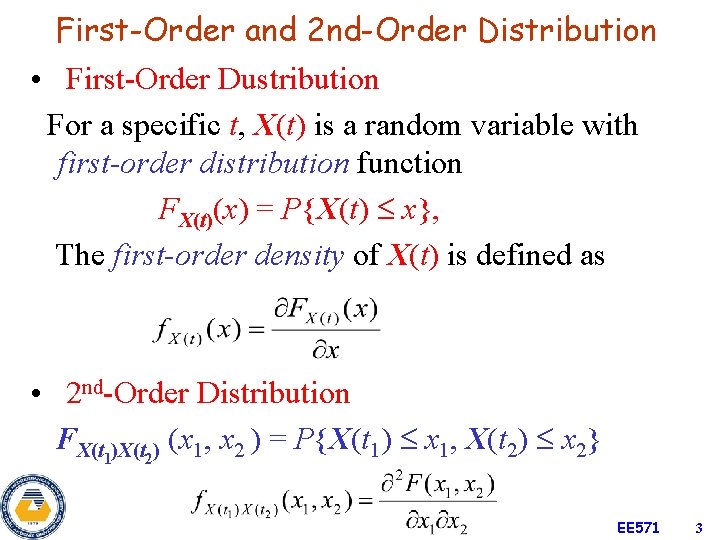

First-Order and 2nd-Order Distribution

- First-Order Distribution: For a specific t, X(t) is a random variable with first-order distribution function $F_{X(t)}(x) = P\{X(t) \le x\}$. The first-order density of X(t) is defined as $f_{X(t)}(x) = \partial F_{X(t)}(x) / \partial x$.
- 2nd-Order Distribution: $F_{X(t_1)X(t_2)}(x_1, x_2) = P\{X(t_1) \le x_1, X(t_2) \le x_2\}$.

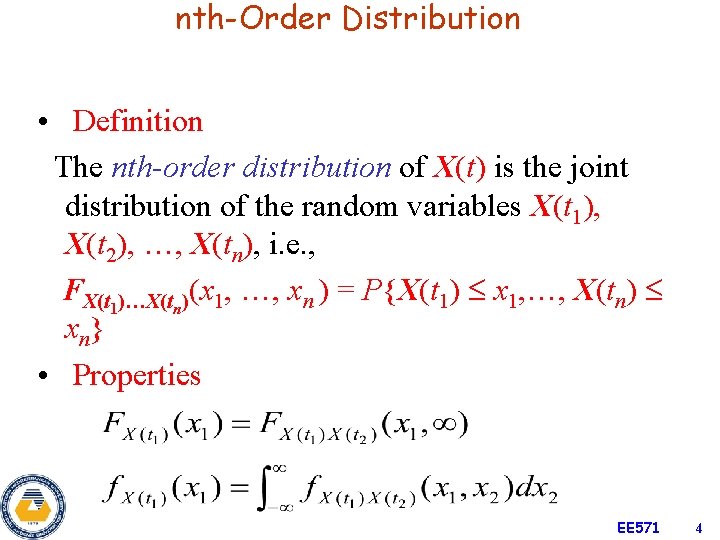

nth-Order Distribution

- Definition: The nth-order distribution of X(t) is the joint distribution of the random variables $X(t_1), X(t_2), \ldots, X(t_n)$, i.e., $F_{X(t_1)\cdots X(t_n)}(x_1, \ldots, x_n) = P\{X(t_1) \le x_1, \ldots, X(t_n) \le x_n\}$.
- Properties

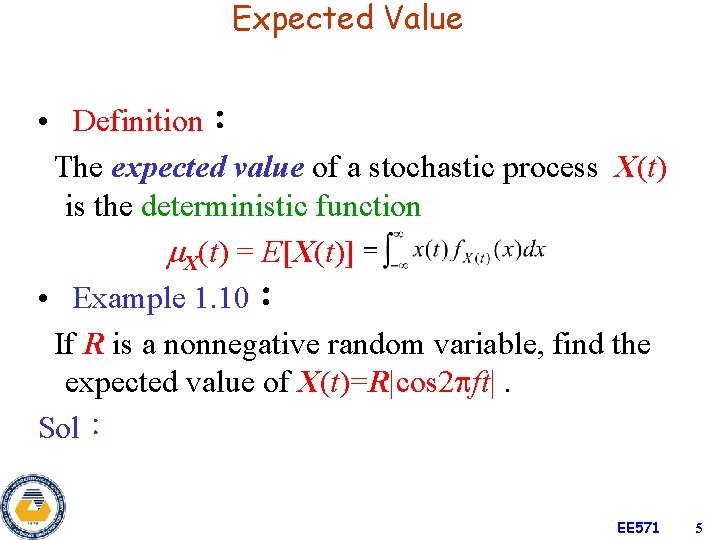

Expected Value

- Definition: The expected value of a stochastic process X(t) is the deterministic function $\mu_X(t) = E[X(t)]$.
- Example 1.10: If R is a nonnegative random variable, find the expected value of $X(t) = R\,|\cos 2\pi f t|$.

Sol:
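For Example 1.10, the factor $|\cos 2\pi f t|$ is deterministic for each fixed t, so it can be pulled out of the expectation. A minimal sketch of that step, assuming only that $E[R]$ exists:

$$
\mu_X(t) = E\big[R\,|\cos 2\pi f t|\big] = E[R]\,|\cos 2\pi f t| .
$$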

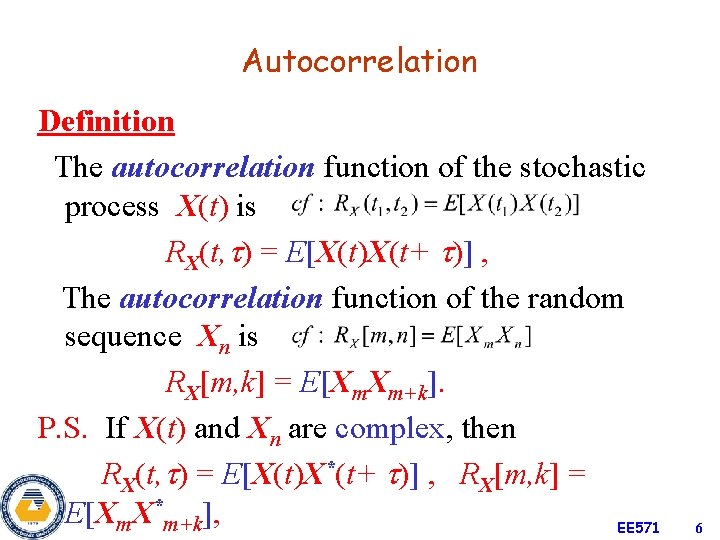

Autocorrelation

Definition: The autocorrelation function of the stochastic process X(t) is $R_X(t, \tau) = E[X(t)X(t+\tau)]$. The autocorrelation function of the random sequence $X_n$ is $R_X[m, k] = E[X_m X_{m+k}]$.

P.S. If X(t) and $X_n$ are complex, then $R_X(t, \tau) = E[X(t)X^*(t+\tau)]$ and $R_X[m, k] = E[X_m X^*_{m+k}]$.

Complex Process and Vector Processes

Definitions: The complex process $Z(t) = X(t) + jY(t)$ is specified in terms of the joint statistics of the real processes X(t) and Y(t). The vector process (n-dimensional process) is a family of n stochastic processes.

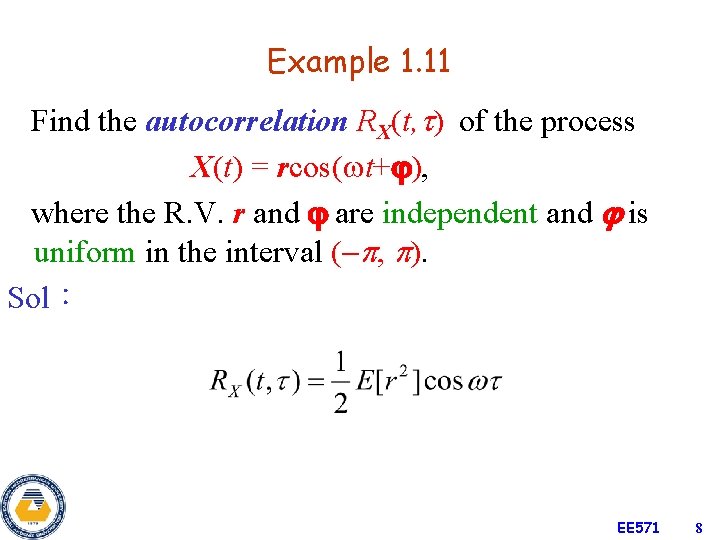

Example 1.11

Find the autocorrelation $R_X(t, \tau)$ of the process $X(t) = r\cos(\omega t + \varphi)$, where the random variables r and $\varphi$ are independent and $\varphi$ is uniform in the interval $(-\pi, \pi)$.

Sol:
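One way to work Example 1.11 is to use independence to separate the amplitude from the phase, then apply the product-to-sum identity for cosines. The derivation below is a sketch under the assumptions that the process is $X(t) = r\cos(\omega t + \varphi)$ with $\varphi$ uniform on $(-\pi, \pi)$ and that $E[r^2]$ is finite:

$$
\begin{aligned}
R_X(t,\tau) &= E[r^2]\; E\big[\cos(\omega t + \varphi)\cos(\omega t + \omega\tau + \varphi)\big] \\
&= \tfrac{1}{2} E[r^2]\; E\big[\cos(\omega\tau) + \cos(2\omega t + \omega\tau + 2\varphi)\big] \\
&= \tfrac{1}{2} E[r^2] \cos(\omega\tau).
\end{aligned}
$$

The second cosine averages to zero because $2\varphi$ sweeps two full periods as $\varphi$ ranges over $(-\pi, \pi)$.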

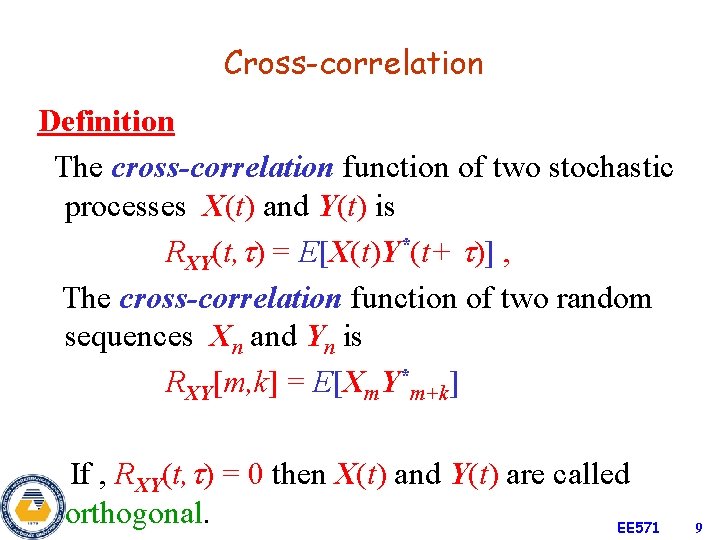

Cross-correlation

Definition: The cross-correlation function of two stochastic processes X(t) and Y(t) is $R_{XY}(t, \tau) = E[X(t)Y^*(t+\tau)]$. The cross-correlation function of two random sequences $X_n$ and $Y_n$ is $R_{XY}[m, k] = E[X_m Y^*_{m+k}]$.

If $R_{XY}(t, \tau) = 0$ for all t and $\tau$, then X(t) and Y(t) are called orthogonal.

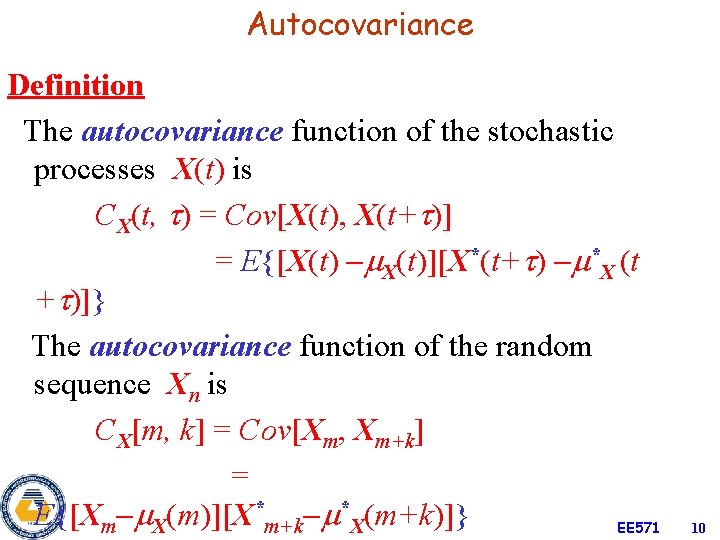

Autocovariance

Definition: The autocovariance function of the stochastic process X(t) is

$C_X(t, \tau) = \mathrm{Cov}[X(t), X(t+\tau)] = E\{[X(t) - \mu_X(t)][X^*(t+\tau) - \mu_X^*(t+\tau)]\}$.

The autocovariance function of the random sequence $X_n$ is

$C_X[m, k] = \mathrm{Cov}[X_m, X_{m+k}] = E\{[X_m - \mu_X(m)][X^*_{m+k} - \mu_X^*(m+k)]\}$.

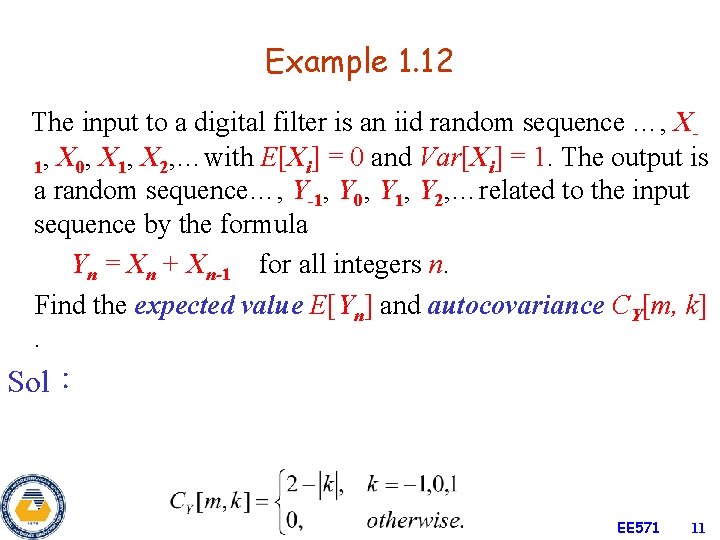

Example 1.12

The input to a digital filter is an iid random sequence $\ldots, X_{-1}, X_0, X_1, X_2, \ldots$ with $E[X_i] = 0$ and $\mathrm{Var}[X_i] = 1$. The output is a random sequence $\ldots, Y_{-1}, Y_0, Y_1, Y_2, \ldots$ related to the input sequence by the formula $Y_n = X_n + X_{n-1}$ for all integers n. Find the expected value $E[Y_n]$ and autocovariance $C_Y[m, k]$.

Sol:
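The filter in Example 1.12 is a two-tap sum of a zero-mean iid input, so $E[Y_n] = 0$ and the autocovariance reduces to a sum of products of filter taps that share an input sample. The Python sketch below illustrates that calculation; the function name `fir_output_autocovariance` and the tap list `h` are hypothetical names introduced here, not part of the slides.

```python
def fir_output_autocovariance(h, k, input_var=1.0):
    """C_Y[k] for Y_n = sum_i h[i] * X_{n-i}, with X_n zero-mean iid.

    Only tap pairs that multiply the same input sample contribute,
    because Cov[X_i, X_j] = 0 whenever i != j.
    """
    k = abs(k)  # the autocovariance is symmetric in the lag k
    return input_var * sum(h[i] * h[i + k] for i in range(len(h) - k))


h = [1.0, 1.0]  # taps of Y_n = X_n + X_{n-1}
for lag in range(-2, 3):
    print(lag, fir_output_autocovariance(h, lag))
```

Under these assumptions the sketch gives $C_Y[m, k] = 2$ for $k = 0$, $1$ for $|k| = 1$, and $0$ otherwise, independent of m.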

![Example 1.13](http://slidetodoc.com/presentation_image_h/0cc211f484aa81493d5b10cefd6e094c/image-12.jpg)

Example 1.13

Suppose that X(t) is a process with $E[X(t)] = 3$ and $R_X(t, \tau) = 9 + 4e^{-0.2|\tau|}$. Find the mean, variance, and covariance of the random variables Z = X(5) and W = X(8).

Sol:
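Example 1.13 can be checked numerically by subtracting the squared mean from the autocorrelation, which gives the autocovariance for a real process with constant mean. The sketch below assumes the exponent is $-0.2|\tau|$; the helper names are introduced here for illustration only.

```python
import math

MEAN = 3.0  # E[X(t)] as given in the example


def autocorrelation(tau):
    """R_X(t, tau) = 9 + 4 exp(-0.2 |tau|); it does not depend on t."""
    return 9.0 + 4.0 * math.exp(-0.2 * abs(tau))


def autocovariance(tau):
    """C_X(tau) = R_X(tau) - mean^2 for a real process with constant mean."""
    return autocorrelation(tau) - MEAN ** 2


var_z = autocovariance(0.0)         # Var[Z], Z = X(5)
var_w = autocovariance(0.0)         # Var[W], W = X(8)
cov_zw = autocovariance(8.0 - 5.0)  # Cov[Z, W] uses the lag tau = 3

print("E[Z] = E[W] =", MEAN)
print("Var[Z] =", var_z, "Var[W] =", var_w)
print("Cov[Z, W] =", cov_zw)
```

Both variances come out to 4, and the covariance is $4e^{-0.6}$, roughly 2.195.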

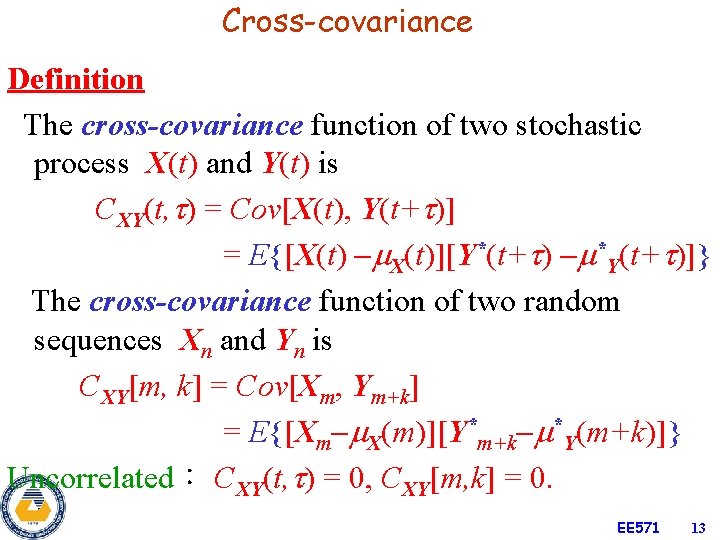

Cross-covariance

Definition: The cross-covariance function of two stochastic processes X(t) and Y(t) is

$C_{XY}(t, \tau) = \mathrm{Cov}[X(t), Y(t+\tau)] = E\{[X(t) - \mu_X(t)][Y^*(t+\tau) - \mu_Y^*(t+\tau)]\}$.

The cross-covariance function of two random sequences $X_n$ and $Y_n$ is

$C_{XY}[m, k] = \mathrm{Cov}[X_m, Y_{m+k}] = E\{[X_m - \mu_X(m)][Y^*_{m+k} - \mu_Y^*(m+k)]\}$.

Uncorrelated: $C_{XY}(t, \tau) = 0$, $C_{XY}[m, k] = 0$.

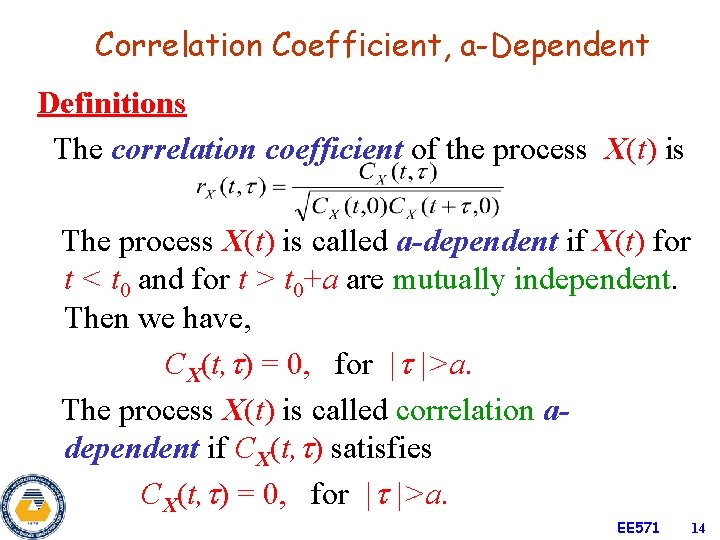

Correlation Coefficient, a-Dependent

Definitions: The correlation coefficient of the process X(t) is

$r_X(t, \tau) = \dfrac{C_X(t, \tau)}{\sqrt{C_X(t, 0)\, C_X(t+\tau, 0)}}$.

The process X(t) is called a-dependent if the values X(t) for $t < t_0$ and for $t > t_0 + a$ are mutually independent. Then we have $C_X(t, \tau) = 0$ for $|\tau| > a$.

The process X(t) is called correlation a-dependent if $C_X(t, \tau)$ satisfies $C_X(t, \tau) = 0$ for $|\tau| > a$.

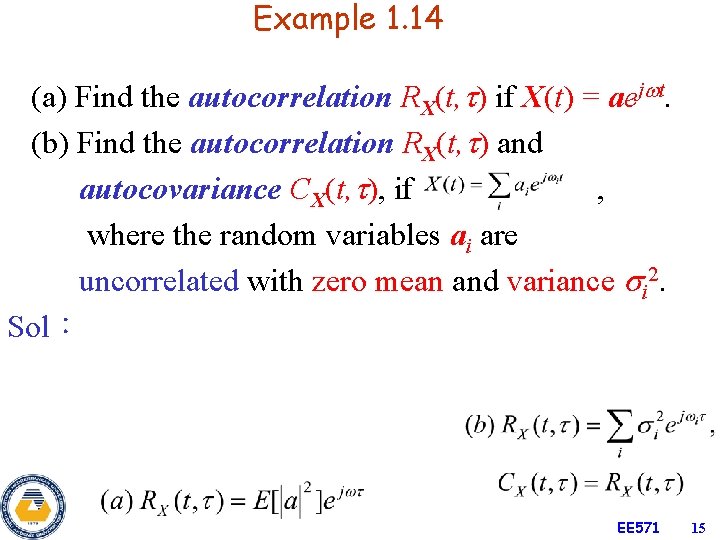

Example 1.14

(a) Find the autocorrelation $R_X(t, \tau)$ if $X(t) = a e^{j\omega t}$.

(b) Find the autocorrelation $R_X(t, \tau)$ and autocovariance $C_X(t, \tau)$ if $X(t) = \sum_i a_i e^{j\omega_i t}$, where the random variables $a_i$ are uncorrelated with zero mean and variance $\sigma_i^2$.

Sol:
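A sketch of Example 1.14, using the complex-process convention $R_X(t, \tau) = E[X(t)X^*(t+\tau)]$ from the autocorrelation slide. It assumes that the process in part (b) is the sum of complex exponentials $X(t) = \sum_i a_i e^{j\omega_i t}$, which is an inference from the surrounding text rather than something the extracted slide states explicitly.

$$
\begin{aligned}
\text{(a)}\quad R_X(t,\tau) &= E\big[a e^{j\omega t}\, a^* e^{-j\omega (t+\tau)}\big] = E[|a|^2]\, e^{-j\omega\tau}, \\
\text{(b)}\quad R_X(t,\tau) &= \sum_i \sum_k E[a_i a_k^*]\, e^{j\omega_i t} e^{-j\omega_k (t+\tau)} = \sum_i \sigma_i^2\, e^{-j\omega_i \tau}.
\end{aligned}
$$

In (b) the cross terms vanish because $E[a_i a_k^*] = 0$ for $i \ne k$ (uncorrelated, zero mean) and $E[|a_i|^2] = \sigma_i^2$. Since $\mu_X(t) = 0$, the autocovariance equals the autocorrelation: $C_X(t, \tau) = R_X(t, \tau)$.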

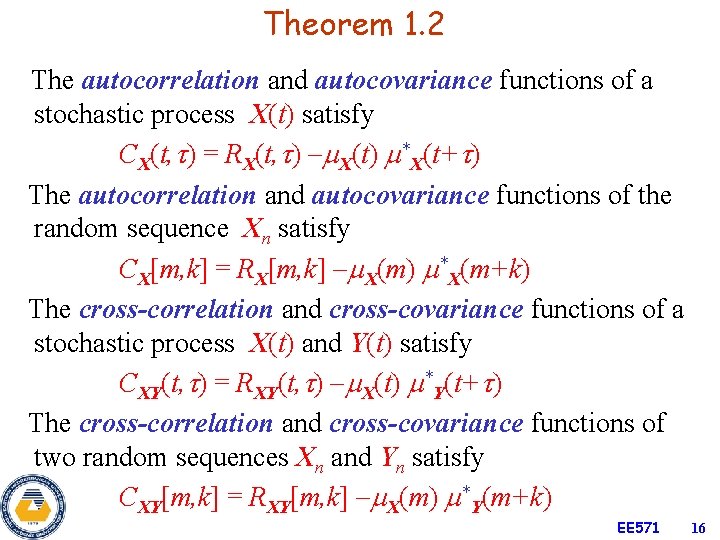

Theorem 1.2

- The autocorrelation and autocovariance functions of a stochastic process X(t) satisfy $C_X(t, \tau) = R_X(t, \tau) - \mu_X(t)\,\mu_X^*(t+\tau)$.
- The autocorrelation and autocovariance functions of the random sequence $X_n$ satisfy $C_X[m, k] = R_X[m, k] - \mu_X(m)\,\mu_X^*(m+k)$.
- The cross-correlation and cross-covariance functions of two stochastic processes X(t) and Y(t) satisfy $C_{XY}(t, \tau) = R_{XY}(t, \tau) - \mu_X(t)\,\mu_Y^*(t+\tau)$.
- The cross-correlation and cross-covariance functions of two random sequences $X_n$ and $Y_n$ satisfy $C_{XY}[m, k] = R_{XY}[m, k] - \mu_X(m)\,\mu_Y^*(m+k)$.
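The first identity of Theorem 1.2 follows by expanding the product inside the autocovariance definition and using linearity of expectation. A short sketch (the other three cases follow the same pattern):

$$
\begin{aligned}
C_X(t,\tau) &= E\{[X(t) - \mu_X(t)][X^*(t+\tau) - \mu_X^*(t+\tau)]\} \\
&= E[X(t)X^*(t+\tau)] - \mu_X(t)\mu_X^*(t+\tau) - \mu_X(t)\mu_X^*(t+\tau) + \mu_X(t)\mu_X^*(t+\tau) \\
&= R_X(t,\tau) - \mu_X(t)\,\mu_X^*(t+\tau).
\end{aligned}
$$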

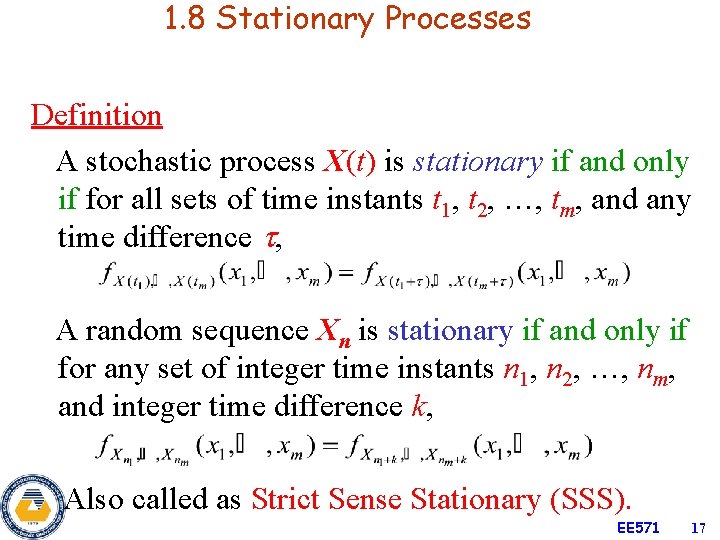

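1.8 Stationary Processes

Definition: A stochastic process X(t) is stationary if and only if for all sets of time instants $t_1, t_2, \ldots, t_m$ and any time difference $\tau$,

$f_{X(t_1), \ldots, X(t_m)}(x_1, \ldots, x_m) = f_{X(t_1+\tau), \ldots, X(t_m+\tau)}(x_1, \ldots, x_m)$.

A random sequence $X_n$ is stationary if and only if for any set of integer time instants $n_1, n_2, \ldots, n_m$ and integer time difference k,

$f_{X_{n_1}, \ldots, X_{n_m}}(x_1, \ldots, x_m) = f_{X_{n_1+k}, \ldots, X_{n_m+k}}(x_1, \ldots, x_m)$.

This property is also called strict sense stationarity (SSS).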

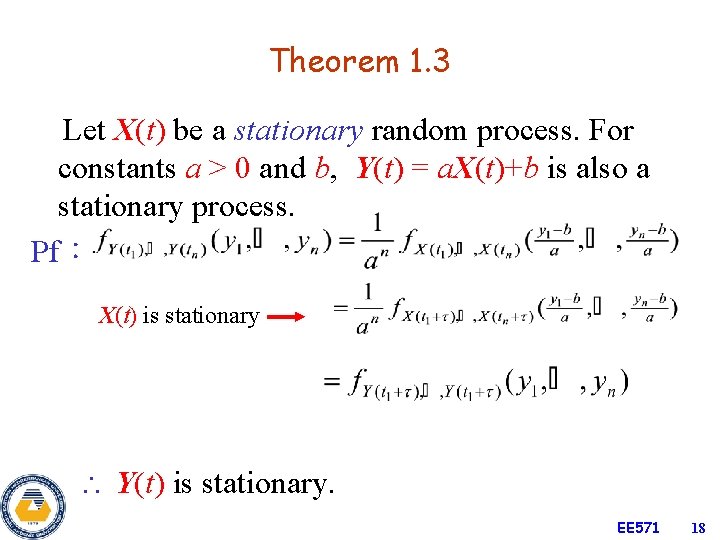

Theorem 1.3

Let X(t) be a stationary random process. For constants a > 0 and b, $Y(t) = aX(t) + b$ is also a stationary process.

Pf: X(t) is stationary $\Rightarrow$ Y(t) is stationary.

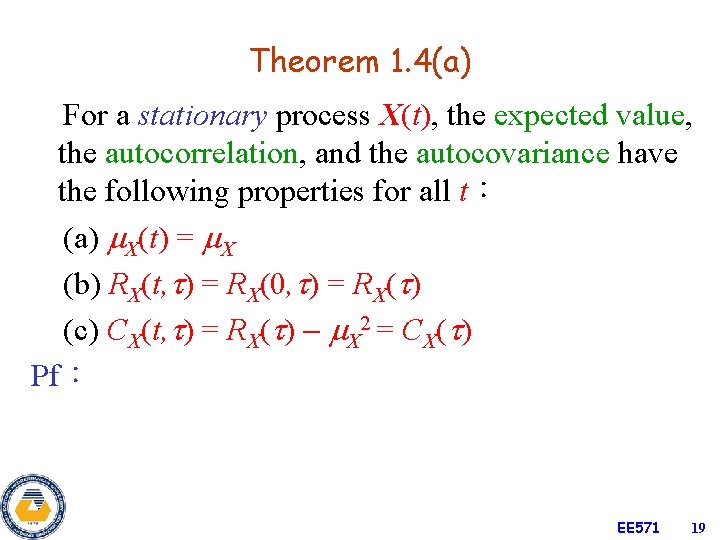

Theorem 1.4(a)

For a stationary process X(t), the expected value, the autocorrelation, and the autocovariance have the following properties for all t:

(a) $\mu_X(t) = \mu_X$

(b) $R_X(t, \tau) = R_X(0, \tau) = R_X(\tau)$

(c) $C_X(t, \tau) = R_X(\tau) - \mu_X^2 = C_X(\tau)$

Pf:

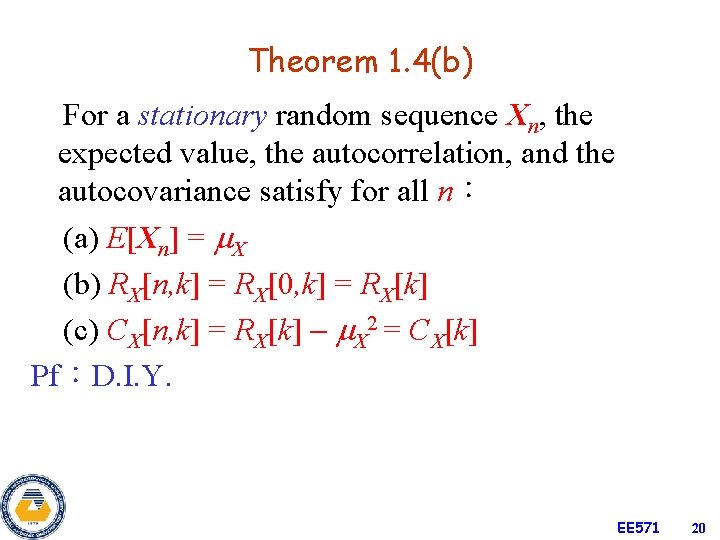

Theorem 1.4(b)

For a stationary random sequence $X_n$, the expected value, the autocorrelation, and the autocovariance satisfy for all n:

(a) $E[X_n] = \mu_X$

(b) $R_X[n, k] = R_X[0, k] = R_X[k]$

(c) $C_X[n, k] = R_X[k] - \mu_X^2 = C_X[k]$

Pf: D.I.Y.

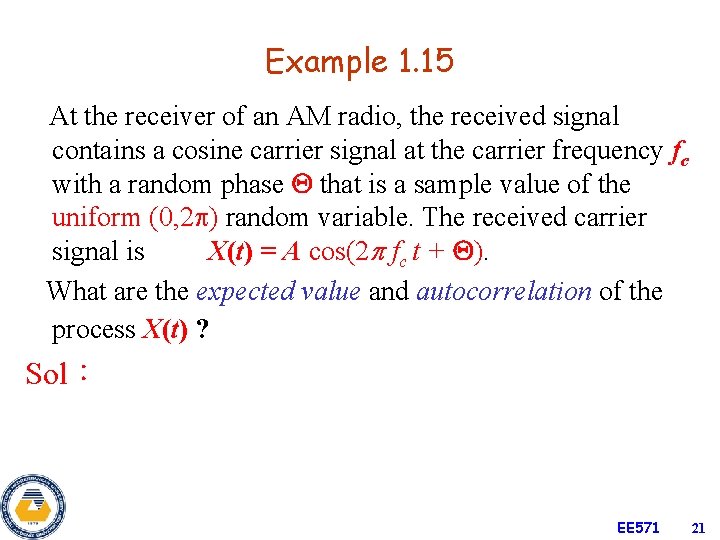

Example 1.15

At the receiver of an AM radio, the received signal contains a cosine carrier signal at the carrier frequency $f_c$ with a random phase $\Theta$ that is a sample value of the uniform $(0, 2\pi)$ random variable. The received carrier signal is $X(t) = A\cos(2\pi f_c t + \Theta)$. What are the expected value and autocorrelation of the process X(t)?

Sol:
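The closed-form answer to Example 1.15 is $E[X(t)] = 0$ and $R_X(t, \tau) = \tfrac{A^2}{2}\cos(2\pi f_c \tau)$. The Python sketch below checks this by averaging over a uniform grid of phase values, which stands in for the expectation over $\Theta$. The amplitude, carrier frequency, and grid size are illustrative values chosen here, not values from the slides.

```python
import math


def phase_average(g, n_phase=360):
    """Average g(theta) over a uniform midpoint grid on (0, 2*pi)."""
    step = 2.0 * math.pi / n_phase
    return sum(g((i + 0.5) * step) for i in range(n_phase)) / n_phase


def carrier(t, theta, amplitude, fc):
    """X(t) = A cos(2 pi fc t + theta) for one fixed phase value."""
    return amplitude * math.cos(2.0 * math.pi * fc * t + theta)


def mean_and_autocorrelation(t, tau, amplitude=2.0, fc=3.0):
    """Return (E[X(t)], E[X(t) X(t + tau)]) averaged over the phase."""
    mean = phase_average(lambda th: carrier(t, th, amplitude, fc))
    corr = phase_average(
        lambda th: carrier(t, th, amplitude, fc)
        * carrier(t + tau, th, amplitude, fc)
    )
    return mean, corr


closed_form = 0.5 * 2.0 ** 2 * math.cos(2.0 * math.pi * 3.0 * 0.1)
for t in (0.0, 0.37, 1.9):
    mean, corr = mean_and_autocorrelation(t, tau=0.1)
    print(f"t={t}: mean={mean:.6f}, R_X={corr:.6f}, closed form={closed_form:.6f}")
```

The mean and the autocorrelation come out the same for every t tried, which is the behaviour the wide sense stationary definition asks for.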

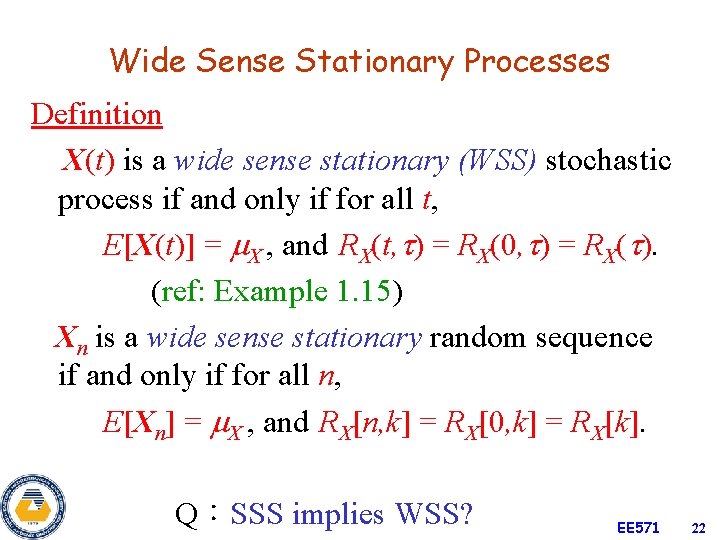

Wide Sense Stationary Processes

Definition: X(t) is a wide sense stationary (WSS) stochastic process if and only if for all t, $E[X(t)] = \mu_X$ and $R_X(t, \tau) = R_X(0, \tau) = R_X(\tau)$ (ref: Example 1.15).

$X_n$ is a wide sense stationary random sequence if and only if for all n, $E[X_n] = \mu_X$ and $R_X[n, k] = R_X[0, k] = R_X[k]$.

Q: Does SSS imply WSS?

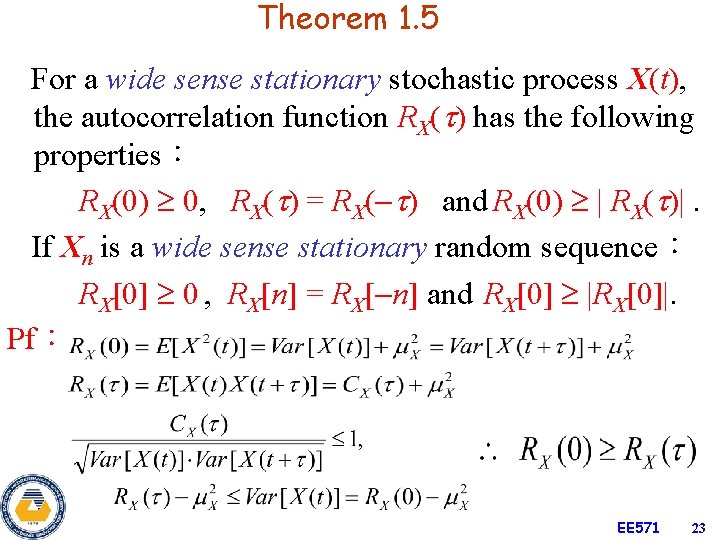

Theorem 1.5

For a wide sense stationary stochastic process X(t), the autocorrelation function $R_X(\tau)$ has the following properties: $R_X(0) \ge 0$, $R_X(\tau) = R_X(-\tau)$, and $R_X(0) \ge |R_X(\tau)|$.

If $X_n$ is a wide sense stationary random sequence: $R_X[0] \ge 0$, $R_X[n] = R_X[-n]$, and $R_X[0] \ge |R_X[n]|$.

Pf:
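One standard way to argue the three properties of Theorem 1.5 for a real-valued WSS process is sketched below; it may differ in detail from the proof on the slide image.

$$
\begin{aligned}
R_X(0) &= E[X^2(t)] \ge 0, \\
R_X(-\tau) &= E[X(t)X(t-\tau)] = E[X(u+\tau)X(u)] = R_X(\tau), \qquad u = t - \tau, \\
0 &\le E\big[(X(t) \pm X(t+\tau))^2\big] = 2R_X(0) \pm 2R_X(\tau) \;\Rightarrow\; |R_X(\tau)| \le R_X(0).
\end{aligned}
$$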

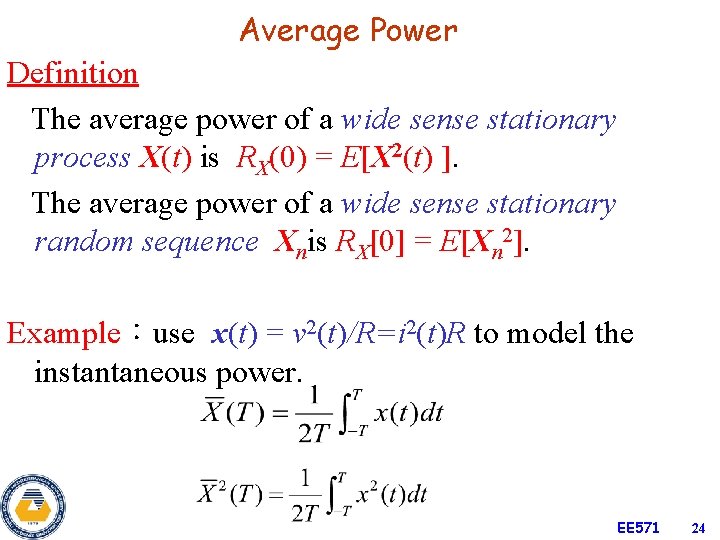

Average Power

Definition: The average power of a wide sense stationary process X(t) is $R_X(0) = E[X^2(t)]$. The average power of a wide sense stationary random sequence $X_n$ is $R_X[0] = E[X_n^2]$.

Example: use $x(t) = v^2(t)/R = i^2(t)R$ to model the instantaneous power.

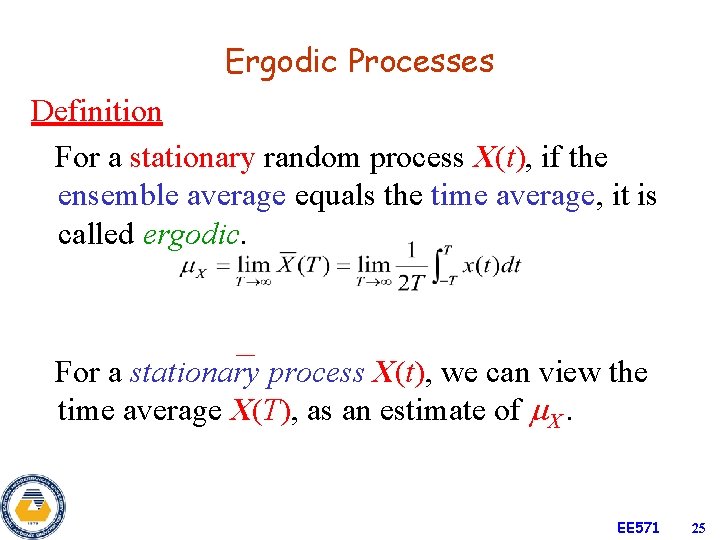

Ergodic Processes

Definition: A stationary random process X(t) is called ergodic if its ensemble average equals its time average. For a stationary process X(t), we can view the time average $\overline{X}(T)$ as an estimate of $\mu_X$.
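To make the time average concrete, the Python sketch below assumes the common definition $\overline{X}(T) = \frac{1}{2T}\int_{-T}^{T} X(t)\,dt$ (the extracted slide text does not show the formula) and evaluates it on one sample path of a random-phase cosine, whose ensemble mean is 0. The sample path parameters are illustrative choices made here.

```python
import math


def time_average(x, T, n_steps=20000):
    """Midpoint-rule approximation of (1 / 2T) * integral of x(t) over [-T, T]."""
    dt = 2.0 * T / n_steps
    total = sum(x(-T + (i + 0.5) * dt) for i in range(n_steps))
    return total * dt / (2.0 * T)


def sample_path(t, theta=1.0, amplitude=2.0, fc=3.0):
    """One realization of A cos(2 pi fc t + theta) with the phase held fixed."""
    return amplitude * math.cos(2.0 * math.pi * fc * t + theta)


for T in (1.3, 13.0, 130.0):
    print(T, time_average(sample_path, T))
```

For this path the exact time average is $A\cos\theta\,\sin(2\pi f_c T)/(2\pi f_c T)$, so its magnitude is bounded by $A/(2\pi f_c T)$ and shrinks toward the ensemble mean as T grows.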

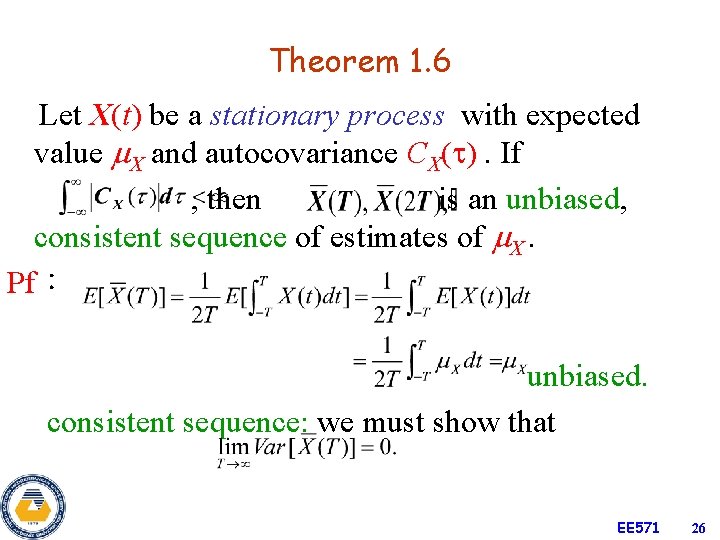

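Theorem 1.6

Let X(t) be a stationary process with expected value $\mu_X$ and autocovariance $C_X(\tau)$. If $\int_{-\infty}^{\infty} |C_X(\tau)|\,d\tau < \infty$, then $\overline{X}(T), \overline{X}(2T), \ldots$ is an unbiased, consistent sequence of estimates of $\mu_X$.

Pf: Unbiased: $E[\overline{X}(T)] = \mu_X$. Consistent sequence: we must show that $\lim_{T \to \infty} \mathrm{Var}[\overline{X}(T)] = 0$.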

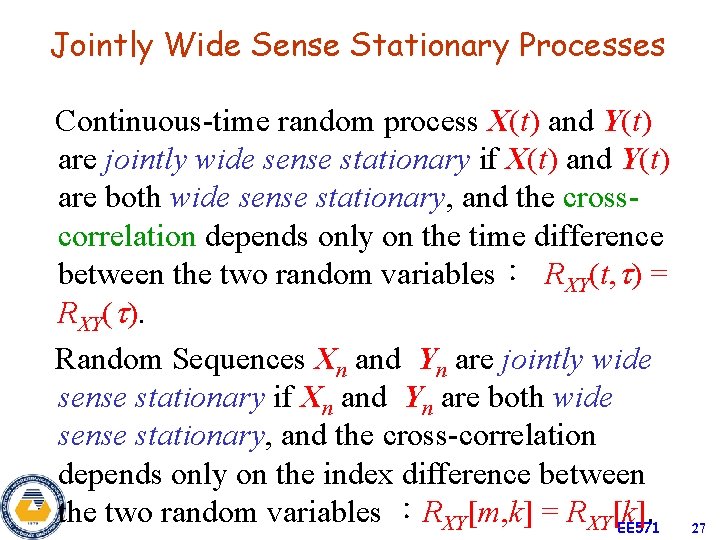

Jointly Wide Sense Stationary Processes

Continuous-time random processes X(t) and Y(t) are jointly wide sense stationary if X(t) and Y(t) are both wide sense stationary, and the cross-correlation depends only on the time difference between the two random variables: $R_{XY}(t, \tau) = R_{XY}(\tau)$.

Random sequences $X_n$ and $Y_n$ are jointly wide sense stationary if $X_n$ and $Y_n$ are both wide sense stationary, and the cross-correlation depends only on the index difference between the two random variables: $R_{XY}[m, k] = R_{XY}[k]$.

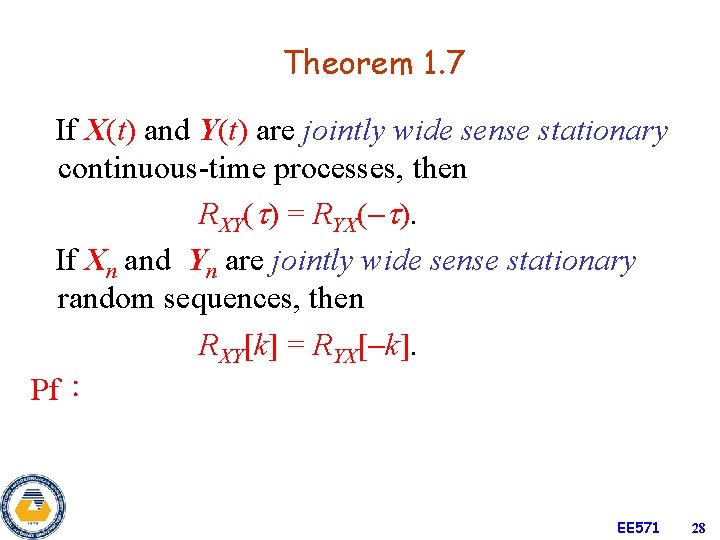

Theorem 1.7

If X(t) and Y(t) are jointly wide sense stationary continuous-time processes, then $R_{XY}(\tau) = R_{YX}(-\tau)$.

If $X_n$ and $Y_n$ are jointly wide sense stationary random sequences, then $R_{XY}[k] = R_{YX}[-k]$.

Pf:
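For real-valued processes, the continuous-time part of Theorem 1.7 can be sketched in one line by swapping the order of the product and relabeling the time variable (the sequence case is identical with k in place of $\tau$):

$$
R_{XY}(\tau) = E[X(t)Y(t+\tau)] = E[Y(u)X(u-\tau)] = R_{YX}(-\tau), \qquad u = t + \tau .
$$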

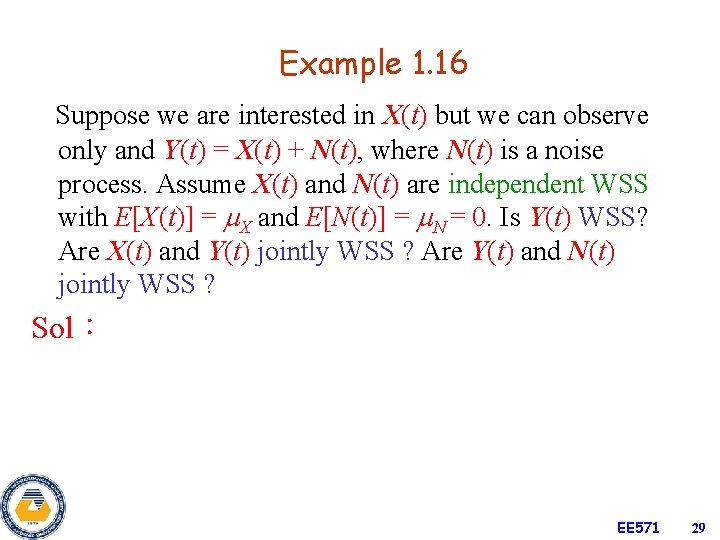

Example 1.16

Suppose we are interested in X(t) but we can observe only $Y(t) = X(t) + N(t)$, where N(t) is a noise process. Assume X(t) and N(t) are independent WSS processes with $E[X(t)] = \mu_X$ and $E[N(t)] = \mu_N = 0$. Is Y(t) WSS? Are X(t) and Y(t) jointly WSS? Are Y(t) and N(t) jointly WSS?

Sol:
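A sketch of the calculation behind Example 1.16, assuming real-valued processes. Independence together with $\mu_N = 0$ makes every cross term between X and N vanish:

$$
\begin{aligned}
E[Y(t)] &= \mu_X + \mu_N = \mu_X, \\
R_Y(t,\tau) &= E\big[(X(t)+N(t))(X(t+\tau)+N(t+\tau))\big] = R_X(\tau) + R_N(\tau), \\
R_{XY}(t,\tau) &= E\big[X(t)(X(t+\tau)+N(t+\tau))\big] = R_X(\tau), \\
R_{YN}(t,\tau) &= E\big[(X(t)+N(t))N(t+\tau)\big] = R_N(\tau).
\end{aligned}
$$

None of these depends on t, so under these assumptions Y(t) is WSS, X(t) and Y(t) are jointly WSS, and Y(t) and N(t) are jointly WSS.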

![Example 1.17](http://slidetodoc.com/presentation_image_h/0cc211f484aa81493d5b10cefd6e094c/image-30.jpg)

Example 1.17

$X_n$ is a WSS random sequence with autocorrelation $R_X[k]$. The random sequence $Y_n$ is obtained from $X_n$ by reversing the sign of every other random variable in $X_n$: $Y_n = (-1)^n X_n$.

(a) Find $R_Y[n, k]$ in terms of $R_X[k]$.

(b) Find $R_{XY}[n, k]$ in terms of $R_X[k]$.

(c) Is $Y_n$ WSS?

(d) Are $X_n$ and $Y_n$ jointly WSS?

Sol:
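For Example 1.17, pulling the deterministic signs out of the expectations gives $R_Y[n, k] = (-1)^{2n+k} R_X[k] = (-1)^k R_X[k]$ and $R_{XY}[n, k] = (-1)^{n+k} R_X[k]$. The Python sketch below tabulates both for a made-up autocorrelation `r_x` (a hypothetical choice for illustration only) to show which one depends on n.

```python
def sign(n):
    """(-1)^n for any integer n, including negative n."""
    return 1 if n % 2 == 0 else -1


def r_x(k):
    """Hypothetical WSS autocorrelation R_X[k], chosen only for illustration."""
    return 0.5 ** abs(k)


def r_y(n, k):
    """R_Y[n, k] = E[Y_n Y_{n+k}] with Y_n = (-1)^n X_n."""
    return sign(n) * sign(n + k) * r_x(k)


def r_xy(n, k):
    """R_XY[n, k] = E[X_n Y_{n+k}]; only Y carries a sign factor."""
    return sign(n + k) * r_x(k)


for n in (0, 1, 2):
    print(n, [r_y(n, k) for k in range(3)], [r_xy(n, k) for k in range(3)])
```

Here $R_Y[n, k]$ is the same for every n, while $R_{XY}[n, k]$ flips sign with n, so $X_n$ and $Y_n$ are not jointly WSS. Note that $E[Y_n] = (-1)^n \mu_X$, so $Y_n$ is WSS only when $\mu_X = 0$, a condition the extracted slide text does not state explicitly.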
