Part 4c: Baum-Welch Algorithm
CSE 717, Spring 2008, CUBS, University at Buffalo

Review of Last Class
- Production probability: forward-backward algorithm (dynamic programming)
- Decoding problem: Viterbi algorithm (dynamic programming)

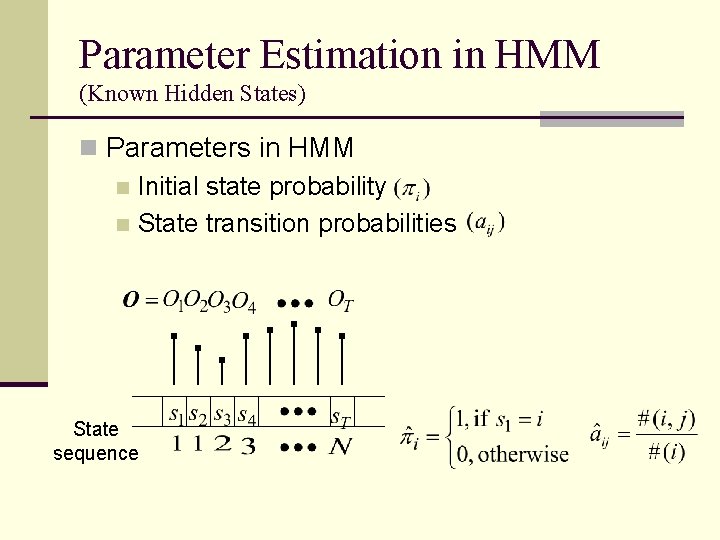
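As a refresher, the two dynamic-programming recursions reviewed above can be sketched for a discrete HMM. This is a minimal, unscaled sketch (usable only for short sequences) that assumes observations are integer symbol indices and that the model is given as plain lists `pi`, `A`, `B`; the function names `forward` and `viterbi` are our own.

```python
def forward(pi, A, B, obs):
    """Production probability P(O | lambda) via the forward algorithm.

    pi[i]   : initial state probability
    A[i][j] : transition probability from state i to state j
    B[j][k] : probability of emitting symbol k in state j
    obs     : list of symbol indices o_1 .. o_T
    """
    n = len(pi)
    # Initialization: alpha_1(i) = pi_i * b_i(o_1)
    alpha = [pi[i] * B[i][obs[0]] for i in range(n)]
    # Induction: alpha_{t+1}(j) = [sum_i alpha_t(i) * a_ij] * b_j(o_{t+1})
    for o in obs[1:]:
        alpha = [sum(alpha[i] * A[i][j] for i in range(n)) * B[j][o]
                 for j in range(n)]
    # Termination: sum over all final states
    return sum(alpha)


def viterbi(pi, A, B, obs):
    """Most probable state path and its joint probability with O."""
    n = len(pi)
    delta = [pi[i] * B[i][obs[0]] for i in range(n)]
    backptrs = []
    for o in obs[1:]:
        # For each target state j, remember the best predecessor i.
        best = [max(range(n), key=lambda i: delta[i] * A[i][j]) for j in range(n)]
        delta = [delta[best[j]] * A[best[j]][j] * B[j][o] for j in range(n)]
        backptrs.append(best)
    # Backtrack from the most probable final state.
    state = max(range(n), key=lambda i: delta[i])
    prob = delta[state]
    path = [state]
    for best in reversed(backptrs):
        state = best[state]
        path.append(state)
    return path[::-1], prob
```

For example, with `pi = [0.6, 0.4]`, `A = [[0.7, 0.3], [0.4, 0.6]]`, `B = [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]]` and `obs = [0, 1, 2]`, `forward` gives approximately 0.03628 and `viterbi` returns the path `[0, 0, 1]` with probability approximately 0.01512. The two recursions differ only in replacing the sum over predecessors with a max.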

Parameter Estimation in HMM (Known Hidden States)
- Parameters in HMM:
  - Initial state probability
  - State transition probabilities
- State sequence (known)

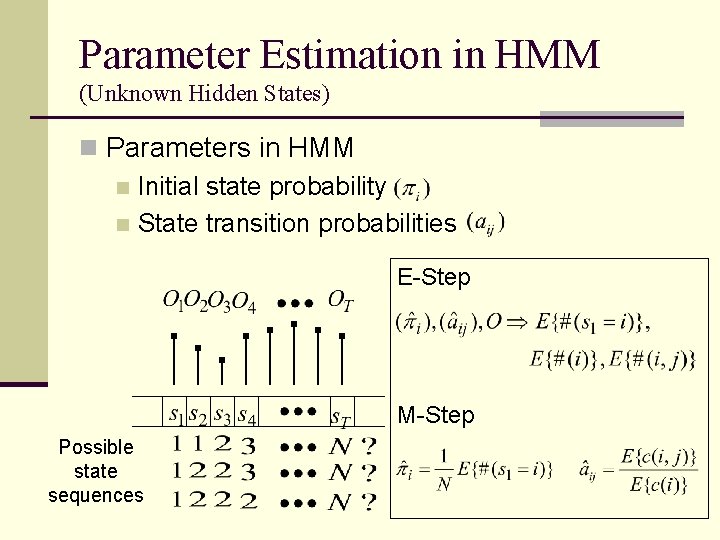

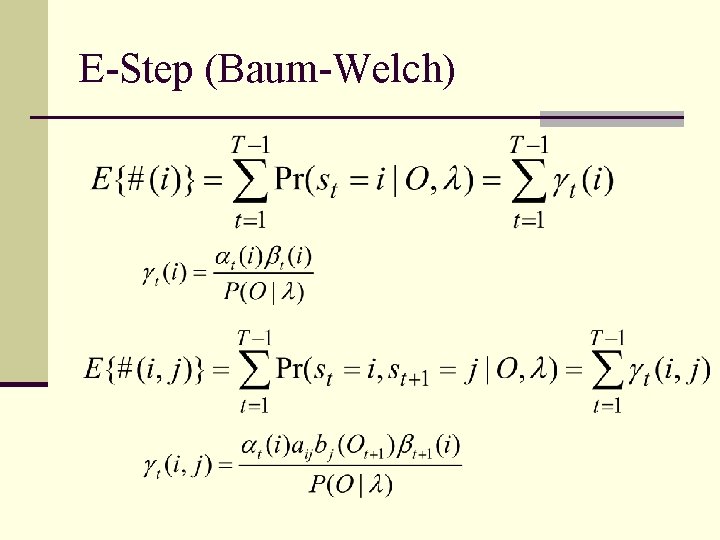

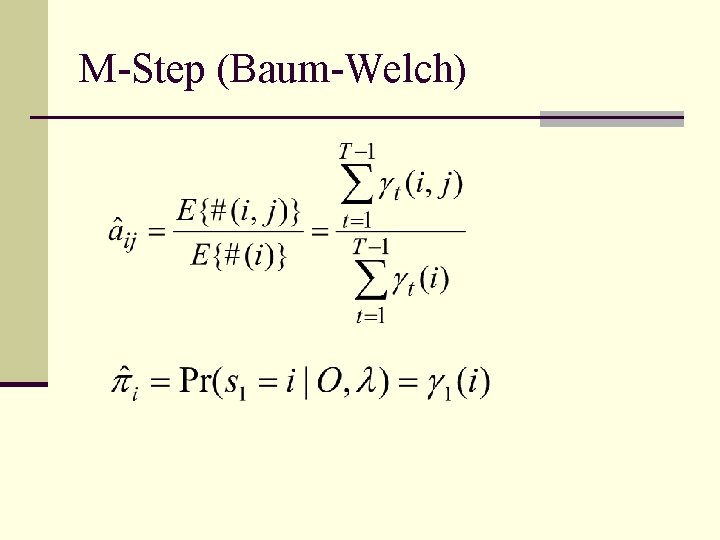
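When the state sequences are known, maximum-likelihood estimation reduces to counting relative frequencies. The sketch below assumes discrete observations and integer state/symbol indices; `estimate_known_states` is a hypothetical helper name, and it also estimates the discrete emission probabilities `B` for completeness.

```python
from collections import Counter


def estimate_known_states(state_seqs, obs_seqs, n_states, n_symbols):
    """Relative-frequency (maximum-likelihood) estimates of pi, A and B
    from labeled training data.

    Assumes every state occurs at least once and has at least one outgoing
    transition; otherwise a ZeroDivisionError is raised.
    """
    starts = Counter(seq[0] for seq in state_seqs)  # first state of each sequence
    trans = Counter()
    emits = Counter()
    for states, obs in zip(state_seqs, obs_seqs):
        trans.update(zip(states[:-1], states[1:]))  # count i -> j transitions
        emits.update(zip(states, obs))              # count (state, symbol) pairs
    pi = [starts[i] / len(state_seqs) for i in range(n_states)]
    A = []
    for i in range(n_states):
        out_i = sum(trans[(i, j)] for j in range(n_states))
        A.append([trans[(i, j)] / out_i for j in range(n_states)])
    B = []
    for j in range(n_states):
        in_j = sum(emits[(j, k)] for k in range(n_symbols))
        B.append([emits[(j, k)] / in_j for k in range(n_symbols)])
    return pi, A, B
```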

Parameter Estimation in HMM (Unknown Hidden States)
- Parameters in HMM:
  - Initial state probability
  - State transition probabilities
- The parameters are estimated iteratively with an E-Step and an M-Step, taking all possible state sequences into account

E-Step (Baum-Welch)
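The E-step computes, with the forward-backward algorithm, the state posteriors gamma_t(i) = P(q_t = i | O, lambda) and the transition posteriors xi_t(i, j) = P(q_t = i, q_{t+1} = j | O, lambda). This is a minimal sketch for a discrete HMM; it omits the scaling (or log-space arithmetic) that long sequences require, and the names `e_step`, `gamma` and `xi` are our own notation rather than the slides'.

```python
def e_step(pi, A, B, obs):
    """Baum-Welch E-step for a discrete HMM (unscaled, short sequences only).

    Returns (gamma, xi, prob):
      gamma[t][i] = P(q_t = i | O, lambda)
      xi[t][i][j] = P(q_t = i, q_{t+1} = j | O, lambda), for t = 0 .. T-2
      prob        = P(O | lambda)
    """
    n, T = len(pi), len(obs)
    # Forward pass: alpha[t][i] = P(o_1 .. o_t, q_t = i | lambda)
    alpha = [[pi[i] * B[i][obs[0]] for i in range(n)]]
    for t in range(1, T):
        alpha.append([sum(alpha[t - 1][i] * A[i][j] for i in range(n)) * B[j][obs[t]]
                      for j in range(n)])
    # Backward pass: beta[t][i] = P(o_{t+1} .. o_T | q_t = i, lambda)
    beta = [[1.0] * n for _ in range(T)]
    for t in range(T - 2, -1, -1):
        beta[t] = [sum(A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j] for j in range(n))
                   for i in range(n)]
    prob = sum(alpha[T - 1])
    gamma = [[alpha[t][i] * beta[t][i] / prob for i in range(n)] for t in range(T)]
    xi = [[[alpha[t][i] * A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j] / prob
            for j in range(n)] for i in range(n)] for t in range(T - 1)]
    return gamma, xi, prob
```

Two consistency checks follow directly from the definitions: every row of `gamma` sums to 1, and summing `xi[t][i][j]` over `j` recovers `gamma[t][i]`.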

M-Step (Baum-Welch)

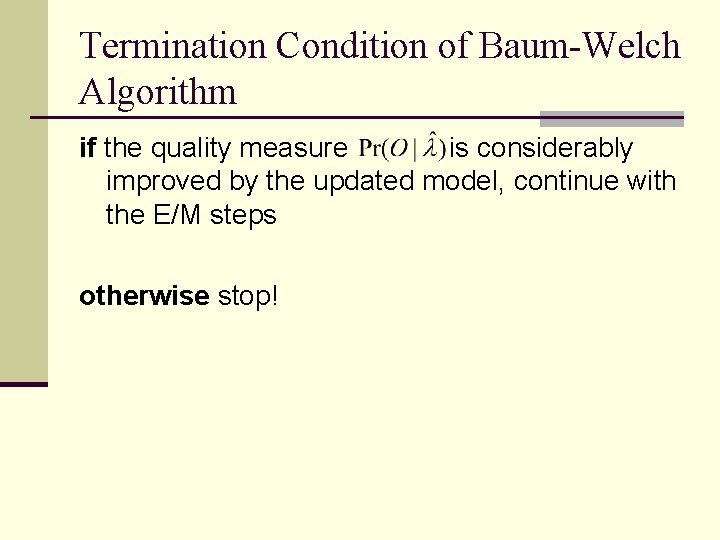
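Given the E-step statistics, the M-step re-estimates the initial and transition probabilities as ratios of expected counts. The sketch assumes `gamma` and `xi` are laid out as `gamma[t][i]` and `xi[t][i][j]` (with `t` running over the first T-1 frames for `xi`); `m_step_transitions` is a hypothetical name.

```python
def m_step_transitions(gamma, xi):
    """Re-estimate pi and A from E-step statistics.

    pi_i = gamma_1(i)
    a_ij = sum_{t=1}^{T-1} xi_t(i, j) / sum_{t=1}^{T-1} gamma_t(i)
    """
    n = len(gamma[0])
    pi = list(gamma[0])  # expected occupancy of state i at t = 1
    A = []
    for i in range(n):
        # Expected number of transitions out of state i (frames 1 .. T-1).
        denom = sum(gamma[t][i] for t in range(len(xi)))
        A.append([sum(x[i][j] for x in xi) / denom for j in range(n)])
    return pi, A
```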

Termination Condition of Baum-Welch Algorithm
- If the quality measure (e.g., the production probability P(O | λ)) is considerably improved by the updated model, continue with the E/M steps; otherwise, stop.

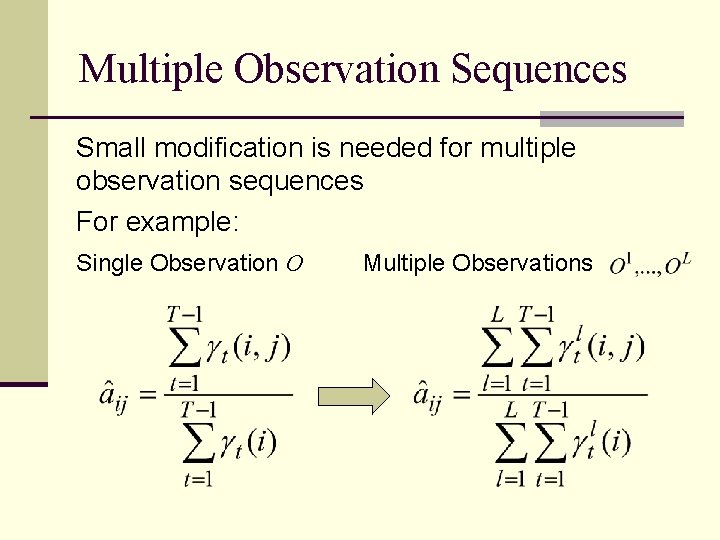
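One way to make "considerably improved" concrete is an absolute threshold on the gain in the log production probability between iterations. The driver below is a generic sketch: `update` stands for one E-step plus M-step, `log_likelihood` for the quality measure, and both names, like the default `tol=1e-6`, are assumptions for illustration.

```python
def run_em(params, update, log_likelihood, tol=1e-6, max_iter=100):
    """Repeat E/M updates while the quality measure improves considerably.

    params         : initial model (any object)
    update         : callable doing one E-step + M-step, params -> new params
    log_likelihood : quality measure, e.g. log P(O | lambda), params -> float
    tol            : smallest improvement still counted as "considerable"
    Returns (params, score, iterations_run).
    """
    score = log_likelihood(params)
    for iteration in range(1, max_iter + 1):
        new_params = update(params)
        new_score = log_likelihood(new_params)
        if new_score - score < tol:
            # Improvement too small (or negative): stop and keep the better model.
            if new_score > score:
                return new_params, new_score, iteration
            return params, score, iteration
        params, score = new_params, new_score
    return params, score, max_iter
```

As a toy check, `run_em(0.0, lambda x: x + 0.5 * (3.0 - x), lambda x: -(x - 3.0) ** 2)` stops after a few iterations close to 3. For an HMM, `log_likelihood` could reuse P(O | λ) from the forward pass, since the E-step computes it anyway.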

Multiple Observation Sequences
- Only a small modification is needed to handle multiple observation sequences
- For example: a single observation sequence O vs. multiple observation sequences
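For the transition update, the modification amounts to summing the expected counts over all sequences before dividing. The formulas below assume K independent sequences, with γ^k and ξ^k computed by the E-step on sequence O^k alone (each normalized by its own P(O^k | λ)); the notation is ours, not taken from the slide image.

```latex
% Single observation sequence O = o_1 ... o_T
\bar{a}_{ij} = \frac{\sum_{t=1}^{T-1} \xi_t(i,j)}{\sum_{t=1}^{T-1} \gamma_t(i)}

% K independent observation sequences O^1, ..., O^K of lengths T_1, ..., T_K
\bar{a}_{ij} = \frac{\sum_{k=1}^{K} \sum_{t=1}^{T_k-1} \xi^{k}_t(i,j)}
                    {\sum_{k=1}^{K} \sum_{t=1}^{T_k-1} \gamma^{k}_t(i)},
\qquad
\bar{\pi}_i = \frac{1}{K} \sum_{k=1}^{K} \gamma^{k}_1(i)
```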

Updating Observation Likelihood (Discrete HMM: the observation likelihood is represented non-parametrically)

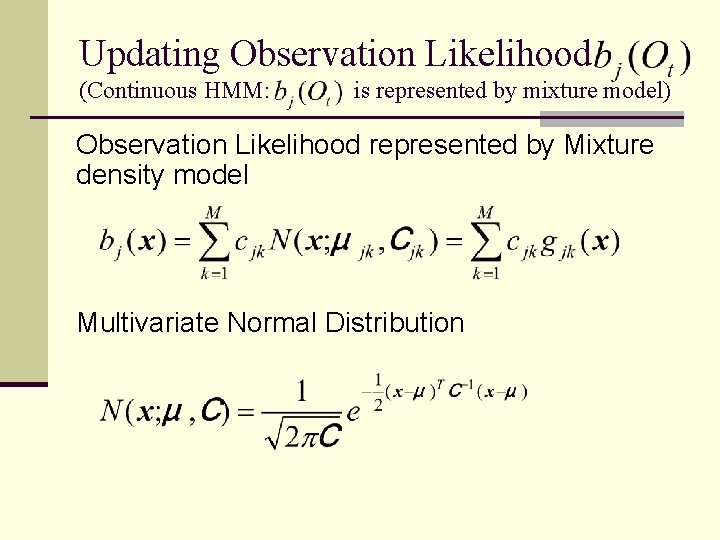
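In the discrete case, each b_j(k) is re-estimated as the expected number of times state j emits symbol k, divided by the expected number of times the model is in state j. A minimal sketch, assuming `gamma[t][j]` from the E-step and integer symbol indices; `update_discrete_emissions` is a hypothetical name.

```python
def update_discrete_emissions(gamma, obs, n_symbols):
    """b_j(k) = sum_{t : o_t = k} gamma_t(j) / sum_t gamma_t(j)"""
    n = len(gamma[0])
    B = []
    for j in range(n):
        total = sum(g[j] for g in gamma)  # expected occupancy of state j
        counts = [0.0] * n_symbols
        for g, o in zip(gamma, obs):
            counts[o] += g[j]  # expected number of times symbol o is emitted in state j
        B.append([c / total for c in counts])
    return B
```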

Updating Observation Likelihood (Continuous HMM: the observation likelihood is represented by a mixture model)
- Observation likelihood represented by a mixture density model
- Mixture components: multivariate normal distributions

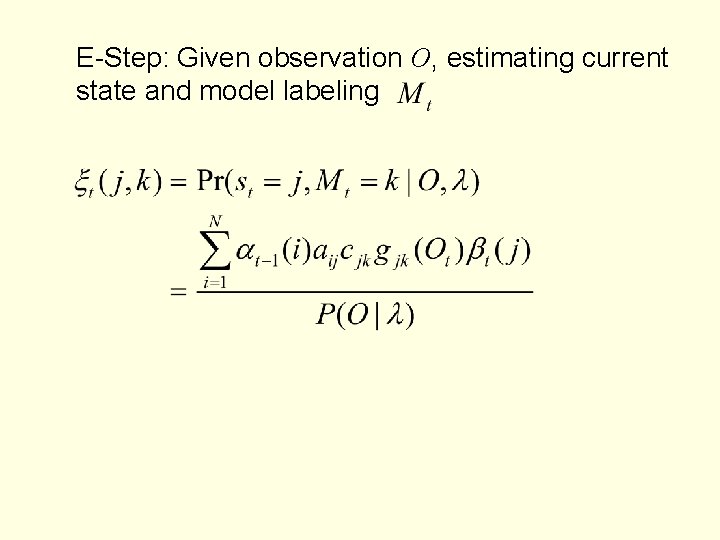
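Written out, the mixture observation likelihood of state j with multivariate normal components takes the standard form below. The symbols c_jk (mixture weights), μ_jk, Σ_jk, M_j (number of components) and d (feature dimension) are our notation, assumed rather than copied from the slide image.

```latex
b_j(\mathbf{x}) = \sum_{k=1}^{M_j} c_{jk}\,
  \mathcal{N}\!\left(\mathbf{x} \mid \boldsymbol{\mu}_{jk}, \boldsymbol{\Sigma}_{jk}\right),
\qquad c_{jk} \ge 0,\quad \sum_{k=1}^{M_j} c_{jk} = 1

\mathcal{N}\!\left(\mathbf{x} \mid \boldsymbol{\mu}, \boldsymbol{\Sigma}\right)
  = \frac{1}{\sqrt{(2\pi)^{d}\,\lvert\boldsymbol{\Sigma}\rvert}}
    \exp\!\left(-\tfrac{1}{2}(\mathbf{x}-\boldsymbol{\mu})^{\top}
    \boldsymbol{\Sigma}^{-1}(\mathbf{x}-\boldsymbol{\mu})\right)
```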

E-Step: Given observation O, estimating the current state and the mixture-component labeling

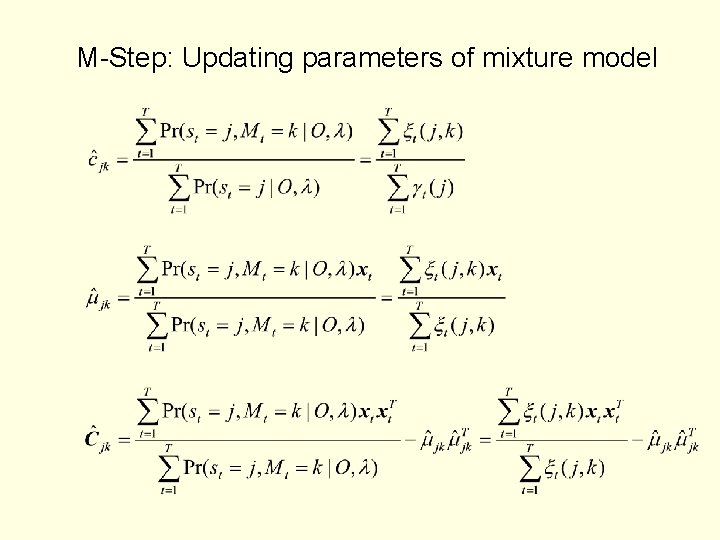
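The E-step splits each state posterior gamma_t(j) among the mixture components in proportion to their weighted densities: gamma_t(j, k) = gamma_t(j) c_jk N(o_t; μ_jk, Σ_jk) / b_j(o_t). To stay dependency-free, this sketch assumes diagonal covariance matrices (a full-covariance version needs a determinant and an inverse); `diag_gauss` and `component_posteriors` are hypothetical names.

```python
import math


def diag_gauss(x, mean, var):
    """Multivariate normal density with a diagonal covariance given by `var`."""
    log_p = 0.0
    for xd, m, v in zip(x, mean, var):
        log_p += -0.5 * (math.log(2 * math.pi * v) + (xd - m) ** 2 / v)
    return math.exp(log_p)


def component_posteriors(gamma, obs, weights, means, variances):
    """gamma_t(j, k) = gamma_t(j) * c_jk * N(o_t; mu_jk, C_jk) / b_j(o_t)

    gamma[t][j]     : state posterior from the forward-backward pass
    weights[j][k]   : mixture weight c_jk
    means[j][k]     : mean vector of component k in state j
    variances[j][k] : diagonal of that component's covariance matrix
    Returns result[t][j][k].
    """
    result = []
    for g_t, o in zip(gamma, obs):
        row = []
        for j, g in enumerate(g_t):
            dens = [c * diag_gauss(o, mu, var)
                    for c, mu, var in zip(weights[j], means[j], variances[j])]
            b_j = sum(dens)  # mixture likelihood b_j(o_t)
            row.append([g * d / b_j for d in dens])
        result.append(row)
    return result
```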

M-Step: Updating the parameters of the mixture model

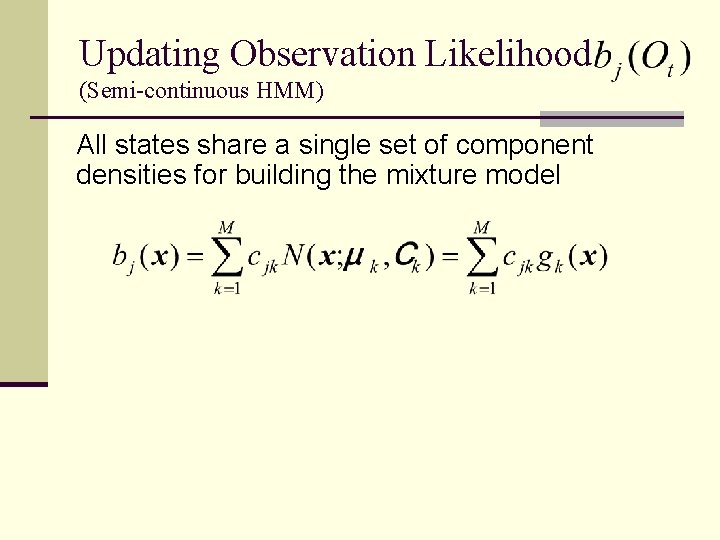
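The M-step then treats gamma_t(j, k) as soft counts: weights become normalized component occupancies, and means and variances become posterior-weighted averages. This sketch again assumes diagonal covariances; `update_mixtures` is a hypothetical name, and a component with zero occupancy raises a ZeroDivisionError (practical systems often add a variance floor or prune such components).

```python
def update_mixtures(gamma_jk, obs):
    """Re-estimate mixture weights, means and diagonal variances.

    gamma_jk[t][j][k] : posterior of state j and component k at time t
    obs[t]            : observation vector o_t
    Returns (weights, means, variances), each indexed [j][k].
    """
    n_states = len(gamma_jk[0])
    dim = len(obs[0])
    weights, means, variances = [], [], []
    for j in range(n_states):
        n_comp = len(gamma_jk[0][j])
        # Expected occupancy of each component; their sum equals sum_t gamma_t(j).
        occ = [sum(g[j][k] for g in gamma_jk) for k in range(n_comp)]
        state_occ = sum(occ)
        w_j, mu_j, var_j = [], [], []
        for k in range(n_comp):
            mu = [sum(g[j][k] * o[d] for g, o in zip(gamma_jk, obs)) / occ[k]
                  for d in range(dim)]
            var = [sum(g[j][k] * (o[d] - mu[d]) ** 2 for g, o in zip(gamma_jk, obs)) / occ[k]
                   for d in range(dim)]
            w_j.append(occ[k] / state_occ)
            mu_j.append(mu)
            var_j.append(var)
        weights.append(w_j)
        means.append(mu_j)
        variances.append(var_j)
    return weights, means, variances
```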

Updating Observation Likelihood (Semi-continuous HMM)
- All states share a single set of component densities for building the mixture model

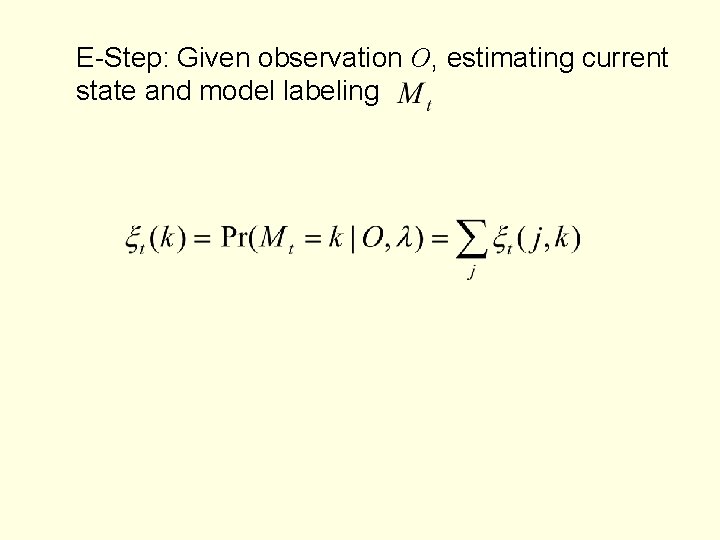
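Compared with the continuous case, only the mixture weights remain state-specific, so μ and Σ lose the state index. In our assumed notation (M shared components):

```latex
b_j(\mathbf{x}) = \sum_{k=1}^{M} c_{jk}\,
  \mathcal{N}\!\left(\mathbf{x} \mid \boldsymbol{\mu}_{k}, \boldsymbol{\Sigma}_{k}\right),
\qquad c_{jk} \ge 0,\quad \sum_{k=1}^{M} c_{jk} = 1
```

A practical consequence is that the M densities N(o_t | μ_k, Σ_k) need to be evaluated only once per frame and can then be reused by every state.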

E-Step: Given observation O, estimating the current state and the mixture-component labeling

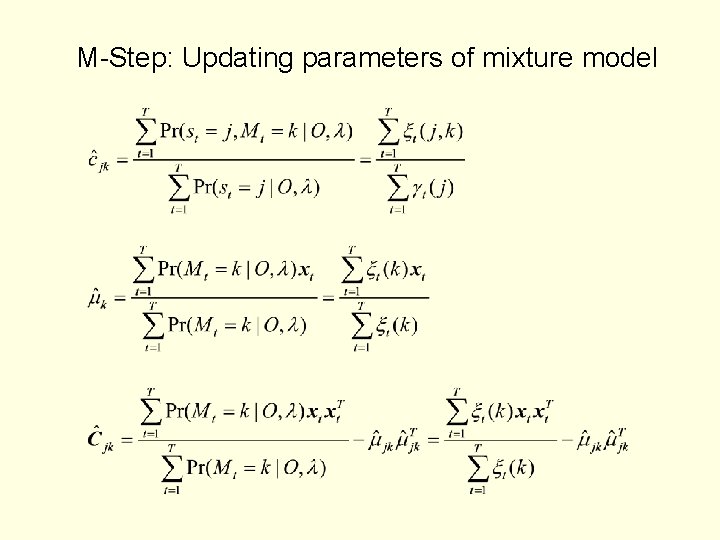
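The component posterior has the same shape as in the continuous case, with the shared densities in place of state-specific ones. Because the components are shared, it is also useful to pool the posterior of component k over all states; the symbol ζ_t(k) for this pooled quantity is our own, hypothetical notation.

```latex
\gamma_t(j,k) = \gamma_t(j)\,
  \frac{c_{jk}\,\mathcal{N}\!\left(\mathbf{o}_t \mid \boldsymbol{\mu}_k, \boldsymbol{\Sigma}_k\right)}
       {\sum_{m=1}^{M} c_{jm}\,\mathcal{N}\!\left(\mathbf{o}_t \mid \boldsymbol{\mu}_m, \boldsymbol{\Sigma}_m\right)},
\qquad
\zeta_t(k) = \sum_{j} \gamma_t(j,k)
```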

M-Step: Updating the parameters of the mixture model
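In the semi-continuous M-step, the weights c_jk are still normalized per state, but each shared mean and variance is estimated from the component posteriors pooled across all states. A minimal sketch under the same diagonal-covariance assumption; `update_semicontinuous` and the pooled quantity `zeta` are hypothetical names.

```python
def update_semicontinuous(gamma_jk, obs):
    """M-step for a semi-continuous HMM (shared codebook, diagonal covariances).

    c_jk  = sum_t gamma_t(j, k) / sum_t gamma_t(j)                   (per state)
    mu_k  = sum_t zeta_t(k) o_t / sum_t zeta_t(k),  zeta_t(k) = sum_j gamma_t(j, k)
    var_k = same weighting applied to (o_t - mu_k)^2                 (shared)
    """
    n_states = len(gamma_jk[0])
    n_comp = len(gamma_jk[0][0])
    dim = len(obs[0])
    weights = []
    for j in range(n_states):
        occ = [sum(g[j][k] for g in gamma_jk) for k in range(n_comp)]
        total = sum(occ)  # equals sum_t gamma_t(j)
        weights.append([x / total for x in occ])
    # Pool component posteriors over all states: zeta[t][k] = sum_j gamma_t(j, k)
    zeta = [[sum(g[j][k] for j in range(n_states)) for k in range(n_comp)]
            for g in gamma_jk]
    means, variances = [], []
    for k in range(n_comp):
        occ_k = sum(z[k] for z in zeta)
        mu = [sum(z[k] * o[d] for z, o in zip(zeta, obs)) / occ_k for d in range(dim)]
        var = [sum(z[k] * (o[d] - mu[d]) ** 2 for z, o in zip(zeta, obs)) / occ_k
               for d in range(dim)]
        means.append(mu)
        variances.append(var)
    return weights, means, variances
```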
