
Part 2: Web-based Information Literacy: Laurier’s Assessment Plan
• Overview of instruction provided.
• Description of assessment plan.
• Using assessment to achieve ongoing enhancement of instruction.

Overview of Instruction
• Web-based, with the exception of an initial class visit: www.wlu.ca/library/infolit/tutorial/. Quizzes delivered via WebCT.
• Focus on basic information literacy competencies.
• Composed of individual instructional modules.
• Generic, not subject-specific.
• Integrated within first-year courses: Communication Studies, Geography, Sociology. Required course component in all cases and typically counted for grades.
• Completed by some 2000 first-year students since launch in Fall 2001.

Type of Assessment
Combination of assessment methods:
• Formative/Subjective Assessment: measures the quality of instruction; typically focuses on user satisfaction in the form of reactive data, e.g. written or verbal feedback. Predominant form of assessment in academic libraries. Instruments used: pre-survey, evaluation survey, usability testing.
• Summative/Objective Assessment: aims to gauge what has been learned or the extent of improvement in competencies or skills. Instruments used: pre-test/post-test, online quizzes.

Scope of Assessment
• Limited resources meant a modest approach.
• Online delivery of instruction ruled out some forms of assessment.
• Though instruction was delivered entirely online, not all assessment tools were Web-based.
• Not introduced at program level. Involved assessing one specific instructional tool targeted at a specific audience.

Goals of Assessment
• Obtain insights into students’ perceptions of what and how much they had learned, and how useful they found the tutorial in teaching them research skills.
• Determine what students had actually learned.
• Establish credibility and accountability of instructional efforts.
• Gain support of faculty and university administration for information literacy initiatives.
• Identify what we were doing right and where improvement was needed.

Formative Evaluation
• Pre-Survey (library usage/experience)
• Evaluation Survey (user satisfaction)
• Usability Testing

Pre-Survey
• Administered alongside the pre-test during class time for the first three terms.
• High response rates: CS 100 (83% first year, 70% second year), GG 100 (78%), SY 100 (84%).
• Students asked five questions: (1) whether they had used an online library catalogue before; (2) how often they used libraries; (3) whether they had previously received library instruction; (4) whether Internet access was available from home or residence room; (5) how often they used the Internet.

Evaluation Survey
• Primary goal: to determine student perceptions of the effectiveness of the tutorial as a learning experience.
• Combination of short-answer (7) and open-ended (3) questions.
• Distributed in class after students had completed the tutorial and associated quizzes.
• Response rates quite high: CS 100 (69% first year, 51% second year); GG 100 (77% first year, 49% second year); SY 100 (73%).
• Results processed manually with some student assistance.

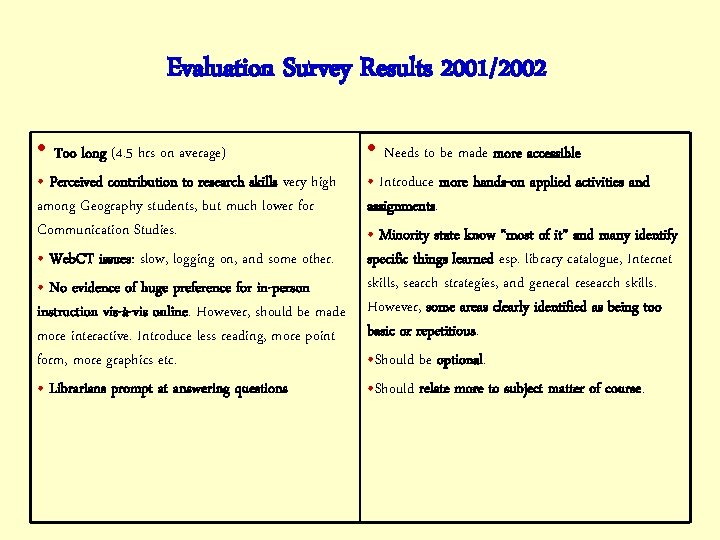

Evaluation Survey Results 2001/2002
• Too long (4.5 hrs on average).
• Needs to be made more accessible.
• Perceived contribution to research skills very high among Geography students, but much lower for Communication Studies.
• WebCT issues: slow, problems logging on, and some others.
• No evidence of a strong preference for in-person instruction over online. However, should be made more interactive: less reading, more point form, more graphics etc.
• Librarians prompt at answering questions.
• Introduce more hands-on applied activities and assignments.
• A minority state they knew “most of it”, and many identify specific things learned, esp. library catalogue, Internet skills, search strategies, and general research skills. However, some areas clearly identified as being too basic or repetitious.
• Should be optional.
• Should relate more to subject matter of course.

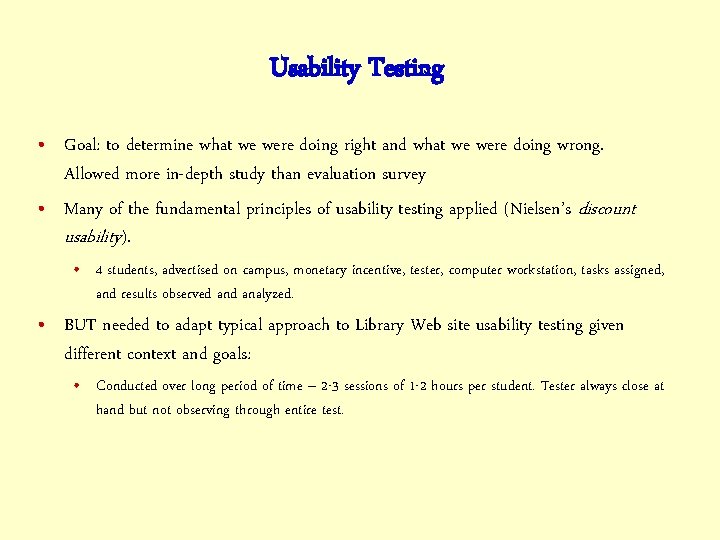

Usability Testing
• Goal: to determine what we were doing right and what we were doing wrong. Allowed more in-depth study than the evaluation survey.
• Many of the fundamental principles of usability testing applied (Nielsen’s discount usability).
• 4 students, advertised on campus, monetary incentive, tester, computer workstation, tasks assigned, and results observed and analyzed.
• BUT needed to adapt the typical approach to Library Web site usability testing, given the different context and goals: conducted over a long period of time, 2-3 sessions of 1-2 hours per student. Tester always close at hand but not observing through the entire test.

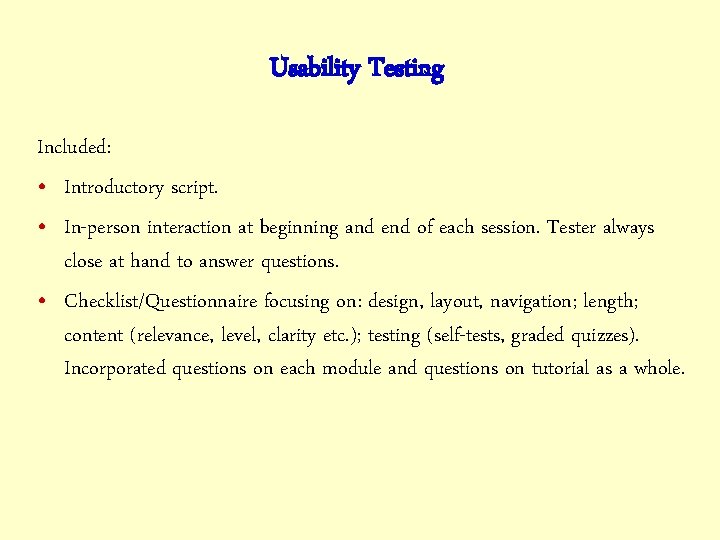

Usability Testing Included:
• Introductory script.
• In-person interaction at beginning and end of each session. Tester always close at hand to answer questions.
• Checklist/Questionnaire focusing on: design, layout, navigation; length; content (relevance, level, clarity etc.); testing (self-tests, graded quizzes). Incorporated questions on each module and questions on the tutorial as a whole.

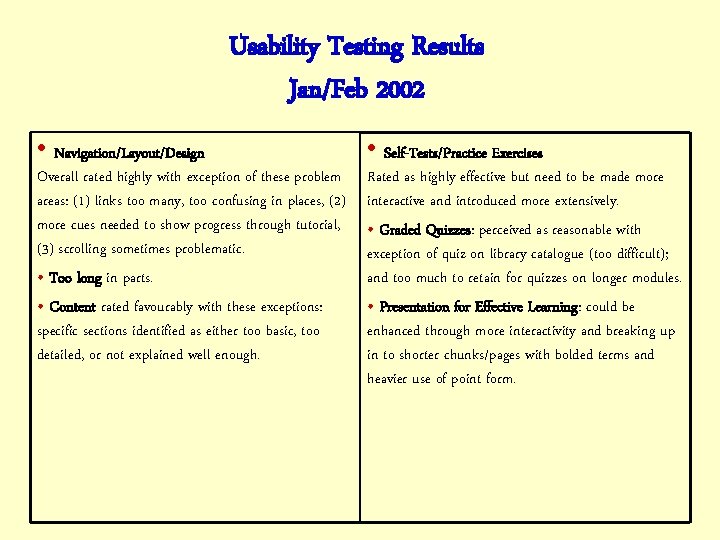

Usability Testing Results Jan/Feb 2002
• Navigation/Layout/Design: overall rated highly, with the exception of these problem areas: (1) too many links, confusing in places; (2) more cues needed to show progress through tutorial; (3) scrolling sometimes problematic.
• Too long in parts.
• Content: rated favourably, with these exceptions: specific sections identified as either too basic, too detailed, or not explained well enough.
• Self-Tests/Practice Exercises: rated as highly effective but need to be made more interactive and introduced more extensively.
• Graded Quizzes: perceived as reasonable, with the exception of the quiz on the library catalogue (too difficult) and too much to retain for quizzes on longer modules.
• Presentation for Effective Learning: could be enhanced through more interactivity and breaking up into shorter chunks/pages with bolded terms and heavier use of point form.

Summative Assessment
• Quizzes
• Pre-Test/Post-Test

Quizzes
• WebCT, multiple-choice, random pool.
• Required component of course. Counted toward grade in all courses but one.
• Data collected:
  • Average overall student grades
  • Rate of participation
  • Average results on individual quizzes
  • Analysis of average scores on individual quiz questions. Where did they do well, where was performance okay, and where were scores very low?
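The per-question analysis described on this slide could be sketched along the following lines. This is an illustrative Python sketch only, not the procedure actually used at Laurier: the data layout, the function names, the sample responses, and the 50%/80% band thresholds are all assumptions made for the example.

```python
from statistics import mean

def question_averages(responses):
    """Average score per question.

    `responses` is a list of dicts, one per student, mapping a question id
    to 1 (correct) or 0 (incorrect). Because questions are drawn from a
    random pool, a student may not have seen every question, so each
    average only uses the students who answered that question.
    """
    questions = sorted({q for r in responses for q in r})
    return {q: mean(r[q] for r in responses if q in r) for q in questions}

def band(avg, low=0.5, high=0.8):
    """Sort an average into one of three bands (thresholds are hypothetical)."""
    if avg >= high:
        return "did well"
    if avg >= low:
        return "okay"
    return "very low"

# Made-up sample data for illustration only.
sample = [
    {"q1": 1, "q2": 0, "q3": 1},
    {"q1": 1, "q2": 0, "q3": 0},
    {"q1": 1, "q2": 1, "q3": 1},
    {"q1": 1, "q2": 0, "q3": 1},
]

averages = question_averages(sample)
bands = {q: band(a) for q, a in averages.items()}
for q in averages:
    print(q, round(averages[q], 2), bands[q])
```

Questions that land in the lowest band are the natural candidates for revising either the quiz item or the related tutorial content.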

Pre-Test/Post-Test
• Designed to test core competencies pre- and post-tutorial. Multiple choice. Ran for the first three terms.
• Pre-test administered during class (captive audience) using classroom answer sheets graded by computer.
• Post-test formed one of the WebCT quizzes and counted for marks.
• Average score increased in all cases, typically starting out at approx. 55%: Communication Studies (20% increase each year); Geography (15% increase); Sociology (14% increase).
• Differences in average scores for each question on both tests were compared: considerable variance in question scores and in percentage change in marks.
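A question-by-question comparison of pre-test and post-test results, as described on this slide, might look like the sketch below. It is a hedged illustration rather than the method actually used: the question labels, the sample answers, and the function names are hypothetical, and change is reported here in percentage points.

```python
def percent_correct(answers):
    """Percentage of correct answers in a list of 1 (correct) / 0 (incorrect)."""
    return 100.0 * sum(answers) / len(answers)

def compare_tests(pre, post):
    """Compare per-question results on two tests.

    `pre` and `post` map a question id to the list of 1/0 answers given by
    students on that test. Returns question id -> (pre %, post %, change in
    percentage points) for the questions that appear on both tests.
    """
    result = {}
    for q in pre:
        if q in post:
            before = percent_correct(pre[q])
            after = percent_correct(post[q])
            result[q] = (before, after, after - before)
    return result

# Made-up answers for two hypothetical questions.
pre = {"catalogue": [1, 0, 0, 1], "boolean": [0, 0, 1, 0]}
post = {"catalogue": [1, 1, 1, 1], "boolean": [0, 1, 1, 0]}

changes = compare_tests(pre, post)
for q, (before, after, delta) in changes.items():
    print(f"{q}: {before:.0f}% -> {after:.0f}% ({delta:+.0f} points)")
```

Listing the change for each question separately is what exposes the variance noted above, which an overall average would hide.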

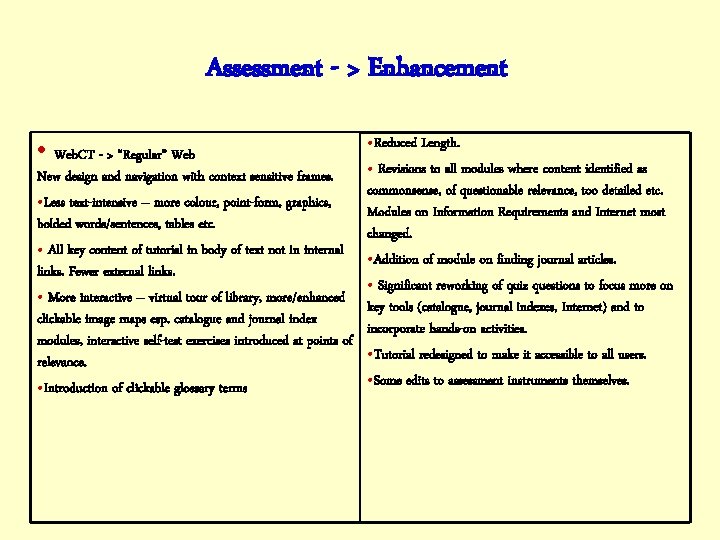

Assessment -> Enhancement
• WebCT -> “Regular” Web. New design and navigation with context-sensitive frames.
• Less text-intensive: more colour, point-form, graphics, bolded words/sentences, tables etc.
• All key content of tutorial in body of text, not in internal links. Fewer external links.
• More interactive: virtual tour of library, more/enhanced clickable image maps esp. catalogue and journal index modules, interactive self-test exercises introduced at points of relevance.
• Introduction of clickable glossary terms.
• Reduced length.
• Revisions to all modules where content identified as commonsense, of questionable relevance, too detailed etc. Modules on Information Requirements and Internet most changed.
• Addition of module on finding journal articles.
• Significant reworking of quiz questions to focus more on key tools (catalogue, journal indexes, Internet) and to incorporate hands-on activities.
• Tutorial redesigned to make it accessible to all users.
• Some edits to assessment instruments themselves.

So is it Working Now? Assessment Results Second Time Round – 2002/2003
• Definite evidence of improvement: significant number of students saying tutorial helpful or wouldn’t change anything; majority of previous problem areas not surfacing again or receiving less emphasis.
• Some problem areas still exist: some old, some new!

Some Conclusions
• Assessment, even where modest in scope, goes a long way to improving the effectiveness of instruction.
• Perfection or 100% user satisfaction may seem elusive, but don’t give up! Enhancements can be made incrementally.
• Evaluation of information literacy needs to be ongoing: a continuous process of identifying strengths and weaknesses, and of working toward improvement.
