Parameter Estimation Eberhard O. Voit Integrative Core Problem Solving with Models September 2011

Recall: Overarching Challenges of Modeling in Biology. Map reality into a diagram. Map the diagram into symbolic equations. Convert symbolic equations into functional symbolic equations. Convert functional symbolic equations into parameterized equations. Arguably the hardest part of modeling. The rest is comparatively straightforward!

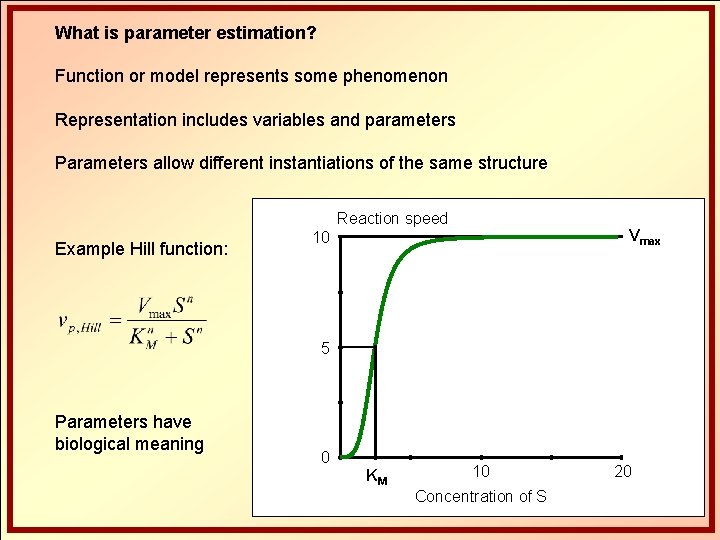

What is parameter estimation? A function or model represents some phenomenon. The representation includes variables and parameters. Parameters allow different instantiations of the same structure. Example: Hill function. Parameters have biological meaning. (Plot: reaction speed versus concentration of S, with Vmax and KM marked.)

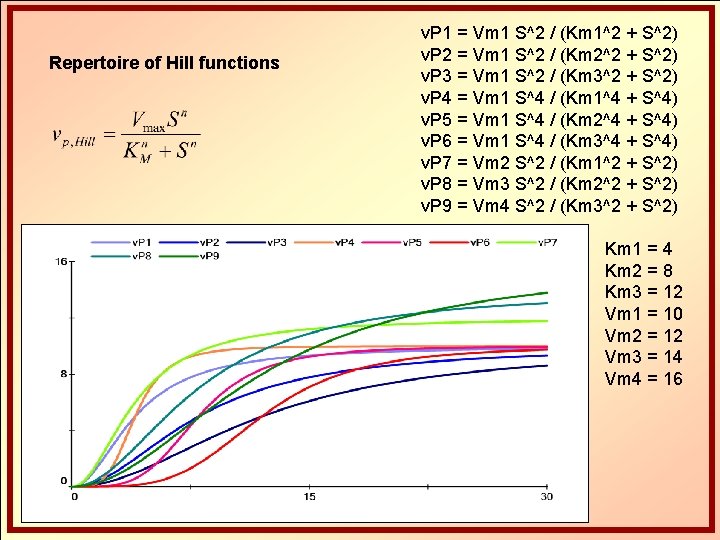

Repertoire of Hill functions:

$$
\begin{aligned}
v_{P1} &= V_{m1} S^2 / (K_{m1}^2 + S^2) \\
v_{P2} &= V_{m1} S^2 / (K_{m2}^2 + S^2) \\
v_{P3} &= V_{m1} S^2 / (K_{m3}^2 + S^2) \\
v_{P4} &= V_{m1} S^4 / (K_{m1}^4 + S^4) \\
v_{P5} &= V_{m1} S^4 / (K_{m2}^4 + S^4) \\
v_{P6} &= V_{m1} S^4 / (K_{m3}^4 + S^4) \\
v_{P7} &= V_{m2} S^2 / (K_{m1}^2 + S^2) \\
v_{P8} &= V_{m3} S^2 / (K_{m2}^2 + S^2) \\
v_{P9} &= V_{m4} S^2 / (K_{m3}^2 + S^2)
\end{aligned}
$$

Parameter values: $K_{m1} = 4$, $K_{m2} = 8$, $K_{m3} = 12$; $V_{m1} = 10$, $V_{m2} = 12$, $V_{m3} = 14$, $V_{m4} = 16$.

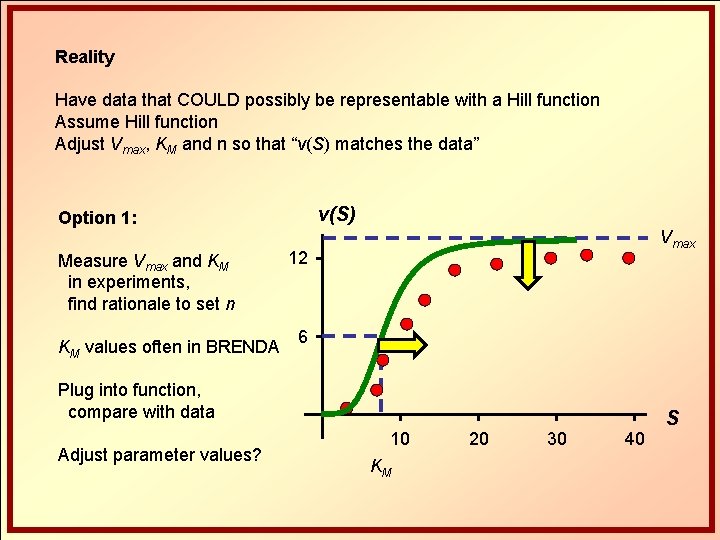
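The repertoire above can be reproduced with a few lines of code. The following is a minimal sketch, assuming the Hill form $v = V_m S^n / (K_m^n + S^n)$ used in the list; the function name `hill` and the choice of sample concentrations are illustrative and not part of the slides.

```python
def hill(s, vmax, km, n):
    """Hill rate law: v(S) = Vmax * S^n / (KM^n + S^n)."""
    return vmax * s**n / (km**n + s**n)

# Parameter values listed on the slide.
KM = {1: 4.0, 2: 8.0, 3: 12.0}
VM = {1: 10.0, 2: 12.0, 3: 14.0, 4: 16.0}

# Three members of the repertoire: (label, Vm index, Km index, Hill coefficient n).
examples = [("vP1", 1, 1, 2), ("vP4", 1, 1, 4), ("vP7", 2, 1, 2)]

for label, vi, ki, n in examples:
    # Evaluate at S = 0, at S = KM (half-maximal speed), and at a large S.
    rates = [round(hill(s, VM[vi], KM[ki], n), 3) for s in (0.0, KM[ki], 40.0)]
    print(label, rates)
```

At S = KM the speed is exactly Vmax/2 for every Hill coefficient, which is one way to see the biological meaning of KM.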

Reality: We have data that COULD possibly be representable with a Hill function. Assume a Hill function. Adjust Vmax, KM and n so that “v(S) matches the data”. Option 1: Measure Vmax and KM in experiments and find a rationale to set n; KM values are often in BRENDA. Plug into the function and compare with the data. Adjust parameter values? (Plot: v(S) versus S, with Vmax and KM marked.)

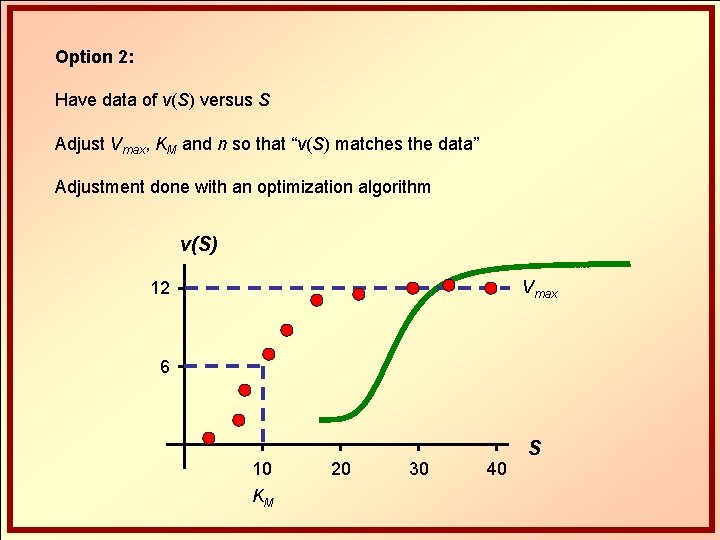

Option 2: Have data of v(S) versus S. Adjust Vmax, KM and n so that “v(S) matches the data”. The adjustment is done with an optimization algorithm. (Plot: v(S) versus S, with Vmax and KM marked.)

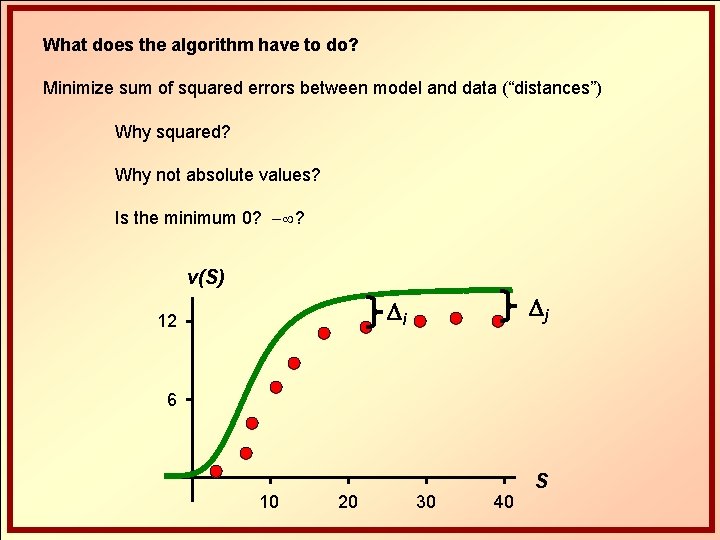

What does the algorithm have to do? Minimize the sum of squared errors between model and data (“distances”). Why squared? Why not absolute values? Is the minimum 0? (Plot: v(S) versus S, with distances Di and Dj between data points and the curve.)

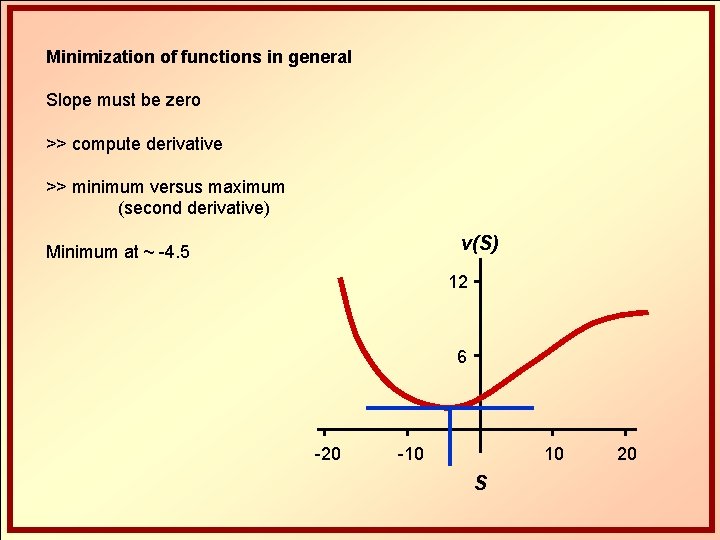
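The objective the algorithm minimizes can be written down directly. This is a minimal sketch under stated assumptions: the model is the Hill function from the earlier slides, and the data points are hypothetical values invented for illustration, not measurements from the lecture.

```python
def hill(s, vmax, km, n):
    """Hill rate law: v(S) = Vmax * S^n / (KM^n + S^n)."""
    return vmax * s**n / (km**n + s**n)

def sse(params, data):
    """Sum of squared errors between model predictions and data."""
    vmax, km, n = params
    return sum((hill(s, vmax, km, n) - v) ** 2 for s, v in data)

def sad(params, data):
    """Sum of absolute deviations, for comparison with the squared version."""
    vmax, km, n = params
    return sum(abs(hill(s, vmax, km, n) - v) for s, v in data)

# Hypothetical noisy observations (S, v); illustrative only.
data = [(5.0, 2.6), (10.0, 5.8), (20.0, 9.9), (40.0, 11.0)]

for guess in [(12.0, 10.0, 2.0), (10.0, 8.0, 2.0)]:
    print(guess, round(sse(guess, data), 4), round(sad(guess, data), 4))
```

With noisy data and fewer parameters than data points, the minimum of the sum of squared errors is generally larger than zero. Squaring also makes the objective smooth, whereas the absolute-value version has kinks wherever a residual changes sign.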

Minimization of functions in general: The slope must be zero >> compute the derivative >> minimum versus maximum (second derivative). (Plot: v(S) versus S; minimum at approximately -4.5.)

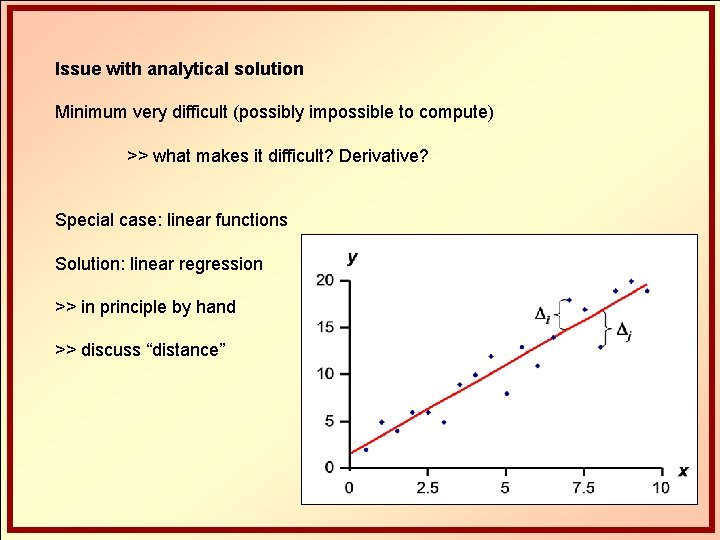

Issue with analytical solution: The minimum is very difficult (possibly impossible) to compute >> what makes it difficult? The derivative? Special case: linear functions. Solution: linear regression >> in principle by hand >> discuss “distance”.

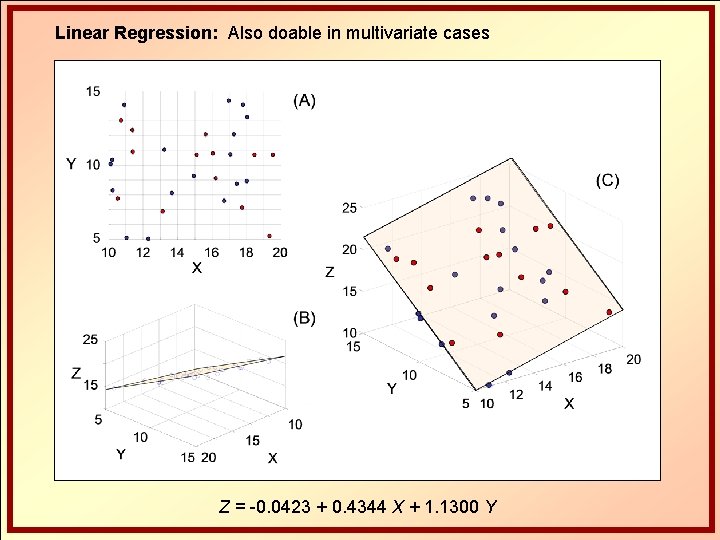
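For a straight line, setting the derivatives of the sum of squared errors to zero gives a closed-form solution, which is why the regression can be done by hand. The sketch below implements the standard ordinary least-squares formulas for one predictor; the function name and the sample data are illustrative assumptions.

```python
def linear_regression(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of squares and cross-products around the means.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical data scattered around y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.1, 2.9, 5.2, 6.8, 9.0]
print(linear_regression(xs, ys))
```

Here the “distance” being minimized is the vertical distance between each data point and the line, measured in the y direction only.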

Linear Regression: Also doable in multivariate cases. $Z = -0.0423 + 0.4344\,X + 1.1300\,Y$

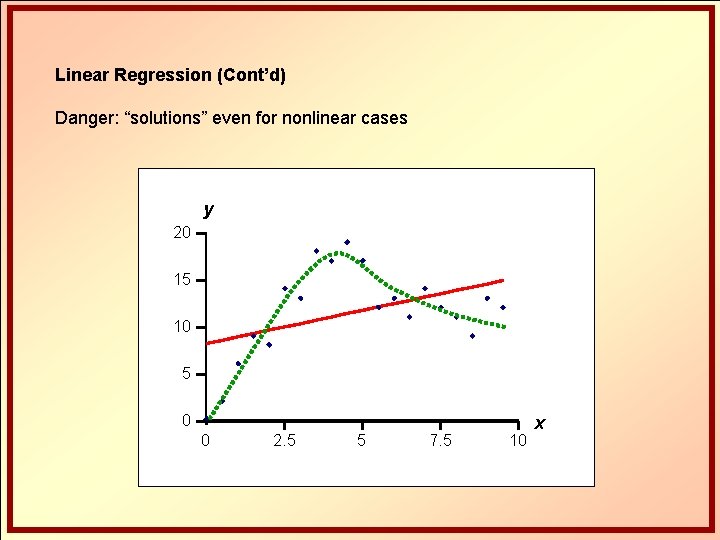

Linear Regression (Cont’d): Danger: “solutions” even for nonlinear cases. (Plot: y versus x.)

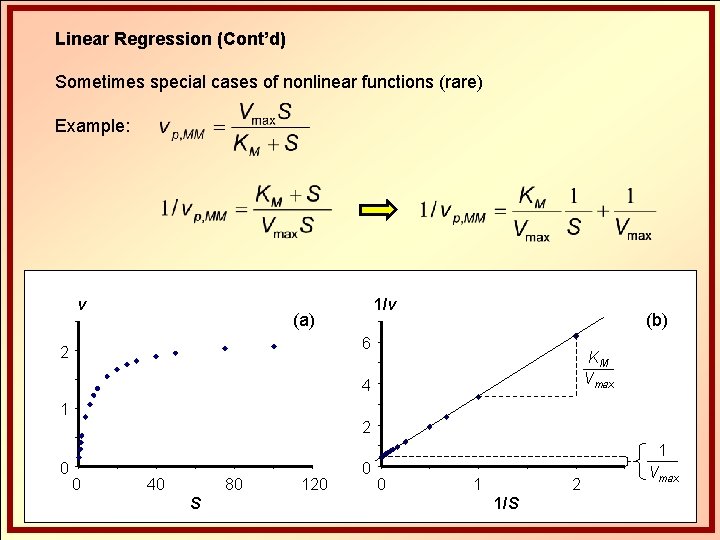

Linear Regression (Cont’d): Sometimes applicable to special cases of nonlinear functions (rare). Example: (a) v versus S; (b) 1/v versus 1/S, with KM and Vmax marked.

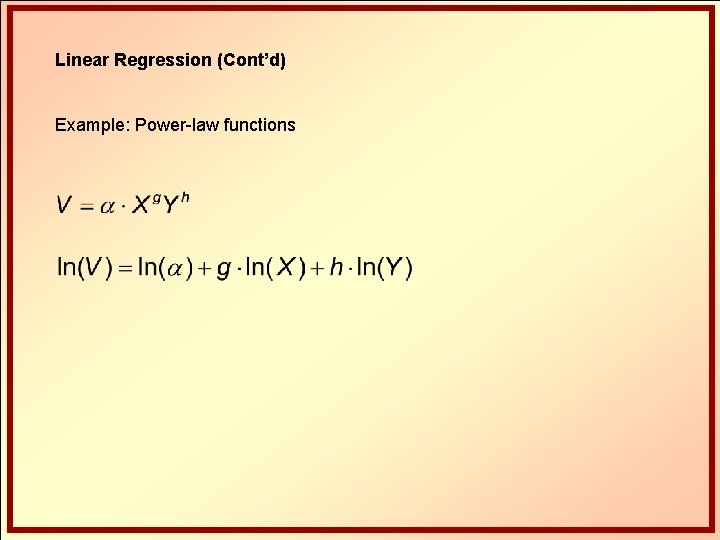
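The two panels are consistent with the classical double-reciprocal transformation. As a sketch, assuming the plotted function is the Michaelis-Menten rate law (a Hill function with n = 1; the slide does not name it explicitly), the linearization reads:

```latex
v = \frac{V_{max}\, S}{K_M + S}
\quad\Longrightarrow\quad
\frac{1}{v} = \frac{K_M + S}{V_{max}\, S}
            = \frac{1}{V_{max}} + \frac{K_M}{V_{max}} \cdot \frac{1}{S}
```

Plotting 1/v against 1/S therefore gives a straight line with intercept $1/V_{max}$ and slope $K_M / V_{max}$, so both parameters can be read off after an ordinary linear regression.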

Linear Regression (Cont’d) Example: Power-law functions

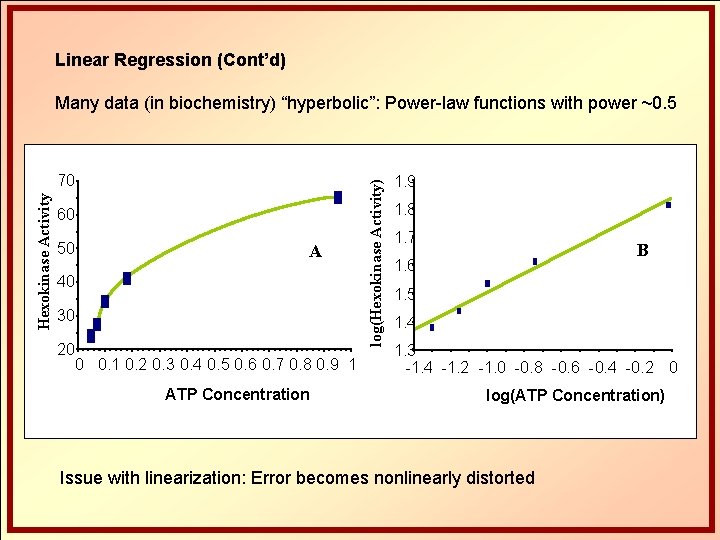

Linear Regression (Cont’d): Many data (in biochemistry) are “hyperbolic”: power-law functions with power ~0.5. Panel A: hexokinase activity versus ATP concentration. Panel B: log(hexokinase activity) versus log(ATP concentration). Issue with linearization: the error becomes nonlinearly distorted.

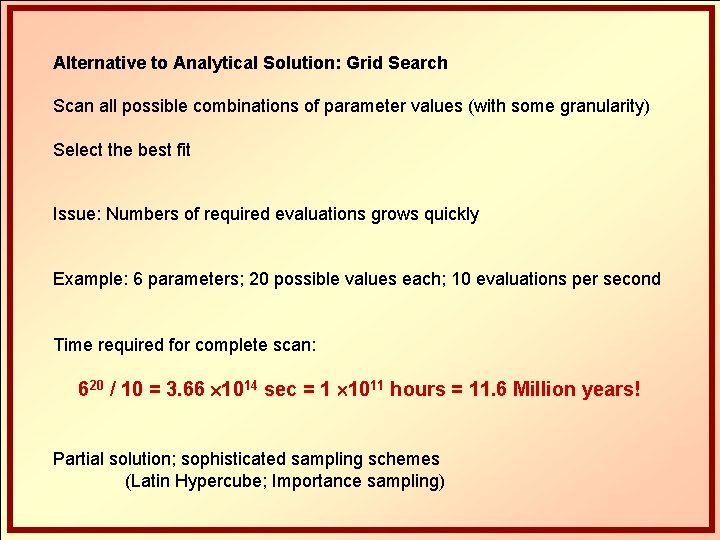
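A power-law function $v = a X^g$ becomes a straight line in logarithmic coordinates, $\log v = \log a + g \log X$, so its parameters can be estimated by linear regression on the logged data. The following is a minimal sketch; the function name `fit_power_law` and the data values are hypothetical and are not the hexokinase measurements shown in the figure.

```python
import math

def fit_power_law(xs, vs):
    """Fit v = a * x^g by linear regression of log(v) on log(x)."""
    lx = [math.log(x) for x in xs]
    lv = [math.log(v) for v in vs]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_v = sum(lv) / n
    sxx = sum((x - mean_x) ** 2 for x in lx)
    sxv = sum((x - mean_x) * (v - mean_v) for x, v in zip(lx, lv))
    g = sxv / sxx                       # slope in log-log space = exponent
    a = math.exp(mean_v - g * mean_x)   # intercept in log-log space = log(a)
    return a, g

# Hypothetical data generated from v = 60 * x^0.5 (illustrative only).
xs = [0.1, 0.2, 0.4, 0.8]
vs = [60.0 * x ** 0.5 for x in xs]
print(fit_power_law(xs, vs))
```

Because the regression minimizes squared errors of log(v) rather than of v, noise in the original data is weighted differently after the transformation, which is the distortion mentioned on the slide.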

Alternative to Analytical Solution: Grid Search. Scan all possible combinations of parameter values (with some granularity) and select the best fit. Issue: The number of required evaluations grows quickly. Example: 20 parameters; 6 possible values each; 10 evaluations per second. Time required for a complete scan: $6^{20} / 10 \approx 3.66 \times 10^{14}$ sec $\approx 1 \times 10^{11}$ hours $\approx$ 11.6 million years! Partial solution: sophisticated sampling schemes (Latin hypercube; importance sampling).

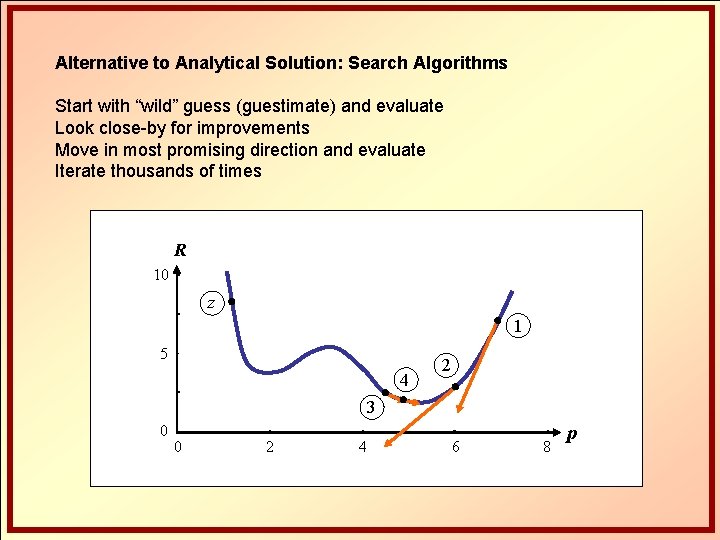
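The scan-time arithmetic is easy to check. A full grid needs (values per parameter) raised to the power (number of parameters) evaluations, and the figure $6^{20} / 10$ corresponds to 20 parameters with 6 candidate values each at 10 evaluations per second. The function name below is an illustrative assumption.

```python
def full_scan_seconds(values_per_parameter, n_parameters, evals_per_second):
    """Time in seconds to evaluate every combination on a full grid."""
    return values_per_parameter ** n_parameters / evals_per_second

seconds = full_scan_seconds(6, 20, 10)
hours = seconds / 3600
years = hours / (24 * 365)
print(f"{seconds:.3g} s = {hours:.3g} h = {years / 1e6:.3g} million years")
```

Adding a single further parameter multiplies the total by the number of values per parameter, which is why exhaustive scans are only feasible for very small problems.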

Alternative to Analytical Solution: Search Algorithms. Start with a “wild” guess (guesstimate) and evaluate. Look close by for improvements. Move in the most promising direction and evaluate. Iterate thousands of times.

Search Algorithms: 1. Gradient Methods. Synonyms: steepest descent, Newton method, hill climbing, …

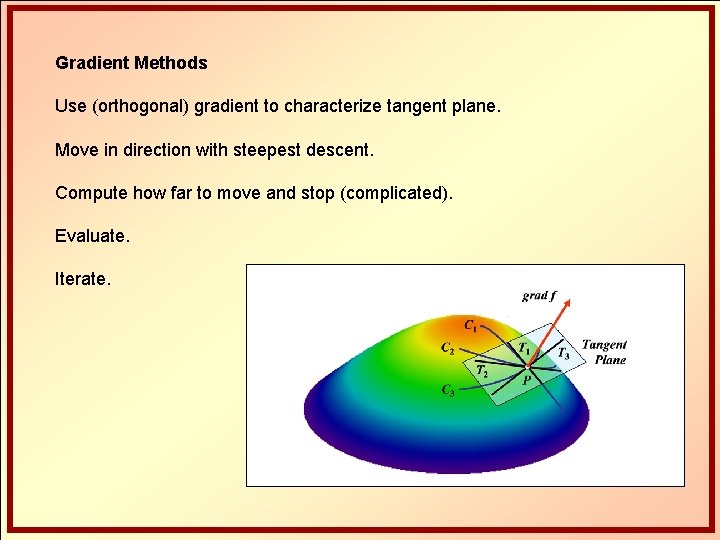

Gradient Methods: Use the (orthogonal) gradient to characterize the tangent plane. Move in the direction of steepest descent. Compute how far to move and stop (complicated). Evaluate. Iterate.

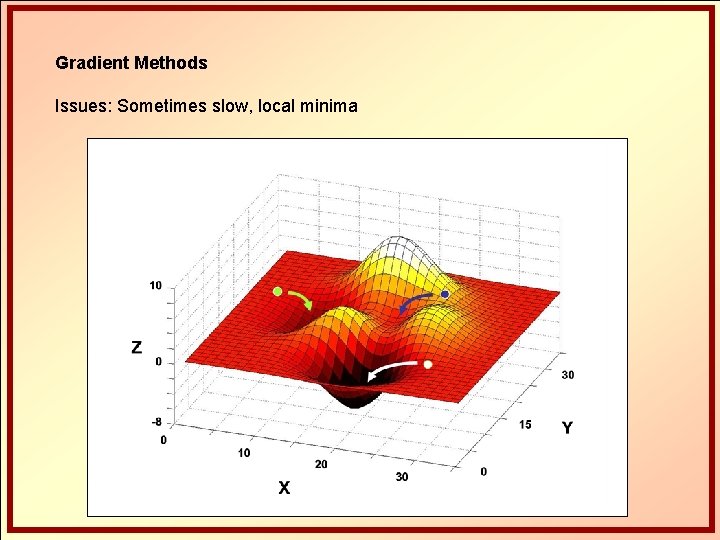
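The loop described above can be sketched in a few lines. This is a simplified illustration, not the method of any particular software package: the gradient is estimated by central finite differences, and the “how far to move” step is replaced by plain step halving until the objective improves. All names and the test function are assumptions made for the example.

```python
def numerical_gradient(f, p, h=1e-6):
    """Central-difference estimate of the gradient of f at point p."""
    grad = []
    for i in range(len(p)):
        up = list(p)
        up[i] += h
        down = list(p)
        down[i] -= h
        grad.append((f(up) - f(down)) / (2 * h))
    return grad

def gradient_descent(f, start, step=1.0, iterations=1000, tol=1e-12):
    """Steepest descent with step halving; returns (parameters, objective)."""
    p = list(start)
    fp = f(p)
    for _ in range(iterations):
        g = numerical_gradient(f, p)
        if sum(gi * gi for gi in g) < tol:
            break  # slope is (numerically) zero
        t = step
        while t > 1e-12:
            candidate = [pi - t * gi for pi, gi in zip(p, g)]
            fc = f(candidate)
            if fc < fp:          # accept the first improving step
                p, fp = candidate, fc
                break
            t /= 2               # otherwise try a shorter step
        else:
            break  # no improving step found
    return p, fp

# Illustrative error surface with its minimum at (3, -1).
def bowl(p):
    return (p[0] - 3.0) ** 2 + 2.0 * (p[1] + 1.0) ** 2

print(gradient_descent(bowl, [0.0, 0.0]))
```

On a surface with several valleys the same loop stops in whichever local minimum lies downhill from the start value, so the result depends on the initial guess.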

Gradient Methods Issues: Sometimes slow, local minima

Gradient Methods Issues: “Banana-shaped valleys” (in high dimensions). (Plot: error surface over parameters p1 and p2.)

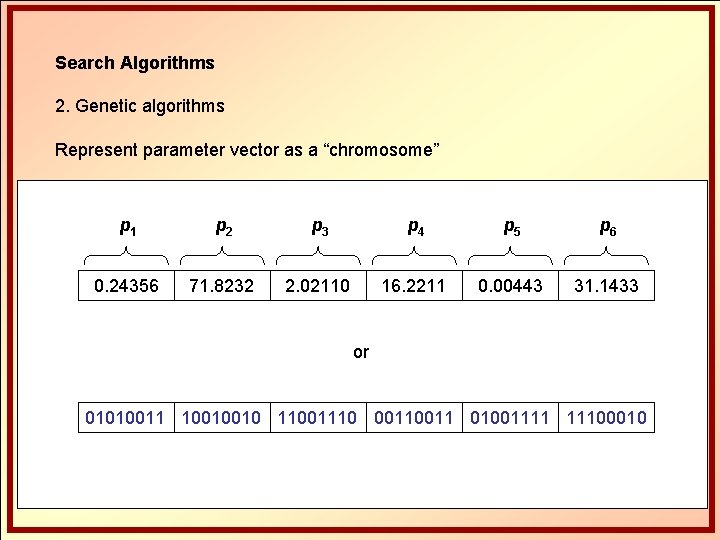

Search Algorithms: 2. Genetic Algorithms. Represent the parameter vector as a “chromosome”: p1 = 0.24356, p2 = 71.8232, p3 = 2.02110, p4 = 16.2211, p5 = 0.00443, p6 = 31.1433, or in binary: 01010011 10010010 11001110 0011 01001111 11100010

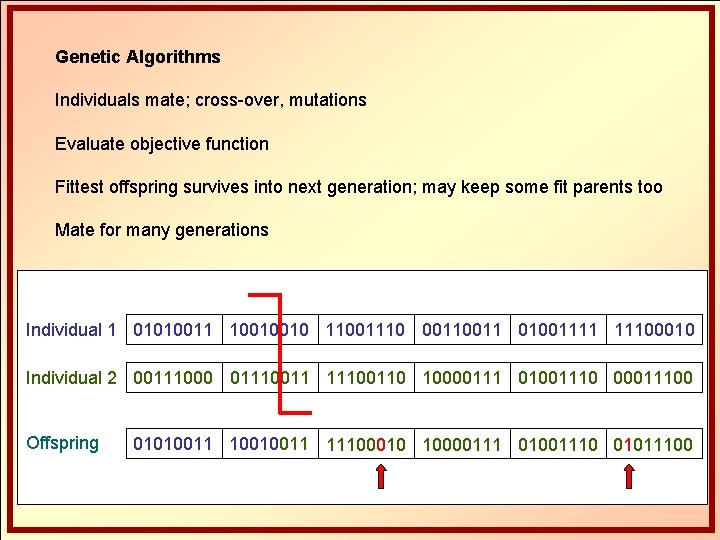

Genetic Algorithms: Individuals mate; cross-over, mutations. Evaluate the objective function. The fittest offspring survive into the next generation; may keep some fit parents too. Mate for many generations.

Individual 1: 01010011 10010010 11001110 0011 01001111 11100010

Individual 2: 00111000 011100110 10000111 01001110 00011100

Offspring: 01010011 10010011 11100010 10000111 01001110 01011100
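The mating step can be illustrated with the two basic operators on bit strings. This is a minimal sketch, assuming one-point crossover and independent bit-flip mutation (the slides do not specify which variants are meant); the function names, the mutation rate, and the decoding range are hypothetical.

```python
import random

def crossover(parent1, parent2, point):
    """One-point crossover: head of parent1 joined to tail of parent2."""
    return parent1[:point] + parent2[point:]

def mutate(chromosome, rate, rng):
    """Flip each bit independently with probability `rate`."""
    flipped = []
    for bit in chromosome:
        if rng.random() < rate:
            flipped.append("1" if bit == "0" else "0")
        else:
            flipped.append(bit)
    return "".join(flipped)

def decode(chromosome, low, high):
    """Map a bit string to a real parameter value in [low, high]."""
    fraction = int(chromosome, 2) / (2 ** len(chromosome) - 1)
    return low + fraction * (high - low)

rng = random.Random(0)  # fixed seed so the run is repeatable
parent1 = "01010011"
parent2 = "00111000"
child = mutate(crossover(parent1, parent2, 4), rate=0.05, rng=rng)
print(child, decode(child, 0.0, 10.0))
```

In a full algorithm each decoded offspring would be scored with the objective function (for example the sum of squared errors), and the best individuals would be kept for the next generation.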

Genetic Algorithms. Advantages: Good chance to find the region containing the global optimum (no guarantee). Almost any objective function can be used for optimization. Issues: Finding the best algorithmic settings is somewhat of an art. Usually slow; sometimes no convergence at all. Local minima. Seldom very exact solutions.

Feasible Strategy: Combine the best of both worlds. Start with a genetic algorithm. Chances: Identify some promising region(s) in parameter space. Then use nonlinear regression (gradient method) to refine the solution within the promising region(s).

Search Algorithms: 3. Other evolutionary (stochastic) algorithms. Ant colony optimization (based on paths most often traveled). Particle swarm optimization (based on best positions within the swarm). 4. Simulated annealing. Concept from metallurgy; tempering steel. Heating (perturbations). Cooling (find new energy optimum). Issues with all: slow, local minima, no global solution guaranteed.
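The heating and cooling idea can be sketched for a single parameter. This is a simplified illustration using the common Metropolis acceptance rule and a geometric cooling schedule; both choices, as well as all names, settings, and the test function, are assumptions made for the example rather than details from the slides.

```python
import math
import random

def simulated_annealing(f, start, step=1.0, t_start=10.0, cooling=0.99,
                        iterations=5000, seed=0):
    """Minimize a one-parameter function f; returns (best_x, best_value)."""
    rng = random.Random(seed)
    current, f_current = start, f(start)
    best, f_best = current, f_current
    temperature = t_start
    for _ in range(iterations):
        # "Heating": random perturbation of the current solution.
        candidate = current + rng.uniform(-step, step)
        f_candidate = f(candidate)
        delta = f_candidate - f_current
        # Always accept improvements; accept deteriorations with a
        # probability that shrinks as the temperature drops.
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            current, f_current = candidate, f_candidate
            if f_current < f_best:
                best, f_best = current, f_current
        temperature *= cooling  # "Cooling"
    return best, f_best

# Illustrative objective with a local and a global minimum.
def two_valleys(x):
    return x ** 4 - 8.0 * x ** 2 + 3.0 * x

print(simulated_annealing(two_valleys, start=2.0))
```

Accepting occasional uphill moves at high temperature is what lets the search leave a local minimum; as the slide notes, this still does not guarantee that the global minimum is found.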

Search Algorithms: 5. Global Optimization. Grid searches. Coarse grid searches with interpolation and importance sampling. Branch-and-bound (or branch-and-reduce) methods: Subdivide the parameter space. Compute underestimating functions (UF) for each division. If the lowest possible point of the UF in division A is higher than values in another division B, A cannot contain the global minimum and is discarded.

Typical Challenges: Noise masks the true functional shape. (Plots: panels a, b and c, each plotted against time.)

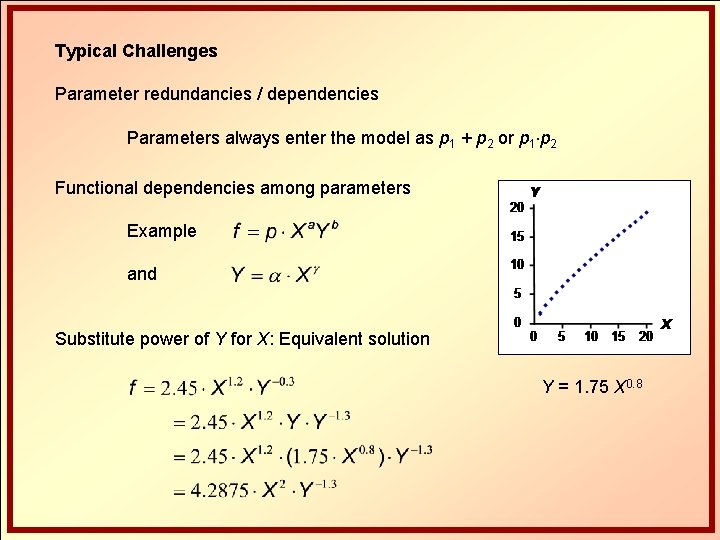

Typical Challenges: Parameter redundancies / dependencies. Parameters always enter the model as $p_1 + p_2$ or $p_1 p_2$. Functional dependencies among parameters. Example (equations in the accompanying image): substitute a power of Y for X to obtain an equivalent solution, $Y = 1.75\,X^{0.8}$.

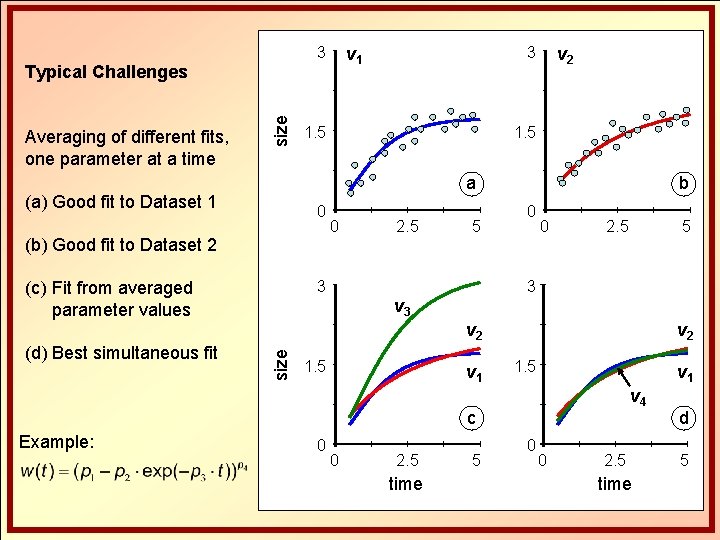
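The redundancy problem can be made concrete with a toy model in which two parameters only ever appear as a product. The sketch below uses a hypothetical model $y = p_1 p_2 x$ and invented data; it is not the example from the slide.

```python
def model(x, p1, p2):
    """Toy model in which p1 and p2 enter only as the product p1 * p2."""
    return p1 * p2 * x

def sse(p1, p2, data):
    """Sum of squared errors of the toy model against the data."""
    return sum((model(x, p1, p2) - y) ** 2 for x, y in data)

# Hypothetical observations (x, y), roughly y = 6x.
data = [(1.0, 6.1), (2.0, 11.8), (3.0, 18.3)]

# Very different parameter pairs with the same product fit equally well.
for p1, p2 in [(2.0, 3.0), (1.0, 6.0), (12.0, 0.5)]:
    print(p1, p2, round(sse(p1, p2, data), 6))
```

All three pairs produce the same error, so no search algorithm can decide between them from these data; only the product is identifiable.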

Typical Challenges: Averaging of different fits, one parameter at a time. Example: (a) Good fit to Dataset 1; (b) Good fit to Dataset 2; (c) Fit from averaged parameter values; (d) Best simultaneous fit. (Plots: size versus time, panels a to d.)

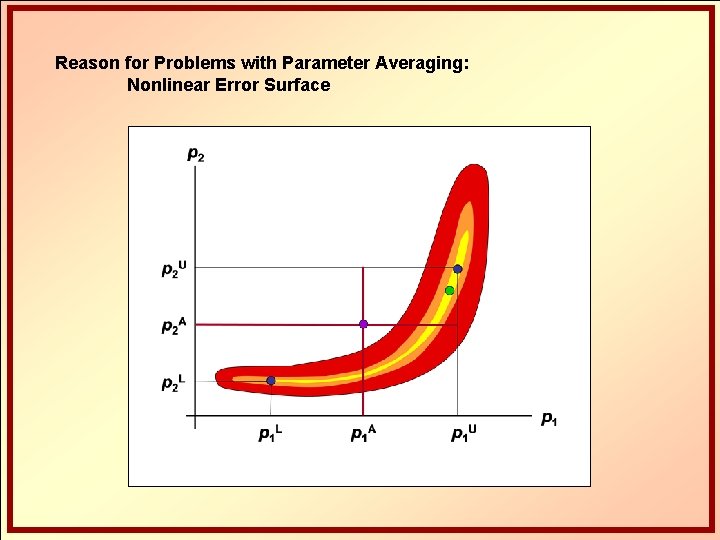

Reason for Problems with Parameter Averaging: Nonlinear Error Surface

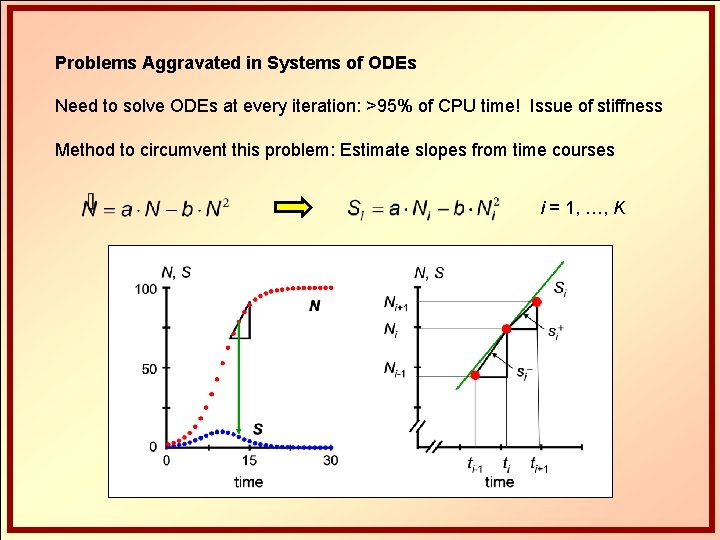

Problems Aggravated in Systems of ODEs: Need to solve the ODEs at every iteration: >95% of CPU time! Issue of stiffness. Method to circumvent this problem: Estimate slopes from time courses (i = 1, …, K).

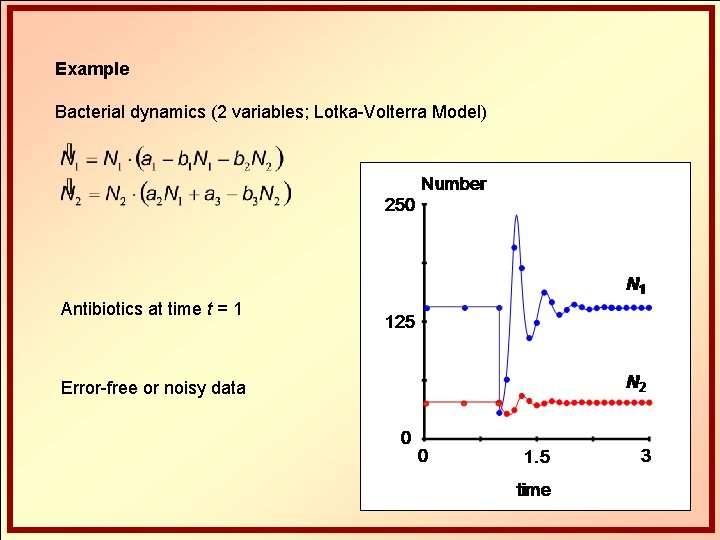
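Estimating slopes directly from the measured time course replaces each derivative with a number, so the ODEs never have to be integrated during the search. A minimal sketch using finite differences follows; the function name is illustrative, and finite differencing is only one of several possible slope estimators, chosen here as an assumption.

```python
def estimate_slopes(times, values):
    """Finite-difference slopes: central inside, one-sided at both ends."""
    k = len(times)
    slopes = []
    for i in range(k):
        lo = max(i - 1, 0)       # previous point, or the point itself at the start
        hi = min(i + 1, k - 1)   # next point, or the point itself at the end
        slopes.append((values[hi] - values[lo]) / (times[hi] - times[lo]))
    return slopes

# Illustrative time course N(t) = t^2, whose true slope is 2t.
times = [0.0, 1.0, 2.0, 3.0, 4.0]
values = [t ** 2 for t in times]
print(estimate_slopes(times, values))
```

Differencing amplifies measurement noise, so noisy time courses are usually smoothed before slopes are estimated.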

Example: Bacterial dynamics (2 variables; Lotka-Volterra model). Antibiotics at time t = 1. Error-free or noisy data.

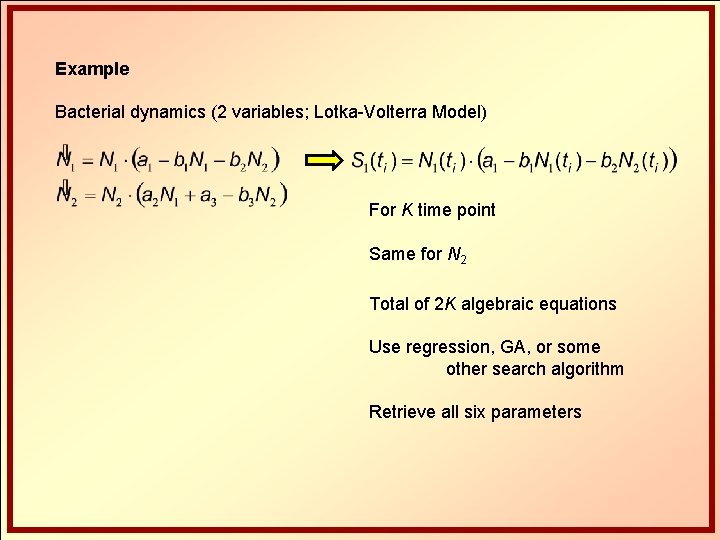

Example: Bacterial dynamics (2 variables; Lotka-Volterra model). For K time points: one algebraic equation per time point for N1 (shown in the accompanying image). Same for N2. Total of 2K algebraic equations. Use regression, GA, or some other search algorithm. Retrieve all six parameters.

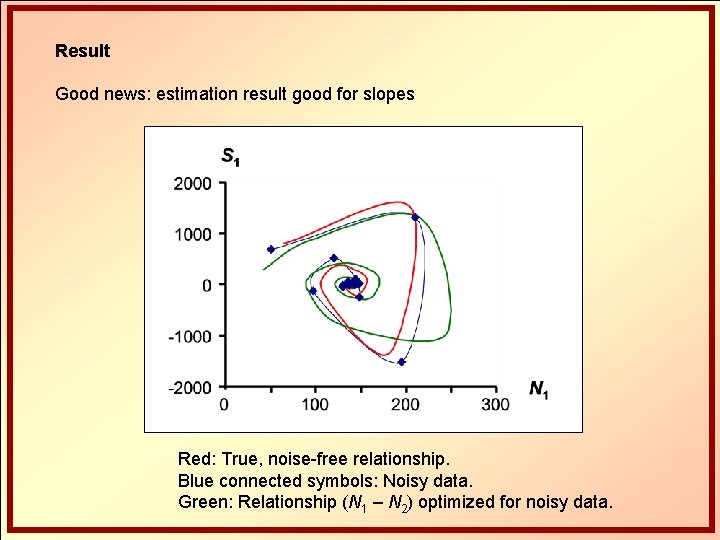
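Once slopes have been estimated, each equation of the model can be fitted separately. The sketch below assumes the common two-species Lotka-Volterra form $dN_1/dt = a N_1 + b N_1^2 + c N_1 N_2$ (three parameters per equation, six in total); the exact equations on the slide are in the image and may be written differently. Because this form is linear in its parameters, ordinary least squares via the normal equations suffices. All function names and data values are hypothetical.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x

def fit_lv_equation(n_self, n_other, slopes):
    """Least-squares fit of slope = a*N + b*N^2 + c*N*M over K time points."""
    rows = [[n, n * n, n * other] for n, other in zip(n_self, n_other)]
    # Normal equations: (X^T X) p = X^T s
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xts = [sum(r[i] * s for r, s in zip(rows, slopes)) for i in range(3)]
    return solve_linear_system(xtx, xts)

# Hypothetical measurements of both populations at K = 5 time points.
n1 = [1.0, 2.0, 3.0, 4.0, 2.5]
n2 = [2.0, 1.0, 4.0, 3.0, 0.5]
true_params = (1.0, -0.1, -0.2)  # assumed values used to generate test slopes
slopes1 = [true_params[0] * x + true_params[1] * x * x + true_params[2] * x * y
           for x, y in zip(n1, n2)]

print(fit_lv_equation(n1, n2, slopes1))
```

Calling the same function with the roles of the two populations swapped, `fit_lv_equation(n2, n1, slopes2)`, would give the remaining three parameters, where `slopes2` stands for the estimated slopes of the second population.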

Result: Good news: the estimation result is good for slopes. Red: true, noise-free relationship. Blue connected symbols: noisy data. Green: relationship (N1 – N2) optimized for noisy data.

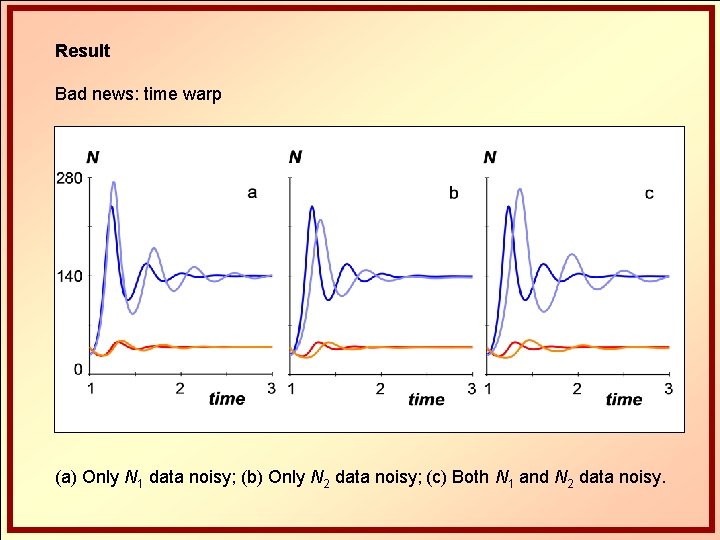

Result: Bad news: time warp. (a) Only N1 data noisy; (b) Only N2 data noisy; (c) Both N1 and N2 data noisy.

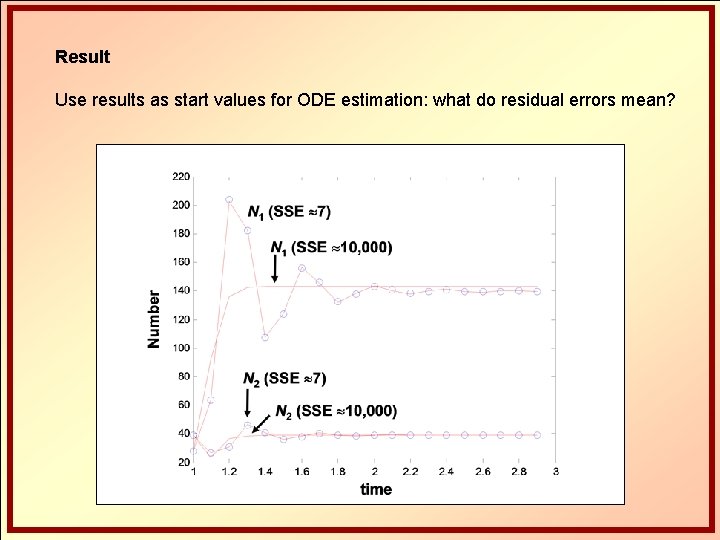

Result Use results as start values for ODE estimation: what do residual errors mean?

Result Use results as start values for ODE estimation: good results

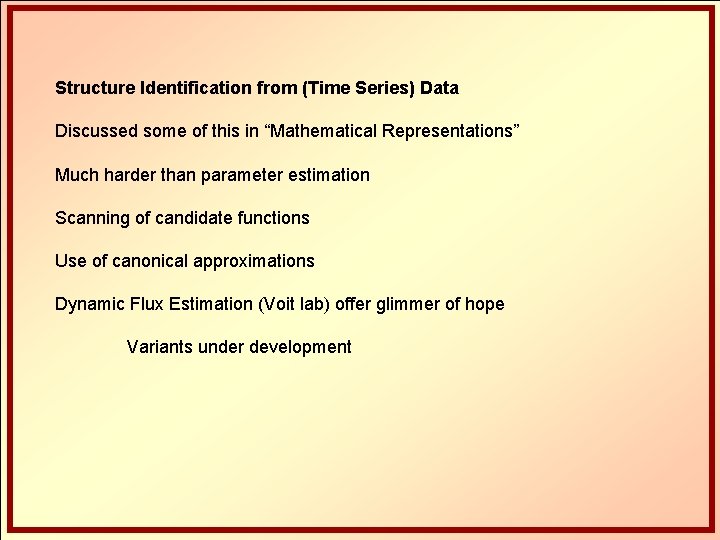

Structure Identification from (Time Series) Data: Discussed some of this in “Mathematical Representations”. Much harder than parameter estimation. Scanning of candidate functions. Use of canonical approximations. Dynamic Flux Estimation (Voit lab) offers a glimmer of hope. Variants under development.

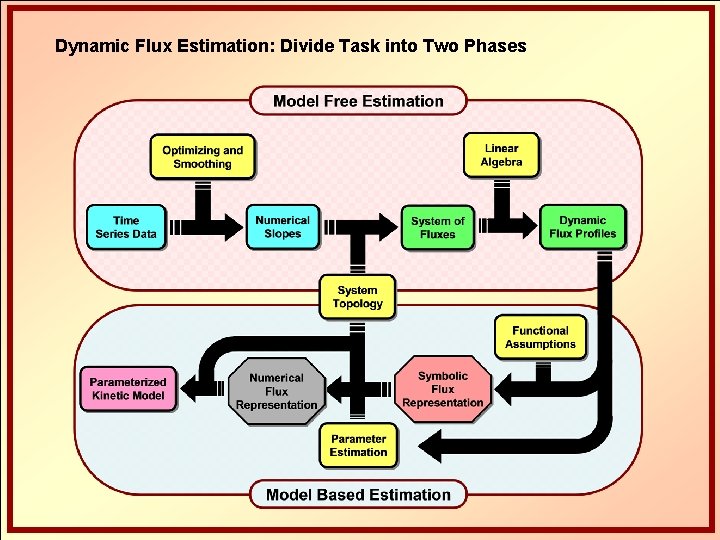

Dynamic Flux Estimation: Divide Task into Two Phases

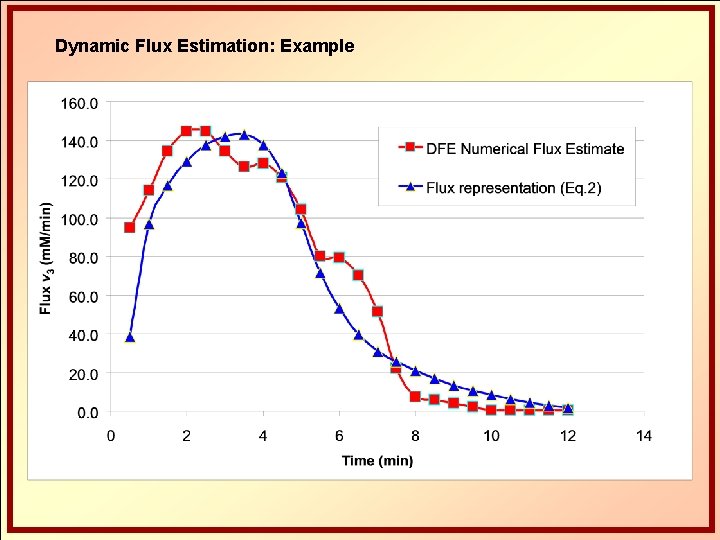

Dynamic Flux Estimation: Example ?

Dynamic Flux Estimation: Example

Summary: Parameter estimation stands between reality and a realistic model. Crucial component of modeling. Lots of research and CS. No silver bullet yet! Most methods work sometimes, few work always. For ODEs, slope estimation / decoupling is a tremendous speed-up, but tends to incur time warps.
