Parallel Splash Belief Propagation

Joseph E. Gonzalez, Yucheng Low, Carlos Guestrin, David O’Hallaron. Carnegie Mellon.

Computers which worked on this project: BigBro 1, BigBro 2, BigBro 3, BigBro 4, BigBro 5, BigBro 6, BiggerBro, BigBro FS, Tashish 01, Tashi 02, Tashi 03, Tashi 04, Tashi 05, Tashi 06, …, Tashi 30, parallel, gs 6167, koobcam (helped with writing).

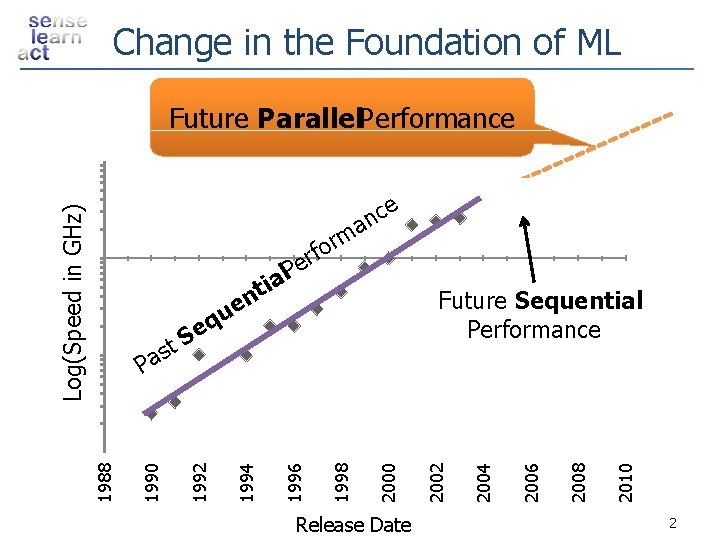

Change in the Foundation of ML. Why talk about parallelism now? [Plot: log(speed in GHz) versus release date, 1988 to 2010, showing past sequential performance, future sequential performance, and future parallel performance.]

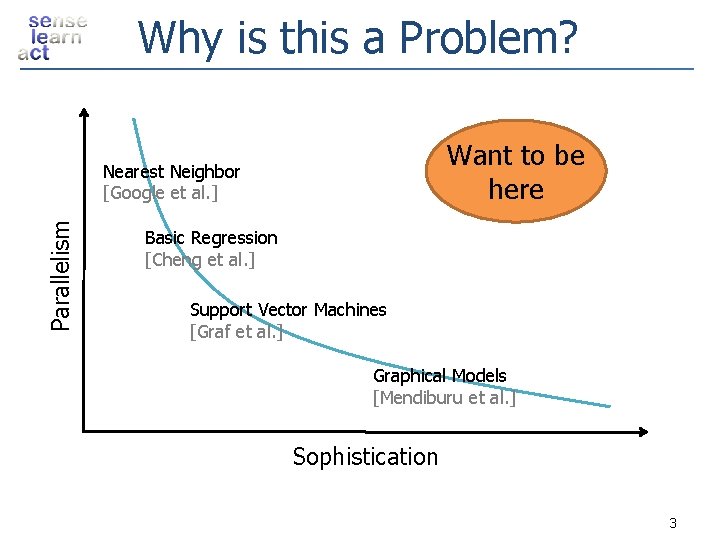

Why is this a Problem? [Chart: parallelism versus sophistication, with a "Want to be here" marker.] Nearest neighbor [Google et al.]; basic regression [Cheng et al.]; support vector machines [Graf et al.]; graphical models [Mendiburu et al.].

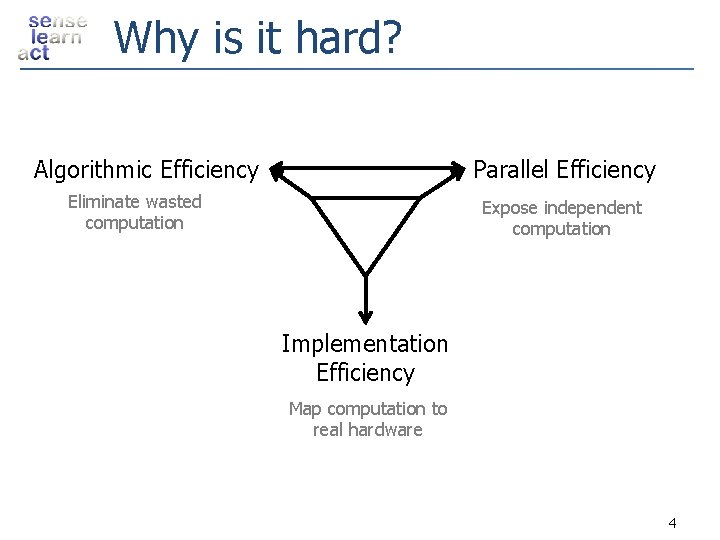

Why is it hard? Algorithmic efficiency: eliminate wasted computation. Parallel efficiency: expose independent computation. Implementation efficiency: map computation to real hardware.

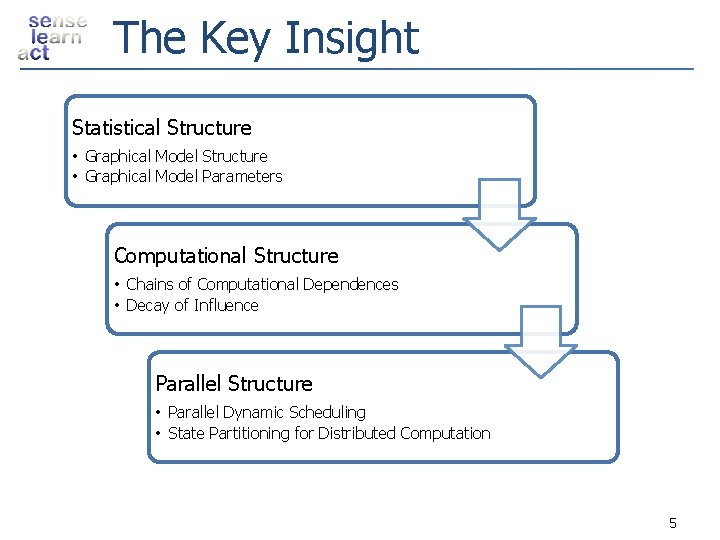

The Key Insight. Statistical structure: graphical model parameters. Computational structure: chains of computational dependences; decay of influence. Parallel structure: parallel dynamic scheduling; state partitioning for distributed computation.

![The Result: Splash Belief Propagation](http://slidetodoc.com/presentation_image/48ccddb642c7c8fa53f0eeb7283deeb3/image-6.jpg)

The Result. [Chart: parallelism versus sophistication.] Nearest neighbor [Google et al.]; basic regression [Cheng et al.]; support vector machines [Graf et al.]; graphical models [Mendiburu et al.]. Splash Belief Propagation [Gonzalez et al.] is placed at the goal.

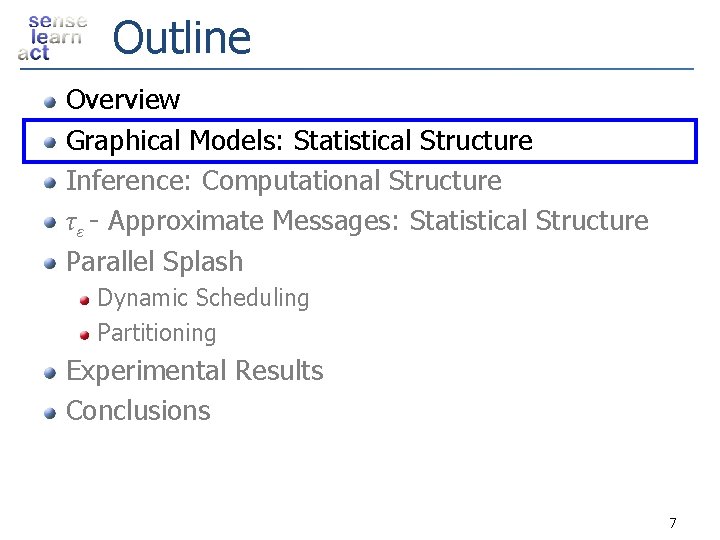

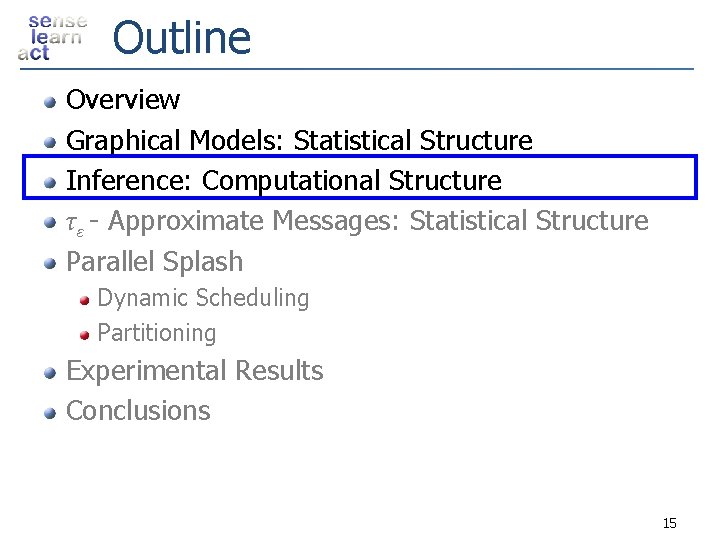

Outline. Overview; Graphical Models: Statistical Structure; Inference: Computational Structure; τε-Approximate Messages: Statistical Structure; Parallel Splash (Dynamic Scheduling, Partitioning); Experimental Results; Conclusions.

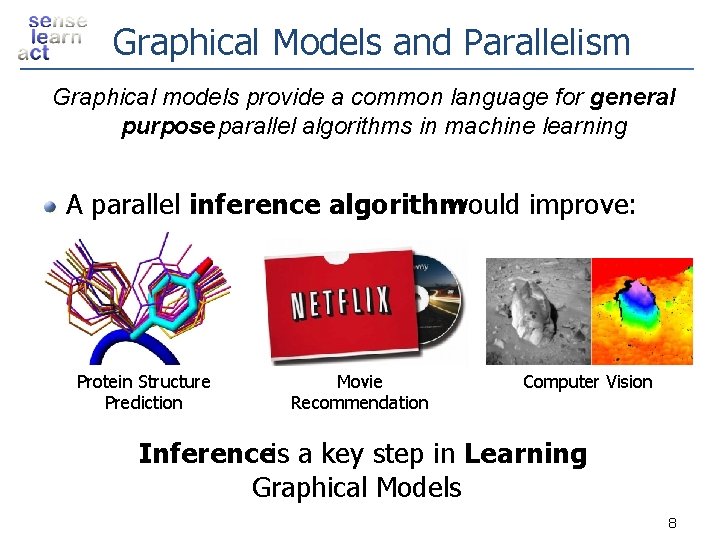

Graphical Models and Parallelism. Graphical models provide a common language for general-purpose parallel algorithms in machine learning. A parallel inference algorithm would improve protein structure prediction, movie recommendation, and computer vision. Inference is a key step in learning graphical models.

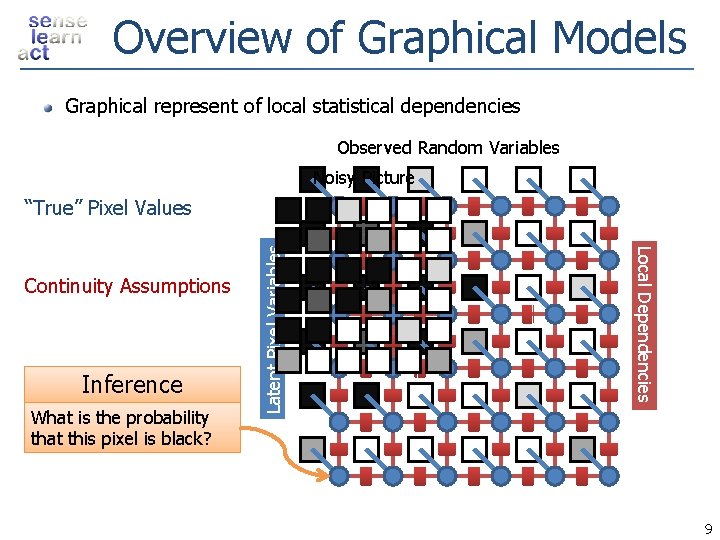

Overview of Graphical Models. Graphical representation of local statistical dependencies. Observed random variables: the noisy picture. Latent pixel variables: the “true” pixel values. Local dependencies: continuity assumptions. Inference: what is the probability that this pixel is black?

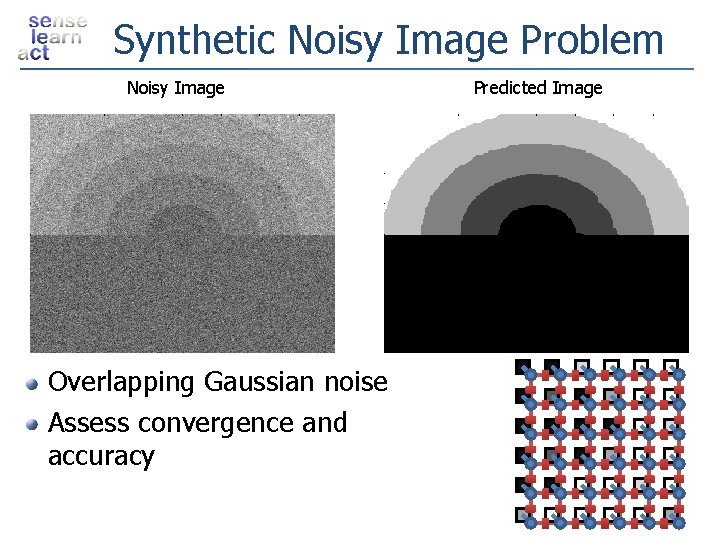

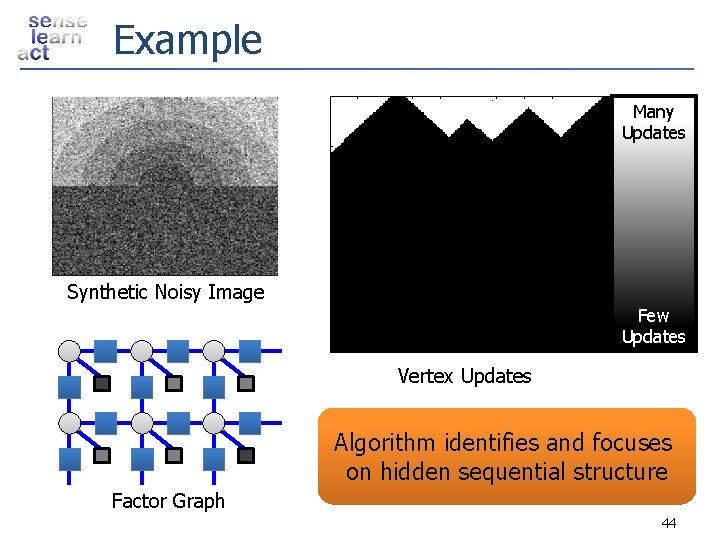

Synthetic Noisy Image Problem. Noisy image with overlapping Gaussian noise. Used to assess convergence and accuracy. [Figure panels: noisy image, predicted image.]

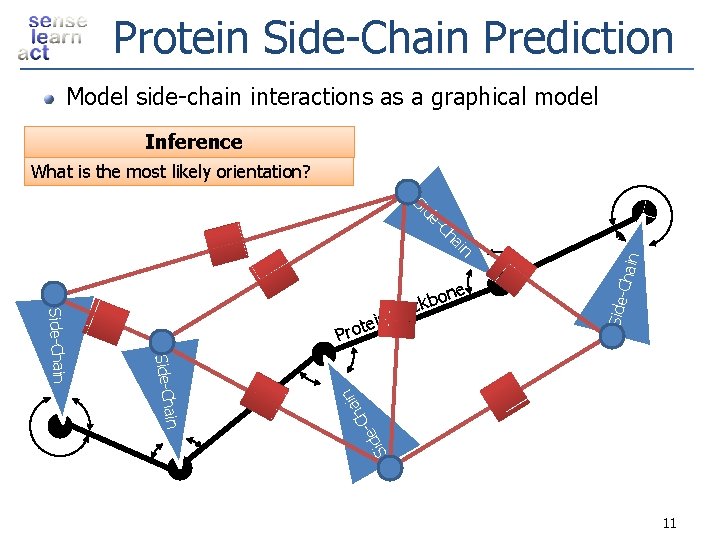

Protein Side-Chain Prediction. Model side-chain interactions as a graphical model. Inference: what is the most likely orientation? [Figure: protein backbone with attached side-chains.]

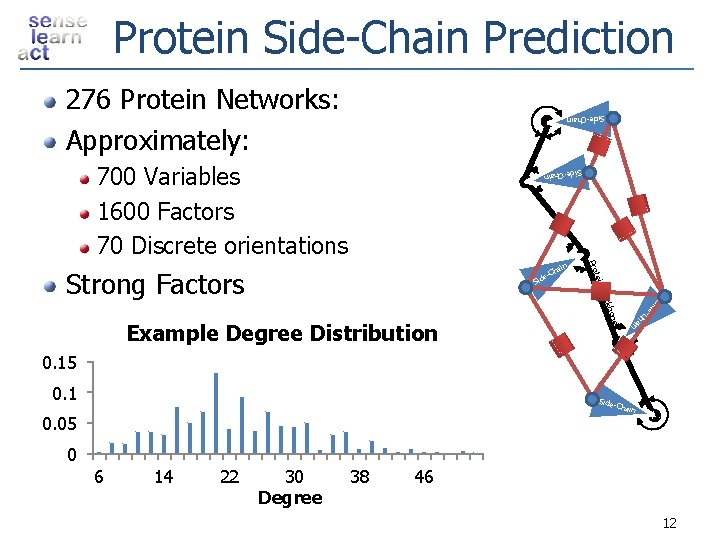

Protein Side-Chain Prediction. 276 protein networks. Approximately: 700 variables, 1600 factors, 70 discrete orientations. Strong factors. [Figures: protein backbone with side-chains; example degree distribution, with degree from 6 to 46 on the x-axis.]

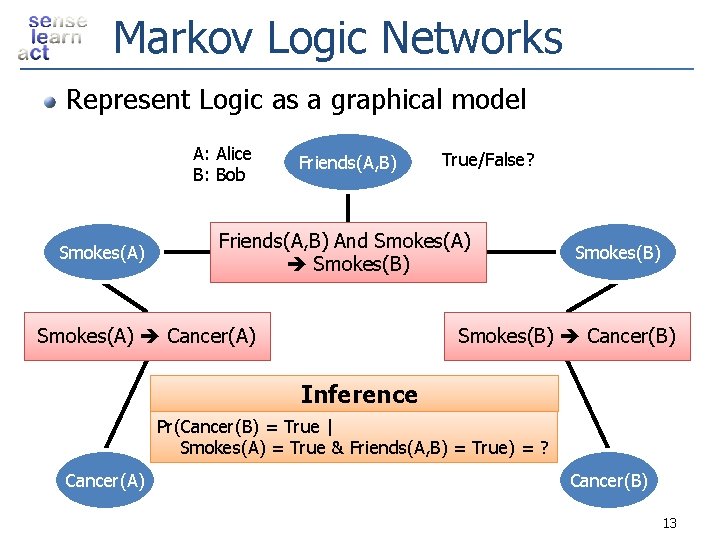

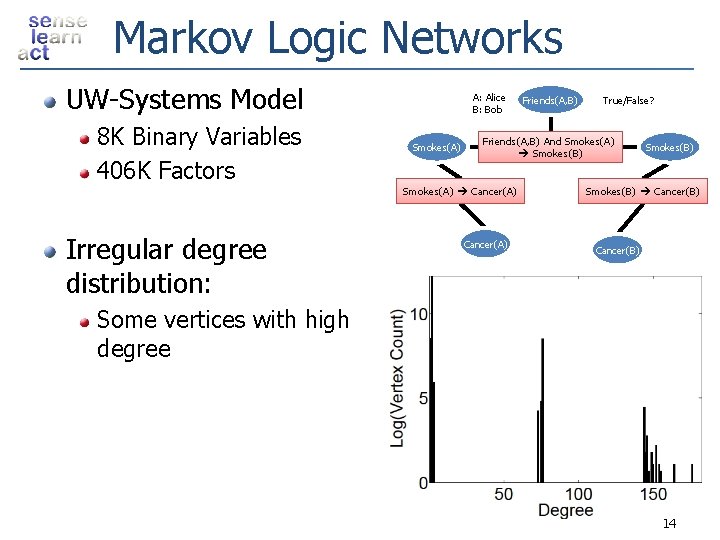

Markov Logic Networks. Represent logic as a graphical model. A: Alice, B: Bob. Each ground atom, such as Smokes(A), Friends(A, B), or Cancer(B), is a true/false variable. Factors connect Friends(A, B) and Smokes(A) to Smokes(B); Smokes(A) to Cancer(A); and Smokes(B) to Cancer(B). Inference: Pr(Cancer(B) = True | Smokes(A) = True & Friends(A, B) = True) = ?

Markov Logic Networks. UW-Systems model: 8K binary variables, 406K factors. Irregular degree distribution: some vertices with high degree.

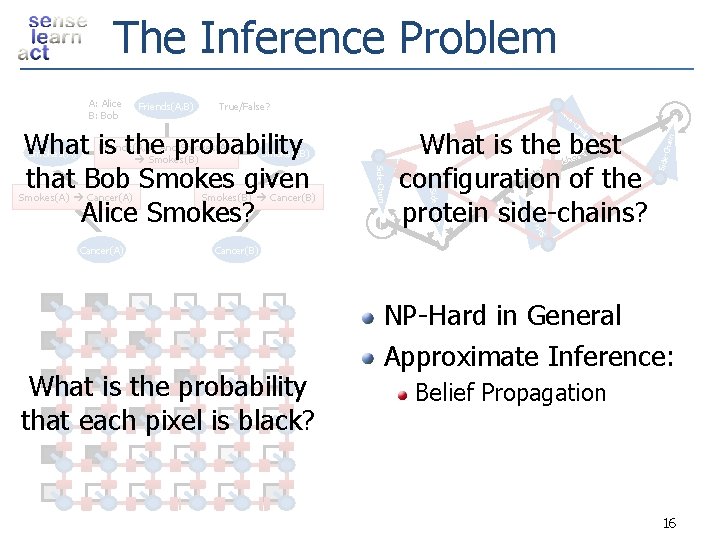

The Inference Problem. What is the probability that each pixel is black? What is the best configuration of the protein side-chains? What is the probability that Bob smokes given Alice smokes? NP-hard in general. Approximate inference: belief propagation.

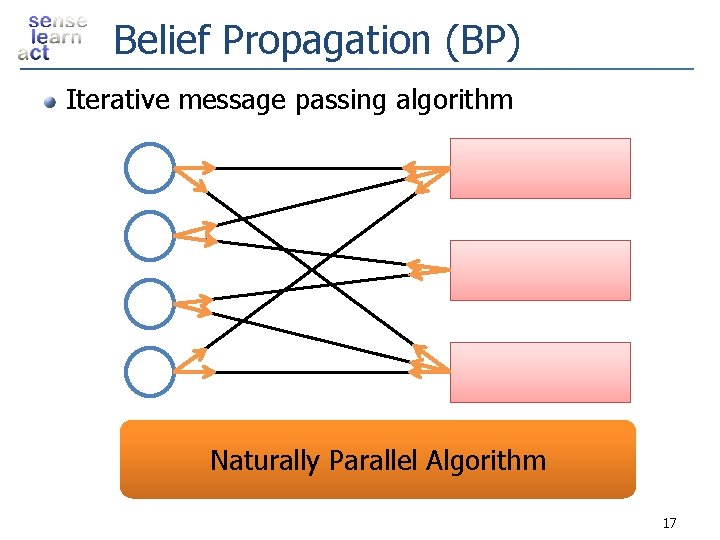

Belief Propagation (BP). Iterative message passing algorithm. Naturally parallel algorithm.

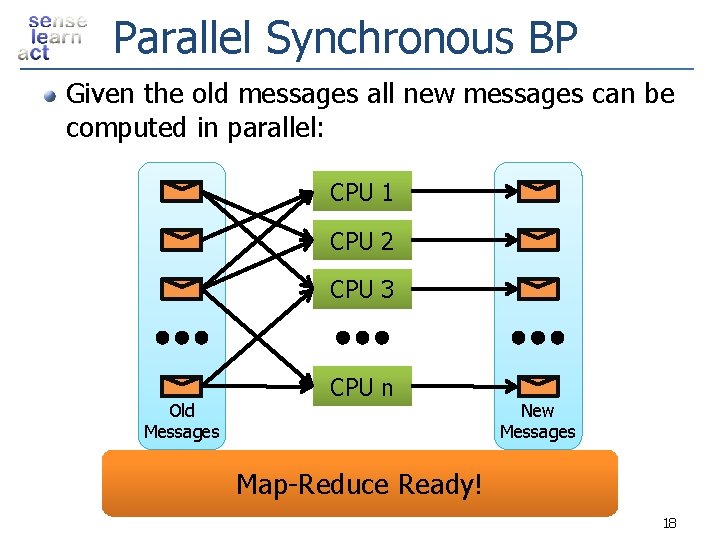

Parallel Synchronous BP. Given the old messages, all new messages can be computed in parallel: CPU 1, CPU 2, CPU 3, …, CPU n each read the old messages and write new messages. Map-Reduce ready!
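A minimal sketch of the synchronous schedule described above, assuming a chain of discrete variables with one shared, symmetric edge potential. The function names (`sync_bp_chain`, `brute_force_marginals`) and the example potentials are illustrative, not taken from the talk. Every new message is computed from the old messages only, which is what makes one iteration trivially parallel.

```python
from itertools import product


def normalize(v):
    total = sum(v)
    return [x / total for x in v]


def sync_bp_chain(node_pot, edge_pot, iterations):
    """Synchronous sum-product BP on a chain with a shared symmetric edge potential."""
    n, k = len(node_pot), len(node_pot[0])
    # msgs[(i, j)] is the message sent from vertex i to its neighbour j.
    msgs = {}
    for i in range(n - 1):
        msgs[(i, i + 1)] = [1.0 / k] * k
        msgs[(i + 1, i)] = [1.0 / k] * k

    for _ in range(iterations):
        new_msgs = {}
        # Each new message reads only OLD messages, so the passes of this loop
        # are independent and could be handed to different processors.
        for (i, j) in msgs:
            other = 2 * i - j  # the neighbour of i on the side opposite to j
            incoming = msgs.get((other, i), [1.0] * k)
            out = [
                sum(node_pot[i][xi] * edge_pot[xi][xj] * incoming[xi] for xi in range(k))
                for xj in range(k)
            ]
            new_msgs[(i, j)] = normalize(out)
        msgs = new_msgs

    beliefs = []
    for i in range(n):
        left = msgs.get((i - 1, i), [1.0] * k)
        right = msgs.get((i + 1, i), [1.0] * k)
        beliefs.append(normalize([node_pot[i][x] * left[x] * right[x] for x in range(k)]))
    return beliefs


def brute_force_marginals(node_pot, edge_pot):
    """Exact marginals by enumerating every joint assignment (tiny chains only)."""
    n, k = len(node_pot), len(node_pot[0])
    marg = [[0.0] * k for _ in range(n)]
    for assign in product(range(k), repeat=n):
        w = 1.0
        for i, x in enumerate(assign):
            w *= node_pot[i][x]
        for i in range(n - 1):
            w *= edge_pot[assign[i]][assign[i + 1]]
        for i, x in enumerate(assign):
            marg[i][x] += w
    return [normalize(m) for m in marg]


# Illustrative potentials: evidence at both ends of a 5-vertex chain.
node_pot = [[0.9, 0.1], [0.5, 0.5], [0.5, 0.5], [0.5, 0.5], [0.2, 0.8]]
edge_pot = [[2.0, 1.0], [1.0, 2.0]]
beliefs = sync_bp_chain(node_pot, edge_pot, iterations=len(node_pot) - 1)
exact = brute_force_marginals(node_pot, edge_pot)
```

On a chain of n vertices the beliefs match the exact marginals only once information has had n - 1 iterations to travel from one end to the other, even though each iteration recomputes every message.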

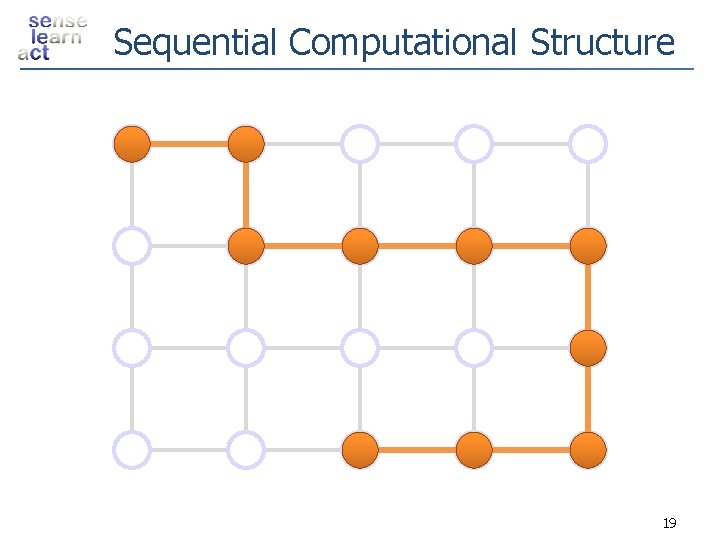

Sequential Computational Structure

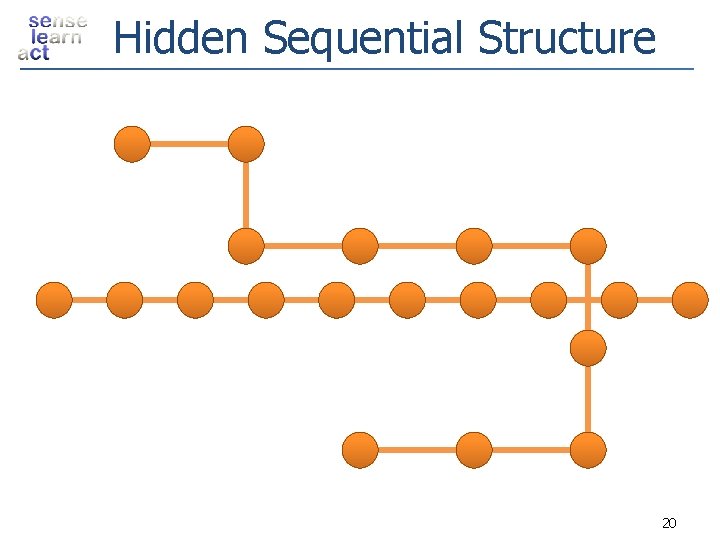

Hidden Sequential Structure

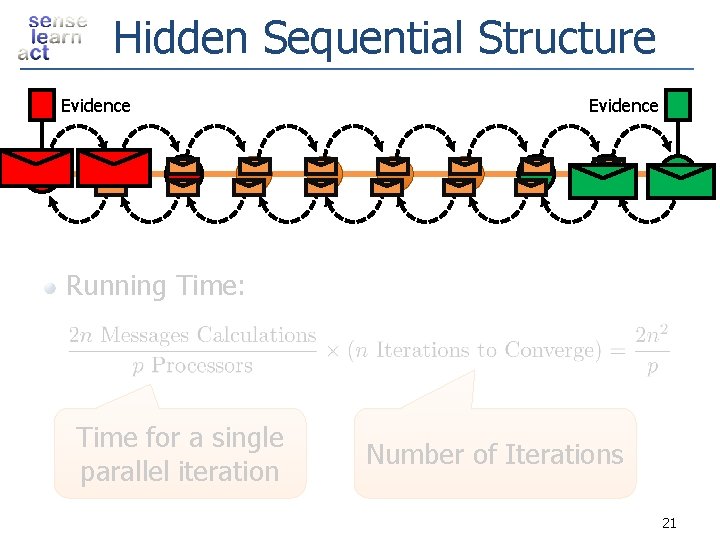

Hidden Sequential Structure. [Figure: chain graphical model with evidence.] Running time = (time for a single parallel iteration) × (number of iterations).

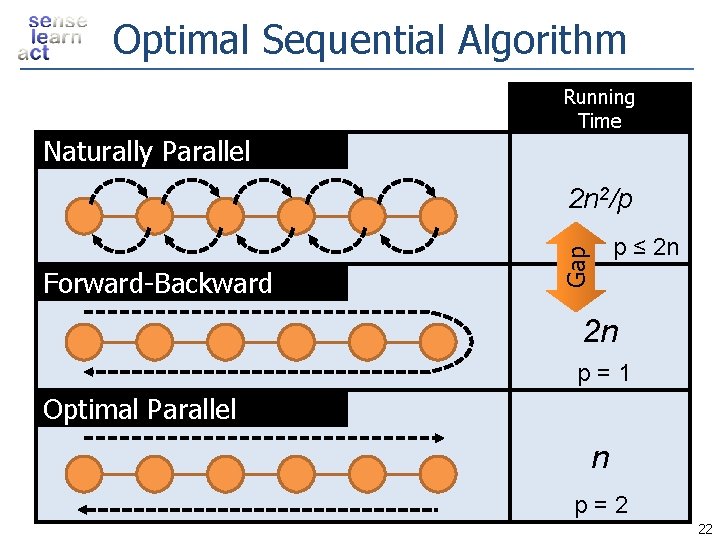

Optimal Sequential Algorithm. Running time: naturally parallel, 2n²/p (p ≤ 2n); forward-backward, 2n (p = 1); optimal parallel, n (p = 2). There is a gap between the naturally parallel algorithm and forward-backward.
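The running times on this slide can be compared with a few lines of arithmetic. This is a sketch under the assumption that the naturally parallel figure comes from about 2n message updates per iteration, shared by p processors, repeated for about n iterations; the function names are illustrative.

```python
def naturally_parallel_time(n, p):
    """Synchronous BP on a chain of n vertices: about 2n message updates per
    iteration shared by p processors, repeated for about n iterations."""
    if not 1 <= p <= 2 * n:
        raise ValueError("the slide's expression is stated for 1 <= p <= 2n")
    return 2 * n * n / p


def forward_backward_time(n):
    """One forward and one backward sweep on a single processor."""
    return 2 * n


def optimal_parallel_time(n):
    """Two processors, one sweeping in each direction."""
    return n


n = 1000
for p in (1, 10, 100, 1000, 2000):
    print(p, naturally_parallel_time(n, p), forward_backward_time(n))
```

Under these expressions the synchronous schedule needs p = n processors to match a single processor running forward-backward, and p = 2n processors to match two processors running the optimal schedule.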

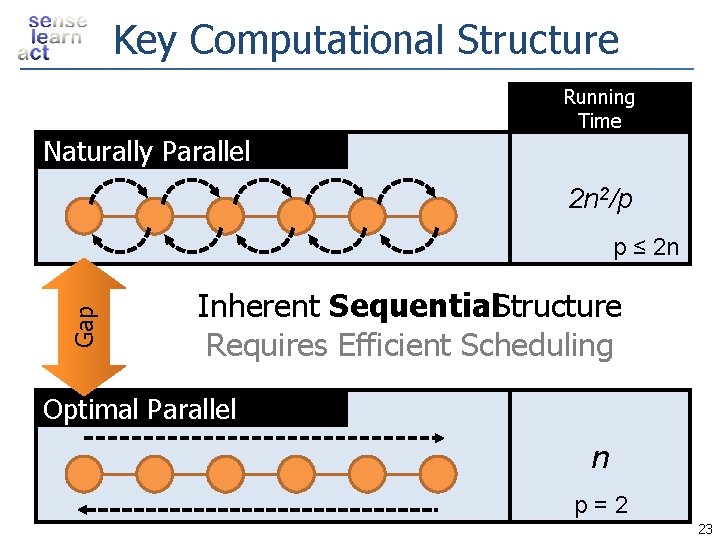

Key Computational Structure. Running time: naturally parallel, 2n²/p (p ≤ 2n); optimal parallel, n (p = 2). The gap reflects inherent sequential structure, which requires efficient scheduling.

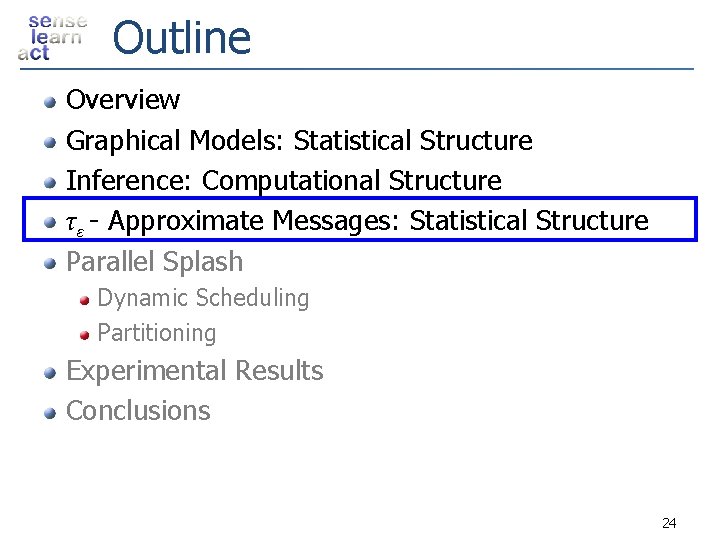

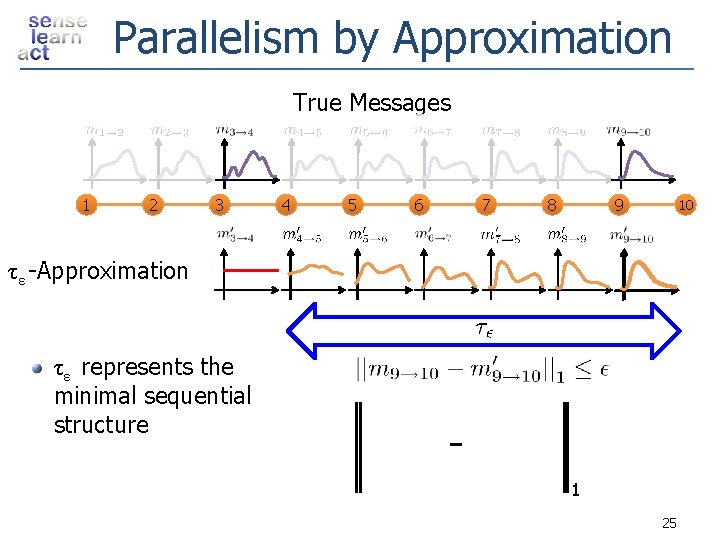

Parallelism by Approximation. [Figure: true messages along a chain of vertices 1 to 10, compared with a τε-approximation.] τε represents the minimal sequential structure.
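One way to make τε concrete, as a sketch rather than the talk's definition: on a chain where every vertex shares the same potentials, pass a uniform message τ steps and measure its L1 distance to the message that has travelled the whole chain. The smallest τ with error at most ε is reported. The function names, the homogeneous-chain assumption, and the example potentials are all illustrative.

```python
def pass_message(msg, node_pot, edge_pot):
    """Push a message one vertex further along the chain and renormalize."""
    k = len(msg)
    out = [sum(msg[a] * node_pot[a] * edge_pot[a][b] for a in range(k)) for b in range(k)]
    total = sum(out)
    return [x / total for x in out]


def l1_distance(a, b):
    return sum(abs(x - y) for x, y in zip(a, b))


def tau_epsilon(node_pot, edge_pot, eps, chain_length=200):
    """Smallest number of steps after which a message started from uniform is
    within eps (L1) of the message that travelled the full chain."""
    k = len(node_pot)
    uniform = [1.0 / k] * k
    true_msg = uniform
    for _ in range(chain_length):
        true_msg = pass_message(true_msg, node_pot, edge_pot)
    approx = uniform
    for tau in range(chain_length + 1):
        if l1_distance(approx, true_msg) <= eps:
            return tau
        approx = pass_message(approx, node_pot, edge_pot)
    return chain_length


node_pot = [0.6, 0.4]
weak = tau_epsilon(node_pot, [[1.1, 1.0], [1.0, 1.1]], eps=1e-3)
strong = tau_epsilon(node_pot, [[10.0, 1.0], [1.0, 10.0]], eps=1e-3)
print("weak coupling:", weak, "strong coupling:", strong)
```

With weak coupling, influence decays within a few vertices, so only a short stretch of the chain has to be processed sequentially; strong coupling stretches that distance.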

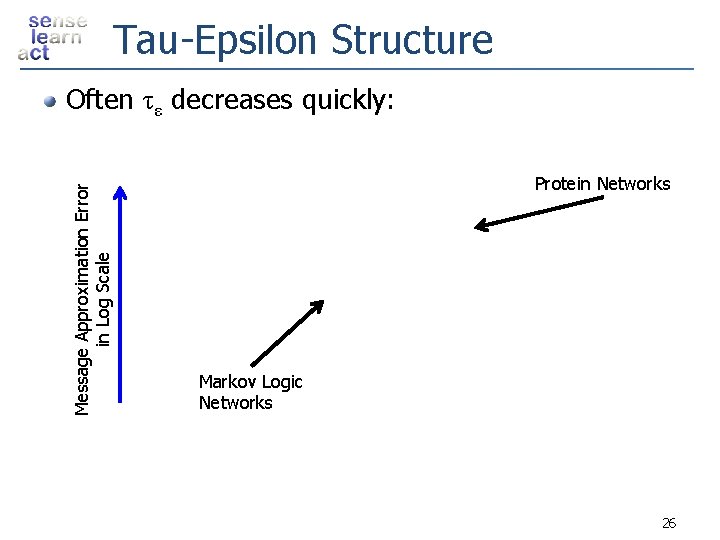

Tau-Epsilon Structure. [Plot: message approximation error in log scale, for protein networks and Markov logic networks.] Often τε decreases quickly.

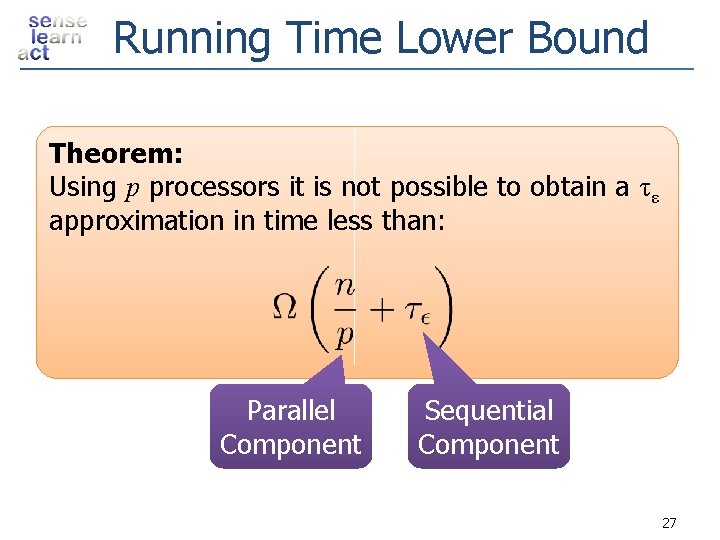

Running Time Lower Bound. Theorem: using p processors it is not possible to obtain a τε approximation in time less than Ω(n/p + τε), where n/p is the parallel component and τε is the sequential component.

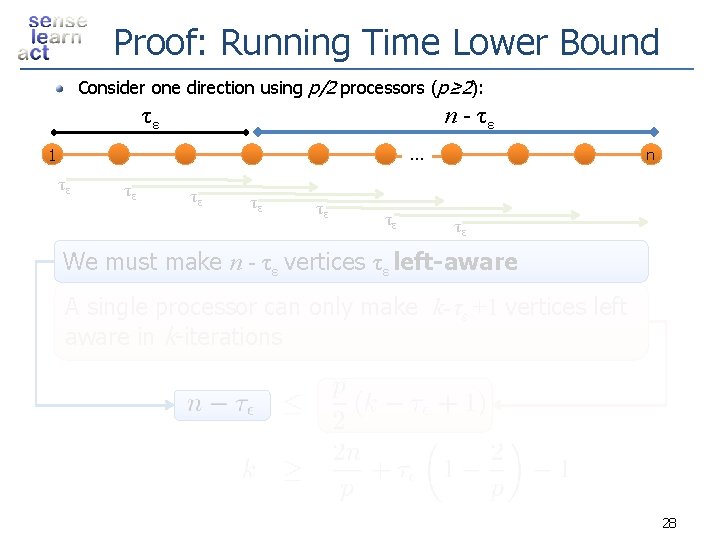

Proof: Running Time Lower Bound. Consider one direction using p/2 processors (p ≥ 2). We must make n − τε vertices τε left-aware. A single processor can only make k − τε + 1 vertices left-aware in k iterations.
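A sketch of how the two counting facts in this proof might combine, assuming the p/2 processors working in one direction each contribute at most k − τε + 1 left-aware vertices after k iterations, and taking τε ≤ n:

```latex
\[
\frac{p}{2}\,\bigl(k - \tau_\epsilon + 1\bigr) \;\ge\; n - \tau_\epsilon
\quad\Longrightarrow\quad
k \;\ge\; \frac{2\,(n - \tau_\epsilon)}{p} + \tau_\epsilon - 1 .
\]
```

The right-hand side grows with both n/p and τε, which is consistent with a lower bound of order n/p + τε.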

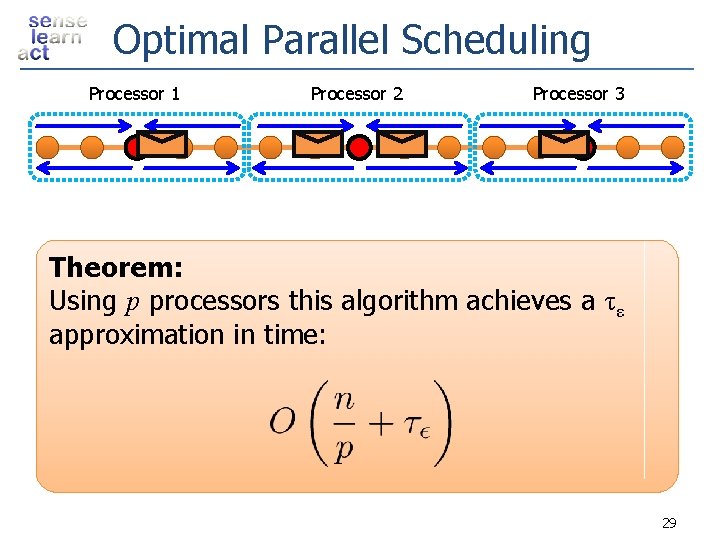

Optimal Parallel Scheduling. [Figure: the chain divided among Processor 1, Processor 2, and Processor 3.] Theorem: using p processors this algorithm achieves a τε approximation in time O(n/p + τε).

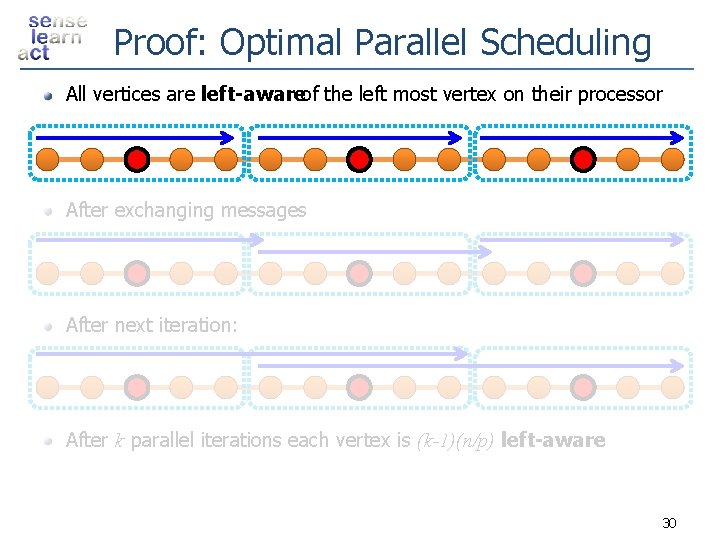

Proof: Optimal Parallel Scheduling. All vertices are left-aware of the leftmost vertex on their processor. [Figures: state after exchanging messages, and after the next iteration.] After k parallel iterations each vertex is (k − 1)(n/p) left-aware.

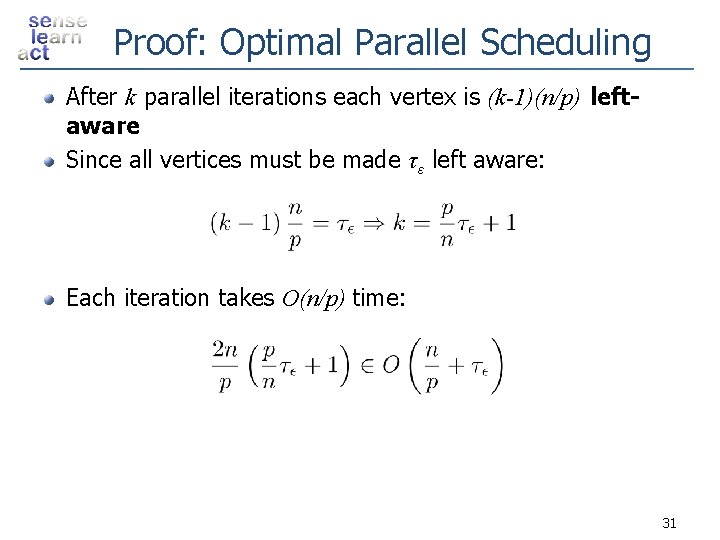

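Proof: Optimal Parallel Scheduling (continued). After k parallel iterations each vertex is (k − 1)(n/p) left-aware. Since all vertices must be made τε left-aware, we need (k − 1)(n/p) ≥ τε, that is, k ≥ τε·p/n + 1. Each iteration takes O(n/p) time, so the total time is O(n/p) · (τε·p/n + 1) = O(n/p + τε).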

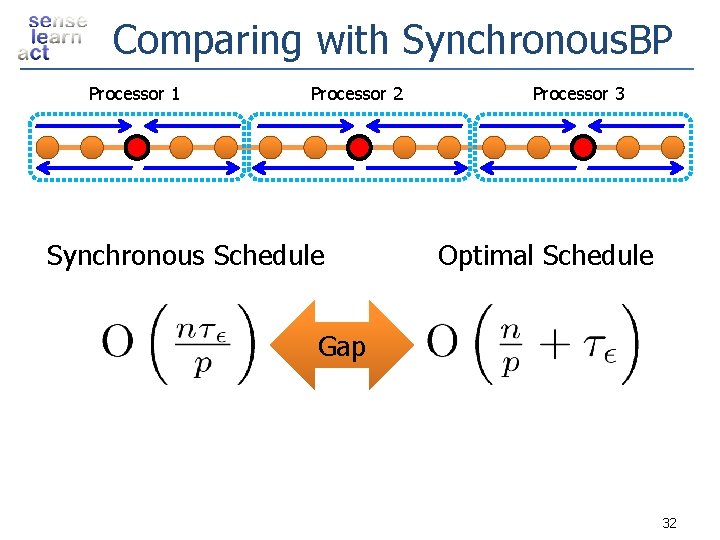

Comparing with Synchronous BP. [Figure: synchronous schedule versus optimal schedule across Processor 1, Processor 2, and Processor 3, with the gap between them.]

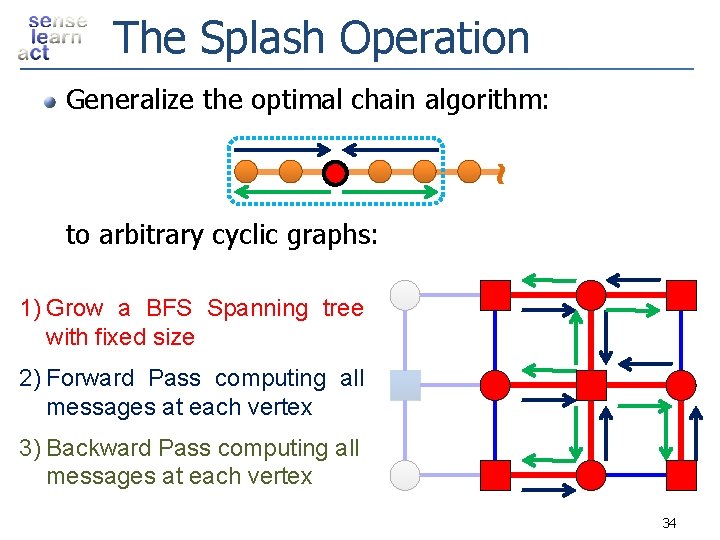

The Splash Operation. Generalize the optimal chain algorithm to arbitrary cyclic graphs:

1) Grow a BFS spanning tree with fixed size
2) Forward pass computing all messages at each vertex
3) Backward pass computing all messages at each vertex
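The three steps above can be sketched as an ordering over vertices. This assumes the forward pass visits the tree from the leaves to the root (reverse BFS order) and the backward pass returns from the root to the leaves; `splash_order` and the adjacency-list input are illustrative names, and the actual message computation at each vertex is left out.

```python
from collections import deque


def splash_order(adj, root, max_size):
    """Return the sequence of vertex updates for one Splash rooted at `root`.

    adj      : dict mapping each vertex to a list of its neighbours
    max_size : maximum number of vertices in the BFS spanning tree
    """
    visited = {root}
    bfs = []
    queue = deque([root])
    # 1) Grow a BFS spanning tree with a fixed maximum size.
    while queue and len(bfs) < max_size:
        v = queue.popleft()
        bfs.append(v)
        for u in adj[v]:
            if u not in visited:
                visited.add(u)
                queue.append(u)
    # 2) Forward pass: leaves towards the root (reverse BFS order).
    forward = bfs[::-1]
    # 3) Backward pass: root back out towards the leaves (BFS order).
    backward = bfs
    return forward + backward


# A 4-cycle: the BFS tree breaks the cycle, so the Splash is well defined.
adj = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [0, 2]}
order = splash_order(adj, root=0, max_size=3)
print(order)  # [3, 1, 0, 0, 1, 3]
```

On a chain with the root at one end and `max_size` equal to the chain length, this ordering reduces to a backward sweep followed by a forward sweep over the whole chain.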

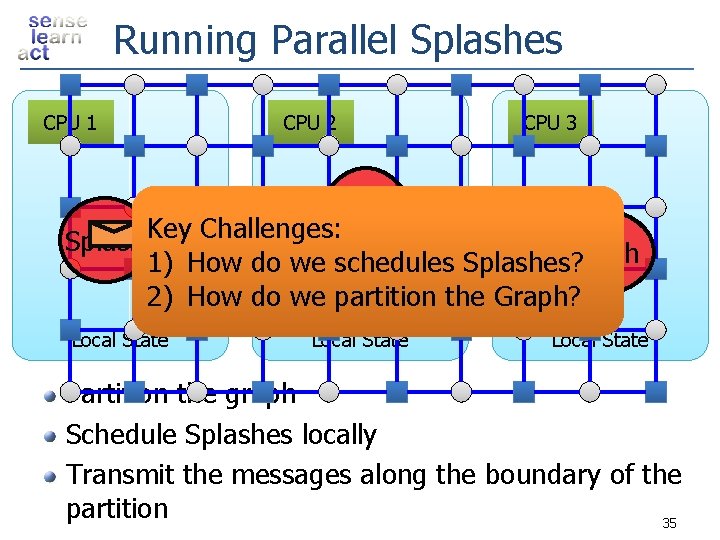

Running Parallel Splashes. CPU 1, CPU 2, and CPU 3 each hold local state and run their own Splashes. Partition the graph. Schedule Splashes locally. Transmit the messages along the boundary of the partition. Key challenges: 1) How do we schedule Splashes? 2) How do we partition the graph?

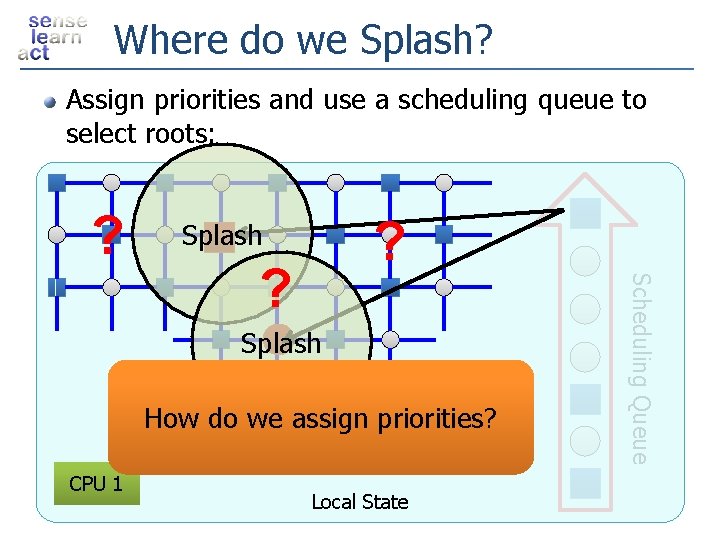

Where do we Splash? Assign priorities and use a scheduling queue to select roots. How do we assign priorities? [Figure: CPU 1 with local state and a scheduling queue of candidate roots.]

![Message Scheduling: Residual Belief Propagation](http://slidetodoc.com/presentation_image/48ccddb642c7c8fa53f0eeb7283deeb3/image-37.jpg)

Message Scheduling. Residual Belief Propagation [Elidan et al., UAI 06]: assign priorities based on change in inbound messages. Small change in a message: expensive no-op. Large change in a message: informative update.

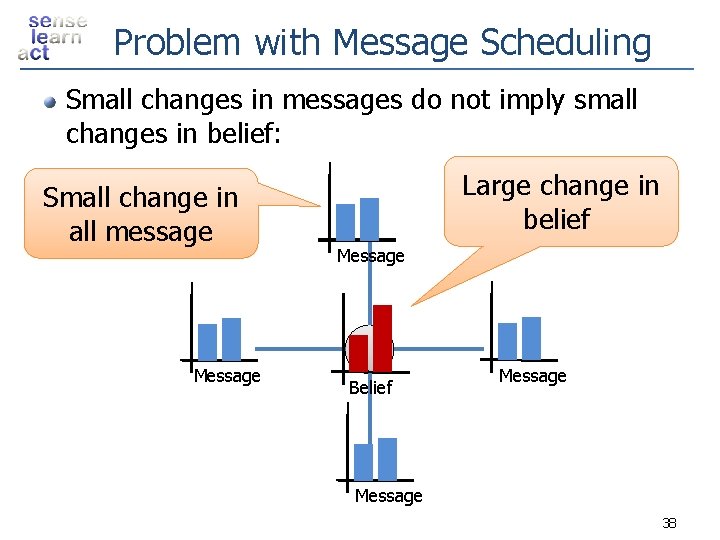

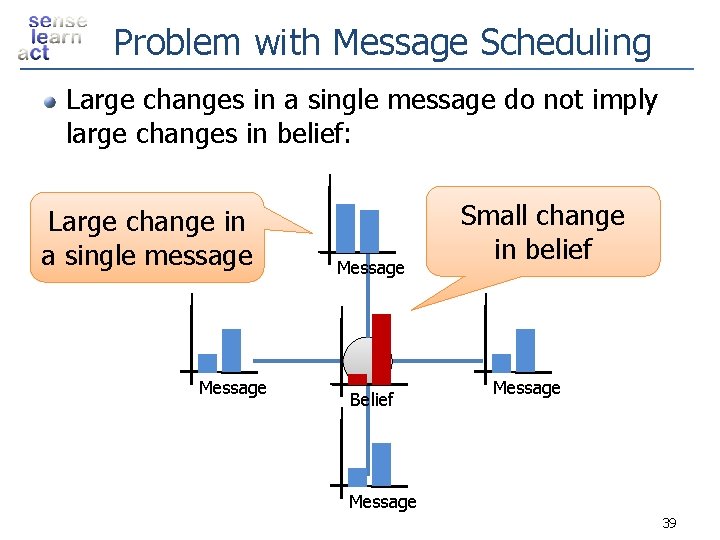

Problem with Message Scheduling. Small changes in messages do not imply small changes in belief: a small change in all messages can produce a large change in belief.

Problem with Message Scheduling. Large changes in a single message do not imply large changes in belief: a large change in a single message can produce only a small change in belief.

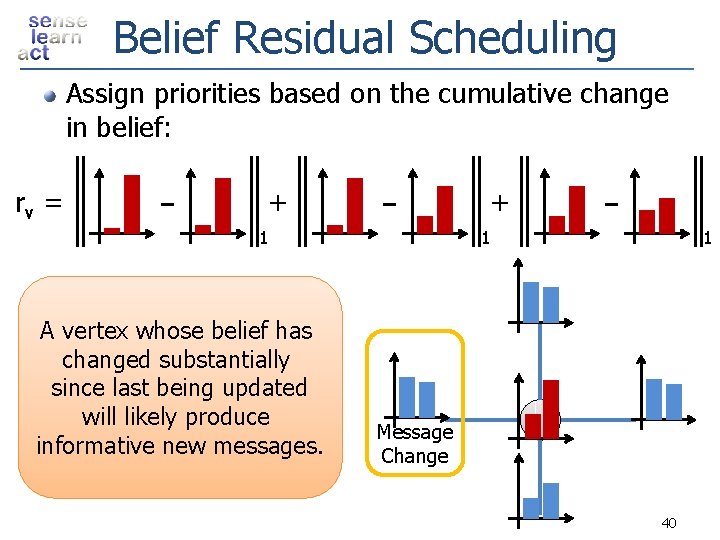

Belief Residual Scheduling. Assign priorities based on the cumulative change in belief: the residual r_v is the sum of the changes in belief caused by each message change since vertex v was last updated. A vertex whose belief has changed substantially since last being updated will likely produce informative new messages.
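A minimal sketch of a scheduling queue keyed by accumulated belief residual, assuming each change in belief is summarized as a single non-negative number (for example an L1 distance). The class name `BeliefResidualQueue` and its methods are hypothetical; stale heap entries are skipped lazily rather than removed.

```python
import heapq


class BeliefResidualQueue:
    """Priority queue that selects the vertex with the largest accumulated residual."""

    def __init__(self, vertices):
        self.residual = {v: 0.0 for v in vertices}
        self.heap = []  # entries are (-residual, vertex); may contain stale entries

    def belief_changed(self, v, change):
        """Accumulate the change in v's belief caused by one new inbound message."""
        self.residual[v] += abs(change)
        heapq.heappush(self.heap, (-self.residual[v], v))

    def pop_root(self):
        """Return the vertex with the largest residual and reset it to zero."""
        while self.heap:
            neg_r, v = heapq.heappop(self.heap)
            # Skip entries that no longer reflect the vertex's current residual.
            if -neg_r == self.residual[v] and self.residual[v] > 0.0:
                self.residual[v] = 0.0
                return v
        return None


queue = BeliefResidualQueue(["a", "b", "c"])
for _ in range(3):
    queue.belief_changed("a", 0.1)  # three small changes accumulate
queue.belief_changed("b", 0.25)     # one larger change
first = queue.pop_root()
print(first)
```

Here vertex "a" is selected before "b" because its three small changes add up to more than the single larger change, which is the case that scheduling on individual message changes would miss.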

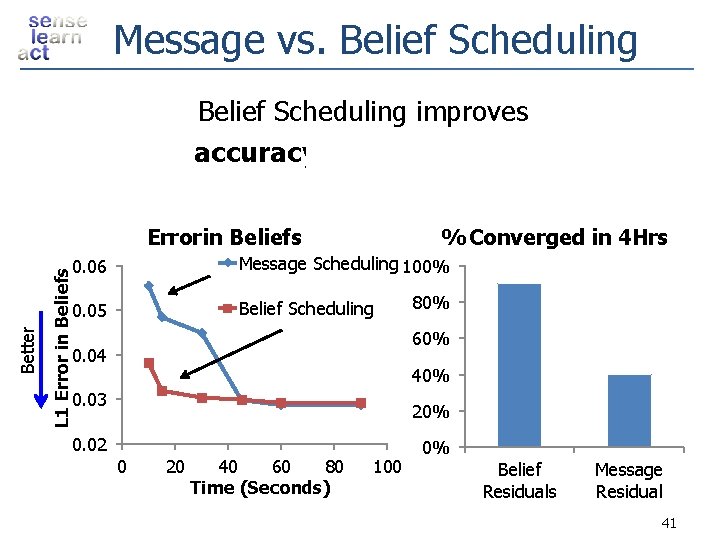

Message vs. Belief Scheduling. Belief scheduling improves accuracy and convergence. [Plots: L1 error in beliefs versus time (seconds) for message scheduling and belief scheduling; percentage converged in 4 hours for belief residuals and message residuals.]

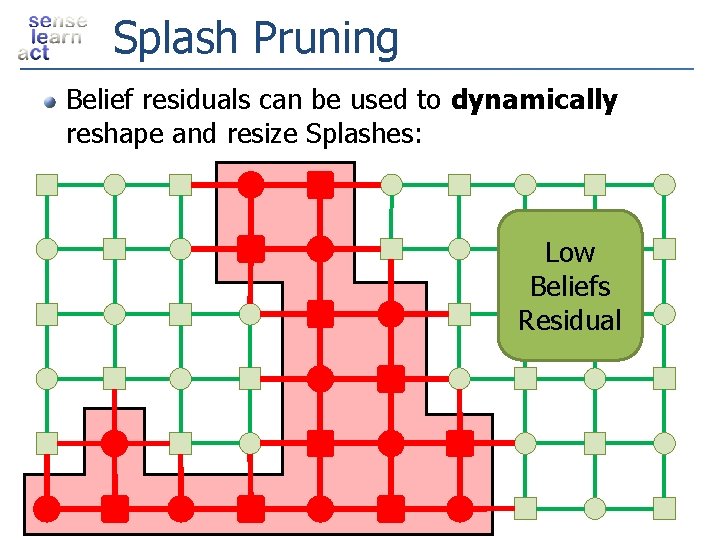

Splash Pruning. Belief residuals can be used to dynamically reshape and resize Splashes. [Figure label: low belief residual.]

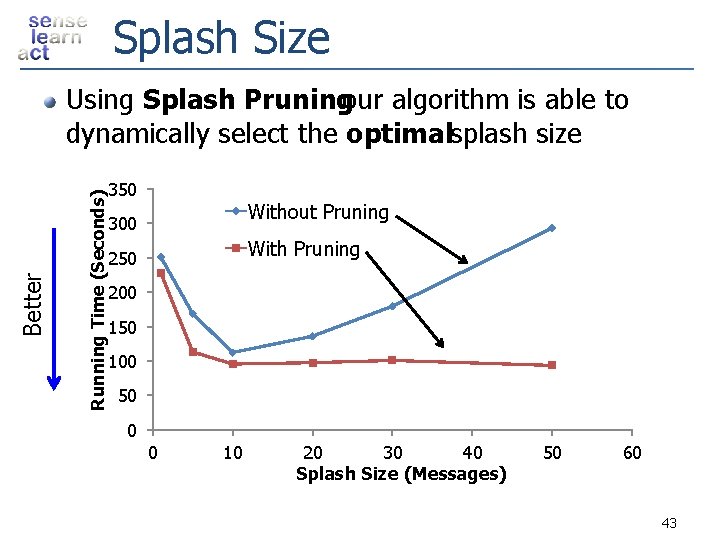

Splash Size. Using Splash pruning, our algorithm is able to dynamically select the optimal splash size. [Plot: running time (seconds) versus splash size (messages), with and without pruning.]

Example. Synthetic noisy image and its factor graph. [Figure: vertex updates, ranging from few updates to many updates.] The algorithm identifies and focuses on hidden sequential structure.

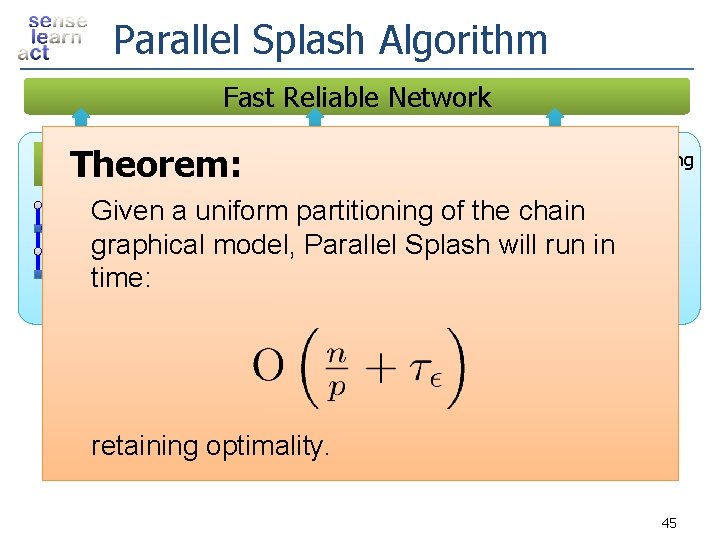

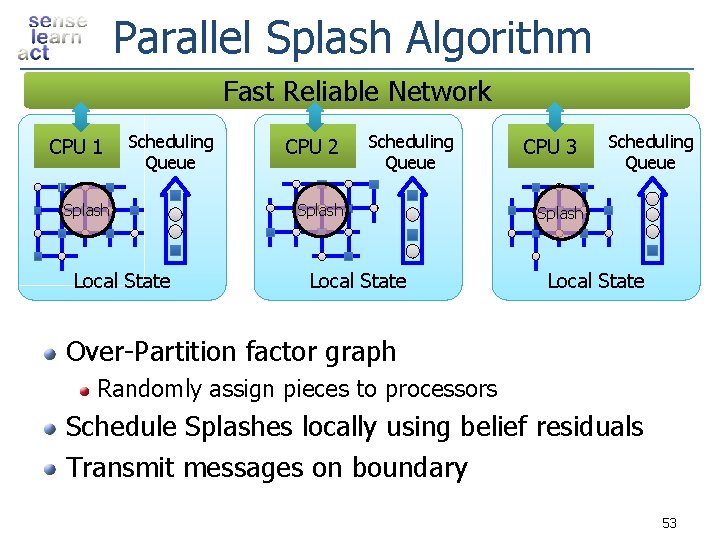

Parallel Splash Algorithm. CPU 1, CPU 2, and CPU 3 each hold local state, a scheduling queue, and Splashes, connected by a fast reliable network. Partition the factor graph over processors. Schedule Splashes locally using belief residuals. Transmit messages on the boundary. Theorem: given a uniform partitioning of the chain graphical model, Parallel Splash will run in time O(n/p + τε), retaining optimality.

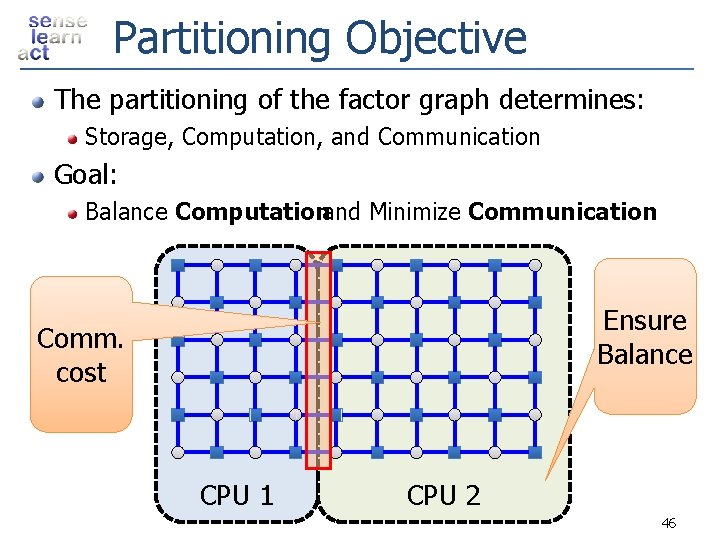

Partitioning Objective. The partitioning of the factor graph determines storage, computation, and communication. Goal: balance computation and minimize communication. [Figure: graph split between CPU 1 and CPU 2, labelled with balance and communication cost.]

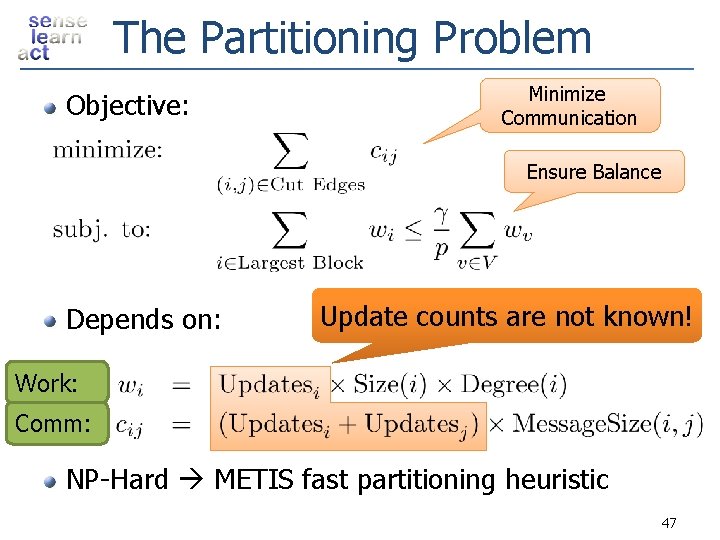

The Partitioning Problem. Objective: minimize communication while ensuring balance. Both the work and the communication terms depend on per-vertex update counts, which are not known! The problem is NP-hard; METIS is a fast partitioning heuristic.
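To illustrate why update counts matter, here is a sketch that scores a given partition. The cost definitions are assumptions for illustration, not the talk's formulas: work on a CPU is the sum of update counts of its vertices, imbalance is the maximum work divided by the mean work, and communication is the sum of update counts at both endpoints of every cut edge. `partition_cost` is a hypothetical helper.

```python
def partition_cost(edges, update_counts, owner, p):
    """Return (work imbalance, communication cost) for an assignment of vertices to CPUs.

    edges         : list of (u, v) pairs
    update_counts : dict vertex -> number of updates the vertex receives
    owner         : dict vertex -> CPU index in range(p)
    """
    work = [0] * p
    for v, count in update_counts.items():
        work[owner[v]] += count
    comm = 0
    for u, v in edges:
        if owner[u] != owner[v]:
            # Assumed cost model: every update at either endpoint crosses the cut.
            comm += update_counts[u] + update_counts[v]
    imbalance = max(work) / (sum(work) / p)
    return imbalance, comm


# A 4-vertex chain where the last vertex is updated far more often than the rest.
edges = [(0, 1), (1, 2), (2, 3)]
update_counts = {0: 1, 1: 1, 2: 1, 3: 5}
even_split = partition_cost(edges, update_counts, {0: 0, 1: 0, 2: 1, 3: 1}, p=2)
skewed_split = partition_cost(edges, update_counts, {0: 0, 1: 0, 2: 0, 3: 1}, p=2)
print(even_split, skewed_split)  # (1.5, 2) (1.25, 6)
```

In this toy case the split that looks even by vertex count has worse work balance but lower communication, and neither can be evaluated without knowing the update counts.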

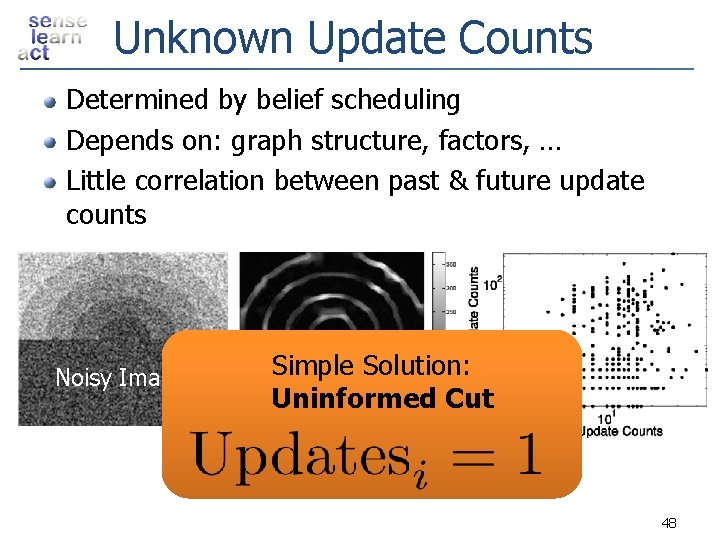

Unknown Update Counts. Determined by belief scheduling. Depends on graph structure, factors, … Little correlation between past and future update counts. Simple solution: uninformed cut. [Figure: noisy image and its update counts.]

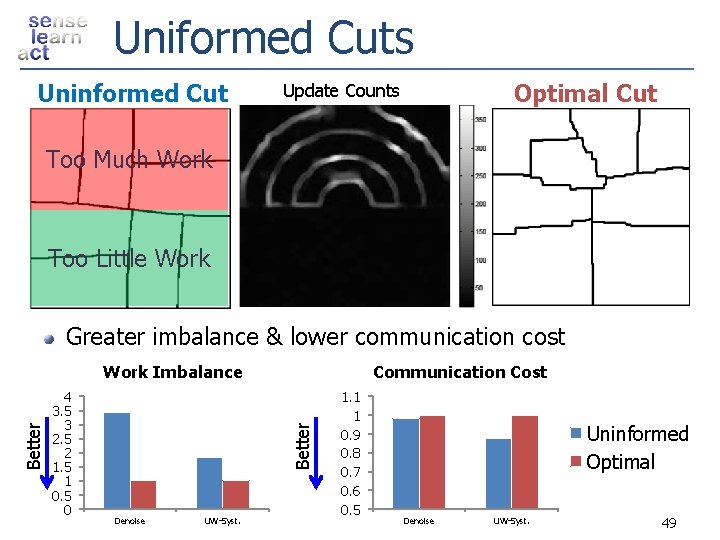

Uninformed Cuts. [Figure: uninformed cut versus optimal cut over the update counts, with regions of too much work and too little work.] Greater imbalance and lower communication cost. [Plots: work imbalance and communication cost for uninformed and optimal cuts on Denoise and UW-Systems.]

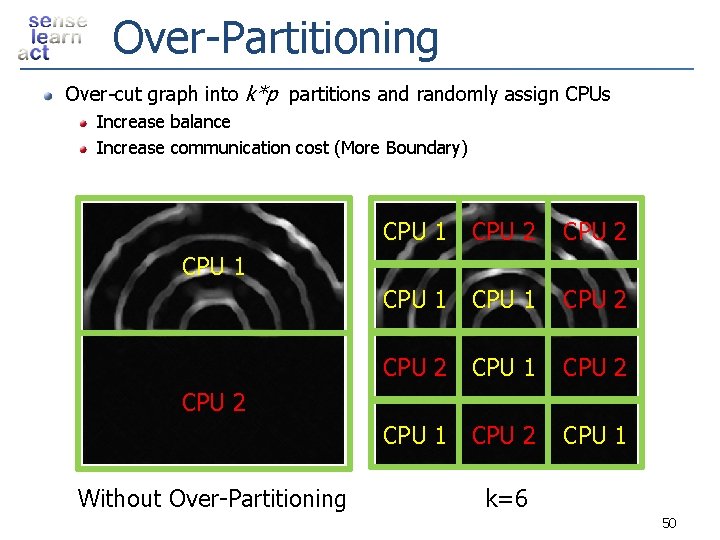

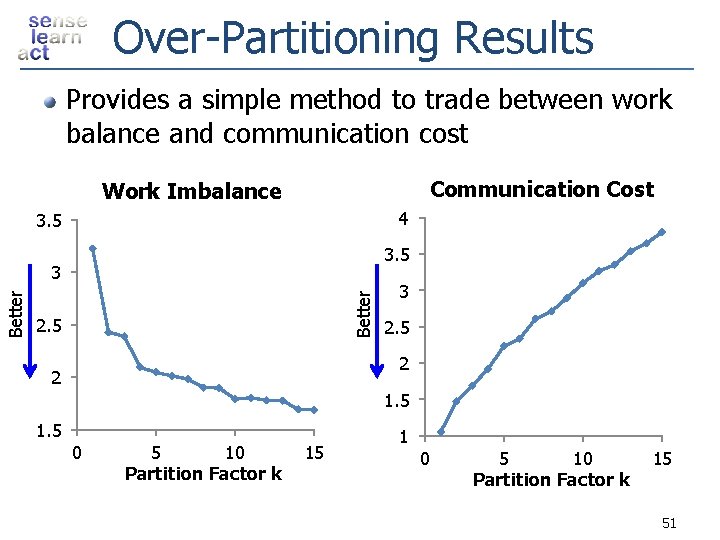

Over-Partitioning. Over-cut the graph into k*p partitions and randomly assign them to CPUs. This increases balance but also increases communication cost (more boundary). [Figure: without over-partitioning versus k = 6, with pieces alternating between CPU 1 and CPU 2.]
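A sketch of the over-partitioning step, with two labelled simplifications: the graph is cut into contiguous blocks of a vertex ordering as a stand-in for a real partitioner such as METIS, and the random assignment is done by shuffling the pieces and dealing them out so that every CPU receives the same number of pieces. `over_partition` and its parameters are illustrative.

```python
import random


def over_partition(vertices, p, k, seed=0):
    """Cut `vertices` into about k*p pieces and randomly assign pieces to p CPUs.

    Returns a dict mapping each vertex to a CPU index in range(p).
    """
    vertices = list(vertices)
    num_pieces = k * p
    size = -(-len(vertices) // num_pieces)  # ceiling division
    # Stand-in for a graph partitioner: contiguous blocks of the vertex ordering.
    pieces = [vertices[i:i + size] for i in range(0, len(vertices), size)]
    rng = random.Random(seed)
    rng.shuffle(pieces)  # random placement of pieces
    owner = {}
    for idx, piece in enumerate(pieces):
        for v in piece:
            owner[v] = idx % p  # deal shuffled pieces out to CPUs in turn
    return owner


owner = over_partition(range(120), p=4, k=3)
per_cpu = [sum(1 for v in owner if owner[v] == cpu) for cpu in range(4)]
print(per_cpu)  # [30, 30, 30, 30]
```

Because each CPU now holds several scattered pieces, a region that turns out to need many updates is less likely to sit entirely on one CPU, at the price of more boundary between CPUs.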

Over-Partitioning Results. Provides a simple method to trade between work balance and communication cost. [Plots: communication cost and work imbalance versus partition factor k, from 0 to 15.]

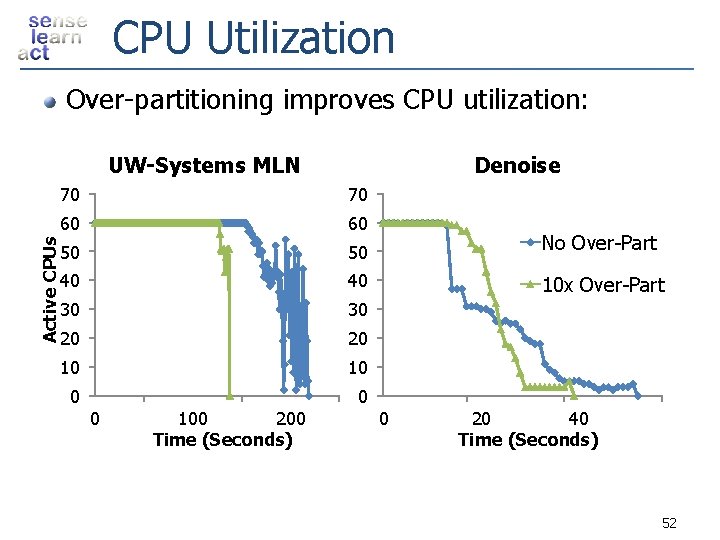

CPU Utilization. Over-partitioning improves CPU utilization. [Plots: active CPUs versus time (seconds) for UW-Systems MLN and Denoise, comparing no over-partitioning with 10x over-partitioning.]

Parallel Splash Algorithm. CPU 1, CPU 2, and CPU 3 each hold local state, a scheduling queue, and Splashes, connected by a fast reliable network. Over-partition the factor graph. Randomly assign pieces to processors. Schedule Splashes locally using belief residuals. Transmit messages on the boundary.

Experiments. Implemented in C++ using MPICH2 as a message passing API. Ran on the Intel OpenCirrus cluster: 120 processors; 15 nodes with 2 x quad-core Intel Xeon processors; Gigabit Ethernet switch. Tested on Markov logic networks obtained from Alchemy [Domingos et al. SSPR 08]. Present results on the largest (UW-Systems) and smallest (UW-Languages) MLNs.

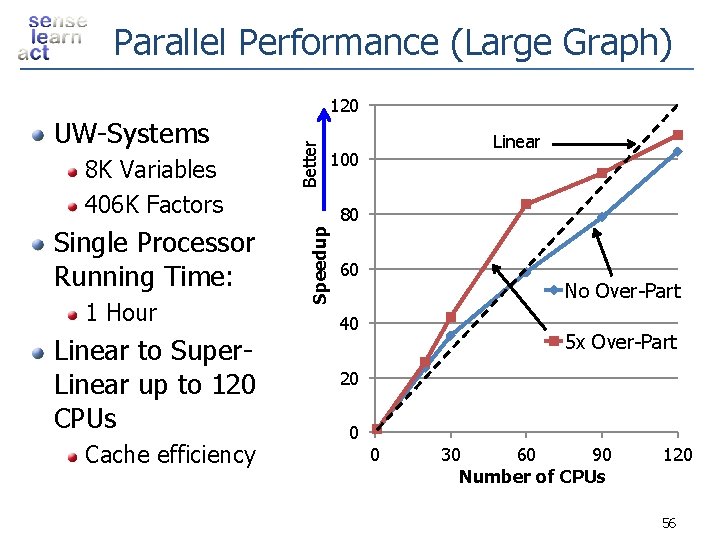

Parallel Performance (Large Graph). UW-Systems: 8K variables, 406K factors. Single-processor running time: 1 hour. Linear to super-linear speedup up to 120 CPUs (cache efficiency). [Plot: speedup versus number of CPUs, 0 to 120, for no over-partitioning and 5x over-partitioning, against a linear reference.]

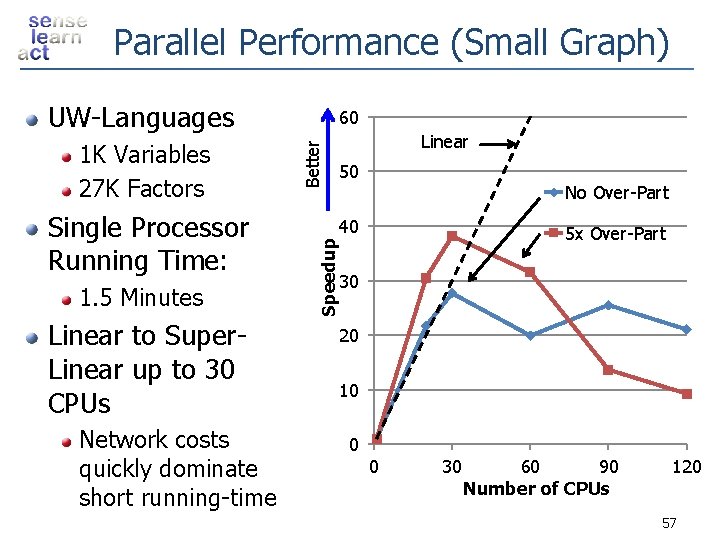

Parallel Performance (Small Graph). UW-Languages: 1K variables, 27K factors. Single-processor running time: 1.5 minutes. Linear to super-linear speedup up to 30 CPUs. Network costs quickly dominate the short running time. [Plot: speedup versus number of CPUs, 0 to 120, for no over-partitioning and 5x over-partitioning, against a linear reference.]

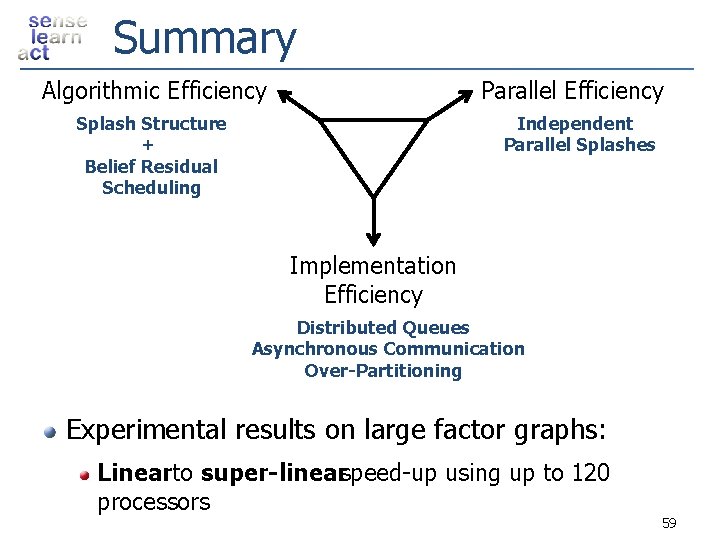

Summary. Algorithmic efficiency: Splash structure + belief residual scheduling. Parallel efficiency: independent parallel Splashes. Implementation efficiency: distributed queues, asynchronous communication, over-partitioning. Experimental results on large factor graphs: linear to super-linear speed-up using up to 120 processors.

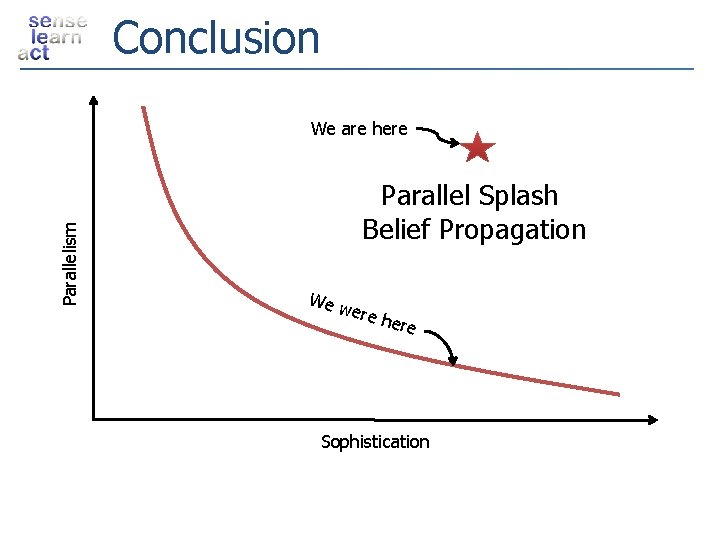

Conclusion. [Chart: parallelism versus sophistication.] We were here. With Parallel Splash Belief Propagation, we are here.

Questions

Protein Results

3D Video Task

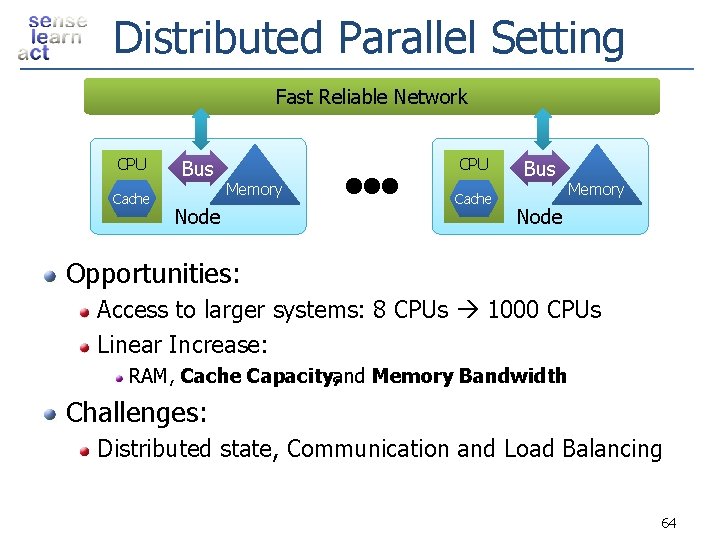

Distributed Parallel Setting. [Figure: nodes, each with CPUs, cache, bus, and memory, connected by a fast reliable network.] Opportunities: access to larger systems (8 CPUs to 1000 CPUs); linear increase in RAM, cache capacity, and memory bandwidth. Challenges: distributed state, communication, and load balancing.
