
Parallel Programming with PVM

Prof. Sivarama Dandamudi, School of Computer Science, Carleton University

Parallel Algorithm Models

- Five basic models
  - Data parallel model
  - Task graph model
  - Work pool model
  - Master-slave model
  - Pipeline model
- Hybrid models

Carleton University © S. Dandamudi

Parallel Algorithm Models (cont’d)

- Data parallel model
  - One of the simplest of all the models
  - Tasks are statically mapped onto processors
  - Each task performs a similar operation on different data
    - Called the data parallelism model
  - Work may be done in phases
    - Operations in different phases may be different
  - Ex: Matrix multiplication

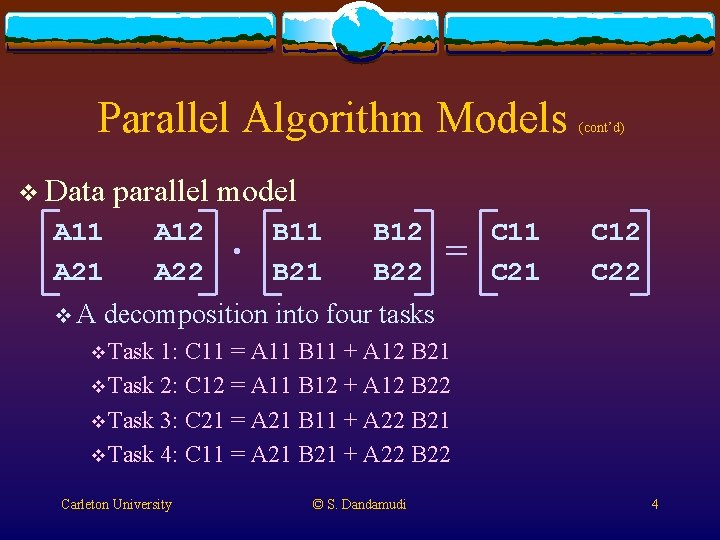

Parallel Algorithm Models (cont’d)

- Data parallel model

$$
\begin{pmatrix} A_{11} & A_{12} \\ A_{21} & A_{22} \end{pmatrix}
\cdot
\begin{pmatrix} B_{11} & B_{12} \\ B_{21} & B_{22} \end{pmatrix}
=
\begin{pmatrix} C_{11} & C_{12} \\ C_{21} & C_{22} \end{pmatrix}
$$

- A decomposition into four tasks
  - Task 1: $C_{11} = A_{11}B_{11} + A_{12}B_{21}$
  - Task 2: $C_{12} = A_{11}B_{12} + A_{12}B_{22}$
  - Task 3: $C_{21} = A_{21}B_{11} + A_{22}B_{21}$
  - Task 4: $C_{22} = A_{21}B_{12} + A_{22}B_{22}$
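The four block tasks can be sketched sequentially in C. This assumes square n×n matrices stored row-major with n even, so each block is (n/2)×(n/2). The names `block_task` and `matmul_four_tasks` are hypothetical. In a data-parallel run, each call to `block_task` would be mapped statically onto its own processor.

```c
/* Compute one output block C[bi][bj] = A[bi][0]*B[0][bj] + A[bi][1]*B[1][bj].
 * Matrices are n x n, row-major; blocks are h x h with h = n/2.
 * Each (bi, bj) pair is one independent task of the decomposition. */
void block_task(int n, const double *A, const double *B, double *C,
                int bi, int bj)
{
    int h = n / 2;
    for (int i = bi * h; i < (bi + 1) * h; i++)
        for (int j = bj * h; j < (bj + 1) * h; j++) {
            double s = 0.0;
            /* k in [0,h) gives A[bi][0]*B[0][bj]; k in [h,n) gives A[bi][1]*B[1][bj]. */
            for (int k = 0; k < n; k++)
                s += A[i * n + k] * B[k * n + j];
            C[i * n + j] = s;
        }
}

/* Run the four tasks. They write disjoint blocks of C, so any order
 * (or any assignment to processors) gives the same result. */
void matmul_four_tasks(int n, const double *A, const double *B, double *C)
{
    block_task(n, A, B, C, 0, 0);  /* Task 1: C11 */
    block_task(n, A, B, C, 0, 1);  /* Task 2: C12 */
    block_task(n, A, B, C, 1, 0);  /* Task 3: C21 */
    block_task(n, A, B, C, 1, 1);  /* Task 4: C22 */
}
```

No task reads another task's output, so no communication is needed between them once A and B are distributed.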

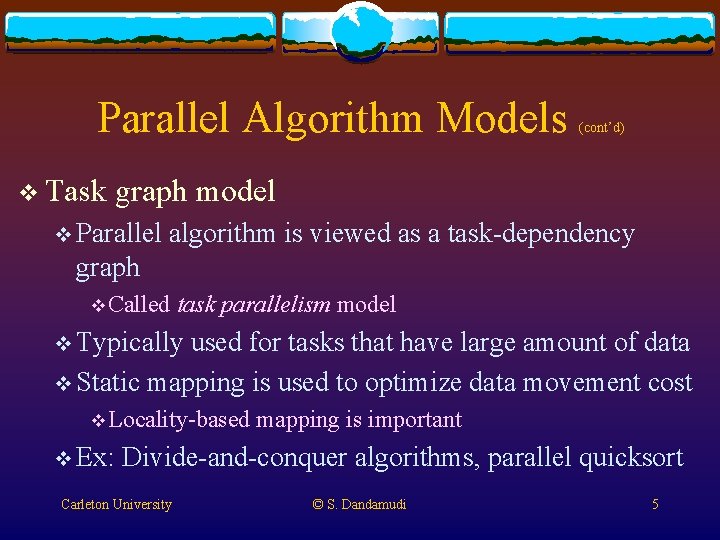

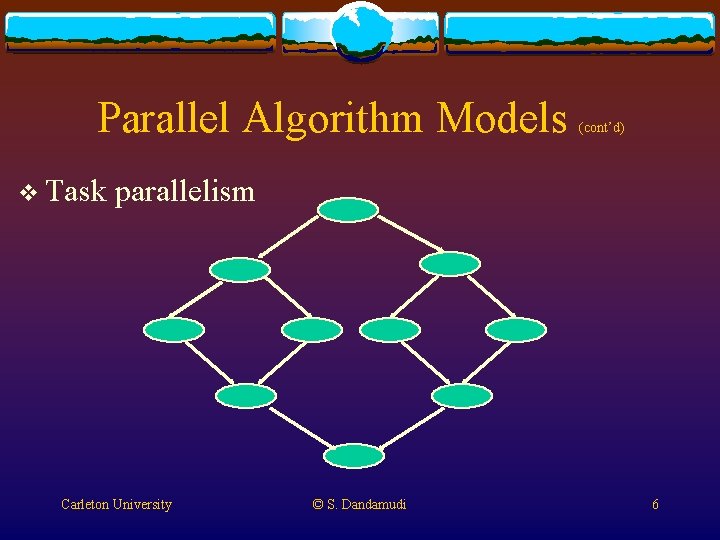

Parallel Algorithm Models (cont’d)

- Task graph model
  - The parallel algorithm is viewed as a task-dependency graph
  - Called the task parallelism model
  - Typically used for tasks that have a large amount of data
  - Static mapping is used to optimize data-movement cost
    - Locality-based mapping is important
  - Ex: Divide-and-conquer algorithms, parallel quicksort
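Quicksort can be written sequentially in a way that exposes its task graph. This sketch assumes an `int` array and Lomuto partitioning; the partition scheme is our choice, not taken from the slides. After each partition step, the two recursive calls touch disjoint subarrays. That makes them independent nodes of the task-dependency graph, so they could run on different processors. The hypothetical `tasks` counter only records how many such nodes are created.

```c
/* Swap two ints. */
static void swap_int(int *a, int *b) { int t = *a; *a = *b; *b = t; }

/* Lomuto partition of a[lo..hi] around a[hi]; returns the pivot's final index. */
static int partition(int *a, int lo, int hi)
{
    int pivot = a[hi], i = lo;
    for (int j = lo; j < hi; j++)
        if (a[j] < pivot) swap_int(&a[i++], &a[j]);
    swap_int(&a[i], &a[hi]);
    return i;
}

/* Each call is one node of the task graph. Its two child calls operate on
 * the disjoint ranges a[lo..p-1] and a[p+1..hi], so neither depends on the
 * other; only the parent's partition step must finish first. */
void task_quicksort(int *a, int lo, int hi, int *tasks)
{
    (*tasks)++;
    if (lo >= hi) return;
    int p = partition(a, lo, hi);
    task_quicksort(a, lo, p - 1, tasks);   /* independent child task */
    task_quicksort(a, p + 1, hi, tasks);   /* independent child task */
}
```

In a locality-aware mapping, a child task would usually stay on the processor that already holds its subarray, which is the data-movement concern the slide mentions.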

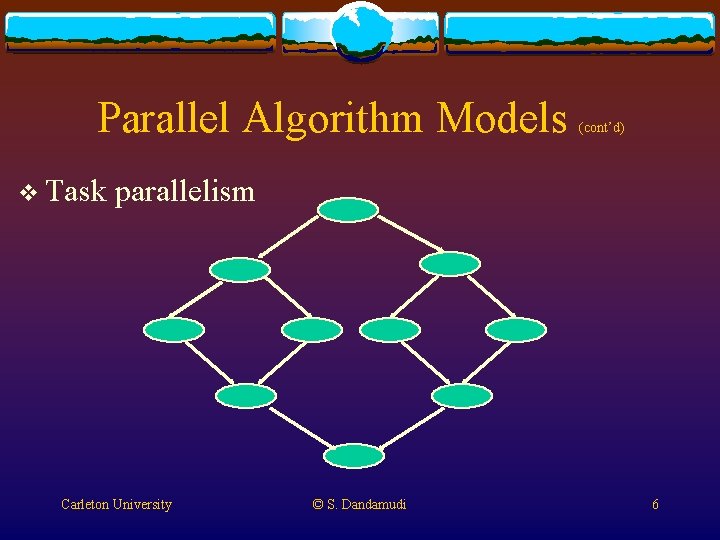

Parallel Algorithm Models (cont’d)

- Task parallelism

Parallel Algorithm Models (cont’d)

- Work pool model
  - Dynamic mapping of tasks onto processors
    - Important for load balancing
  - Used on message-passing systems
    - When the data associated with a task is relatively small
  - Granularity of tasks
    - Too small: overhead in accessing tasks can increase
    - Too big: load imbalance
  - Ex: Parallelization of loops by chunk scheduling
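Chunk scheduling can be illustrated with a sequential simulation of a work pool. It assumes a loop of `n` iterations handed out in fixed-size chunks from a shared counter. The `WorkPool` type, `next_chunk`, and `simulate_pool` are hypothetical names. On a real machine, the counter would live with a master process or behind a lock. Each idle worker would request the next chunk when it finishes its current one.

```c
#include <stdbool.h>

/* Shared pool of loop iterations [next, n), handed out `chunk` at a time. */
typedef struct {
    long next;   /* first iteration not yet handed out */
    long n;      /* total number of iterations */
    long chunk;  /* iterations per request (task granularity) */
} WorkPool;

/* Hand out the next chunk [*start, *end). Returns false when the pool is
 * empty. The last chunk may be shorter than pool->chunk. */
bool next_chunk(WorkPool *pool, long *start, long *end)
{
    if (pool->next >= pool->n) return false;
    *start = pool->next;
    *end = pool->next + pool->chunk;
    if (*end > pool->n) *end = pool->n;
    pool->next = *end;
    return true;
}

/* Simulate p >= 1 workers pulling chunks; worker w needs cost[w] time units
 * per iteration. The worker that becomes idle first takes the next chunk
 * (dynamic mapping). busy[] is caller-provided scratch of length p;
 * owner[i] records which worker ran iteration i. Returns the makespan. */
long simulate_pool(WorkPool *pool, int p, const long *cost,
                   long *busy, int *owner)
{
    long start, end, makespan = 0;
    for (int w = 0; w < p; w++) busy[w] = 0;
    while (true) {
        int w = 0;                       /* earliest-idle worker */
        for (int v = 1; v < p; v++)
            if (busy[v] < busy[w]) w = v;
        if (!next_chunk(pool, &start, &end)) break;
        for (long i = start; i < end; i++) owner[i] = w;
        busy[w] += (end - start) * cost[w];
    }
    for (int w = 0; w < p; w++)
        if (busy[w] > makespan) makespan = busy[w];
    return makespan;
}
```

For example, with 100 iterations, chunks of 10, and per-iteration costs {1, 1, 4}, this simulation finishes at time 50. A static split into three equal blocks would keep the slow worker busy for at least 4 × 33 = 132 units. A smaller `chunk` typically balances load better but needs more requests, which is the granularity trade-off above.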

Parallel Algorithm Models (cont’d)

- Master-slave model
  - One or more master processes generate work and allocate it to worker processes
  - Also called the manager-worker model
  - Suitable for both shared-memory and message-passing systems
  - The master can potentially become a bottleneck
  - Granularity of tasks is important

Parallel Algorithm Models (cont’d)

- Pipeline model
  - A stream of data passes through a series of processors
    - Each process performs some task on the data
  - Also called the stream parallelism model
  - Uses a producer-consumer relationship
    - Overlapped execution
  - Useful in applications such as database query processing
  - Potential problem
    - One process can delay the whole pipeline
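A small C sketch shows why one slow stage can delay the whole pipeline. It assumes identical items, unlimited buffering between stages, and stage j taking t[j] time units per item. Under that model, stage j can start item i only after it has finished item i-1 and stage j-1 has finished item i. The last item then completes at the sum of the stage times plus (n - 1) times the largest stage time. `pipeline_time` and `MAX_STAGES` are hypothetical names.

```c
#define MAX_STAGES 16

/* Completion time of the last of n_items items through s stages, where
 * stage j takes t[j] per item. Returns -1 if s is out of range. */
long pipeline_time(const long *t, int s, long n_items)
{
    long f[MAX_STAGES] = {0};  /* f[j] = time stage j finished its latest item */
    if (s < 1 || s > MAX_STAGES) return -1;
    for (long i = 0; i < n_items; i++) {
        long ready = 0;        /* all input items are available at time 0 */
        for (int j = 0; j < s; j++) {
            long start = f[j] > ready ? f[j] : ready;
            f[j] = start + t[j];
            ready = f[j];      /* item i may now enter stage j+1 */
        }
    }
    return f[s - 1];
}
```

For stage times {2, 5, 3} and 10 items this gives 2 + 5 + 3 + 9 × 5 = 55 time units, compared with 10 × 10 = 100 without overlap. Once the pipeline fills, results emerge at the pace of the slowest stage, here one item every 5 units.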

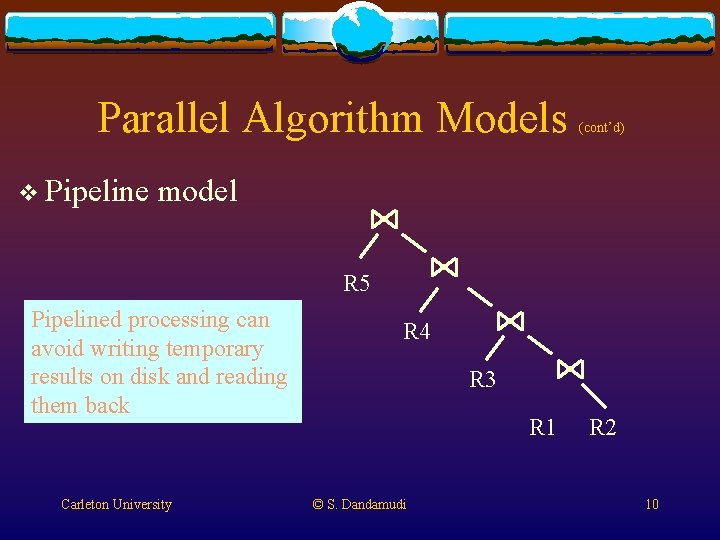

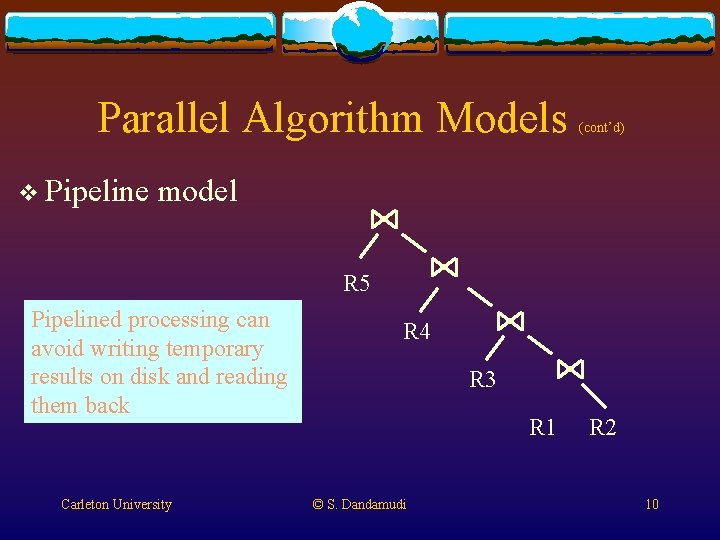

Parallel Algorithm Models (cont’d)

[Figure: pipelined processing over relations R1, R2, R3, R4, R5]

- Pipeline model
  - Pipelined processing can avoid writing temporary results to disk and reading them back

Parallel Algorithm Models (cont’d)

- Hybrid models
  - Possible to use multiple models
    - Hierarchical
      - Different models at different levels
    - Sequentially
      - Different models in different phases
  - Ex: The major computation may use the task graph model
    - Each node of the graph may use the data parallelism or pipeline model

PVM

- Parallel virtual machine
- Collaborative effort
  - Oak Ridge National Lab, University of Tennessee, Emory University, and Carnegie Mellon University
- Began in 1989
  - Version 1.0 was used internally
  - Version 2.0 released in March 1991
  - Version 3.0 released in February 1993

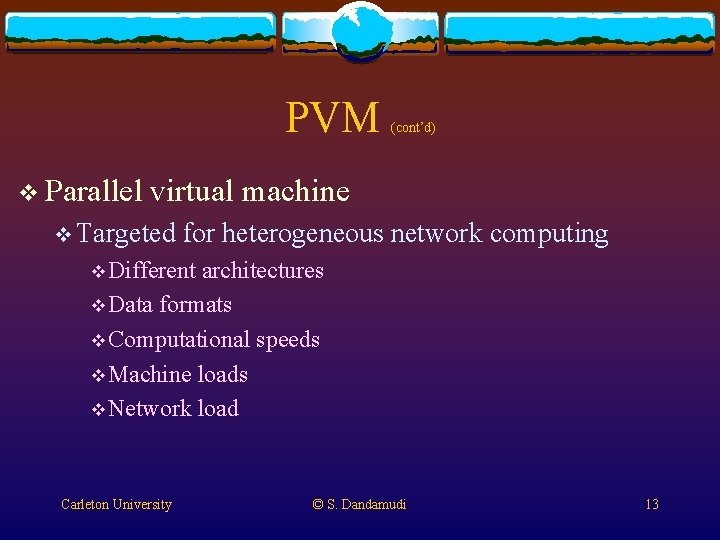

PVM (cont’d)

- Parallel virtual machine
  - Targeted at heterogeneous network computing
    - Different architectures
    - Data formats
    - Computational speeds
    - Machine loads
    - Network load

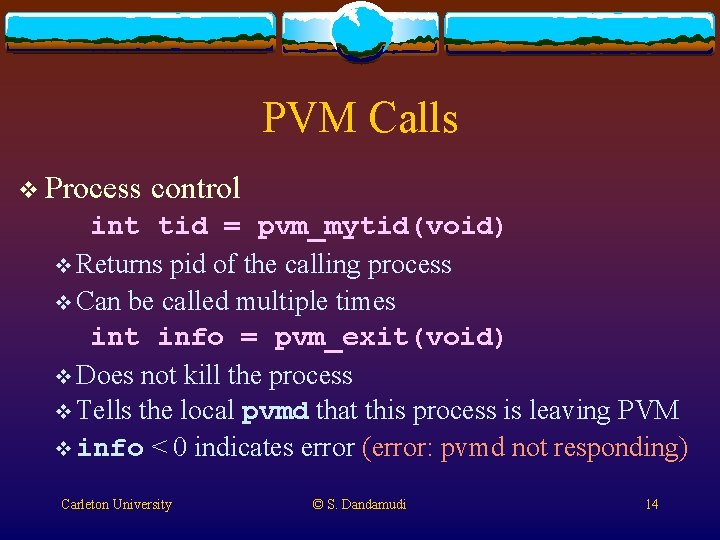

PVM Calls

- Process control
  - `int tid = pvm_mytid(void)`
    - Returns the tid (task identifier) of the calling process
    - Can be called multiple times
  - `int info = pvm_exit(void)`
    - Does not kill the process
    - Tells the local pvmd that this process is leaving PVM
    - `info < 0` indicates an error (e.g., pvmd not responding)

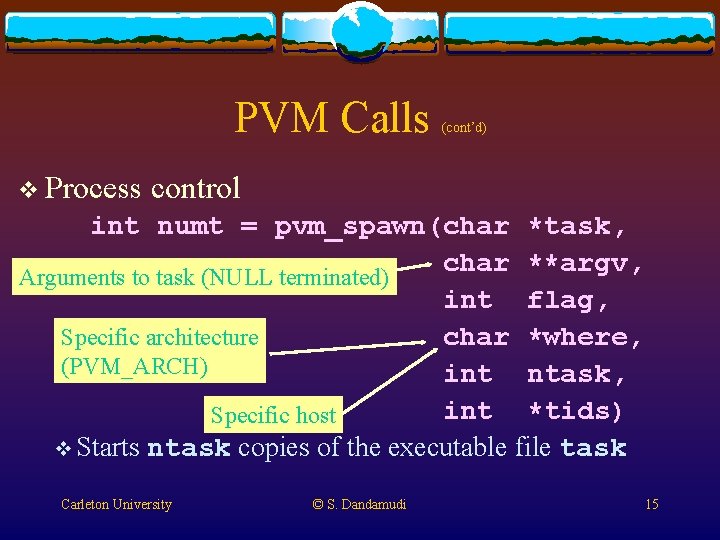

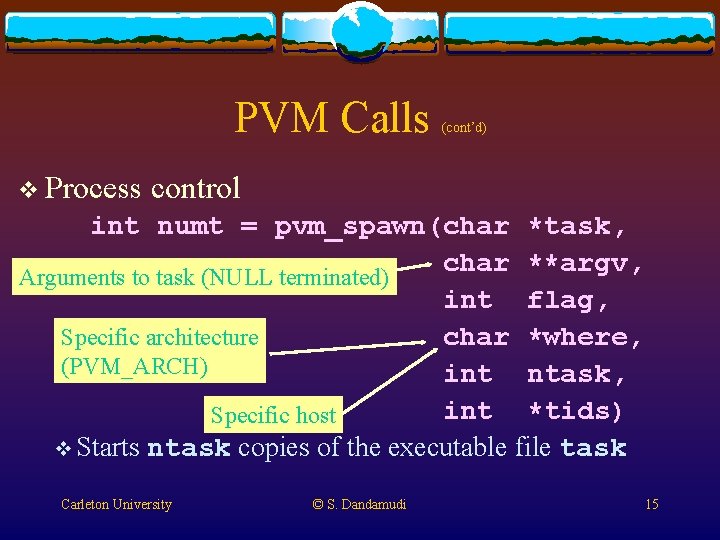

PVM Calls (cont’d)

- Process control
  - `int numt = pvm_spawn(char *task, char **argv, int flag, char *where, int ntask, int *tids)`
    - `argv`: arguments to the task (NULL-terminated)
    - `where`: a specific host, or a specific architecture (`PVM_ARCH`), depending on `flag`
    - Starts `ntask` copies of the executable file `task`
    - Returns `numt`, the number of tasks actually started; their tids are stored in `tids`

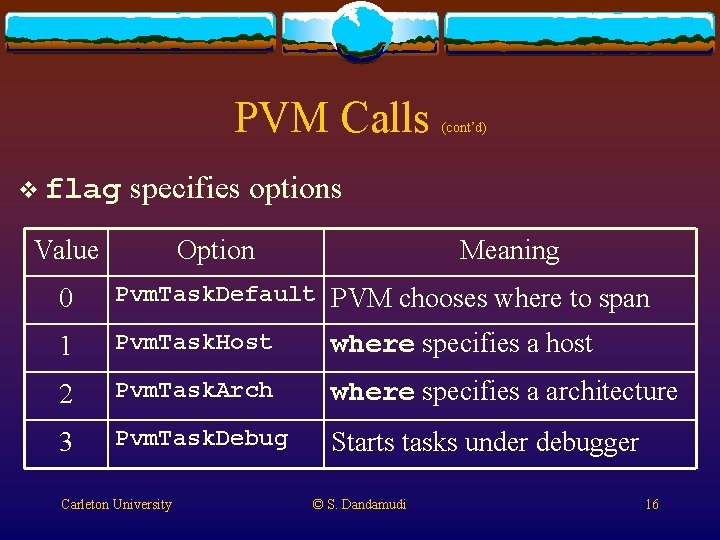

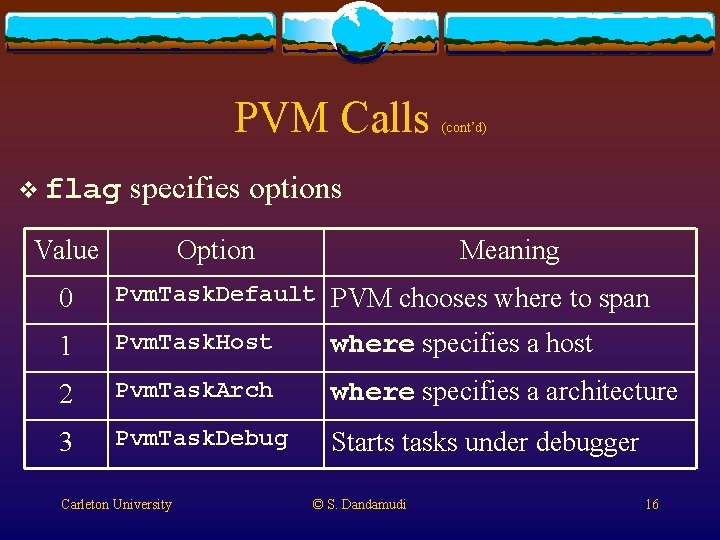

PVM Calls (cont’d)

- `flag` specifies options

| Value | Option | Meaning |
|---|---|---|
| 0 | `PvmTaskDefault` | PVM chooses where to spawn |
| 1 | `PvmTaskHost` | `where` specifies a host |
| 2 | `PvmTaskArch` | `where` specifies an architecture |
| 4 | `PvmTaskDebug` | Starts tasks under a debugger |

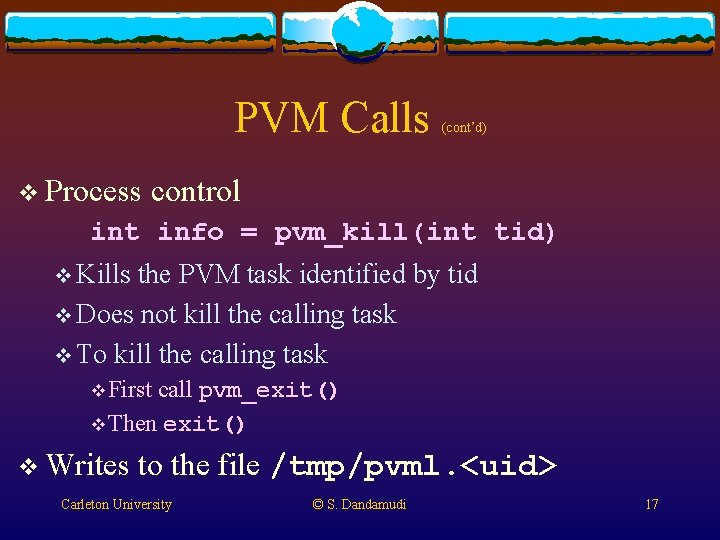

PVM Calls (cont’d)

- Process control
  - `int info = pvm_kill(int tid)`
    - Kills the PVM task identified by `tid`
    - Does not kill the calling task
    - To kill the calling task:
      - First call `pvm_exit()`
      - Then call `exit()`
    - Writes to the file `/tmp/pvml.<uid>`

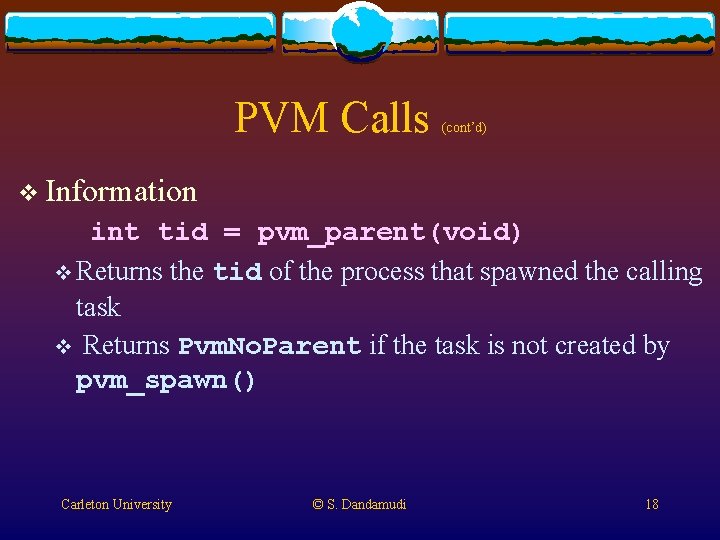

PVM Calls (cont’d)

- Information
  - `int tid = pvm_parent(void)`
    - Returns the tid of the process that spawned the calling task
    - Returns `PvmNoParent` if the task was not created by `pvm_spawn()`

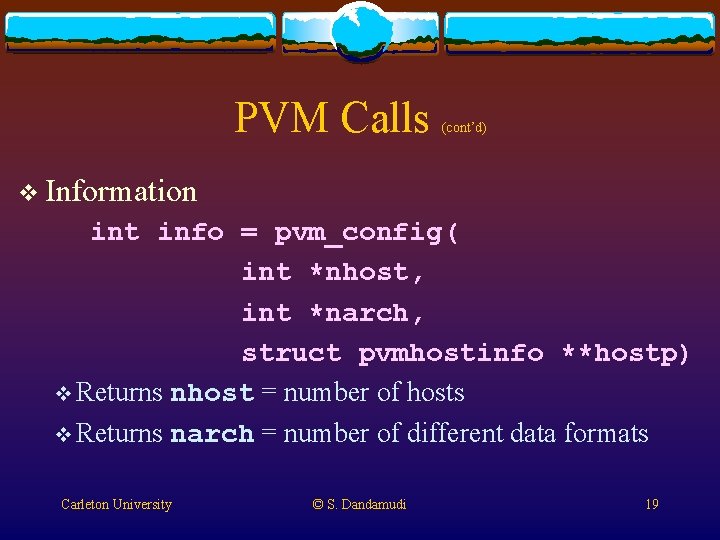

PVM Calls (cont’d)

- Information
  - `int info = pvm_config(int *nhost, int *narch, struct pvmhostinfo **hostp)`
    - Returns `nhost` = number of hosts
    - Returns `narch` = number of different data formats

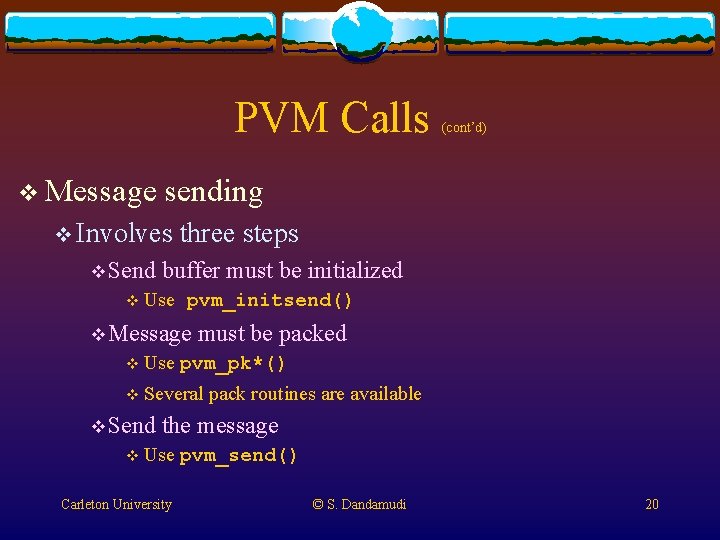

PVM Calls (cont’d)

- Message sending involves three steps
  - The send buffer must be initialized
    - Use `pvm_initsend()`
  - The message must be packed
    - Use `pvm_pk*()`; several pack routines are available
  - Send the message
    - Use `pvm_send()`

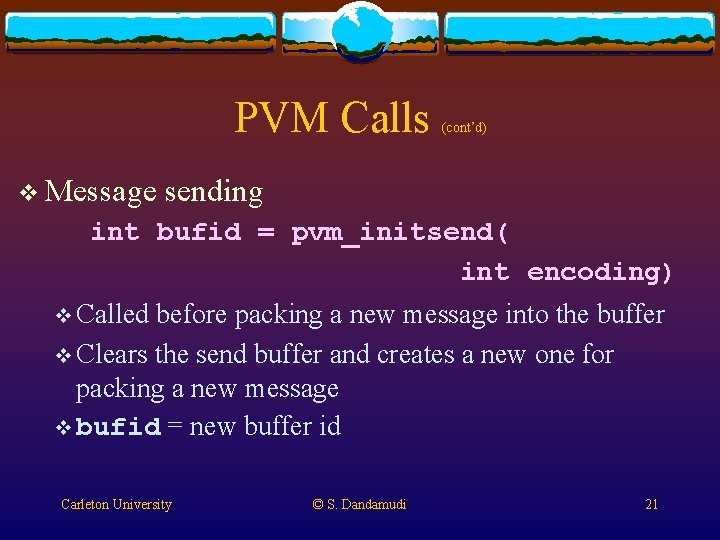

PVM Calls (cont’d)

- Message sending
  - `int bufid = pvm_initsend(int encoding)`
    - Called before packing a new message into the buffer
    - Clears the send buffer and creates a new one for packing a new message
    - `bufid` = new buffer id

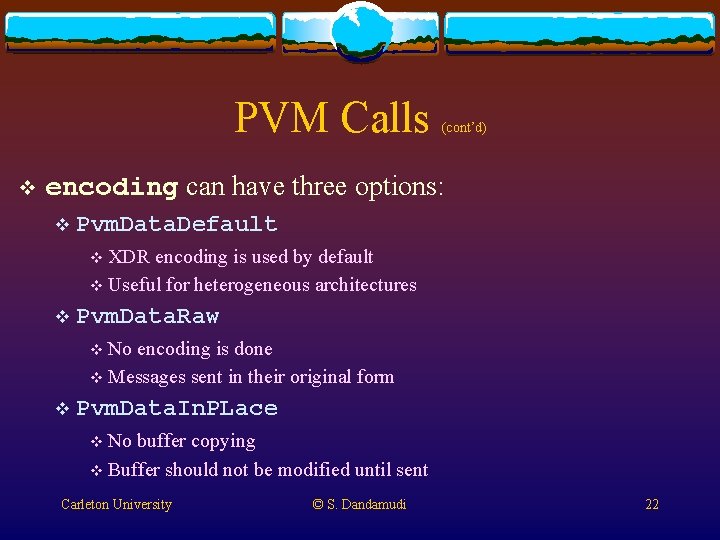

PVM Calls (cont’d)

- `encoding` can take three values:
  - `PvmDataDefault`
    - XDR encoding is used by default
    - Useful for heterogeneous architectures
  - `PvmDataRaw`
    - No encoding is done
    - Messages are sent in their original form
  - `PvmDataInPlace`
    - No buffer copying
    - The buffer should not be modified until sent

PVM Calls (cont’d)

- Packing data
  - Several routines are available (one for each data type)
  - Each takes three arguments
    - `int info = pvm_pkbyte(char *cp, int nitem, int stride)`
    - `nitem` = number of items to be packed
    - `stride` = stride in elements

PVM Calls (cont’d)

- Packing data
  - `pvm_pkint`, `pvm_pklong`, `pvm_pkfloat`, `pvm_pkdouble`, `pvm_pkshort`
  - The pack string routine requires only the NULL-terminated string pointer: `pvm_pkstr(char *cp)`
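The following plain C sketch (no PVM calls) makes the `stride` argument concrete by showing which elements a strided pack gathers. It assumes, as the slide says, that `stride` counts elements, so items come from `cp[0]`, `cp[stride]`, `cp[2*stride]`, and so on. The helper `gather_strided` is hypothetical and mimics only the element selection, not PVM's buffer encoding. A typical use is packing one column of a row-major matrix, where the stride is the row length.

```c
#include <stddef.h>

/* Copy nitem elements from src, taking every stride-th one, into dst.
 * Mirrors the element selection of a strided pack such as
 * pvm_pklong(src, nitem, stride); it performs no encoding. */
void gather_strided(long *dst, const long *src, int nitem, int stride)
{
    for (int k = 0; k < nitem; k++)
        dst[k] = src[(size_t)k * stride];
}
```

For a 3×4 row-major matrix `m`, `gather_strided(col, &m[2], 3, 4)` collects column 2. With PVM, the corresponding pack call would be `pvm_pklong(&m[2], 3, 4)`.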

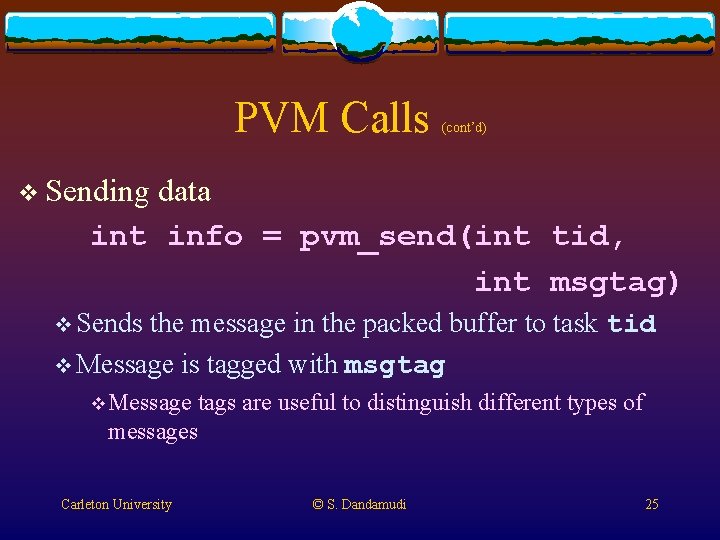

PVM Calls (cont’d)

- Sending data
  - `int info = pvm_send(int tid, int msgtag)`
    - Sends the message in the packed buffer to task `tid`
    - The message is tagged with `msgtag`
    - Message tags are useful to distinguish different types of messages

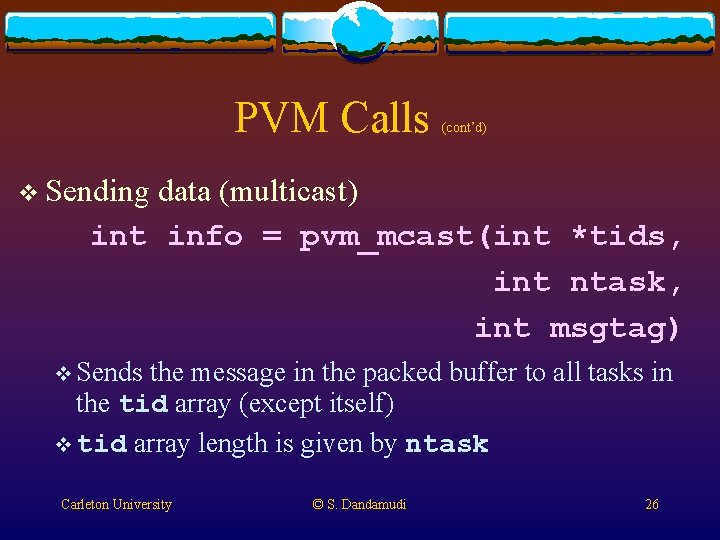

PVM Calls (cont’d)

- Sending data (multicast)
  - `int info = pvm_mcast(int *tids, int ntask, int msgtag)`
    - Sends the message in the packed buffer to all tasks in the `tids` array (except itself)
    - The `tids` array length is given by `ntask`

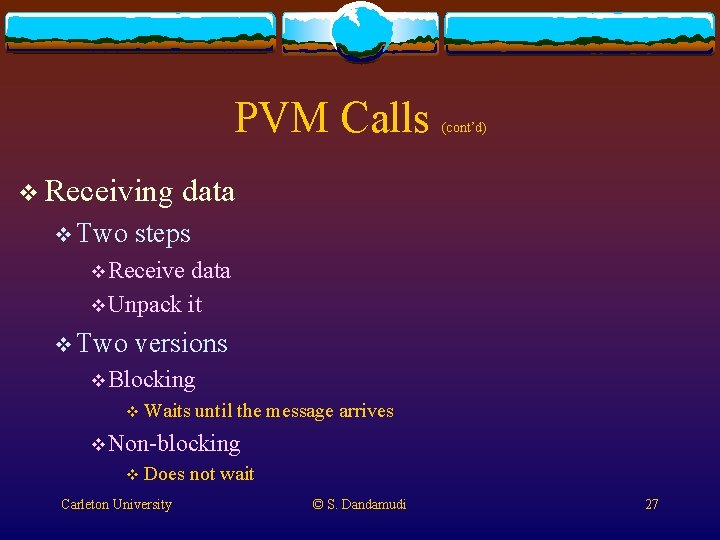

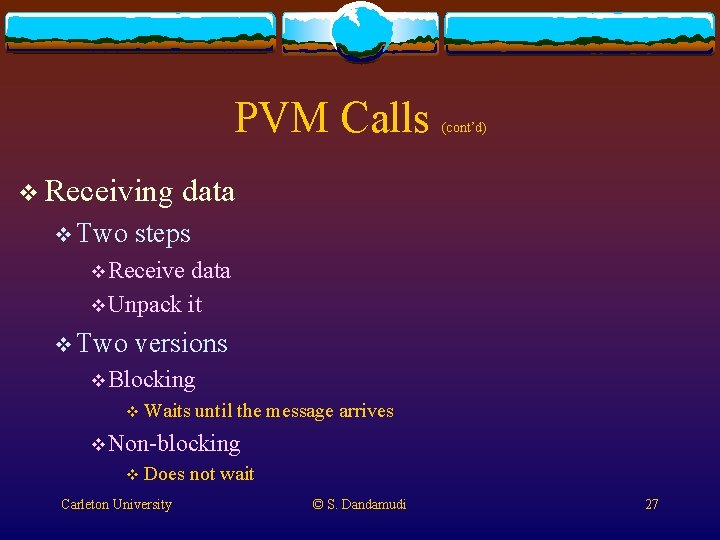

PVM Calls (cont’d)

- Receiving data involves two steps
  - Receive the data
  - Unpack it
- Two versions
  - Blocking
    - Waits until the message arrives
  - Non-blocking
    - Does not wait

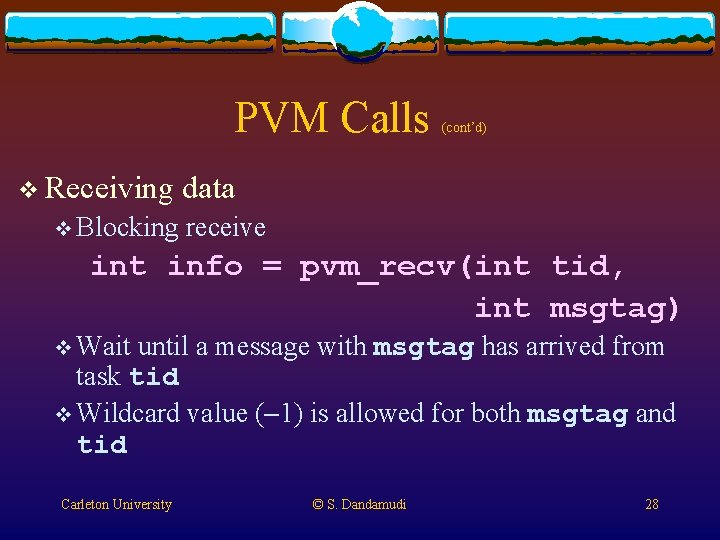

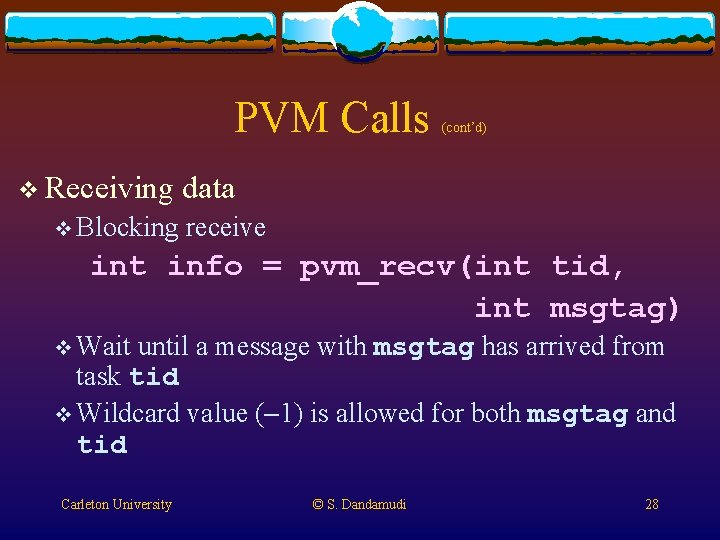

PVM Calls (cont’d)

- Receiving data
  - Blocking receive
    - `int bufid = pvm_recv(int tid, int msgtag)`
    - Waits until a message with `msgtag` has arrived from task `tid`
    - The wildcard value (-1) is allowed for both `msgtag` and `tid`

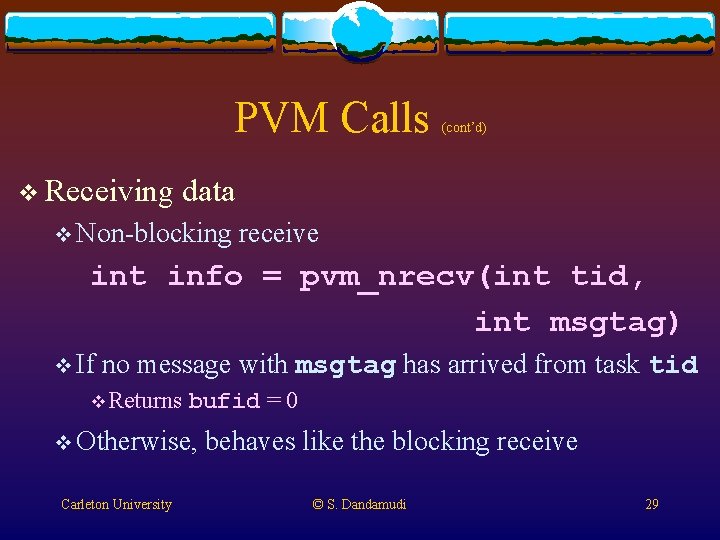

PVM Calls (cont’d)

- Receiving data
  - Non-blocking receive
    - `int bufid = pvm_nrecv(int tid, int msgtag)`
    - If no message with `msgtag` has arrived from task `tid`, returns `bufid = 0`
    - Otherwise, behaves like the blocking receive

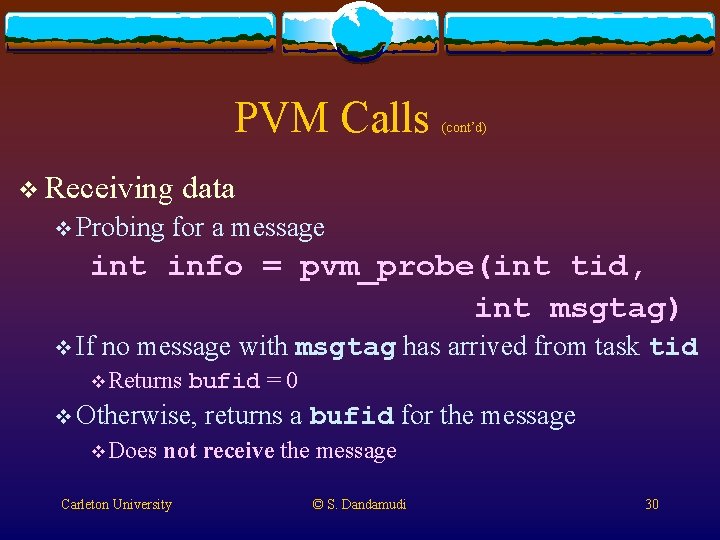

PVM Calls (cont’d)

- Receiving data
  - Probing for a message
    - `int bufid = pvm_probe(int tid, int msgtag)`
    - If no message with `msgtag` has arrived from task `tid`, returns `bufid = 0`
    - Otherwise, returns a bufid for the message
    - Does not receive the message
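A toy in-memory mailbox can model the matching rules for `pvm_recv`, `pvm_nrecv`, and `pvm_probe`. This is a hypothetical sketch, not PVM code. `Msg`, `Mailbox`, `mb_deliver`, `mb_nrecv`, and `mb_probe` are invented names, `-1` acts as the wildcard for both tid and tag, and a message body is reduced to one `int`. It also assumes the oldest matching message is the one returned. As on the slides, the non-blocking receive returns 0 when nothing matches, and probing reports a match without removing it.

```c
#define MB_CAP 32

typedef struct { int tid, tag, body, id; } Msg;
typedef struct { Msg q[MB_CAP]; int len; int next_id; } Mailbox;

/* Append a message in arrival order; returns its positive id, or 0 if full. */
int mb_deliver(Mailbox *mb, int tid, int tag, int body)
{
    if (mb->len == MB_CAP) return 0;
    Msg m = { tid, tag, body, ++mb->next_id };
    mb->q[mb->len++] = m;
    return m.id;
}

/* Index of the oldest message matching (tid, tag), with -1 as a wildcard;
 * returns -1 if there is none. */
static int find_match(const Mailbox *mb, int tid, int tag)
{
    for (int i = 0; i < mb->len; i++)
        if ((tid == -1 || mb->q[i].tid == tid) &&
            (tag == -1 || mb->q[i].tag == tag))
            return i;
    return -1;
}

/* Probe-like: report a matching message's id (0 if none), leave it queued. */
int mb_probe(const Mailbox *mb, int tid, int tag)
{
    int i = find_match(mb, tid, tag);
    return i < 0 ? 0 : mb->q[i].id;
}

/* Non-blocking-receive-like: remove the oldest matching message, store its
 * body in *body, and return its id; return 0 at once if nothing matches. */
int mb_nrecv(Mailbox *mb, int tid, int tag, int *body)
{
    int i = find_match(mb, tid, tag);
    if (i < 0) return 0;
    int id = mb->q[i].id;
    *body = mb->q[i].body;
    for (int k = i + 1; k < mb->len; k++) mb->q[k - 1] = mb->q[k];  /* keep order */
    mb->len--;
    return id;
}
```

In this model, a blocking receive would wait until `mb_nrecv` returns a nonzero id. The wildcard form `(-1, 2)` corresponds to how the master in the example collects partial sums from whichever slave reports first.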

PVM Calls (cont’d)

- Unpacking data (similar to the packing routines)
  - `pvm_upkint`, `pvm_upklong`, `pvm_upkfloat`, `pvm_upkdouble`, `pvm_upkshort`, `pvm_upkbyte`
  - The unpack string routine takes only the string pointer: `pvm_upkstr(char *cp)`

PVM Calls (cont’d)

- Buffer information
  - Useful to find the size of the received message
  - `int info = pvm_bufinfo(int bufid, int *bytes, int *msgtag, int *tid)`
  - Returns the `msgtag`, source `tid`, and size in bytes

Example

- Finds the sum of the elements of a given vector
- The vector size is given as input
- The program can be run on a PVM with up to 10 nodes
  - Can be modified by changing a constant
- The vector size is assumed to be evenly divisible by the number of nodes in PVM
  - Easy to modify this restriction
- Master (`vecsum.c`) and slave (`vecsum_slave.c`) programs
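One way to lift the even-divisibility restriction is to give the first `vector_size % nhost` parts one extra element. The sketch below assumes that policy, which is one common choice and not the author's code, and uses the hypothetical helpers `part_range` and `sum_by_parts`. The master would send `count` elements starting at `&vector[start]` to each slave. Because the sizes then differ, each slave needs to learn its own count, for example from `pvm_bufinfo()` as `vecsum_slave.c` does.

```c
/* Split n elements into p nearly equal parts; part k (0 <= k < p) covers
 * [*start, *start + *count). The first n % p parts get one extra element. */
void part_range(long n, int p, int k, long *start, long *count)
{
    long base = n / p, extra = n % p;
    *count = base + (k < extra ? 1 : 0);
    *start = k * base + (k < extra ? k : extra);
}

/* Sequential check: summing each part separately gives the full sum. */
double sum_by_parts(const long *v, long n, int p)
{
    double total = 0;
    for (int k = 0; k < p; k++) {
        long start, count;
        part_range(n, p, k, &start, &count);
        double partial = 0;              /* what one slave would compute */
        for (long i = start; i < start + count; i++) partial += v[i];
        total += partial;                /* what the master accumulates */
    }
    return total;
}
```

For 10 elements on 3 nodes, this gives parts of 4, 3, and 3 elements starting at indices 0, 4, and 7.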

Example (cont’d)

vecsum.c

```c
#include <stdio.h>
#include <sys/time.h>
#include "pvm3.h"

#define MAX_SIZE 250000  /* max. vector size */
#define NPROCS   10      /* max. number of PVM nodes */
```

![Example contd main int cc tidNPROCS long vectorMAXSIZE double sum 0 partialsum Example (cont’d) main() { int cc, tid[NPROCS]; long vector[MAX_SIZE]; double sum = 0, partial_sum;](https://slidetodoc.com/presentation_image_h2/05439a22db5f95df55deeb3278cd8961/image-35.jpg)

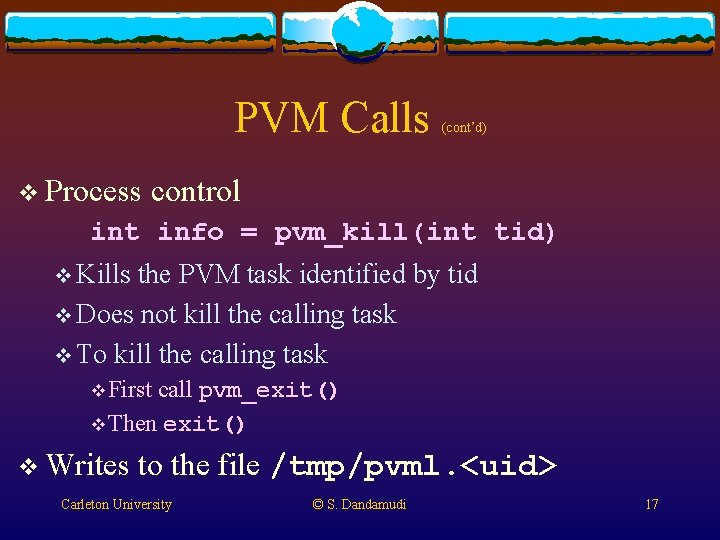

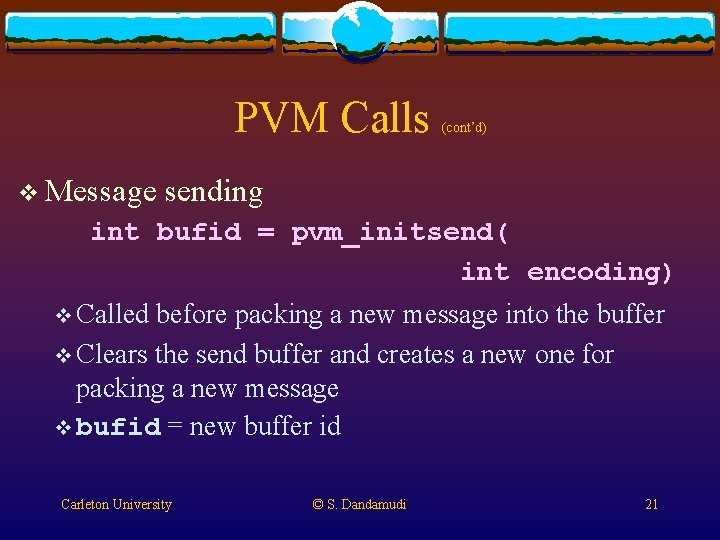

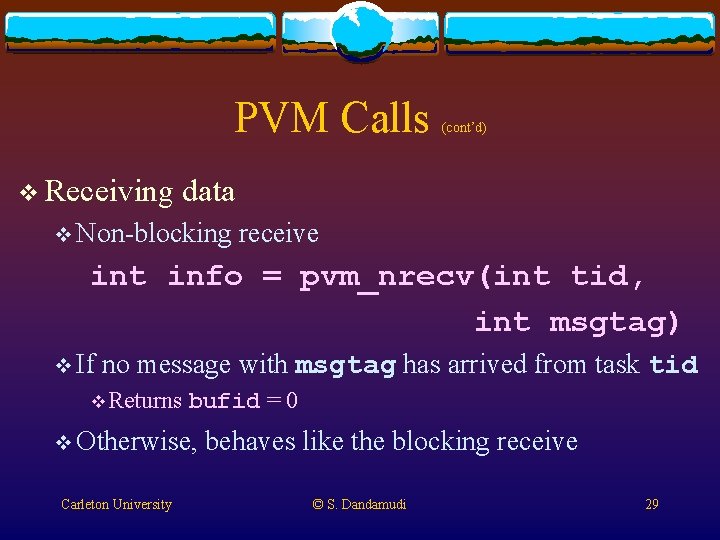

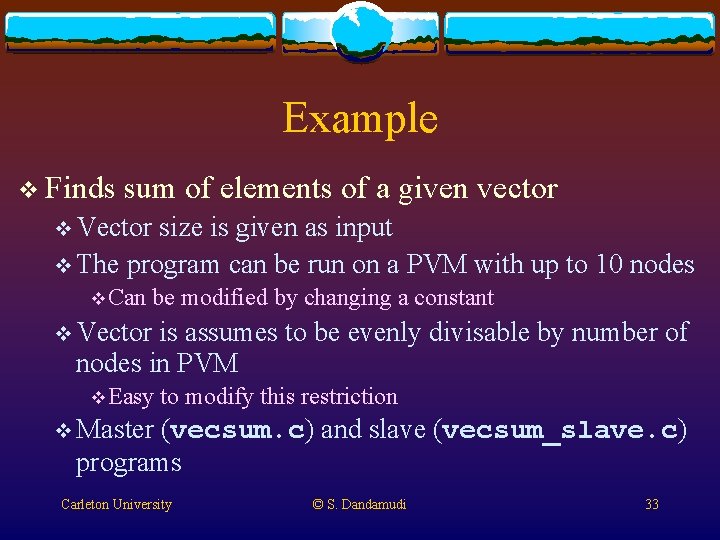

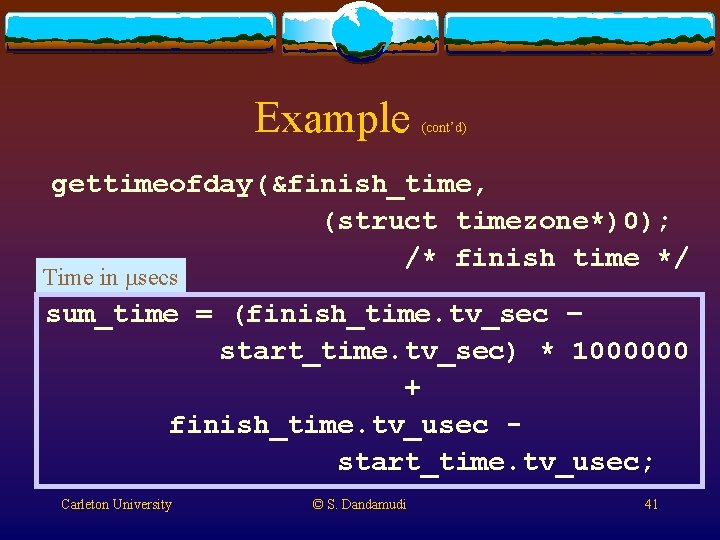

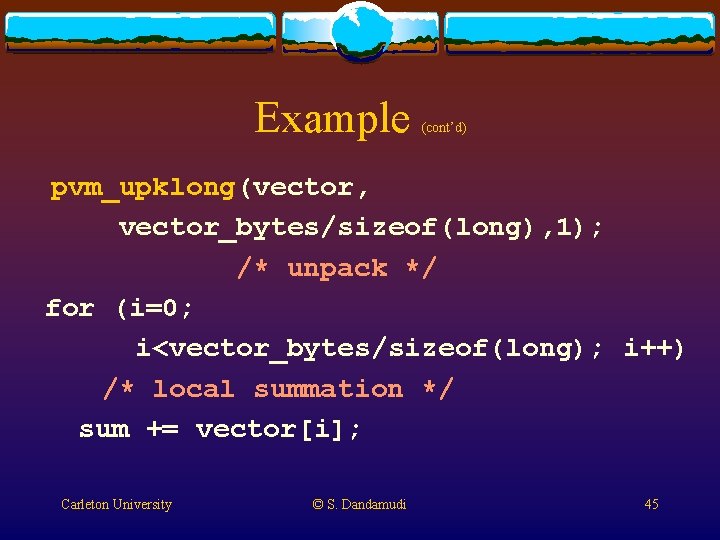

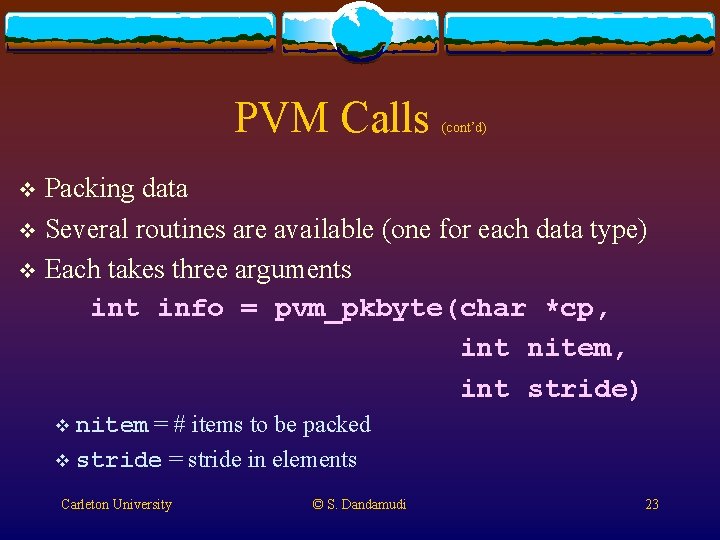

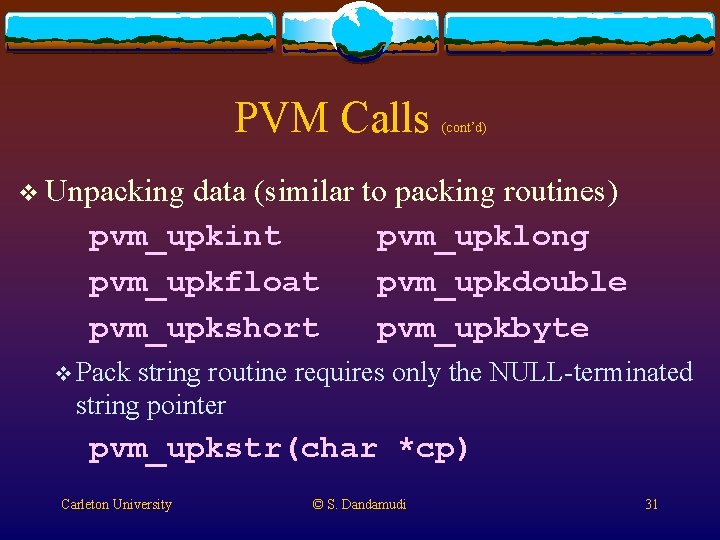

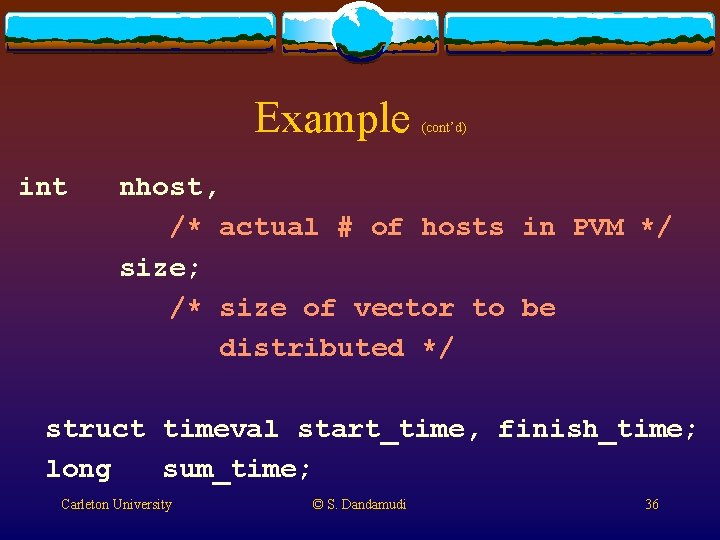

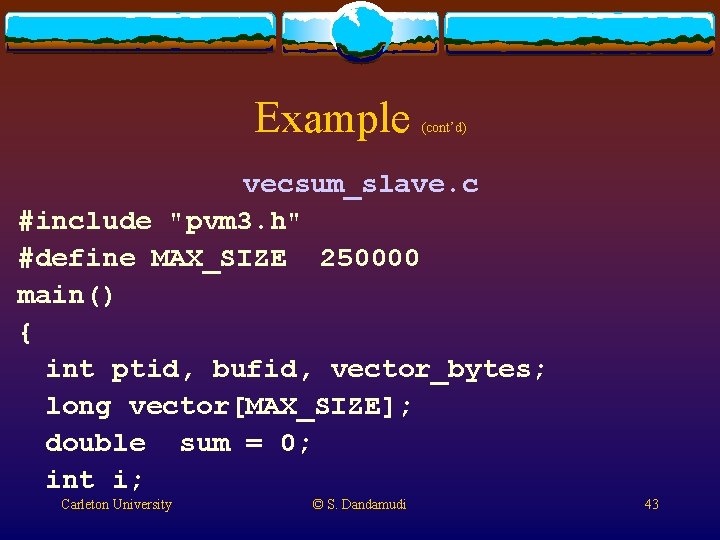

Example (cont’d)

```c
int main(void)
{
    int cc, tid[NPROCS];
    long vector[MAX_SIZE];
    double sum = 0, partial_sum;  /* partial sum received from slaves */
    long i, vector_size;
```

Example (cont’d)

```c
    int nhost,  /* actual # of hosts in PVM */
        size;   /* size of vector to be distributed */
    struct timeval start_time, finish_time;
    long sum_time;
```

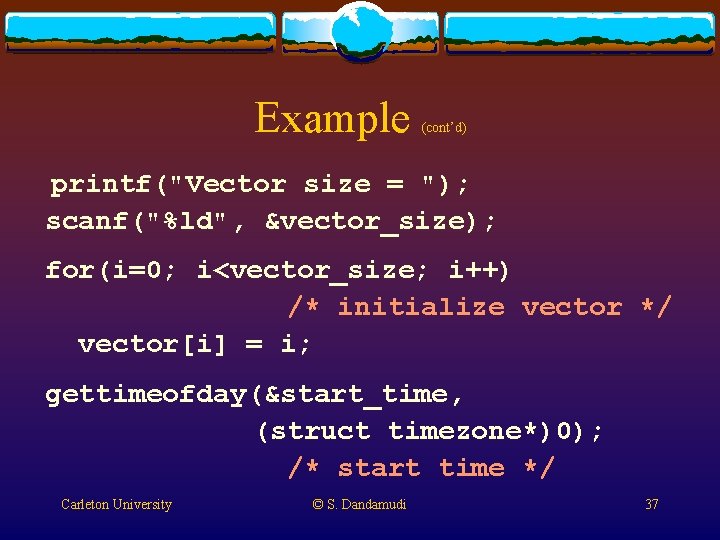

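Example (cont’d)

```c
    printf("Vector size = ");
    /* reject input that would overflow vector[] */
    if (scanf("%ld", &vector_size) != 1 || vector_size < 1 || vector_size > MAX_SIZE) {
        fprintf(stderr, "Vector size must be between 1 and %d\n", MAX_SIZE);
        return 1;
    }
    for (i = 0; i < vector_size; i++)  /* initialize vector */
        vector[i] = i;
    gettimeofday(&start_time, (struct timezone *)0);  /* start time */
```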

![Example contd tid0 pvmmytid establish my tid get of Example (cont’d) tid[0] = pvm_mytid(); /* establish my tid */ /* get # of](https://slidetodoc.com/presentation_image_h2/05439a22db5f95df55deeb3278cd8961/image-38.jpg)

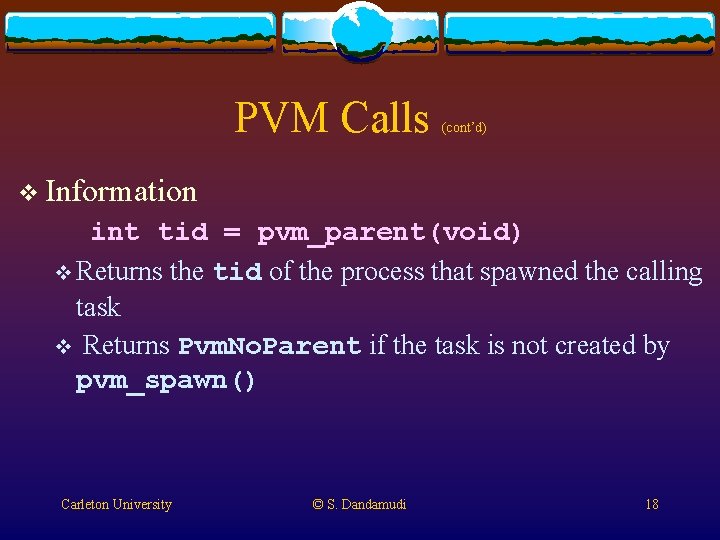

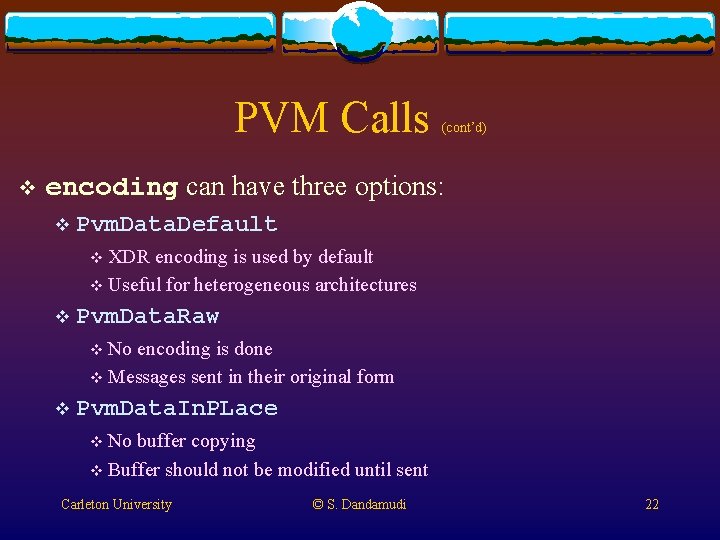

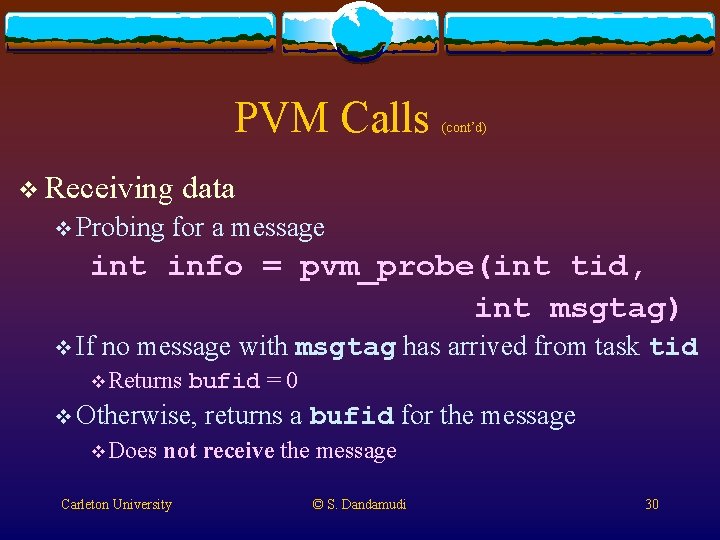

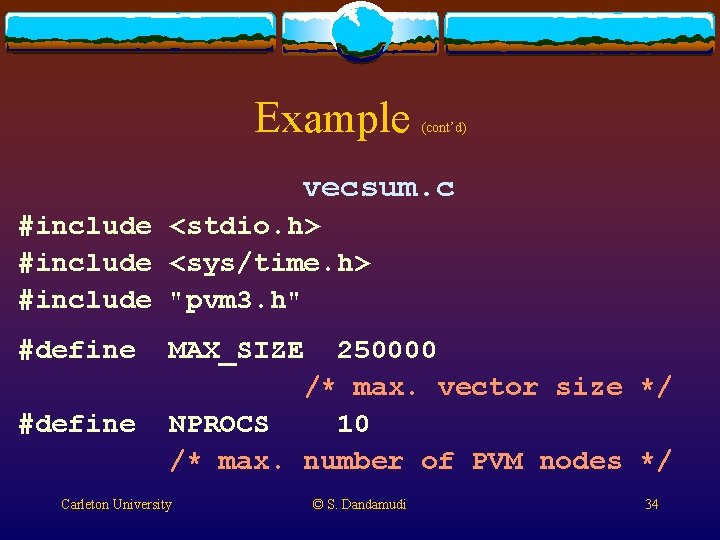

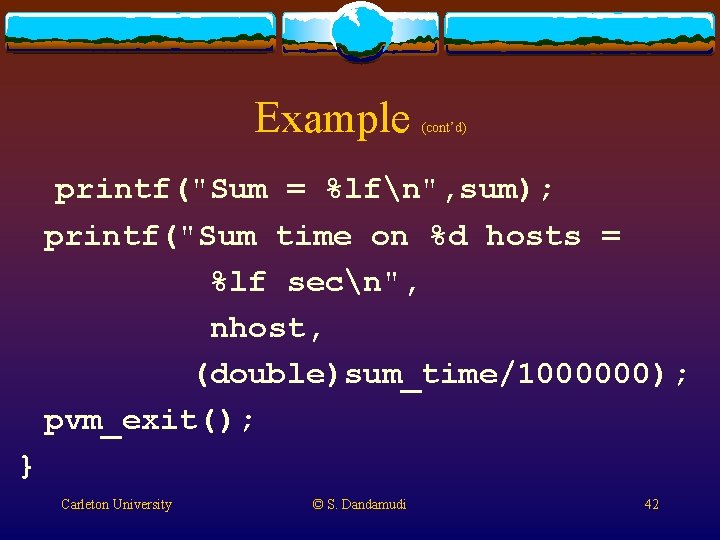

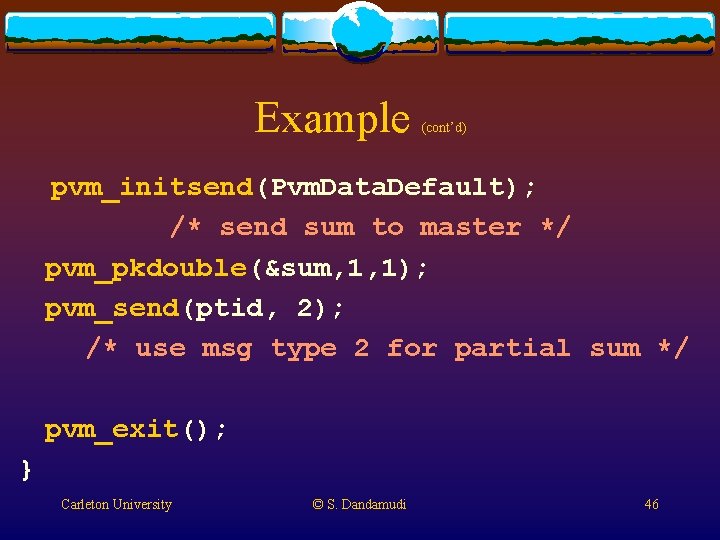

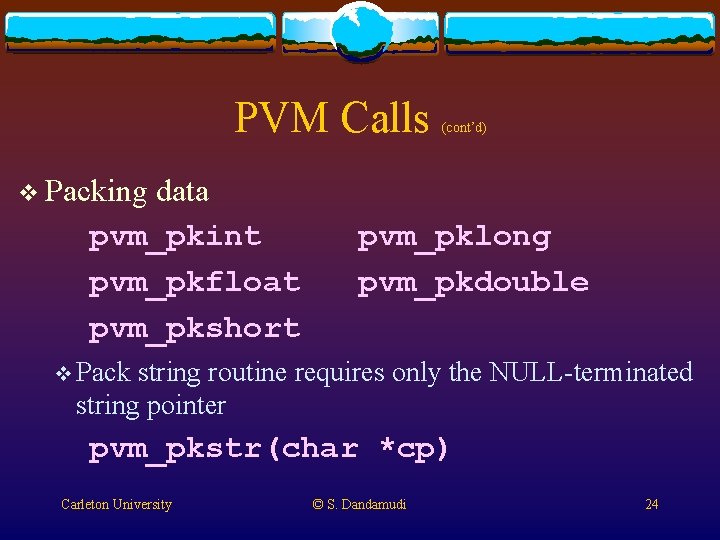

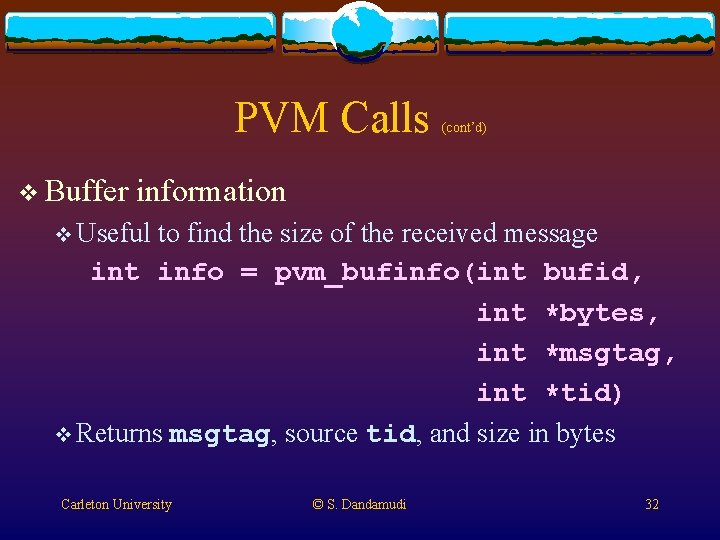

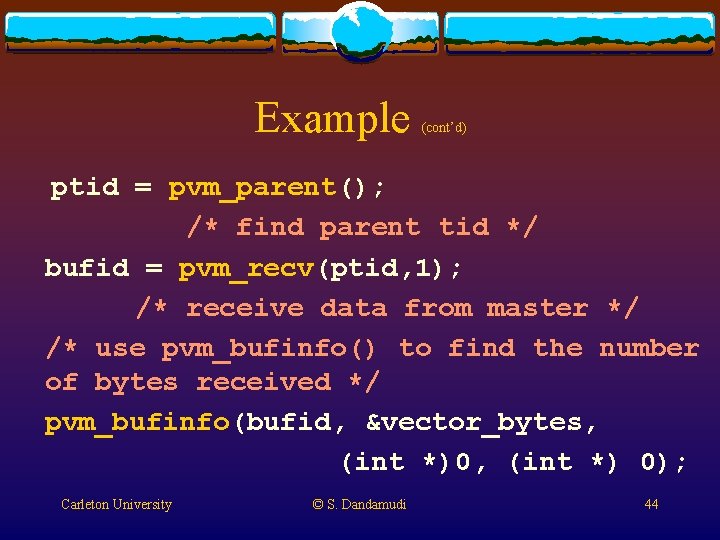

Example (cont’d)

```c
    tid[0] = pvm_mytid();  /* establish my tid */
    /* get # of hosts using pvm_config() */
    pvm_config(&nhost, (int *)0, (struct pvmhostinfo **)0);
    if (nhost > NPROCS)    /* tid[] has room for at most NPROCS tasks */
        nhost = NPROCS;
    size = vector_size / nhost;  /* size of vector to send to slaves */
```

![Example contd if nhost 1 pvmspawnvecsumslave char 0 0 nhost1 tid1 for Example (cont’d) if (nhost > 1) pvm_spawn("vecsum_slave", (char **)0, 0, "", nhost-1, &tid[1]); for](https://slidetodoc.com/presentation_image_h2/05439a22db5f95df55deeb3278cd8961/image-39.jpg)

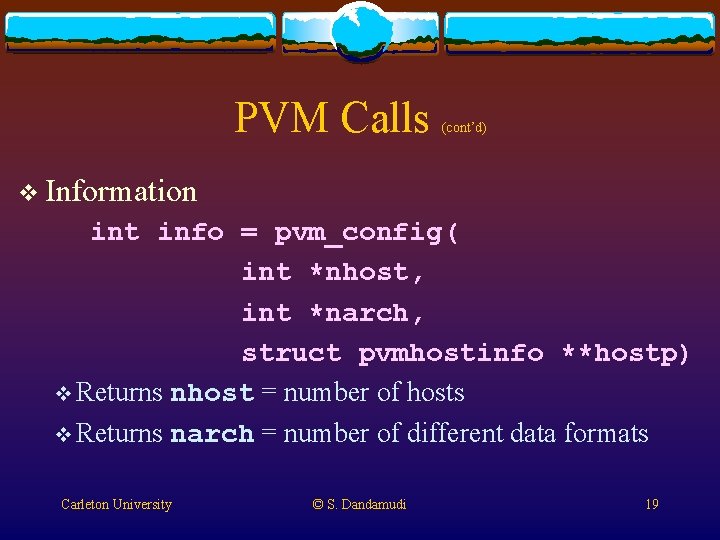

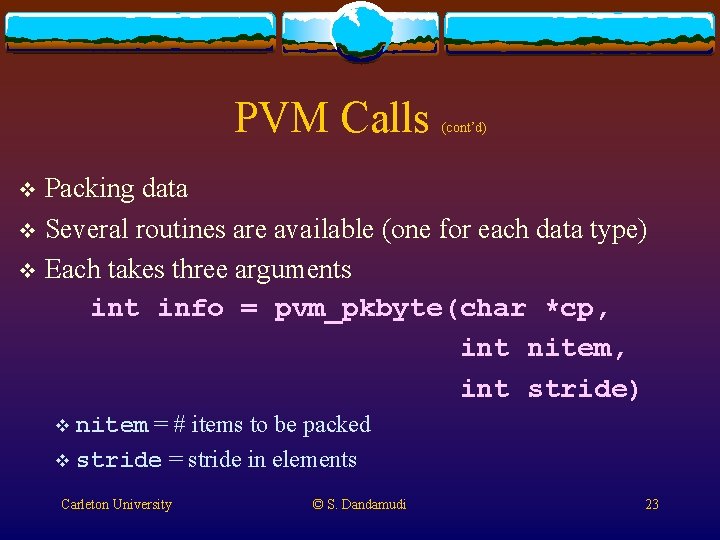

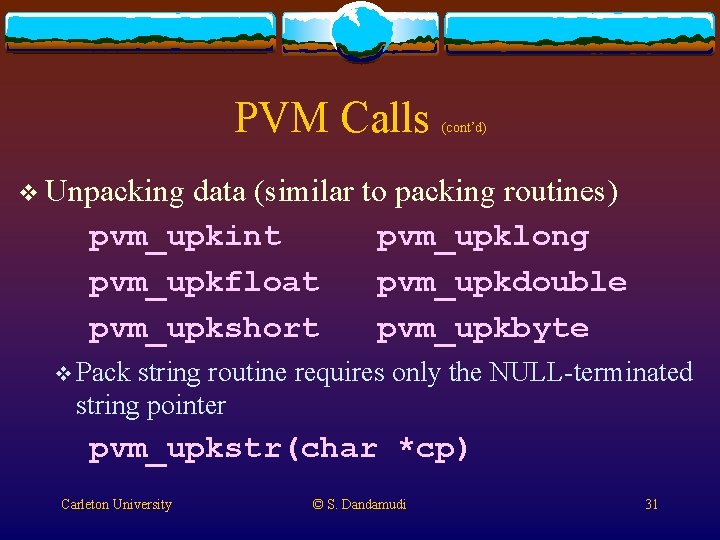

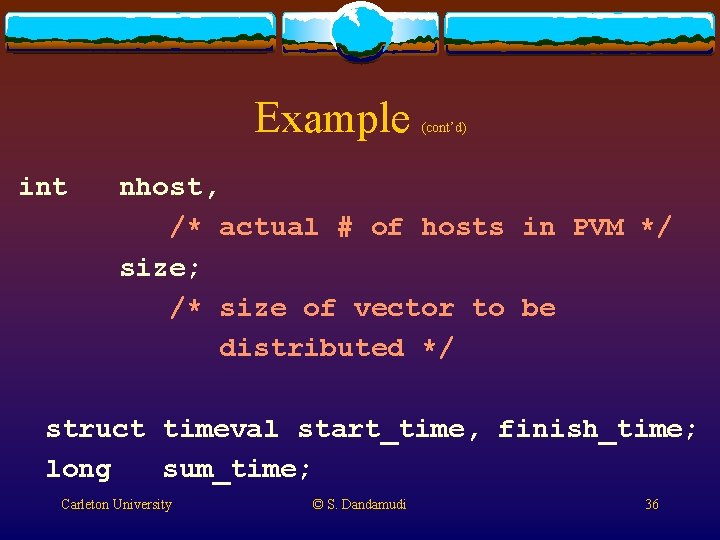

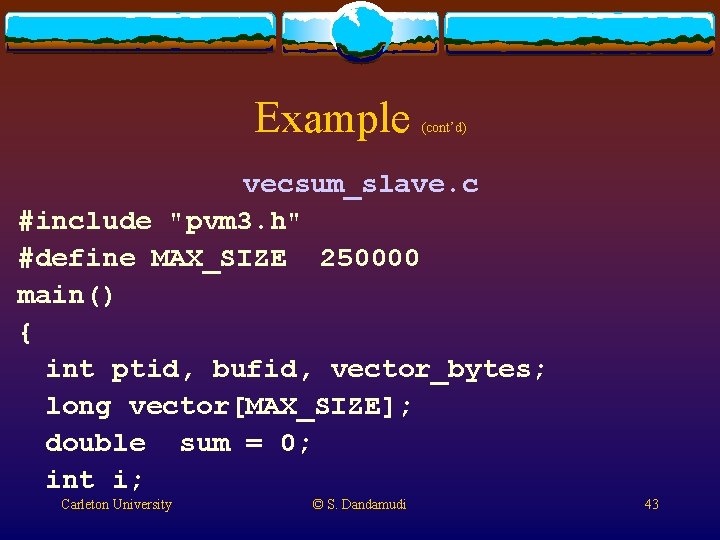

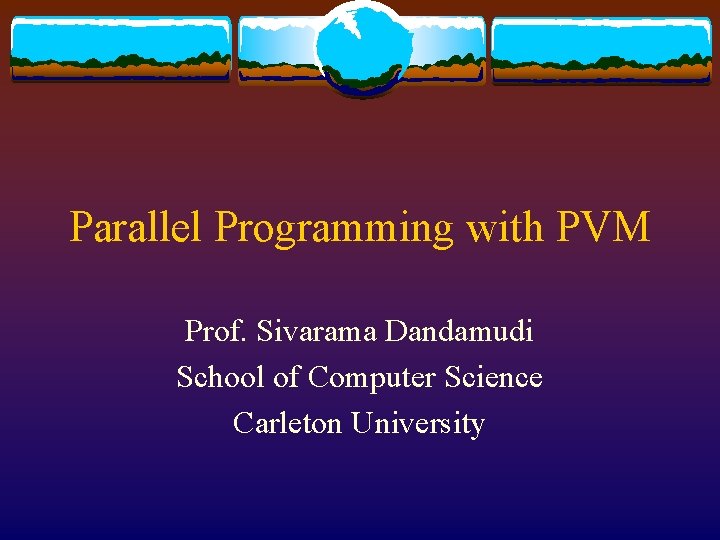

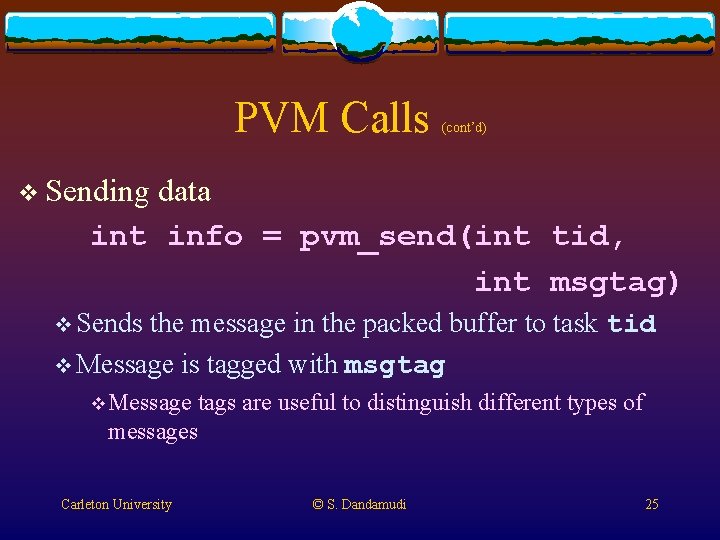

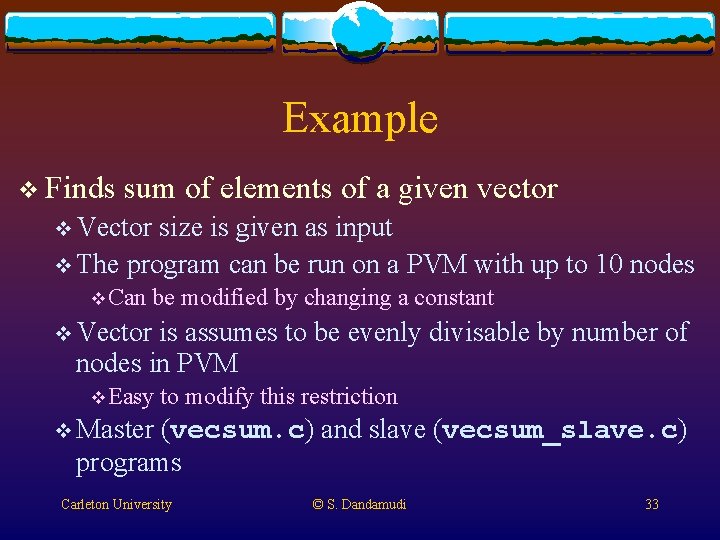

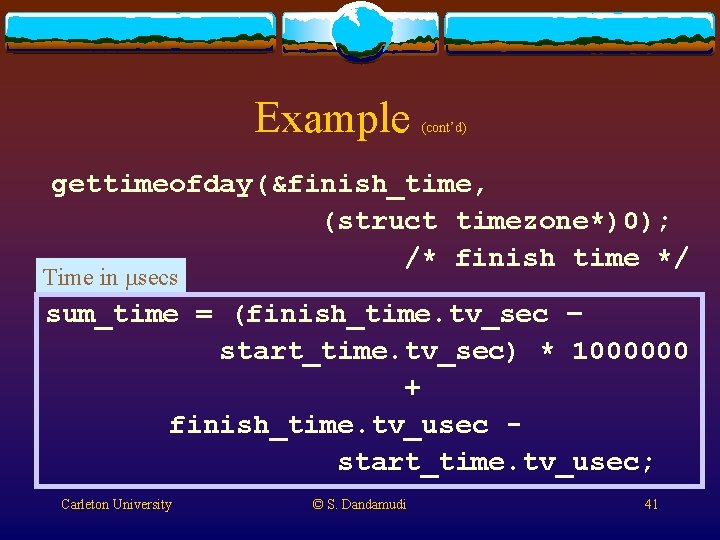

Example (cont’d)

```c
    /* pvm_spawn() returns the number of tasks actually started */
    if (nhost > 1 &&
        pvm_spawn("vecsum_slave", (char **)0, 0, "", nhost - 1, &tid[1]) != nhost - 1) {
        fprintf(stderr, "Could not spawn all slaves\n");
        pvm_exit();
        return 1;
    }
    for (i = 1; i < nhost; i++) {  /* distribute data to slaves */
        pvm_initsend(PvmDataDefault);
        pvm_pklong(&vector[i * size], size, 1);
        pvm_send(tid[i], 1);
    }
```

![Example contd for i0 isize i perform local sum sum vectori Example (cont’d) for (i=0; i<size; i++) /* perform local sum */ sum += vector[i];](https://slidetodoc.com/presentation_image_h2/05439a22db5f95df55deeb3278cd8961/image-40.jpg)

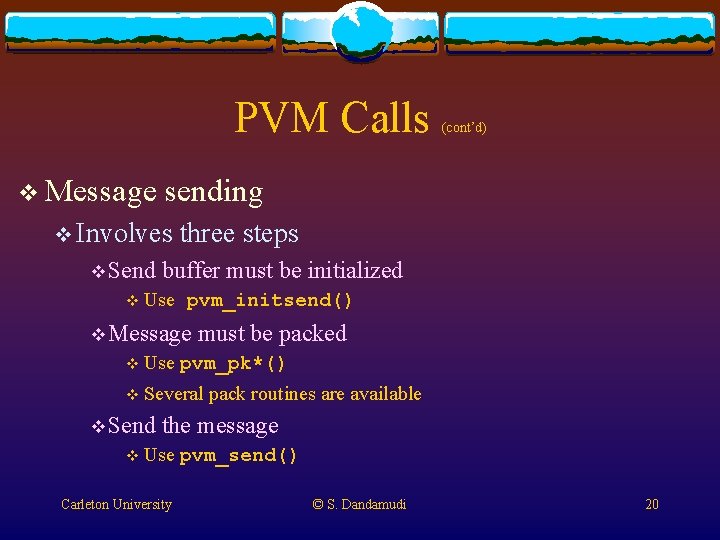

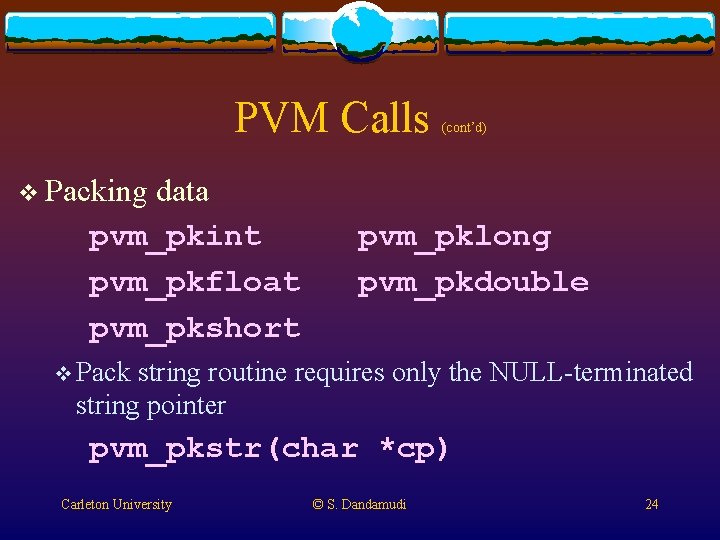

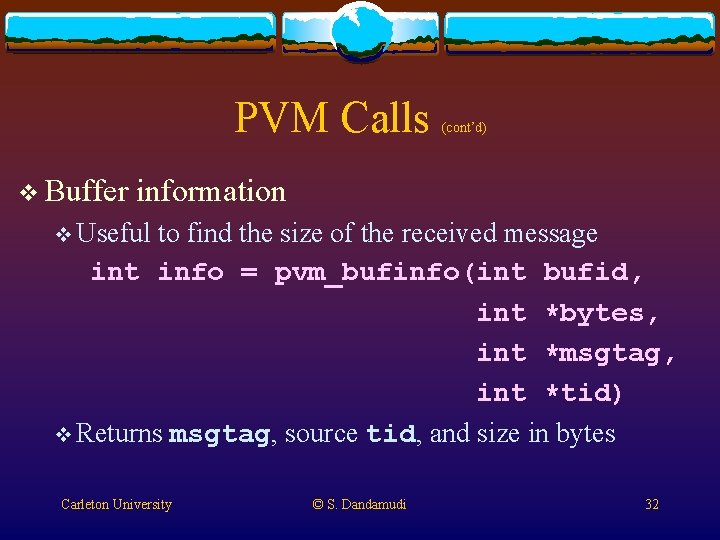

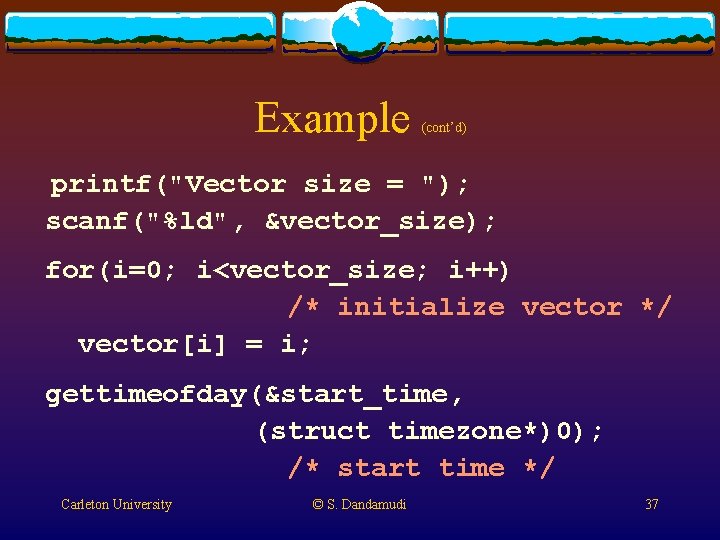

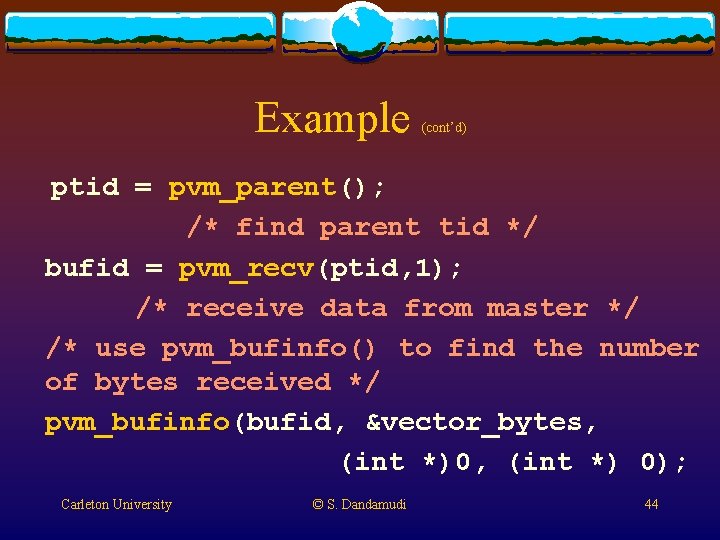

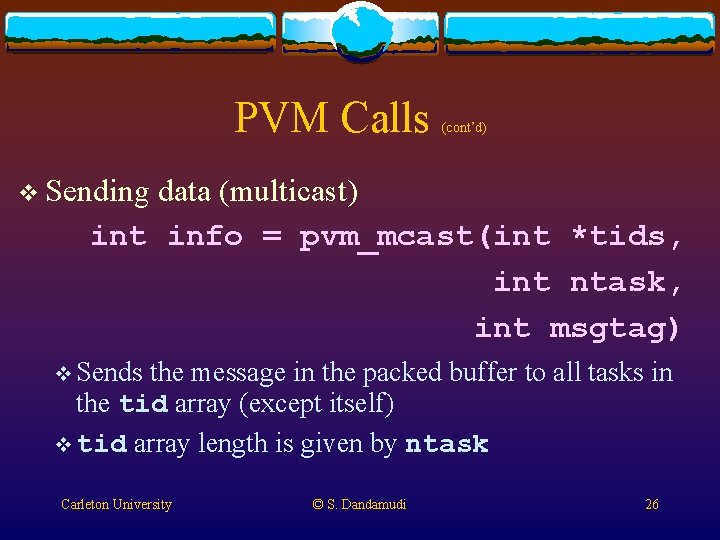

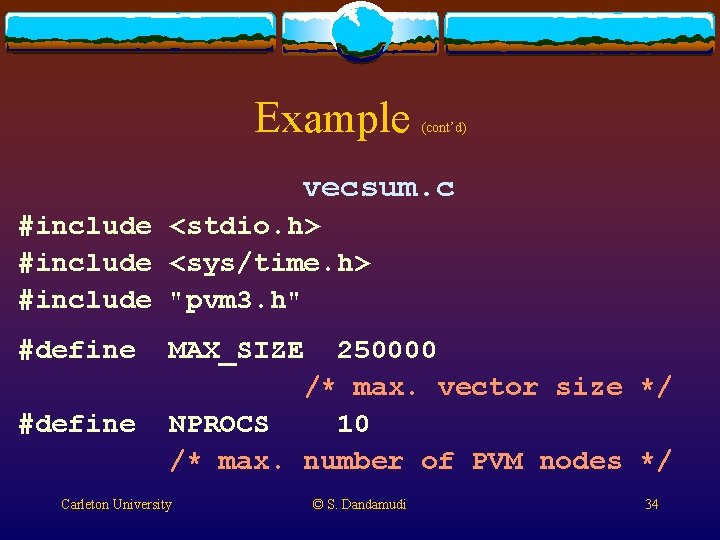

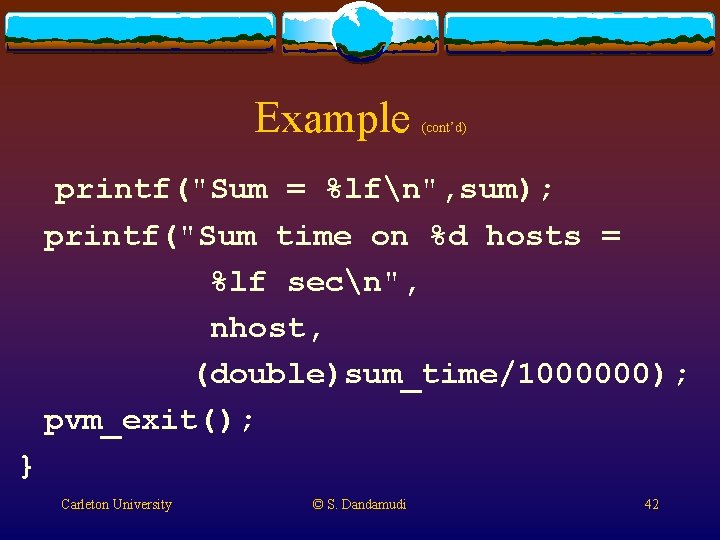

Example (cont’d)

```c
    for (i = 0; i < size; i++)  /* perform local sum */
        sum += vector[i];
    for (i = 1; i < nhost; i++) {  /* collect partial sums from slaves */
        pvm_recv(-1, 2);
        pvm_upkdouble(&partial_sum, 1, 1);
        sum += partial_sum;
    }
```

Example (cont’d)

```c
    gettimeofday(&finish_time, (struct timezone *)0);  /* finish time */
    /* elapsed time in microseconds */
    sum_time = (finish_time.tv_sec - start_time.tv_sec) * 1000000
             + finish_time.tv_usec - start_time.tv_usec;
```

Example (cont’d)

```c
    printf("Sum = %lf\n", sum);
    printf("Sum time on %d hosts = %lf sec\n", nhost, (double)sum_time / 1000000);
    pvm_exit();
    return 0;
}
```
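The master's microsecond arithmetic can be factored into a small reusable helper. `elapsed_usec` is a hypothetical name, and the sketch assumes POSIX `gettimeofday()`/`struct timeval`, as the example already does. Using `long long` avoids overflow on platforms where `long` is 32 bits and a run lasts longer than about 35 minutes.

```c
#include <sys/time.h>

/* Elapsed time in microseconds between two gettimeofday() readings.
 * The tv_usec difference may be negative (e.g. 1.9 s -> 3.1 s); the
 * seconds term absorbs it, so no explicit borrow is needed. */
long long elapsed_usec(const struct timeval *start, const struct timeval *finish)
{
    return (long long)(finish->tv_sec - start->tv_sec) * 1000000LL
         + (finish->tv_usec - start->tv_usec);
}
```

For readings of 1.9 s and 3.1 s this returns 1200000, i.e. 1.2 s.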

Example (cont’d)

vecsum_slave.c

```c
#include "pvm3.h"

#define MAX_SIZE 250000

int main(void)
{
    int ptid, bufid, vector_bytes;
    long vector[MAX_SIZE];
    double sum = 0;
    int i;
```

Example (cont’d)

```c
    ptid = pvm_parent();          /* find parent tid */
    bufid = pvm_recv(ptid, 1);    /* receive data from master */
    /* use pvm_bufinfo() to find the number of bytes received */
    pvm_bufinfo(bufid, &vector_bytes, (int *)0, (int *)0);
```

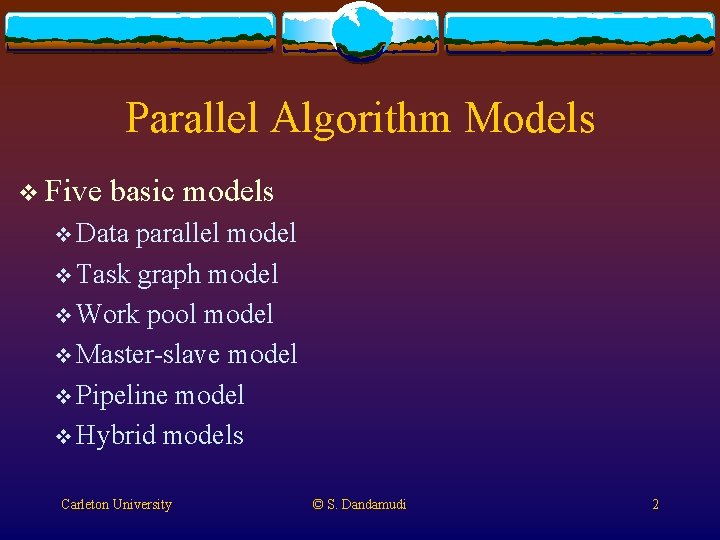

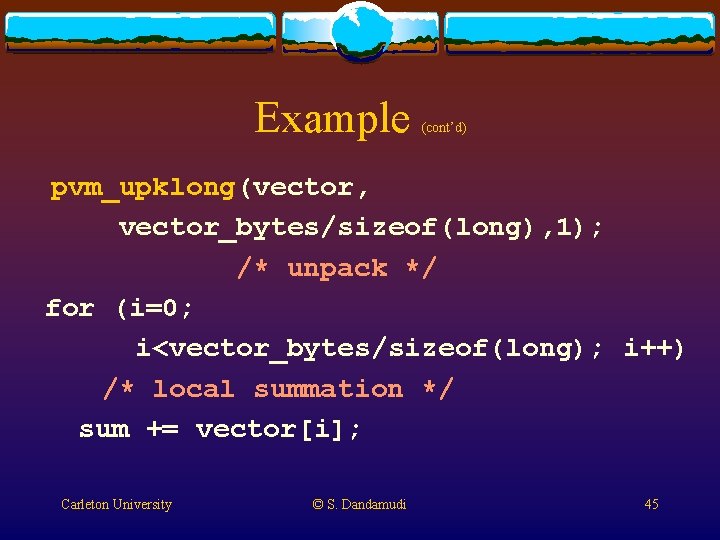

Example (cont’d)

```c
    int n = vector_bytes / (int)sizeof(long);  /* number of longs received */
    pvm_upklong(vector, n, 1);                 /* unpack */
    for (i = 0; i < n; i++)                    /* local summation */
        sum += vector[i];
```

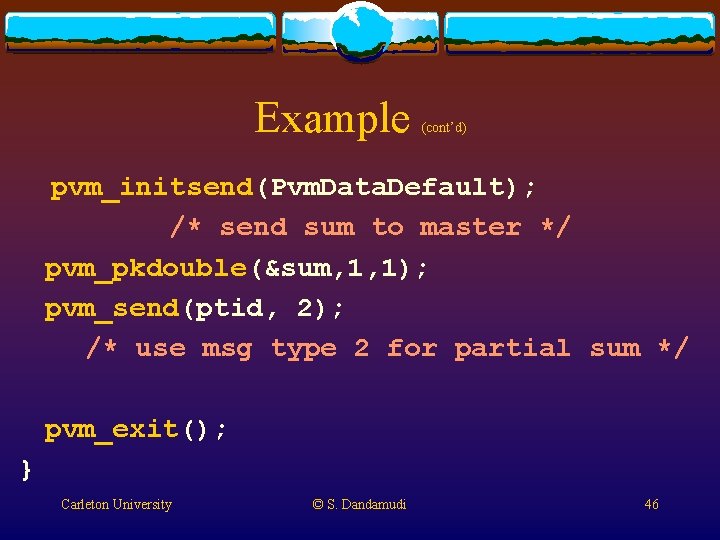

Example (cont’d)

```c
    pvm_initsend(PvmDataDefault);  /* send sum to master */
    pvm_pkdouble(&sum, 1, 1);
    pvm_send(ptid, 2);             /* use msg type 2 for partial sum */
    pvm_exit();
    return 0;
}
```