
Parallel Processing - introduction

- Traditionally, the computer has been viewed as a sequential machine.
- This view of the computer has never been entirely true:
  - Instruction pipelining (micro-instruction-level parallelism)
  - Superscalar organization (instruction-level parallelism)

Parallelism in Uniprocessor Systems

- Multiple functional units
- Pipelining within the CPU
- Overlapped CPU and I/O operations
- Use of a hierarchical memory system
- Multiprogramming and time sharing
- Use of a hierarchical bus system (balancing of subsystem bandwidths)

Pipelining Strategy

- Instruction pipelining is similar to an assembly line in an industrial plant: the task is divided into subtasks, each of which is executed concurrently by dedicated hardware.
- The instruction cycle has a number of stages:
  - Fetch instruction (FI)
  - Decode instruction (DI)
  - Calculate operand effective addresses (CO)
  - Fetch operands (FO)
  - Execute instruction (EI)
  - Write operand, i.e. store the result (WO)
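The ideal overlap of the six stages can be sketched by printing which stage each instruction occupies in each cycle. This is a minimal model, assuming equal-length stages, no stalls, and one new instruction entering FI every cycle; the function name `timing_diagram` is hypothetical.

```python
# Six-stage instruction pipeline from the slide: FI, DI, CO, FO, EI, WO.
STAGES = ["FI", "DI", "CO", "FO", "EI", "WO"]


def timing_diagram(n_instructions):
    """Return one row per instruction; entry t is the stage occupied in cycle t + 1.

    Assumes the ideal case: equal-length stages, no stalls, and one new
    instruction entering FI every cycle.
    """
    total_cycles = len(STAGES) + n_instructions - 1
    rows = []
    for i in range(n_instructions):
        row = ["--"] * total_cycles  # "--" marks cycles where the instruction is not in the pipeline
        for s, stage in enumerate(STAGES):
            row[i + s] = stage  # instruction i is in stage s during cycle i + s + 1
        rows.append(row)
    return rows


if __name__ == "__main__":
    rows = timing_diagram(9)
    print("      " + " ".join(f"{t:>2}" for t in range(1, len(rows[0]) + 1)))
    for i, row in enumerate(rows, start=1):
        print(f"I{i:<4} " + " ".join(row))
```

With nine instructions the last one leaves WO in cycle 14, so the pipeline finishes in 14 cycles instead of the 9 × 6 = 54 cycles a strictly sequential machine would need.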

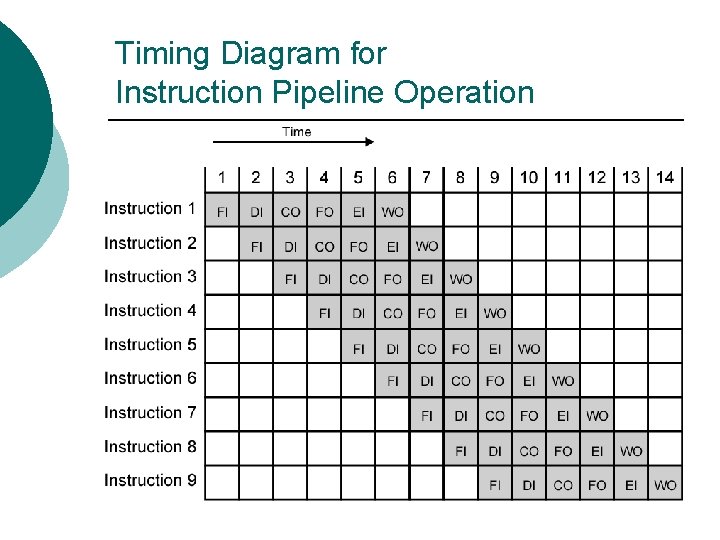

Timing Diagram for Instruction Pipeline Operation

Assumptions

- Each instruction goes through all six stages of the pipeline.
- All stages are of equal duration.
- All of the stages can be performed in parallel (no resource conflicts).
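Under these assumptions the total time and the speedup can be written out explicitly. In this sketch the symbols are assumptions, not from the slides: $k$ is the number of stages, $n$ the number of instructions, and $\tau$ the duration of one stage (one cycle). The first instruction needs $k$ cycles and each later one completes one cycle after its predecessor.

```latex
T_{k,n} = \left[k + (n - 1)\right]\tau
\qquad
S_k = \frac{T_{1,n}}{T_{k,n}} = \frac{nk\tau}{\left[k + (n - 1)\right]\tau} = \frac{nk}{k + (n - 1)}
% Example: k = 6 stages, n = 9 instructions
% T_{6,9} = (6 + 8)\tau = 14\tau, versus 9 \times 6 = 54\tau without pipelining
% S_6 = 54 / 14 \approx 3.86; as n \to \infty, S_k \to k
```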

Improved Performance

- Overlapping instruction fetch with execution improves performance, but does not double it:
  - Fetch is usually shorter than execution.
  - Any jump or branch means that prefetched instructions are not the required instructions.
- Add more stages to improve performance further.
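Why the two-stage (fetch/execute) overlap falls short of a 2× gain can be checked with a small calculation. This is a sketch assuming no branches (the prefetched instruction is always the one needed); `two_stage_speedup` and its parameters are hypothetical names.

```python
def two_stage_speedup(n, fetch_time, execute_time):
    """Speedup of a two-stage fetch/execute pipeline over sequential execution.

    Assumes no branches: the next instruction is always the one prefetched.
    """
    sequential = n * (fetch_time + execute_time)
    # The first instruction takes fetch + execute; after that, one instruction
    # completes per period of the slower stage.
    pipelined = fetch_time + execute_time + (n - 1) * max(fetch_time, execute_time)
    return sequential / pipelined


if __name__ == "__main__":
    print(two_stage_speedup(1_000_000, 1, 1))  # equal stages: close to 2
    print(two_stage_speedup(1_000_000, 1, 3))  # fetch shorter than execute: about 1.33
```

The slower stage sets the pace, so when fetch takes 1 time unit and execution 3, the long-run speedup is only (1 + 3) / 3 ≈ 1.33.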

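Pipeline Hazards

- A hazard occurs when the pipeline, or some portion of the pipeline, must stall because conditions do not permit continued execution. Such a stall is also called a pipeline bubble.
- Types of hazards:
  - Resource
  - Data
  - Control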

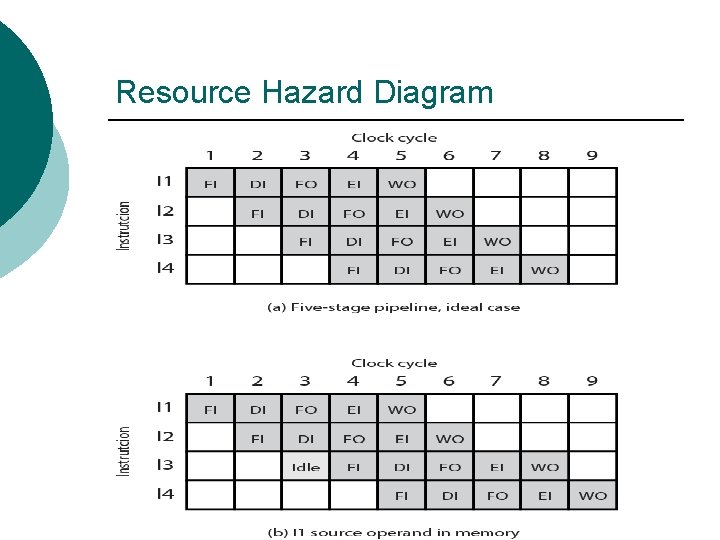

Resource Hazards

- Two (or more) instructions in the pipeline need the same resource.
- The instructions are executed serially rather than in parallel for part of the pipeline.
- Example: if main memory has a single port, an operand read or write cannot be performed in parallel with an instruction fetch.
- One solution: increase available resources:
  - Multiple main memory ports
  - Multiple ALUs
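The single-memory-port conflict can be sketched by counting memory accesses per cycle in the ideal, stall-free schedule of the six-stage pipeline. This assumes that FI always reads memory and FO reads memory only for instructions with a memory operand (writes in WO are ignored for brevity); `memory_port_conflicts` and `ports` are hypothetical names.

```python
STAGES = ["FI", "DI", "CO", "FO", "EI", "WO"]


def memory_port_conflicts(uses_memory_operand, ports=1):
    """Return {cycle: [(instruction, stage), ...]} for cycles where memory demand exceeds ports.

    uses_memory_operand[i] is True if instruction i+1 fetches an operand from
    memory in FO. Assumes the ideal schedule: instruction i+1 enters FI in cycle i+1.
    """
    demand = {}
    for i, mem_op in enumerate(uses_memory_operand):
        accesses = ["FI"] + (["FO"] if mem_op else [])  # stages that touch main memory
        for stage in accesses:
            cycle = i + STAGES.index(stage) + 1  # 1-based cycle number
            demand.setdefault(cycle, []).append((f"I{i + 1}", stage))
    return {c: who for c, who in sorted(demand.items()) if len(who) > ports}


if __name__ == "__main__":
    program = [True, False, False, False]  # only I1 has a memory operand
    print(memory_port_conflicts(program))           # {4: [('I1', 'FO'), ('I4', 'FI')]}
    print(memory_port_conflicts(program, ports=2))  # {}
```

With one port, the operand fetch of I1 collides with the instruction fetch of I4 in cycle 4, so one of them must stall; adding a second port (one of the solutions listed above) removes the conflict.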

Resource Hazard Diagram

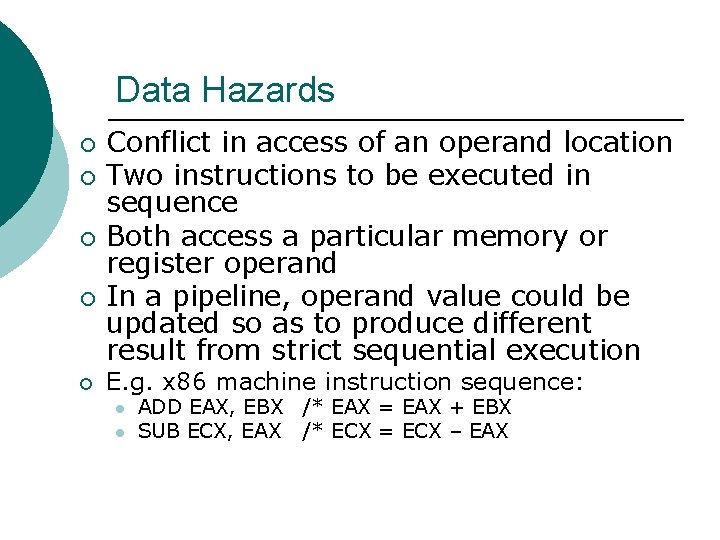

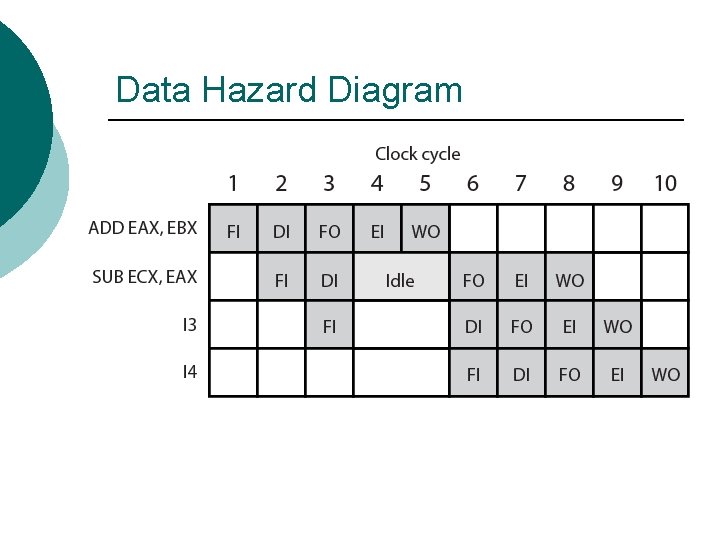

Data Hazards

- Conflict in access to an operand location:
  - Two instructions are to be executed in sequence.
  - Both access a particular memory or register operand.
  - In a pipeline, the operand value could be updated so as to produce a different result from strict sequential execution.
- E.g., x86 machine instruction sequence:
  - `ADD EAX, EBX` /* EAX = EAX + EBX */
  - `SUB ECX, EAX` /* ECX = ECX – EAX */
- SUB needs the new value of EAX before ADD has written it back, so the pipeline must stall SUB until the value is available.
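The ADD/SUB dependency is a read-after-write hazard, and it can be detected mechanically by comparing the registers each instruction writes with those a later instruction reads. This sketch assumes that up to `in_flight` earlier instructions have not yet written back their results and ignores the flags register; `Instr`, `raw_hazards`, and `in_flight` are hypothetical names.

```python
from typing import FrozenSet, List, NamedTuple, Tuple


class Instr(NamedTuple):
    text: str
    writes: FrozenSet[str]  # registers the instruction writes
    reads: FrozenSet[str]   # registers the instruction reads


# x86 two-operand form: the destination is also a source.
ADD = Instr("ADD EAX, EBX", writes=frozenset({"EAX"}), reads=frozenset({"EAX", "EBX"}))
SUB = Instr("SUB ECX, EAX", writes=frozenset({"ECX"}), reads=frozenset({"ECX", "EAX"}))


def raw_hazards(program: List[Instr], in_flight: int) -> List[Tuple[str, str, str]]:
    """Return (producer, consumer, register) for every read-after-write hazard.

    A hazard exists when a consumer reads a register written by one of the
    `in_flight` instructions before it, which may not have written back yet.
    """
    found = []
    for j, consumer in enumerate(program):
        for i in range(max(0, j - in_flight), j):
            for reg in sorted(program[i].writes & consumer.reads):
                found.append((program[i].text, consumer.text, reg))
    return found


if __name__ == "__main__":
    print(raw_hazards([ADD, SUB], in_flight=2))
    # [('ADD EAX, EBX', 'SUB ECX, EAX', 'EAX')]
```

Setting `in_flight=0` models strict sequential execution, where every result is written before the next instruction starts, and the function then reports no hazards.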

Data Hazard Diagram

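Control Hazard

- Also known as a branch hazard
- Occurs when the pipeline brings in instructions that must subsequently be discarded, e.g. the instructions fetched after a conditional branch that turns out to be taken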
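The cost of a control hazard can be estimated by adding a fixed penalty per taken branch to the ideal cycle count. This sketch assumes the branch outcome is only known at the end of stage `resolve_stage` (for the six-stage pipeline, EI is stage 5), so the `resolve_stage - 1` instructions fetched behind the branch are discarded; the function and parameter names are hypothetical.

```python
def pipeline_cycles(n_instructions, k_stages, taken_branches, resolve_stage):
    """Cycles to complete n instructions in a k-stage pipeline with taken branches.

    n_instructions counts only the instructions that actually complete.
    Assumptions: equal-length stages, no other hazards, and each taken branch
    is resolved at the end of stage `resolve_stage` (1-based), so the
    resolve_stage - 1 instructions fetched behind it are discarded.
    """
    ideal = k_stages + (n_instructions - 1)
    penalty_per_branch = resolve_stage - 1  # wasted fetch slots per taken branch
    return ideal + taken_branches * penalty_per_branch


if __name__ == "__main__":
    # Six stages, branch resolved in EI (stage 5): each taken branch costs 4 cycles.
    print(pipeline_cycles(5, 6, taken_branches=1, resolve_stage=5))  # 14
    print(pipeline_cycles(5, 6, taken_branches=0, resolve_stage=5))  # 10
```

Resolving the branch in an earlier stage shrinks `resolve_stage` and therefore the penalty, which is why designs try to determine branch outcomes as early in the pipeline as possible.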

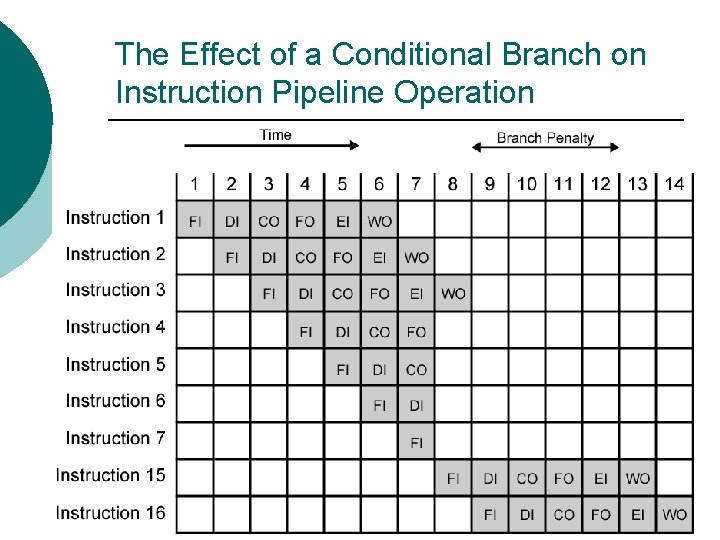

The Effect of a Conditional Branch on Instruction Pipeline Operation

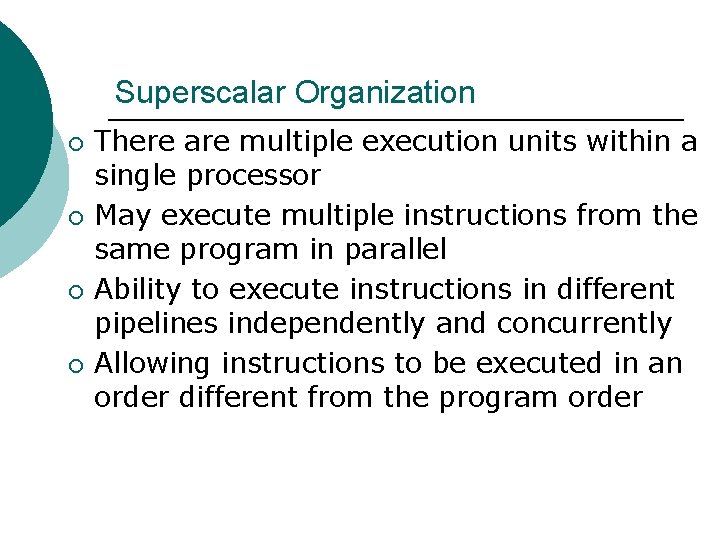

Superscalar Organization

- There are multiple execution units within a single processor.
  - They may execute multiple instructions from the same program in parallel.
- Ability to execute instructions in different pipelines independently and concurrently
  - Allows instructions to be executed in an order different from the program order
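Issuing several independent instructions per cycle, possibly out of program order, can be sketched with a greedy scheduler. This is a simplified model, assuming every instruction takes one cycle, there are always enough execution units of the right kind, and up to `width` ready instructions issue each cycle; `superscalar_schedule` and `deps` are hypothetical names.

```python
def superscalar_schedule(deps, width):
    """Map each instruction index to the cycle in which it executes.

    deps[i] lists the indices of earlier instructions whose results
    instruction i needs. Assumptions: one-cycle latency, unlimited
    execution units, up to `width` ready instructions issued per cycle,
    not necessarily in program order.
    """
    if width < 1:
        raise ValueError("width must be at least 1")
    for i, d in enumerate(deps):
        if any(not 0 <= j < i for j in d):
            raise ValueError(f"instruction {i} may only depend on earlier instructions")
    executed = {}  # instruction index -> cycle in which it executed
    cycle = 0
    while len(executed) < len(deps):
        cycle += 1
        issued = []
        for i in range(len(deps)):
            if len(issued) == width:
                break
            # Ready only if every producer finished in an earlier cycle.
            ready = all(executed.get(j, cycle) < cycle for j in deps[i])
            if i not in executed and ready:
                issued.append(i)
        for i in issued:
            executed[i] = cycle
    return executed


if __name__ == "__main__":
    deps = [[], [0], [], []]  # instruction 1 needs the result of instruction 0
    print(superscalar_schedule(deps, width=2))  # {0: 1, 2: 1, 1: 2, 3: 2}
    print(superscalar_schedule(deps, width=1))  # {0: 1, 1: 2, 2: 3, 3: 4}
```

With two-wide issue, instruction 2 executes in cycle 1, ahead of instruction 1, which must wait for its operand; the four instructions finish in 2 cycles instead of 4.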

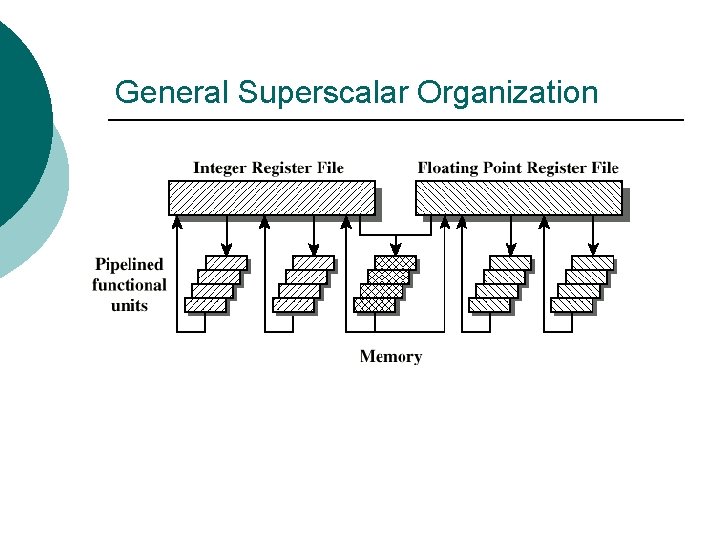

General Superscalar Organization

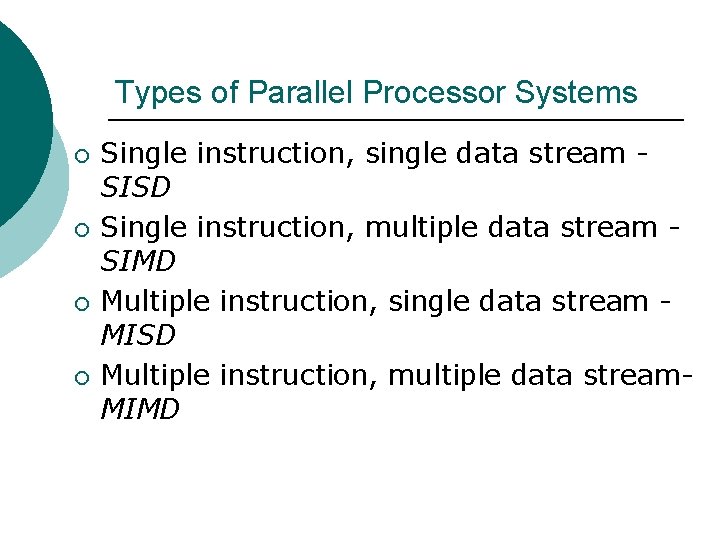

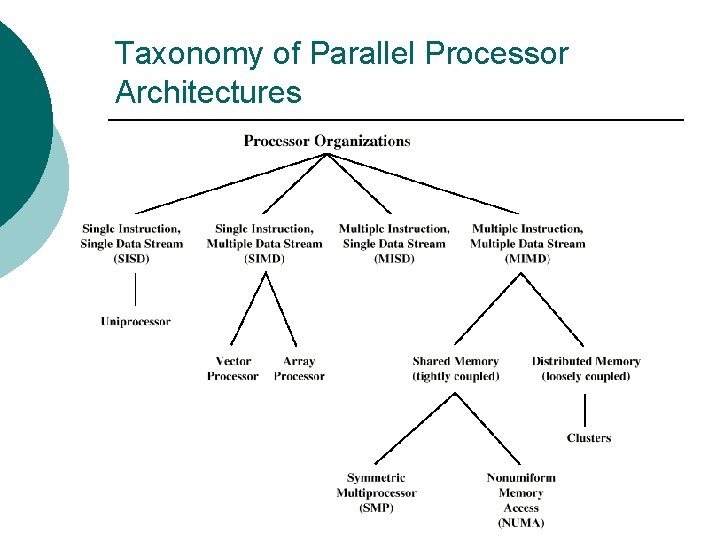

Types of Parallel Processor Systems

- Single instruction, single data stream (SISD)
- Single instruction, multiple data stream (SIMD)
- Multiple instruction, single data stream (MISD)
- Multiple instruction, multiple data stream (MIMD)

Single Instruction, Single Data Stream (SISD)

- Single processor
- Single instruction stream
- Data stored in a single memory
- Example: uniprocessor

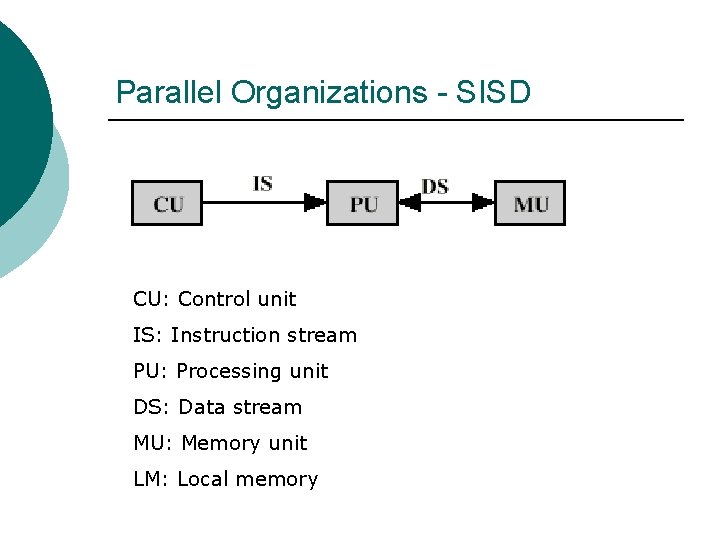

Parallel Organizations - SISD

Legend: CU = control unit; IS = instruction stream; PU = processing unit; DS = data stream; MU = memory unit; LM = local memory.

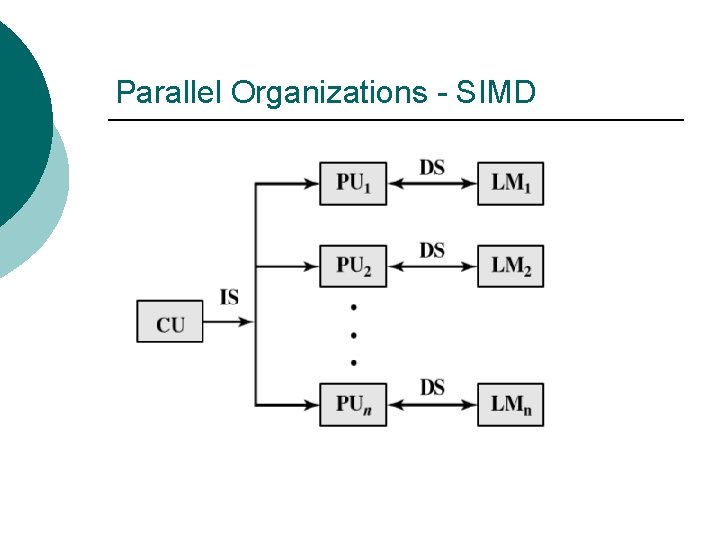

Single Instruction, Multiple Data Stream (SIMD)

- A single machine instruction controls the simultaneous execution of a number of processing elements.
- Each processing element has an associated data memory.
- Each instruction is executed on a different set of data by the different processors.
- Example: vector and array processors
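The SIMD idea of one broadcast instruction acting on many data items can be modeled in a few lines. This sketch only models the semantics: the Python loop runs sequentially, whereas real SIMD hardware applies the operation in all processing elements at once. `simd_step` and its parameters are hypothetical names.

```python
from operator import add, mul


def simd_step(op, lane_a, lane_b):
    """Model one SIMD instruction.

    The control unit broadcasts `op`; processing element i applies it to the
    operands lane_a[i] and lane_b[i] held in its own local memory.
    """
    if len(lane_a) != len(lane_b):
        raise ValueError("each processing element needs one operand from each vector")
    return [op(a, b) for a, b in zip(lane_a, lane_b)]


if __name__ == "__main__":
    print(simd_step(add, [1, 2, 3, 4], [10, 20, 30, 40]))  # [11, 22, 33, 44]
    print(simd_step(mul, [1, 2, 3, 4], [10, 20, 30, 40]))  # [10, 40, 90, 160]
```

A single instruction stream (`op`) drives many data streams (the lanes), which is what distinguishes SIMD from SISD.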

Parallel Organizations - SIMD

Multiple Instruction, Single Data Stream (MISD)

- A sequence of data is transmitted to a set of processors.
- Each processor executes a different instruction sequence.
- This structure has never been implemented commercially.

Multiple Instruction, Multiple Data Stream (MIMD)

- A set of processors simultaneously executes different instruction sequences on different sets of data.
- Examples:
  - SMPs (symmetric multiprocessors)
  - Clusters
  - NUMA (nonuniform memory access) systems
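The MIMD structure, different instruction sequences working on different data, can be sketched with two workers from the standard library. Note the assumption: in CPython, threads share the global interpreter lock, so this shows the structure rather than true parallel execution (`ProcessPoolExecutor` would run on separate cores). The task functions `checksum` and `longest_word` are hypothetical examples.

```python
from concurrent.futures import ThreadPoolExecutor


def checksum(data):
    """Instruction stream 1: numeric work on one data set."""
    return sum(data) % 256


def longest_word(text):
    """Instruction stream 2: text processing on a different data set."""
    return max(text.split(), key=len)


def run_mimd():
    # Each worker runs its own program on its own data.
    with ThreadPoolExecutor(max_workers=2) as pool:
        f1 = pool.submit(checksum, [200, 100, 7])
        f2 = pool.submit(longest_word, "multiple instruction multiple data")
        return f1.result(), f2.result()


if __name__ == "__main__":
    print(run_mimd())  # (51, 'instruction')
```

Contrast this with the SIMD model above the MISD slide: there, one operation is broadcast to all lanes; here, each worker follows its own instruction sequence.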

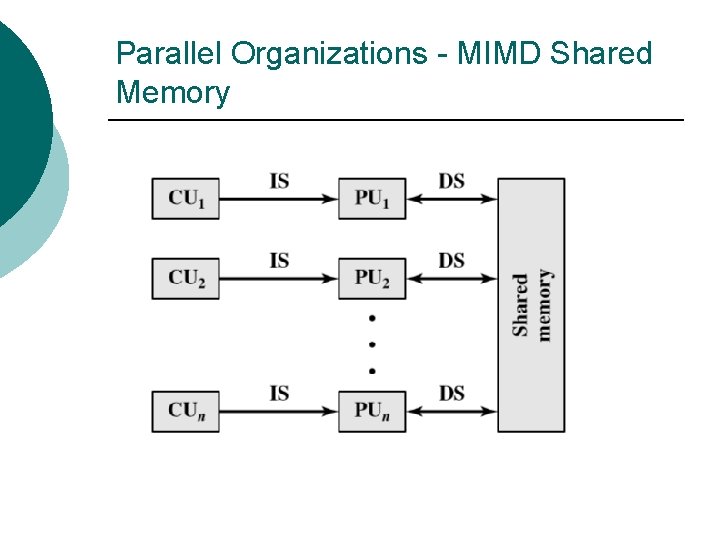

Parallel Organizations - MIMD Shared Memory

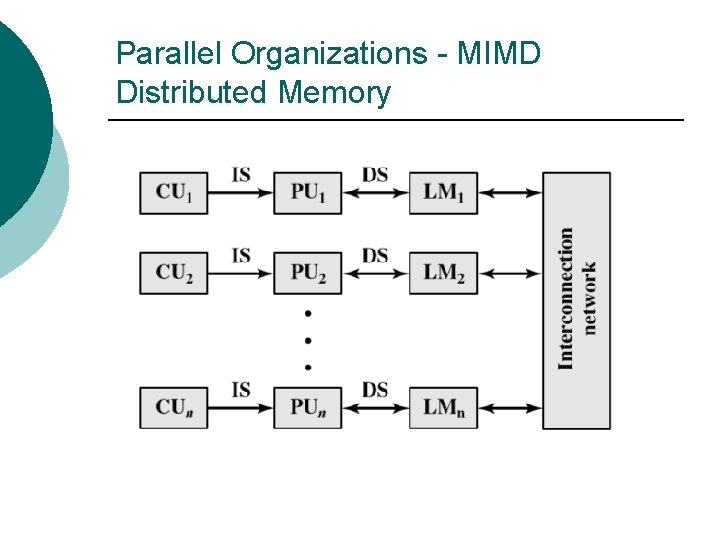

Parallel Organizations - MIMD Distributed Memory

Taxonomy of Parallel Processor Architectures

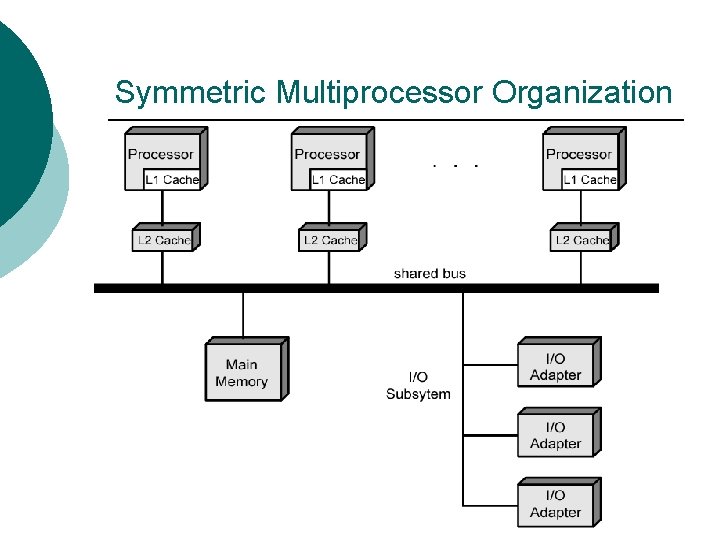

Symmetric Multiprocessor Organization

SMP Advantages

- Performance
  - Greater performance than a single processor of the same type, if some of the work can be done in parallel
- Availability
  - Since all processors can perform the same functions, failure of a single processor does not halt the system
- Incremental growth
  - User can enhance performance by adding additional processors
- Scaling
  - Vendors can offer a range of products based on the number of processors

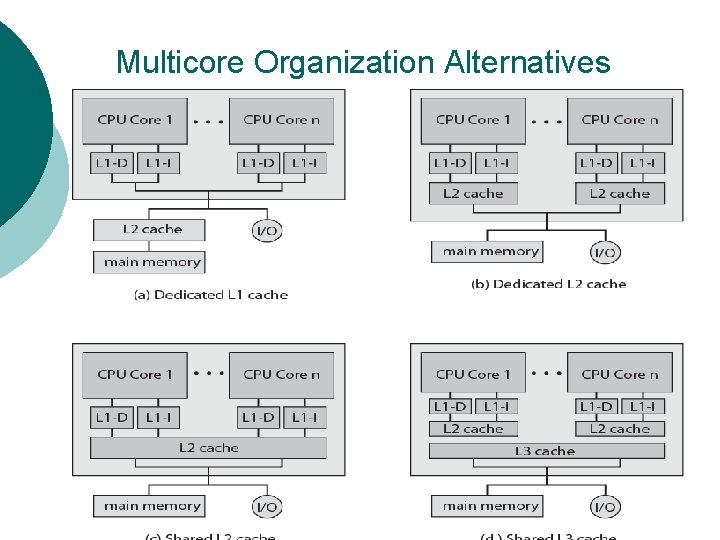

Multicore Organization

- Main design variables:
  - Number of core processors on the chip
  - Number of levels of cache on the chip
  - Amount of shared cache
- Examples:
  - (a) ARM11 MPCore
  - (b) AMD Opteron
  - (c) Intel Core Duo
  - (d) Intel Core i7

Multicore Organization Alternatives

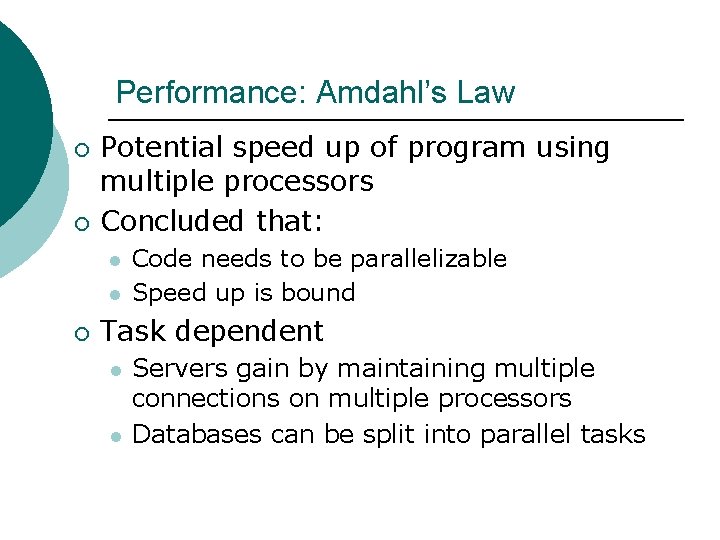

Performance: Amdahl’s Law

- Potential speedup of a program using multiple processors
- Concluded that:
  - Code needs to be parallelizable
  - Speedup is bounded
- Task dependent:
  - Servers gain by maintaining multiple connections on multiple processors
  - Databases can be split into parallel tasks

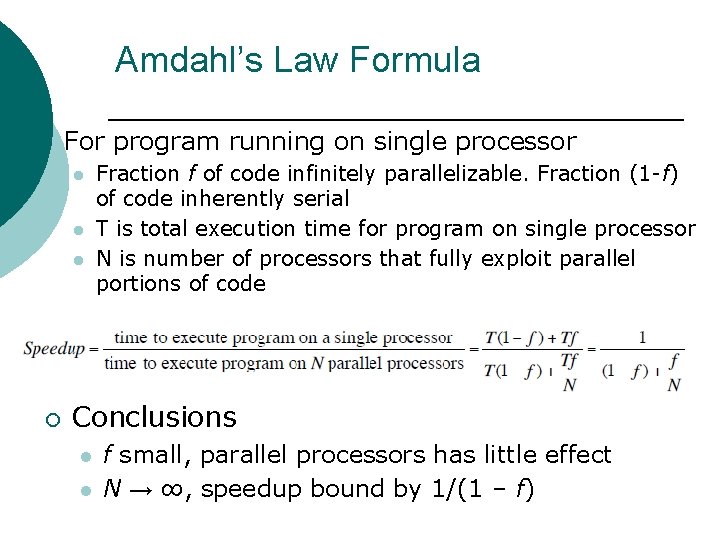

Amdahl’s Law Formula

- For a program running on a single processor:
  - Fraction $f$ of the code is infinitely parallelizable
  - Fraction $(1 - f)$ of the code is inherently serial
  - $T$ is the total execution time of the program on a single processor
  - $N$ is the number of processors that fully exploit the parallel portions of the code
- Speedup: $\text{Speedup} = \dfrac{T}{(1 - f)T + \dfrac{fT}{N}} = \dfrac{1}{(1 - f) + \dfrac{f}{N}}$
- Conclusions:
  - When $f$ is small, parallel processors have little effect
  - As $N \to \infty$, speedup is bounded by $1/(1 - f)$
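Amdahl’s formula can be evaluated directly to see how quickly the serial fraction caps the speedup. This is a minimal sketch of the standard formula; `amdahl_speedup` is a hypothetical name, with `f` the parallelizable fraction and `n` the number of processors as defined above.

```python
def amdahl_speedup(f, n):
    """Speedup = 1 / ((1 - f) + f / n) for parallel fraction f on n processors."""
    if not 0.0 <= f <= 1.0:
        raise ValueError("f must lie in [0, 1]")
    if n < 1:
        raise ValueError("n must be at least 1")
    return 1.0 / ((1.0 - f) + f / n)


if __name__ == "__main__":
    for n in (1, 2, 8, 64, 1024):
        print(n, round(amdahl_speedup(0.9, n), 2))
    # With f = 0.9 the speedup approaches, but never exceeds, 1 / (1 - 0.9) = 10.
```

For example, with $f = 0.9$ and $N = 10$ the speedup is $1/(0.1 + 0.09) \approx 5.26$, barely half the processor count, which illustrates the "speedup is bounded" conclusion.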

RQ: 12.5; P: 12.5, 12.8; RQ: 17.1, 17.3
