Parallel Processing A Perspective Hardware and Software 1


- Slides: 52


Introduction to PP (slide 2)
- From “Efficient Linked List Ranking Algorithms and Parentheses Matching as a New Strategy for Parallel Algorithm Design”, R. Halverson
- Chapter 1 – Introduction to Parallel Processing

Parallel Processing Research (slide 3)
- 1980s: a great deal of research and publications
- 1990s: hardware was not very successful, so the research area “dies”. Why?
- Early 2000s: a resurgence begins. Why?
- Will it continue to be successful this time?

Goal of PP (slide 4)
- Why bother with parallel processing?
- Goal: solve problems faster!
- In reality: faster, but also efficiently!
- Work-optimal: the parallel algorithm runs faster than the sequential algorithm in proportion to the number of processors used.
- Sometimes work-optimality is seemingly not possible.

PP Issues (slide 5)
- Processors: number, connectivity, communication
- Memory: shared vs. local
- Data structures
- Data distribution
- Problem-solving strategies

Parallel Problems (slide 6)
- One approach: try to develop a parallel solution to a problem without considering the hardware.
  - Apply the solution to the specific hardware and determine the extra cost, if any.
  - If it is not acceptably efficient, try again!

Parallel Problems (slide 7)
- Another approach: armed with knowledge of the strategies, data structures, etc. that work well for a particular hardware, develop a solution with that specific hardware in mind.
- Third approach: modify a solution designed for one hardware configuration to suit another.

Real World Problems (slide 8)
- Inherently parallel: the nature or structure of the problem lends itself to parallelism.
- Examples
  - Mowing a lawn
  - Cleaning a house
  - Grading papers
- These problems are easily divided into subproblems, with very little overhead.

Real World Problems (slide 9)
- Not inherently parallel: parallelism is possible, but it is more complex to define or comes with (excessive) overhead cost.
- Examples
  - Balancing a checkbook
  - Giving a haircut
  - Wallpapering a room

Some Computer Problems (slide 10)
- Are these “inherently parallel” or not?
  - Processing customers’ monthly bills
  - Payroll checks
  - Building student grade reports from class grade sheets
  - Searching for an item in a linked list
  - A video game program
  - Searching a state driver’s license database
- Is the problem in the hardware, the software, or the data? What assumptions are made?

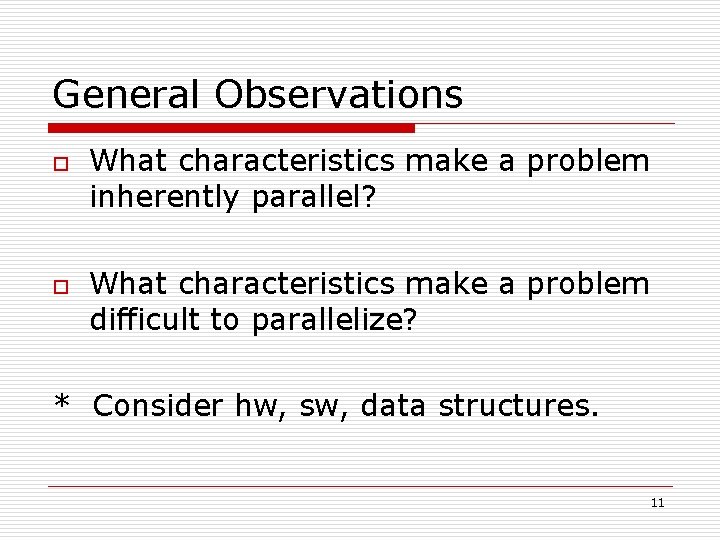

General Observations (slide 11)
- What characteristics make a problem inherently parallel?
- What characteristics make a problem difficult to parallelize?
- Consider hardware, software, and data structures.

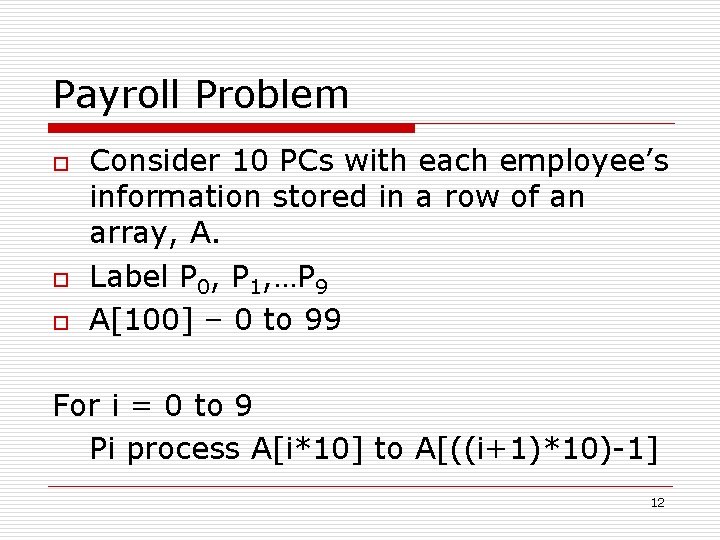

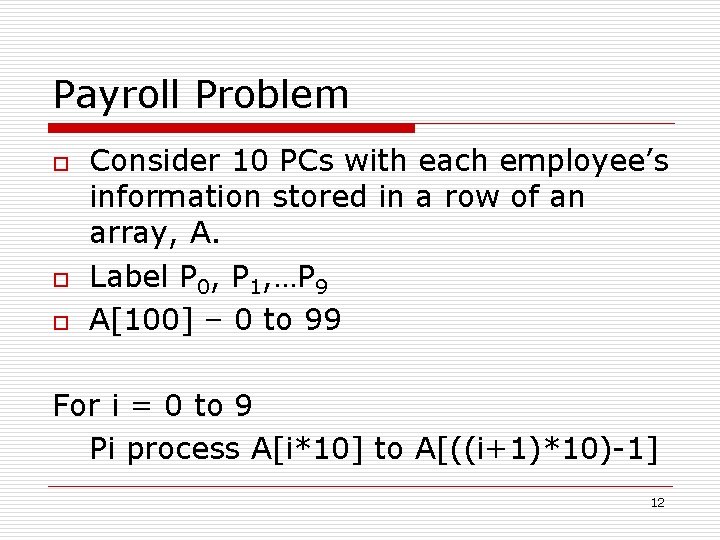

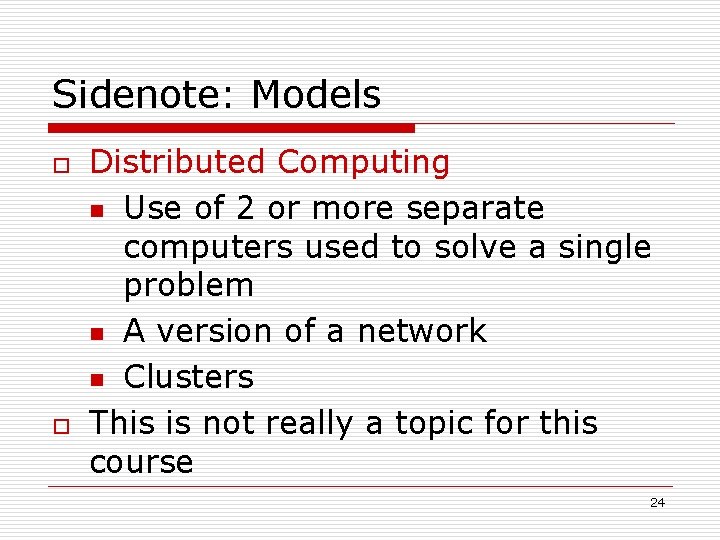

Payroll Problem (slide 12)
- Consider 10 PCs, with each employee’s information stored in a row of an array, A.
- Label the PCs P0, P1, …, P9.
- A[100] has indices 0 to 99.
- For i = 0 to 9, Pi processes A[i*10] to A[((i+1)*10)-1].

![Code for Payroll: For i = 0 to 9, Pi process A[i*10] to A[((i+1)*10)-1]](https://slidetodoc.com/presentation_image_h2/a3b70775a14862a22012cde49c21a884/image-13.jpg)

Code for Payroll (slide 13)
- Assignment: for i = 0 to 9, Pi processes A[i*10] to A[((i+1)*10)-1].
- Each PC runs a process in parallel:

    For each Pi, i = 0 to 9 do    // separate process
        For j = 0 to 9
            Process A[i*10 + j]
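The block assignment on this slide can be sketched in Python. This is only an illustration under assumptions: `process_record`, the `(hours, rate)` record layout, and the sample data are invented here, and a thread pool stands in for the 10 PCs (in CPython, threads illustrate the structure rather than deliver a real speedup for CPU-bound work).

```python
from concurrent.futures import ThreadPoolExecutor

NUM_PROCESSORS = 10   # P0 .. P9, as on the slide
BLOCK_SIZE = 10       # records per processor (100 / 10)

def process_record(record):
    # Hypothetical stand-in for the real payroll computation:
    # gross pay = hours * hourly rate.
    hours, rate = record
    return hours * rate

def process_block(i, records):
    # Processor Pi handles A[i*10] .. A[((i+1)*10)-1].
    start = i * BLOCK_SIZE
    return [process_record(records[start + j]) for j in range(BLOCK_SIZE)]

def run_payroll(records):
    # One worker per block; map() returns the blocks in index order.
    with ThreadPoolExecutor(max_workers=NUM_PROCESSORS) as pool:
        blocks = pool.map(lambda i: process_block(i, records),
                          range(NUM_PROCESSORS))
        return [pay for block in blocks for pay in block]

# Made-up data: employee k worked 40 hours at a rate of 10 + k.
A = [(40, 10 + k) for k in range(100)]
paychecks = run_payroll(A)
```

No block reads or writes another block's records, which is why the payroll problem needs no combining step.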

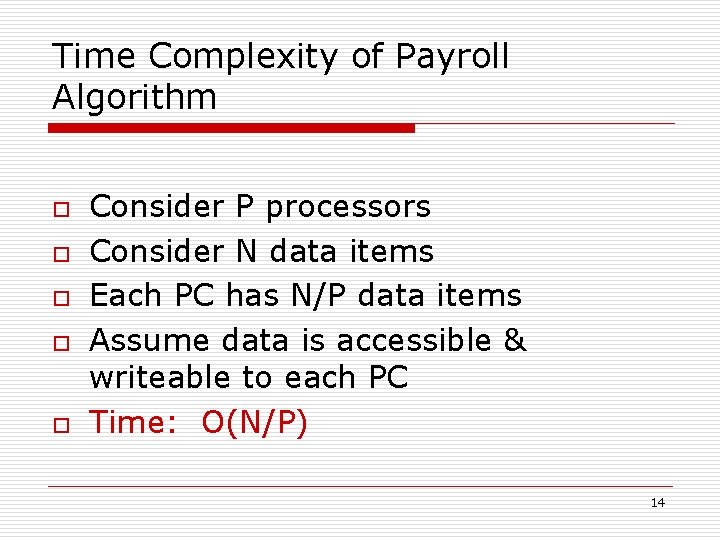

Time Complexity of Payroll Algorithm (slide 14)
- Consider P processors.
- Consider N data items.
- Each PC has N/P data items.
- Assume the data is accessible and writeable by each PC.
- Time: O(N/P)

Payroll Questions (slide 15)
- Now that we have a solution, it must be applied to hardware. Which hardware?
- Main question: where is the array, and how is it accessed by each processor?
- One shared memory or many local memories?
- Where are the results placed?

What about I/O? (slide 16)
- Generally, in parallel algorithms, I/O is disregarded.
- Assumption: data is stored in the available memory.
- Assumption: results are written back to memory.
- Data input and output are generally independent of the processing algorithm.

Balancing a Checkbook (slide 17)
- Consider the same hardware and data array.
- The data can still be distributed and processed in the same manner as the payroll.
  - Each block treats deposits as additions and checks as subtractions, then totals its page (10 totals).
- BUT the 10 totals must then be combined into the final total.
- This is the overhead.

Complexity of Checkbook (slide 18)
- Consider P processors.
- Consider N data items.
- Each PC has N/P data items.
- Assume the data is accessible and writeable.
- Time for each section: O(N/P)
- Combination of P subtotals
  - Time for combining: O(P) if done one at a time, down to O(log P) if done pairwise in parallel
- Total: O(N/P + P) to O(N/P + log P)
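The two phases can be sketched as follows. This is a sequential simulation under assumptions: the ledger is a made-up list of signed amounts, and N is taken to be evenly divisible by P. Each pass of the `while` loop stands for one parallel round in which disjoint pairs are added at the same time.

```python
def local_totals(amounts, p):
    # Phase 1: each of the p processors totals its own block of N/p items.
    # Deposits are positive amounts, checks are negative amounts.
    block = len(amounts) // p
    return [sum(amounts[i * block:(i + 1) * block]) for i in range(p)]

def tree_combine(totals):
    # Phase 2: pairwise combination. Each round roughly halves the number
    # of partial totals, so p totals need ceil(log2 p) rounds.
    totals = list(totals)
    rounds = 0
    while len(totals) > 1:
        paired = [totals[k] + totals[k + 1]
                  for k in range(0, len(totals) - 1, 2)]
        if len(totals) % 2 == 1:
            paired.append(totals[-1])  # odd one out waits for the next round
        totals = paired
        rounds += 1
    return totals[0], rounds

# Made-up ledger: 100 signed entries, split among 10 processors.
ledger = [(-1) ** k * (k + 1) for k in range(100)]
subtotals = local_totals(ledger, 10)
balance, rounds = tree_combine(subtotals)
```

With 10 subtotals the combine takes 4 rounds (10, 5, 3, 2, 1), which is the O(log P) end of the range; adding the subtotals one after another on a single processor is the O(P) end.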

Performance Complexity (slide 19)
- Perfect parallel algorithm
  - If the best sequential algorithm for a problem is O(f(x)), then the parallel algorithm would be O(f(x)/P).
  - This happens when there is little or no overhead.
- Actual run time
  - Typically, it takes 4 processors to achieve ½ the run time.

Performance Measures (slide 20)
- Run time: not a practical measurement
- Assume T1 and Tp are the run times using 1 and p processors, respectively.
- Speedup: S = T1/Tp
- Work: W = p * Tp (also known as cost)
- If W = O(T1), then the algorithm is work (cost) optimal and achieves linear speedup.
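A small numeric illustration of these measures. The timings are invented, chosen to match the earlier remark that 4 processors typically halve the run time.

```python
def speedup(t1, tp):
    # S = T1 / Tp
    return t1 / tp

def work(p, tp):
    # W = p * Tp (also called cost)
    return p * tp

# Hypothetical timings in seconds, for illustration only.
t1 = 100.0          # run time on one processor
p, tp = 4, 50.0     # run time on four processors

s = speedup(t1, tp)
w = work(p, tp)
```

Here the speedup is 2 on 4 processors and the work is twice T1. Whether that still counts as W = O(T1) depends on whether the ratio W/T1 stays bounded as p grows, which a single measurement cannot show.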

Scalability (slide 21)
- An algorithm is said to be scalable if its performance increases linearly with the number of processors.
- Implication: the algorithm sustains good performance over a wide range of processor counts.

Scalability (slide 22)
- What about continuing to add processors?
- At what point does adding more processors stop improving the run time?
- Does adding processors ever cause the algorithm to take more time?
- What is the optimal number of processors?
- Consider W = p * Tp = O(T1)
  - Solve for p
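One way to work the “solve for p” exercise is to use the checkbook problem as the example. This assumes a sequential time of T_1 = O(N) and the pairwise-combining parallel time T_p = O(N/p + log p); constant factors are dropped.

```latex
\begin{align*}
W &= p \cdot T_p = p\left(\frac{N}{p} + \log p\right) = N + p\log p \\
W = O(T_1) = O(N) &\iff p \log p = O(N) \\
&\Longleftarrow\; p = O\!\left(\frac{N}{\log N}\right)
\end{align*}
```

Under these assumptions, the checkbook algorithm stays work-optimal with up to about N / log N processors; beyond that, the p log p term dominates the work.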

Models of Computation (slide 23)
- Two major categories
  - Shared memory, e.g. PRAM
  - Fixed connection, e.g. hypercube
- There are numerous versions of each.
- Not all are totally realizable in hardware.

Sidenote: Models (slide 24)
- Distributed computing
  - Use of 2 or more separate computers to solve a single problem
  - A version of a network
  - Clusters
- This is not really a topic for this course.

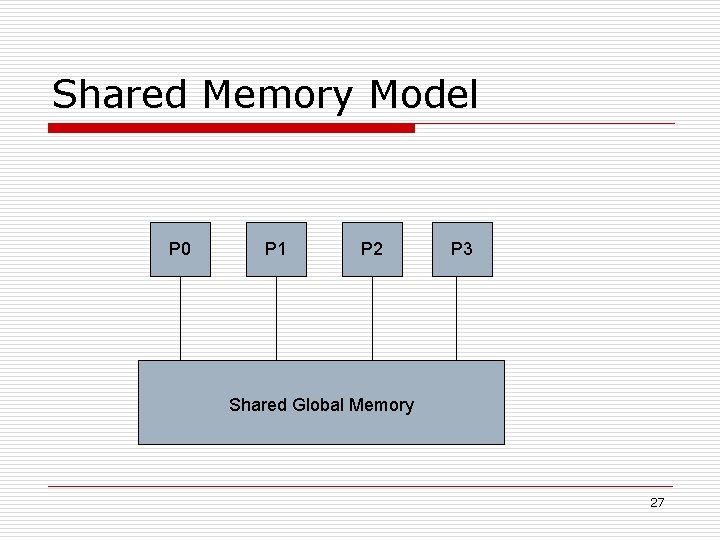

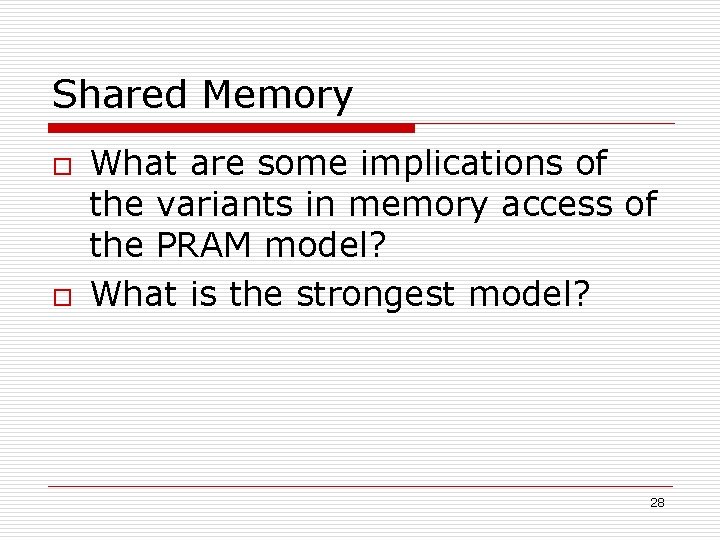

Shared Memory Model (slide 25)
- PRAM: parallel random access machine
- A category with 4 variants
  - EREW, CREW, ERCW, CRCW
- All communication is through a shared global memory.
- Each PC has a small local memory.

Variants of PRAM (slide 26)
- EREW, CREW, ERCW, CRCW
- Concurrent read: 2 or more processors may read the same (or different) memory location simultaneously.
- Exclusive read: 2 or more processors may read global memory simultaneously only if each is accessing a unique address.
- Concurrent and exclusive write are defined similarly.
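The four variants can be read as a rule about which sets of simultaneous accesses are legal in one time step. The sketch below encodes that rule; the function name `allowed` and the list-of-addresses representation are invented for illustration.

```python
from collections import Counter

def allowed(reads, writes, model):
    # reads / writes: addresses touched by different processors in one step.
    # model: one of "EREW", "CREW", "ERCW", "CRCW".
    concurrent_read = any(c > 1 for c in Counter(reads).values())
    concurrent_write = any(c > 1 for c in Counter(writes).values())
    read_ok = model[0] == "C" or not concurrent_read     # 1st letter: reads
    write_ok = model[2] == "C" or not concurrent_write   # 3rd letter: writes
    return read_ok and write_ok

# Two processors read address 5 in the same step; writes go to 1 and 2.
step_reads, step_writes = [5, 5], [1, 2]
erew_ok = allowed(step_reads, step_writes, "EREW")
crew_ok = allowed(step_reads, step_writes, "CREW")
```

Any step that is legal under EREW is legal under the other three variants, and CRCW accepts every step.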

Shared Memory Model (slide 27)
[Figure: processors P0, P1, P2, and P3 attached to one Shared Global Memory]

Shared Memory (slide 28)
- What are some implications of the variants in memory access of the PRAM model?
- What is the strongest model?

Fixed Connection Models (slide 29)
- Each PC contains a local memory
  - Distributed memory
- PCs are connected through some type of interconnection network
  - The interconnection network defines the model
- Communication is via message passing
- Can be synchronous or asynchronous

Interconnection Networks (slide 30)
- Bus Network (Linear)
- Ring
- Mesh
- Torus
- Hypercube

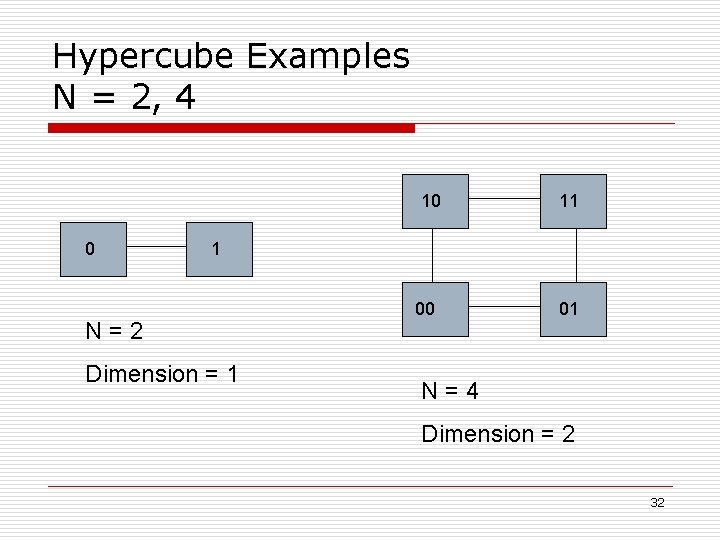

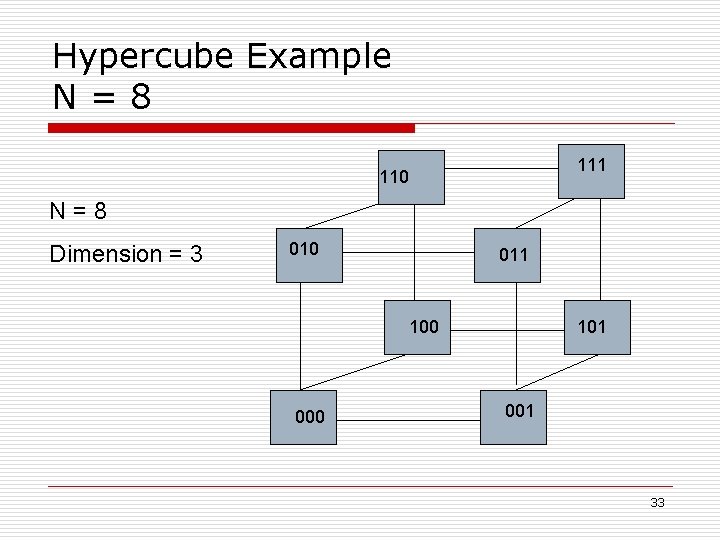

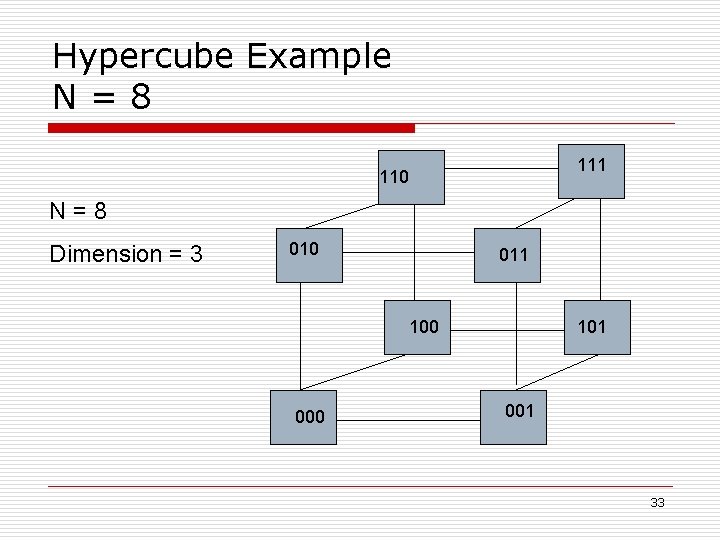

Hypercube Model (slide 31)
- Distributed memory, message passing, fixed connection, parallel computer
- N = 2^r: number of nodes
- E = r * 2^(r-1): number of edges
- Nodes are numbered 0 to N-1 in binary, such that any 2 nodes differing in exactly one bit are connected by an edge.
- Dimension is r.
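The connection rule translates directly into bit operations: flipping one of the r bits of a node's label gives one of its r neighbors. A minimal sketch (the function names are chosen here for illustration):

```python
def neighbors(node, r):
    # Flip each of the r bits in turn; each flip gives one neighbor.
    return [node ^ (1 << d) for d in range(r)]

def edges(r):
    # Collect each undirected edge once, as a (low, high) pair.
    n = 1 << r  # N = 2^r nodes
    return {(u, v) for u in range(n) for v in neighbors(u, r) if u < v}

# Print the adjacency list of the 3-dimensional hypercube in binary.
r = 3
for node in range(1 << r):
    labels = [format(v, f"0{r}b") for v in neighbors(node, r)]
    print(format(node, f"0{r}b"), "->", labels)
```

Each of the 2^r nodes has r neighbors and every edge is shared by two nodes, which gives the edge count r * 2^(r-1): 12 edges for r = 3.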

Hypercube Examples: N = 2, 4 (slide 32)
[Figure: N = 2, dimension = 1, nodes 0 and 1; N = 4, dimension = 2, nodes 00, 01, 10, and 11]

Hypercube Example: N = 8 (slide 33)
[Figure: N = 8, dimension = 3, nodes 000, 001, 010, 011, 100, 101, 110, and 111]

Hypercube Considerations (slide 34)
- Message-passing communication
  - Possible delays
- Load balancing
  - Each PC has the same workload
- Data distribution
  - Must follow connections

Consider Checkbook Problem (slide 35)
- How about the distribution of data?
  - Often the initial distribution is disregarded.
- What about the combination of the subtotals?
  - Reduction is by dimension.
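“Reduction by dimension” can be sketched as a sequential simulation. It assumes the number of nodes is a power of two (so 8 nodes here rather than the 10 PCs of the earlier example) and that `values[k]` is the subtotal already held by node k. In each step, half of the remaining nodes send across one dimension, so every message travels along a hypercube edge.

```python
def hypercube_reduce(values):
    # values[k] is the subtotal held by node k; len(values) must be 2^r.
    n = len(values)
    r = n.bit_length() - 1
    assert n == 1 << r, "node count must be a power of two"
    totals = list(values)
    for d in reversed(range(r)):
        # Step for dimension d: every node whose bit d is 1 (and whose
        # higher bits are 0) sends its total to its partner across d.
        # On real hardware these sends happen at the same time.
        for node in range(1 << d, 1 << (d + 1)):
            partner = node ^ (1 << d)
            totals[partner] += totals[node]
    return totals[0], r  # node 0 holds the sum after r steps

grand_total, steps = hypercube_reduce([1, 2, 3, 4, 5, 6, 7, 8])
```

The result lands on node 0 after r = log N steps, matching the O(log P) combining time from the checkbook analysis.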

Design Strategies (slide 36)
- Paradigm: a general strategy used to aid in the development of the solution to a problem

Paradigms (slide 37)
- Extended from sequential use
  - Divide-and-Conquer
  - Branch-and-Bound
  - Dynamic Programming

Paradigms (slide 38)
- Developed for parallel use
  - Deterministic coin tossing
  - Symmetry breaking
  - Accelerating cascades
  - Tree contraction
  - Euler tours
  - Linked list ranking
  - All nearest smaller values (ANSV)
  - Parentheses matching

Divide-and-Conquer (slide 39)
- Most basic parallel strategy
- Used in virtually every parallel algorithm
- The problem is divided into several subproblems that can be solved independently; the results of the subproblems are combined into the final solution.
- Example: checkbook problem

Dynamic Programming (slide 40)
- Divide-and-conquer technique used when subproblems are not independent but share common subproblems
- Subproblem solutions are stored in a table for use by other processes
- Often used for optimization problems
  - Minimum or maximum
  - Fibonacci numbers

Branch-and-Bound (slide 41)
- Breadth-first tree processing technique
- Uses a bounding function that allows some branches of the tree to be pruned (i.e., eliminated)
- Example: game programming

Symmetry Breaking (slide 42)
- Strategy that breaks a linked structure (e.g., a linked list) into disjoint pieces for processing
- Deterministic coin tossing
  - Using a binary representation of the index, nonadjacent elements are selected for processing
  - Often used in linked list ranking algorithms

Accelerated Cascades (slide 43)
- Applies 2 or more algorithms to a single problem
- Changes from one to another based on the ratio of the problem size to the number of processors (the threshold)
- This “fine tuning” sometimes allows for better performance

Tree Contraction (slide 44)
- Nodes of a tree are removed; the information in each removed node is combined with that of the remaining nodes
- Multiple processors are assigned to independent nodes
- The tree is reduced to a single node, which contains the solution
- E.g., arithmetic expression computation

Euler Tour (slide 45)
- Create duplicate nodes in a tree or graph, pairing each edge with an edge in the opposite direction, to create a circuit
- Allows the tree or graph to be processed as a linked list

Linked List Ranking (slide 46)
- Halverson’s area of dissertation research
- Technique to number, in order, the elements of a linked list (20+)
- Applied to a wide range of problems (23)
  - Euler Tours
  - Tree Traversals
  - Tree Searches
  - Spanning Trees & Forests
  - List Packing
  - Connected Components
  - Connectivity
  - Graph Decomposition
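To make the problem concrete, here is a sketch of list ranking by pointer jumping. This is a classic textbook technique, not one of the algorithms from the dissertation, and it is not work-optimal. The array layout (`succ[i]` is the successor of node i, the tail points to itself) is an assumption for this example, and the parallel rounds are simulated sequentially.

```python
def list_rank(succ):
    # succ[i] is the index of the node after node i; the tail points to itself.
    n = len(succ)
    nxt = list(succ)
    # Invariant: rank[i] = number of links from node i to nxt[i].
    rank = [0 if nxt[i] == i else 1 for i in range(n)]
    rounds = 0
    while any(nxt[i] != nxt[nxt[i]] for i in range(n)):
        # One synchronous round: every node reads the old arrays, then
        # all nodes update together (as n processors would on a PRAM).
        new_rank = [rank[i] + rank[nxt[i]] for i in range(n)]
        new_nxt = [nxt[nxt[i]] for i in range(n)]
        rank, nxt = new_rank, new_nxt
        rounds += 1
    return rank, rounds  # rank[i] = distance from node i to the tail

# List order 3 -> 0 -> 2 -> 1, stored in an arbitrary array order.
ranks, rounds = list_rank([2, 1, 1, 0])
```

Each round doubles the distance every pointer spans, so a list of n nodes finishes in about log n rounds. Numbering from the head instead is `(n - 1) - rank[i]`.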

All Nearest Smaller Values (slide 47)
- For each value x, find the nearest elements that are smaller than x
- Successfully applied to
  - Depth-first search of interval graphs
  - Parentheses matching
  - Line packing
  - Triangulating a monotone polygon
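A sequential sketch pins down what ANSV computes. This is the standard stack-based method, shown only as a reference for the problem statement, not a parallel algorithm; treating “smaller” as strictly smaller is an assumption here.

```python
def nearest_smaller_left(values):
    # result[i] = index of the closest element to the left of i that is
    # strictly smaller than values[i], or None if there is none.
    result = []
    stack = []  # indices whose values are strictly increasing
    for i, x in enumerate(values):
        while stack and values[stack[-1]] >= x:
            stack.pop()
        result.append(stack[-1] if stack else None)
        stack.append(i)
    return result

def nearest_smaller_right(values):
    # Mirror image: scan the reversed list, then map indices back.
    n = len(values)
    mirrored = nearest_smaller_left(values[::-1])
    return [None if j is None else n - 1 - j for j in mirrored][::-1]

data = [3, 1, 4, 1, 5, 9, 2, 6]
left = nearest_smaller_left(data)
right = nearest_smaller_right(data)
```

Every index is pushed and popped at most once, so the sequential cost is O(N), which is the baseline a work-optimal parallel version has to match.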

Parentheses Matching (slide 48)
- In a properly formed string of parentheses, find the index of each parenthesis’s mate
- Applied to solve
  - Heights of all nodes in a tree
  - Extreme values in a tree
  - Lowest common ancestor
  - Balancing binary trees
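As a reference for what “the index of each mate” means, here is the usual sequential stack solution. It assumes the input is properly formed, as on the slide, and it is the sequential baseline rather than the parallel strategy this course develops.

```python
def match_parentheses(s):
    # mate[i] = index of the parenthesis that matches s[i].
    # Assumes s is a properly formed string of "(" and ")".
    mate = [None] * len(s)
    open_stack = []  # indices of "(" still waiting for a mate
    for i, ch in enumerate(s):
        if ch == "(":
            open_stack.append(i)
        else:
            j = open_stack.pop()
            mate[i], mate[j] = j, i
    return mate

mates = match_parentheses("(()())")
```

For `"(()())"` the outer pair is indices 0 and 5, and the two inner pairs are (1, 2) and (3, 4).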

Parallel Algorithm Design (slide 49)
- Identify problems and/or classes of problems for which a particular strategy will work
- Apply to the appropriate hardware
  - Most of the paradigms have been optimized for a variety of parallel architectures

Broadcast Operation (slide 50)
- Not a paradigm, but an operation used in many parallel algorithms
- Provide one or more items of data to all the processors (individual memories)
- Let P be the number of processors. For most models, the broadcast operation has O(log P) time complexity.

Broadcast (slide 51)
- Shared memory (EREW)
  - P0 writes for P1; P0 & P1 write for P2 & P3; P0–P3 write for P4–P7
  - Then each PC has a copy to be read in one time unit
- Hypercube
  - P0 sends to P1; P0 & P1 send to P2 & P3; etc.
- Both are O(log P)
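The doubling pattern can be written as a schedule of (sender, receiver) pairs. The function name and the pair representation are made up for this sketch; the same schedule reads as “writes a copy for” on the EREW PRAM and “sends to” on the hypercube.

```python
def broadcast_schedule(p):
    # Returns a list of rounds; each round is a list of (sender, receiver).
    rounds = []
    have = 1  # processors 0 .. have-1 already hold the data
    while have < p:
        # Every holder passes a copy to the processor `have` positions up.
        sends = [(src, src + have) for src in range(have) if src + have < p]
        rounds.append(sends)
        have *= 2
    return rounds

schedule = broadcast_schedule(8)
```

The number of holders doubles each round, so P processors are covered in ceil(log2 P) rounds. Because `src < have` and `have` is a power of two, sender and receiver labels differ in exactly one bit, so each send follows a hypercube edge.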

Remainder of this Course (slide 52)
- Cover Chapters 1 & 2
- Cover parts of Chapters 3, 4, 5
- Cover Chapter 6
- Other chapters to be determined
- Graduate student presentations
- Videos
- Exams, homework, quizzes