Parallel jobs with MPI and hands-on tutorial

Enol Fernández del Castillo, Instituto de Física de Cantabria

Introduction
• What is a parallel job?
  – A job that uses more than one CPU simultaneously to solve a problem
• Why go parallel?
  – Save time
  – Solve larger problems
  – Overcome hardware limits (speed, integration limits, economic limits)

Curso Grid & e-Ciencia, Valencia, July 2010.

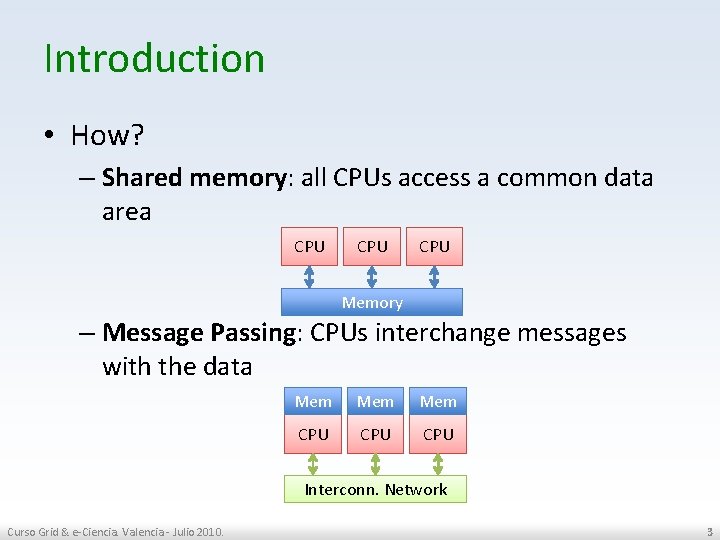

Introduction
• How?
  – Shared memory: all CPUs access a common data area
    [Figure: several CPUs attached to one shared memory]
  – Message passing: CPUs exchange messages containing the data
    [Figure: CPUs, each with its own memory, connected by an interconnection network]

Introduction
• Speedup: how much faster the parallel version runs than the sequential one, S(p) = T(1) / T(p), where T(p) is the run time on p CPUs.
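A small worked illustration using the standard definitions (not spelled out on the slide): if T(1) is the sequential run time and T(p) the run time on p CPUs, the speedup is S(p) = T(1) / T(p) and the parallel efficiency is E(p) = S(p) / p. The sketch below simply encodes these two formulas in C; the function names are ours and the timings in the usage note are made-up numbers.

```c
#include <assert.h>

/* Speedup S(p) = T(1) / T(p): how many times faster the parallel run is. */
double speedup(double t_serial, double t_parallel)
{
    assert(t_serial > 0.0 && t_parallel > 0.0);
    return t_serial / t_parallel;
}

/* Efficiency E(p) = S(p) / p: fraction of the ideal (linear) speedup. */
double efficiency(double t_serial, double t_parallel, int nprocs)
{
    assert(nprocs > 0);
    return speedup(t_serial, t_parallel) / (double)nprocs;
}
```

For example, a job that takes 100 s sequentially and 25 s on 4 CPUs has S = 4 and E = 1 (ideal linear speedup); if it took 50 s on 4 CPUs, S would be 2 and E only 0.5.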

Introduction
• Shared Memory Model
  – Tasks share a common address space, which they read and write asynchronously.
  – Various mechanisms such as locks or semaphores may be used to control access to the shared memory.
  – There is no need to specify explicitly how data is communicated between tasks, so program development can often be simplified.
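To make the lock mechanism concrete, here is a minimal sketch using POSIX threads (our choice of API; the slide does not name one). All threads update one shared variable directly, with no explicit communication, and a mutex protects each update. The function name shared_count is hypothetical.

```c
#include <assert.h>
#include <pthread.h>

#define MAX_THREADS 64

/* Shared data: every thread reads and writes this same variable. */
static long counter = 0;
static pthread_mutex_t counter_lock = PTHREAD_MUTEX_INITIALIZER;

/* Thread body: increment the shared counter *arg times under the lock. */
static void *worker(void *arg)
{
    long iterations = *(const long *)arg;
    for (long i = 0; i < iterations; i++) {
        pthread_mutex_lock(&counter_lock);   /* enter critical section */
        counter++;                           /* read-modify-write */
        pthread_mutex_unlock(&counter_lock); /* leave critical section */
    }
    return NULL;
}

/* Start nthreads threads on the shared counter and return its final value
   (nthreads * iterations, since every update is protected by the lock). */
long shared_count(int nthreads, long iterations)
{
    pthread_t threads[MAX_THREADS];

    assert(nthreads > 0 && nthreads <= MAX_THREADS);
    counter = 0;
    for (int t = 0; t < nthreads; t++)
        pthread_create(&threads[t], NULL, worker, &iterations);
    for (int t = 0; t < nthreads; t++)
        pthread_join(threads[t], NULL);
    return counter;
}
```

shared_count(4, 10000) returns 40000. Without the lock/unlock pair the result may come out smaller, because concurrent increments can overwrite each other. On older systems, compile with -pthread.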

Introduction
• Message Passing Model
  – Tasks use their own local memory during computation.
  – Multiple tasks can reside on the same physical machine as well as across an arbitrary number of machines.
  – Tasks exchange data by sending and receiving messages.
  – Data transfer usually requires cooperative operations to be performed by each process. For example, a send operation must have a matching receive operation.
• MPI (Message Passing Interface) is an API for programming message passing parallel applications
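As a sketch of the send/receive pairing outside MPI, the function below (hypothetical name pipe_exchange) uses two POSIX processes connected by a pipe. Each process has its own memory, so the value can only travel as a message: the child's write() plays the role of the send and the parent's read() is the matching receive. This illustrates the model only; MPI libraries use their own transports.

```c
#include <assert.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Two processes with separate memory exchange one int through a pipe:
   the child performs the "send" and the parent the matching "receive".
   Returns the received value, or -1 on error. */
int pipe_exchange(int value)
{
    int fds[2];                 /* fds[0]: read end, fds[1]: write end */
    int received = -1;

    if (pipe(fds) != 0)
        return -1;

    pid_t pid = fork();
    if (pid < 0) {
        close(fds[0]);
        close(fds[1]);
        return -1;
    }

    if (pid == 0) {             /* child: the sender */
        close(fds[0]);
        ssize_t n = write(fds[1], &value, sizeof value);
        close(fds[1]);
        _exit(n == (ssize_t)sizeof value ? 0 : 1);
    }

    /* parent: the receiver; read() blocks until the data arrives */
    close(fds[1]);
    if (read(fds[0], &received, sizeof received) != (ssize_t)sizeof received)
        received = -1;
    close(fds[0]);
    waitpid(pid, NULL, 0);
    return received;
}
```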

Before MPI
• Every computer manufacturer had its own programming interface
• Pros
  – Offers the best performance on the specific system
• Cons
  – Sometimes requires in-depth knowledge of the underlying hardware
  – Programmers have to learn different programming interfaces and paradigms (e.g. shared memory vs. distributed memory)
  – Not portable
  – The effort has to be repeated every time an application is ported to a new architecture

MPI
• Defines a uniform, standard (vendor-neutral) API for message passing
• Allows efficient implementations
• Provides C, C++ and Fortran bindings
• Several MPI implementations are available:
  – From hardware providers (IBM, HP, SGI…), optimized for their systems
  – Academic implementations
• Most widely used implementations:
  – MPICH (from ANL/MSU): includes support for a wide range of devices (even using Globus for communication)
  – Open MPI (joint effort of the FT-MPI, LAM/MPI and PACX-MPI developers): modular implementation that allows the use of advanced hardware at run time

MPI-1 & MPI-2
• The MPI-1 (1994) standard includes:
  – Point-to-point communication
  – Collective operations
  – Process groups and topologies
  – Communication contexts
  – Datatype management
• MPI-2 (1997) adds:
  – Dynamic process management
  – File I/O
  – One-sided communications
  – Extended collective operations

Processes
• Every MPI job consists of N processes
  – N ≥ 1 (a single-process job, N = 1, is valid)
• Each process in a job can execute a different binary
  – In general it is the same binary, which executes different code paths based on the process number (rank)
• Processes are organized in groups

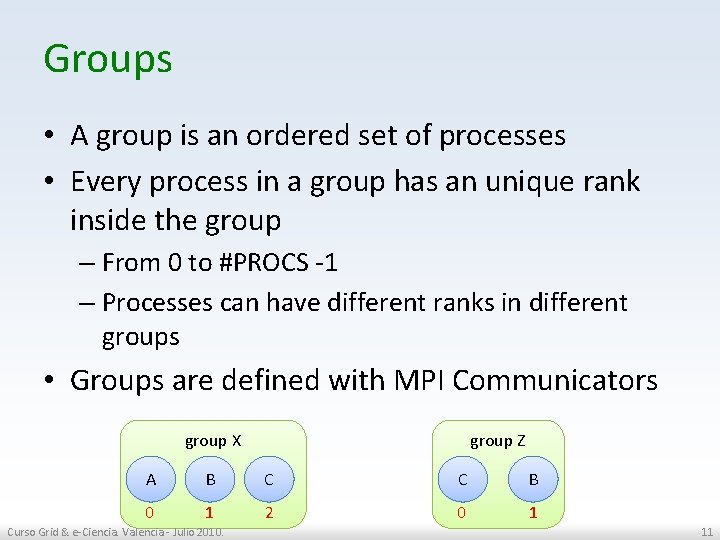

Groups
• A group is an ordered set of processes
• Every process in a group has a unique rank inside the group
  – From 0 to (number of processes - 1)
  – A process can have different ranks in different groups
• Groups are associated with MPI communicators (e.g. MPI_COMM_WORLD)
[Figure: group X = (A, B, C) with ranks 0, 1, 2; group Z = (C, B) with ranks 0, 1]
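The rank rule in the figure can be sketched in a few lines of plain C (no MPI involved): treating a group as an ordered array of process identifiers, a process's rank is simply its index. The helper group_rank is hypothetical; in MPI the corresponding questions are answered by functions such as MPI_Group_rank and MPI_Group_translate_ranks.

```c
#include <assert.h>
#include <stddef.h>

/* A group is an ordered list of process identifiers; a process's rank is
   its index in that list, or -1 if it is not a member of the group. */
int group_rank(const char *group, size_t size, char process)
{
    assert(group != NULL || size == 0);
    for (size_t i = 0; i < size; i++)
        if (group[i] == process)
            return (int)i;
    return -1;
}
```

With X = {A, B, C} and Z = {C, B} as in the figure, C has rank 2 in X but rank 0 in Z, B has rank 1 in both, and A is not a member of Z (-1).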

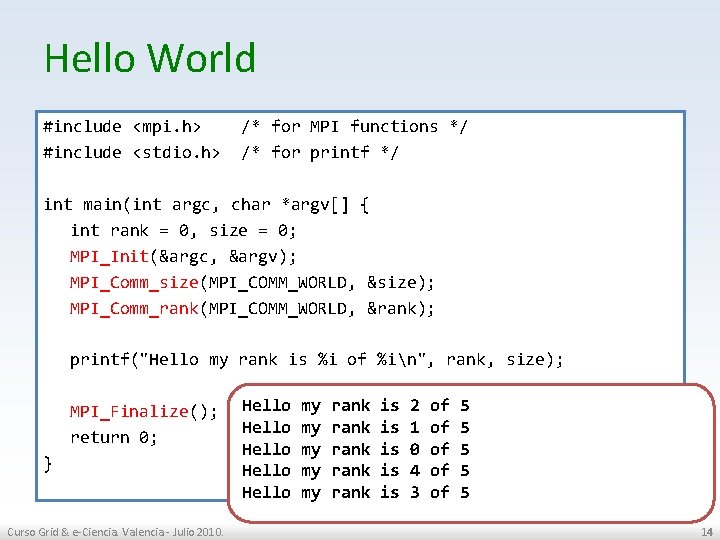

Hello World

```c
#include <mpi.h>    /* for MPI functions */
#include <stdio.h>  /* for printf */

int main(int argc, char *argv[])
{
    int rank = 0, size = 0;

    MPI_Init(&argc, &argv);
    MPI_Comm_size(MPI_COMM_WORLD, &size);
    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    printf("Hello my rank is %i of %i\n", rank, size);
    MPI_Finalize();
    return 0;
}
```

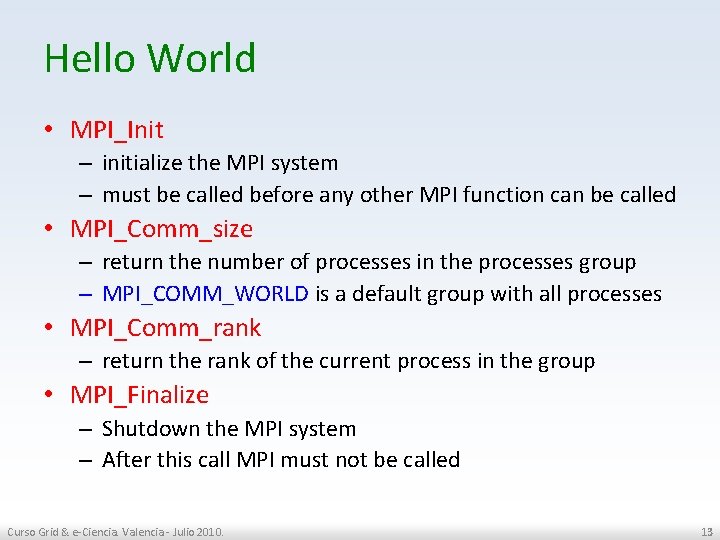

Hello World
• MPI_Init
  – Initializes the MPI system
  – Must be called before any other MPI function
• MPI_Comm_size
  – Returns the number of processes in the group of the communicator
  – MPI_COMM_WORLD is the predefined communicator that contains all processes
• MPI_Comm_rank
  – Returns the rank of the calling process in the group
• MPI_Finalize
  – Shuts down the MPI system
  – No MPI function may be called after it

Hello World
Sample output of the program with 5 processes (the order of the lines can change from run to run):

```
Hello my rank is 2 of 5
Hello my rank is 1 of 5
Hello my rank is 0 of 5
Hello my rank is 4 of 5
Hello my rank is 3 of 5
```

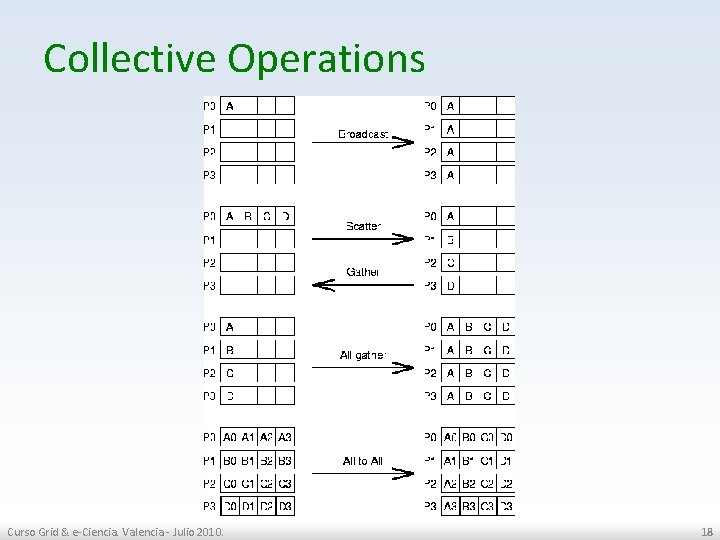

Communication between processes
• Two types of communication between processes:
  – Point-to-point: the source process knows the rank of the destination process and sends a message addressed to it.
  – Collective: all the processes in the group take part in the communication.
• Most of the functions used here are blocking: a call returns only when its local part of the operation is complete. For a receive, this means the process waits until the whole message has arrived.

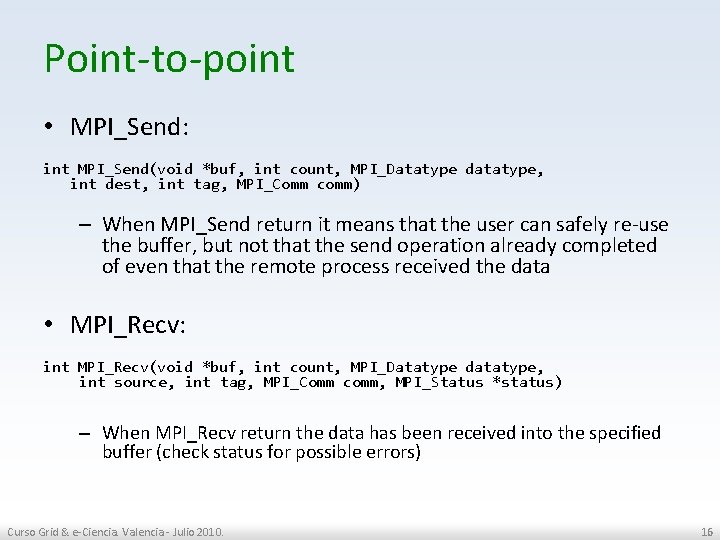

Point-to-point
• MPI_Send: when it returns, the user can safely reuse the buffer, but this does not guarantee that the message has been delivered, or even that the remote process has started receiving it
• MPI_Recv: when it returns, the data has been received into the specified buffer (check status for possible errors)

```c
int MPI_Send(void *buf, int count, MPI_Datatype datatype,
             int dest, int tag, MPI_Comm comm);
int MPI_Recv(void *buf, int count, MPI_Datatype datatype,
             int source, int tag, MPI_Comm comm, MPI_Status *status);
```


Point-to-point: ring example
[Figure: processes 0, 1, 2 connected in a ring]

```c
#include <mpi.h>
#include <stdio.h>

int main(int argc, char *argv[])
{
    MPI_Status status;
    int rank = 0, size = 0;
    int next = 0, prev = 0;
    int data = 0;

    MPI_Init(&argc, &argv);
    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    MPI_Comm_size(MPI_COMM_WORLD, &size);

    /* Neighbours in the ring 0 -> 1 -> ... -> size-1 -> 0 */
    prev = (rank + (size - 1)) % size;
    next = (rank + 1) % size;

    /* Every process sends first and then receives. This relies on MPI
       buffering the small message; a synchronous send would deadlock here,
       and MPI_Sendrecv is the safer alternative. */
    MPI_Send(&rank, 1, MPI_INT, next, 0, MPI_COMM_WORLD);
    MPI_Recv(&data, 1, MPI_INT, prev, 0, MPI_COMM_WORLD, &status);
    printf("%i received %i\n", rank, data);

    MPI_Finalize();
    return 0;
}
```
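The neighbour arithmetic of the ring can be checked without an MPI installation. This sequential sketch (hypothetical helper names) stores each rank's copy of data in an array slot and performs the same shift: every rank sends its own number to next, so each rank ends up holding the number of prev.

```c
#include <assert.h>

/* Neighbours in a ring of size processes, computed as in the MPI example. */
int ring_prev(int rank, int size) { return (rank + (size - 1)) % size; }
int ring_next(int rank, int size) { return (rank + 1) % size; }

/* Sequential simulation of one ring shift: received[r] plays the role of
   the variable data on rank r after MPI_Recv. Each rank "sends" its own
   rank number to ring_next(rank, size). */
void ring_shift(int size, int received[])
{
    assert(size > 0);
    for (int rank = 0; rank < size; rank++)
        received[ring_next(rank, size)] = rank;
}
```

With 3 processes this yields 0 received 2, 1 received 0 and 2 received 1, which are the lines the MPI program should print (in some order).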

Collective Operations
[Figure not preserved]
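Since the figure is missing, here is a sequential sketch of what the two collectives used in the next example compute, with buf[r] standing for the copy of a variable on rank r. It models only the result of MPI_Bcast and of MPI_Reduce with MPI_SUM; MPI libraries implement them with message exchanges (often tree-shaped), not with a loop over a shared array. The sim_ function names are hypothetical.

```c
#include <assert.h>

/* buf[r] stands for the copy of a variable held by rank r. */

/* Result of a broadcast: every rank ends up with the root's value. */
void sim_bcast(int buf[], int nranks, int root)
{
    assert(nranks > 0 && root >= 0 && root < nranks);
    int value = buf[root];
    for (int r = 0; r < nranks; r++)
        buf[r] = value;
}

/* Result of a sum reduction: the total of all ranks' contributions,
   which MPI_Reduce delivers only to the root. */
double sim_reduce_sum(const double contrib[], int nranks)
{
    assert(nranks > 0);
    double total = 0.0;
    for (int r = 0; r < nranks; r++)
        total += contrib[r];
    return total;
}
```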


Collective Operations Example

```c
#include <mpi.h>
#include <stdio.h>

int main(int argc, char *argv[])
{
    int n, myid, numprocs, i;
    double mypi, pi, h, sum, x;

    MPI_Init(&argc, &argv);
    MPI_Comm_size(MPI_COMM_WORLD, &numprocs);
    MPI_Comm_rank(MPI_COMM_WORLD, &myid);

    n = 10000;  /* default # of rectangles */
    /* Send rank 0's value of n to all processes */
    MPI_Bcast(&n, 1, MPI_INT, 0, MPI_COMM_WORLD);

    h = 1.0 / (double) n;
    sum = 0.0;
    /* Each rank handles rectangles myid+1, myid+1+numprocs, ... */
    for (i = myid + 1; i <= n; i += numprocs) {
        x = h * ((double)i - 0.5);
        sum += 4.0 / (1.0 + x*x);
    }
    mypi = h * sum;

    /* Add up the partial results into pi on rank 0 */
    MPI_Reduce(&mypi, &pi, 1, MPI_DOUBLE, MPI_SUM, 0, MPI_COMM_WORLD);
    if (myid == 0) {
        printf("pi is approximately %.16f\n", pi);
    }
    MPI_Finalize();
    return 0;
}
```
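The work decomposition in this example can be checked sequentially. The sketch below (hypothetical function names, no MPI) runs the same loop once per simulated rank and adds the partial results, which is what MPI_Reduce with MPI_SUM does on rank 0. The loop applies the midpoint rule to the integral of 4/(1+x²) from 0 to 1, whose exact value is π.

```c
#include <assert.h>

/* Partial result of one rank: the same loop as in the MPI example. */
double partial_pi(int n, int myid, int numprocs)
{
    double h = 1.0 / (double)n, sum = 0.0;
    for (int i = myid + 1; i <= n; i += numprocs) {
        double x = h * ((double)i - 0.5);   /* midpoint of rectangle i */
        sum += 4.0 / (1.0 + x * x);
    }
    return h * sum;
}

/* Sequential stand-in for MPI_Reduce(..., MPI_SUM, 0, ...): add the
   partial results of all simulated ranks. */
double simulated_pi(int n, int numprocs)
{
    assert(n > 0 && numprocs > 0);
    double pi = 0.0;
    for (int id = 0; id < numprocs; id++)
        pi += partial_pi(n, id, numprocs);
    return pi;
}
```

simulated_pi(10000, 4) and simulated_pi(10000, 1) agree up to rounding error, since only the order of the additions changes, and both are within about 1e-9 of π.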

Take into account…
• MPI is not magic:
  – "I installed XXX-MPI on 3 of my machines but my Photoshop doesn't run faster?!"
• MPI is a standard, not an implementation
• MPI is not the holy grail
  – Some problems don't really fit into the message passing paradigm
  – It's quite easy to write a parallel MPI application that performs worse than the sequential version
  – MPI works fine on shared memory systems, but an OpenMP solution may work better there

Some MPI resources
• MPI Forum: http://www.mpi-forum.org/
• Open MPI: http://www.open-mpi.org/
• MPICH2: http://www.mcs.anl.gov/research/projects/mpich2/
• MPI tutorials:
  – http://www2.epcc.ed.ac.uk/computing/training/document_archive/mpi-course/mpicourse.book_1.html
  – https://computing.llnl.gov/tutorials/mpi/

Moving to the grid…
• There is no standard way of starting an MPI application
  – No common syntax for mpirun; mpiexec support is optional
• The cluster where the MPI job is supposed to run may not have a shared file system
  – How to distribute the binary and input files?
  – How to gather the output?
• Different clusters over the Grid are managed by different Local Resource Management Systems (PBS, LSF, SGE, …)
  – Where is the list of machines that the job can use?
  – What is the correct format for this list?
• How to compile an MPI program?
  – How can a physicist working on a Windows workstation compile code for/with an Itanium MPI implementation?

MPI-Start
• Provides a single interface for the upper layer to run an MPI job
• Allows support for new MPI implementations to be added without modifying the Grid middleware
• Supports "simple" file distribution
• Provides some support to help users manage their data
[Figure: layer stack, from top to bottom: Grid Middleware, MPI-Start, MPI, Resources]

MPI-Start design goals
• Portable
  – The program must be able to run under any supported operating system
• Modular and extensible architecture
  – Plugin/component architecture
• Relocatable
  – Must be independent of absolute paths, to adapt to different site configurations
  – Remote "injection" of mpi-start along with the job
• "Remote" debugging features

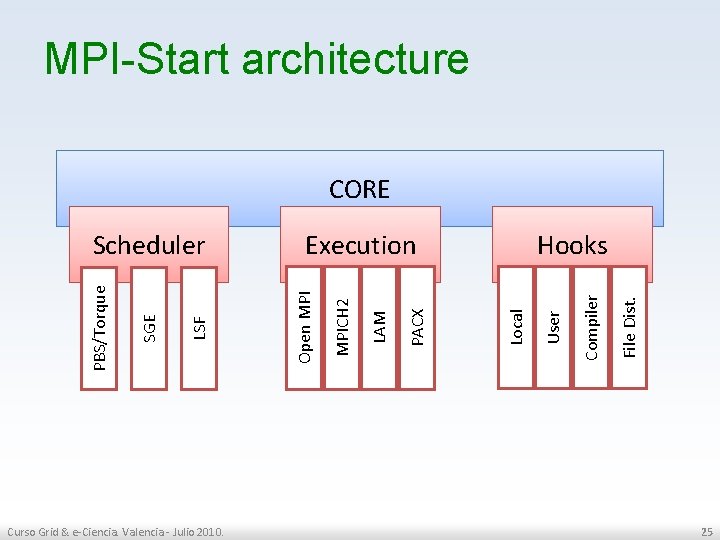

MPI-Start architecture
[Figure: the MPI-Start CORE surrounded by three plugin groups. Execution: Open MPI, MPICH2, LAM, PACX. Scheduler: SGE, PBS/Torque, LSF. Hooks: Local, User, Compiler, File Dist.]

MPI-Start flow
• START
• Is there a scheduler plugin for the current environment? If not, dump the environment and EXIT.
• Ask the scheduler plugin for a machinefile in the default format.
• Is there an execution plugin for the selected MPI? If not, dump the environment and EXIT.
• Activate the MPI plugin and prepare mpirun.
• Trigger the pre-run hooks.
• Start mpirun.
• Trigger the post-run hooks.
• EXIT
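The decision points of this flow can be sketched in C. This is only an illustration: MPI-Start itself is implemented as shell scripts, and the table contents below are assumptions. The SGE entry uses $PE_HOSTFILE, which the debugging output later in this tutorial shows being checked; PBS_NODEFILE and LSB_HOSTS are host-list variables set by PBS and LSF, but whether MPI-Start checks exactly these is not shown here. The execution plugin names are assumed lowercase I2G_MPI_TYPE values, and all function names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical scheduler plugin table (illustration only). */
struct scheduler_plugin {
    const char *name;       /* scheduler name */
    const char *env_var;    /* assumed to be set only under this scheduler */
};

static const struct scheduler_plugin schedulers[] = {
    {"sge", "PE_HOSTFILE"},
    {"pbs", "PBS_NODEFILE"},
    {"lsf", "LSB_HOSTS"},
};

/* Assumed I2G_MPI_TYPE values for the execution plugins in the figure. */
static const char *const execution_plugins[] = {
    "openmpi", "mpich2", "lam", "pacx",
};

#define COUNT(a) (sizeof (a) / sizeof (a)[0])

/* First decision: find a scheduler plugin for the current environment. */
const char *detect_scheduler(void)
{
    for (size_t i = 0; i < COUNT(schedulers); i++)
        if (getenv(schedulers[i].env_var) != NULL)
            return schedulers[i].name;
    return NULL;
}

/* Second decision: is there an execution plugin for the selected MPI? */
int has_execution_plugin(const char *mpi_type)
{
    assert(mpi_type != NULL);
    for (size_t i = 0; i < COUNT(execution_plugins); i++)
        if (strcmp(execution_plugins[i], mpi_type) == 0)
            return 1;
    return 0;
}

/* Returns 0 if mpirun would be started, 1 where the flow would dump the
   environment and exit instead. */
int mpi_start_flow(const char *mpi_type)
{
    if (detect_scheduler() == NULL)
        return 1;
    /* ...ask the scheduler plugin for a machinefile... */
    if (!has_execution_plugin(mpi_type))
        return 1;
    /* ...activate the MPI plugin, prepare mpirun, run pre-run hooks,
       start mpirun, run post-run hooks... */
    return 0;
}
```

Keeping the plugins in tables mirrors the "modular and extensible" design goal: supporting a new scheduler or MPI implementation means adding an entry, not changing the flow.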

Using MPI-Start
• Interface with environment variables:
  – I2G_MPI_APPLICATION: the executable
  – I2G_MPI_APPLICATION_ARGS: the parameters to be passed to the executable
  – I2G_MPI_TYPE: the MPI implementation to use (e.g. openmpi, ...)
  – I2G_MPI_VERSION: which version of the MPI implementation to use; if not defined, the default version is used

Using MPI-Start
• More variables:
  – I2G_MPI_PRECOMMAND: a command that is prepended to the mpirun command line (e.g. time)
  – I2G_MPI_PRE_RUN_HOOK: points to a shell script that must contain a "pre_run_hook" function. This function is called before the parallel application is started (usage: compilation of the executable)
  – I2G_MPI_POST_RUN_HOOK: like I2G_MPI_PRE_RUN_HOOK, but the script must define a "post_run_hook" function that is called after the parallel application has finished (usage: upload of results)


Using MPI-Start
The script:

```sh
[imain179@i2g-ce01 ~]$ cat test2mpistart.sh
#!/bin/sh
# This is a script to show how mpi-start is called
# Set environment variables needed by mpi-start
export I2G_MPI_APPLICATION=/bin/hostname
export I2G_MPI_APPLICATION_ARGS=
export I2G_MPI_NP=2
export I2G_MPI_TYPE=openmpi
export I2G_MPI_FLAVOUR=openmpi
export I2G_MPI_JOB_NUMBER=0
export I2G_MPI_STARTUP_INFO=/home/imain179
export I2G_MPI_PRECOMMAND=time
export I2G_MPI_RELAY=
export I2G_MPI_START=/opt/i2g/bin/mpi-start
# Execute mpi-start
$I2G_MPI_START
```

The submission (in SGE):

```
[imain179@i2g-ce01 ~]$ qsub -S /bin/bash -pe openmpi 2 -l allow_slots_egee=0 ./test2mpistart.sh
```

The standard output:

```
[imain179@i2g-ce01 ~]$ cat test2mpistart.sh.o114486
Scientific Linux CERN SLC release 4.5 (Beryllium)
lflip30.lip.pt
lflip31.lip.pt
```

The standard error:

```
[lflip31] /home/imain179 > cat test2mpistart.sh.e114486
Scientific Linux CERN SLC release 4.5 (Beryllium)

real    0m0.731s
user    0m0.021s
sys     0m0.013s
```

• MPI commands are transparent to the user
  – No explicit mpiexec/mpirun instruction
  – Start the script via normal LRMS submission

PRACTICAL SESSION…

Get the examples

```sh
wget http://devel.ifca.es/~enol/mpi.tgz
tar xzf mpi.tgz
```

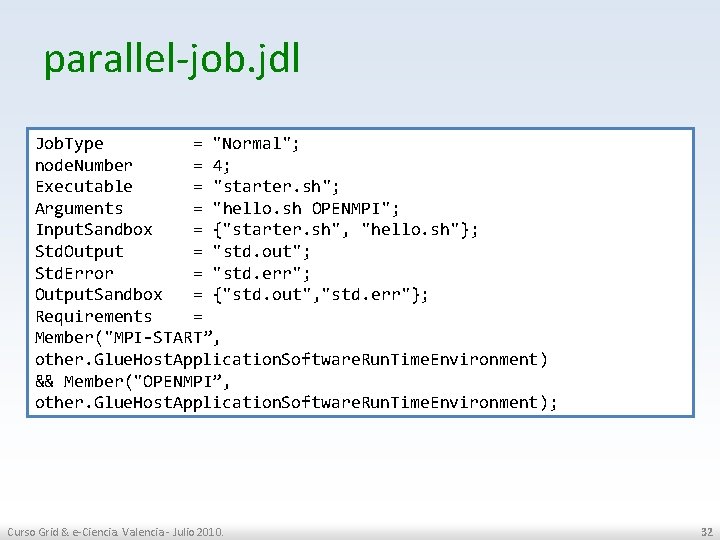

parallel-job.jdl

```
JobType = "Normal";
nodeNumber = 4;
Executable = "starter.sh";
Arguments = "hello.sh OPENMPI";
InputSandbox = {"starter.sh", "hello.sh"};
StdOutput = "std.out";
StdError = "std.err";
OutputSandbox = {"std.out", "std.err"};
Requirements = Member("MPI-START", other.GlueHostApplicationSoftwareRunTimeEnvironment)
            && Member("OPENMPI", other.GlueHostApplicationSoftwareRunTimeEnvironment);
```

starter.sh

```sh
#!/bin/bash
MY_EXECUTABLE=$1
MPI_FLAVOR=$2

# Convert flavor to lowercase for passing to mpi-start.
MPI_FLAVOR_LOWER=`echo $MPI_FLAVOR | tr '[:upper:]' '[:lower:]'`

# Pull out the correct paths for the requested flavor.
eval MPI_PATH=`printenv MPI_${MPI_FLAVOR}_PATH`

# Ensure the prefix is correctly set. Don't rely on the defaults.
eval I2G_${MPI_FLAVOR}_PREFIX=$MPI_PATH
export I2G_${MPI_FLAVOR}_PREFIX

# Touch the executable. It must exist for the shared file system check.
# If it does not, then mpi-start may try to distribute the executable
# when it shouldn't.
touch "$MY_EXECUTABLE"
# chmod +x "$MY_EXECUTABLE"

# Setup for mpi-start.
export I2G_MPI_APPLICATION=$MY_EXECUTABLE
#export I2G_MPI_APPLICATION_ARGS="./ 0 0 1"
export I2G_MPI_TYPE=$MPI_FLAVOR_LOWER

# optional hooks
#export I2G_MPI_PRE_RUN_HOOK=hooks.sh
#export I2G_MPI_POST_RUN_HOOK=hooks.sh

# If these are set then you will get more debugging information.
#export I2G_MPI_START_VERBOSE=1
#export I2G_MPI_START_TRACE=1
#export I2G_MPI_START_DEBUG=1

# Invoke mpi-start.
$I2G_MPI_START
```

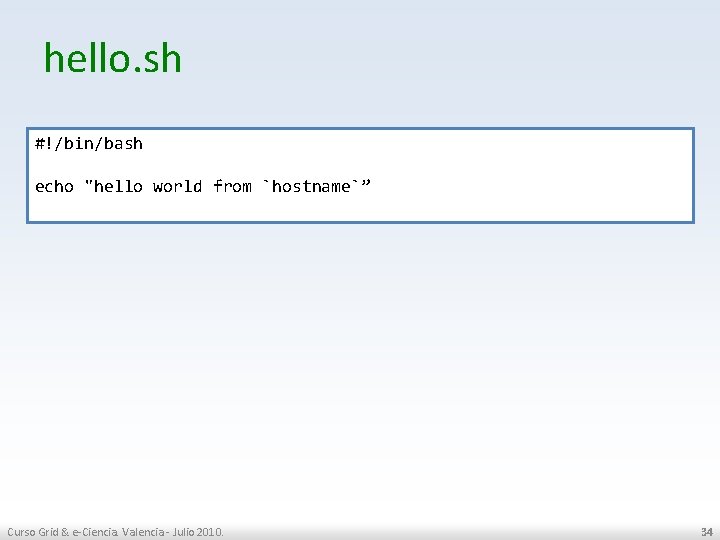

hello.sh

```sh
#!/bin/bash
echo "hello world from `hostname`"
```

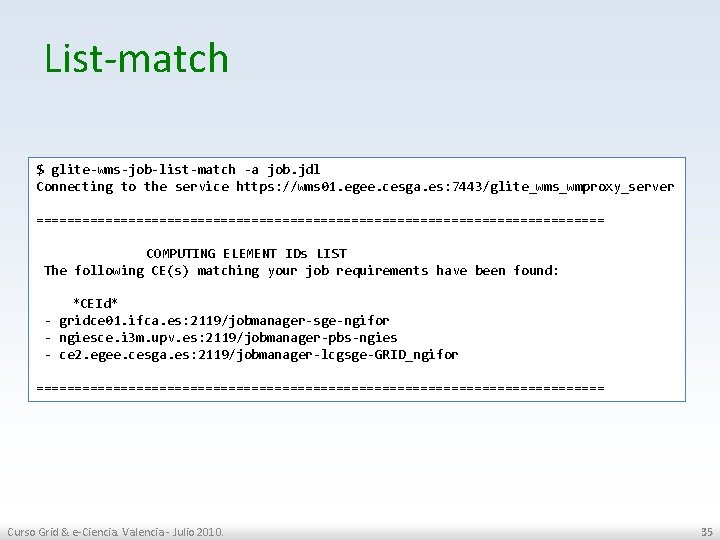

List-match

```
$ glite-wms-job-list-match -a job.jdl
Connecting to the service https://wms01.egee.cesga.es:7443/glite_wms_wmproxy_server

=====================================
COMPUTING ELEMENT IDs LIST
The following CE(s) matching your job requirements have been found:

*CEId*
gridce01.ifca.es:2119/jobmanager-sge-ngifor
ngiesce.i3m.upv.es:2119/jobmanager-pbs-ngies
ce2.egee.cesga.es:2119/jobmanager-lcgsge-GRID_ngifor
=====================================
```

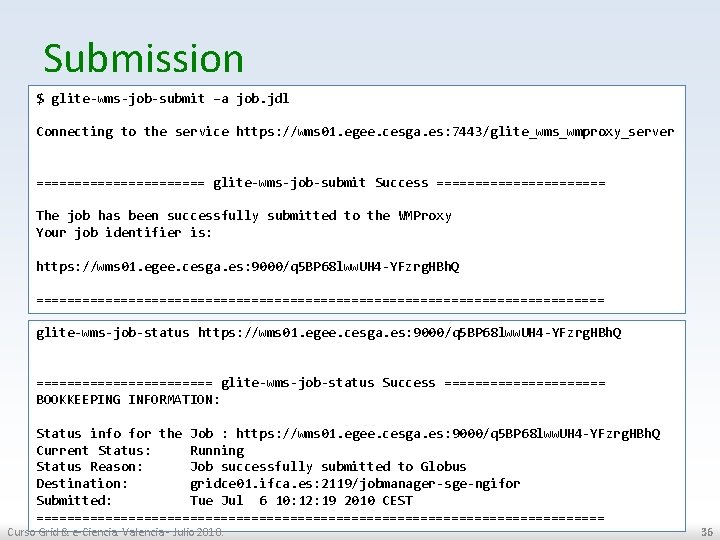

Submission

```
$ glite-wms-job-submit -a job.jdl
Connecting to the service https://wms01.egee.cesga.es:7443/glite_wms_wmproxy_server

=========== glite-wms-job-submit Success ===========
The job has been successfully submitted to the WMProxy
Your job identifier is:

https://wms01.egee.cesga.es:9000/q5BP68lwwUH4YFzrgHBhQ
=====================================

$ glite-wms-job-status https://wms01.egee.cesga.es:9000/q5BP68lwwUH4YFzrgHBhQ

============ glite-wms-job-status Success ===========
BOOKKEEPING INFORMATION:

Status info for the Job : https://wms01.egee.cesga.es:9000/q5BP68lwwUH4YFzrgHBhQ
Current Status:     Running
Status Reason:      Job successfully submitted to Globus
Destination:        gridce01.ifca.es:2119/jobmanager-sge-ngifor
Submitted:          Tue Jul 6 10:12:19 2010 CEST
=====================================
```

Output

```
$ glite-wms-job-output https://wms01.egee.cesga.es:9000/q5BP68lwwUH4YFzrgHBhQ
Connecting to the service https://wms01.egee.cesga.es:7443/glite_wms_wmproxy_server

========================================
JOB GET OUTPUT OUTCOME

Output sandbox files for the job:
https://wms01.egee.cesga.es:9000/q5BP68lwwUH4YFzrgHBhQ
have been successfully retrieved and stored in the directory:
/gpfs/csic_projects/grid/tmp/jobOutput/enol_q5BP68lwwUH4YFzrgHBhQ
========================================

$ cat /gpfs/csic_projects/grid/tmp/jobOutput/enol_q5BP68lwwUH4YFzrgHBhQ/*
hello world from gcsic142wn
```

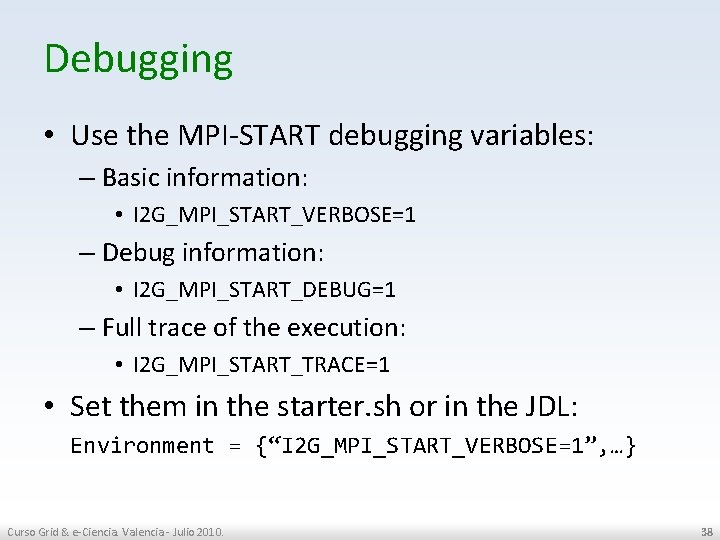

Debugging
• Use the MPI-Start debugging variables:
  – Basic information: I2G_MPI_START_VERBOSE=1
  – Debug information: I2G_MPI_START_DEBUG=1
  – Full trace of the execution: I2G_MPI_START_TRACE=1
• Set them in starter.sh or in the JDL:
  Environment = {"I2G_MPI_START_VERBOSE=1", …};

Debugging output

```
************************************
UID     = ngifor080
HOST    = gcsic177wn
DATE    = Tue Jul 6 12:07:15 CEST 2010
VERSION = 0.0.66
************************************
mpi-start [DEBUG ]: dump configuration
mpi-start [DEBUG ]: => I2G_MPI_APPLICATION=hello.sh
mpi-start [DEBUG ]: => I2G_MPI_APPLICATION_ARGS=
mpi-start [DEBUG ]: => I2G_MPI_TYPE=openmpi
[…]
mpi-start [INFO ]: search for scheduler
mpi-start [DEBUG ]: source /opt/i2g/bin/../etc/mpi-start/lsf.scheduler
mpi-start [DEBUG ]: checking for scheduler support : sge
mpi-start [DEBUG ]: checking for $PE_HOSTFILE
mpi-start [INFO ]: activate support for sge
mpi-start [DEBUG ]: convert PE_HOSTFILE into standard format
mpi-start [DEBUG ]: dump machinefile:
mpi-start [DEBUG ]: => gcsic177wn.ifca.es
mpi-start [DEBUG ]: starting with 4 processes.
```
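The step "convert PE_HOSTFILE into standard format" can be sketched as follows. In SGE, $PE_HOSTFILE has one line per host with the host name, the number of slots granted on it, the queue and a processor range. The sketch assumes the target is a machinefile with one host name per slot (a common convention; the exact MPI-Start format is not shown in this tutorial), and the function name is hypothetical. The returned slot count corresponds to the number of processes to start.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Convert the text of an SGE $PE_HOSTFILE ("host slots queue range" per
   line) into a machinefile with one host name per slot. Writes into out
   (capacity outsz) and returns the number of slots, or -1 if a line or
   the output buffer is too long. */
int pe_hostfile_to_machinefile(const char *pe_text, char *out, size_t outsz)
{
    char line[512], host[256];
    int slots, total = 0;
    size_t used = 0;

    assert(pe_text != NULL && out != NULL && outsz > 0);
    out[0] = '\0';
    while (*pe_text != '\0') {
        const char *eol = strchr(pe_text, '\n');
        size_t len = eol ? (size_t)(eol - pe_text) : strlen(pe_text);
        if (len >= sizeof line)
            return -1;
        memcpy(line, pe_text, len);
        line[len] = '\0';

        /* Only the first two fields (host name, slot count) are needed. */
        if (sscanf(line, "%255s %d", host, &slots) == 2) {
            for (int s = 0; s < slots; s++) {
                int n = snprintf(out + used, outsz - used, "%s\n", host);
                if (n < 0 || (size_t)n >= outsz - used)
                    return -1;
                used += (size_t)n;
                total++;
            }
        }
        pe_text += eol ? len + 1 : len;   /* move to the next line */
    }
    return total;
}
```

For a hypothetical input line "gcsic177wn.ifca.es 4 all.q@gcsic177wn.ifca.es UNDEFINED" the function returns 4 and writes the host name four times.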

Compilation using hooks

```sh
#!/bin/sh

pre_run_hook () {
  # Compile the program.
  echo "Compiling ${I2G_MPI_APPLICATION}"

  # Actually compile the program.
  cmd="mpicc ${MPI_MPICC_OPTS} -o ${I2G_MPI_APPLICATION} ${I2G_MPI_APPLICATION}.c"
  $cmd
  if [ ! $? -eq 0 ]; then
    echo "Error compiling program. Exiting..."
    exit 1
  fi

  # Everything's OK.
  echo "Successfully compiled ${I2G_MPI_APPLICATION}"
  return 0
}
```

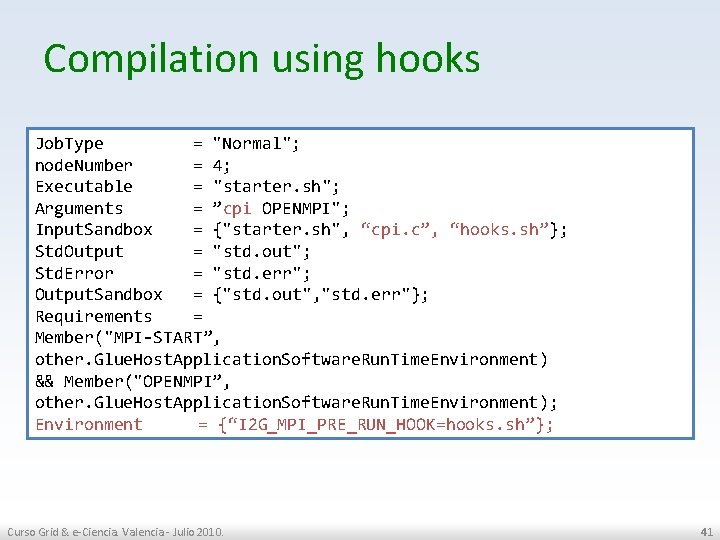

Compilation using hooks

```
JobType = "Normal";
nodeNumber = 4;
Executable = "starter.sh";
Arguments = "cpi OPENMPI";
InputSandbox = {"starter.sh", "cpi.c", "hooks.sh"};
StdOutput = "std.out";
StdError = "std.err";
OutputSandbox = {"std.out", "std.err"};
Requirements = Member("MPI-START", other.GlueHostApplicationSoftwareRunTimeEnvironment)
            && Member("OPENMPI", other.GlueHostApplicationSoftwareRunTimeEnvironment);
Environment = {"I2G_MPI_PRE_RUN_HOOK=hooks.sh"};
```


Compilation using hooks

```
[…]
mpi-start [DEBUG ]: Try to run pre hooks at hooks.sh
mpi-start [DEBUG ]: call pre_run hook
<START PRE RUN HOOK>
Compiling cpi
Successfully compiled cpi
<STOP PRE RUN HOOK>
[…]
=[START]============================
[…]
pi is approximately 3.1415926539002341, Error is 0.000003104410
wall clock time = 0.008393
=[FINISHED]===========================
```


Getting output using hooks

```c
/* […] */
MPI_Comm_rank(MPI_COMM_WORLD, &myid);
/* […] */
char filename[64];
snprintf(filename, 64, "host_%d.txt", myid);
FILE *out;
out = fopen(filename, "w+");
if (!out) {
    fprintf(stderr, "Unable to open %s!\n", filename);
} else {
    fprintf(out, "Partial pi result: %.16f\n", mypi);
    fclose(out);
}
/* […] */
```

Getting output using hooks

```sh
#!/bin/sh
export OUTPUT_PATTERN="host_*.txt"
export OUTPUT_ARCHIVE=myout.tgz

# $1: name of a host to copy the output files from
copy_from_remote_node() {
  if [ "$1" = "`hostname`" ] || [ "$1" = "`hostname -f`" ] || [ "$1" = "localhost" ]; then
    echo "skip local host"
    return 1
  fi
  # The pattern is quoted here so that it is expanded on the remote host
  scp -r "$1:$PWD/$OUTPUT_PATTERN" .
}

post_run_hook () {
  echo "post_run_hook called"
  if [ "x$MPI_START_SHARED_FS" = "x0" ] && [ "x$MPI_SHARED_HOME" != "xyes" ]; then
    echo "gather output from remote hosts"
    mpi_start_foreach_host copy_from_remote_node
  fi
  echo "pack the data"
  tar cvzf $OUTPUT_ARCHIVE $OUTPUT_PATTERN
  return 0
}
```

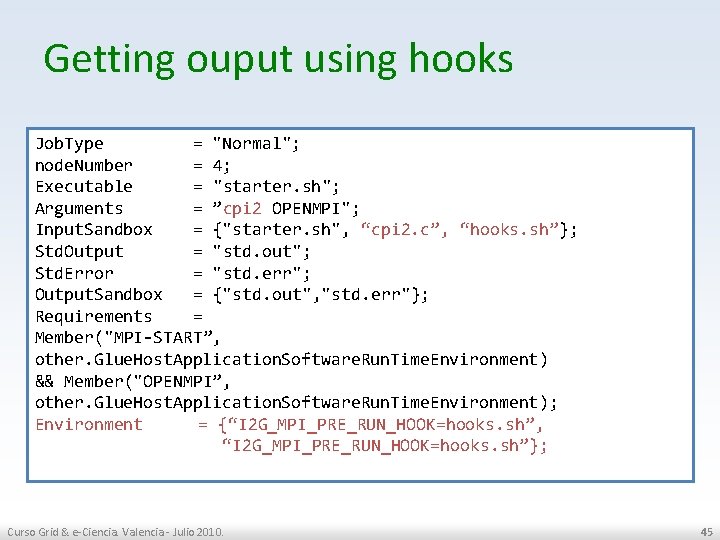

Getting output using hooks

```
JobType = "Normal";
nodeNumber = 4;
Executable = "starter.sh";
Arguments = "cpi2 OPENMPI";
InputSandbox = {"starter.sh", "cpi2.c", "hooks.sh"};
StdOutput = "std.out";
StdError = "std.err";
OutputSandbox = {"std.out", "std.err", "myout.tgz"};
Requirements = Member("MPI-START", other.GlueHostApplicationSoftwareRunTimeEnvironment)
            && Member("OPENMPI", other.GlueHostApplicationSoftwareRunTimeEnvironment);
Environment = {"I2G_MPI_PRE_RUN_HOOK=hooks.sh", "I2G_MPI_POST_RUN_HOOK=hooks.sh"};
```


Getting output using hooks

```
[…]
mpi-start [DEBUG ]: Try to run post hooks at hooks.sh
mpi-start [DEBUG ]: call post run hook
<START POST RUN HOOK>
post_run_hook called
pack the data
host_0.txt
host_1.txt
host_2.txt
host_3.txt
<STOP POST RUN HOOK>
```
