Parallel and Distributed Algorithms

Eric Vidal

Reference: R. Johnsonbaugh and M. Schaefer, Algorithms (International Edition). Pearson Education, 2004.

Outline
• Introduction (case study: maximum element)
  – Work-optimality
• The Parallel Random Access Machine
  – Shared memory modes
  – Accelerated cascading
• Other Parallel Architectures (case study: sorting)
  – Circuits
  – Linear processor networks
  – (Mesh processor networks)
• Distributed Algorithms
  – Message-optimality
  – Broadcast and echo
  – (Leader election)

Introduction

Why use parallelism?
• p steps on 1 printer, 1 step on p printers
• p = speed-up factor (best case)
• Given a sequential algorithm, how can we parallelize it?
  – Some are inherently sequential (P-complete)

![Case Study: Maximum Element](http://slidetodoc.com/presentation_image/57f3b385b37eeea4f217acc8c9df9281/image-5.jpg)

Case Study: Maximum Element
In: a[]
Out: maximum element in a

    sequential_maximum(a) {
      n = a.length
      max = a[0]
      for i = 1 to n - 1 {
        if (a[i] > max)
          max = a[i]
      }
      return max
    }

• Example input: 21 11 23 17 48 33 22 41 (the running maximum goes 21, 23, 48)
• Running time: O(n)

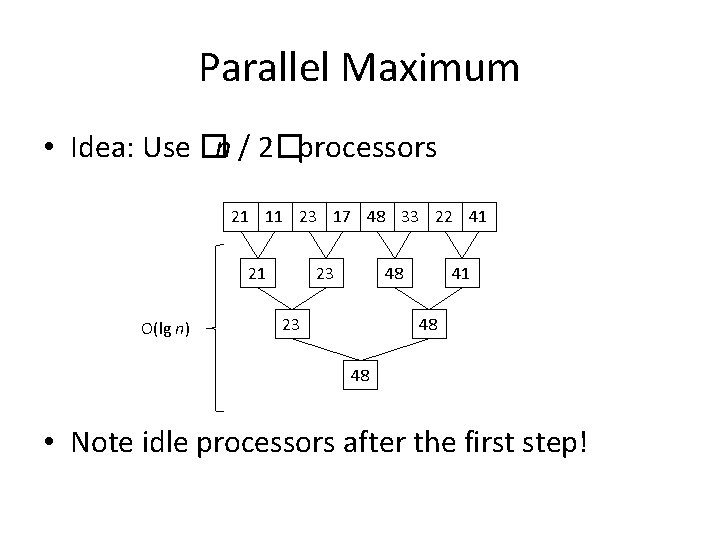

Parallel Maximum
• Idea: Use ⌈n / 2⌉ processors
• Example: 21 11 23 17 48 33 22 41 → 21 23 48 41 → 23 48 → 48
• Running time: O(lg n)
• Note idle processors after the first step!

Work-Optimality
• Work = number of algorithmic steps × number of processors
• Work of the parallelized maximum algorithm = O(lg n) steps × (n / 2) processors = O(n lg n)
• Not work-optimal! The sequential algorithm’s work is O(n)
  – Workaround: accelerated cascading…
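The gap between reserved work and useful work can be checked with a small counting sketch. The function name `tournament_cost` and the choice to reserve ⌈n / 2⌉ processors for every step are assumptions made for illustration; they are not taken from the slides.

```python
def tournament_cost(n):
    """Count steps and comparisons of the pairwise-maximum tournament on n elements.

    Returns (steps, processors, work, comparisons), where work is
    steps x processors as defined on the slide.
    """
    steps = 0
    comparisons = 0
    remaining = n
    while remaining > 1:
        comparisons += remaining // 2       # pairs actually compared in this step
        remaining = (remaining + 1) // 2    # winners (plus any unpaired element) advance
        steps += 1
    processors = (n + 1) // 2               # assumed: ceil(n / 2) processors held throughout
    return steps, processors, steps * processors, comparisons


if __name__ == "__main__":
    print(tournament_cost(8))     # (3, 4, 12, 7)
    print(tournament_cost(1024))  # (10, 512, 5120, 1023)
```

Only n - 1 comparisons are useful, yet the processors are charged for every step, which is where the extra lg n factor in the work comes from.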

Formal Algorithm for Parallel Maximum
• But first…

The Parallel Random Access Machine

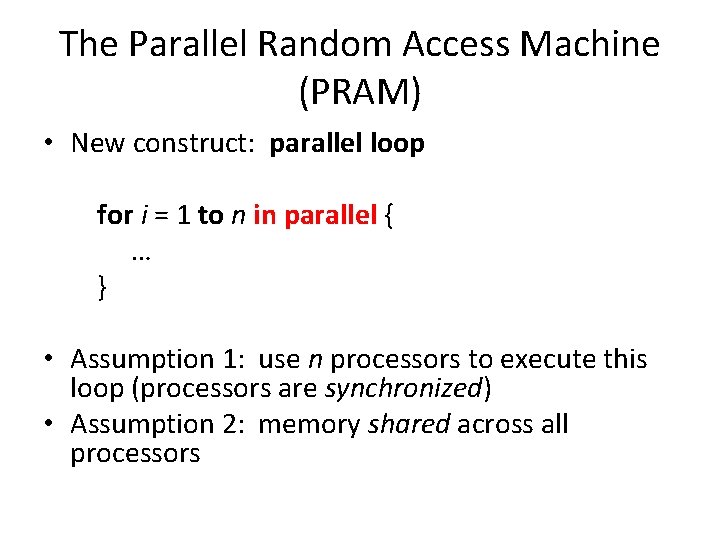

The Parallel Random Access Machine (PRAM)
• New construct: parallel loop

    for i = 1 to n in parallel { … }

• Assumption 1: use n processors to execute this loop (processors are synchronized)
• Assumption 2: memory is shared across all processors

![Example: Parallel Search](http://slidetodoc.com/presentation_image/57f3b385b37eeea4f217acc8c9df9281/image-11.jpg)

Example: Parallel Search
In: a[], x
Out: true if x is in a, false otherwise

    parallel_search(a, x) {
      n = a.length
      found = false
      for i = 0 to n - 1 in parallel {
        if (a[i] == x)
          found = true
      }
      return found
    }

• Is this work-optimal?
• Shared memory modes:
  – Exclusive Read (ER)
  – Concurrent Read (CR)
  – Exclusive Write (EW)
  – Concurrent Write (CW)
• Real-world systems are most commonly CREW
• parallel_search runs on what type?

![Formal Algorithm for Parallel Maximum](http://slidetodoc.com/presentation_image/57f3b385b37eeea4f217acc8c9df9281/image-12.jpg)

Formal Algorithm for Parallel Maximum
In: a[]
Out: maximum element in a

    parallel_maximum(a) {
      n = a.length
      for i = 0 to ⌈lg n⌉ - 1 {
        for j = 0 to ⌈n / 2^(i+1)⌉ - 1 in parallel {
          if (j × 2^(i+1) + 2^i < n) // boundary check
            a[j × 2^(i+1)] = max(a[j × 2^(i+1)], a[j × 2^(i+1) + 2^i])
        }
      }
      return a[0]
    }

Theorem: parallel_maximum is CREW and finds the maximum element in parallel time O(lg n) and work O(n lg n)
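A sequential simulation can make the index arithmetic easier to follow. The sketch below assumes a non-empty input and runs each "in parallel" loop as an ordinary loop, which is safe here only because the processors of one round touch disjoint cells; it works on a copy rather than overwriting a.

```python
def parallel_maximum(a):
    """Simulate the PRAM tournament: round i combines cells 2^i apart."""
    a = list(a)                          # work on a copy
    n = len(a)
    rounds = (n - 1).bit_length()        # equals ceil(lg n) for n >= 1
    for i in range(rounds):
        stride = 2 ** i
        processors = -(-n // (2 * stride))   # ceil(n / 2^(i+1))
        for j in range(processors):          # conceptually: in parallel
            left = j * 2 * stride
            right = left + stride
            if right < n:                    # boundary check
                a[left] = max(a[left], a[right])
    return a[0]


if __name__ == "__main__":
    print(parallel_maximum([21, 11, 23, 17, 48, 33, 22, 41]))  # 48
```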

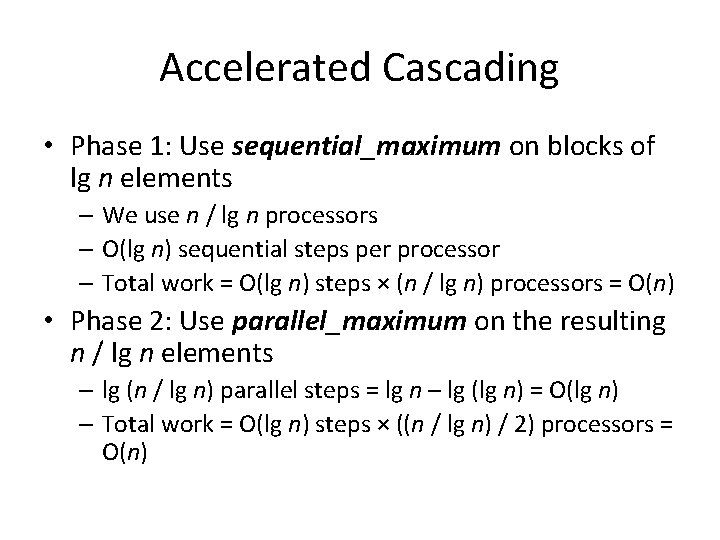

Accelerated Cascading
• Phase 1: Use sequential_maximum on blocks of lg n elements
  – We use n / lg n processors
  – O(lg n) sequential steps per processor
  – Total work = O(lg n) steps × (n / lg n) processors = O(n)
• Phase 2: Use parallel_maximum on the resulting n / lg n elements
  – lg (n / lg n) parallel steps = lg n – lg (lg n) = O(lg n)
  – Total work = O(lg n) steps × ((n / lg n) / 2) processors = O(n)

![Formal Algorithm for Optimal Maximum](http://slidetodoc.com/presentation_image/57f3b385b37eeea4f217acc8c9df9281/image-14.jpg)

Formal Algorithm for Optimal Maximum
In: a[]
Out: maximum element in a

    optimal_maximum(a) {
      n = a.length
      block_size = ⌈lg n⌉
      block_count = ⌈n / block_size⌉
      create array block_results[block_count]
      for i = 0 to block_count - 1 in parallel {
        start = i × block_size
        end = min(n - 1, start + block_size - 1)
        block_results[i] = sequential_maximum(a[start..end])
      }
      return parallel_maximum(block_results)
    }
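The two phases can be sketched in Python as follows. This is a sequential simulation under stated assumptions: the input is non-empty, the block size is clamped to at least 1 so that n = 1 works, and Python's built-in `max` stands in for sequential_maximum on each block.

```python
def parallel_maximum(a):
    """Tournament maximum, simulated sequentially (see the formal algorithm)."""
    a = list(a)
    n = len(a)
    for i in range((n - 1).bit_length()):        # ceil(lg n) rounds
        stride = 2 ** i
        for left in range(0, n, 2 * stride):     # one iteration per processor
            if left + stride < n:                # boundary check
                a[left] = max(a[left], a[left + stride])
    return a[0]


def optimal_maximum(a):
    """Accelerated cascading: block-wise sequential maxima, then a tournament."""
    n = len(a)
    block_size = max(1, (n - 1).bit_length())    # ceil(lg n), clamped (assumption)
    # Phase 1: each block would be handled by its own processor.
    block_results = [max(a[start:start + block_size])
                     for start in range(0, n, block_size)]
    # Phase 2: tournament over the n / lg n block maxima.
    return parallel_maximum(block_results)


if __name__ == "__main__":
    print(optimal_maximum([21, 11, 23, 17, 48, 33, 22, 41]))  # 48
```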

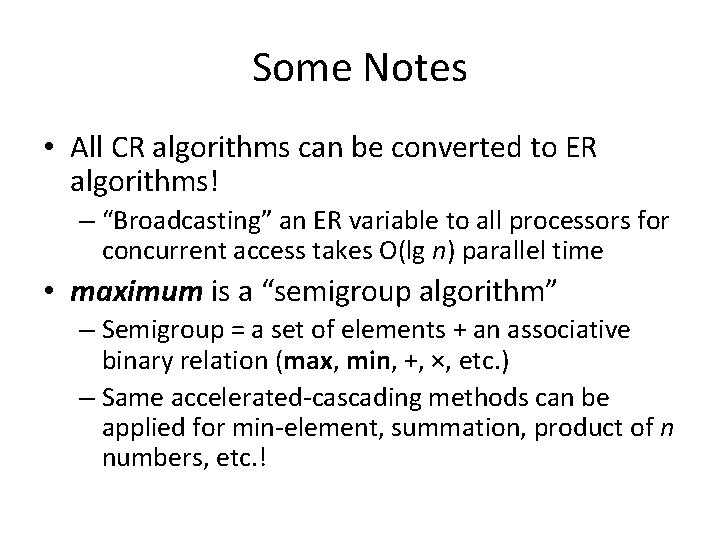

Some Notes
• All CR algorithms can be converted to ER algorithms!
  – “Broadcasting” an ER variable to all processors for concurrent access takes O(lg n) parallel time
• maximum is a “semigroup algorithm”
  – Semigroup = a set of elements + an associative binary operation (max, min, +, ×, etc.)
  – The same accelerated-cascading method can be applied to min-element, summation, product of n numbers, etc.!

Other Parallel Architectures

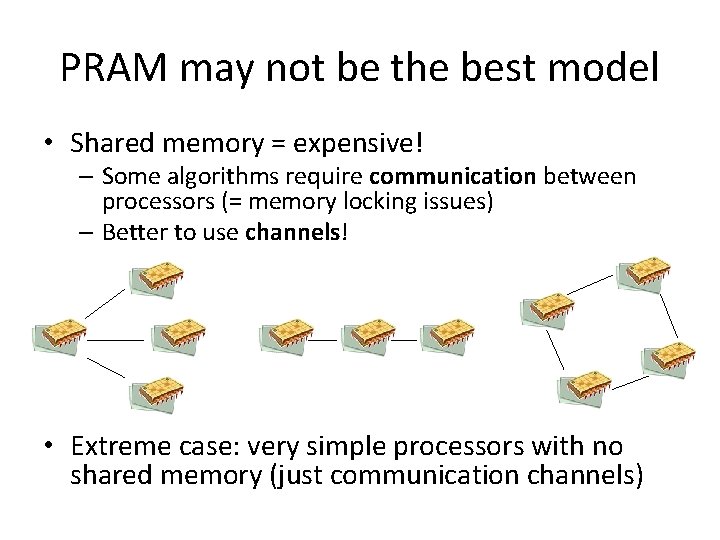

PRAM may not be the best model
• Shared memory = expensive!
  – Some algorithms require communication between processors (= memory locking issues)
  – Better to use channels!
• Extreme case: very simple processors with no shared memory (just communication channels)

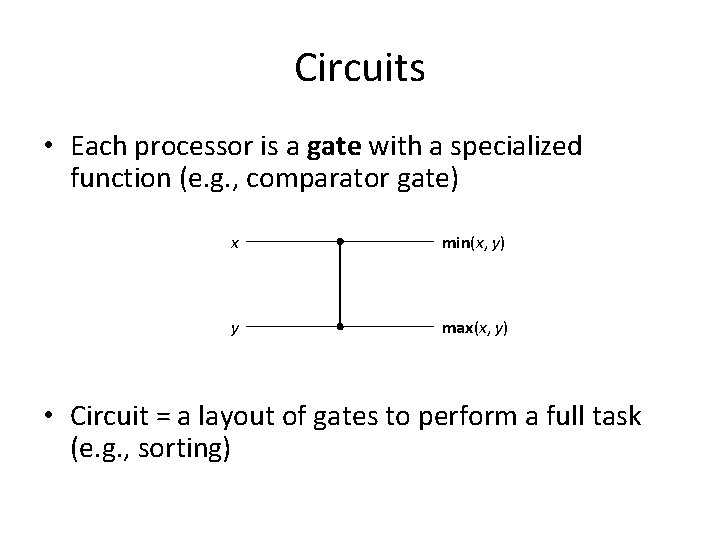

Circuits
• Each processor is a gate with a specialized function (e.g., comparator gate)
  – A comparator takes inputs x and y and outputs min(x, y) and max(x, y)
• Circuit = a layout of gates to perform a full task (e.g., sorting)

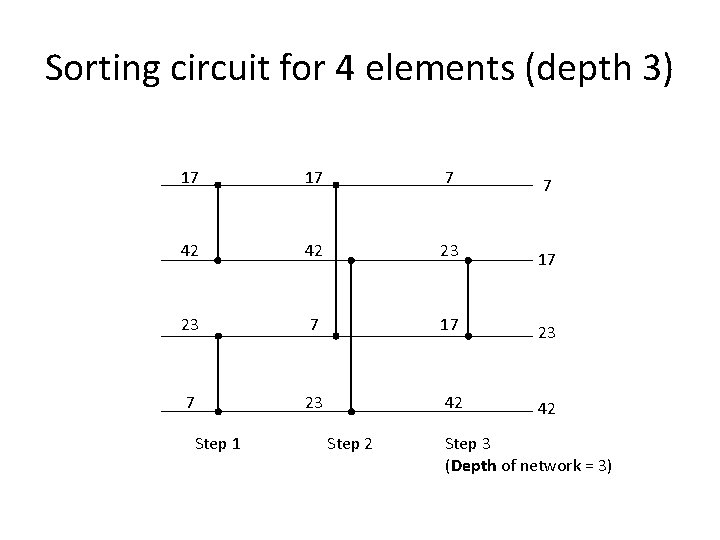

Sorting circuit for 4 elements (depth 3)
• Figure: the values 7, 17, 23, 42 pass through comparators in Step 1, Step 2, and Step 3
• Depth of network = 3
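A depth-3 circuit for 4 inputs can be sketched as three layers of comparators. The wiring in `LAYERS` is a standard 5-comparator layout chosen as an assumption; the slide's figure may be wired differently.

```python
from itertools import permutations

# Assumed layout: each inner list is one step; each pair is a comparator (wire i, wire j).
LAYERS = [
    [(0, 1), (2, 3)],   # Step 1
    [(0, 2), (1, 3)],   # Step 2
    [(1, 2)],           # Step 3
]


def comparator(x, y):
    """Comparator gate: outputs (min(x, y), max(x, y))."""
    return (min(x, y), max(x, y))


def run_network(values, layers=LAYERS):
    """Feed values through the layers; comparators within a layer are independent."""
    wires = list(values)
    for layer in layers:
        for i, j in layer:
            wires[i], wires[j] = comparator(wires[i], wires[j])
    return wires


if __name__ == "__main__":
    print(run_network([42, 23, 17, 7]))  # [7, 17, 23, 42]
    # Exhaustive check over every ordering of four distinct values.
    print(all(run_network(p) == [0, 1, 2, 3] for p in permutations(range(4))))  # True
```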

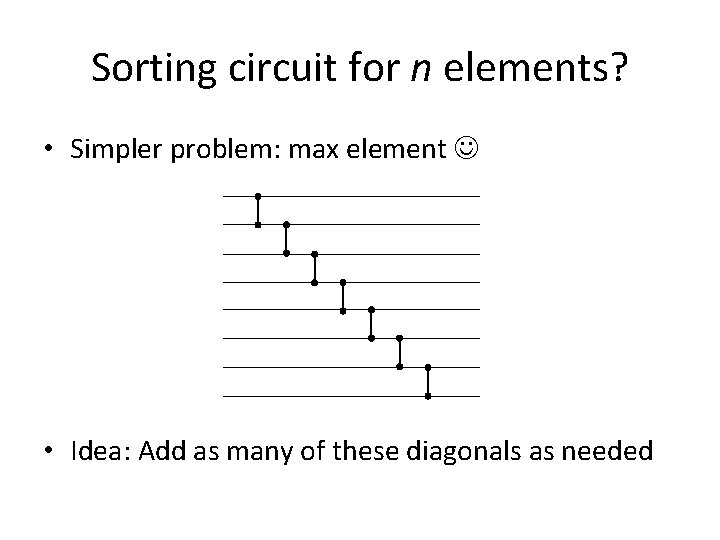

Sorting circuit for n elements? • Simpler problem: max element • Idea: Add as many of these diagonals as needed

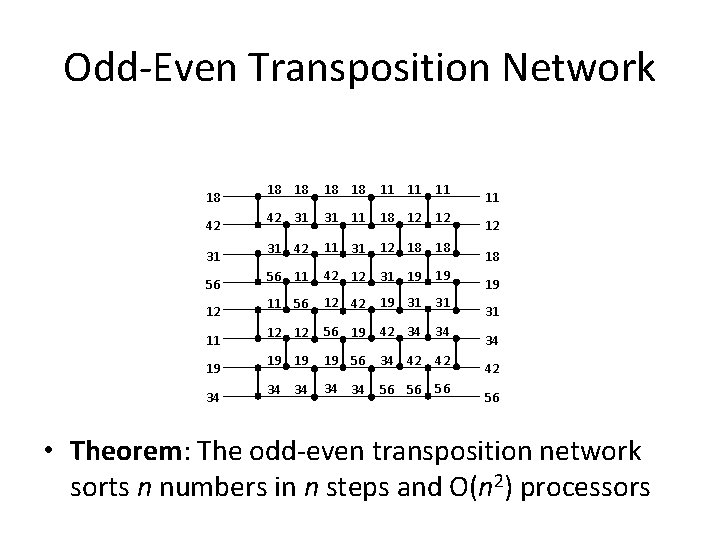

Odd-Even Transposition Network
• Figure: the input 18 42 31 56 12 11 19 34 passes through alternating columns of comparators and leaves as 11 12 18 19 31 34 42 56
• Theorem: The odd-even transposition network sorts n numbers in n steps and O($n^2$) processors
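The network can be simulated step by step. In this sketch the first step compares positions (0, 1), (2, 3), … and the next compares (1, 2), (3, 4), …; which parity goes first is an assumption, and `odd_even_transposition_trace` is a hypothetical helper name.

```python
def odd_even_transposition_trace(values):
    """Run n alternating comparator steps; return the row after each step."""
    row = list(values)
    n = len(row)
    trace = [list(row)]
    for step in range(n):
        start = step % 2                      # alternate between even and odd pairs
        for i in range(start, n - 1, 2):      # these comparators act in parallel
            if row[i] > row[i + 1]:
                row[i], row[i + 1] = row[i + 1], row[i]
        trace.append(list(row))
    return trace


if __name__ == "__main__":
    trace = odd_even_transposition_trace([18, 42, 31, 56, 12, 11, 19, 34])
    print(trace[-1])  # [11, 12, 18, 19, 31, 34, 42, 56]
```

Each step uses at most n / 2 comparators and there are n steps, which accounts for the O($n^2$) gate count in the theorem.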

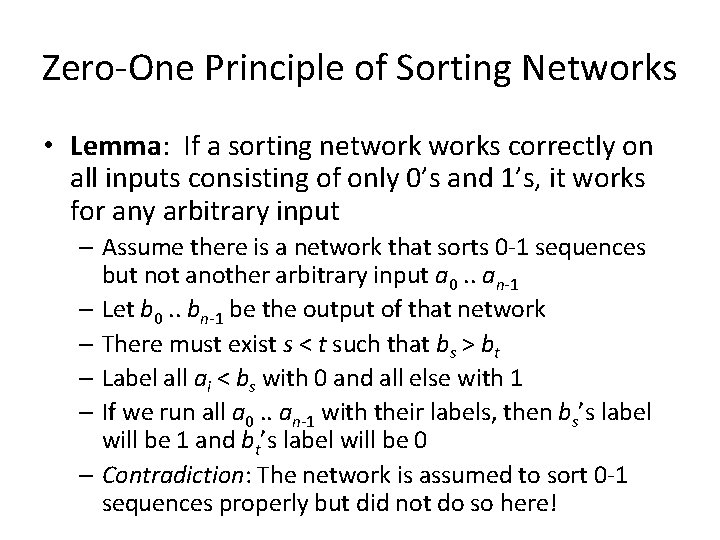

Zero-One Principle of Sorting Networks
• Lemma: If a sorting network works correctly on all inputs consisting of only 0’s and 1’s, it works for any arbitrary input
  – Assume there is a network that sorts all 0-1 sequences but fails on some arbitrary input $a_0, \dots, a_{n-1}$
  – Let $b_0, \dots, b_{n-1}$ be the output of that network on this input
  – There must exist s < t such that $b_s > b_t$
  – Label every $a_i < b_s$ with 0 and all others with 1
  – If we run $a_0, \dots, a_{n-1}$ through the network with their labels, each comparator moves labels exactly as it moves values, so $b_s$’s label will be 1 and $b_t$’s label will be 0
  – Contradiction: The network is assumed to sort 0-1 sequences properly but did not do so here!
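In practice the lemma means a comparator network on n wires can be validated with $2^n$ binary inputs instead of n! permutations. The sketch below assumes a network is given as a flat list of (i, j) comparators with i < j; the two 4-wire networks used as examples are illustrative, not from the slides.

```python
from itertools import product


def apply_network(values, comparators):
    """Apply each comparator (i, j): afterwards wire i holds the min, wire j the max."""
    wires = list(values)
    for i, j in comparators:
        if wires[i] > wires[j]:
            wires[i], wires[j] = wires[j], wires[i]
    return wires


def sorts_all_zero_one_inputs(n, comparators):
    """Zero-one test: try every 0-1 input of length n."""
    return all(apply_network(bits, comparators) == sorted(bits)
               for bits in product((0, 1), repeat=n))


if __name__ == "__main__":
    good = [(0, 1), (2, 3), (0, 2), (1, 3), (1, 2)]
    broken = good[:-1]                            # final comparator removed
    print(sorts_all_zero_one_inputs(4, good))     # True
    print(sorts_all_zero_one_inputs(4, broken))   # False (fails on 0 1 0 1)
```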

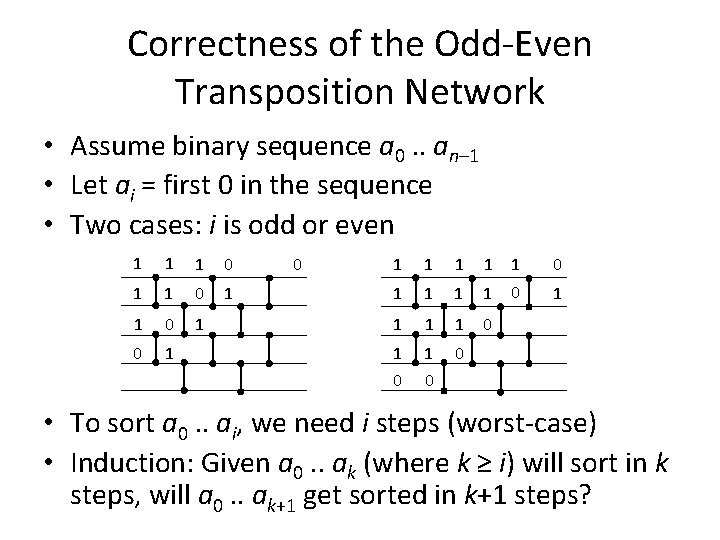

Correctness of the Odd-Even Transposition Network
• Assume a binary sequence $a_0, \dots, a_{n-1}$
• Let $a_i$ be the first 0 in the sequence
• Two cases: i is odd or even (figure: example 0-1 sequences)
• To sort $a_0, \dots, a_i$, we need i steps (worst case)
• Induction: given that $a_0, \dots, a_k$ (where k ≥ i) is sorted in k steps, will $a_0, \dots, a_{k+1}$ be sorted in k + 1 steps?

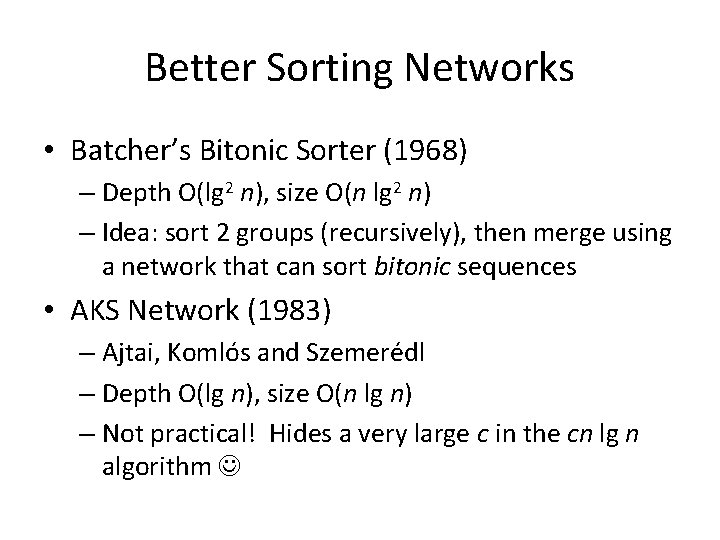

Better Sorting Networks
• Batcher’s Bitonic Sorter (1968)
  – Depth O($\lg^2 n$), size O($n \lg^2 n$)
  – Idea: sort 2 groups (recursively), then merge using a network that can sort bitonic sequences
• AKS Network (1983)
  – Ajtai, Komlós and Szemerédi
  – Depth O(lg n), size O(n lg n)
  – Not practical! Hides a very large constant c in the c · n lg n size bound
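The bitonic idea can be sketched recursively. This is a software rendering under assumptions: the input length must be a power of two, and the recursion stands in for the fixed comparator layout of the actual network.

```python
def bitonic_merge(seq, ascending):
    """Sort a bitonic sequence: compare element i with element i + n/2, then recurse."""
    n = len(seq)
    if n == 1:
        return list(seq)
    half = n // 2
    lo, hi = list(seq[:half]), list(seq[half:])
    for i in range(half):                        # one layer of n/2 comparators
        if (lo[i] > hi[i]) == ascending:
            lo[i], hi[i] = hi[i], lo[i]
    return bitonic_merge(lo, ascending) + bitonic_merge(hi, ascending)


def bitonic_sort(seq, ascending=True):
    """Sort two halves in opposite directions (a bitonic sequence), then merge."""
    n = len(seq)
    if n <= 1:
        return list(seq)
    if n & (n - 1):
        raise ValueError("this sketch assumes a power-of-two length")
    half = n // 2
    first = bitonic_sort(seq[:half], True)       # ascending half
    second = bitonic_sort(seq[half:], False)     # descending half
    return bitonic_merge(first + second, ascending)


if __name__ == "__main__":
    print(bitonic_sort([18, 42, 31, 56, 12, 11, 19, 34]))
    # [11, 12, 18, 19, 31, 34, 42, 56]
```

The merge has lg n layers and is applied at lg n levels of recursion, which matches the O($\lg^2 n$) depth quoted above.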

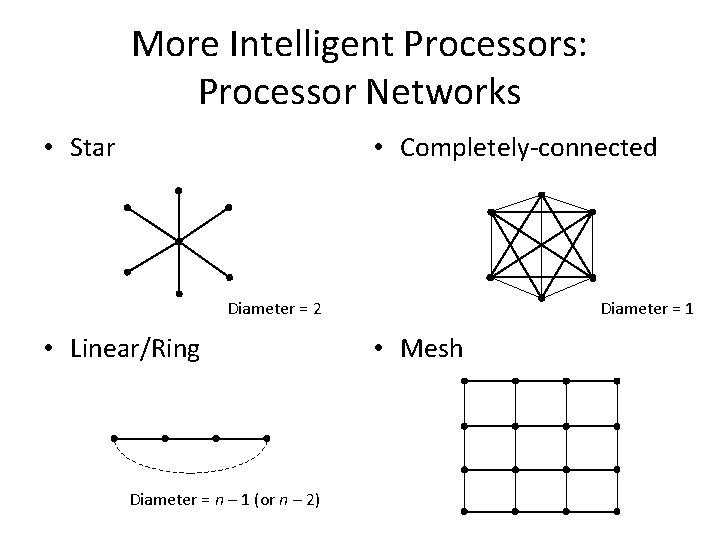

More Intelligent Processors: Processor Networks
• Star: Diameter = 2
• Completely-connected: Diameter = 1
• Linear/Ring: Diameter = n – 1 (or n – 2)
• Mesh

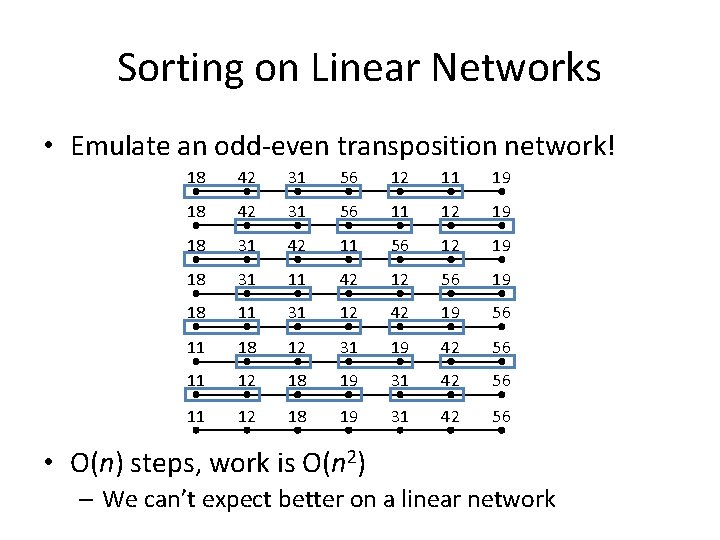

Sorting on Linear Networks
• Emulate an odd-even transposition network!

    Start:   18 42 31 56 12 11 19
    Step 1:  18 42 31 56 11 12 19
    Step 2:  18 31 42 11 56 12 19
    Step 3:  18 31 11 42 12 56 19
    Step 4:  18 11 31 12 42 19 56
    Step 5:  11 18 12 31 19 42 56
    Step 6:  11 12 18 19 31 42 56

• O(n) steps, work is O($n^2$)
  – We can’t expect better on a linear network

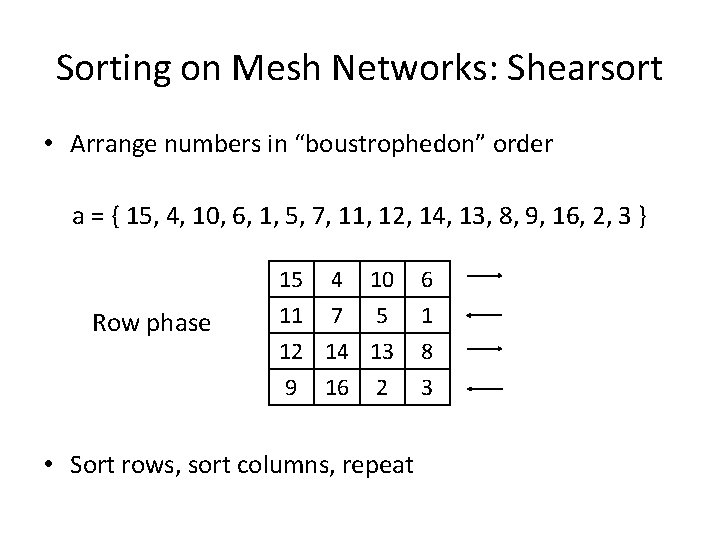

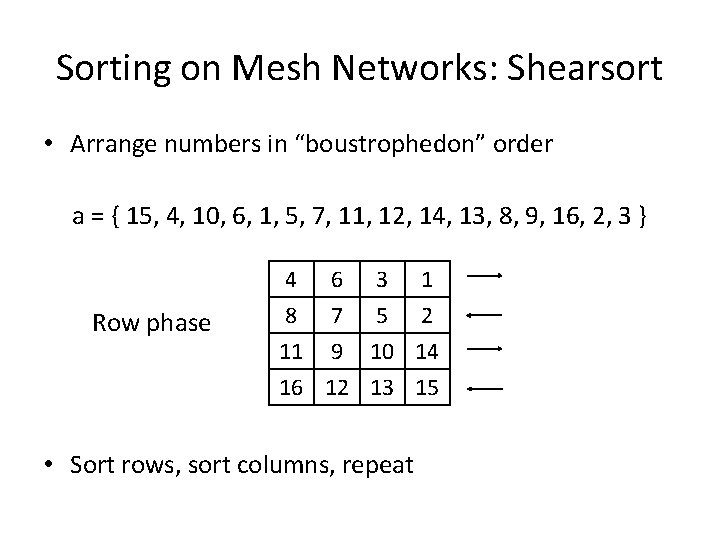

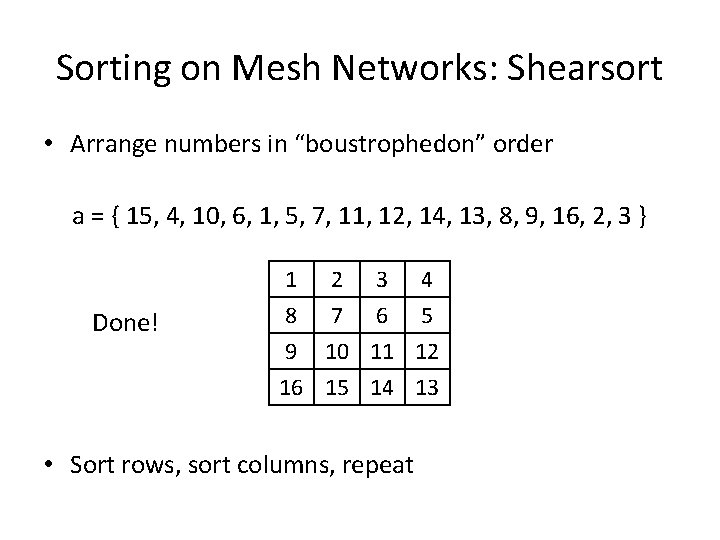

Sorting on Mesh Networks: Shearsort • Arrange numbers in “boustrophedon” order a = { 15, 4, 10, 6, 1, 5, 7, 11, 12, 14, 13, 8, 9, 16, 2, 3 } Row phase 15 4 10 11 7 5 12 14 13 9 16 2 • Sort rows, sort columns, repeat 6 1 8 3

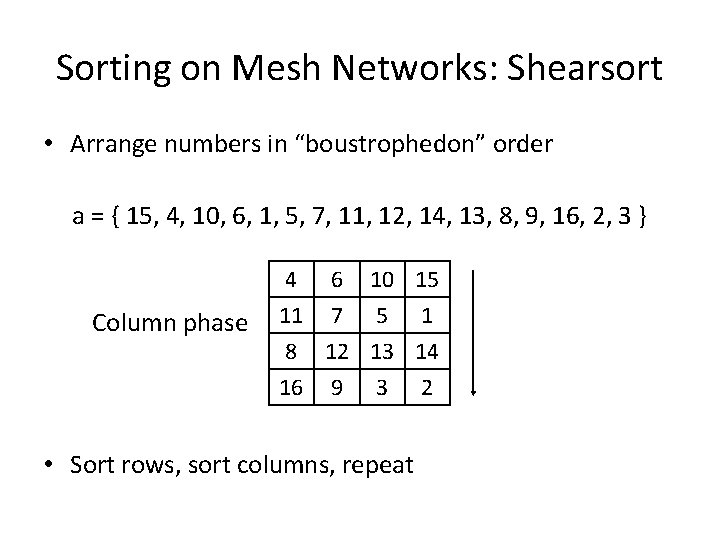

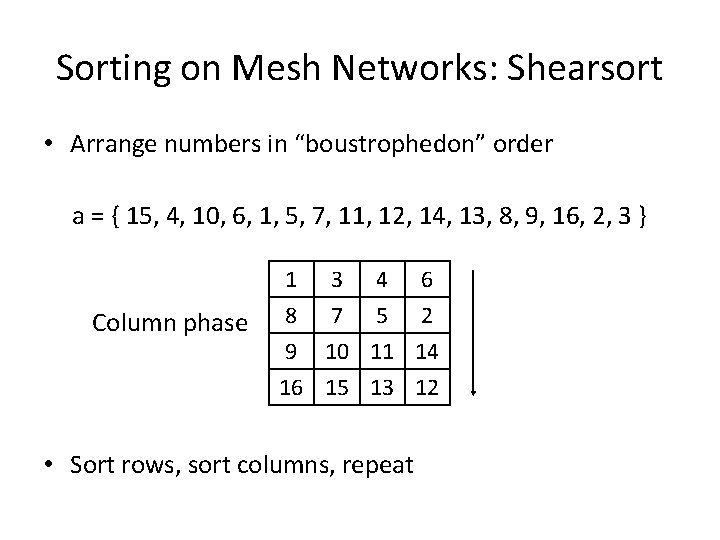

Sorting on Mesh Networks: Shearsort • Arrange numbers in “boustrophedon” order a = { 15, 4, 10, 6, 1, 5, 7, 11, 12, 14, 13, 8, 9, 16, 2, 3 } Column phase 4 6 10 15 11 7 5 1 8 12 13 14 16 9 3 2 • Sort rows, sort columns, repeat

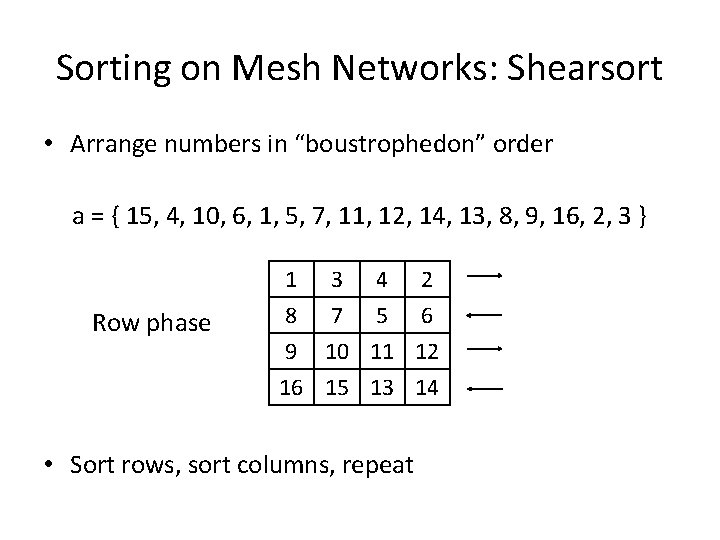

Sorting on Mesh Networks: Shearsort • Arrange numbers in “boustrophedon” order a = { 15, 4, 10, 6, 1, 5, 7, 11, 12, 14, 13, 8, 9, 16, 2, 3 } Row phase 4 6 3 1 8 7 5 2 11 9 10 14 16 12 13 15 • Sort rows, sort columns, repeat

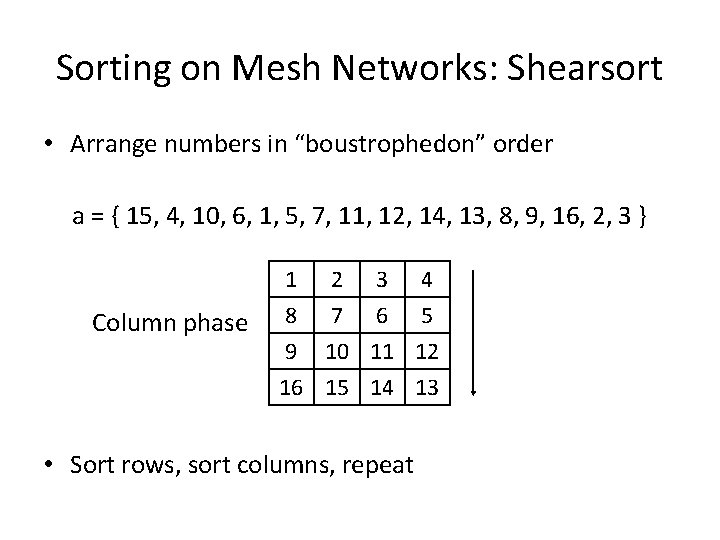

Sorting on Mesh Networks: Shearsort • Arrange numbers in “boustrophedon” order a = { 15, 4, 10, 6, 1, 5, 7, 11, 12, 14, 13, 8, 9, 16, 2, 3 } Column phase 1 3 4 6 8 7 5 2 9 10 11 14 16 15 13 12 • Sort rows, sort columns, repeat

Sorting on Mesh Networks: Shearsort • Arrange numbers in “boustrophedon” order a = { 15, 4, 10, 6, 1, 5, 7, 11, 12, 14, 13, 8, 9, 16, 2, 3 } Row phase 1 3 4 2 8 7 5 6 9 10 11 12 16 15 13 14 • Sort rows, sort columns, repeat

Sorting on Mesh Networks: Shearsort • Arrange numbers in “boustrophedon” order a = { 15, 4, 10, 6, 1, 5, 7, 11, 12, 14, 13, 8, 9, 16, 2, 3 } Column phase 1 2 3 4 8 7 6 5 9 10 11 12 16 15 14 13 • Sort rows, sort columns, repeat

Sorting on Mesh Networks: Shearsort • Arrange numbers in “boustrophedon” order a = { 15, 4, 10, 6, 1, 5, 7, 11, 12, 14, 13, 8, 9, 16, 2, 3 } Done! 1 2 3 4 8 7 6 5 9 10 11 12 16 15 14 13 • Sort rows, sort columns, repeat

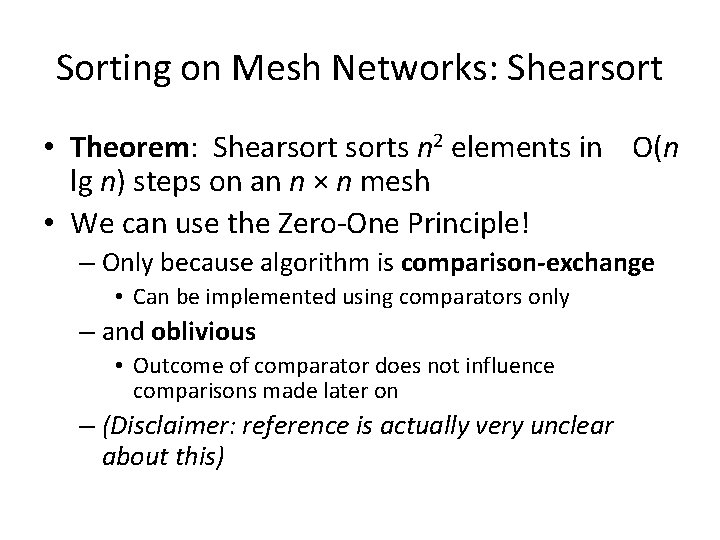

Sorting on Mesh Networks: Shearsort
• Theorem: Shearsort sorts $n^2$ elements in O(n lg n) steps on an n × n mesh
• We can use the Zero-One Principle!
  – Only because the algorithm is comparison-exchange (it can be implemented using comparators only) and oblivious (the outcome of a comparator does not influence the comparisons made later on)
  – (Disclaimer: reference is actually very unclear about this)
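The row and column phases can be sketched on a list of lists. Two assumptions are made here: Python's built-in sort stands in for the odd-even transposition sort each row or column of processors would run, and the loop performs ⌈lg n⌉ + 1 row phases with a column phase between consecutive row phases.

```python
def shearsort(grid):
    """Sort an n x n grid into boustrophedon (snake) order."""
    g = [list(row) for row in grid]
    n = len(g)
    row_phases = max(1, (n - 1).bit_length()) + 1    # ceil(lg n) + 1
    for phase in range(row_phases):
        # Row phase: even rows ascending, odd rows descending.
        for r in range(n):
            g[r].sort(reverse=(r % 2 == 1))
        if phase < row_phases - 1:
            # Column phase: every column ascending from top to bottom.
            for c in range(n):
                column = sorted(g[r][c] for r in range(n))
                for r in range(n):
                    g[r][c] = column[r]
    return g


if __name__ == "__main__":
    start = [[15, 4, 10, 6],
             [11, 7, 5, 1],
             [12, 14, 13, 8],
             [9, 16, 2, 3]]
    for row in shearsort(start):
        print(row)
    # [1, 2, 3, 4]
    # [8, 7, 6, 5]
    # [9, 10, 11, 12]
    # [16, 15, 14, 13]
```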

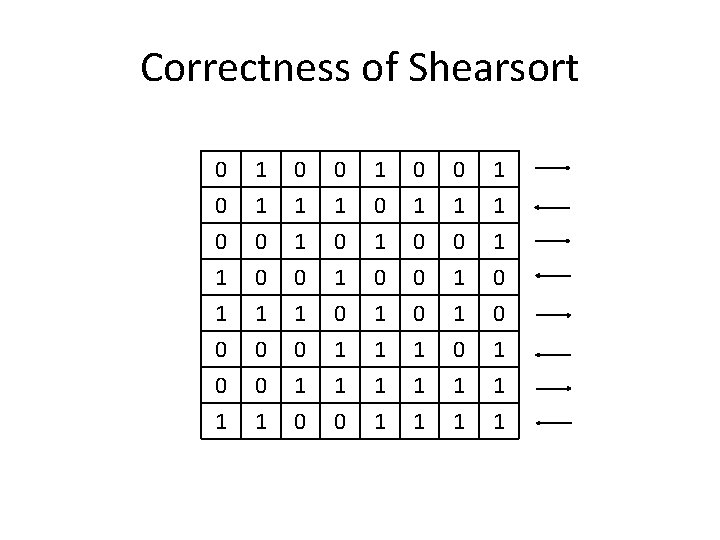

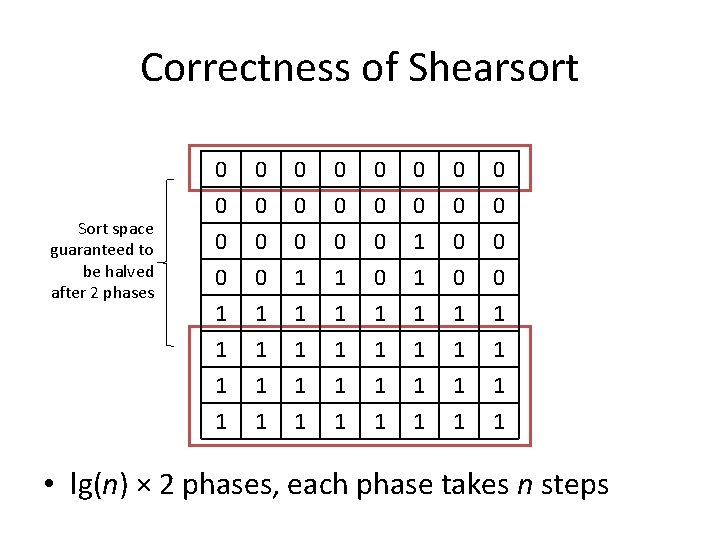

Correctness of Shearsort
(Figure: example grids of 0’s and 1’s)

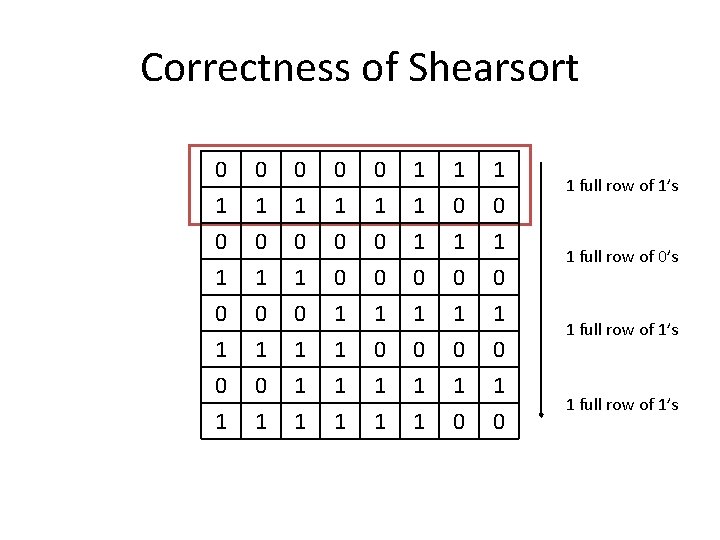

Correctness of Shearsort
(Figure: 0-1 grids annotated “full row of 1’s”, “full row of 0’s”, “full row of 1’s”)

Correctness of Shearsort
• Sort space guaranteed to be halved after 2 phases (figure: 0-1 grid)
• lg(n) × 2 phases, each phase takes n steps

Distributed Algorithms

Different concerns altogether…
• Examples: 2, 3, 5, 7, 13 … $2^{42643801} - 1$, $2^{43112609} - 1$ … (Mersenne primes); DES (56-bit); SETI@Home
• Problems usually easy to parallelize
• Main problems:
  – Inherently asynchronous
  – How to broadcast data and ensure every node gets it
  – How to minimize bandwidth usage
  – What to do when nodes go down (decentralization)
  – (Do we trust the results given by the nodes?)

Message-Optimality
• New language constructs:

    send <M> to p
    receive <M> from p
    terminate

• Message-complexity = number of messages sent by a distributed algorithm (also uses O-notation)

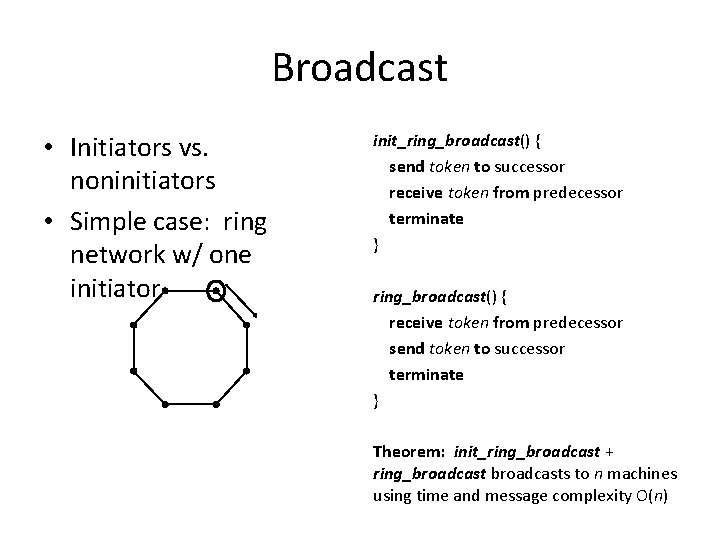

Broadcast
• Initiators vs. noninitiators
• Simple case: ring network w/ one initiator

    init_ring_broadcast() {
      send token to successor
      receive token from predecessor
      terminate
    }

    ring_broadcast() {
      receive token from predecessor
      send token to successor
      terminate
    }

Theorem: init_ring_broadcast + ring_broadcast broadcasts to n machines using time and message complexity O(n)
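The O(n) bound can be seen by following the token around the ring. The sketch below is a single-threaded simulation, not a real message-passing program; numbering the machines 0 … n - 1 with successor (i + 1) mod n is an assumption.

```python
def ring_broadcast(n, initiator=0):
    """Pass one token around a ring of n machines; return (informed flags, messages sent)."""
    informed = [False] * n
    informed[initiator] = True
    messages = 0
    current = initiator
    while True:
        successor = (current + 1) % n
        messages += 1                    # current sends the token to its successor
        if successor == initiator:
            break                        # token is back: the initiator terminates
        informed[successor] = True       # noninitiator receives, then forwards
        current = successor
    return informed, messages


if __name__ == "__main__":
    print(ring_broadcast(5))  # ([True, True, True, True, True], 5)
```

Every machine sends exactly one message, and the sends happen one after another, so both the time and the message count grow linearly in n.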

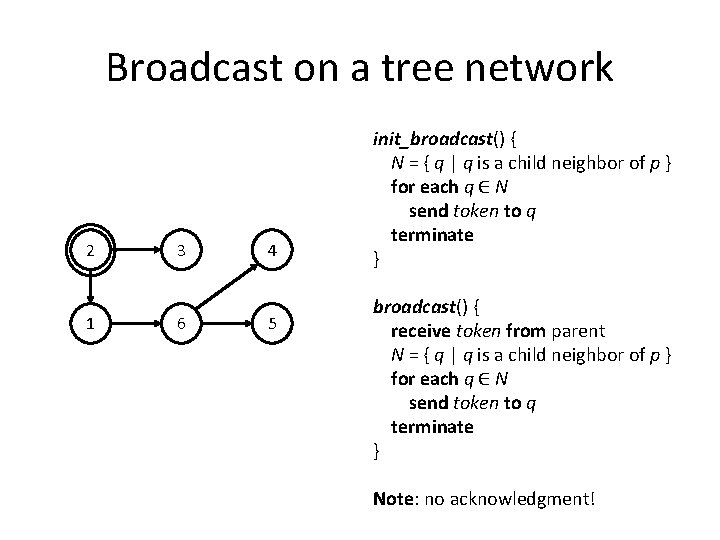

Broadcast on a tree network
(Figure: tree network with nodes 1 to 6)

    init_broadcast() {
      N = { q | q is a child neighbor of p }
      for each q ∈ N
        send token to q
      terminate
    }

    broadcast() {
      receive token from parent
      N = { q | q is a child neighbor of p }
      for each q ∈ N
        send token to q
      terminate
    }

Note: no acknowledgment!

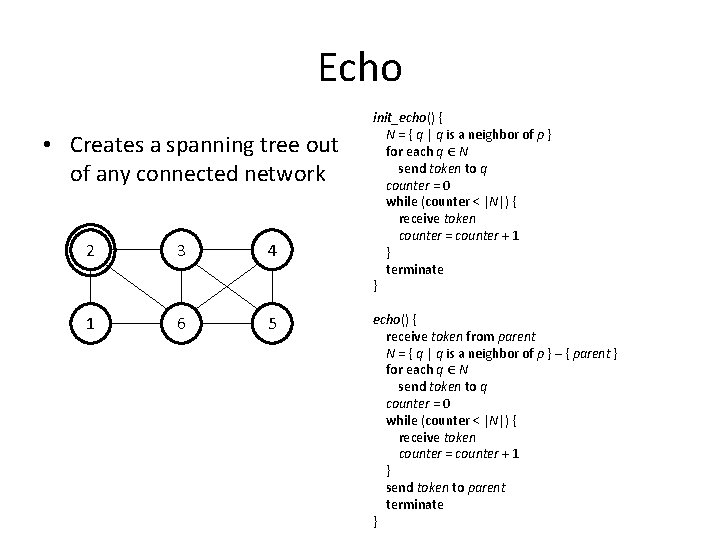

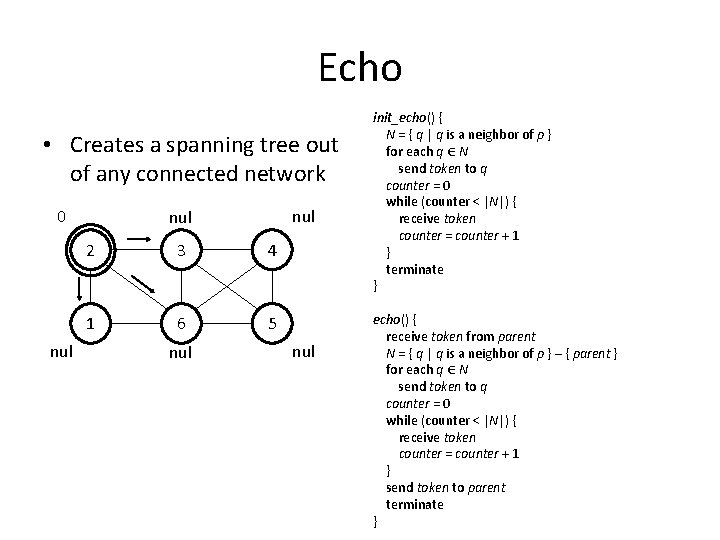

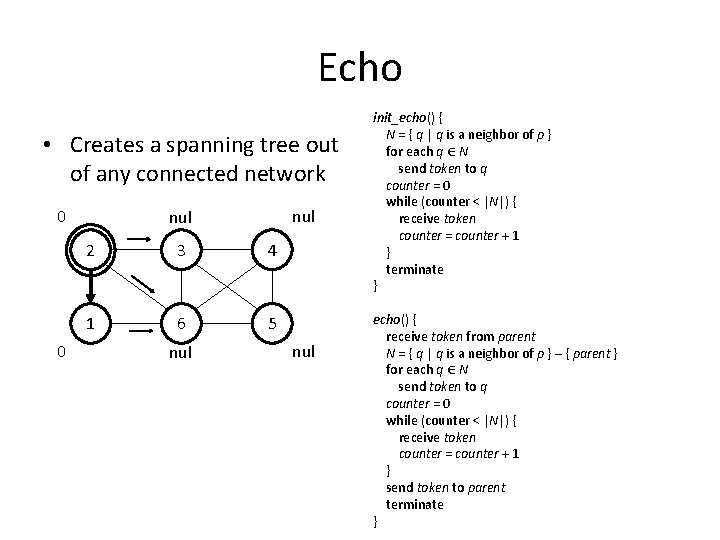

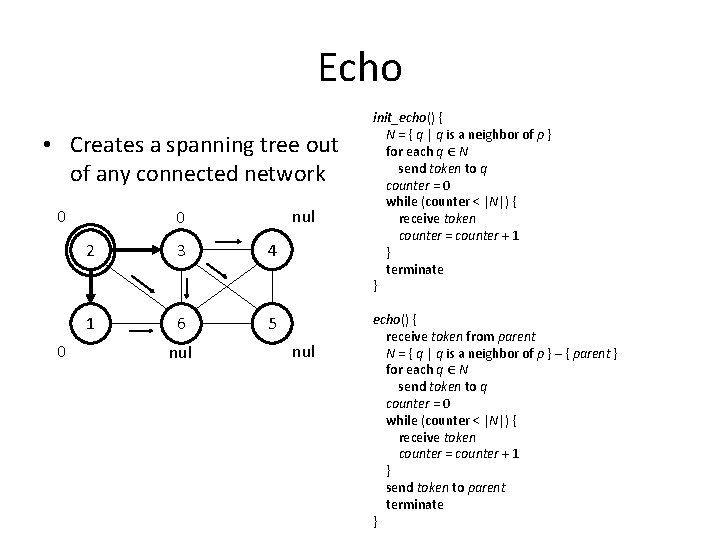

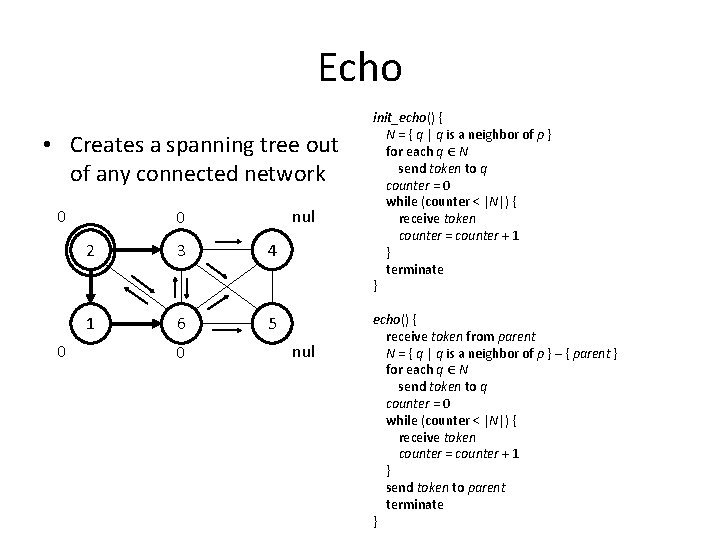

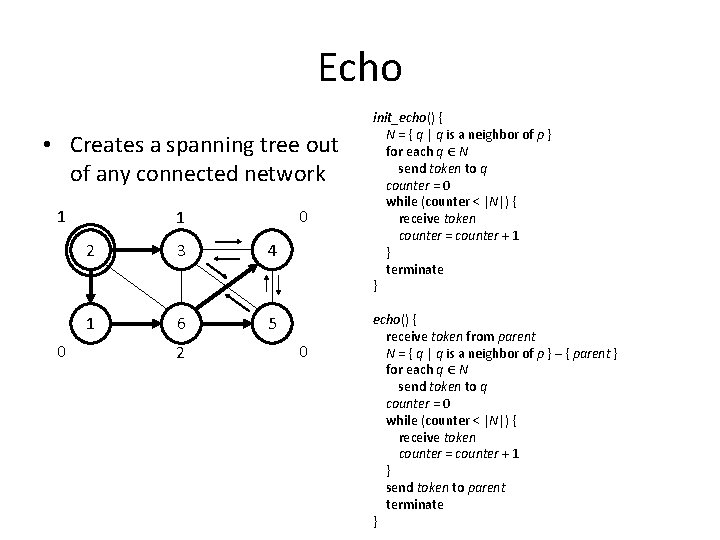

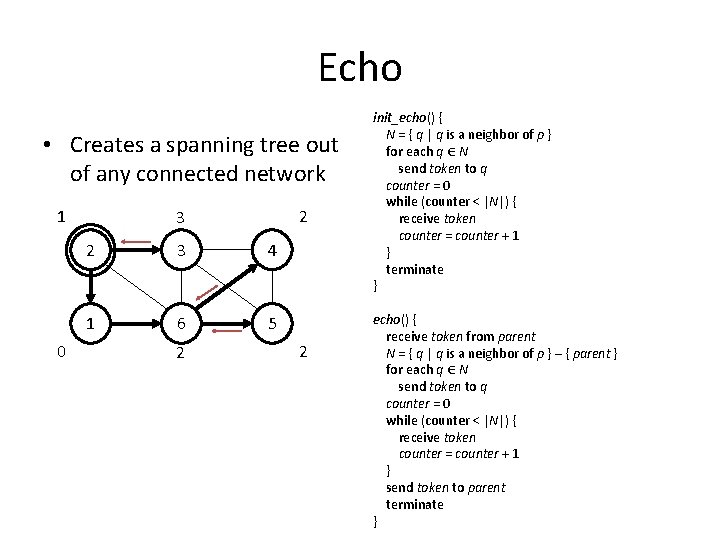

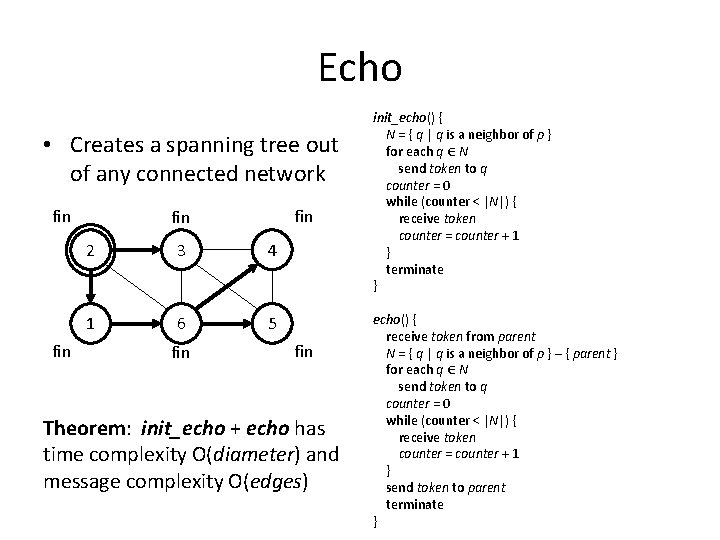

Echo
• Creates a spanning tree out of any connected network
(Figure: network with nodes 1 to 6)

    init_echo() {
      N = { q | q is a neighbor of p }
      for each q ∈ N
        send token to q
      counter = 0
      while (counter < |N|) {
        receive token
        counter = counter + 1
      }
      terminate
    }

    echo() {
      receive token from parent
      N = { q | q is a neighbor of p } – { parent }
      for each q ∈ N
        send token to q
      counter = 0
      while (counter < |N|) {
        receive token
        counter = counter + 1
      }
      send token to parent
      terminate
    }

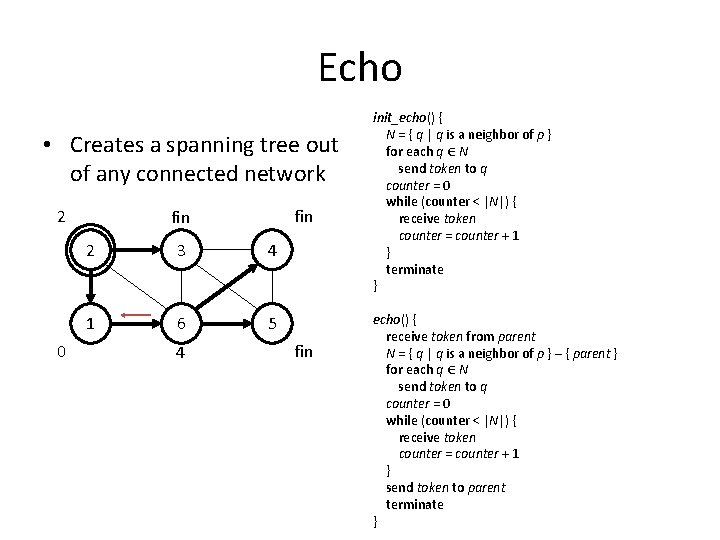

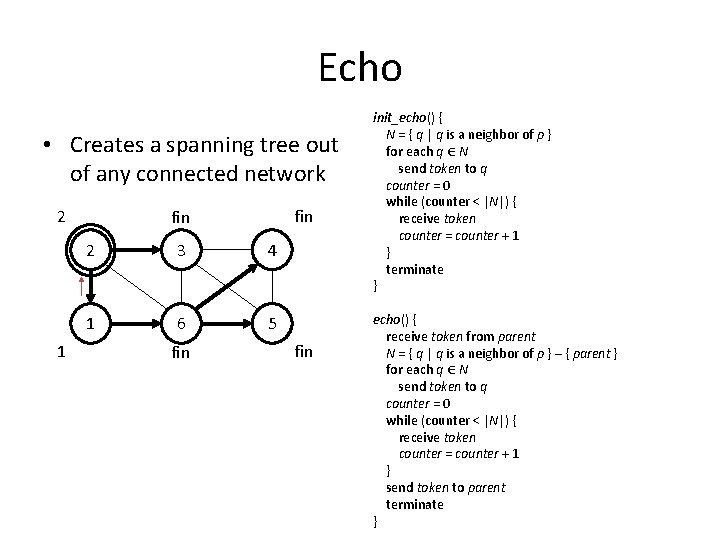

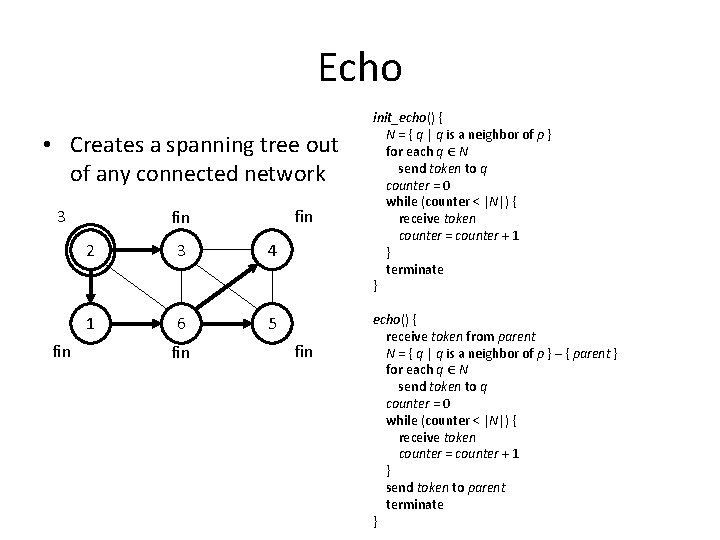

Echo
• Theorem: init_echo + echo has time complexity O(diameter) and message complexity O(edges)
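The message bound can be checked with a small simulation of init_echo and echo. The sketch assumes a connected undirected graph given as an adjacency dictionary and delivers messages in FIFO order from one global queue, which is just one of many valid asynchronous schedules; the 6-node example graph is hypothetical and not the one in the slides' figure.

```python
from collections import deque


def echo(graph, initiator):
    """Simulate the echo algorithm; return (parent map, messages sent, initiator finished)."""
    parent = {initiator: None}
    received = {v: 0 for v in graph}
    queue = deque((initiator, q) for q in graph[initiator])   # (sender, receiver)
    messages = len(queue)
    finished = not graph[initiator]
    while queue:
        sender, node = queue.popleft()
        if node not in parent:
            # First token: adopt the sender as parent, forward to all other neighbors.
            parent[node] = sender
            for q in graph[node]:
                if q != sender:
                    queue.append((node, q))
                    messages += 1
        else:
            received[node] += 1                               # counter = counter + 1
        expected = len(graph[node]) - (0 if node == initiator else 1)
        if received[node] == expected:
            if node == initiator:
                finished = True                               # initiator terminates
            else:
                queue.append((node, parent[node]))            # echo back to parent
                messages += 1
    return parent, messages, finished


if __name__ == "__main__":
    # Hypothetical network with 7 edges.
    graph = {1: [2, 3], 2: [1, 3, 4], 3: [1, 2, 5], 4: [2, 6], 5: [3, 6], 6: [4, 5]}
    parent, messages, finished = echo(graph, initiator=1)
    print(messages, finished)  # 14 True
```

Each node sends exactly one token over each of its edges, so the total is twice the number of edges, and the parent pointers form the spanning tree.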

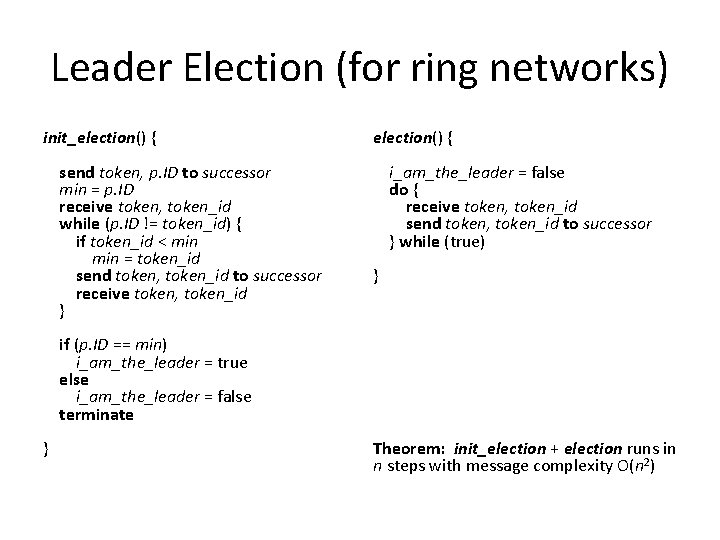

Leader Election (for ring networks)

    init_election() {
      send token, p.ID to successor
      min = p.ID
      receive token, token_id
      while (p.ID != token_id) {
        if (token_id < min)
          min = token_id
        send token, token_id to successor
        receive token, token_id
      }
      if (p.ID == min)
        i_am_the_leader = true
      else
        i_am_the_leader = false
      terminate
    }

    election() {
      i_am_the_leader = false
      do {
        receive token, token_id
        send token, token_id to successor
      } while (true)
    }

Theorem: init_election + election runs in n steps with message complexity O($n^2$)
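The quadratic message count shows up clearly when every machine is an initiator. The sketch below makes that assumption, along with unique IDs and lock-step rounds on a unidirectional ring; `ring_election` is a hypothetical name.

```python
def ring_election(ids):
    """Simulate init_election on every machine; return (leader flags, messages sent)."""
    n = len(ids)
    minimum = list(ids)                                   # min = p.ID
    # Round 0: every machine sends its own ID to its successor.
    incoming = [ids[(i - 1) % n] for i in range(n)]
    messages = n
    # Each machine forwards foreign IDs until its own ID comes back (n - 1 forwards).
    for _ in range(n - 1):
        for i in range(n):
            minimum[i] = min(minimum[i], incoming[i])     # if (token_id < min) min = token_id
        incoming = [incoming[(i - 1) % n] for i in range(n)]
        messages += n
    # Now incoming[i] == ids[i]: every machine has seen all IDs.
    leaders = [ids[i] == minimum[i] for i in range(n)]
    return leaders, messages


if __name__ == "__main__":
    print(ring_election([5, 3, 8, 1]))  # ([False, False, False, True], 16)
```

Each of the n IDs travels all the way around the ring, giving n × n messages in total.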
