Paradyn/Condor Week 2005, March 2005, UAB
Dynamic Tuning of Master/Worker Applications
Anna Morajko, Paola Caymes Scutari, Tomàs Margalef, Eduardo Cesar, Joan Sorribes and Emilio Luque
Universitat Autònoma de Barcelona

Outline
- Introduction
- MATE
- Number of workers
- Data distribution
- Conclusions

Introduction: application performance
- The main goal of parallel/distributed applications is to solve a given problem as fast as possible.
- Performance is one of the most important issues.
- Developers must optimize application performance to provide efficient and useful applications.

Introduction (II)
- Finding bottlenecks and determining their solutions is difficult for parallel/distributed applications:
  - Many tasks cooperate with each other.
- Application behavior may change depending on input data or environment.
- The task is difficult, especially for non-expert users.

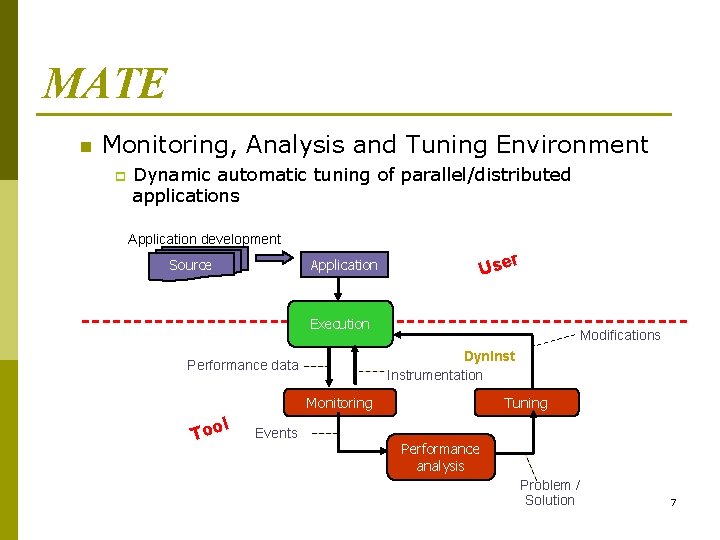

MATE
- Monitoring, Analysis and Tuning Environment
  - Dynamic automatic tuning of parallel/distributed applications
[Figure: tuning loop linking the user, application development (source), application execution, monitoring (events, performance data), performance analysis (problem/solution) and tuning (modifications via dynamic instrumentation) within the tool.]

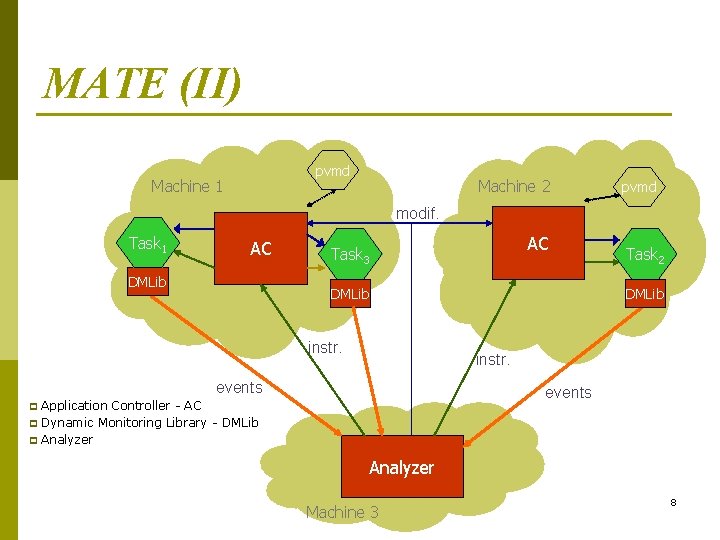

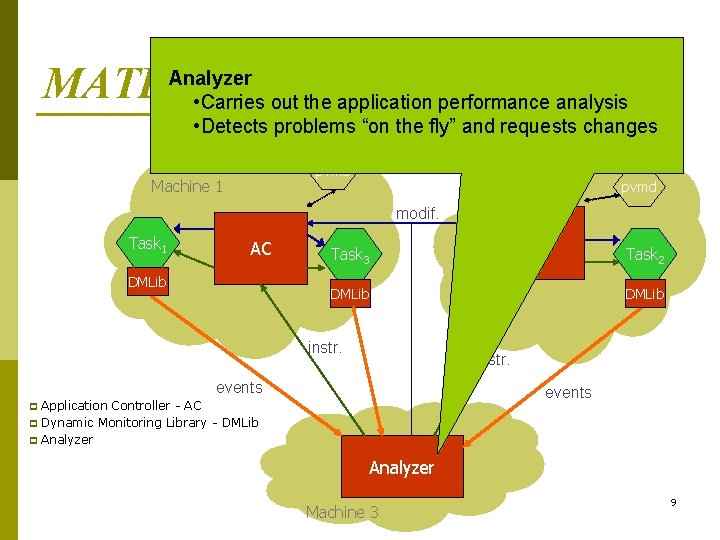

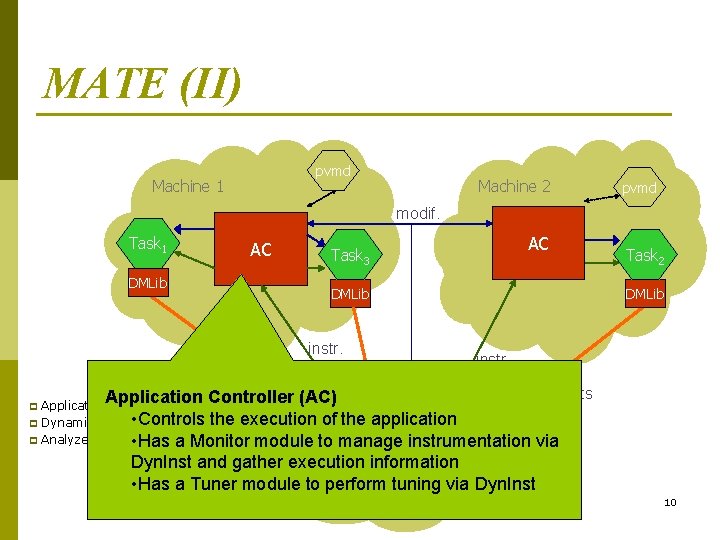

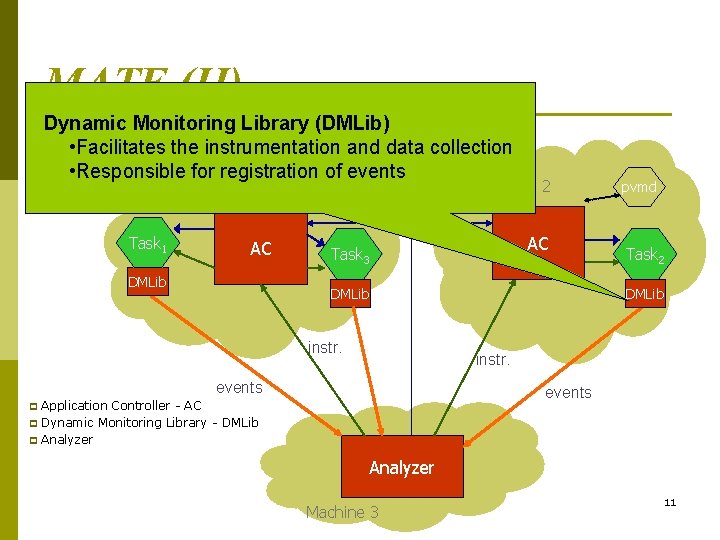

MATE (II)
- Application Controller (AC)
- Dynamic Monitoring Library (DMLib)
- Analyzer
[Figure: MATE architecture. Machine 1 and Machine 2 each run a pvmd, application tasks (Task 1, Task 2, Task 3) with DMLib, and an AC; Machine 3 runs the Analyzer. Arrows are labeled instr., modif. and events.]

MATE (II): Analyzer
- Carries out the application performance analysis.
- Detects problems “on the fly” and requests changes.

MATE (II): Application Controller (AC)
- Controls the execution of the application.
- Has a Monitor module to manage instrumentation via DynInst and gather execution information.
- Has a Tuner module to perform tuning via DynInst.

MATE (II): Dynamic Monitoring Library (DMLib)
- Facilitates the instrumentation and data collection.
- Responsible for the registration of events.

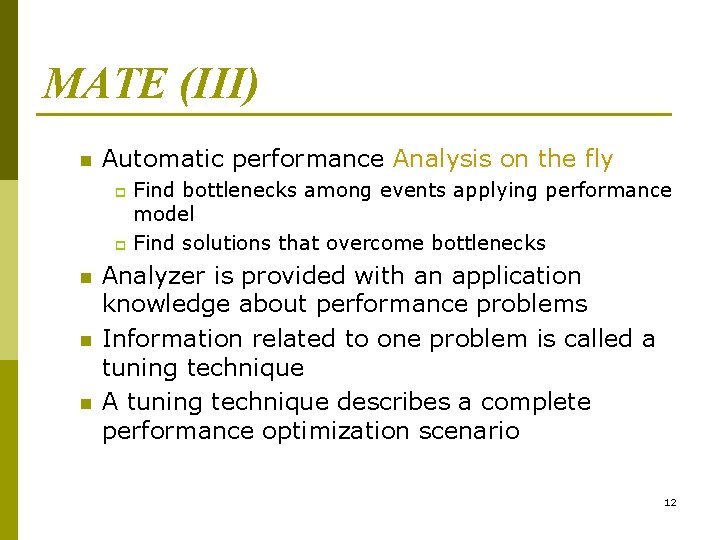

MATE (III)
- Automatic performance analysis on the fly
  - Find bottlenecks among events by applying a performance model.
  - Find solutions that overcome the bottlenecks.
- The Analyzer is provided with application knowledge about performance problems.
- The information related to one problem is called a tuning technique.
- A tuning technique describes a complete performance optimization scenario.

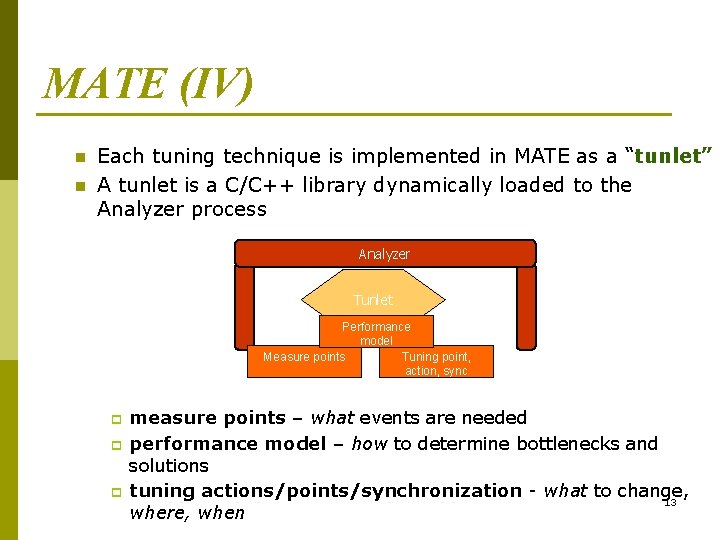

MATE (IV)
- Each tuning technique is implemented in MATE as a “tunlet”.
- A tunlet is a C/C++ library dynamically loaded into the Analyzer process. It defines:
  - measure points: what events are needed
  - performance model: how to determine bottlenecks and solutions
  - tuning actions/points/synchronization: what to change, where, and when
[Figure: a tunlet inside the Analyzer, containing the performance model, the measure points, and the tuning point, action and sync.]
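The three parts of a tunlet can be pictured with a small data structure. This is only an illustrative sketch in Python: real tunlets are C/C++ libraries, and every name here (`Tunlet`, `TuningAction`, `toy_model`, the `master_idle_fraction` measurement and its 0.5 threshold) is a hypothetical stand-in rather than part of MATE. Only the variable name “numworkers” comes from the slides.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class TuningAction:
    """What to change, where, and when (all fields are illustrative)."""
    variable: str     # what to change, e.g. "numworkers"
    new_value: float  # value to write
    where: str        # tuning point, e.g. the master process
    when: str         # synchronization, e.g. "next iteration"


@dataclass
class Tunlet:
    """One tuning technique: measure points plus a performance model."""
    measure_points: List[str]
    # The model maps measurements to an action, or None if nothing is wrong.
    performance_model: Callable[[Dict[str, float]], Optional[TuningAction]]


def toy_model(m: Dict[str, float]) -> Optional[TuningAction]:
    """Invented rule: if the master is mostly idle, ask for one more worker."""
    if m["master_idle_fraction"] > 0.5:
        return TuningAction("numworkers", m["numworkers"] + 1,
                            where="master", when="next iteration")
    return None


tunlet = Tunlet(
    measure_points=["send entry", "send exit", "receive entry", "receive exit"],
    performance_model=toy_model,
)

action = tunlet.performance_model({"master_idle_fraction": 0.8, "numworkers": 4})
print(action)
```

The point of the split is that the Analyzer only needs to know which events to request and what action comes back; the bottleneck logic stays inside the model.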

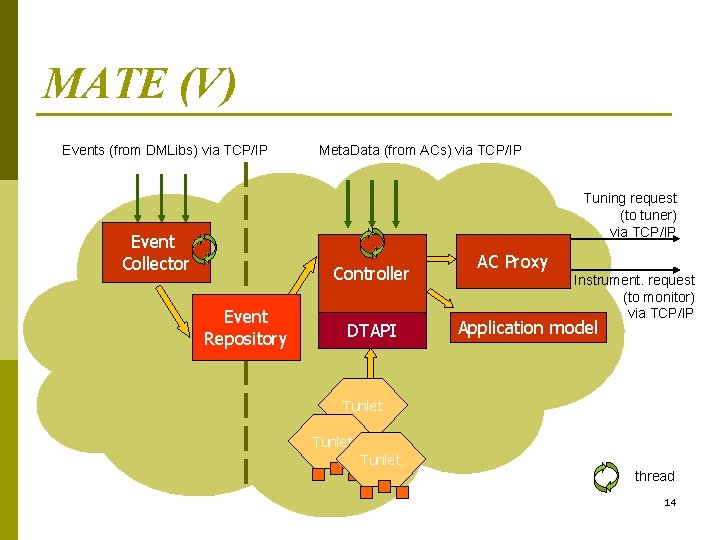

MATE (V)
[Figure: Analyzer internals. Components: Event Collector, Event Repository, Controller, AC Proxy, DTAPI, application model and tunlet thread. Connections, all via TCP/IP: events from the DMLibs, metadata from the ACs, tuning requests to the tuner, and instrumentation requests to the monitor.]

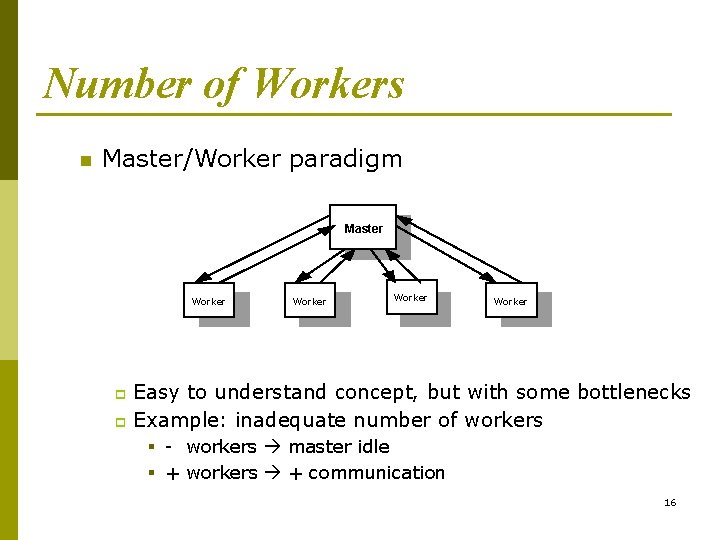

Number of Workers
- Master/Worker paradigm
  - Easy-to-understand concept, but with some bottlenecks
  - Example: inadequate number of workers
    - Too few workers: the master is idle
    - Too many workers: more communication
[Figure: a master connected to several workers.]

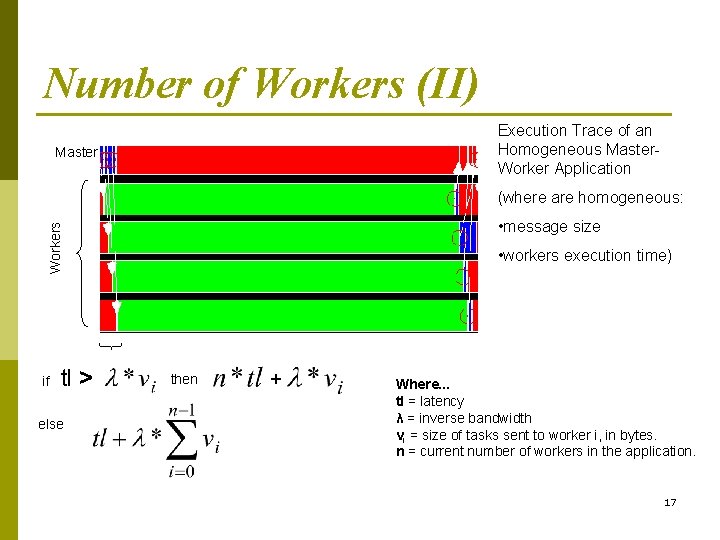

Number of Workers (II)
[Figure: execution trace of a homogeneous Master/Worker application (homogeneous in message size and in worker execution time), showing the master and the workers and annotated with a conditional expression of the form “if tl > … then … else … + …”.]
Where:
- tl = latency
- λ = inverse bandwidth
- vi = size of the tasks sent to worker i, in bytes
- n = current number of workers in the application

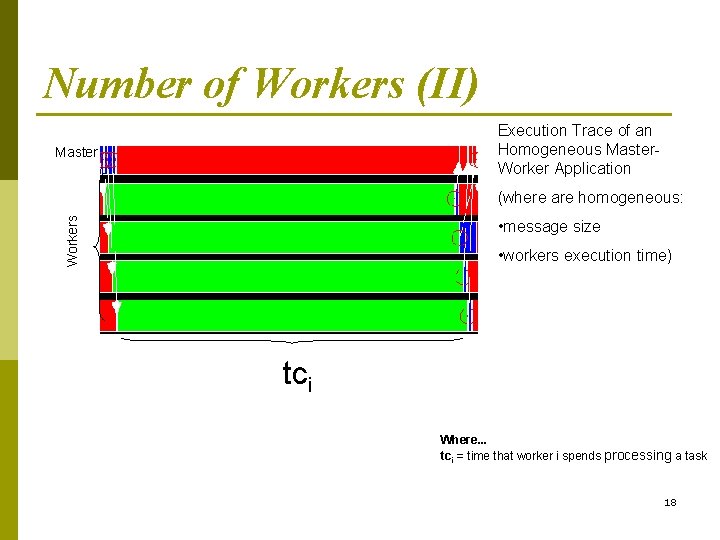

Number of Workers (II)
[Figure: execution trace of a homogeneous Master/Worker application (homogeneous in message size and in worker execution time), showing the master and the workers and annotated with tci.]
Where:
- tci = time that worker i spends processing a task

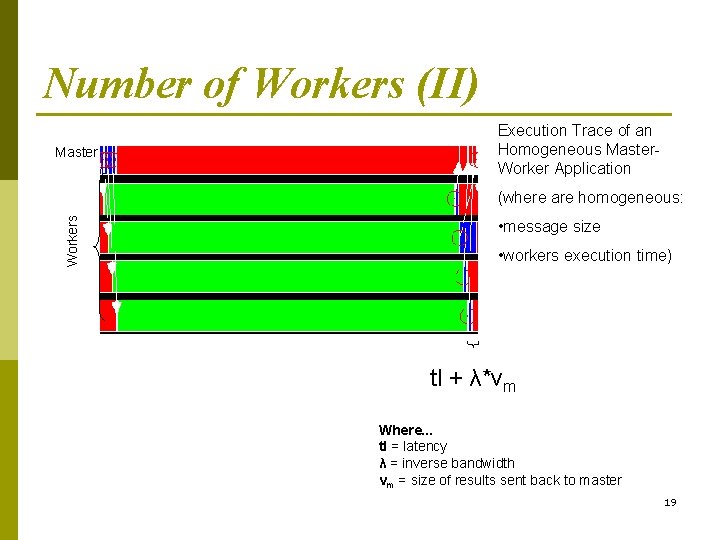

Number of Workers (II)
[Figure: execution trace of a homogeneous Master/Worker application (homogeneous in message size and in worker execution time), showing the master and the workers and annotated with tl + λ*vm.]
Where:
- tl = latency
- λ = inverse bandwidth
- vm = size of the results sent back to the master
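The term tl + λ*vm is a latency-plus-size cost for one message. The snippet below simply evaluates it; the function name `transfer_time` and the numbers are made up for illustration (8e-8 s/byte is the inverse of 100 Mb/s, and the 0.5 ms latency is an arbitrary assumption, not a measured value).

```python
def transfer_time(latency: float, inverse_bandwidth: float, size_bytes: float) -> float:
    """Time to move one message: tl + lambda * v.

    latency           -- tl, in seconds
    inverse_bandwidth -- lambda, in seconds per byte
    size_bytes        -- v, the message size in bytes
    """
    return latency + inverse_bandwidth * size_bytes


# Hypothetical values: 0.5 ms latency, 100 Mb/s link, 1 MB of results.
result_time = transfer_time(latency=0.0005, inverse_bandwidth=8e-8, size_bytes=1_000_000)
print(f"time to return the results: {result_time:.4f} s")
```

For large messages the λ*v term dominates, while for small messages the latency does, which is why both are measured.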

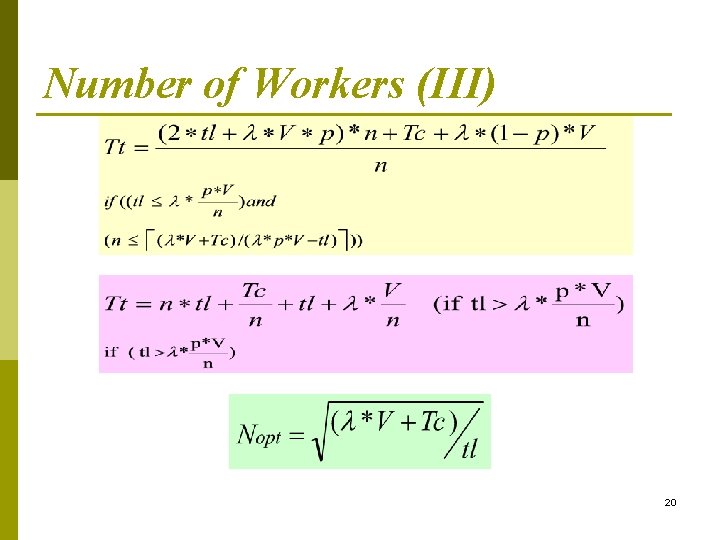

Number of Workers (III)

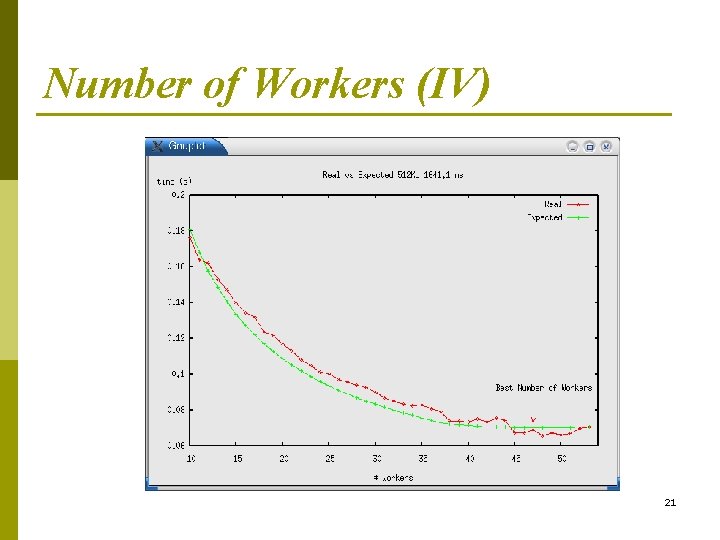

Number of Workers (IV)

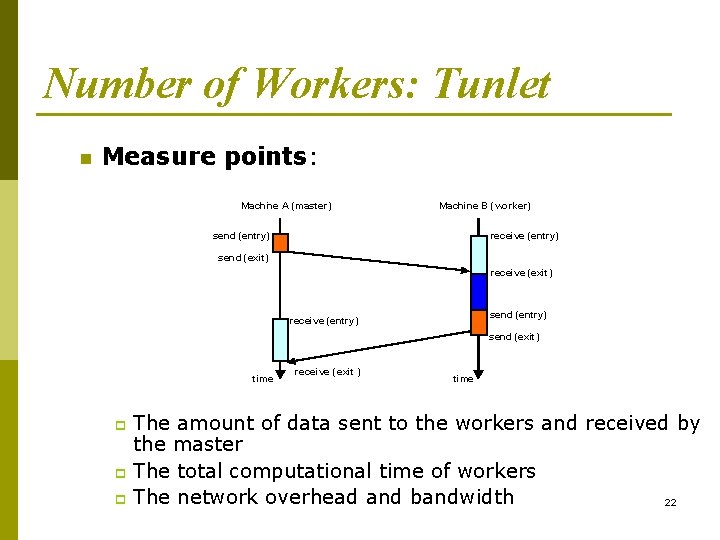

Number of Workers: Tunlet
- Measure points:
  - The amount of data sent to the workers and received by the master
  - The total computational time of workers
  - The network overhead and bandwidth
[Figure: timeline of send and receive calls on Machine A (master) and Machine B (worker), with events at the entry and exit of each call.]
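As a rough illustration of how such measurements could be derived from entry/exit events, the sketch below totals the bytes the master sends and receives and estimates worker computation time as the gap between a worker's receive exit and its next send entry. The `Event` record layout, the process names and that estimate are assumptions made for this example; they do not describe the actual DMLib event format.

```python
from collections import namedtuple

# Hypothetical event record: timestamp (s), process name, call, point, bytes.
Event = namedtuple("Event", "time process call point size")


def summarize(events, master="master"):
    """Return (bytes sent by master, bytes received by master, worker compute time)."""
    sent = received = 0
    compute = 0.0
    last_receive_exit = {}  # worker name -> time its latest task arrived
    for ev in sorted(events, key=lambda e: e.time):
        if ev.process == master:
            if ev.call == "send" and ev.point == "entry":
                sent += ev.size
            elif ev.call == "receive" and ev.point == "exit":
                received += ev.size
        elif ev.call == "receive" and ev.point == "exit":
            last_receive_exit[ev.process] = ev.time
        elif ev.call == "send" and ev.point == "entry" and ev.process in last_receive_exit:
            # Assumed: the worker computes between getting a task and replying.
            compute += ev.time - last_receive_exit.pop(ev.process)
    return sent, received, compute


example = [
    Event(0.00, "master", "send", "entry", 100),
    Event(0.10, "master", "send", "exit", 100),
    Event(0.25, "worker1", "receive", "exit", 100),
    Event(1.25, "worker1", "send", "entry", 10),
    Event(1.50, "master", "receive", "exit", 10),
]
print(summarize(example))
```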

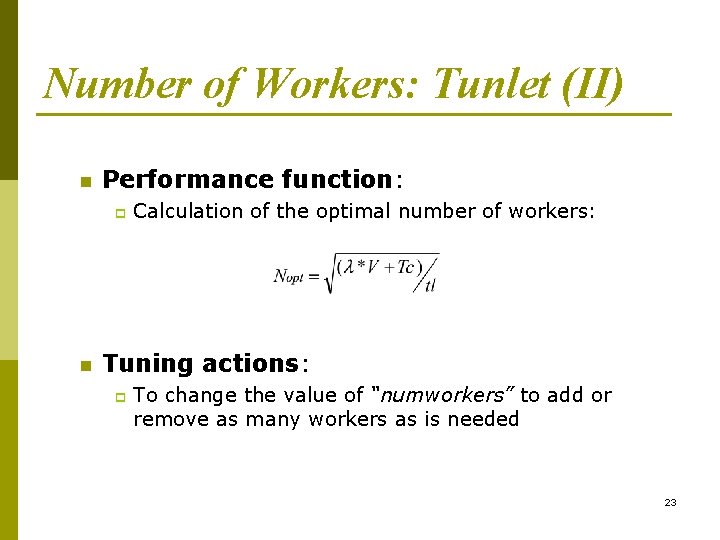

Number of Workers: Tunlet (II)
- Performance function:
  - Calculation of the optimal number of workers
- Tuning actions:
  - Change the value of “numworkers” to add or remove as many workers as needed

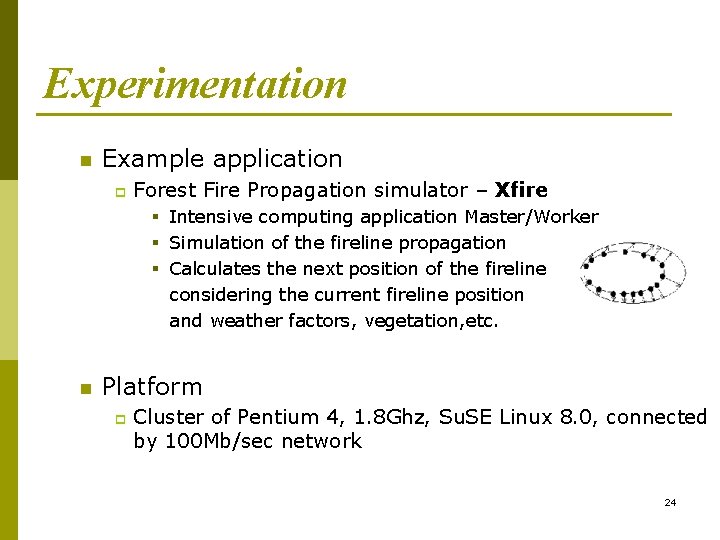

Experimentation
- Example application
  - Forest Fire Propagation simulator – Xfire
    - Compute-intensive Master/Worker application
    - Simulates the propagation of the fireline
    - Calculates the next position of the fireline from the current fireline position and from weather factors, vegetation, etc.
- Platform
  - Cluster of Pentium 4, 1.8 GHz machines running SuSE Linux 8.0, connected by a 100 Mb/s network

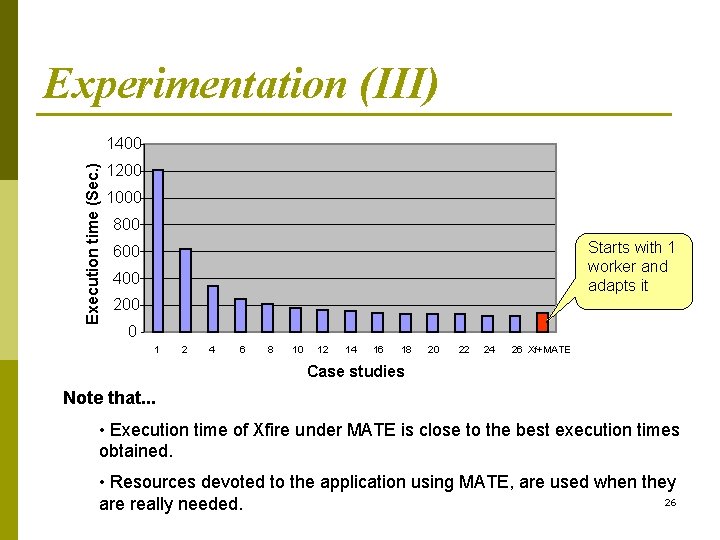

Experimentation (II)
- Load in the system
  - We designed different external load patterns.
  - They simulate the system’s time-sharing.
  - They allow us to reproduce experiments.
- Case studies
  - Xfire executed with different fixed numbers of workers, without any tuning, introducing external loads
  - Xfire executed under MATE, introducing external loads

Experimentation (III)
[Figure: bar chart of execution time (s), from 0 to 1400, for each case study: Xfire with a fixed number of workers (1, 2, 4, 6, ..., 26) and Xf+MATE, which starts with 1 worker and adapts the number.]
Note that:
- The execution time of Xfire under MATE is close to the best execution times obtained.
- With MATE, resources are devoted to the application only when they are really needed.

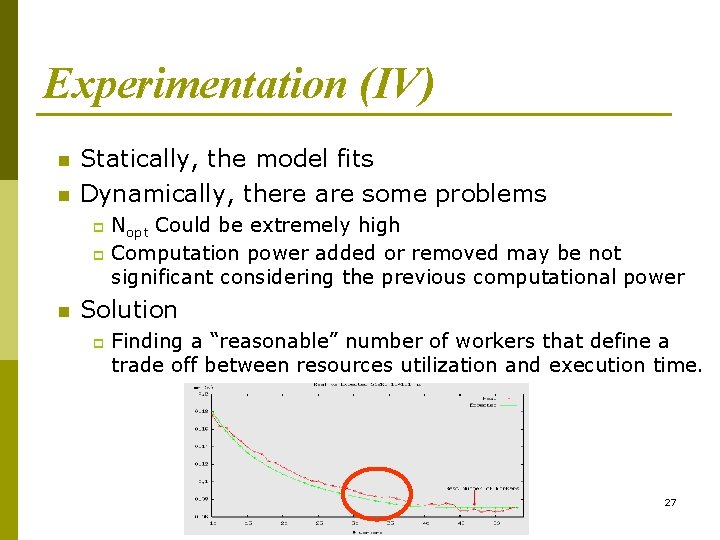

Experimentation (IV)
- Statically, the model fits.
- Dynamically, there are some problems:
  - Nopt could be extremely high.
  - The computation power added or removed may not be significant compared with the previous computational power.
- Solution
  - Find a “reasonable” number of workers that defines a trade-off between resource utilization and execution time.

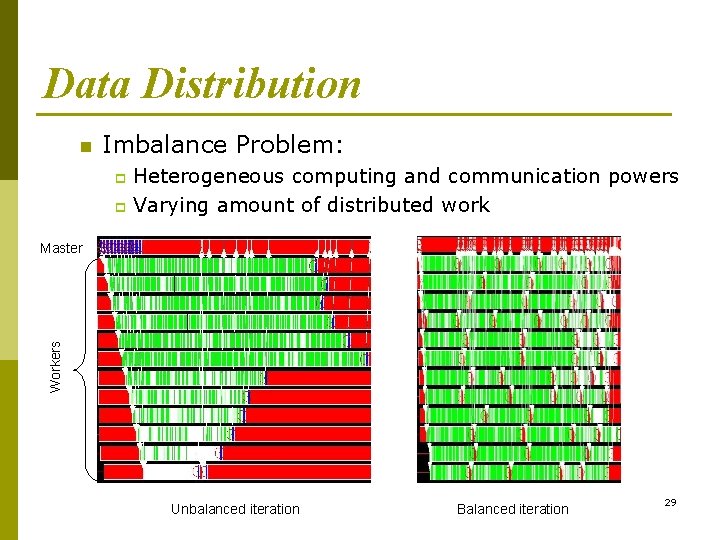

Data Distribution
- Imbalance problem:
  - Heterogeneous computing and communication powers
  - Varying amount of distributed work
[Figure: master and workers in an unbalanced iteration and in a balanced iteration.]

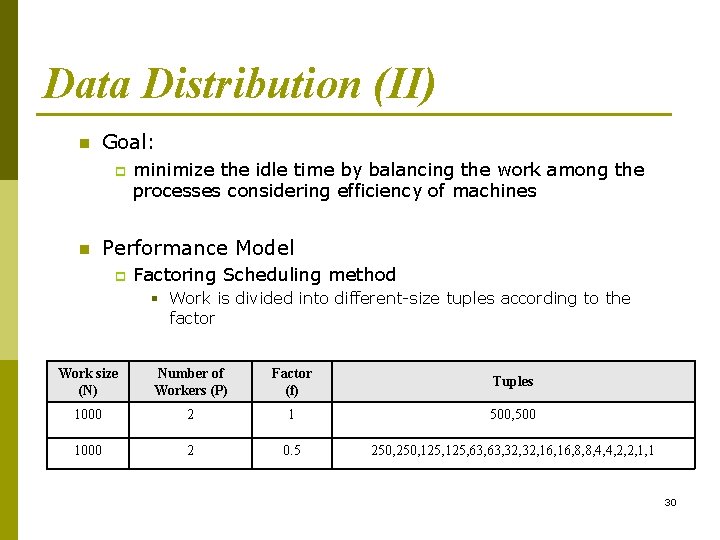

Data Distribution (II)
- Goal:
  - Minimize the idle time by balancing the work among the processes, considering the efficiency of the machines
- Performance model
  - Factoring scheduling method
    - Work is divided into different-size tuples according to the factor

| Work size (N) | Number of workers (P) | Factor (f) | Tuples |
| --- | --- | --- | --- |
| 1000 | 2 | 1 | 500, 500 |
| 1000 | 2 | 0.5 | 250, 125, 63, 32, 16, 8, 8, 4, 4, 2, 2, 1, 1 |
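A minimal sketch of how a factoring scheme can split the work is given below. It assumes that each batch hands every one of the P workers a tuple of size ceil(f * remaining / P) until no work is left; that rounding and batching rule is an assumption of this example, so its output for f = 0.5 need not match the tuple list on the slide. The function name `factoring_chunks` is hypothetical.

```python
import math


def factoring_chunks(work_size: int, num_workers: int, factor: float) -> list:
    """Split work_size units into tuples, one batch of num_workers tuples at a time.

    Each batch uses the fraction `factor` of the remaining work, divided
    evenly among the workers (rounded up, and never below one unit).
    """
    if not 0 < factor <= 1:
        raise ValueError("factor must be in (0, 1]")
    chunks = []
    remaining = work_size
    while remaining > 0:
        size = max(1, math.ceil(factor * remaining / num_workers))
        for _ in range(num_workers):
            if remaining == 0:
                break
            take = min(size, remaining)  # do not hand out more than is left
            chunks.append(take)
            remaining -= take
    return chunks


print(factoring_chunks(1000, 2, 1))    # one batch: everything split in two
print(factoring_chunks(1000, 2, 0.5))  # shrinking tuples
```

With f = 1 all the work goes out in a single batch, which leaves nothing in reserve to rebalance. Smaller factors keep work back so that faster workers can pick up extra tuples later, at the price of more messages.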

Data Distribution: Tunlet
- Measure points:
  - The work unit processing time
  - The latency and bandwidth
- Performance function:
  - Calculation of the factor
  - The Analyzer simulates the execution considering different factors. Finally, it decides the best factor.
  - Currently we are working on an analytical model to determine the factor.
- Tuning actions:
  - Change the value of “TheFactorF”
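The idea of simulating the execution for several factors and keeping the best one can be sketched as follows. Everything here is an assumption made for illustration, not the Analyzer's actual simulation: the tuple sizes use a ceil-based factoring rule, each tuple goes to whichever worker becomes free first, a tuple costs a fixed per-message time plus size times the worker's per-unit time, and all function names are hypothetical.

```python
import heapq
import math


def factoring_chunks(work_size, num_workers, factor):
    """Tuple sizes under an assumed ceil-based factoring rule."""
    chunks, remaining = [], work_size
    while remaining > 0:
        size = max(1, math.ceil(factor * remaining / num_workers))
        for _ in range(num_workers):
            if remaining == 0:
                break
            take = min(size, remaining)
            chunks.append(take)
            remaining -= take
    return chunks


def simulate_makespan(chunks, unit_times, per_message_cost):
    """Give each tuple to the worker that becomes free first; return the finish time."""
    free_at = [(0.0, w) for w in range(len(unit_times))]  # (time free, worker index)
    heapq.heapify(free_at)
    finish = 0.0
    for chunk in chunks:
        t, w = heapq.heappop(free_at)
        done = t + per_message_cost + chunk * unit_times[w]
        finish = max(finish, done)
        heapq.heappush(free_at, (done, w))
    return finish


def best_factor(work_size, unit_times, per_message_cost, candidates):
    """Pick the candidate factor with the smallest simulated execution time."""
    def cost(f):
        chunks = factoring_chunks(work_size, len(unit_times), f)
        return simulate_makespan(chunks, unit_times, per_message_cost)
    return min(candidates, key=cost)


# Made-up scenario: the second worker is four times slower than the first.
print(best_factor(1000, unit_times=[1.0, 4.0], per_message_cost=0.0,
                  candidates=[1.0, 0.5]))
```

In this made-up scenario f = 1 gives both workers 500 units, so the slow worker finishes at time 2000, while f = 0.5 lets the fast worker absorb the later tuples and the run ends at time 1000; the search therefore returns 0.5. A non-zero per-message cost pushes the choice back toward larger factors.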

Experimentation
- Example application
  - Forest Fire Propagation simulator – Xfire
- Platform
  - Cluster of Pentium 4, 1.8 GHz machines running SuSE Linux 8.0, connected by a 100 Mb/s network

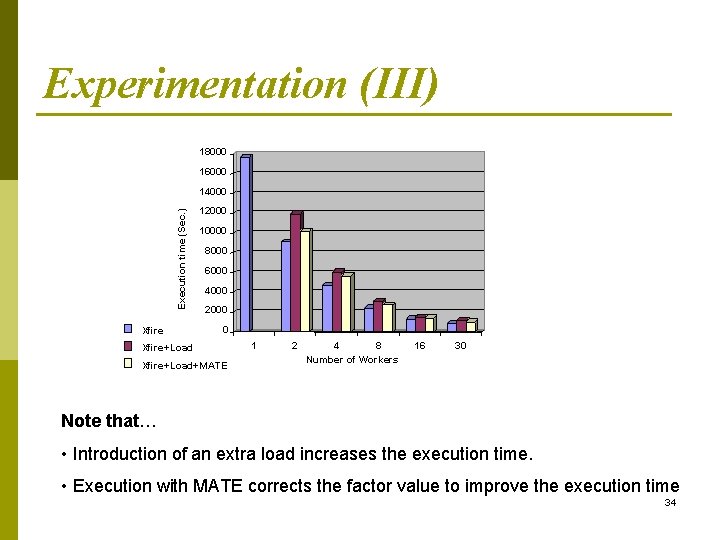

Experimentation (II)
- Load in the system
  - We designed different external load patterns.
  - They simulate the system’s time-sharing.
  - They allow us to reproduce experiments.
- Case studies
  - Xfire executed without any tuning
  - Xfire executed with controlled, variable external loads
  - Xfire executed under MATE, with variable external loads

Experimentation (III)
[Figure: chart of execution time (s), from 0 to 18000, against the number of workers (1, 2, 4, 8, 16, 30) for the case studies, including Xfire and Xfire+Load+MATE.]
Note that:
- Introducing an extra load increases the execution time.
- Execution with MATE corrects the factor value to improve the execution time.

Conclusions and open lines
- Conclusions
  - The prototype environment, MATE, automatically monitors, analyses and tunes running applications.
  - Practical experiments conducted with MATE and parallel/distributed applications prove that it automatically adapts application behavior to existing conditions at run time.
  - In particular, MATE is able to tune Master/Worker applications and overcome two possible bottlenecks: the number of workers and the data distribution.
  - Dynamic tuning works, and is applicable, effective and useful under certain conditions.

Conclusions and open lines
- Open lines
  - Determining the “reasonable” number of workers
  - Considering the interaction between different tunlets
  - Providing the system with other tuning techniques

Thank you…

- Slides: 38