
Panasas Parallel File System. Brent Welch, Director of Software Architecture, Panasas Inc. HPC User Forum, October 16, 2008. Go Faster. Go Parallel. www.panasas.com

Outline: Panasas background; storage cluster background, hardware and software; technical topics: High Availability, Scalable RAID, pNFS. (Brent's garden, California.) Slide 2 | October 2008 IDC, Panasas, Inc.

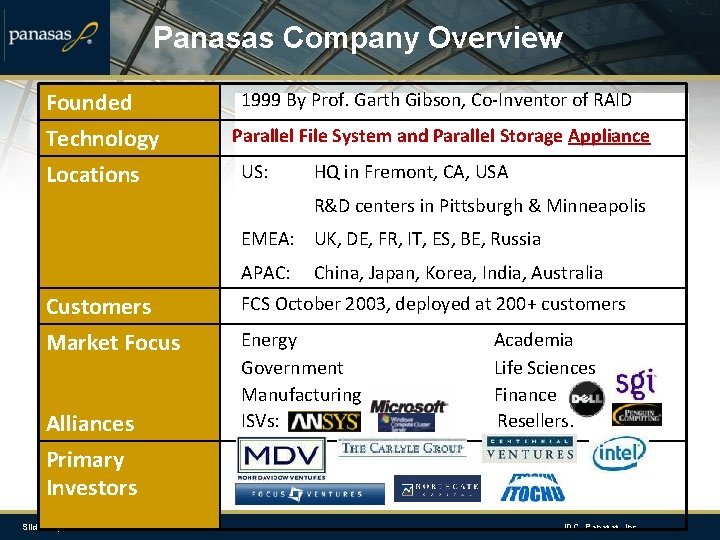

Panasas Company Overview. Founded: 1999, by Prof. Garth Gibson, co-inventor of RAID. Technology: Parallel File System and Parallel Storage Appliance. Locations: US: HQ in Fremont, CA, USA; R&D centers in Pittsburgh & Minneapolis. EMEA: UK, DE, FR, IT, ES, BE, Russia. APAC: China, Japan, Korea, India, Australia. Customers: FCS October 2003, deployed at 200+ customers. Market Focus: Energy, Government, Manufacturing, Academia, Life Sciences, Finance. Alliances: ISVs; Resellers. Primary Investors.

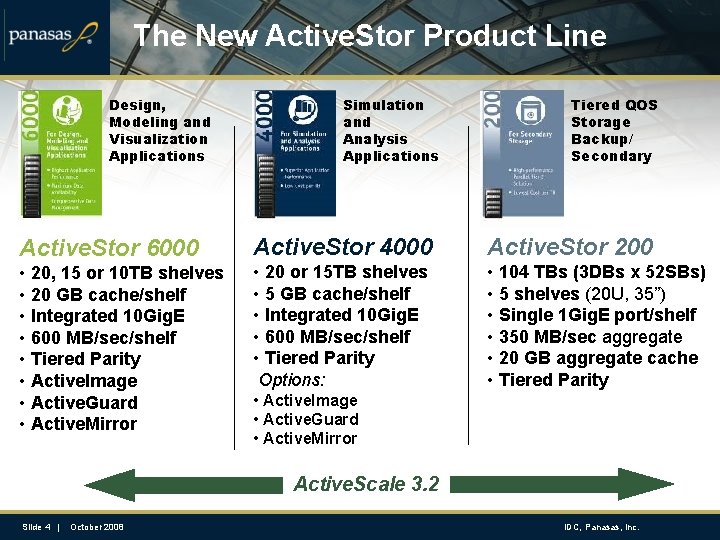

The New ActiveStor Product Line. Application tiers: Design, Modeling and Visualization Applications; Simulation and Analysis Applications; Tiered QOS Storage; Backup/Secondary. Products: ActiveStor 6000, ActiveStor 4000, ActiveStor 200 (ActiveScale 3.2). Specifications, in slide order: • 20, 15 or 10 TB shelves • 20 GB cache/shelf • Integrated 10 GigE • 600 MB/sec/shelf • Tiered Parity • ActiveImage • ActiveGuard • ActiveMirror • 20 or 15 TB shelves • 5 GB cache/shelf • Integrated 10 GigE • 600 MB/sec/shelf • Tiered Parity • Options: • 104 TB (3 DBs x 52 SBs) • 5 shelves (20U, 35”) • Single 1 GigE port/shelf • 350 MB/sec aggregate • 20 GB aggregate cache • Tiered Parity • ActiveImage • ActiveGuard • ActiveMirror

Leaders in HPC choose Panasas.

Panasas Leadership Role in HPC. US DOE: Panasas selected for Roadrunner, top of the Top 500; the $130M LANL system will deliver 2x the performance of the current top system, BG/L. SciDAC: Panasas CTO selected to lead the Petascale Data Storage Institute (PDSI); CTO Gibson leads PDSI, launched Sep '06, leveraging experience from PDSI members LBNL/NERSC, LANL, ORNL, PNNL, Sandia NL, and UCSC. Aerospace: airframes and engines, both commercial and defense; Boeing HPC file system, a major engine manufacturer, and the top 3 U.S. defense contractors. Formula-1: HPC file system for the top 2 clusters, 3 teams in total; top clusters at Renault F-1 and BMW Sauber, with Ferrari also on Panasas. Intel: certifies Panasas storage for a broad range of HPC applications, now ICR; Intel uses Panasas storage for EDA design and in its HPC benchmark center. SC07: six Panasas customers won awards at the SC07 (Reno) conference. Validation: extensive recognition and awards for HPC breakthroughs.

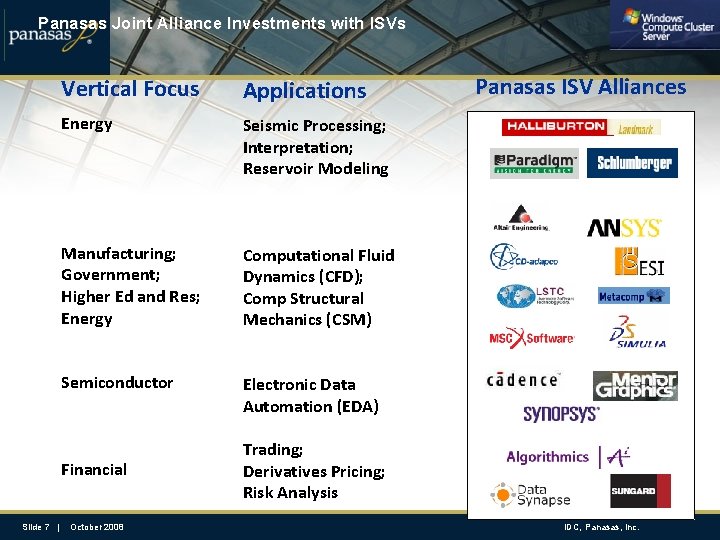

Panasas Joint Alliance Investments with ISVs (Panasas ISV Alliances), by vertical focus and applications. Energy: seismic processing, interpretation, reservoir modeling. Manufacturing, Government, Higher Ed and Research, Energy: Computational Fluid Dynamics (CFD), Computational Structural Mechanics (CSM). Semiconductor: Electronic Design Automation (EDA). Financial: trading, derivatives pricing, risk analysis.

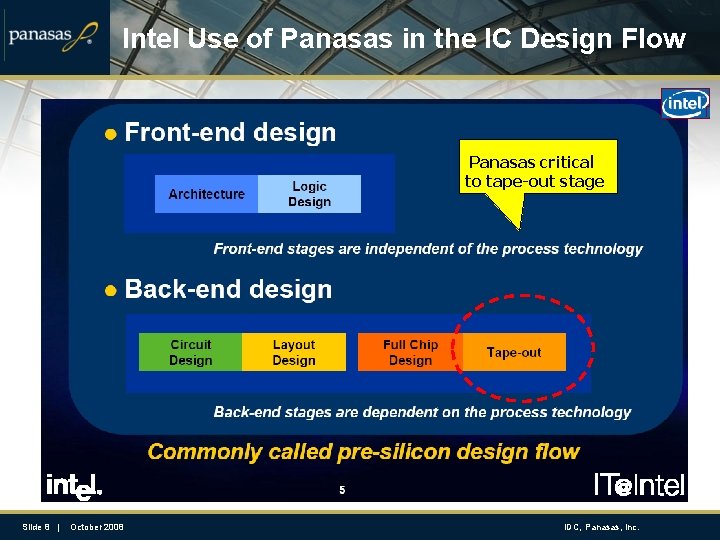

Intel Use of Panasas in the IC Design Flow: Panasas is critical to the tape-out stage.

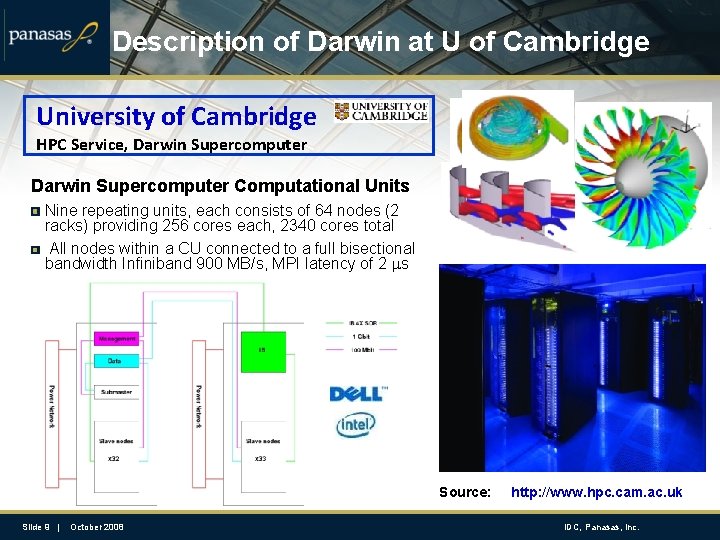

Description of Darwin at U of Cambridge. University of Cambridge HPC Service, Darwin Supercomputer. Computational Units (CUs): nine repeating units, each consisting of 64 nodes (2 racks) providing 256 cores; 2340 cores total. All nodes within a CU are connected by a full-bisection-bandwidth InfiniBand fabric: 900 MB/s, MPI latency of 2 µs. Source: http://www.hpc.cam.ac.uk

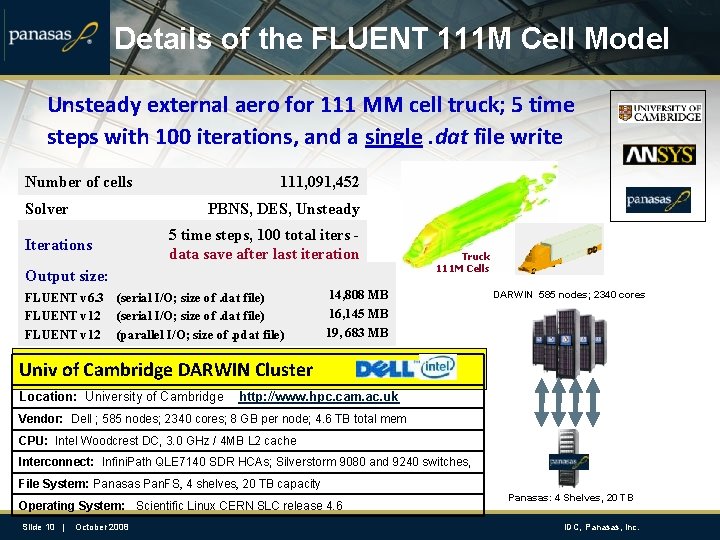

Details of the FLUENT 111M Cell Model. Unsteady external aero for a 111M-cell truck: 5 time steps with 100 iterations, and a single .dat file write. Number of cells: 111,091,452. Solver: PBNS, DES, unsteady. Iterations: 5 time steps, 100 total iterations, data save after the last iteration. Output size for the truck (111M cells), FLUENT v6.3 and FLUENT v12, serial I/O (size of .dat file) and parallel I/O (size of .pdat file): 14,808 MB, 16,145 MB, 19,683 MB. Univ of Cambridge DARWIN cluster. Location: University of Cambridge, http://www.hpc.cam.ac.uk. Vendor: Dell; 585 nodes; 2340 cores; 8 GB per node; 4.6 TB total memory. CPU: Intel Woodcrest DC, 3.0 GHz / 4 MB L2 cache. Interconnect: InfiniPath QLE7140 SDR HCAs; SilverStorm 9080 and 9240 switches. File System: Panasas PanFS, 4 shelves, 20 TB capacity. Operating System: Scientific Linux CERN SLC release 4.6.

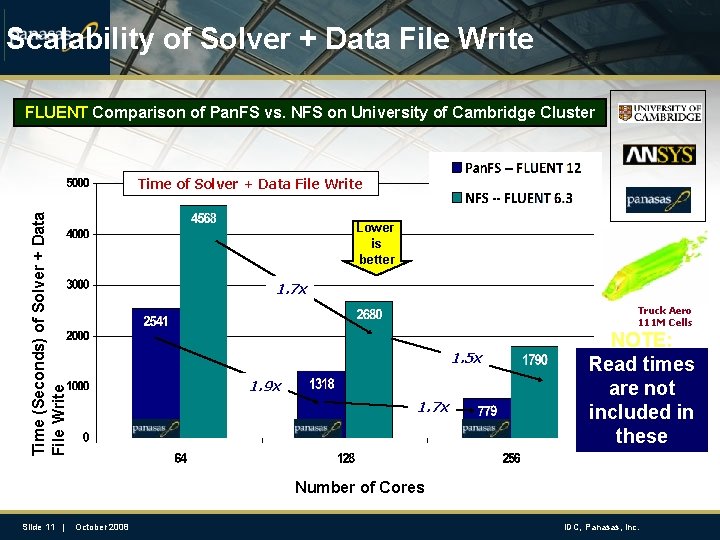

Scalability of Solver + Data File Write. FLUENT comparison of PanFS vs. NFS on the University of Cambridge cluster, Truck Aero 111M cells. Figure: time (seconds) of solver + data file write vs. number of cores; lower is better. Ratios annotated on the chart: 1.7x, 1.5x, 1.9x, 1.7x. NOTE: read times are not included in these results.

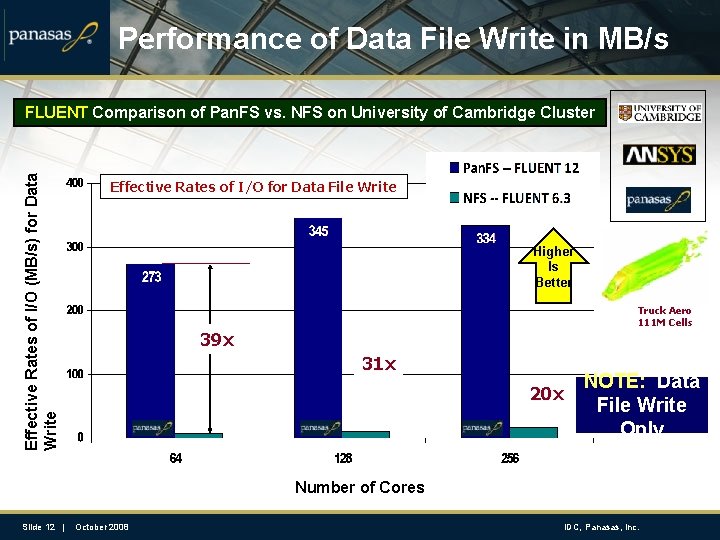

Performance of Data File Write in MB/s. FLUENT comparison of PanFS vs. NFS on the University of Cambridge cluster, Truck Aero 111M cells. Figure: effective rates of I/O (MB/s) for data file write vs. number of cores; higher is better. Ratios annotated on the chart: 39x, 31x, 20x. NOTE: data file write only.

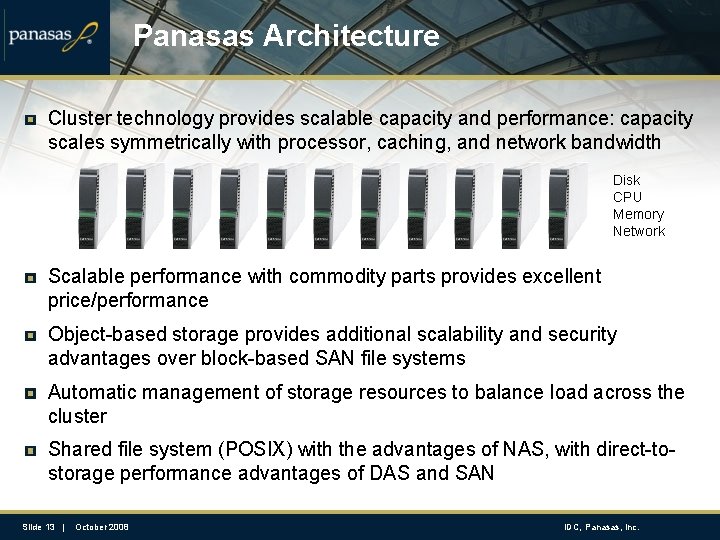

Panasas Architecture. Cluster technology provides scalable capacity and performance: capacity scales symmetrically with processor, caching, and network bandwidth (disk, CPU, memory, network). Scalable performance with commodity parts provides excellent price/performance. Object-based storage provides additional scalability and security advantages over block-based SAN file systems. Automatic management of storage resources balances load across the cluster. The result is a shared (POSIX) file system with the advantages of NAS and the direct-to-storage performance advantages of DAS and SAN.

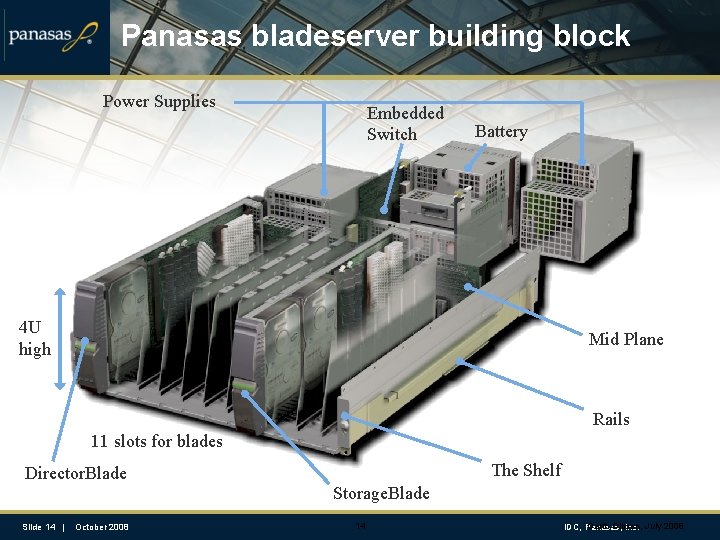

Panasas bladeserver building block: the Shelf, 4U high, with 11 slots for blades (DirectorBlade, StorageBlade), a midplane, rails, power supplies, an embedded switch, and a battery. (Garth Gibson, July 2008.)

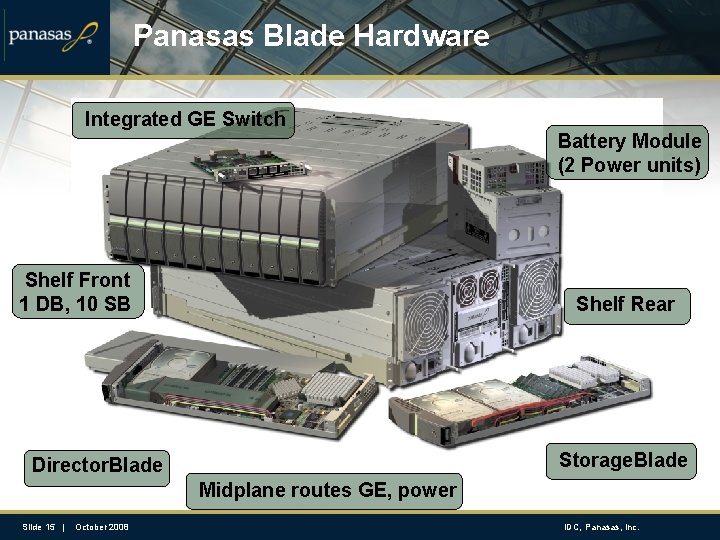

Panasas Blade Hardware. Shelf front: 1 DirectorBlade (DB), 10 StorageBlades (SB). Shelf rear: integrated GE switch, battery module (2 power units). The midplane routes GE and power.

Panasas Product Advantages. Proven implementation with appliance-like ease of use and deployment: running mission-critical workloads at global Fortune 500 companies. Scalable performance with object-based RAID: no degradation as the storage system scales in size; unmatched RAID rebuild rates through parallel reconstruction. Unique data integrity features: vertical parity on drives mitigates media errors and silent corruptions; per-file RAID provides scalable rebuild and per-file fault isolation; network-verified parity provides end-to-end data verification at the client. Scalable system size with integrated cluster management: storage clusters scaling to 1000+ storage nodes and 100+ metadata managers; simultaneous access from over 12,000 servers.

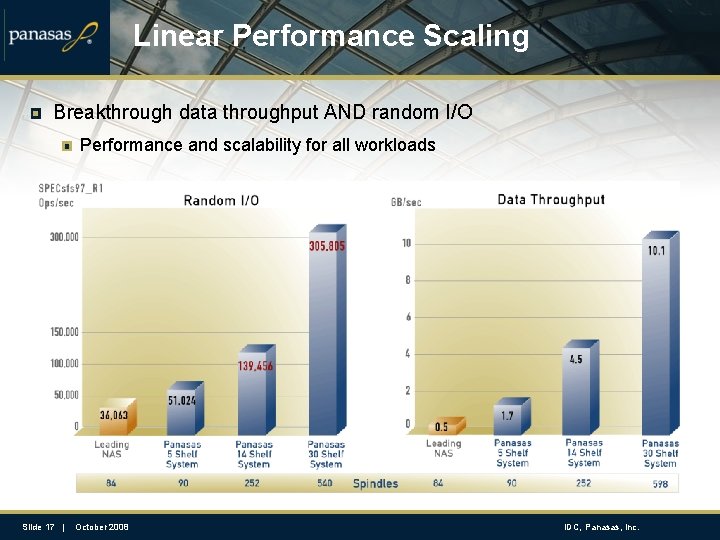

Linear Performance Scaling: breakthrough data throughput AND random I/O; performance and scalability for all workloads.

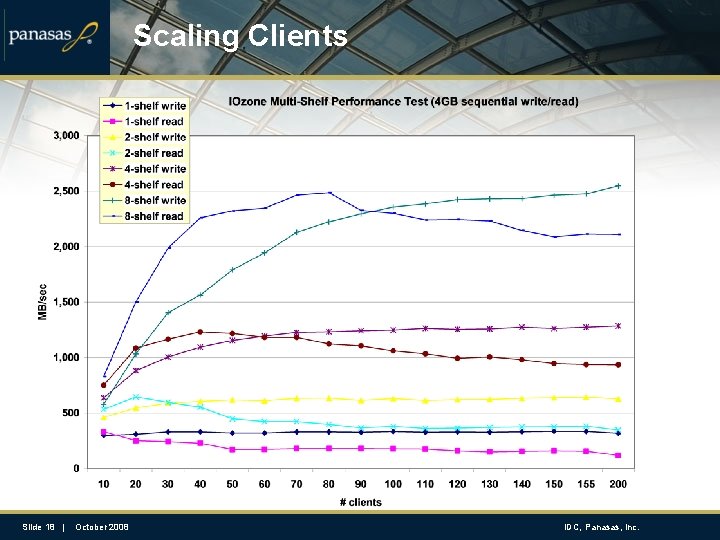

Scaling Clients

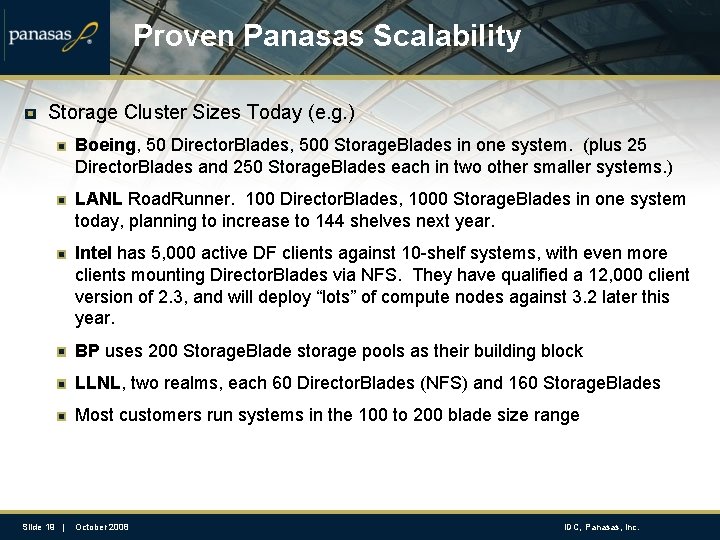

Proven Panasas Scalability. Storage cluster sizes today (e.g.): Boeing: 50 DirectorBlades and 500 StorageBlades in one system (plus 25 DirectorBlades and 250 StorageBlades in each of two other, smaller systems). LANL Roadrunner: 100 DirectorBlades and 1000 StorageBlades in one system today, planning to increase to 144 shelves next year. Intel has 5,000 active DF clients against 10-shelf systems, with even more clients mounting DirectorBlades via NFS; they have qualified a 12,000-client version of 2.3, and will deploy "lots" of compute nodes against 3.2 later this year. BP uses 200-StorageBlade storage pools as its building block. LLNL: two realms, each with 60 DirectorBlades (NFS) and 160 StorageBlades. Most customers run systems in the 100 to 200 blade size range.

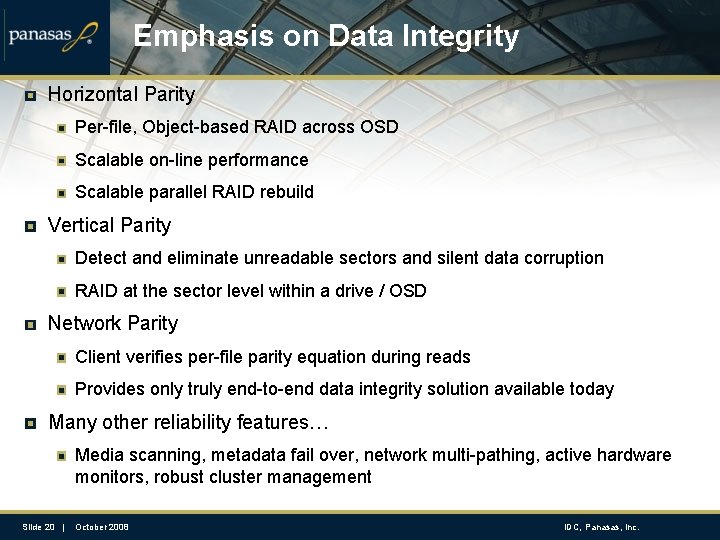

Emphasis on Data Integrity. Horizontal parity: per-file, object-based RAID across OSDs; scalable on-line performance; scalable parallel RAID rebuild. Vertical parity: detects and eliminates unreadable sectors and silent data corruption using RAID at the sector level within a drive/OSD. Network parity: the client verifies the per-file parity equation during reads, providing the only truly end-to-end data integrity solution available today. Many other reliability features: media scanning, metadata failover, network multipathing, active hardware monitors, robust cluster management.

High Availability. Quorum-based cluster management: 3 or 5 cluster managers to avoid split brain; replicated system state; the cluster manager controls the blades and all other services. High-performance file system metadata failover: primary-backup relationship controlled by the cluster manager; low-latency log replication to protect journals; client-aware failover for application transparency. NFS-level failover via IP takeover: virtual NFS servers migrate among DirectorBlade modules; lock services (lockd/statd) are fully integrated with the failover system.

Technology Review: turn-key deployment and automatic resource configuration; storage clusters scaling to ~1000 nodes today; scalable object RAID; very fast RAID rebuild; compute clusters scaling to 12,000 nodes today; vertical parity to trap silent corruptions; blade-based hardware with a 1 Gb/sec building block; network parity for end-to-end data verification; a bigger building block going forward; a distributed system platform with quorum-based fault tolerance; coarse-grain metadata clustering; metadata failover; automatic capacity load leveling.

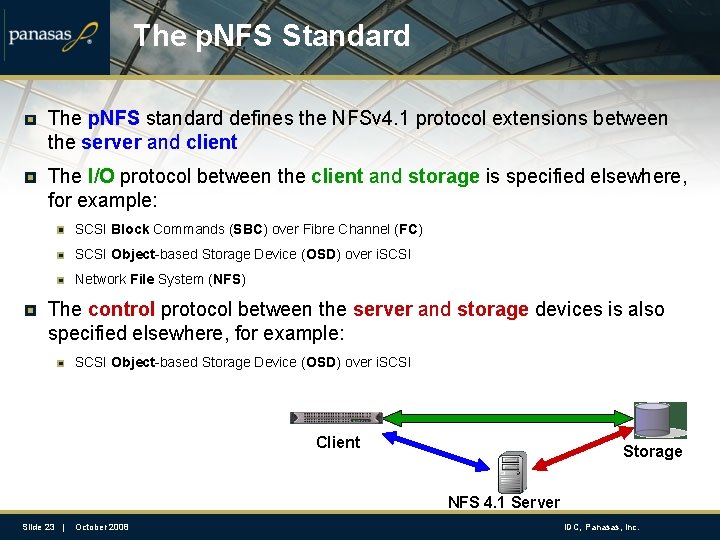

The pNFS Standard. The pNFS standard defines the NFSv4.1 protocol extensions between the server and client. The I/O protocol between the client and storage is specified elsewhere, for example: SCSI Block Commands (SBC) over Fibre Channel (FC); SCSI Object-based Storage Device (OSD) over iSCSI; Network File System (NFS). The control protocol between the server and storage devices is also specified elsewhere, for example: SCSI Object-based Storage Device (OSD) over iSCSI. (Diagram: Client, Storage, NFSv4.1 Server.)

Key pNFS Participants: Panasas (objects); Network Appliance (files over NFSv4); IBM (files, based on GPFS); EMC (blocks, HighRoad MPFSi); Sun (files over NFSv4); U of Michigan/CITI (files over PVFS2).

pNFS Status. pNFS is part of the IETF NFSv4 minor version 1 standard draft. The working group is passing the draft up to the IETF area directors; expect an RFC later in '08. Prototype interoperability continues: San Jose Connect-a-thon, March '06, February '07, May '08; Ann Arbor NFS Bake-a-thon, September '06, October '07; Dallas pNFS inter-op, June '07; Austin, February '08 (Sept '08). Availability TBD, gated behind NFSv4 adoption and working implementations of pNFS: patch sets to be submitted to the Linux NFS maintainer starting "soon"; vendor announcements in 2008; early adopters in 2009; production ready in 2010.

Questions? Thank you for your time! Go Faster. Go Parallel. www.panasas.com

Deep Dive: Reliability. High Availability; Cluster Management; Data Integrity.

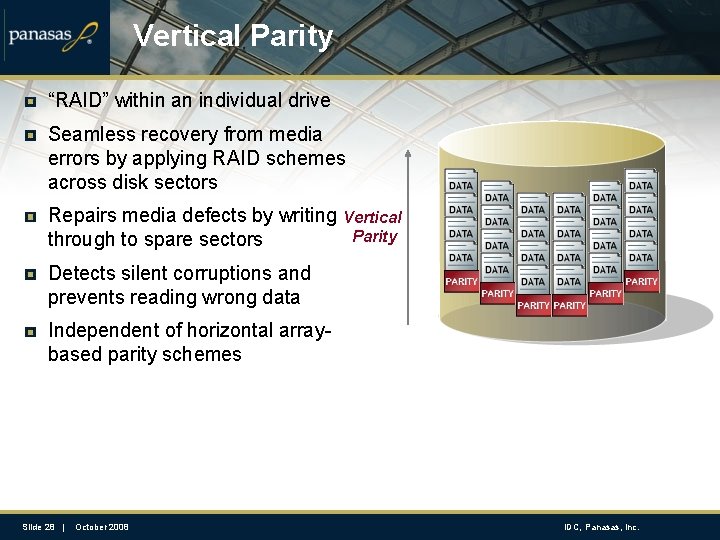

Vertical Parity: "RAID" within an individual drive. Seamless recovery from media errors by applying RAID schemes across disk sectors. Repairs media defects by writing through to spare sectors. Detects silent corruptions and prevents reading wrong data. Independent of horizontal, array-based parity schemes.
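
The slide does not say which code vertical parity uses inside a drive. As a minimal sketch, assuming plain XOR parity over a small group of sectors, the idea of recovering one unreadable sector from its neighbors looks like this; `SECTOR_SIZE`, `make_parity`, and `recover_sector` are hypothetical names, and writing the rebuilt sector to a spare is not modeled.

```python
from functools import reduce
from typing import List, Optional

SECTOR_SIZE = 512  # assumption: illustrative sector size in bytes


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length buffers."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(sectors: List[bytes]) -> bytes:
    """Parity sector for a group: the XOR of all its data sectors."""
    return reduce(xor_bytes, sectors)


def recover_sector(sectors: List[Optional[bytes]], parity: bytes) -> List[bytes]:
    """Rebuild at most one unreadable sector (given as None) in a group.

    XOR-ing the parity with every surviving sector leaves exactly the
    missing sector. Writing the result to a spare sector is not modeled.
    """
    missing = [i for i, s in enumerate(sectors) if s is None]
    if not missing:
        return list(sectors)
    if len(missing) > 1:
        raise IOError("more than one bad sector in the group; single parity cannot repair it")
    survivors = [s for s in sectors if s is not None]
    repaired = list(sectors)
    repaired[missing[0]] = reduce(xor_bytes, survivors, parity)
    return repaired
```

For example, with four data sectors and their parity, `recover_sector([d0, None, d2, d3], parity)` returns the group with the second sector restored.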

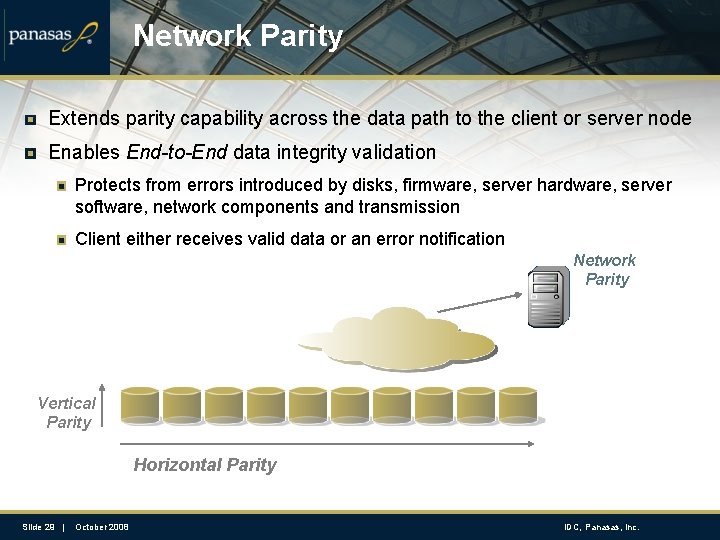

Network Parity. Extends parity capability across the data path to the client or server node, enabling end-to-end data integrity validation. Protects from errors introduced by disks, firmware, server hardware, server software, network components, and transmission. The client either receives valid data or an error notification. (Diagram layers: Network Parity, Vertical Parity, Horizontal Parity.)
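
The client-side check can be sketched as follows, assuming simple RAID 5 style XOR parity over the data units of one stripe (the slide does not give the exact parity code). `verified_read` and `ParityMismatch` are hypothetical names; the point is that verification runs after the bytes have crossed every component named above, so the caller gets either verified data or an error.

```python
from functools import reduce
from typing import List


class ParityMismatch(Exception):
    """Raised instead of handing the application data that fails verification."""


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def verified_read(data_units: List[bytes], parity_unit: bytes) -> bytes:
    """Return a stripe's data only if it satisfies the parity equation.

    The check runs on the client, after the bytes have crossed disks,
    firmware, servers and the network, so corruption introduced anywhere
    along that path shows up as a mismatch.
    """
    if reduce(xor_bytes, data_units) != parity_unit:
        raise ParityMismatch("stripe failed end-to-end parity check")
    return b"".join(data_units)
```

What a real client does after a mismatch (retry, reconstruct from other components, or report) is beyond what the slide states.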

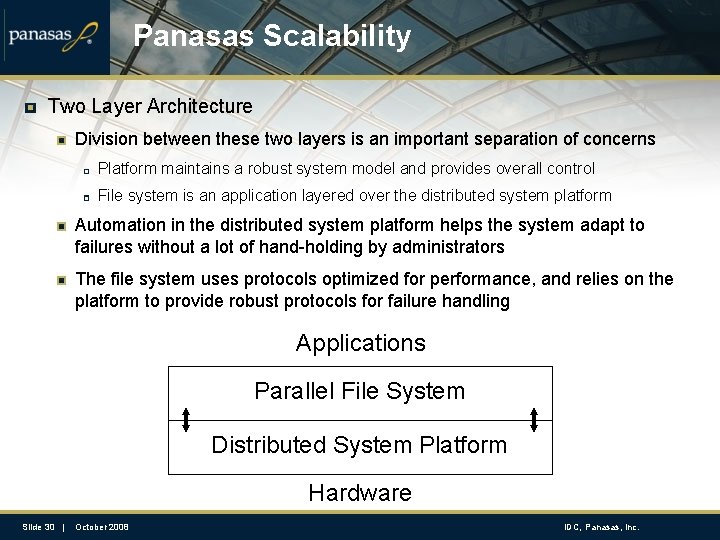

Panasas Scalability: Two-Layer Architecture. The division between these two layers is an important separation of concerns. The platform maintains a robust system model and provides overall control; the file system is an application layered over the distributed system platform. Automation in the distributed system platform helps the system adapt to failures without a lot of hand-holding by administrators. The file system uses protocols optimized for performance, and relies on the platform to provide robust protocols for failure handling. (Layers, top to bottom: Applications; Parallel File System; Distributed System Platform; Hardware.)

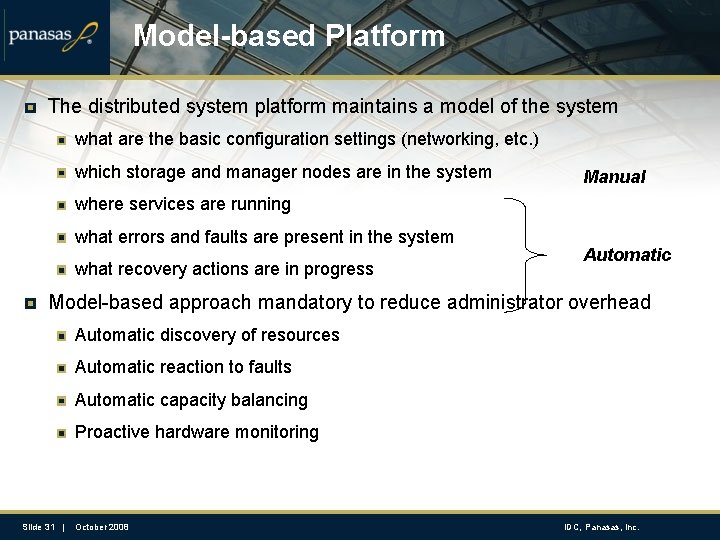

Model-based Platform. The distributed system platform maintains a model of the system. Manual: the basic configuration settings (networking, etc.) and which storage and manager nodes are in the system. Automatic: where services are running, what errors and faults are present in the system, and what recovery actions are in progress. A model-based approach is mandatory to reduce administrator overhead: automatic discovery of resources; automatic reaction to faults; automatic capacity balancing; proactive hardware monitoring.

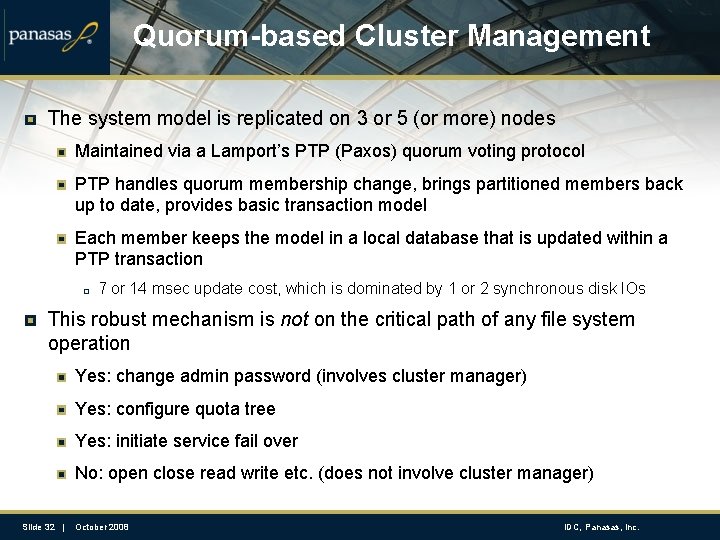

Quorum-based Cluster Management. The system model is replicated on 3 or 5 (or more) nodes and maintained via Lamport's PTP (Paxos) quorum voting protocol. PTP handles quorum membership change, brings partitioned members back up to date, and provides a basic transaction model. Each member keeps the model in a local database that is updated within a PTP transaction; an update costs 7 or 14 msec, dominated by 1 or 2 synchronous disk IOs. This robust mechanism is not on the critical path of any file system operation. Involves the cluster manager: change admin password; configure quota tree; initiate service failover. Does not involve the cluster manager: open, close, read, write, etc.
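
Paxos itself does not fit in a short example, but the strict-majority rule it relies on, which is why 3 or 5 managers avoid split brain, can be sketched directly. The function names are hypothetical; this is only the quorum arithmetic, not the PTP protocol.

```python
def has_quorum(reachable: int, cluster_size: int) -> bool:
    """A group of managers may act only with a strict majority."""
    return reachable > cluster_size // 2


def tolerated_failures(cluster_size: int) -> int:
    """How many managers can be lost while a majority still survives."""
    return (cluster_size - 1) // 2


def split_brain_possible(cluster_size: int) -> bool:
    """True if some two-way partition would leave BOTH sides with a quorum."""
    return any(
        has_quorum(side, cluster_size) and has_quorum(cluster_size - side, cluster_size)
        for side in range(cluster_size + 1)
    )
```

Under this rule, 3 managers tolerate one failure and 5 tolerate two, while 4 tolerate no more than 3 do, which is one reason odd sizes are the usual choice.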

File Metadata. The PanFS metadata manager stores metadata in object attributes. All component objects have simple attributes like their capacity, length, and security tag. Two component objects store a replica of the file-level attributes, e.g., file length, owner, ACL, parent pointer. Directories contain hints about where the components of a file are stored. There is no database on the metadata manager, just transaction logs that are replicated to a backup via a low-latency network protocol.

File Metadata. File ownership is divided along file subtree boundaries ("volumes"). Multiple metadata managers each own one or more volumes, matching the quota-tree abstraction used by the administrator; creating volumes creates more units of metadata work. Primary-backup failover model for the MDS: 90 µs remote log update over 1 GE, vs. 2 µs local in-memory log update. Some customers introduce lots of volumes; one volume per DirectorBlade module is ideal. Some customers are stubborn and stick with just one, e.g., 75 TB and 144 StorageBlade modules with a single MDS.

Scalability over time: software baseline. A single software product, with branches corresponding to major releases that come out every year (or so). Today most customers are on 3.0.x, which had a two-year lifetime. We are just introducing 3.2, which has been in QA and beta for 7 months, and there is forward development on newer features. Our goal is a major release each year, with a small number of maintenance releases in between. Compatibility is key: new versions upgrade cleanly over old versions; old clients communicate with new servers, and vice versa; old hardware is compatible with new software; integrated data migration to newer hardware platforms.

Deep Dive: Networking. Scalable networking infrastructure; integration with compute cluster fabrics.

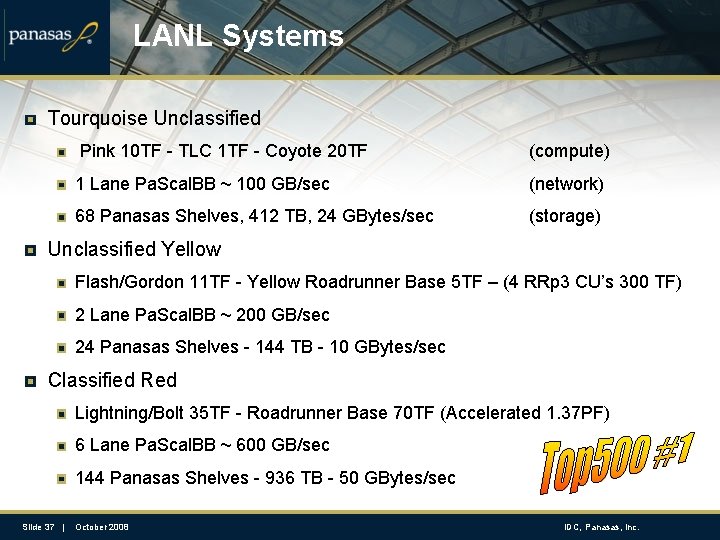

LANL Systems

| Network | Compute | PaScalBB network | Panasas storage |
|---|---|---|---|
| Turquoise (Unclassified) | Pink 10 TF; TLC 1 TF; Coyote 20 TF | 1 lane, ~100 GB/sec | 68 shelves, 412 TB, 24 GBytes/sec |
| Yellow (Unclassified) | Flash/Gordon 11 TF; Yellow Roadrunner Base 5 TF (4 RRp3 CUs, 300 TF) | 2 lanes, ~200 GB/sec | 24 shelves, 144 TB, 10 GBytes/sec |
| Red (Classified) | Lightning/Bolt 35 TF; Roadrunner Base 70 TF (Accelerated 1.37 PF) | 6 lanes, ~600 GB/sec | 144 shelves, 936 TB, 50 GBytes/sec |

IB and other network fabrics. Panasas is a TCP/IP, GE-based storage product: universal deployment, universal routability, commodity price curve. Panasas customers use IB, Myrinet, Quadrics, …: the cluster interconnect du jour, chosen for performance, not necessarily cost. IO routers connect the cluster fabric to the GE backbone. An IO router is analogous to an "IO node", but just does TCP/IP routing (no storage); IP multipath routing provides robust connectivity; throughput scales at approx 650 MB/sec per IO router (PCI-e class), and a 1 GB/sec IO router is in the works. IB-GE switching platforms: a QLogic or Voltaire switch provides wire-speed bridging.

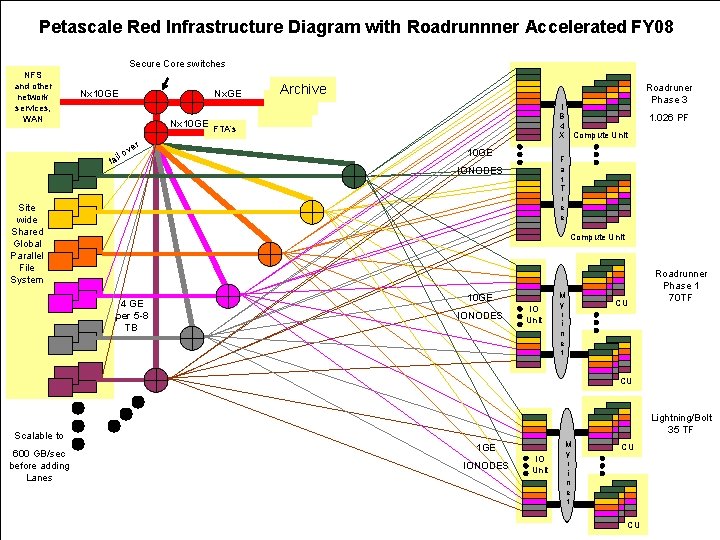

Figure: Petascale Red Infrastructure Diagram with Roadrunner Accelerated, FY08. Labels: secure core switches; NFS and other network services, WAN (N x 10 GE, failover); archive; FTAs; site-wide shared global parallel file system; Roadrunner Phase 3 (1.026 PF) IB 4X fat-tree compute units with IO nodes on 10 GE; Roadrunner Phase 1 (70 TF) and Lightning/Bolt (35 TF) Myrinet compute units with IO nodes; IO units at 4 GE per 5-8 TB; 1 GE; scalable to 600 GB/sec before adding lanes.

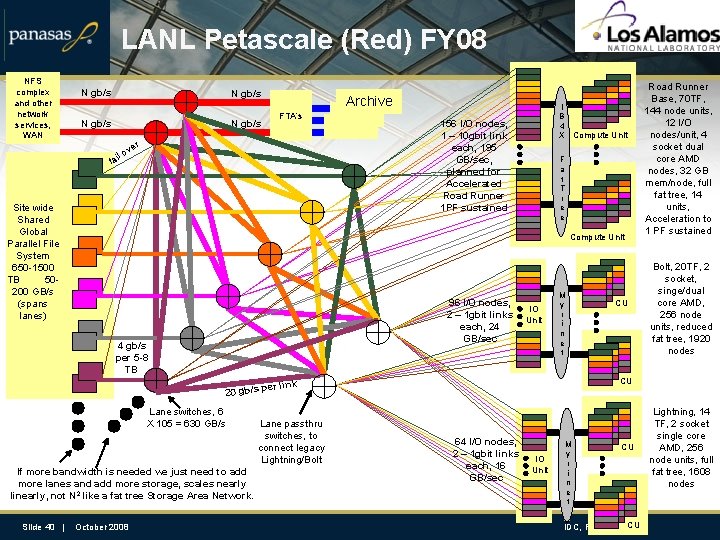

LANL Petascale (Red) FY08. Figure labels: NFS complex and other network services, WAN (N Gb/s, failover); archive; FTAs. Site-wide shared global parallel file system: 650-1500 TB, 50-200 GB/s (spans lanes). Planned for Accelerated Roadrunner (1 PF sustained): 156 I/O nodes, one 10 Gbit link each, 195 GB/sec. Other I/O node groups: 96 I/O nodes, two 1 Gbit links each, 24 GB/sec; 64 I/O nodes, two 1 Gbit links each, 16 GB/sec. Storage connectivity labels: 4 Gb/s per 5-8 TB; 20 Gb/s. Lane switches: 6 x 105 = 630 GB/s; lane pass-through switches connect the legacy Lightning/Bolt compute units. If more bandwidth is needed, we just add more lanes and more storage: this scales nearly linearly, not N² like a fat tree. Compute units (IB 4X fat tree and Myrinet) include: Bolt: 20 TF, 2-socket single/dual-core AMD, 256-node units, reduced fat tree, 1920 nodes. Lightning: 14 TF, 2-socket single-core AMD, 256-node units, full fat tree, 1608 nodes. Roadrunner Base: 70 TF, 144-node units, 12 I/O nodes/unit, 4-socket dual-core AMD nodes, 32 GB mem/node, full fat tree, 14 units, acceleration to 1 PF sustained. Storage Area Network.
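
The bandwidth figures on this slide follow from link counts, assuming raw decimal units (1 Gbit/s = 0.125 GB/s) and no protocol overhead. The helper names below are hypothetical, and the default of 105 GB/s per lane is taken from the "6 x 105" label; the sketch simply re-derives the slide's numbers and shows why adding lanes scales linearly.

```python
GBIT_TO_GBYTE = 1 / 8  # 1 gigabit/s = 0.125 gigabytes/s (decimal units, no protocol overhead)


def io_bandwidth_gbytes(io_nodes: int, links_per_node: int, gbit_per_link: float) -> float:
    """Aggregate raw link bandwidth of a group of I/O nodes, in GB/s."""
    return io_nodes * links_per_node * gbit_per_link * GBIT_TO_GBYTE


def lane_bandwidth_gbytes(lanes: int, per_lane_gbytes: float = 105.0) -> float:
    """Lane-based backbone: capacity grows linearly with the number of lanes."""
    return lanes * per_lane_gbytes
```

For instance, `io_bandwidth_gbytes(156, 1, 10)` gives the 195 GB/sec quoted for Accelerated Roadrunner, and `lane_bandwidth_gbytes(6)` gives 630 GB/s.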

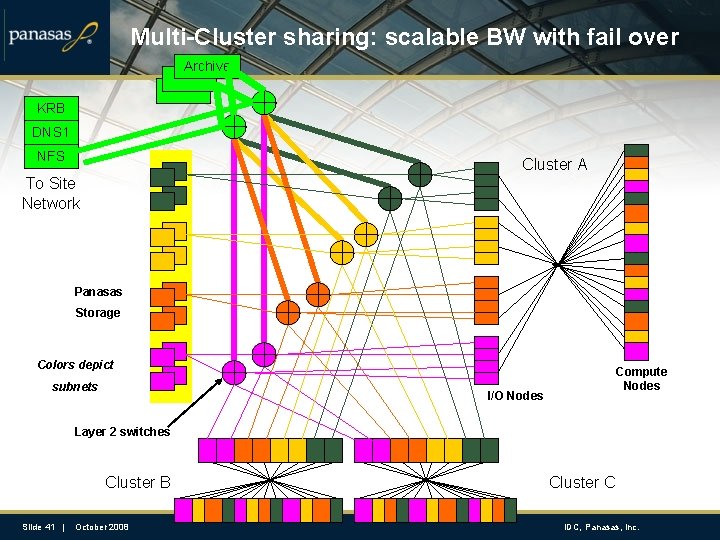

Multi-Cluster sharing: scalable BW with failover. Figure: compute clusters A, B, and C (compute nodes and I/O nodes) share Panasas storage through layer 2 switches, with links to the site network (archive, KRB, DNS, NFS); colors depict subnets.

Deep Dive: Scalable RAID. Per-file RAID; scalable RAID rebuild.

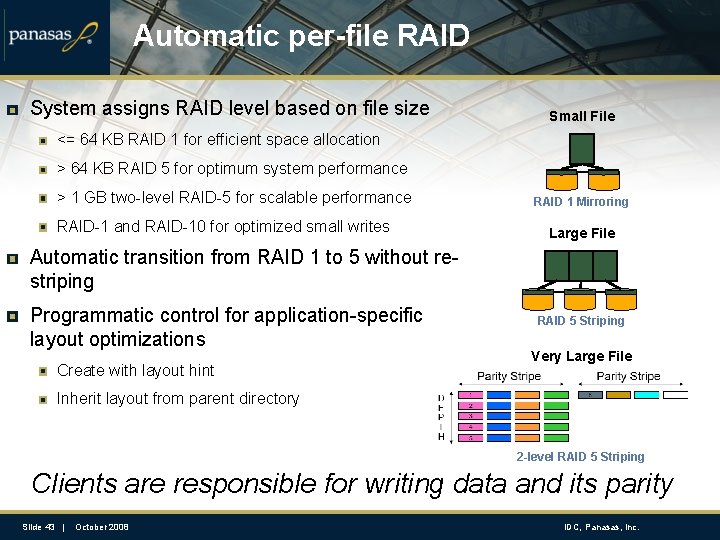

Automatic per-file RAID. The system assigns a RAID level based on file size: small files (<= 64 KB) use RAID 1 mirroring for efficient space allocation; large files (> 64 KB) use RAID 5 striping for optimum system performance; very large files (> 1 GB) use two-level RAID 5 striping for scalable performance. RAID-1 and RAID-10 are used for optimized small writes. Files transition automatically from RAID 1 to RAID 5 without restriping. Programmatic control allows application-specific layout optimizations: create a file with a layout hint, or inherit the layout from the parent directory. Clients are responsible for writing data and its parity.
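
The size thresholds above map naturally onto a small selection function. This is a sketch of the policy as stated on the slide, not Panasas code: `choose_raid_layout` is a hypothetical name, binary KB/GB units are an assumption, and it does not model layout hints, inheritance, or the in-place RAID 1 to RAID 5 transition.

```python
KB = 1024       # assumption: binary units
GB = 1024 ** 3


def choose_raid_layout(file_size: int) -> str:
    """Map a file's size to the per-file RAID layout described above."""
    if file_size <= 64 * KB:
        return "RAID 1"          # small files: mirroring, efficient space allocation
    if file_size <= 1 * GB:
        return "RAID 5"          # large files: striping with parity
    return "2-level RAID 5"      # very large files: scalable performance
```

A file that grows past a threshold would simply be re-evaluated; per the slide, the real system moves from RAID 1 to RAID 5 without restriping.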

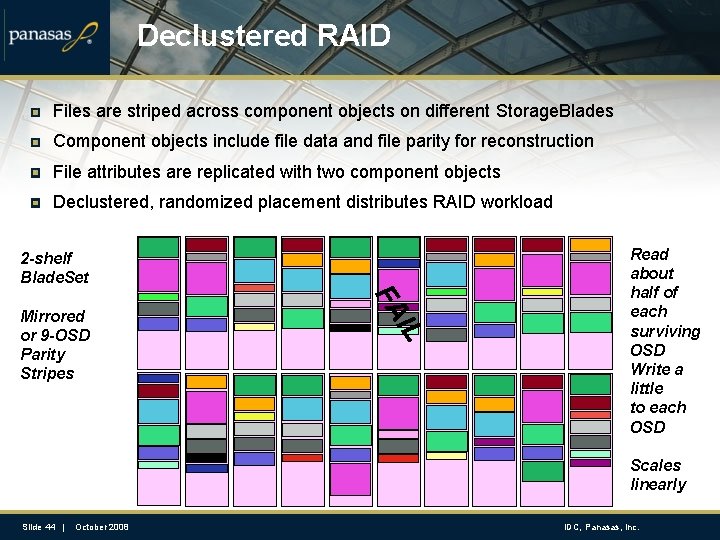

Declustered RAID. Files are striped across component objects on different StorageBlades. Component objects include file data and file parity for reconstruction. File attributes are replicated with two component objects. Declustered, randomized placement distributes the RAID workload. (Figure: mirrored or 9-OSD parity stripes on a 2-shelf BladeSet.) During reconstruction the system reads about half of each surviving OSD and writes a little to each OSD, so rebuild scales linearly.
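
Why randomized declustering spreads rebuild work can be shown with a small simulation. Assuming each 9-wide stripe picks 9 distinct OSDs uniformly at random from a 2-shelf BladeSet of 20 StorageBlades (the exact Panasas placement policy is not given on the slide), a failure forces reads from nearly every survivor rather than from one fixed RAID group. `place_stripes` and `rebuild_reads` are hypothetical names.

```python
import random
from collections import Counter
from typing import List


def place_stripes(num_osds: int, num_stripes: int, width: int, seed: int = 0) -> List[List[int]]:
    """Randomly choose `width` distinct OSDs for each stripe (declustered placement)."""
    rng = random.Random(seed)
    return [rng.sample(range(num_osds), width) for _ in range(num_stripes)]


def rebuild_reads(stripes: List[List[int]], failed: int) -> Counter:
    """Count the stripe units each surviving OSD must read to rebuild `failed`."""
    reads: Counter = Counter()
    for stripe in stripes:
        if failed in stripe:
            for osd in stripe:
                if osd != failed:
                    reads[osd] += 1
    return reads
```

With 9-wide stripes over 20 OSDs, a given survivor shares a stripe with the failed OSD with probability 8/19, about 42%, which is in line with the slide's "read about half of each surviving OSD".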

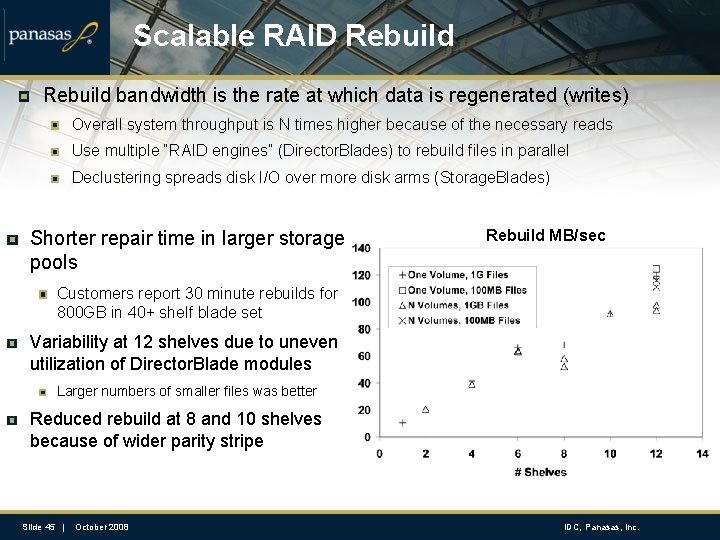

Scalable RAID Rebuild. Rebuild bandwidth is the rate at which data is regenerated (writes); overall system throughput is N times higher because of the necessary reads. Multiple "RAID engines" (DirectorBlades) rebuild files in parallel, and declustering spreads disk I/O over more disk arms (StorageBlades), so larger storage pools have shorter repair times. Customers report 30-minute rebuilds for 800 GB in a 40+ shelf BladeSet. (Figure: rebuild MB/sec.) Variability at 12 shelves is due to uneven utilization of DirectorBlade modules; larger numbers of smaller files were better; rebuild is reduced at 8 and 10 shelves because of a wider parity stripe.
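
As a worked check of the customer figure, using decimal units: the first line is the regenerated-data rate. The second line is only an illustration under an assumption not stated for this customer, namely a parity stripe of width w = 9 (as on the declustered RAID slide), where regenerating one unit requires reading the other w - 1.

```latex
\[
\text{rebuild rate} \approx \frac{800\ \text{GB}}{30\ \text{min}}
  = \frac{800{,}000\ \text{MB}}{1800\ \text{s}} \approx 444\ \text{MB/s}
\]
\[
\text{read traffic} \approx (w-1) \times 444\ \text{MB/s}
  = 8 \times 444\ \text{MB/s} \approx 3.6\ \text{GB/s} \qquad (w = 9)
\]
```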

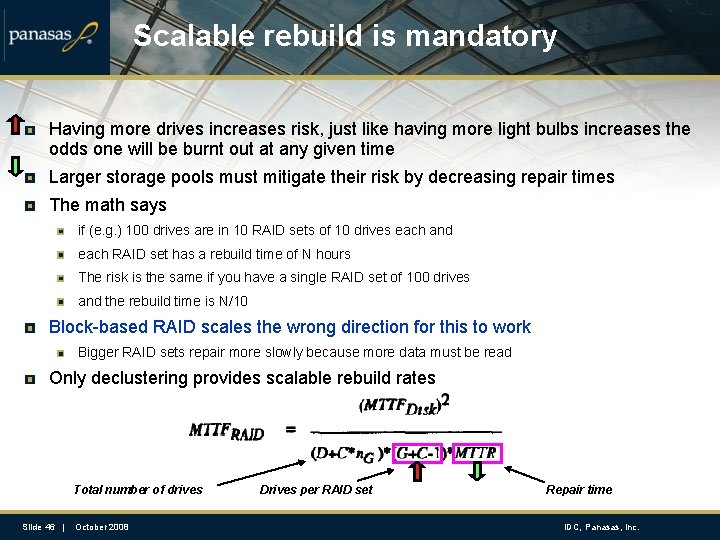

Scalable rebuild is mandatory. Having more drives increases risk, just as having more light bulbs increases the odds that one will be burnt out at any given time. Larger storage pools must mitigate their risk by decreasing repair times. The math says that if (e.g.) 100 drives are in 10 RAID sets of 10 drives each, and each RAID set has a rebuild time of N hours, the risk is the same as for a single RAID set of 100 drives with a rebuild time of N/10. Block-based RAID scales in the wrong direction for this to work: bigger RAID sets repair more slowly because more data must be read. Only declustering provides scalable rebuild rates. (Figure axes: total number of drives, drives per RAID set, repair time.)
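
The slide's claim can be checked with a first-order model, assuming independent drive failures at a constant rate λ per hour and treating data loss as a second failure in the same RAID set during a rebuild. `data_loss_rate` is a hypothetical helper for this model only; it ignores correlated failures and unrecoverable read errors.

```python
def data_loss_rate(total_drives: int, drives_per_set: int, rebuild_hours: float,
                   failure_rate_per_hour: float) -> float:
    """Approximate rate (per hour) of double failures within one RAID set.

    First failures arrive at total_drives * lam. Each opens a window of
    rebuild_hours during which a failure of any of the other
    (drives_per_set - 1) drives in that set loses data.
    """
    lam = failure_rate_per_hour
    first_failures = total_drives * lam
    p_second = (drives_per_set - 1) * lam * rebuild_hours  # small-probability approximation
    return first_failures * p_second
```

Comparing 10 sets of 10 drives rebuilt in N hours against one set of 100 drives rebuilt in N/10 hours gives a ratio of 99/90 = 1.1, so the risk is roughly equal, as the slide says; the same 100-drive set with an N-hour rebuild would be 11 times worse.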

Deep Dive: pNFS. Standards-based parallel file systems: NFSv4.1

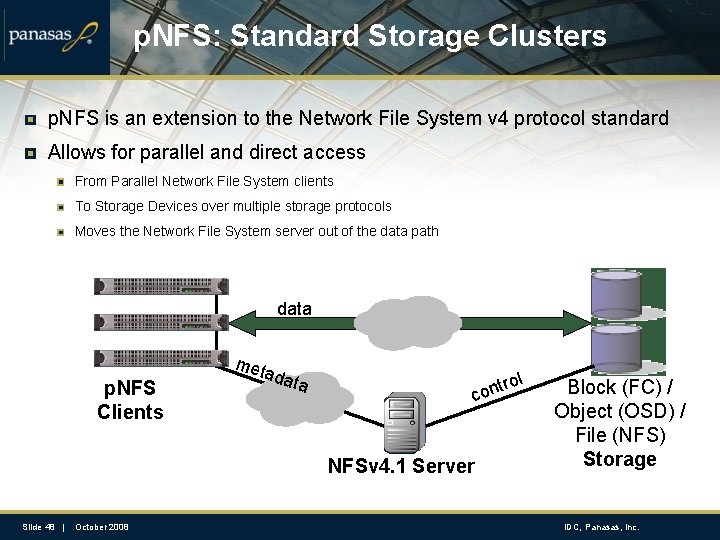

pNFS: Standard Storage Clusters. pNFS is an extension to the Network File System v4 protocol standard. It allows parallel and direct access from Parallel Network File System clients to storage devices over multiple storage protocols, moving the Network File System server out of the data path. (Diagram: pNFS clients exchange data directly with block (FC), object (OSD), or file (NFS) storage and exchange metadata with the NFSv4.1 server; the server exchanges control with the storage.)

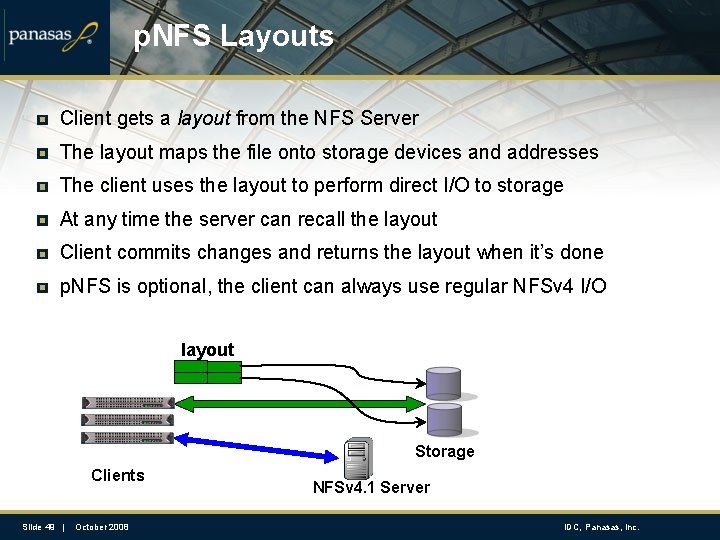

pNFS Layouts. The client gets a layout from the NFS server. The layout maps the file onto storage devices and addresses. The client uses the layout to perform direct I/O to storage. At any time the server can recall the layout. The client commits changes and returns the layout when it's done. pNFS is optional: the client can always use regular NFSv4 I/O. (Diagram: clients, layout, storage, NFSv4.1 server.)
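
The layout lifecycle above can be modeled as a toy client and server. The operation names in the log (LAYOUTGET, LAYOUTCOMMIT, LAYOUTRETURN) and the recall callback CB_LAYOUTRECALL are the NFSv4.1 operations, but everything else is hypothetical: `Layout`, `ToyServer`, and `ToyPnfsClient` are illustrative classes, round-robin striping is a simplification, and no real I/O happens.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Layout:
    """Hypothetical stand-in for a granted layout: the devices holding the
    file and the stripe unit used to spread it across them."""
    devices: List[str]
    stripe_unit: int  # bytes per stripe unit


class ToyServer:
    """Hypothetical metadata server that grants and tracks layouts."""

    def __init__(self, layouts: Dict[str, Layout]):
        self.layouts = layouts
        self.log: List[str] = []

    def layoutget(self, path: str) -> Optional[Layout]:
        self.log.append(f"LAYOUTGET {path}")
        return self.layouts.get(path)  # None: no layout offered for this file

    def layoutcommit(self, path: str) -> None:
        self.log.append(f"LAYOUTCOMMIT {path}")

    def layoutreturn(self, path: str) -> None:
        self.log.append(f"LAYOUTRETURN {path}")

    def nfs_write(self, path: str, offset: int, data: bytes) -> None:
        self.log.append(f"WRITE {path} through server")


class ToyPnfsClient:
    def __init__(self, server: ToyServer):
        self.server = server
        self.held: Dict[str, Layout] = {}
        self.dirty: Dict[str, bool] = {}

    def write(self, path: str, offset: int, data: bytes) -> str:
        """Return where the write would go: a storage device, or 'server'."""
        layout = self.held.get(path)
        if layout is None:
            layout = self.server.layoutget(path)
            if layout is None:
                # pNFS is optional: fall back to regular NFSv4 I/O via the server
                self.server.nfs_write(path, offset, data)
                return "server"
            self.held[path] = layout
        # Simple round-robin striping (assumption) maps the offset to a device
        unit = offset // layout.stripe_unit
        device = layout.devices[unit % len(layout.devices)]
        # The direct transfer to `device` over its storage protocol is not modeled
        self.dirty[path] = True
        return device

    def on_recall(self, path: str) -> None:
        """Server-initiated recall (CB_LAYOUTRECALL in NFSv4.1)."""
        self.finish(path)

    def finish(self, path: str) -> None:
        """Commit outstanding changes, then return the layout."""
        if path not in self.held:
            return
        if self.dirty.pop(path, False):
            self.server.layoutcommit(path)
        self.server.layoutreturn(path)
        del self.held[path]
```

Note that the server sees only the LAYOUTGET, LAYOUTCOMMIT, and LAYOUTRETURN traffic; the data writes go to the devices named in the layout, which is what takes the server out of the data path.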

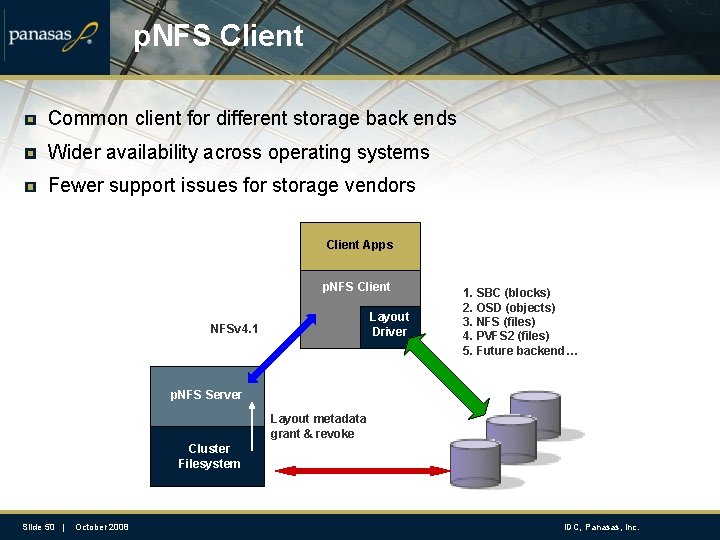

pNFS Client. A common client for different storage back ends means wider availability across operating systems and fewer support issues for storage vendors. (Diagram: client apps sit over the pNFS client, which speaks NFSv4.1 to the pNFS server and uses a layout driver for the back end: 1. SBC (blocks), 2. OSD (objects), 3. NFS (files), 4. PVFS2 (files), 5. future back ends. The pNFS server, over a cluster file system, handles layout metadata grant and revoke.)
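
The "common client, pluggable layout driver" structure is essentially a registry keyed by layout type. In this sketch the keys are the NFSv4.1 layout type names for files, objects, and blocks. The driver functions, the `pnfs_read` entry point, and the 512-byte block size are hypothetical, and the drivers only describe the I/O they would issue.

```python
from typing import Callable, Dict

# Hypothetical driver signature: (layout, offset, length) -> description of the I/O
LayoutDriver = Callable[[dict, int, int], str]

_DRIVERS: Dict[str, LayoutDriver] = {}


def register_driver(layout_type: str) -> Callable[[LayoutDriver], LayoutDriver]:
    """Decorator that plugs a back-end driver into the common client."""
    def wrap(fn: LayoutDriver) -> LayoutDriver:
        _DRIVERS[layout_type] = fn
        return fn
    return wrap


@register_driver("LAYOUT4_BLOCK_VOLUME")
def block_driver(layout: dict, offset: int, length: int) -> str:
    return f"SBC read of {length} bytes at block {offset // 512} on {layout['volume']}"


@register_driver("LAYOUT4_OSD2_OBJECTS")
def object_driver(layout: dict, offset: int, length: int) -> str:
    return f"OSD read of {length} bytes at {offset} from object {layout['object_id']}"


@register_driver("LAYOUT4_NFSV4_1_FILES")
def file_driver(layout: dict, offset: int, length: int) -> str:
    return f"NFS read of {length} bytes at {offset} from {layout['data_server']}"


def pnfs_read(layout_type: str, layout: dict, offset: int, length: int) -> str:
    """One client code path; the layout type picks the back-end driver."""
    driver = _DRIVERS.get(layout_type)
    if driver is None:
        # No driver for this back end: regular NFSv4 I/O through the server
        return f"NFSv4 read of {length} bytes at {offset} through the server"
    return driver(layout, offset, length)
```

Adding a new back end (the "future backend" slot) means registering one more driver, with no change to the common client path.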

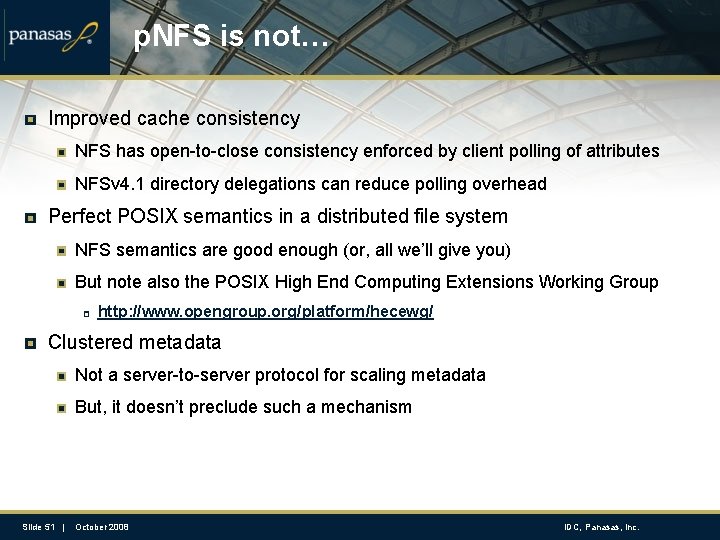

pNFS is not… Improved cache consistency: NFS has close-to-open consistency enforced by client polling of attributes, and NFSv4.1 directory delegations can reduce polling overhead. Perfect POSIX semantics in a distributed file system: NFS semantics are good enough (or, all we'll give you); but note also the POSIX High End Computing Extensions Working Group, http://www.opengroup.org/platform/hecewg/. Clustered metadata: pNFS is not a server-to-server protocol for scaling metadata, but it doesn't preclude such a mechanism.

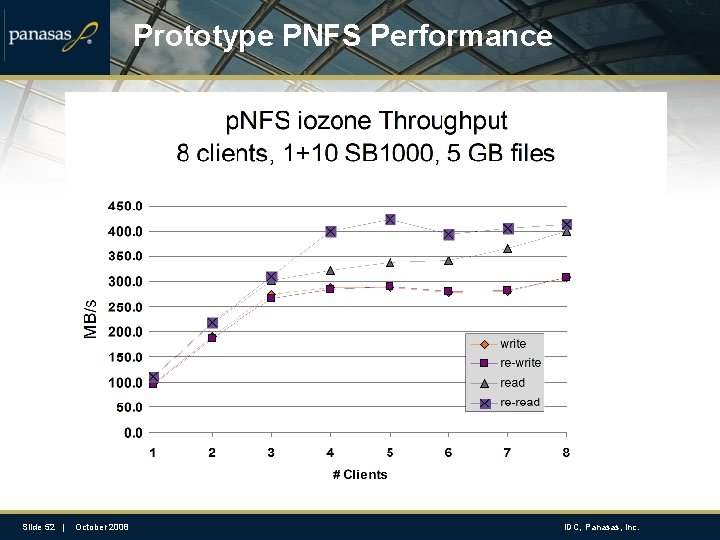

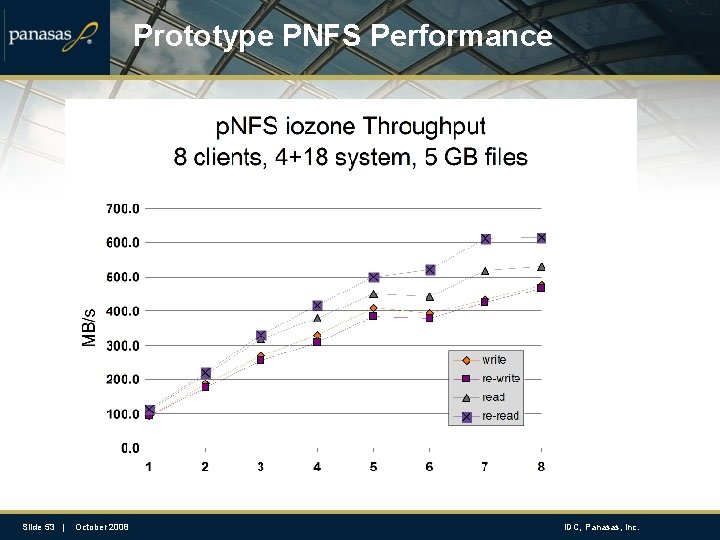

Prototype pNFS Performance

Prototype pNFS Performance

Is pNFS Enough? A standard for out-of-band metadata: a great start to avoid the classic server bottleneck; NFS has already relaxed some semantics to favor performance, but there are certainly some workloads that will still hurt. A standard framework for clients of different storage back ends: files (Sun, NetApp, IBM/GPFS, GFS2); objects (Panasas, Sun?); blocks (EMC, LSI); PVFS2; your project… (e.g., dcache.org).

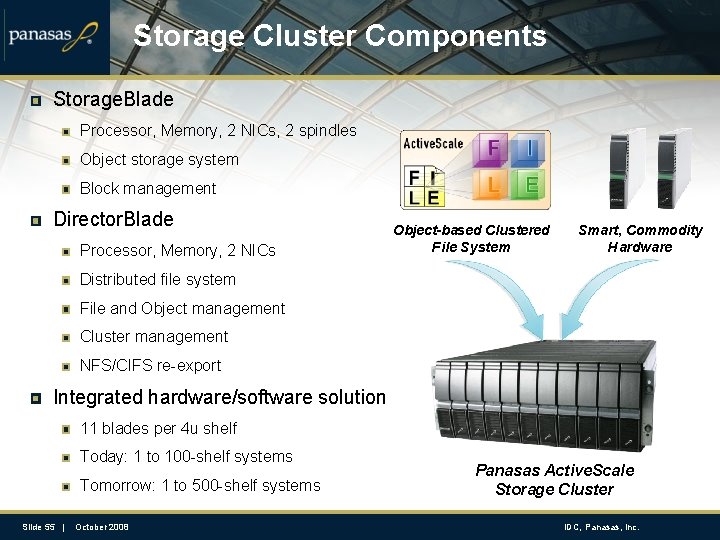

Storage Cluster Components (Panasas ActiveScale Storage Cluster). StorageBlade: processor, memory, 2 NICs, 2 spindles; object storage system; block management. DirectorBlade: processor, memory, 2 NICs; distributed file system; file and object management; cluster management; NFS/CIFS re-export. An object-based clustered file system on smart, commodity hardware. Integrated hardware/software solution: 11 blades per 4U shelf. Today: 1 to 100-shelf systems. Tomorrow: 1 to 500-shelf systems.

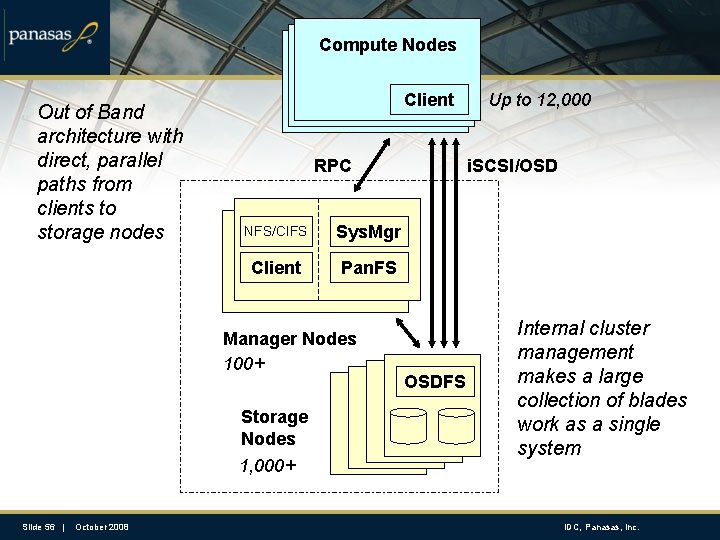

Out-of-band architecture with direct, parallel paths from clients to storage nodes. (Figure labels: compute nodes, up to 12,000, with clients; RPC; NFS/CIFS, SysMgr, and PanFS on the manager nodes, 100+; iSCSI/OSD; OSDFS on the storage nodes, 1,000+.) Internal cluster management makes a large collection of blades work as a single system.

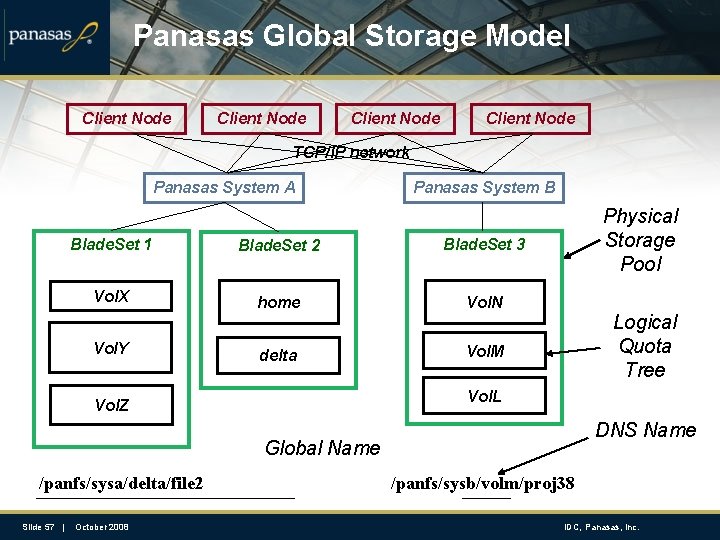

Panasas Global Storage Model. A client node reaches Panasas System A and Panasas System B over a TCP/IP network. Physical storage pools (BladeSet 1, BladeSet 2, BladeSet 3) hold logical quota trees (volumes such as VolX, home, VolN, delta, VolM, VolY, VolL, VolZ). Labels: DNS Name; Global Name, e.g., /panfs/sysa/delta/file2 and /panfs/sysb/volm/proj38.
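
Reading the two example global names, the layout appears to be /panfs/<system>/<volume>/<path>. That interpretation is an assumption drawn from the examples, not a documented rule. Under it, splitting a global name is a few lines; `PanfsLocation` and `resolve` are hypothetical names.

```python
from pathlib import PurePosixPath
from typing import NamedTuple


class PanfsLocation(NamedTuple):
    system: str    # assumed: name of the Panasas system (resolved via DNS)
    volume: str    # assumed: volume (logical quota tree) inside that system
    relative: str  # path inside the volume


def resolve(global_name: str) -> PanfsLocation:
    """Split a /panfs/<system>/<volume>/... global name into its parts."""
    parts = PurePosixPath(global_name).parts  # e.g. ('/', 'panfs', 'sysa', 'delta', 'file2')
    if len(parts) < 4 or parts[:2] != ("/", "panfs"):
        raise ValueError(f"not a /panfs global name: {global_name}")
    system, volume, *rest = parts[2:]
    return PanfsLocation(system, volume, "/".join(rest))
```

Under this reading, `resolve("/panfs/sysb/volm/proj38")` names system `sysb`, volume `volm`, and path `proj38`, so one client namespace can span several independent Panasas systems.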
