Oxford University Particle Physics Site Report

Pete Gronbech, Systems Manager and SouthGrid Technical Co-ordinator

11th Oct 2005, HEPiX SLAC - Oxford Site Report

Physics Department Computing Services

- Physics department restructuring: the number of staff involved in system management has been reduced by one, and we are still trying to fill another post.
- E-mail hubs
  - A lot of work done to simplify the system and reduce manpower requirements. Little effort has been available for anti-spam. Increased use of the Exchange servers.
- Windows Terminal Servers
  - Still a popular service. More use of remote access to users' own desktops (XP only).
- Web / Database
  - A lot of work supporting administration and teaching.
- Exchange Servers
  - Increased the size of the information store disks from 73 GB to 300 GB. One major problem with the failure of a disk, which was solved by reloading overnight.
- Windows Front End server (WINFE)
  - Access to the Windows file system via SCP, SFTP or web browser
  - Access to the Exchange server (web and Outlook)
  - Access to address lists (LDAP) for email and telephone
  - VPN service

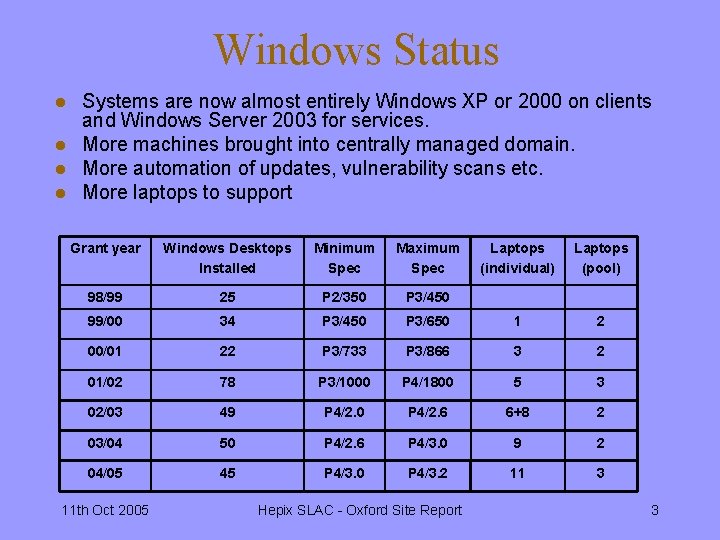

Windows Status

- Systems are now almost entirely Windows XP or 2000 on clients and Windows Server 2003 for services.
- More machines brought into the centrally managed domain. More automation of updates, vulnerability scans etc. More laptops to support.

| Grant year | Windows desktops installed | Minimum spec | Maximum spec | Laptops (individual) | Laptops (pool) |
|---|---|---|---|---|---|
| 98/99 | 25 | P2/350 | P3/450 | | |
| 99/00 | 34 | P3/450 | P3/650 | 1 | 2 |
| 00/01 | 22 | P3/733 | P3/866 | 3 | 2 |
| 01/02 | 78 | P3/1000 | P4/1800 | 5 | 3 |
| 02/03 | 49 | P4/2.0 | P4/2.6 | 6+8 | 2 |
| 03/04 | 50 | P4/2.6 | P4/3.0 | 9 | 2 |
| 04/05 | 45 | P4/3.0 | P4/3.2 | 11 | 3 |

Specs are given as processor/clock speed (MHz for the P2 and P3 entries and for P4/1800, GHz for the other P4 entries).

Software Licensing

- Costs are increasing each year.
- Have continued the NAG deal (libraries only).
- New deal for Intel compilers, run through the OSC group.
- Also deals for LabVIEW, Mathematica, Maple and IDL, plus system management tools and software for imaging, backup, anti-spyware etc.
- (MS operating systems and Office are covered by the Campus Select agreement.)

Network

- Gigabit connection to campus operational since July 2005. Several technical problems with the link delayed this by over half a year.
- Gigabit firewall installed. We purchased a commercial unit to minimise the manpower required for development and maintenance: a Juniper ISG 1000 running NetScreen. The firewall also supports NAT and VPN services, which is allowing us to consolidate and simplify the network services. Moving to the firewall's NAT has solved a number of problems we were having previously, including unreliable videoconferencing connections.
- Physics-wide wireless network. Installed in the DWB public rooms, Martin Wood and Theory. We will install the same in AOPP. The new firewall provides routing and security for this network.

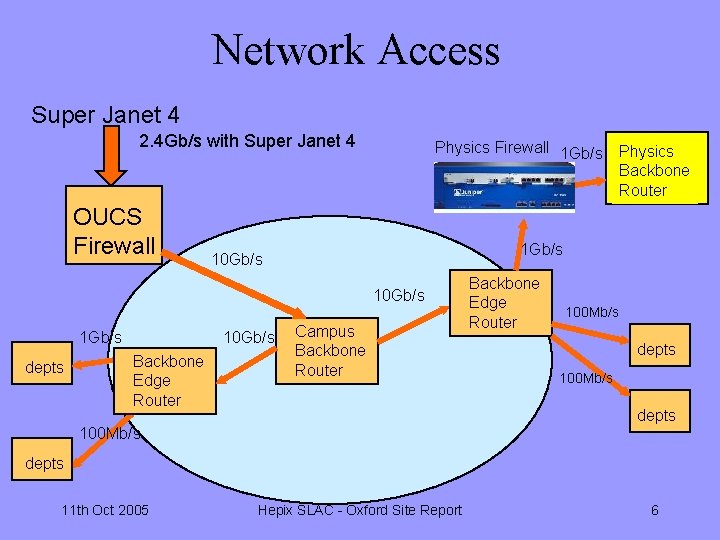

Network Access (diagram: SuperJanet 4 connection at 2.4 Gb/s; OUCS firewall and Physics firewall; backbone edge routers, campus backbone router and Physics backbone router; 10 Gb/s and 1 Gb/s links, with departments connected at 1 Gb/s or 100 Mb/s)

Network Security

- Constantly under threat from vulnerability scans, worms and viruses. We are attacking the problem in several ways:
  - Boundary firewalls (but these don't solve the problem entirely, as people bring infections in on laptops) – new firewall
  - Keeping operating systems patched and properly configured – new Windows update server
  - Antivirus on all systems – more use of Sophos, but some problems
  - Spyware detection – anti-spyware software running on all centrally managed systems
  - Segmentation of the network into trusted and untrusted sections – new firewall
- Strategy
  - Centrally manage as many machines as possible to ensure they are up to date and secure – most Windows machines moved into the domain
  - Use the Network Address Translation (NAT) service to separate centrally managed and 'untrusted' systems into different networks – new firewall plus new Virtual LANs
  - Continue to lock down systems by invoking network policies. The client firewall in Windows XP SP2 is very useful for excluding network-based attacks – centralised client firewall policies
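
The segmentation strategy above can be sketched as a simple placement and filtering policy. This is a minimal illustration only: it assumes two hypothetical private (RFC 1918) subnets sitting behind the NAT firewall, and the address ranges, function names and the rule that untrusted hosts may not open connections to trusted ones are all assumptions, not the department's actual configuration.

```python
import ipaddress

# HYPOTHETICAL address plan: illustrative private ranges behind the NAT firewall,
# not the department's real VLAN numbering.
TRUSTED_VLAN = ipaddress.ip_network("10.10.0.0/16")    # centrally managed domain machines
UNTRUSTED_VLAN = ipaddress.ip_network("10.20.0.0/16")  # laptops and unmanaged systems


def choose_vlan(in_domain: bool, patched: bool) -> ipaddress.IPv4Network:
    """Put a host on the trusted VLAN only if it is centrally managed and up to date."""
    return TRUSTED_VLAN if (in_domain and patched) else UNTRUSTED_VLAN


def classify(address: str) -> str:
    """Report which segment an address belongs to."""
    ip = ipaddress.ip_address(address)
    if ip in TRUSTED_VLAN:
        return "trusted"
    if ip in UNTRUSTED_VLAN:
        return "untrusted"
    return "external"


def allowed(src: str, dst: str) -> bool:
    """Assumed policy: untrusted hosts may not initiate connections to trusted hosts."""
    return not (classify(src) == "untrusted" and classify(dst) == "trusted")


if __name__ == "__main__":
    print(choose_vlan(in_domain=False, patched=True))  # a visitor's laptop
    print(allowed("10.20.1.5", "10.10.0.7"))            # untrusted -> trusted
    print(allowed("10.10.0.7", "10.20.1.5"))            # trusted -> untrusted
```

Keeping the placement rule (`choose_vlan`) separate from the traffic rule (`allowed`) mirrors the two halves of the strategy: central management decides which network a machine joins, and the firewall enforces what each network may reach.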

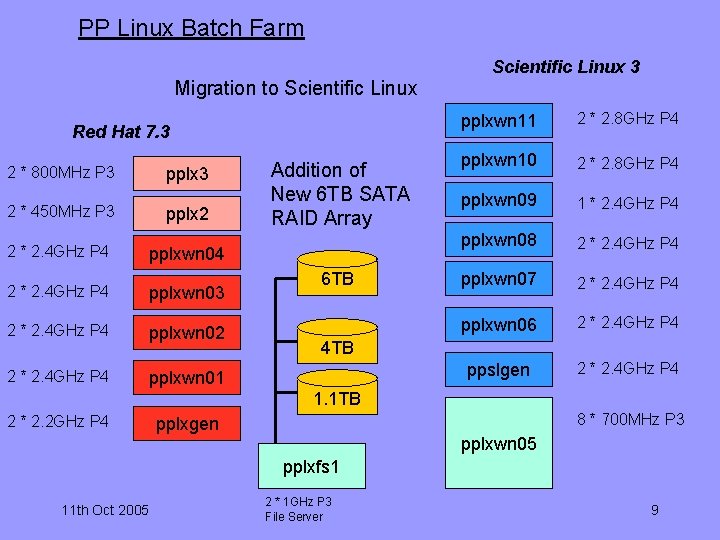

Particle Physics Linux

- Aim to provide a general-purpose Linux-based system for code development and testing and other Linux-based applications.
- A new Unix admin (Rosario Esposito) has joined us, so we now have more effort to put into improving this system.
- New main server installed (ppslgen) running Scientific Linux (SL3). File server upgraded to SL3 and a 6 TB disk array added. Two dual-processor worker nodes reclaimed from the ATLAS barrel assembly and connected as SL3 worker nodes. RH 7.3 worker nodes are being migrated to SL3.
- ppslgen and the worker nodes form a Mosix cluster, which we hope will provide a more scalable interactive service. These also support conventional batch queues.
- Some performance problems with GNOME on SL3 when accessed from Exceed. Evaluating an alternative to Exceed (NX), which doesn't exhibit this problem (and also has better integrated SSL).

PP Linux Batch Farm: Migration to Scientific Linux (diagram of the farm: Red Hat 7.3 nodes pplx2, pplx3 and pplxwn01 to pplxwn04; Scientific Linux 3 nodes ppslgen and pplxwn06 to pplxwn11; plus pplxgen, pplxwn05 and the pplxfs1 file server; addition of a new 6 TB SATA RAID array)

SouthGrid Member Institutions

- Oxford
- RAL PPD
- Cambridge
- Birmingham
- Bristol
- HP-Bristol
- Warwick

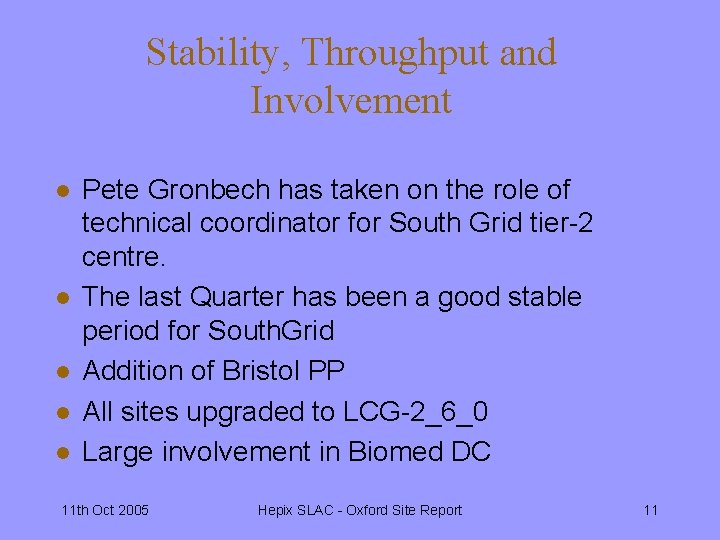

Stability, Throughput and Involvement

- Pete Gronbech has taken on the role of technical co-ordinator for the SouthGrid Tier-2 centre.
- The last quarter has been a good, stable period for SouthGrid:
  - Addition of Bristol PP
  - All sites upgraded to LCG-2_6_0
  - Large involvement in the Biomed data challenge (DC)

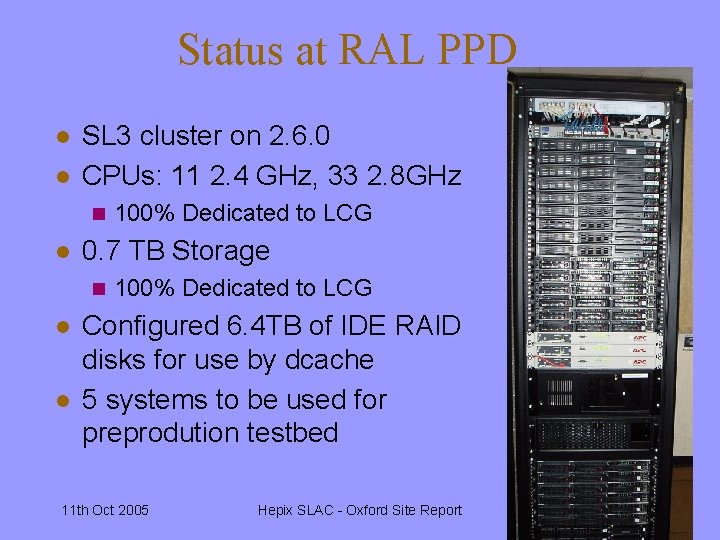

Status at RAL PPD

- SL3 cluster on LCG 2.6.0
- CPUs: 11 × 2.4 GHz, 33 × 2.8 GHz
- 0.7 TB storage
- 100% dedicated to LCG
- Configured 6.4 TB of IDE RAID disks for use by dCache
- 5 systems to be used for a pre-production testbed

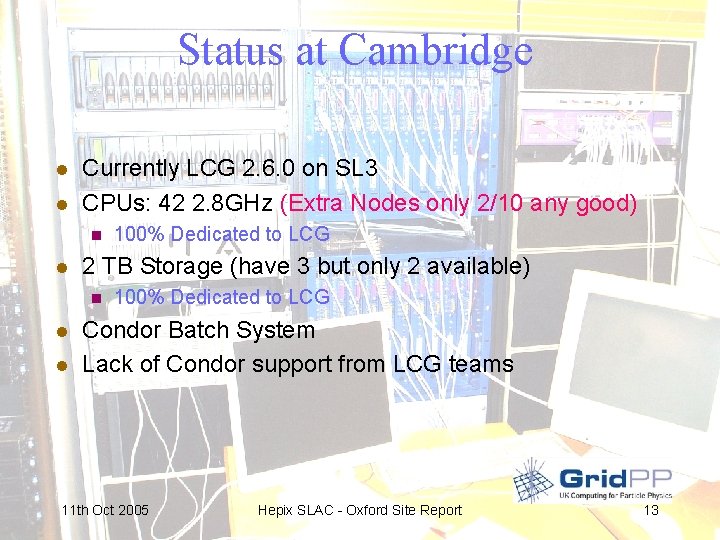

Status at Cambridge

- Currently LCG 2.6.0 on SL3
- CPUs: 42 × 2.8 GHz (of the extra nodes, only 2 out of 10 are any good)
- 2 TB storage (have 3 TB, but only 2 TB available)
- 100% dedicated to LCG
- Condor batch system
- Lack of Condor support from the LCG teams

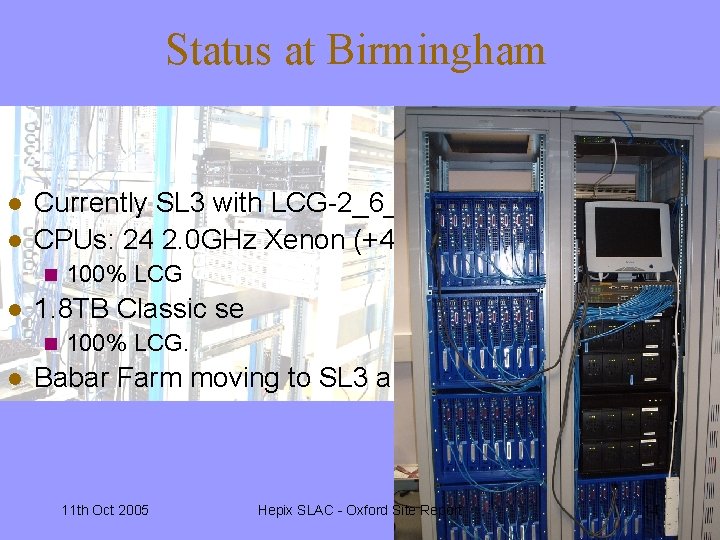

Status at Birmingham

- Currently SL3 with LCG-2_6_0
- CPUs: 24 × 2.0 GHz Xeon (+48 local nodes which could …
- 1.8 TB classic SE
- 100% LCG
- BaBar farm moving to SL3, and Bristol integrated but no …

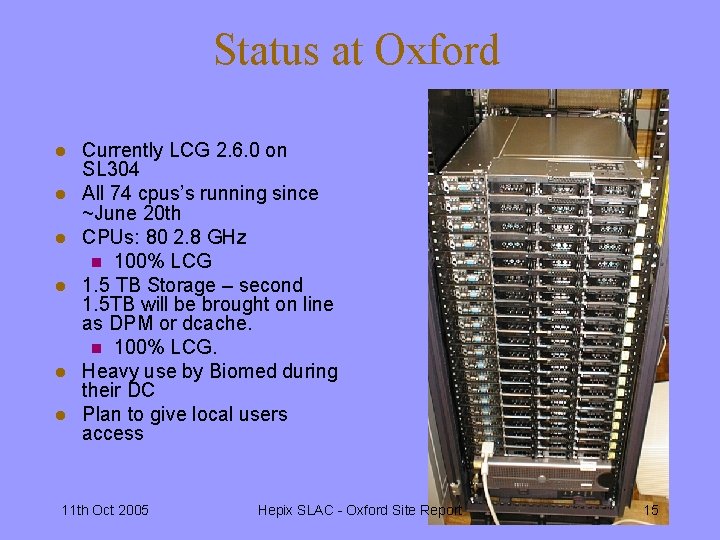

Status at Oxford

- Currently LCG 2.6.0 on SL 3.0.4
- All 74 CPUs running since ~20th June
- CPUs: 80 × 2.8 GHz
  - 100% LCG
- 1.5 TB storage – a second 1.5 TB will be brought online as DPM or dCache
  - 100% LCG
- Heavy use by Biomed during their DC
- Plan to give local users access

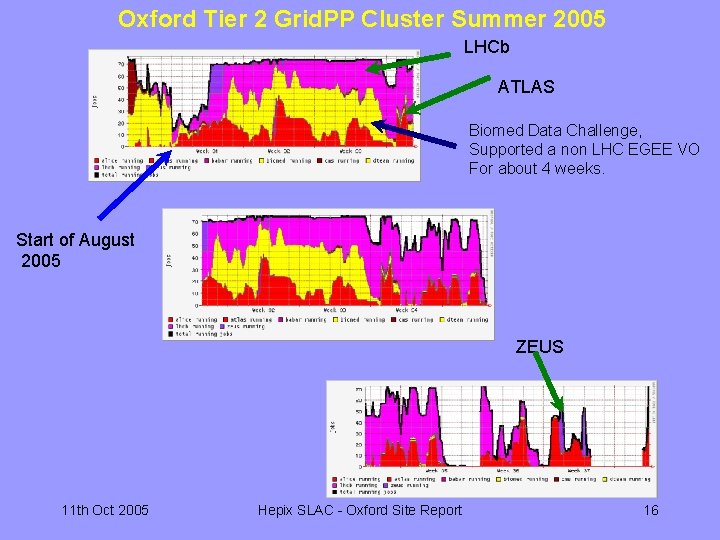

Oxford Tier-2 GridPP Cluster, Summer 2005 (usage chart showing LHCb, ATLAS, ZEUS and Biomed; the Biomed data challenge, a non-LHC EGEE VO, was supported for about 4 weeks from the start of August 2005)

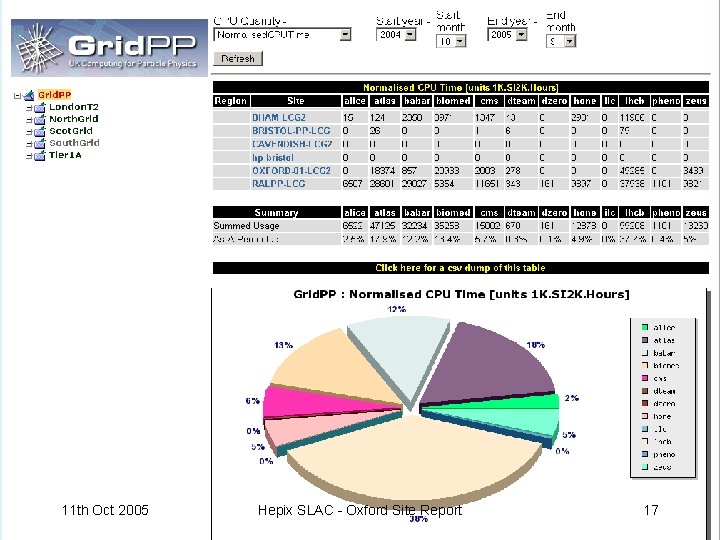


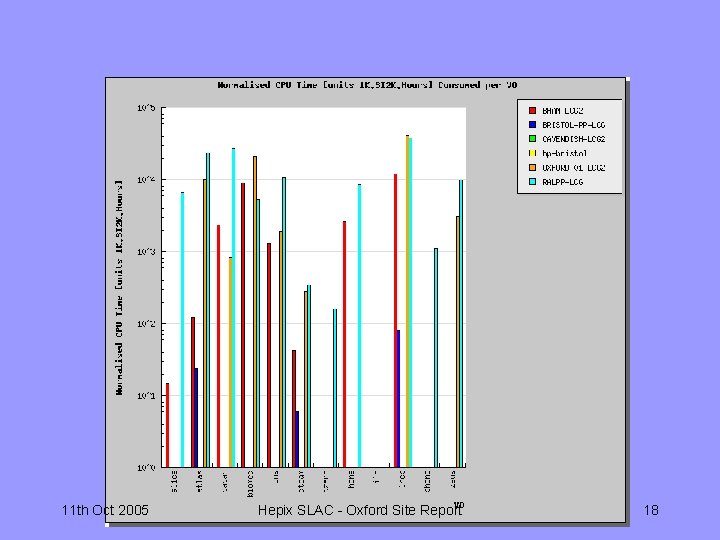


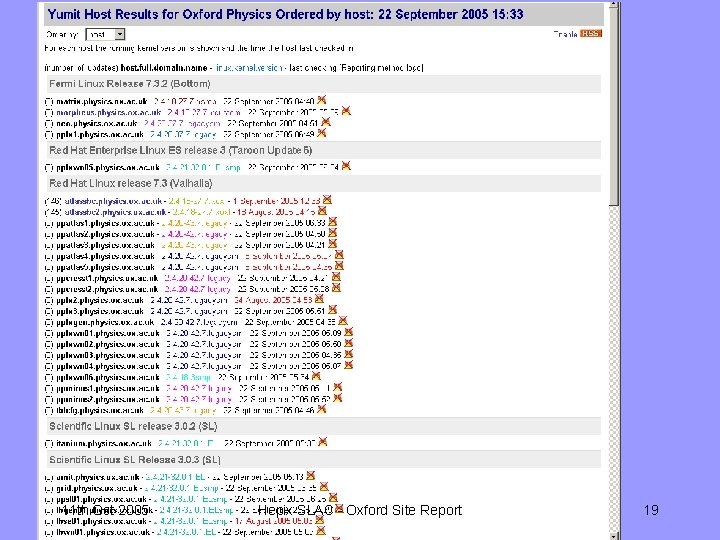


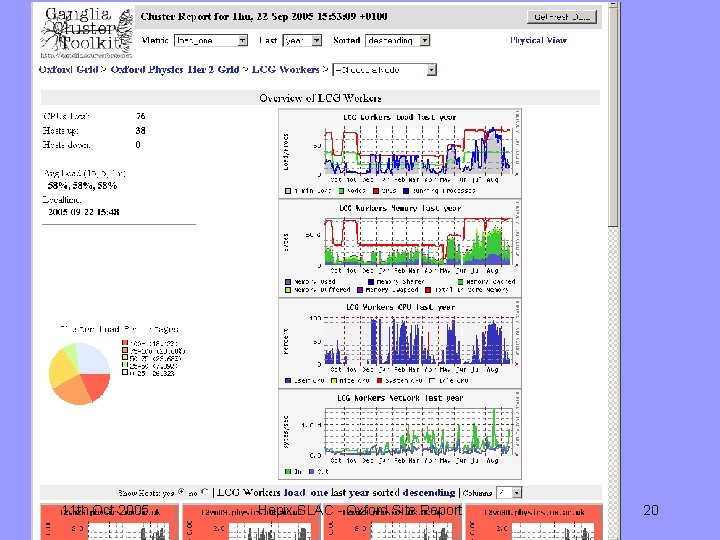


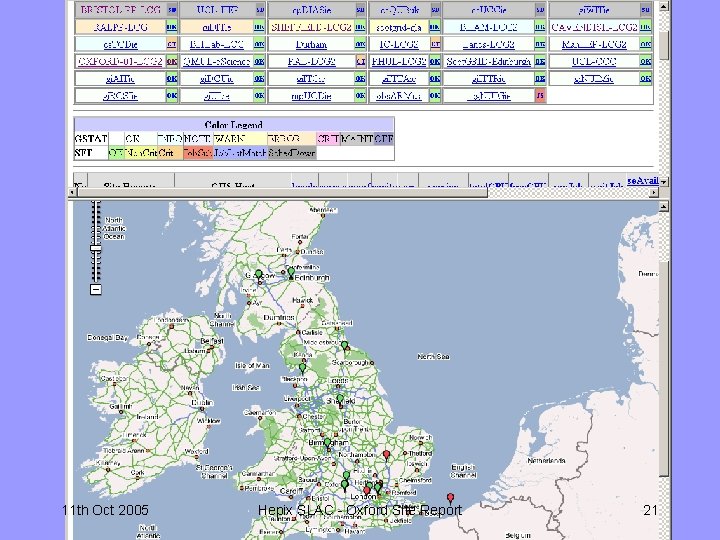


Oxford Computer Room

- Modern processors take a lot of power and generate a lot of heat. We've had many problems with air-conditioning units and a power trip.
- We need a new, properly designed and constructed computer room to deal with increasing requirements.
- The design was worked on locally, and the Design Office has checked the cooling and air flow.
- The plan is to use two of the old target rooms on level 1, one for Physics and one for the new Oxford Supercomputer (800 nodes). Requirements call for power and cooling of between 0.5 and 1 MW.
- SRIF funding has been secured, but this now means it's all in the hands of the University's Estates department. Now unlikely to be ready before next summer.
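
Because essentially all the electrical power drawn by the racks ends up as heat, the cooling plant must remove roughly the same load. The sketch below converts the 0.5 to 1 MW figure quoted above into common cooling units. It assumes the whole figure is heat to be removed and ignores the power drawn by the cooling plant itself; the function name is illustrative.

```python
# Conversion factors (standard HVAC definitions):
#   1 ton of refrigeration = 12,000 BTU/h ≈ 3.517 kW
#   1 kW ≈ 3412.14 BTU/h
KW_PER_TON_OF_REFRIGERATION = 3.517
BTU_PER_HOUR_PER_KW = 3412.14


def cooling_requirement(power_kw: float) -> dict[str, float]:
    """Express an IT power load as the equivalent heat load to be removed.

    Assumes all electrical power becomes heat in the room (hypothetical helper).
    """
    return {
        "heat_kw": power_kw,
        "btu_per_hour": power_kw * BTU_PER_HOUR_PER_KW,
        "tons_of_refrigeration": power_kw / KW_PER_TON_OF_REFRIGERATION,
    }


if __name__ == "__main__":
    for load_kw in (500.0, 1000.0):  # the 0.5 MW and 1 MW bounds
        req = cooling_requirement(load_kw)
        print(f"{load_kw:.0f} kW -> {req['btu_per_hour']:,.0f} BTU/h, "
              f"{req['tons_of_refrigeration']:.0f} tons of refrigeration")
```

At the bounds this gives roughly 142 and 284 tons of refrigeration, which is why the room needs purpose-built cooling rather than additional standalone air-conditioning units.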

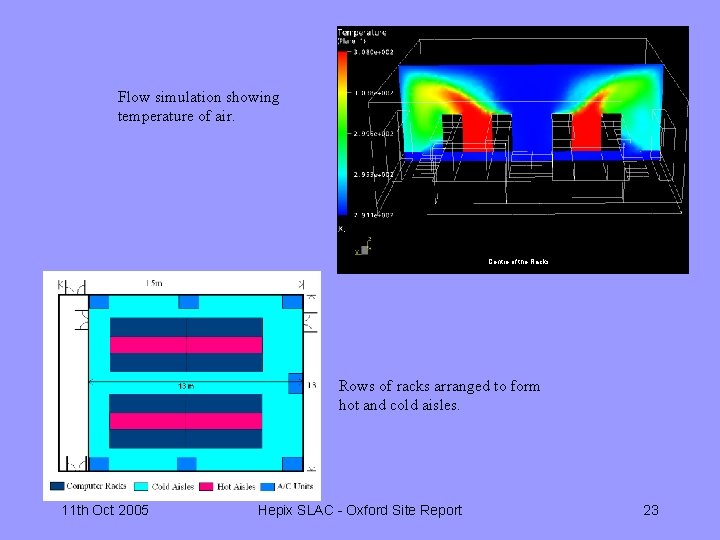

Flow simulation showing the air temperature at the centre of the racks. Rows of racks are arranged to form hot and cold aisles.

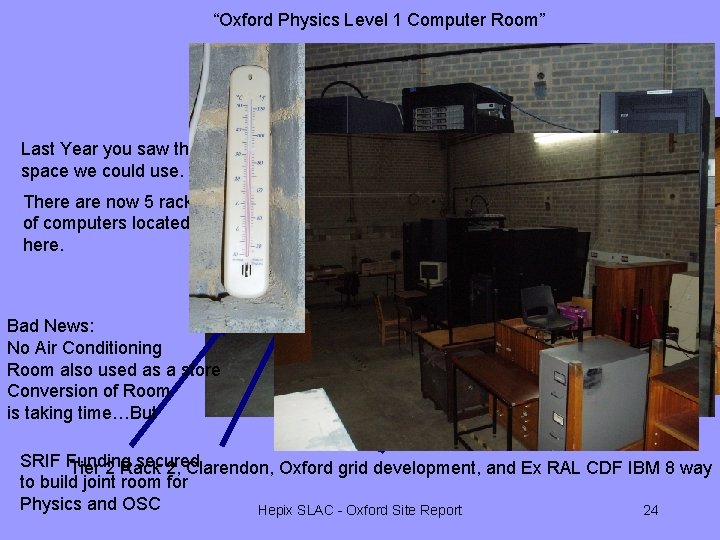

Oxford Physics Level 1 Computer Room

- Last year you saw the space we could use. There are now 5 racks of computers located here: Tier-2 rack 2, Clarendon, Oxford grid development, and the ex-RAL CDF IBM 8-way.
- Bad news: no air conditioning, and the room is also used as a store.
- Conversion of the room is taking time… but SRIF funding has been secured to build a joint room for Physics and OSC.

Future

- Intrusion detection system for increased network security.
- Complete migration of desktops to XP SP2 and MS Office 2003.
- Improve support for laptops – still more difficult to manage than desktops.
- Once migration of central services to SL is complete, we will be developing a Linux desktop clone.
- Investigating how best to integrate Mac OS X with the existing infrastructure.
- Scale up ppslgen in line with demand. Money has been set aside for more worker nodes and a further 12+ TB of storage.
- More worker nodes for the Tier-2 service.
- Look to use university services where appropriate. Perhaps the new supercomputer?
