Overview of the Data Mining Process Data Mining

- Slides: 39

Overview of the Data Mining Process
Data Mining for Business Analytics
Shmueli, Patel & Bruce

Core Ideas in Data Mining
- Classification
- Prediction
- Association Rules
- Predictive Analytics
- Data Reduction and Dimension Reduction
- Data Exploration and Visualization
- Supervised and Unsupervised Learning

Supervised Learning
- Goal: Predict a single “target” or “outcome” variable
- Train on data where the target value is known
- Score data where the target value is not known
- Methods: Classification and Prediction

Unsupervised Learning
- Goal: Segment data into meaningful segments; detect patterns
- There is no target (outcome) variable to predict or classify
- Methods: Association rules, data reduction & exploration, visualization

Supervised: Classification
- Goal: Predict categorical target (outcome) variable
- Examples: Purchase/no purchase, fraud/no fraud, creditworthy/not creditworthy…
- Each row is a case (customer, tax return, applicant)
- Each column is a variable
- Target variable is often binary (yes/no)

Supervised: Prediction
- Goal: Predict numerical target (outcome) variable
- Examples: sales, revenue, performance
- As in classification:
  - Each row is a case (customer, tax return, applicant)
  - Each column is a variable
- Taken together, classification and prediction constitute “predictive analytics”

Unsupervised: Association Rules
- Goal: Produce rules that define “what goes with what”
- Example: “If X was purchased, Y was also purchased”
- Rows are transactions
- Used in recommender systems: “Our records show you bought X, you may also like Y”
- Also called “affinity analysis”
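To make the “if X was purchased, Y was also purchased” idea concrete, here is a minimal sketch that measures how often Y appears among the transactions containing X (a quantity commonly called the rule's confidence). The function name `rule_strength` and the toy transactions are hypothetical, invented only for illustration.

```python
def rule_strength(transactions, x, y):
    """Share of transactions containing x that also contain y."""
    with_x = [t for t in transactions if x in t]
    if not with_x:
        return 0.0  # x never purchased: the rule has no support
    return sum(1 for t in with_x if y in t) / len(with_x)

# Hypothetical toy data: each row is one transaction (a set of items)
transactions = [
    {"bread", "milk"},
    {"bread", "butter"},
    {"milk"},
    {"bread", "milk", "butter"},
    {"butter"},
]

bread_to_milk = rule_strength(transactions, "bread", "milk")
```

Here bread appears in three transactions and milk accompanies it in two of them, so the rule “bread, then milk” holds in two thirds of the relevant cases.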

Unsupervised: Data Reduction
- Distillation of complex/large data into simpler/smaller data
- Reducing the number of variables/columns (e.g., principal components)
- Reducing the number of records/rows (e.g., clustering)

Unsupervised: Data Visualization
- Graphs and plots of data
- Histograms, boxplots, bar charts, scatterplots
- Especially useful to examine relationships between pairs of variables

Data Exploration
- Data sets are typically large, complex & messy
- Need to review the data to help refine the task
- Use techniques of Reduction and Visualization

The Process of Data Mining

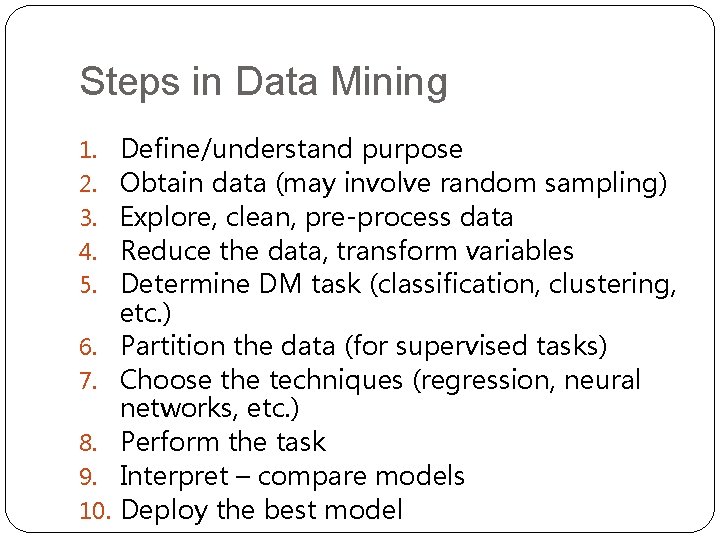

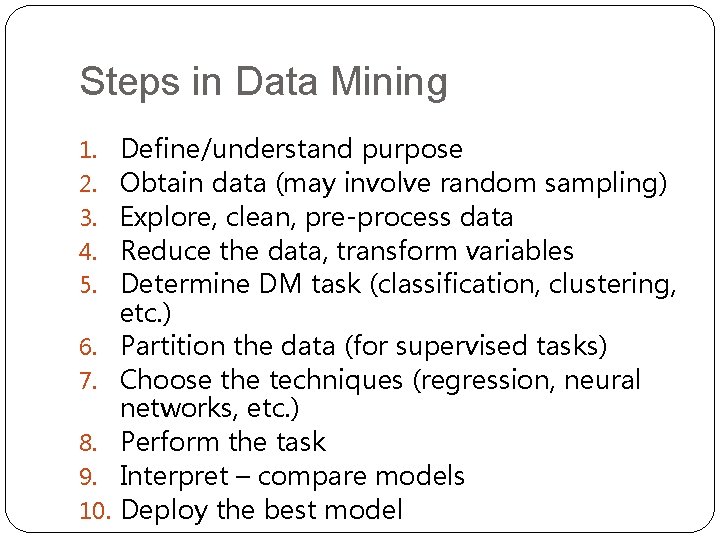

Steps in Data Mining
1. Define/understand purpose
2. Obtain data (may involve random sampling)
3. Explore, clean, pre-process data
4. Reduce the data, transform variables
5. Determine DM task (classification, clustering, etc.)
6. Partition the data (for supervised tasks)
7. Choose the techniques (regression, neural networks, etc.)
8. Perform the task
9. Interpret: compare models
10. Deploy the best model

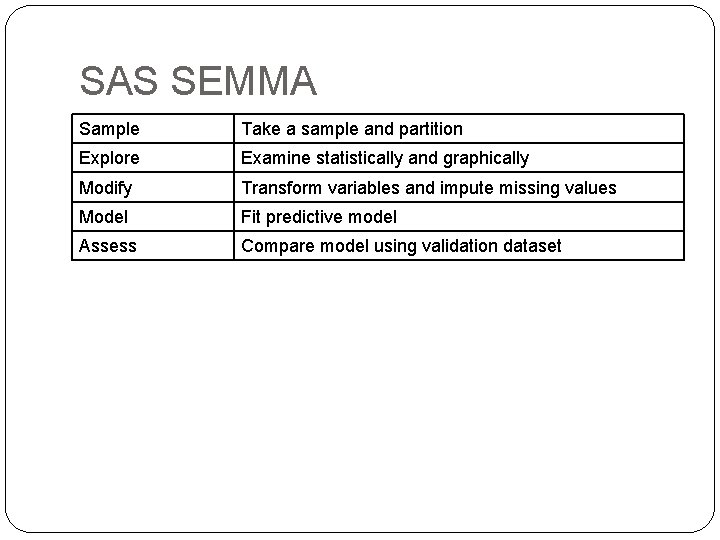

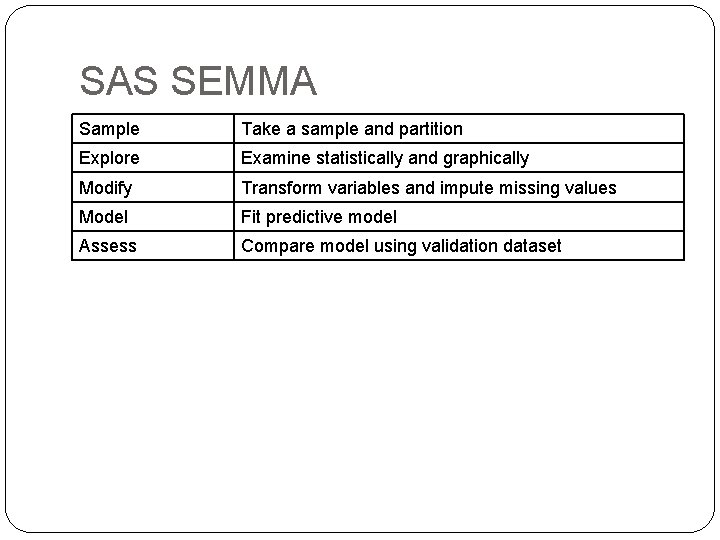

SAS SEMMA
- Sample: Take a sample and partition
- Explore: Examine statistically and graphically
- Modify: Transform variables and impute missing values
- Model: Fit predictive model
- Assess: Compare models using validation dataset

Obtaining Data: Sampling
- Data mining typically deals with huge databases
- Algorithms and models are typically applied to a sample from a database, to produce statistically valid results
- XLMiner, e.g., limits the “training” partition to 10,000 records
- Once you develop and select a final model, you use it to “score” the observations in the larger database

Rare event oversampling
- Often the event of interest is rare
- Examples: response to mailing, fraud in taxes, …
- Sampling may yield too few “interesting” cases to effectively train a model
- A popular solution: oversample the rare cases to obtain a more balanced training set
- Later, need to adjust results for the oversampling
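One simple way to oversample is to duplicate rare cases at random until the two classes are the same size. The sketch below assumes that approach (the slides do not specify a method); `oversample_rare`, the toy `records`, and the 5% response rate are all hypothetical.

```python
import random

def oversample_rare(records, is_rare, seed=0):
    """Resample rare cases with replacement until classes are balanced."""
    rng = random.Random(seed)
    rare = [r for r in records if is_rare(r)]
    common = [r for r in records if not is_rare(r)]
    if not rare or len(rare) >= len(common):
        return list(records)  # nothing to balance
    extra = [rng.choice(rare) for _ in range(len(common) - len(rare))]
    balanced = common + rare + extra
    rng.shuffle(balanced)
    return balanced

# Hypothetical toy data: response = 1 means the customer answered the mailing
records = [{"id": i, "response": 1 if i % 20 == 0 else 0} for i in range(200)]
balanced = oversample_rare(records, lambda r: r["response"] == 1)

# Keep the original rate: it is needed later to adjust results for the oversampling
original_rate = sum(r["response"] for r in records) / len(records)
balanced_rate = sum(r["response"] for r in balanced) / len(balanced)
```

The original response rate is stored alongside the balanced sample because model outputs trained on the balanced set overstate how common the rare event is.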

Pre-processing Data

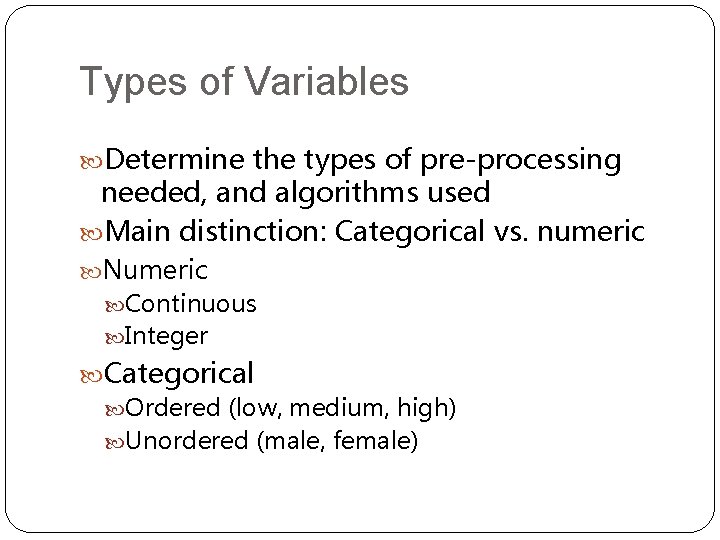

Types of Variables
- Determine the types of pre-processing needed, and algorithms used
- Main distinction: Categorical vs. numeric
- Numeric
  - Continuous
  - Integer
- Categorical
  - Ordered (low, medium, high)
  - Unordered (male, female)

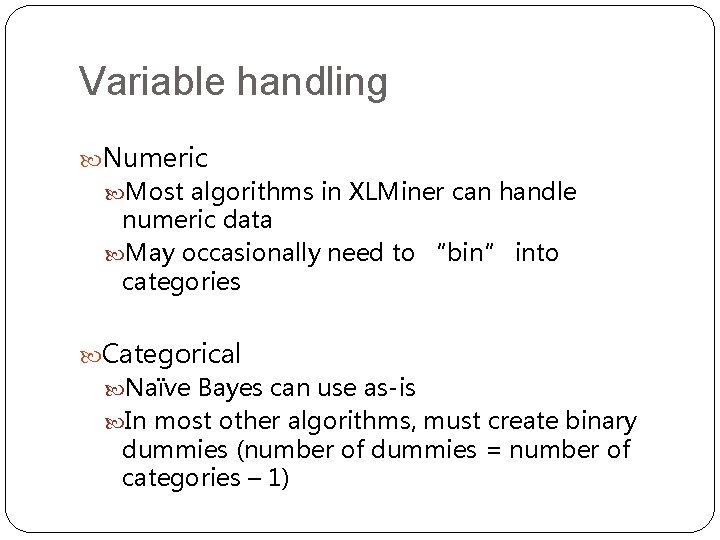

Variable handling
- Numeric
  - Most algorithms in XLMiner can handle numeric data
  - May occasionally need to “bin” into categories
- Categorical
  - Naïve Bayes can use as-is
  - In most other algorithms, must create binary dummies (number of dummies = number of categories - 1)
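The “number of categories - 1” rule can be sketched as follows: one category is treated as the reference level and gets no dummy of its own. The helper `make_dummies` is hypothetical, and picking the alphabetically first category as the reference is an arbitrary assumption; the REMODEL values come from the West Roxbury example.

```python
def make_dummies(values, prefix):
    """Return one dict of 0/1 dummies per value, dropping the first category."""
    categories = sorted(set(values))
    kept = categories[1:]  # m categories -> m - 1 dummy columns
    return [{f"{prefix}_{c}": int(v == c) for c in kept} for v in values]

remodel = ["None", "Recent", "None", "Old"]
dummies = make_dummies(remodel, "REMODEL")
```

With three categories (None, Old, Recent) only `REMODEL_Old` and `REMODEL_Recent` are created; a row with both set to 0 is a “None” record.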

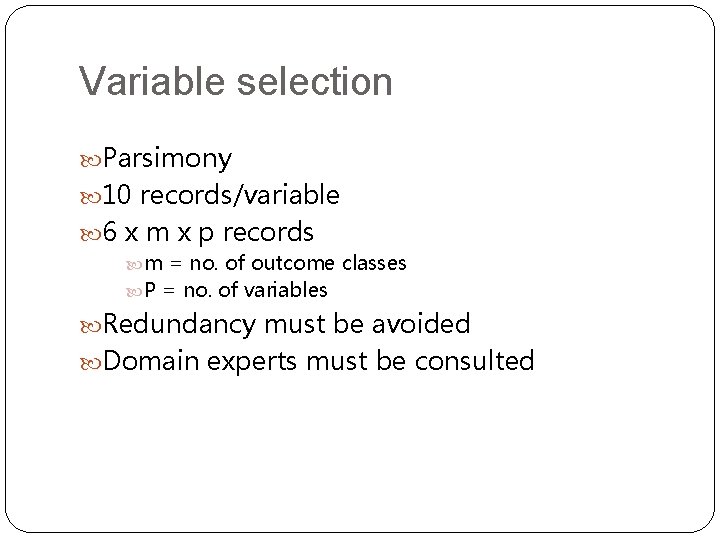

Variable selection
- Parsimony: prefer fewer variables
- Rule of thumb: 10 records per variable
- Alternative rule of thumb: 6 × m × p records, where m = number of outcome classes and p = number of variables
- Redundancy must be avoided
- Domain experts must be consulted
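As a quick arithmetic check of the two rules of thumb, the sketch below computes the minimum sample size each one suggests. The function `min_records` and the figures of 12 predictors and 2 classes are hypothetical.

```python
def min_records(num_variables, num_classes=None):
    """Minimum records suggested by the rules of thumb on this slide."""
    if num_classes is None:
        return 10 * num_variables            # 10 records per variable
    return 6 * num_classes * num_variables   # 6 x m x p

by_variables = min_records(12)               # 10 * 12
by_classes = min_records(12, num_classes=2)  # 6 * 2 * 12
```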

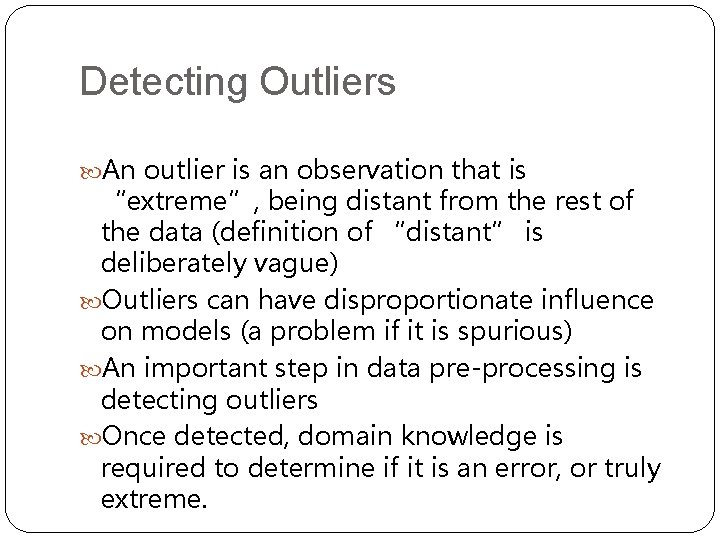

Detecting Outliers
- An outlier is an observation that is “extreme”, being distant from the rest of the data (the definition of “distant” is deliberately vague)
- Outliers can have disproportionate influence on models (a problem if the outlier is spurious)
- An important step in data pre-processing is detecting outliers
- Once an outlier is detected, domain knowledge is required to determine whether it is an error or truly extreme

Detecting Outliers (cont.)
- In some contexts, finding outliers is the purpose of the DM exercise (airport security screening). This is called “anomaly detection”.
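Since “distant” is left vague, any concrete rule is a judgment call. The sketch below assumes one common choice, flagging values more than a chosen number of standard deviations from the mean; the threshold of 2.0 and the helper `flag_outliers` are assumptions, not something the slides prescribe. The values are the LOT SQ FT column from the West Roxbury sample.

```python
from statistics import mean, stdev

def flag_outliers(values, threshold=2.0):
    """Return (index, value) pairs whose z-score magnitude exceeds threshold."""
    m, s = mean(values), stdev(values)
    return [(i, v) for i, v in enumerate(values) if abs(v - m) / s > threshold]

lot_sq_ft = [9965, 6590, 7500, 13773, 5000, 5142, 5000, 10000, 6835, 5093]
flagged = flag_outliers(lot_sq_ft)
```

A flagged value is only a candidate: domain knowledge still decides whether a large lot is a data-entry error or simply a large lot.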

Handling Missing Data
- Most algorithms will not process records with missing values. Default is to drop those records.
- Solution 1: Omission
  - If a small number of records have missing values, can omit them
  - If many records are missing values on a small set of variables, can drop those variables (or use proxies)
  - If many records have missing values, omission is not practical
- Solution 2: Imputation
  - Replace missing values with reasonable substitutes
  - Lets you keep the record and use the rest of its (non-missing) information
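The two solutions can be compared on a small example. This is a sketch under stated assumptions: missing values are represented as `None`, the median is used as the “reasonable substitute” (the slides do not name one), and the rows, column names, and helper functions are hypothetical.

```python
from statistics import median

# Hypothetical toy rows with missing values marked as None
rows = [
    {"ROOMS": 6, "LOT_SQFT": 9965},
    {"ROOMS": 10, "LOT_SQFT": None},
    {"ROOMS": 8, "LOT_SQFT": 7500},
    {"ROOMS": None, "LOT_SQFT": 13773},
]

def drop_incomplete(rows):
    """Solution 1 (omission): keep only rows with no missing values."""
    return [r for r in rows if all(v is not None for v in r.values())]

def impute_median(rows, column):
    """Solution 2 (imputation): fill missing values with the column median."""
    fill = median(r[column] for r in rows if r[column] is not None)
    return [{**r, column: fill if r[column] is None else r[column]} for r in rows]

complete = drop_incomplete(rows)
imputed = impute_median(impute_median(rows, "ROOMS"), "LOT_SQFT")
```

Omission keeps only two of the four rows here, while imputation keeps all four and preserves the non-missing value in each affected record.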

Normalizing (Standardizing) Data
- Used in some techniques when variables with the largest scales would dominate and skew results
- Puts all variables on the same scale
- Normalizing function: subtract the mean and divide by the standard deviation (used in XLMiner)
- Alternative function: scale to the range 0 to 1 by subtracting the minimum and dividing by the range
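Both normalizing functions are short enough to write out. In this sketch the function names and the `rooms` values are hypothetical, and the sample standard deviation is an assumption, since the slides do not say which form XLMiner uses.

```python
from statistics import mean, stdev

def z_score(values):
    """Subtract the mean and divide by the (sample) standard deviation."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]

def min_max(values):
    """Subtract the minimum and divide by the range, giving values in [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

rooms = [6, 10, 8, 9, 7]
rooms_z = z_score(rooms)
rooms_01 = min_max(rooms)
```

After z-scoring, the values have mean 0 and standard deviation 1; after min-max scaling, the smallest value maps to 0 and the largest to 1.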

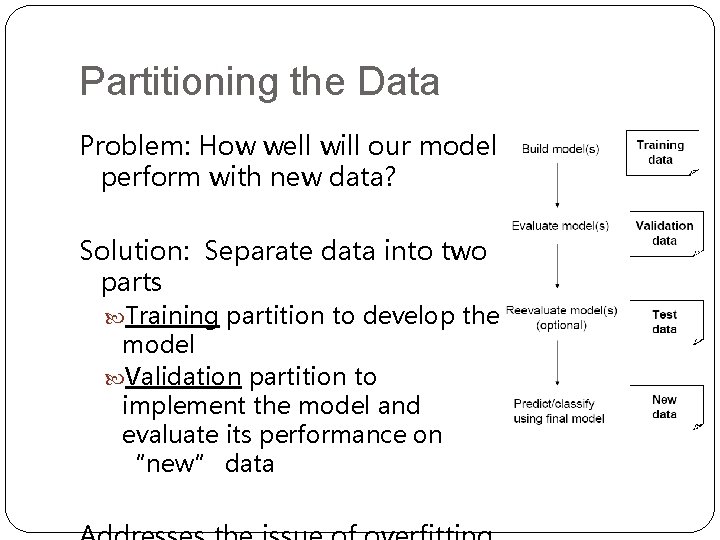

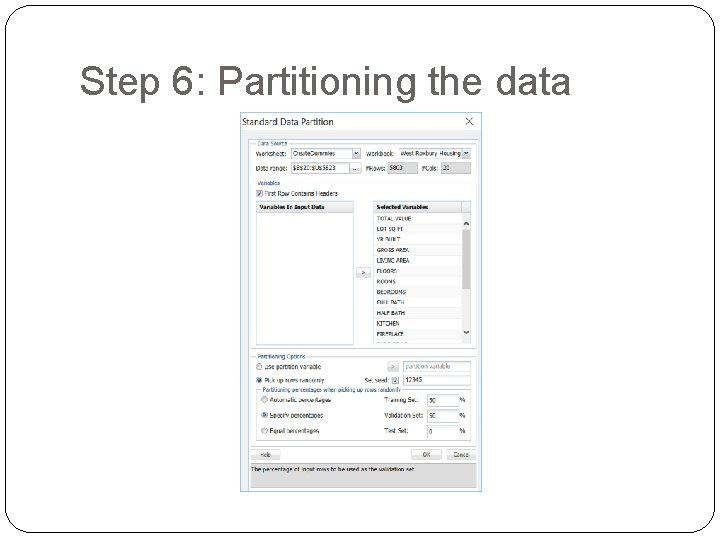

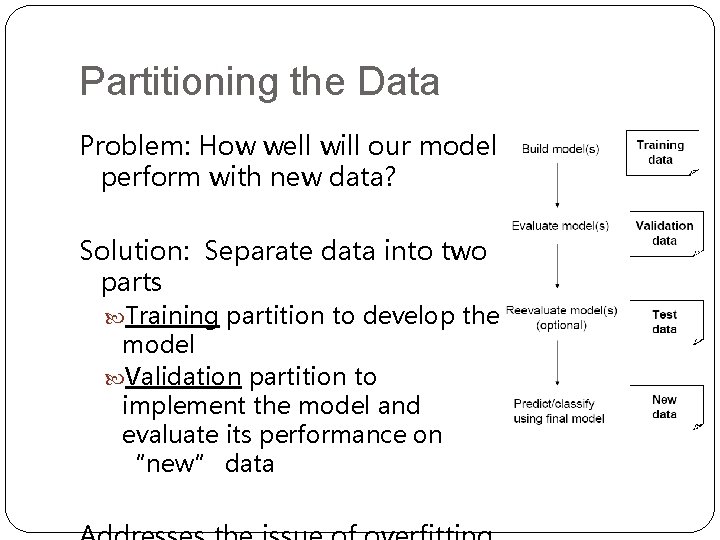

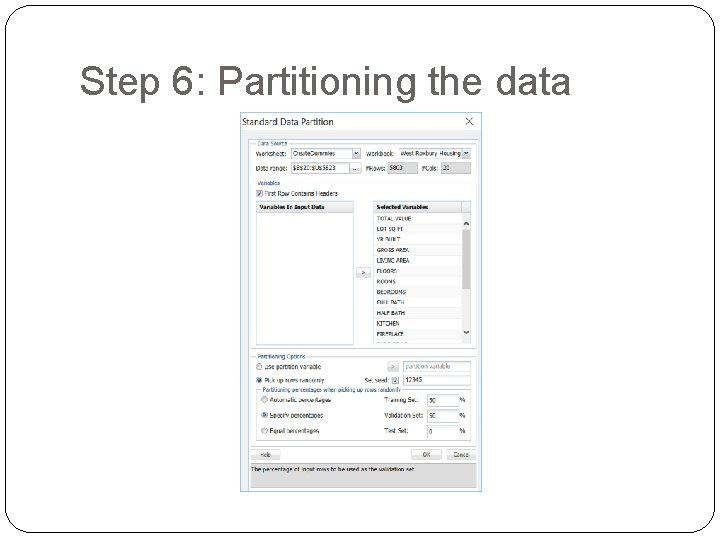

Partitioning the Data
- Problem: How well will our model perform with new data?
- Solution: Separate data into two parts
  - Training partition to develop the model
  - Validation partition to implement the model and evaluate its performance on “new” data
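A random split into training and validation partitions might look like the sketch below. The 60/40 proportion, the fixed seed, and the helper name `partition` are assumptions for illustration.

```python
import random

def partition(records, train_frac=0.6, seed=1):
    """Shuffle a copy of the records and split into (training, validation)."""
    rng = random.Random(seed)
    shuffled = list(records)
    rng.shuffle(shuffled)
    cut = int(round(train_frac * len(shuffled)))
    return shuffled[:cut], shuffled[cut:]

records = list(range(100))  # stand-in for 100 row identifiers
train, valid = partition(records)
```

Shuffling before cutting matters: if the rows are sorted (by date or by value, say), a plain head/tail split would give the model validation data that differs systematically from its training data.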

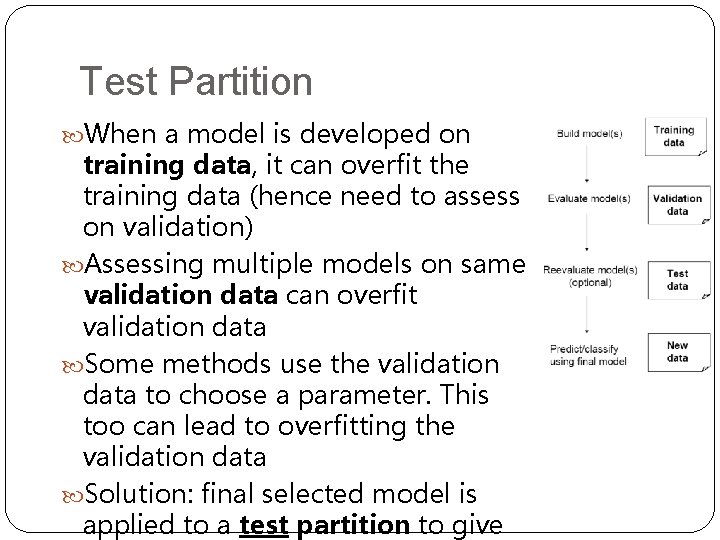

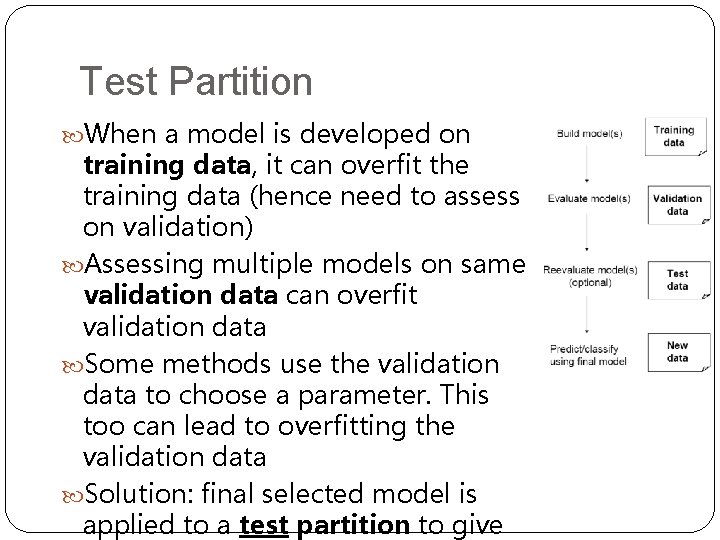

Test Partition
- When a model is developed on training data, it can overfit the training data (hence the need to assess on validation)
- Assessing multiple models on the same validation data can overfit the validation data
- Some methods use the validation data to choose a parameter. This too can lead to overfitting the validation data
- Solution: final selected model is applied to a test partition to give

Overfitting
- Statistical models can produce highly complex explanations of relationships between variables
- The “fit” to the training data may be excellent
- When used with new data, models of great complexity often perform much worse

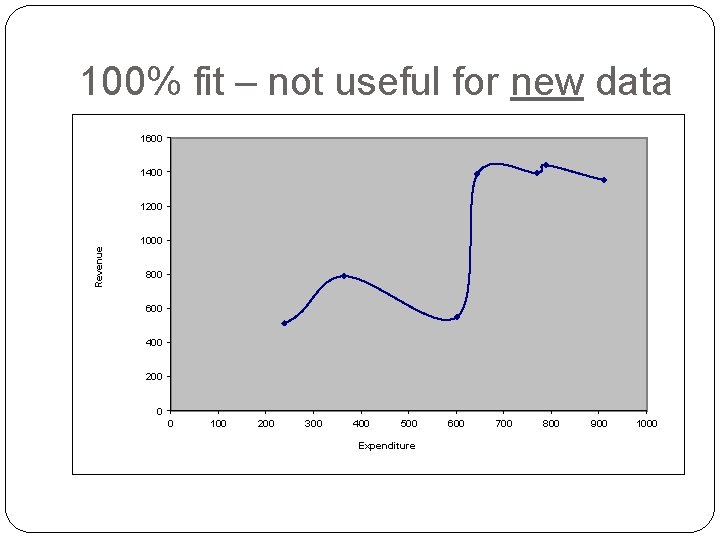

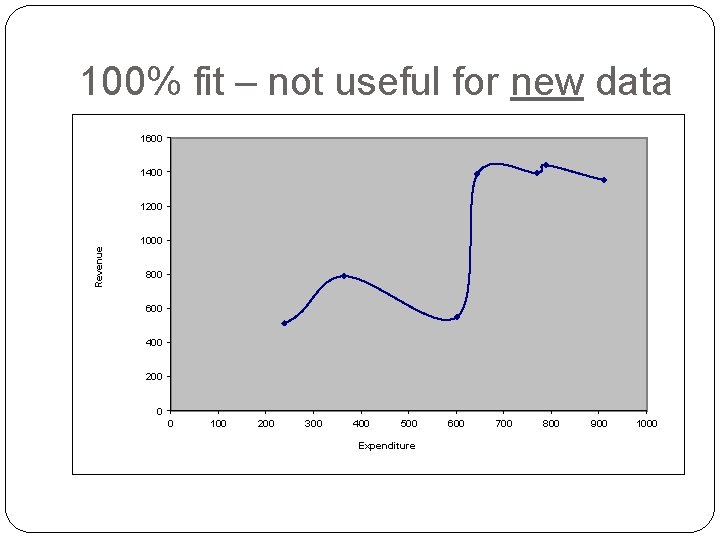

100% fit: not useful for new data (figure: Revenue plotted against Expenditure)

Overfitting (cont.)
- Causes:
  - Too many predictors
  - A model with too many parameters
  - Trying many different models
- Consequence: Deployed model will not work as well as expected with completely new data.
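The effect can be reproduced on a small scale. The sketch below fits two models to five training points: a straight line, and a degree-4 polynomial that passes through every training point exactly (a “100% fit”). All expenditure and revenue numbers are invented for illustration, and Lagrange interpolation is used only as a convenient way to build the overly flexible model.

```python
from math import sqrt

def fit_line(xs, ys):
    """Ordinary least-squares straight line."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    intercept = my - slope * mx
    return lambda x: intercept + slope * x

def fit_interpolating_polynomial(xs, ys):
    """Lagrange polynomial through every training point (zero training error)."""
    def predict(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total
    return predict

def rmse(model, xs, ys):
    """Root-mean-squared error of a model on the given points."""
    return sqrt(sum((y - model(x)) ** 2 for x, y in zip(xs, ys)) / len(xs))

# Hypothetical data: revenue is roughly 1.5 x expenditure plus noise
train_x, train_y = [100, 300, 500, 700, 900], [180, 420, 790, 1010, 1380]
valid_x, valid_y = [200, 400, 600, 800], [310, 590, 905, 1195]

line = fit_line(train_x, train_y)
poly = fit_interpolating_polynomial(train_x, train_y)

line_train, line_valid = rmse(line, train_x, train_y), rmse(line, valid_x, valid_y)
poly_train, poly_valid = rmse(poly, train_x, train_y), rmse(poly, valid_x, valid_y)
```

The polynomial has zero error on the training points but a larger error than the line on the validation points, which is the pattern the validation partition is designed to expose.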

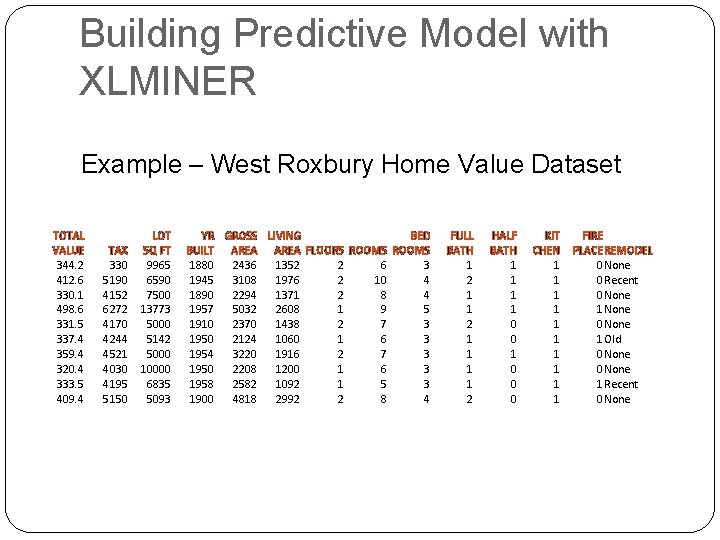

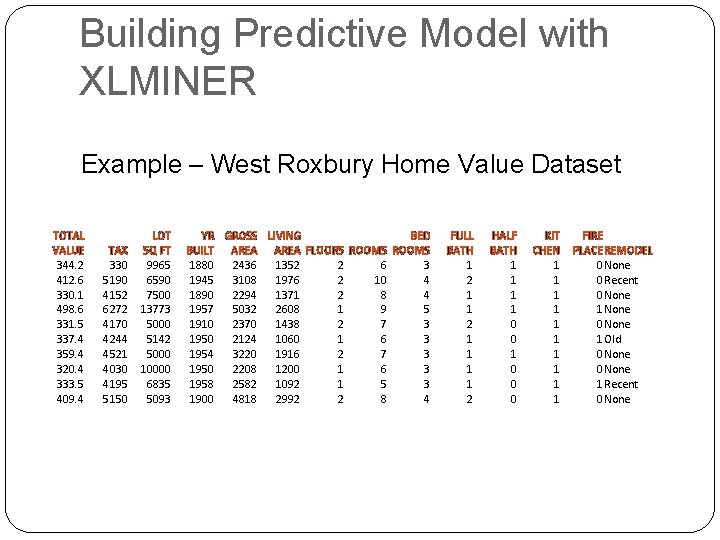

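Building Predictive Model with XLMiner
Example: West Roxbury Home Value Dataset

| TOTAL VALUE | TAX | LOT SQ FT | YR BUILT | GROSS AREA | LIVING AREA | FLOORS | ROOMS | BEDROOMS |
|---|---|---|---|---|---|---|---|---|
| 344.2 | 330 | 9965 | 1880 | 2436 | 1352 | 2 | 6 | 3 |
| 412.6 | 5190 | 6590 | 1945 | 3108 | 1976 | 2 | 10 | 4 |
| 330.1 | 4152 | 7500 | 1890 | 2294 | 1371 | 2 | 8 | 4 |
| 498.6 | 6272 | 13773 | 1957 | 5032 | 2608 | 1 | 9 | 5 |
| 331.5 | 4170 | 5000 | 1910 | 2370 | 1438 | 2 | 7 | 3 |
| 337.4 | 4244 | 5142 | 1950 | 2124 | 1060 | 1 | 6 | 3 |
| 359.4 | 4521 | 5000 | 1954 | 3220 | 1916 | 2 | 7 | 3 |
| 320.4 | 4030 | 10000 | 1950 | 2208 | 1200 | 1 | 6 | 3 |
| 333.5 | 4195 | 6835 | 1958 | 2582 | 1092 | 1 | 5 | 3 |
| 409.4 | 5150 | 5093 | 1900 | 4818 | 2992 | 2 | 8 | 4 |

Remaining columns (values incomplete in the source, so not aligned to rows):
- FULL BATH: 1 2 1 1 1 1 2
- HALF BATH: 1 1 0 0 0
- KITCHEN: 1 1 1 1 1
- FIREPLACE, REMODEL: 0 None; 0 Recent; 0 None; 1 None; 0 None; 1 Old; 0 None; 1 Recent; 0 None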

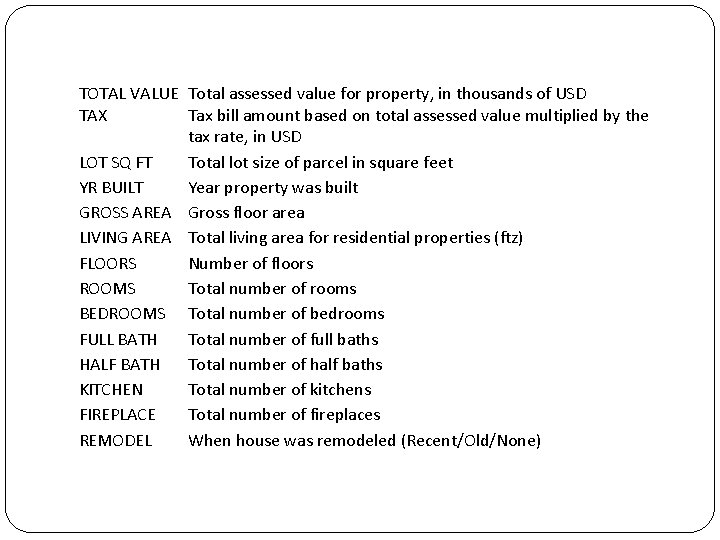

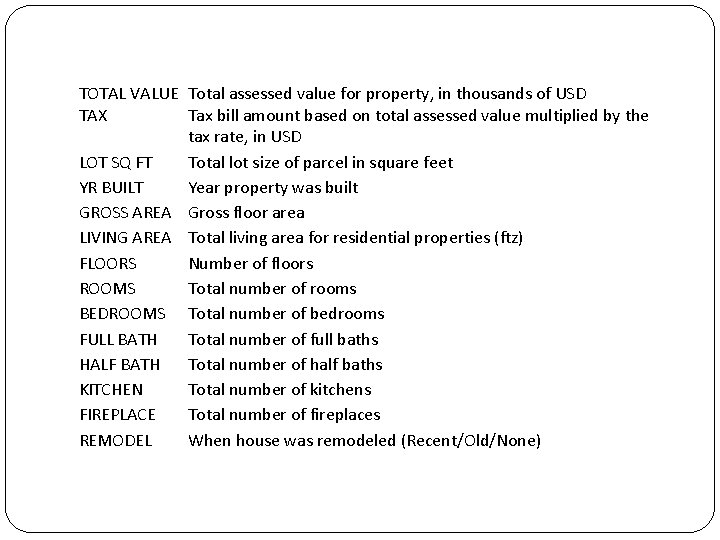

- TOTAL VALUE: Total assessed value for property, in thousands of USD
- TAX: Tax bill amount based on total assessed value multiplied by the tax rate, in USD
- LOT SQ FT: Total lot size of parcel in square feet
- YR BUILT: Year property was built
- GROSS AREA: Gross floor area
- LIVING AREA: Total living area for residential properties (ft²)
- FLOORS: Number of floors
- ROOMS: Total number of rooms
- BEDROOMS: Total number of bedrooms
- FULL BATH: Total number of full baths
- HALF BATH: Total number of half baths
- KITCHEN: Total number of kitchens
- FIREPLACE: Total number of fireplaces
- REMODEL: When house was remodeled (Recent/Old/None)

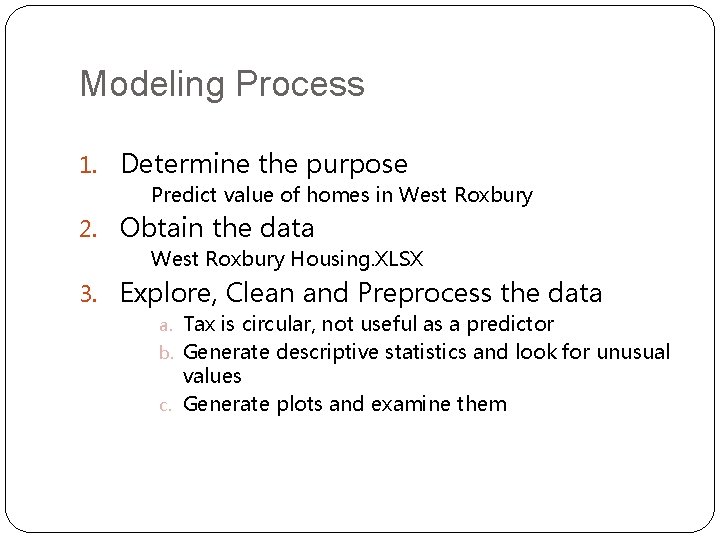

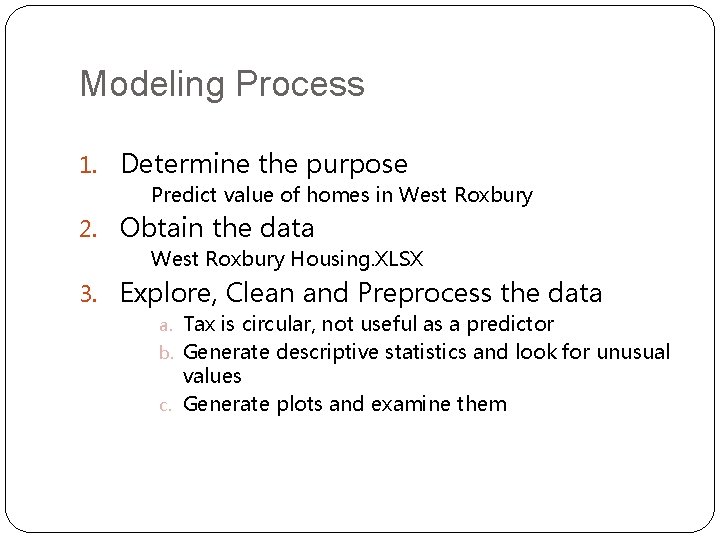

Modeling Process
1. Determine the purpose: predict value of homes in West Roxbury
2. Obtain the data: West Roxbury Housing.XLSX
3. Explore, clean, and preprocess the data
   a. TAX is circular (it is computed from TOTAL VALUE), so it is not useful as a predictor
   b. Generate descriptive statistics and look for unusual values
   c. Generate plots and examine them

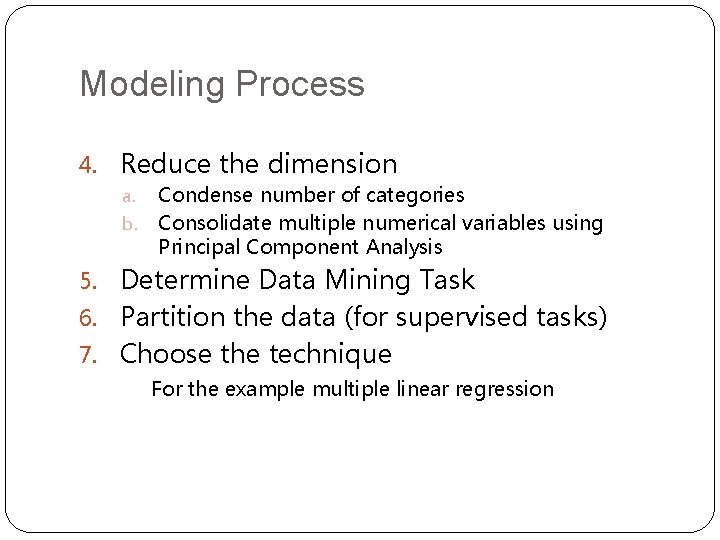

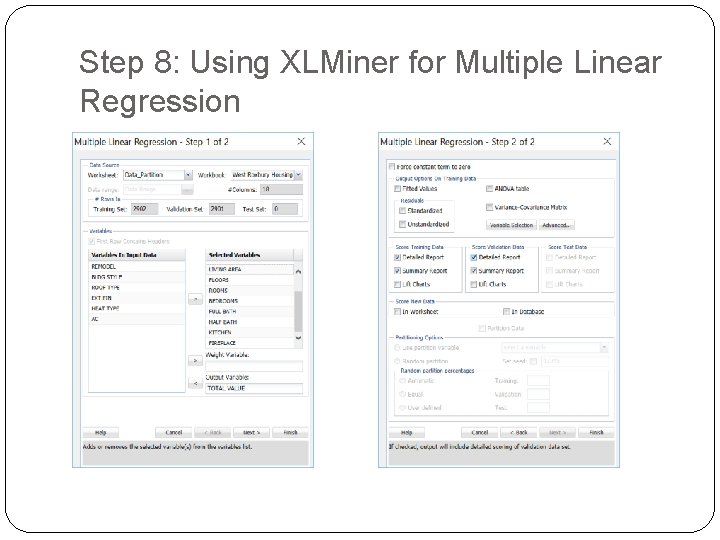

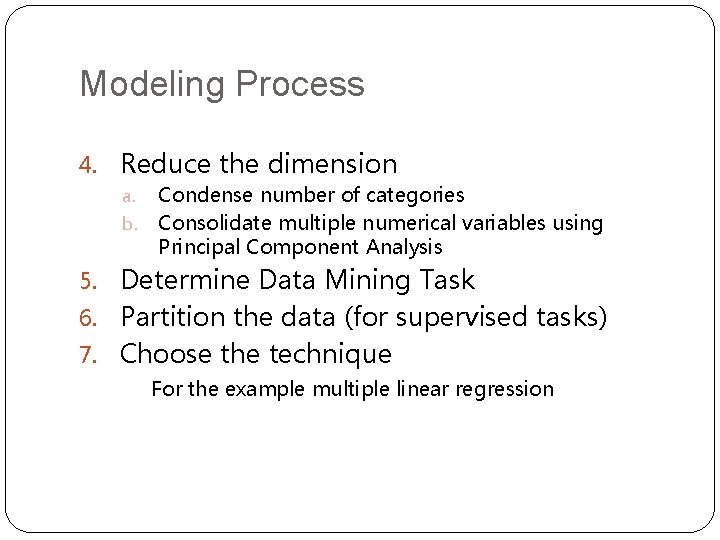

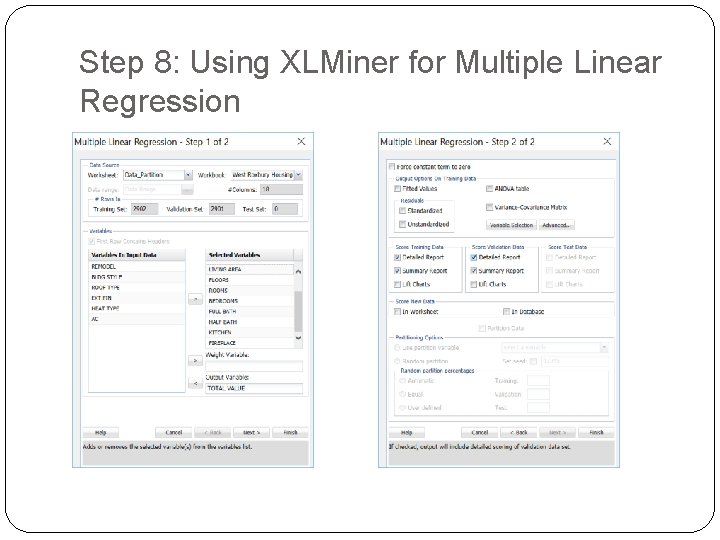

Modeling Process
4. Reduce the dimension
   a. Condense number of categories
   b. Consolidate multiple numerical variables using Principal Component Analysis
5. Determine Data Mining Task
6. Partition the data (for supervised tasks)
7. Choose the technique: for this example, multiple linear regression

Modeling Process
8. Use the algorithm to perform the task
9. Interpret results
10. Deploy the model

Step 6: Partitioning the data

Step 8: Using XLMiner for Multiple Linear Regression

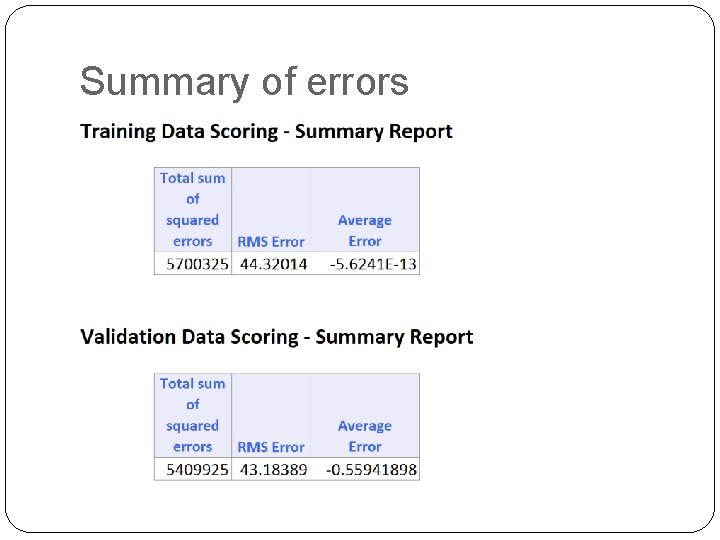

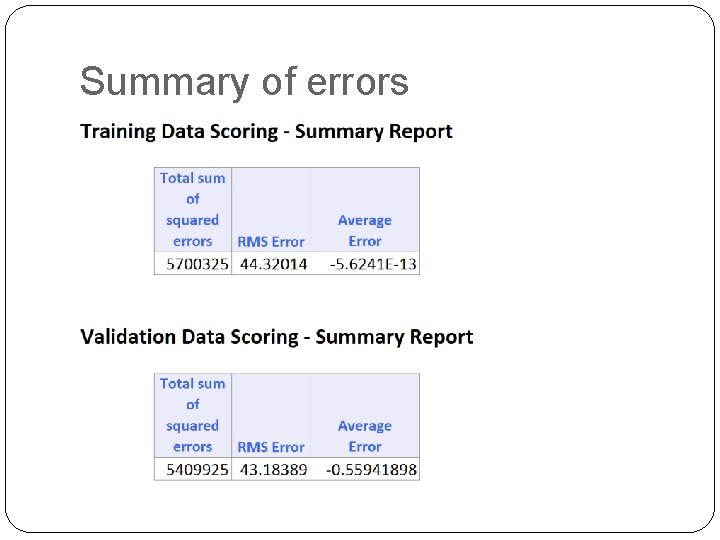

Summary of errors

RMS error
- Error = actual - predicted
- RMS error = root-mean-squared error = square root of the average squared error
- In the previous example, the sizes of the training and validation sets differ, so only RMS Error and Average Error are comparable
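The definitions above translate directly into code. In this sketch the function `error_summary` and the actual/predicted values are hypothetical; the sum of squared errors is included only to show why totals are not comparable across partitions of different sizes.

```python
from math import sqrt

def error_summary(actual, predicted):
    """Error = actual - predicted; return total, RMS, and average error."""
    errors = [a - p for a, p in zip(actual, predicted)]
    n = len(errors)
    sse = sum(e * e for e in errors)
    return {
        "sum_squared_error": sse,          # grows with the number of records
        "rms_error": sqrt(sse / n),        # comparable across partition sizes
        "average_error": sum(errors) / n,  # comparable across partition sizes
    }

# Hypothetical home values (thousands of USD) and model predictions
actual = [344, 413, 330]
predicted = [340, 416, 330]
summary = error_summary(actual, predicted)
```

The errors here are 4, -3, and 0, so the sum of squared errors is 25, while the RMS error and average error are both scaled by the number of records.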

Using Excel and XLMiner for Data Mining
- Excel is limited in data capacity
- However, the training and validation of DM models can be handled within the modest limits of Excel and XLMiner
- Models can then be used to score larger databases
- XLMiner has functions for interacting with various databases (taking samples from a database, and scoring a database from a developed model)

Summary
- Data Mining consists of supervised methods (Classification & Prediction) and unsupervised methods (Association Rules, Data Reduction, Data Exploration & Visualization)
- Before algorithms can be applied, data must be characterized and pre-processed
- To evaluate performance and to avoid overfitting, data partitioning is used
- Data mining methods are usually applied to a sample from a large database, and then the best model is used to score the entire database