Overview of Text Retrieval: Part 1 (ChengXiang Zhai)


Dragon Star Program Course: Information Retrieval
Overview of Text Retrieval: Part 1
ChengXiang Zhai (翟成祥)
Department of Computer Science, Graduate School of Library & Information Science, Institute for Genomic Biology, Statistics
University of Illinois, Urbana-Champaign
http://www-faculty.cs.uiuc.edu/~czhai, czhai@cs.uiuc.edu
2008 © ChengXiang Zhai, Dragon Star Lecture at Beijing University, June 21-30, 2008

Outline
• Basic Concepts in TR
• Evaluation of TR
• Common Components of a TR system
• Vector Space Retrieval Model

Basic Concepts in TR

What is Text Retrieval (TR)?
• There exists a collection of text documents
• User gives a query to express the information need
• A retrieval system returns relevant documents to users
• Known as “search technology” in industry

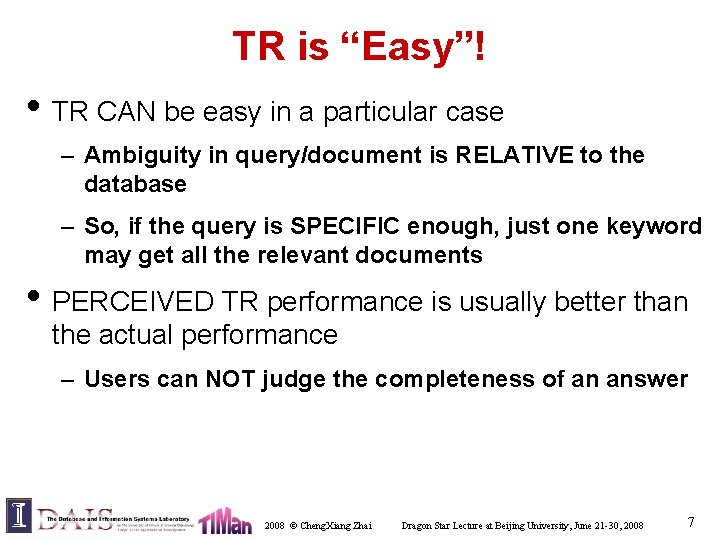

TR vs. Database Retrieval
• Information
  – Unstructured/free text vs. structured data
  – Ambiguous vs. well-defined semantics
• Query
  – Ambiguous vs. well-defined semantics
  – Incomplete vs. complete specification
• Answers
  – Relevant documents vs. matched records
• TR is an empirically defined problem!

TR is Hard!
• Under/over-specified query
  – Ambiguous: “buying CDs” (money or music?)
  – Incomplete: what kind of CDs?
  – What if “CD” is never mentioned in document?
• Vague semantics of documents
  – Ambiguity: e.g., word-sense, structural
  – Incomplete: Inferences required
• Even hard for people!
  – 80% agreement in human judgments

TR is “Easy”!
• TR CAN be easy in a particular case
  – Ambiguity in query/document is RELATIVE to the database
  – So, if the query is SPECIFIC enough, just one keyword may get all the relevant documents
• PERCEIVED TR performance is usually better than the actual performance
  – Users can NOT judge the completeness of an answer

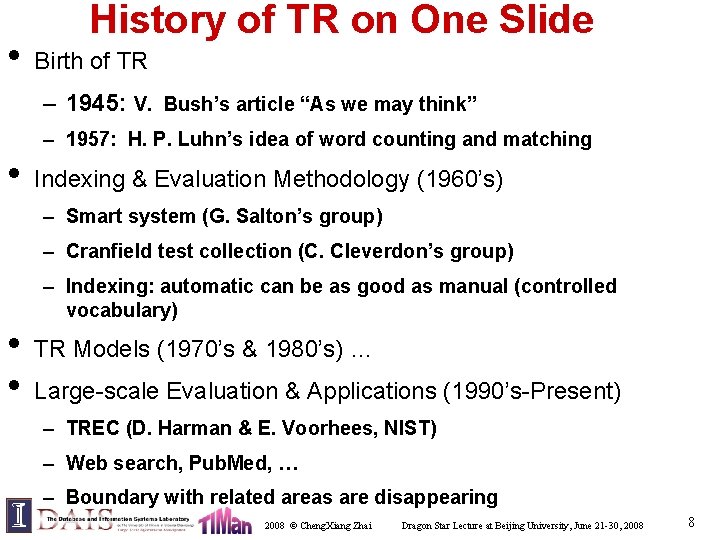

History of TR on One Slide
• Birth of TR
  – 1945: V. Bush’s article “As We May Think”
  – 1957: H. P. Luhn’s idea of word counting and matching
• Indexing & Evaluation Methodology (1960’s)
  – Smart system (G. Salton’s group)
  – Cranfield test collection (C. Cleverdon’s group)
  – Indexing: automatic can be as good as manual (controlled vocabulary)
• TR Models (1970’s & 1980’s) …
• Large-scale Evaluation & Applications (1990’s-Present)
  – TREC (D. Harman & E. Voorhees, NIST)
  – Web search, PubMed, …
  – Boundaries with related areas are disappearing

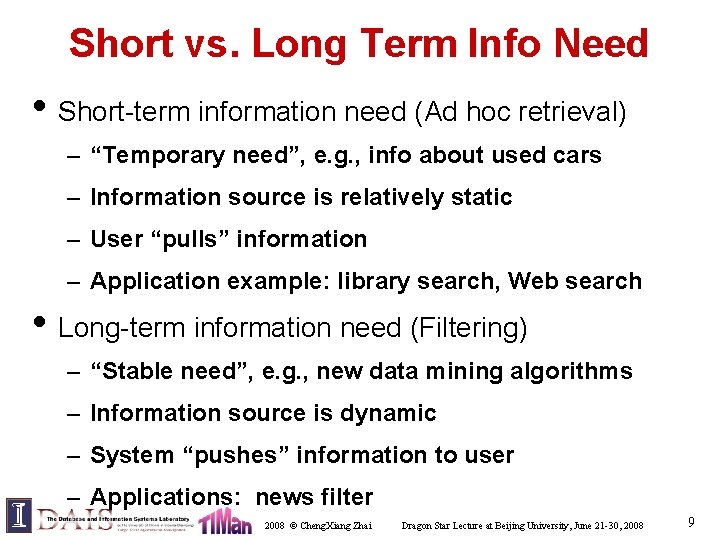

Short vs. Long Term Info Need
• Short-term information need (Ad hoc retrieval)
  – “Temporary need”, e.g., info about used cars
  – Information source is relatively static
  – User “pulls” information
  – Application example: library search, Web search
• Long-term information need (Filtering)
  – “Stable need”, e.g., new data mining algorithms
  – Information source is dynamic
  – System “pushes” information to user
  – Applications: news filter

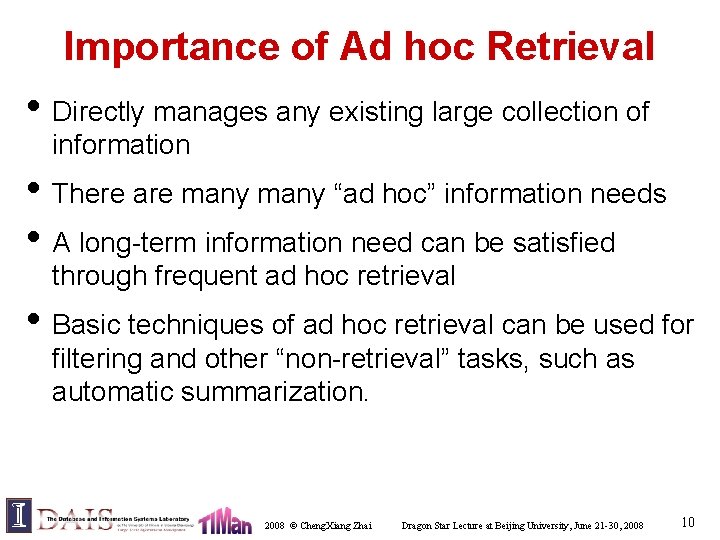

Importance of Ad hoc Retrieval
• Directly manages any existing large collection of information
• There are many “ad hoc” information needs
• A long-term information need can be satisfied through frequent ad hoc retrieval
• Basic techniques of ad hoc retrieval can be used for filtering and other “non-retrieval” tasks, such as automatic summarization.

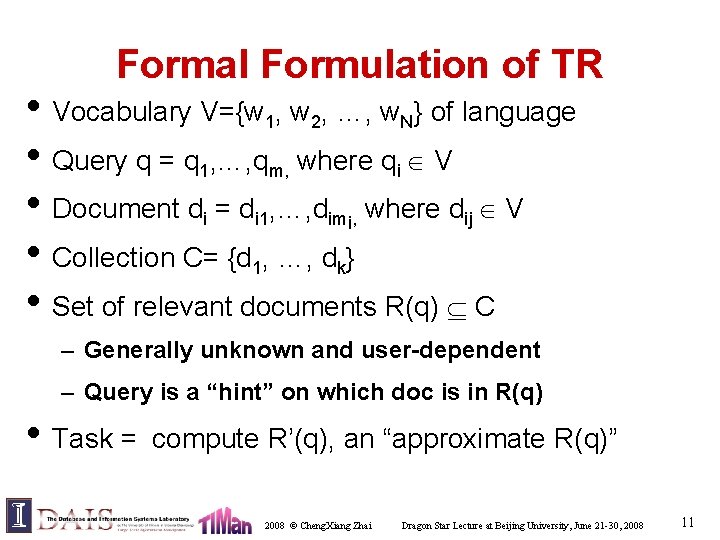

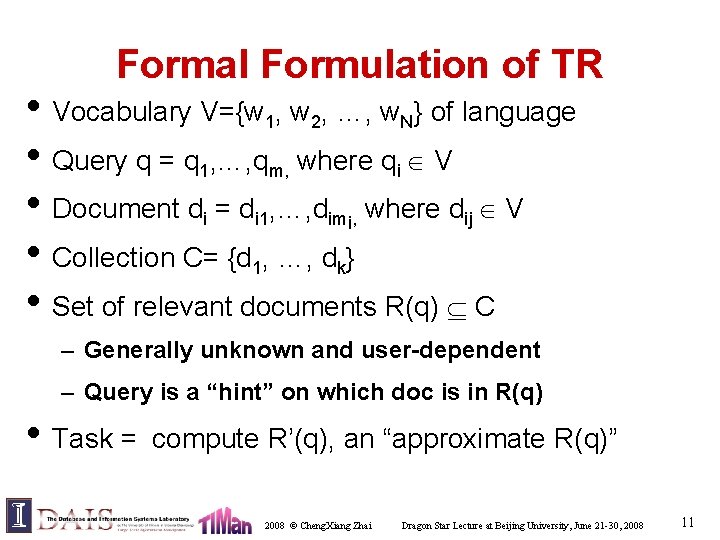

Formal Formulation of TR
• Vocabulary $V = \{w_1, w_2, \dots, w_N\}$ of language
• Query $q = q_1, \dots, q_m$, where $q_i \in V$
• Document $d_i = d_{i1}, \dots, d_{im_i}$, where $d_{ij} \in V$
• Collection $C = \{d_1, \dots, d_k\}$
• Set of relevant documents $R(q) \subseteq C$
  – Generally unknown and user-dependent
  – Query is a “hint” on which doc is in R(q)
• Task = compute R’(q), an “approximate R(q)”

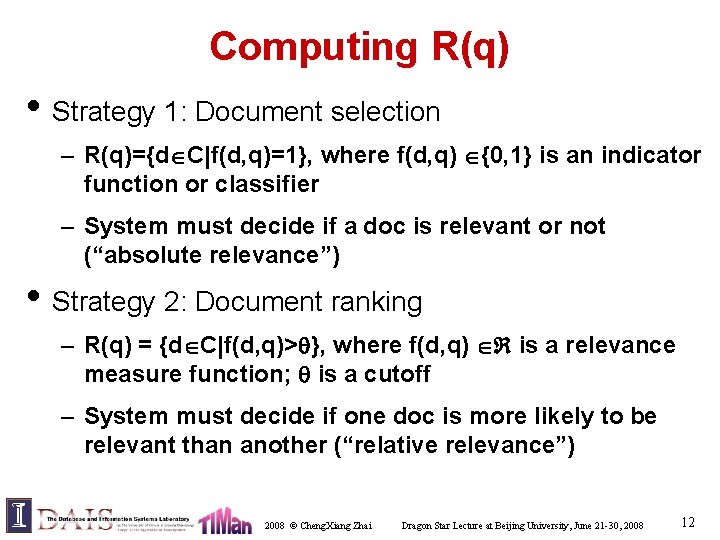

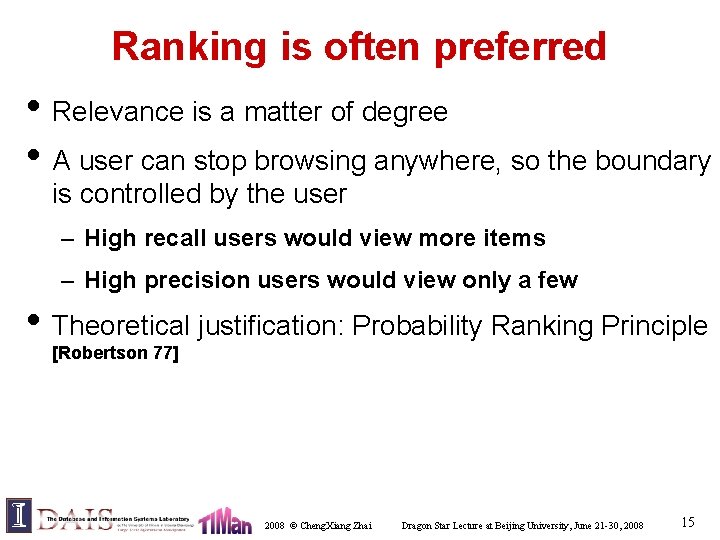

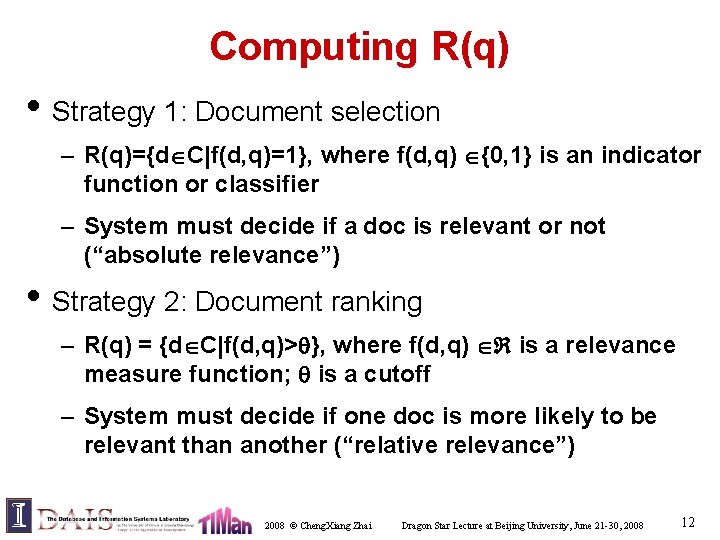

Computing R(q)
• Strategy 1: Document selection
  – $R(q) = \{d \in C \mid f(d,q) = 1\}$, where $f(d,q) \in \{0,1\}$ is an indicator function or classifier
  – System must decide if a doc is relevant or not (“absolute relevance”)
• Strategy 2: Document ranking
  – $R(q) = \{d \in C \mid f(d,q) > \theta\}$, where $f(d,q)$ is a relevance measure function; $\theta$ is a cutoff
  – System must decide if one doc is more likely to be relevant than another (“relative relevance”)
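The two strategies can be contrasted in a few lines of Python. This is only a sketch: the scores, the classifier threshold of 0.9, and the cutoff of 0.5 are hypothetical values chosen for illustration, and the function names are not from the lecture.

```python
from typing import Callable, Dict, List, Set


def select_documents(scores: Dict[str, float],
                     classify: Callable[[float], int]) -> Set[str]:
    """Strategy 1: keep every document the binary classifier labels 1."""
    return {doc for doc, score in scores.items() if classify(score) == 1}


def rank_documents(scores: Dict[str, float], theta: float) -> List[str]:
    """Strategy 2: sort by score (descending), keep documents above theta."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return [doc for doc, score in ranked if score > theta]


# Hypothetical values of f(d, q) for four documents.
scores = {"d1": 0.98, "d2": 0.21, "d3": 0.83, "d4": 0.56}

selected = select_documents(scores, lambda s: 1 if s >= 0.9 else 0)
ranked = rank_documents(scores, theta=0.5)
print(selected)  # an unordered set
print(ranked)    # an ordered list; the user decides where to stop reading
```

Selection returns an unordered set and commits to a hard decision, while ranking preserves the order so the effective cutoff can be left to the user.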

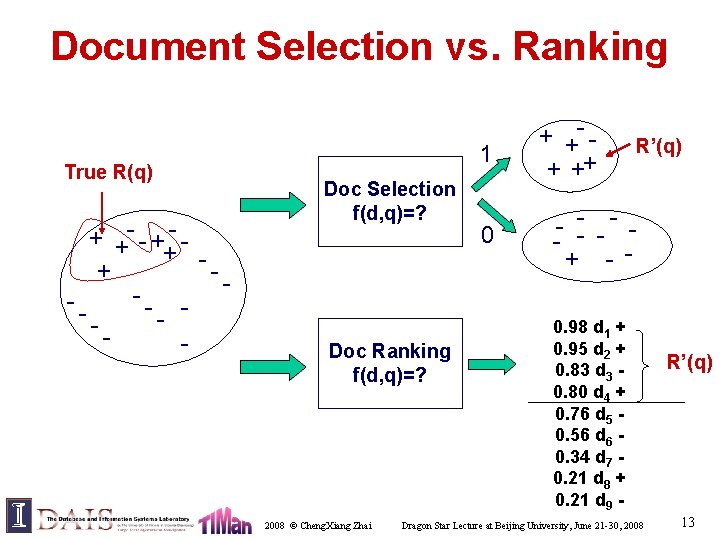

Document Selection vs. Ranking
[Figure: the true R(q) shown as a set of relevant (+) and non-relevant (-) documents. Doc selection assigns f(d, q) = 1 or 0 to each document to form R’(q). Doc ranking orders documents by f(d, q): 0.98 d1 +, 0.95 d2 +, 0.83 d3, 0.80 d4 +, 0.76 d5, 0.56 d6, 0.34 d7, 0.21 d8 +, 0.21 d9 -, with R’(q) taken from the top of the list.]

Problems of Doc Selection
• The classifier is unlikely to be accurate
  – “Over-constrained” query (terms are too specific): no relevant documents found
  – “Under-constrained” query (terms are too general): over delivery
  – It is extremely hard to find the right position between these two extremes
• Even if it is accurate, not all relevant documents are equally relevant
• Relevance is a matter of degree!

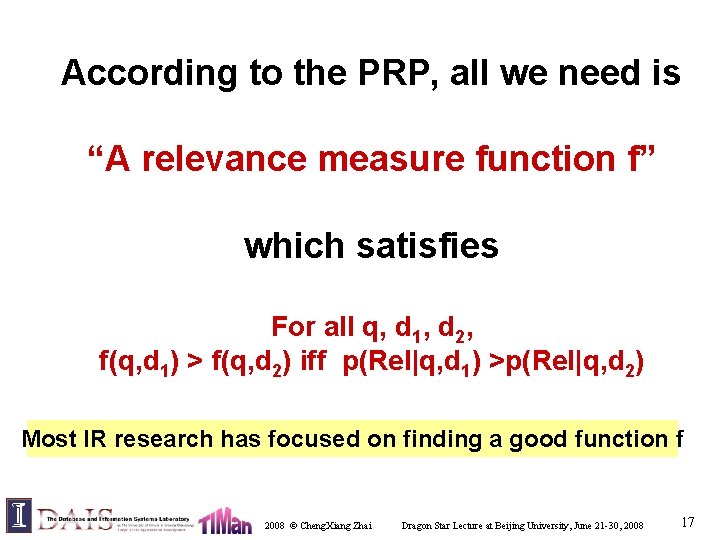

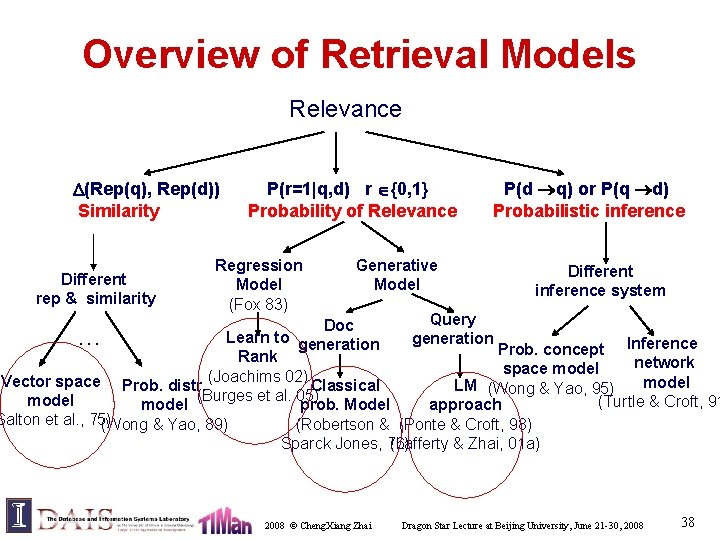

Ranking is often preferred
• Relevance is a matter of degree
• A user can stop browsing anywhere, so the boundary is controlled by the user
  – High recall users would view more items
  – High precision users would view only a few
• Theoretical justification: Probability Ranking Principle [Robertson 77]

![Probability Ranking Principle Robertson 77 As stated by Cooper If a reference retrieval Probability Ranking Principle [Robertson 77] • As stated by Cooper “If a reference retrieval](https://slidetodoc.com/presentation_image_h/1a7c993bb891fe3f4d46a7d7b3204532/image-16.jpg)

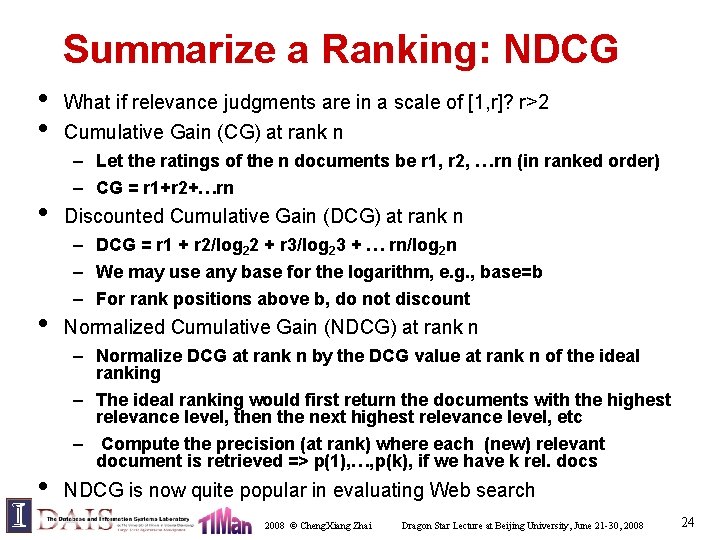

Probability Ranking Principle [Robertson 77]
• As stated by Cooper: “If a reference retrieval system’s response to each request is a ranking of the documents in the collections in order of decreasing probability of usefulness to the user who submitted the request, where the probabilities are estimated as accurately as possible on the basis of whatever data have been made available to the system for this purpose, then the overall effectiveness of the system to its users will be the best that is obtainable on the basis of that data.”
• Robertson provides two formal justifications
• Assumptions: Independent relevance and sequential browsing (not necessarily all hold in reality)

According to the PRP, all we need is “A relevance measure function f” which satisfies:
For all $q, d_1, d_2$: $f(q, d_1) > f(q, d_2)$ iff $p(Rel \mid q, d_1) > p(Rel \mid q, d_2)$
Most IR research has focused on finding a good function f

Evaluation in Information Retrieval

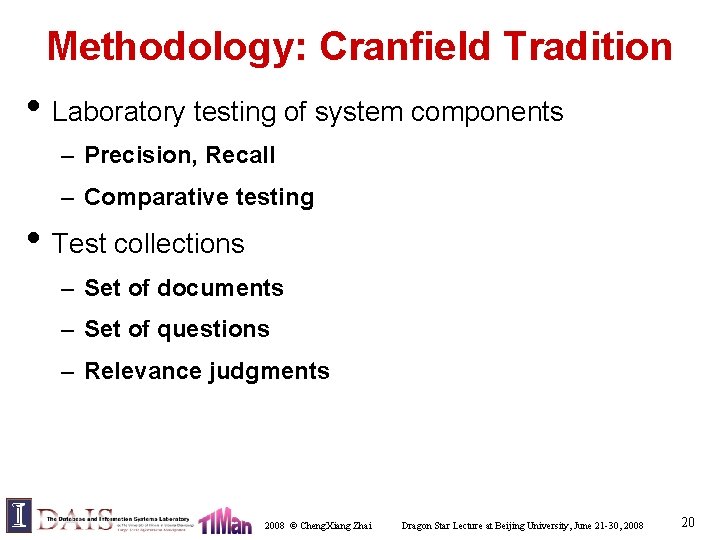

Evaluation Criteria
• Effectiveness/Accuracy
  – Precision, Recall
• Efficiency
  – Space and time complexity
• Usability
  – How useful for real user tasks?

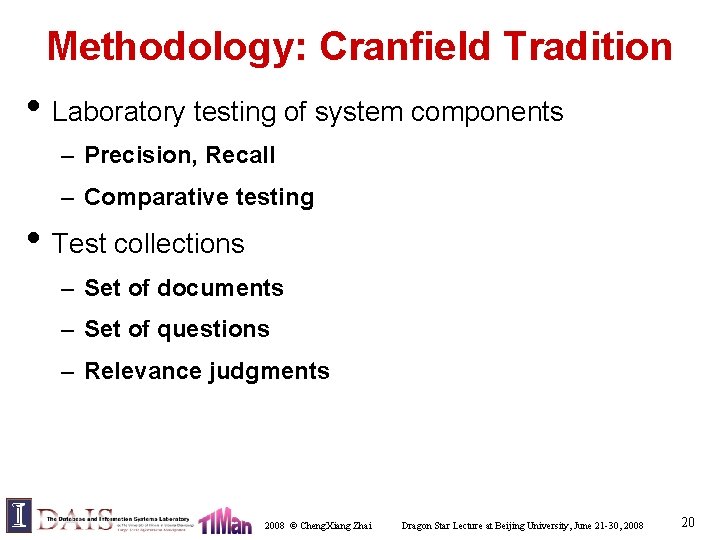

Methodology: Cranfield Tradition
• Laboratory testing of system components
  – Precision, Recall
  – Comparative testing
• Test collections
  – Set of documents
  – Set of questions
  – Relevance judgments

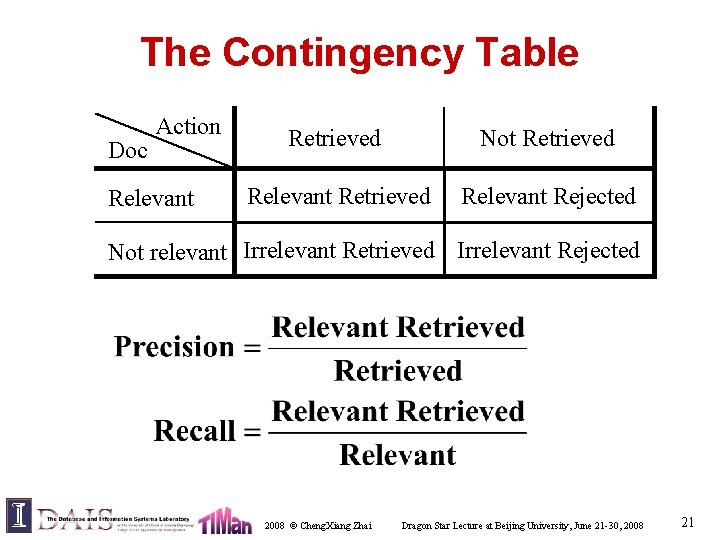

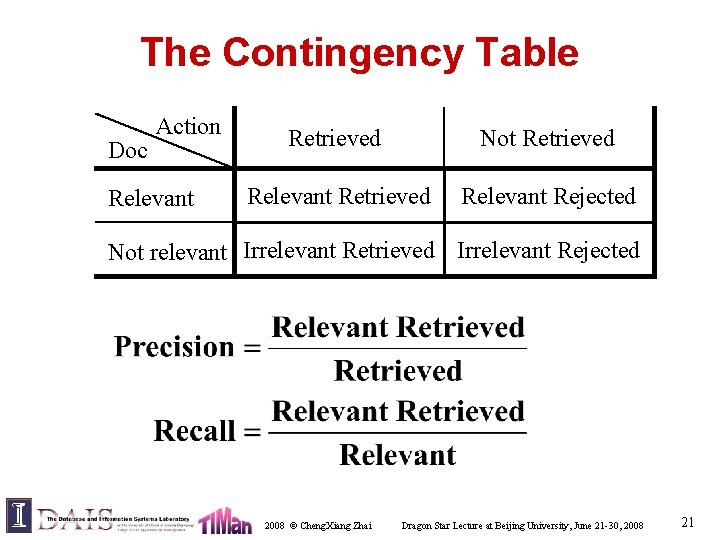

The Contingency Table

| Doc \ Action | Retrieved | Not Retrieved |
|---|---|---|
| Relevant | Relevant Retrieved | Relevant Rejected |
| Not relevant | Irrelevant Retrieved | Irrelevant Rejected |
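Precision and recall follow directly from the cells of this table: precision is Relevant Retrieved divided by everything retrieved, and recall is Relevant Retrieved divided by everything relevant. A minimal sketch, with hypothetical document IDs and a hypothetical function name:

```python
from typing import Set, Tuple


def precision_recall(retrieved: Set[str], relevant: Set[str]) -> Tuple[float, float]:
    """Return (precision, recall) for one query.

    precision = |relevant retrieved| / |retrieved|
    recall    = |relevant retrieved| / |relevant|
    Empty denominators yield 0.0 (a convention assumed here).
    """
    relevant_retrieved = len(retrieved & relevant)
    precision = relevant_retrieved / len(retrieved) if retrieved else 0.0
    recall = relevant_retrieved / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical example: 4 documents retrieved, 3 documents truly relevant.
retrieved = {"d1", "d2", "d3", "d4"}
relevant = {"d1", "d3", "d5"}
precision, recall = precision_recall(retrieved, relevant)
print(precision, recall)
```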

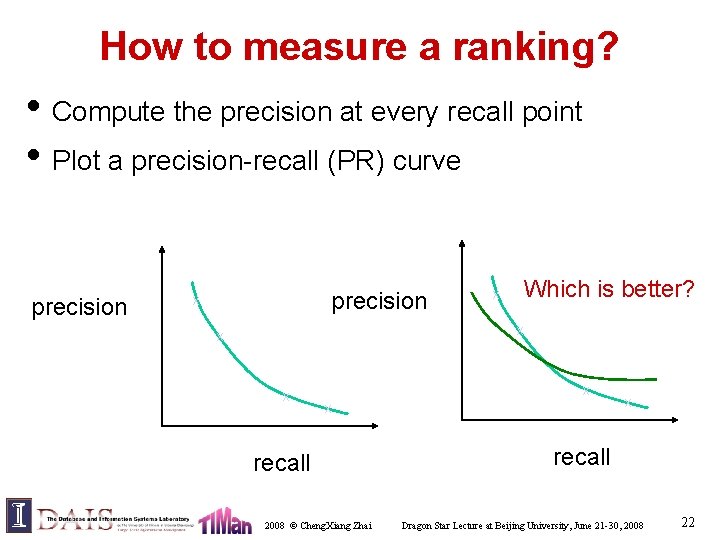

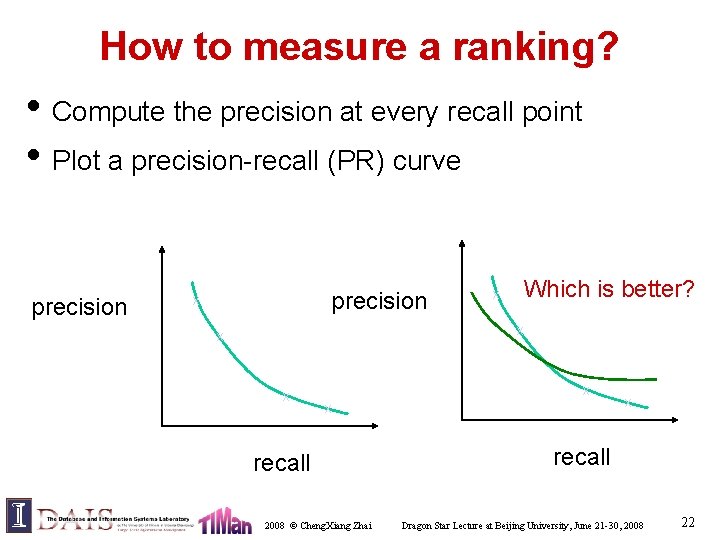

How to measure a ranking?
• Compute the precision at every recall point
• Plot a precision-recall (PR) curve
[Figure: two precision-recall plots (precision on the vertical axis, recall on the horizontal axis) with the question “Which is better?”]

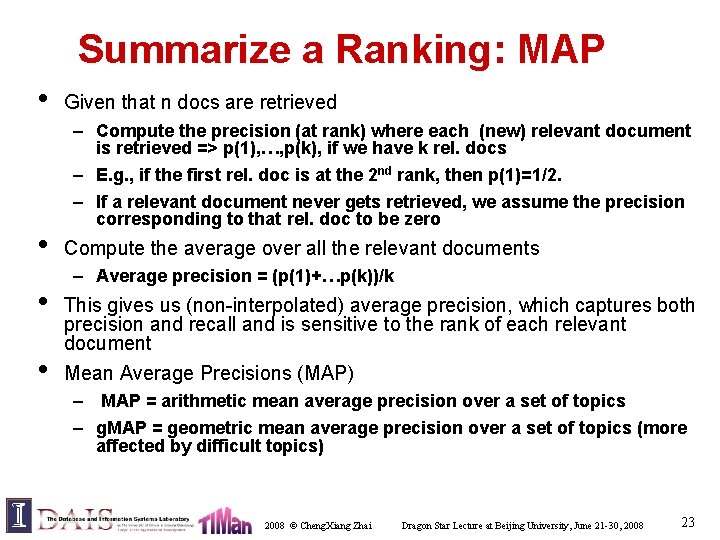

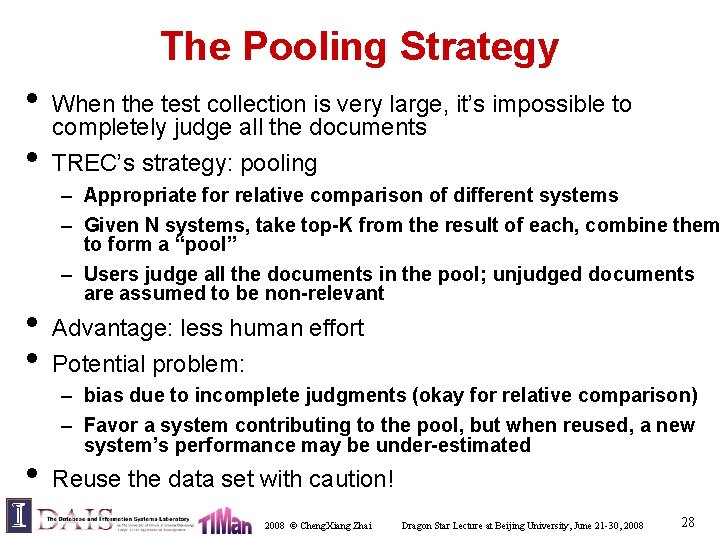

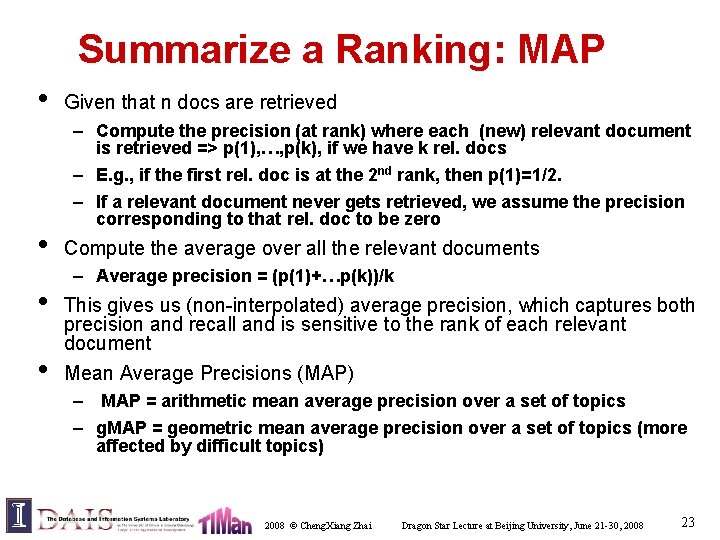

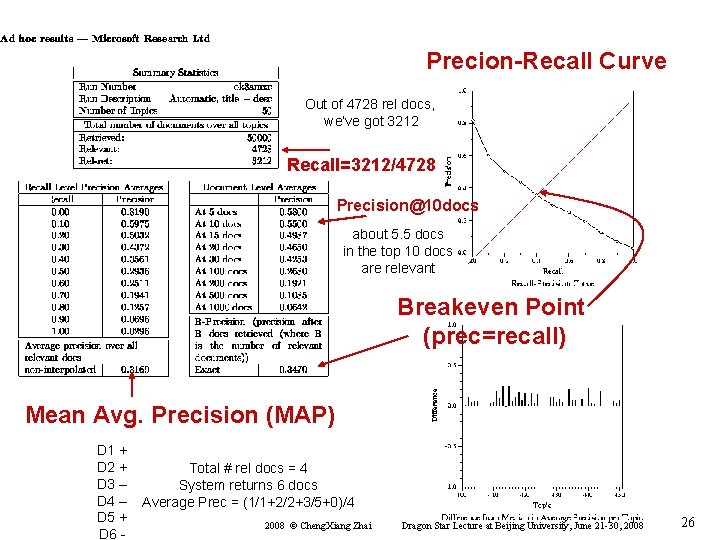

Summarize a Ranking: MAP
• Given that n docs are retrieved
  – Compute the precision (at rank) where each (new) relevant document is retrieved => p(1), …, p(k), if we have k rel. docs
  – E.g., if the first rel. doc is at the 2nd rank, then p(1)=1/2.
  – If a relevant document never gets retrieved, we assume the precision corresponding to that rel. doc to be zero
• Compute the average over all the relevant documents
  – Average precision = (p(1)+…+p(k))/k
• This gives us (non-interpolated) average precision, which captures both precision and recall and is sensitive to the rank of each relevant document
• Mean Average Precision (MAP)
  – MAP = arithmetic mean of average precision over a set of topics
  – gMAP = geometric mean of average precision over a set of topics (more affected by difficult topics)
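The procedure above can be sketched as follows. The function names are illustrative, and the small floor `eps` used in the geometric mean is an assumption added here so that a topic with zero average precision does not make the logarithm undefined.

```python
import math
from typing import Sequence


def average_precision(ranked_relevance: Sequence[bool], total_relevant: int) -> float:
    """Non-interpolated average precision for one ranked list.

    ranked_relevance[i] is True if the document at rank i+1 is relevant.
    Relevant documents that are never retrieved contribute precision 0,
    which is why we divide by total_relevant rather than by the hit count.
    """
    hits = 0
    precision_sum = 0.0
    for rank, is_relevant in enumerate(ranked_relevance, start=1):
        if is_relevant:
            hits += 1
            precision_sum += hits / rank  # precision at this relevant doc
    return precision_sum / total_relevant if total_relevant else 0.0


def mean_average_precision(ap_values: Sequence[float]) -> float:
    """MAP: arithmetic mean of per-topic average precision."""
    return sum(ap_values) / len(ap_values)


def geometric_map(ap_values: Sequence[float], eps: float = 1e-5) -> float:
    """gMAP: geometric mean of per-topic AP (eps floor is an assumption)."""
    logs = [math.log(max(ap, eps)) for ap in ap_values]
    return math.exp(sum(logs) / len(logs))


# First relevant doc at rank 2, one relevant doc in total: p(1) = 1/2.
print(average_precision([False, True], total_relevant=1))
```

Because gMAP multiplies rather than adds, one very low per-topic score pulls it down much more than it pulls down MAP, which is the sense in which it is "more affected by difficult topics".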

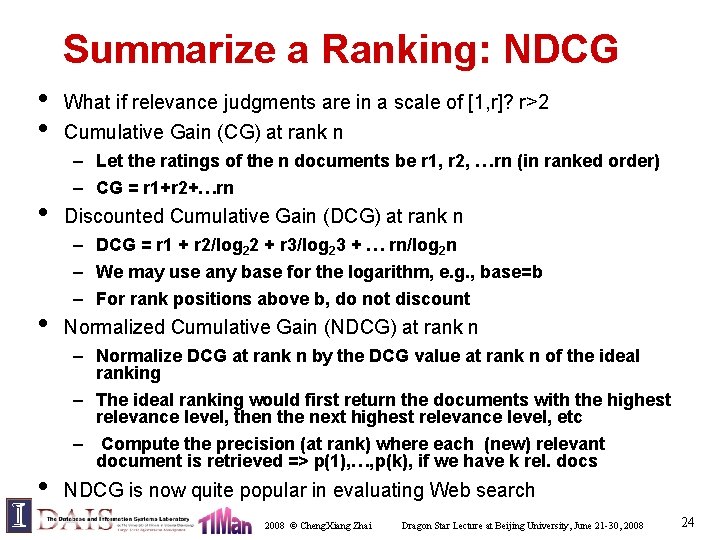

Summarize a Ranking: NDCG
• What if relevance judgments are in a scale of [1, r]? r>2
• Cumulative Gain (CG) at rank n
  – Let the ratings of the n documents be $r_1, r_2, \dots, r_n$ (in ranked order)
  – $CG = r_1 + r_2 + \dots + r_n$
• Discounted Cumulative Gain (DCG) at rank n
  – $DCG = r_1 + r_2/\log_2 2 + r_3/\log_2 3 + \dots + r_n/\log_2 n$
  – We may use any base for the logarithm, e.g., base=b
  – For rank positions smaller than b (i.e., ranked above position b), do not discount
• Normalized Discounted Cumulative Gain (NDCG) at rank n
  – Normalize DCG at rank n by the DCG value at rank n of the ideal ranking
  – The ideal ranking would first return the documents with the highest relevance level, then the next highest relevance level, etc.
• NDCG is now quite popular in evaluating Web search
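A short sketch of DCG and NDCG as defined above. The rule "do not discount positions smaller than b" is implemented by flooring the discount at 1; the function names and the optional `all_ratings` argument (ratings of every judged document, used to build the ideal ranking) are assumptions made for this illustration.

```python
import math
from typing import Optional, Sequence


def dcg(ratings: Sequence[float], base: float = 2.0) -> float:
    """DCG at rank n = len(ratings): r_1 + sum over i>=2 of r_i / log_base(i).

    Positions with log_base(i) < 1 (i.e., i < base) are not discounted.
    """
    total = 0.0
    for i, rating in enumerate(ratings, start=1):
        discount = max(1.0, math.log(i) / math.log(base))
        total += rating / discount
    return total


def ndcg(ratings: Sequence[float],
         all_ratings: Optional[Sequence[float]] = None,
         base: float = 2.0) -> float:
    """DCG of the ranking divided by DCG of the ideal ranking at the same rank."""
    n = len(ratings)
    pool = ratings if all_ratings is None else all_ratings
    ideal = sorted(pool, reverse=True)[:n]  # highest relevance levels first
    ideal_dcg = dcg(ideal, base)
    return dcg(ratings, base) / ideal_dcg if ideal_dcg > 0 else 0.0


# Hypothetical ratings on a 1..3 scale, in ranked order.
print(dcg([3, 2]))
print(ndcg([3, 2, 3]))
```

Swapping a highly rated document into a lower position reduces DCG because its gain is divided by a larger logarithm, so NDCG drops below 1 for any ranking that is not ideal.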

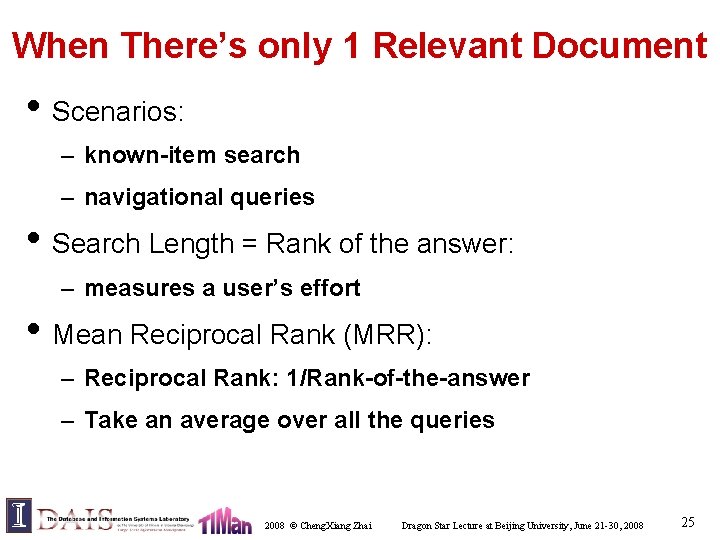

When There’s only 1 Relevant Document
• Scenarios:
  – known-item search
  – navigational queries
• Search Length = Rank of the answer:
  – measures a user’s effort
• Mean Reciprocal Rank (MRR):
  – Reciprocal Rank: 1/Rank-of-the-answer
  – Take an average over all the queries
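MRR is simple enough to state in a few lines. In this sketch a query whose answer is never retrieved is represented by `None` and contributes 0, which is an assumption made here rather than something the slide specifies.

```python
from typing import Optional, Sequence


def reciprocal_rank(rank_of_answer: Optional[int]) -> float:
    """1 / rank of the single relevant document; 0.0 if it was not retrieved."""
    return 1.0 / rank_of_answer if rank_of_answer else 0.0


def mean_reciprocal_rank(ranks: Sequence[Optional[int]]) -> float:
    """Average the reciprocal rank over all queries."""
    return sum(reciprocal_rank(r) for r in ranks) / len(ranks)


# Hypothetical ranks of the answer for three queries: 1st, 2nd, and 4th.
mrr = mean_reciprocal_rank([1, 2, 4])
print(mrr)
```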

Precision-Recall Curve
[Figure: precision-recall curve with the following annotations.]
• Recall: out of 4728 rel docs, we’ve got 3212, so Recall = 3212/4728
• Precision@10 docs: about 5.5 docs in the top 10 docs are relevant
• Breakeven Point (prec = recall)
• Mean Avg. Precision (MAP)
  – Example ranking: D1 +, D2 +, D3 -, D4 -, D5 +, D6 -
  – Total # rel docs = 4; system returns 6 docs
  – Average Prec = (1/1 + 2/2 + 3/5 + 0)/4

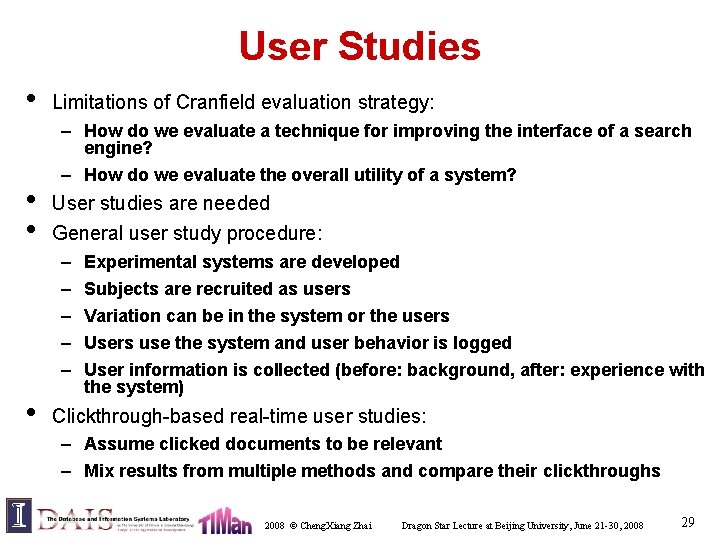

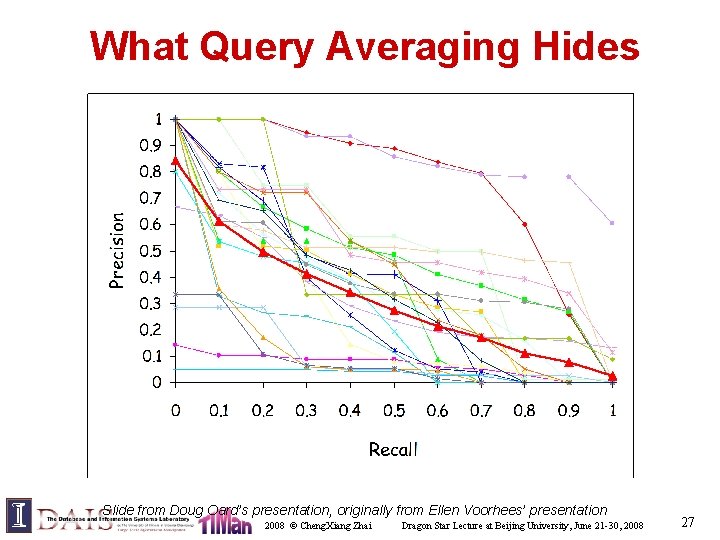

What Query Averaging Hides
Slide from Doug Oard’s presentation, originally from Ellen Voorhees’ presentation

The Pooling Strategy
• When the test collection is very large, it’s impossible to completely judge all the documents
• TREC’s strategy: pooling
  – Appropriate for relative comparison of different systems
  – Given N systems, take top-K from the result of each, combine them to form a “pool”
  – Users judge all the documents in the pool; unjudged documents are assumed to be non-relevant
• Advantage: less human effort
• Potential problem:
  – bias due to incomplete judgments (okay for relative comparison)
  – Favor a system contributing to the pool, but when reused, a new system’s performance may be under-estimated
• Reuse the data set with caution!
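The pooling step and the reuse problem can be illustrated with a small sketch. The system names, rankings, and judgments below are hypothetical, and the helper names are not part of any TREC tooling.

```python
from typing import Dict, List, Set


def build_pool(runs: Dict[str, List[str]], k: int) -> Set[str]:
    """Union of the top-k documents from each system's ranked result list."""
    pool: Set[str] = set()
    for ranking in runs.values():
        pool.update(ranking[:k])
    return pool


def is_counted_relevant(doc: str, pool: Set[str], judged_relevant: Set[str]) -> bool:
    """Only pooled documents were judged; anything else counts as non-relevant."""
    return doc in pool and doc in judged_relevant


# Hypothetical runs from two systems that contributed to the pool.
runs = {"A": ["d1", "d2", "d3"], "B": ["d2", "d4", "d5"]}
pool = build_pool(runs, k=2)

# Assessors judged the pool and found d1 and d4 relevant.
judged_relevant = {"d1", "d4"}

# A new system retrieves d9, which was never pooled. Even if d9 is truly
# relevant, it is scored as non-relevant, so the new system is under-estimated.
print(is_counted_relevant("d9", pool, judged_relevant))
```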

User Studies
• Limitations of Cranfield evaluation strategy:
  – How do we evaluate a technique for improving the interface of a search engine?
  – How do we evaluate the overall utility of a system?
• User studies are needed
• General user study procedure:
  – Experimental systems are developed
  – Subjects are recruited as users
  – Variation can be in the system or the users
  – Users use the system and user behavior is logged
  – User information is collected (before: background, after: experience with the system)
• Clickthrough-based real-time user studies:
  – Assume clicked documents to be relevant
  – Mix results from multiple methods and compare their clickthroughs

Common Components in a TR System

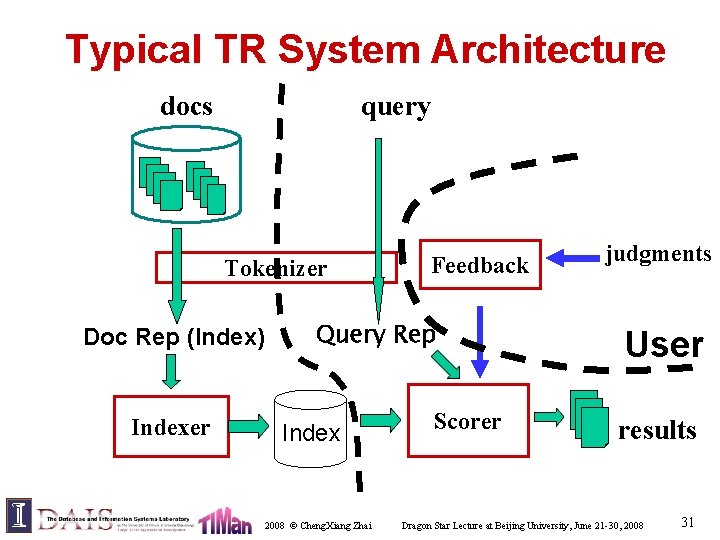

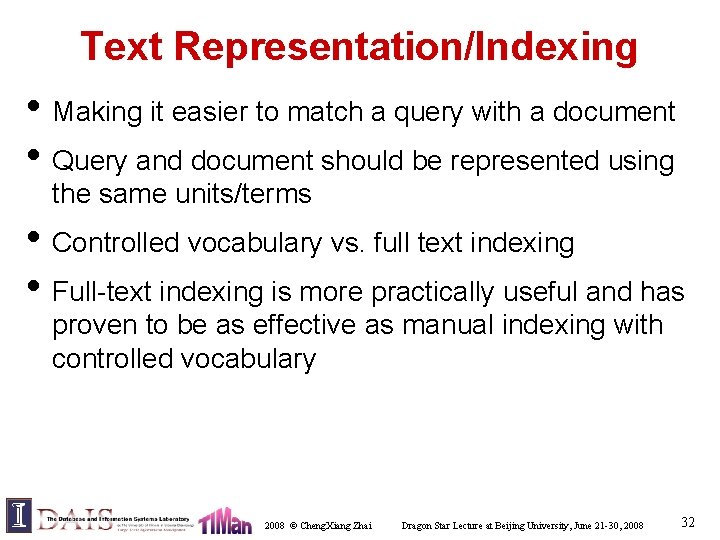

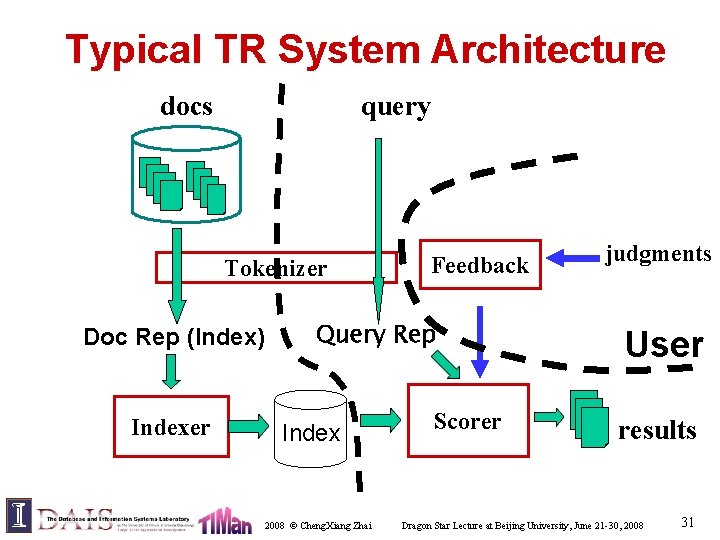

Typical TR System Architecture
[Figure: system architecture diagram. Labeled components: docs, Tokenizer, Indexer, Doc Rep (Index), Index, query, Query Rep, Scorer, results, User, judgments, Feedback.]

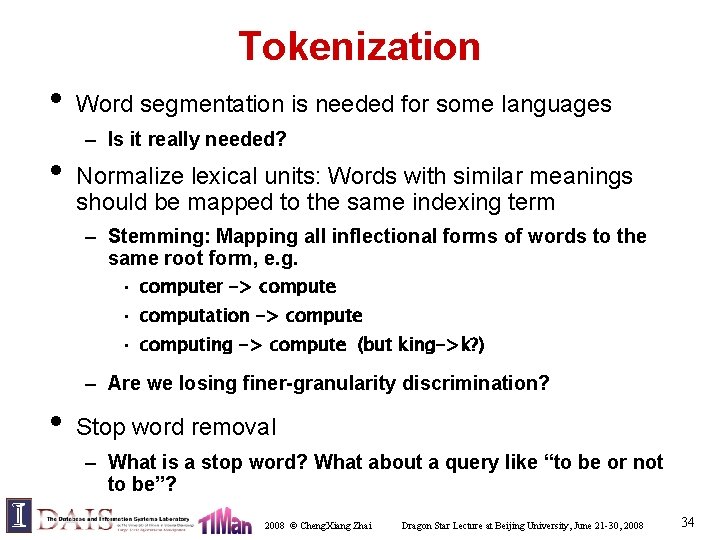

Text Representation/Indexing
• Making it easier to match a query with a document
• Query and document should be represented using the same units/terms
• Controlled vocabulary vs. full text indexing
• Full-text indexing is more practically useful and has proven to be as effective as manual indexing with controlled vocabulary

What is a good indexing term?
• Specific (phrases) or general (single word)?
• Luhn found that words with middle frequency are most useful
  – Not too specific (low utility, but still useful!)
  – Not too general (lack of discrimination, stop words)
  – Stop word removal is common, but rare words are kept
• All words or a (controlled) subset? When term weighting is used, it is a matter of weighting, not selecting, indexing terms (more later)

Tokenization
• Word segmentation is needed for some languages
  – Is it really needed?
• Normalize lexical units: Words with similar meanings should be mapped to the same indexing term
  – Stemming: Mapping all inflectional forms of words to the same root form, e.g.
    • computer -> compute
    • computation -> compute
    • computing -> compute (but king->k?)
  – Are we losing finer-granularity discrimination?
• Stop word removal
  – What is a stop word? What about a query like “to be or not to be”?
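Both cautionary examples on this slide are easy to reproduce with a deliberately naive pipeline. The stop word list and the suffix list below are tiny hypothetical stand-ins, and the suffix stripper is a toy (it produces the truncated root "comput" rather than "compute"); it is not a real stemming algorithm.

```python
import re
from typing import List

# Hypothetical, deliberately tiny stop word list for illustration.
STOP_WORDS = {"to", "be", "or", "not", "the", "a", "of"}

# Crude suffix list; a toy, not a real stemming algorithm.
SUFFIXES = ("ation", "ing", "er")


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def crude_stem(word: str) -> str:
    """Strip the first matching suffix, with no checks on what remains."""
    for suffix in SUFFIXES:
        if word.endswith(suffix):
            return word[: -len(suffix)]
    return word


def index_terms(text: str, remove_stop_words: bool = True) -> List[str]:
    """Tokenize, optionally drop stop words, then stem each remaining token."""
    tokens = tokenize(text)
    if remove_stop_words:
        tokens = [t for t in tokens if t not in STOP_WORDS]
    return [crude_stem(t) for t in tokens]


print(index_terms("computer computation computing"))  # all map to one term
print(crude_stem("king"))                              # over-stemming
print(index_terms("To be or not to be"))               # the whole query vanishes
```

The last two calls show why both steps need care: unconstrained suffix stripping destroys short words, and aggressive stop word removal can erase an entire query.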

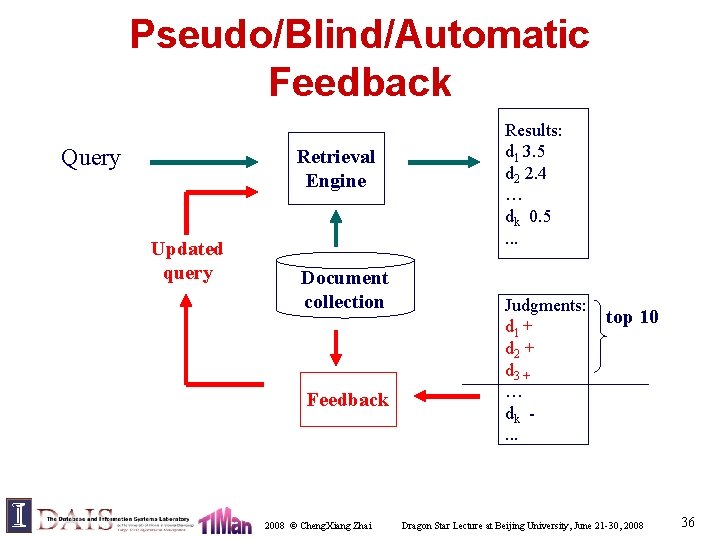

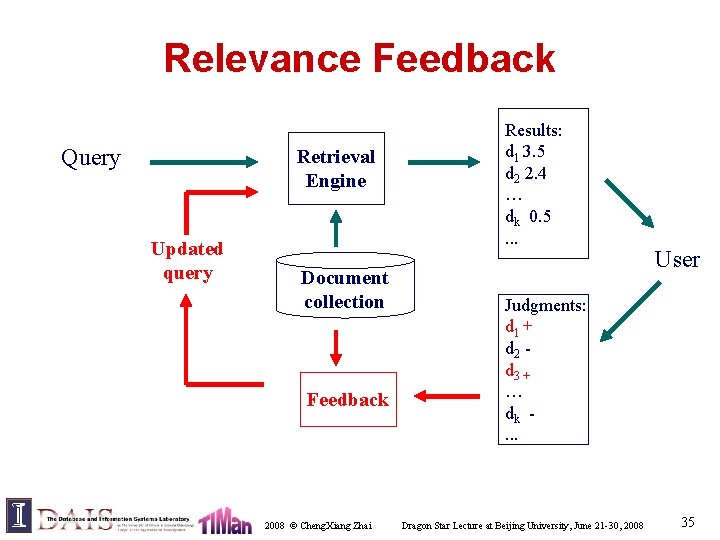

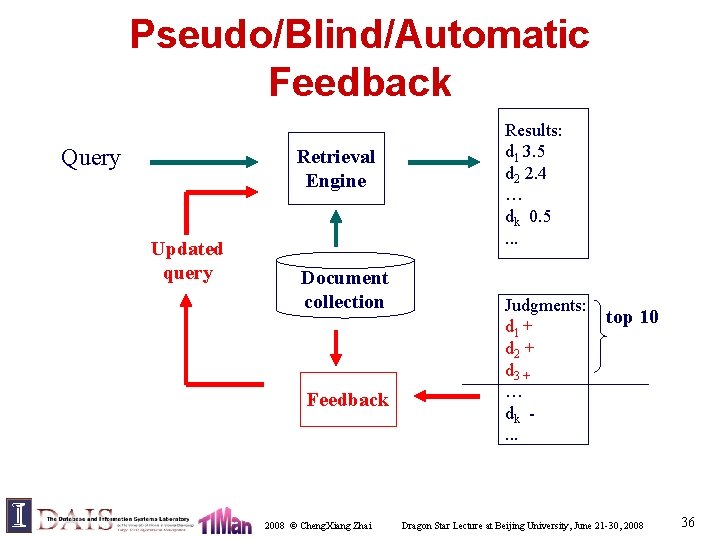

Relevance Feedback
[Figure: feedback loop. The query goes to the retrieval engine, which searches the document collection and returns scored results (d1 3.5, d2 2.4, …, dk 0.5, ...). The user supplies judgments on the results (e.g., d1 +, d3 +), and the feedback component uses them to produce an updated query.]

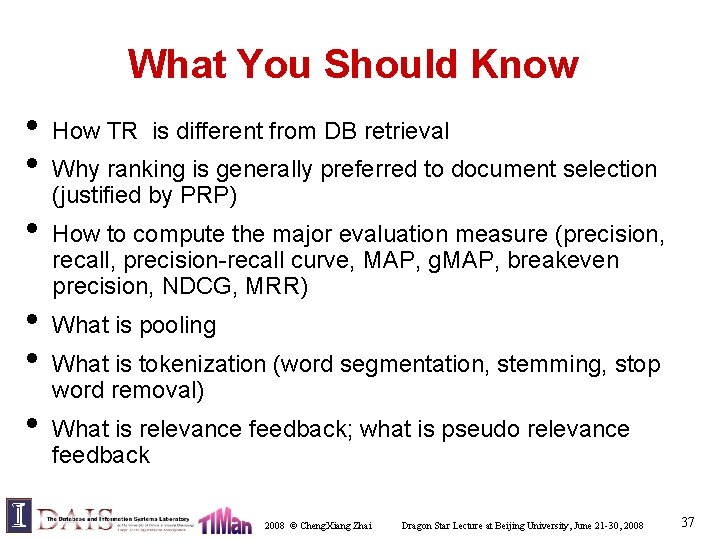

Pseudo/Blind/Automatic Feedback
[Figure: the same feedback loop without a user. The retrieval engine returns scored results (d1 3.5, d2 2.4, …, dk 0.5, ...), the top 10 results are assumed to be relevant (judgments: d1 +, d2 +, d3 +, …), and the feedback component uses them to produce an updated query.]

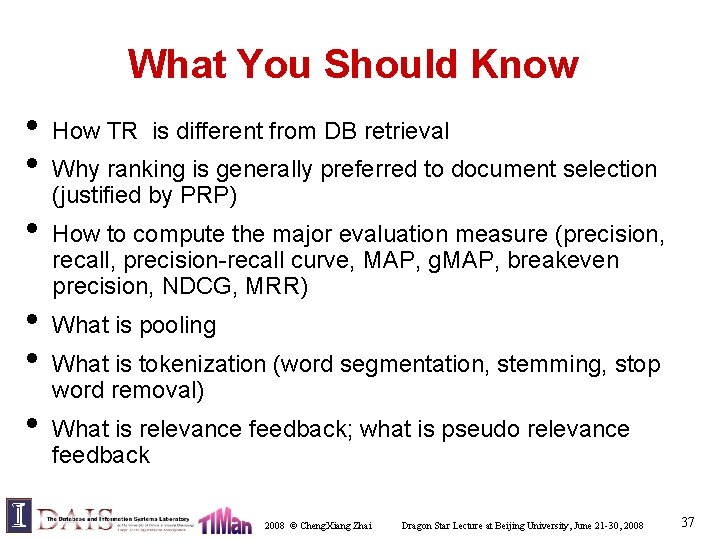

What You Should Know
• How TR is different from DB retrieval
• Why ranking is generally preferred to document selection (justified by PRP)
• How to compute the major evaluation measures (precision, recall, precision-recall curve, MAP, gMAP, breakeven precision, NDCG, MRR)
• What is pooling
• What is tokenization (word segmentation, stemming, stop word removal)
• What is relevance feedback; what is pseudo relevance feedback

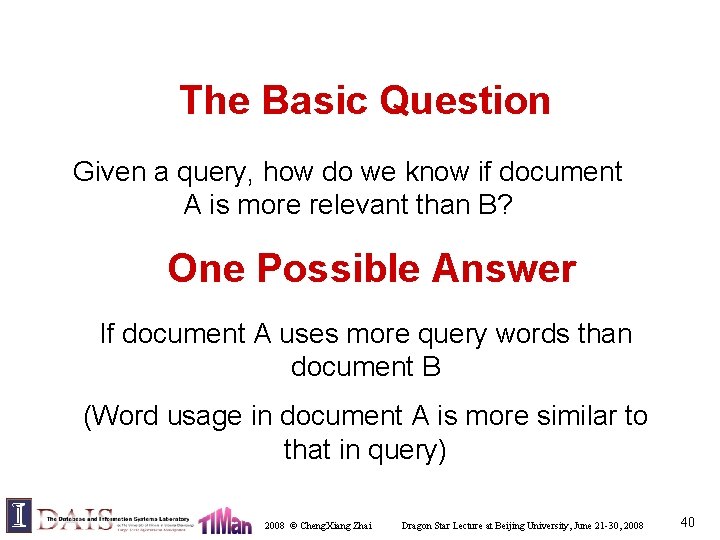

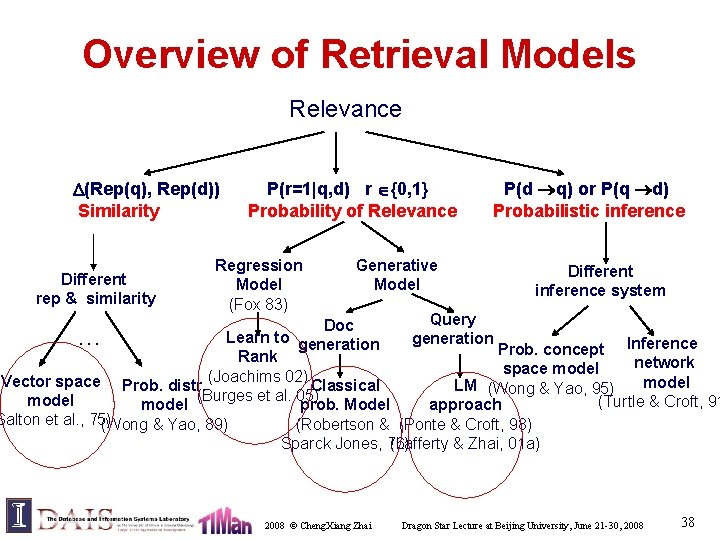

Overview of Retrieval Models
Relevance
• Similarity: $\Delta(Rep(q), Rep(d))$
  – Different rep & similarity
    • Vector space model (Salton et al., 75)
    • Prob. distr. model (Wong & Yao, 89)
    • …
• Probability of Relevance: $P(r=1 \mid q, d)$, $r \in \{0,1\}$
  – Regression Model (Fox 83)
  – Generative Model
    • Doc generation: Classical prob. model (Robertson & Sparck Jones, 76)
    • Query generation: LM approach (Ponte & Croft, 98) (Lafferty & Zhai, 01a)
  – Learn to Rank (Joachims 02) (Burges et al. 05)
• Probabilistic inference: $P(d \to q)$ or $P(q \to d)$
  – Different inference system
    • Prob. concept space model (Wong & Yao, 95)
    • Inference network model (Turtle & Croft, 91)

Retrieval Models: Vector Space

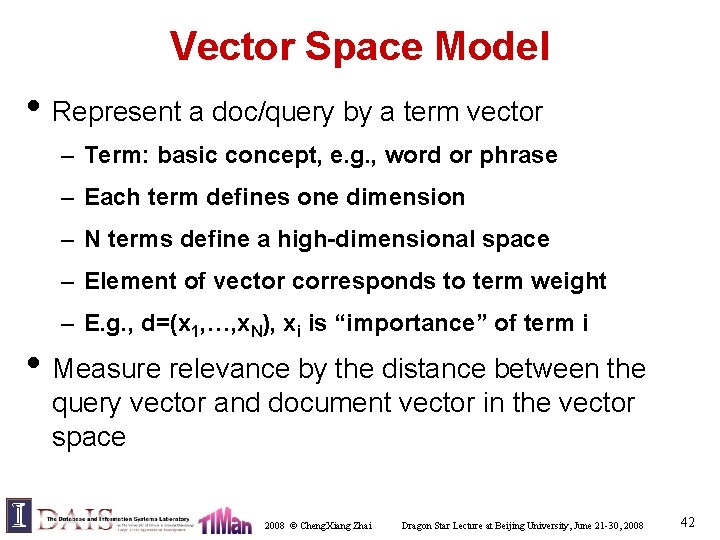

The Basic Question
Given a query, how do we know if document A is more relevant than B?
One Possible Answer
If document A uses more query words than document B (Word usage in document A is more similar to that in query)

Relevance = Similarity
• Assumptions
  – Query and document are represented similarly
  – A query can be regarded as a “document”
  – Relevance(d, q) is approximated by similarity(d, q)
• $R(q) = \{d \in C \mid f(d,q) > \theta\}$, $f(q,d) = \Delta(Rep(q), Rep(d))$
• Key issues
  – How to represent query/document?
  – How to define the similarity measure $\Delta$?

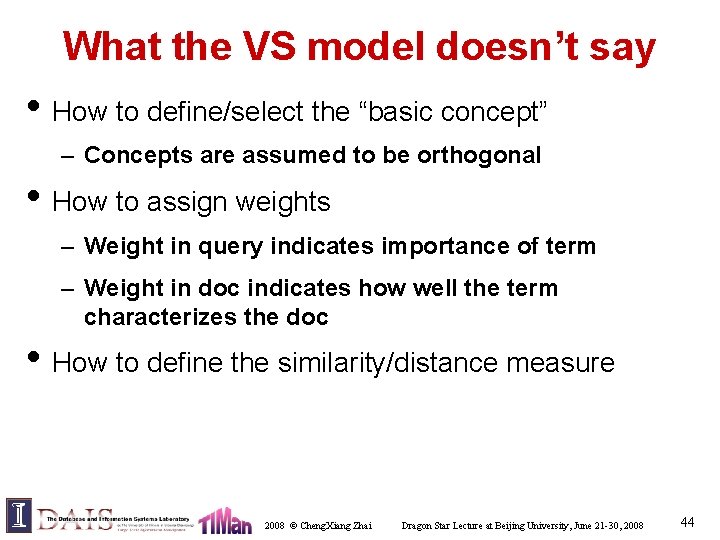

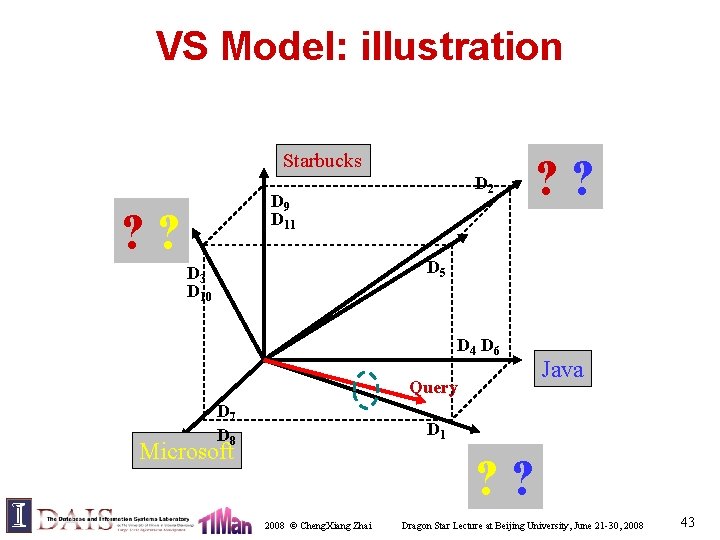

Vector Space Model
• Represent a doc/query by a term vector
  – Term: basic concept, e.g., word or phrase
  – Each term defines one dimension
  – N terms define a high-dimensional space
  – Element of vector corresponds to term weight
  – E.g., $d = (x_1, \dots, x_N)$, $x_i$ is “importance” of term i
• Measure relevance by the distance between the query vector and document vector in the vector space

VS Model: illustration
[Figure: documents D1 to D11 and the query plotted in a three-dimensional term space with axes Starbucks, Java, and Microsoft; “??” marks appear next to some of the documents.]

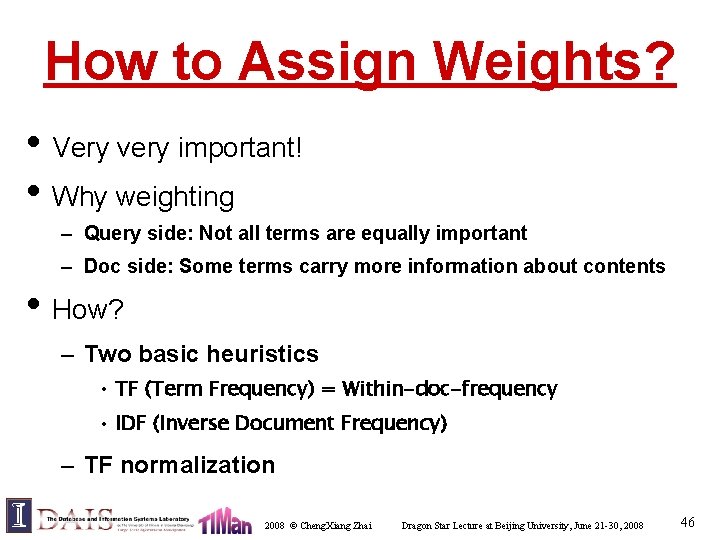

What the VS model doesn’t say
• How to define/select the “basic concept”
  – Concepts are assumed to be orthogonal
• How to assign weights
  – Weight in query indicates importance of term
  – Weight in doc indicates how well the term characterizes the doc
• How to define the similarity/distance measure

What’s a good “basic concept”?
• Orthogonal
  – Linearly independent basis vectors
  – “Non-overlapping” in meaning
• No ambiguity
• Weights can be assigned automatically and hopefully accurately
• Many possibilities: Words, stemmed words, phrases, “latent concept”, …

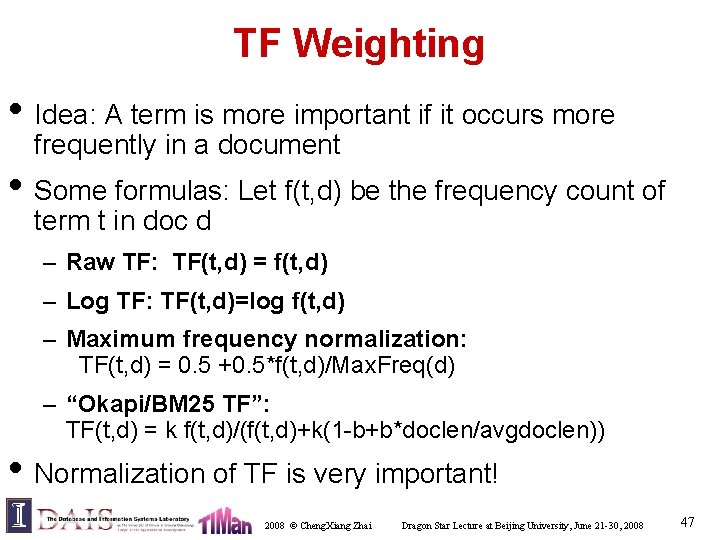

How to Assign Weights?
• Very very important!
• Why weighting
  – Query side: Not all terms are equally important
  – Doc side: Some terms carry more information about contents
• How?
  – Two basic heuristics
    • TF (Term Frequency) = Within-doc-frequency
    • IDF (Inverse Document Frequency)
  – TF normalization

TF Weighting
• Idea: A term is more important if it occurs more frequently in a document
• Some formulas: Let f(t, d) be the frequency count of term t in doc d
  – Raw TF: TF(t, d) = f(t, d)
  – Log TF: TF(t, d) = log f(t, d)
  – Maximum frequency normalization: TF(t, d) = 0.5 + 0.5*f(t, d)/MaxFreq(d)
  – “Okapi/BM25 TF”: TF(t, d) = k f(t, d)/(f(t, d) + k(1-b+b*doclen/avgdoclen))
• Normalization of TF is very important!
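The four TF variants listed above can be written out directly. This is a sketch under stated assumptions: the default values k=1.2 and b=0.75 are commonly used settings rather than values given in the lecture, the logarithm is taken as natural, and returning 0 for a term that does not occur in the log variant is a convention added here.

```python
import math


def raw_tf(f: int) -> float:
    """Raw TF: the within-document frequency itself."""
    return float(f)


def log_tf(f: int) -> float:
    """Log TF: log f(t, d); 0.0 for an absent term (assumed convention)."""
    return math.log(f) if f > 0 else 0.0


def max_norm_tf(f: int, max_freq: int) -> float:
    """Maximum frequency normalization: 0.5 + 0.5 * f / MaxFreq(d)."""
    return 0.5 + 0.5 * f / max_freq


def bm25_tf(f: int, doclen: float, avgdoclen: float,
            k: float = 1.2, b: float = 0.75) -> float:
    """Okapi/BM25 TF as written on the slide; it is bounded above by k."""
    length_norm = 1 - b + b * doclen / avgdoclen
    return k * f / (f + k * length_norm)


# A term occurring 3 times in an average-length doc vs. a doc twice as long.
print(bm25_tf(3, doclen=100, avgdoclen=100))
print(bm25_tf(3, doclen=200, avgdoclen=100))
```

Unlike raw TF, the BM25 form saturates: repeated occurrences add less and less, and the same count is worth less in a longer document.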

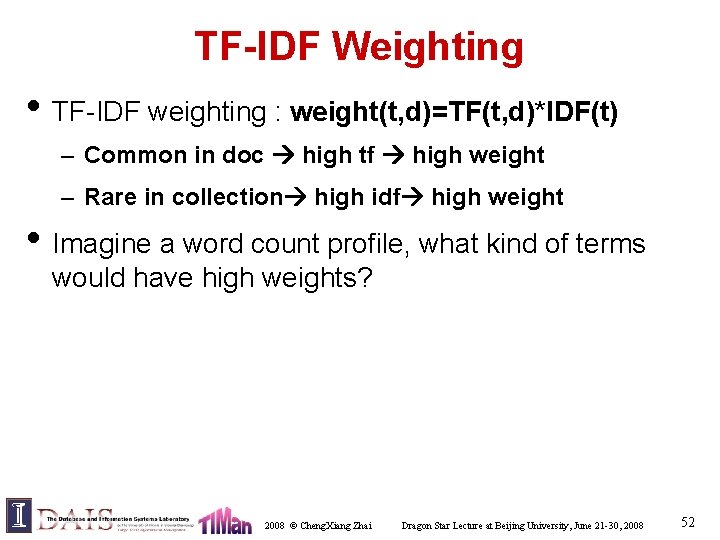

TF Normalization
• Why?
  – Document length variation
  – “Repeated occurrences” are less informative than the “first occurrence”
• Two views of document length
  – A doc is long because it uses more words
  – A doc is long because it has more contents
• Generally penalize long doc, but avoid overpenalizing (pivoted normalization)

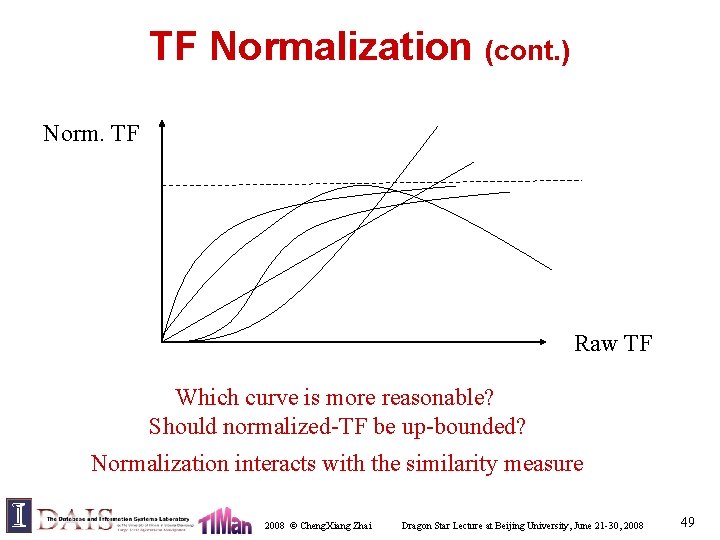

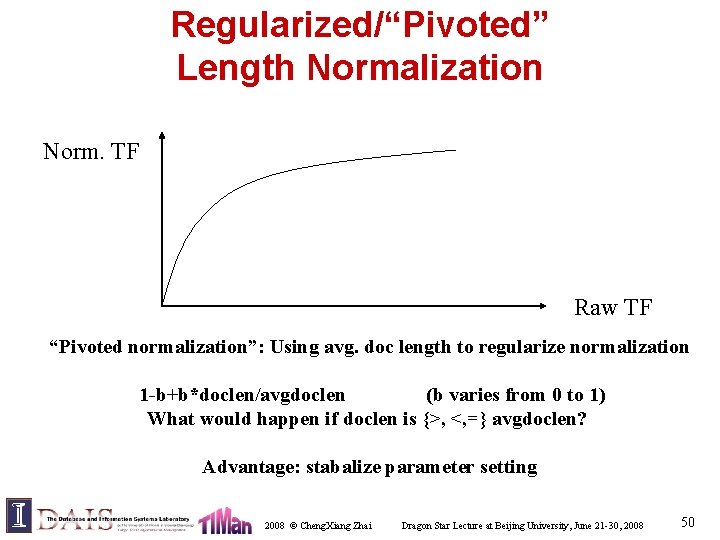

TF Normalization (cont.)
[Figure: candidate curves of Norm. TF (vertical axis) against Raw TF (horizontal axis).]
Which curve is more reasonable?
Should normalized-TF be up-bounded?
Normalization interacts with the similarity measure

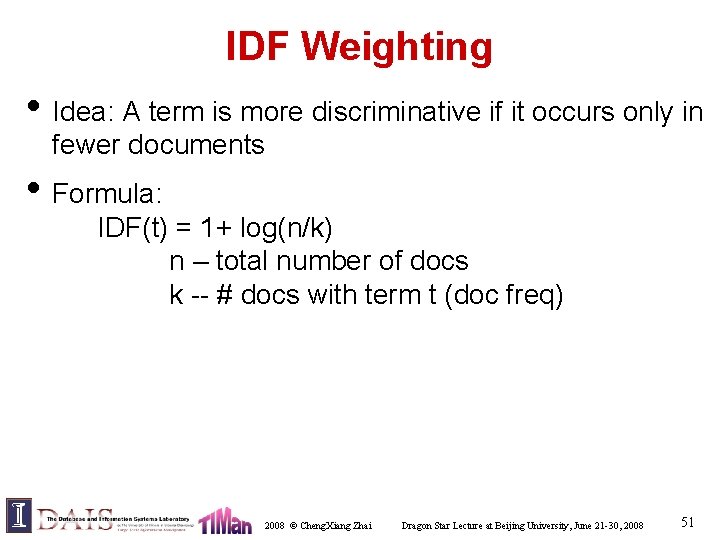

Regularized/“Pivoted” Length Normalization
[Figure: Norm. TF (vertical axis) against Raw TF (horizontal axis).]
“Pivoted normalization”: Using avg. doc length to regularize normalization
1-b+b*doclen/avgdoclen (b varies from 0 to 1)
What would happen if doclen is {>, <, =} avgdoclen?
Advantage: stabilize parameter setting
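The question about the three cases can be answered by rearranging the normalizer. Writing $|d|$ for doclen and $\overline{|d|}$ for avgdoclen (notation introduced here for brevity):

$$
1 - b + b\,\frac{|d|}{\overline{|d|}} \;=\; 1 + b\left(\frac{|d|}{\overline{|d|}} - 1\right)
$$

$$
\begin{cases}
|d| > \overline{|d|}: & \text{normalizer} > 1 \quad \text{(long doc is penalized)}\\
|d| = \overline{|d|}: & \text{normalizer} = 1 \quad \text{(no change: the pivot)}\\
|d| < \overline{|d|}: & \text{normalizer} < 1 \quad \text{(short doc is rewarded)}
\end{cases}
$$

For example, with $b = 0.5$ a document twice the average length gets a normalizer of $1 + 0.5(2 - 1) = 1.5$, while $b = 0$ turns length normalization off entirely.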

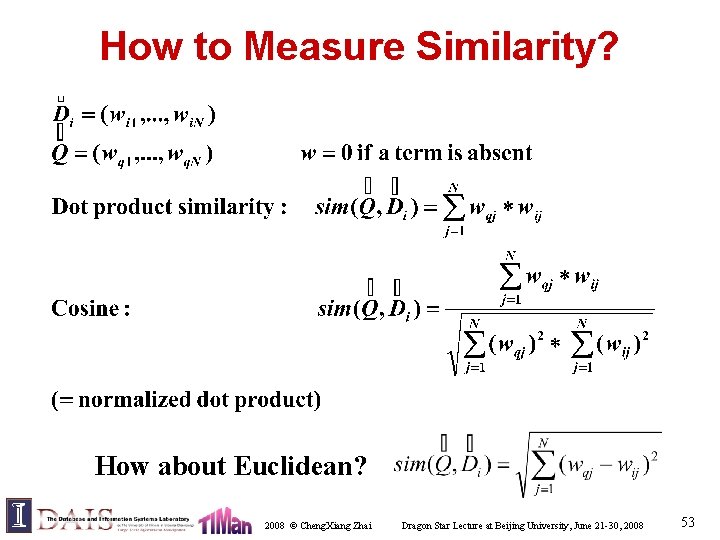

IDF Weighting
• Idea: A term is more discriminative if it occurs in fewer documents
• Formula: IDF(t) = 1 + log(n/k)
  – n: total number of docs
  – k: # docs with term t (doc freq)
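A minimal sketch of this formula, including how the document frequency k can be counted from a collection. The natural logarithm and the toy collection are assumptions; the slide does not fix the log base.

```python
import math
from typing import Dict, List, Set


def idf(n_docs: int, doc_freq: int) -> float:
    """IDF(t) = 1 + log(n / k), using the natural log (assumed base)."""
    return 1 + math.log(n_docs / doc_freq)


def document_frequencies(docs: List[Set[str]]) -> Dict[str, int]:
    """Count, for each term, the number of documents that contain it."""
    df: Dict[str, int] = {}
    for terms in docs:
        for term in terms:
            df[term] = df.get(term, 0) + 1
    return df


# Hypothetical three-document collection, each doc reduced to its term set.
docs = [{"information", "retrieval"}, {"information", "travel"}, {"information"}]
df = document_frequencies(docs)
weights = {term: idf(len(docs), k) for term, k in df.items()}
print(weights)
```

A term that appears in every document gets the minimum weight of 1, and the weight grows as the term becomes rarer.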

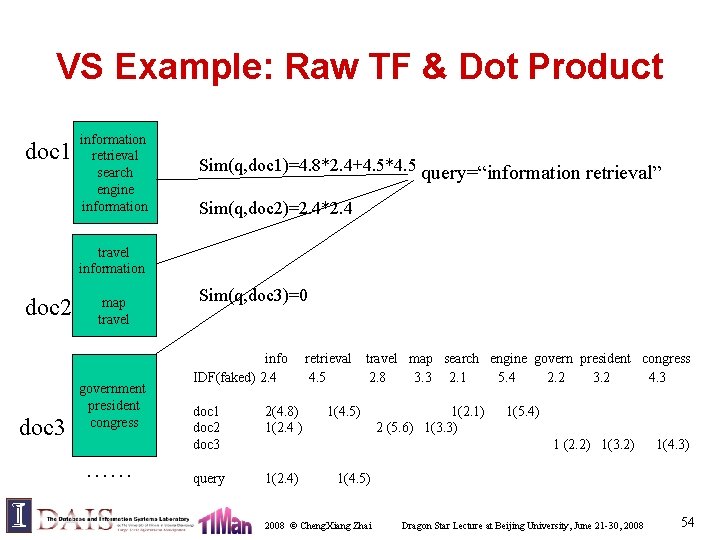

TF-IDF Weighting
• TF-IDF weighting: weight(t, d) = TF(t, d)*IDF(t)
  – Common in doc -> high tf -> high weight
  – Rare in collection -> high idf -> high weight
• Imagine a word count profile, what kind of terms would have high weights?

How to Measure Similarity?
How about Euclidean?

VS Example: Raw TF & Dot Product
query = “information retrieval”
doc1: information retrieval search engine information
doc2: travel information map travel
doc3: government president congress ……

| | info | retrieval | travel | map | search | engine | govern | president | congress |
|---|---|---|---|---|---|---|---|---|---|
| IDF (faked) | 2.4 | 4.5 | 2.8 | 3.3 | 2.1 | 5.4 | 2.2 | 3.2 | 4.3 |
| doc1 | 2 (4.8) | 1 (4.5) | | | 1 (2.1) | 1 (5.4) | | | |
| doc2 | 1 (2.4) | | 2 (5.6) | 1 (3.3) | | | | | |
| doc3 | | | | | | | 1 (2.2) | 1 (3.2) | 1 (4.3) |
| query | 1 (2.4) | 1 (4.5) | | | | | | | |

Each cell shows the raw TF with the TF*IDF weight in parentheses.
Sim(q, doc1) = 4.8*2.4 + 4.5*4.5
Sim(q, doc2) = 2.4*2.4
Sim(q, doc3) = 0
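The example can be checked by hand or with a few lines of code. The sketch below uses the (faked) IDF values and raw term counts from the slide; the sparse-dictionary representation and the function names are choices made for this illustration.

```python
from typing import Dict

# IDF values from the slide (explicitly marked there as faked).
IDF = {"info": 2.4, "retrieval": 4.5, "travel": 2.8, "map": 3.3, "search": 2.1,
       "engine": 5.4, "govern": 2.2, "president": 3.2, "congress": 4.3}

# Raw term frequencies from the slide.
doc1 = {"info": 2, "retrieval": 1, "search": 1, "engine": 1}
doc2 = {"info": 1, "travel": 2, "map": 1}
doc3 = {"govern": 1, "president": 1, "congress": 1}
query = {"info": 1, "retrieval": 1}


def weight_vector(tf: Dict[str, int]) -> Dict[str, float]:
    """Sparse TF-IDF vector: weight(t) = raw TF * IDF(t)."""
    return {term: count * IDF[term] for term, count in tf.items()}


def dot(u: Dict[str, float], v: Dict[str, float]) -> float:
    """Dot product over the terms the two sparse vectors share."""
    return sum(weight * v[term] for term, weight in u.items() if term in v)


q = weight_vector(query)
sims = {name: dot(q, weight_vector(doc))
        for name, doc in (("doc1", doc1), ("doc2", doc2), ("doc3", doc3))}
print(sims)
```

Only terms shared by the query and the document contribute, so doc3 scores 0 and doc1 ranks first because it matches both query terms.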

![What Works the Best Error Use single words Use stat What Works the Best? Error [ ] • Use single words • Use stat.](https://slidetodoc.com/presentation_image_h/1a7c993bb891fe3f4d46a7d7b3204532/image-55.jpg)

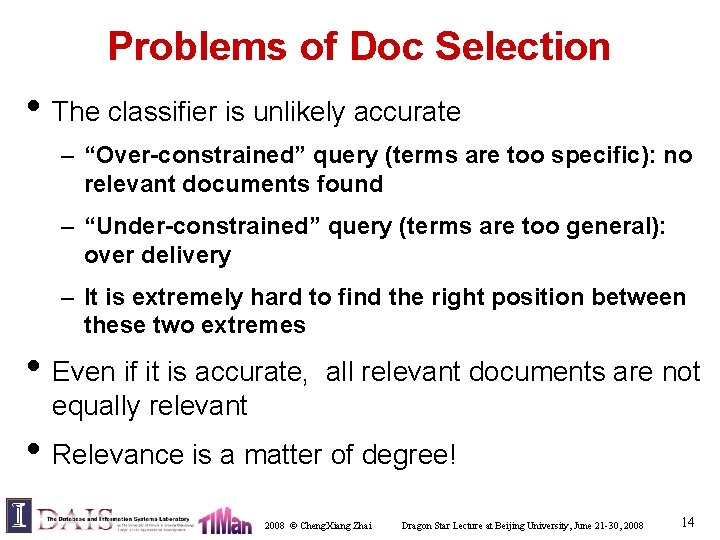

What Works the Best?
• Use single words
• Use stat. phrases
• Remove stop words
• Stemming
• Others(?)
(Singhal 2001)

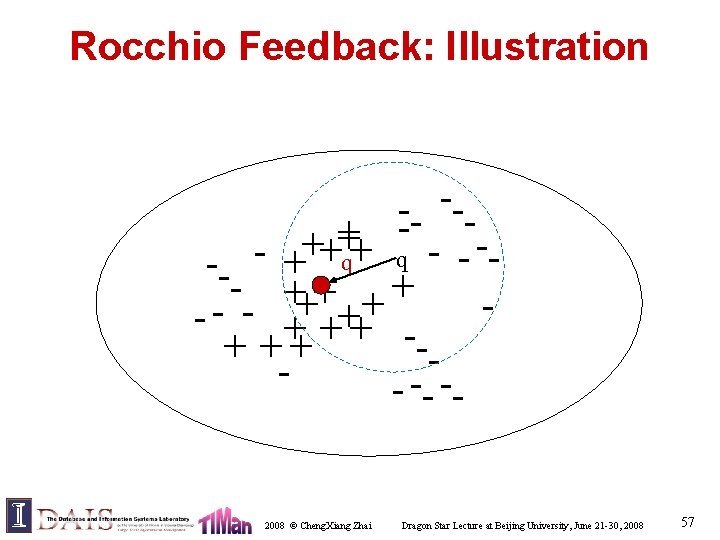

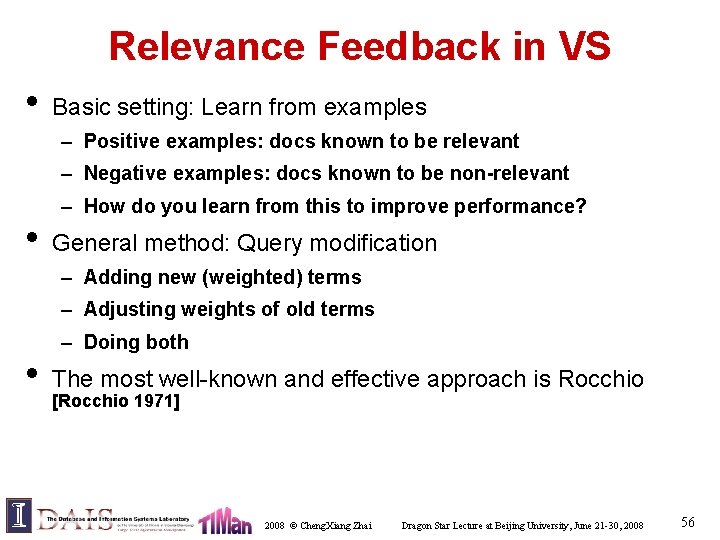

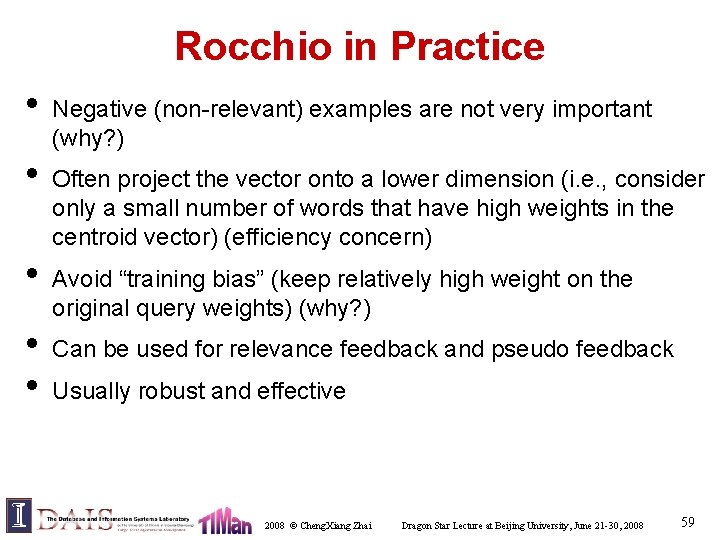

Relevance Feedback in VS
• Basic setting: Learn from examples
  – Positive examples: docs known to be relevant
  – Negative examples: docs known to be non-relevant
  – How do you learn from this to improve performance?
• General method: Query modification
  – Adding new (weighted) terms
  – Adjusting weights of old terms
  – Doing both
• The most well-known and effective approach is Rocchio [Rocchio 1971]

Rocchio Feedback: Illustration
[Figure: relevant (+) and non-relevant (-) documents in the vector space, with two query positions labeled q (before and after feedback).]

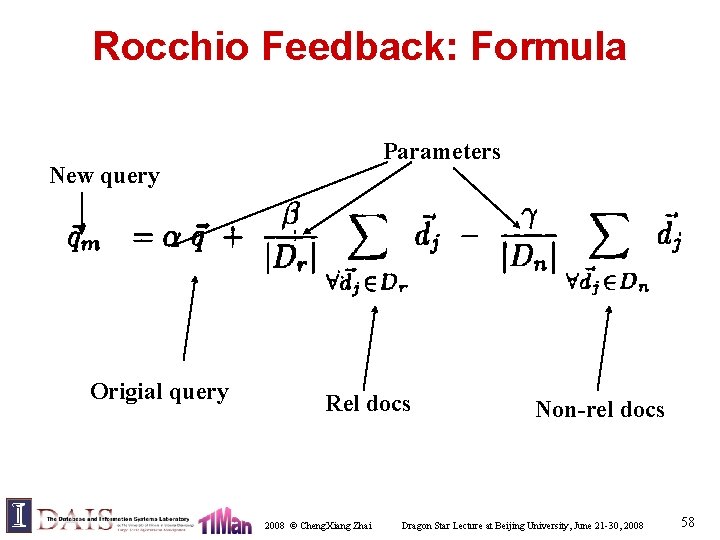

Rocchio Feedback: Formula
[Formula image not recovered. Its labels: new query, original query, rel docs, non-rel docs, parameters.]
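The formula itself did not survive extraction, but the labels that remain match the form of Rocchio's update commonly given in IR textbooks. That form is reproduced here as an assumption about what the slide showed; the symbols $\alpha, \beta, \gamma$ for the parameters and $D_r, D_n$ for the sets of relevant and non-relevant documents are the usual textbook names, not recovered from the slide.

$$
\vec{q}_m \;=\; \alpha\,\vec{q} \;+\; \frac{\beta}{|D_r|}\sum_{\vec{d}_j \in D_r}\vec{d}_j \;-\; \frac{\gamma}{|D_n|}\sum_{\vec{d}_j \in D_n}\vec{d}_j
$$

Here $\vec{q}_m$ is the new query, $\vec{q}$ the original query, and the two sums are the centroids of the relevant and non-relevant document vectors. The update moves the query toward the relevant centroid and away from the non-relevant one.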

Rocchio in Practice
• Negative (non-relevant) examples are not very important (why?)
• Often project the vector onto a lower dimension (i.e., consider only a small number of words that have high weights in the centroid vector) (efficiency concern)
• Avoid “training bias” (keep relatively high weight on the original query weights) (why?)
• Can be used for relevance feedback and pseudo feedback
• Usually robust and effective
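A compact sketch of Rocchio-style query modification that reflects the practical points above. It assumes the commonly cited form of the update (weighted original query plus relevant centroid minus non-relevant centroid); the parameter defaults, the `max_terms` truncation of the relevant centroid, and the dropping of non-positive weights are illustrative choices, not settings from the lecture.

```python
from typing import Dict, List, Optional

Vector = Dict[str, float]  # sparse term -> weight


def centroid(docs: List[Vector]) -> Vector:
    """Average of a list of sparse vectors (empty list gives an empty vector)."""
    if not docs:
        return {}
    total: Vector = {}
    for doc in docs:
        for term, weight in doc.items():
            total[term] = total.get(term, 0.0) + weight
    return {term: weight / len(docs) for term, weight in total.items()}


def rocchio(query: Vector, rel_docs: List[Vector], nonrel_docs: List[Vector],
            alpha: float = 1.0, beta: float = 0.75, gamma: float = 0.15,
            max_terms: Optional[int] = None) -> Vector:
    """Return the modified query vector.

    alpha is kept high relative to beta and gamma so the original query
    still dominates; max_terms keeps only the highest-weighted terms of
    the relevant centroid for efficiency.
    """
    rel_centroid = centroid(rel_docs)
    if max_terms is not None:
        top = sorted(rel_centroid.items(), key=lambda kv: kv[1], reverse=True)
        rel_centroid = dict(top[:max_terms])

    new_query = {term: alpha * weight for term, weight in query.items()}
    for term, weight in rel_centroid.items():
        new_query[term] = new_query.get(term, 0.0) + beta * weight
    for term, weight in centroid(nonrel_docs).items():
        new_query[term] = new_query.get(term, 0.0) - gamma * weight

    # Drop terms whose weight is not positive (a common practical choice).
    return {term: w for term, w in new_query.items() if w > 0}


# Hypothetical vectors: the user marked two docs relevant and one non-relevant.
query = {"java": 1.0}
rel_docs = [{"java": 1.0, "coffee": 2.0}, {"coffee": 1.0, "starbucks": 1.0}]
nonrel_docs = [{"java": 1.0, "microsoft": 2.0}]
new_query = rocchio(query, rel_docs, nonrel_docs, alpha=1.0, beta=0.5, gamma=0.25)
print(new_query)
```

For pseudo feedback the same function applies unchanged: pass the top-ranked documents as `rel_docs` and an empty list as `nonrel_docs`.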

“Extension” of VS Model
• Alternative similarity measures
  – Many other choices (tend not to be very effective)
  – P-norm (Extended Boolean): matching a Boolean query with a TF-IDF document vector
• Alternative representation
  – Many choices (performance varies a lot)
  – Latent Semantic Indexing (LSI) [TREC performance tends to be average]
• Generalized vector space model
  – Theoretically interesting, not seriously evaluated

Advantages of VS Model
• Empirically effective! (Top TREC performance)
• Intuitive
• Easy to implement
• Well-studied/Most evaluated
• The Smart system
  – Developed at Cornell: 1960-1999
  – Still widely used
• Warning: Many variants of TF-IDF!

Disadvantages of VS Model
• Assume term independence
• Assume query and document to be the same
• Lack of “predictive adequacy”
  – Arbitrary term weighting
  – Arbitrary similarity measure
• Lots of parameter tuning!

What You Should Know
• What is Vector Space Model (a family of models)
• What is TF-IDF weighting
• What is pivoted normalization weighting
• How Rocchio works

Roadmap
• This lecture
  – Basic concepts of TR
  – Evaluation
  – Common components
  – Vector space model
• Next lecture: continue overview of IR
  – IR system implementation
  – Other retrieval models
  – Applications of basic TR techniques