
Outline
• Nonlinear Dimension Reduction
  – Brief introduction
  – Isomap
  – LLE
  – Laplacian eigenmaps
9/5/2021 Computer Vision

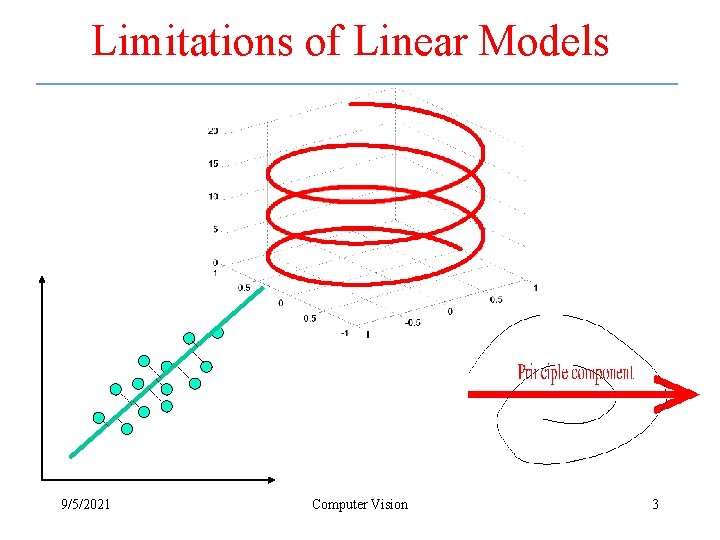

Motivations
• In computer vision, one can create large image datasets
  – These datasets cannot be described effectively using a linear model

Limitations of Linear Models

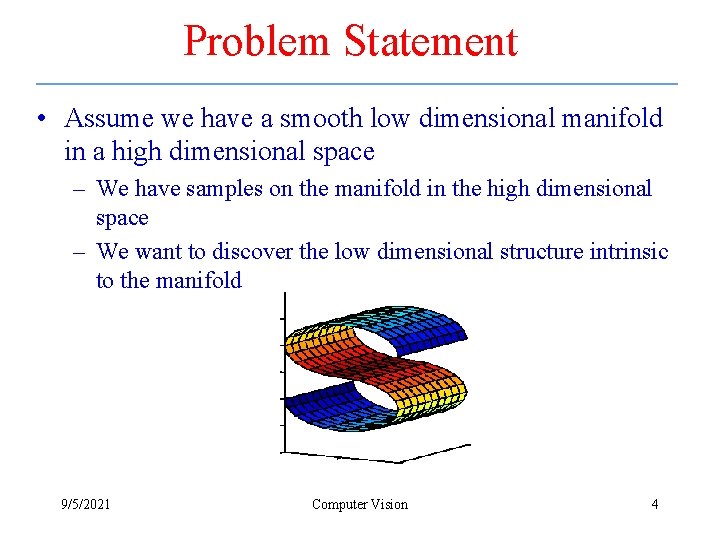

Problem Statement
• Assume we have a smooth low-dimensional manifold embedded in a high-dimensional space
  – We have samples from the manifold, given as points in the high-dimensional space
  – We want to discover the low-dimensional structure intrinsic to the manifold

Approaches
• Isomap – Tenenbaum, de Silva, Langford (2000)
• Locally Linear Embedding (LLE) – Roweis, Saul (2000)
• Laplacian Eigenmaps – Belkin, Niyogi (2002)
• Hessian Eigenmaps (HLLE) – Donoho, Grimes (2003)
• Local Tangent Space Alignment (LTSA) – Zhang, Zha (2003)
• Semidefinite Embedding (SDE) – Weinberger, Saul (2004)

Neighborhoods
• Two ways to select neighboring objects:
  – k nearest neighbors (k-NN)
    • neighbor distances can be nonuniform across the dataset
  – ε-ball
    • prior knowledge of the data is needed to choose a reasonable radius
    • the number of neighbors can vary from point to point
• These correspond to k-nearest-neighbor estimation and Parzen windows, respectively
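
The two neighborhood rules above can be sketched in a few lines of NumPy. This is a minimal illustration, not code from the slides: the function names `knn_neighbors` and `eps_ball_neighbors` and the toy data are assumptions made for this example.

```python
import numpy as np

def pairwise_distances(X):
    """Euclidean distance matrix for the rows of X (n points, p features)."""
    diff = X[:, None, :] - X[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))

def knn_neighbors(X, k):
    """Indices of the k nearest neighbors of each point (self excluded)."""
    D = pairwise_distances(X)
    np.fill_diagonal(D, np.inf)      # a point is not its own neighbor
    return np.argsort(D, axis=1)[:, :k]

def eps_ball_neighbors(X, eps):
    """One index array per point: all other points within distance eps."""
    D = pairwise_distances(X)
    np.fill_diagonal(D, np.inf)
    return [np.flatnonzero(row <= eps) for row in D]

# Toy data (illustrative values): a tight cluster plus one distant point.
X = np.array([[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [5.0, 5.0]])
knn = knn_neighbors(X, k=2)              # every point gets exactly 2 neighbors
ball = eps_ball_neighbors(X, eps=0.5)    # the distant point gets none
```

With k-NN the distant point is still linked to the cluster, over a very long edge; with the ε-ball it is left isolated. This is the trade-off the slide describes.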

Isomap
• Only geodesic distances reflect the true low-dimensional geometry of the manifold
• Geodesic distances are hard to compute even if you know the manifold
  – In a small neighborhood, Euclidean distance is a good approximation of the geodesic distance
  – For faraway points, geodesic distance is approximated by adding up a sequence of “short hops” between neighboring points

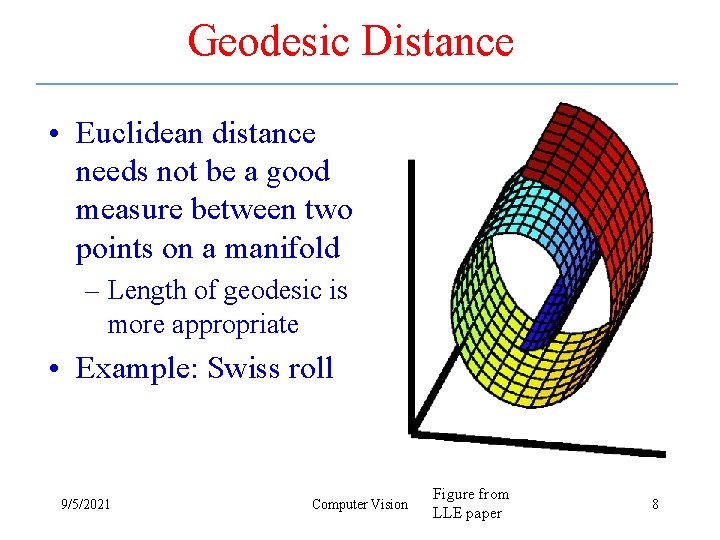

Geodesic Distance
• Euclidean distance need not be a good measure of the distance between two points on a manifold
  – The length of the geodesic is more appropriate
• Example: Swiss roll (figure from the LLE paper)
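
A small numerical check of this point, using a unit semicircle as a simpler stand-in for the Swiss roll (the curve and the sample count are assumptions chosen for illustration). The straight-line distance between the two endpoints is 2, while the distance along the curve is π; summing short hops between consecutive samples recovers the latter.

```python
import numpy as np

# Sample a unit semicircle densely; consecutive samples act as neighbors.
theta = np.linspace(0.0, np.pi, 200)
curve = np.column_stack([np.cos(theta), np.sin(theta)])

# Straight-line (Euclidean) distance between the two endpoints.
euclidean = np.linalg.norm(curve[-1] - curve[0])

# Geodesic estimate: add up the short hops between consecutive samples.
hops = np.linalg.norm(np.diff(curve, axis=0), axis=1)
geodesic_estimate = hops.sum()
```

The hop sum slightly underestimates the true arc length, because each hop is a chord, and it converges to π as the sampling gets denser.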

Isomap Algorithm
• Find the neighborhood of each object by computing the distances between all pairs of points and selecting the closest ones (k nearest, or all within ε)
• Build a graph with a node for each object and an edge between neighboring points; the Euclidean distance between the two objects is the edge weight
  – Use a shortest-path graph algorithm (e.g. Dijkstra or Floyd-Warshall) to fill in the distances between all non-neighboring points
• Apply classical MDS to this distance matrix
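
The three steps can be sketched with dense NumPy arrays as below. This is a minimal sketch under stated assumptions, not the reference implementation: it uses a symmetric k-NN graph, Floyd-Warshall for the shortest paths (O(n³), so only suitable for small n), and a hypothetical function name `isomap`.

```python
import numpy as np

def isomap(X, k, q):
    """Embed the rows of X (n x p) into q dimensions. Sketch only."""
    n = X.shape[0]
    # Step 1: pairwise Euclidean distances and k nearest neighbors.
    D = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    Dk = D.copy()
    np.fill_diagonal(Dk, np.inf)               # exclude self-matches
    nbrs = np.argsort(Dk, axis=1)[:, :k]
    # Step 2: weighted neighborhood graph (inf = no edge), then
    # all-pairs shortest paths with Floyd-Warshall.
    G = np.full((n, n), np.inf)
    np.fill_diagonal(G, 0.0)
    for i in range(n):
        G[i, nbrs[i]] = D[i, nbrs[i]]
        G[nbrs[i], i] = D[i, nbrs[i]]          # keep the graph symmetric
    for m in range(n):
        G = np.minimum(G, G[:, m:m + 1] + G[m:m + 1, :])
    if np.isinf(G).any():
        raise ValueError("neighborhood graph is disconnected; increase k")
    # Step 3: classical MDS on the geodesic distance matrix.
    J = np.eye(n) - np.ones((n, n)) / n        # centering matrix
    B = -0.5 * J @ (G ** 2) @ J
    vals, vecs = np.linalg.eigh(B)             # eigenvalues in ascending order
    top = np.argsort(vals)[::-1][:q]
    return vecs[:, top] * np.sqrt(np.maximum(vals[top], 0.0))

# Usage: unroll a semicircle (a 1-D manifold in 2-D) onto a line.
theta = np.linspace(0.0, np.pi, 50)
X = np.column_stack([np.cos(theta), np.sin(theta)])
Y = isomap(X, k=2, q=1)
```

In this example the single embedding coordinate should vary almost linearly with the angle `theta`, which is the intrinsic coordinate of the curve.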

Isomap

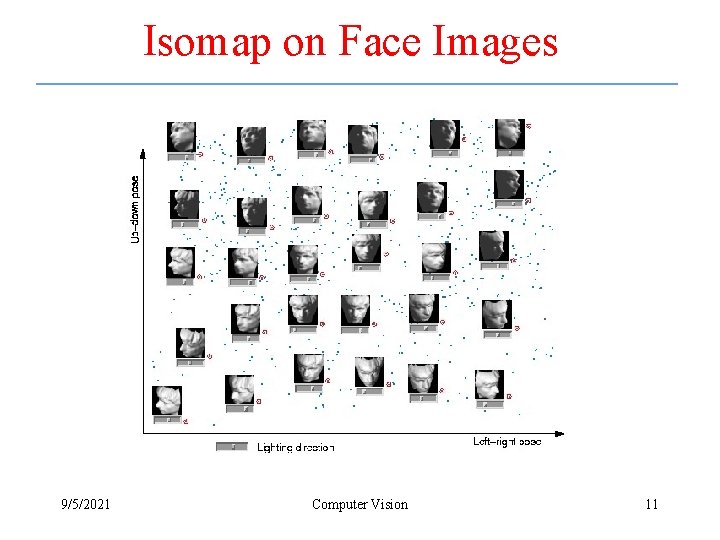

Isomap on Face Images

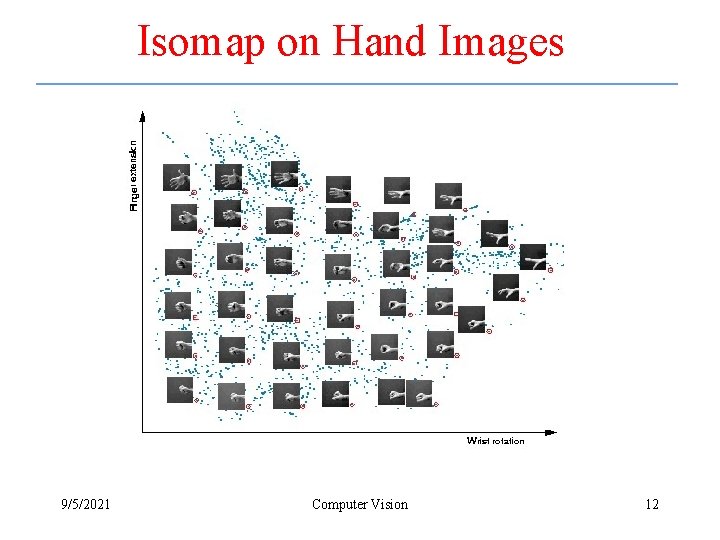

Isomap on Hand Images

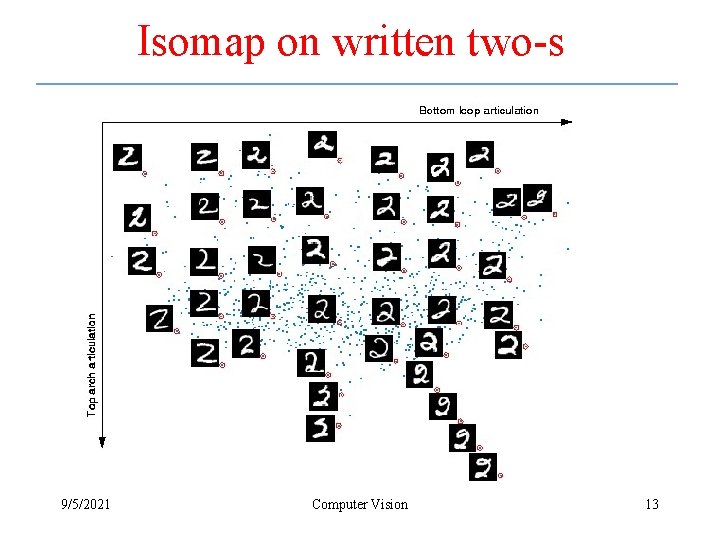

Isomap on Handwritten Twos

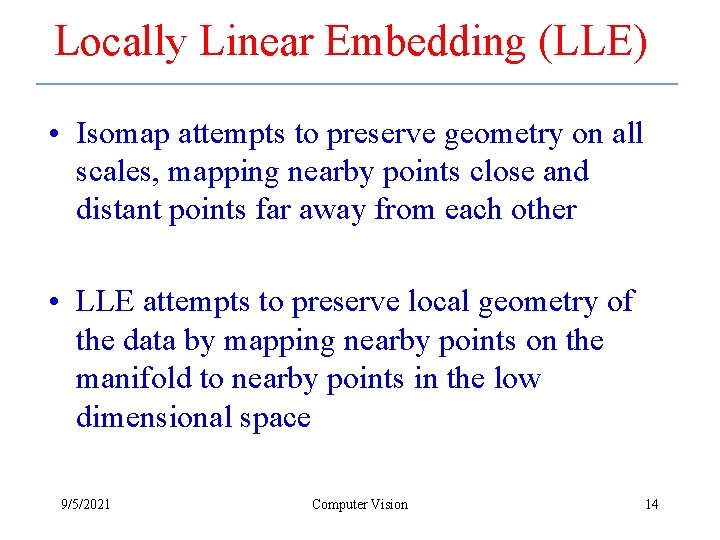

Locally Linear Embedding (LLE)
• Isomap attempts to preserve geometry on all scales, mapping nearby points close to each other and distant points far away from each other
• LLE attempts to preserve the local geometry of the data by mapping nearby points on the manifold to nearby points in the low-dimensional space

LLE – General Idea
• Locally, on a fine enough scale, everything looks linear
• Represent each object as a linear combination of its neighbors
• The representation is invariant to affine transformations
• Assumption: the same linear representation will hold in the low-dimensional space

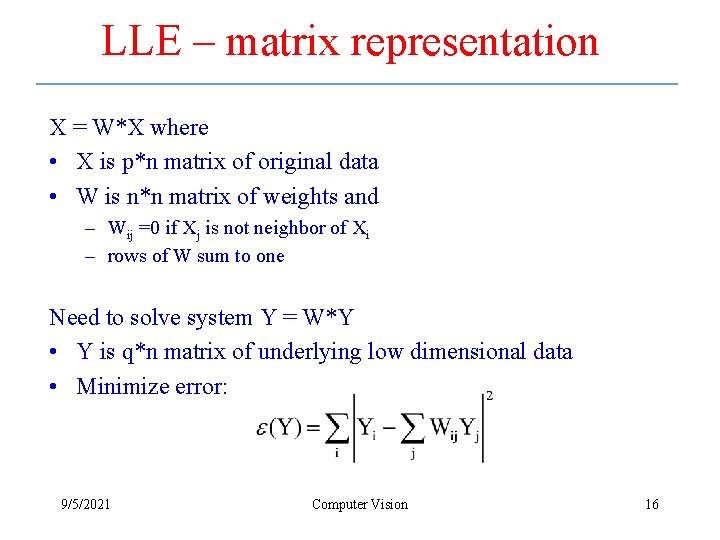

LLE – Matrix Representation
X ≈ X*W' where
• X is the p*n matrix of original data, one point Xi per column
• W is the n*n matrix of weights, and
  – Wij = 0 if Xj is not a neighbor of Xi
  – the rows of W sum to one
Need to solve the system Y ≈ Y*W'
• Y is the q*n matrix of underlying low-dimensional data, one point Yi per column
• Minimize the error: $\Phi(Y) = \sum_i \| Y_i - \sum_j W_{ij} Y_j \|^2$

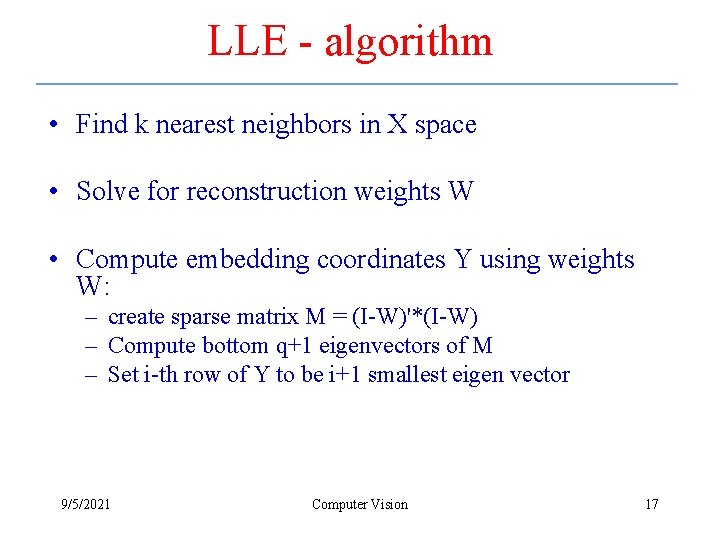
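
For a single point, the reconstruction weights can be found by solving a small linear system on the local Gram matrix and normalizing so the weights sum to one. The sketch below is an illustration under assumptions: the function name `reconstruction_weights` and the regularization constant `reg` are not from the slides; the regularizer is a common remedy when there are more neighbors than input dimensions.

```python
import numpy as np

def reconstruction_weights(x, neighbors, reg=1e-3):
    """Weights w (summing to one) that best reconstruct x from its neighbors.

    x: (p,) point; neighbors: (k, p) array, one neighbor per row.
    """
    Z = neighbors - x                     # shift so that x is the origin
    C = Z @ Z.T                           # local Gram matrix (k x k)
    C = C + reg * np.trace(C) * np.eye(len(C))   # assumed regularizer
    w = np.linalg.solve(C, np.ones(len(C)))
    return w / w.sum()                    # enforce the sum-to-one constraint

# A point halfway between its two neighbors gets weights (0.5, 0.5).
x = np.array([0.0, 0.0])
nbrs = np.array([[-1.0, 0.0], [1.0, 0.0]])
w = reconstruction_weights(x, nbrs)

# Rotating and translating the data leaves the weights unchanged.
a = 0.7
R = np.array([[np.cos(a), -np.sin(a)], [np.sin(a), np.cos(a)]])
b = np.array([3.0, -2.0])
w_moved = reconstruction_weights(R @ x + b, nbrs @ R.T + b)
```

The invariance check at the end is the reason the same weights can be reused to place the points in the low-dimensional space.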

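LLE – Algorithm
• Find the k nearest neighbors of each point in X space
• Solve for the reconstruction weights W
• Compute the embedding coordinates Y using the weights W:
  – Create the sparse matrix M = (I-W)'*(I-W)
  – Compute the bottom q+1 eigenvectors of M (those with the smallest eigenvalues)
  – Discard the bottom eigenvector, which is constant with eigenvalue zero, and set the i-th row of Y to the eigenvector with the (i+1)-th smallest eigenvalue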
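
The algorithm can be sketched end to end as follows. This is a minimal dense-matrix sketch, assuming a regularized weight solve and a hypothetical function name `lle`; a practical implementation would store M as a sparse matrix and use a sparse eigensolver. The function returns Y with one point per row (n x q), which is the transpose of the q*n convention used on the slide.

```python
import numpy as np

def lle(X, k, q, reg=1e-3):
    """Embed the rows of X (n x p) into q dimensions. Returns an n x q array."""
    n = X.shape[0]
    # Step 1: k nearest neighbors in the input space.
    sq = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(sq, np.inf)
    nbrs = np.argsort(sq, axis=1)[:, :k]
    # Step 2: reconstruction weights, one row of W per point.
    W = np.zeros((n, n))
    for i in range(n):
        Z = X[nbrs[i]] - X[i]
        C = Z @ Z.T
        C += reg * np.trace(C) * np.eye(k)     # assumed regularizer
        w = np.linalg.solve(C, np.ones(k))
        W[i, nbrs[i]] = w / w.sum()            # rows of W sum to one
    # Step 3: bottom eigenvectors of M = (I - W)' (I - W).
    I = np.eye(n)
    M = (I - W).T @ (I - W)
    vals, vecs = np.linalg.eigh(M)             # eigenvalues in ascending order
    return vecs[:, 1:q + 1]                    # skip the constant eigenvector

# Usage on a semicircle sampled at 60 points.
theta = np.linspace(0.0, np.pi, 60)
X = np.column_stack([np.cos(theta), np.sin(theta)])
Y = lle(X, k=5, q=1)
```

Because the rows of W sum to one, the constant vector is always an eigenvector of M with eigenvalue zero, which is why the first eigenvector is skipped.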

LLE

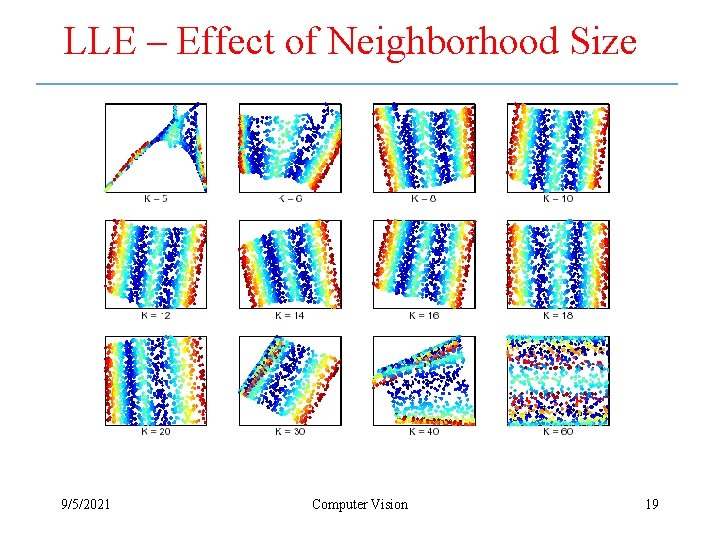

LLE – Effect of Neighborhood Size

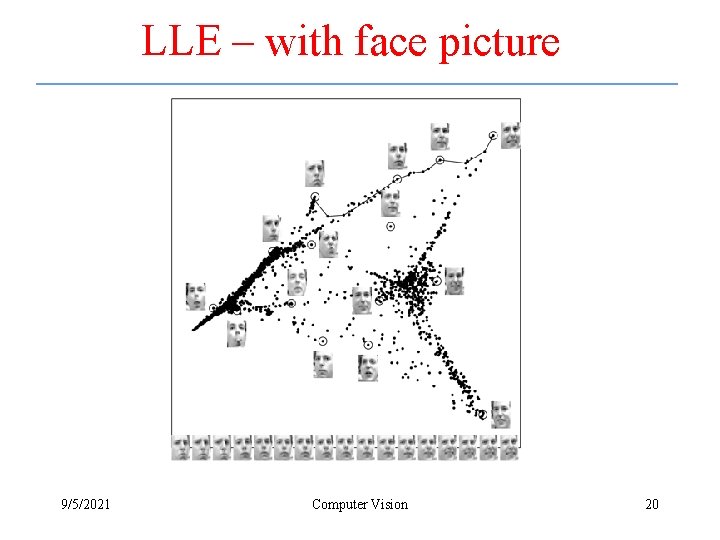

LLE – on Face Images

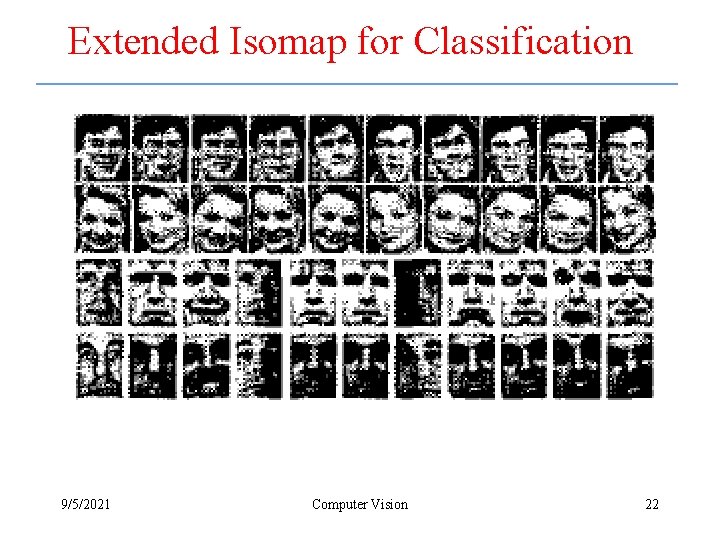

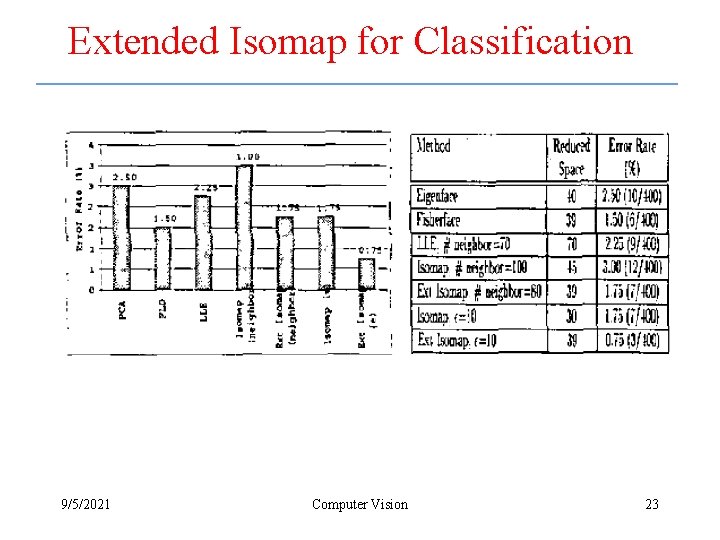

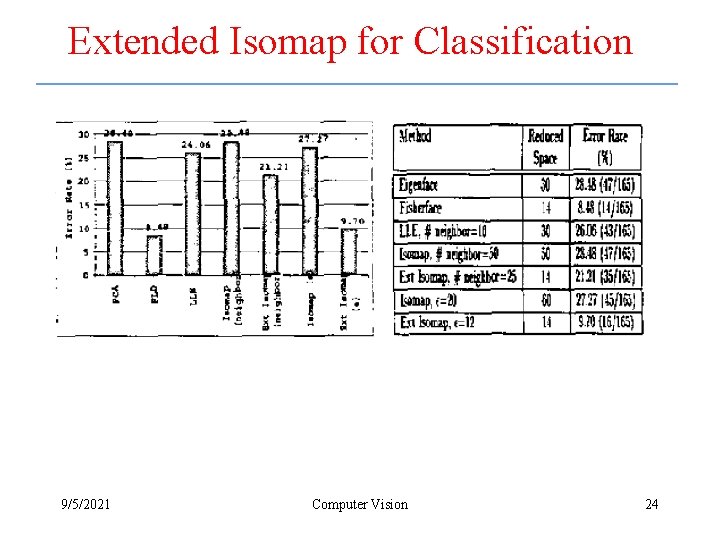

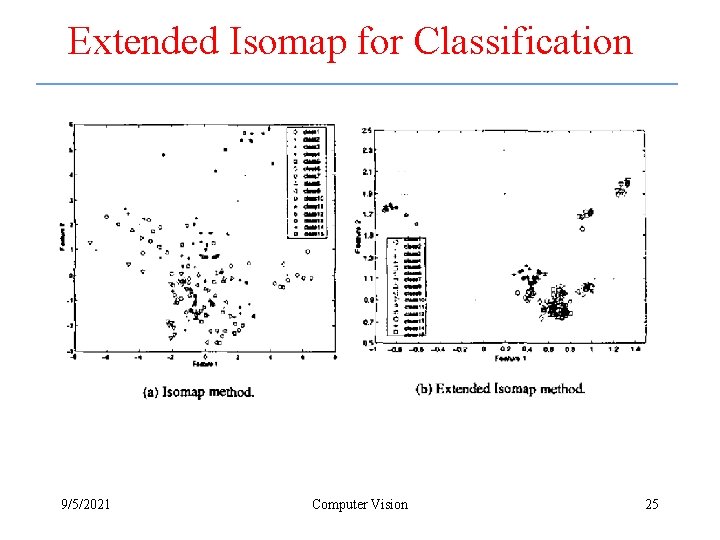

Extended Isomap for Classification
• The idea is to use the geodesic distances to the training instances as a feature vector
  – Then reduce the dimension using Fisher linear discriminant analysis
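
A rough two-class sketch of this idea is given below. It is an illustration under assumptions rather than the exact published procedure: geodesic distances come from a symmetric k-NN graph with Floyd-Warshall, the Fisher direction uses a regularized within-class scatter matrix (it is singular when there are as many features as samples), and the names `geodesic_distances` and `fisher_direction` are hypothetical.

```python
import numpy as np

def geodesic_distances(X, k):
    """Shortest-path distances over the symmetric k-NN graph of the rows of X."""
    n = X.shape[0]
    D = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    Dk = D.copy()
    np.fill_diagonal(Dk, np.inf)
    nbrs = np.argsort(Dk, axis=1)[:, :k]
    G = np.full((n, n), np.inf)
    np.fill_diagonal(G, 0.0)
    for i in range(n):
        G[i, nbrs[i]] = D[i, nbrs[i]]
        G[nbrs[i], i] = D[i, nbrs[i]]
    for m in range(n):                          # Floyd-Warshall
        G = np.minimum(G, G[:, m:m + 1] + G[m:m + 1, :])
    return G

def fisher_direction(F, labels, reg=1e-3):
    """Two-class Fisher discriminant direction for the feature rows of F."""
    F0, F1 = F[labels == 0], F[labels == 1]
    m0, m1 = F0.mean(axis=0), F1.mean(axis=0)
    Sw = (F0 - m0).T @ (F0 - m0) + (F1 - m1).T @ (F1 - m1)
    Sw += reg * np.trace(Sw) / len(Sw) * np.eye(len(Sw))   # assumed regularizer
    return np.linalg.solve(Sw, m1 - m0)

# Toy training set: a semicircle split into two classes at its midpoint.
theta = np.linspace(0.0, np.pi, 40)
X = np.column_stack([np.cos(theta), np.sin(theta)])
labels = (theta > np.pi / 2).astype(int)

F = geodesic_distances(X, k=2)   # row i = feature vector of training sample i
w = fisher_direction(F, labels)
scores = F @ w                   # 1-D discriminant feature per sample
```

A new sample would be handled the same way: compute its geodesic distances to the training instances, project onto `w`, and compare with the projected class means.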


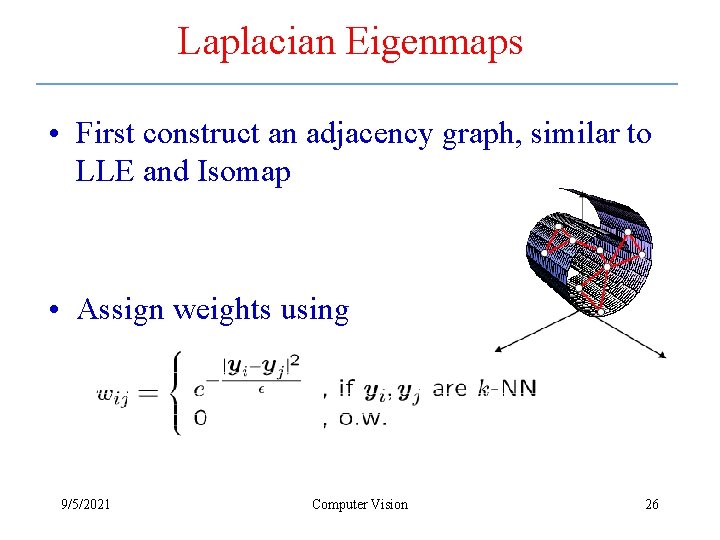

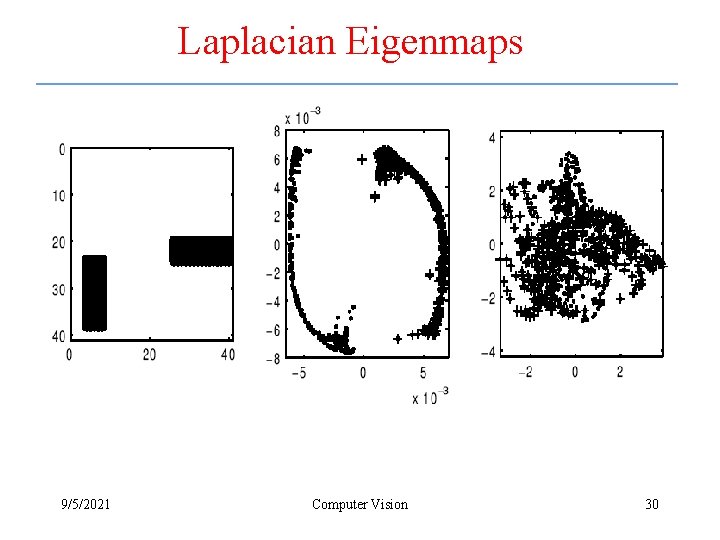

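Laplacian Eigenmaps
• First construct an adjacency graph, similar to LLE and Isomap
• Assign edge weights, typically using the heat kernel: $W_{ij} = \exp(-\|x_i - x_j\|^2 / t)$ if points i and j are connected, and $W_{ij} = 0$ otherwise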
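
A minimal sketch of the graph construction and weighting step, assuming a symmetric k-NN graph and heat-kernel weights with bandwidth `t` (the standard choice for Laplacian eigenmaps; the function name `heat_kernel_weights` and the toy data are assumptions for this example).

```python
import numpy as np

def heat_kernel_weights(X, k, t):
    """Symmetric weight matrix: exp(-||xi - xj||^2 / t) on k-NN edges, else 0."""
    n = X.shape[0]
    sq = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    order = sq.copy()
    np.fill_diagonal(order, np.inf)             # exclude self-matches
    nbrs = np.argsort(order, axis=1)[:, :k]
    A = np.zeros((n, n), dtype=bool)
    A[np.arange(n)[:, None], nbrs] = True
    A = A | A.T      # edge if either point is among the other's k nearest
    return np.where(A, np.exp(-sq / t), 0.0)

# Four points on a line (illustrative values), one nearest neighbor each.
X = np.array([[0.0], [1.0], [2.5], [10.0]])
W = heat_kernel_weights(X, k=1, t=1.0)
```

Setting every nonzero weight to 1 instead is the simpler variant, which avoids having to choose `t`.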

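Laplacian Eigenmaps
• Compute eigenvalues and eigenvectors for the generalized eigenvalue problem $L f = \lambda D f$, where $D_{ii} = \sum_j W_{ji}$ and $L = D - W$
  – The embedding built from the eigenvectors with the smallest nonzero eigenvalues minimizes $\sum_{i,j} W_{ij} \|y_i - y_j\|^2$ subject to a scale constraint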
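
The generalized problem L f = λ D f, with D the diagonal degree matrix and L = D − W, can be solved with plain NumPy by switching to the symmetrically normalized Laplacian. The sketch below assumes a symmetric weight matrix W with no isolated nodes; the function name `laplacian_eigenmap` and the path-graph example are illustrative choices.

```python
import numpy as np

def laplacian_eigenmap(W, q):
    """Return the q smallest nonzero eigenvalues and their embedding (n x q)."""
    d = W.sum(axis=1)
    if np.any(d <= 0):
        raise ValueError("every node needs at least one weighted edge")
    L = np.diag(d) - W
    # L f = lambda D f  <=>  (D^-1/2 L D^-1/2) v = lambda v  with  f = D^-1/2 v
    d_inv_sqrt = 1.0 / np.sqrt(d)
    L_sym = d_inv_sqrt[:, None] * L * d_inv_sqrt[None, :]
    vals, vecs = np.linalg.eigh(L_sym)          # eigenvalues in ascending order
    F = d_inv_sqrt[:, None] * vecs
    return vals[1:q + 1], F[:, 1:q + 1]         # drop the constant solution

# Usage: a path graph with 6 nodes and unit weights.
n = 6
W = np.zeros((n, n))
idx = np.arange(n - 1)
W[idx, idx + 1] = 1.0
W[idx + 1, idx] = 1.0
vals, Y = laplacian_eigenmap(W, q=1)
```

For the path graph the one-dimensional embedding orders the nodes along the path, so points that are connected end up close together.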

