
Outline Ø Motivation Ø Mutual Consistency: CH Model Ø Noisy Best-Response: QRE Model Ø Instant Convergence: EWA Learning Duke Ph. D Summer Camp Teck H. Ho 1

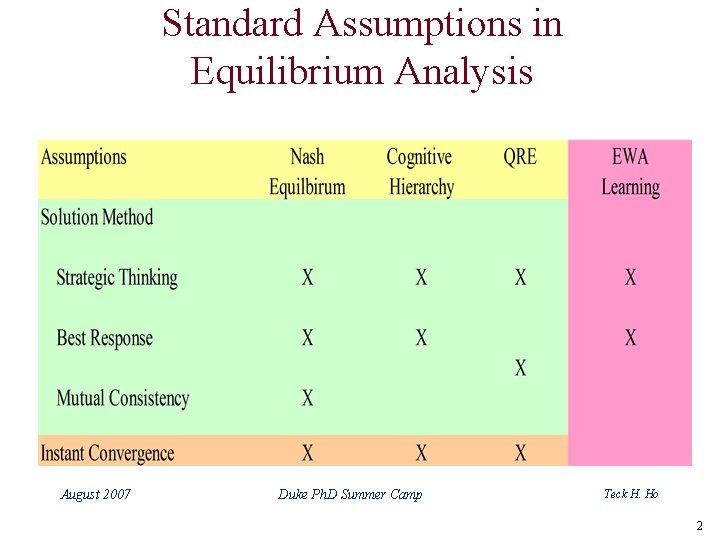

Standard Assumptions in Equilibrium Analysis August 2007 Duke Ph. D Summer Camp Teck H. Ho 2

Motivation: EWA Learning

- Provide a formal model of dynamic adjustment based on information feedback (e.g., a model of equilibration)
- Allow players with varying levels of expertise to behave differently (adaptation vs. sophistication)

Outline

- Research Question
- Criteria of a Good Model
- Experience-Weighted Attraction (EWA 1.0) Learning Model
- Sophisticated EWA Learning (EWA 2.0)

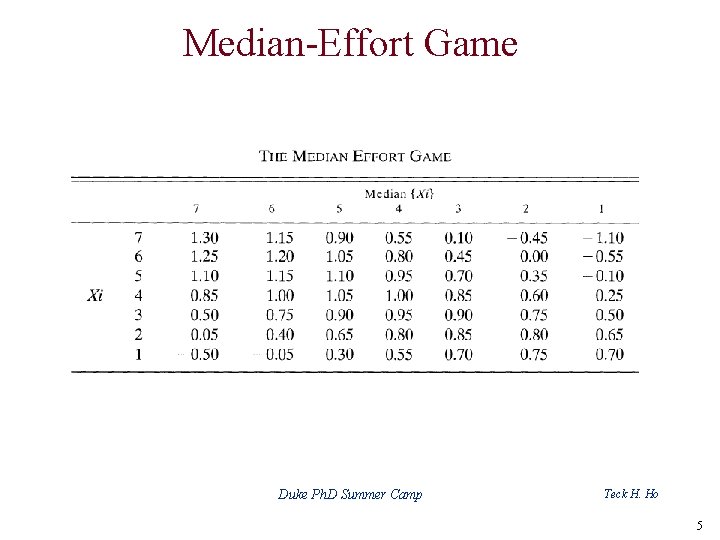

Median-Effort Game

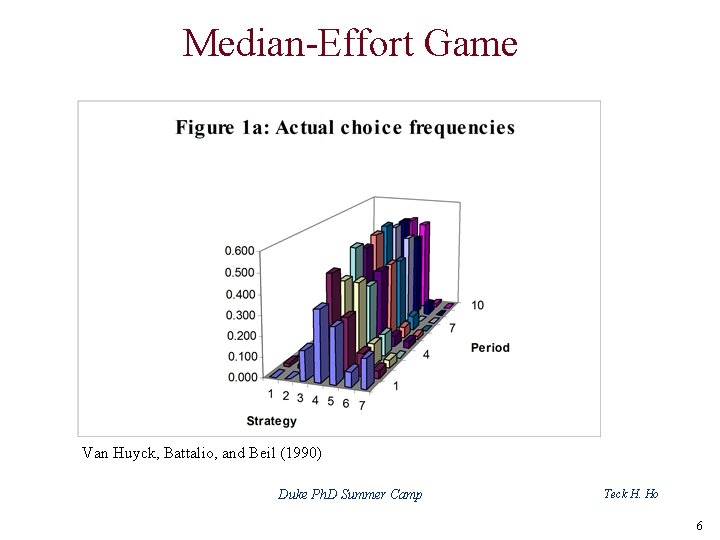

Median-Effort Game: Van Huyck, Battalio, and Beil (1990)

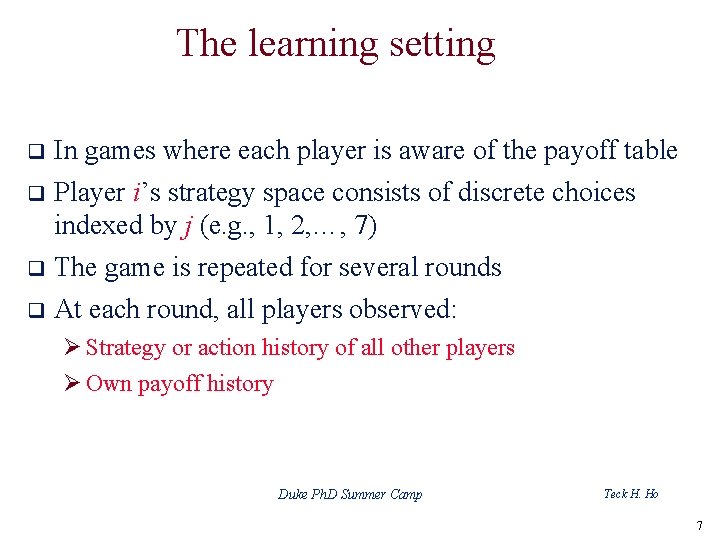

The Learning Setting

- Games in which each player knows the payoff table
- Player i's strategy space consists of discrete choices indexed by j (e.g., 1, 2, …, 7)
- The game is repeated for several rounds
- At each round, all players observe:
  - the strategy or action history of all other players
  - their own payoff history

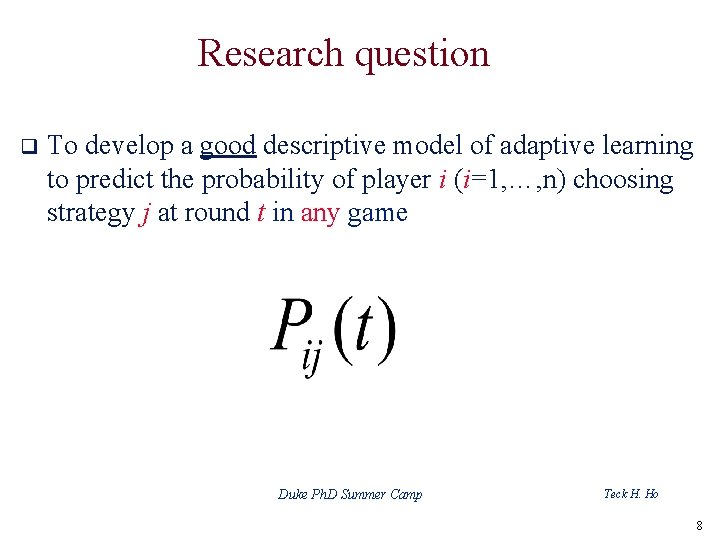

Research Question

- To develop a good descriptive model of adaptive learning that predicts the probability of player i (i = 1, …, n) choosing strategy j at round t in any game

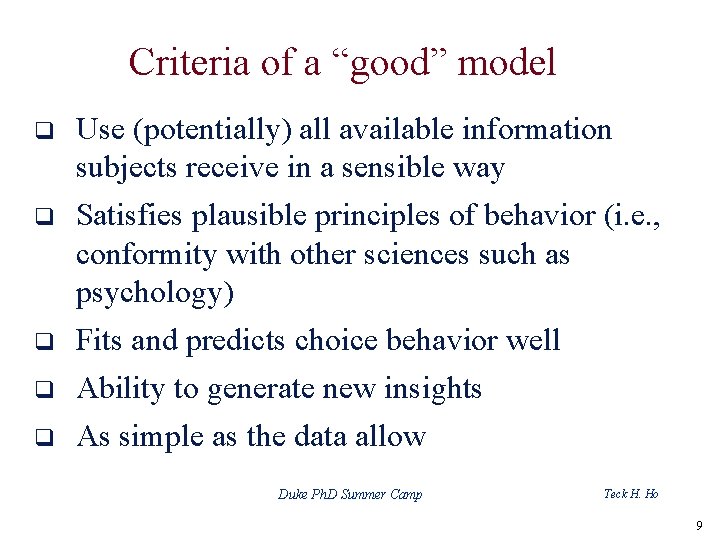

Criteria of a “Good” Model

- Uses (potentially) all available information subjects receive, in a sensible way
- Satisfies plausible principles of behavior (i.e., conforms with other sciences such as psychology)
- Fits and predicts choice behavior well
- Generates new insights
- Is as simple as the data allow

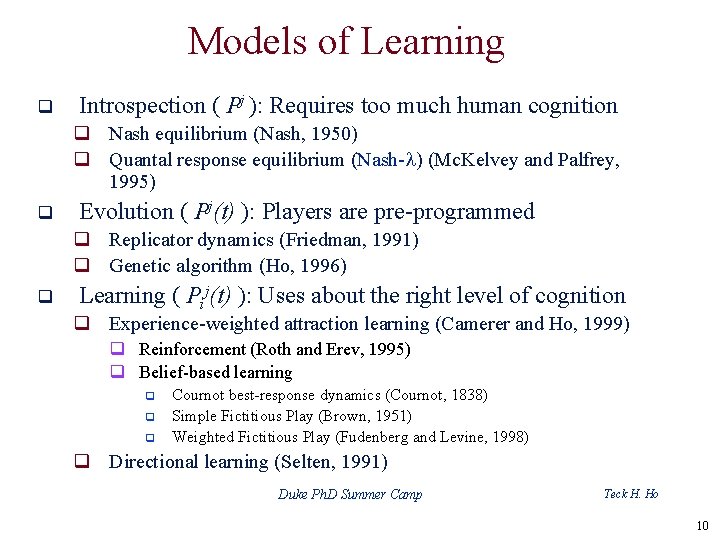

Models of Learning

- Introspection (P^j): requires too much human cognition
  - Nash equilibrium (Nash, 1950)
  - Quantal response equilibrium (Nash-λ) (McKelvey and Palfrey, 1995)
- Evolution (P^j(t)): players are pre-programmed
  - Replicator dynamics (Friedman, 1991)
  - Genetic algorithm (Ho, 1996)
- Learning (P_i^j(t)): uses about the right level of cognition
  - Experience-weighted attraction learning (Camerer and Ho, 1999)
  - Reinforcement (Roth and Erev, 1995)
  - Belief-based learning
    - Cournot best-response dynamics (Cournot, 1838)
    - Simple fictitious play (Brown, 1951)
    - Weighted fictitious play (Fudenberg and Levine, 1998)
  - Directional learning (Selten, 1991)

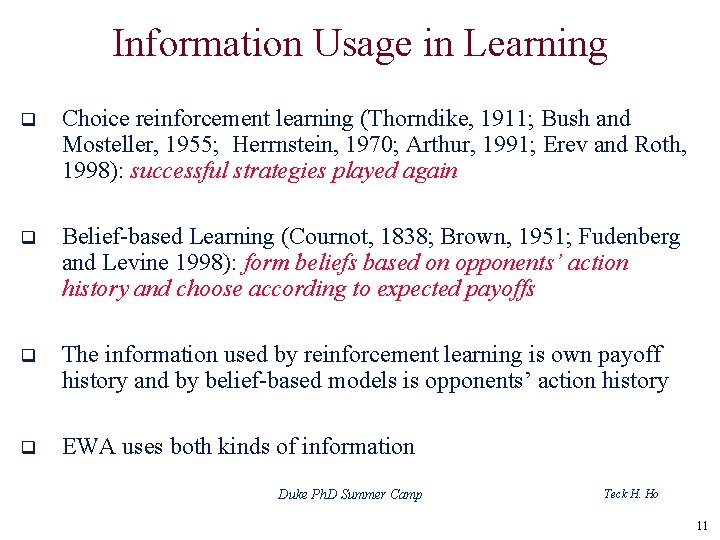

Information Usage in Learning

- Choice reinforcement learning (Thorndike, 1911; Bush and Mosteller, 1955; Herrnstein, 1970; Arthur, 1991; Erev and Roth, 1998): successful strategies are played again.
- Belief-based learning (Cournot, 1838; Brown, 1951; Fudenberg and Levine, 1998): players form beliefs from opponents' action history and choose according to expected payoffs.
- Reinforcement learning uses a player's own payoff history; belief-based models use opponents' action history.
- EWA uses both kinds of information.

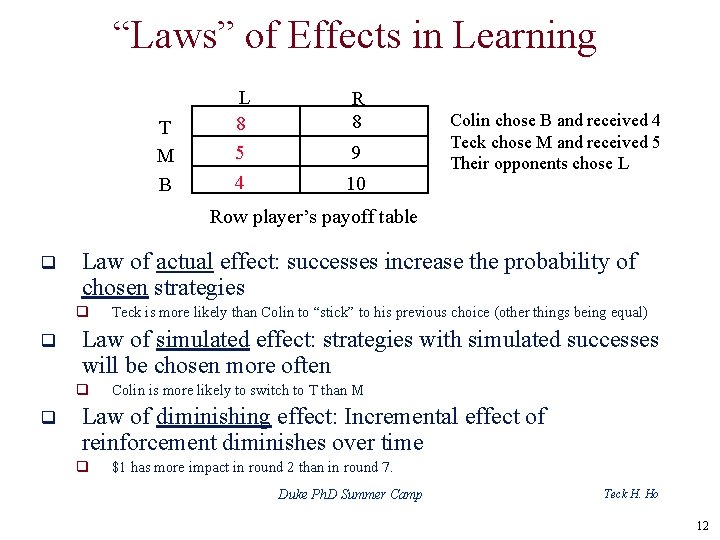

“Laws” of Effects in Learning

Row player's payoff table:

|   | L | R  |
|---|---|----|
| T | 8 | 8  |
| M | 5 | 9  |
| B | 4 | 10 |

Colin chose B and received 4. Teck chose M and received 5. Their opponents chose L.

- Law of actual effect: successes increase the probability of chosen strategies.
  - Teck is more likely than Colin to “stick” to his previous choice (other things being equal).
- Law of simulated effect: strategies with simulated successes will be chosen more often.
  - Colin is more likely to switch to T than to M.
- Law of diminishing effect: the incremental effect of reinforcement diminishes over time.
  - $1 has more impact in round 2 than in round 7.
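The first two laws can be made concrete with the payoff table above. The sketch below gives the chosen strategy its actual payoff and every unchosen strategy a fraction `delta` of its foregone payoff; `delta = 0.5` is a hypothetical value chosen only for illustration, and the function name `reinforcements` is likewise illustrative.

```python
# Row player's payoff table from the slide: rows T, M, B; columns L, R.
PAYOFF = {
    "T": {"L": 8, "R": 8},
    "M": {"L": 5, "R": 9},
    "B": {"L": 4, "R": 10},
}


def reinforcements(chosen: str, opponent: str, delta: float) -> dict:
    """Reinforcement each row strategy receives after one round.

    The chosen strategy gets its actual payoff (law of actual effect);
    every unchosen strategy gets delta times its foregone payoff
    (law of simulated effect).
    """
    return {
        s: (1.0 if s == chosen else delta) * row[opponent]
        for s, row in PAYOFF.items()
    }


delta = 0.5  # hypothetical simulation weight, for illustration only
colin = reinforcements("B", "L", delta)  # Colin played B, opponents played L
teck = reinforcements("M", "L", delta)   # Teck played M, opponents played L
print(colin)  # {'T': 4.0, 'M': 2.5, 'B': 4.0}
print(teck)   # {'T': 4.0, 'M': 5.0, 'B': 2.0}
```

Teck's chosen strategy is reinforced more than Colin's (5 vs. 4), and Colin's foregone T is reinforced more than his foregone M (4.0 vs. 2.5). Setting `delta = 0` removes the simulated effect entirely; `delta = 1` makes simulated and actual effects equally strong.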

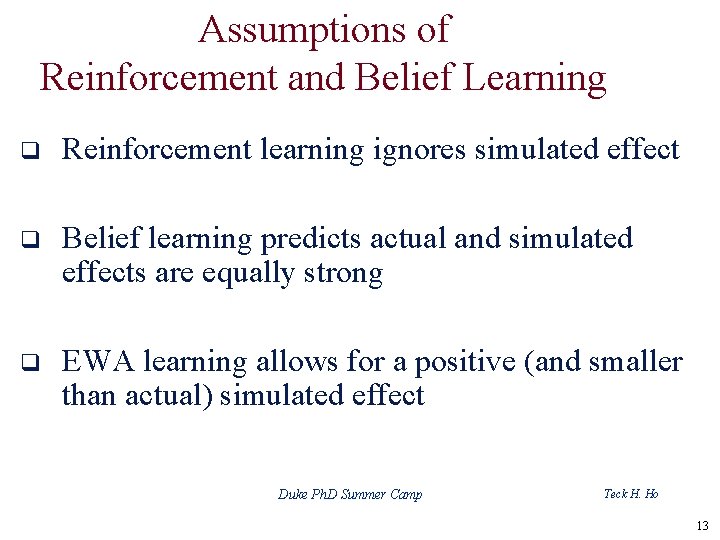

Assumptions of Reinforcement and Belief Learning

- Reinforcement learning ignores the simulated effect.
- Belief learning predicts that actual and simulated effects are equally strong.
- EWA learning allows for a positive (but smaller than actual) simulated effect.

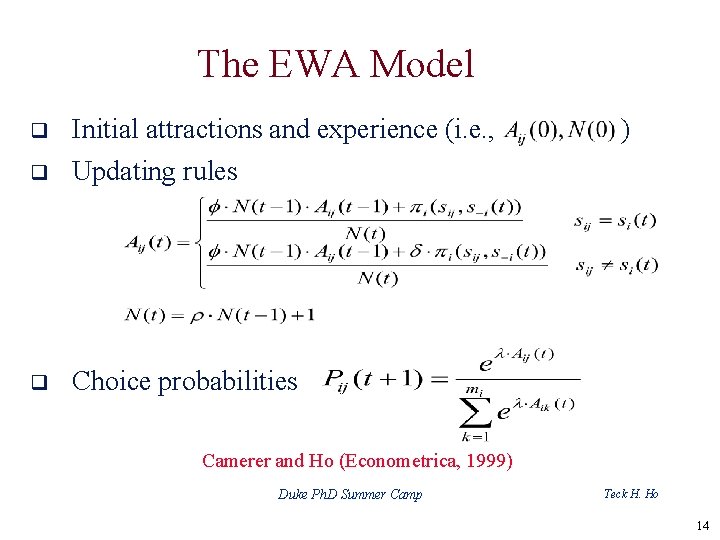

The EWA Model

- Initial attractions and experience
- Updating rules
- Choice probabilities

Camerer and Ho (Econometrica, 1999)
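The slide's formulas survive only as an image. As a hedged reconstruction, the EWA rules are commonly written as below, starting from initial attractions A_i^j(0) and initial experience N(0). Here I(s_i^j, s_i(t)) is 1 if player i chose s_i^j in round t and 0 otherwise, π_i is player i's payoff, and λ is the payoff sensitivity of the logit choice rule. Writing the decay of experience as φ(1 − κ) follows the κ parametrization of later EWA papers (Camerer and Ho, 1999, use a single parameter ρ in its place); treat that choice as an assumption.

```latex
\begin{aligned}
N(t) &= \phi\,(1-\kappa)\,N(t-1) + 1, \qquad t \ge 1,\\[4pt]
A_i^j(t) &= \frac{\phi\,N(t-1)\,A_i^j(t-1)
  + \bigl[\delta + (1-\delta)\,I\bigl(s_i^j, s_i(t)\bigr)\bigr]\,
    \pi_i\bigl(s_i^j, s_{-i}(t)\bigr)}{N(t)},\\[4pt]
P_i^j(t+1) &= \frac{e^{\lambda A_i^j(t)}}{\sum_{k} e^{\lambda A_i^k(t)}}.
\end{aligned}
```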

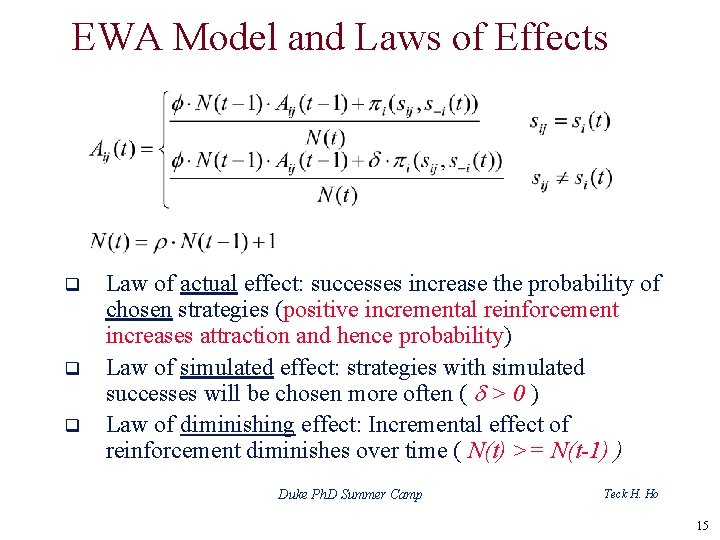

EWA Model and Laws of Effects

- Law of actual effect: successes increase the probability of chosen strategies (positive incremental reinforcement increases attraction and hence probability)
- Law of simulated effect: strategies with simulated successes will be chosen more often (δ > 0)
- Law of diminishing effect: the incremental effect of reinforcement diminishes over time (N(t) ≥ N(t−1))
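The diminishing effect comes from dividing each new payoff by the experience weight N(t). A minimal sketch, assuming the update N(t) = φ(1 − κ)N(t−1) + 1 and hypothetical values φ = 1, κ = 0, N(0) = 1, reproduces the "$1 in round 2 vs. round 7" comparison; `payoff_weights` is an illustrative name.

```python
def payoff_weights(rounds: int, phi: float, kappa: float, n0: float) -> list:
    """Weight 1 / N(t) that a newly received payoff carries in rounds 1..rounds.

    Assumes the experience update N(t) = phi * (1 - kappa) * N(t-1) + 1.
    """
    weights = []
    n = n0
    for _ in range(rounds):
        n = phi * (1 - kappa) * n + 1
        weights.append(1 / n)
    return weights


# Hypothetical values: no decay (phi = 1), averaging (kappa = 0), N(0) = 1.
w = payoff_weights(7, phi=1.0, kappa=0.0, n0=1.0)
print(round(w[1], 3), round(w[6], 3))  # round 2 vs. round 7: 0.333 0.125
```

With κ = 1, N(t) stays at 1 and every payoff carries full weight, so that corner of the model has no diminishing effect.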

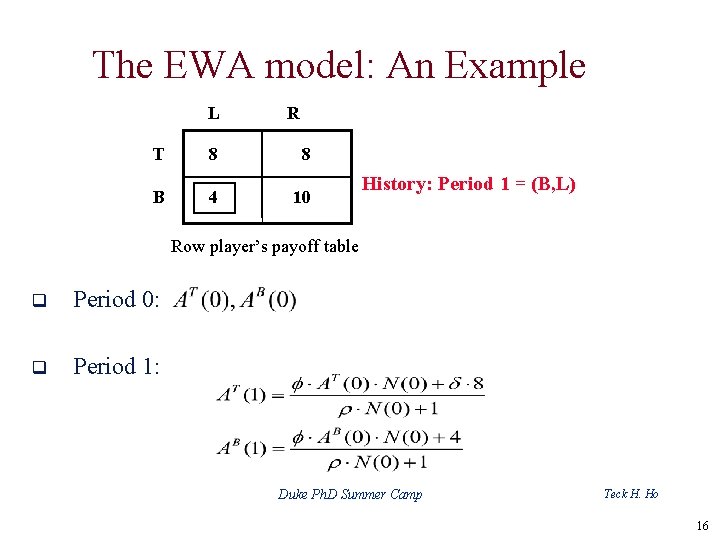

The EWA Model: An Example

Row player's payoff table:

|   | L | R  |
|---|---|----|
| T | 8 | 8  |
| B | 4 | 10 |

History: Period 1 = (B, L)

Attractions for Period 0 and Period 1 are shown in the figure.
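The slide's period-by-period numbers are in the image, so the sketch below redoes one EWA step for the history (B, L) under purely hypothetical starting values: A(0) = 0 for both strategies, N(0) = 1, φ = 0.9, δ = 0.75, κ = 0, λ = 1. It assumes the update N(t) = φ(1 − κ)N(t−1) + 1; the function names are illustrative.

```python
import math

# Row player's payoff table from the slide.
PAYOFF = {"T": {"L": 8, "R": 8}, "B": {"L": 4, "R": 10}}


def ewa_update(attractions, n_prev, chosen, opponent, phi, delta, kappa):
    """One EWA step: returns the new attractions A(t) and experience N(t)."""
    n_new = phi * (1 - kappa) * n_prev + 1
    updated = {}
    for s, a_prev in attractions.items():
        weight = 1.0 if s == chosen else delta  # actual vs. simulated payoff
        updated[s] = (phi * n_prev * a_prev + weight * PAYOFF[s][opponent]) / n_new
    return updated, n_new


def logit(attractions, lam):
    """Logit choice probabilities from attractions."""
    m = max(attractions.values())  # subtract the max for numerical stability
    exps = {s: math.exp(lam * (a - m)) for s, a in attractions.items()}
    total = sum(exps.values())
    return {s: e / total for s, e in exps.items()}


# Hypothetical period-0 values and parameters, for illustration only.
A0, N0 = {"T": 0.0, "B": 0.0}, 1.0
A1, N1 = ewa_update(A0, N0, chosen="B", opponent="L", phi=0.9, delta=0.75, kappa=0.0)
print({s: round(a, 3) for s, a in A1.items()}, round(N1, 3))  # {'T': 3.158, 'B': 2.105} 1.9
print(logit(A1, lam=1.0))
```

With δ = 0.75 the foregone payoff of T (0.75 × 8 = 6) outweighs B's actual payoff of 4, so T becomes more attractive even though B was played; with δ below 0.5 the ordering flips.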

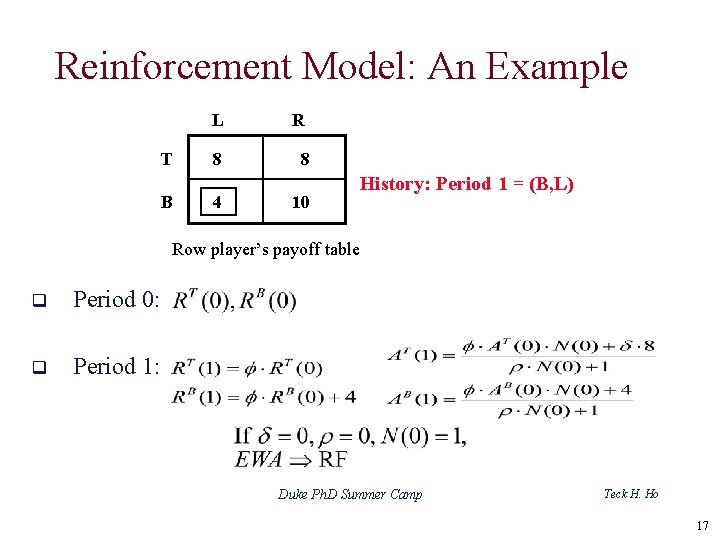

Reinforcement Model: An Example

Row player's payoff table:

|   | L | R  |
|---|---|----|
| T | 8 | 8  |
| B | 4 | 10 |

History: Period 1 = (B, L)

Attractions for Period 0 and Period 1 are shown in the figure.
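For contrast, a cumulative reinforcement step (δ = 0, so only the chosen strategy is updated) on the same history might look like the sketch below. The zero starting attractions and φ = 0.9 are hypothetical, and `cumulative_reinforcement` is an illustrative name.

```python
# Row player's payoff table from the slide.
PAYOFF = {"T": {"L": 8, "R": 8}, "B": {"L": 4, "R": 10}}


def cumulative_reinforcement(attractions, chosen, opponent, phi):
    """Cumulative reinforcement step.

    Past attractions decay by phi; only the chosen strategy is reinforced,
    by its realized payoff. Foregone payoffs are ignored.
    """
    return {
        s: phi * a + (PAYOFF[s][opponent] if s == chosen else 0.0)
        for s, a in attractions.items()
    }


# Hypothetical period-0 attractions and decay rate, for illustration only.
A1 = cumulative_reinforcement({"T": 0.0, "B": 0.0}, chosen="B", opponent="L", phi=0.9)
print(A1)  # {'T': 0.0, 'B': 4.0}
```

T's attraction does not move even though T would have earned 8 against L: this is what "reinforcement learning ignores the simulated effect" means in practice.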

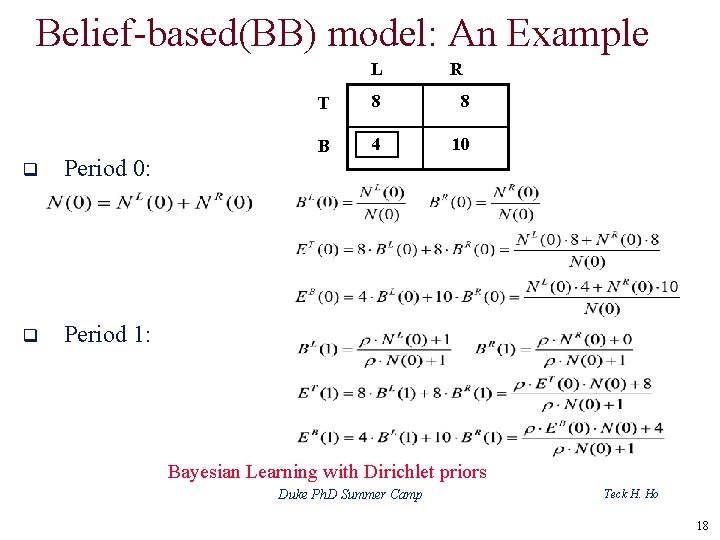

Belief-Based (BB) Model: An Example

Row player's payoff table:

|   | L | R  |
|---|---|----|
| T | 8 | 8  |
| B | 4 | 10 |

Bayesian learning with Dirichlet priors. The Period 0 and Period 1 updates are shown in the figure.
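A minimal sketch of belief learning on this table, assuming a hypothetical Dirichlet prior with one pseudo-count on each of L and R. Beliefs are the normalized counts; in period 0 this gives expected payoffs T = 8 and B = 7, and after observing L (belief 2/3 on L) it gives T = 8 and B = 6. The parameter φ discounts old counts as in weighted fictitious play; function names are illustrative.

```python
# Row player's payoff table from the slide.
PAYOFF = {"T": {"L": 8, "R": 8}, "B": {"L": 4, "R": 10}}


def update_counts(counts, observed, phi):
    """Weighted fictitious play update of the opponent-action counts.

    phi = 1 is Bayesian updating of a Dirichlet prior (simple fictitious play);
    phi = 0 keeps only the last observation (Cournot best response).
    """
    return {k: phi * c + (1.0 if k == observed else 0.0) for k, c in counts.items()}


def expected_payoffs(counts):
    """Expected payoff of each row strategy under beliefs proportional to counts."""
    total = sum(counts.values())
    return {s: sum(c / total * row[k] for k, c in counts.items()) for s, row in PAYOFF.items()}


prior = {"L": 1.0, "R": 1.0}          # hypothetical Dirichlet prior counts
print(expected_payoffs(prior))         # period 0: L and R equally likely
posterior = update_counts(prior, "L", phi=1.0)
print(expected_payoffs(posterior))     # period 1, after observing L
```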

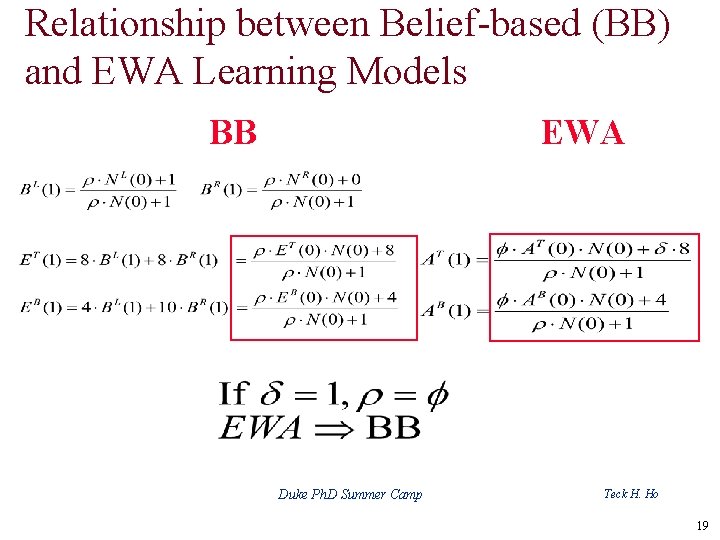

Relationship between Belief-Based (BB) and EWA Learning Models (figure panels: BB, EWA)
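The relationship can be checked numerically (a check, not a proof). The sketch below assumes EWA with δ = 1 and κ = 0, sets N(0) to the total prior count and A(0) to the expected payoffs under the prior, and compares the resulting attractions with weighted-fictitious-play expected payoffs along a random opponent history. With δ = 1 every strategy is updated by its payoff regardless of what the player chose, so the player's own choices never enter. The prior, φ = 0.8, and the random history are hypothetical.

```python
import random

# Row player's payoff table from the slide.
PAYOFF = {"T": {"L": 8, "R": 8}, "B": {"L": 4, "R": 10}}


def expected_payoffs(counts):
    """Expected payoff of each row strategy under beliefs proportional to counts."""
    total = sum(counts.values())
    return {s: sum(c * row[k] for k, c in counts.items()) / total for s, row in PAYOFF.items()}


def max_gap_bb_vs_ewa(history, prior, phi):
    """Largest gap between belief-learning expected payoffs and EWA attractions
    (delta = 1, kappa = 0) along an opponent history."""
    counts = dict(prior)
    n = sum(prior.values())                  # EWA N(0) = total prior count
    attractions = expected_payoffs(counts)   # EWA A(0) = prior expected payoffs
    gap = 0.0
    for opp in history:
        # Belief learning: decay old counts by phi, add the new observation.
        counts = {k: phi * c + (1.0 if k == opp else 0.0) for k, c in counts.items()}
        # EWA with delta = 1 and kappa = 0: N(t) = phi * N(t-1) + 1.
        n_new = phi * n + 1
        attractions = {s: (phi * n * a + PAYOFF[s][opp]) / n_new for s, a in attractions.items()}
        n = n_new
        beliefs = expected_payoffs(counts)
        gap = max(gap, max(abs(attractions[s] - beliefs[s]) for s in PAYOFF))
    return gap


rng = random.Random(0)
history = [rng.choice("LR") for _ in range(20)]  # hypothetical opponent play
print(max_gap_bb_vs_ewa(history, prior={"L": 1.0, "R": 2.0}, phi=0.8))  # zero up to rounding
```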

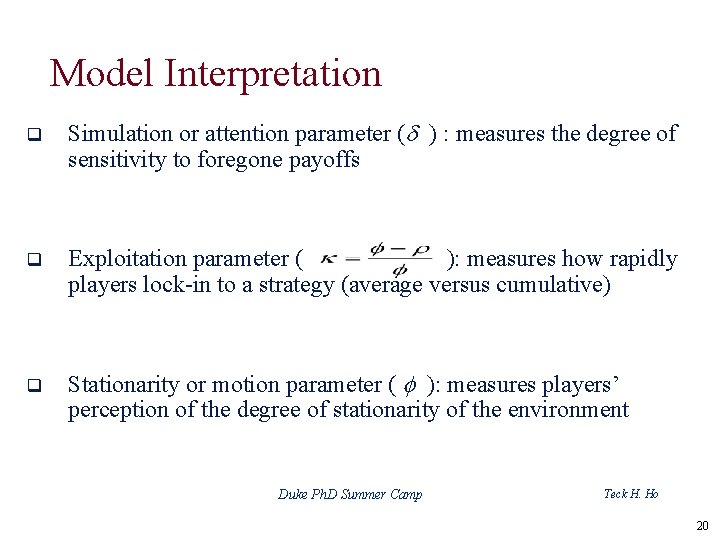

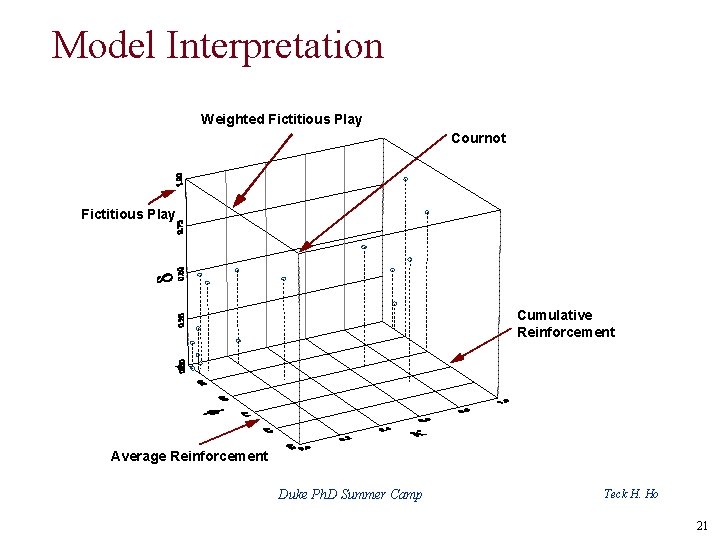

Model Interpretation

- Simulation or attention parameter (δ): measures the degree of sensitivity to foregone payoffs
- Exploitation parameter (κ): measures how rapidly players lock in to a strategy (average versus cumulative)
- Stationarity or motion parameter (φ): measures players' perception of the degree of stationarity of the environment

Model Interpretation (figure: the EWA cube, with corners labeled Weighted Fictitious Play, Cournot, Fictitious Play, Cumulative Reinforcement, and Average Reinforcement)
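Under the parametrization N(t) = φ(1 − κ)N(t−1) + 1 (an assumption here), the labeled corners correspond to: δ = 1, κ = 0 for weighted fictitious play (φ = 0 gives Cournot, φ = 1 gives fictitious play), and δ = 0 for reinforcement (κ = 1 cumulative, κ = 0 average). The sketch below illustrates the "lock-in" reading of κ: a strategy played every round that earns 1, against an alternative that would have earned 0, with hypothetical N(0) = 1, A(0) = 0, φ = 1, and λ = 1.

```python
import math


def prob_of_played_strategy(rounds, phi, kappa, lam):
    """Logit probability of a strategy played every round that earns 1, versus an
    alternative that would have earned 0 (so delta does not matter here).

    Uses N(t) = phi * (1 - kappa) * N(t-1) + 1 with hypothetical N(0) = 1 and
    A(0) = 0 for both strategies.
    """
    n, a_played, a_other = 1.0, 0.0, 0.0
    for _ in range(rounds):
        n_new = phi * (1 - kappa) * n + 1
        a_played = (phi * n * a_played + 1.0) / n_new
        a_other = (phi * n * a_other) / n_new
        n = n_new
    return 1 / (1 + math.exp(-lam * (a_played - a_other)))


for kappa in (0.0, 1.0):  # average vs. cumulative reinforcement corners
    print(kappa, prob_of_played_strategy(rounds=10, phi=1.0, kappa=kappa, lam=1.0))
```

Averaging keeps the attraction bounded by the payoff (10/11 after 10 rounds), while cumulating lets it grow to 10, so the choice probability locks in much faster when κ = 1.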

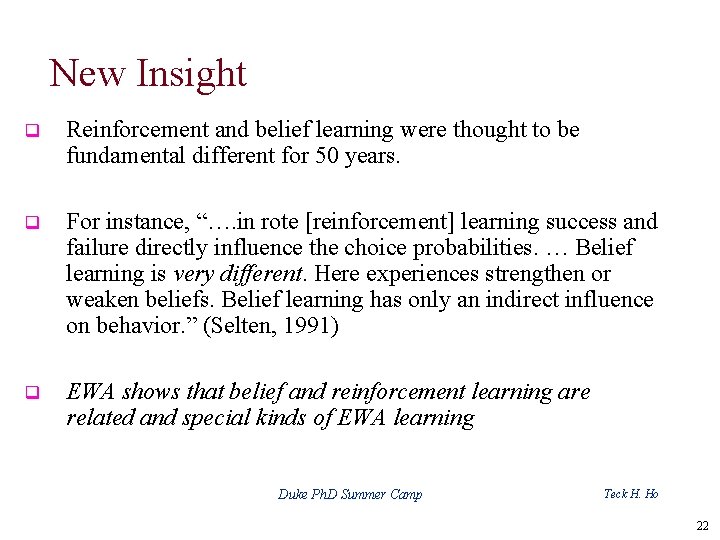

New Insight

- Reinforcement and belief learning were thought to be fundamentally different for 50 years.
- For instance: “…. in rote [reinforcement] learning success and failure directly influence the choice probabilities. … Belief learning is very different. Here experiences strengthen or weaken beliefs. Belief learning has only an indirect influence on behavior.” (Selten, 1991)
- EWA shows that belief and reinforcement learning are related: both are special cases of EWA learning.

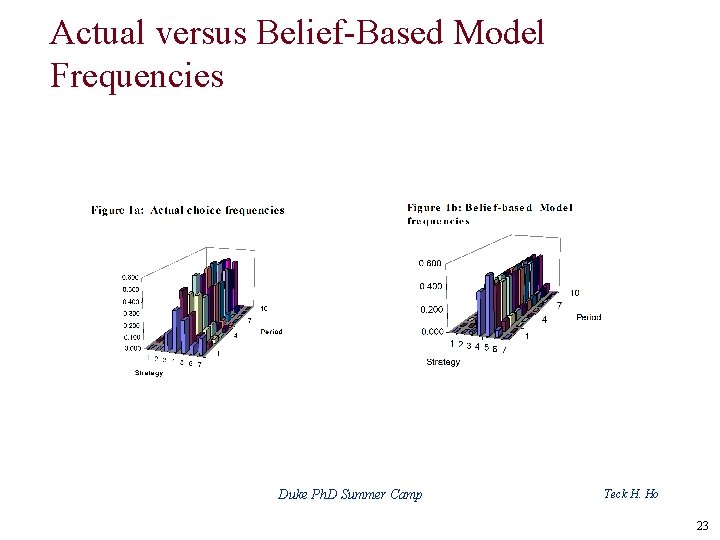

Actual versus Belief-Based Model Frequencies

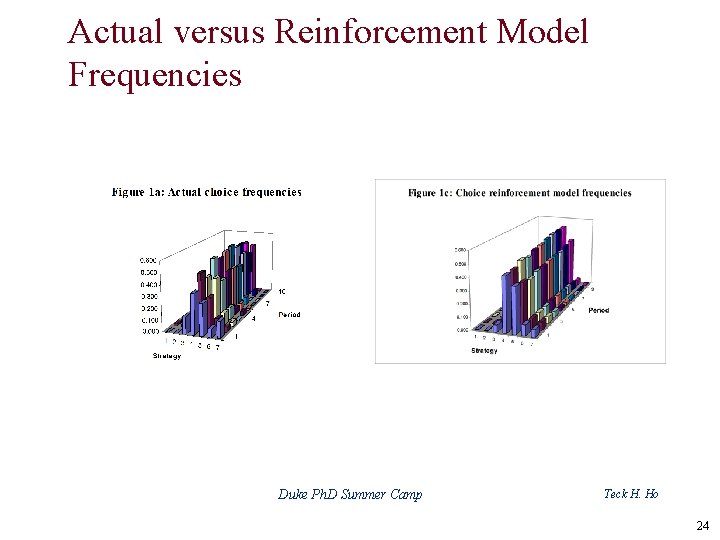

Actual versus Reinforcement Model Frequencies

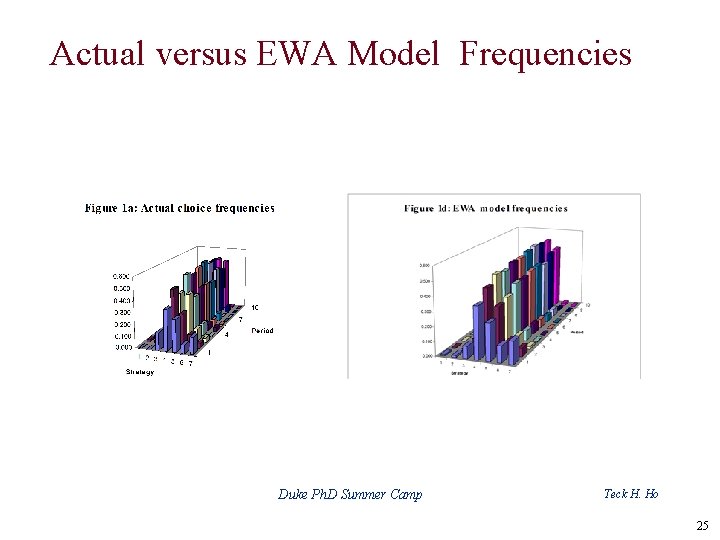

Actual versus EWA Model Frequencies

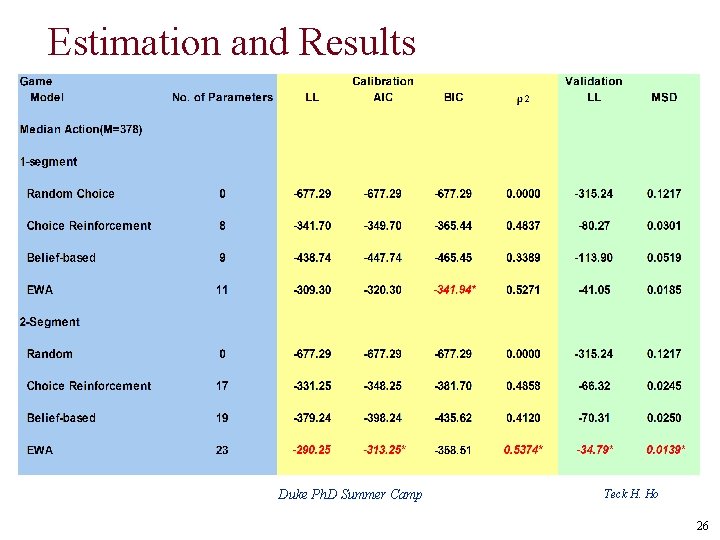

Estimation and Results


Extensions

- Heterogeneity (JMP, Camerer and Ho, 1999)
- Payoff learning (EJ, Ho, Wang, and Camerer, 2006)
- Sophistication and strategic teaching
  - Sophisticated learning (JET, Camerer, Ho, and Chong, 2002)
  - Reputation building (GEB, Chong, Camerer, and Ho, 2006)
- EWA Lite (self-tuning EWA learning) (JET, Ho, Camerer, and Chong, 2007)
- Applications
  - Product choice at supermarkets (JMR, Ho and Chong, 2004)

Homework

- Provide a general proof that Bayesian learning (i.e., weighted fictitious play) is a special case of EWA learning.
- If players face a stationary environment (i.e., decision problems), will EWA learning lead to expected-utility (EU) maximization in the long run?


Three User Complaints about EWA 1.0

- Experience matters.
- EWA 1.0 predictions are not sensitive to the structure of the learning setting (e.g., the matching protocol).
- EWA 1.0 does not use the opponents' payoff matrix to predict behavior.

Example 3: p-Beauty Contest

- n players
- Every player simultaneously chooses a number from 0 to 100.
- Compute the group average.
- Define the Target Number to be 0.7 times the group average.
- The winner is the player whose number is closest to the Target Number.
- The prize to the winner is US$20.

Ho, Camerer, and Weigelt (AER, 1998)
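The scoring rule above can be sketched directly. The choices below are hypothetical, and since the slide does not say how the prize is split among tied players, the sketch simply returns every tied winner; `beauty_contest` is an illustrative name.

```python
def beauty_contest(choices, p=0.7):
    """Return the Target Number and the indices of the player(s) closest to it.

    Ties are returned together; how the prize is shared among tied players is
    not specified on the slide.
    """
    target = p * sum(choices) / len(choices)
    best = min(abs(c - target) for c in choices)
    winners = [i for i, c in enumerate(choices) if abs(c - target) == best]
    return target, winners


# Hypothetical choices of n = 5 players.
target, winners = beauty_contest([50, 30, 22, 10, 0])
print(round(target, 2), winners)  # 15.68 [3]
```

Here the average is 22.4, the target is 0.7 × 22.4 = 15.68, and the player who chose 10 wins.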

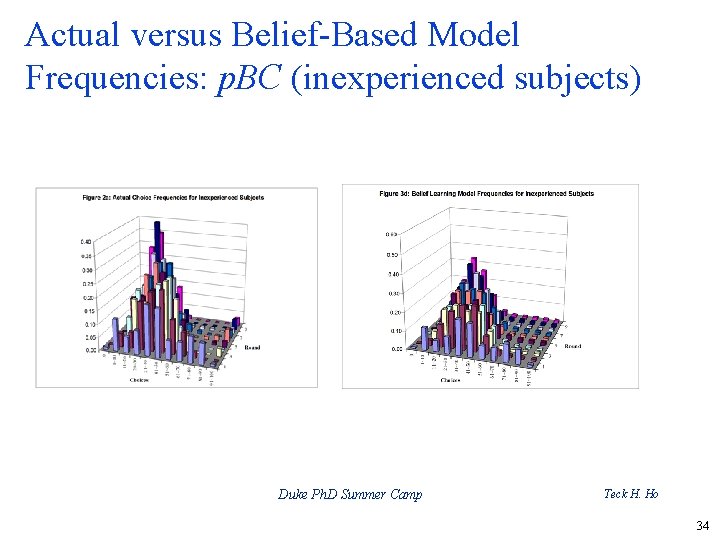

Actual versus Belief-Based Model Frequencies: pBC (inexperienced subjects)

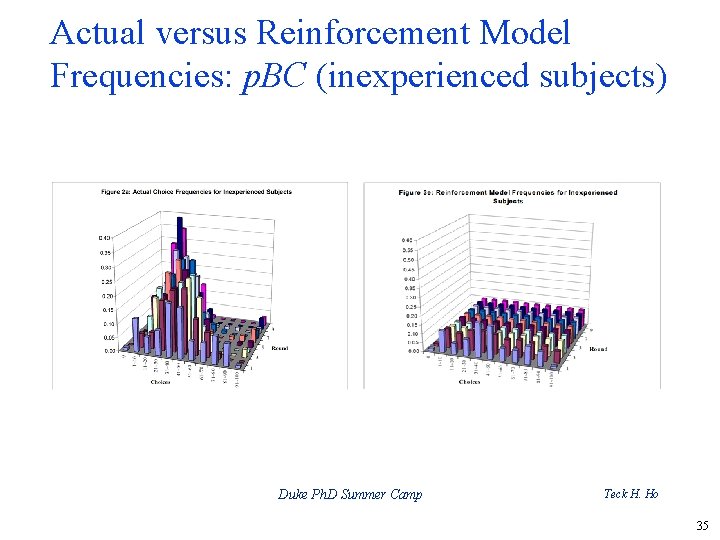

Actual versus Reinforcement Model Frequencies: pBC (inexperienced subjects)

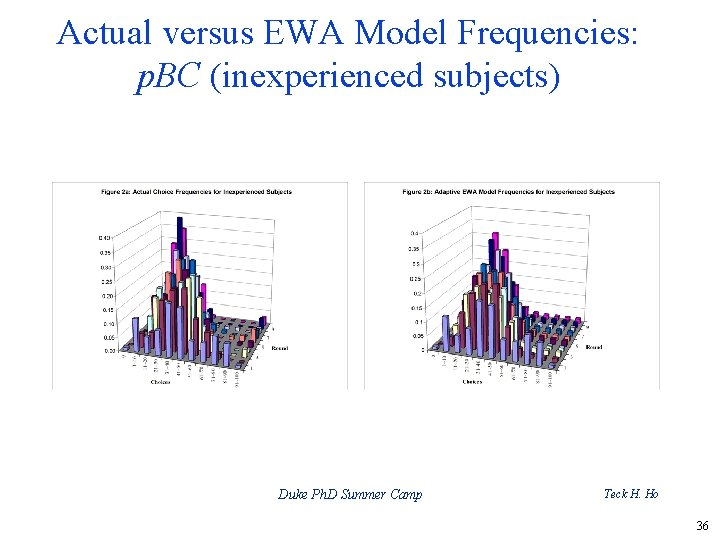

Actual versus EWA Model Frequencies: pBC (inexperienced subjects)

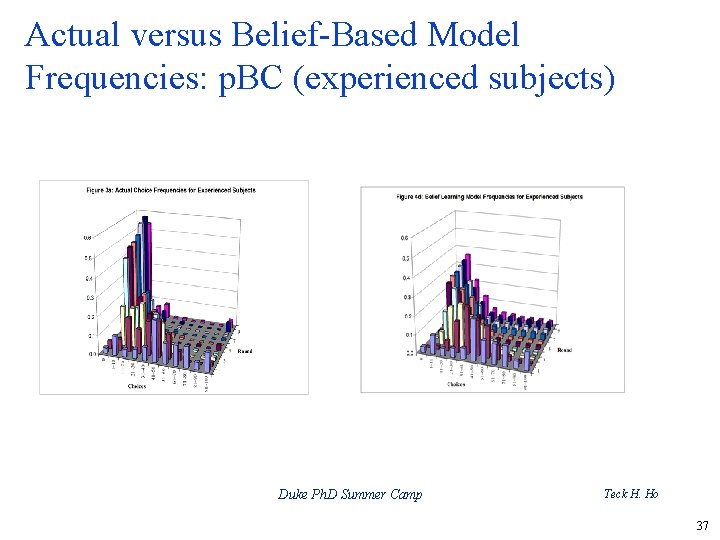

Actual versus Belief-Based Model Frequencies: pBC (experienced subjects)

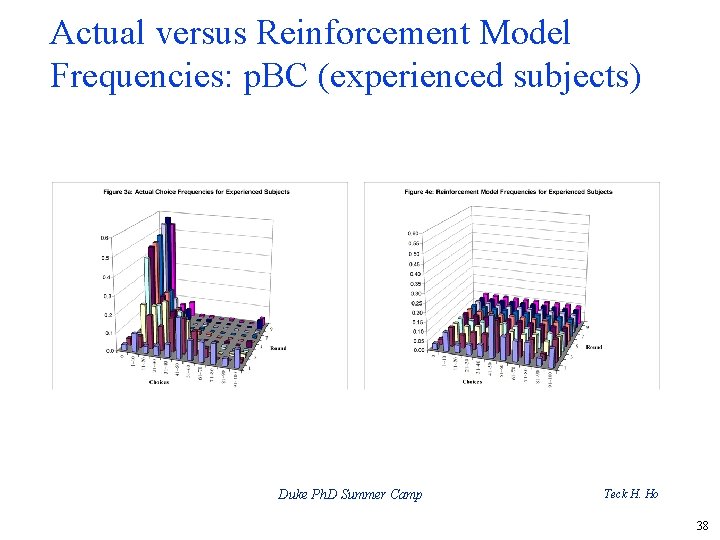

Actual versus Reinforcement Model Frequencies: pBC (experienced subjects)

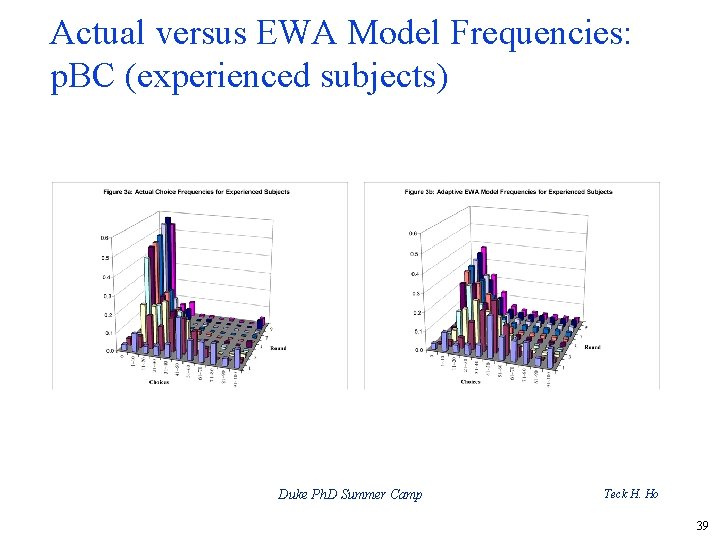

Actual versus EWA Model Frequencies: pBC (experienced subjects)

Sophisticated EWA Learning (EWA 2.0)

- The population consists of both adaptive and sophisticated players.
- The proportion of sophisticated players is denoted by α. Each sophisticated player, however, believes the proportion of sophisticated players to be α'.
- Parameters are estimated with a latent-class approach.
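A latent-class likelihood treats each player's type as fixed for the whole session, so the mixture over types (weight α on sophisticated, 1 − α on adaptive) is taken over each player's entire choice sequence rather than round by round. A minimal sketch, assuming the per-round probabilities each model assigns to the observed choices are already computed (the numbers below are hypothetical):

```python
import math


def latent_class_loglik(prob_adaptive, prob_sophisticated, alpha):
    """Log-likelihood of a panel of players under a two-type latent-class model.

    prob_adaptive[i][t] and prob_sophisticated[i][t] are the probabilities the
    adaptive and the sophisticated model assign to player i's actual choice in
    round t. Each player's type is fixed across rounds, so the mixture with
    weights (1 - alpha, alpha) is taken over whole choice sequences.
    """
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must lie strictly between 0 and 1")
    total = 0.0
    for p_a, p_s in zip(prob_adaptive, prob_sophisticated):
        log_a = math.log(1 - alpha) + sum(math.log(p) for p in p_a)
        log_s = math.log(alpha) + sum(math.log(p) for p in p_s)
        top = max(log_a, log_s)  # log-sum-exp avoids underflow on long panels
        total += top + math.log(math.exp(log_a - top) + math.exp(log_s - top))
    return total


# Hypothetical model probabilities for two players over three rounds.
adaptive = [[0.5, 0.6, 0.7], [0.4, 0.4, 0.5]]
sophisticated = [[0.8, 0.9, 0.9], [0.2, 0.3, 0.3]]
print(latent_class_loglik(adaptive, sophisticated, alpha=0.3))
```

Maximizing this over α (together with the parameters that generate the two probability sequences) is one way to implement the latent-class estimation.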

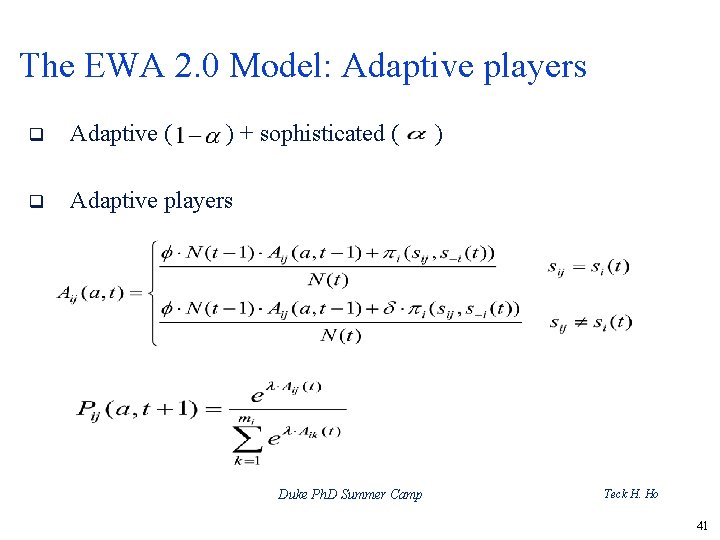

The EWA 2.0 Model: Adaptive Players

- Population: adaptive (1 − α) + sophisticated (α)
- Adaptive players (updating rule shown in the figure)

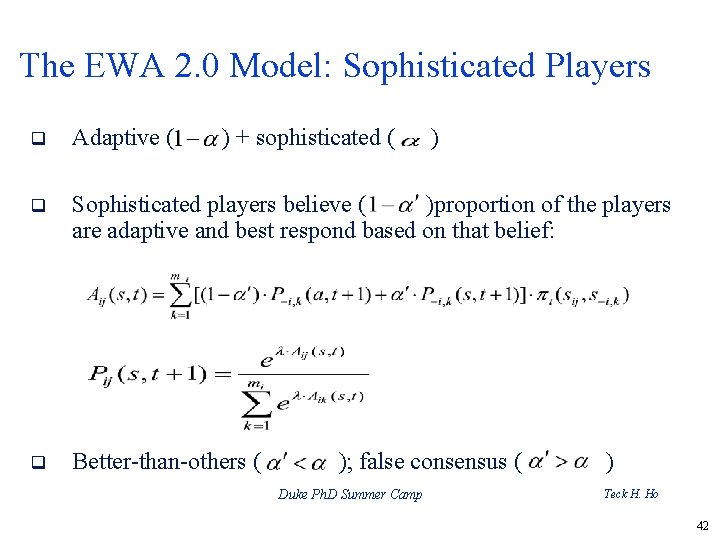

The EWA 2.0 Model: Sophisticated Players

- Population: adaptive (1 − α) + sophisticated (α)
- Sophisticated players believe that a proportion (1 − α') of the players is adaptive and best respond based on that belief.
- Better-than-others (α' < α); false consensus (α' > α)

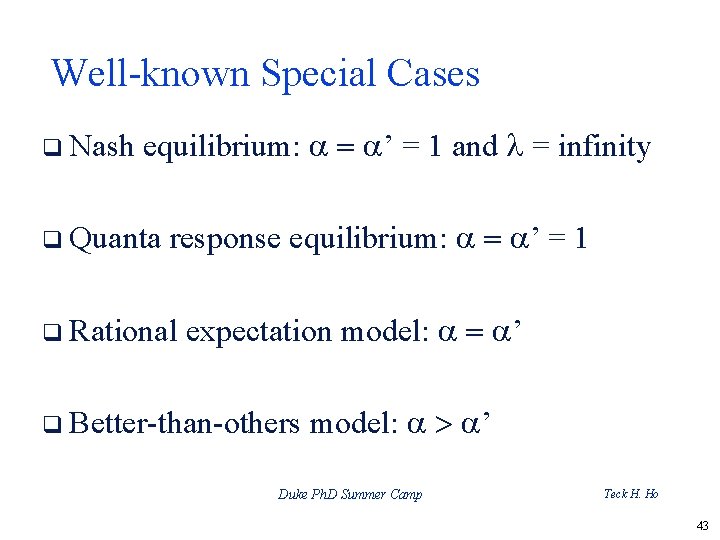

Well-Known Special Cases

- Nash equilibrium: α = α' = 1 and λ = ∞
- Quantal response equilibrium: α = α' = 1
- Rational expectations model: α = α'
- Better-than-others model: α > α'
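A sophisticated player's choice can be sketched as a logit response to a mixture: a share α' of opponents play like the sophisticated player, and the rest play as adaptive players are forecast to play. Because the sophisticated share appears on both sides, the response is a fixed point, found here by damped iteration. This is a hedged sketch for a symmetric two-player game: the stag-hunt payoffs, λ = 0.5, and the adaptive forecast are hypothetical, and the adaptive forecast is taken as a fixed input rather than derived from EWA. Setting α' = 1 gives a logit quantal response equilibrium; the Nash case is the λ → ∞ limit, where plain iteration may fail to converge.

```python
import math


def logit(values, lam):
    """Logit (quantal) response probabilities for a list of expected payoffs."""
    top = max(values)
    exps = [math.exp(lam * (v - top)) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def sophisticated_choice(payoff, p_adaptive, alpha_belief, lam, iters=1000, damping=0.5):
    """Choice probabilities of sophisticated players in a symmetric 2-player game.

    payoff[j][k] is a player's payoff from strategy j against strategy k.
    Sophisticated players believe a share alpha_belief (alpha') of opponents is
    sophisticated (and plays like them) and the rest adaptive (playing
    p_adaptive). They logit-respond to that mixture; the self-consistent answer
    is found by damped fixed-point iteration.
    """
    n = len(payoff)
    p_soph = [1.0 / n] * n
    for _ in range(iters):
        opp = [alpha_belief * s + (1 - alpha_belief) * a for s, a in zip(p_soph, p_adaptive)]
        expected = [sum(payoff[j][k] * opp[k] for k in range(n)) for j in range(n)]
        response = logit(expected, lam)
        p_soph = [damping * old + (1 - damping) * new for old, new in zip(p_soph, response)]
    return p_soph


# Hypothetical stag-hunt payoffs and adaptive-player forecast.
PAYOFF = [[4, 0], [3, 3]]
p_adaptive = [0.3, 0.7]
print(sophisticated_choice(PAYOFF, p_adaptive, alpha_belief=1.0, lam=0.5))  # logit QRE
print(sophisticated_choice(PAYOFF, p_adaptive, alpha_belief=0.0, lam=0.5))  # respond to adaptive players only
```

The true share α does not enter a sophisticated player's own response; it weights the two types when predicting population frequencies, as α · p_soph + (1 − α) · p_adaptive.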

Results

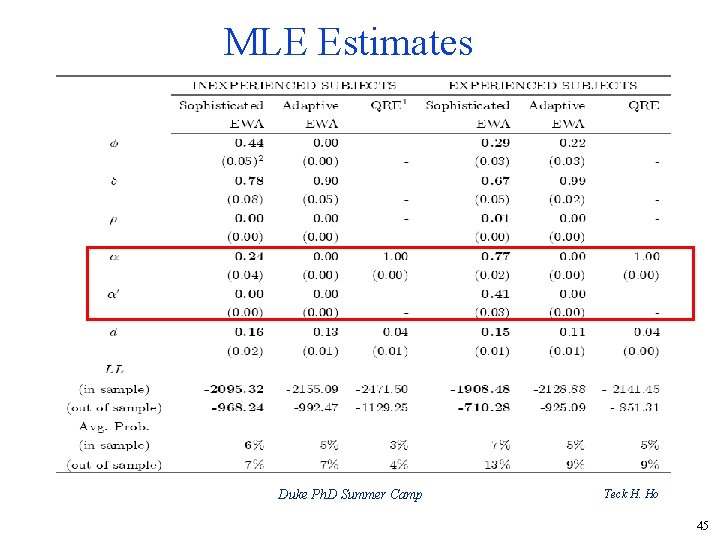

MLE Estimates

Summary

- The EWA cube provides a simple but useful framework for studying learning in games.
- EWA 1.0 fits and predicts better than reinforcement and belief learning in many classes of games because it allows for a positive (but smaller than actual) simulated effect.
- EWA 2.0 allows us to study equilibrium and adaptation simultaneously (and nests most familiar cases, including QRE).

Key References for This Talk

- See Ho, Lim, and Camerer (JMR, 2006) for important references
- CH Model: Camerer, Ho, and Chong (QJE, 2004)
- QRE Model: McKelvey and Palfrey (GEB, 1995); Baye and Morgan (RAND, 2004)
- EWA Learning: Camerer and Ho (Econometrica, 1999); Camerer, Ho, and Chong (JET, 2002)
