
Outline

- Introduction
- Background
- Distributed DBMS Architecture
- Distributed Database Design
- Semantic Data Control
- Distributed Query Processing
  - Query Processing Methodology
  - Distributed Query Optimization
- Distributed Transaction Management
- Parallel Database Systems
- Distributed Object DBMS
- Database Interoperability
- Current Issues

Distributed DBMS © 1998 M. Tamer Özsu & Patrick Valduriez

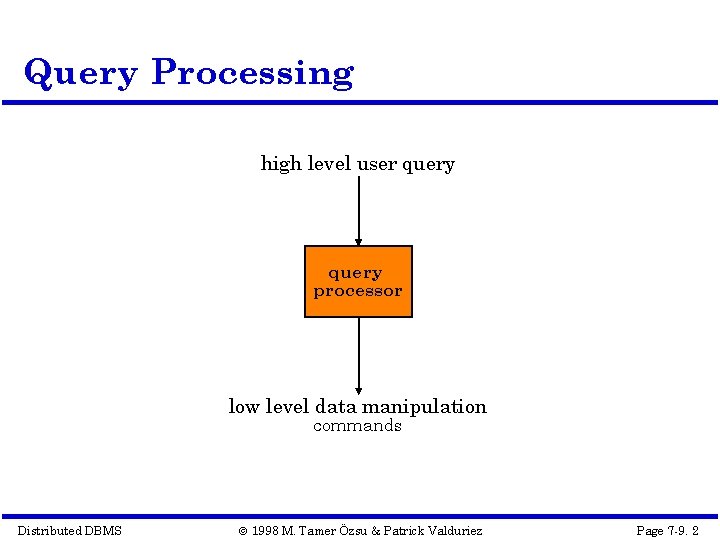

Query Processing

high-level user query → query processor → low-level data manipulation commands

Query Processing Components

- Query language that is used
  - SQL: “intergalactic dataspeak”
- Query execution methodology
  - The steps that one goes through in executing high-level (declarative) user queries.
- Query optimization
  - How do we determine the “best” execution plan?

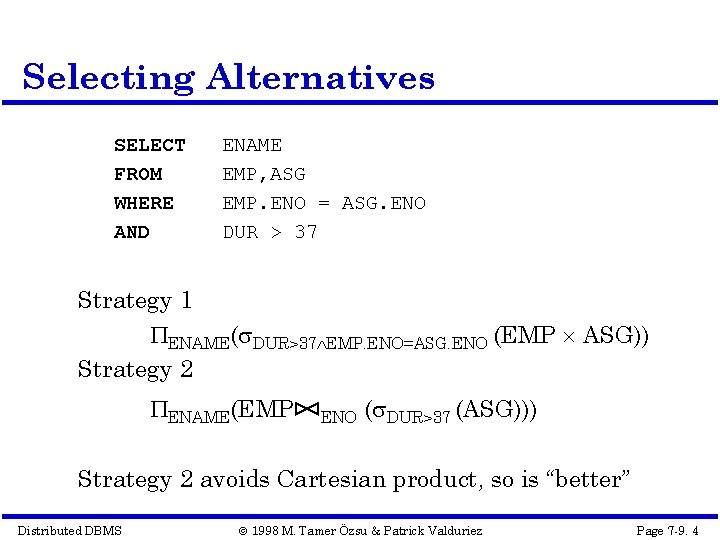

Selecting Alternatives

    SELECT ENAME
    FROM   EMP, ASG
    WHERE  EMP.ENO = ASG.ENO
    AND    DUR > 37

- Strategy 1: Π_{ENAME}(σ_{DUR>37 ∧ EMP.ENO=ASG.ENO}(EMP × ASG))
- Strategy 2: Π_{ENAME}(EMP ⋈_{ENO} σ_{DUR>37}(ASG))

Strategy 2 avoids the Cartesian product, so it is “better”.

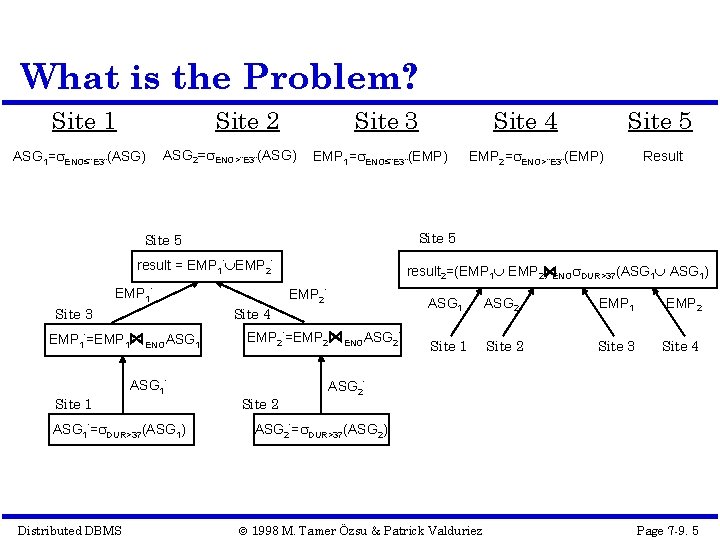

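What is the Problem?

Fragment placement:

- Site 1: ASG1 = σ_{ENO≤"E3"}(ASG)
- Site 2: ASG2 = σ_{ENO>"E3"}(ASG)
- Site 3: EMP1 = σ_{ENO≤"E3"}(EMP)
- Site 4: EMP2 = σ_{ENO>"E3"}(EMP)
- Site 5: result

First alternative (work is done at the fragment sites):

- Site 1 computes ASG1' = σ_{DUR>37}(ASG1) and sends it to Site 3
- Site 2 computes ASG2' = σ_{DUR>37}(ASG2) and sends it to Site 4
- Site 3 computes EMP1' = EMP1 ⋈_{ENO} ASG1' and sends it to Site 5
- Site 4 computes EMP2' = EMP2 ⋈_{ENO} ASG2' and sends it to Site 5
- Site 5 computes result = EMP1' ∪ EMP2'

Second alternative (everything is shipped to the result site):

- Sites 1 to 4 send ASG1, ASG2, EMP1 and EMP2 to Site 5
- Site 5 computes result2 = (EMP1 ∪ EMP2) ⋈_{ENO} σ_{DUR>37}(ASG1 ∪ ASG2)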

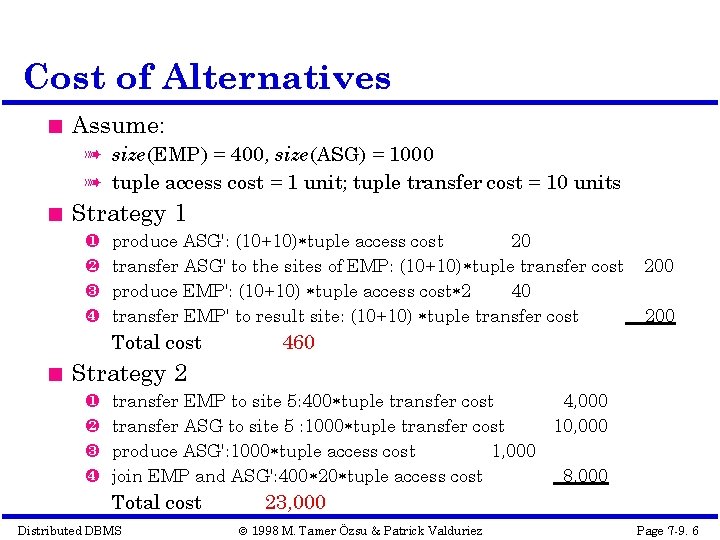

Cost of Alternatives

Assume:

- size(EMP) = 400, size(ASG) = 1000
- tuple access cost = 1 unit; tuple transfer cost = 10 units

Strategy 1

| Step | Computation | Cost |
|---|---|---|
| produce ASG' | (10+10) × tuple access cost | 20 |
| transfer ASG' to the sites of EMP | (10+10) × tuple transfer cost | 200 |
| produce EMP' | (10+10) × tuple access cost × 2 | 40 |
| transfer EMP' to result site | (10+10) × tuple transfer cost | 200 |
| Total cost | | 460 |

Strategy 2

| Step | Computation | Cost |
|---|---|---|
| transfer EMP to site 5 | 400 × tuple transfer cost | 4,000 |
| transfer ASG to site 5 | 1000 × tuple transfer cost | 10,000 |
| produce ASG' | 1000 × tuple access cost | 1,000 |
| join EMP and ASG' | 400 × 20 × tuple access cost | 8,000 |
| Total cost | | 23,000 |
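The arithmetic behind the two totals can be replayed in a few lines. This is only a sketch: the function and constant names are made up for illustration, and it assumes (as the "(10+10)" terms suggest) that 10 ASG tuples satisfy DUR > 37 at each of the two ASG sites.

```python
# Unit costs and relation sizes as stated on the slide.
TUPLE_ACCESS = 1
TUPLE_TRANSFER = 10
SIZE_EMP = 400
SIZE_ASG = 1000

# Assumption: 10 qualifying ASG tuples at each of the two ASG sites.
QUALIFYING_PER_SITE = [10, 10]


def strategy_1_cost() -> int:
    """Select and join at the fragment sites, ship only the small results."""
    qualifying = sum(QUALIFYING_PER_SITE)
    produce_asg = qualifying * TUPLE_ACCESS
    ship_asg = qualifying * TUPLE_TRANSFER
    produce_emp = qualifying * TUPLE_ACCESS * 2  # factor 2 as on the slide
    ship_emp = qualifying * TUPLE_TRANSFER
    return produce_asg + ship_asg + produce_emp + ship_emp


def strategy_2_cost() -> int:
    """Ship both relations to the result site and do all the work there."""
    qualifying = sum(QUALIFYING_PER_SITE)
    ship_emp = SIZE_EMP * TUPLE_TRANSFER
    ship_asg = SIZE_ASG * TUPLE_TRANSFER
    produce_asg = SIZE_ASG * TUPLE_ACCESS
    join = SIZE_EMP * qualifying * TUPLE_ACCESS
    return ship_emp + ship_asg + produce_asg + join


print(strategy_1_cost(), strategy_2_cost())
```

Under these assumptions the transfer terms dominate Strategy 2, which is the point the slide makes about communication cost.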

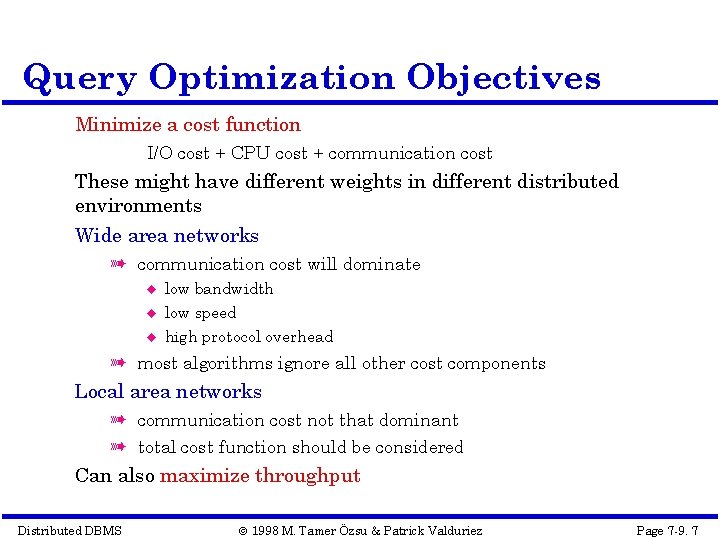

Query Optimization Objectives

- Minimize a cost function: I/O cost + CPU cost + communication cost
- These might have different weights in different distributed environments
- Wide area networks
  - communication cost will dominate (low bandwidth, low speed, high protocol overhead)
  - most algorithms ignore all other cost components
- Local area networks
  - communication cost not that dominant
  - total cost function should be considered
- Can also maximize throughput

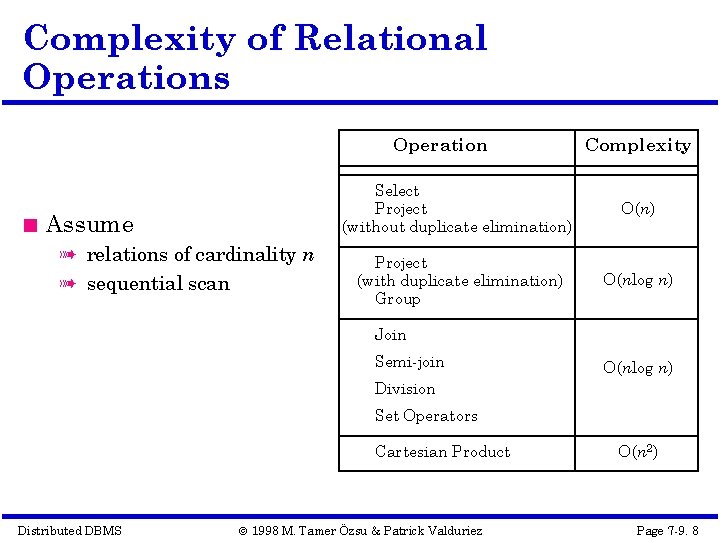

Complexity of Relational Operations

Assume relations of cardinality n and sequential scan.

| Operation | Complexity |
|---|---|
| Select; Project (without duplicate elimination) | O(n) |
| Project (with duplicate elimination); Group | O(n log n) |
| Join; Semi-join; Division; Set Operators | O(n log n) |
| Cartesian Product | O(n²) |

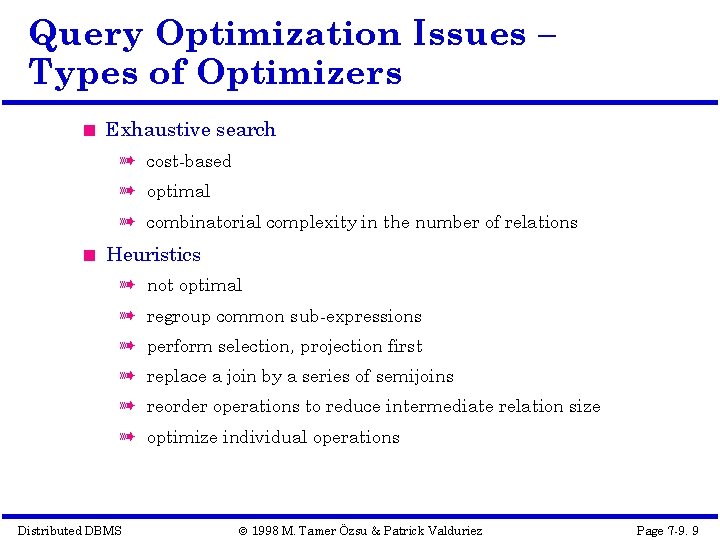

Query Optimization Issues – Types of Optimizers

- Exhaustive search
  - cost-based
  - optimal
  - combinatorial complexity in the number of relations
- Heuristics
  - not optimal
  - regroup common sub-expressions
  - perform selection, projection first
  - replace a join by a series of semijoins
  - reorder operations to reduce intermediate relation size
  - optimize individual operations

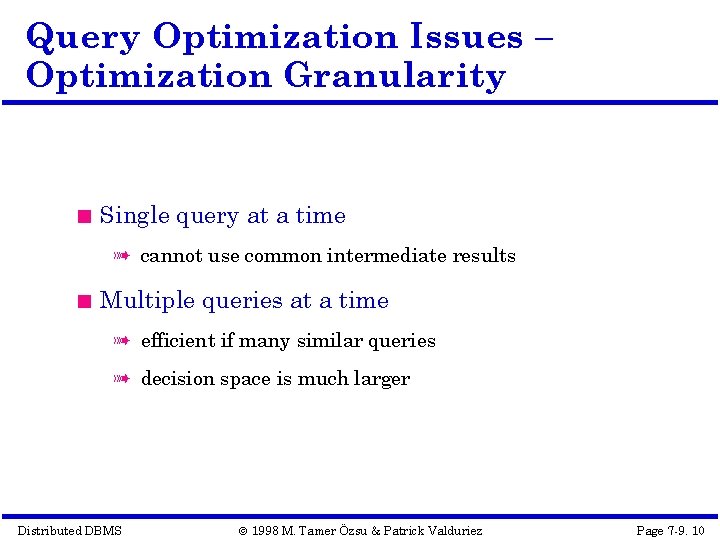

Query Optimization Issues – Optimization Granularity

- Single query at a time
  - cannot use common intermediate results
- Multiple queries at a time
  - efficient if many similar queries
  - decision space is much larger

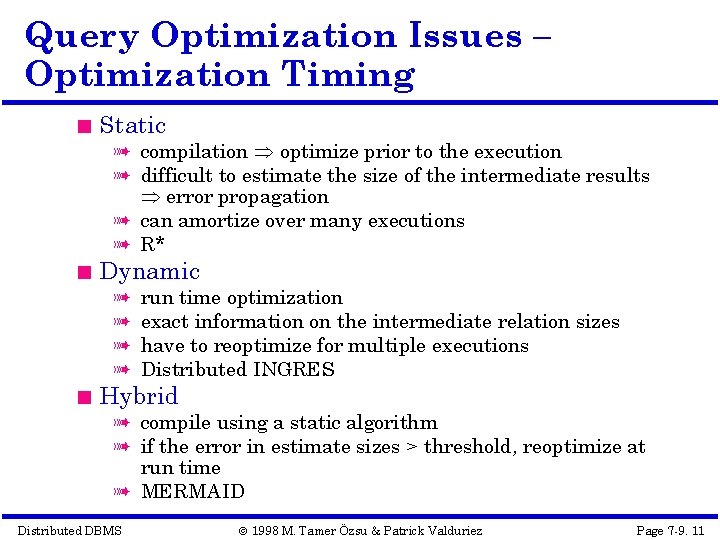

Query Optimization Issues – Optimization Timing

- Static
  - compilation: optimize prior to the execution
  - difficult to estimate the size of the intermediate results, so errors propagate
  - can amortize over many executions
  - R*
- Dynamic
  - run time optimization
  - exact information on the intermediate relation sizes
  - have to reoptimize for multiple executions
  - Distributed INGRES
- Hybrid
  - compile using a static algorithm
  - if the error in estimated sizes > threshold, reoptimize at run time
  - MERMAID

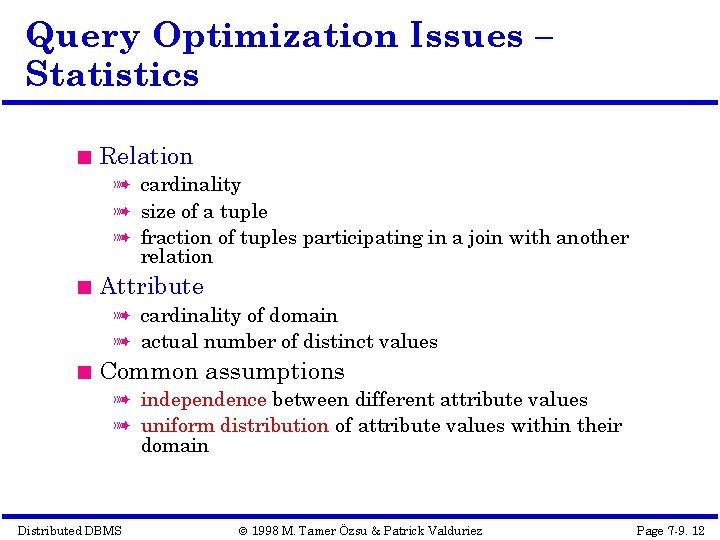

Query Optimization Issues – Statistics

- Relation
  - cardinality
  - size of a tuple
  - fraction of tuples participating in a join with another relation
- Attribute
  - cardinality of domain
  - actual number of distinct values
- Common assumptions
  - independence between different attribute values
  - uniform distribution of attribute values within their domain

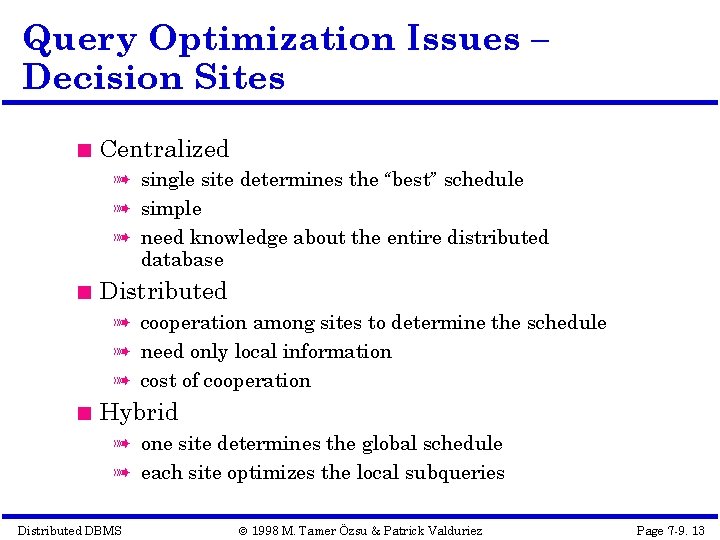

Query Optimization Issues – Decision Sites

- Centralized
  - single site determines the “best” schedule
  - simple
  - need knowledge about the entire distributed database
- Distributed
  - cooperation among sites to determine the schedule
  - need only local information
  - cost of cooperation
- Hybrid
  - one site determines the global schedule
  - each site optimizes the local subqueries

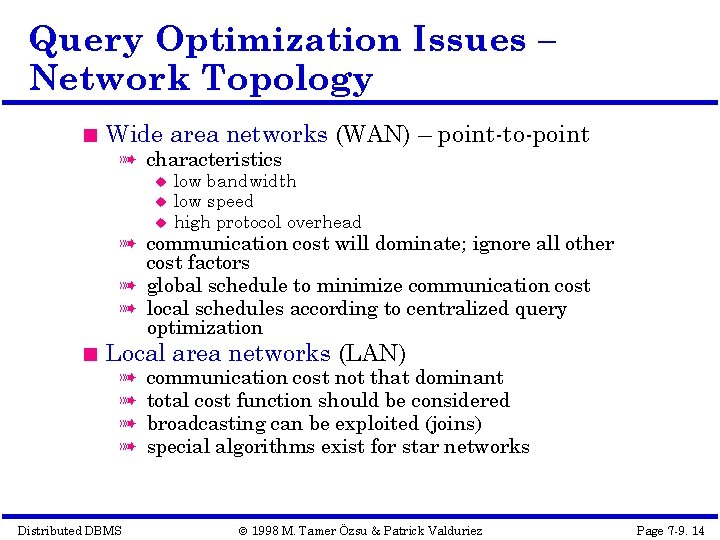

Query Optimization Issues – Network Topology

- Wide area networks (WAN), point-to-point
  - characteristics: low bandwidth, low speed, high protocol overhead
  - communication cost will dominate; ignore all other cost factors
  - global schedule to minimize communication cost
  - local schedules according to centralized query optimization
- Local area networks (LAN)
  - communication cost not that dominant
  - total cost function should be considered
  - broadcasting can be exploited (joins)
  - special algorithms exist for star networks

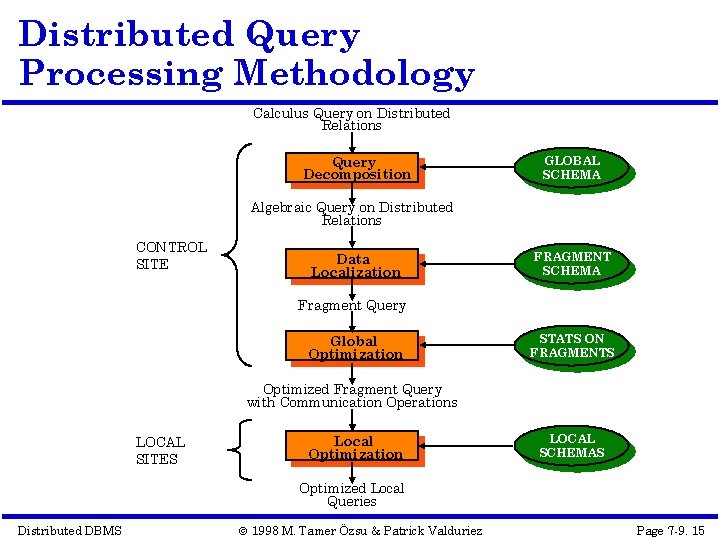

Distributed Query Processing Methodology

- Input: calculus query on distributed relations
- Query Decomposition (uses the GLOBAL SCHEMA; control site) → algebraic query on distributed relations
- Data Localization (uses the FRAGMENT SCHEMA; control site) → fragment query
- Global Optimization (uses STATS ON FRAGMENTS; control site) → optimized fragment query with communication operations
- Local Optimization (uses the LOCAL SCHEMAS; local sites) → optimized local queries

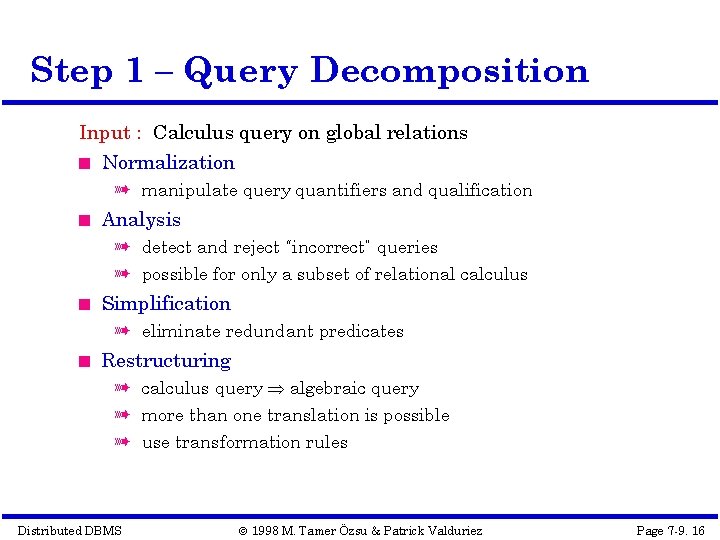

Step 1 – Query Decomposition

Input: calculus query on global relations

- Normalization
  - manipulate query quantifiers and qualification
- Analysis
  - detect and reject “incorrect” queries
  - possible for only a subset of relational calculus
- Simplification
  - eliminate redundant predicates
- Restructuring
  - calculus query → algebraic query
  - more than one translation is possible
  - use transformation rules

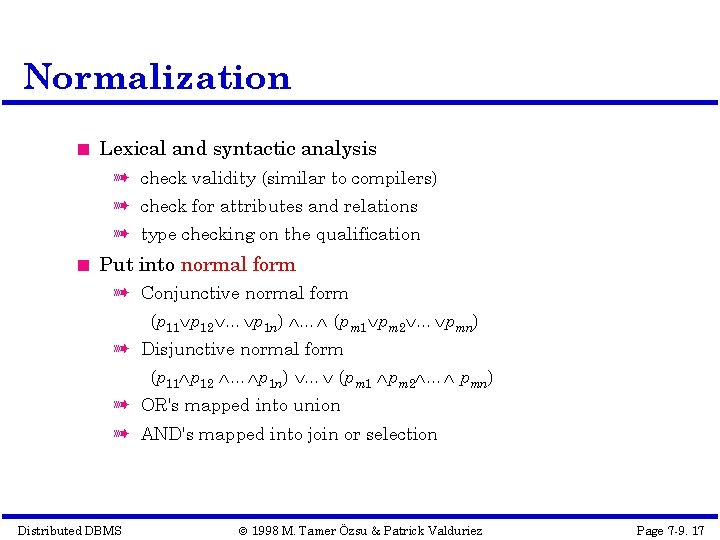

Normalization

- Lexical and syntactic analysis
  - check validity (similar to compilers)
  - check for attributes and relations
  - type checking on the qualification
- Put into normal form
  - Conjunctive normal form: (p_{11} ∨ p_{12} ∨ … ∨ p_{1n}) ∧ … ∧ (p_{m1} ∨ p_{m2} ∨ … ∨ p_{mn})
  - Disjunctive normal form: (p_{11} ∧ p_{12} ∧ … ∧ p_{1n}) ∨ … ∨ (p_{m1} ∧ p_{m2} ∧ … ∧ p_{mn})
  - ORs mapped into union
  - ANDs mapped into join or selection

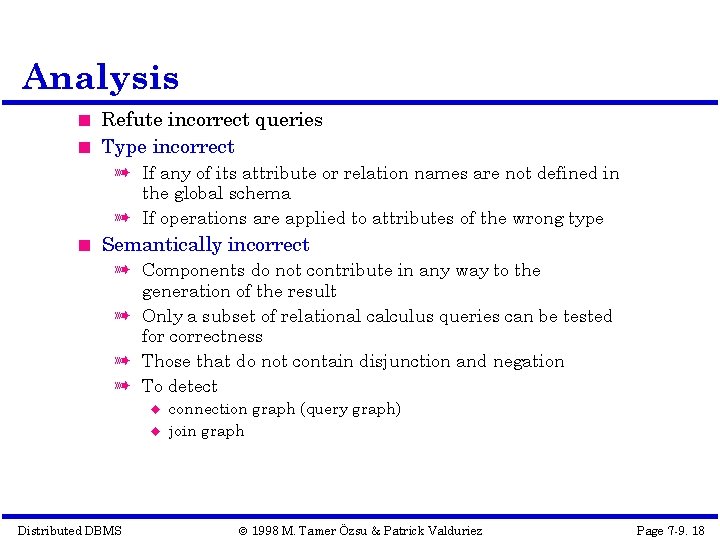

Analysis

- Refute incorrect queries
- Type incorrect
  - if any of its attribute or relation names are not defined in the global schema
  - if operations are applied to attributes of the wrong type
- Semantically incorrect
  - components do not contribute in any way to the generation of the result
  - only a subset of relational calculus queries can be tested for correctness: those that do not contain disjunction and negation
  - to detect: connection graph (query graph), join graph

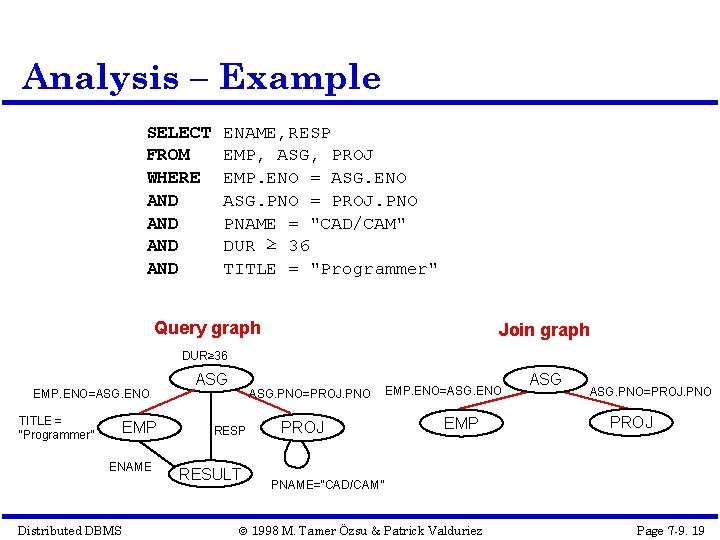

Analysis – Example

    SELECT ENAME, RESP
    FROM   EMP, ASG, PROJ
    WHERE  EMP.ENO = ASG.ENO
    AND    ASG.PNO = PROJ.PNO
    AND    PNAME = "CAD/CAM"
    AND    DUR ≥ 36
    AND    TITLE = "Programmer"

- Query graph: nodes EMP, ASG, PROJ and RESULT. Edges: EMP–ASG (EMP.ENO = ASG.ENO), ASG–PROJ (ASG.PNO = PROJ.PNO), EMP–RESULT (ENAME), ASG–RESULT (RESP). Node labels: TITLE = "Programmer" on EMP, DUR ≥ 36 on ASG, PNAME = "CAD/CAM" on PROJ.
- Join graph: EMP–ASG (EMP.ENO = ASG.ENO), ASG–PROJ (ASG.PNO = PROJ.PNO).

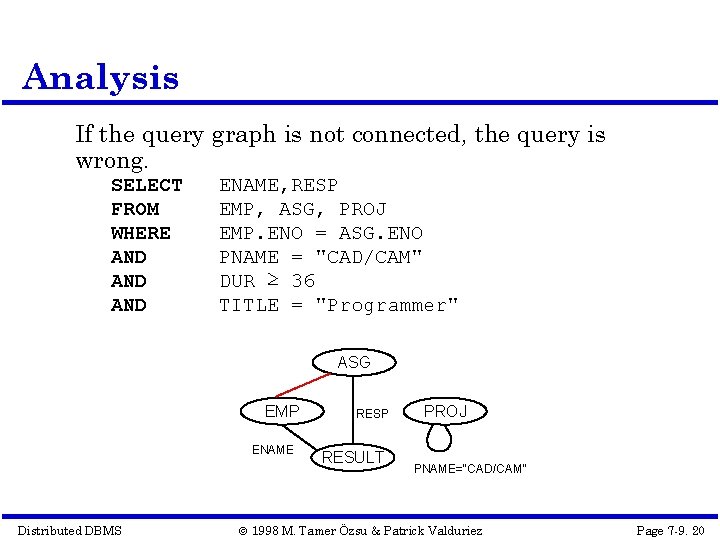

Analysis

If the query graph is not connected, the query is wrong.

    SELECT ENAME, RESP
    FROM   EMP, ASG, PROJ
    WHERE  EMP.ENO = ASG.ENO
    AND    PNAME = "CAD/CAM"
    AND    DUR ≥ 36
    AND    TITLE = "Programmer"

Query graph: EMP and ASG are linked to each other and to RESULT (ENAME, RESP); PROJ, which carries PNAME = "CAD/CAM", is not linked to any other node.
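The connectivity test is easy to mechanize. The sketch below is an illustration only: `is_connected` and the edge lists are hypothetical names, and the query graph is reduced to its nodes (relations plus RESULT) and undirected edges.

```python
from collections import defaultdict


def is_connected(nodes, edges):
    """Return True if every node is reachable from the first one."""
    adjacency = defaultdict(set)
    for left, right in edges:
        adjacency[left].add(right)
        adjacency[right].add(left)

    nodes = list(nodes)
    if not nodes:
        return True

    # Depth-first traversal starting at an arbitrary node.
    seen = {nodes[0]}
    stack = [nodes[0]]
    while stack:
        current = stack.pop()
        for neighbour in adjacency[current]:
            if neighbour not in seen:
                seen.add(neighbour)
                stack.append(neighbour)
    return seen >= set(nodes)


NODES = ["EMP", "ASG", "PROJ", "RESULT"]

# Query with both join predicates (previous example).
GOOD_EDGES = [("EMP", "ASG"), ("ASG", "PROJ"), ("EMP", "RESULT"), ("ASG", "RESULT")]

# Query without ASG.PNO = PROJ.PNO: PROJ is left isolated.
BAD_EDGES = [("EMP", "ASG"), ("EMP", "RESULT"), ("ASG", "RESULT")]

print(is_connected(NODES, GOOD_EDGES), is_connected(NODES, BAD_EDGES))
```

A query processor would reject the second query, or fall back to a Cartesian product with PROJ, depending on its policy.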

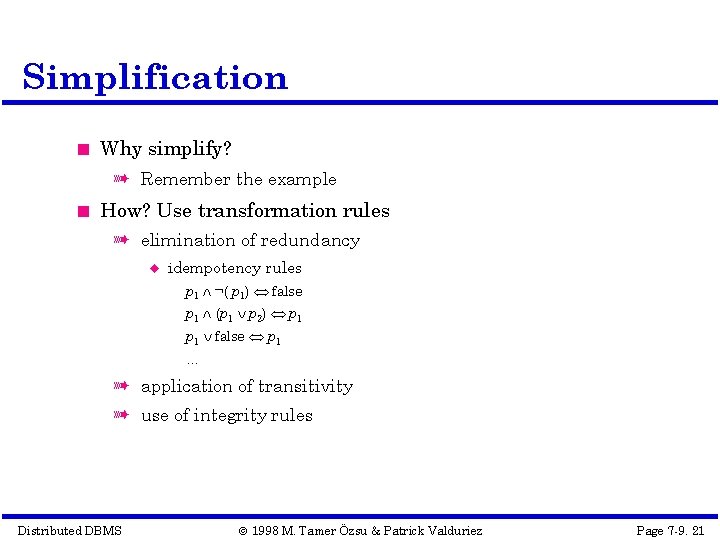

Simplification

- Why simplify?
  - Remember the example
- How? Use transformation rules
  - elimination of redundancy (idempotency rules)
    - p1 ∧ ¬(p1) ⇔ false
    - p1 ∧ (p1 ∨ p2) ⇔ p1
    - p1 ∨ false ⇔ p1
    - …
  - application of transitivity
  - use of integrity rules

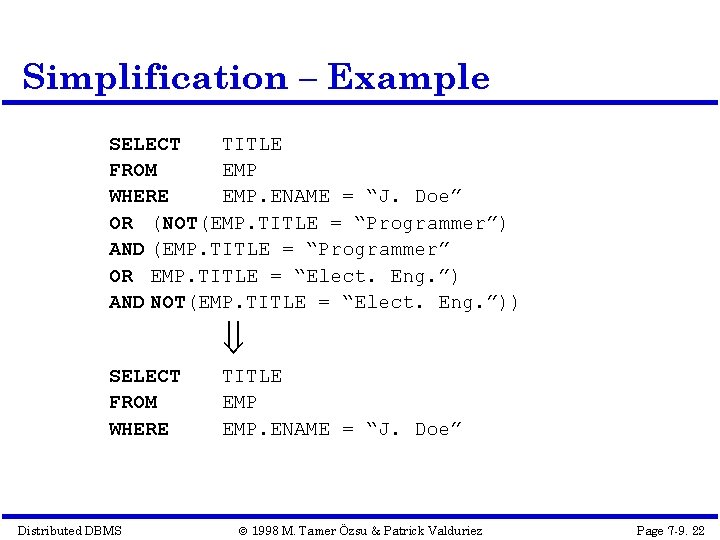

Simplification – Example

    SELECT TITLE
    FROM   EMP
    WHERE  EMP.ENAME = "J. Doe"
    OR     (NOT(EMP.TITLE = "Programmer")
    AND    (EMP.TITLE = "Programmer" OR EMP.TITLE = "Elect. Eng.")
    AND    NOT(EMP.TITLE = "Elect. Eng."))

simplifies to

    SELECT TITLE
    FROM   EMP
    WHERE  EMP.ENAME = "J. Doe"
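One way to convince oneself that the simplification is sound is a brute-force truth table. In this sketch p1, p2 and p3 are shorthand (not from the slides) for ENAME = "J. Doe", TITLE = "Programmer" and TITLE = "Elect. Eng.".

```python
from itertools import product


def original(p1: bool, p2: bool, p3: bool) -> bool:
    """Qualification of the query before simplification."""
    return p1 or ((not p2) and (p2 or p3) and (not p3))


def simplified(p1: bool, p2: bool, p3: bool) -> bool:
    """Qualification after simplification."""
    return p1


# Compare both forms on all 8 truth assignments.
equivalent = all(
    original(*values) == simplified(*values)
    for values in product([False, True], repeat=3)
)
print(equivalent)
```

The bracketed conjunction is false for every assignment, so the rule p1 ∨ false ⇔ p1 leaves only the ENAME predicate.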

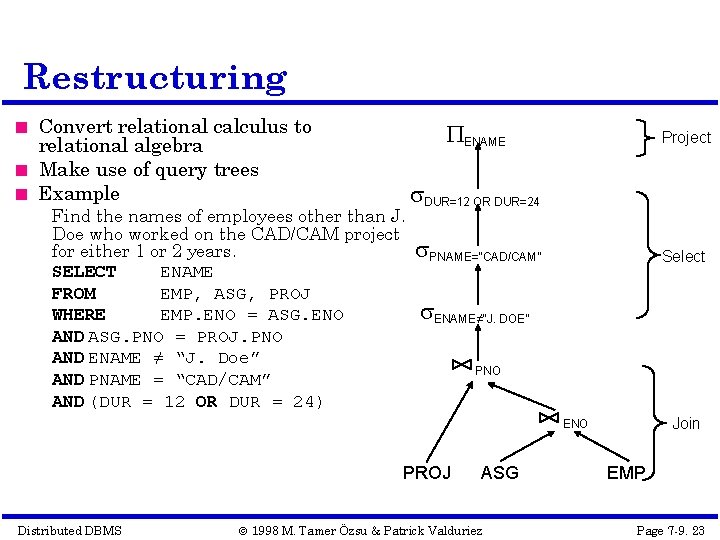

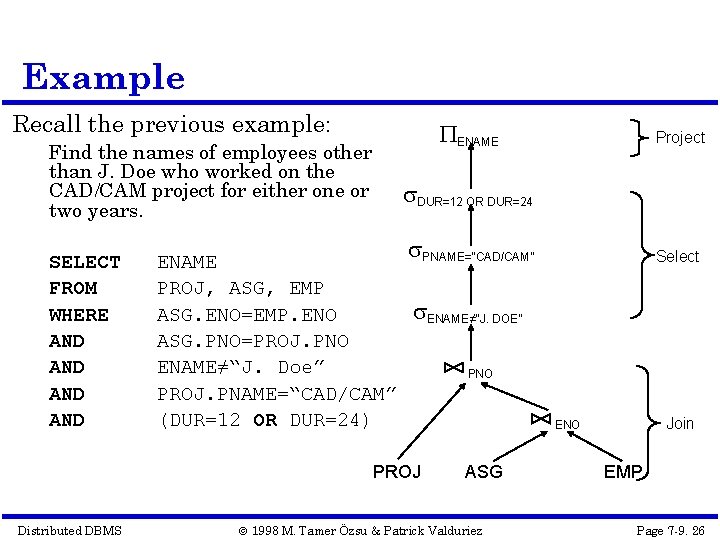

Restructuring

- Convert relational calculus to relational algebra
- Make use of query trees

Example: find the names of employees other than J. Doe who worked on the CAD/CAM project for either 1 or 2 years.

    SELECT ENAME
    FROM   EMP, ASG, PROJ
    WHERE  EMP.ENO = ASG.ENO
    AND    ASG.PNO = PROJ.PNO
    AND    ENAME ≠ "J. Doe"
    AND    PNAME = "CAD/CAM"
    AND    (DUR = 12 OR DUR = 24)

Query tree, from the root down:

- Project: Π_{ENAME}
- Select: σ_{DUR=12 ∨ DUR=24}, σ_{PNAME="CAD/CAM"}, σ_{ENAME≠"J. Doe"}
- Join: PROJ ⋈_{PNO} (ASG ⋈_{ENO} EMP)

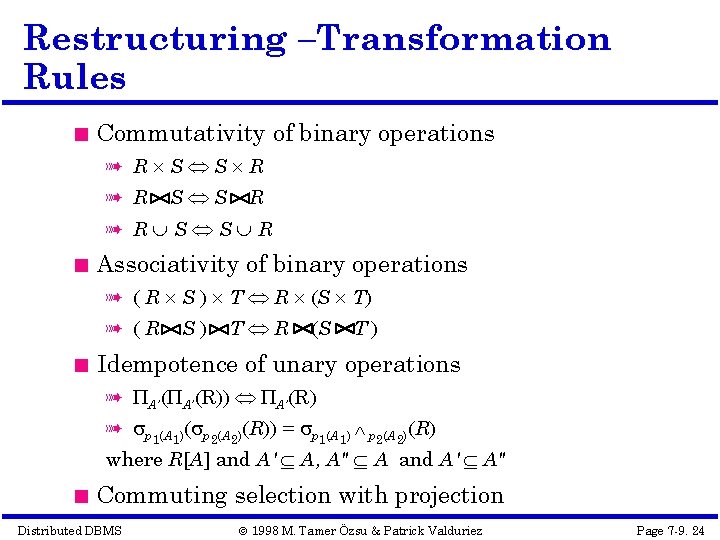

Restructuring – Transformation Rules

- Commutativity of binary operations
  - R × S ⇔ S × R
- Associativity of binary operations
  - (R × S) × T ⇔ R × (S × T)
  - (R ⋈ S) ⋈ T ⇔ R ⋈ (S ⋈ T)
- Idempotence of unary operations
  - Π_{A'}(Π_{A''}(R)) ⇔ Π_{A'}(R)
  - σ_{p1(A1)}(σ_{p2(A2)}(R)) = σ_{p1(A1) ∧ p2(A2)}(R)
  - where R[A] and A' ⊆ A, A'' ⊆ A and A' ⊆ A''
- Commuting selection with projection

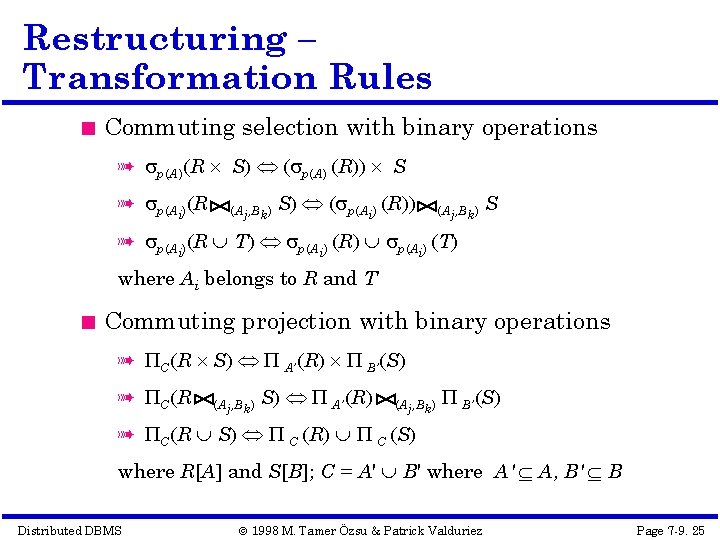

Restructuring – Transformation Rules

- Commuting selection with binary operations
  - σ_{p(A)}(R × S) ⇔ (σ_{p(A)}(R)) × S
  - σ_{p(Ai)}(R ⋈_{(Aj,Bk)} S) ⇔ (σ_{p(Ai)}(R)) ⋈_{(Aj,Bk)} S
  - σ_{p(Ai)}(R ∪ T) ⇔ σ_{p(Ai)}(R) ∪ σ_{p(Ai)}(T)
  - where Ai belongs to R and T
- Commuting projection with binary operations
  - Π_{C}(R × S) ⇔ Π_{A'}(R) × Π_{B'}(S)
  - Π_{C}(R ⋈_{(Aj,Bk)} S) ⇔ Π_{A'}(R) ⋈_{(Aj,Bk)} Π_{B'}(S)
  - Π_{C}(R ∪ S) ⇔ Π_{C}(R) ∪ Π_{C}(S)
  - where R[A] and S[B]; C = A' ∪ B' where A' ⊆ A, B' ⊆ B
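The rule for commuting a selection with a join can be checked on toy data. The sketch below uses small in-memory relations whose rows are invented for illustration; `select` and `join` are hypothetical helpers, not part of any DBMS API.

```python
def select(rows, predicate):
    """Selection: keep the rows that satisfy the predicate."""
    return [row for row in rows if predicate(row)]


def join(left, right, attr):
    """Equijoin on a common attribute (nested loops, for clarity)."""
    return [{**l, **r} for l in left for r in right if l[attr] == r[attr]]


# Hypothetical sample rows.
EMP = [
    {"ENO": "E1", "ENAME": "J. Doe"},
    {"ENO": "E2", "ENAME": "M. Smith"},
    {"ENO": "E3", "ENAME": "A. Lee"},
]
ASG = [
    {"ENO": "E1", "PNO": "P1", "DUR": 12},
    {"ENO": "E2", "PNO": "P1", "DUR": 40},
    {"ENO": "E2", "PNO": "P2", "DUR": 6},
    {"ENO": "E3", "PNO": "P3", "DUR": 48},
]


def long_assignment(row):
    return row["DUR"] > 37


# Selection applied after the join ...
late = select(join(EMP, ASG, "ENO"), long_assignment)
# ... and pushed below the join, onto the relation that owns DUR.
early = join(EMP, select(ASG, long_assignment), "ENO")

print(late == early, len(early))
```

Both plans return the same rows, but the second one joins against two ASG tuples instead of four, which is why optimizers push selections down.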

Example

Recall the previous example: find the names of employees other than J. Doe who worked on the CAD/CAM project for either one or two years.

    SELECT ENAME
    FROM   PROJ, ASG, EMP
    WHERE  ASG.ENO = EMP.ENO
    AND    ASG.PNO = PROJ.PNO
    AND    ENAME ≠ "J. Doe"
    AND    PROJ.PNAME = "CAD/CAM"
    AND    (DUR = 12 OR DUR = 24)

Query tree, from the root down:

- Project: Π_{ENAME}
- Select: σ_{DUR=12 ∨ DUR=24}, σ_{PNAME="CAD/CAM"}, σ_{ENAME≠"J. Doe"}
- Join: PROJ ⋈_{PNO} (ASG ⋈_{ENO} EMP)

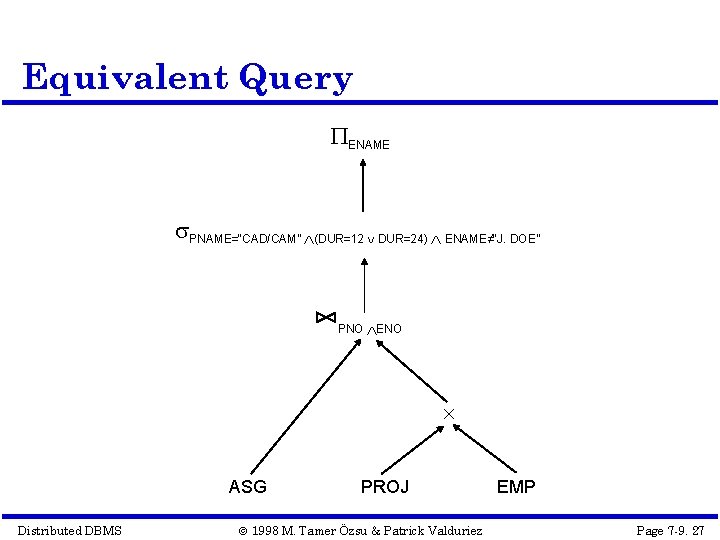

Equivalent Query

Query tree, from the root down:

- Π_{ENAME}
- σ_{PNAME="CAD/CAM" ∧ (DUR=12 ∨ DUR=24) ∧ ENAME≠"J. Doe"}
- ⋈_{PNO ∧ ENO}
- leaves: ASG, PROJ, EMP

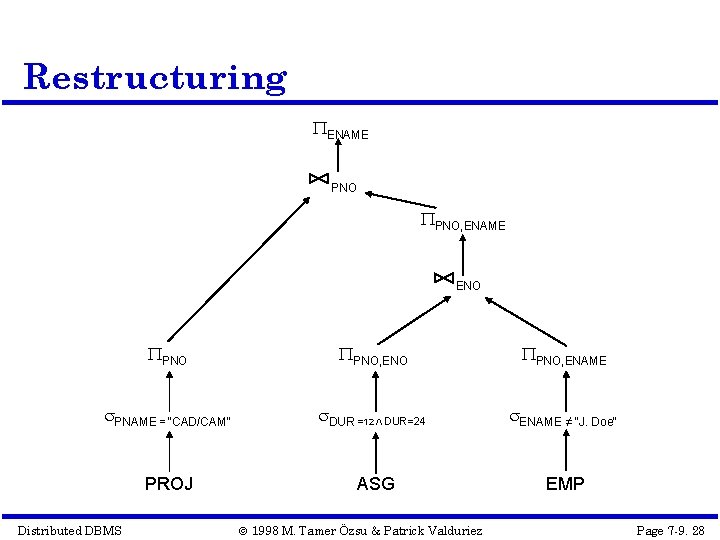

Restructuring

Rewritten query tree (figure): projection on ENAME at the root; joins on PNO and ENO; intermediate projections on PNO, ENAME; the selections PNAME = "CAD/CAM", DUR = 12 ∨ DUR = 24 and ENAME ≠ "J. Doe" are applied directly to PROJ, ASG and EMP respectively.

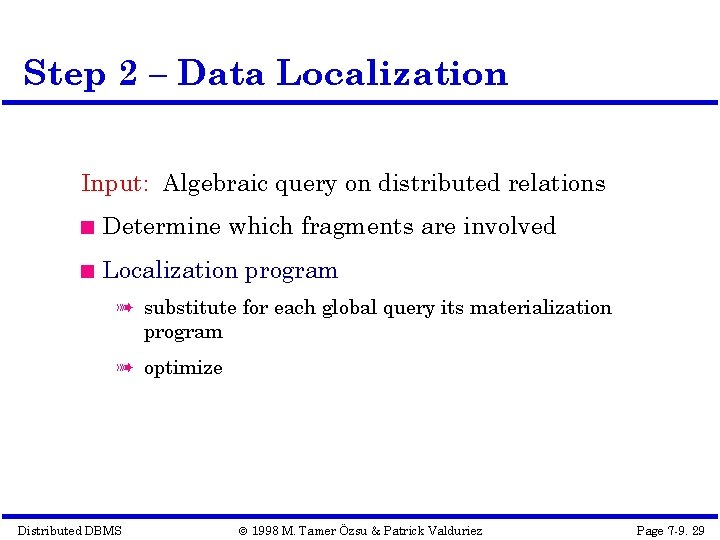

Step 2 – Data Localization

Input: algebraic query on distributed relations

- Determine which fragments are involved
- Localization program
  - substitute for each global relation its materialization program
  - optimize

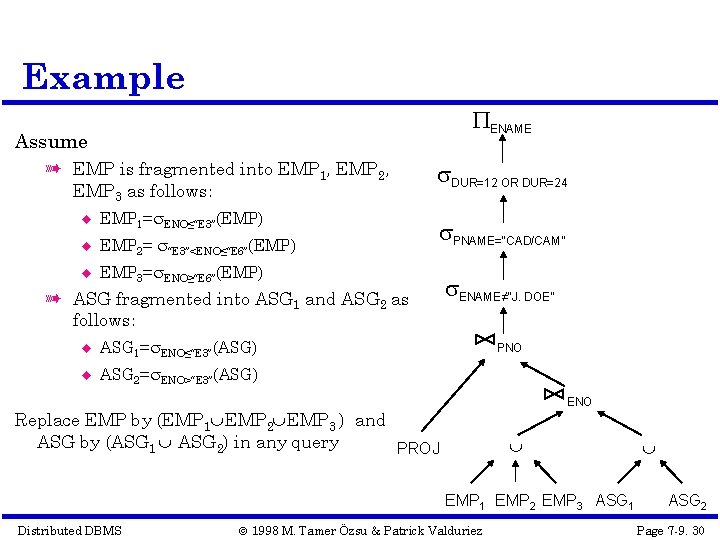

Example

Assume:

- EMP is fragmented into EMP1, EMP2, EMP3 as follows:
  - EMP1 = σ_{ENO≤"E3"}(EMP)
  - EMP2 = σ_{"E3"<ENO≤"E6"}(EMP)
  - EMP3 = σ_{ENO>"E6"}(EMP)
- ASG is fragmented into ASG1 and ASG2 as follows:
  - ASG1 = σ_{ENO≤"E3"}(ASG)
  - ASG2 = σ_{ENO>"E3"}(ASG)

Replace EMP by (EMP1 ∪ EMP2 ∪ EMP3) and ASG by (ASG1 ∪ ASG2) in any query.

Localized query tree: Π_{ENAME} over σ_{DUR=12 ∨ DUR=24}, σ_{PNAME="CAD/CAM"} and σ_{ENAME≠"J. Doe"}, over PROJ ⋈_{PNO} ((EMP1 ∪ EMP2 ∪ EMP3) ⋈_{ENO} (ASG1 ∪ ASG2)).

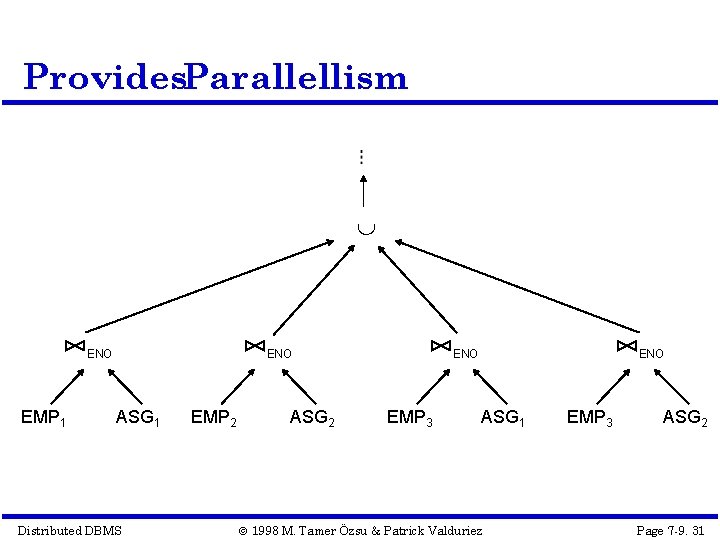

Provides Parallelism

Union of fragment joins that can run in parallel:

(EMP1 ⋈_{ENO} ASG1) ∪ (EMP2 ⋈_{ENO} ASG2) ∪ (EMP3 ⋈_{ENO} ASG1) ∪ (EMP3 ⋈_{ENO} ASG2)

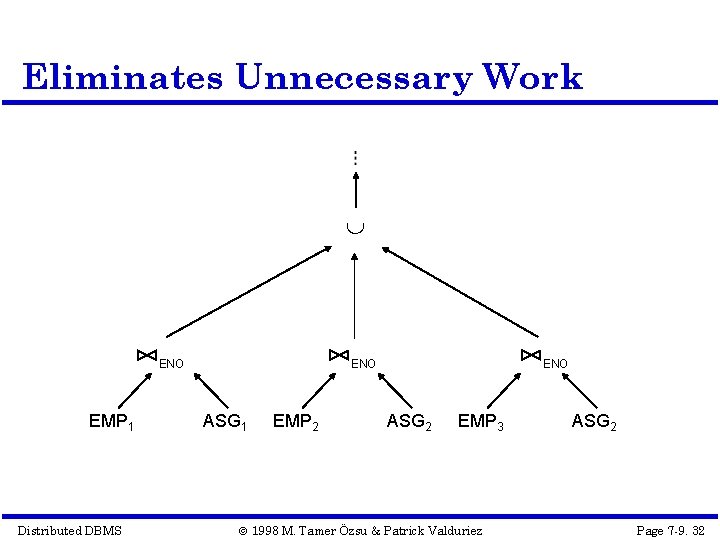

Eliminates Unnecessary Work

(EMP1 ⋈_{ENO} ASG1) ∪ (EMP2 ⋈_{ENO} ASG2) ∪ (EMP3 ⋈_{ENO} ASG2)

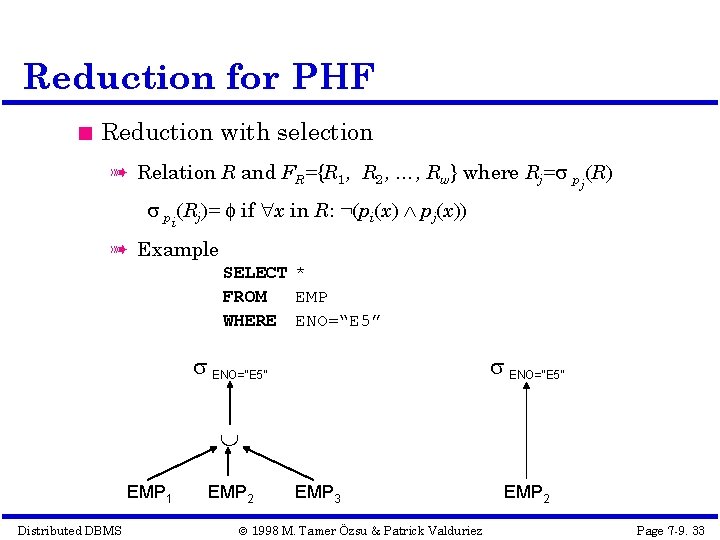

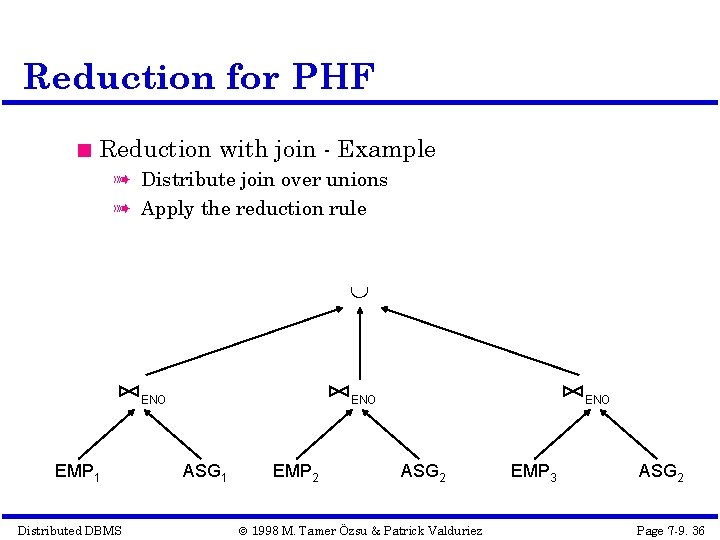

Reduction for PHF

- Reduction with selection
  - Relation R and F_R = {R1, R2, …, Rw} where Rj = σ_{pj}(R):
    σ_{pi}(Rj) = ∅ if ∀x in R: ¬(pi(x) ∧ pj(x))
  - Example:

        SELECT *
        FROM   EMP
        WHERE  ENO = "E5"

    σ_{ENO="E5"}(EMP1 ∪ EMP2 ∪ EMP3) reduces to σ_{ENO="E5"}(EMP2)
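The selection rule amounts to asking, for each fragment, whether any value can satisfy both the query predicate and the fragment predicate. The sketch below answers that by enumerating a small hypothetical ENO domain ("E1" to "E9"); the names are illustrative, and EMP3 is assumed to hold ENO > "E6" so that the fragments are disjoint.

```python
# Fragment-defining predicates on ENO (string comparison is enough
# for the single-digit employee numbers used here).
EMP_FRAGMENTS = {
    "EMP1": lambda eno: eno <= "E3",
    "EMP2": lambda eno: "E3" < eno <= "E6",
    "EMP3": lambda eno: eno > "E6",
}

# Hypothetical finite domain used to test predicate compatibility.
ENO_DOMAIN = [f"E{i}" for i in range(1, 10)]


def relevant_fragments(query_predicate, fragments=EMP_FRAGMENTS, domain=ENO_DOMAIN):
    """Keep a fragment only if some value satisfies both predicates."""
    return [
        name
        for name, fragment_predicate in fragments.items()
        if any(query_predicate(x) and fragment_predicate(x) for x in domain)
    ]


print(relevant_fragments(lambda eno: eno == "E5"))
```

A real optimizer would reason symbolically about the predicates instead of enumerating values; enumeration just keeps the sketch short.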

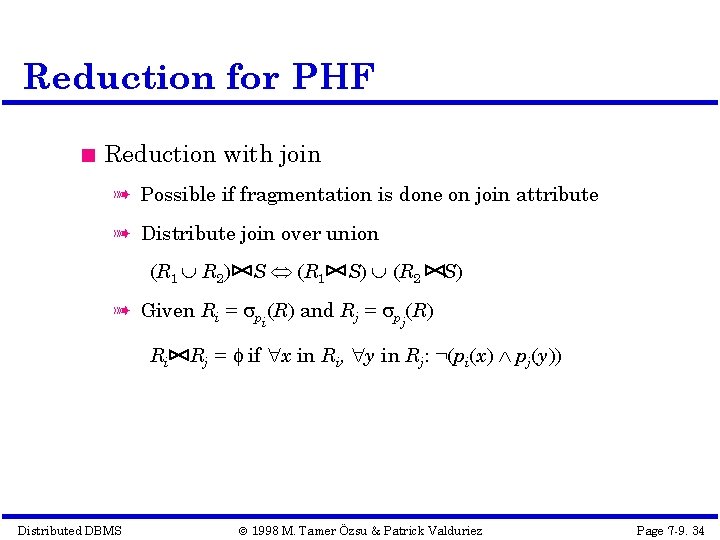

Reduction for PHF

- Reduction with join
  - Possible if fragmentation is done on the join attribute
  - Distribute join over union: (R1 ∪ R2) ⋈ S ⇔ (R1 ⋈ S) ∪ (R2 ⋈ S)
  - Given Ri = σ_{pi}(R) and Rj = σ_{pj}(R):
    Ri ⋈ Rj = ∅ if ∀x in Ri, ∀y in Rj: ¬(pi(x) ∧ pj(y))

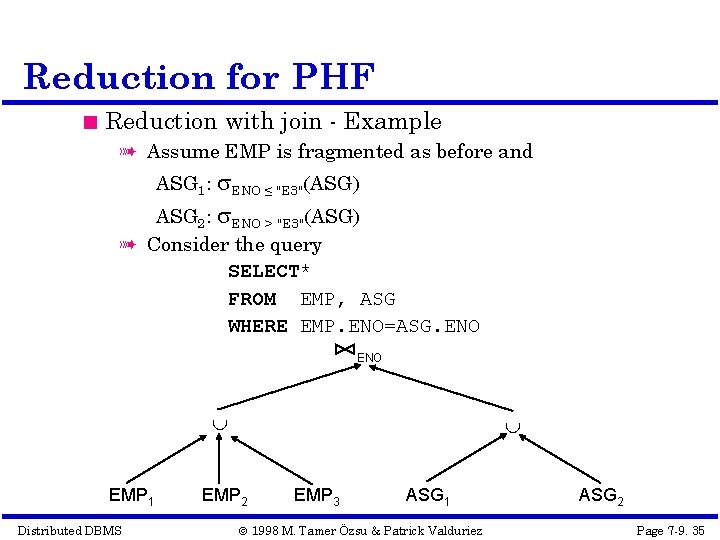

Reduction for PHF

- Reduction with join: example
  - Assume EMP is fragmented as before and
    - ASG1: σ_{ENO≤"E3"}(ASG)
    - ASG2: σ_{ENO>"E3"}(ASG)
  - Consider the query

        SELECT *
        FROM   EMP, ASG
        WHERE  EMP.ENO = ASG.ENO

  - Generic query: (EMP1 ∪ EMP2 ∪ EMP3) ⋈_{ENO} (ASG1 ∪ ASG2)

Reduction for PHF

- Reduction with join: example
  - Distribute join over unions
  - Apply the reduction rule

Reduced query: (EMP1 ⋈_{ENO} ASG1) ∪ (EMP2 ⋈_{ENO} ASG2) ∪ (EMP3 ⋈_{ENO} ASG2)
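The join reduction can be sketched the same way: after distributing the join over the unions, a pair of fragments survives only if some join-attribute value satisfies both fragment predicates. The enumeration over a hypothetical ENO domain and all names below are assumptions for illustration; EMP3 is taken as ENO > "E6".

```python
from itertools import product

EMP_FRAGMENTS = {
    "EMP1": lambda eno: eno <= "E3",
    "EMP2": lambda eno: "E3" < eno <= "E6",
    "EMP3": lambda eno: eno > "E6",
}
ASG_FRAGMENTS = {
    "ASG1": lambda eno: eno <= "E3",
    "ASG2": lambda eno: eno > "E3",
}

# Hypothetical finite domain of the join attribute ENO.
ENO_DOMAIN = [f"E{i}" for i in range(1, 10)]


def surviving_joins(left, right, domain):
    """Fragment pairs whose predicates can hold for a common join value."""
    return [
        (left_name, right_name)
        for (left_name, left_pred), (right_name, right_pred)
        in product(left.items(), right.items())
        if any(left_pred(x) and right_pred(x) for x in domain)
    ]


pairs = surviving_joins(EMP_FRAGMENTS, ASG_FRAGMENTS, ENO_DOMAIN)
print(pairs)
```

Three of the six candidate fragment joins remain, matching the reduced query on the slide.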

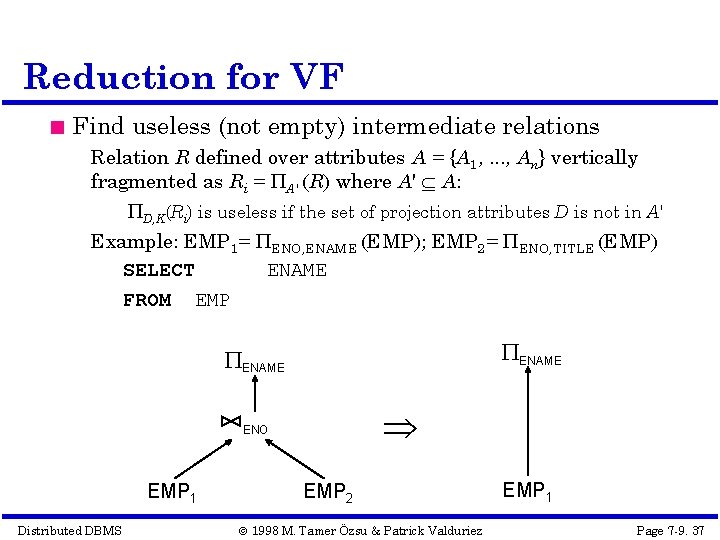

Reduction for VF

- Find useless (not empty) intermediate relations
- Relation R defined over attributes A = {A1, …, An} is vertically fragmented as Ri = Π_{A'}(R) where A' ⊆ A:
  Π_{D,K}(Ri) is useless if the set of projection attributes D is not in A'
- Example: EMP1 = Π_{ENO,ENAME}(EMP); EMP2 = Π_{ENO,TITLE}(EMP)

        SELECT ENAME
        FROM   EMP

  Π_{ENAME}(EMP1 ⋈_{ENO} EMP2) reduces to Π_{ENAME}(EMP1)

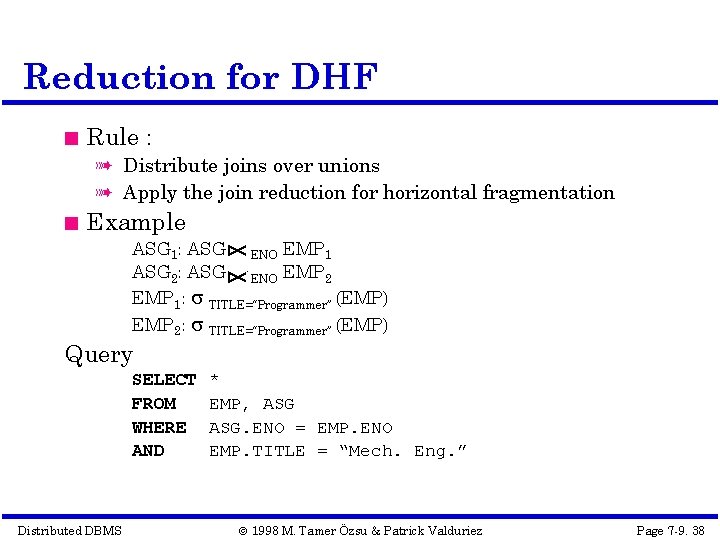

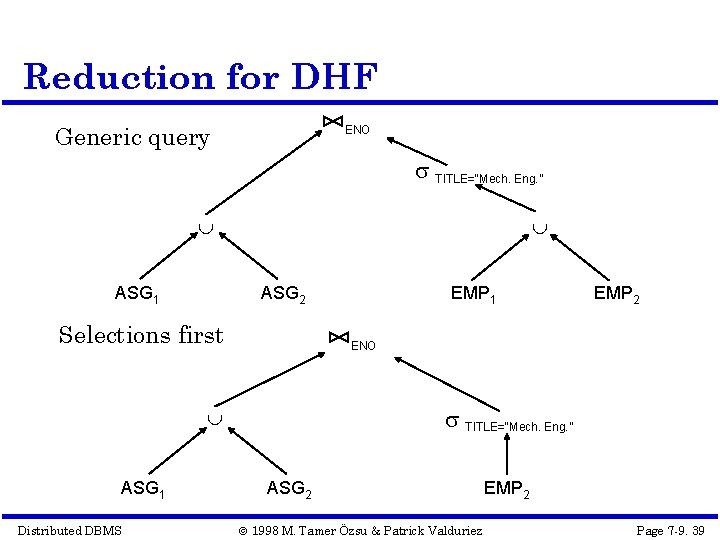

Reduction for DHF

- Rule:
  - Distribute joins over unions
  - Apply the join reduction for horizontal fragmentation
- Example
  - ASG1: ASG ⋉_{ENO} EMP1
  - ASG2: ASG ⋉_{ENO} EMP2
  - EMP1: σ_{TITLE="Programmer"}(EMP)
  - EMP2: σ_{TITLE≠"Programmer"}(EMP)
- Query

        SELECT *
        FROM   EMP, ASG
        WHERE  ASG.ENO = EMP.ENO
        AND    EMP.TITLE = "Mech. Eng."

Reduction for DHF

- Generic query: (ASG1 ∪ ASG2) ⋈_{ENO} σ_{TITLE="Mech. Eng."}(EMP1 ∪ EMP2)
- Selections first: (ASG1 ∪ ASG2) ⋈_{ENO} σ_{TITLE="Mech. Eng."}(EMP2)

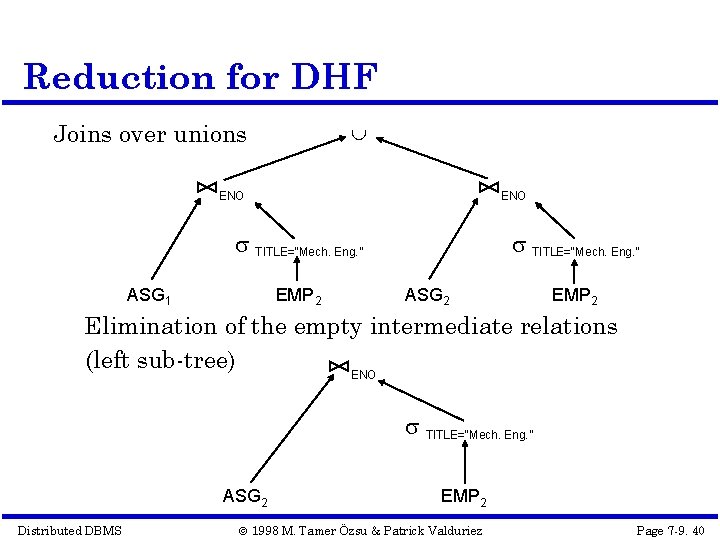

Reduction for DHF

- Joins over unions: (ASG1 ⋈_{ENO} σ_{TITLE="Mech. Eng."}(EMP2)) ∪ (ASG2 ⋈_{ENO} σ_{TITLE="Mech. Eng."}(EMP2))
- Elimination of the empty intermediate relations (left sub-tree): ASG2 ⋈_{ENO} σ_{TITLE="Mech. Eng."}(EMP2)

Reduction for HF

Combine the rules already specified:

- Remove empty relations generated by contradicting selections on horizontal fragments;
- Remove useless relations generated by projections on vertical fragments;
- Distribute joins over unions in order to isolate and remove useless joins.

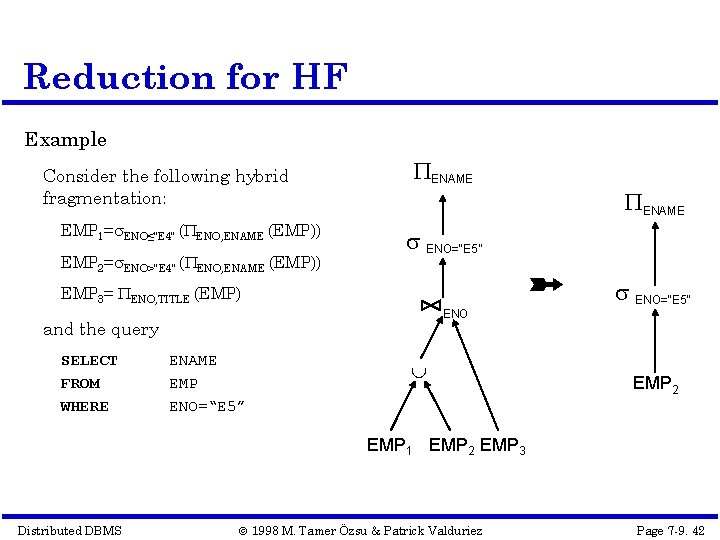

Reduction for HF

Example: consider the following hybrid fragmentation:

- EMP1 = σ_{ENO≤"E4"}(Π_{ENO,ENAME}(EMP))
- EMP2 = σ_{ENO>"E4"}(Π_{ENO,ENAME}(EMP))
- EMP3 = Π_{ENO,TITLE}(EMP)

and the query

    SELECT ENAME
    FROM   EMP
    WHERE  ENO = "E5"

- Generic query: Π_{ENAME}(σ_{ENO="E5"}((EMP1 ∪ EMP2) ⋈_{ENO} EMP3))
- Reduced query: Π_{ENAME}(σ_{ENO="E5"}(EMP2))

Step 3 – Global Query Optimization

Input: fragment query

- Find the best (not necessarily optimal) global schedule
  - Minimize a cost function
  - Distributed join processing
    - Bushy vs. linear trees
    - Which relation to ship where?
    - Ship-whole vs. ship-as-needed
  - Decide on the use of semijoins
    - Semijoin saves on communication at the expense of more local processing.
  - Join methods
    - nested loop vs. ordered joins (merge join or hash join)

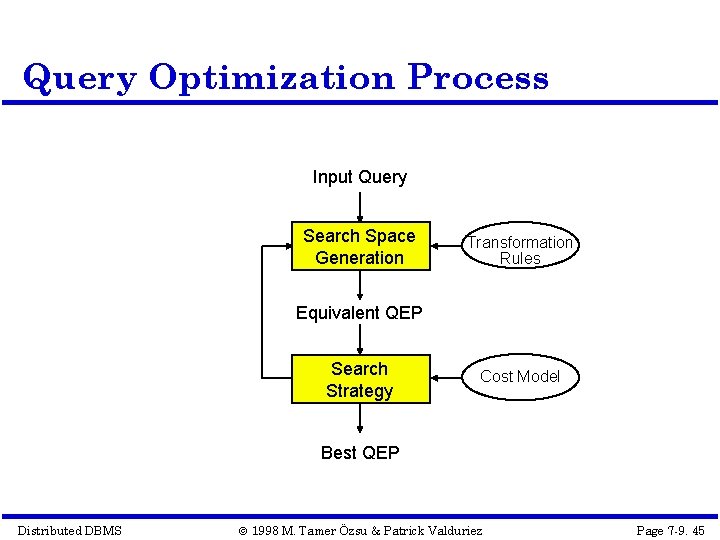

Cost-Based Optimization

- Solution space
  - The set of equivalent algebra expressions (query trees).
- Cost function (in terms of time)
  - I/O cost + CPU cost + communication cost
  - These might have different weights in different distributed environments (LAN vs. WAN).
  - Can also maximize throughput
- Search algorithm
  - How do we move inside the solution space?
  - Exhaustive search, heuristic algorithms (iterative improvement, simulated annealing, genetic, …)

Query Optimization Process

Input Query → Search Space Generation (uses Transformation Rules) → Equivalent QEP → Search Strategy (uses Cost Model) → Best QEP

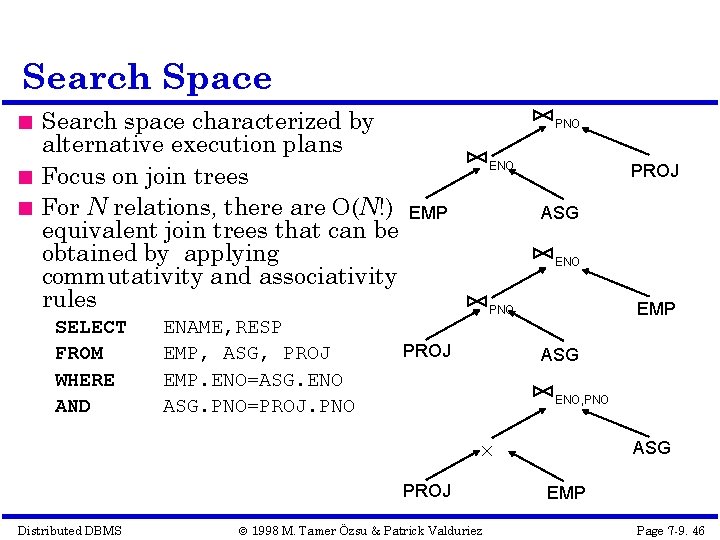

Search Space

- Search space characterized by alternative execution plans
- Focus on join trees
- For N relations, there are O(N!) equivalent join trees that can be obtained by applying commutativity and associativity rules

    SELECT ENAME, RESP
    FROM   EMP, ASG, PROJ
    WHERE  EMP.ENO = ASG.ENO
    AND    ASG.PNO = PROJ.PNO

Three equivalent join trees:

- (EMP ⋈_{ENO} ASG) ⋈_{PNO} PROJ
- (PROJ ⋈_{PNO} ASG) ⋈_{ENO} EMP
- (PROJ × EMP) ⋈_{ENO,PNO} ASG

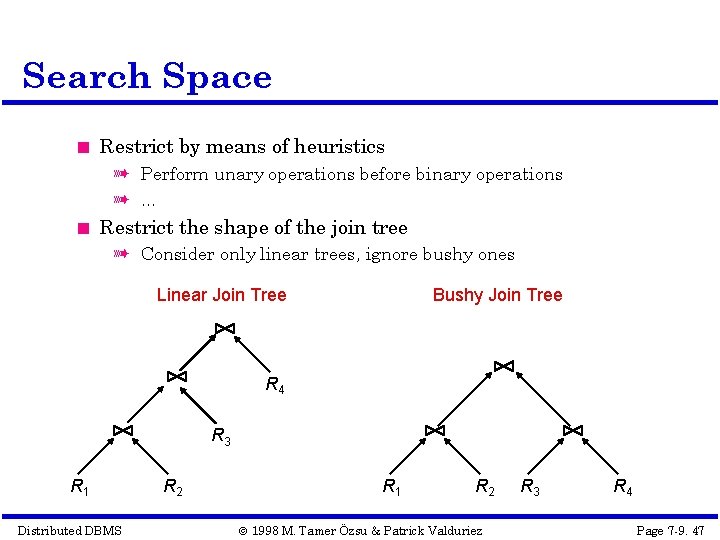

Search Space

- Restrict by means of heuristics
  - Perform unary operations before binary operations
  - …
- Restrict the shape of the join tree
  - Consider only linear trees, ignore bushy ones
- Linear join tree: ((R1 ⋈ R2) ⋈ R3) ⋈ R4
- Bushy join tree: (R1 ⋈ R2) ⋈ (R3 ⋈ R4)

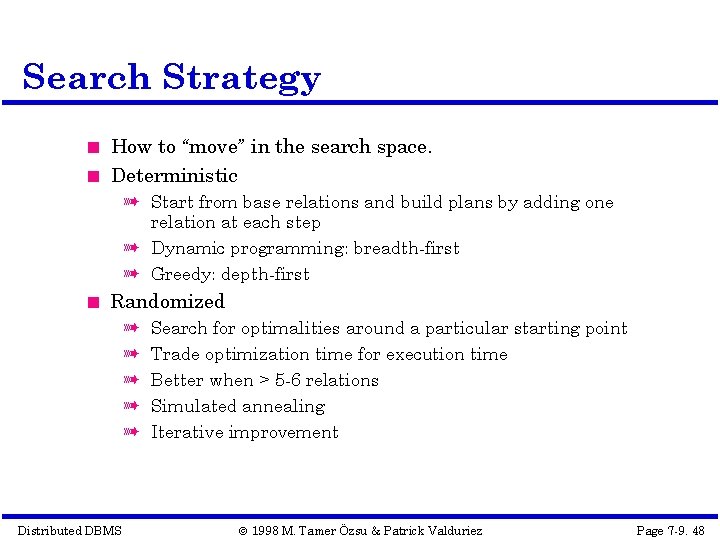

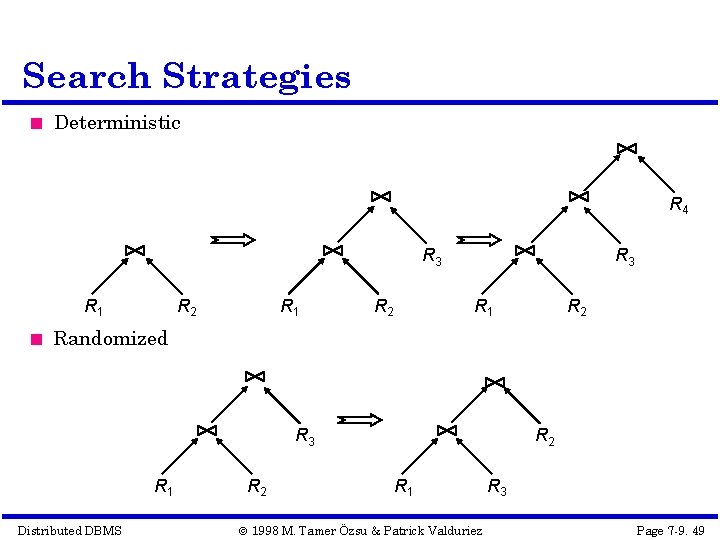

Search Strategy

How to “move” in the search space.

- Deterministic
  - Start from base relations and build plans by adding one relation at each step
  - Dynamic programming: breadth-first
  - Greedy: depth-first
- Randomized
  - Search for optimalities around a particular starting point
  - Trade optimization time for execution time
  - Better when > 5-6 relations
  - Simulated annealing
  - Iterative improvement
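The deterministic, breadth-first strategy can be sketched as dynamic programming over sets of relations, restricted to linear join trees. Everything numeric here is an assumption for illustration: card(PROJ), the two join selectivities, and the cost model (the sum of intermediate result cardinalities); the function names are hypothetical as well.

```python
from fractions import Fraction
from itertools import combinations, permutations
from math import prod

# card(EMP) and card(ASG) follow the earlier cost example; PROJ is made up.
CARD = {"EMP": 400, "ASG": 1000, "PROJ": 50}
# Assumed join selectivities; a pair without a predicate is a Cartesian product.
SF = {
    frozenset(("EMP", "ASG")): Fraction(1, 400),
    frozenset(("ASG", "PROJ")): Fraction(1, 50),
}


def result_card(relations):
    """Estimated cardinality of joining all the given relations."""
    size = Fraction(prod(CARD[r] for r in relations))
    for a, b in combinations(sorted(relations), 2):
        size *= SF.get(frozenset((a, b)), 1)
    return size


def plan_cost(order):
    """Cost of a linear plan: sum of its intermediate result sizes."""
    return sum(result_card(order[:i]) for i in range(2, len(order) + 1))


def best_linear_plan(relations):
    """Build the best plan for every subset of size 2, 3, ... (breadth-first)."""
    best = {frozenset([r]): (Fraction(0), (r,)) for r in relations}
    for k in range(2, len(relations) + 1):
        for subset in combinations(relations, k):
            key = frozenset(subset)
            size = result_card(key)
            # Extend the best plan of each (k-1)-subset by the missing relation.
            best[key] = min(
                (best[key - {r}][0] + size, best[key - {r}][1] + (r,))
                for r in subset
            )
    return best[frozenset(relations)]


def brute_force_cost(relations):
    """Exhaustive check over all N! linear orders."""
    return min(plan_cost(order) for order in permutations(relations))


RELATIONS = ["EMP", "ASG", "PROJ"]
cost, order = best_linear_plan(RELATIONS)
print(cost, order, brute_force_cost(RELATIONS))
```

The dynamic program examines each subset once instead of every permutation, and it never keeps a plan that starts with the EMP × PROJ Cartesian product because that subset is far more expensive than the alternatives.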

Search Strategies

- Deterministic (figure): plans grow one relation at a time, from R1 ⋈ R2 to (R1 ⋈ R2) ⋈ R3 to ((R1 ⋈ R2) ⋈ R3) ⋈ R4
- Randomized (figure): a complete plan over R1, R2 and R3 is transformed into a neighbouring plan by exchanging relations

Cost Functions

- Total Time (or Total Cost)
  - Reduce each cost (in terms of time) component individually
  - Do as little of each cost component as possible
  - Optimizes the utilization of the resources, which increases system throughput
- Response Time
  - Do as many things as possible in parallel
  - May increase total time because of increased total activity

Total Cost

Summation of all cost factors:

- Total cost = CPU cost + I/O cost + communication cost
- CPU cost = unit instruction cost × no. of instructions
- I/O cost = unit disk I/O cost × no. of disk I/Os
- communication cost = message initiation + transmission

Total Cost Factors

- Wide area network
  - message initiation and transmission costs high
  - local processing cost is low (fast mainframes or minicomputers)
  - ratio of communication to I/O costs = 20:1
- Local area networks
  - communication and local processing costs are more or less equal
  - ratio = 1:1.6

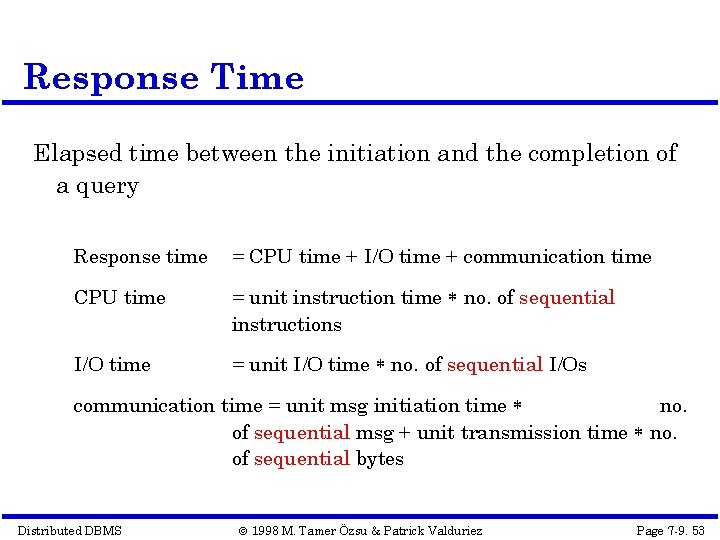

Response Time

Elapsed time between the initiation and the completion of a query:

- Response time = CPU time + I/O time + communication time
- CPU time = unit instruction time × no. of sequential instructions
- I/O time = unit I/O time × no. of sequential I/Os
- communication time = unit msg initiation time × no. of sequential msgs + unit transmission time × no. of sequential bytes

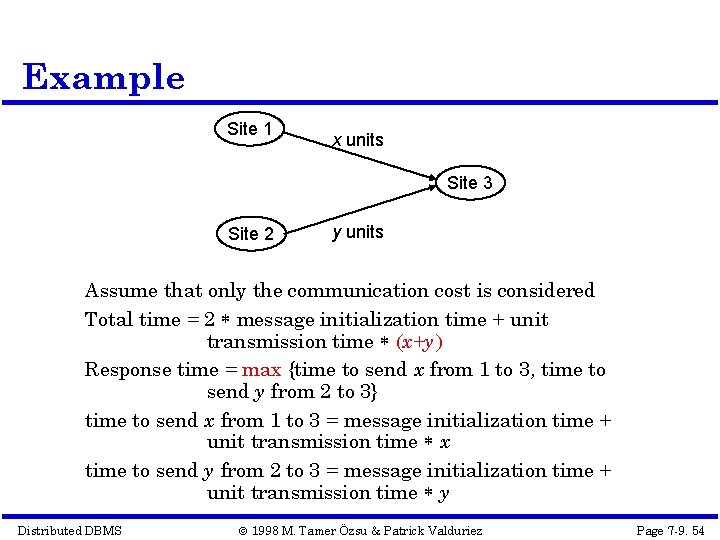

Example

Site 1 sends x units to Site 3; Site 2 sends y units to Site 3.

Assume that only the communication cost is considered.

- Total time = 2 × message initialization time + unit transmission time × (x + y)
- Response time = max {time to send x from 1 to 3, time to send y from 2 to 3}
- time to send x from 1 to 3 = message initialization time + unit transmission time × x
- time to send y from 2 to 3 = message initialization time + unit transmission time × y
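To see how the two measures diverge, the formulas can be evaluated for concrete numbers. The values below (initialization time 5, unit transmission time 1, x = 100, y = 40) are invented for illustration, as are the function names.

```python
def send_time(units: float, init_time: float, unit_time: float) -> float:
    """Time for one transfer: one message initialization plus transmission."""
    return init_time + unit_time * units


def total_time(x: float, y: float, init_time: float, unit_time: float) -> float:
    """Sum of both transfers: every unit of work counts."""
    return 2 * init_time + unit_time * (x + y)


def response_time(x: float, y: float, init_time: float, unit_time: float) -> float:
    """The transfers run in parallel, so the slower one decides."""
    return max(
        send_time(x, init_time, unit_time),
        send_time(y, init_time, unit_time),
    )


# Hypothetical parameters.
INIT, UNIT, X, Y = 5, 1, 100, 40
print(total_time(X, Y, INIT, UNIT), response_time(X, Y, INIT, UNIT))
```

With these numbers the total time is 150 while the response time is 105, so an optimizer minimizing response time may accept plans that do more total work as long as that work overlaps.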

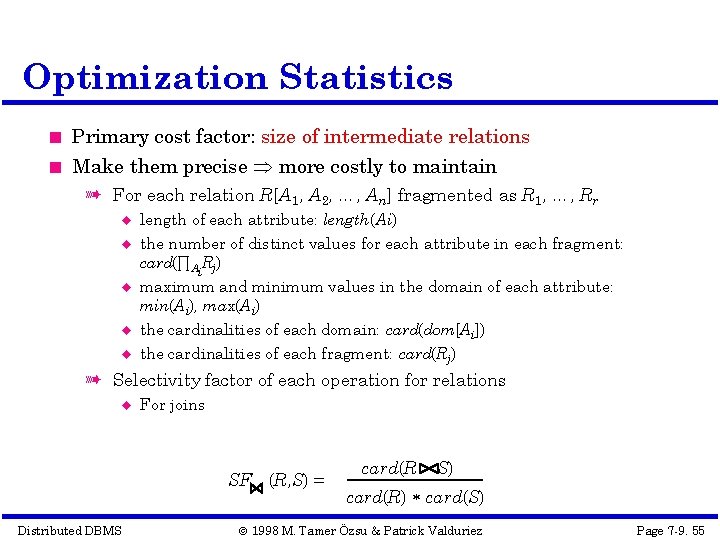

Optimization Statistics

- Primary cost factor: size of intermediate relations
- Making them precise ⇒ more costly to maintain
- For each relation R[A1, A2, …, An] fragmented as R1, …, Rr:
  - length of each attribute: length(Ai)
  - the number of distinct values for each attribute in each fragment: card(Π_{Ai}(Rj))
  - maximum and minimum values in the domain of each attribute: min(Ai), max(Ai)
  - the cardinalities of each domain: card(dom[Ai])
  - the cardinalities of each fragment: card(Rj)
- Selectivity factor of each operation for relations
  - For joins: SF⋈(R, S) = card(R ⋈ S) / (card(R) × card(S))

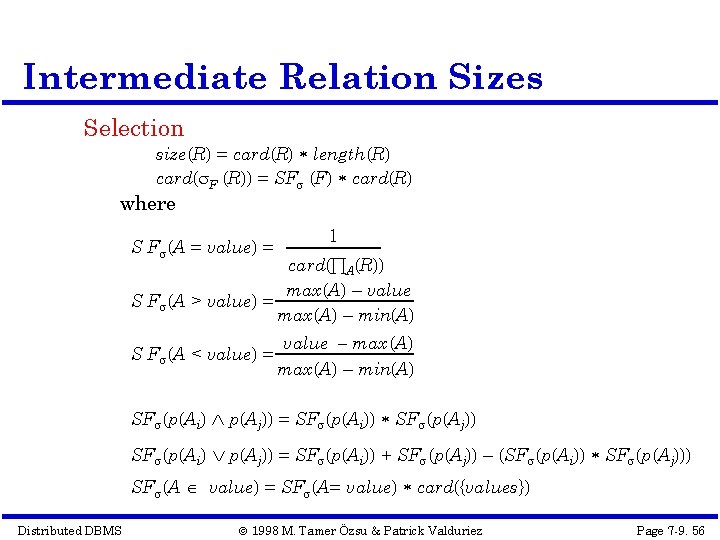

Intermediate Relation Sizes

Selection

- size(R) = card(R) × length(R)
- card(σ_F(R)) = SFσ(F) × card(R), where
  - SFσ(A = value) = 1 / card(Π_A(R))
  - SFσ(A > value) = (max(A) – value) / (max(A) – min(A))
  - SFσ(A < value) = (value – min(A)) / (max(A) – min(A))
  - SFσ(p(Ai) ∧ p(Aj)) = SFσ(p(Ai)) × SFσ(p(Aj))
  - SFσ(p(Ai) ∨ p(Aj)) = SFσ(p(Ai)) + SFσ(p(Aj)) – (SFσ(p(Ai)) × SFσ(p(Aj)))
  - SFσ(A ∈ {values}) = SFσ(A = value) × card({values})
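The selectivity formulas translate directly into small functions. This is a sketch with hypothetical names; it takes value – min(A) as the numerator for A < value, so that the two range selectivities add up to 1, and the DUR range used in the example (0 to 50) is invented.

```python
def sf_equals(distinct_values: int) -> float:
    """SF(A = value) = 1 / card(Pi_A(R)), assuming uniform distribution."""
    return 1 / distinct_values


def sf_greater(value: float, minimum: float, maximum: float) -> float:
    """SF(A > value) = (max(A) - value) / (max(A) - min(A))."""
    return (maximum - value) / (maximum - minimum)


def sf_less(value: float, minimum: float, maximum: float) -> float:
    """SF(A < value) = (value - min(A)) / (max(A) - min(A))."""
    return (value - minimum) / (maximum - minimum)


def sf_and(sf_a: float, sf_b: float) -> float:
    """Conjunction of independent predicates."""
    return sf_a * sf_b


def sf_or(sf_a: float, sf_b: float) -> float:
    """Disjunction of independent predicates."""
    return sf_a + sf_b - sf_a * sf_b


def sf_in(distinct_values: int, number_of_values: int) -> float:
    """SF(A in {values}) = SF(A = value) * card({values})."""
    return sf_equals(distinct_values) * number_of_values


def selection_card(card: int, selectivity: float) -> float:
    """card(sigma_F(R)) = SF(F) * card(R)."""
    return selectivity * card


# Hypothetical statistics: DUR ranges over [0, 50], card(ASG) = 1000.
estimate = selection_card(1000, sf_greater(37, 0, 50))
print(round(estimate))
```

These estimates rest on the uniformity and independence assumptions listed earlier; skewed data can make them badly wrong.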

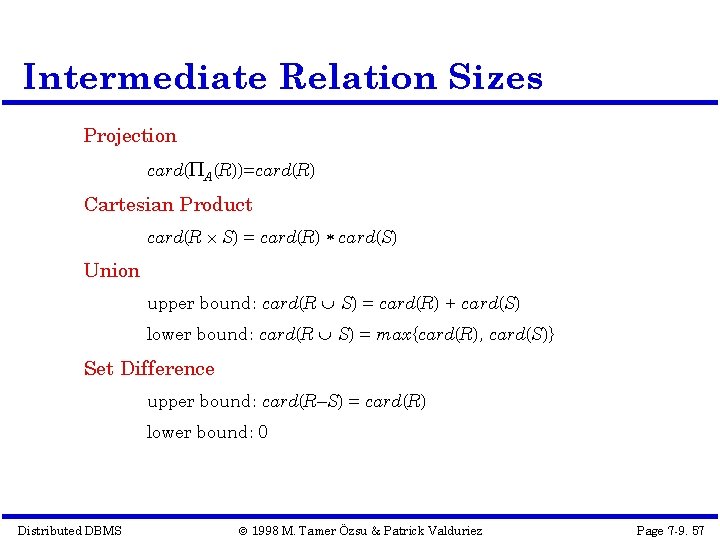

Intermediate Relation Sizes

- Projection
  - card(Π_A(R)) = card(R)
- Cartesian Product
  - card(R × S) = card(R) × card(S)
- Union
  - upper bound: card(R ∪ S) = card(R) + card(S)
  - lower bound: card(R ∪ S) = max{card(R), card(S)}
- Set Difference
  - upper bound: card(R – S) = card(R)
  - lower bound: 0

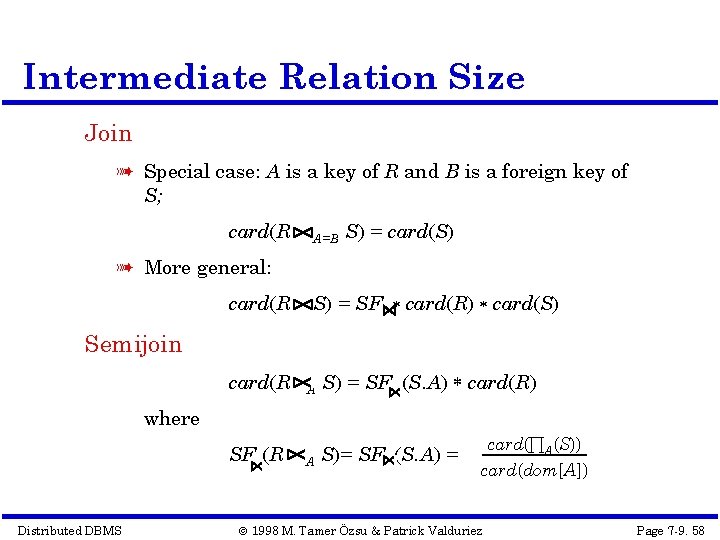

Intermediate Relation Size

- Join
  - Special case: A is a key of R and B is a foreign key of S: card(R ⋈_{A=B} S) = card(S)
  - More general: card(R ⋈ S) = SF⋈ × card(R) × card(S)
- Semijoin
  - card(R ⋉_A S) = SF⋉(S.A) × card(R)
  - where SF⋉(R ⋉_A S) = SF⋉(S.A) = card(Π_A(S)) / card(dom[A])

Centralized Query Optimization

- INGRES
  - dynamic
  - interpretive
- System R
  - static
  - exhaustive search

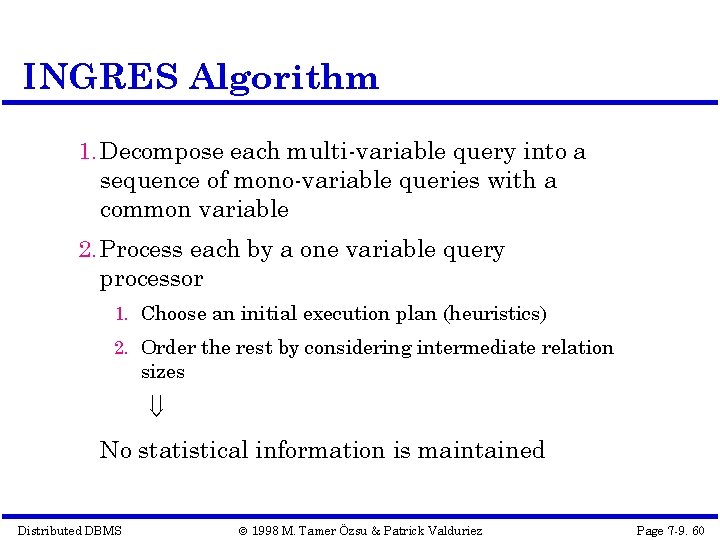

INGRES Algorithm

1. Decompose each multi-variable query into a sequence of mono-variable queries with a common variable
2. Process each by a one-variable query processor
   - Choose an initial execution plan (heuristics)
   - Order the rest by considering intermediate relation sizes

No statistical information is maintained.

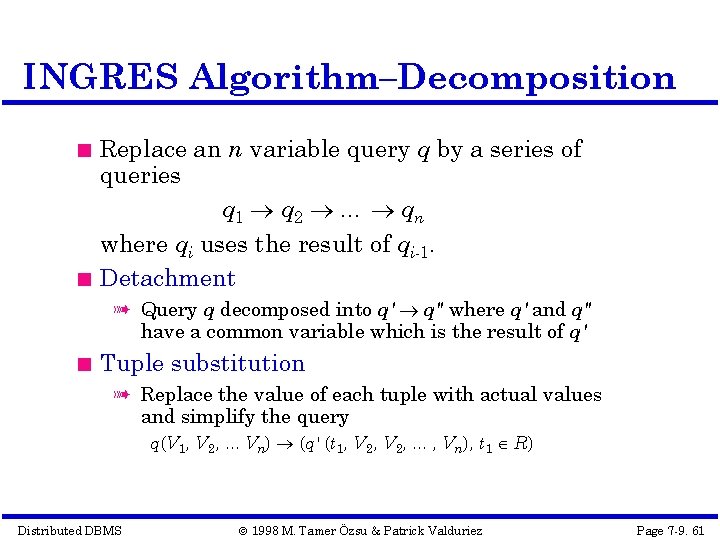

INGRES Algorithm – Decomposition

- Replace an n-variable query q by a series of queries q1 → q2 → … → qn, where qi uses the result of qi-1.
- Detachment
  - Query q is decomposed into q' → q'', where q' and q'' have a common variable which is the result of q'
- Tuple substitution
  - Replace the value of each tuple with actual values and simplify the query:
    q(V1, V2, …, Vn) → (q'(t1, V2, …, Vn), t1 ∈ R)

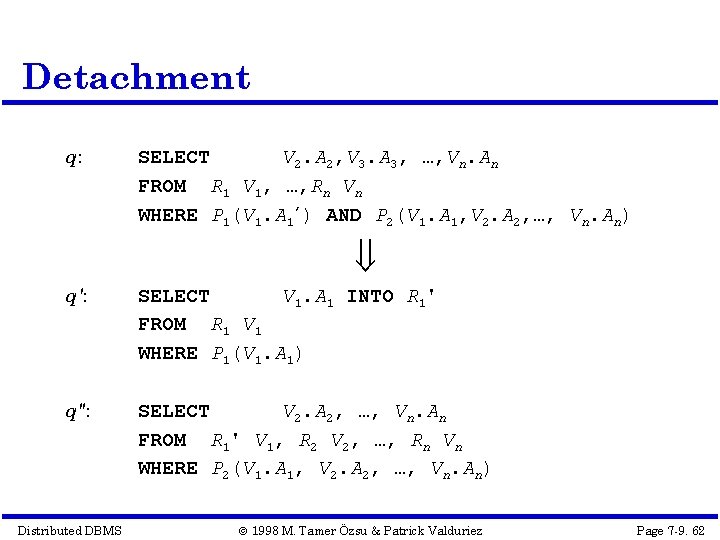

Detachment

    q:   SELECT V2.A2, V3.A3, …, Vn.An
         FROM   R1 V1, …, Rn Vn
         WHERE  P1(V1.A1')
         AND    P2(V1.A1, V2.A2, …, Vn.An)

    q':  SELECT V1.A1 INTO R1'
         FROM   R1 V1
         WHERE  P1(V1.A1)

    q'': SELECT V2.A2, …, Vn.An
         FROM   R1' V1, R2 V2, …, Rn Vn
         WHERE  P2(V1.A1, V2.A2, …, Vn.An)

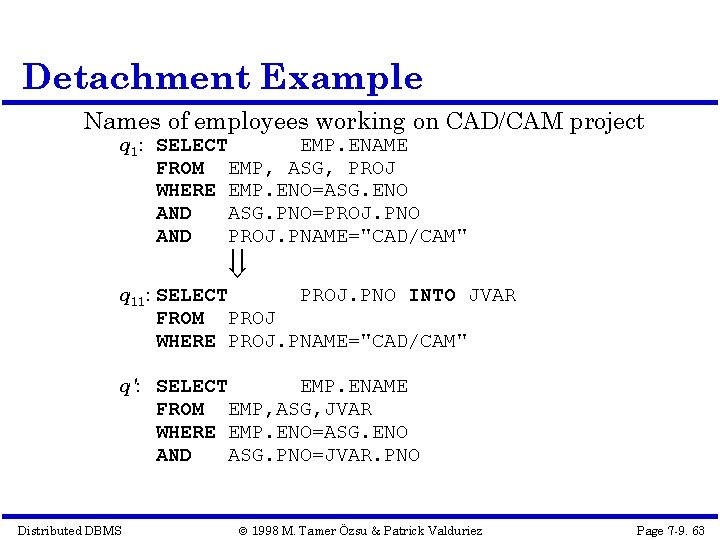

Detachment Example

Names of employees working on the CAD/CAM project:

    q1:  SELECT EMP.ENAME
         FROM   EMP, ASG, PROJ
         WHERE  EMP.ENO = ASG.ENO
         AND    ASG.PNO = PROJ.PNO
         AND    PROJ.PNAME = "CAD/CAM"

    q11: SELECT PROJ.PNO INTO JVAR
         FROM   PROJ
         WHERE  PROJ.PNAME = "CAD/CAM"

    q':  SELECT EMP.ENAME
         FROM   EMP, ASG, JVAR
         WHERE  EMP.ENO = ASG.ENO
         AND    ASG.PNO = JVAR.PNO

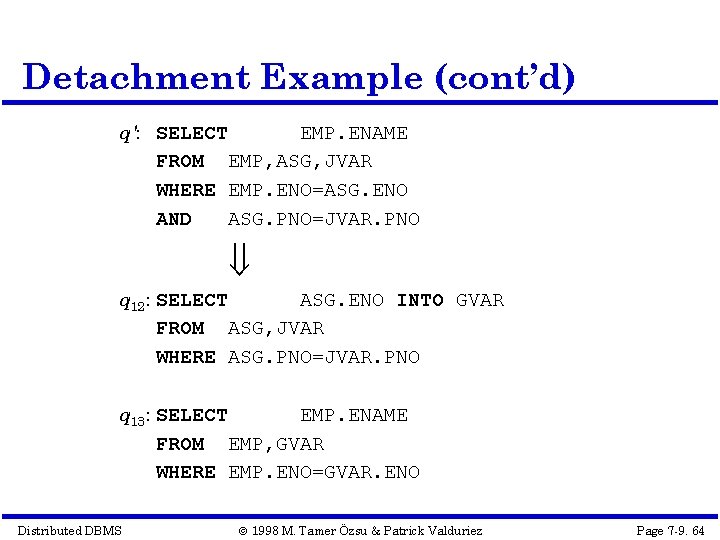

Detachment Example (cont’d)

    q':  SELECT EMP.ENAME
         FROM   EMP, ASG, JVAR
         WHERE  EMP.ENO = ASG.ENO
         AND    ASG.PNO = JVAR.PNO

    q12: SELECT ASG.ENO INTO GVAR
         FROM   ASG, JVAR
         WHERE  ASG.PNO = JVAR.PNO

    q13: SELECT EMP.ENAME
         FROM   EMP, GVAR
         WHERE  EMP.ENO = GVAR.ENO

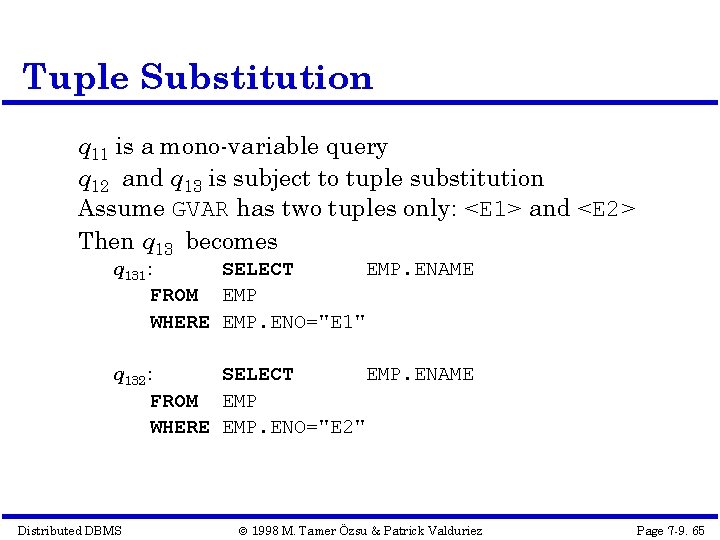

Tuple Substitution

- q11 is a mono-variable query
- q12 and q13 are subject to tuple substitution
- Assume GVAR has two tuples only: <E1> and <E2>. Then q13 becomes

    q131: SELECT EMP.ENAME
          FROM   EMP
          WHERE  EMP.ENO = "E1"

    q132: SELECT EMP.ENAME
          FROM   EMP
          WHERE  EMP.ENO = "E2"
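Tuple substitution can be pictured as a loop that instantiates the two-variable query once per GVAR tuple. The sketch below uses invented EMP rows and hypothetical function names; it mimics q131 and q132 rather than reproducing the INGRES implementation.

```python
# Hypothetical contents of EMP; GVAR holds the two tuples from the slide.
EMP = [
    {"ENO": "E1", "ENAME": "J. Doe"},
    {"ENO": "E2", "ENAME": "M. Smith"},
    {"ENO": "E3", "ENAME": "A. Lee"},
]
GVAR = [{"ENO": "E1"}, {"ENO": "E2"}]


def mono_variable_query(emp_rows, eno):
    """One instantiated query: SELECT ENAME FROM EMP WHERE ENO = <eno>."""
    return [row["ENAME"] for row in emp_rows if row["ENO"] == eno]


def tuple_substitution(emp_rows, gvar_rows):
    """Run q13 by substituting each GVAR tuple into the qualification."""
    names = []
    for gvar_tuple in gvar_rows:
        names.extend(mono_variable_query(emp_rows, gvar_tuple["ENO"]))
    return names


print(tuple_substitution(EMP, GVAR))
```

The number of instantiated queries equals the cardinality of the substituted relation, which is why the algorithm prefers to substitute the smallest one.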

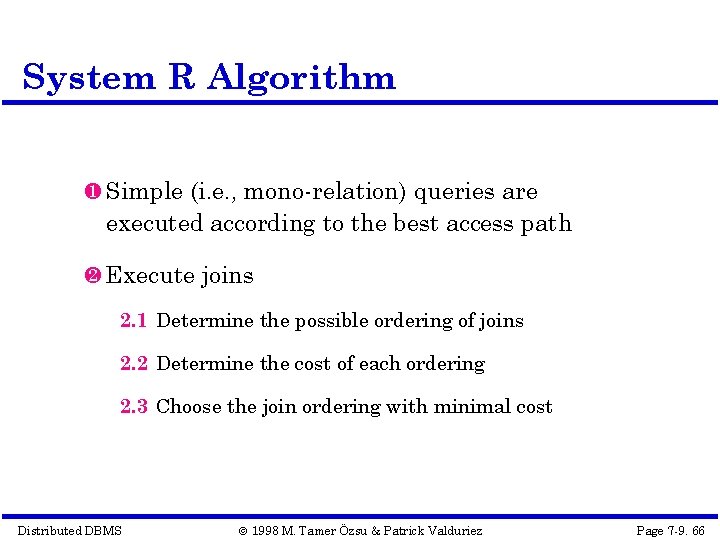

System R Algorithm

1. Simple (i.e., mono-relation) queries are executed according to the best access path
2. Execute joins
   - 2.1 Determine the possible ordering of joins
   - 2.2 Determine the cost of each ordering
   - 2.3 Choose the join ordering with minimal cost

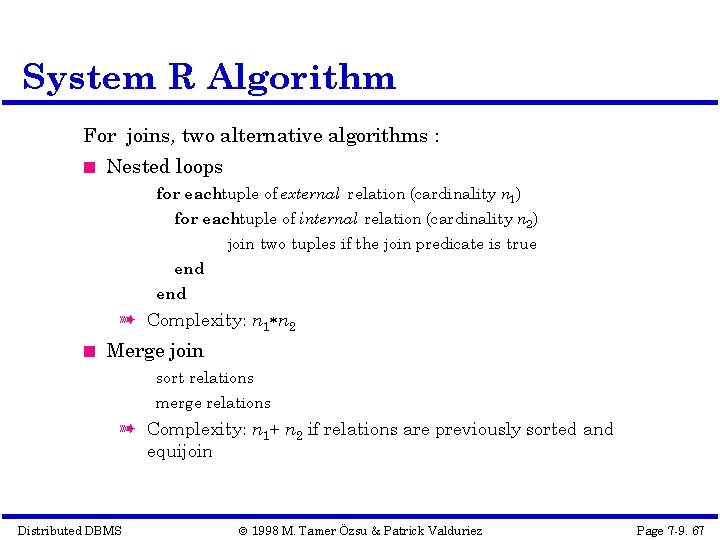

System R Algorithm

For joins, two alternative algorithms:

- Nested loops

        for each tuple of external relation (cardinality n1)
            for each tuple of internal relation (cardinality n2)
                join two tuples if the join predicate is true
            end
        end

  - Complexity: n1 × n2
- Merge join

        sort relations
        merge relations

  - Complexity: n1 + n2 if relations are previously sorted and equijoin
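Both join methods fit in a few lines when relations are lists of dictionaries. This is a minimal sketch with hypothetical function names and sample rows; the merge join assumes both inputs are already sorted on the join attribute and that the predicate is an equality.

```python
def nested_loop_join(outer, inner, key):
    """Compare every outer tuple with every inner tuple: n1 * n2 tests."""
    result = []
    for outer_row in outer:
        for inner_row in inner:
            if outer_row[key] == inner_row[key]:
                result.append({**outer_row, **inner_row})
    return result


def merge_join(left, right, key):
    """Equijoin of two inputs that are already sorted on `key`."""
    result = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i][key] < right[j][key]:
            i += 1
        elif left[i][key] > right[j][key]:
            j += 1
        else:
            # Pair every left row of this key group with every right row.
            value = left[i][key]
            group_start = j
            while i < len(left) and left[i][key] == value:
                j = group_start
                while j < len(right) and right[j][key] == value:
                    result.append({**left[i], **right[j]})
                    j += 1
                i += 1
    return result


# Hypothetical rows, both sorted on ENO.
EMP = [{"ENO": "E1", "ENAME": "J. Doe"}, {"ENO": "E2", "ENAME": "M. Smith"},
       {"ENO": "E3", "ENAME": "A. Lee"}]
ASG = [{"ENO": "E1", "PNO": "P1"}, {"ENO": "E2", "PNO": "P1"},
       {"ENO": "E2", "PNO": "P2"}, {"ENO": "E4", "PNO": "P3"}]

print(nested_loop_join(EMP, ASG, "ENO") == merge_join(EMP, ASG, "ENO"))
```

When the join attribute is unique on one side, the merge phase reads each input once, which is where the n1 + n2 figure comes from; duplicate keys on both sides add the cost of producing the matching pairs.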

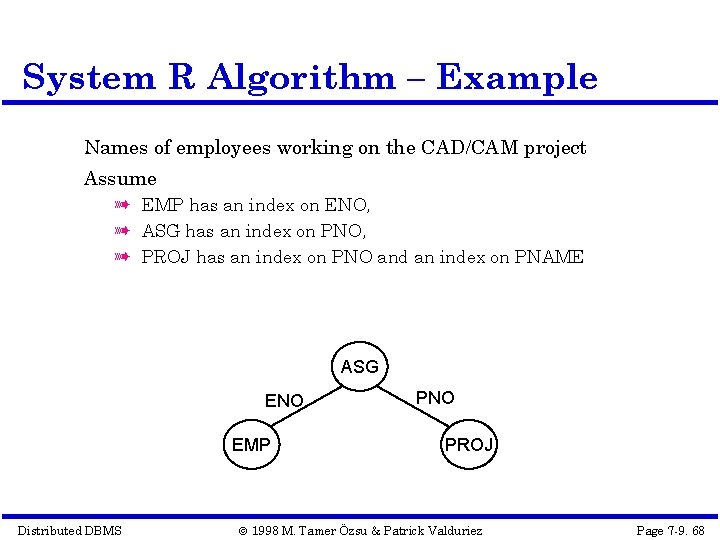

System R Algorithm – Example

Names of employees working on the CAD/CAM project.

Assume:

- EMP has an index on ENO,
- ASG has an index on PNO,
- PROJ has an index on PNO and an index on PNAME

Join graph: EMP –ENO– ASG –PNO– PROJ

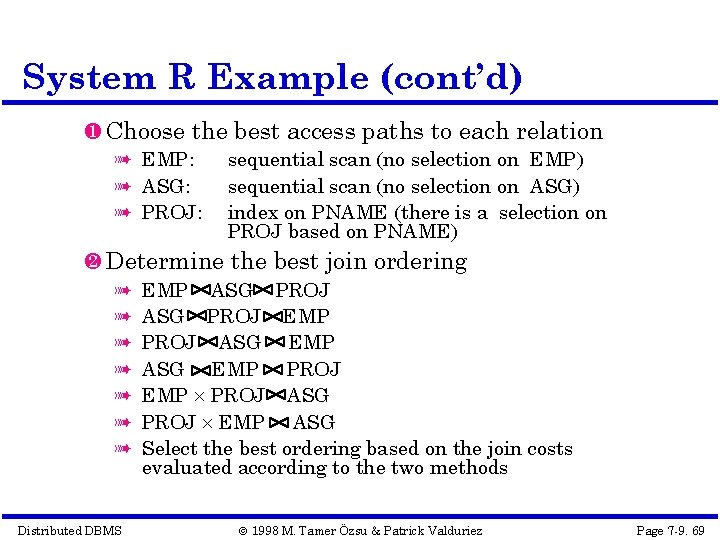

System R Example (cont’d)

- Choose the best access paths to each relation
  - EMP: sequential scan (no selection on EMP)
  - ASG: sequential scan (no selection on ASG)
  - PROJ: index on PNAME (there is a selection on PROJ based on PNAME)
- Determine the best join ordering
  - enumerate the candidate orderings of EMP, ASG and PROJ, including those that start with a Cartesian product of EMP and PROJ
  - select the best ordering based on the join costs evaluated according to the two methods

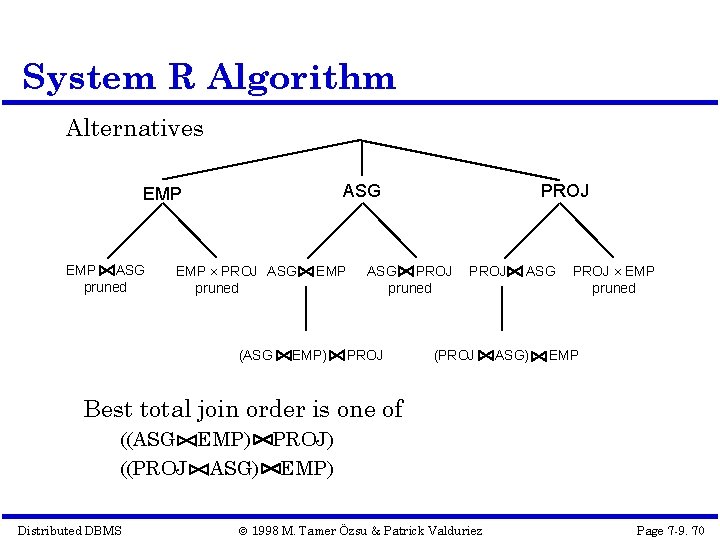

System R Algorithm

Alternatives:

- Starting from ASG: ASG ⋈ EMP is kept; ASG ⋈ PROJ is pruned
- Starting from EMP: EMP ⋈ ASG is pruned; EMP × PROJ is pruned
- Starting from PROJ: PROJ ⋈ ASG is kept; PROJ × EMP is pruned
- Third level: (ASG ⋈ EMP) ⋈ PROJ and (PROJ ⋈ ASG) ⋈ EMP

The best total join order is one of ((ASG ⋈ EMP) ⋈ PROJ) and ((PROJ ⋈ ASG) ⋈ EMP).

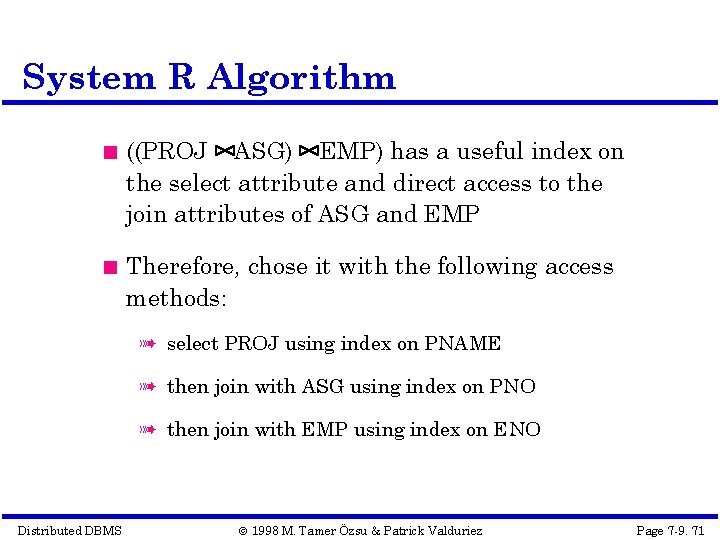

System R Algorithm

- ((PROJ ⋈ ASG) ⋈ EMP) has a useful index on the select attribute and direct access to the join attributes of ASG and EMP
- Therefore, choose it with the following access methods:
  - select PROJ using index on PNAME
  - then join with ASG using index on PNO
  - then join with EMP using index on ENO

Join Ordering in Fragment Queries

- Ordering joins
  - Distributed INGRES
  - System R*
- Semijoin ordering
  - SDD-1

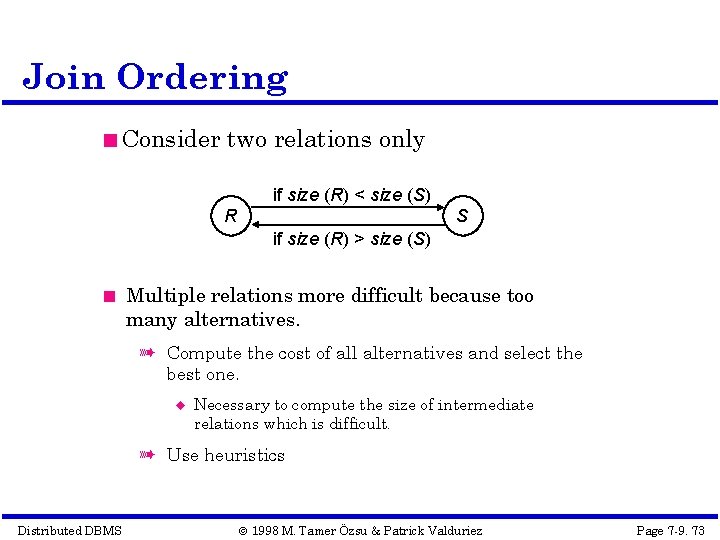

Join Ordering

- Consider two relations only
  - if size(R) < size(S), ship R to the site of S
  - if size(R) > size(S), ship S to the site of R
- Multiple relations: more difficult because there are too many alternatives.
  - Compute the cost of all alternatives and select the best one.
    - Necessary to compute the size of intermediate relations, which is difficult.
  - Use heuristics

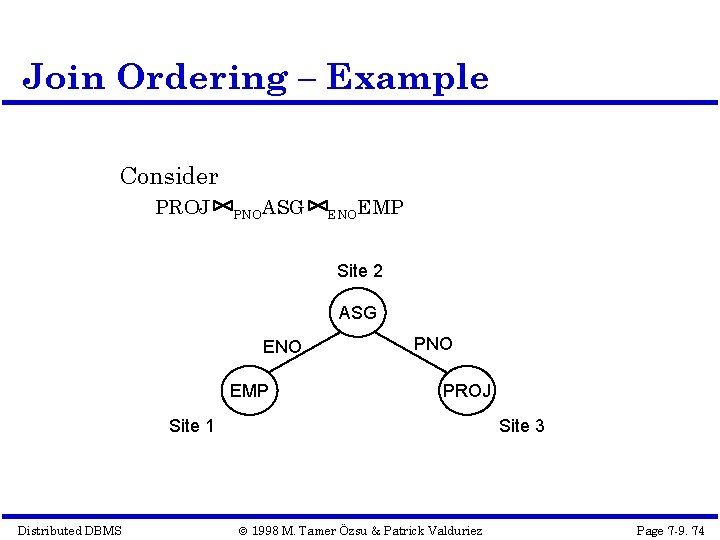

Join Ordering – Example

Consider PROJ ⋈_{PNO} ASG ⋈_{ENO} EMP, with EMP stored at Site 1, ASG at Site 2 and PROJ at Site 3.

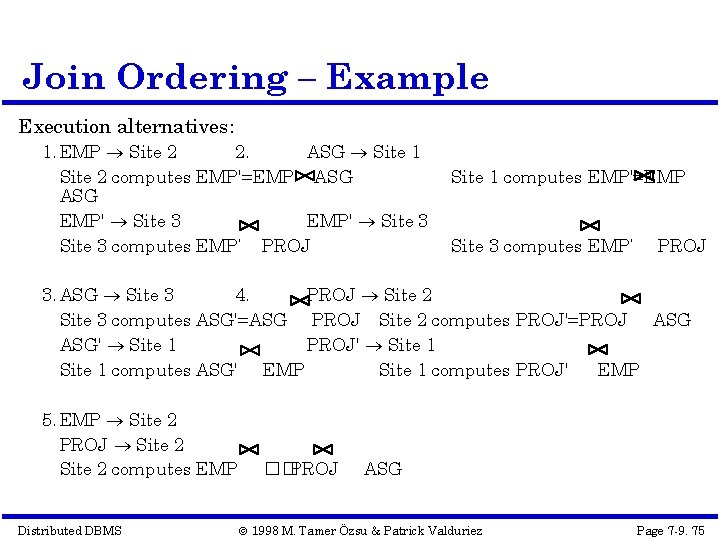

Join Ordering – Example

Execution alternatives:

1. EMP → Site 2; Site 2 computes EMP' = EMP ⋈ ASG; EMP' → Site 3; Site 3 computes EMP' ⋈ PROJ
2. ASG → Site 1; Site 1 computes EMP' = EMP ⋈ ASG; EMP' → Site 3; Site 3 computes EMP' ⋈ PROJ
3. ASG → Site 3; Site 3 computes ASG' = ASG ⋈ PROJ; ASG' → Site 1; Site 1 computes ASG' ⋈ EMP
4. PROJ → Site 2; Site 2 computes PROJ' = PROJ ⋈ ASG; PROJ' → Site 1; Site 1 computes PROJ' ⋈ EMP
5. EMP → Site 2; PROJ → Site 2; Site 2 computes EMP ⋈ PROJ ⋈ ASG

![Semijoin Algorithms](http://slidetodoc.com/presentation_image_h/fb7ede624f629c92462d613dee0cefff/image-76.jpg)

Semijoin Algorithms

Consider the join of two relations:

- R[A] (located at site 1)
- S[A] (located at site 2)

Alternatives:

1. Do the join R ⋈_A S
2. Perform one of the semijoin equivalents:
   R ⋈_A S ⇔ (R ⋉_A S) ⋈_A S ⇔ R ⋈_A (S ⋉_A R) ⇔ (R ⋉_A S) ⋈_A (S ⋉_A R)

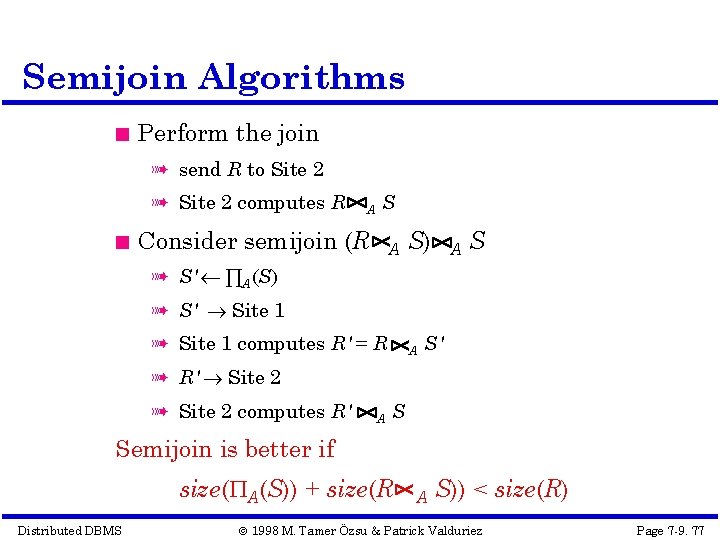

Semijoin Algorithms

- Perform the join
  - send R to Site 2
  - Site 2 computes R ⋈_A S
- Consider the semijoin (R ⋉_A S) ⋈_A S
  - S' ← Π_A(S)
  - S' → Site 1
  - Site 1 computes R' = R ⋉_A S'
  - R' → Site 2
  - Site 2 computes R' ⋈_A S

Semijoin is better if size(Π_A(S)) + size(R ⋉_A S) < size(R)
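The decision rule can be tried on toy relations. In this sketch the transfer size of a relation is simply the number of attribute values it contains, which is an assumption made to keep the example small; the relations R and S and the helper names are invented as well.

```python
def project(rows, attr):
    """Pi_attr with duplicate elimination, as a sorted list of values."""
    return sorted({row[attr] for row in rows})


def semijoin(rows, values, attr):
    """Rows whose join attribute appears among the shipped values."""
    wanted = set(values)
    return [row for row in rows if row[attr] in wanted]


def rows_size(rows):
    """Transfer size of a relation, counted in attribute values."""
    return sum(len(row) for row in rows)


def semijoin_is_better(r_rows, s_rows, attr):
    """size(Pi_A(S)) + size(R semijoin S) < size(R)"""
    shipped_values = project(s_rows, attr)
    reduced_r = semijoin(r_rows, shipped_values, attr)
    return len(shipped_values) + rows_size(reduced_r) < rows_size(r_rows)


# Hypothetical relations: R at site 1, S at site 2, join attribute A.
R = [{"A": a, "B": f"b{a}", "C": f"c{a}"} for a in range(1, 7)]
S = [{"A": 1, "D": "x"}, {"A": 2, "D": "y"}, {"A": 2, "D": "z"}]

print(semijoin_is_better(R, S, "A"))
```

Here only two of the six R tuples join with S, so shipping two A values and then two R tuples beats shipping all of R. If every R tuple had a match, the projection would be pure overhead and the plain join would win.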

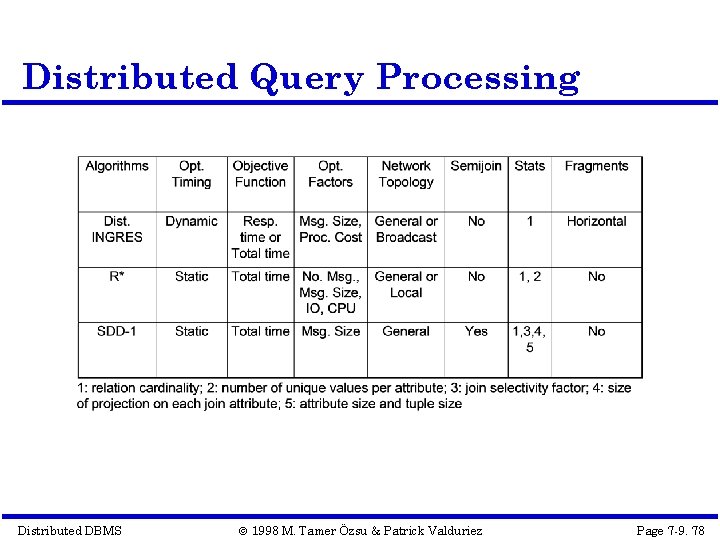

Distributed Query Processing

Distributed INGRES Algorithm

Same as the centralized version except:

- Movement of relations (and fragments) needs to be considered
- Optimization with respect to communication cost or response time is possible

R* Algorithm

- Cost function includes local processing as well as transmission
- Considers only joins
- Exhaustive search
- Compilation
- Published papers provide solutions to handling horizontal and vertical fragmentations but the implemented prototype does not

R* Algorithm

Performing joins:

- Ship whole
  - larger data transfer
  - smaller number of messages
  - better if relations are small
- Fetch as needed
  - number of messages = O(cardinality of external relation)
  - data transfer per message is minimal
  - better if relations are large and the selectivity is good

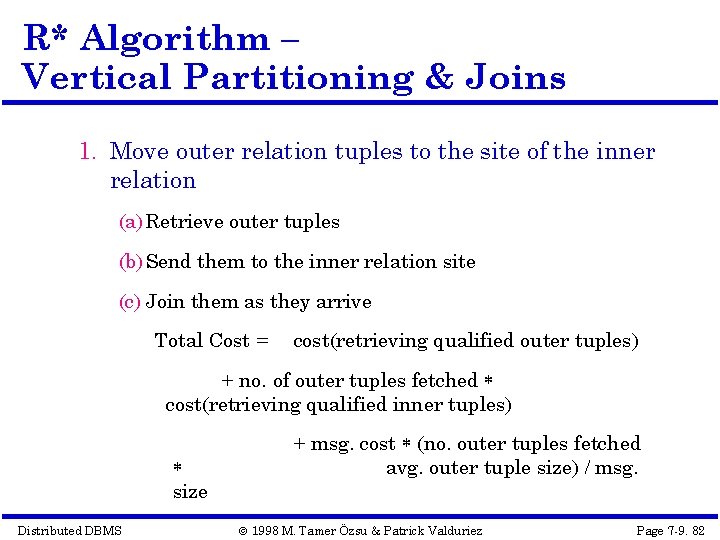

R* Algorithm – Vertical Partitioning & Joins

1. Move outer relation tuples to the site of the inner relation
   - (a) Retrieve outer tuples
   - (b) Send them to the inner relation site
   - (c) Join them as they arrive

    Total cost = cost(retrieving qualified outer tuples)
               + no. of outer tuples fetched × cost(retrieving qualified inner tuples)
               + msg. cost × (no. of outer tuples fetched × avg. outer tuple size) / msg. size

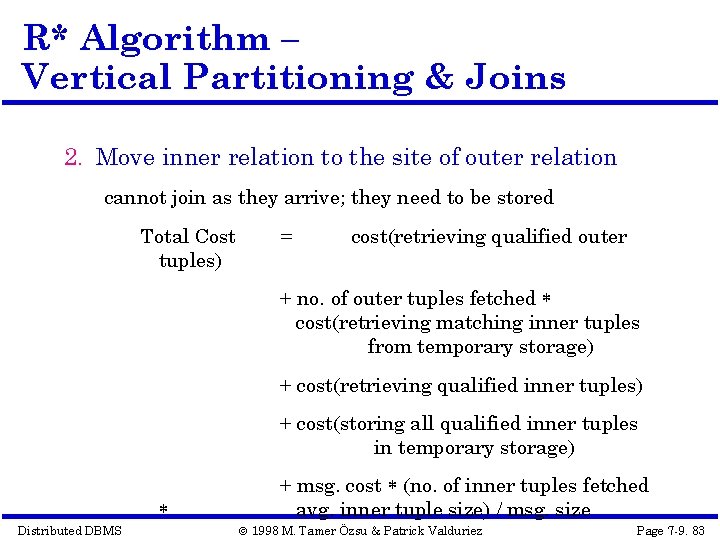

R* Algorithm – Vertical Partitioning & Joins

2. Move inner relation to the site of the outer relation
   - Inner tuples cannot be joined as they arrive; they need to be stored

Total cost = cost(retrieving qualified outer tuples) + no. of outer tuples fetched × cost(retrieving matching inner tuples from temporary storage) + cost(retrieving qualified inner tuples) + cost(storing all qualified inner tuples in temporary storage) + msg. cost × (no. of inner tuples fetched × avg. inner tuple size) / msg. size

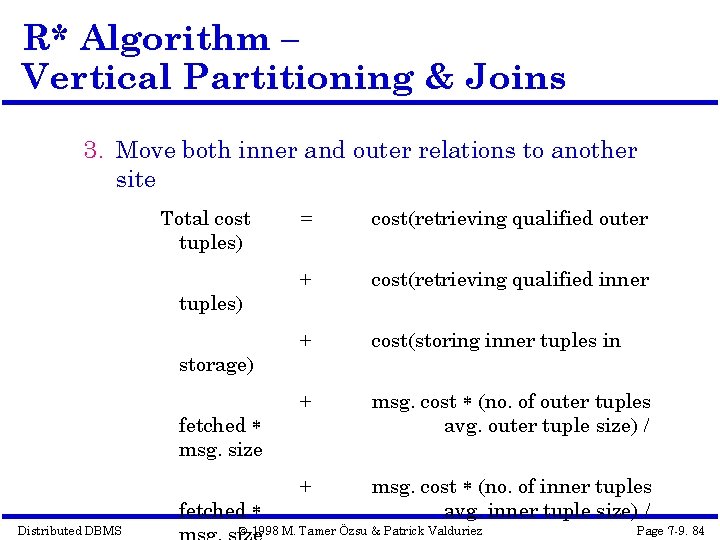

R* Algorithm – Vertical Partitioning & Joins

3. Move both inner and outer relations to another site

Total cost = cost(retrieving qualified outer tuples) + cost(retrieving qualified inner tuples) + cost(storing inner tuples in storage) + msg. cost × (no. of outer tuples fetched × avg. outer tuple size) / msg. size + msg. cost × (no. of inner tuples fetched × avg. inner tuple size) / msg. size

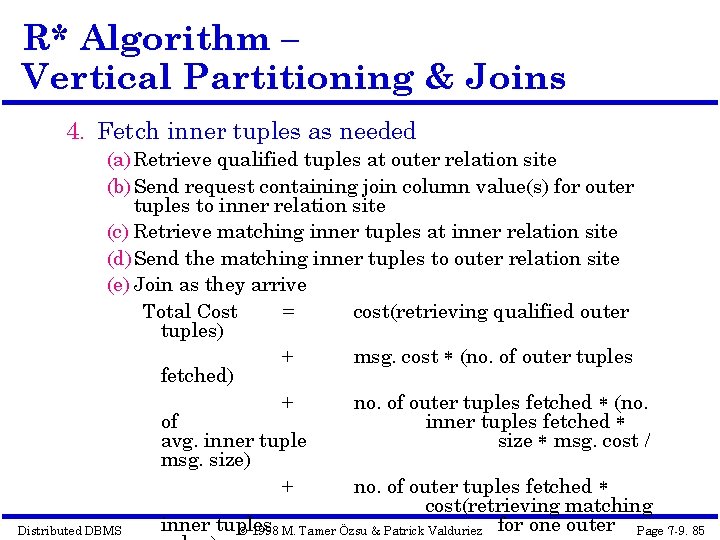

R* Algorithm – Vertical Partitioning & Joins

4. Fetch inner tuples as needed
   (a) Retrieve qualified tuples at outer relation site
   (b) Send request containing join column value(s) for outer tuples to inner relation site
   (c) Retrieve matching inner tuples at inner relation site
   (d) Send the matching inner tuples to outer relation site
   (e) Join as they arrive

Total cost = cost(retrieving qualified outer tuples) + msg. cost × (no. of outer tuples fetched) + no. of outer tuples fetched × (no. of inner tuples fetched × avg. inner tuple size × msg. cost / msg. size) + no. of outer tuples fetched × cost(retrieving matching inner tuples for one outer value)
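
To see how the cost formulas trade messages against data volume, the sketch below evaluates strategies 1, 2 and 4 for one set of made-up statistics. The `JoinStats` fields, the function names and every number are hypothetical; the inner-retrieval term of strategy 1 is treated here as a per-outer-tuple cost, which is an assumption about how the formula is meant to be read. Strategy 3 is left out for brevity.

```python
from dataclasses import dataclass

@dataclass
class JoinStats:
    # All fields are illustrative assumptions, not values from the slides.
    n_outer: int            # no. of outer tuples fetched
    n_inner: int            # no. of inner tuples fetched when shipping the inner relation
    matches_per_outer: int  # inner tuples fetched per request (fetch as needed)
    outer_size: int         # avg. outer tuple size
    inner_size: int         # avg. inner tuple size
    msg_size: int           # capacity of one message
    msg_cost: float         # cost of one message
    c_outer: float          # cost(retrieving qualified outer tuples)
    c_inner: float          # cost(retrieving qualified inner tuples), whole relation
    c_inner_match: float    # cost of retrieving matching inner tuples for one outer tuple
    c_store: float          # cost(storing qualified inner tuples in temporary storage)
    c_temp_match: float     # cost of one lookup in temporary storage

def ship_outer(s):
    """Strategy 1: move outer tuples to the inner relation's site."""
    return (s.c_outer + s.n_outer * s.c_inner_match
            + s.msg_cost * (s.n_outer * s.outer_size) / s.msg_size)

def ship_inner(s):
    """Strategy 2: move the inner relation to the outer relation's site."""
    return (s.c_outer + s.n_outer * s.c_temp_match + s.c_inner + s.c_store
            + s.msg_cost * (s.n_inner * s.inner_size) / s.msg_size)

def fetch_as_needed(s):
    """Strategy 4: one request per outer tuple, matching inner tuples sent back."""
    per_reply = s.matches_per_outer * s.inner_size * s.msg_cost / s.msg_size
    return (s.c_outer + s.msg_cost * s.n_outer
            + s.n_outer * per_reply + s.n_outer * s.c_inner_match)

stats = JoinStats(n_outer=100, n_inner=1000, matches_per_outer=2,
                  outer_size=50, inner_size=40, msg_size=1000, msg_cost=10,
                  c_outer=100, c_inner=1000, c_inner_match=2,
                  c_store=1000, c_temp_match=1)

costs = {f.__name__: f(stats) for f in (ship_outer, ship_inner, fetch_as_needed)}
cheapest = min(costs, key=costs.get)
```

With these particular numbers the outer relation is small, so shipping it whole wins; raising `n_outer` or lowering `msg_cost` shifts the balance.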

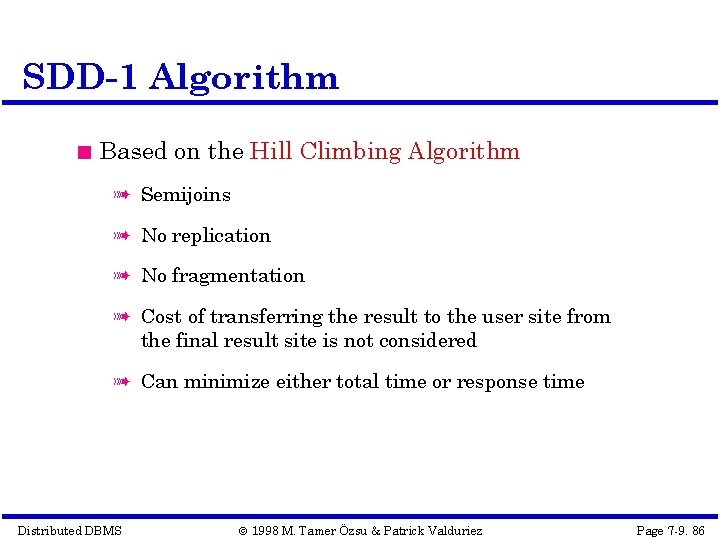

SDD-1 Algorithm

Based on the Hill Climbing Algorithm:
- Semijoins
- No replication
- No fragmentation
- Cost of transferring the result to the user site from the final result site is not considered
- Can minimize either total time or response time

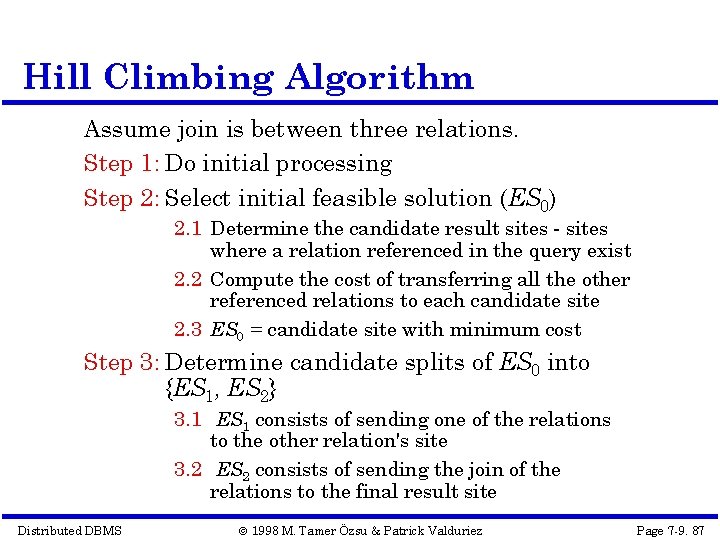

Hill Climbing Algorithm

Assume the join is between three relations.

- Step 1: Do initial processing
- Step 2: Select initial feasible solution (ES0)
  - 2.1 Determine the candidate result sites: sites where a relation referenced in the query exists
  - 2.2 Compute the cost of transferring all the other referenced relations to each candidate site
  - 2.3 ES0 = candidate site with minimum cost
- Step 3: Determine candidate splits of ES0 into {ES1, ES2}
  - 3.1 ES1 consists of sending one of the relations to the other relation's site
  - 3.2 ES2 consists of sending the join of the relations to the final result site

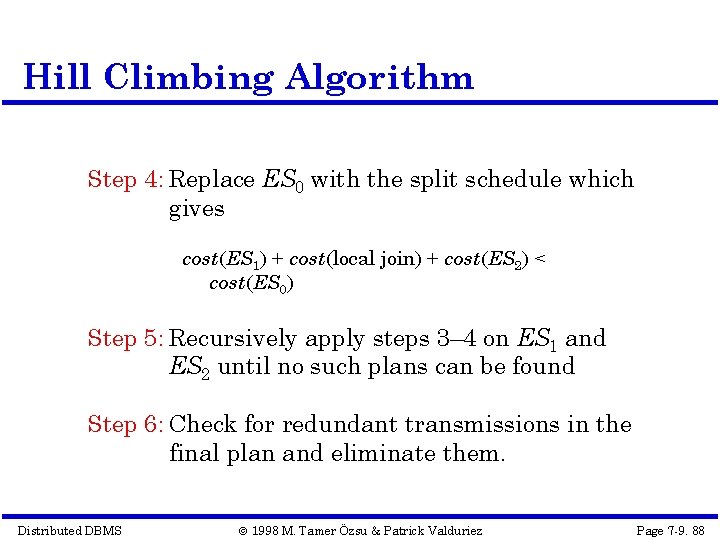

Hill Climbing Algorithm

- Step 4: Replace ES0 with the split schedule which gives cost(ES1) + cost(local join) + cost(ES2) < cost(ES0)
- Step 5: Recursively apply steps 3–4 on ES1 and ES2 until no such plans can be found
- Step 6: Check for redundant transmissions in the final plan and eliminate them

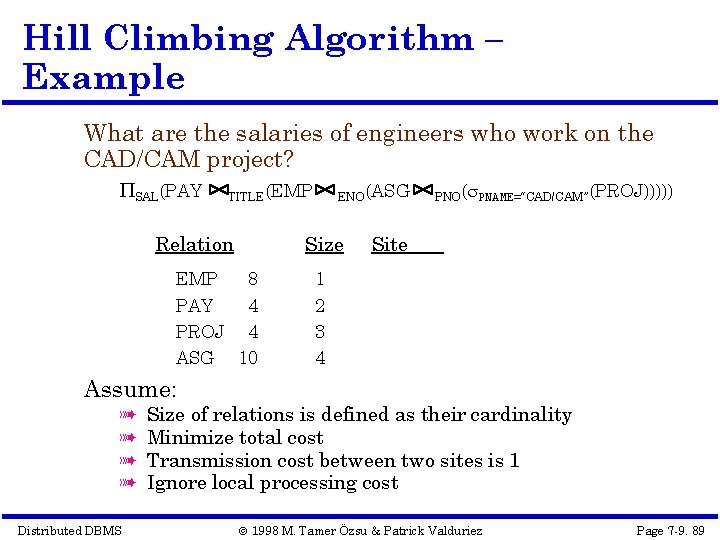

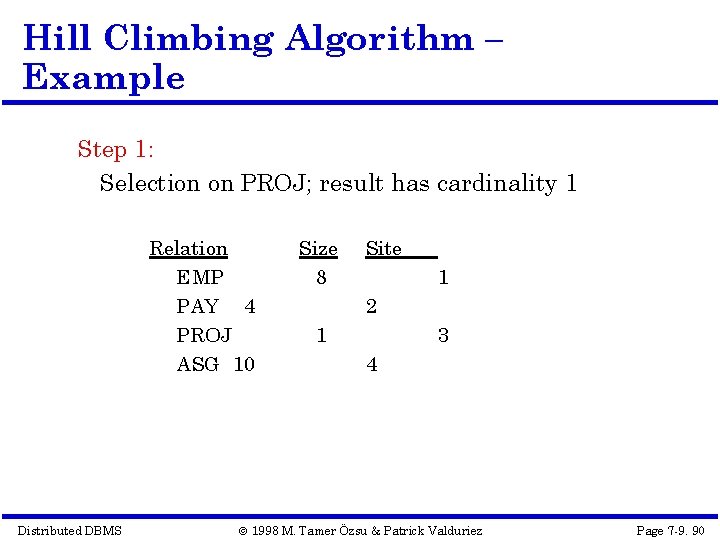

Hill Climbing Algorithm – Example

What are the salaries of engineers who work on the CAD/CAM project?

∏_SAL(PAY ⋈_TITLE (EMP ⋈_ENO (ASG ⋈_PNO (σ_PNAME="CAD/CAM"(PROJ)))))

| Relation | Size | Site |
|----------|------|------|
| EMP      | 8    | 1    |
| PAY      | 4    | 2    |
| PROJ     | 4    | 3    |
| ASG      | 10   | 4    |

Assume:
- Size of relations is defined as their cardinality
- Minimize total cost
- Transmission cost between two sites is 1
- Ignore local processing cost

Hill Climbing Algorithm – Example

Step 1: Selection on PROJ; result has cardinality 1

| Relation | Size | Site |
|----------|------|------|
| EMP      | 8    | 1    |
| PAY      | 4    | 2    |
| PROJ     | 1    | 3    |
| ASG      | 10   | 4    |

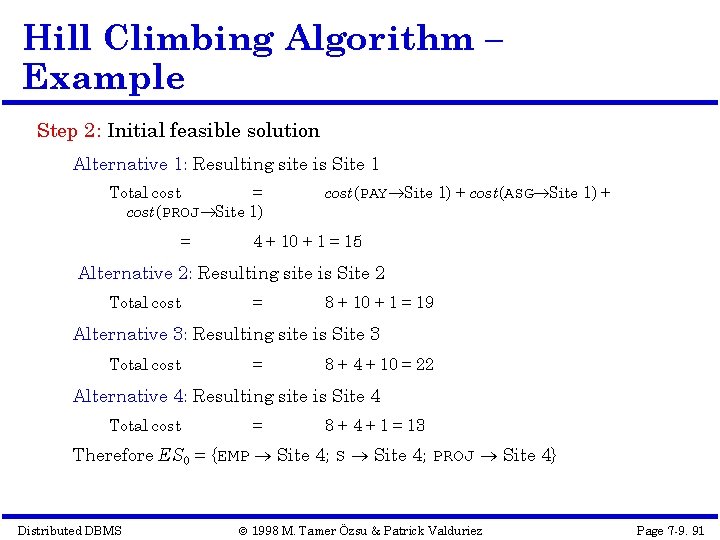

Hill Climbing Algorithm – Example

Step 2: Initial feasible solution
- Alternative 1: Resulting site is Site 1. Total cost = cost(PAY → Site 1) + cost(ASG → Site 1) + cost(PROJ → Site 1) = 4 + 10 + 1 = 15
- Alternative 2: Resulting site is Site 2. Total cost = 8 + 10 + 1 = 19
- Alternative 3: Resulting site is Site 3. Total cost = 8 + 4 + 10 = 22
- Alternative 4: Resulting site is Site 4. Total cost = 8 + 4 + 1 = 13

Therefore ES0 = {EMP → Site 4; PAY → Site 4; PROJ → Site 4}
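
Step 2 is a small enumeration, and a minimal sketch of it follows. The dictionary layout and the function name `initial_feasible_solution` are assumptions made for illustration; the sizes are those left after the selection on PROJ in Step 1.

```python
# Relation cardinalities after Step 1 (PROJ reduced to 1 tuple) and home sites.
sizes = {"EMP": 8, "PAY": 4, "PROJ": 1, "ASG": 10}
site_of = {"EMP": 1, "PAY": 2, "PROJ": 3, "ASG": 4}

def initial_feasible_solution(sizes, site_of, unit_cost=1):
    """Cost of shipping every other relation to each candidate result site."""
    costs = {}
    for site in sorted(set(site_of.values())):
        costs[site] = sum(unit_cost * sizes[r]
                          for r in sizes if site_of[r] != site)
    best = min(costs, key=costs.get)
    # ES0: the transfers needed to assemble everything at the cheapest site.
    es0 = [(r, best) for r in sizes if site_of[r] != best]
    return costs, best, es0

costs, best_site, es0 = initial_feasible_solution(sizes, site_of)
```

Here `costs` maps each candidate site to its total transfer cost and `es0` lists the (relation, destination) transfers of the initial schedule.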

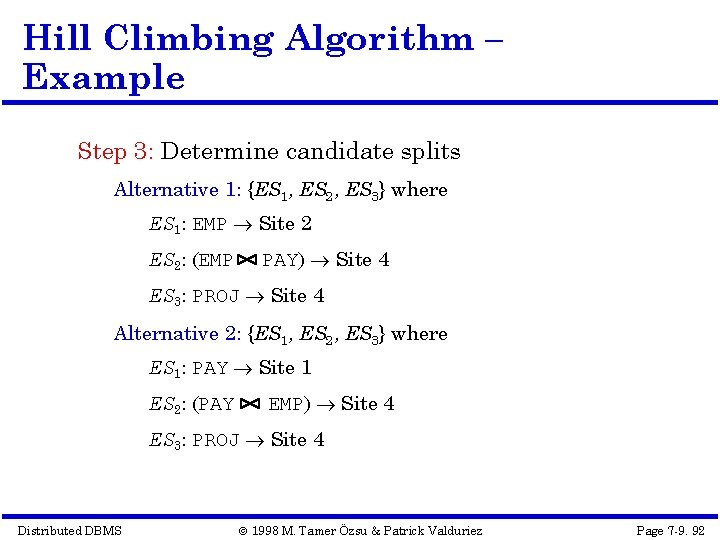

Hill Climbing Algorithm – Example

Step 3: Determine candidate splits
- Alternative 1: {ES1, ES2, ES3} where
  - ES1: EMP → Site 2
  - ES2: (EMP ⋈ PAY) → Site 4
  - ES3: PROJ → Site 4
- Alternative 2: {ES1, ES2, ES3} where
  - ES1: PAY → Site 1
  - ES2: (PAY ⋈ EMP) → Site 4
  - ES3: PROJ → Site 4

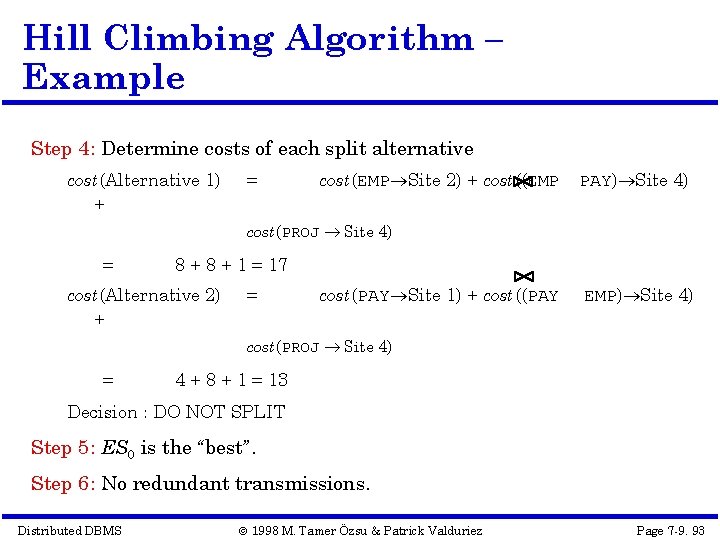

Hill Climbing Algorithm – Example

Step 4: Determine costs of each split alternative
- cost(Alternative 1) = cost(EMP → Site 2) + cost((EMP ⋈ PAY) → Site 4) + cost(PROJ → Site 4) = 8 + 8 + 1 = 17
- cost(Alternative 2) = cost(PAY → Site 1) + cost((PAY ⋈ EMP) → Site 4) + cost(PROJ → Site 4) = 4 + 8 + 1 = 13

Decision: DO NOT SPLIT, since neither alternative costs less than ES0 (13).

Step 5: ES0 is the "best".

Step 6: No redundant transmissions.
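
The split decision can be checked with a few lines of arithmetic. This is a sketch under the example's assumptions (unit transmission cost, local processing ignored); the variable names are hypothetical, and the join size of 8 is the value implied by the totals in the example.

```python
es0_cost = 13      # cost of the initial feasible solution ES0
join_size = 8      # assumed cardinality of EMP joined with PAY
proj_size = 1      # PROJ after the selection in Step 1

# Each split ships one relation, then the join result, then PROJ to Site 4.
split_costs = {
    "EMP -> Site 2": 8 + join_size + proj_size,
    "PAY -> Site 1": 4 + join_size + proj_size,
}

# A split replaces ES0 only if it is strictly cheaper.
improving = {name: c for name, c in split_costs.items() if c < es0_cost}
decision = "split" if improving else "do not split"
```

Because the second alternative only ties with ES0, the strict inequality leaves the initial schedule in place.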

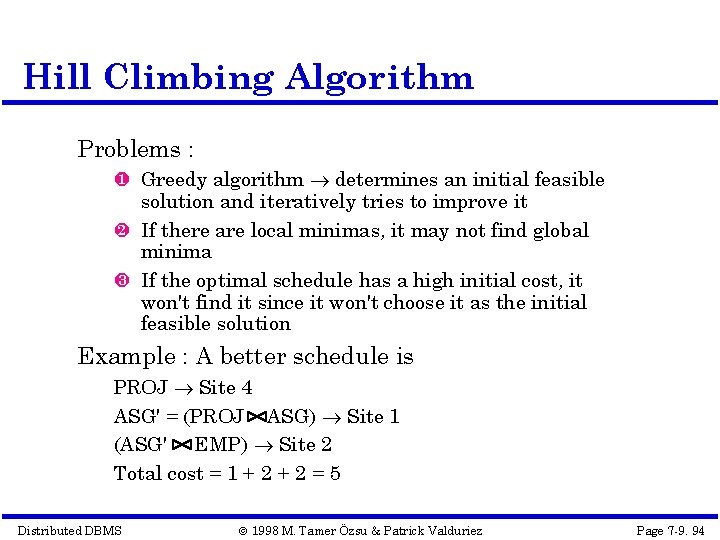

Hill Climbing Algorithm

Problems:
- It is a greedy algorithm: it determines an initial feasible solution and iteratively tries to improve it
- If there are local minima, it may not find the global minimum
- If the optimal schedule has a high initial cost, it won't find it, since it won't choose it as the initial feasible solution

Example: A better schedule is
- PROJ → Site 4
- ASG' = (PROJ ⋈ ASG) → Site 1
- (ASG' ⋈ EMP) → Site 2

Total cost = 1 + 2 + 2 = 5

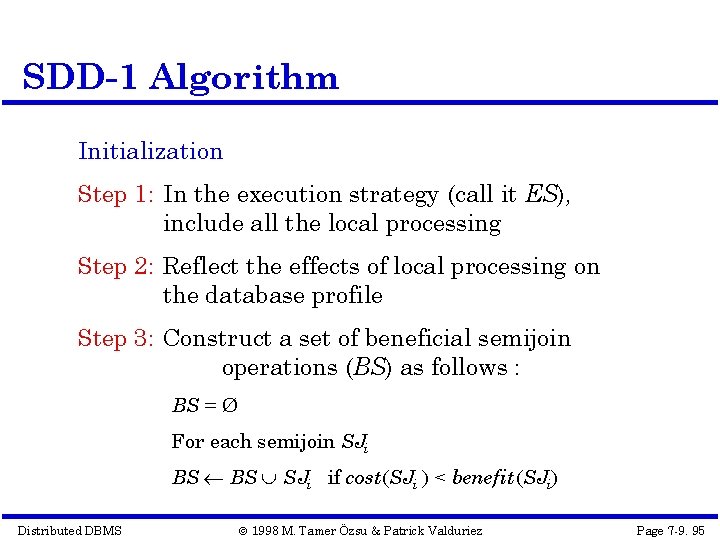

SDD-1 Algorithm

Initialization
- Step 1: In the execution strategy (call it ES), include all the local processing
- Step 2: Reflect the effects of local processing on the database profile
- Step 3: Construct a set of beneficial semijoin operations (BS) as follows:
  - BS = Ø
  - For each semijoin SJi: BS ← BS ∪ SJi if cost(SJi) < benefit(SJi)

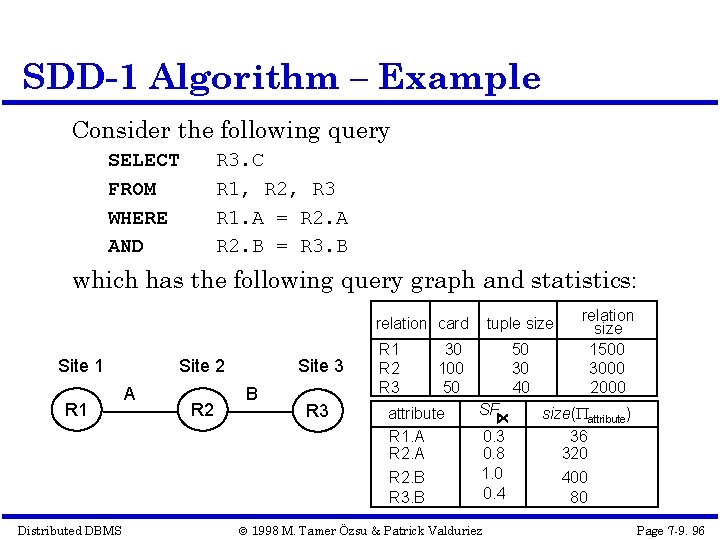

SDD-1 Algorithm – Example

Consider the following query:

SELECT R3.C FROM R1, R2, R3 WHERE R1.A = R2.A AND R2.B = R3.B

which has the following query graph and statistics:

R1 (Site 1) --A-- R2 (Site 2) --B-- R3 (Site 3)

| relation | card | tuple size | relation size |
|----------|------|------------|---------------|
| R1       | 30   | 50         | 1500          |
| R2       | 100  | 30         | 3000          |
| R3       | 50   | 40         | 2000          |

| attribute | SF  | size(∏attribute) |
|-----------|-----|------------------|
| R1.A      | 0.3 | 36               |
| R2.A      | 0.8 | 320              |
| R2.B      | 1.0 | 400              |
| R3.B      | 0.4 | 80               |

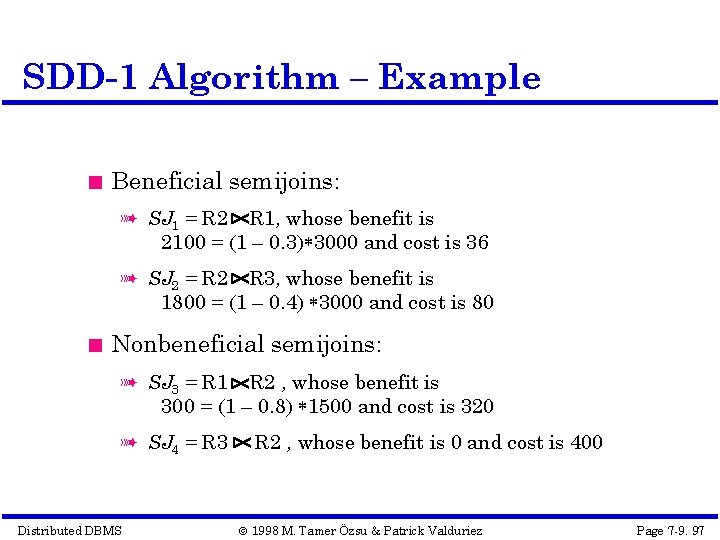

SDD-1 Algorithm – Example

Beneficial semijoins:
- SJ1 = R2 ⋉ R1, whose benefit is 2100 = (1 – 0.3) × 3000 and cost is 36
- SJ2 = R2 ⋉ R3, whose benefit is 1800 = (1 – 0.4) × 3000 and cost is 80

Nonbeneficial semijoins:
- SJ3 = R1 ⋉ R2, whose benefit is 300 = (1 – 0.8) × 1500 and cost is 320
- SJ4 = R3 ⋉ R2, whose benefit is 0 and cost is 400
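
The benefit and cost figures above follow one pattern: the benefit of reducing Ri by Rj is (1 – SF of Rj's join attribute) × size(Ri), and the cost is the size of the projected join attribute of Rj. A minimal sketch, assuming that reading and using hypothetical names (`rel_size`, `attr_stats`, `evaluate`):

```python
# Relation sizes and per-attribute (selectivity factor, projection size),
# taken from the example; R2.A is the pair implied by SJ3 (SF 0.8, size 320).
rel_size = {"R1": 1500, "R2": 3000, "R3": 2000}
attr_stats = {
    ("R1", "A"): (0.3, 36),
    ("R2", "A"): (0.8, 320),
    ("R2", "B"): (1.0, 400),
    ("R3", "B"): (0.4, 80),
}

# Candidate semijoins as (reduced relation, reducing relation, join attribute).
candidates = {
    "SJ1": ("R2", "R1", "A"),
    "SJ2": ("R2", "R3", "B"),
    "SJ3": ("R1", "R2", "A"),
    "SJ4": ("R3", "R2", "B"),
}

def evaluate(reduced, reducer, attr):
    """Return (benefit, cost) of reducing `reduced` by `reducer` on attr."""
    sf, proj_size = attr_stats[(reducer, attr)]
    benefit = (1 - sf) * rel_size[reduced]   # data no longer shipped
    cost = proj_size                         # shipping the projected attribute
    return benefit, cost

profile = {name: evaluate(*sj) for name, sj in candidates.items()}

# BS: the semijoins whose cost is strictly below their benefit.
BS = [name for name, (benefit, cost) in profile.items() if cost < benefit]
```

Floating-point arithmetic may return values such as 299.99999999999994 for the benefit of SJ3, so comparisons against the slide figures should allow a small tolerance.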

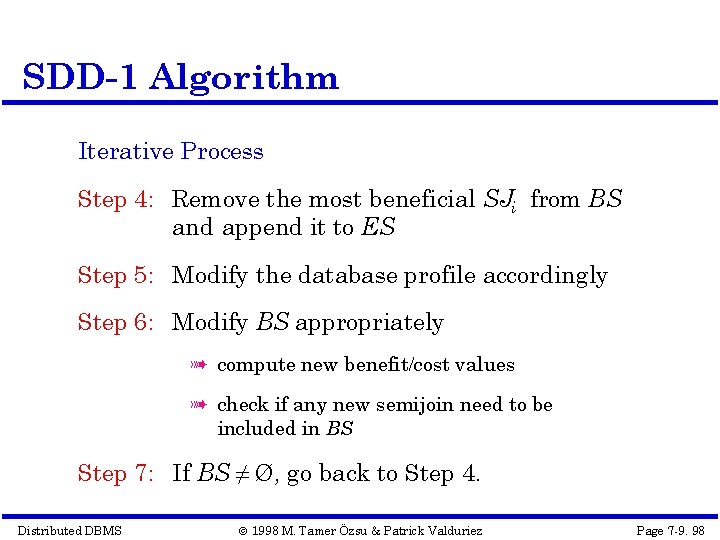

SDD-1 Algorithm

Iterative Process
- Step 4: Remove the most beneficial SJi from BS and append it to ES
- Step 5: Modify the database profile accordingly
- Step 6: Modify BS appropriately
  - compute new benefit/cost values
  - check if any new semijoin needs to be included in BS
- Step 7: If BS ≠ Ø, go back to Step 4

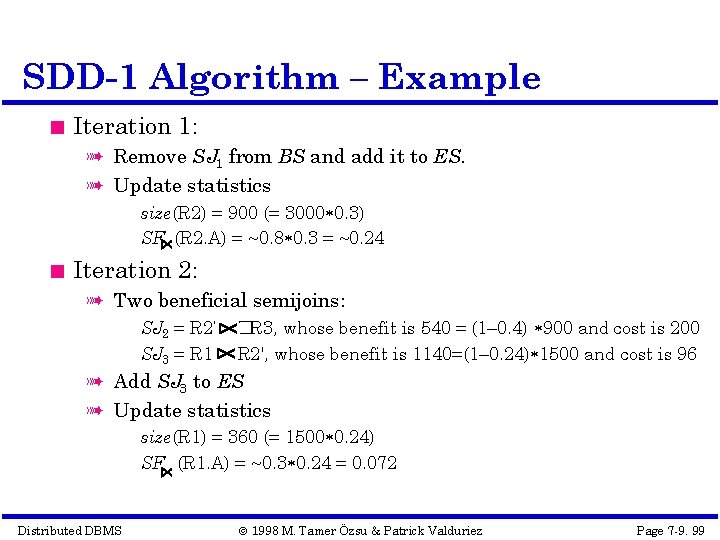

SDD-1 Algorithm – Example

Iteration 1:
- Remove SJ1 from BS and add it to ES.
- Update statistics:
  - size(R2) = 900 (= 3000 × 0.3)
  - SF(R2.A) = ~0.8 × 0.3 = ~0.24

Iteration 2:
- Two beneficial semijoins:
  - SJ2 = R2' ⋉ R3, whose benefit is 540 = (1 – 0.4) × 900 and cost is 200
  - SJ3 = R1 ⋉ R2', whose benefit is 1140 = (1 – 0.24) × 1500 and cost is 96
- Add SJ3 to ES
- Update statistics:
  - size(R1) = 360 (= 1500 × 0.24)
  - SF(R1.A) = ~0.3 × 0.24 = 0.072

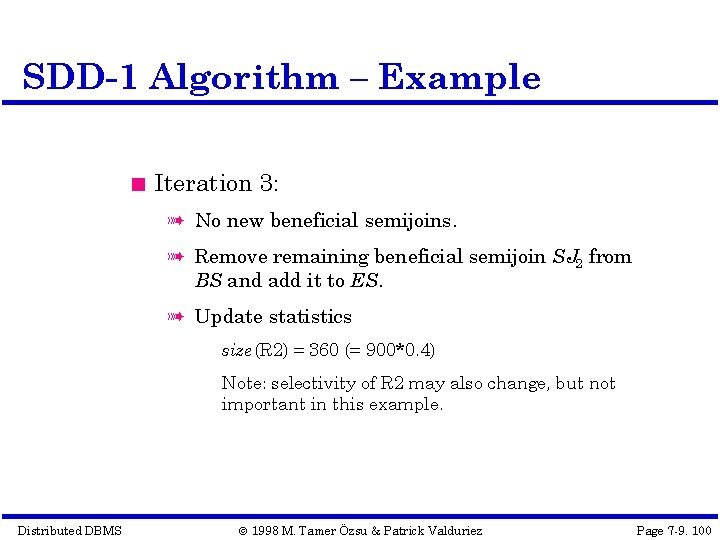

SDD-1 Algorithm – Example

Iteration 3:
- No new beneficial semijoins.
- Remove the remaining beneficial semijoin SJ2 from BS and add it to ES.
- Update statistics:
  - size(R2) = 360 (= 900 × 0.4)

Note: the selectivity of R2 may also change, but this is not important in this example.

SDD-1 Algorithm

Assembly Site Selection
- Step 8: Find the site where the largest amount of data resides and select it as the assembly site

Example: Amount of data stored at sites:
- Site 1: 360
- Site 2: 360
- Site 3: 2000

Therefore, Site 3 will be chosen as the assembly site.

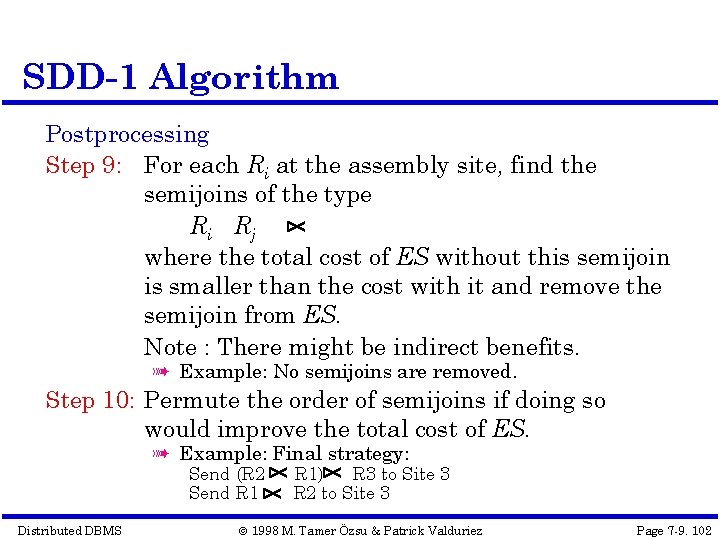

SDD-1 Algorithm

Postprocessing
- Step 9: For each Ri at the assembly site, find the semijoins of the type Ri ⋉ Rj where the total cost of ES without this semijoin is smaller than the cost with it, and remove the semijoin from ES. Note: there might be indirect benefits.
  - Example: No semijoins are removed.
- Step 10: Permute the order of semijoins if doing so would improve the total cost of ES.
  - Example: Final strategy:
    - Send (R2 ⋉ R1) ⋉ R3 to Site 3
    - Send R1 ⋉ R2 to Site 3

Step 4 – Local Optimization

- Input: Best global execution schedule
- Select the best access path
- Use the centralized optimization techniques

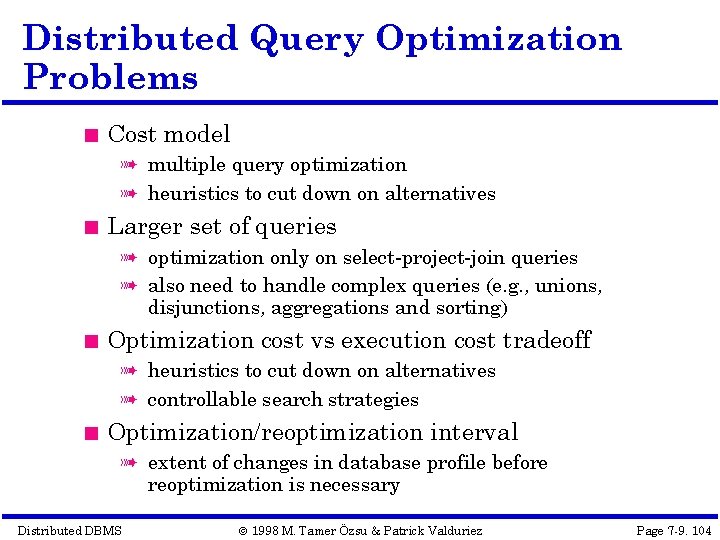

Distributed Query Optimization Problems

- Cost model
  - multiple query optimization
  - heuristics to cut down on alternatives
- Larger set of queries
  - optimization only on select-project-join queries
  - also need to handle complex queries (e.g., unions, disjunctions, aggregations and sorting)
- Optimization cost vs. execution cost tradeoff
  - heuristics to cut down on alternatives
  - controllable search strategies
- Optimization/reoptimization interval
  - extent of changes in database profile before reoptimization is necessary

- Slides: 104