
Outline for Today’s Lecture
Administrative:
– Welcome back!
– Programming assignment 2 due date extended (as requested)
Objective:
– File caching
– NTFS
– Distributed File Systems

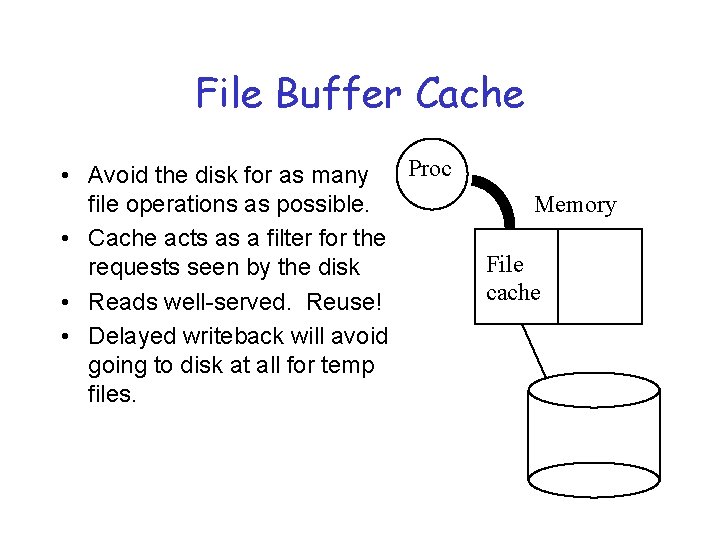

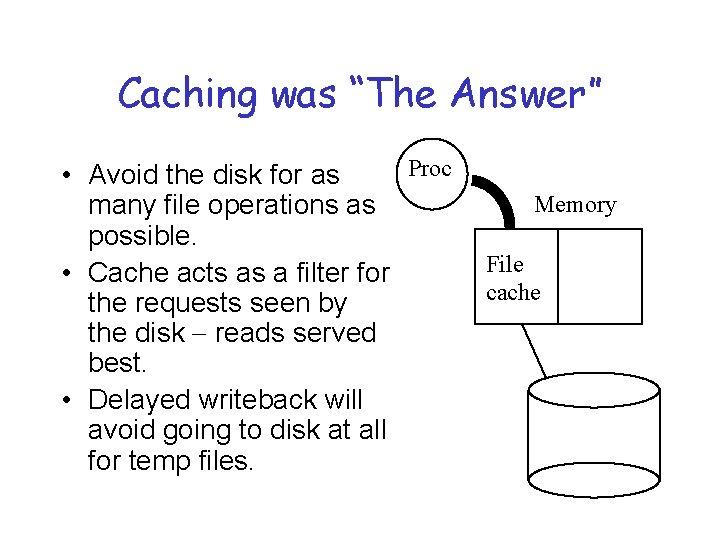

File Buffer Cache
• Avoid the disk for as many file operations as possible.
• The cache acts as a filter for the requests seen by the disk.
• Reads are well served: reuse!
• Delayed write-back can avoid going to disk at all for temporary files.

Why Are File Caches Effective?
1. Locality of reference: storage accesses come in clumps.
– Spatial locality: if a process accesses data in block B, it is likely to reference other nearby data soon (e.g., the remainder of block B). Example: reading or writing a file one byte at a time.
– Temporal locality: recently accessed data is likely to be used again.
2. Read-ahead: if we can predict which blocks will be needed soon, we can prefetch them into the cache. Most files are accessed sequentially.
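The read-ahead idea can be sketched as a block cache that watches for sequential access and prefetches the next few blocks. This is a minimal illustration, not any real kernel's policy: the `window` size, the "next block equals last block + 1" detection rule, and the `file_len` parameter are all assumptions made for the example.

```python
class ReadAheadCache:
    """Block cache that prefetches ahead when it detects sequential access.

    Sketch only: the `window` size and the "next block == last block + 1"
    detection rule are illustrative assumptions, not a real kernel's heuristic.
    """

    def __init__(self, window=4):
        self.window = window
        self.cached = set()       # (file_id, block_no) pairs held in memory
        self.last_block = {}      # file_id -> last block number read
        self.disk_reads = 0       # blocks fetched from disk (demand + prefetch)
        self.misses = 0           # demand reads that had to wait for the disk

    def _fetch(self, file_id, block_no):
        if (file_id, block_no) not in self.cached:
            self.cached.add((file_id, block_no))
            self.disk_reads += 1

    def read(self, file_id, block_no, file_len):
        if (file_id, block_no) not in self.cached:
            self.misses += 1
            self._fetch(file_id, block_no)
        # Sequential pattern detected: prefetch the next `window` blocks (not past EOF).
        if self.last_block.get(file_id) == block_no - 1:
            for b in range(block_no + 1, min(block_no + 1 + self.window, file_len)):
                self._fetch(file_id, b)
        self.last_block[file_id] = block_no
```

Reading a 10-block file front to back with `window=4` incurs only two demand misses (blocks 0 and 1); every later block is already in memory when it is requested. Random access gets no benefit, and a wrong guess costs extra disk reads.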

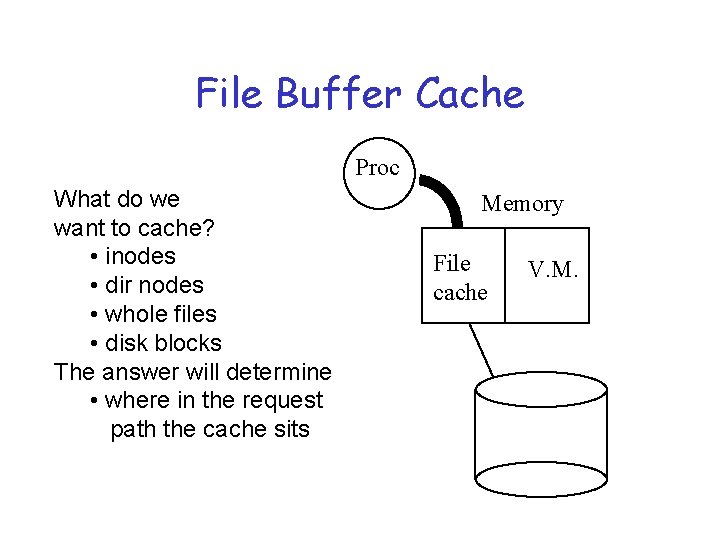

File Buffer Cache
What do we want to cache?
• inodes
• directory nodes
• whole files
• disk blocks
The answer determines where in the request path the cache sits.

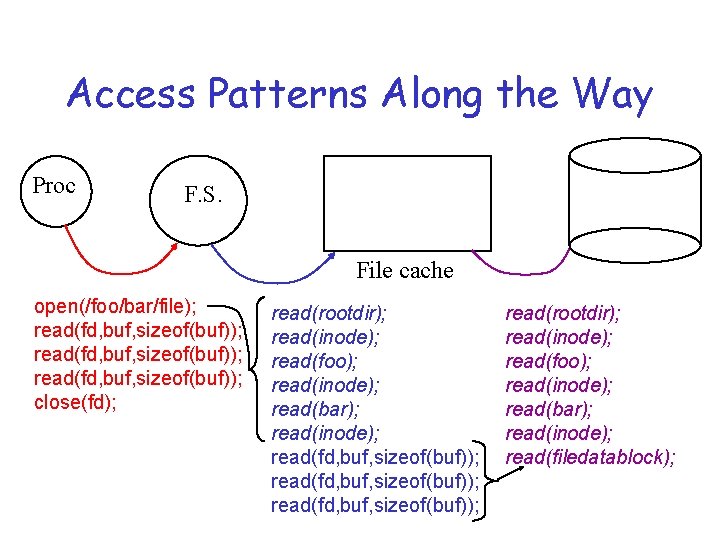

Access Patterns Along the Way
What the process issues:
open(/foo/bar/file); read(fd, buf, sizeof(buf)); close(fd);
What the file system then requests from the file cache:
open(/foo/bar/file) → read(rootdir); read(inode); read(foo); read(inode); read(bar); read(inode);
read(fd, buf, sizeof(buf)) → read(filedatablock);

File Access Patterns
• What do users seem to want from the file abstraction?
• What do these usage patterns mean for file structure and implementation decisions?
– What operations should be optimized first?
– How should files be structured?
– Is there temporal locality in file usage?
– How long do files really live?

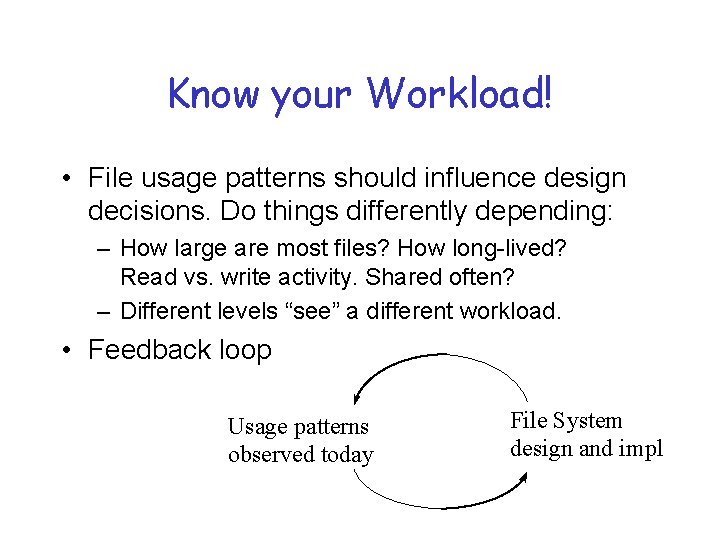

Know your Workload!
• File usage patterns should influence design decisions. Do things differently depending on:
– How large are most files? How long-lived are they?
– Read vs. write activity.
– Are files shared often?
– Different levels “see” a different workload.
• Feedback loop: usage patterns observed today → file system design and implementation → future usage patterns.

What to Cache? Locality in File Access Patterns (UNIX Workloads)
• Most files are small (often fitting into one disk block), although most bytes are transferred from longer files.
• Accesses tend to be sequential and to cover the whole file (100%).
– Spatial locality
– What happens when we cache a huge file?
• Most opens are for read mode; most bytes are transferred by read operations.

What to Cache? Locality in File Access Patterns (continued)
• There is significant reuse (re-opens): most opens go to files that are opened repeatedly and in quick succession. Directory nodes and executables also exhibit good temporal locality.
– Looks good for caching!
• Temp files are a significant part of file system activity in UNIX, with very limited reuse and short lifetimes (less than a minute).
• Long absolute pathnames are common in file opens.
– Name resolution can dominate performance. Why?

What to do about long paths?
• Make long lookups cheaper: cluster inodes and data on disk to make each component resolution step somewhat cheaper.
– Immediate files: metadata and first block of data co-located.
• Collapse prefixes of paths: hash table.
– Prefix table
• “Cache it”: in this case, directory info.
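The "cache it" option can be sketched as a name cache keyed on (directory inode, component name). In this hypothetical model, each uncached component costs two simulated disk reads (the directory's data block, then the child's inode), mirroring the read(foo); read(inode) pattern of an open. The `directories` layout and the read accounting are assumptions for illustration.

```python
class PathResolver:
    """Resolve pathnames one component at a time, with an optional name cache.

    Hypothetical sketch: `directories` maps a directory's inode number to its
    entries {name: child_inode}; each uncached component costs two simulated
    disk reads (the directory's data block, then the child's inode).
    """

    ROOT_INO = 2  # root inode number used by FFS/ext2-style file systems

    def __init__(self, directories, use_cache=True):
        self.directories = directories
        self.use_cache = use_cache
        self.name_cache = {}   # (dir_ino, name) -> child_ino
        self.disk_reads = 0

    def lookup(self, dir_ino, name):
        key = (dir_ino, name)
        if self.use_cache and key in self.name_cache:
            return self.name_cache[key]
        self.disk_reads += 2                     # read dir block + child inode
        child = self.directories[dir_ino][name]  # KeyError if the name is missing
        if self.use_cache:
            self.name_cache[key] = child
        return child

    def resolve(self, path):
        ino = self.ROOT_INO
        for component in path.strip("/").split("/"):
            ino = self.lookup(ino, component)
        return ino
```

With the cache, a second open of /foo/bar/file costs no directory reads at all; without it, every open repeats the full walk.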

Issues for Implementing an I/O Cache Structure
Goal: maintain K slots in memory as a cache over a collection of m items on secondary storage (K << m).
1. What happens on the first access to each item? Fetch it into some slot of the cache, use it, and leave it there to speed up access if it is needed again later.
2. How to determine if an item is resident in the cache? Maintain a directory of items in the cache: a hash table. Hash on a unique identifier (tag) for the item (fully associative).
3. How to find a slot for an item fetched into the cache? Choose an unused slot, or select an item to replace according to some policy, and evict it from the cache, freeing its slot.
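The three issues map directly onto a small K-slot cache. This is a sketch under stated assumptions: `fetch` stands in for a hypothetical read from secondary storage, and the victim is simply the least recently used slot (the choice of policy is discussed further on).

```python
class SlotCache:
    """K-slot cache over a much larger backing store (K << m).

    Sketch only: `fetch` stands in for a hypothetical read from secondary
    storage, and the victim is simply the least recently used slot.
    """

    def __init__(self, k, fetch):
        self.k = k
        self.fetch = fetch
        self.slots = [None] * k      # slot i holds (tag, value) or None
        self.directory = {}          # tag -> slot index (hash table, fully associative)
        self.last_use = [0] * k
        self.clock = 0
        self.misses = 0

    def get(self, tag):
        self.clock += 1
        slot = self.directory.get(tag)          # issue 2: is the item resident?
        if slot is None:                        # issue 1: first access, fetch into a slot
            self.misses += 1
            slot = self._find_slot()
            self.slots[slot] = (tag, self.fetch(tag))
            self.directory[tag] = slot
        self.last_use[slot] = self.clock
        return self.slots[slot][1]

    def _find_slot(self):                       # issue 3: unused slot, else evict a victim
        for i, entry in enumerate(self.slots):
            if entry is None:
                return i
        victim = min(range(self.k), key=lambda i: self.last_use[i])
        del self.directory[self.slots[victim][0]]
        return victim
```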

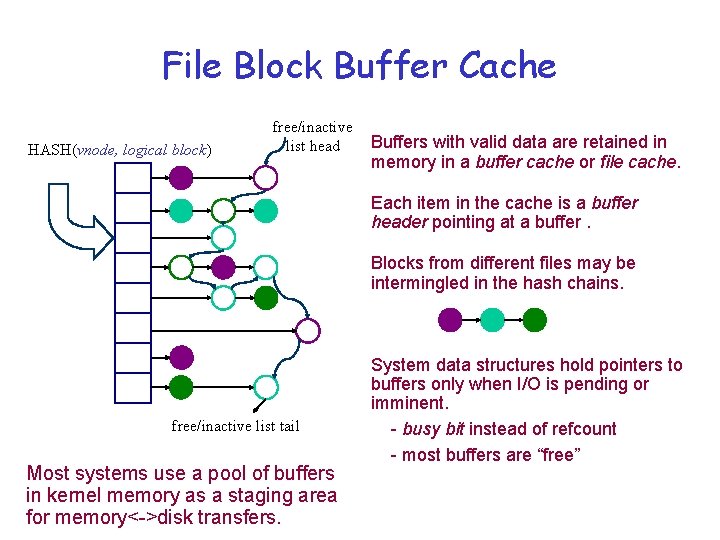

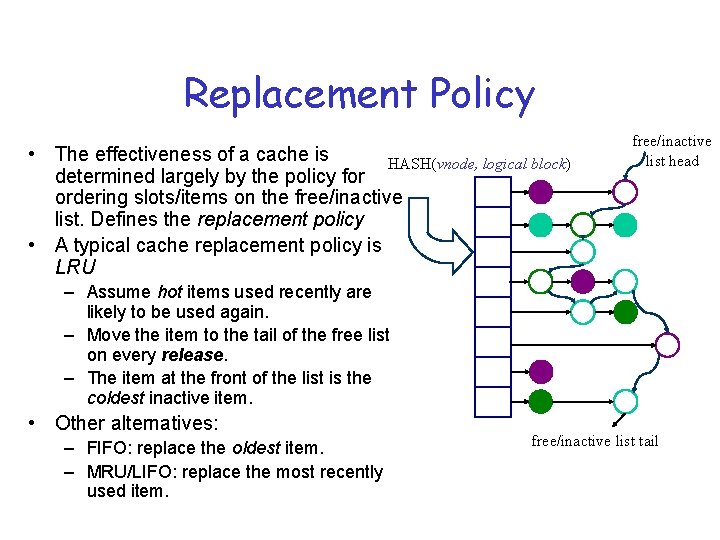

File Block Buffer Cache
(figure: buffer headers hashed on HASH(vnode, logical block), plus a free/inactive list running from head to tail)
• Buffers with valid data are retained in memory in a buffer cache or file cache.
• Each item in the cache is a buffer header pointing at a buffer.
• Blocks from different files may be intermingled in the hash chains.
• Most systems use a pool of buffers in kernel memory as a staging area for memory<->disk transfers.
• System data structures hold pointers to buffers only when I/O is pending or imminent.
– A busy bit is used instead of a reference count.
– Most buffers are “free”.

Handling Updates in the File Cache
1. Blocks may be modified in memory once they have been brought into the cache. Modified blocks are dirty and must (eventually) be written back. Write-back vs. write-through (104?)
2. Once a block is modified in memory, the write back to disk need not be immediate (synchronous).
– Delayed writes absorb many small updates with one disk write. How long should the system hold dirty data in memory?
– Asynchronous writes allow overlapping of computation and disk update activity (write-behind): issue the write for block n+1 while the transfer of block n is in progress.
– Thus file caches can also improve performance for writes.
3. Knowing data gets to disk: you can’t trust a “write” syscall to put data on disk; force it with fsync.
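The effect of delayed writes can be made concrete by counting disk writes for a stream of small updates under each policy. In this sketch, `flush_every` is a hypothetical stand-in for a periodic update daemon, and the final flush models an fsync or close.

```python
def disk_writes(updates, policy, flush_every=None):
    """Count disk writes for a stream of block updates.

    updates: sequence of block numbers being modified (e.g., small appends).
    policy: "write-through" writes every update immediately;
            "write-back" marks blocks dirty and writes each dirty block once
            per flush. `flush_every` (updates between flushes) is a
            hypothetical stand-in for a periodic update daemon.
    Deletion is not modeled: a temp file removed before the flush would
    simply drop out of `dirty` and cost no writes at all.
    """
    if policy == "write-through":
        return len(updates)
    writes, dirty = 0, set()
    for i, block in enumerate(updates, start=1):
        dirty.add(block)
        if flush_every and i % flush_every == 0:
            writes += len(dirty)
            dirty.clear()
    return writes + len(dirty)   # final flush (e.g., fsync or close)
```

One hundred one-byte appends to the same block cost 100 disk writes with write-through but a single write with write-back; the more often the daemon flushes, the less absorption there is, which is the trade-off behind "how long should the system hold dirty data?".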

Mechanism for Cache Eviction/Replacement
• Typical approach: maintain an ordered free/inactive list of slots that are candidates for reuse.
– Busy items in active use are not on the list.
• E.g., some in-memory data structure holds a pointer to the item.
• E.g., an I/O operation is in progress on the item.
– The best candidates are slots that do not contain valid items.
• Initially all slots are free, and they may become free again as items are destroyed (e.g., as files are removed).
– Other slots are listed in order of value of the items they contain.
• These slots contain items that are valid but inactive: they are held in memory only in the hope that they will be accessed again later.
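The mechanism can be sketched as a hash directory plus an ordered free list, where busy buffers are removed from the list and invalid buffers are placed at its head. The names `getblk`/`brelse` follow classic UNIX buffer-cache terminology, but this is not any specific kernel's code: `read_block` is a hypothetical disk read, and where a real kernel would sleep on a busy buffer, this sketch just raises an error.

```python
from collections import OrderedDict

class BufferCache:
    """Buffer cache with a hash directory and an ordered free/inactive list.

    Sketch of the mechanism only. `read_block(vnode, lblk)` is a hypothetical
    disk read; a real kernel would sleep instead of raising on a busy buffer.
    """

    def __init__(self, nbufs, read_block):
        self.read_block = read_block
        self.hash = {}                 # (vnode, lblk) -> buffer
        self.free = OrderedDict()      # buffer id -> buffer; head = best reuse candidate
        for i in range(nbufs):         # initially every buffer is free and invalid
            self.free[i] = {"id": i, "key": None, "data": None, "busy": False}

    def getblk(self, vnode, lblk):
        key = (vnode, lblk)
        buf = self.hash.get(key)
        if buf is not None:
            if buf["busy"]:
                raise RuntimeError("buffer busy")
            del self.free[buf["id"]]   # busy buffers leave the free list
        else:
            if not self.free:
                raise RuntimeError("no free buffers")
            _, buf = self.free.popitem(last=False)   # take the head of the list
            if buf["key"] is not None:
                del self.hash[buf["key"]]            # evict the old identity
            buf["key"], buf["data"] = key, self.read_block(vnode, lblk)
            self.hash[key] = buf
        buf["busy"] = True
        return buf

    def brelse(self, buf):
        """Release to the tail: the head stays the coldest inactive buffer (LRU)."""
        buf["busy"] = False
        self.free[buf["id"]] = buf

    def invalidate(self, buf):
        """Block freed (e.g., file removed): buffer becomes the best candidate."""
        if buf["key"] is not None:
            del self.hash[buf["key"]]
        buf["key"], buf["data"], buf["busy"] = None, None, False
        self.free[buf["id"]] = buf
        self.free.move_to_end(buf["id"], last=False)   # head of the free list
```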

Replacement Policy
• The effectiveness of a cache is determined largely by the policy for ordering slots/items on the free/inactive list; this ordering defines the replacement policy.
• A typical cache replacement policy is LRU.
– Assume hot items used recently are likely to be used again.
– Move the item to the tail of the free list on every release.
– The item at the front (head) of the list is the coldest inactive item.
• Other alternatives:
– FIFO: replace the oldest item.
– MRU/LIFO: replace the most recently used item.
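A quick way to compare these policies is to replay a block trace through a k-slot cache and count hits. This sketch ignores busy buffers and dirty data; the two traces are made up for illustration.

```python
from collections import OrderedDict

def count_hits(trace, k, policy):
    """Replay a block trace through a k-slot cache and count hits.

    policy: "LRU", "FIFO", or "MRU". Sketch for comparing replacement
    policies; real caches also track busy buffers, dirty data, etc.
    """
    order = OrderedDict()   # resident blocks; first = oldest (FIFO) or least recent (LRU)
    hits = 0
    for block in trace:
        if block in order:
            hits += 1
            if policy in ("LRU", "MRU"):
                order.move_to_end(block)          # record recency of use
            continue
        if len(order) == k:
            if policy == "MRU":
                order.popitem(last=True)          # evict the most recently used
            else:
                order.popitem(last=False)         # LRU: least recent; FIFO: oldest
        order[block] = True
    return hits
```

LRU usually wins when hot items recur, but a loop over k+1 blocks (like a sequential scan of a file slightly larger than the cache) defeats it completely, which is one case where MRU does better:

```python
loop = [0, 1, 2, 3] * 3
count_hits(loop, 3, "LRU")   # 0 hits: each block is evicted just before reuse
count_hits(loop, 3, "MRU")   # 6 hits
```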

Viewing Memory as a Unified I/O Cache
A key role of the I/O system is to manage the page/block cache for performance and reliability:
• tracking cache contents and managing page/block sharing
• choreographing movement to/from external storage
• balancing competing uses of memory
Modern systems attempt to balance memory usage between the VM system and the file cache:
– Grow the file cache for file-intensive workloads.
– Grow the VM page cache for memory-intensive workloads.
– Support a consistent view of files across different styles of access.
This is the unified buffer cache.

Synchronization Problems for a Cache
1. What if two processes try to get the same block concurrently, and the block is not resident?
2. What if a process requests to write block A while a put is already in progress on block A?
3. What if a get must replace a dirty block A in order to allocate a buffer to fetch block B? This will happen if the block/buffer at the head of the free list is dirty. What if another process requests to get A during the put?
4. How to handle read/write requests on shared files atomically? Unix guarantees that a read will not return the partial result of a concurrent write, and that concurrent writes do not interleave.

Linux Page Cache
• The page cache is the disk cache for all page-based I/O; it subsumes the file buffer cache.
– All page I/O flows through the page cache.
• pdflush daemons write back any dirty pages/buffers to disk:
– When free memory falls below a threshold, a daemon is woken to reclaim free memory.
• It writes back until a specified number of pages has been written and free memory is above the threshold.
– Periodically, on timer expiration, so that old dirty data does not go unwritten.
• It writes all pages older than a specified limit.

Layout

Layout on Disk
• Can address both seek and rotational latency
• Cluster related things together (e.g., an inode and its data, inodes in the same directory (ls command), data blocks of a multiblock file, files in the same directory)
• Sub-block allocation to reduce fragmentation for small files
• Log-Structured File Systems

File Structure Implementation: Mapping File -> Block
• Contiguous: 1 block pointer; causes fragmentation; growth is a problem.
• Linked: each block points to the next block, and the directory points to the first; OK for sequential access.
• Indexed: an index structure is required; better for random access into the file.

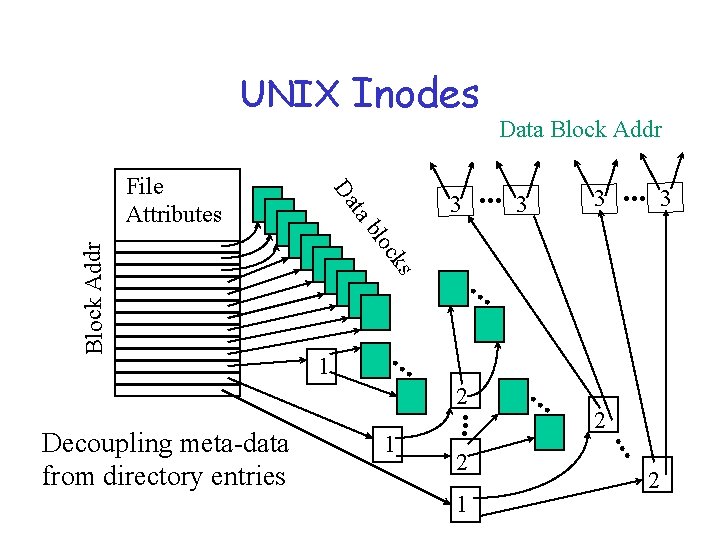

UNIX Inodes
Decoupling metadata from directory entries.
(figure: an inode holds file attributes and data block addresses; some addresses point directly to data blocks, others go through 1, 2, or 3 levels of indirect blocks)
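The indexed layout can be illustrated by mapping a logical block number to the pointer path inside the inode. The parameters here are assumptions chosen to match ext2-style defaults (12 direct pointers, 4 KB blocks, 4-byte block addresses); other file systems use different values.

```python
def locate_block(lblk, n_direct=12, block_size=4096, ptr_size=4):
    """Map a file's logical block number to its place in a UNIX-style inode.

    Returns (level, indices): level 0 = direct pointer, 1/2/3 = single/double/
    triple indirect; indices give the slot to follow at each step.
    Defaults are illustrative (ext2-like); other file systems differ.
    """
    per_block = block_size // ptr_size          # pointers per indirect block
    if lblk < n_direct:
        return 0, [lblk]
    lblk -= n_direct
    span = per_block                            # blocks reachable at this level
    for level in (1, 2, 3):
        if lblk < span:
            indices = []
            for _ in range(level):              # one base-per_block digit per level
                indices.append(lblk % per_block)
                lblk //= per_block
            return level, indices[::-1]
        lblk -= span
        span *= per_block
    raise ValueError("block number beyond triple-indirect range")
```

For example, with 1024 pointers per indirect block, block 1035 is the last one reachable through the single indirect block, and block 1036 is the first that needs the double indirect block:

```python
locate_block(5)      # (0, [5])
locate_block(1035)   # (1, [1023])
locate_block(1036)   # (2, [0, 0])
```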

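File Allocation Table (FAT)
(figure: directory entries for Lecture.ppt, Pic.jpg, and Notes.txt each point to the first FAT entry of the file; each entry names the file's next block, and each chain ends in eof)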
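Following a FAT chain is a linked-list walk through the table. In this sketch, the table is a Python list and `EOF = -1` is an assumed marker; real FAT variants reserve special values for end-of-chain and bad clusters, and this code does no cycle or bad-cluster checking.

```python
EOF = -1  # end-of-chain marker assumed for this sketch (real FATs reserve special values)

def fat_chain(fat, first_block):
    """Follow a file's chain of blocks through the File Allocation Table.

    fat[i] holds the number of the block after block i in the same file, or
    EOF. The directory entry stores only first_block.
    """
    blocks = []
    b = first_block
    while b != EOF:
        blocks.append(b)
        b = fat[b]
    return blocks

def nth_block(fat, first_block, n):
    """Random access costs n table lookups: walk n links from the start."""
    b = first_block
    for _ in range(n):
        b = fat[b]
        if b == EOF:
            raise IndexError("offset past end of file")
    return b
```

Because the whole table can be cached in memory, the walk avoids the per-block disk reads of a pure linked layout, but random access still costs O(n) lookups.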

The Problem of Disk Layout
• The level of indirection in the file block maps allows flexibility in file layout.
• “File system design is 99% block allocation.” [McVoy]
• Competing goals for block allocation:
– allocation cost
– bandwidth for high-volume transfers
– efficient directory operations
• Goal: reduce disk arm movement and seek overhead.
• Metric of merit: bandwidth utilization

FFS and LFS
Two different approaches to block allocation:
– Cylinder groups in the Fast File System (FFS) [McKusick 81]
• clustering enhancements [McVoy 91], and improved cluster allocation [McKusick: Smith/Seltzer 96]
• FFS can also be extended with metadata logging [e.g., Episode]
– Log-Structured File System (LFS)
• proposed in [Douglis/Ousterhout 90]
• implemented/studied in [Rosenblum 91]
• BSD port, sort of maybe: [Seltzer 93]
• extended with self-tuning methods [Neefe/Anderson 97]
– Other approach: extent-based file systems

Log-Structured File Systems
• Assumption: the cache is effectively filtering out reads, so we should optimize for writes.
• Basic idea: manage the disk as an append-only log (subsequent writes involve minimal head movement).
• Data and metadata (mixed) are accumulated in large segments and written contiguously.
• Reads work as in UNIX: once the inode is found, data blocks are located via the index.
• Cleaning is an issue: it is needed to produce contiguous free space, correcting the fragmentation that develops over time.
• Claim: LFS can use 70% of disk bandwidth for writing, while Unix FFS can typically use only 5-10% because of seeks.

LFS logs
In LFS, all block and metadata allocation is log-based.
– LFS views the disk as “one big log” (logically).
– All writes are clustered and sequential/contiguous.
• Intermingles metadata and blocks from different files.
– Data is laid out on disk in the order it is written.
– No-overwrite allocation policy: if an old block or inode is modified, write it to a new location at the tail of the log.
– LFS uses (mostly) the same metadata structures as FFS; only the allocation scheme is different.
• Cylinder group structures and free block maps are eliminated.
• Inodes are found by indirecting through a new map (the inode map).

LFS Data Structures on Disk
• Inode: in log, same as FFS
• Inode map: in log; locates position of inode, version, time of last access
• Segment summary: in log; identifies contents of segment (file#, offset for each block in segment)
• Segment usage table: in log; counts live bytes in segment and last write time
• Checkpoint region: fixed location on disk; locates blocks of inode map, identifies last checkpoint in log
• Directory change log: in log; records directory operations to maintain consistency of ref counts in inodes

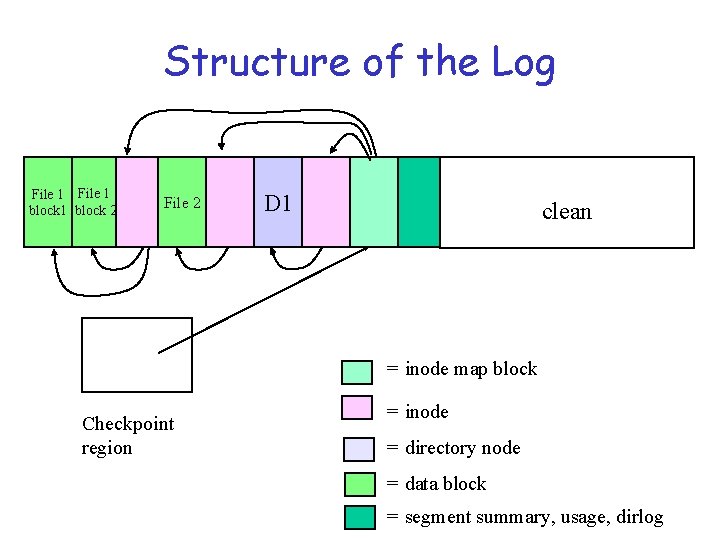

Structure of the Log
(figure: the log holds File 1 block 2, File 2, directory D1, and clean segments, with the checkpoint region at a fixed location; legend: inode map block, inode, directory node, data block, segment summary/usage/dirlog)

Writing the Log in LFS
1. LFS “saves up” dirty blocks and dirty inodes until it has a full segment (e.g., 1 MB).
– Dirty inodes are grouped into block-sized clumps.
– Dirty blocks are sorted by (file, logical block number).
– Each log segment includes summary info and a checksum.
2. LFS writes each log segment in a single burst, with at most one seek.
– Find a free segment “slot” on the disk, and write it.
– Store a back pointer to the previous segment.
• Logically the log is sequential, but physically it consists of a chain of segments, each large enough to amortize seek overhead.
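The write path can be modeled as buffering dirty blocks until a segment is full, then writing them in sorted order to a new location and updating the map. This is a deliberately simplified sketch: the "disk" is a list of segments, and the single `imap` dict collapses LFS's two steps (inode map → inode → block address) into one lookup. Real LFS also writes the inodes, inode-map blocks, segment summary, and checksum into each segment.

```python
class LogFS:
    """Minimal model of LFS writing: buffer dirty blocks, write full segments.

    Simplifications (assumptions): a segment holds `seg_blocks` entries, and
    `imap` maps (inode, lblk) straight to a log address, collapsing the
    inode map and the inode's block pointers into one dict.
    """

    def __init__(self, seg_blocks=4):
        self.seg_blocks = seg_blocks
        self.segments = []       # each: list of (inode, lblk, data)
        self.pending = {}        # dirty blocks waiting for a full segment
        self.imap = {}           # (inode, lblk) -> (segment no, offset)

    def write(self, inode, lblk, data):
        self.pending[(inode, lblk)] = data       # rewrites are absorbed in memory
        if len(self.pending) == self.seg_blocks:
            self.flush()

    def flush(self):
        """Write one segment in a single sequential burst (also used for fsync)."""
        if not self.pending:
            return
        seg_no = len(self.segments)
        seg = sorted(self.pending.items())       # sort by (file, logical block)
        self.segments.append([(i, b, d) for (i, b), d in seg])
        for off, ((i, b), _) in enumerate(seg):
            self.imap[(i, b)] = (seg_no, off)    # no overwrite: point at the new copy
        self.pending.clear()

    def read(self, inode, lblk):
        if (inode, lblk) in self.pending:
            return self.pending[(inode, lblk)]
        seg_no, off = self.imap[(inode, lblk)]
        return self.segments[seg_no][off][2]
```

Note that an overwrite never touches the old segment: the previous copy stays on disk as dead data until the cleaner reclaims it.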

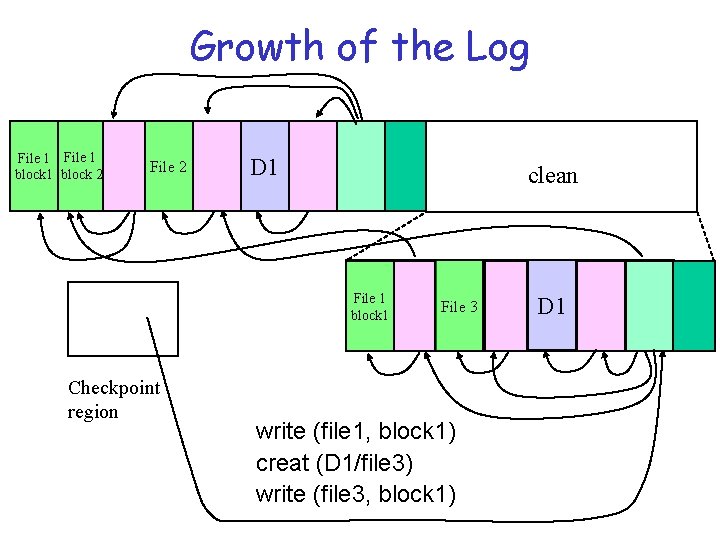

Growth of the Log
(figure: after write(file 1, block 1), creat(D1/file 3), and write(file 3, block 1), new copies of File 1 block 1, File 3, and directory D1 are appended at the tail of the log, beyond File 1 block 2, File 2, and the old D1)

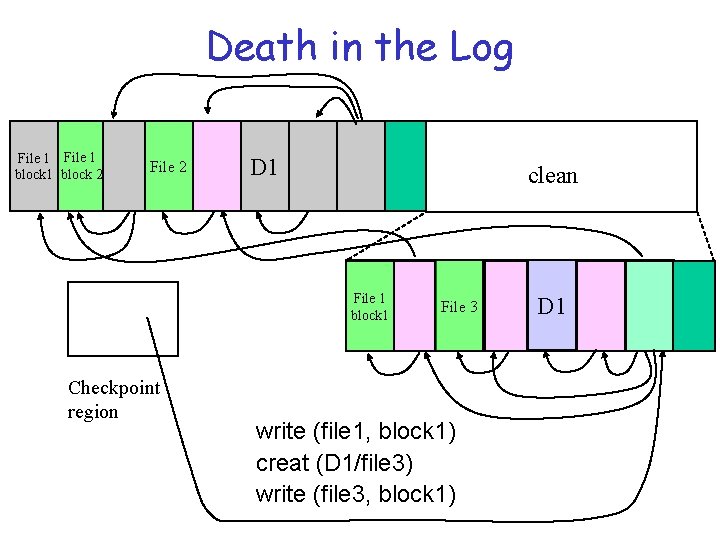

Death in the Log
(figure: the same operations, write(file 1, block 1), creat(D1/file 3), and write(file 3, block 1); earlier copies superseded by the new ones at the tail, such as the old D1, are now dead)

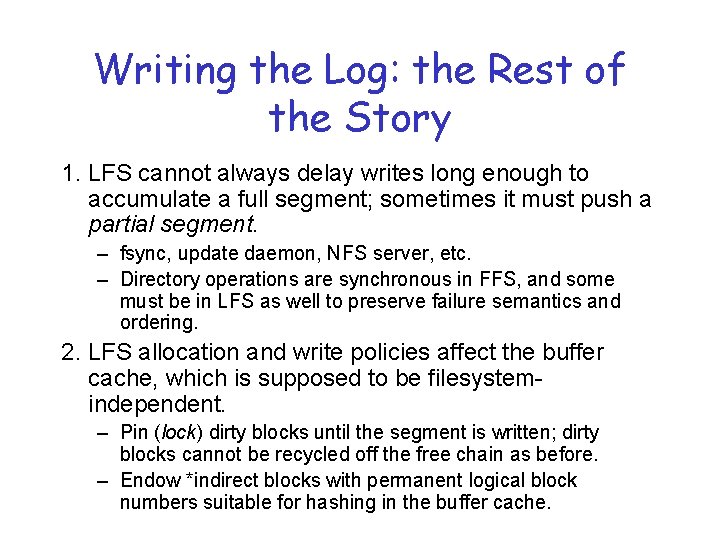

Writing the Log: the Rest of the Story
1. LFS cannot always delay writes long enough to accumulate a full segment; sometimes it must push a partial segment.
– fsync, update daemon, NFS server, etc.
– Directory operations are synchronous in FFS, and some must be in LFS as well to preserve failure semantics and ordering.
2. LFS allocation and write policies affect the buffer cache, which is supposed to be filesystem-independent.
– Pin (lock) dirty blocks until the segment is written; dirty blocks cannot be recycled off the free chain as before.
– Endow indirect blocks with permanent logical block numbers suitable for hashing in the buffer cache.

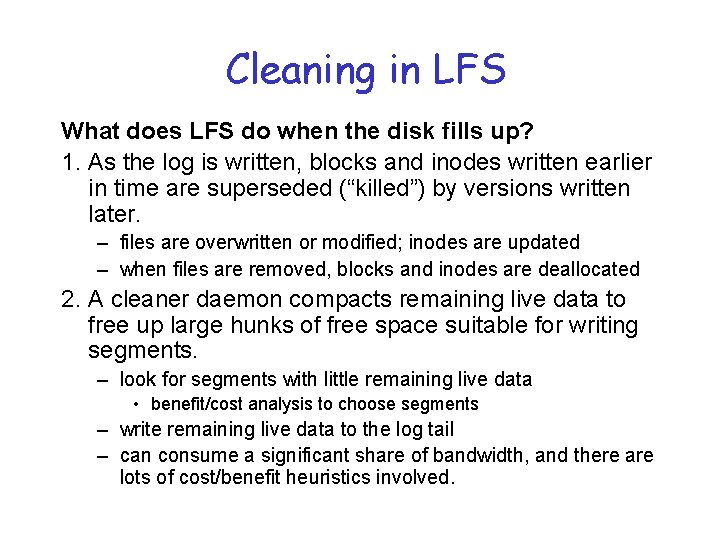

Cleaning in LFS
What does LFS do when the disk fills up?
1. As the log is written, blocks and inodes written earlier in time are superseded (“killed”) by versions written later.
– files are overwritten or modified; inodes are updated
– when files are removed, blocks and inodes are deallocated
2. A cleaner daemon compacts the remaining live data to free up large hunks of free space suitable for writing segments.
– look for segments with little remaining live data
• benefit/cost analysis to choose segments
– write the remaining live data to the log tail
– can consume a significant share of bandwidth, and there are lots of cost/benefit heuristics involved.

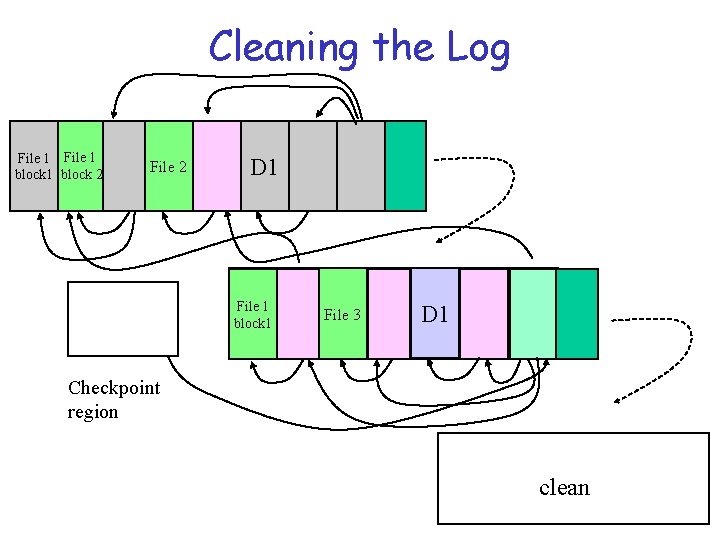

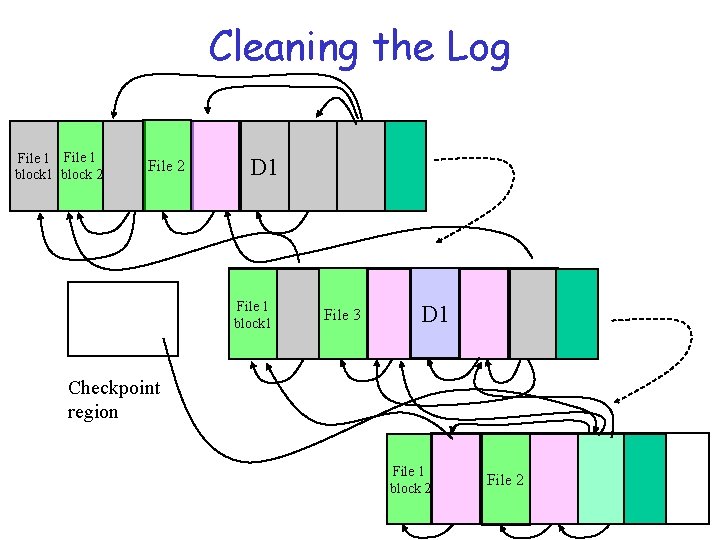

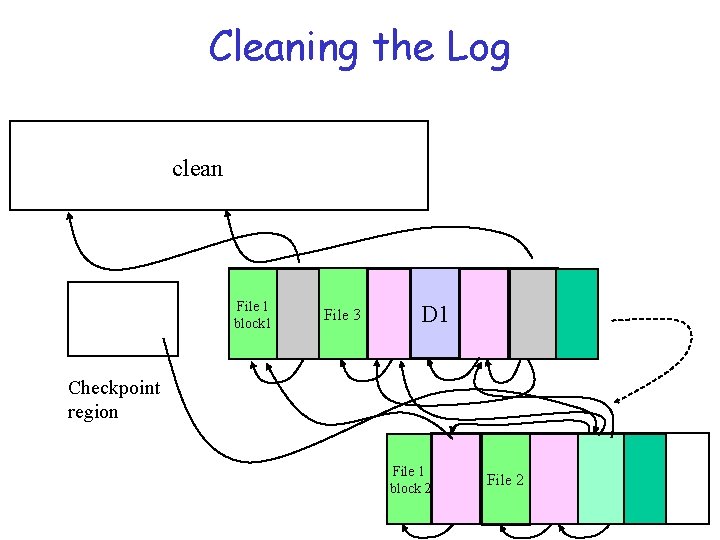

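Cleaning the Log
(figure: an old segment still holds live blocks, File 1 block 2 and File 2, alongside dead data; the log tail holds File 1 block 1, File 3, and D1; a clean segment is available)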

Cleaning the Log
(figure, continued: the cleaner copies the live blocks File 1 block 2 and File 2 to the tail of the log)

Cleaning the Log
(figure, continued: the old segment is now clean and can be reused; File 1 block 1, File 3, D1, File 1 block 2, and File 2 are live at the tail)

Cleaning Issues
• Must be able to identify which blocks are live.
• Must be able to identify the file to which each block belongs in order to update its inode to the new location.
• The segment summary block contains this info:
– File contents are associated with a uid (version # and inode #).
– Inode map entries contain the version # (incremented on truncate or delete).
– Compare to see if the inode points to the block under consideration.
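The liveness test for a block in a segment being cleaned can be sketched as a version check followed by a pointer comparison. The dictionary layouts below are hypothetical simplifications of the segment summary, inode map, and inodes.

```python
def is_live(summary_entry, block_addr, inode_map, inodes):
    """Decide whether a block found in a segment being cleaned is still live.

    summary_entry: (inode_no, version, lblk) recorded in the segment summary.
    inode_map: inode_no -> (current version, inode address)
    inodes: inode address -> {lblk: block address} (the inode's block map)
    Layouts are hypothetical simplifications for illustration.
    """
    inode_no, version, lblk = summary_entry
    entry = inode_map.get(inode_no)
    if entry is None:
        return False                       # file no longer exists
    cur_version, inode_addr = entry
    if cur_version != version:
        return False                       # truncated/deleted since: fast reject
    # Same version: live only if the inode still points at this copy.
    return inodes[inode_addr].get(lblk) == block_addr
```

The version check is cheap and rejects whole deleted or truncated files without reading their inodes; only blocks that pass it require looking at the inode's block map.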

Policies
• When the cleaner cleans: threshold based.
• How much: tens of segments at a time, until the threshold is reached.
• Which segments:
– The most fragmented segment is not the best choice.
– The value of free space in a segment depends on the stability of its live data (approx. age).
– Cost/benefit analysis, where u is the fraction of the segment that is still live:
Benefit = free space generated × age = (1 - u) × age of youngest block
Cost = cost to read segment + cost to move live data = 1 + u
– The segment usage table supports this.
• How to group live blocks
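The cost/benefit rule can be written as a score per segment, (1 - u) × age / (1 + u), computed from the segment usage table. The `usage` dict layout is an assumption for the sketch.

```python
def cost_benefit(u, age):
    """LFS cleaner score for a segment with live fraction u (0 <= u < 1).

    benefit = free space generated (1 - u) weighted by data age;
    cost = read the whole segment (1) + write back its live data (u).
    """
    return (1 - u) * age / (1 + u)

def pick_segments(usage, n):
    """usage: {segment_no: (u, age_of_youngest_block)} from the segment usage table."""
    return sorted(usage, key=lambda s: cost_benefit(*usage[s]), reverse=True)[:n]
```

In this made-up example, segment 1 has the most free space (u = 0.2) but holds young, still-changing data, so the cleaner prefers the older, fuller segments 2 and 0: waiting lets segment 1 empty further on its own.

```python
usage = {0: (0.5, 100), 1: (0.2, 1), 2: (0.9, 1000)}
pick_segments(usage, 2)   # [2, 0]
```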

Recovering Disk Contents
• Checkpoints define consistent states.
– A checkpoint is a position in the log where all data structures are consistent.
– Checkpoint region (fixed location): contains the addresses of all blocks of the inode map and segment usage table, plus a pointer to the last segment written.
• There are actually two checkpoint regions, used alternately, in case a crash occurs while writing checkpoint region data.
• Roll-forward: to recover beyond the last checkpoint.
– Uses segment summary blocks at the end of the log: if we find new inodes, update the inode map found from the checkpoint.
– Adjust utilizations in the segment usage table.
– Restore consistency in ref counts within inodes and in the directory entries pointing to those inodes, using the directory operation log (like an intentions list).
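Choosing which of the two alternating checkpoint regions to trust can be sketched as "newest one that was written completely". The `complete` flag is a hypothetical stand-in for however the real system detects a torn checkpoint write.

```python
def choose_checkpoint(regions):
    """Pick the checkpoint region to recover from.

    LFS alternates between two fixed checkpoint regions, so a crash can only
    corrupt the one being written. Each region here is a dict with a
    'timestamp' and a 'complete' flag; the flag is a hypothetical stand-in
    for however the real system detects a torn write.
    """
    valid = [r for r in regions if r["complete"]]
    if not valid:
        raise RuntimeError("no usable checkpoint")
    return max(valid, key=lambda r: r["timestamp"])
```

Recovery then rolls forward from the chosen checkpoint through the segments written after it.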

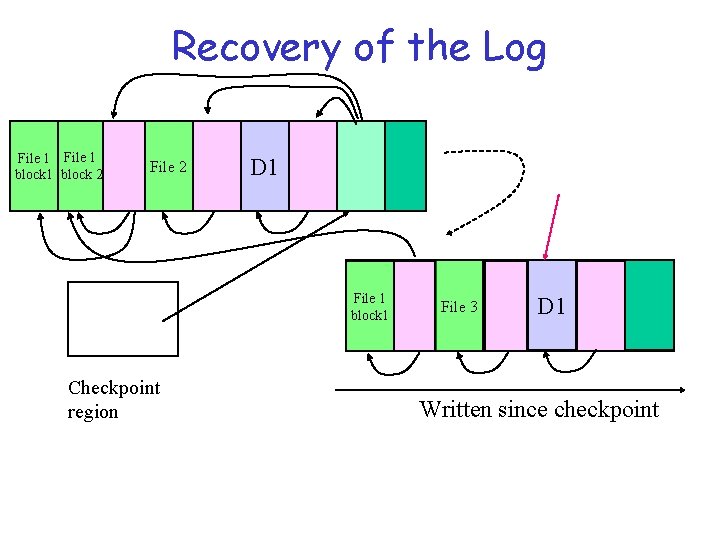

Recovery of the Log
(figure: File 1 block 1, File 3, and D1 were written since the last checkpoint, after File 1 block 2, File 2, and the old D1)

Recovery in Unix: fsck
• Traverses the directory structure, checking ref counts of inodes.
• Traverses inodes and the free list to check block usage of all disk blocks.
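The first fsck pass can be sketched as a directory-tree traversal that recounts references to each file inode and compares them with the stored link counts. This sketch checks only regular files, since directory link counts also include "." and ".." entries that it does not model; the block-usage pass is not shown.

```python
def check_link_counts(root, dirs, nlink):
    """fsck-style pass: recount directory references to each file inode.

    dirs: directory inode -> {name: child inode} (only directories are keys)
    nlink: inode -> link count stored in the inode
    Returns {inode: (stored, actual)} for every mismatch. Only regular files
    are checked; '.' and '..' entries are not modeled.
    """
    refs = {ino: 0 for ino in nlink if ino not in dirs}
    seen, stack = {root}, [root]
    while stack:                          # traverse the directory structure
        d = stack.pop()
        for child in dirs[d].values():
            if child in dirs:
                if child not in seen:
                    seen.add(child)
                    stack.append(child)
            else:
                refs[child] = refs.get(child, 0) + 1
    return {ino: (nlink.get(ino, 0), n) for ino, n in refs.items()
            if nlink.get(ino, 0) != n}
```

In the test scenario, inode 10 has two hard links and a correct count, inode 11 claims two links but only one entry names it, and inode 12 is an orphan (stored count 1, no entries), which a real fsck would reconnect in lost+found.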

Evaluation of LFS vs. FFS
1. How effective is FFS clustering in “sequentializing” disk writes? Do we need LFS once we have clustering?
– How big do files have to be before FFS matches LFS?
– How effective is clustering for bursts of creates/deletes?
– What is the impact of FFS tuning parameters?
2. What is the impact of file system age and high disk space utilization?
– LFS pays a higher cleaning overhead.
– In FFS, fragmentation compromises clustering effectiveness.
3. What about workloads with frequent overwrites and random access patterns (e.g., transaction processing)?

Which kinds of locality on disk are better?

Benchmarks and Conclusions
1. For bulk creates/deletes of small files, LFS is an order of magnitude better than FFS, which is disk-limited.
• LFS gets about 70% of disk bandwidth for creates.
2. For bulk creates of large files, both FFS and LFS are disk-limited.
3. FFS and LFS are roughly equivalent for reads of files in create order, but FFS spends more seek time on large files.
4. For file overwrites in create order, FFS wins for large files.

The Cleaner Controversy
Seltzer measured transaction processing (TP) performance using a TPC-B benchmark (banking application) with a separate log disk.
1. TPC-B is dominated by random reads/writes of the account file.
2. LFS wins if there is no cleaner, because it can sequentialize the random writes.
• The journaling log avoids the need for synchronous writes.
3. Since the data dies quickly in this application, the LFS cleaner is kept busy, leading to high overhead.
4. Claim: the cleaner consumes 34% of disk bandwidth at 48% space utilization, removing any advantage of LFS.

NTFS

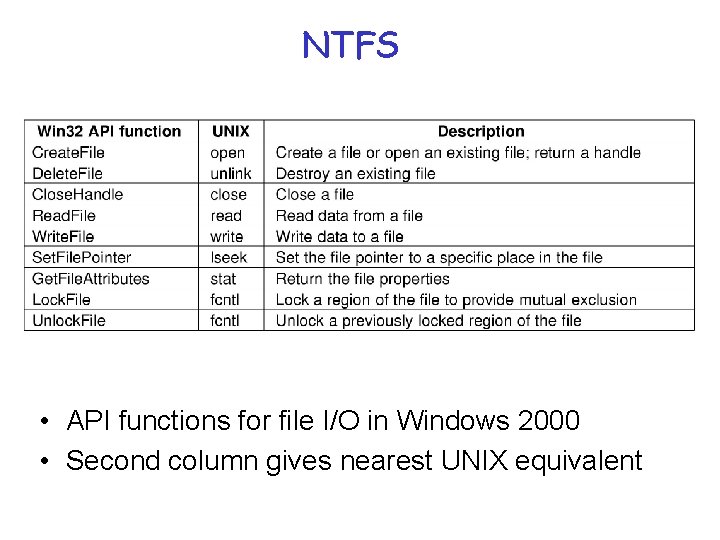

NTFS • API functions for file I/O in Windows 2000 • Second column gives nearest UNIX equivalent

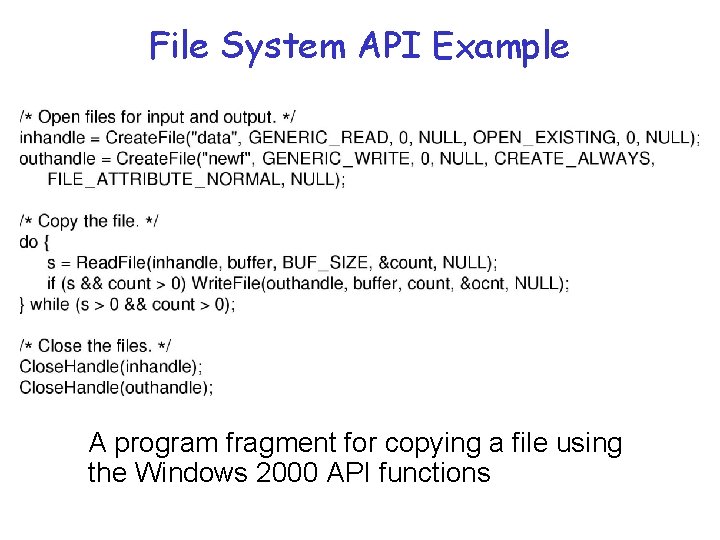

File System API Example: a program fragment for copying a file using the Windows 2000 API functions

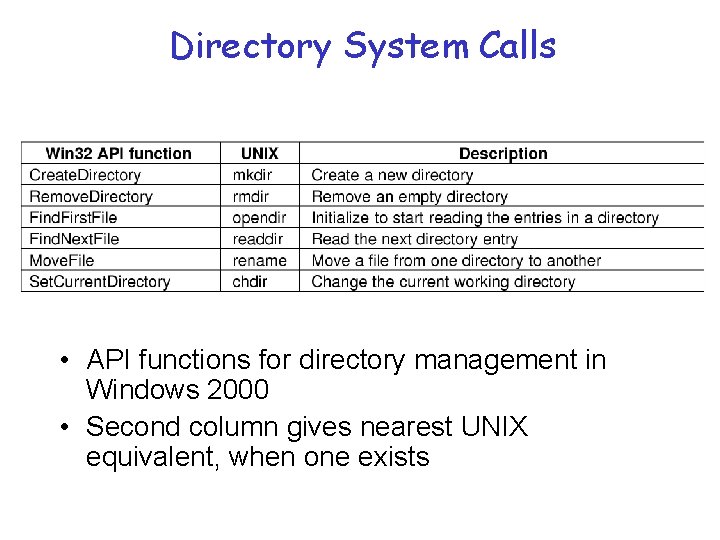

Directory System Calls • API functions for directory management in Windows 2000 • Second column gives nearest UNIX equivalent, when one exists

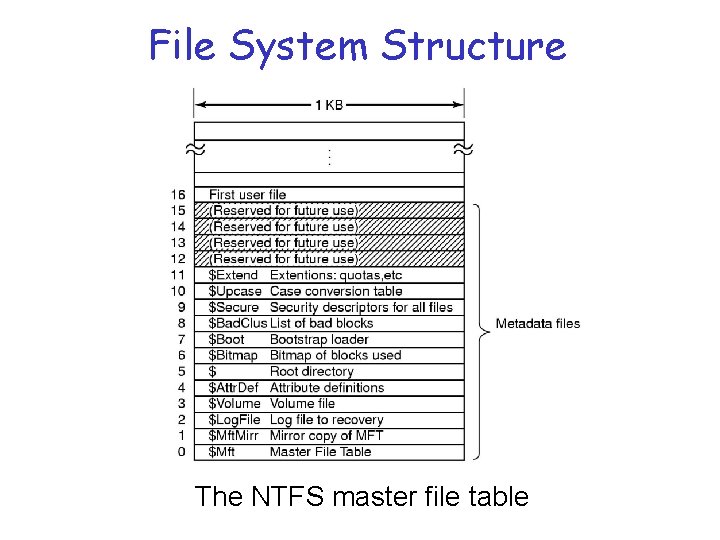

File System Structure: the NTFS master file table

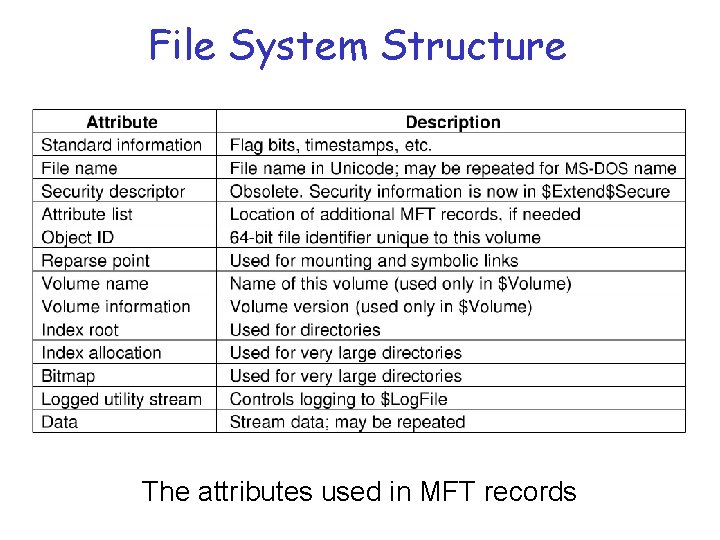

File System Structure: the attributes used in MFT records

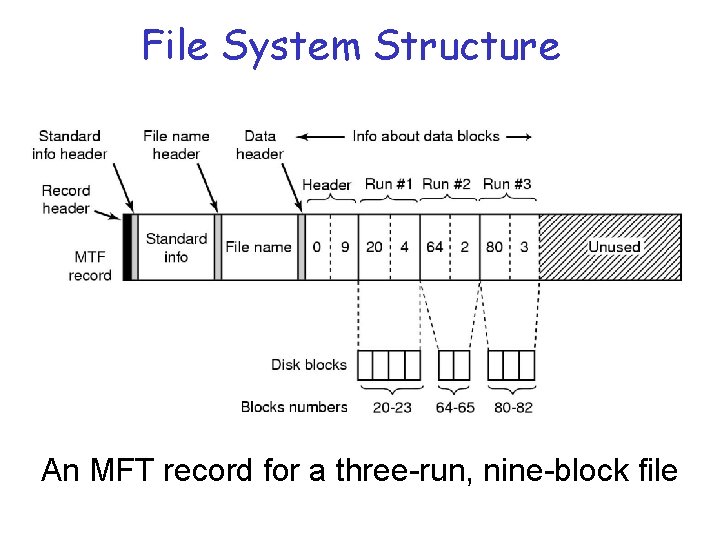

File System Structure: an MFT record for a three-run, nine-block file

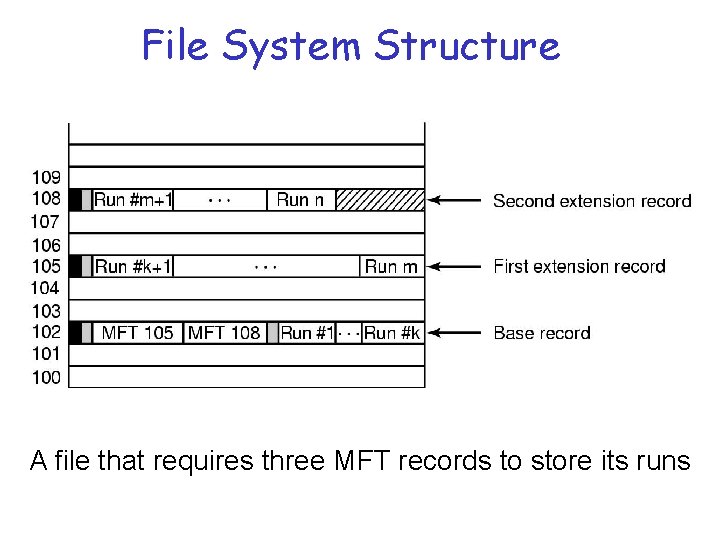

File System Structure: a file that requires three MFT records to store its runs

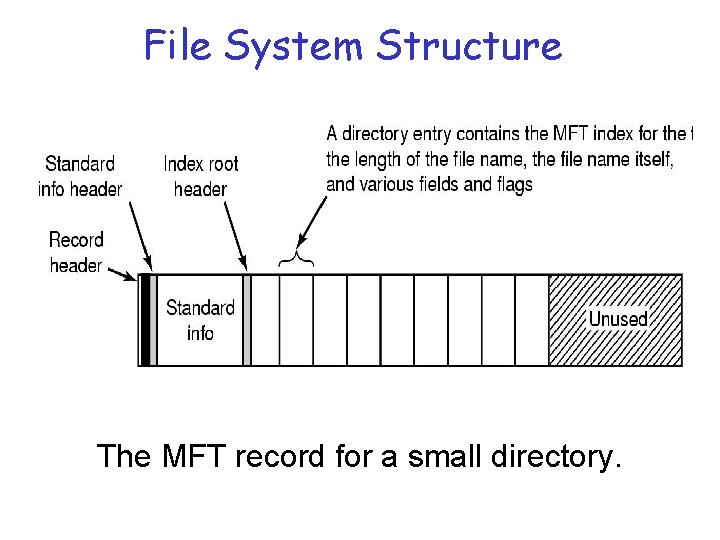

File System Structure: the MFT record for a small directory

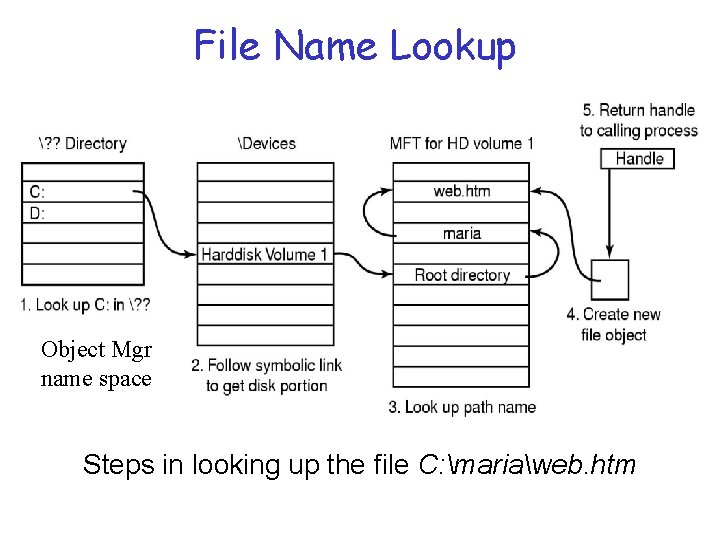

File Name Lookup
Steps in looking up the file C:\maria\web.htm (figure: the lookup passes through the Object Manager name space)

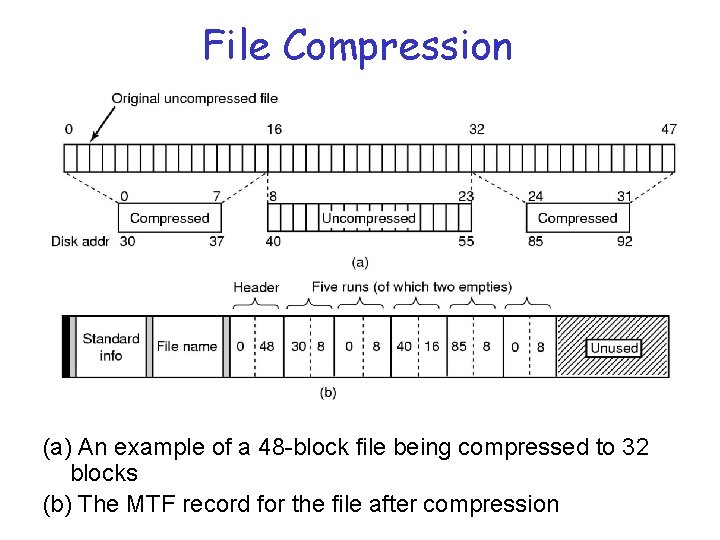

File Compression
(a) An example of a 48-block file being compressed to 32 blocks
(b) The MFT record for the file after compression

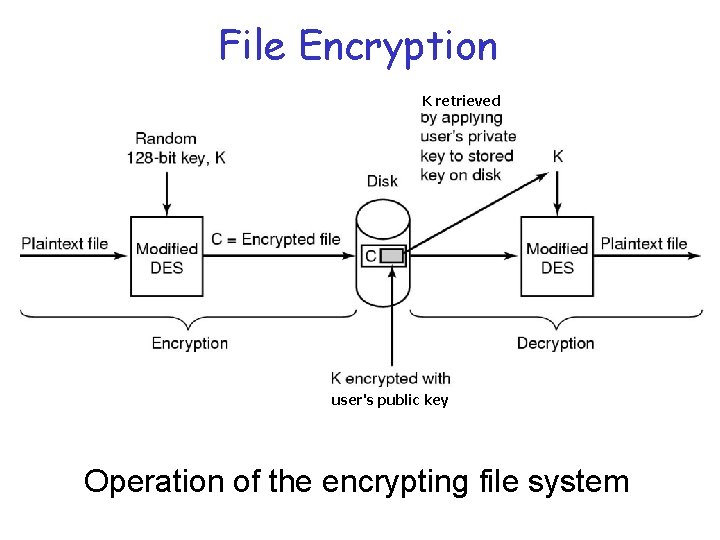

File Encryption
Operation of the encrypting file system (figure labels: K retrieved; user's public key)

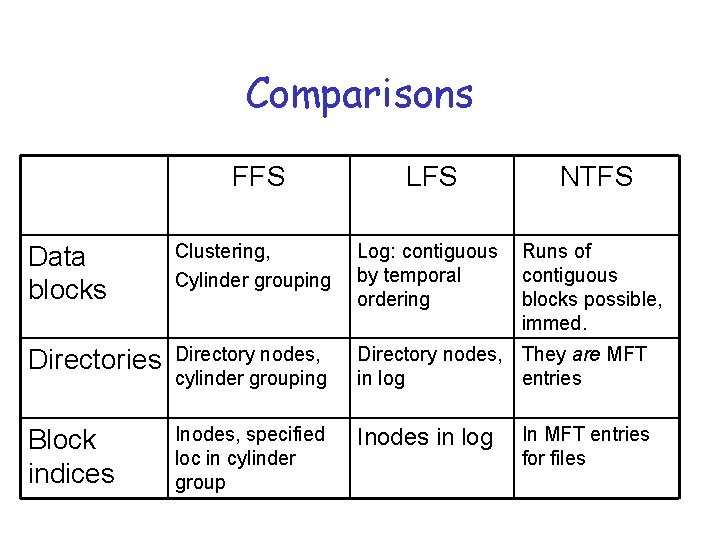

Comparisons

| | FFS | LFS | NTFS |
|---|---|---|---|
| Data blocks | Clustering, cylinder grouping | Log: contiguous by temporal ordering | Runs of contiguous blocks possible; immediate files |
| Directories | Directory nodes, cylinder grouping | Directory nodes, in log | They are MFT entries |
| Block indices | Inodes, specified location in cylinder group | Inodes in log | In MFT entries for files |

Distributed File Systems

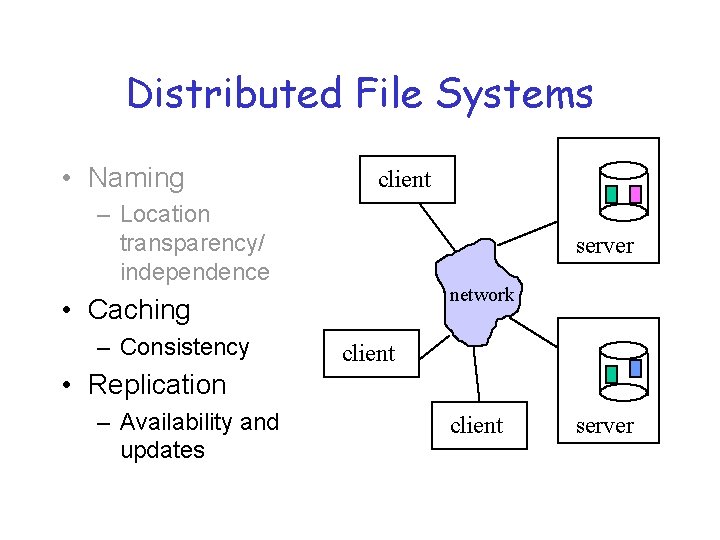

Distributed File Systems
• Naming
– Location transparency/independence
• Caching
– Consistency
• Replication
– Availability and updates
(figure: clients and servers connected by a network)

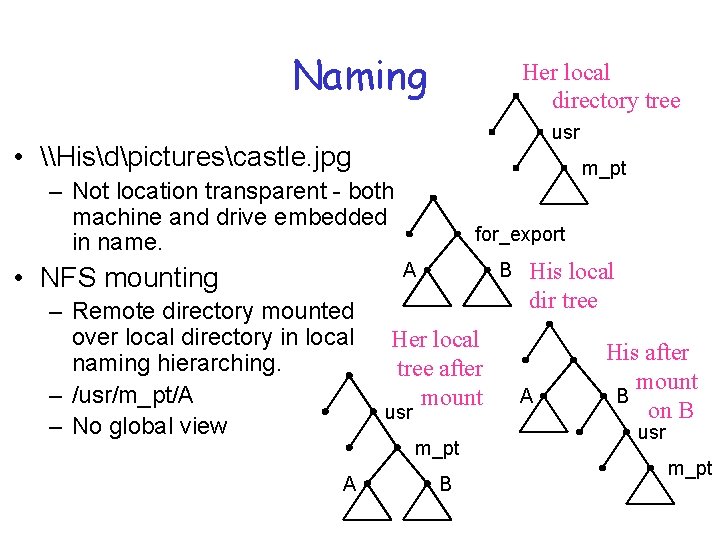

Naming
• \\His\d\pictures\castle.jpg
– Not location transparent: both machine and drive are embedded in the name.
• NFS mounting
– A remote directory is mounted over a local directory in the local naming hierarchy.
– /usr/m_pt/A
– No global view
(figure: her local directory tree (usr, m_pt) and his local directory tree (for_export, containing A and B), shown before and after mounting)

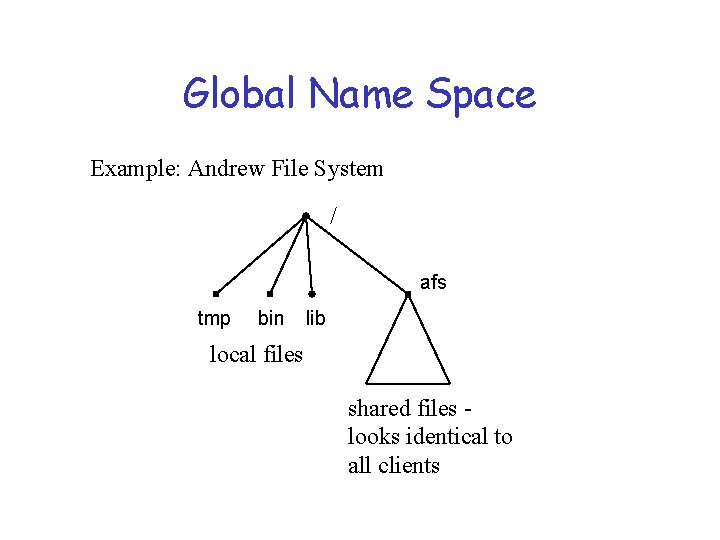

Global Name Space Example: Andrew File System
(figure: under /, the directories tmp, bin, and lib hold local files, while /afs holds shared files and looks identical to all clients)

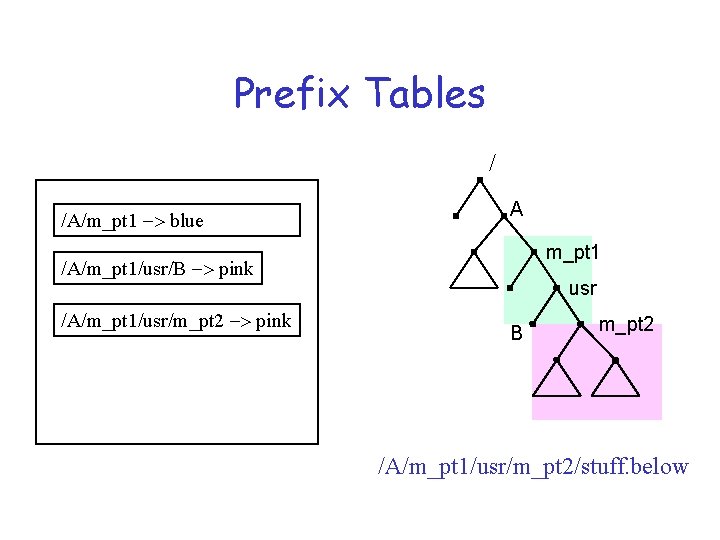

Hints
• A valuable distributed systems design technique that can be illustrated in naming.
• Definition: information that is not guaranteed to be correct. If it is correct, it can improve performance; if not, things will still work OK. You must be able to validate the information.
• Example: Sprite prefix tables

Prefix Tables
(figure: a name tree rooted at /, with prefix-table entries
/A/m_pt1 -> blue
/A/m_pt1/usr/B -> pink
/A/m_pt1/usr/m_pt2 -> pink
and a lookup of /A/m_pt1/usr/m_pt2/stuff.below)
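A prefix-table lookup can be sketched as "longest matching prefix wins", matching whole path components only. The table contents come from the figure; the handling of a miss or stale entry is only noted in the error message, since validating the hint means asking the server (in Sprite the client falls back to a broadcast).

```python
def route(prefix_table, path):
    """Prefix-table lookup: the longest prefix matching whole components wins.

    prefix_table: {prefix: server}. Entries are hints: a stale entry would be
    detected by the server's reply and dropped (not modeled here).
    Returns (server, remaining path to send to that server).
    """
    best = None
    for prefix in prefix_table:
        # Match whole components only: /A/m_pt1 matches /A/m_pt1/x, not /A/m_pt10.
        if path == prefix or path.startswith(prefix.rstrip("/") + "/"):
            if best is None or len(prefix) > len(best):
                best = prefix
    if best is None:
        raise LookupError("no prefix matches; locate the server another way")
    return prefix_table[best], path[len(best.rstrip("/")):] or "/"
```

With the figure's table, /A/m_pt1/usr/m_pt2/stuff.below goes to pink with the remaining name /stuff.below, while /A/m_pt1/usr/x falls back to the shorter /A/m_pt1 entry and goes to blue.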

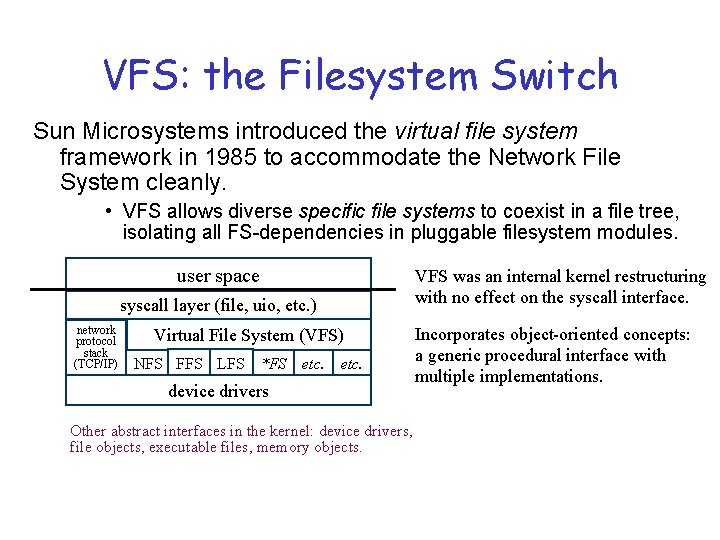

VFS: the Filesystem Switch
Sun Microsystems introduced the virtual file system framework in 1985 to accommodate the Network File System cleanly.
• VFS allows diverse specific file systems to coexist in a file tree, isolating all FS dependencies in pluggable filesystem modules.
(figure: user space → syscall layer (file, uio, etc.) → Virtual File System (VFS) → NFS, FFS, LFS, *FS, etc.; NFS uses the network protocol stack (TCP/IP), and the local file systems use device drivers)
• Other abstract interfaces in the kernel: device drivers, file objects, executable files, memory objects.
• VFS was an internal kernel restructuring with no effect on the syscall interface.
• It incorporates object-oriented concepts: a generic procedural interface with multiple implementations.

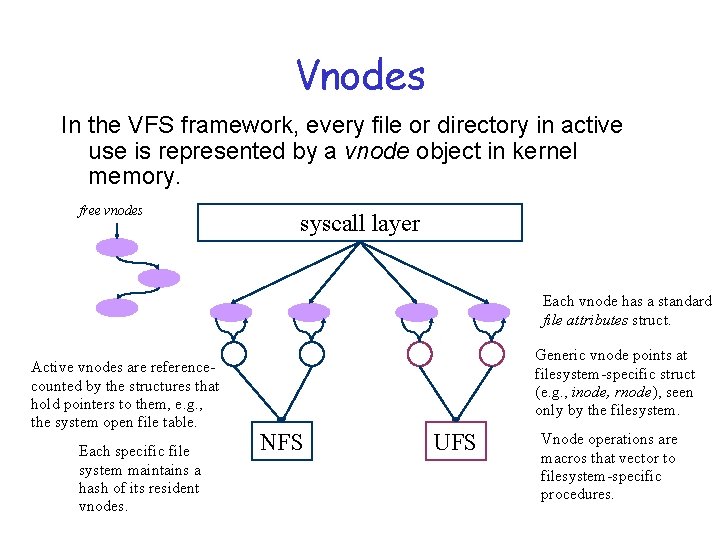

Vnodes
In the VFS framework, every file or directory in active use is represented by a vnode object in kernel memory.
• Each vnode has a standard file attributes struct.
• Active vnodes are reference-counted by the structures that hold pointers to them, e.g., the system open file table.
• Each specific file system maintains a hash of its resident vnodes.
• The generic vnode points at a filesystem-specific struct (e.g., inode, rnode), seen only by the filesystem.
• Vnode operations are macros that vector to filesystem-specific procedures.
(figure: the syscall layer above active vnodes and a list of free vnodes, with NFS and UFS implementations beneath)

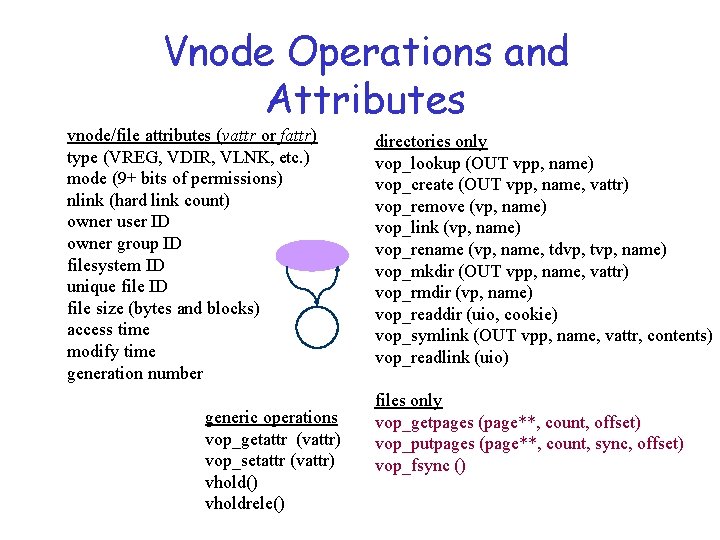

Vnode Operations and Attributes
vnode/file attributes (vattr or fattr):
• type (VREG, VDIR, VLNK, etc.)
• mode (9+ bits of permissions)
• nlink (hard link count)
• owner user ID
• owner group ID
• filesystem ID
• unique file ID
• file size (bytes and blocks)
• access time
• modify time
• generation number
generic operations:
• vop_getattr (vattr)
• vop_setattr (vattr)
• vhold()
• vholdrele()
directories only:
• vop_lookup (OUT vpp, name)
• vop_create (OUT vpp, name, vattr)
• vop_remove (vp, name)
• vop_link (vp, name)
• vop_rename (vp, name, tdvp, tvp, name)
• vop_mkdir (OUT vpp, name, vattr)
• vop_rmdir (vp, name)
• vop_readdir (uio, cookie)
• vop_symlink (OUT vpp, name, vattr, contents)
• vop_readlink (uio)
files only:
• vop_getpages (page**, count, offset)
• vop_putpages (page**, count, sync, offset)
• vop_fsync ()

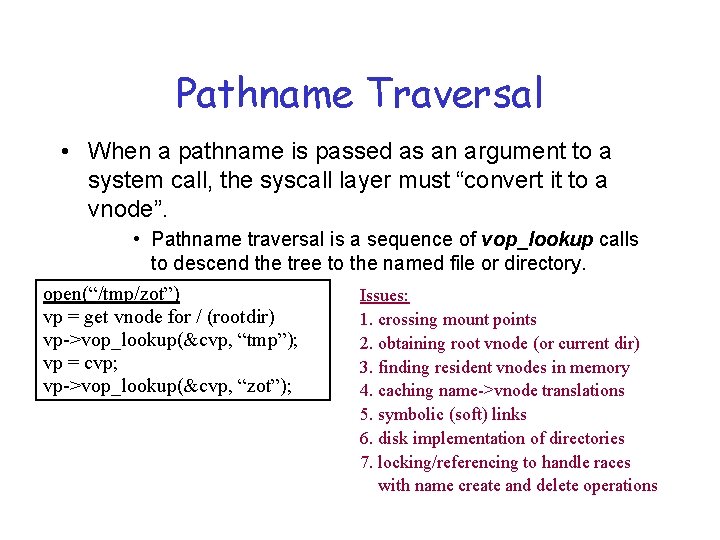

Pathname Traversal
• When a pathname is passed as an argument to a system call, the syscall layer must “convert it to a vnode”.
• Pathname traversal is a sequence of vop_lookup calls to descend the tree to the named file or directory.
open(“/tmp/zot”):
vp = get vnode for / (rootdir)
vp->vop_lookup(&cvp, “tmp”);
vp = cvp;
vp->vop_lookup(&cvp, “zot”);
Issues:
1. crossing mount points
2. obtaining root vnode (or current dir)
3. finding resident vnodes in memory
4. caching name->vnode translations
5. symbolic (soft) links
6. disk implementation of directories
7. locking/referencing to handle races with name create and delete operations
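The traversal loop, including two of the listed issues (choosing the root or current directory, and crossing mount points), can be sketched with a toy vnode class. `Vnode`, `mounted`, and `namei` (named after the classic UNIX routine) are illustrative, not a real kernel API; symlinks, "..", locking, and name caching are omitted.

```python
class Vnode:
    """Toy vnode: a directory's entries plus an optional covering mount."""

    def __init__(self, name, entries=None):
        self.name = name
        self.entries = entries or {}   # component -> Vnode
        self.mounted = None            # root vnode of a file system mounted here

    def vop_lookup(self, component):
        child = self.entries.get(component)
        if child is None:
            raise FileNotFoundError(component)
        return child

def namei(root, cwd, path):
    """Convert a pathname to a vnode with one vop_lookup per component.

    Handles absolute vs. relative starting points and mount-point crossing;
    symlinks, '..', locking, and name caching are omitted.
    """
    vp = root if path.startswith("/") else cwd
    for component in filter(None, path.split("/")):
        vp = vp.vop_lookup(component)
        while vp.mounted is not None:      # crossing a mount point
            vp = vp.mounted
    return vp
```

For open("/tmp/zot"), `namei` performs exactly the two lookups in the pseudocode above; if /m_pt were covered by an NFS mount, the loop would continue the lookup in the mounted file system's root vnode.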

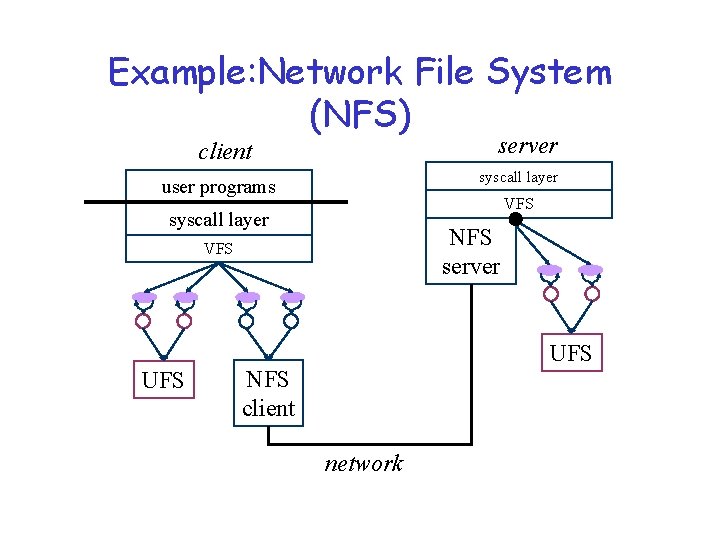

Example: Network File System (NFS)
(figure: on the client, user programs → syscall layer → VFS → NFS client (alongside UFS); on the server, NFS server → VFS → UFS; the NFS client and server communicate over the network)

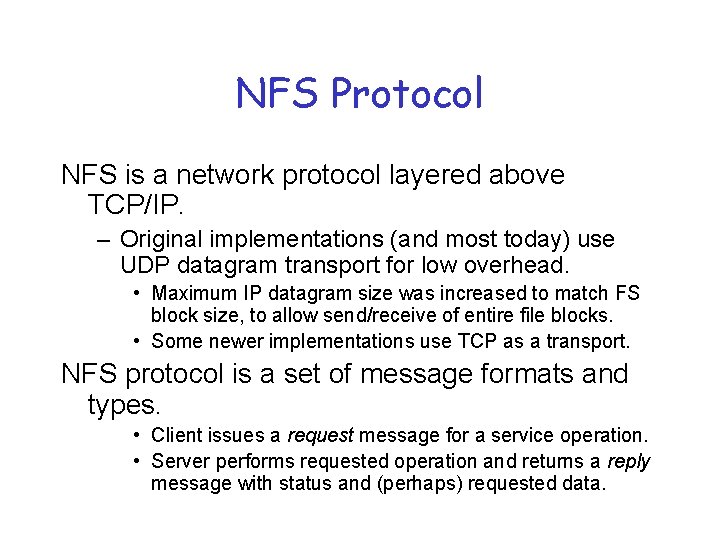

NFS Protocol
NFS is a network protocol layered above TCP/IP.
– Original implementations (and most today) use UDP datagram transport for low overhead.
• Maximum IP datagram size was increased to match FS block size, to allow send/receive of entire file blocks.
• Some newer implementations use TCP as a transport.
NFS protocol is a set of message formats and types.
• Client issues a request message for a service operation.
• Server performs the requested operation and returns a reply message with status and (perhaps) requested data.

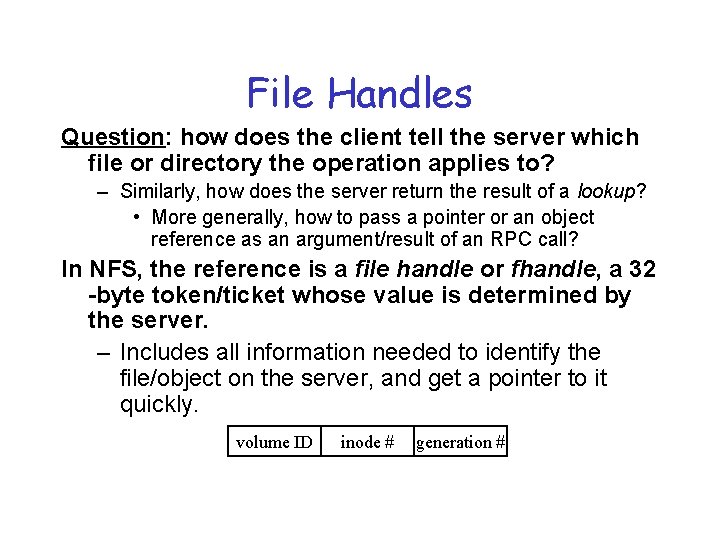

File Handles
Question: how does the client tell the server which file or directory the operation applies to?
– Similarly, how does the server return the result of a lookup?
• More generally, how to pass a pointer or an object reference as an argument/result of an RPC call?
In NFS, the reference is a file handle or fhandle, a 32-byte token/ticket whose value is determined by the server.
– Includes all information needed to identify the file/object on the server, and get a pointer to it quickly.
(figure: fhandle fields: volume ID, inode #, generation #)
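The generation number is what lets a stateless server reject handles to files that were deleted and whose inode numbers were reused. This sketch keeps only the three fields from the figure; the Python layout is illustrative and is not the actual 32-byte NFS encoding. ESTALE is the usual name for the resulting "stale file handle" error.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FHandle:
    volume_id: int
    inode: int
    generation: int   # bumped each time the inode number is reused

class Server:
    """Server-side fhandle validation (sketch; field layout is illustrative,
    not the actual 32-byte NFS encoding)."""

    def __init__(self):
        self.inodes = {}   # (volume_id, inode) -> {"generation": g, "data": ...}

    def create(self, volume_id, inode, data):
        key = (volume_id, inode)
        gen = self.inodes[key]["generation"] + 1 if key in self.inodes else 0
        self.inodes[key] = {"generation": gen, "data": data}
        return FHandle(volume_id, inode, gen)

    def read(self, fh):
        entry = self.inodes.get((fh.volume_id, fh.inode))
        if entry is None or entry["generation"] != fh.generation:
            raise LookupError("ESTALE: handle refers to a deleted file")
        return entry["data"]
```

If inode 42 is freed and reused for a new file, a client still holding the old handle gets a stale-handle error instead of silently reading the new file's data.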

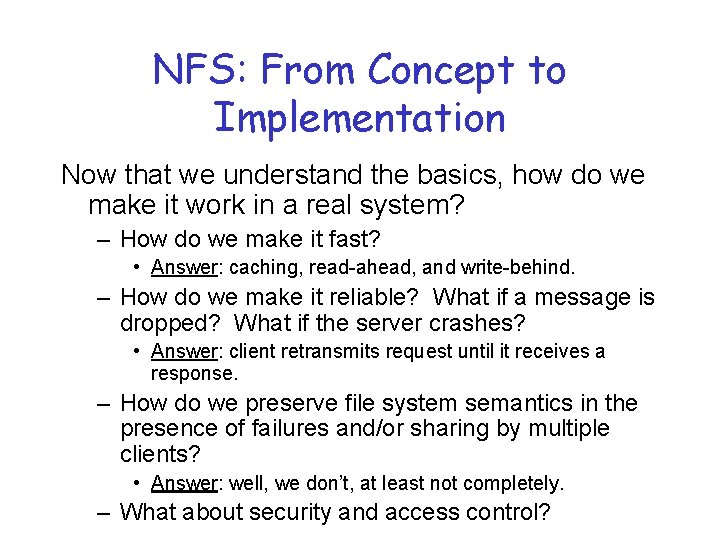

NFS: From Concept to Implementation
Now that we understand the basics, how do we make it work in a real system?
– How do we make it fast?
• Answer: caching, read-ahead, and write-behind.
– How do we make it reliable? What if a message is dropped? What if the server crashes?
• Answer: the client retransmits the request until it receives a response.
– How do we preserve file system semantics in the presence of failures and/or sharing by multiple clients?
• Answer: well, we don’t, at least not completely.
– What about security and access control?


Caching was “The Answer”
• Avoid the disk for as many file operations as possible.
• The cache acts as a filter for the requests seen by the disk; reads are served best.
• Delayed write-back will avoid going to disk at all for temp files.

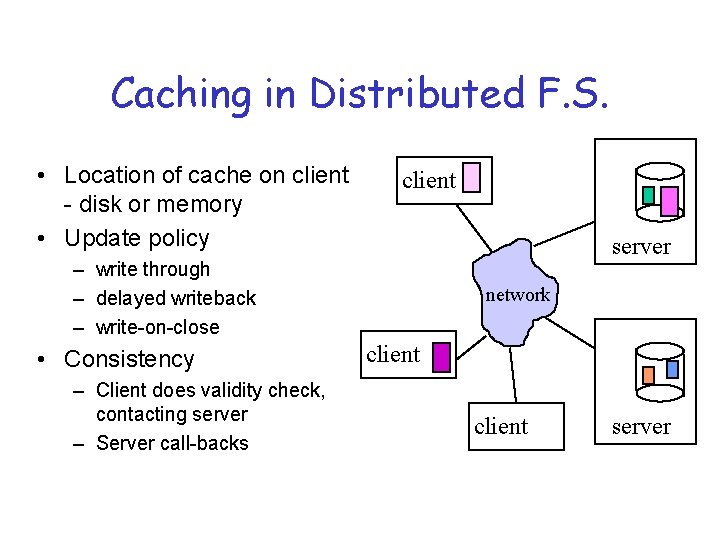

Caching in Distributed F.S.
• Location of cache on client: disk or memory
• Update policy
– write-through
– delayed write-back
– write-on-close
• Consistency
– Client does validity check, contacting server
– Server call-backs
(figure: clients and servers connected by a network)

File Cache Consistency
Caching is a key technique in distributed systems. The cache consistency problem: cached data may become stale if the underlying data is updated elsewhere in the network.
Solutions:
• Timestamp invalidation (NFS). Timestamp each cache entry, and periodically query the server: “has this file changed since time t?”; invalidate the cache if stale.
• Callback invalidation (AFS). Request notification (callback) from the server if the file changes; invalidate the cache on callback.
• Leases (NQ-NFS) [Gray&Cheriton 89]

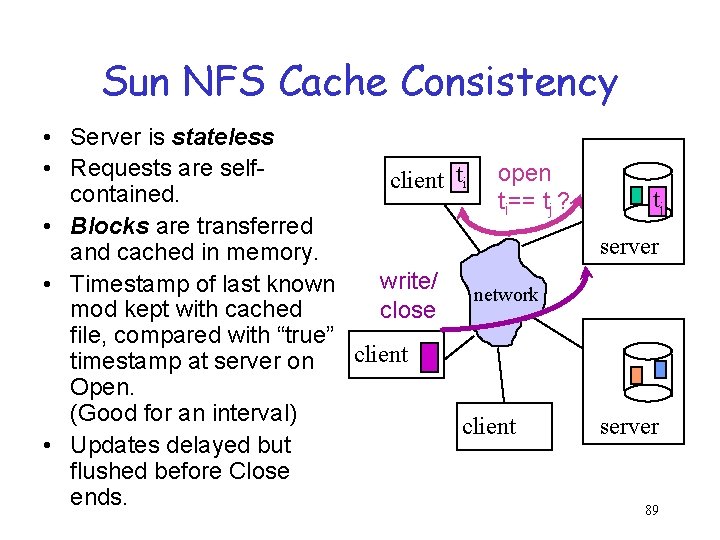

Sun NFS Cache Consistency
• Server is stateless.
• Requests are self-contained.
• Blocks are transferred and cached in memory.
• The timestamp of the last known modification is kept with the cached file and compared with the “true” timestamp at the server on open. (Good for an interval.)
• Updates are delayed but flushed before close ends.
(figure: on open, the client compares its cached timestamp ti with the server's tj: ti == tj?)
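Timestamp validation "good for an interval" can be sketched as a client cache that re-checks the server's modification time only when its last check is older than `interval`. `server_mtime` and `server_read` are hypothetical RPC stubs, and time is passed in explicitly so the behavior is easy to follow.

```python
class NfsClientCache:
    """Client cache validated by modification timestamps, NFS style.

    Sketch: `server_mtime(f)` and `server_read(f)` are hypothetical RPC stubs;
    `interval` is how long (seconds) a validation is trusted before the
    client asks the server again. Times are passed in explicitly.
    """

    def __init__(self, server_mtime, server_read, interval=3.0):
        self.server_mtime, self.server_read = server_mtime, server_read
        self.interval = interval
        self.cache = {}   # file -> {"data", "mtime", "checked"}

    def open(self, f, now):
        e = self.cache.get(f)
        if e is not None and now - e["checked"] < self.interval:
            return e["data"]                       # trust the cache for a while
        mtime = self.server_mtime(f)               # getattr RPC: ti == tj ?
        if e is None or e["mtime"] != mtime:       # missing or stale: refetch
            e = {"data": self.server_read(f), "mtime": mtime}
            self.cache[f] = e
        e["checked"] = now
        return e["data"]
```

The interval is the source of NFS's weak consistency: inside it, a client can return stale data even though the server already holds a newer version.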

Cache Consistency for the Web
• Time-to-Live (TTL) fields: HTTP “expires” header
• Client polling: HTTP “if-modified-since” request headers
– Polling frequency? Possibly adaptive (e.g., based on age of object and assumed stability)
(figure: clients on a LAN reach the Web server through a proxy cache and the network)

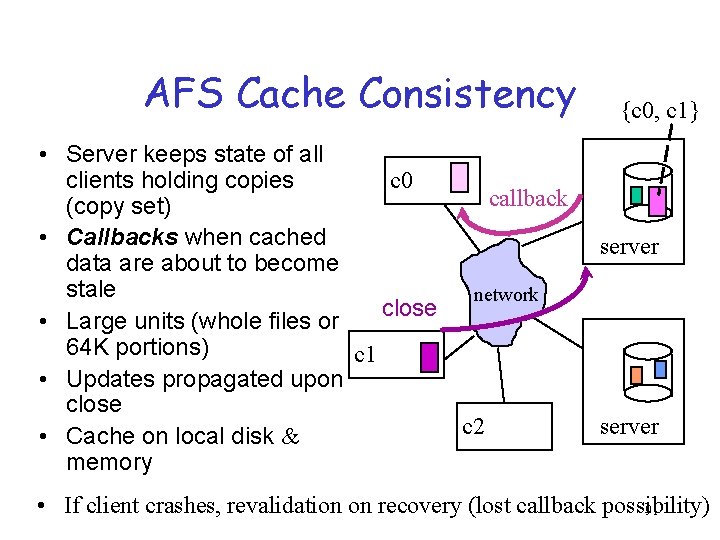

AFS Cache Consistency
• The server keeps state on all clients holding copies (the copy set).
• Callbacks when cached data are about to become stale.
• Large units (whole files or 64K portions).
• Updates propagated upon close.
• Cache on local disk & memory.
• If a client crashes, revalidation on recovery (lost callback possibility).
(figure: the server's copy set for a file is {c0, c1}; when c2 closes an updated copy, the server sends callbacks to c0 and c1)
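Callback-based consistency can be sketched with a server that tracks the copy set per file and breaks callbacks when another client stores an update on close. Class and method names are hypothetical; whole-file caching and write-on-close are assumed, as on the slide, and crash recovery is not modeled.

```python
class AfsServer:
    """Callback-based consistency: the server remembers who holds copies.

    Sketch: clients expose a `break_callback(name)` method; whole-file
    transfer and write-on-close are assumed, as on the slide.
    """

    def __init__(self):
        self.files = {}
        self.copy_set = {}     # file -> set of clients holding a callback

    def fetch(self, client, name):
        self.copy_set.setdefault(name, set()).add(client)
        return self.files.get(name)

    def store_on_close(self, writer, name, data):
        self.files[name] = data
        for c in self.copy_set.get(name, set()) - {writer}:
            c.break_callback(name)            # their cached copies are now stale
        self.copy_set[name] = {writer}

class AfsClient:
    def __init__(self, server):
        self.server, self.cache = server, {}

    def open(self, name):
        if name not in self.cache:            # valid callback => no server contact
            self.cache[name] = self.server.fetch(self, name)
        return self.cache[name]

    def close_after_write(self, name, data):
        self.cache[name] = data
        self.server.store_on_close(self, name, data)

    def break_callback(self, name):
        self.cache.pop(name, None)
```

Unlike the NFS sketch, an open with a valid callback costs no server contact at all; the price is server state that must be rebuilt (by revalidation) after a crash.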

NQ-NFS Leases
In NQ-NFS, a client obtains a lease on the file that permits the client’s desired read/write activity.
“A lease is a ticket permitting an activity; the lease is valid until some expiration time.”
– A read-caching lease allows the client to cache clean data. Guarantee: no other client is modifying the file.
– A write-caching lease allows the client to buffer modified data for the file. Guarantee: no other client has the file cached.
Leases may be revoked by the server if another client requests a conflicting operation (the server sends an eviction notice).
Since leases expire, losing “state” of leases at the server is OK.
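Lease granting can be sketched as: read leases may be shared, a write lease is exclusive, and a conflicting request returns the holders who should receive eviction notices. The `LeaseServer` name, the 30-second default term, and the "deny and notify" outcome are assumptions for illustration; expired leases simply stop counting, which is why losing lease state is safe once a full term has passed.

```python
class LeaseServer:
    """NQ-NFS-style leases (sketch; names and the term length are assumptions).

    Read leases may be shared; a write lease is exclusive. A conflicting
    request is denied and the server reports which holders should get
    eviction notices; the requester retries after they relinquish or expire.
    """

    def __init__(self, term=30.0):
        self.term = term
        self.leases = {}   # file -> list of (client, mode, expiry)

    def _live(self, f, now):
        self.leases[f] = [l for l in self.leases.get(f, []) if l[2] > now]
        return self.leases[f]

    def request(self, client, f, mode, now):
        """Return (expiry, []) if granted, else (None, holders to send eviction notices)."""
        others = [l for l in self._live(f, now) if l[0] != client]
        conflicts = [l[0] for l in others if mode == "write" or l[1] == "write"]
        if conflicts:
            return None, conflicts
        expiry = now + self.term
        self.leases[f] = others + [(client, mode, expiry)]
        return expiry, []

    def relinquish(self, client, f):
        self.leases[f] = [l for l in self.leases.get(f, []) if l[0] != client]
```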

Coda – Using Caching to Handle Disconnected Access
• Single location-transparent UNIX FS.
• Scalability: coarse granularity (whole-file caching, volume management)
• First-class (server) replication and client caching (second-class replication)
• Optimistic replication & consistency maintenance.
⇒ Designed for disconnected operation for mobile computing clients

Explicit First-class Replication
• A file name maps to a set of replicas, one of which will be used to satisfy a request.
– Goal: availability
• Update strategy
– Atomic updates: all or none
– Primary copy approach
– Voting schemes
– Optimistic, then detection of conflicts

Optimistic vs. Pessimistic
• Optimistic: high availability. Conflicting updates are the potential problem, requiring detection and resolution.
• Pessimistic: avoids conflicts by holding shared or exclusive locks.
– How to arrange this when disconnection is involuntary?
– Leases [Gray, SOSP 89] put a time bound on locks, but what about expiration?

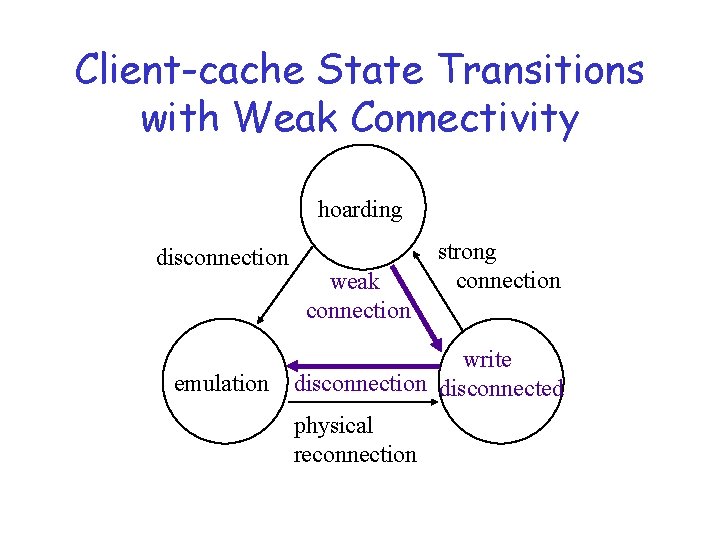

Client-cache State Transitions
(figure: hoarding → emulation on disconnection; emulation → reintegration on physical reconnection; reintegration → hoarding on logical reconnection)

Prefetching
• Goal: avoid the access latency of moving the data in on that first cache miss.
• Prediction! “Guessing” what data will be needed in the future.
– It’s not free: there are consequences of guessing wrong, and overhead.

Hoarding - Prefetching for Disconnected Information Access
• Caching for availability (not just latency)
• Cache misses, when operating disconnected, have no redeeming value. (Unlike in connected mode, they can’t be used as the triggering mechanism for filling the cache.)
• How to preload the cache for subsequent disconnection? Planned or unplanned.
• What does it mean for replacement?

Hoard Database (HDB)
• Per-workstation, per-user set of pathnames with priority
• User can explicitly tailor the HDB using scripts called hoard profiles
• Delimited observations of reference behavior (snapshot spying with bookends)

Coda Hoarding State
• Balancing act: caching for 2 purposes at once:
– performance of current accesses,
– availability of future disconnected access.
• Prioritized algorithm: the priority of an object for retention in the cache is f(hoard priority, recent usage).
• Hoard walking (periodically or on request) maintains equilibrium: no uncached object has higher priority than any of the cached objects.

The Hoard Walk
• Hoard walk, phase 1: reevaluate name bindings (e.g., any new children created by other clients?)
• Hoard walk, phase 2: recalculate priorities in the cache and in the HDB; evict and fetch to restore equilibrium
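Phase 2 of the hoard walk can be sketched as ranking every known object by priority and computing what to fetch and evict so that no uncached object outranks a cached one. The `priority` function is a hypothetical stand-in for f(hoard priority, recent usage); objects are assumed equal-sized, and phase 1 and the infinite priority of directories with cached children are not modeled.

```python
def hoard_walk(cache, candidates, capacity, priority):
    """Restore equilibrium: no uncached object may outrank a cached one.

    cache: set of cached object names; candidates: all objects known from the
    hoard database plus the cache; priority(obj): a hypothetical combination
    of hoard priority and recent usage. Objects are assumed equal-sized and
    capacity counts objects. Returns (to_fetch, to_evict).
    """
    ranked = sorted(candidates, key=priority, reverse=True)
    target = set(ranked[:capacity])
    return sorted(target - cache), sorted(cache - target)
```

In this made-up example, a heavily hoarded file that is not currently cached displaces a stale log file that was only cached because it was used once:

```python
hdb = {"thesis.tex": 100, "paper.pdf": 50}
usage = {"mail": 80, "old.log": 5}
prio = lambda o: hdb.get(o, 0) + usage.get(o, 0)
hoard_walk({"mail", "old.log"}, {"thesis.tex", "paper.pdf", "mail", "old.log"}, 3, prio)
# (['paper.pdf', 'thesis.tex'], ['old.log'])
```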

Hierarchical Cache Mgt • Ancestors of a cached object must be cached in order to resolve pathname. • Directories with cached children are assigned infinite priority

Callbacks During Hoarding • Traditional callbacks - invalidate object and refetch on demand • With threat of disconnection – Purge files and refetch on demand or hoard walk – Directories - mark as stale and fix on reference or hoard walk, available until then just in case.

Emulation State • Pseudo-server, subject to validation upon reconnection • Cache management by priority – modified objects assigned infinite priority – freeing up disk space - compression, replacement to floppy, backout updates • Replay log also occupies non-volatile storage (RVM - recoverable virtual memory)

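Client-cache State Transitions with Weak Connectivity
(figure: states hoarding, emulation, and write disconnected; hoarding → emulation on disconnection; hoarding → write disconnected on weak connection; write disconnected → hoarding on strong connection; write disconnected → emulation on disconnection; emulation → write disconnected on physical reconnection)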

Cache Misses with Weak Connectivity
• At least now it’s possible to service misses, but it costs $$$ and it’s a foreground activity (noticeable impact). So maybe not.
• User patience threshold: estimated service time compared with what is acceptable.
• Defer misses by adding them to the HDB and letting the hoard walk deal with them.
• User interaction during the hoard walk.

- Slides: 95