
Outline for Today’s Lecture • Administrative: • Objective: – NTFS, continued – Journaling FS – Distributed File Systems – Disconnected File Access – Energy Management

NTFS - continued

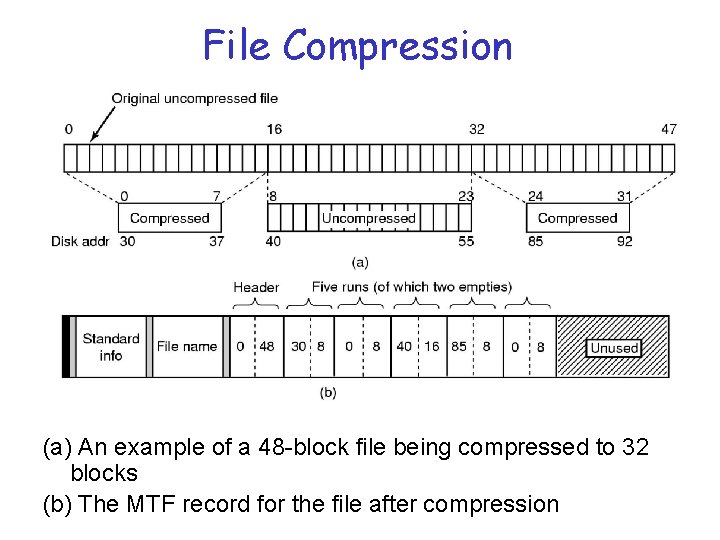

File Compression (a) An example of a 48-block file being compressed to 32 blocks (b) The MFT record for the file after compression

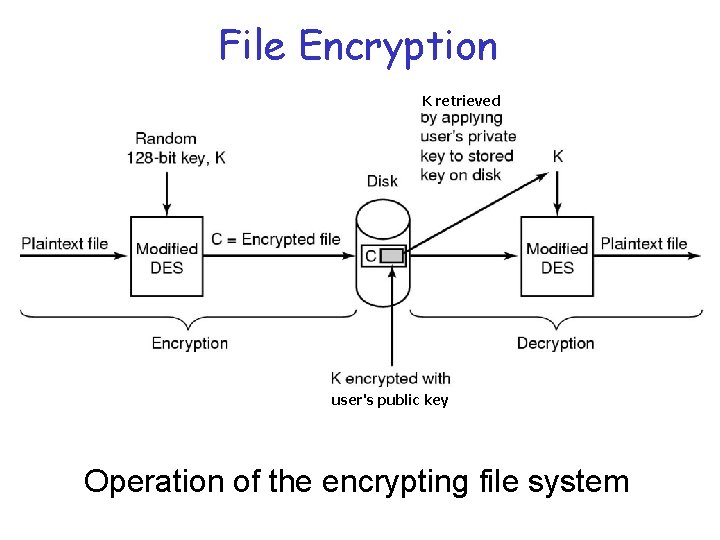

File Encryption: operation of the encrypting file system (figure labels: K retrieved, user's public key)

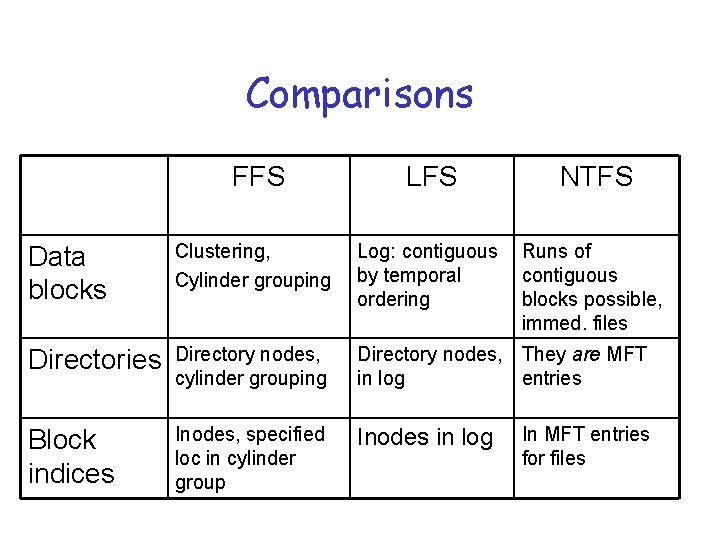

Comparisons

| | FFS | LFS | NTFS |
|---|---|---|---|
| Data blocks | Clustering, cylinder grouping | Log: contiguous by temporal ordering | Runs of contiguous blocks possible, immed. files |
| Directories | Directory nodes, cylinder grouping | Directory nodes, in log | They are MFT entries |
| Block indices | Inodes, specified loc in cylinder group | Inodes in log | In MFT entries for files |

Journaling for Meta-data Ops

Metadata Operations • Metadata operations modify the structure of the file system – Creating, deleting, or renaming files, directories, or special files • Data must be written to disk in such a way that the file system can be recovered to a consistent state after a system crash

General Rules of Ordering 1) Never point to a structure before it has been initialized (the i-node is written before the directory entry that names it) 2) Never re-use a resource before nullifying all previous pointers to it 3) Never reset the old pointer to a live resource before the new pointer has been set (renaming)

Metadata Integrity • FFS uses synchronous writes to guarantee the integrity of metadata – Any operation modifying multiple pieces of metadata will write its data to disk in a specific order – These writes will be blocking • Guarantees integrity and durability of metadata updates

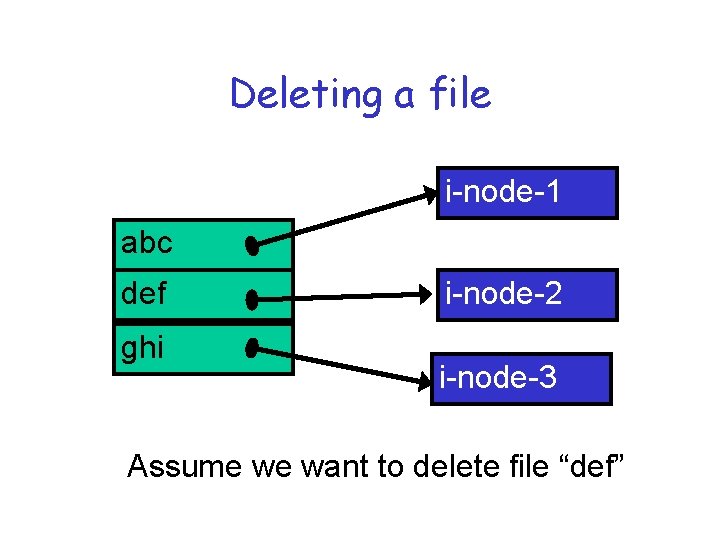

Deleting a file • Assume we want to delete file “def” (figure: directory entries abc, def, ghi point to i-node-1, i-node-2, i-node-3)

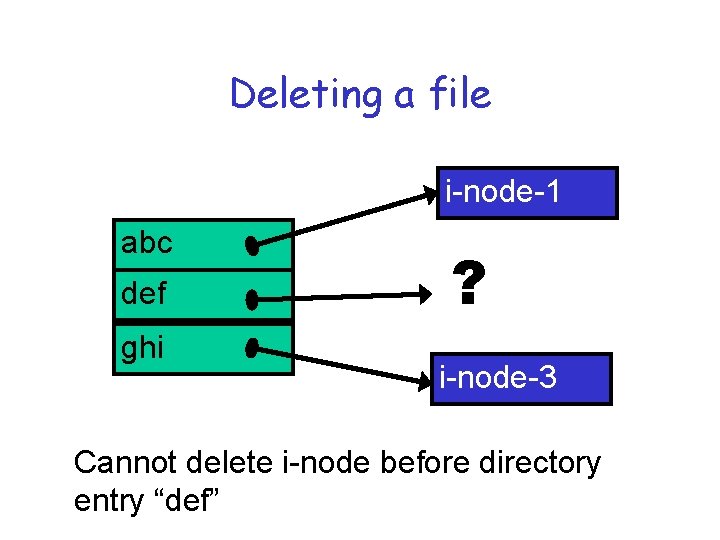

Deleting a file • Cannot delete i-node before directory entry “def” (figure: with i-node-2 deleted first, entry def points to a missing i-node)

Deleting a file • Correct sequence is: 1. Write to disk the directory block containing the deleted directory entry “def”. 2. Write to disk the i-node block containing the deleted i-node. • Leaves the file system in a consistent state

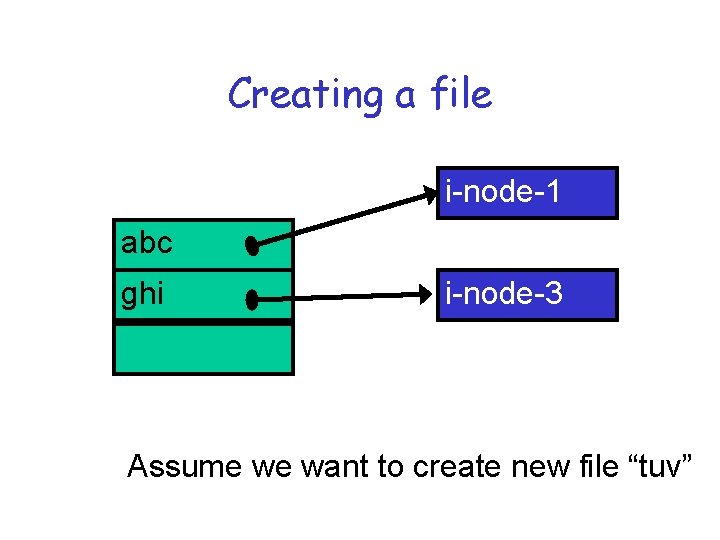

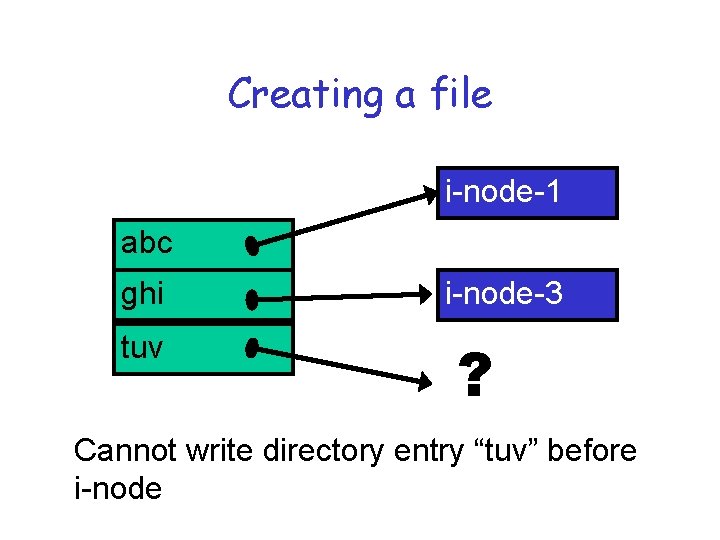

Creating a file • Assume we want to create new file “tuv” (figure: directory entries abc, ghi point to i-node-1, i-node-3)

Creating a file • Cannot write directory entry “tuv” before i-node (figure: entry tuv would point to an i-node that is not yet on disk)

Creating a file • Correct sequence is: 1. Write to disk the i-node block containing the new i-node. 2. Write to disk the directory block containing the new directory entry. • Leaves the file system in a consistent state
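The ordering rules for deleting and creating a file can be checked with a small simulation. This is a minimal sketch, not FFS code: the `directory` dict and `inodes` set stand in for the on-disk directory block and i-node block, all function names are hypothetical, and a crash is modelled by applying only the first few writes of a sequence.

```python
def create_steps(name, ino, inode_first=True):
    """Return the two disk writes for creating a file, in the chosen order."""
    write_inode = ("add_inode", name, ino)
    write_dirent = ("add_dirent", name, ino)
    return [write_inode, write_dirent] if inode_first else [write_dirent, write_inode]


def delete_steps(name, ino, dirent_first=True):
    """Return the two disk writes for deleting a file, in the chosen order."""
    clear_dirent = ("del_dirent", name, ino)
    free_inode = ("del_inode", name, ino)
    return [clear_dirent, free_inode] if dirent_first else [free_inode, clear_dirent]


def apply_writes(directory, inodes, steps, writes_before_crash):
    """Apply only the first `writes_before_crash` writes and return the disk state."""
    directory, inodes = dict(directory), set(inodes)
    for kind, name, ino in steps[:writes_before_crash]:
        if kind == "add_inode":
            inodes.add(ino)
        elif kind == "add_dirent":
            directory[name] = ino
        elif kind == "del_dirent":
            directory.pop(name, None)
        elif kind == "del_inode":
            inodes.discard(ino)
    return directory, inodes


def dangling_entries(directory, inodes):
    """Names whose directory entry points at an i-node that is not on disk."""
    return sorted(name for name, ino in directory.items() if ino not in inodes)
```

With the correct order, a crash between the two writes leaves at worst an unreferenced i-node, which a later check can reclaim. With the wrong order, the directory names an i-node that does not exist.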

Synchronous Updates • Used by FFS to guarantee consistency of metadata: – All metadata updates are done through blocking writes • Increases the cost of metadata updates • Can significantly impact the performance of whole file system

Journaling • Journaling systems maintain an auxiliary log that records all meta-data operations • Write-ahead logging ensures that the log is written to disk before any blocks containing data modified by the corresponding operations. – After a crash, can replay the log to bring the file system to a consistent state

Journaling • Log writes are performed in addition to the regular writes • Journaling systems incur log write overhead but – Log writes can be performed efficiently because they are sequential – Metadata blocks do not need to be written back after each update

Journaling • Journaling systems can provide – the same durability semantics as FFS if the log is forced to disk after each meta-data operation – laxer semantics if log writes are buffered until entire buffers are full
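The difference between forcing the log and buffering it can be sketched as follows. This is an illustrative redo-only model under stated assumptions, not a real journaling implementation: `JournaledStore` and its methods are hypothetical names, and "disk" is just a dict that survives `crash()`.

```python
class JournaledStore:
    """Toy write-ahead log for metadata blocks (redo-only replay)."""

    def __init__(self):
        self.disk = {}     # metadata blocks that have reached disk
        self.log = []      # log records that have reached disk (sequential)
        self.cache = {}    # modified blocks still in memory
        self.pending = []  # log records still buffered in memory

    def update(self, block, value, force=True):
        """Log the change first, then modify the cached block."""
        self.pending.append((block, value))
        self.cache[block] = value
        if force:
            self.force_log()

    def force_log(self):
        """Append all buffered log records to the on-disk log."""
        self.log.extend(self.pending)
        self.pending.clear()

    def writeback(self, block):
        """Write-ahead rule: the log reaches disk before the block does."""
        self.force_log()
        self.disk[block] = self.cache.pop(block)

    def crash(self):
        """Lose everything that was only in memory."""
        self.cache.clear()
        self.pending.clear()

    def recover(self):
        """Replay the on-disk log to rebuild the metadata blocks."""
        for block, value in self.log:
            self.disk[block] = value
```

An update made with `force=True` survives a crash; one made with `force=False` is lost if the crash comes before the log buffer is written, which is the laxer semantics.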

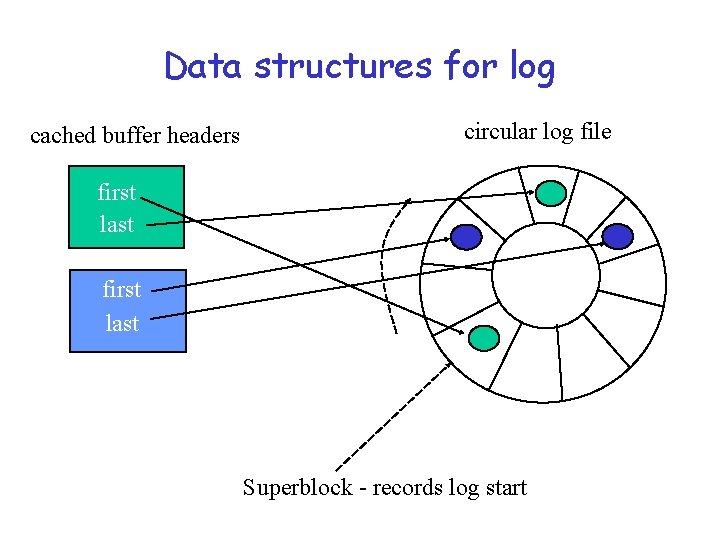

Implementation with log as file • Maintains a circular log in a pre-allocated file in the FFS (about 1% of file system size) • Buffer manager uses a write-ahead logging protocol to ensure proper synchronization between regular file data and the log • Buffer header of each modified block in cache identifies the first and last log entries describing an update to the block

Implementation with log as file • System uses – First item to decide which log entries can be purged from log – Second item to ensure that all relevant log entries are written to disk before the block is flushed from the cache • Maintains its log asynchronously – Maintains file system integrity, but does not guarantee durability of updates

Data structures for log (figure: cached buffer headers hold first and last pointers into the circular log file; the superblock records the log start)
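How the first and last items in a buffer header might be used can be sketched in a few lines. The names `Buffer`, `record_update`, `purge_point`, and `can_flush` are hypothetical, and log entries are identified here by increasing sequence numbers, which is an assumption made for illustration.

```python
class Buffer:
    """Header of a cached block: first and last log entries describing it."""

    def __init__(self):
        self.first = None
        self.last = None


def record_update(buf, seq):
    """Note that log entry `seq` describes an update to this block."""
    if buf.first is None:
        buf.first = seq
    buf.last = seq


def purge_point(dirty_buffers, next_seq):
    """Log entries with a sequence number below the result can be purged."""
    firsts = [b.first for b in dirty_buffers if b.first is not None]
    return min(firsts, default=next_seq)


def can_flush(buf, log_on_disk_up_to):
    """A block may leave the cache only once its last log entry is on disk."""
    return buf.last is None or buf.last <= log_on_disk_up_to
```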

Recovery • Superblock has address of last checkpoint • First recover the log • Then read the log from its logical end (backward pass) and undo all aborted operations • Do a forward pass and reapply all updates that have not yet been written to disk

Other Approaches • Using non-volatile cache (Network Appliances) – Ultimate solution: can keep data in cache forever – Additional cost of NVRAM • Simulating NVRAM with – Uninterruptible power supplies – Hardware-protected RAM (Rio): cache is marked read-only most of the time

Other Approaches • Log-structured file systems – Not always possible to write all related meta-data in a single disk transfer – Sprite-LFS adds small log entries to the beginning of segments – BSD-LFS makes segments temporary until all metadata necessary to ensure the recoverability of the file system is on disk.

Distributed File Systems

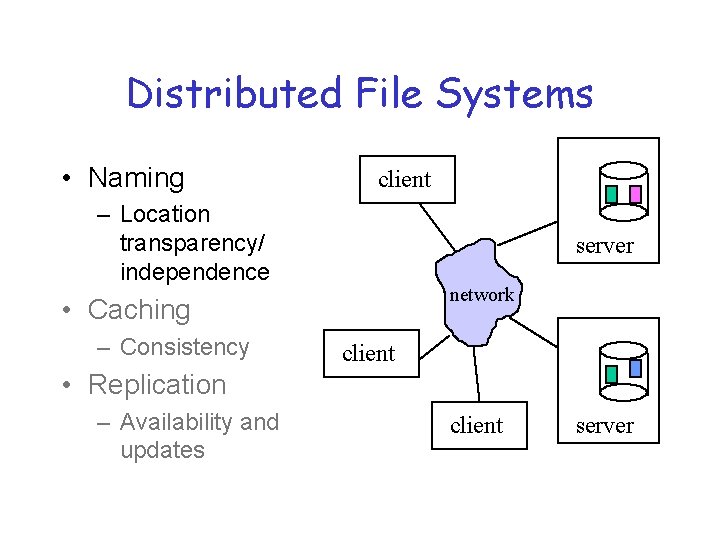

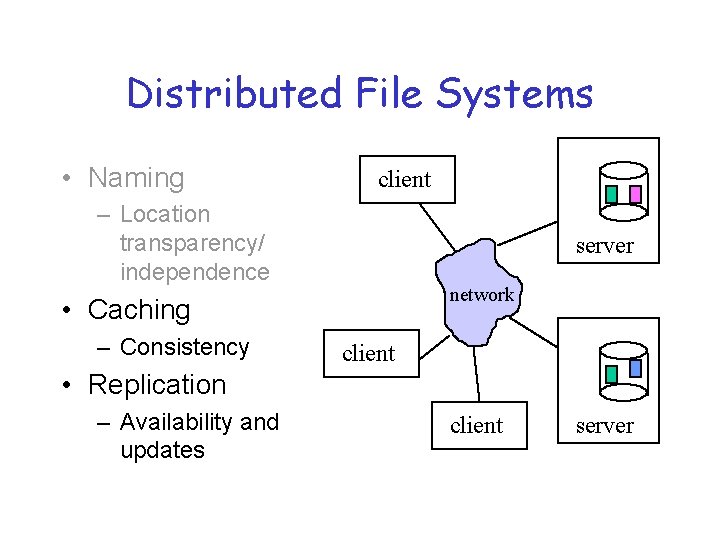

Distributed File Systems • Naming – Location transparency/independence • Caching – Consistency • Replication – Availability and updates

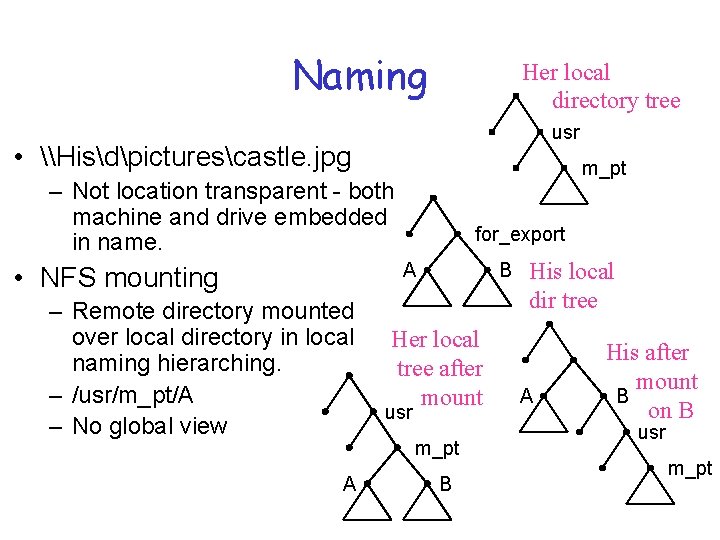

Naming • \\His\d\pictures\castle.jpg – Not location transparent: both machine and drive embedded in name. • NFS mounting – Remote directory mounted over local directory in local naming hierarchy. – /usr/m_pt/A – No global view (figure labels: her local directory tree with usr, m_pt; his local dir tree with for_export, A, B; her local tree after mount; his tree after mount)

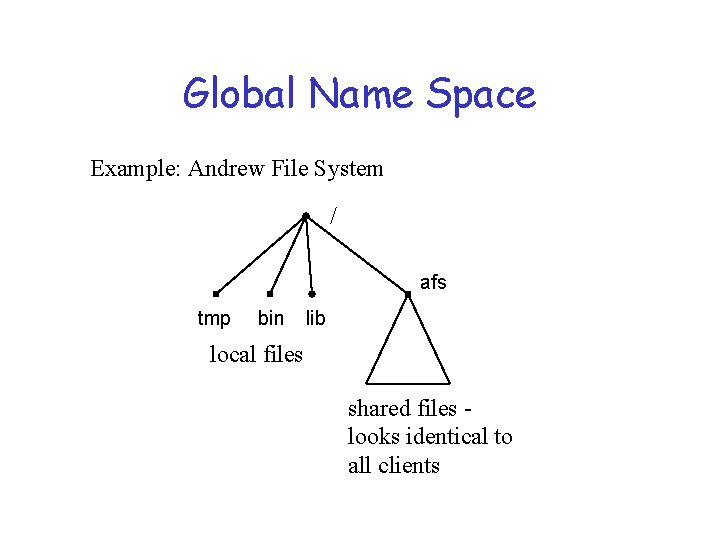

Global Name Space Example: Andrew File System (figure: under /, the afs subtree holds shared files and looks identical to all clients; tmp, bin, and lib are local files)

Hints • A valuable distributed systems design technique that can be illustrated in naming. • Definition: information that is not guaranteed to be correct. If it is, it can improve performance. If not, things will still work OK. Must be able to validate information. • Example: Sprite prefix tables

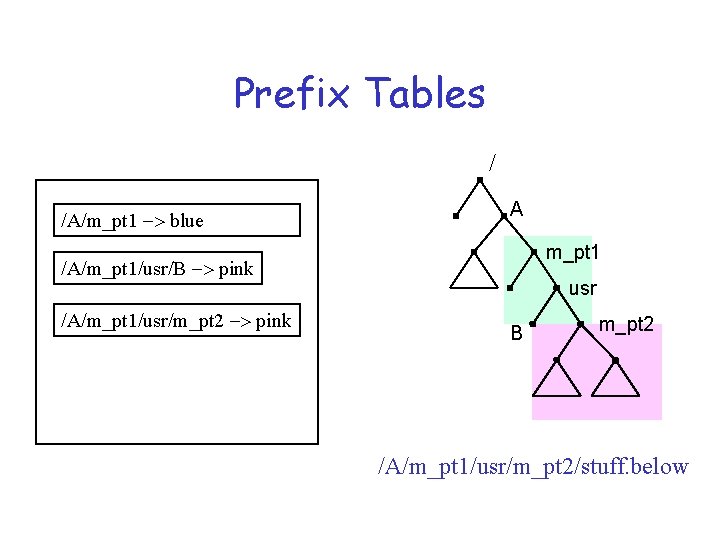

Prefix Tables • /A/m_pt1 -> blue • /A/m_pt1/usr/B -> pink • /A/m_pt1/usr/m_pt2 -> pink • Example path: /A/m_pt1/usr/m_pt2/stuff.below (figure: tree with /, A, m_pt1, usr, B, m_pt2)
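A prefix table used as a hint can be sketched as a longest-prefix match followed by validation. This is a simplified illustration, not the Sprite implementation: the table contents follow the slide, while `longest_prefix`, `resolve`, and the `exports` map (what each server actually serves) are assumptions.

```python
def longest_prefix(prefix_table, path):
    """Return the (prefix, server) entry with the longest prefix matching path."""
    best = None
    for prefix, server in prefix_table.items():
        matches = path == prefix or path.startswith(prefix.rstrip("/") + "/")
        if matches and (best is None or len(prefix) > len(best[0])):
            best = (prefix, server)
    return best


def resolve(prefix_table, exports, path):
    """Treat table entries as hints: validate with the server, drop stale ones."""
    table = dict(prefix_table)
    while True:
        hit = longest_prefix(table, path)
        if hit is None:
            return None  # a real client would fall back to another lookup method
        prefix, server = hit
        if prefix in exports.get(server, set()):
            return server  # hint confirmed by the server
        del table[prefix]  # hint was wrong; try the next-longest prefix


table = {
    "/A/m_pt1": "blue",
    "/A/m_pt1/usr/B": "pink",
    "/A/m_pt1/usr/m_pt2": "pink",
}
```

A wrong hint costs an extra round of validation but never returns an incorrect server, which is the defining property of a hint.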

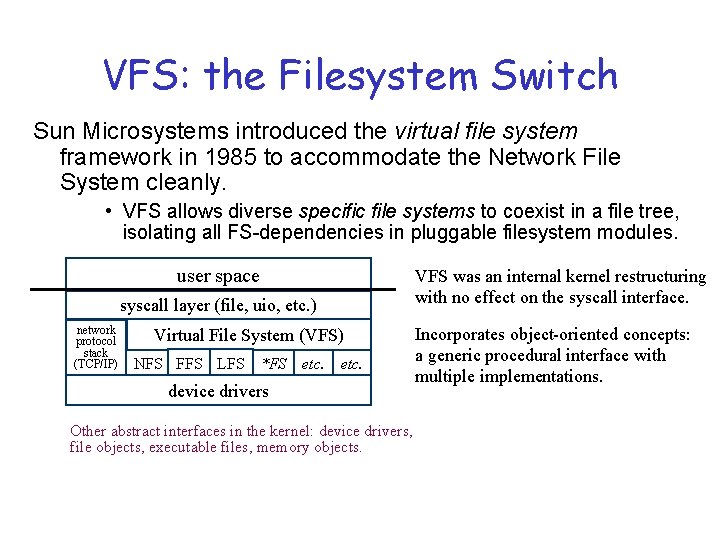

VFS: the Filesystem Switch Sun Microsystems introduced the virtual file system framework in 1985 to accommodate the Network File System cleanly. • VFS allows diverse specific file systems to coexist in a file tree, isolating all FS-dependencies in pluggable filesystem modules. • VFS was an internal kernel restructuring with no effect on the syscall interface. • Incorporates object-oriented concepts: a generic procedural interface with multiple implementations. • Other abstract interfaces in the kernel: device drivers, file objects, executable files, memory objects. (figure: user space above the syscall layer (file, uio, etc.); below it the Virtual File System (VFS) over NFS, FFS, LFS, *FS, etc.; network protocol stack (TCP/IP) and device drivers at the bottom)

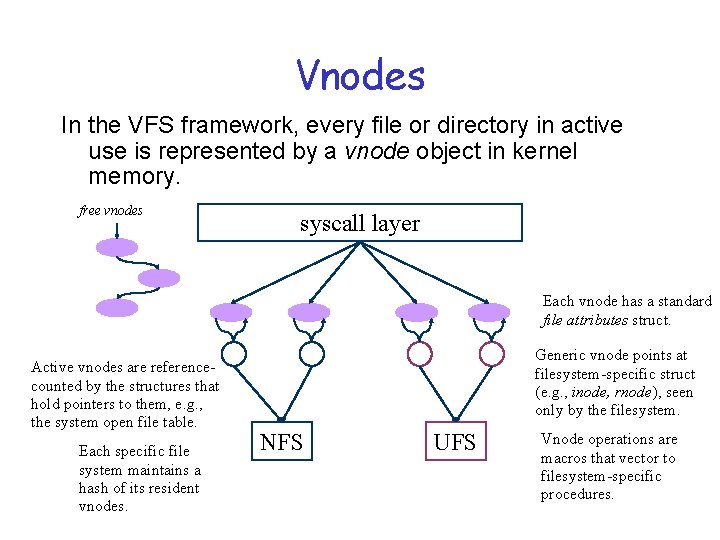

Vnodes In the VFS framework, every file or directory in active use is represented by a vnode object in kernel memory. • Each vnode has a standard file attributes struct. • Active vnodes are reference-counted by the structures that hold pointers to them, e.g., the system open file table. • Each specific file system maintains a hash of its resident vnodes. • Generic vnode points at filesystem-specific struct (e.g., inode, rnode), seen only by the filesystem. • Vnode operations are macros that vector to filesystem-specific procedures. (figure labels: syscall layer, free vnodes, NFS, UFS)

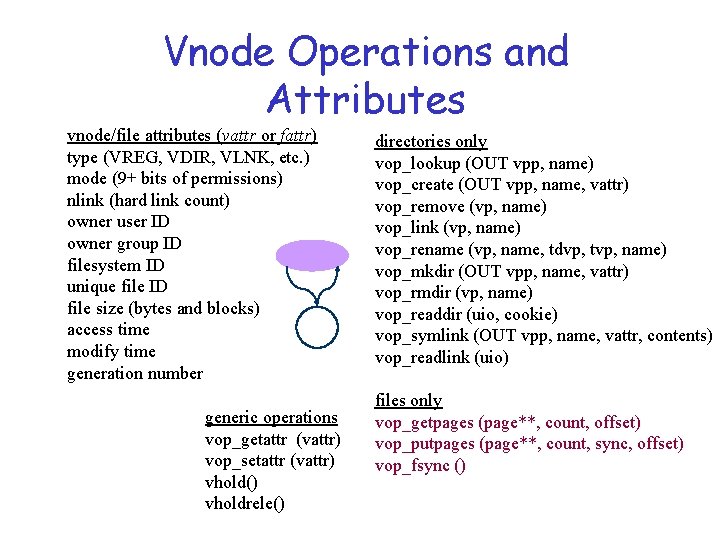

Vnode Operations and Attributes
vnode/file attributes (vattr or fattr): type (VREG, VDIR, VLNK, etc.); mode (9+ bits of permissions); nlink (hard link count); owner user ID; owner group ID; filesystem ID; unique file ID; file size (bytes and blocks); access time; modify time; generation number
generic operations: vop_getattr (vattr); vop_setattr (vattr); vhold(); vholdrele()
directories only: vop_lookup (OUT vpp, name); vop_create (OUT vpp, name, vattr); vop_remove (vp, name); vop_link (vp, name); vop_rename (vp, name, tdvp, tvp, name); vop_mkdir (OUT vpp, name, vattr); vop_rmdir (vp, name); vop_readdir (uio, cookie); vop_symlink (OUT vpp, name, vattr, contents); vop_readlink (uio)
files only: vop_getpages (page**, count, offset); vop_putpages (page**, count, sync, offset); vop_fsync ()

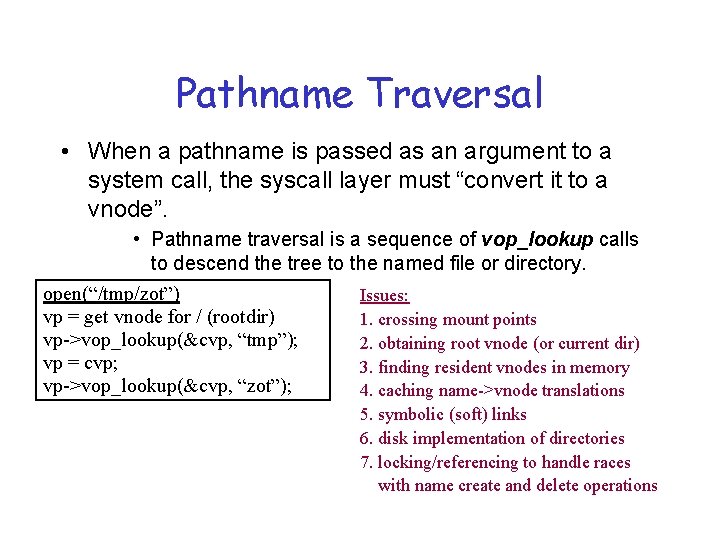

Pathname Traversal • When a pathname is passed as an argument to a system call, the syscall layer must “convert it to a vnode”. • Pathname traversal is a sequence of vop_lookup calls to descend the tree to the named file or directory.
Example: open(“/tmp/zot”)
vp = get vnode for / (rootdir)
vp->vop_lookup(&cvp, “tmp”);
vp = cvp;
vp->vop_lookup(&cvp, “zot”);
Issues: 1. crossing mount points 2. obtaining root vnode (or current dir) 3. finding resident vnodes in memory 4. caching name->vnode translations 5. symbolic (soft) links 6. disk implementation of directories 7. locking/referencing to handle races with name create and delete operations
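The traversal loop, including issue 1 (crossing mount points), can be sketched with a toy in-memory vnode. This is not kernel code: `Vnode`, its `children` and `mounted` fields, and `namei` are illustrative stand-ins, and the other listed issues (name caching, symbolic links, locking) are left out.

```python
class Vnode:
    """Toy vnode: a name, child entries, and an optional mounted filesystem root."""

    def __init__(self, name):
        self.name = name
        self.children = {}
        self.mounted = None  # root vnode of a filesystem mounted on this vnode

    def vop_lookup(self, component):
        """Look up one pathname component in this directory."""
        child = self.children.get(component)
        if child is None:
            raise FileNotFoundError(component)
        return child


def namei(root, path):
    """Convert a pathname to a vnode with one vop_lookup call per component."""
    vp = root
    for component in path.split("/"):
        if not component:
            continue  # skip the empty strings produced by leading or doubled slashes
        cvp = vp.vop_lookup(component)
        while cvp.mounted is not None:
            cvp = cvp.mounted  # cross the mount point into the mounted tree
        vp = cvp
    return vp


root, tmp, zot = Vnode("/"), Vnode("tmp"), Vnode("zot")
root.children["tmp"] = tmp
tmp.children["zot"] = zot
found = namei(root, "/tmp/zot")
```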

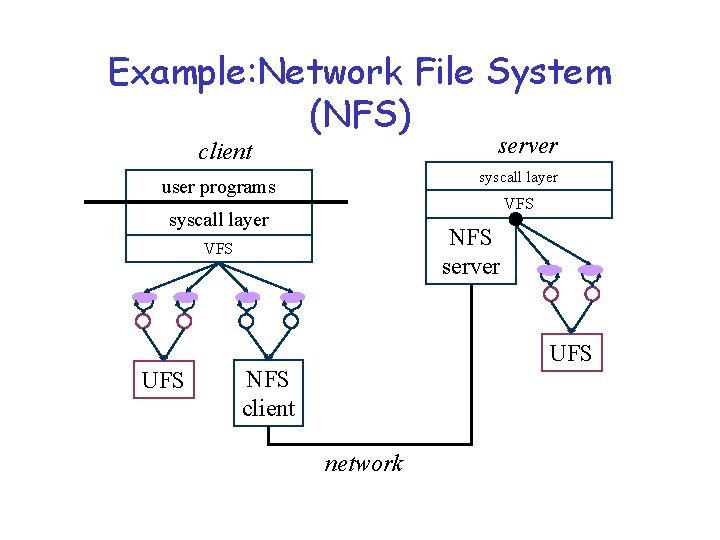

Example: Network File System (NFS) (figure labels: on the client, user programs, syscall layer, VFS, NFS client; on the server, syscall layer, VFS, NFS server, UFS; the network connects client and server)

NFS Protocol NFS is a network protocol layered above TCP/IP. – Original implementations (and most today) use UDP datagram transport for low overhead. • Maximum IP datagram size was increased to match FS block size, to allow send/receive of entire file blocks. • Some newer implementations use TCP as a transport. NFS protocol is a set of message formats and types. • Client issues a request message for a service operation. • Server performs requested operation and returns a reply message with status and (perhaps) requested data.

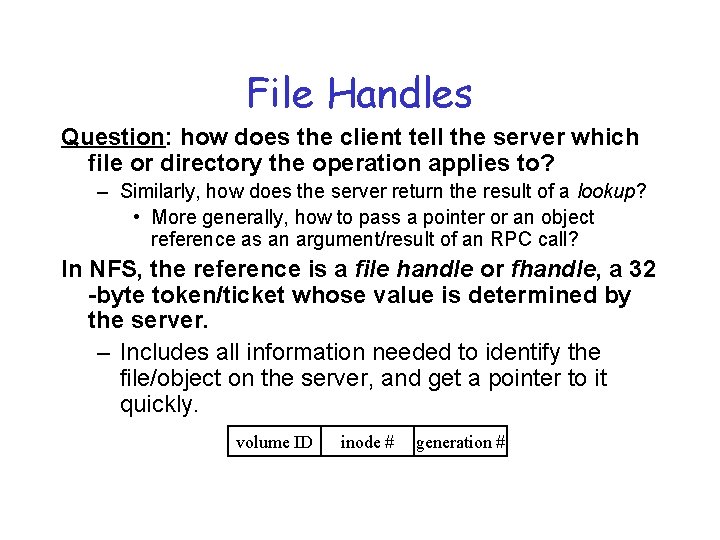

File Handles Question: how does the client tell the server which file or directory the operation applies to? – Similarly, how does the server return the result of a lookup? • More generally, how to pass a pointer or an object reference as an argument/result of an RPC call? In NFS, the reference is a file handle or fhandle, a 32-byte token/ticket whose value is determined by the server. – Includes all information needed to identify the file/object on the server, and get a pointer to it quickly. (figure: handle fields volume ID, inode #, generation #)
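One way a server might pack those three fields into a 32-byte opaque token is sketched below. The byte layout (`!IQI16x`), the `StaleHandle` exception, and the `inode_table` map are assumptions for illustration; a real server chooses its own layout, and the client never interprets the bytes.

```python
import struct

# Hypothetical layout: 4-byte volume ID, 8-byte inode number,
# 4-byte generation number, 16 pad bytes, for 32 bytes in total.
FHANDLE = struct.Struct("!IQI16x")


class StaleHandle(Exception):
    """The handle names an inode that has since been freed or reused."""


def make_fhandle(volume_id, inode_no, generation):
    """Server side: build the opaque 32-byte token returned by a lookup."""
    return FHANDLE.pack(volume_id, inode_no, generation)


def resolve_fhandle(fhandle, inode_table):
    """Server side: map a handle back to (volume, inode), rejecting stale ones.

    inode_table maps (volume_id, inode_no) to the inode's current generation.
    """
    volume_id, inode_no, generation = FHANDLE.unpack(fhandle)
    if inode_table.get((volume_id, inode_no)) != generation:
        raise StaleHandle((volume_id, inode_no))
    return volume_id, inode_no


fh = make_fhandle(7, 42, 3)
```

The generation number is what lets the server detect a handle that refers to a deleted file whose inode number has been reused.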

NFS: From Concept to Implementation Now that we understand the basics, how do we make it work in a real system? – How do we make it fast? • Answer: caching, read-ahead, and write-behind. – How do we make it reliable? What if a message is dropped? What if the server crashes? • Answer: client retransmits request until it receives a response. – How do we preserve file system semantics in the presence of failures and/or sharing by multiple clients? • Answer: well, we don’t, at least not completely. – What about security and access control?

Distributed File Systems • Naming – Location transparency/independence • Caching – Consistency • Replication – Availability and updates

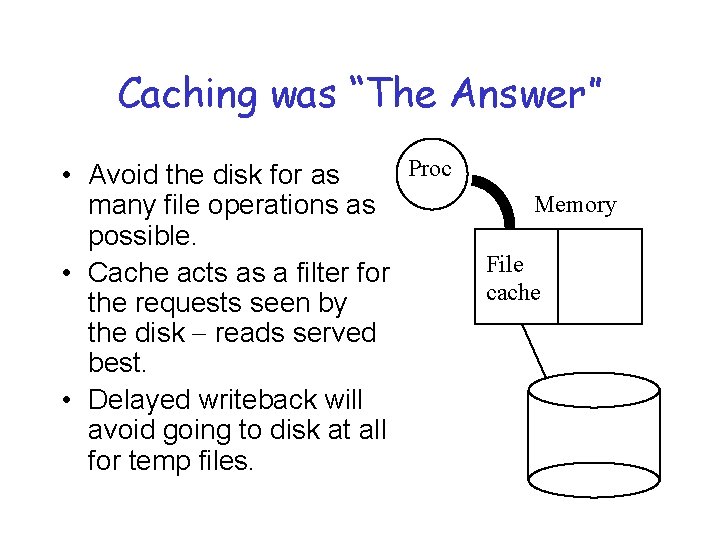

Caching was “The Answer” • Avoid the disk for as many file operations as possible. • Cache acts as a filter for the requests seen by the disk - reads served best. • Delayed writeback will avoid going to disk at all for temp files. (figure labels: Proc, Memory, File cache)

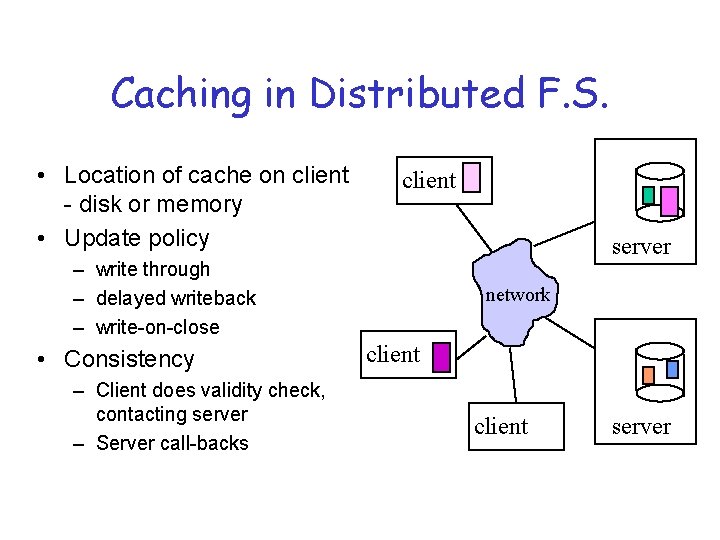

Caching in Distributed F.S. • Location of cache on client - disk or memory • Update policy – write through – delayed writeback – write-on-close • Consistency – Client does validity check, contacting server – Server call-backs

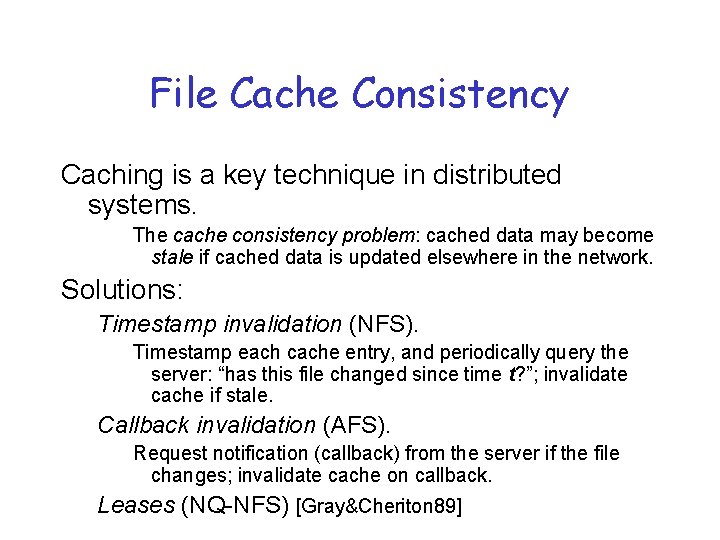

File Cache Consistency Caching is a key technique in distributed systems. The cache consistency problem: cached data may become stale if cached data is updated elsewhere in the network. Solutions: • Timestamp invalidation (NFS). Timestamp each cache entry, and periodically query the server: “has this file changed since time t?”; invalidate cache if stale. • Callback invalidation (AFS). Request notification (callback) from the server if the file changes; invalidate cache on callback. • Leases (NQ-NFS) [Gray&Cheriton 89]

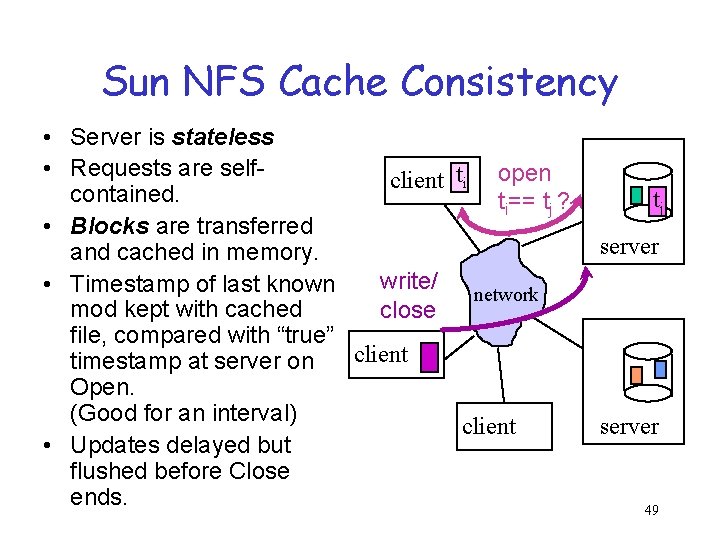

Sun NFS Cache Consistency • Server is stateless • Requests are self-contained. • Blocks are transferred and cached in memory. • Timestamp of last known mod kept with cached file, compared with “true” timestamp at server on Open. (Good for an interval) • Updates delayed but flushed before Close ends. (figure: client holds timestamp ti and checks ti == tj ? on open against the server's tj; write/close goes over the network)
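The open-time timestamp check, including the interval during which a check is considered still good, can be sketched as below. This is a toy model rather than the NFS client: `Server`, `Client`, the `fresh_for` interval, and the explicit `now` argument (used instead of a real clock) are assumptions.

```python
class Server:
    """Stateless toy server: each file is a (mtime, data) pair."""

    def __init__(self):
        self.files = {}

    def getattr(self, name):
        return self.files[name][0]  # the "true" modification timestamp

    def read(self, name):
        return self.files[name]

    def write(self, name, data, now):
        self.files[name] = (now, data)


class Client:
    """Caches file data with the last known mtime and the time of the last check."""

    def __init__(self, server, fresh_for=3.0):
        self.server = server
        self.fresh_for = fresh_for
        self.cache = {}  # name -> (mtime, data, validated_at)

    def open(self, name, now):
        entry = self.cache.get(name)
        if entry is not None:
            mtime, data, validated_at = entry
            if now - validated_at < self.fresh_for:
                return data  # last check is still good for this interval
            if self.server.getattr(name) == mtime:
                self.cache[name] = (mtime, data, now)  # ti == tj: revalidated
                return data
        mtime, data = self.server.read(name)  # miss or stale: refetch
        self.cache[name] = (mtime, data, now)
        return data
```

The freshness interval trades consistency for fewer server round trips: within it, a client can read stale data without noticing.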

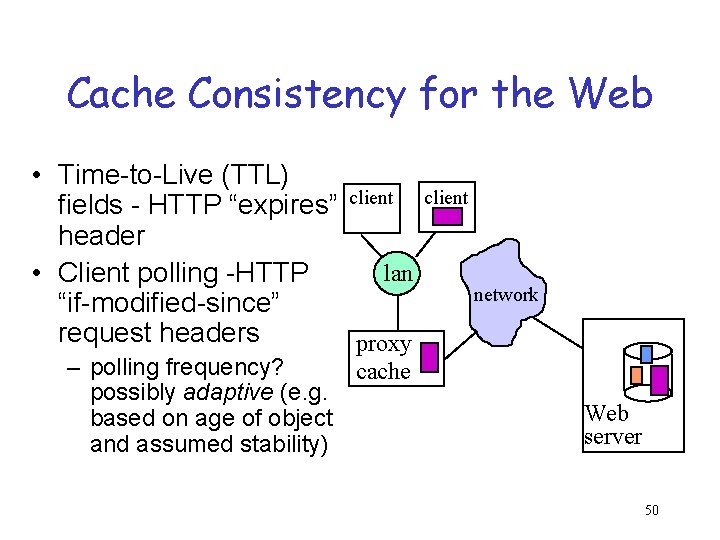

Cache Consistency for the Web • Time-to-Live (TTL) fields - HTTP “expires” header • Client polling - HTTP “if-modified-since” request headers – polling frequency? possibly adaptive (e.g. based on age of object and assumed stability) (figure labels: clients on a LAN, proxy cache, network, Web server)

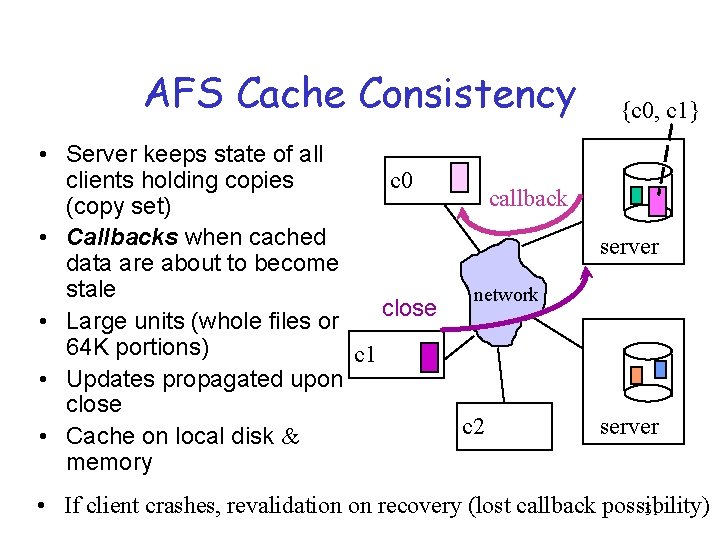

AFS Cache Consistency • Server keeps state of all clients holding copies (copy set) • Callbacks when cached data are about to become stale • Large units (whole files or 64K portions) • Updates propagated upon close • Cache on local disk & memory • If client crashes, revalidation on recovery (lost callback possibility) (figure: clients c0, c1, c2; server with copy set {c0, c1}; callback and close messages cross the network)

NQ-NFS Leases In NQ-NFS, a client obtains a lease on the file that permits the client’s desired read/write activity. “A lease is a ticket permitting an activity; the lease is valid until some expiration time.” – A read-caching lease allows the client to cache clean data. Guarantee: no other client is modifying the file. – A write-caching lease allows the client to buffer modified data for the file. Guarantee: no other client has the file cached. Leases may be revoked by the server if another client requests a conflicting operation (server sends eviction notice). Since leases expire, losing “state” of leases at server is OK.
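The read/write lease rules can be sketched for a single file as follows. This is a simplified model, not NQ-NFS itself: `LeaseServer`, the 30-unit `term`, and the explicit `now` argument are assumptions, and a conflicting request is simply refused here where a real server would send eviction notices.

```python
class LeaseServer:
    """Toy lease table for one file: many readers or one writer, all expiring."""

    def __init__(self, term=30):
        self.term = term
        self.leases = {}  # client -> (mode, expiry)

    def _live(self, now):
        """Drop expired leases and return the table of live ones."""
        self.leases = {c: (m, e) for c, (m, e) in self.leases.items() if e > now}
        return self.leases

    def request(self, client, mode, now):
        """Grant a 'read' or 'write' lease unless another client's lease conflicts."""
        live = self._live(now)
        others = [m for c, (m, _expiry) in live.items() if c != client]
        other_writer = any(m == "write" for m in others)
        conflict = other_writer or (mode == "write" and len(others) > 0)
        if conflict:
            return False
        live[client] = (mode, now + self.term)
        return True
```

Because every lease carries an expiry, a server that loses this table can simply wait one lease term before granting new leases.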

Coda – Using Caching to Handle Disconnected Access • Single location-transparent UNIX FS. • Scalability - coarse granularity (whole-file caching, volume management) • First class (server) replication and client caching (second class replication) • Optimistic replication & consistency maintenance. • Designed for disconnected operation for mobile computing clients

Explicit First-class Replication • File name maps to set of replicas, one of which will be used to satisfy request – Goal: availability • Update strategy – Atomic updates - all or none – Primary copy approach – Voting schemes – Optimistic, then detection of conflicts

Optimistic vs. Pessimistic • Optimistic – High availability – Conflicting updates are the potential problem, requiring detection and resolution. • Pessimistic – Avoids conflicts by holding shared or exclusive locks. – How to arrange when disconnection is involuntary? – Leases [Gray, SOSP 89] put a time bound on locks, but what about expiration?

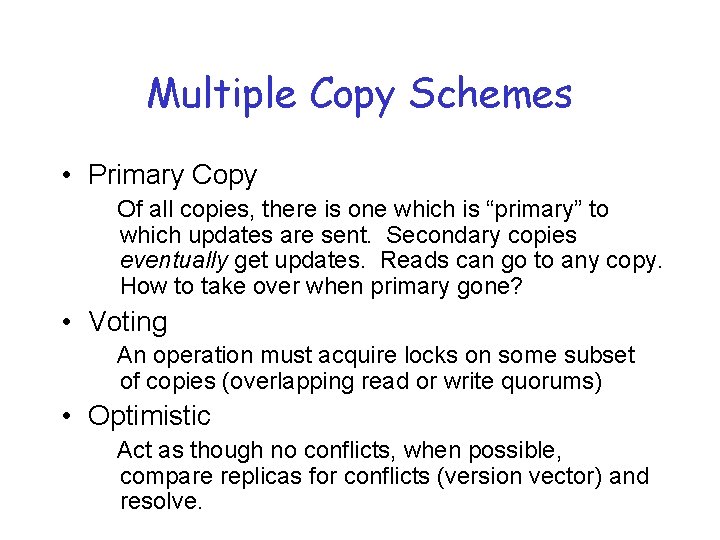

Multiple Copy Schemes • Primary Copy – Of all copies, there is one which is “primary” to which updates are sent. Secondary copies eventually get updates. Reads can go to any copy. How to take over when primary gone? • Voting – An operation must acquire locks on some subset of copies (overlapping read or write quorums) • Optimistic – Act as though there are no conflicts; when possible, compare replicas for conflicts (version vector) and resolve.
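The version-vector comparison used by the optimistic scheme can be sketched as below. A version vector is modelled as a dict from replica name to update count, and the function name `compare` and its string results are choices made for this illustration.

```python
def compare(a, b):
    """Compare two version vectors (dicts mapping replica name to update count).

    Returns 'equal', 'a newer', 'b newer', or 'conflict'.
    """
    replicas = set(a) | set(b)
    a_ahead = any(a.get(r, 0) > b.get(r, 0) for r in replicas)
    b_ahead = any(b.get(r, 0) > a.get(r, 0) for r in replicas)
    if a_ahead and b_ahead:
        return "conflict"  # each copy saw an update the other missed
    if a_ahead:
        return "a newer"
    if b_ahead:
        return "b newer"
    return "equal"
```

Only the "conflict" case needs resolution; in the other cases the newer copy can simply replace the older one.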

“Committing” a Transaction Begin Transaction lots of reads and writes Commit or Abort Transaction

“Committing” a Transaction Begin Transaction Withdraw $1000 from savings account Deposit $1000 to checking account Commit or Abort Transaction

Atomic Transactions ACID property - data is recoverable. • Atomicity - a transaction must be all-or-nothing. • Consistency - a transaction takes system from one consistent state to another • Isolation - No intermediate effects are visible to others - serializability • Durability - the effects of a committed transaction are permanent

Implementation Mechanisms • Stable storage • Shadow blocks • Logging

Stable Storage We need to be able to trust something not to be corrupted or destroyed • Mirrored disks. Always write disk 1, verify, then write disk 2. – If crash, compare disks, disk 1 “wins” – If bad checksum, use other disk block. • Battery backed up RAM

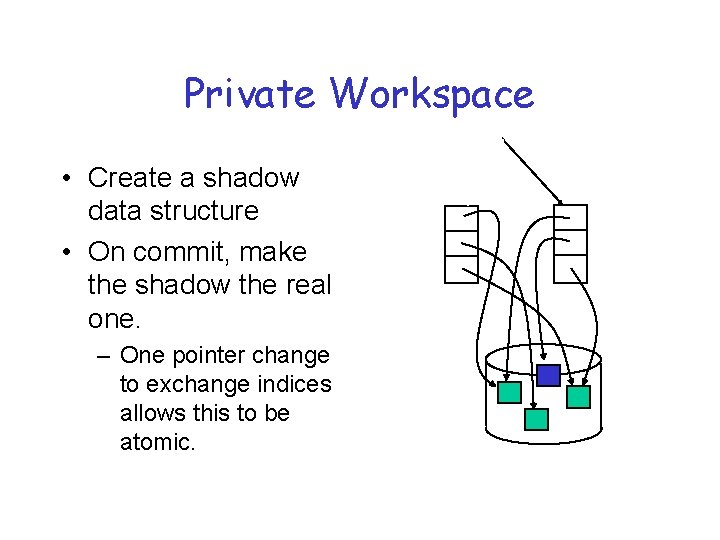

Private Workspace • Create a shadow data structure • On commit, make the shadow the real one. – One pointer change to exchange indices allows this to be atomic.

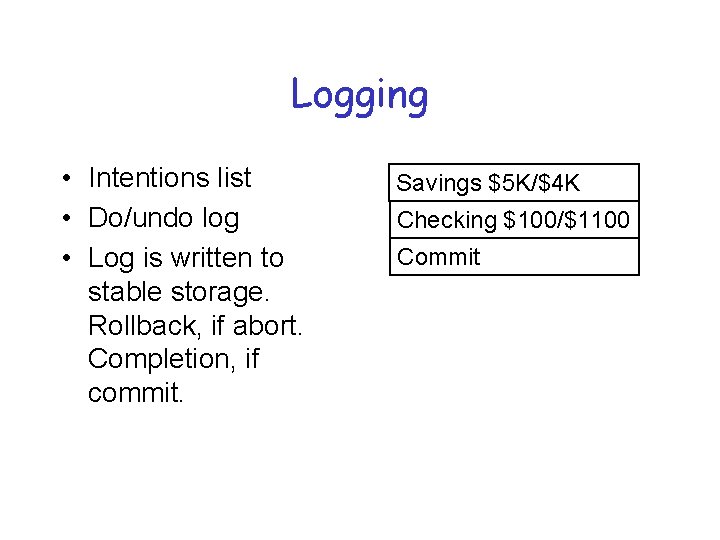

Logging • Intentions list • Do/undo log • Log is written to stable storage. Rollback, if abort. Completion, if commit. (figure: log entries Savings $5K/$4K, Checking $100/$1100, Commit)
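The do/undo log for the savings-to-checking transfer can be sketched as follows. This is a toy in-memory version: `run_transaction` is a hypothetical name, the log is a plain list rather than stable storage, and each record stores the old and new value so that an abort can roll back.

```python
def run_transaction(accounts, updates, commit):
    """Apply updates with a do/undo log; roll back on abort.

    updates is a list of (account, new_balance). Each log record holds
    (account, old_balance, new_balance) and is appended before the update.
    """
    log = []
    for name, new_balance in updates:
        log.append((name, accounts[name], new_balance))  # log first
        accounts[name] = new_balance                     # then do
    if commit:
        log.append("commit")
    else:
        for name, old_balance, _new in reversed(log):
            accounts[name] = old_balance                 # undo in reverse order
        log.append("abort")
    return log


accounts = {"savings": 5000, "checking": 100}
log = run_transaction(accounts, [("savings", 4000), ("checking", 1100)], commit=True)
```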

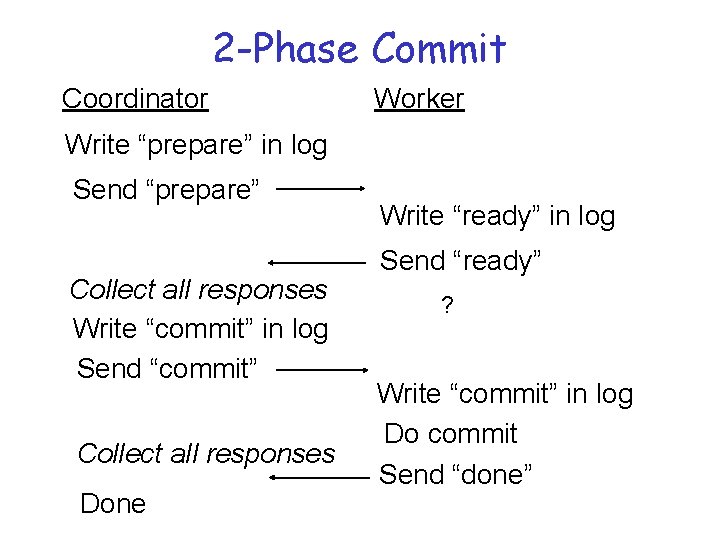

2-Phase Commit
Coordinator: 1. Write “prepare” in log 2. Send “prepare” 3. Collect all responses 4. Write “commit” in log 5. Send “commit” 6. Collect all responses 7. Done
Worker: 1. Write “ready” in log 2. Send “ready” 3. ? (waits for the coordinator's decision) 4. Write “commit” in log 5. Do commit 6. Send “done”
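The message exchange can be sketched with direct method calls standing in for messages. This is a failure-free illustration only: `Worker`, `two_phase_commit`, and the `can_commit` flag are hypothetical, and the abort path (a worker that cannot commit) is the standard 2PC behaviour added here as an assumption, since the slide shows only the commit path.

```python
class Worker:
    """Toy participant that logs its vote and the coordinator's decision."""

    def __init__(self, name, can_commit=True):
        self.name = name
        self.can_commit = can_commit
        self.log = []

    def on_prepare(self):
        vote = "ready" if self.can_commit else "abort"
        self.log.append(vote)  # write the vote in the log before sending it
        return vote

    def on_decision(self, decision):
        self.log.append(decision)  # write the decision in the log, then act on it
        return "done"


def two_phase_commit(workers):
    """Run both phases and return (decision, coordinator_log)."""
    log = ["prepare"]
    votes = [w.on_prepare() for w in workers]          # phase 1: collect responses
    decision = "commit" if all(v == "ready" for v in votes) else "abort"
    log.append(decision)                               # decision is logged before sending
    acks = [w.on_decision(decision) for w in workers]  # phase 2: send the decision
    if all(a == "done" for a in acks):
        log.append("done")
    return decision, log
```

A real implementation also has to handle timeouts and recovery from the logs after a crash, which is why each step is logged before the corresponding message is sent.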

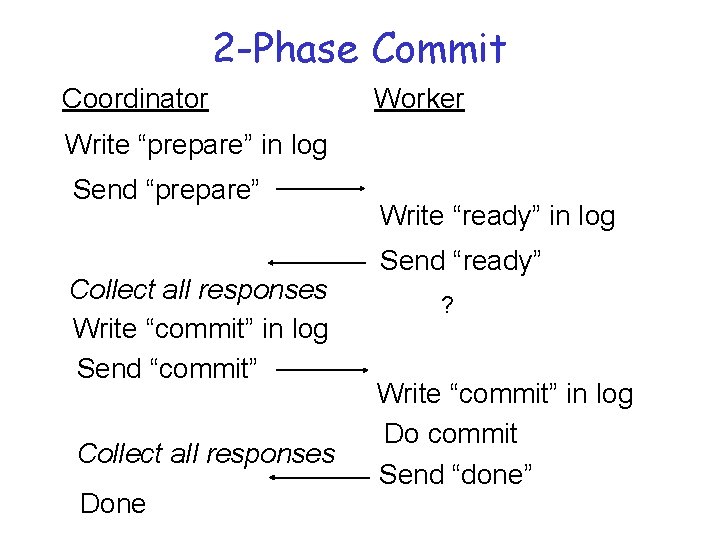


Coda – Using Caching to Handle Disconnected Access • Single location-transparent UNIX FS. • Scalability - coarse granularity (whole-file caching, volume management) • First class (server) replication and client caching (second class replication) • Optimistic replication & consistency maintenance. • Designed for disconnected operation for mobile computing clients

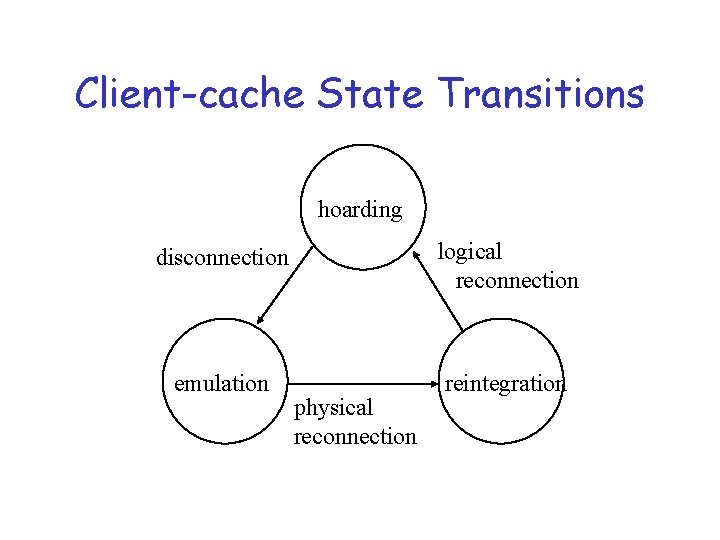

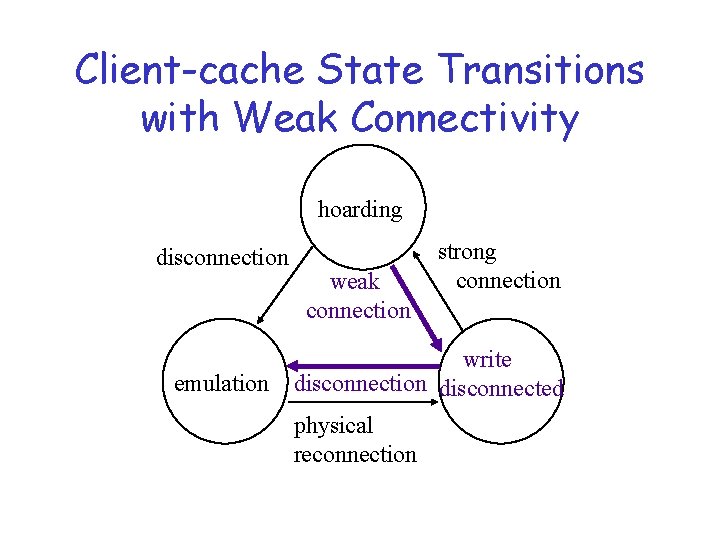

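Client-cache State Transitions (figure: states hoarding, emulation, reintegration; disconnection moves hoarding to emulation, physical reconnection moves emulation to reintegration, logical reconnection moves reintegration to hoarding)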

Prefetching • To avoid the access latency of moving the data in for that first cache miss. • Prediction! “Guessing” what data will be needed in the future. – It’s not free: consequences of guessing wrong, and overhead

Hoarding - Prefetching for Disconnected Information Access • Caching for availability (not just latency) • Cache misses, when operating disconnected, have no redeeming value. (Unlike in connected mode, they can’t be used as the triggering mechanism for filling the cache. ) • How to preload the cache for subsequent disconnection? Planned or unplanned. • What does it mean for replacement?

Hoard Database • Per-workstation, per-user set of pathnames with priority • User can explicitly tailor HDB using scripts called hoard profiles • Delimited observations of reference behavior (snapshot spying with bookends)

Coda Hoarding State • Balancing act - caching for 2 purposes at once: – performance of current accesses, – availability of future disconnected access. • Prioritized algorithm Priority of object for retention in cache is f(hoard priority, recent usage). • Hoard walking (periodically or on request) maintains equilibrium - no uncached object has higher priority than any of cached objects
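The equilibrium condition can be sketched with a made-up priority function. The slide only says that priority is f(hoard priority, recent usage); the weighted sum in `priority`, the `alpha` weight, and the names `hoard_walk` and `in_equilibrium` are assumptions for illustration, and cache capacity is counted in objects rather than bytes.

```python
def priority(hoard_priority, recent_usage, alpha=0.75):
    """Hypothetical f(hoard priority, recent usage): a simple weighted sum."""
    return alpha * hoard_priority + (1 - alpha) * recent_usage


def hoard_walk(objects, capacity, alpha=0.75):
    """Return the set of names to keep cached: the `capacity` highest priorities.

    objects maps name -> (hoard_priority, recent_usage).
    """
    ranked = sorted(objects, key=lambda n: priority(*objects[n], alpha), reverse=True)
    return set(ranked[:capacity])


def in_equilibrium(objects, cached, alpha=0.75):
    """True if no uncached object has higher priority than any cached object."""
    scores = {n: priority(*objects[n], alpha) for n in objects}
    inside = [scores[n] for n in cached]
    outside = [s for n, s in scores.items() if n not in cached]
    return not inside or not outside or max(outside) <= min(inside)


objects = {"a": (100, 0), "b": (0, 100), "c": (50, 50), "d": (10, 10)}
cached = hoard_walk(objects, capacity=2)
```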

The Hoard Walk • Hoard walk - phase 1 - reevaluate name bindings (e.g., any new children created by other clients?) • Hoard walk - phase 2 - recalculate priorities in cache and in HDB, evict and fetch to restore equilibrium

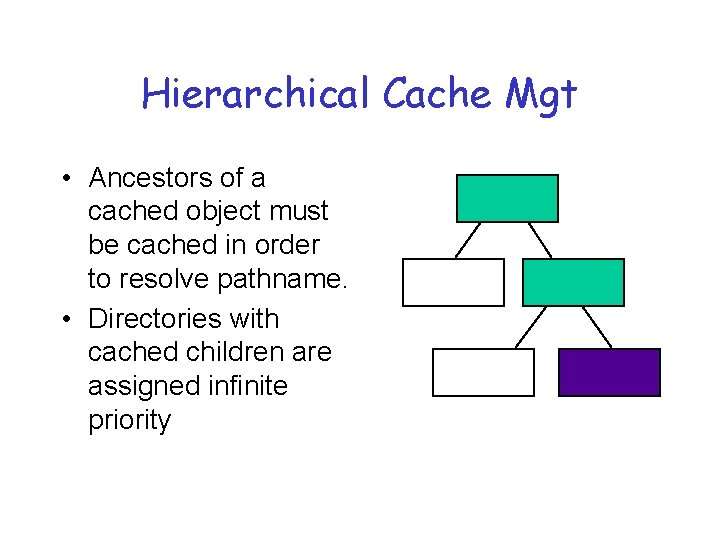

Hierarchical Cache Mgt • Ancestors of a cached object must be cached in order to resolve pathname. • Directories with cached children are assigned infinite priority

Callbacks During Hoarding • Traditional callbacks - invalidate object and refetch on demand • With threat of disconnection – Purge files and refetch on demand or hoard walk – Directories - mark as stale and fix on reference or hoard walk, available until then just in case.

Emulation State • Pseudo-server, subject to validation upon reconnection • Cache management by priority – modified objects assigned infinite priority – freeing up disk space - compression, replacement to floppy, backout updates • Replay log also occupies non-volatile storage (RVM - recoverable virtual memory)

Client-cache State Transitions with Weak Connectivity (figure: states hoarding, emulation, write disconnected; transition labels disconnection, weak connection, strong connection, physical reconnection)

Cache Misses with Weak Connectivity • At least now it’s possible to service misses but $$$ and it’s a foreground activity (noticeable impact). Maybe not • User patience threshold - estimated service time compared with what is acceptable • Defer misses by adding to HDB and letting hoard walk deal with it • User interaction during hoard walk.

File System Energy Management

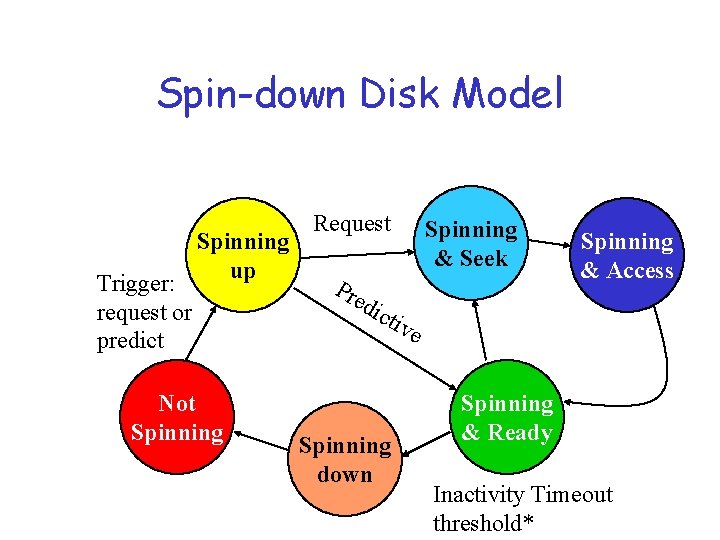

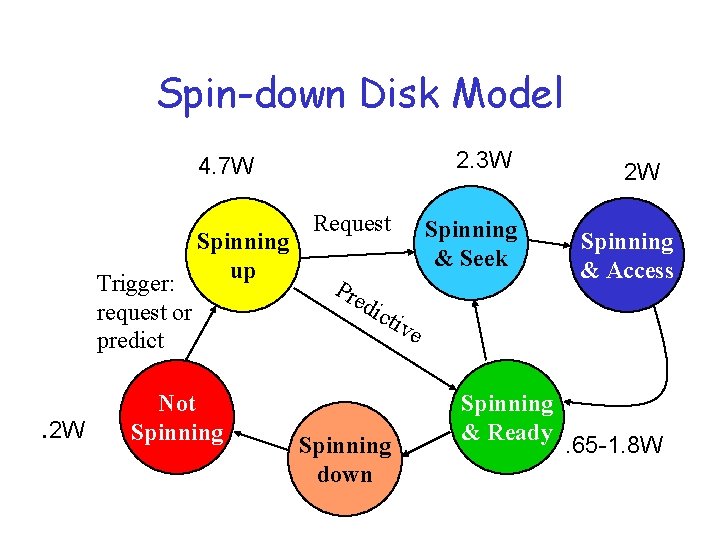

Spin-down Disk Model (figure: state diagram with states Not Spinning, Spinning up, Spinning & Ready, Spinning & Seek, Spinning & Access, Spinning down; spin-up trigger: request or predictive; spin-down trigger: inactivity timeout threshold*)

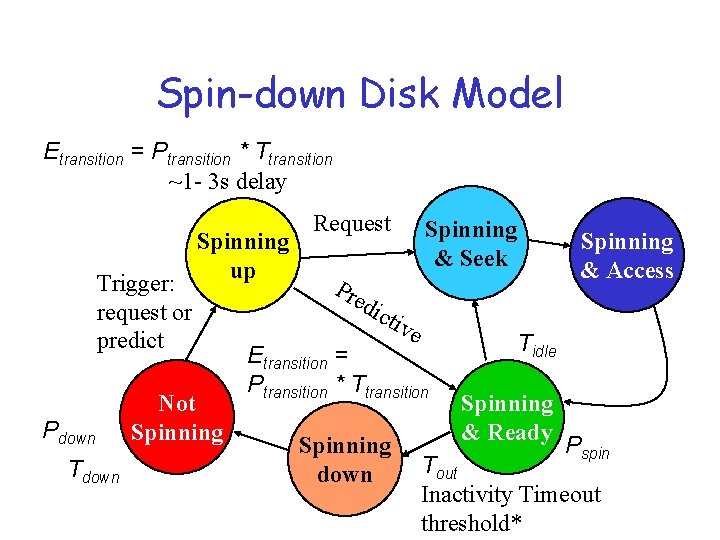

Spin-down Disk Model (figure: the same state diagram annotated with Etransition = Ptransition * Ttransition on both the spin-up and spin-down transitions, a ~1-3 s spin-up delay, Pdown and Tdown for Not Spinning, Pspin for the spinning states, and Tidle and Tout leading up to the inactivity timeout threshold*)

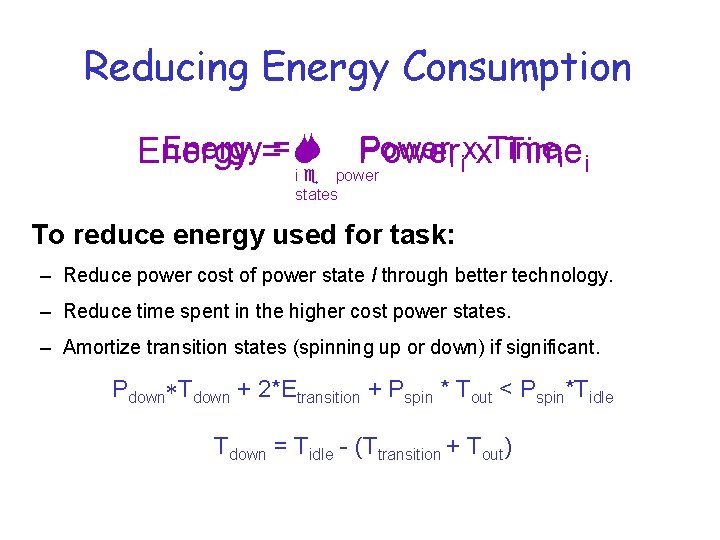

Reducing Energy Consumption $Energy = \sum_{i \in \text{power states}} Power_i \times Time_i$ To reduce energy used for task: – Reduce power cost of power state $i$ through better technology. – Reduce time spent in the higher cost power states. – Amortize transition states (spinning up or down) if significant. Spinning down pays off when $P_{down} T_{down} + 2 E_{transition} + P_{spin} T_{out} < P_{spin} T_{idle}$, where $T_{down} = T_{idle} - (T_{transition} + T_{out})$
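The spin-down inequality can be evaluated directly. In this sketch the function name `spin_down_saves` is hypothetical, and the example values in the usage line are illustrative only: 1.8 W spinning, 0.25 W spun down, and a transition assumed to cost 4.7 W for 2 s, i.e. 9.4 J.

```python
def spin_down_saves(p_spin, p_down, e_transition, t_transition, t_out, t_idle):
    """Check P_down*T_down + 2*E_transition + P_spin*T_out < P_spin*T_idle.

    T_down = T_idle - (T_transition + T_out), as in the formula above.
    Powers are in watts, times in seconds, energies in joules.
    """
    t_down = t_idle - (t_transition + t_out)
    if t_down <= 0:
        return False  # the idle period ends before the disk would be spun down
    with_spin_down = p_down * t_down + 2 * e_transition + p_spin * t_out
    always_spinning = p_spin * t_idle
    return with_spin_down < always_spinning


long_idle = spin_down_saves(1.8, 0.25, 9.4, 2.0, t_out=10.0, t_idle=60.0)
short_idle = spin_down_saves(1.8, 0.25, 9.4, 2.0, t_out=10.0, t_idle=15.0)
```

With these numbers the 60 s idle period costs about 48.8 J with a spin-down against 108 J without, while the 15 s period costs more with a spin-down than without.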

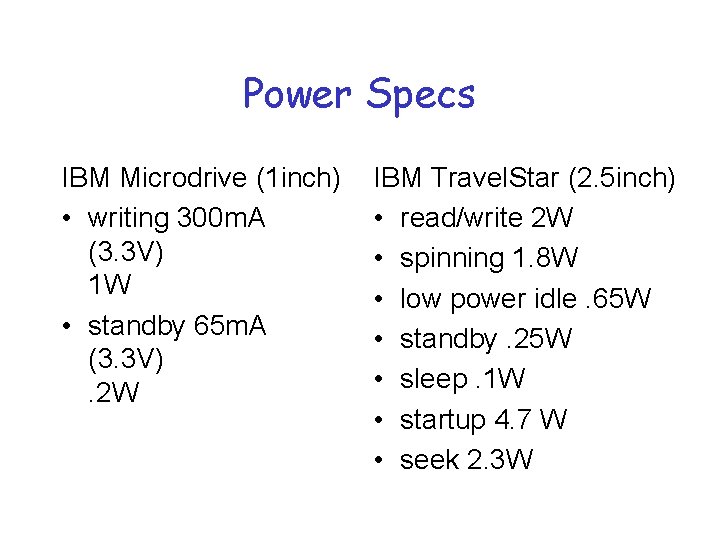

Power Specs
IBM Microdrive (1 inch) • writing 300 mA (3.3 V) 1 W • standby 65 mA (3.3 V) .2 W
IBM TravelStar (2.5 inch) • read/write 2 W • spinning 1.8 W • low power idle .65 W • standby .25 W • sleep .1 W • startup 4.7 W • seek 2.3 W

Spin-down Disk Model (figure: the state diagram annotated with power draw: Not Spinning .2 W, Spinning up 4.7 W, Spinning & Seek 2.3 W, Spinning & Access 2 W, Spinning & Ready .65-1.8 W)

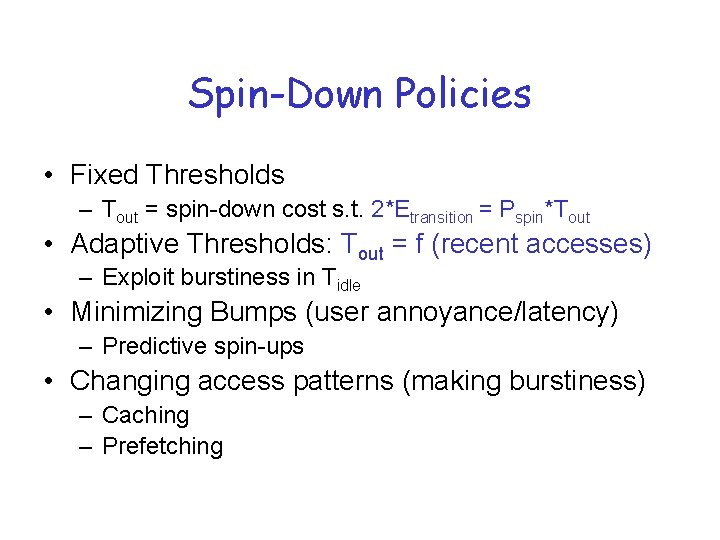

Spin-Down Policies • Fixed Thresholds – $T_{out}$ = spin-down cost s.t. $2 E_{transition} = P_{spin} T_{out}$ • Adaptive Thresholds: $T_{out} = f(\text{recent accesses})$ – Exploit burstiness in $T_{idle}$ • Minimizing Bumps (user annoyance/latency) – Predictive spin-ups • Changing access patterns (making burstiness) – Caching – Prefetching
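The fixed threshold and its effect on a sequence of idle periods can be sketched as below. The function names and the example numbers (1.8 W spinning, 0.25 W spun down, 9.4 J and 2 s per transition) are assumptions for illustration, not measurements.

```python
def fixed_threshold(e_transition, p_spin):
    """Timeout at which spinning costs as much as a spin-down/spin-up pair."""
    return 2 * e_transition / p_spin


def trace_energy(idle_times, t_out, p_spin, p_down, e_transition, t_transition):
    """Energy (joules) spent across idle periods under a timeout policy."""
    total = 0.0
    for t_idle in idle_times:
        if t_idle <= t_out:
            total += p_spin * t_idle  # timeout never fires: disk keeps spinning
        else:
            t_down = max(0.0, t_idle - t_out - t_transition)
            total += p_spin * t_out + 2 * e_transition + p_down * t_down
    return total


t_out = fixed_threshold(e_transition=9.4, p_spin=1.8)
bursty = trace_energy([5.0, 60.0], t_out, 1.8, 0.25, 9.4, 2.0)
always_on = trace_energy([5.0, 60.0], float("inf"), 1.8, 0.25, 9.4, 2.0)
```

Passing an infinite timeout models a disk that never spins down, which gives a baseline to compare the policy against.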

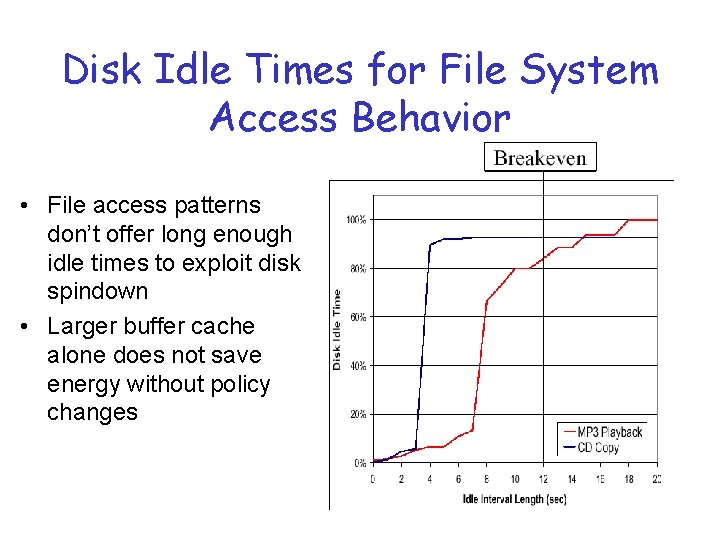

Disk Idle Times for File System Access Behavior • File access patterns don’t offer long enough idle times to exploit disk spindown • Larger buffer cache alone does not save energy without policy changes

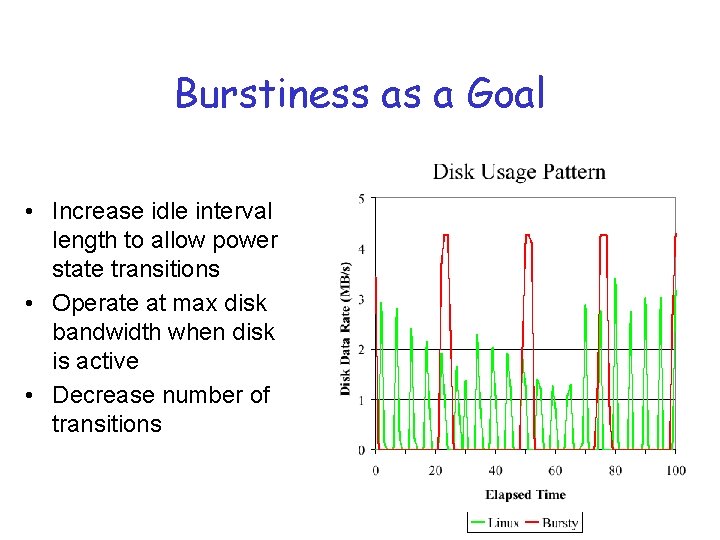

Burstiness as a Goal • Increase idle interval length to allow power state transitions • Operate at max disk bandwidth when disk is active • Decrease number of transitions

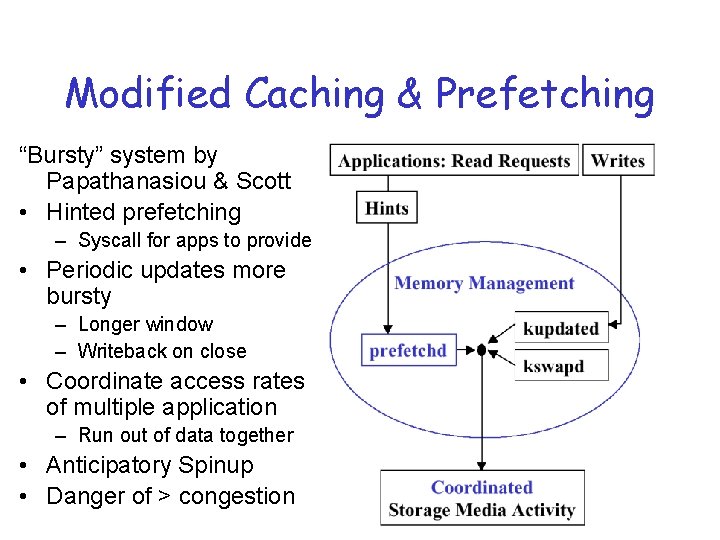

Modified Caching & Prefetching “Bursty” system by Papathanasiou & Scott • Hinted prefetching – Syscall for apps to provide • Periodic updates more bursty – Longer window – Writeback on close • Coordinate access rates of multiple applications – Run out of data together • Anticipatory Spinup • Danger of > congestion

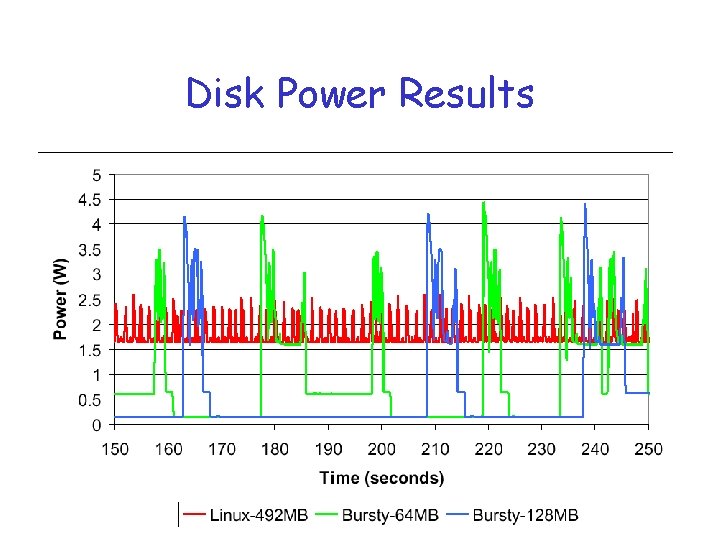

Disk Power Results

![Application Assistance](http://slidetodoc.com/presentation_image_h2/62c989396ce9cce87cf96f74d1a7d887/image-84.jpg)

Application Assistance • Hints on prefetching • Coop I/O [Weissel, Beutel, Bellosa] – File read and write ops that specify willingness to wait for timeout length of time • If disk spun down, wait for other ops to be issued to be grouped together • If disk spinning, do immediately • ECOSystem – Negotiation based on Currentcy
