Organizing and Searching Information with XML Selectivity Estimation


Organizing and Searching Information with XML
Selectivity Estimation for XML Queries
Thomas Beer, Christian Linz, Mostafa Khabouze

Outline • Definition Selectivity Estimation • Motivation • Algorithms for Selectivity Estimation o Path Tree o Markov Tables o XPathLearner o XSketches • Summary

Selectivity Definition
The selectivity σ(p) of a path expression p is defined as the number of paths in the XML data tree that match the tag sequence in p.
Example: σ(A/B/D) = 2
[Figure: example XML data tree with nodes labelled A, B, C, D, E]
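The definition can be checked by brute force on a small document. The following is a minimal sketch, not part of the slides: the function name `selectivity` and the sample document are assumptions chosen so that σ(A/B/D) = 2, and a match is allowed to start at any node of the tree.

```python
import xml.etree.ElementTree as ET

def selectivity(root, path):
    """Count the downward node sequences whose tags spell out `path`."""
    tags = path.split("/")

    def matches_from(node, i):
        # Number of downward paths starting at `node` that match tags[i:].
        if node.tag != tags[i]:
            return 0
        if i == len(tags) - 1:
            return 1
        return sum(matches_from(child, i + 1) for child in node)

    # A match may start at any node, not only at the document root.
    return sum(matches_from(node, 0) for node in root.iter())

# Hypothetical sample document (not the tree drawn on the slide).
doc = ET.fromstring("<A><B><D/><D/></B><C><D/><E/></C></A>")
print(selectivity(doc, "A/B/D"))  # 2
```

Exact counting like this needs a full scan of the data, which is what the summary structures in the rest of the talk try to avoid.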

Motivation
• Estimating the size of query results and intermediate results is necessary for effective query optimization
• Knowing the selectivities of sub-queries helps to identify cheap query evaluation plans
• Internet context: quick feedback about the expected result size before the full query result is evaluated

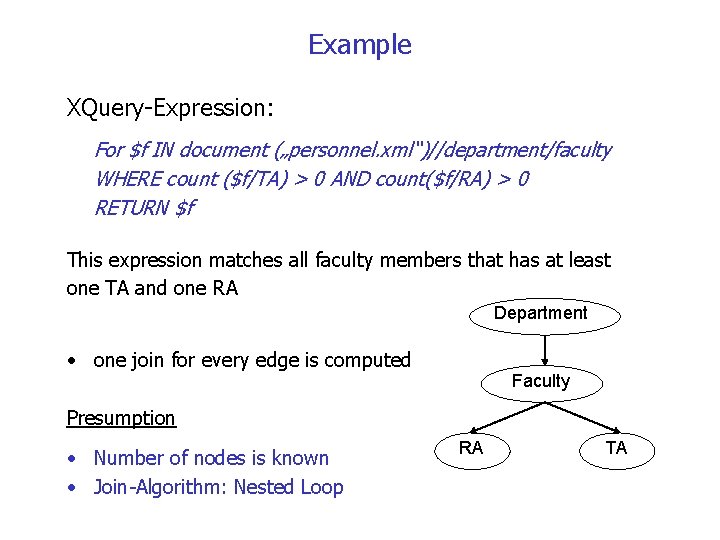

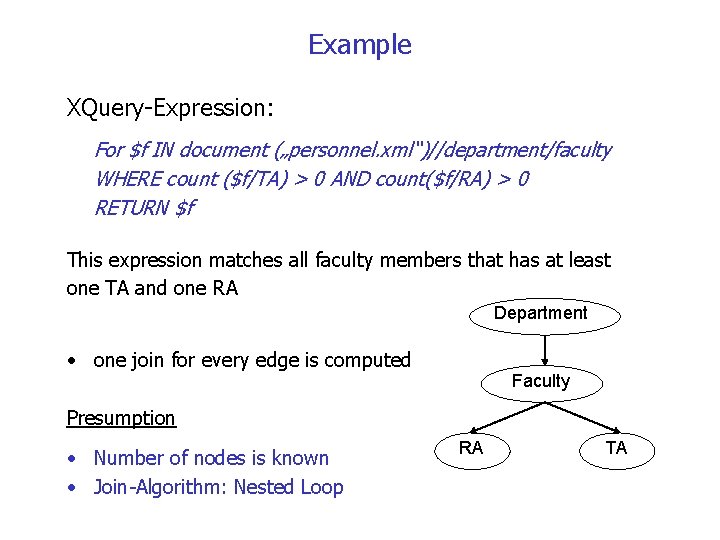

Example
XQuery expression:
  FOR $f IN document("personnel.xml")//department/faculty
  WHERE count($f/TA) > 0 AND count($f/RA) > 0
  RETURN $f
This expression matches all faculty members that have at least one TA and one RA.
[Figure: query tree Department – Faculty – {RA, TA}]
• One join is computed for every edge
Assumptions
• The number of nodes is known
• Join algorithm: nested loop

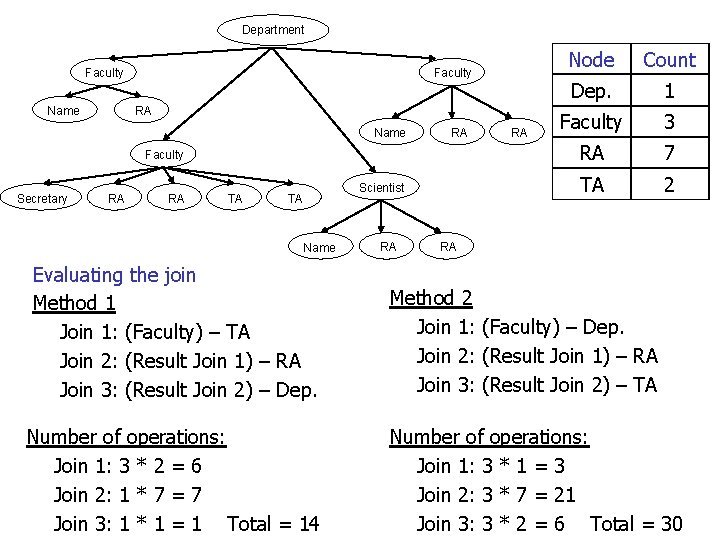

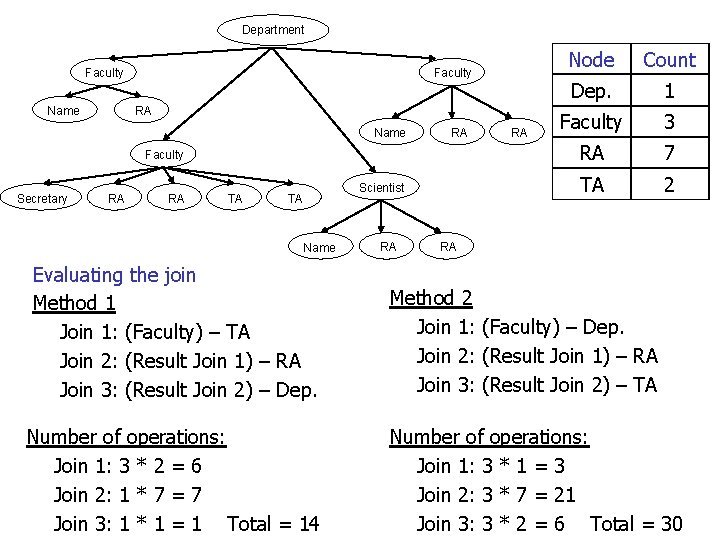

Evaluating the join
[Figure: personnel.xml data tree with one Department, three Faculty nodes and their Name, RA, TA, Secretary and Scientist children]

Node      Count
Dep.      1
Faculty   3
RA        7
TA        2

Method 1
Join 1: (Faculty) – TA
Join 2: (Result Join 1) – RA
Join 3: (Result Join 2) – Dep.
Number of operations:
Join 1: 3 * 2 = 6
Join 2: 1 * 7 = 7
Join 3: 1 * 1 = 1
Total = 14

Method 2
Join 1: (Faculty) – Dep.
Join 2: (Result Join 1) – RA
Join 3: (Result Join 2) – TA
Number of operations:
Join 1: 3 * 1 = 3
Join 2: 3 * 7 = 21
Join 3: 3 * 2 = 6
Total = 30
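The two totals follow from the nested-loop cost model, in which each join costs the product of its two input sizes. A minimal sketch of that arithmetic; the function name `nested_loop_cost` is an assumption, and the input sizes are the ones listed on the slide.

```python
def nested_loop_cost(join_steps):
    """Sum of |left input| * |right input| over a sequence of nested-loop joins."""
    return sum(left * right for left, right in join_steps)

# (size of left input, size of right input) for each join, as given on the slide.
method_1 = [(3, 2), (1, 7), (1, 1)]  # Faculty-TA first, then RA, then Dep.
method_2 = [(3, 1), (3, 7), (3, 2)]  # Faculty-Dep. first, then RA, then TA

print(nested_loop_cost(method_1))  # 14
print(nested_loop_cost(method_2))  # 30
```

Method 1 is cheaper because the most selective join (Faculty with TA) runs first and shrinks the intermediate result to a single node. An optimizer can only pick that order if it can estimate these sizes in advance.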

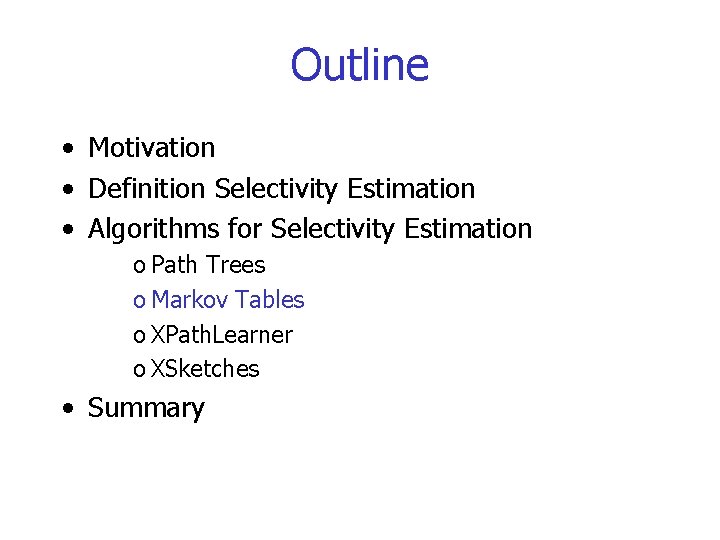

Outline • Motivation • Definition Selectivity Estimation • Algorithms for Selectivity Estimation o Path Trees o Markov Tables o XPathLearner o XSketches • Summary

Representing XML data structure
• Path Trees
• Markov Tables

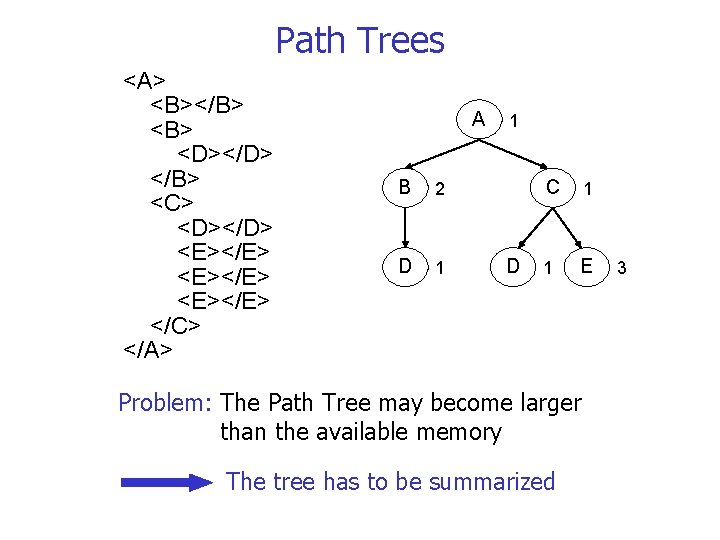

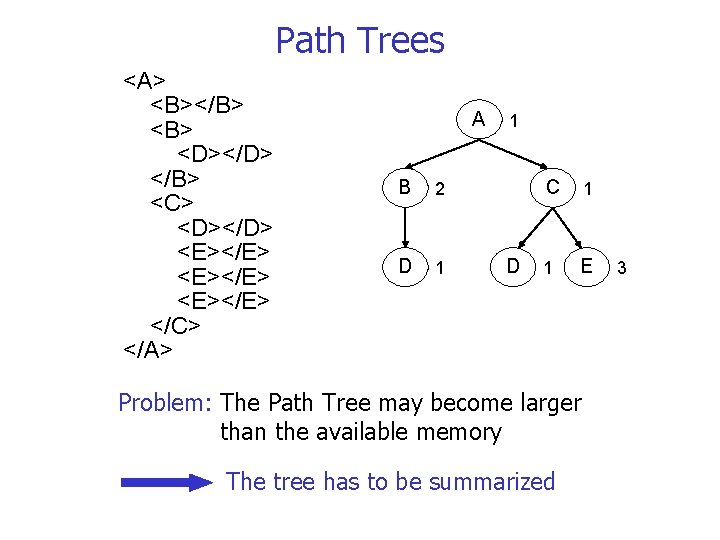

Path Trees
XML document:
  <A>
    <B></B>
    <B> <D></D> </B>
    <C> <D></D> <E></E> </C>
  </A>
Path tree (path: frequency):
  A: 1
  A/B: 2
  A/B/D: 1
  A/C: 1
  A/C/D: 1
  A/C/E: 1
Problem: the path tree may become larger than the available memory, so the tree has to be summarized.
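A path tree can be built in one pass over the document by counting how many elements share each root-to-node tag path. The sketch below is illustrative only: `build_path_tree` and the sample document are assumptions (the document is chosen so that B gets frequency 2, as in the slide's tree), and the tree is stored as a flat mapping from tag paths to frequencies rather than as linked nodes.

```python
from collections import Counter
import xml.etree.ElementTree as ET

def build_path_tree(root):
    """Map every root-to-node tag path to the number of elements reached by it."""
    freq = Counter()

    def visit(node, prefix):
        path = prefix + (node.tag,)
        freq[path] += 1
        for child in node:
            visit(child, path)

    visit(root, ())
    return freq

# Hypothetical sample document with two B elements, one of which has a D child.
doc = ET.fromstring("<A><B/><B><D/></B><C><D/><E/></C></A>")
tree = build_path_tree(doc)
for path, count in sorted(tree.items()):
    print("/".join(path), count)
```

Each key of the mapping corresponds to one node of the path tree, so the number of keys is the size that summarization has to reduce.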

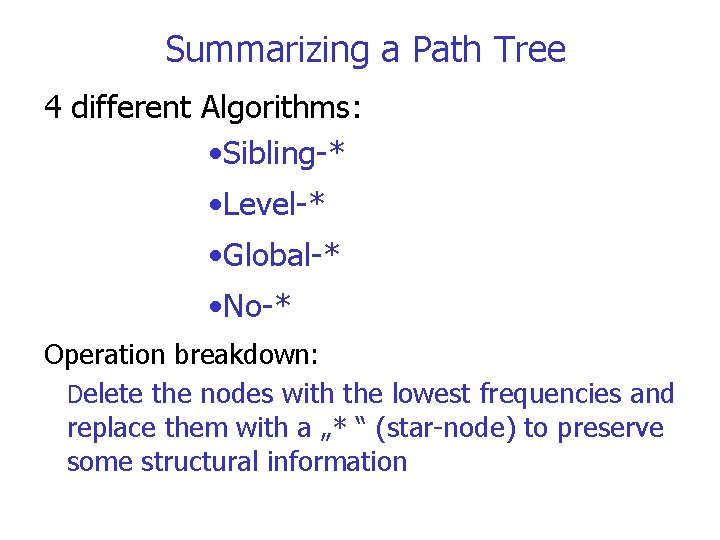

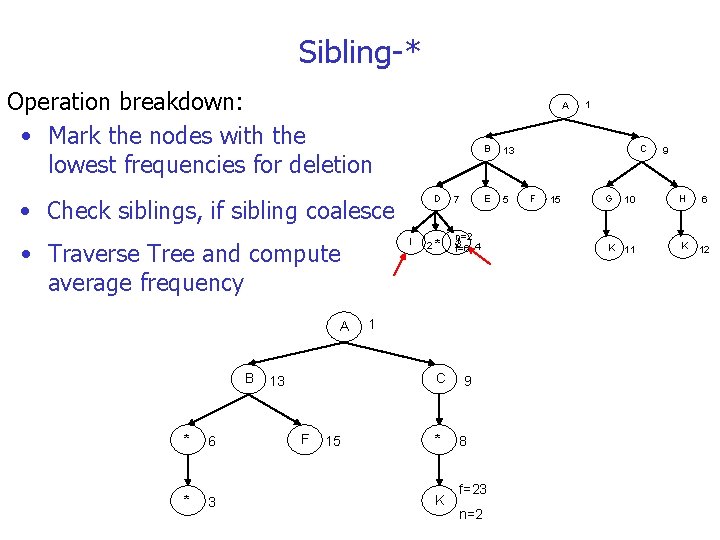

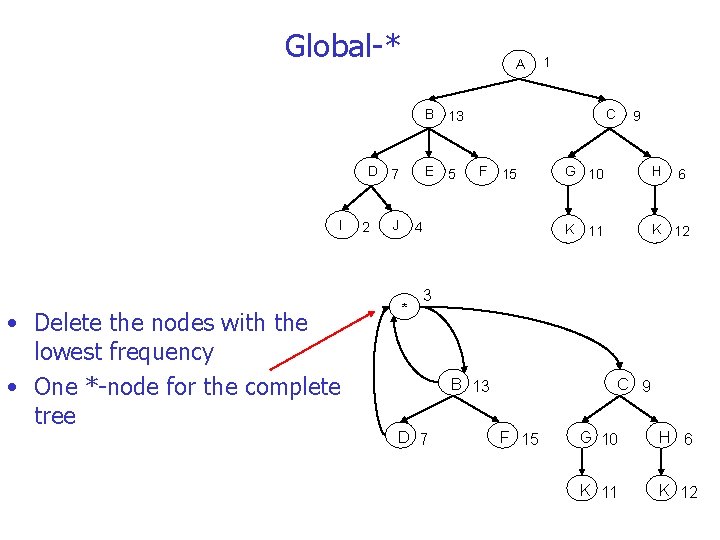

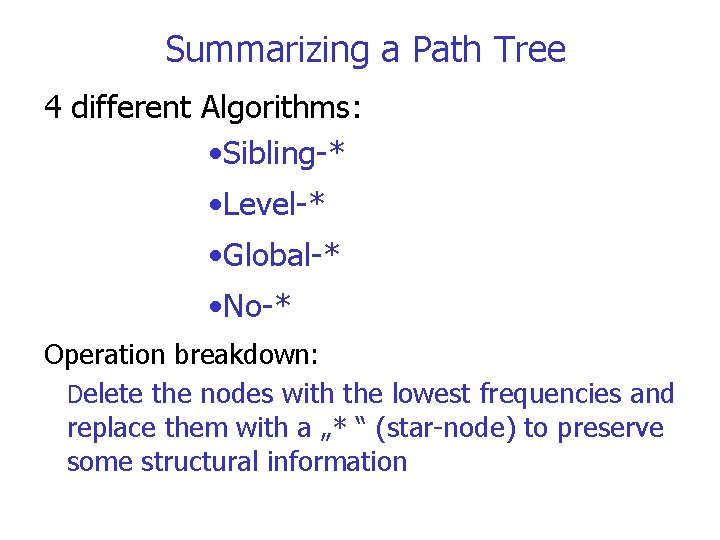

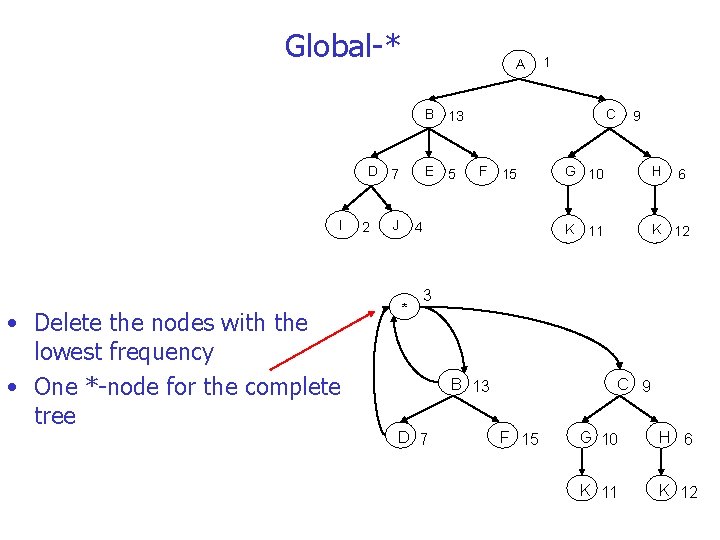

Summarizing a Path Tree
4 different algorithms:
• Sibling-*
• Level-*
• Global-*
• No-*
Basic operation: delete the nodes with the lowest frequencies and replace them with a "*" (star node) to preserve some structural information

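Sibling-*
Basic operation:
• Mark the nodes with the lowest frequencies for deletion
• Check the siblings of each marked node: marked siblings are coalesced into one *-node
• Traverse the tree and compute the average frequency of each *-node
[Figure: example path tree before and after Sibling-* summarization; a coalesced *-node keeps the total frequency f and the number n of deleted nodes, e.g. f=6, n=2 and f=23, n=2]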

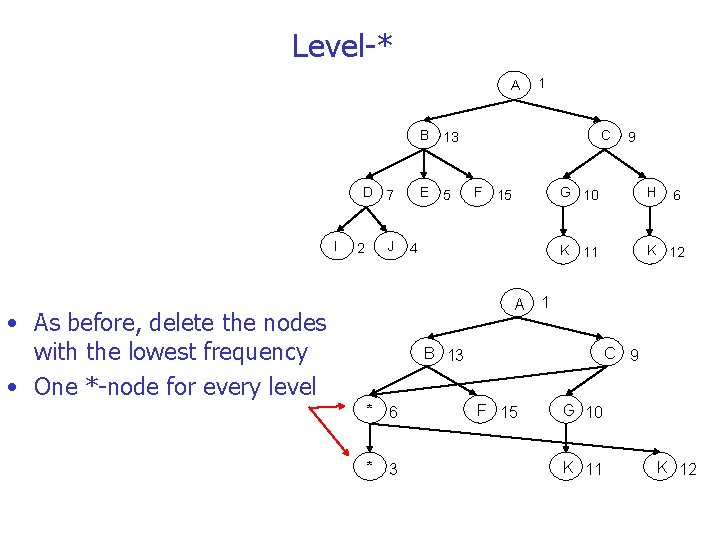

Level-*
• As before, delete the nodes with the lowest frequency
• One *-node for every level
[Figure: path tree with node frequencies A 1, B 13, C 9, D 7, E 5, F 15, G 10, H 6, I 2, J 4, K 11, K 12, shown before and after Level-* summarization]

Global-*
• Delete the nodes with the lowest frequency
• One *-node for the complete tree
[Figure: the same path tree before and after Global-* summarization, with a single *-node of frequency 3]

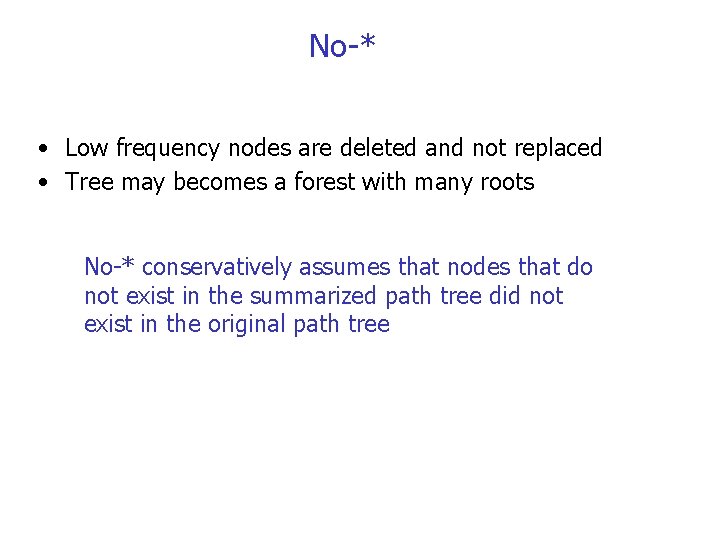

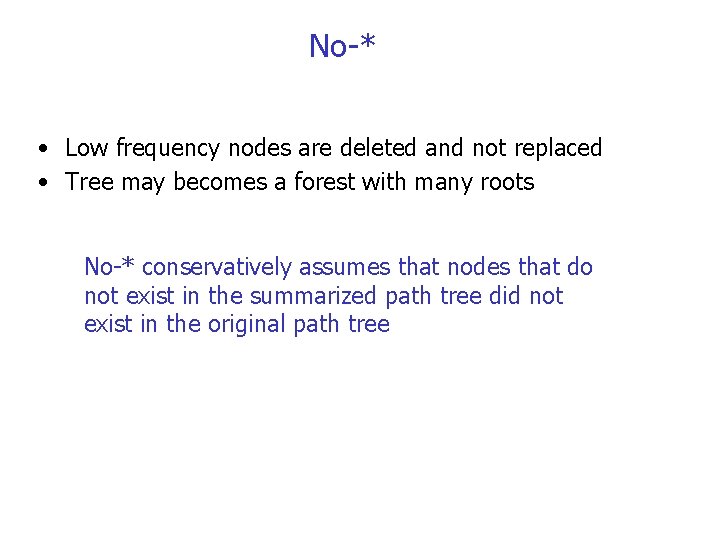

No-*
• Low-frequency nodes are deleted and not replaced
• The tree may become a forest with many roots
No-* conservatively assumes that nodes that do not exist in the summarized path tree did not exist in the original path tree.

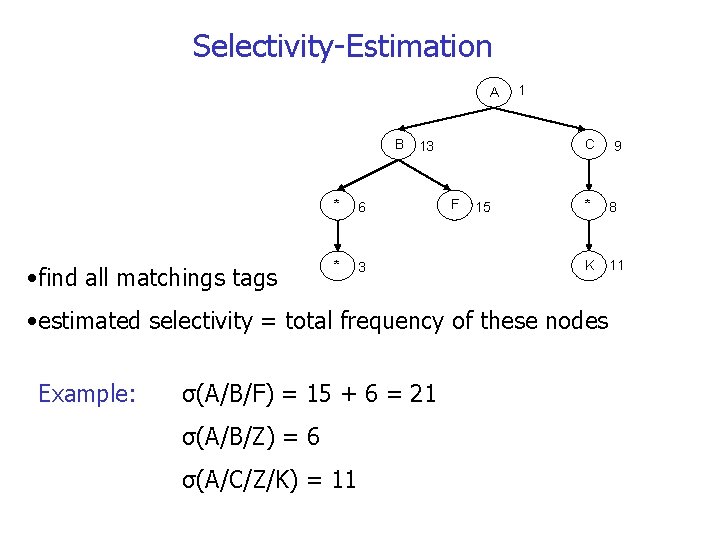

Selectivity Estimation
• Find all matching tags (a *-node matches any tag)
• Estimated selectivity = total frequency of these nodes
Example:
σ(A/B/F) = 15 + 6 = 21
σ(A/B/Z) = 6
σ(A/C/Z/K) = 11
[Figure: summarized path tree with nodes A 1, B 13, C 9, F 15, K 11 and *-nodes with frequencies 6, 3 and 8]
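The matching rule can be sketched as a recursive walk in which a *-node matches any tag and the frequencies of all matched end nodes are added up. The tree layout below is an assumption reconstructed from the three examples, not a copy of the slide's figure, and the names `Node` and `estimate` are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    tag: str
    freq: int
    children: list = field(default_factory=list)

def estimate(node, tags):
    """Total frequency of the nodes reached by matching `tags` from `node` down."""
    if node.tag != "*" and node.tag != tags[0]:
        return 0
    if len(tags) == 1:
        return node.freq
    return sum(estimate(child, tags[1:]) for child in node.children)

# Assumed layout: B has children F and *, C has a *-child that has a K child.
summary = Node("A", 1, [
    Node("B", 13, [Node("F", 15), Node("*", 6)]),
    Node("C", 9, [Node("*", 8, [Node("K", 11)])]),
])

print(estimate(summary, ["A", "B", "F"]))       # 21
print(estimate(summary, ["A", "B", "Z"]))       # 6
print(estimate(summary, ["A", "C", "Z", "K"]))  # 11
```

In the first query both F and the *-node under B match the tag F, which is why the estimate is 15 + 6.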

Outline • Motivation • Definition Selectivity Estimation • Algorithms for Selectivity Estimation o Path Trees o Markov Tables o XPathLearner o XSketches • Summary

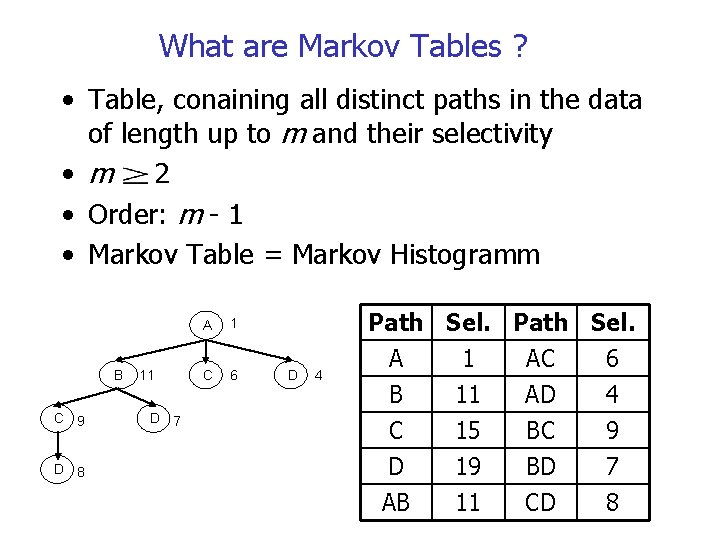

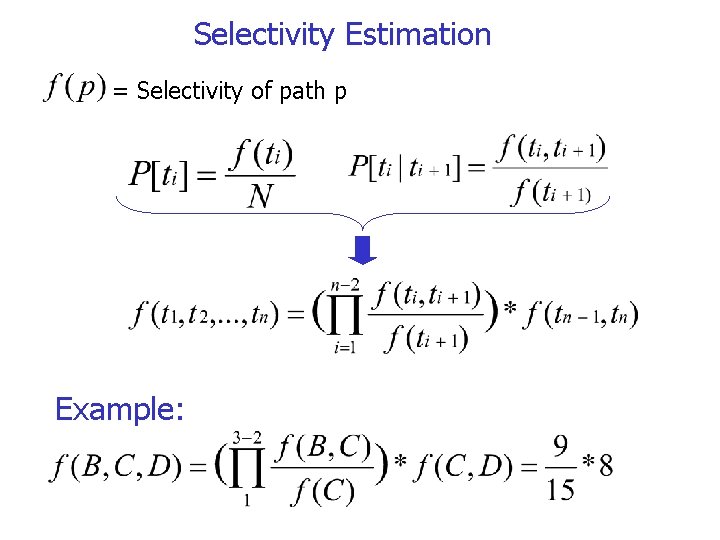

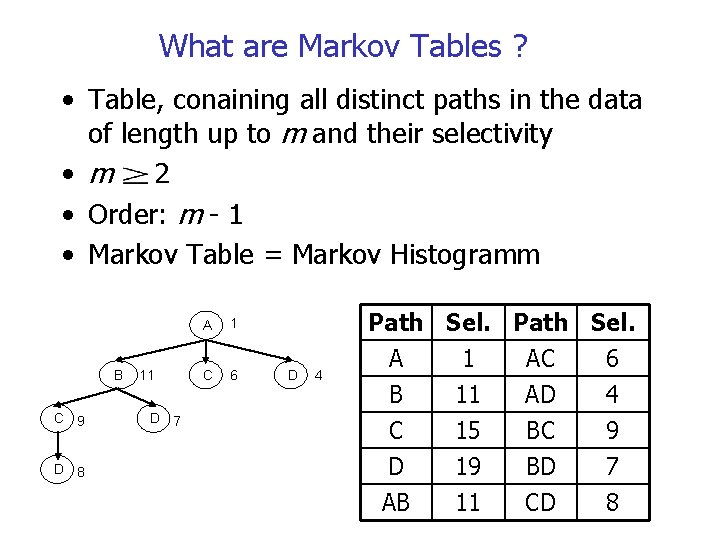

What are Markov Tables?
• A table containing all distinct paths in the data of length up to m, together with their selectivity
• m ≥ 2
• Order: m - 1
• Markov table = Markov histogram
[Figure: path tree with frequencies A 1, A/B 11, A/B/C 9, A/B/C/D 8, A/B/D 7, A/C 6, A/D 4]

Path   Sel.
A      1
B      11
C      15
D      19
AB     11
AC     6
AD     4
BC     9
BD     7
CD     8
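A Markov table for m = 2 can be derived from a path tree: every path-tree node contributes its frequency to the last tag of its path and to the last two tags of its path. The sketch below assumes a particular path-tree layout read off the slide's figure; `markov_table` and the tuple-based keys are illustrative choices.

```python
from collections import Counter

def markov_table(path_tree, m=2):
    """Aggregate path-tree frequencies into counts of all tag paths of length <= m."""
    table = Counter()
    for path, freq in path_tree.items():
        # The elements reached by `path` end one sub-path of each length 1..m.
        for k in range(1, min(m, len(path)) + 1):
            table[path[-k:]] += freq
    return table

# Assumed layout of the slide's path tree (root-to-node path -> frequency).
path_tree = {
    ("A",): 1,
    ("A", "B"): 11,
    ("A", "B", "C"): 9,
    ("A", "B", "C", "D"): 8,
    ("A", "B", "D"): 7,
    ("A", "C"): 6,
    ("A", "D"): 4,
}

table = markov_table(path_tree)
for path, sel in sorted(table.items(), key=lambda item: (len(item[0]), item[0])):
    print("".join(path), sel)
```

With this layout the output has the same ten entries as the slide's table, for example C = 6 + 9 = 15 and D = 4 + 7 + 8 = 19.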

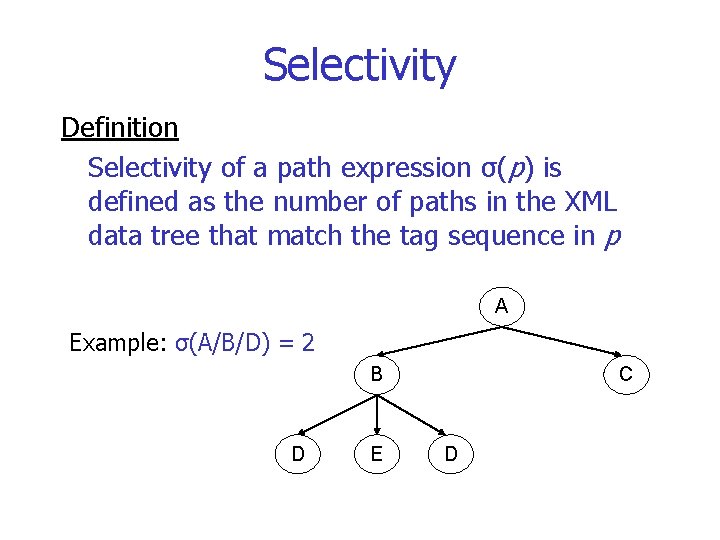

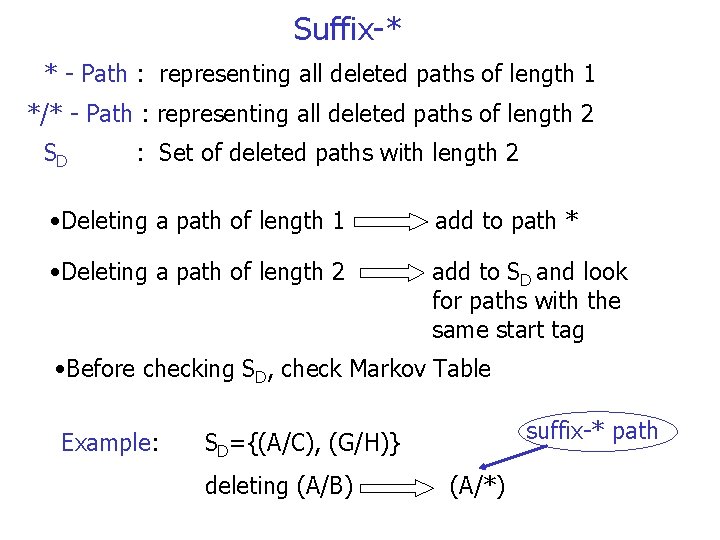

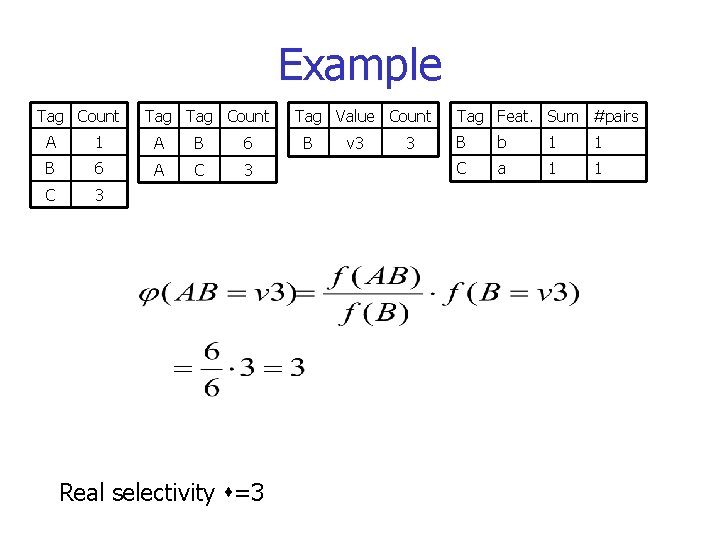

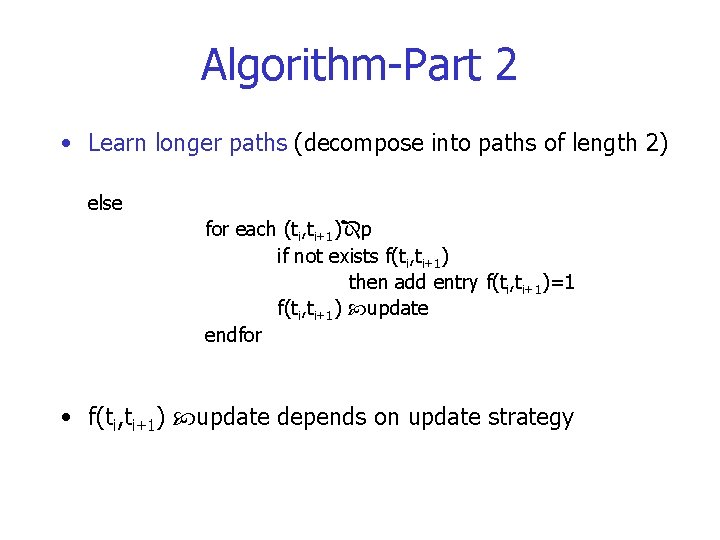

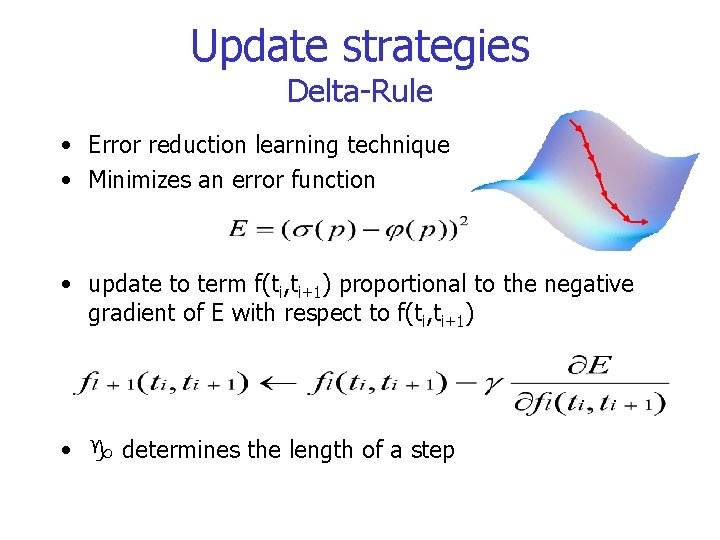

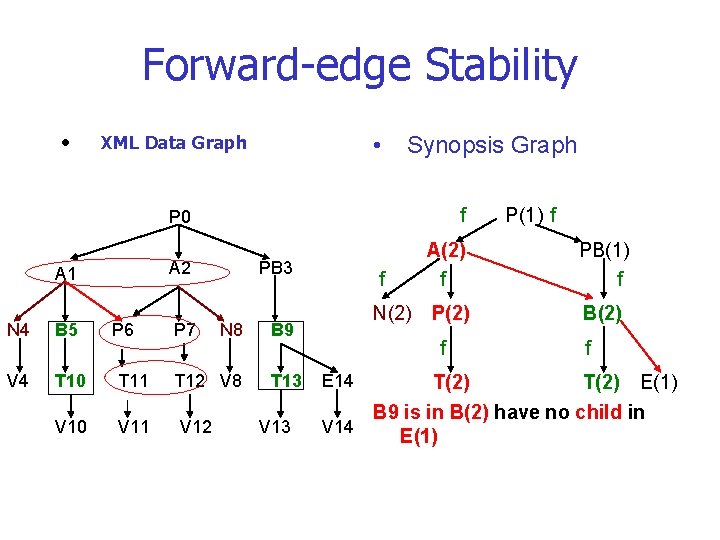

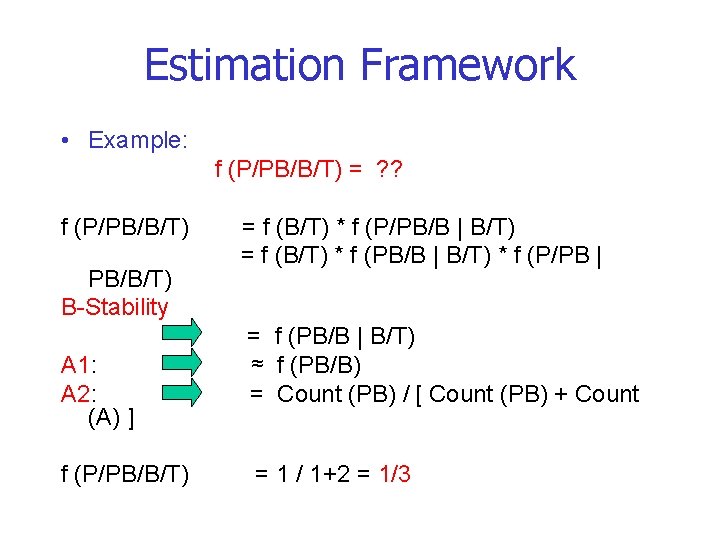

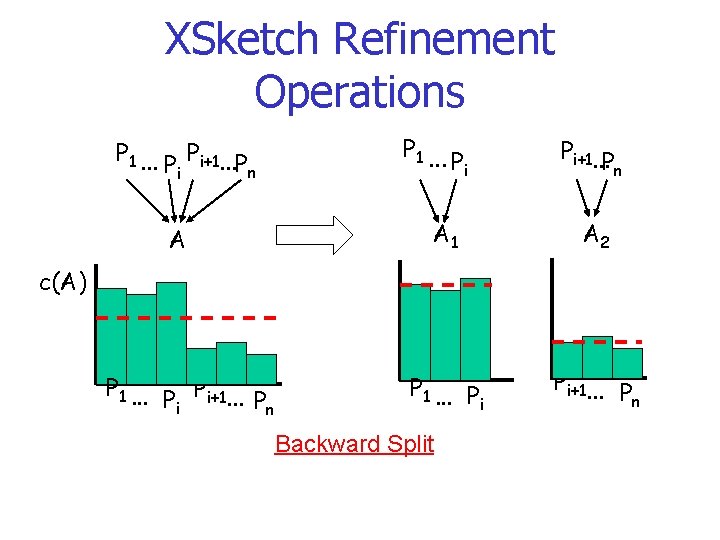

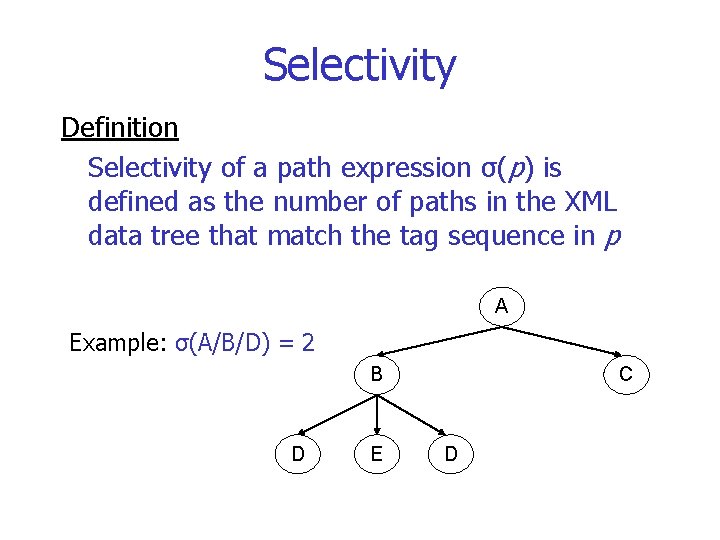

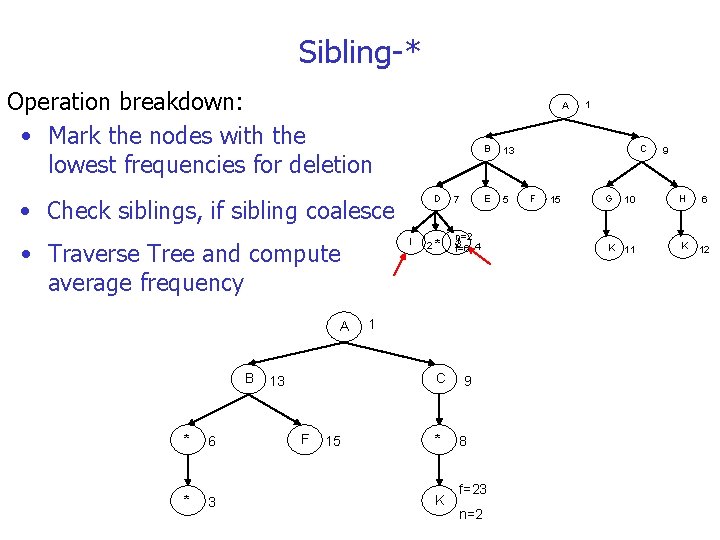

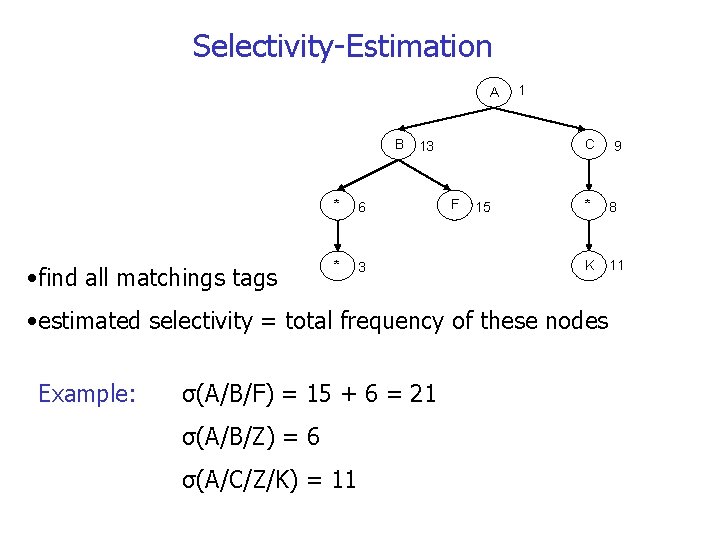

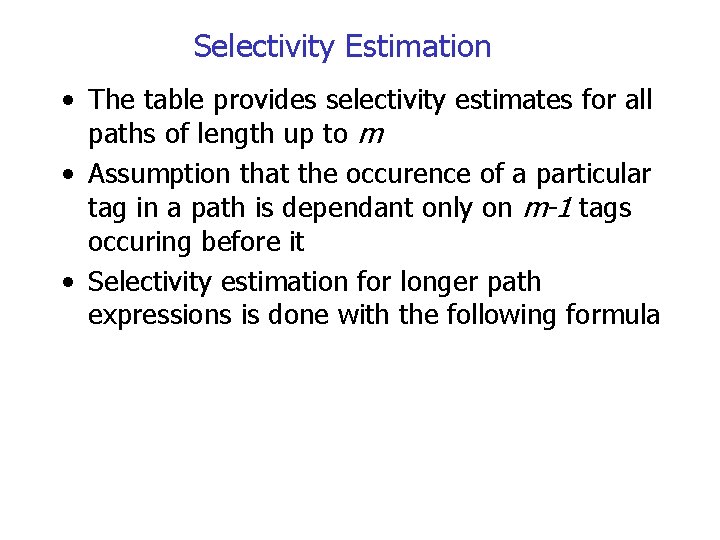

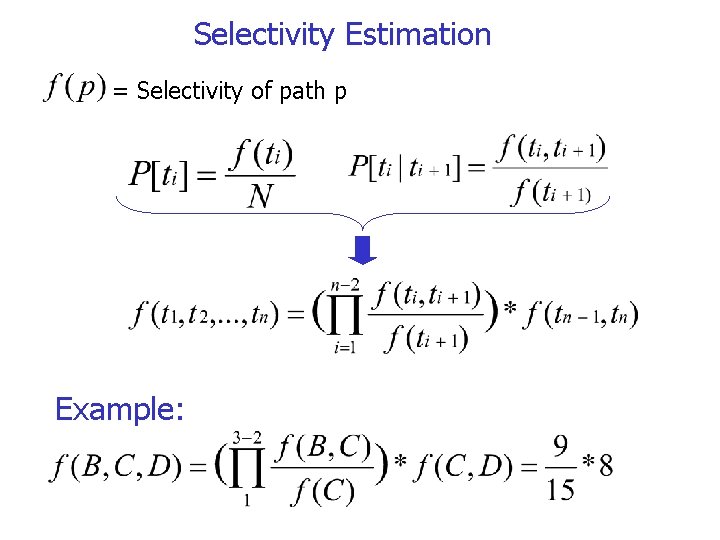

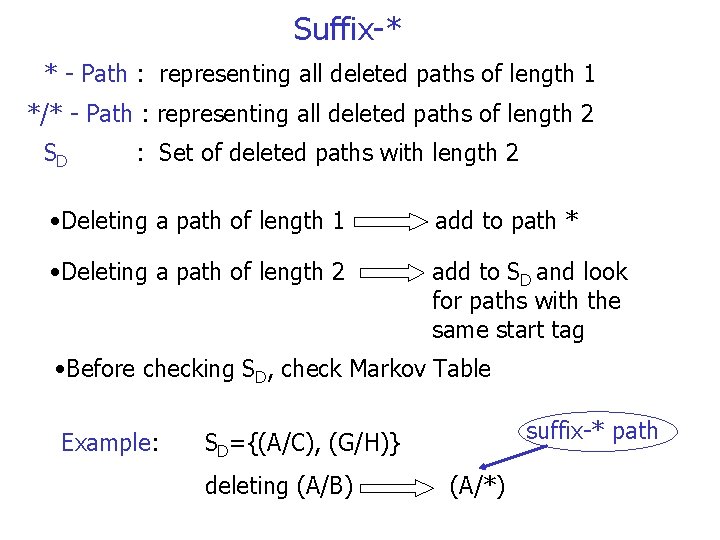

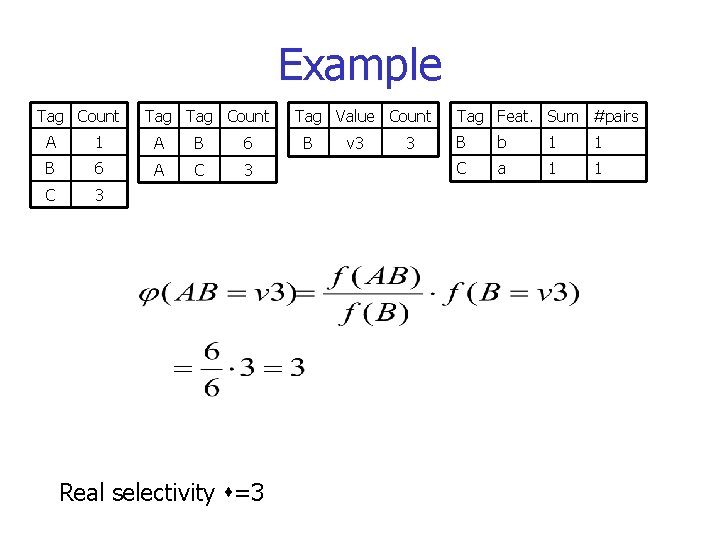

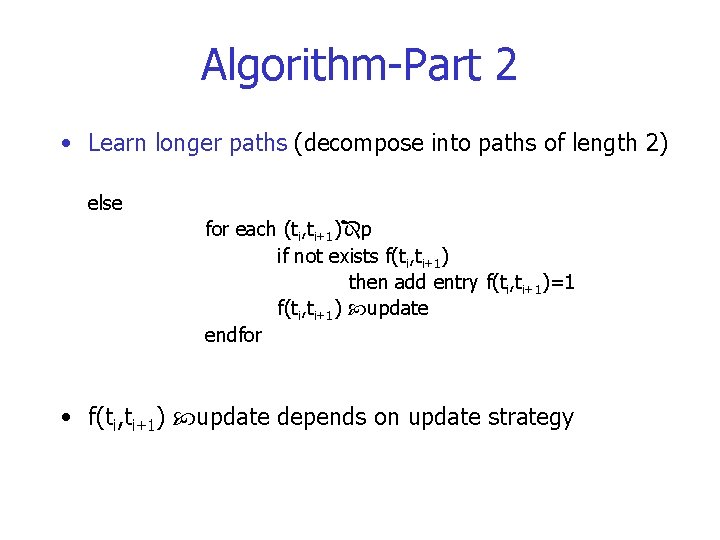

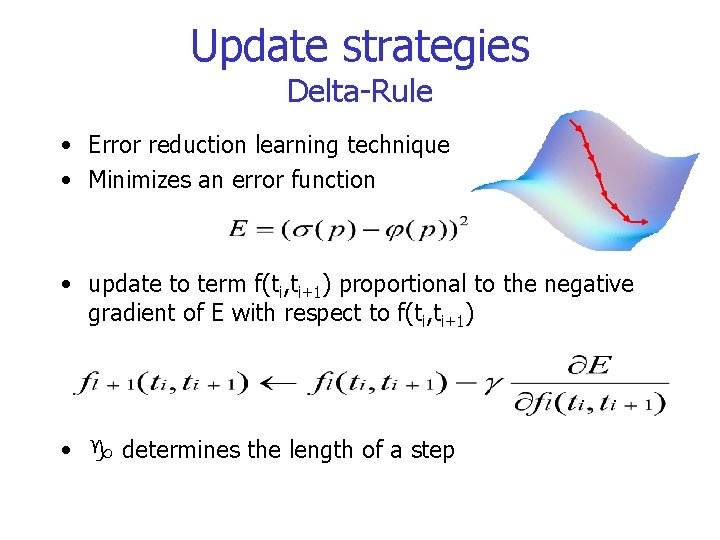

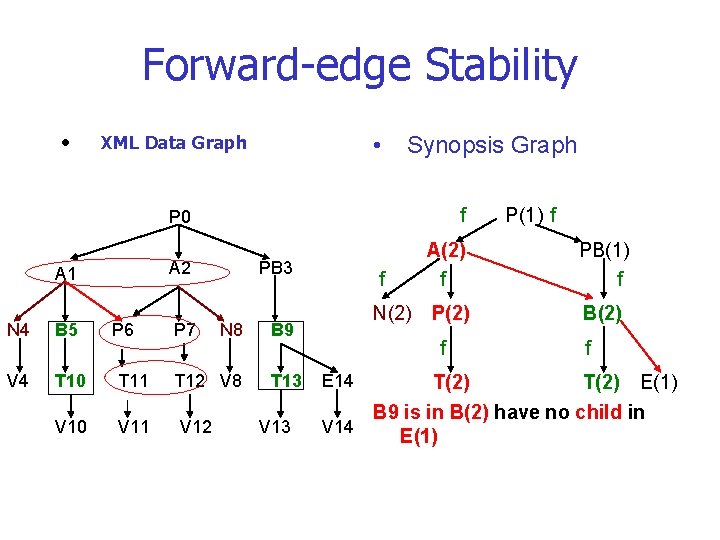

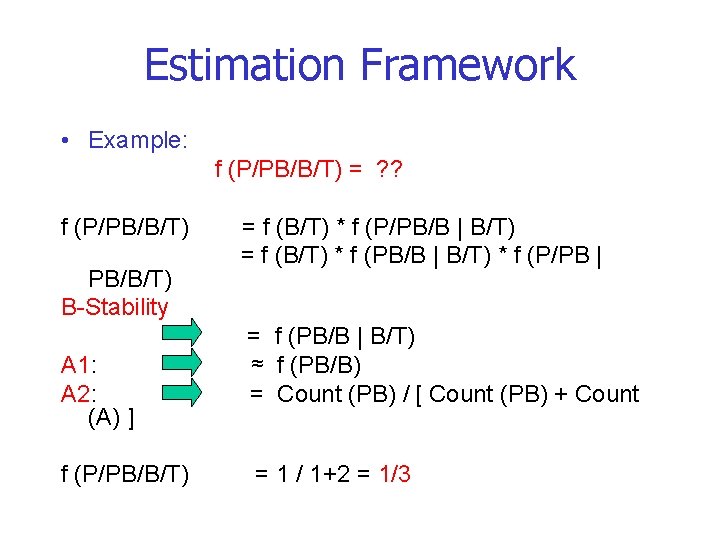

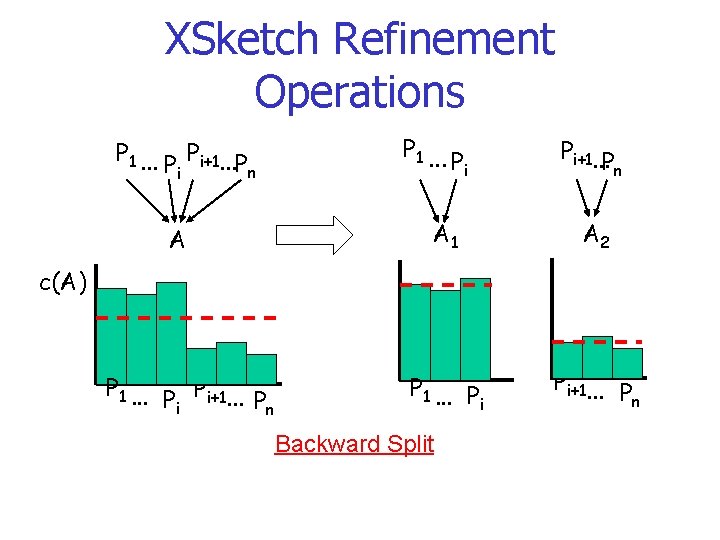

Selectivity Estimation
• The table provides selectivity estimates for all paths of length up to m
• Assumption: the occurrence of a particular tag in a path depends only on the m-1 tags occurring before it
• Selectivity estimation for longer path expressions is done with the following formula

![Selectivity Estimation (formula slide)](https://slidetodoc.com/presentation_image/56657412f12858ff7cdd962f5cbb4045/image-19.jpg)

Selectivity Estimation
• P[tn]: probability of tag tn occurring in the XML data tree
• N: total number of nodes in the XML data tree
• P[ti|ti+1]: probability of tag ti occurring before tag ti+1
• The factors of the formula are expectations: one for the occurrence of tag tn, and one for the occurrence of tag ti before tag ti+1
[Figure: Markov chain over the tags …, t3, t2, t1]
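The formula itself is only on the slide image. One reading that is consistent with the quantities defined here, given as an assumption rather than a transcription, writes f(·) for the counts stored in the Markov table (a notation introduced for this sketch) and multiplies the expected number of tn nodes by the chain of conditional probabilities:

```latex
\hat{\sigma}(t_1/t_2/\dots/t_n)
  \;=\; N \cdot P[t_n] \cdot \prod_{i=1}^{n-1} P[t_i \mid t_{i+1}],
\qquad
P[t_n] = \frac{f(t_n)}{N},
\quad
P[t_i \mid t_{i+1}] = \frac{f(t_i, t_{i+1})}{f(t_{i+1})}

% Substituting the definitions, N and f(t_n) cancel:
\hat{\sigma}(t_1/t_2/\dots/t_n)
  \;=\; f(t_1, t_2) \cdot \prod_{i=2}^{n-1} \frac{f(t_i, t_{i+1})}{f(t_i)}
```

For n = 2 the product is empty and the estimate is simply the stored count f(t1, t2).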

Selectivity Estimation
The quantity estimated by the formula is the selectivity of path p.
Example:

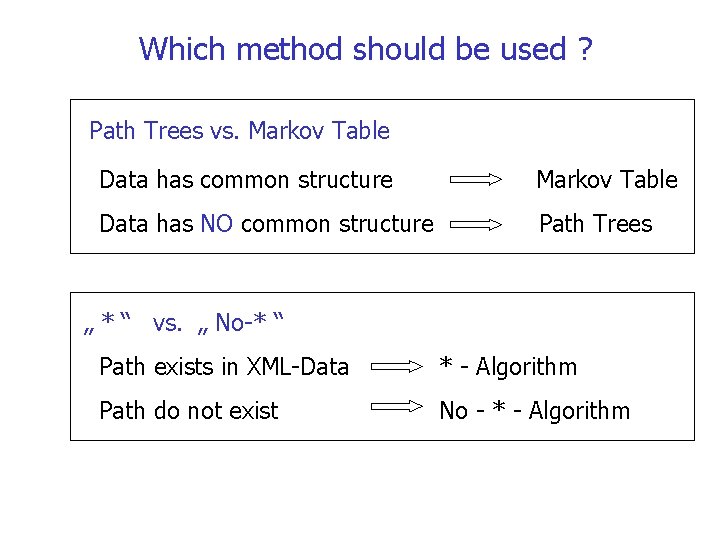

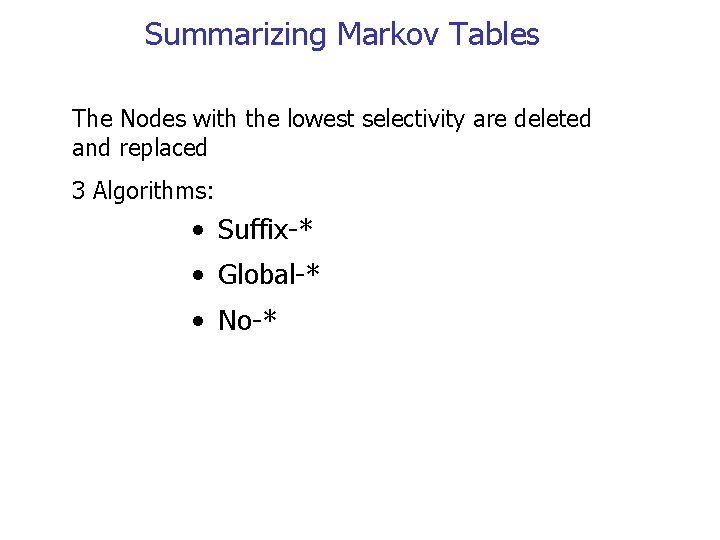
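For m = 2, a long path can be estimated from pair and single-tag counts alone. The sketch below is an illustration under assumptions: it uses the ten-entry table from the "What are Markov Tables?" slide, assumes single-letter tags so that "AB" can serve as a key, and applies the order-1 chaining f(t1 t2) · Π f(ti ti+1) / f(ti). The function name `estimate` is hypothetical.

```python
from fractions import Fraction

def estimate(table, path):
    """Order-1 Markov estimate of the selectivity of a path such as "A/B/C"."""
    tags = path.split("/")
    if len(tags) <= 2:
        # Paths of length up to m = 2 are stored in the table directly.
        return Fraction(table["".join(tags)])
    result = Fraction(table[tags[0] + tags[1]])
    for i in range(1, len(tags) - 1):
        # Fraction of the t_i nodes that continue with t_{i+1}.
        result *= Fraction(table[tags[i] + tags[i + 1]], table[tags[i]])
    return result

table = {"A": 1, "B": 11, "C": 15, "D": 19,
         "AB": 11, "AC": 6, "AD": 4, "BC": 9, "BD": 7, "CD": 8}

print(estimate(table, "A/B"))      # 11
print(estimate(table, "A/B/C"))    # 9
print(estimate(table, "A/B/C/D"))  # 24/5
```

`Fraction` is used so the arithmetic stays exact. The last value, 11 · 9/11 · 8/15 = 4.8, is an estimate and need not equal the true count in the data.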

Summarizing Markov Tables
The paths with the lowest selectivity are deleted and, depending on the algorithm, replaced by *-paths.
3 algorithms:
• Suffix-*
• Global-*
• No-*

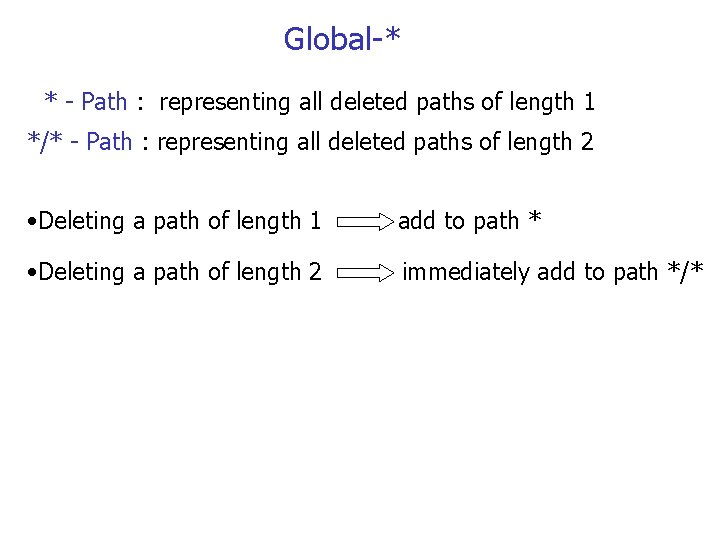

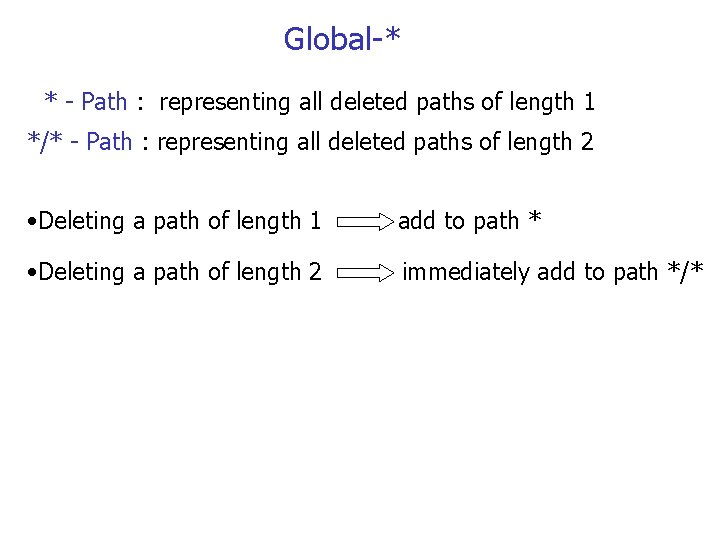

Suffix-*
• *-path: represents all deleted paths of length 1
• */*-path: represents all deleted paths of length 2
• SD: set of deleted paths of length 2
• Deleting a path of length 1: add it to the path *
• Deleting a path of length 2: add it to SD and look for paths with the same start tag
• Before checking SD, check the Markov table
Example (suffix-* path): with SD = {(A/C), (G/H)}, deleting (A/B) yields (A/*)

Global-*
• *-path: represents all deleted paths of length 1
• */*-path: represents all deleted paths of length 2
• Deleting a path of length 1: add it to the path *
• Deleting a path of length 2: immediately add it to the path */*

No-*
• Does not use *-paths
• Low-frequency paths are simply discarded
If any of the required paths is not found in the Markov table, its selectivity is conservatively assumed to be zero.
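The No-* behaviour is easy to sketch: entries with the lowest selectivity are dropped until the table fits a budget, and any later lookup of a missing entry makes the whole estimate zero. Both function names and the entry-count `budget` parameter are assumptions made for illustration; the estimate uses order-1 chaining of pair counts.

```python
from fractions import Fraction

def summarize_no_star(table, budget):
    """Keep only the `budget` entries with the highest selectivity."""
    keep = sorted(table, key=table.get, reverse=True)[:budget]
    return {path: table[path] for path in keep}

def estimate_no_star(table, tags):
    """Order-1 estimate; returns 0 as soon as a required entry is missing."""
    pairs = list(zip(tags, tags[1:]))
    if not pairs:
        return Fraction(table.get((tags[0],), 0))
    needed = pairs + [(t,) for t in tags[1:-1]]
    if any(path not in table for path in needed):
        return Fraction(0)
    result = Fraction(table[pairs[0]])
    for left, right in pairs[1:]:
        result *= Fraction(table[(left, right)], table[(left,)])
    return result

full = {("A",): 1, ("B",): 11, ("C",): 15, ("D",): 19,
        ("A", "B"): 11, ("A", "C"): 6, ("A", "D"): 4,
        ("B", "C"): 9, ("B", "D"): 7, ("C", "D"): 8}
small = summarize_no_star(full, budget=8)   # drops A (1) and A/D (4)

print(estimate_no_star(small, ["A", "B", "C"]))  # 9
print(estimate_no_star(small, ["A", "D"]))       # 0
```

The second query shows the cost of the conservative assumption: A/D exists in the data with selectivity 4, but after summarization it is reported as 0.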

Which method should be used?
Path Trees vs. Markov Tables
• Data has a common structure: Markov Table
• Data has NO common structure: Path Trees
"*" vs. "No-*"
• Path exists in the XML data: *-algorithm
• Path does not exist: No-*-algorithm

Outline • Motivation • Definition Selectivity Estimation • Algorithms for Selectivity Estimation o Path Trees o Markov Tables o XPathLearner o XSketches • Summary

Weaknesses of previous methods • Off-line, scan of the entire data set • Limited to simple path expressions • Oblivious to workload distribution • Updates too expensive

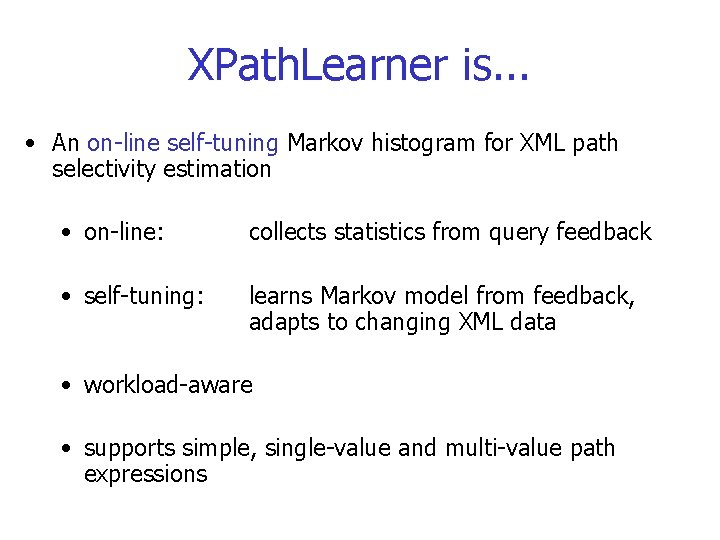

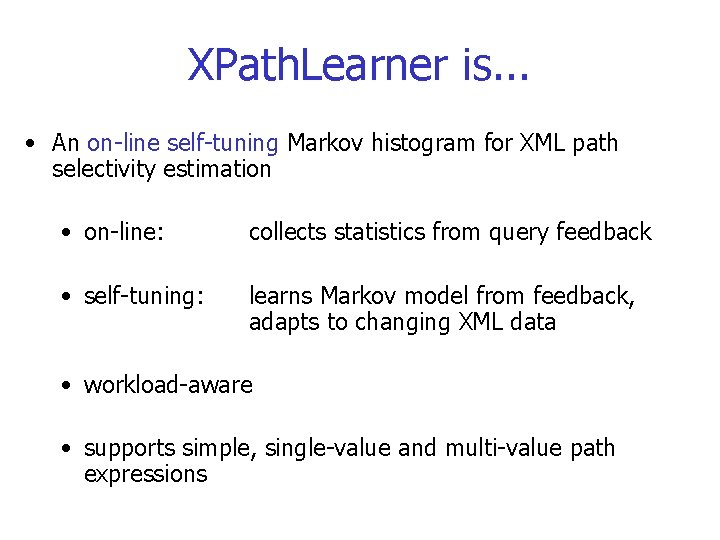

XPathLearner is ...
• An on-line self-tuning Markov histogram for XML path selectivity estimation
• on-line: collects statistics from query feedback
• self-tuning: learns the Markov model from feedback, adapts to changing XML data
• workload-aware
• supports simple, single-value and multi-value path expressions

Workflow
[Figure: training data → initial training → Histogram Learner → updates → Histogram → Selectivity Estimator → estimated selectivity; the feedback (real selectivity) and the observed estimation error flow back to the Histogram Learner]
The system uses feedback to update the statistics for the queried path. Updates are based on the observed estimation error.

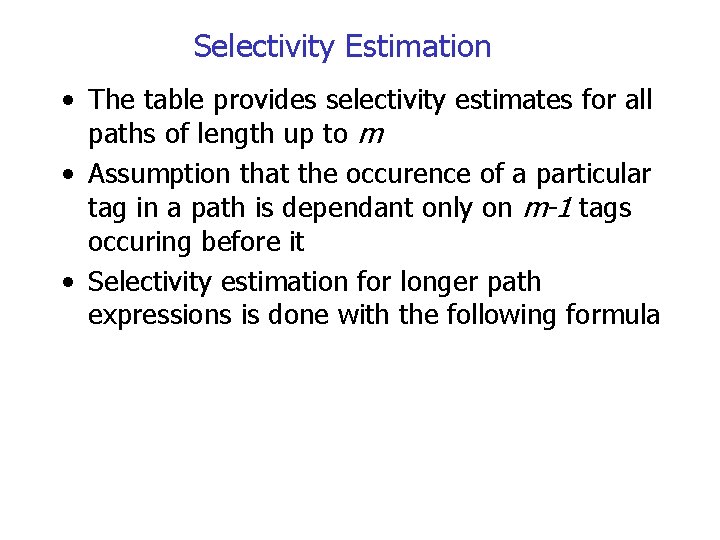

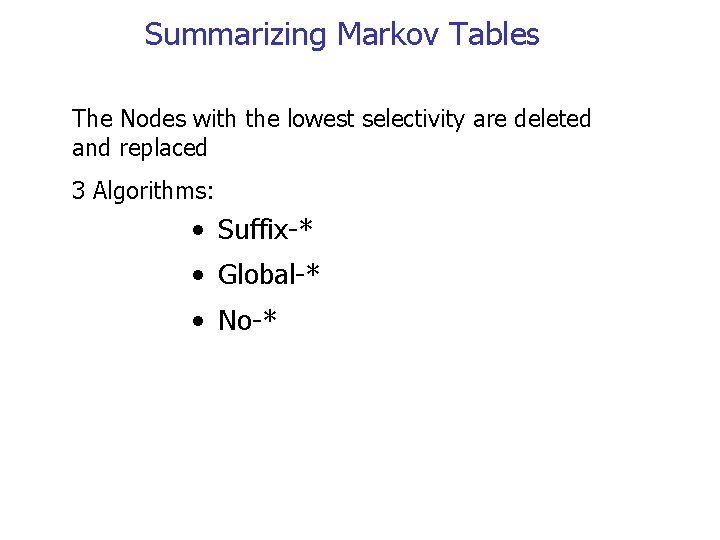

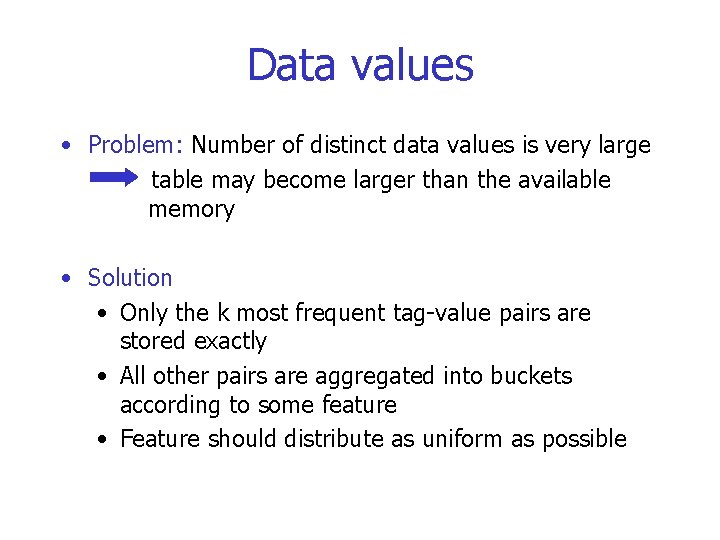

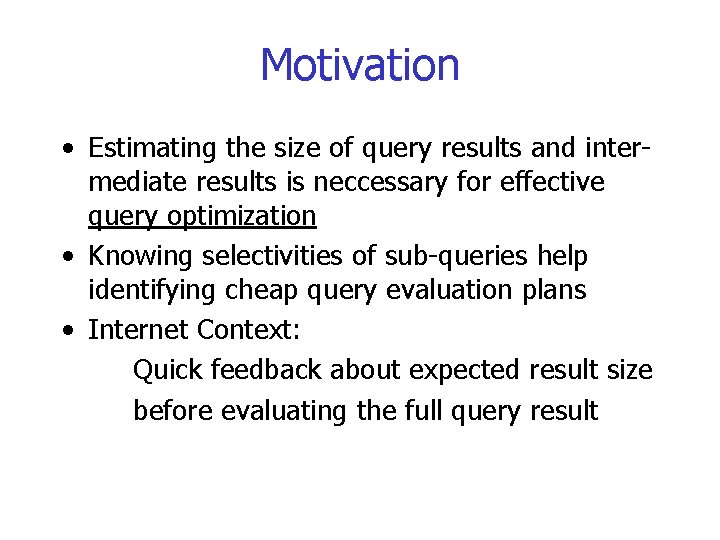

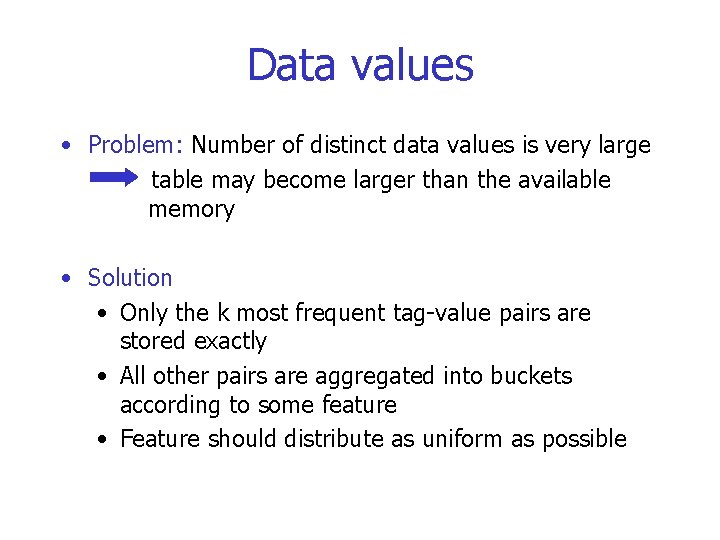

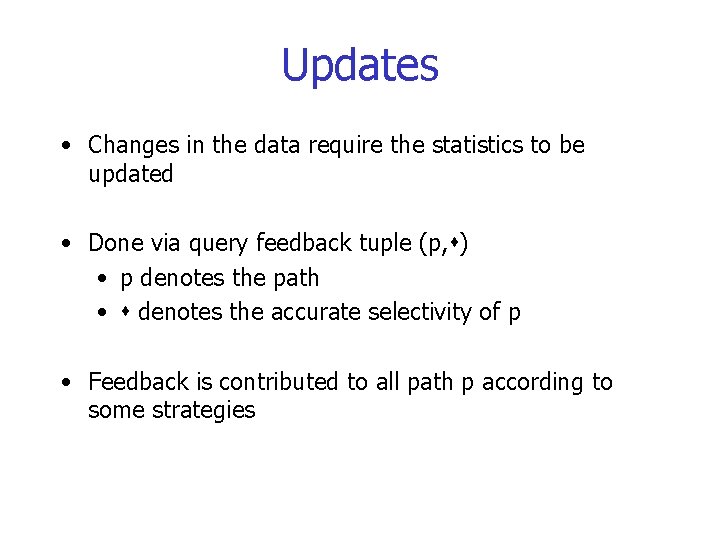

Basics
• Relies on path trees as an intermediate representation
• Uses a Markov histogram of order (m-1) to store the path tree and the statistics
• Henceforth m = 2: the table stores tag-tag pairs, tag-value pairs and single tags

Data values
• Problem: the number of distinct data values is very large, so the table may become larger than the available memory
• Solution
  o Only the k most frequent tag-value pairs are stored exactly
  o All other pairs are aggregated into buckets according to some feature
  o The feature should distribute the pairs as uniformly as possible

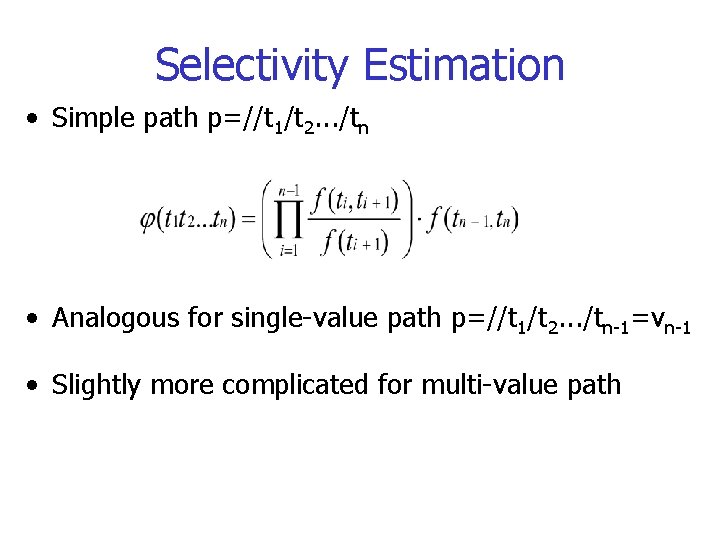

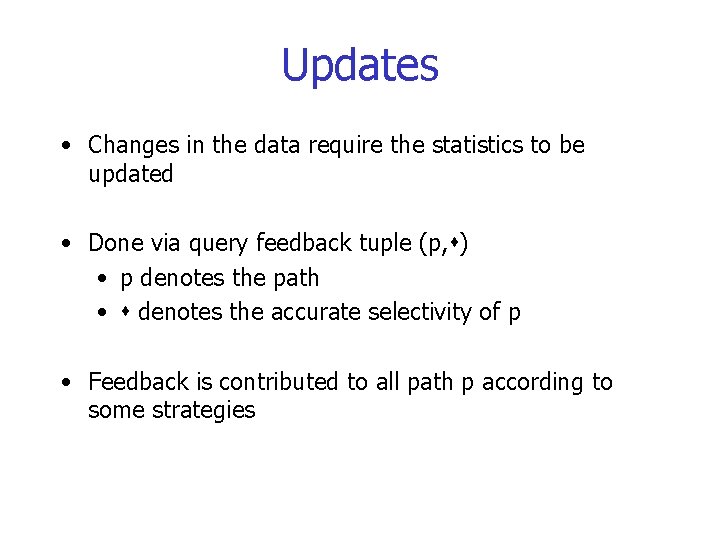

Example, k=1
[Figure: path tree A (1) with children B (6) and C (3); B has the values v2 (count 1) and v3 (count 3), C has the value v1 (count 1)]
Data value v1 begins with the letter 'a', v2 with the letter 'b'.

Tag    Count
A      1
A B    6
A C    3

Tag   Value   Count
B     v3      3

Tag   Feat.   Sum   #pairs
B     b       1     1
C     a       1     1
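The split into an exact table and a bucket table can be sketched in a few lines. In this illustration the feature is the first letter of the value, as in the example; the concrete value strings ("alpha" for v1, "beta" for v2, "gamma" for v3) and the function name `summarize_values` are assumptions.

```python
from collections import defaultdict

def summarize_values(pair_counts, k, feature=lambda value: value[0]):
    """Keep the k most frequent (tag, value) pairs exactly; bucket the rest."""
    ranked = sorted(pair_counts.items(), key=lambda item: item[1], reverse=True)
    exact = dict(ranked[:k])
    buckets = defaultdict(lambda: [0, 0])  # (tag, feature) -> [sum, #pairs]
    for (tag, value), count in ranked[k:]:
        bucket = buckets[(tag, feature(value))]
        bucket[0] += count
        bucket[1] += 1
    return exact, dict(buckets)

# Hypothetical values: v1 = "alpha", v2 = "beta", v3 = "gamma".
pair_counts = {("B", "beta"): 1, ("B", "gamma"): 3, ("C", "alpha"): 1}
exact, buckets = summarize_values(pair_counts, k=1)

print(exact)    # {('B', 'gamma'): 3}
print(buckets)  # {('B', 'b'): [1, 1], ('C', 'a'): [1, 1]}
```

A bucket stores the sum and the number of pairs, so the count of an individual bucketed pair can later be approximated by sum / #pairs.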

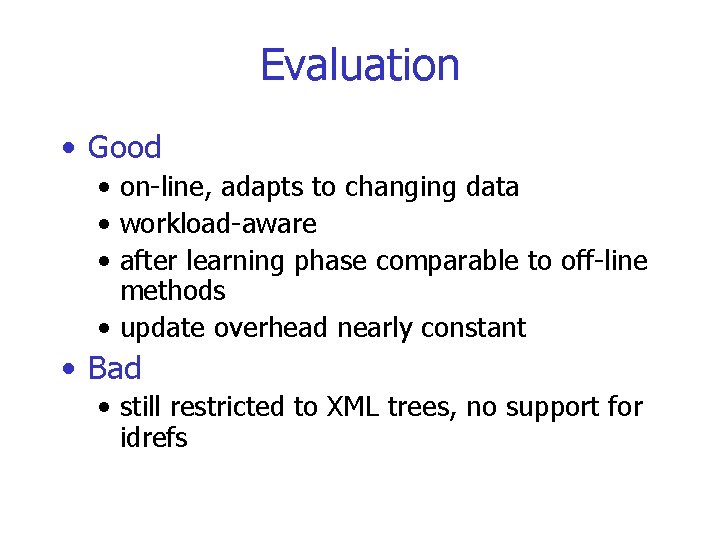

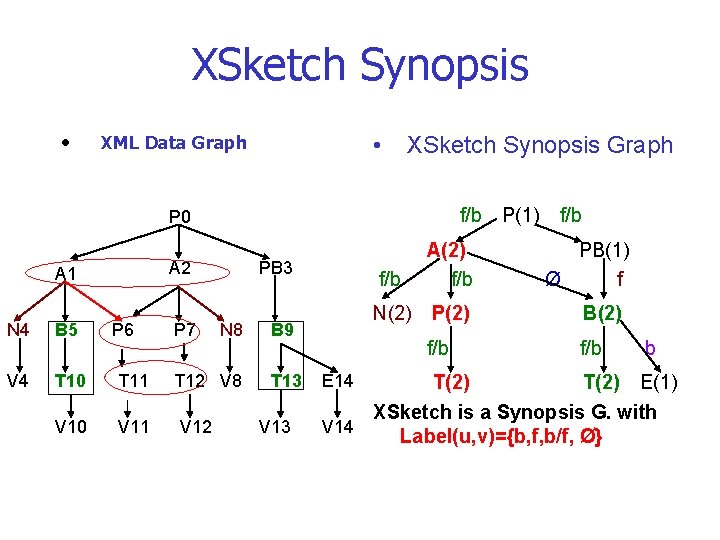

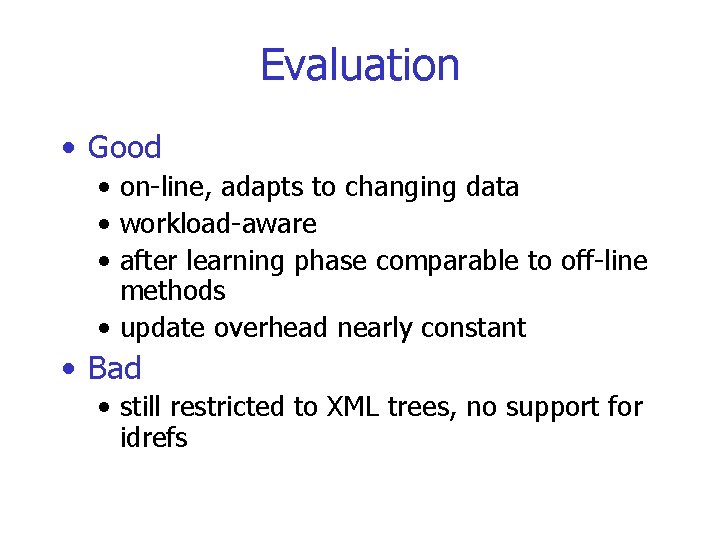

![Selectivity Estimation (formula slide)](https://slidetodoc.com/presentation_image/56657412f12858ff7cdd962f5cbb4045/image-33.jpg)

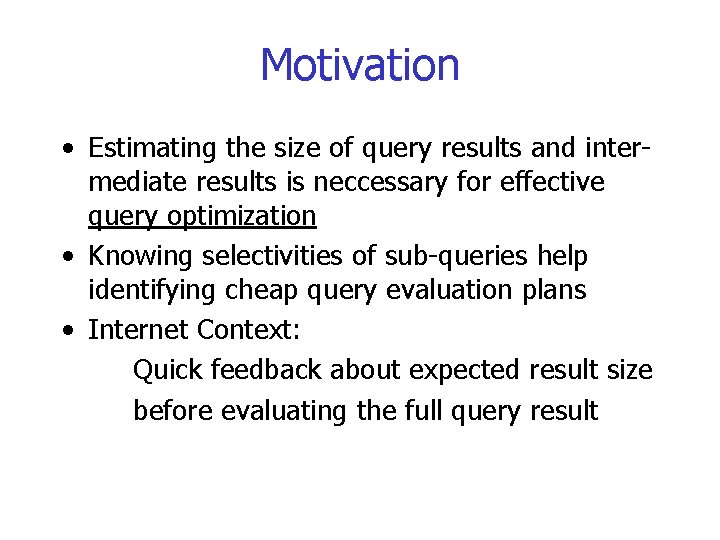

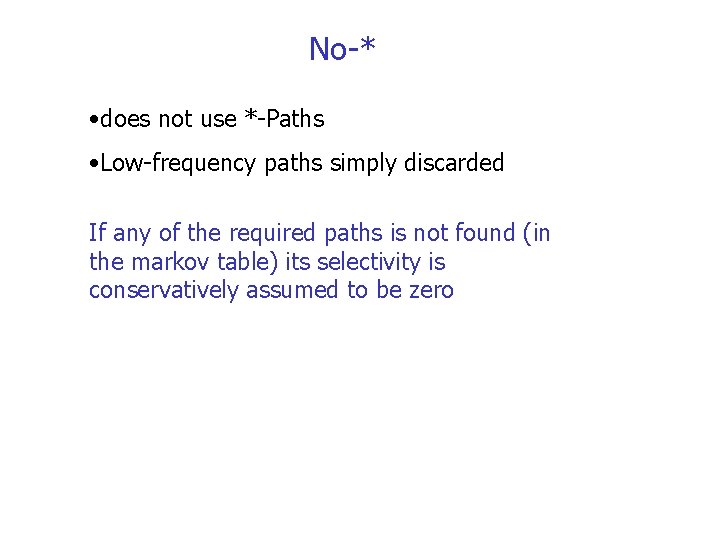

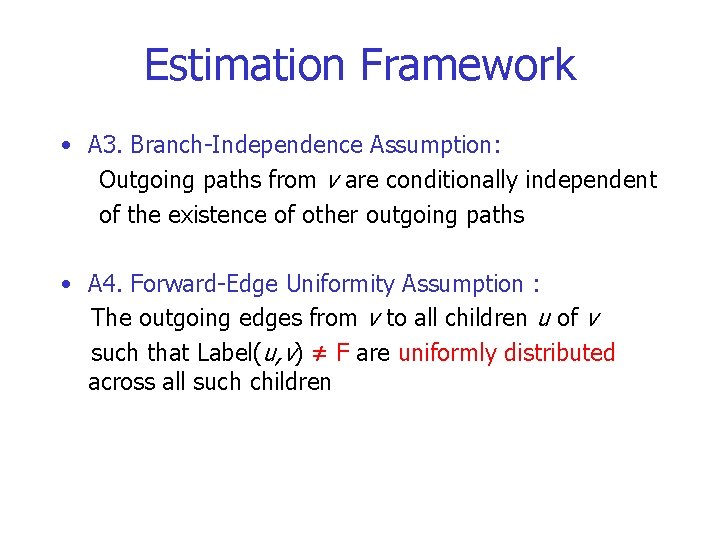

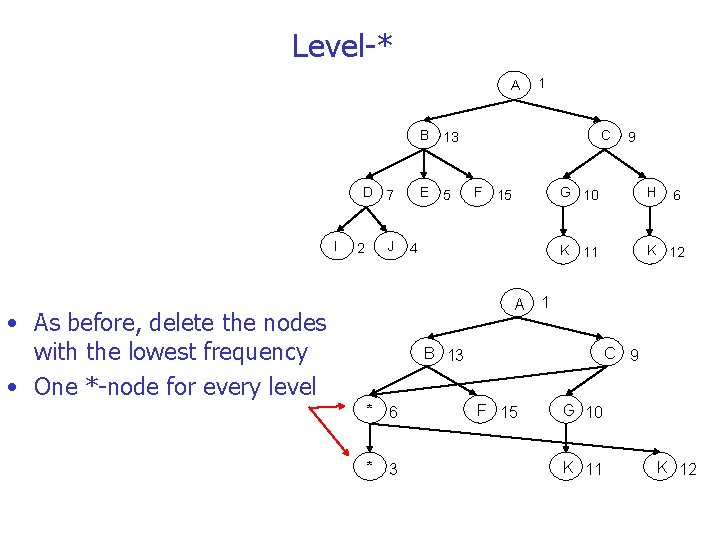

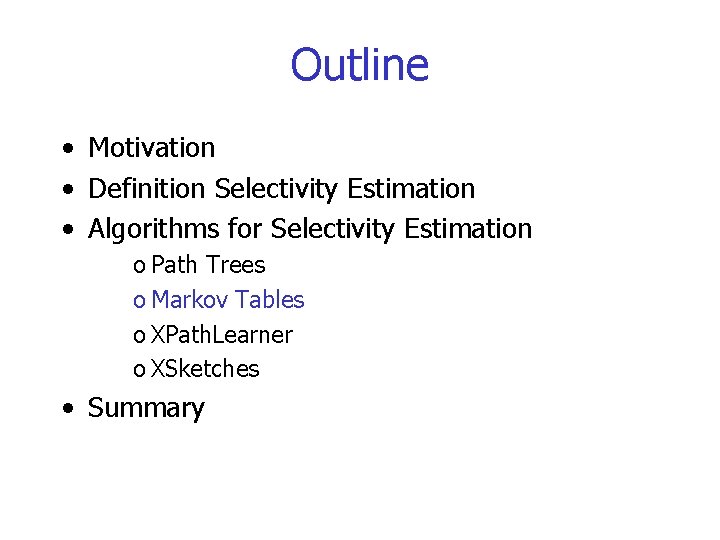

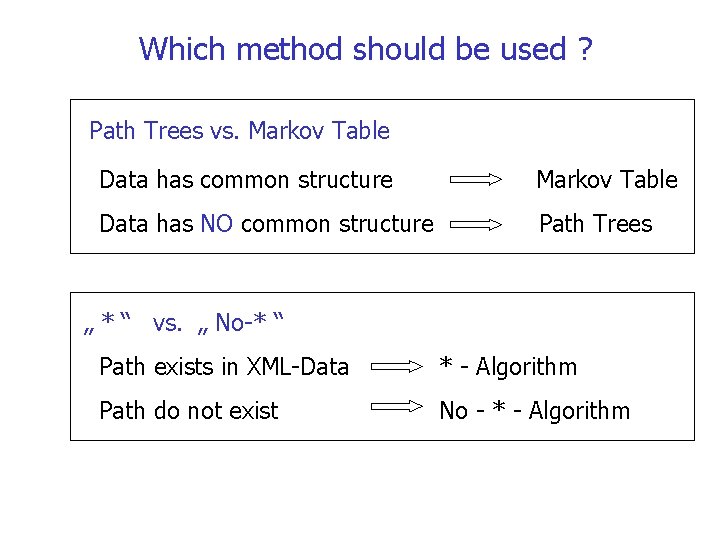

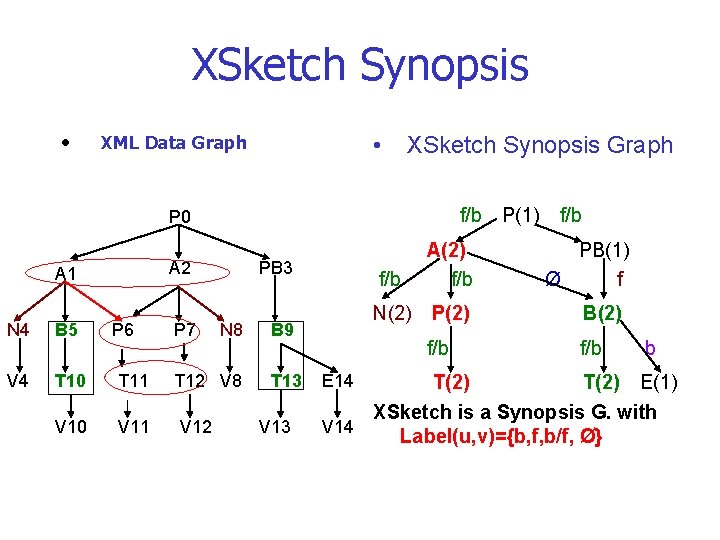

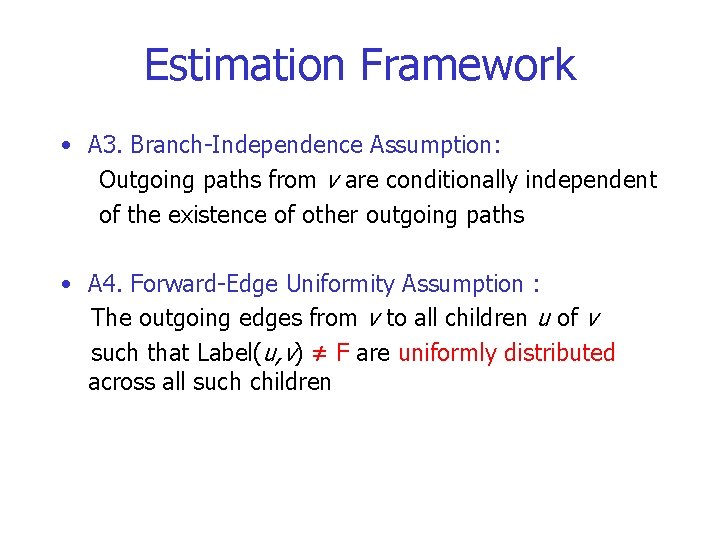

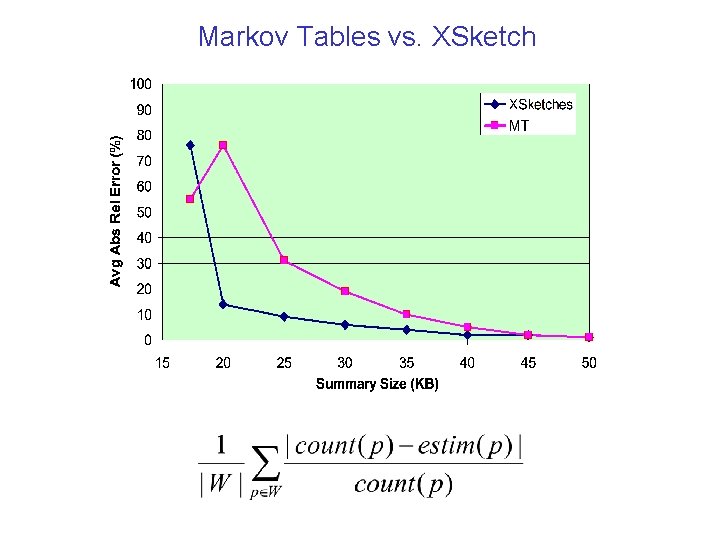

Selectivity Estimation
• P[tn]: probability of tag tn occurring in the XML data tree
• N: total number of nodes in the XML data tree
• P[ti|ti+1]: probability of tag ti occurring before tag ti+1
• The factors of the formula are expectations: one for the occurrence of tag tn, and one for the occurrence of tag ti before tag ti+1 (if n = 2, ti+1 = tn)

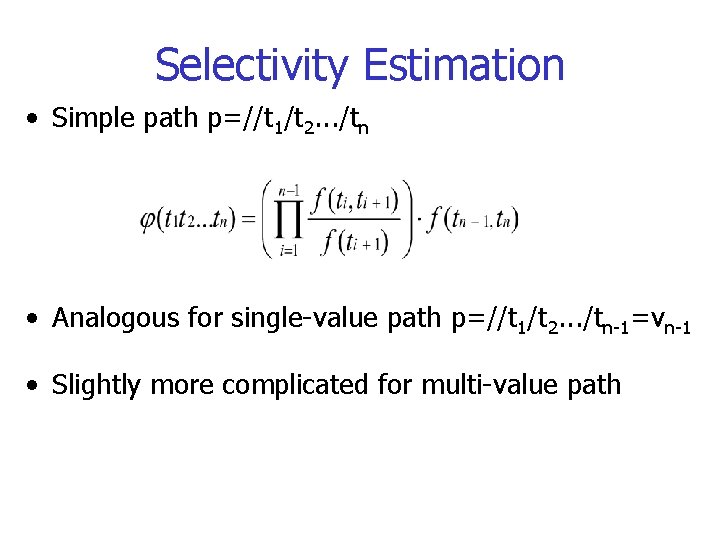

Selectivity Estimation
• Simple path p = //t1/t2/…/tn
• Analogous for a single-value path p = //t1/t2/…/tn-1=vn-1
• Slightly more complicated for a multi-value path

Example
Real selectivity = 3

Tag    Count
A      1
A B    6
A C    3

Tag   Value   Count
B     v3      3

Tag   Feat.   Sum   #pairs
B     b       1     1
C     a       1     1

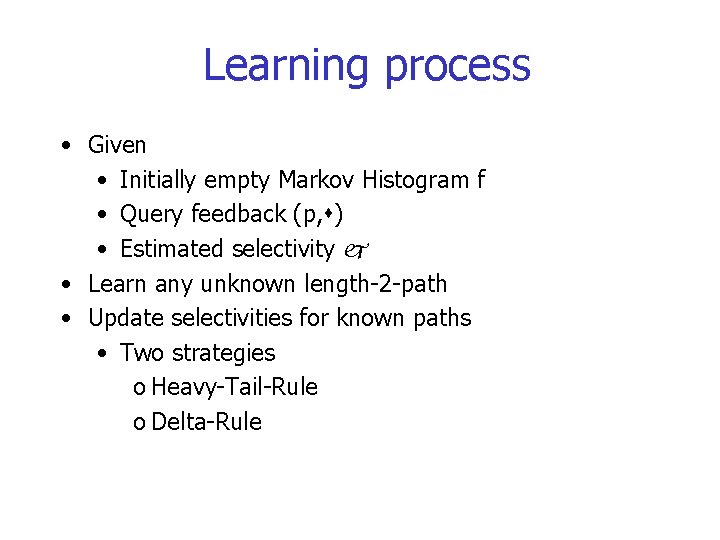

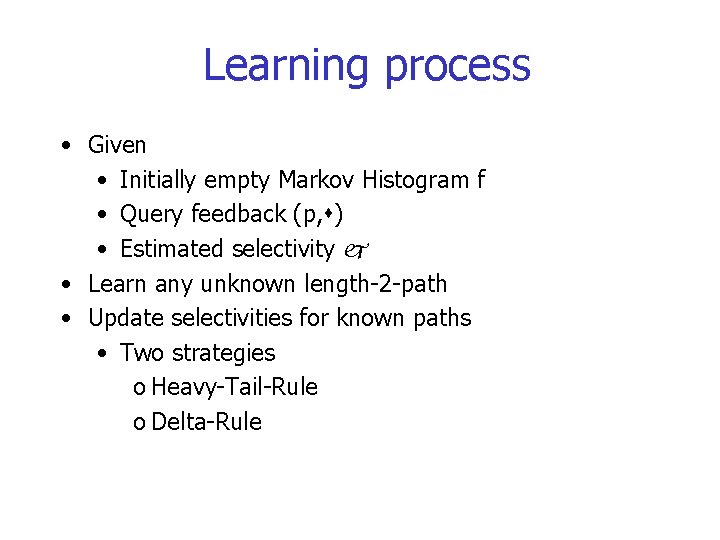

Updates
• Changes in the data require the statistics to be updated
• Done via a query feedback tuple (p, σ)
  o p denotes the path
  o σ denotes the accurate selectivity of p
• The feedback is attributed to the sub-paths of p according to an update strategy

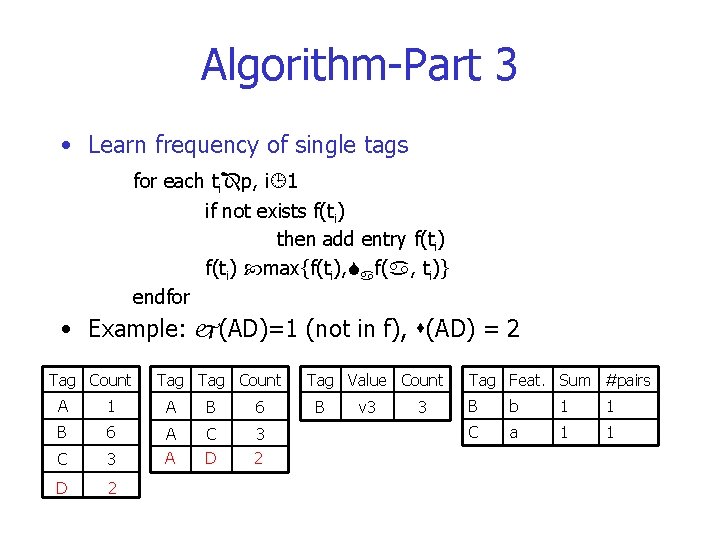

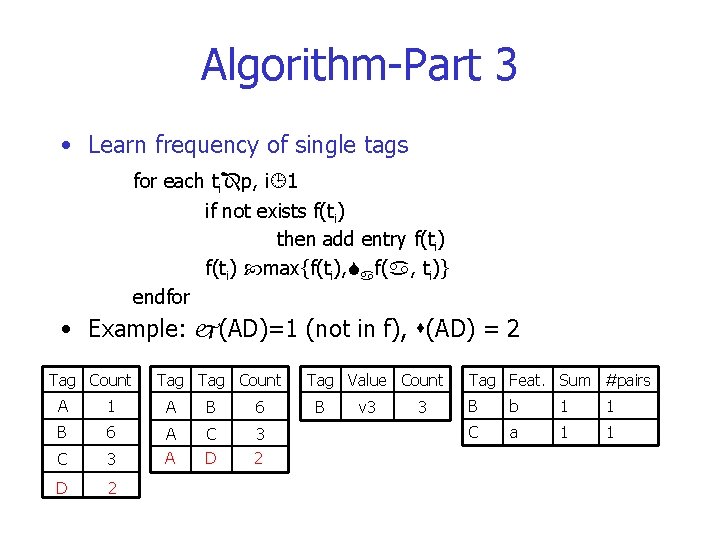

Learning process
• Given
  o An initially empty Markov histogram f
  o Query feedback (p, σ)
  o Estimated selectivity σ̂
• Learn any unknown length-2 path
• Update the selectivities of known paths
• Two strategies
  o Heavy-Tail-Rule
  o Delta-Rule

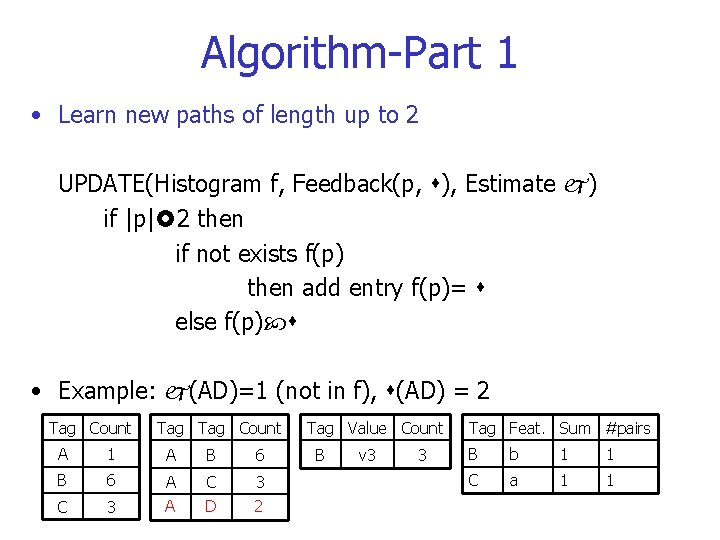

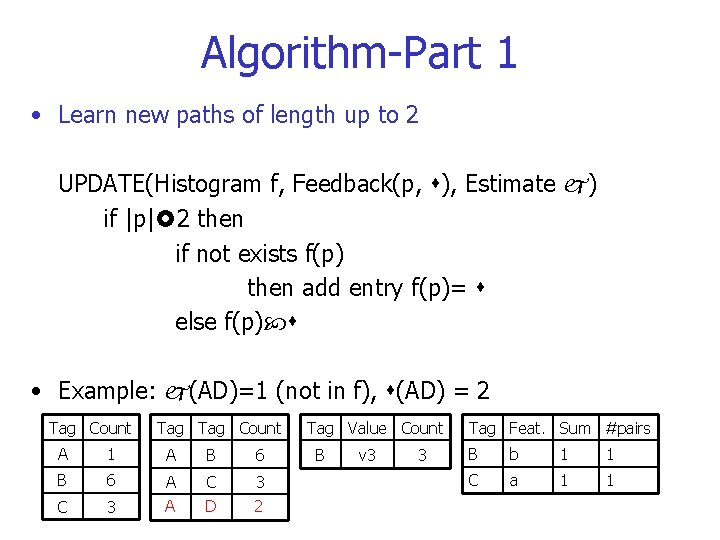

Algorithm, Part 1
• Learn new paths of length up to 2

UPDATE(Histogram f, Feedback (p, σ), Estimate σ̂)
  if |p| ≤ 2 then
    if not exists f(p) then add entry f(p) = σ
    else f(p) ← σ

• Example: σ̂(AD) = 1 (AD not in f), σ(AD) = 2

Tag    Count
A      1
A B    6
A C    3
A D    2

Tag   Value   Count
B     v3      3

Tag   Feat.   Sum   #pairs
B     b       1     1
C     a       1     1

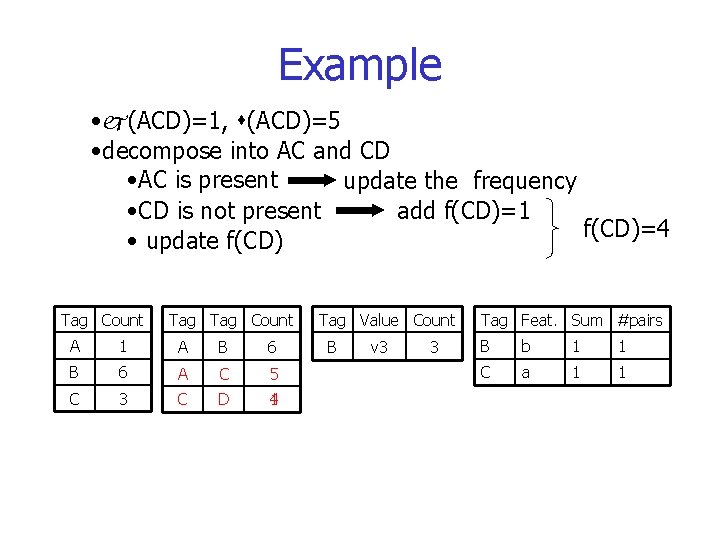

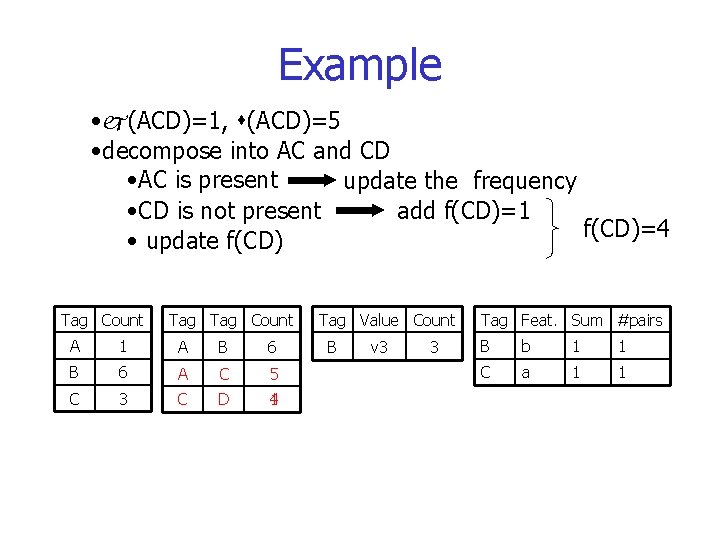

Algorithm, Part 2
• Learn longer paths (decompose into paths of length 2)

  else
    for each (ti, ti+1) ∈ p
      if not exists f(ti, ti+1) then add entry f(ti, ti+1) = 1
      f(ti, ti+1) ← update
    endfor

• The update of f(ti, ti+1) depends on the update strategy

Example
• σ̂(ACD) = 1, σ(ACD) = 5
• Decompose into AC and CD
• AC is present: update the frequency
• CD is not present: add f(CD) = 1
• Update f(CD): f(CD) = 4

Tag    Count
A      1
A B    6
A C    3 → 5
C D    1 → 4

Tag   Value   Count
B     v3      3

Tag   Feat.   Sum   #pairs
B     b       1     1
C     a       1     1

Algorithm, Part 3
• Learn the frequency of single tags

  for each ti ∈ p (the condition on the index i is lost in the extracted text)
    if not exists f(ti) then add entry
    f(ti) ← max{f(ti), f(·, ti)}
  endfor

• Example: σ̂(AD) = 1 (AD not in f), σ(AD) = 2

Tag    Count
A      1
A B    6
A C    3
A D    2
D      2

Tag   Value   Count
B     v3      3

Tag   Feat.   Sum   #pairs
B     b       1     1
C     a       1     1
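The three parts can be put together in one function. This is a sketch under stated assumptions, not the authors' code: the histogram is a plain dict keyed by tag tuples, the pluggable `strategy` callable and its signature are hypothetical, the single-tag step is applied to every tag of the path, and f(·, ti) is read as the sum of all stored pair counts that end in ti.

```python
def update(f, path, sigma, sigma_hat, strategy):
    """Apply one feedback (path, sigma) to the histogram f (tag tuple -> count)."""
    path = tuple(path)
    if len(path) <= 2:
        # Part 1: short paths are stored directly with the observed selectivity.
        f[path] = sigma
    else:
        # Part 2: decompose into pairs, learn unknown pairs, then adjust them.
        pairs = list(zip(path, path[1:]))
        for pair in pairs:
            f.setdefault(pair, 1)
        strategy(f, pairs, sigma, sigma_hat)
    # Part 3: a single tag occurs at least as often as all pairs ending in it.
    for tag in path:
        incoming = sum(c for key, c in f.items() if len(key) == 2 and key[1] == tag)
        f[(tag,)] = max(f.get((tag,), 0), incoming)
    return f

def no_adjustment(f, pairs, sigma, sigma_hat):
    """Placeholder strategy that leaves the pair counts unchanged."""

f = {("A",): 1, ("A", "B"): 6, ("A", "C"): 3}
update(f, ("A", "D"), sigma=2, sigma_hat=1, strategy=no_adjustment)
print(f[("A", "D")], f[("D",)])  # 2 2
```

With the feedback σ(AD) = 2 the histogram gains the entries A D = 2 and D = 2, as in the example tables. A real strategy such as the Heavy-Tail-Rule or the Delta-Rule would be passed in place of `no_adjustment`.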

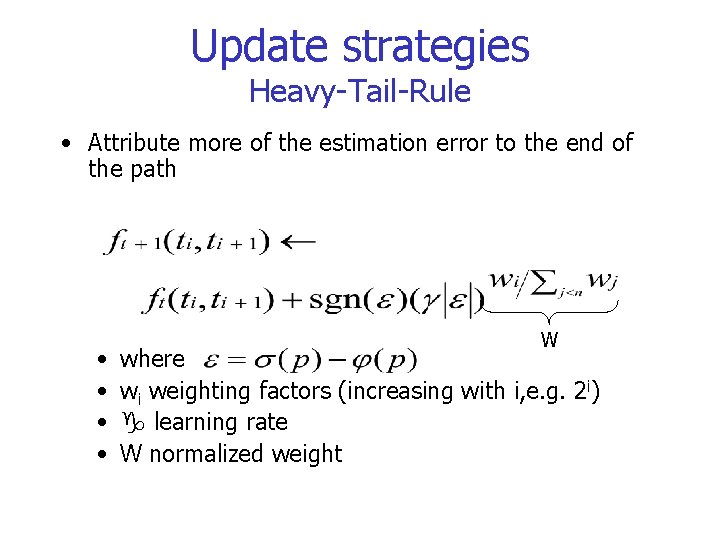

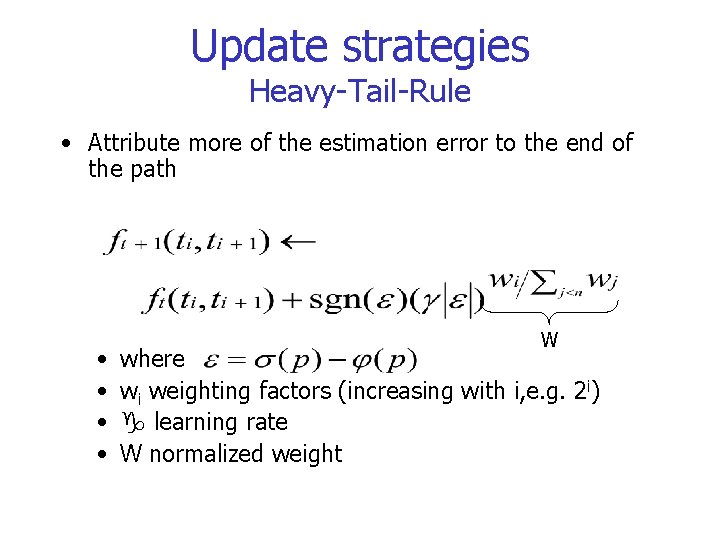

Update strategies: Heavy-Tail-Rule
• Attribute more of the estimation error to the end of the path
• [Update formula shown only on the slide image]
• where the wi are weighting factors (increasing with i, e.g. 2^i), the learning rate scales the update, and W is the normalized weight
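Since the update formula is not part of the extracted text, the following is only one possible form that fits the description: the estimation error σ − σ̂ is split over the pairs of the path in proportion to weights wi = 2^i, scaled by a learning rate. The name `heavy_tail`, the parameter `gamma` and the normalization W = Σ wi are assumptions, and the result is not claimed to reproduce the numbers in the slide's example.

```python
def heavy_tail(f, pairs, sigma, sigma_hat, gamma=1.0):
    """Distribute the error (sigma - sigma_hat) over `pairs`, favouring later pairs."""
    weights = [2 ** i for i in range(1, len(pairs) + 1)]  # w_i grows with i
    total = sum(weights)                                  # assumed normaliser W
    for pair, w in zip(pairs, weights):
        f[pair] = f.get(pair, 1) + gamma * (sigma - sigma_hat) * w / total
    return f

f = {("A", "C"): 3, ("C", "D"): 1}
heavy_tail(f, [("A", "C"), ("C", "D")], sigma=5, sigma_hat=1)
print(f)
```

With two pairs the weights are 2 and 4, so the last pair of the path receives two thirds of the error and the first pair one third.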

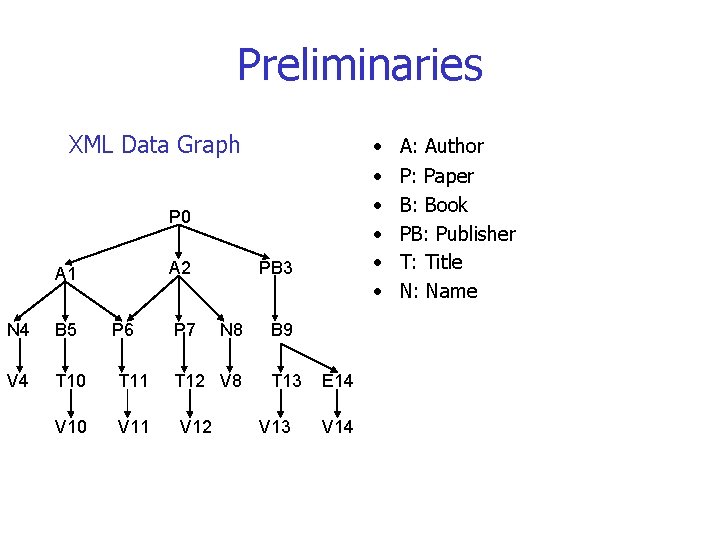

Update strategies: Delta-Rule
• Error-reduction learning technique
• Minimizes an error function E
• The update to the term f(ti, ti+1) is proportional to the negative gradient of E with respect to f(ti, ti+1)
• The learning rate determines the length of a step
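The error function is not given in the extracted text. Assuming a squared error between the real selectivity σ and the estimate σ̂, and writing γ for the learning rate (both are assumptions of this sketch), a gradient step on one histogram entry would read:

```latex
E = \tfrac{1}{2}\,\bigl(\sigma - \hat{\sigma}\bigr)^{2}

f(t_i, t_{i+1}) \;\leftarrow\; f(t_i, t_{i+1}) \;-\; \gamma\,\frac{\partial E}{\partial f(t_i, t_{i+1})}
\;=\; f(t_i, t_{i+1}) \;+\; \gamma\,\bigl(\sigma - \hat{\sigma}\bigr)\,
      \frac{\partial \hat{\sigma}}{\partial f(t_i, t_{i+1})}
```

The sign works out as expected: when the estimate is too low (σ > σ̂) and σ̂ grows with f(ti, ti+1), the entry is increased.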

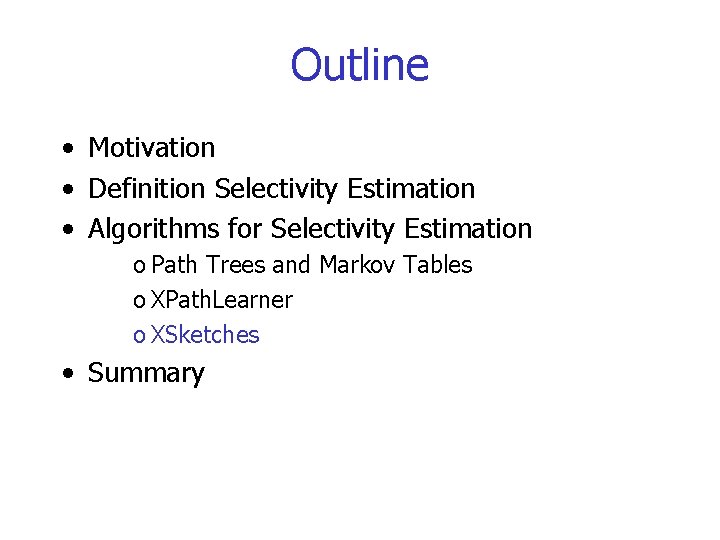

Evaluation • Good • on-line, adapts to changing data • workload-aware • after learning phase comparable to off-line methods • update overhead nearly constant • Bad • still restricted to XML trees, no support for idrefs

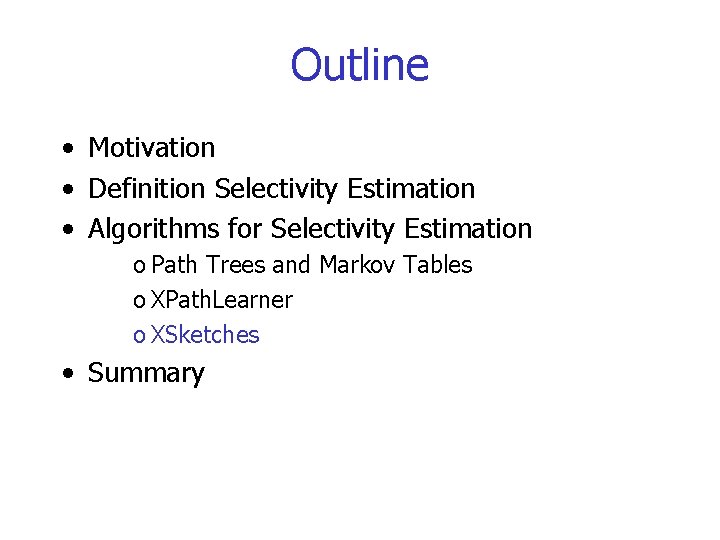

Outline • Motivation • Definition Selectivity Estimation • Algorithms for Selectivity Estimation o Path Trees and Markov Tables o XPathLearner o XSketches • Summary

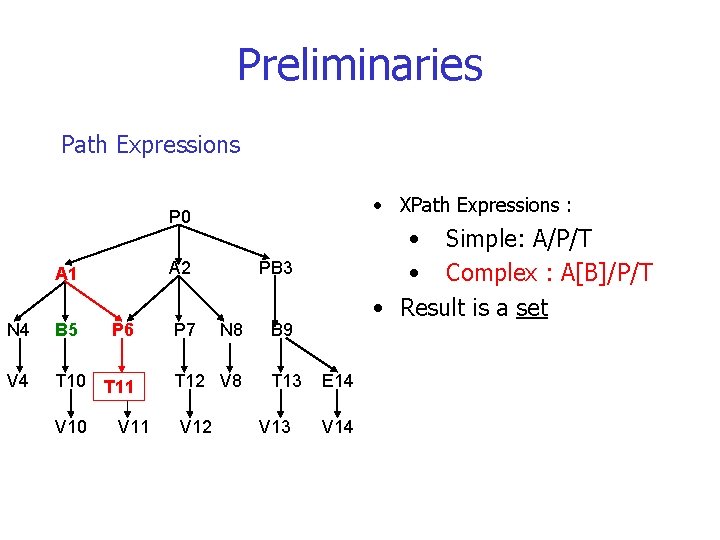

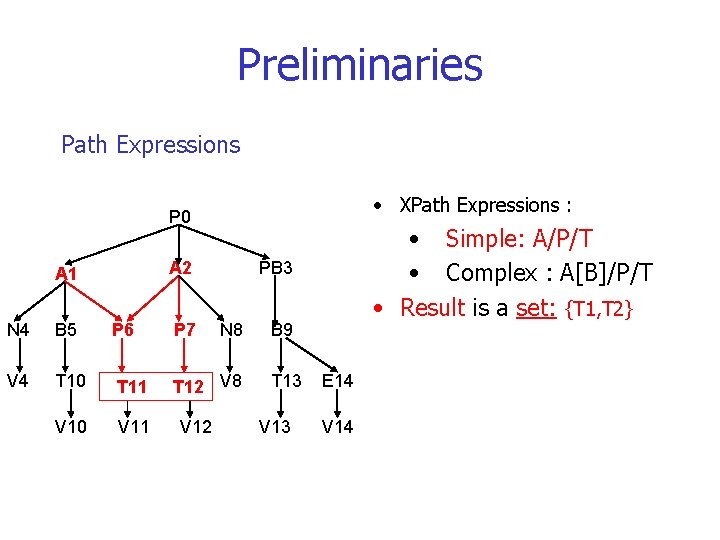

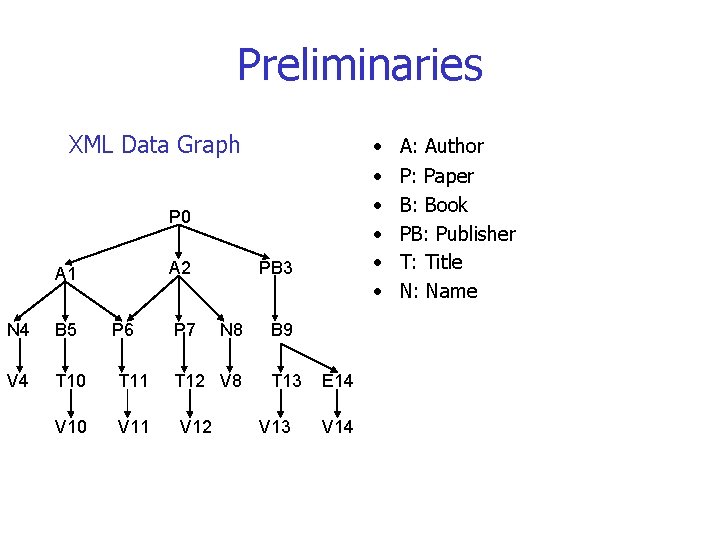

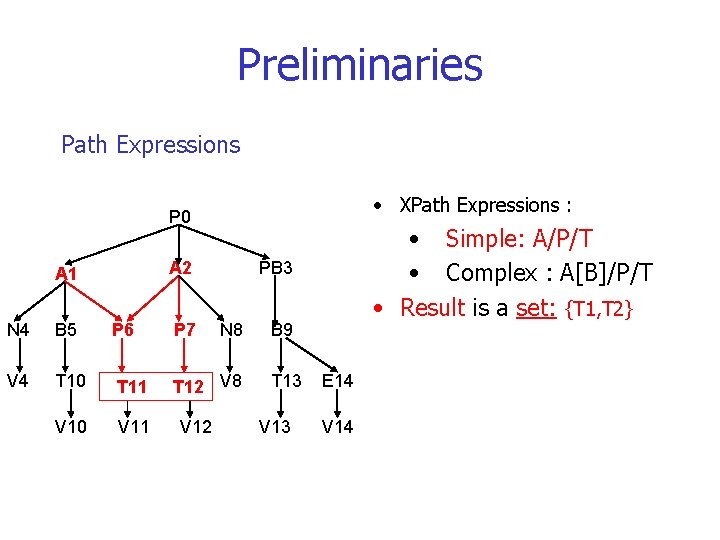

Preliminaries: XML Data Graph
[Figure: XML data graph with element nodes P0, A1, A2, PB3, N4, B5, P6, P7, N8, B9, T10, T11, T12, T13, E14 and value nodes V4, V8, V10, V11, V12, V13, V14]
Legend: A: Author, P: Paper, B: Book, PB: Publisher, T: Title, N: Name


Preliminaries: Path Expressions
• XPath expressions:
  o Simple: A/P/T
  o Complex: A[B]/P/T
• The result is a set: {T1, T2}
[Figure: the XML data graph with the matching nodes highlighted]

Preliminaries • Motivation Selectivity Estimation over XML Data Graphs • Outline o XSketch Synopsis o Estimation Framework o XSketch Refinement Operations o Experiment

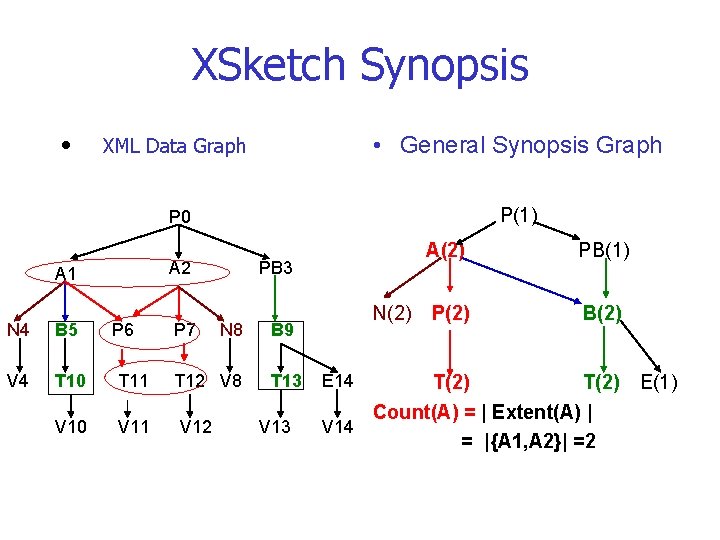

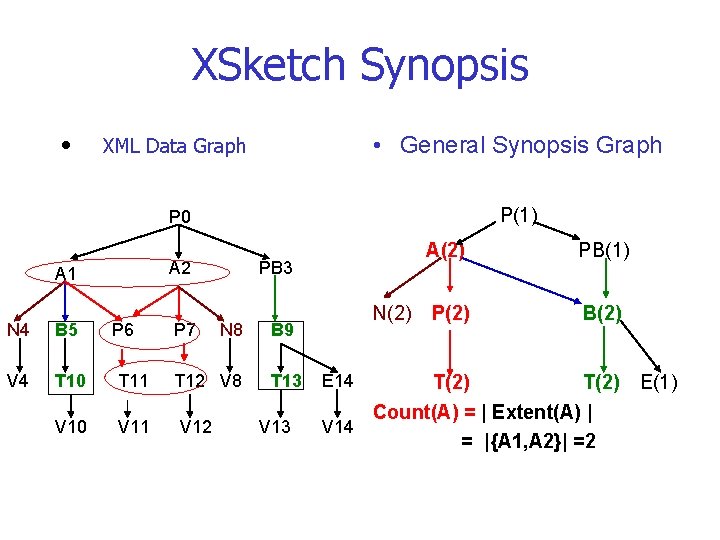

XSketch Synopsis
• XML Data Graph
• General Synopsis Graph
[Figure: the XML data graph next to its synopsis graph with nodes P(1), A(2), PB(1), N(2), P(2), B(2), T(2), E(1)]
Count(A) = |Extent(A)| = |{A1, A2}| = 2

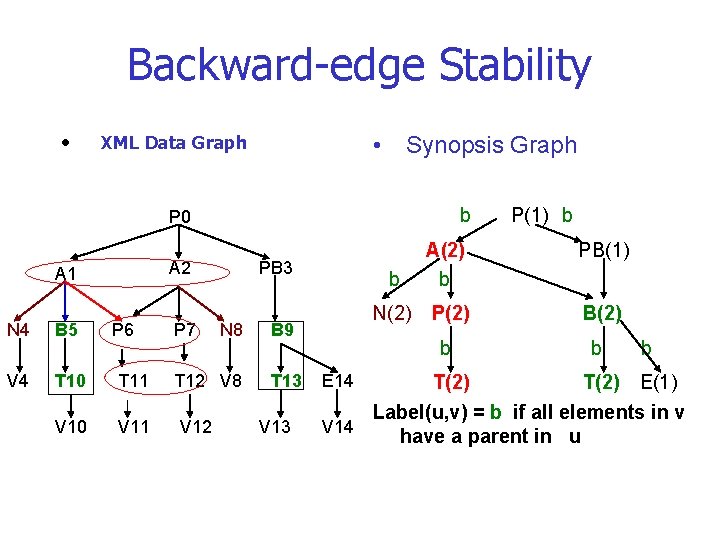

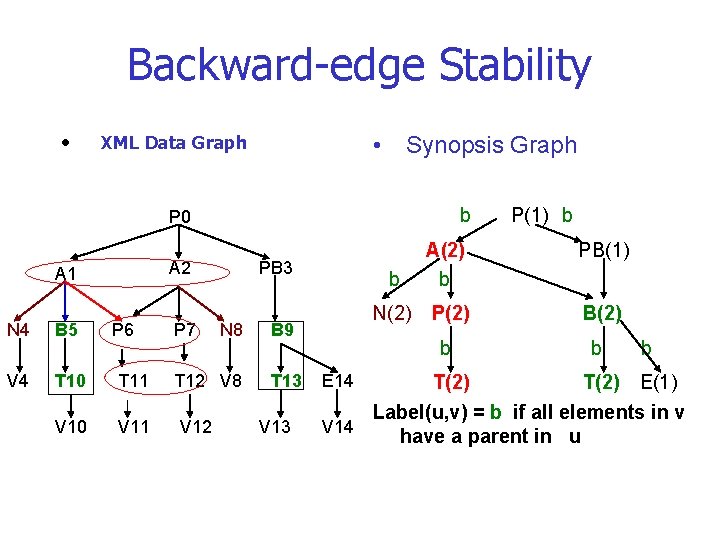

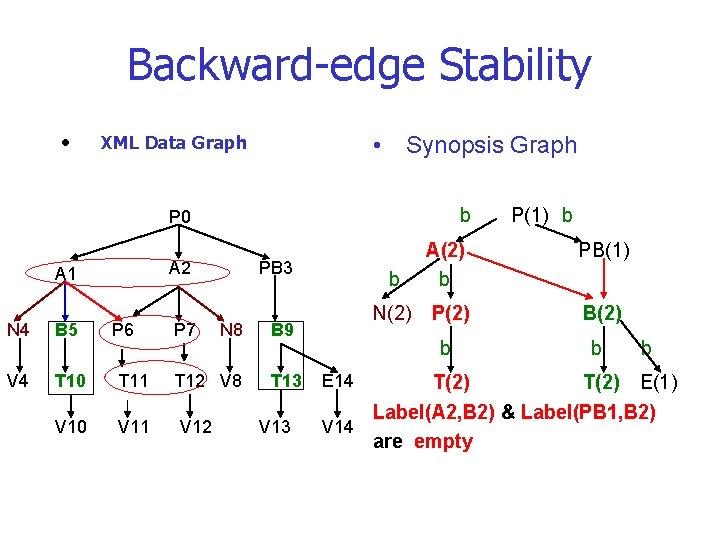

Backward-edge Stability
• XML Data Graph
• Synopsis Graph
[Figure: synopsis graph with nodes P(1), A(2), PB(1), N(2), P(2), B(2), T(2), E(1); backward-stable edges are labelled b]
Label(u, v) = b if all elements in v have a parent in u

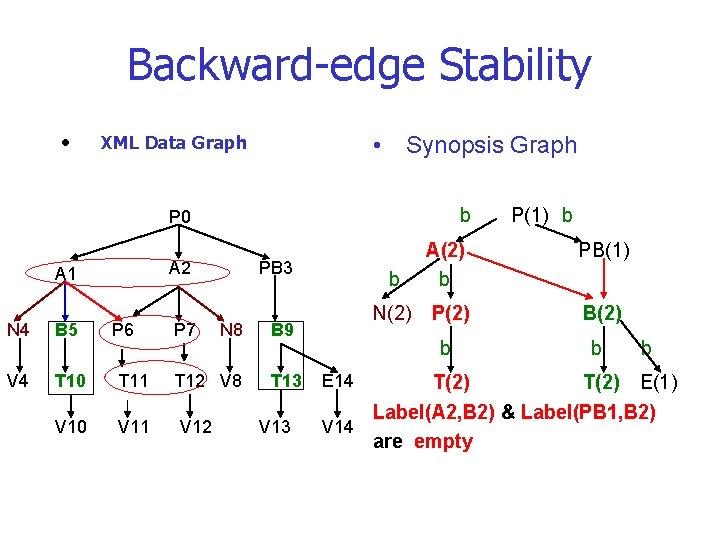

Backward-edge Stability
• XML Data Graph
• Synopsis Graph
Label(A(2), B(2)) and Label(PB(1), B(2)) are empty: not every element of B(2) has a parent in A(2), and not every element of B(2) has a parent in PB(1)

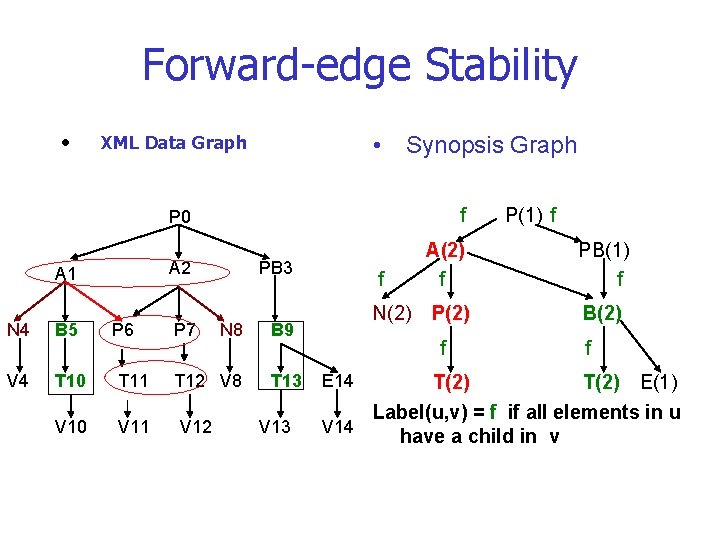

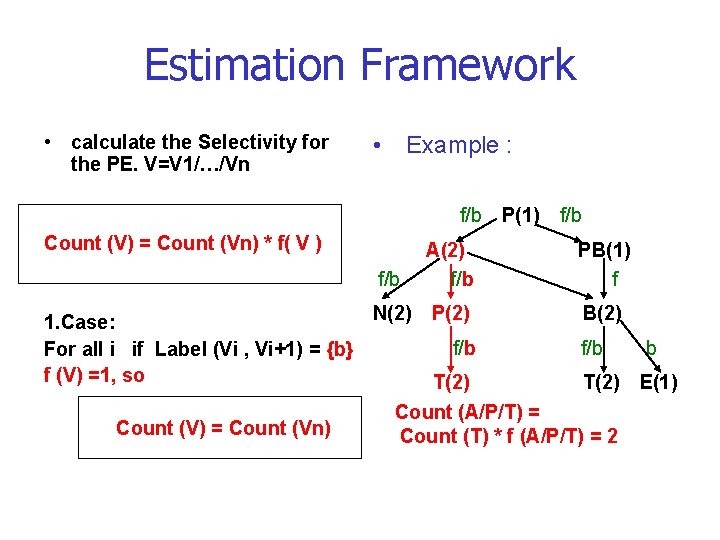

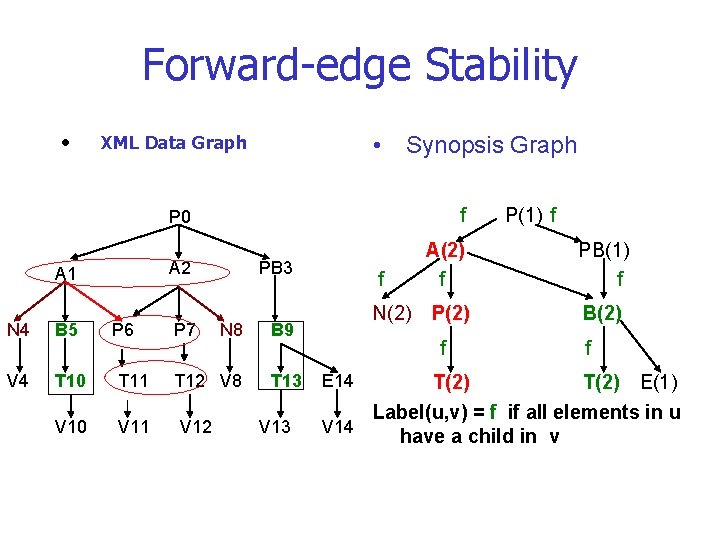

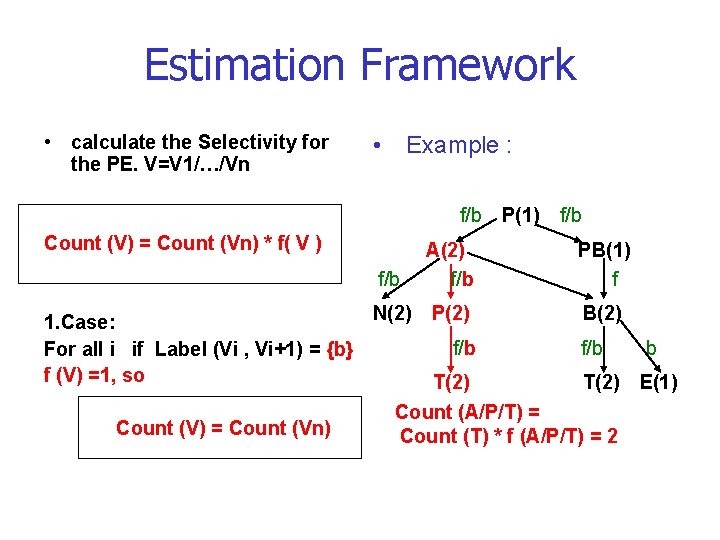

Forward-edge Stability
• XML Data Graph
• Synopsis Graph
[Figure: synopsis graph with nodes P(1), A(2), PB(1), N(2), P(2), B(2), T(2), E(1); forward-stable edges are labelled f]
Label(u, v) = f if all elements in u have a child in v

Forward-edge Stability
• XML Data Graph
• Synopsis Graph
B9 is in B(2) but has no child in E(1), so the edge from B(2) to E(1) is not forward-stable

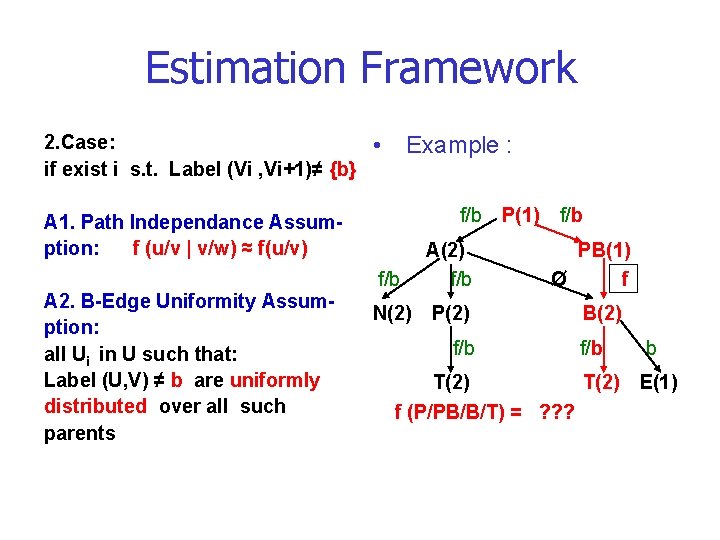

XSketch Synopsis
• XML Data Graph
• XSketch Synopsis Graph
[Figure: synopsis graph with nodes P(1), A(2), PB(1), N(2), P(2), B(2), T(2), E(1) and edge labels f/b, f, b and Ø]
An XSketch is a synopsis graph in which every edge carries a label Label(u, v) ∈ {b, f, b/f, Ø}
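The two stability definitions translate directly into code. The sketch below computes the label of every synopsis edge from a partition of the elements (`extent`) and the parent-child edges of the data graph (`children`). The small graph is hypothetical: it reuses the element names of the slides, but its edges and the split of the titles into two synopsis nodes are assumptions. The function name `edge_labels` is also illustrative.

```python
def edge_labels(extent, children):
    """Return {(u, v): set of 'b'/'f'} for every synopsis edge u -> v."""
    owner = {e: node for node, elems in extent.items() for e in elems}
    parents = {}
    for p, cs in children.items():
        for c in cs:
            parents.setdefault(c, set()).add(p)

    labels = {}
    for p, cs in children.items():
        for c in cs:
            labels.setdefault((owner[p], owner[c]), set())
    for (u, v), label in labels.items():
        # b: every element of v has a parent in u.
        if all(parents.get(e, set()) & extent[u] for e in extent[v]):
            label.add("b")
        # f: every element of u has a child in v.
        if all(children.get(e, set()) & extent[v] for e in extent[u]):
            label.add("f")
    return labels

# Hypothetical data graph (element -> set of child elements).
children = {
    "P0": {"A1", "A2", "PB3"}, "A1": {"N4", "B5", "P6"}, "A2": {"N8", "P7"},
    "PB3": {"B9"}, "P6": {"T10"}, "P7": {"T11"}, "B5": {"T12", "E14"}, "B9": {"T13"},
}
extent = {
    "P_root": {"P0"}, "A": {"A1", "A2"}, "PB": {"PB3"}, "N": {"N4", "N8"},
    "P": {"P6", "P7"}, "B": {"B5", "B9"}, "T_P": {"T10", "T11"},
    "T_B": {"T12", "T13"}, "E": {"E14"},
}

labels = edge_labels(extent, children)
for edge in [("P_root", "A"), ("A", "B"), ("PB", "B"), ("B", "E")]:
    print(edge, sorted(labels[edge]))
```

In this graph the edge A → B gets the empty label, PB → B is only forward-stable, and B → E is only backward-stable, which matches the Ø, f and b labels discussed on the slides. Count(u) is simply `len(extent[u])`.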

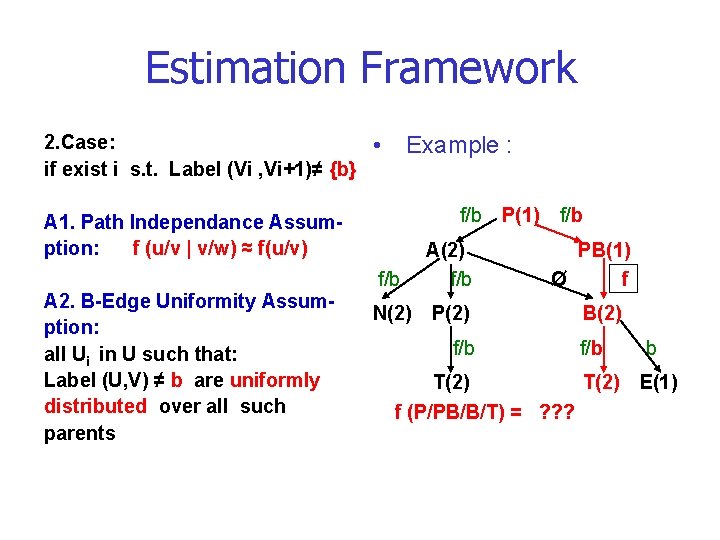

Estimation Framework
• Calculate the selectivity for the path expression V = V1/…/Vn
• Count(V) = Count(Vn) * f(V)
Case 1: Label(Vi, Vi+1) contains b for all i
• f(V) = 1, so Count(V) = Count(Vn)
• Example: Count(A/P/T) = Count(T) * f(A/P/T) = 2
[Figure: XSketch synopsis graph with edge labels]

Estimation Framework
Case 2: there exists an i such that Label(Vi, Vi+1) does not contain b
• A1. Path Independence Assumption: f(u/v | v/w) ≈ f(u/v)
• A2. B-Edge Uniformity Assumption: the elements of V are uniformly distributed over all parents Ui with Label(Ui, V) ≠ b
• Example: f(P/PB/B/T) = ?
[Figure: XSketch synopsis graph with edge labels; the edge A(2) → B(2) is labelled Ø and PB(1) → B(2) is labelled f]

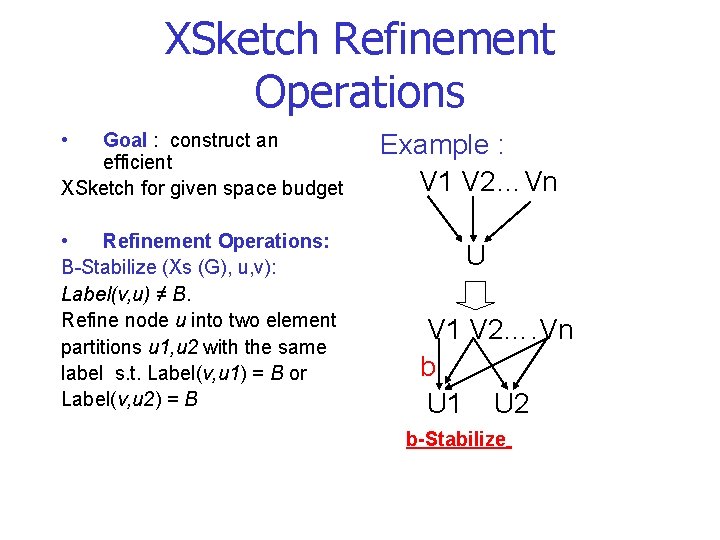

Estimation Framework
• Example: f(P/PB/B/T) = ?
f(P/PB/B/T) = f(B/T) * f(P/PB/B | B/T)
            = f(B/T) * f(PB/B | B/T) * f(P/PB | PB/B/T)
B-Stability: f(B/T) = 1 and f(P/PB | PB/B/T) = 1, so f(P/PB/B/T) = f(PB/B | B/T)
A1: f(PB/B | B/T) ≈ f(PB/B)
A2: f(PB/B) = Count(PB) / [Count(PB) + Count(A)] = 1 / (1 + 2) = 1/3
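The last step of the example is plain arithmetic over extent counts and can be checked mechanically. In this sketch the counts come from the synopsis graph of the slides; the helper name `b_edge_uniformity` and the final multiplication by Count(T), which follows the rule Count(V) = Count(Vn) * f(V), are illustrative assumptions.

```python
from fractions import Fraction

count = {"A": 2, "PB": 1, "B": 2, "T": 2}

def b_edge_uniformity(parent, unstable_parents):
    """Share of a node's elements attributed to `parent` under assumption A2."""
    return Fraction(count[parent], sum(count[p] for p in unstable_parents))

# B has two parents whose edges are not backward-stable: PB and A.
f_path = b_edge_uniformity("PB", ["PB", "A"])
print(f_path)               # 1/3
print(count["T"] * f_path)  # 2/3
```

The second value is the resulting estimate for Count(P/PB/B/T): one third of the two T elements.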

Estimation Framework
• A3. Branch-Independence Assumption: outgoing paths from v are conditionally independent of the existence of other outgoing paths
• A4. Forward-Edge Uniformity Assumption: the outgoing edges from v to all children u of v with Label(v, u) ≠ f are uniformly distributed across all such children

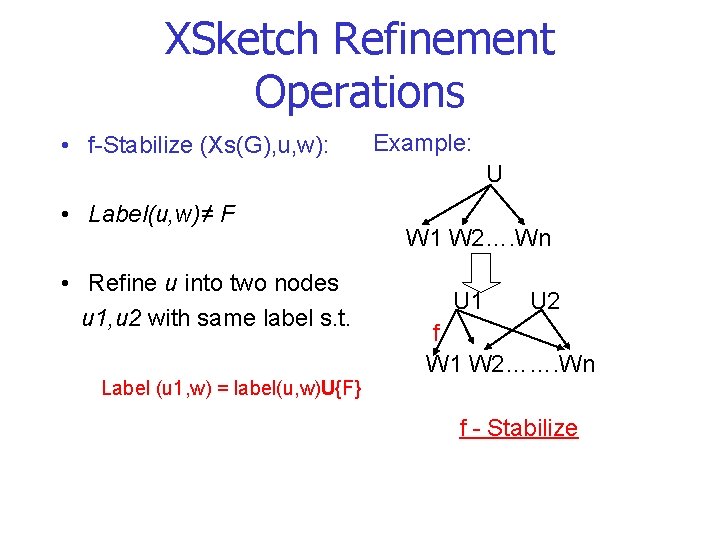

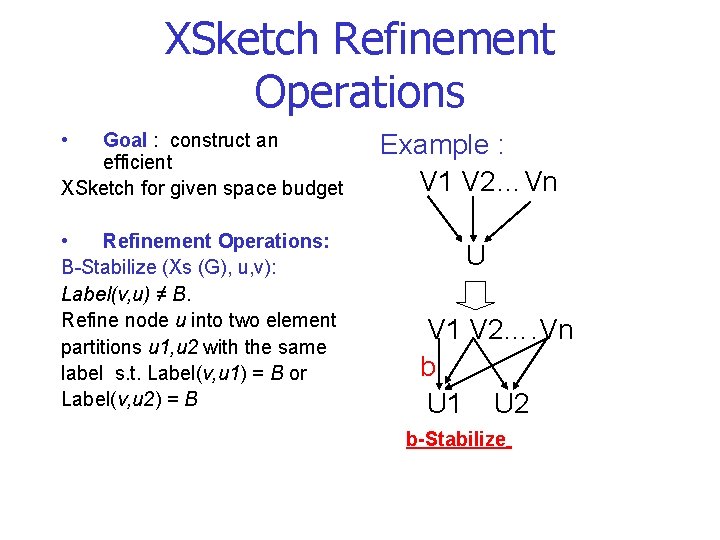

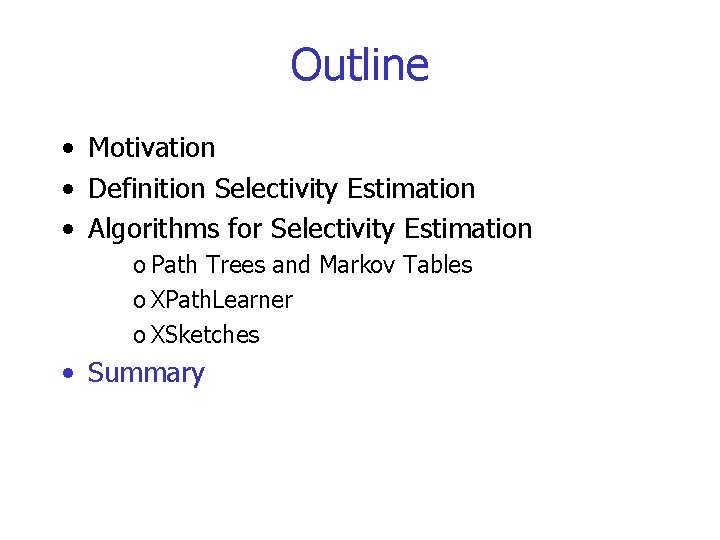

XSketch Refinement Operations
• Goal: construct an efficient XSketch for a given space budget
• Refinement operations:
  o b-Stabilize(Xs(G), u, v): Label(v, u) ≠ b. Refine node u into two element partitions u1, u2 with the same label such that Label(v, u1) = b or Label(v, u2) = b
[Figure: node U with parents V1, V2, …, Vn is split by b-Stabilize into U1 and U2, one of which has a b-labelled incoming edge]

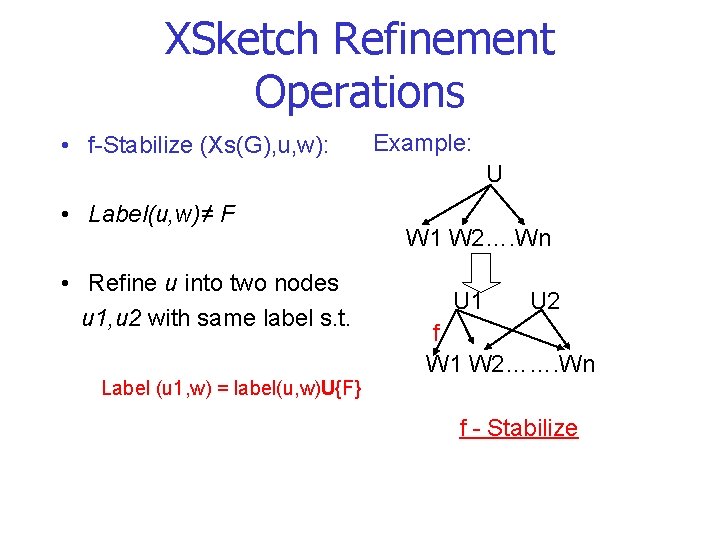

XSketch Refinement Operations
• f-Stabilize(Xs(G), u, w):
  o Label(u, w) ≠ f
  o Refine u into two nodes u1, u2 with the same label such that Label(u1, w) = Label(u, w) ∪ {f}
[Figure: node U with children W1, W2, …, Wn is split by f-Stabilize into U1 and U2, with an f-labelled edge out of U1]

XSketch Refinement Operations
• Backward Split
[Figure: node A with count c(A) and parents P1 … Pn is split into A1 with parents P1 … Pi and A2 with parents Pi+1 … Pn]

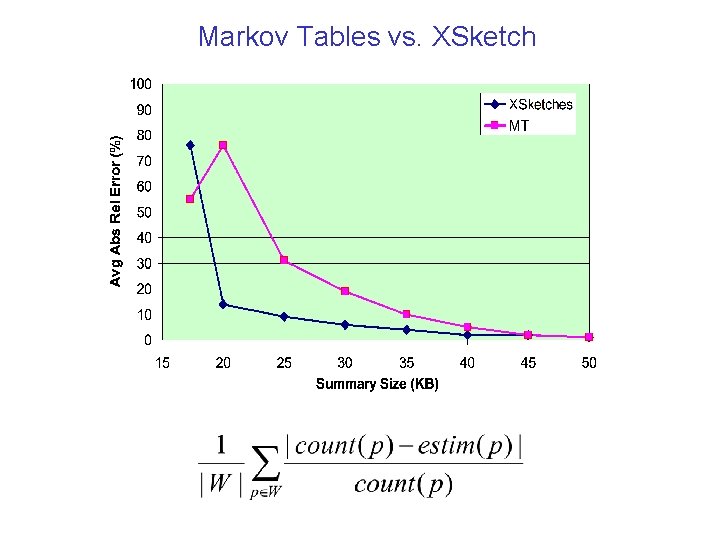

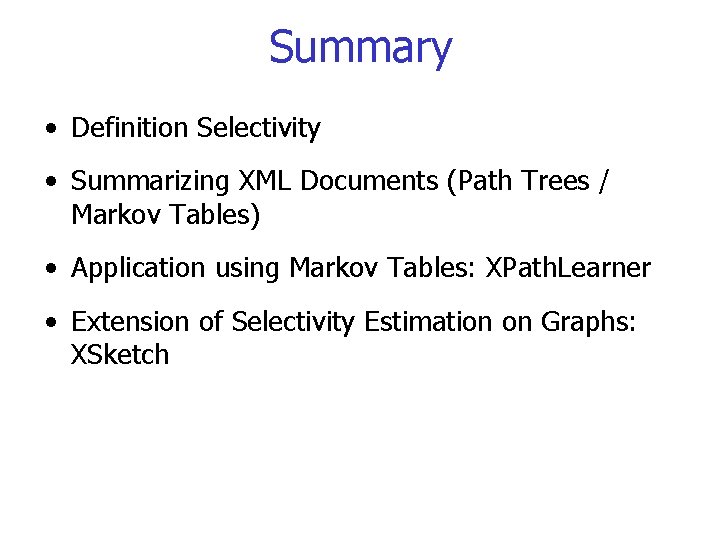

Markov Tables vs. XSketch

Outline • Motivation • Definition Selectivity Estimation • Algorithms for Selectivity Estimation o Path Trees and Markov Tables o XPathLearner o XSketches • Summary

Summary
• Definition Selectivity
• Summarizing XML Documents (Path Trees / Markov Tables)
• Application using Markov Tables: XPathLearner
• Extension of Selectivity Estimation on Graphs: XSketch

Questions?