
Ordinal Optimization

Copyright by Yu-Chi Ho

Some Additional Issues

- Design-dependent estimation noise vs. observation noise
- Goal softening to increase the alignment probability
- Constrained ordinal optimization

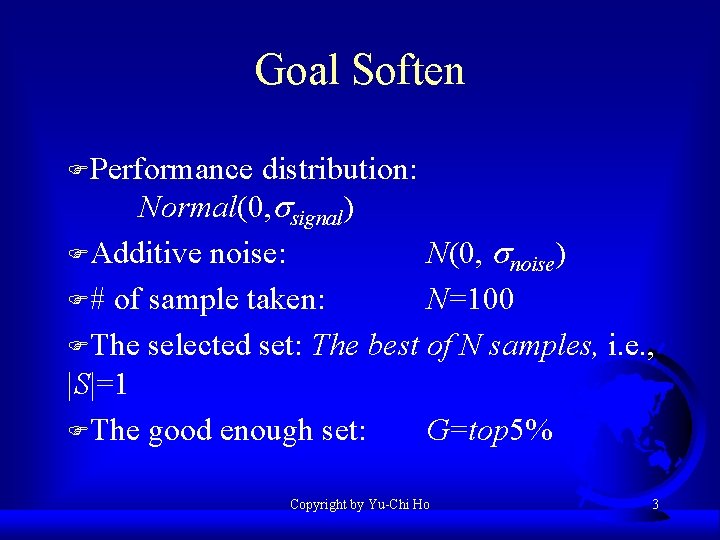

Goal Softening

- Performance distribution: $N(0, \sigma_{signal})$
- Additive noise: $N(0, \sigma_{noise})$
- Number of samples taken: $N = 100$
- Selected set: the best of the $N$ samples, i.e., $|S| = 1$
- Good-enough set: $G$ = top 5%

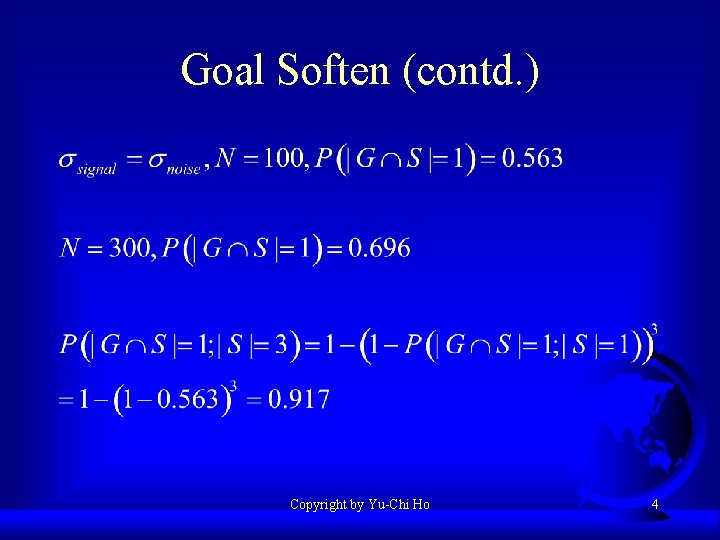

Goal Softening (contd.)
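The goal-softening setup above can be explored with a small Monte Carlo sketch. It assumes that $\sigma_{signal}$ and $\sigma_{noise}$ are standard deviations, that smaller performance values are better, and that $G$ is the true top 5% of the $N$ sampled designs; the function `alignment_probability` and its parameters are hypothetical. It estimates the alignment probability $P(|G \cap S| \ge 1)$, and makes goal softening visible: enlarging $G$ (or $S$) can only raise it.

```python
import random


def alignment_probability(sigma_signal, sigma_noise, n=100, top_frac=0.05,
                          s_size=1, trials=2000, seed=0):
    """Monte Carlo estimate of the alignment probability P(|G ∩ S| >= 1).

    Hypothetical helper. Assumptions: sigma_* are standard deviations,
    smaller performance values are better, G is the true top `top_frac`
    of the n sampled designs, and S is the observed top `s_size` designs.
    """
    rng = random.Random(seed)
    g_size = max(1, round(top_frac * n))
    hits = 0
    for _ in range(trials):
        # True performances of the n sampled designs.
        true_perf = [rng.gauss(0.0, sigma_signal) for _ in range(n)]
        # Noisy observations: true performance plus additive Gaussian noise.
        observed = [j + rng.gauss(0.0, sigma_noise) for j in true_perf]
        ranked_true = sorted(range(n), key=lambda i: true_perf[i])
        ranked_obs = sorted(range(n), key=lambda i: observed[i])
        good = set(ranked_true[:g_size])   # G: truly good-enough designs
        selected = ranked_obs[:s_size]     # S: the designs we would pick
        if any(i in good for i in selected):
            hits += 1
    return hits / trials
```

With zero noise the observed best is the true best, so the estimate is exactly 1; sweeping `sigma_noise` upward with `sigma_signal=1.0` shows the alignment probability falling, and raising `top_frac` (softening the goal) pulls it back up.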

Advanced Ordinal Optimization: Breadth vs. Depth in Searches

Y. C. Ho & X. C. Lin

October 2001, Tsinghua University, China

Fundamental Difficulties in the Design of Complex Systems

1. Time-consuming performance evaluation via simulation: the $1/\sqrt{T}$ limit
2. Combinatorially large search space: the NP-hard limit
3. Lack of structure: the No Free Lunch Theorem limit
4. Finite computational resources
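To make the $1/\sqrt{T}$ limit concrete, here is a minimal sketch assuming i.i.d. Gaussian observation noise with $\sigma = 1$; `std_error_of_mean` is a hypothetical helper that measures how much a $T$-sample average varies across independent replications. Theory predicts $\sigma/\sqrt{T}$, so quadrupling the simulation length should only halve the estimation error.

```python
import random
import statistics


def std_error_of_mean(t, replications=2000, sigma=1.0, seed=0):
    """Spread of a T-sample average across independent replications.

    Hypothetical helper; assumes i.i.d. Gaussian noise with std `sigma`.
    The expected value of the result is about sigma / sqrt(t).
    """
    rng = random.Random(seed)
    # One sample mean per replication, each averaging t noisy observations.
    means = [
        statistics.fmean(rng.gauss(0.0, sigma) for _ in range(t))
        for _ in range(replications)
    ]
    return statistics.stdev(means)
```

Comparing `std_error_of_mean(100)` with `std_error_of_mean(400)` gives a ratio close to 2, even though the second run costs four times as much.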

Premises

- It is a finite world!
- Computing resources are finite.

Breadth vs. Depth in Stochastic Optimization

- At any point in an optimization process, the question is “How do I spend my next unit of computing resources?”
  1. Make sure that the current estimated best is indeed the current best (local, or depth).
  2. Explore additional points to find a better solution (global, or breadth).
- Trade off these two sub-goals to make the best use of finite resources.

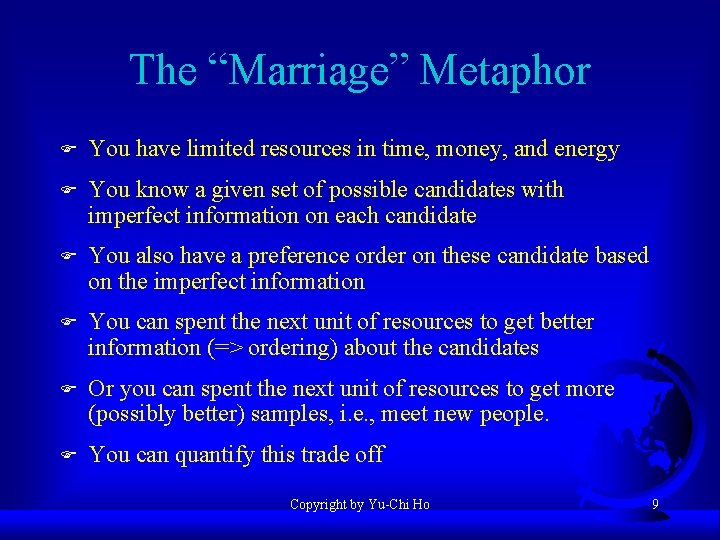

The “Marriage” Metaphor

- You have limited resources in time, money, and energy.
- You know a given set of possible candidates, with imperfect information on each candidate.
- You also have a preference order on these candidates based on the imperfect information.
- You can spend the next unit of resources to get better information (and hence a better ordering) about the candidates.
- Or you can spend the next unit of resources to get more (possibly better) samples, i.e., meet new people.
- You can quantify this trade-off.

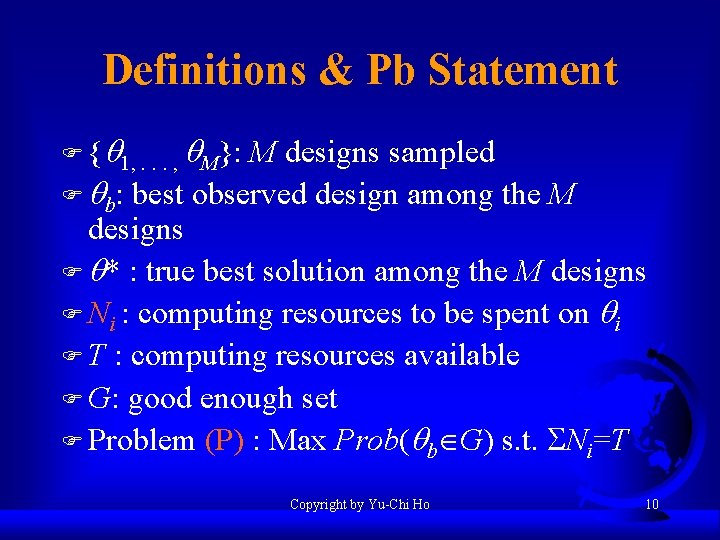

Definitions & Problem Statement

- $\{\theta_1, \dots, \theta_M\}$: the $M$ designs sampled
- $\theta_b$: the best observed design among the $M$ designs
- $\theta^*$: the true best design among the $M$ designs
- $N_i$: computing resources to be spent on $\theta_i$
- $T$: total computing resources available
- $G$: the good-enough set
- Problem (P): $\max P(\theta_b \in G)$ subject to $\sum_{i=1}^{M} N_i = T$

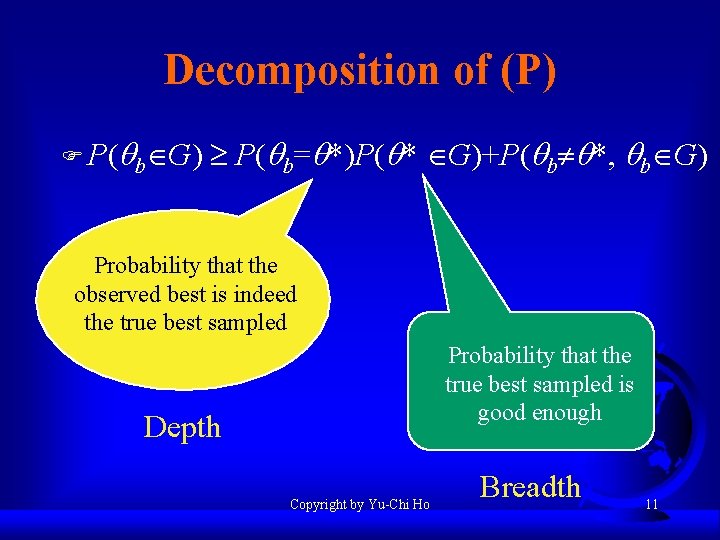

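Decomposition of (P)

$$P(\theta_b \in G) \approx P(\theta_b = \theta^*)\,P(\theta^* \in G) + P(\theta_b \neq \theta^*,\ \theta_b \in G)$$

- Depth, $P(\theta_b = \theta^*)$: probability that the observed best is indeed the true best among the designs sampled
- Breadth, $P(\theta^* \in G)$: probability that the true best among the designs sampled is good enough
- The product form of the first term treats the depth and breadth events as independent.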

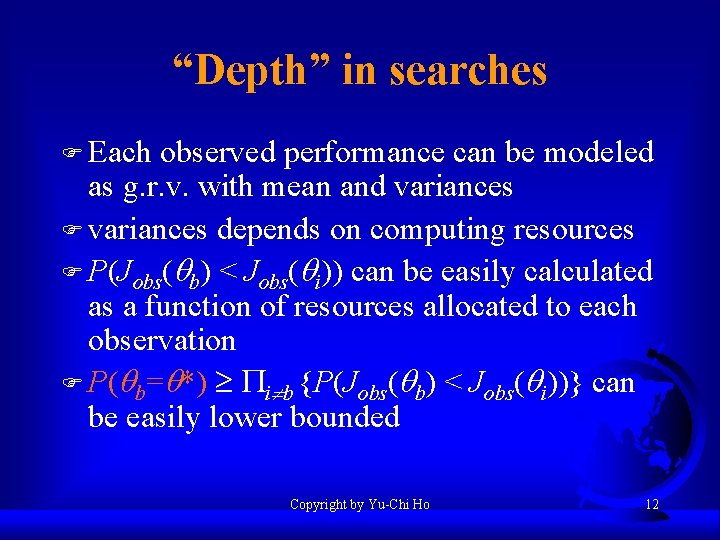

“Depth” in searches

- Each observed performance can be modeled as a Gaussian random variable with a mean and a variance.
- The variances depend on the computing resources allocated.
- $P\big(J_{obs}(\theta_b) < J_{obs}(\theta_i)\big)$ can easily be calculated as a function of the resources allocated to each observation.
- $P(\theta_b = \theta^*) \ge \prod_{i \neq b} P\big(J_{obs}(\theta_b) < J_{obs}(\theta_i)\big)$, so $P(\theta_b = \theta^*)$ can easily be lower bounded.
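A sketch of the depth computation, assuming smaller is better and approximating the uncertainty in each design's performance as Gaussian with variance $\sigma_i^2/N_i$ around its observed sample mean, so each pairwise probability is a normal CDF of the standardized difference. The names `norm_cdf` and `depth_lower_bound` are hypothetical. Under this model the product over $i \neq b$ is a valid lower bound, because every pairwise event involves the same $\theta_b$ and the events are therefore positively correlated.

```python
import math


def norm_cdf(x):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def depth_lower_bound(means, sigmas, n_alloc):
    """Product lower bound on P(theta_b = theta*), smaller is better.

    Hypothetical helper. means[i] is the observed sample mean of design i,
    sigmas[i] its per-replication noise std, n_alloc[i] its replications N_i.
    Each mean's uncertainty is approximated as Gaussian with variance
    sigmas[i]**2 / n_alloc[i].
    """
    b = min(range(len(means)), key=lambda i: means[i])  # observed best
    var_b = sigmas[b] ** 2 / n_alloc[b]
    bound = 1.0
    for i, (m, s, n) in enumerate(zip(means, sigmas, n_alloc)):
        if i == b:
            continue
        # P(theta_b truly beats theta_i) under the Gaussian approximation.
        spread = math.sqrt(var_b + s ** 2 / n)
        bound *= norm_cdf((m - means[b]) / spread)
    return bound
```

For example, with observed means `[1.0, 1.2, 1.5]`, unit noise and 10 replications each, raising every $N_i$ to 40 increases the bound; this is the "depth" lever.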

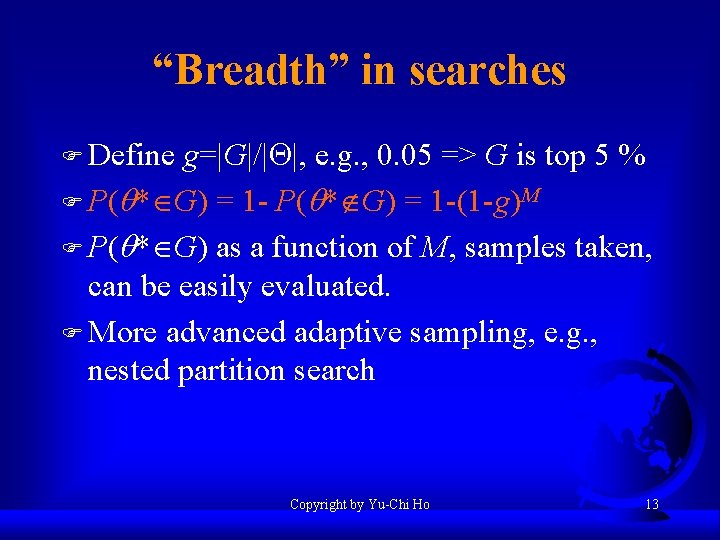

“Breadth” in searches

- Define $g = |G|/|\Theta|$; e.g., $g = 0.05$ means $G$ is the top 5%.
- $P(\theta^* \in G) = 1 - (1 - g)^M$
- $P(\theta^* \in G)$ can therefore easily be evaluated as a function of $M$, the number of samples taken.
- More advanced adaptive sampling is also possible, e.g., nested partition search.
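The breadth formula can be evaluated, and inverted to size a search, in a few lines. This is a sketch assuming the $M$ samples are drawn independently and uniformly from $\Theta$; `breadth_prob` and `samples_needed` are hypothetical names.

```python
import math


def breadth_prob(g, m):
    """P(theta* in G) = 1 - (1 - g)**M for M independent uniform samples."""
    return 1.0 - (1.0 - g) ** m


def samples_needed(g, target):
    """Smallest M with breadth_prob(g, M) >= target (0 < g < 1, 0 < target < 1)."""
    m = max(1, math.ceil(math.log(1.0 - target) / math.log(1.0 - g)))
    # Guard against floating-point rounding at the boundary.
    while breadth_prob(g, m) < target:
        m += 1
    while m > 1 and breadth_prob(g, m - 1) >= target:
        m -= 1
    return m
```

With $g = 0.05$, 100 samples already give $P(\theta^* \in G) \approx 0.994$, and `samples_needed(0.05, 0.99)` returns 90.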

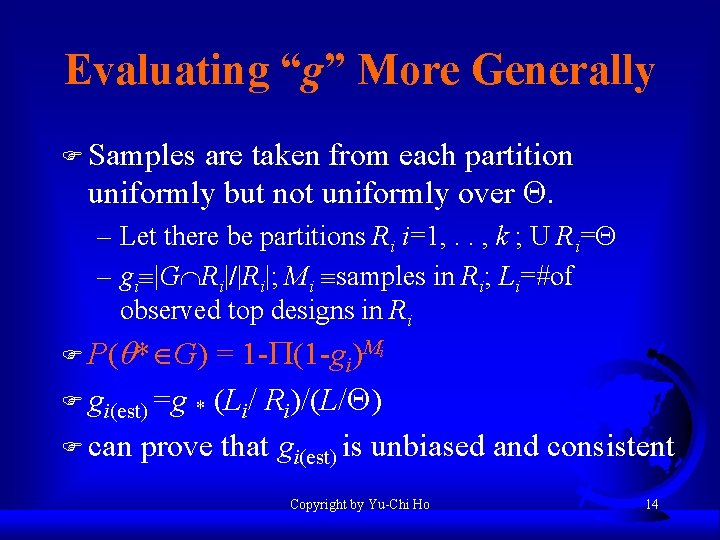

Evaluating “g” More Generally

- Samples are taken uniformly within each partition, but not uniformly over $\Theta$.
  - Let there be partitions $R_i$, $i = 1, \dots, k$, with $\bigcup_i R_i = \Theta$.
  - $g_i = |G \cap R_i|/|R_i|$; $M_i$ samples are taken in $R_i$; $L_i$ = number of observed top designs in $R_i$.
- $P(\theta^* \in G) = 1 - \prod_{i=1}^{k} (1 - g_i)^{M_i}$
- $\hat{g}_i = g \cdot \dfrac{L_i/|R_i|}{L/|\Theta|}$
- One can prove that $\hat{g}_i$ is unbiased and consistent.
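In the partitioned case the probabilities of missing $G$ multiply across partitions. A minimal sketch, assuming samples are drawn independently (uniformly within each $R_i$) and taking the $g_i$ as given rather than estimated; `partitioned_breadth_prob` is a hypothetical name.

```python
import math


def partitioned_breadth_prob(g_parts, m_parts):
    """P(theta* in G) = 1 - prod_i (1 - g_i)**M_i.

    Hypothetical helper. g_parts[i] = |G ∩ R_i| / |R_i| (taken as known here),
    m_parts[i] = M_i samples drawn independently and uniformly within R_i.
    """
    if len(g_parts) != len(m_parts):
        raise ValueError("need one sample count per partition")
    # Probability that every sample in every partition misses G.
    miss = math.prod((1.0 - g) ** m for g, m in zip(g_parts, m_parts))
    return 1.0 - miss
```

With equal $g_i$ this reduces to $1 - (1 - g)^M$; when the $g_i$ differ, shifting samples toward the partition with the larger $g_i$ raises the probability, which is what adaptive schemes try to exploit.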

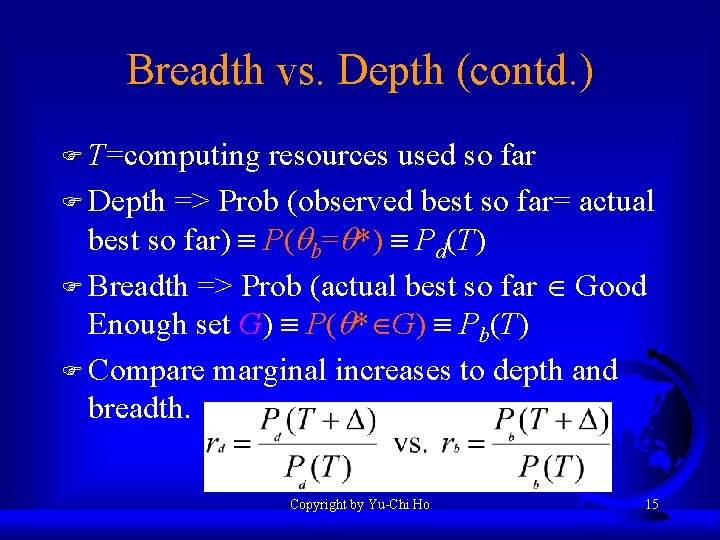

Breadth vs. Depth (contd.)

- $T$ = computing resources used so far
- Depth: Prob(observed best so far = actual best so far) $= P(\theta_b = \theta^*) \equiv P_d(T)$
- Breadth: Prob(actual best so far $\in$ good-enough set $G$) $= P(\theta^* \in G) \equiv P_b(T)$
- Compare the marginal increases in depth and breadth.

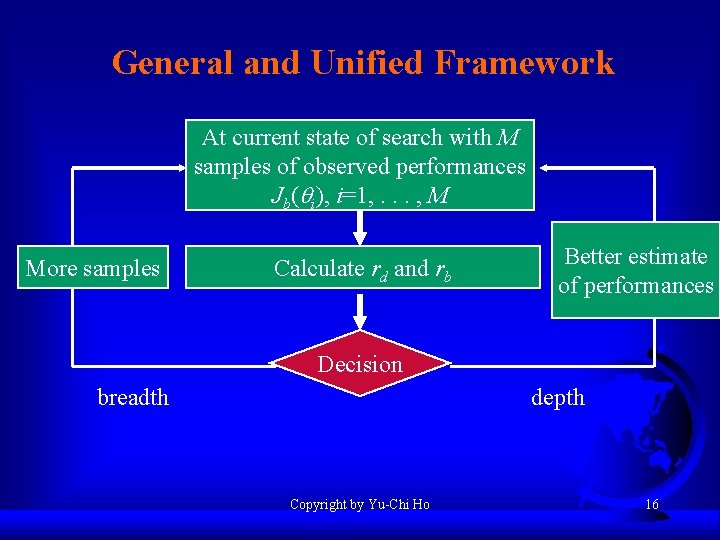

General and Unified Framework

- At the current state of the search, with $M$ samples of observed performances $J_b(\theta_i)$, $i = 1, \dots, M$:
  1. Calculate $r_d$ and $r_b$.
  2. Decide between depth and breadth:
     - Depth: obtain better estimates of the performances.
     - Breadth: take more samples.
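One way to sketch this decision loop uses simplified, hypothetical definitions of the marginal rates: $r_d$ is the gain in $P_d \cdot P_b$ from $\Delta$ more replications on whichever design raises $P_d$ the most (holding the observed means fixed), and $r_b$ is the gain from one more uniformly sampled design (holding $P_d$ fixed). These definitions, and the names `depth_prob`, `breadth_prob` and `choose_next_step`, are assumptions for illustration, not the exact quantities used by Ho and Lin. Smaller performance is assumed better.

```python
import math


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def depth_prob(means, sigmas, n_alloc):
    # Product lower bound on P(theta_b = theta*); Gaussian approximation of
    # each mean with variance sigma_i**2 / N_i (assumption).
    b = min(range(len(means)), key=lambda i: means[i])
    var_b = sigmas[b] ** 2 / n_alloc[b]
    p = 1.0
    for i in range(len(means)):
        if i != b:
            spread = math.sqrt(var_b + sigmas[i] ** 2 / n_alloc[i])
            p *= norm_cdf((means[i] - means[b]) / spread)
    return p


def breadth_prob(g, m):
    # P(theta* in G) for m independent uniform samples.
    return 1.0 - (1.0 - g) ** m


def choose_next_step(means, sigmas, n_alloc, g, delta=1):
    """Return ("depth", i) or ("breadth", None) by comparing hypothetical r_d, r_b."""
    m = len(means)
    p_d = depth_prob(means, sigmas, n_alloc)
    p_b = breadth_prob(g, m)
    # r_d: best gain from delta more replications on a single design.
    best_i, best_gain = None, 0.0
    for i in range(m):
        trial = list(n_alloc)
        trial[i] += delta
        gain = depth_prob(means, sigmas, trial) - p_d
        if best_i is None or gain > best_gain:
            best_i, best_gain = i, gain
    r_d = best_gain * p_b
    # r_b: gain from one more sampled design, with P_d held fixed.
    r_b = p_d * (breadth_prob(g, m + 1) - p_b)
    return ("depth", best_i) if r_d >= r_b else ("breadth", None)
```

When the ordering is already certain (two well-separated, heavily replicated designs) the sketch chooses breadth; when many designs have been sampled but the top two are nearly tied, it chooses depth. Holding the means fixed is a simplification; a fuller treatment would also account for how a new sample lowers $P_d$.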

Applications

- Test problems
- Apparel manufacturing problem
- Stock option problem
- See X. C. Lin, “A New Approach to Stochastic Optimization,” Ph.D. thesis, Harvard University, Oct. 2000.
