Ordering of events in Distributed Systems & Eventual Consistency

Jinyang Li

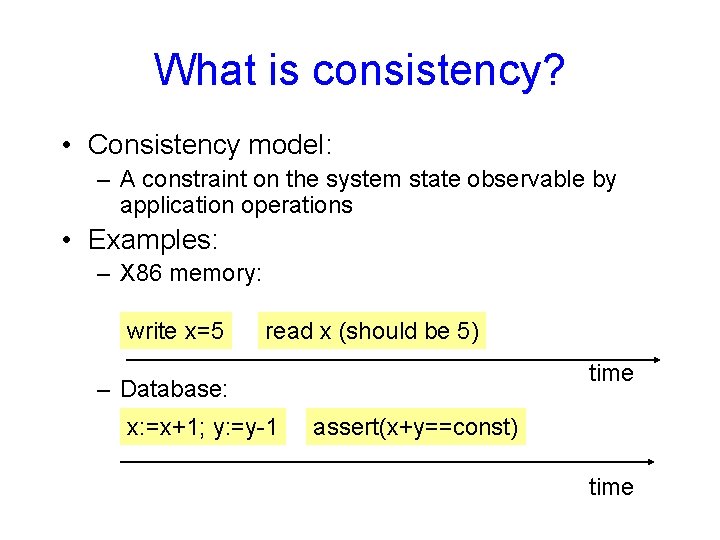

What is consistency?

- Consistency model:
  - A constraint on the system state observable by application operations
- Examples:
  - x86 memory: a write x=5 followed later in time by a read of x (should return 5)
  - Database: after x := x+1; y := y-1, the check assert(x+y == const) should hold

Consistency

- No right or wrong consistency models
  - Tradeoff between ease of programmability and efficiency
- Consistency is hard in (distributed) systems:
  - Data replication (caching)
  - Concurrency
  - Failures

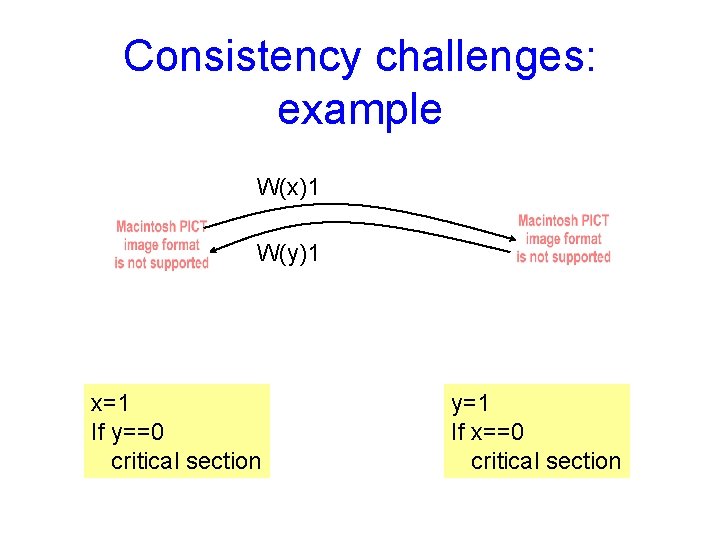

Consistency challenges: example

- Each node has a local copy of state
- Read from local state
- Send writes to the other node, but do not wait

Consistency challenges: example

- CPU 0: x=1; if y==0, enter critical section (sends W(x)1 to CPU 1)
- CPU 1: y=1; if x==0, enter critical section (sends W(y)1 to CPU 0)

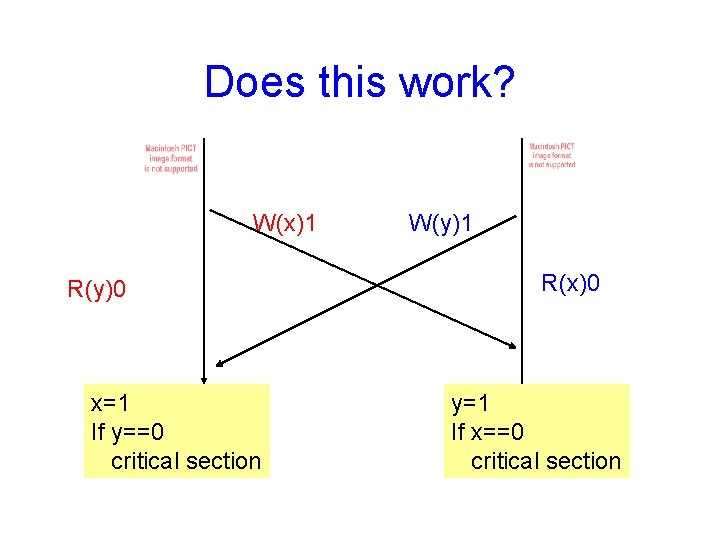

Does this work?

- CPU 0: x=1; if y==0, enter critical section. It issues W(x)1, then R(y)0
- CPU 1: y=1; if x==0, enter critical section. It issues W(y)1, then R(x)0

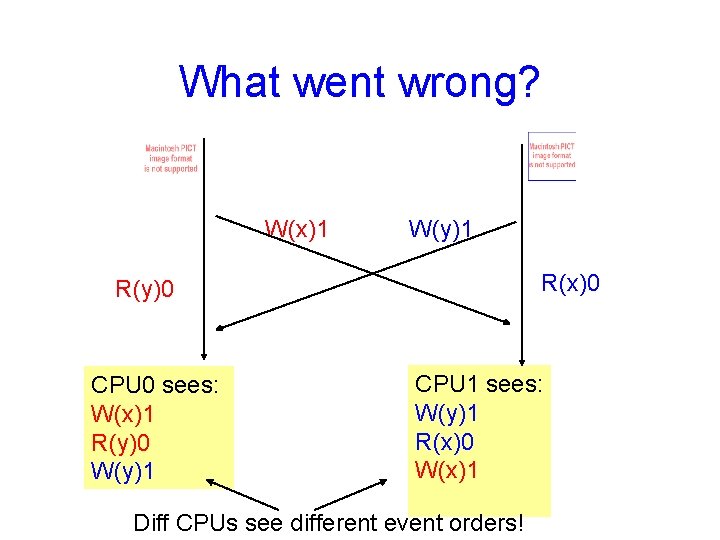

What went wrong?

- Each write (W(x)1, W(y)1) reaches the other CPU only after that CPU's read (R(x)0, R(y)0)
- CPU 0 sees: W(x)1 R(y)0 W(y)1
- CPU 1 sees: W(y)1 R(x)0 W(x)1
- Different CPUs see different event orders!
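The failure above can be reproduced with a small simulation. This is a minimal sketch, assuming a hypothetical `Node` class that applies writes locally, queues them for its peer without waiting, and reads only its local copy; none of these names come from the slides.

```python
class Node:
    """A node with a local copy of the state (hypothetical helper)."""

    def __init__(self, name):
        self.name = name
        self.state = {"x": 0, "y": 0}   # local copy of shared state
        self.inbox = []                 # remote writes not yet applied

    def write(self, key, value, peer):
        self.state[key] = value             # apply locally right away
        peer.inbox.append((key, value))     # send to the peer, do not wait

    def read(self, key):
        return self.state[key]              # read the local copy only

    def deliver(self):
        # Apply all queued remote writes.
        for key, value in self.inbox:
            self.state[key] = value
        self.inbox.clear()


def run():
    cpu0, cpu1 = Node("CPU 0"), Node("CPU 1")
    in_critical = []

    cpu0.write("x", 1, cpu1)            # W(x)1
    cpu1.write("y", 1, cpu0)            # W(y)1
    if cpu0.read("y") == 0:             # R(y)0: W(y)1 has not arrived yet
        in_critical.append(cpu0.name)
    if cpu1.read("x") == 0:             # R(x)0: W(x)1 has not arrived yet
        in_critical.append(cpu1.name)

    cpu0.deliver()                      # the messages arrive only now
    cpu1.deliver()
    return in_critical, cpu0.state, cpu1.state


in_critical, state0, state1 = run()
print(in_critical)
```

Both CPUs end up in the critical section even though the two local copies converge to the same state once the delayed writes are delivered.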

Strict consistency

- Each operation is stamped with a global wall-clock time
- Rules:
  1. Each read gets the latest write value
  2. All operations at one CPU have timestamps in execution order

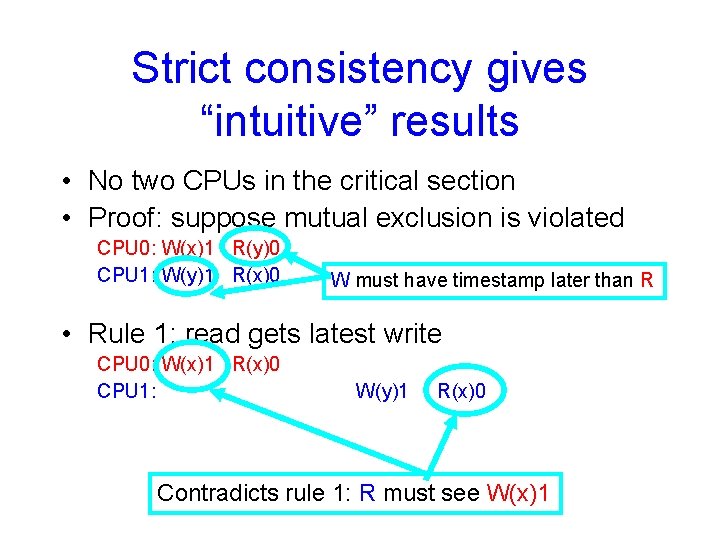

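Strict consistency gives “intuitive” results

- No two CPUs in the critical section
- Proof: suppose mutual exclusion is violated
  - CPU 0: W(x)1 R(y)0
  - CPU 1: W(y)1 R(x)0
- Rule 1 (read gets latest write): R(y) returned 0, so W(y)1 must have a timestamp later than R(y)0
- Rule 2 (timestamps in execution order): W(x)1 comes before R(y)0 at CPU 0, and W(y)1 comes before R(x)0 at CPU 1
- Together: W(x)1 < R(y)0 < W(y)1 < R(x)0
- Contradicts rule 1: R(x) must see W(x)1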

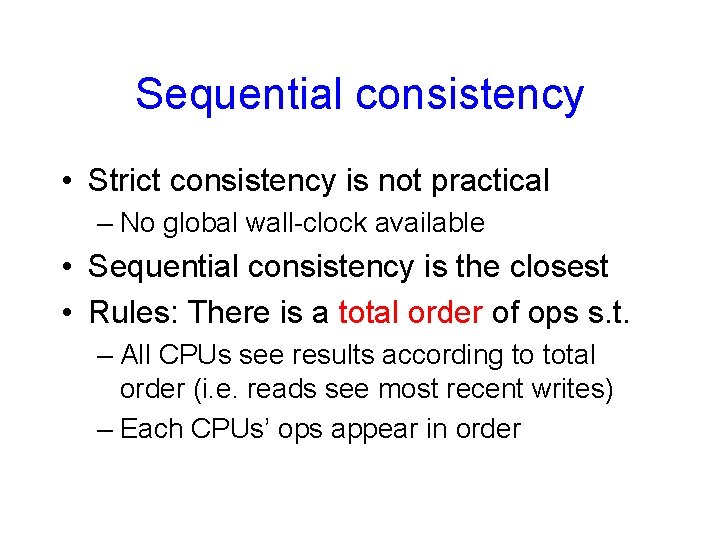

Sequential consistency

- Strict consistency is not practical
  - No global wall-clock available
- Sequential consistency is the closest
- Rules: there is a total order of ops such that
  - All CPUs see results according to the total order (i.e. reads see the most recent writes)
  - Each CPU's ops appear in order
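The two rules above can be checked by brute force for tiny histories. The sketch below is an illustration only: the function name `is_sequentially_consistent` and the `(kind, var, value)` encoding of operations are assumptions, and the search is exponential in the number of operations.

```python
def is_sequentially_consistent(programs, initial=0):
    """programs: one list of ops per CPU; each op is (kind, var, value)
    with kind "W" or "R". Returns True if some total order respects each
    CPU's own order and lets every read see the most recent write."""

    def search(positions, memory):
        # All CPUs finished: a valid total order exists.
        if all(pos == len(p) for pos, p in zip(positions, programs)):
            return True
        for cpu, pos in enumerate(positions):
            if pos == len(programs[cpu]):
                continue
            kind, var, value = programs[cpu][pos]
            if kind == "R" and memory.get(var, initial) != value:
                continue  # this read would not see the most recent write
            new_memory = dict(memory)
            if kind == "W":
                new_memory[var] = value
            nxt = positions[:cpu] + (pos + 1,) + positions[cpu + 1:]
            if search(nxt, new_memory):
                return True
        return False

    return search((0,) * len(programs), {})


# The broken run: both reads return 0.
broken = [[("W", "x", 1), ("R", "y", 0)],
          [("W", "y", 1), ("R", "x", 0)]]
# A run where CPU 1 reads x == 1 instead.
allowed = [[("W", "x", 1), ("R", "y", 0)],
           [("W", "y", 1), ("R", "x", 1)]]

print(is_sequentially_consistent(broken))
print(is_sequentially_consistent(allowed))
```

The first history has no valid total order, which is why the two-CPU example violates sequential consistency; the second is explained by the order W(x)1, R(y)0, W(y)1, R(x)1.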

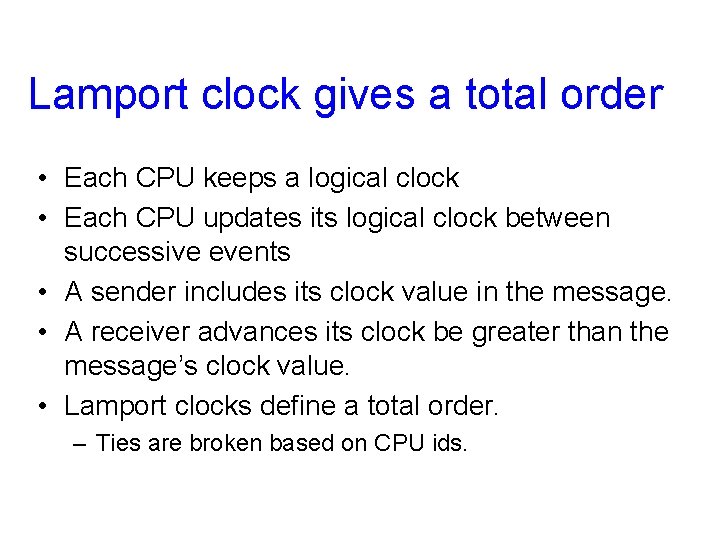

Lamport clock gives a total order

- Each CPU keeps a logical clock
- Each CPU updates its logical clock between successive events
- A sender includes its clock value in the message
- A receiver advances its clock to be greater than the message's clock value
- Lamport clocks define a total order
  - Ties are broken based on CPU ids
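The clock rules above can be sketched in a few lines. The class and method names (`LamportClock`, `send`, `receive`) are illustrative assumptions; timestamps are `(clock, cpu_id)` pairs so that ties are broken by CPU id.

```python
class LamportClock:
    """Logical clock for one CPU (illustrative sketch)."""

    def __init__(self, cpu_id):
        self.cpu_id = cpu_id
        self.time = 0

    def tick(self):
        # Advance the clock between successive local events.
        self.time += 1
        return (self.time, self.cpu_id)

    def send(self):
        # Sending is an event; the returned timestamp travels in the message.
        return self.tick()

    def receive(self, msg_timestamp):
        # Advance the clock to be greater than the message's clock value.
        msg_time, _sender = msg_timestamp
        self.time = max(self.time, msg_time) + 1
        return (self.time, self.cpu_id)


c0, c1 = LamportClock(0), LamportClock(1)
w_x = c0.send()              # CPU 0 sends W(x)1
w_y = c1.send()              # CPU 1 sends W(y)1
r_y = c0.receive(w_y)        # CPU 0 receives W(y)1
r_x = c1.receive(w_x)        # CPU 1 receives W(x)1
print(w_x, w_y, r_y, r_x)
```

Because Python compares tuples element by element, `w_x < w_y` holds here: the clocks tie at 1 and the smaller CPU id wins.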

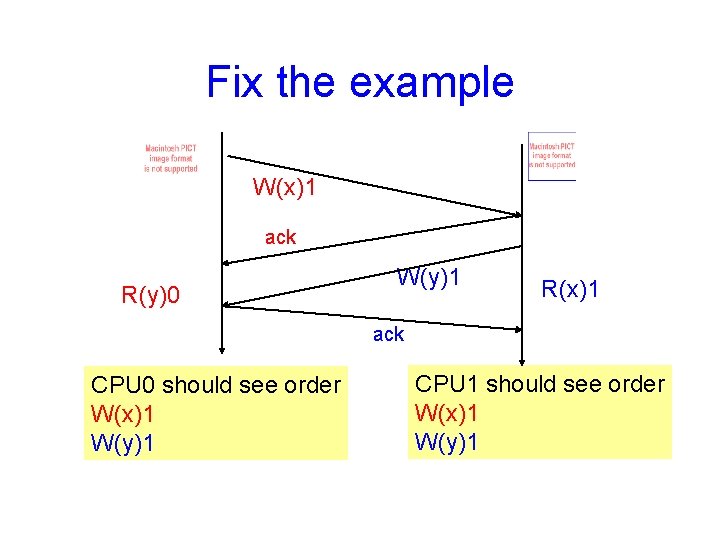

Fix the example

- CPU 0: W(x)1, wait for the ack, then R(y)0
- CPU 1: W(y)1, wait for the ack, then R(x)1
- CPU 0 should see order W(x)1 W(y)1
- CPU 1 should see order W(x)1 W(y)1

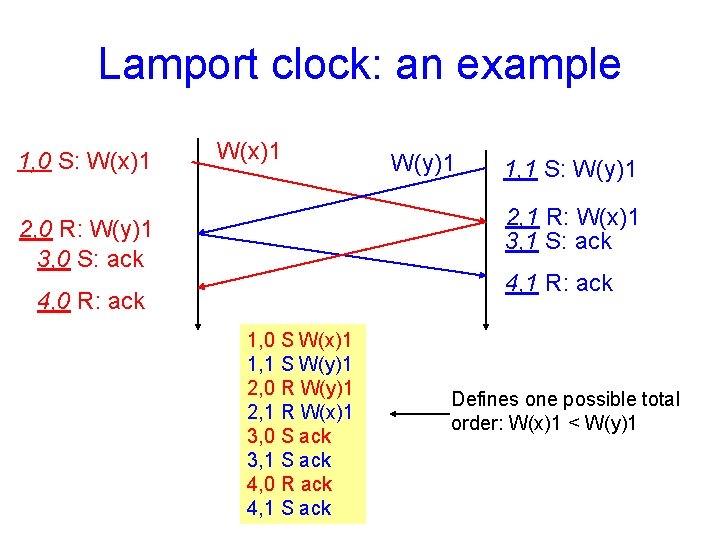

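Lamport clock: an example

Timestamps are written as (clock, CPU id); S = send, R = receive.

- CPU 0: (1, 0) S: W(x)1; (2, 0) R: W(y)1; (3, 0) S: ack; (4, 0) R: ack
- CPU 1: (1, 1) S: W(y)1; (2, 1) R: W(x)1; (3, 1) S: ack; (4, 1) R: ack
- Sorted by timestamp: (1, 0) S W(x)1, (1, 1) S W(y)1, (2, 0) R W(y)1, (2, 1) R W(x)1, (3, 0) S ack, (3, 1) S ack, (4, 0) R ack, (4, 1) R ack
- Defines one possible total order: W(x)1 < W(y)1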
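The total order in this example is simply the events sorted by (clock, CPU id). A short sketch, with the event list transcribed from the slide and the variable names chosen for illustration:

```python
# Events as ((clock, cpu_id), description), listed per CPU.
events = [
    ((1, 0), "S W(x)1"), ((2, 0), "R W(y)1"), ((3, 0), "S ack"), ((4, 0), "R ack"),
    ((1, 1), "S W(y)1"), ((2, 1), "R W(x)1"), ((3, 1), "S ack"), ((4, 1), "R ack"),
]

# Sort by clock value first; ties are broken by CPU id.
total_order = sorted(events, key=lambda event: event[0])

# Keep only the write sends to see how the two writes are ordered.
writes = [desc for _, desc in total_order if desc.startswith("S W")]

for timestamp, desc in total_order:
    print(timestamp, desc)
```

Every CPU that sorts the same set of timestamps gets the same list, so all of them agree that W(x)1 is ordered before W(y)1.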

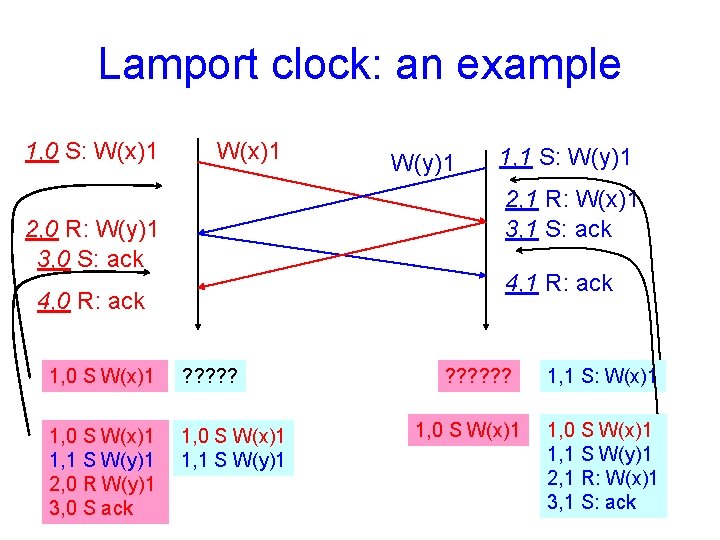

Lamport clock: an example (continued)

[Figure: the same message exchange as on the previous slide, with snapshots of the total order as each CPU learns it. Early snapshots show (1, 0) S W(x)1 followed by "???" for entries not yet known; later snapshots add (1, 1) S W(y)1, (2, 0) R W(y)1 and (3, 0) S ack on one side, and (2, 1) R W(x)1 and (3, 1) S ack on the other.]

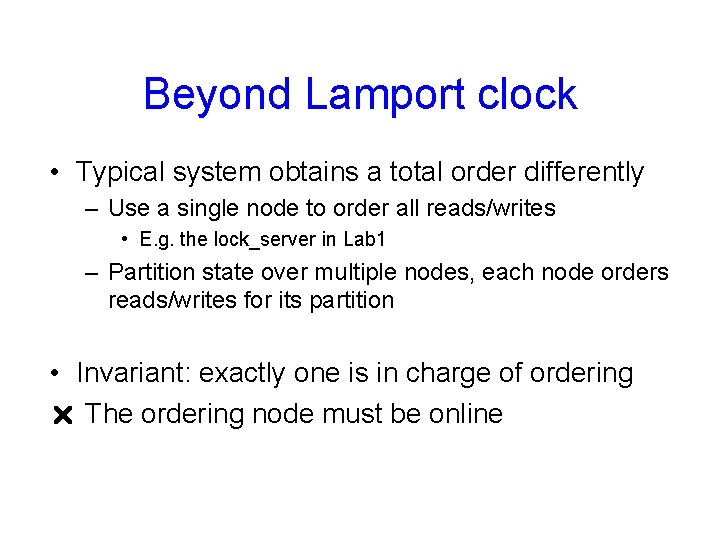

Beyond Lamport clock

- Typical systems obtain a total order differently
  - Use a single node to order all reads/writes
    - E.g. the lock_server in Lab 1
  - Partition state over multiple nodes; each node orders reads/writes for its partition
- Invariant: exactly one node is in charge of ordering
  - The ordering node must be online
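The partitioned variant can be sketched as follows. Everything here is a hypothetical illustration: the classes `OrderingNode` and `PartitionedOrderer`, the CRC32-based key placement, and the per-node sequence numbers are assumptions, not taken from the slides or from Lab 1.

```python
import zlib


class OrderingNode:
    """Orders all reads/writes for the keys in its partition."""

    def __init__(self, name):
        self.name = name
        self.next_seq = 0
        self.log = []

    def order(self, op):
        # Assign the next sequence number; this defines the total order
        # for this partition.
        seq = self.next_seq
        self.next_seq += 1
        self.log.append((seq, op))
        return seq


class PartitionedOrderer:
    def __init__(self, node_names):
        self.nodes = [OrderingNode(name) for name in node_names]

    def owner(self, key):
        # Deterministic hash, so every client picks the same single node
        # for a given key (the invariant: exactly one node orders it).
        return self.nodes[zlib.crc32(key.encode()) % len(self.nodes)]

    def submit(self, key, op):
        node = self.owner(key)
        return node.name, node.order((key, op))


orderer = PartitionedOrderer(["n0", "n1", "n2"])
first = orderer.submit("x", "W 1")
second = orderer.submit("x", "R")
print(first, second)
```

Operations on the same key always go through the same node and receive increasing sequence numbers; if that node is offline, operations on its partition cannot be ordered.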

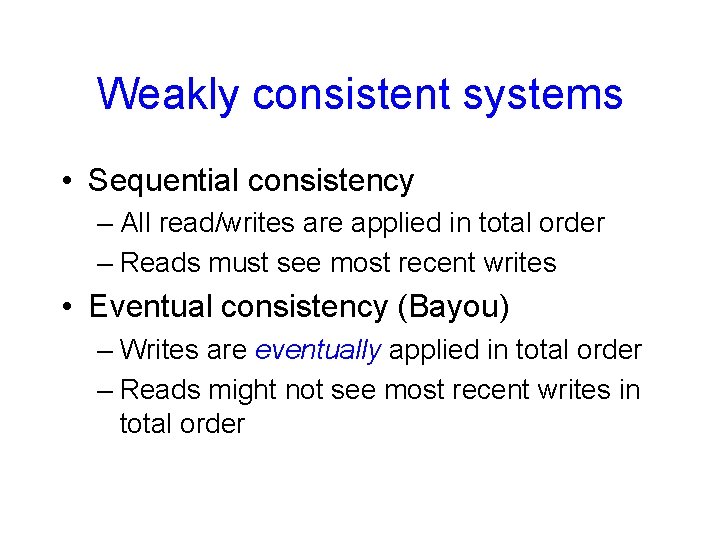

Weakly consistent systems

- Sequential consistency
  - All reads/writes are applied in total order
  - Reads must see most recent writes
- Eventual consistency (Bayou)
  - Writes are eventually applied in total order
  - Reads might not see most recent writes in total order

Why (not) eventual consistency?

- Support disconnected operations
  - Better to read a stale value than nothing
  - Better to save writes somewhere than nothing
- Potentially anomalous application behavior
  - Stale reads and conflicting writes…

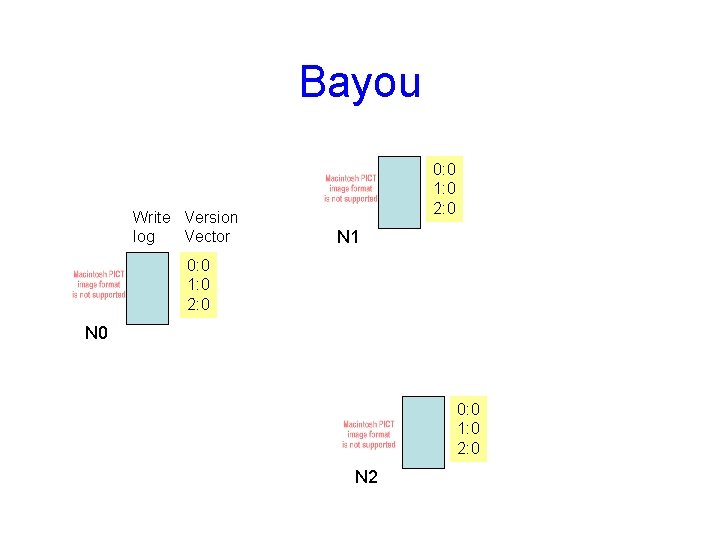

Bayou

[Figure: three nodes N0, N1, N2. Each keeps a write log (initially empty) and a version vector, initially 0:0 1:0 2:0.]

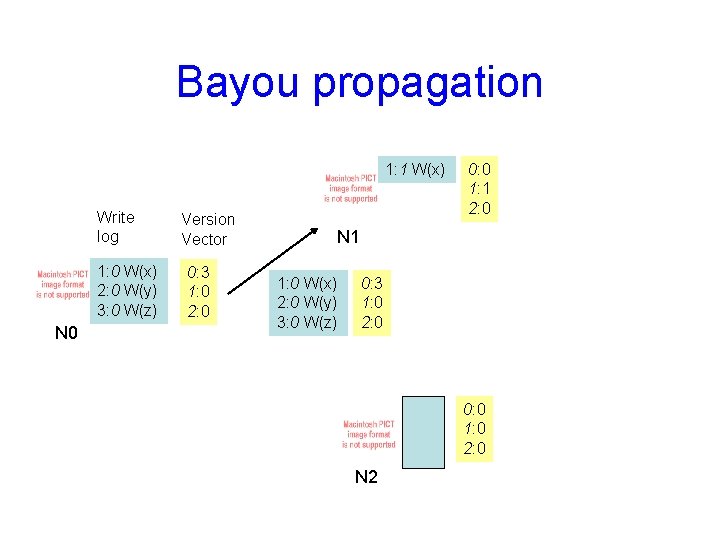

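Bayou propagation

Log entries are labeled timestamp:node ID.

- N0: write log 1:0 W(x), 2:0 W(y), 3:0 W(z); version vector 0:3 1:0 2:0
- N1: write log 1:1 W(x); version vector 0:0 1:1 2:0
- N2: empty write log; version vector 0:0 1:0 2:0
- N0 sends its writes (1:0 W(x), 2:0 W(y), 3:0 W(z)) and its version vector (0:3 1:0 2:0) to N1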

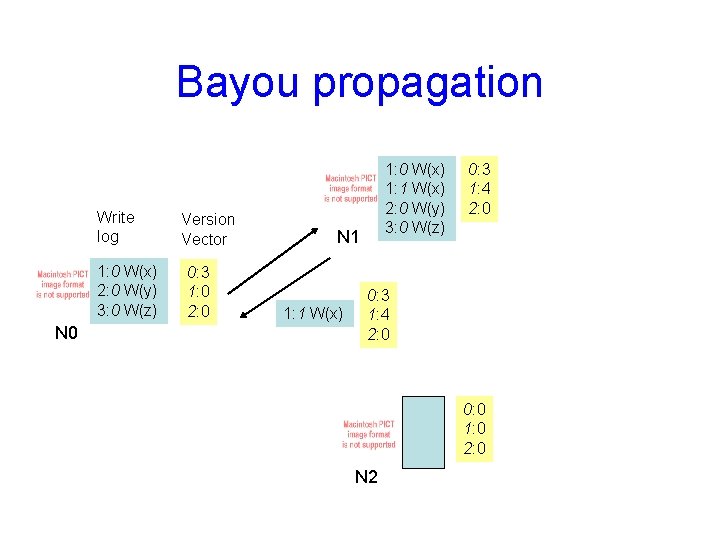

Bayou propagation

- N0: write log 1:0 W(x), 2:0 W(y), 3:0 W(z); version vector 0:3 1:0 2:0
- N1: write log 1:0 W(x), 1:1 W(x), 2:0 W(y), 3:0 W(z); version vector 0:3 1:4 2:0
- N2: empty write log; version vector 0:0 1:0 2:0
- N1 sends 1:1 W(x) to N0
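The propagation steps in these figures can be approximated with a short sketch. It makes simplifying assumptions that are not from the slides: the class `BayouNode` and its methods are hypothetical, the version vector records only the highest write timestamp seen from each node, and the node's own logical clock is kept separately, so own-entry values can differ from those in the figures.

```python
class BayouNode:
    """Simplified replica: write log plus version vector (a sketch)."""

    def __init__(self, node_id, num_nodes):
        self.node_id = node_id
        self.clock = 0
        self.log = []                                # (timestamp, node_id, op)
        self.vv = {n: 0 for n in range(num_nodes)}   # highest timestamp seen per node

    def local_write(self, op):
        self.clock += 1
        self.log.append((self.clock, self.node_id, op))
        self.log.sort()
        self.vv[self.node_id] = self.clock

    def missing_for(self, peer_vv):
        # Writes the peer has not seen, judged by its version vector.
        return [w for w in self.log if w[0] > peer_vv[w[1]]]

    def receive(self, writes):
        for ts, origin, op in writes:
            if ts > self.vv[origin]:
                self.log.append((ts, origin, op))
                self.vv[origin] = ts
            self.clock = max(self.clock, ts)     # keep later local writes ordered after
        self.log.sort()                          # order by (timestamp, node ID)


n0, n1 = BayouNode(0, 3), BayouNode(1, 3)
for op in ["W(x)", "W(y)", "W(z)"]:
    n0.local_write(op)
n1.local_write("W(x)")

n1.receive(n0.missing_for(n1.vv))    # N0 -> N1
n0.receive(n1.missing_for(n0.vv))    # N1 -> N0
print(n0.log)
```

After the two exchanges both logs hold the same four writes in the same order, matching the log contents shown for N0 and N1.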

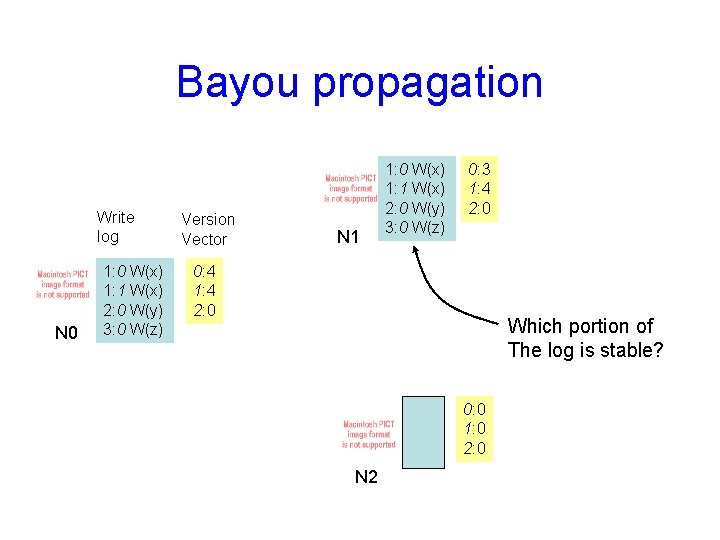

Bayou propagation

- N0: write log 1:0 W(x), 1:1 W(x), 2:0 W(y), 3:0 W(z); version vector 0:4 1:4 2:0
- N1: write log 1:0 W(x), 1:1 W(x), 2:0 W(y), 3:0 W(z); version vector 0:3 1:4 2:0
- N2: empty write log; version vector 0:0 1:0 2:0
- Which portion of the log is stable?
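One way to reason about the question above, sketched under an assumption that the slide does not state: a write is treated as stable once the node has heard a timestamp at least as large from every node, so no write that sorts earlier can still arrive. The function name `stable_prefix` and the `(timestamp, node_id, op)` tuple format are illustrative.

```python
def stable_prefix(log, version_vector):
    """Return the writes whose timestamp is covered by every entry of the
    version vector (assumed stability rule, see the text above)."""
    horizon = min(version_vector.values())
    return [w for w in log if w[0] <= horizon]


log = [(1, 0, "W(x)"), (1, 1, "W(x)"), (2, 0, "W(y)"), (3, 0, "W(z)")]

# N2 has not been heard from (2:0), so nothing is stable yet.
print(stable_prefix(log, {0: 4, 1: 4, 2: 0}))

# A vector with every entry at 3 or above covers the whole log.
print(stable_prefix(log, {0: 3, 1: 4, 2: 5}))
```

Under this rule a single silent node keeps the horizon at its last known value, which holds back the stable portion on every other node.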

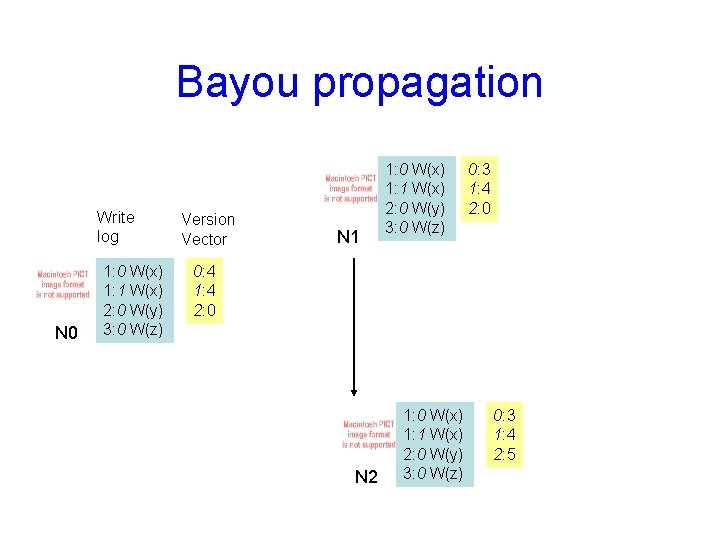

Bayou propagation

- N0: write log 1:0 W(x), 1:1 W(x), 2:0 W(y), 3:0 W(z); version vector 0:4 1:4 2:0
- N1: write log 1:0 W(x), 1:1 W(x), 2:0 W(y), 3:0 W(z); version vector 0:3 1:4 2:0
- N2: write log 1:0 W(x), 1:1 W(x), 2:0 W(y), 3:0 W(z); version vector 0:3 1:4 2:5

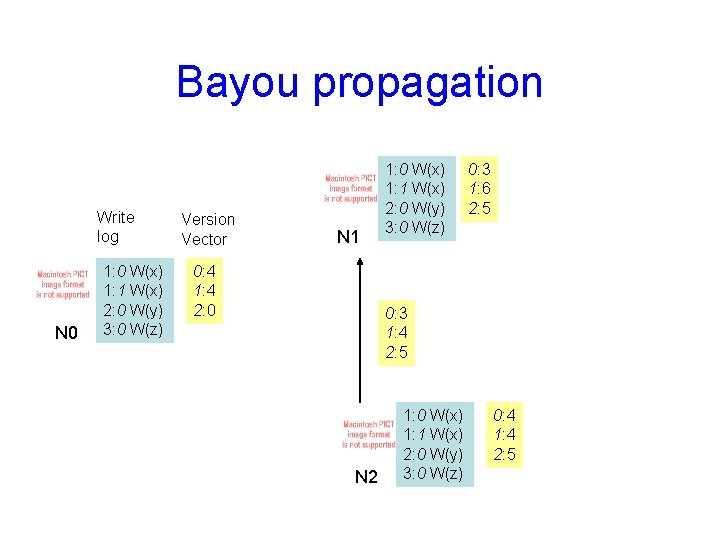

Bayou propagation

- N0, N1 and N2 all hold the write log 1:0 W(x), 1:1 W(x), 2:0 W(y), 3:0 W(z)
- Version vectors shown in the figure: 0:4 1:4 2:0; 0:3 1:6 2:5; 0:3 1:4 2:5; 0:4 1:4 2:5

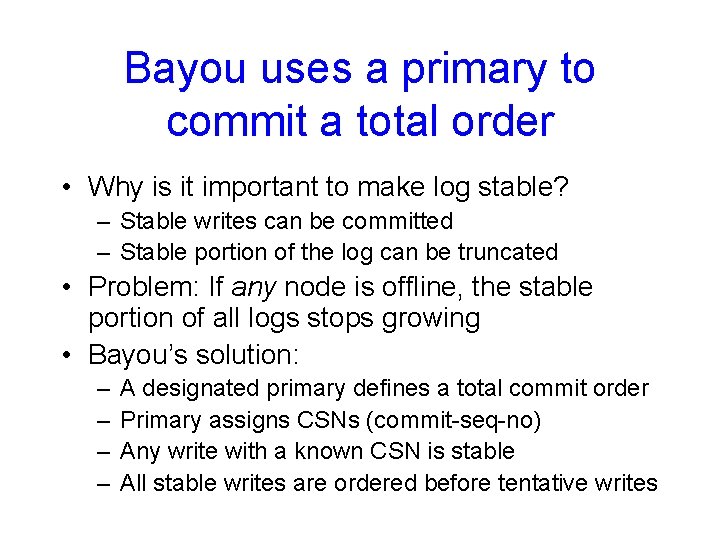

Bayou uses a primary to commit a total order

- Why is it important to make the log stable?
  - Stable writes can be committed
  - Stable portion of the log can be truncated
- Problem: if any node is offline, the stable portion of all logs stops growing
- Bayou’s solution:
  - A designated primary defines a total commit order
  - Primary assigns CSNs (commit-seq-no)
  - Any write with a known CSN is stable
  - All stable writes are ordered before tentative writes
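The commit scheme above can be sketched by sorting writes on (CSN, timestamp, node ID), with tentative writes carrying an infinite CSN. The `Primary` class, the `merge` helper, and the tuple layout are assumptions made for illustration, not Bayou's actual interfaces.

```python
import math

TENTATIVE = math.inf    # placeholder CSN for writes not yet committed


class Primary:
    """Assigns commit sequence numbers in the order writes reach it."""

    def __init__(self):
        self.next_csn = 1
        self.log = []       # committed writes: (csn, timestamp, node_id, op)

    def commit(self, timestamp, node_id, op):
        entry = (self.next_csn, timestamp, node_id, op)
        self.next_csn += 1
        self.log.append(entry)
        return entry


def merge(local_log, committed):
    """Combine a node's log with the committed writes it learned about.
    Committed writes sort first (by CSN); the rest stay tentative."""
    committed_ids = {(ts, node) for _, ts, node, _ in committed}
    still_tentative = [w for w in local_log
                       if w[0] == TENTATIVE and (w[1], w[2]) not in committed_ids]
    return sorted(committed + still_tentative)


primary = Primary()
for ts, op in [(1, "W(x)"), (2, "W(y)"), (3, "W(z)")]:
    primary.commit(ts, 0, op)               # the primary's own writes

n1_log = [(TENTATIVE, 1, 1, "W(x)"), (TENTATIVE, 2, 1, "W(y)")]
primary.commit(1, 1, "W(x)")                # N1's first write reaches the primary

n1_log = merge(n1_log, primary.log)
print(n1_log)
```

N1's write with timestamp 1 receives CSN 4 and therefore sorts after the primary's write with timestamp 3: commit order follows arrival at the primary, not timestamps. The write that has not reached the primary stays at the end as tentative.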

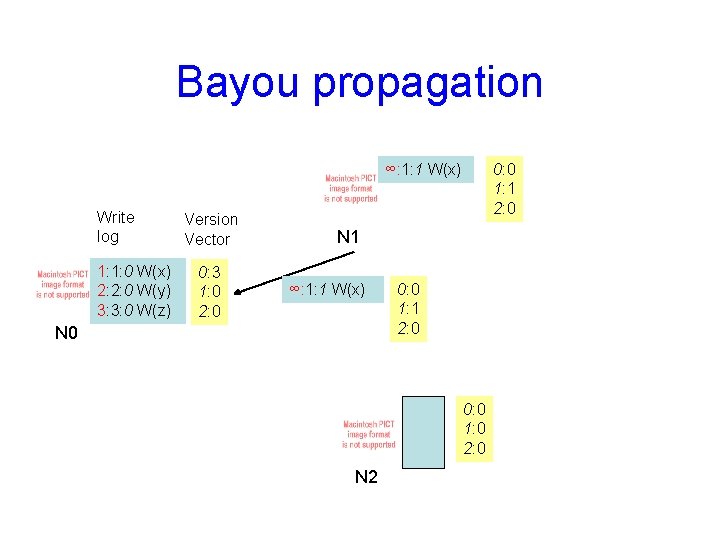

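Bayou propagation

Log entries are labeled CSN:timestamp:node ID; ∞ marks a tentative write with no CSN yet.

- N0: write log 1:1:0 W(x), 2:2:0 W(y), 3:3:0 W(z); version vector 0:3 1:0 2:0
- N1: write log ∞:1:1 W(x); version vector 0:0 1:1 2:0
- N2: empty write log; version vector 0:0 1:0 2:0
- N1 sends ∞:1:1 W(x) and its version vector (0:0 1:1 2:0) to N0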

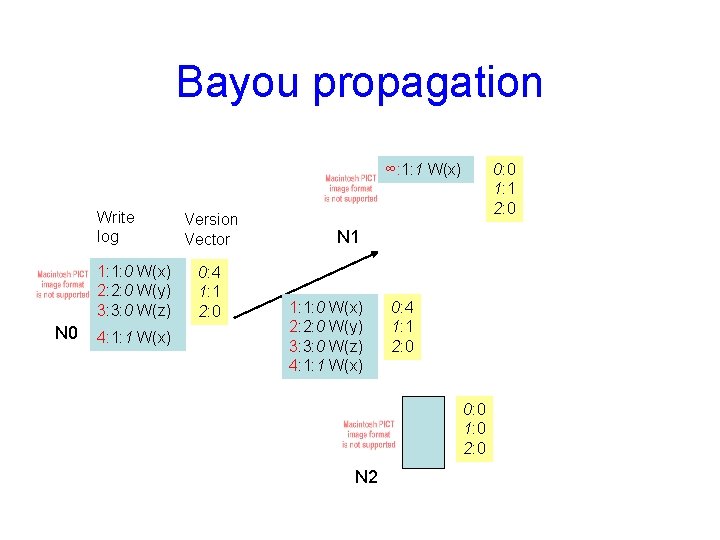

Bayou propagation

- N0: write log 1:1:0 W(x), 2:2:0 W(y), 3:3:0 W(z), 4:1:1 W(x); version vector 0:4 1:1 2:0
- N1: write log ∞:1:1 W(x); version vector 0:0 1:1 2:0
- N2: empty write log; version vector 0:0 1:0 2:0
- N0 sends the committed writes 1:1:0 W(x), 2:2:0 W(y), 3:3:0 W(z), 4:1:1 W(x) and its version vector (0:4 1:1 2:0) to N1

Bayou’s limitations

- Primary cannot fail
- Server creation & retirement make node IDs grow arbitrarily long
- Anomalous behaviors for apps?
  - Calendar app
