Oracle 10 g for Data Warehousing Hermann Baer

Oracle 10 g for Data Warehousing Hermann Baer, Oracle Product Management Data Warehousing Server Technologies No. COUG Winter Conference, Feb 8 th 2005

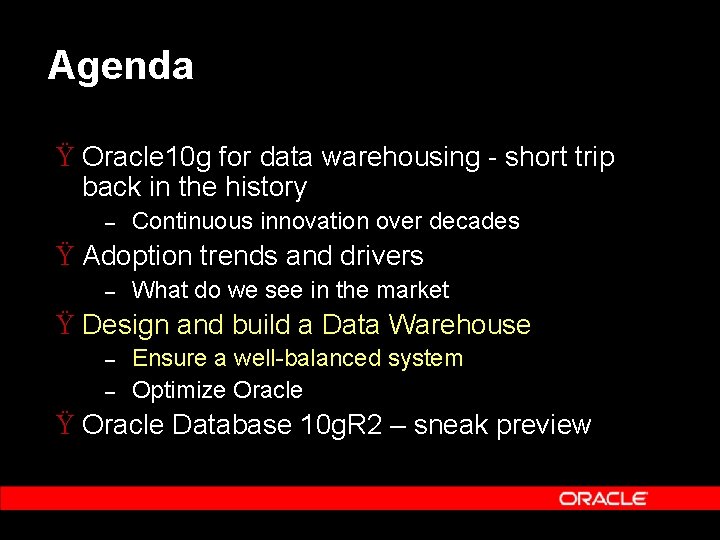

Agenda Ÿ Oracle 10 g for data warehousing - short trip back in the history – Continuous innovation over decades Ÿ Adoption trends and drivers – What do we see in the market Ÿ Design and build a Data Warehouse – – Ensure a well-balanced system Optimize Oracle Ÿ Oracle Database 10 g. R 2 – sneak preview

The way to Oracle 10 g … Ÿ Data Warehousing development started decades ago with Oracle 7. 0 – – Primary focus on performance and scalability Extended with Manageability and the BI platform vision in the Oracle 8 i time frame Ÿ Data Warehousing Imperatives – – – Efficient Extract, Transform, Load (ETL) Managing Large Data Volumes Fast Query Response Supporting Large User Population Managing Simply

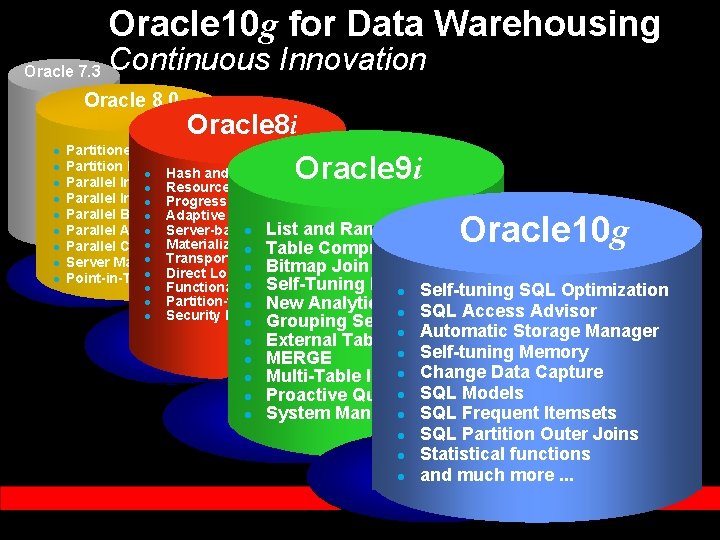

Oracle 10 g for Data Warehousing Oracle 7. 3 Continuous Innovation Oracle 8. 0 l l l l l Oracle 8 i Partitioned Tables and Indexes Partition Pruning l Hash and Composite Partitioning Parallel Index Scans l Resource Manager Parallel Insert, Update, l Progress. Delete Monitor Parallel Bitmap Star Query l Adaptive Parallel Query Parallel ANALYZE l Server-based Functions l Analytic List and Range-List Partitioning l Materialized Parallel Constraint Enabling l. Views Table Compression l Transportable Tablespaces Server Managed Backup/Recovery l API Bitmap Join Index l Direct Loader Point-in-Time Recovery l Self-Tuning Runtime Memory l Functional Indexes l Self-tuning SQL Optimization l Partition-wise l Joins New Analytic Functions l SQL Access Advisor l Security Enhancements l Grouping Sets Oracle 9 i Oracle 10 g l l l External Tablesl Automatic Storage Manager l Self-tuning Memory MERGE l Change Data Capture Multi-Table Insert SQL Models Proactive Queryl Governing System Managed l Undo SQL Frequent Itemsets l SQL Partition Outer Joins l Statistical functions l and much more. . .

Agenda Ÿ Oracle 10 g for data warehousing - short trip back in the history – Continuous innovation over decades Ÿ Adoption trends and drivers – What do we see in the market Ÿ Design and build a Data Warehouse – – Ensure a well-balanced system Optimize Oracle Ÿ Oracle Database 10 g. R 2 – sneak preview

Main Trends and Drivers Ÿ Oracle VLDWs are growing – Less systems, more data Ÿ DW systems are consolidated – Global view of the business Ÿ Importance of Data Warehousing increases dramatically – Growing operational/tactical importance Ÿ Cost Effectiveness becomes more important – Better decisions, lower cost

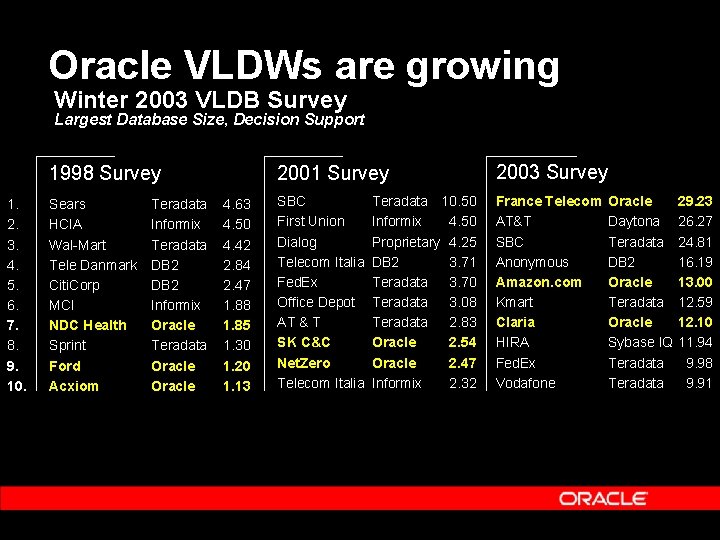

Oracle VLDWs are growing Winter 2003 VLDB Survey Largest Database Size, Decision Support 1998 Survey 1. 2. 3. 4. 5. 6. 7. 8. 9. 10. Sears HCIA Wal-Mart Tele Danmark Citi. Corp MCI NDC Health Sprint Ford Acxiom Teradata Informix Teradata DB 2 Informix Oracle Teradata Oracle 4. 63 4. 50 4. 42 2. 84 2. 47 1. 88 1. 85 1. 30 1. 20 1. 13 2001 Survey 2003 Survey SBC First Union Dialog Telecom Italia Fed. Ex Office Depot AT & T SK C&C Net. Zero Telecom Italia France Telecom AT&T SBC Anonymous Amazon. com Kmart Claria HIRA Fed. Ex Vodafone Teradata 10. 50 Informix 4. 50 Proprietary 4. 25 DB 2 3. 71 Teradata 3. 70 Teradata 3. 08 Teradata 2. 83 Oracle 2. 54 Oracle 2. 47 Informix 2. 32 Oracle Daytona Teradata DB 2 Oracle Teradata Oracle Sybase IQ Teradata 29. 23 26. 27 24. 81 16. 19 13. 00 12. 59 12. 10 11. 94 9. 98 9. 91

Oracle VLDWs are growing Ÿ Powerful RDBMS functionality becomes more important and visible, e. g. – – Partitioning Table compression Automatic Storage Management (ASM) Parallel processing

Increasing Importance of DW Ÿ Latency between operational and analytical data must be minimized – Intelligence when you need it Ÿ Need for new and enhanced analytical capabilities – More value from your data Ÿ “Classical” strengths of an RDBMS become more important – E. g. Security, B/R, Availability, Concurrency

Cost Effectiveness Ÿ Safe money whenever possible – – – Commodity servers Commodity disks Software manageability Ÿ Example Amazon – – – 16 low cost Intel boxes replaced one Super. Dome Low cost storage arrays replaced high end storage arrays 2 DBAs

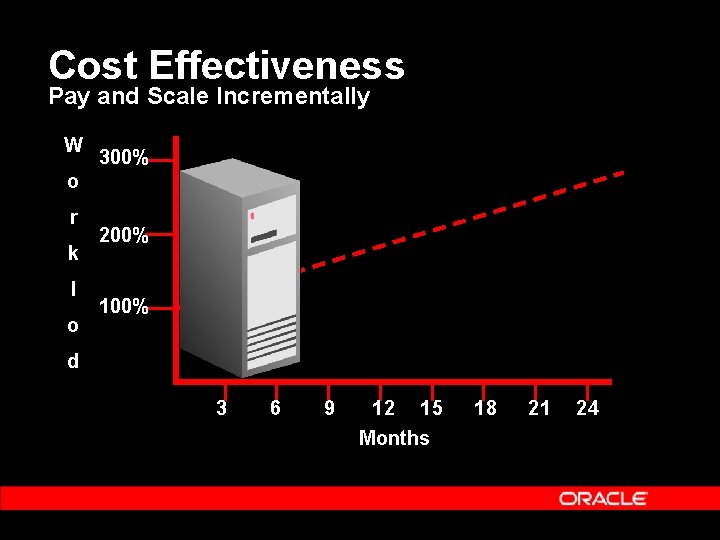

Cost Effectiveness Pay and Scale Incrementally W 300% o r k l o 200% 100% d 3 6 9 12 15 Months 18 21 24

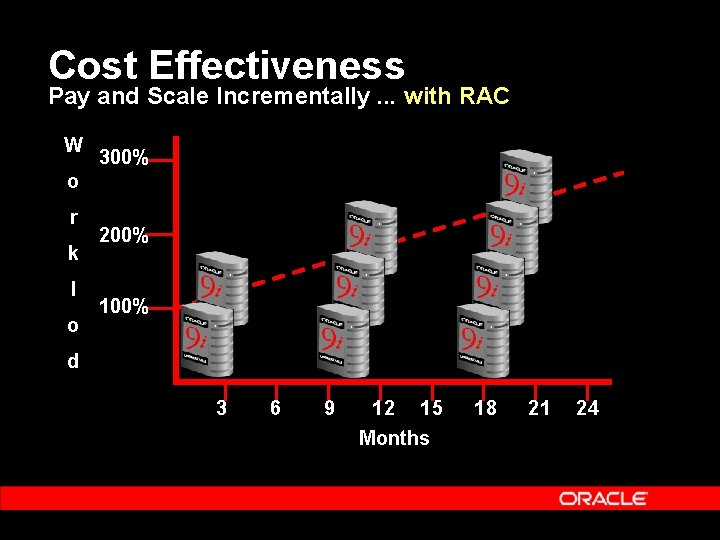

Cost Effectiveness Pay and Scale Incrementally. . . with RAC W 300% o r k l o 200% 100% d 3 6 9 12 15 Months 18 21 24

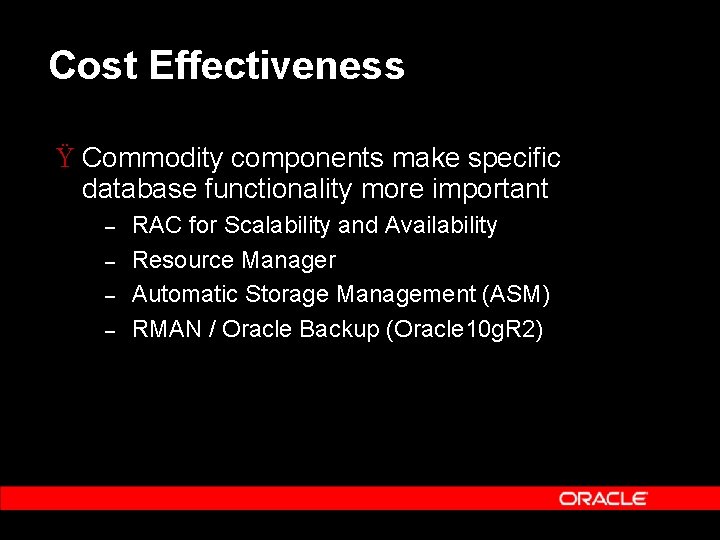

Cost Effectiveness Ÿ Commodity components make specific database functionality more important – – RAC for Scalability and Availability Resource Manager Automatic Storage Management (ASM) RMAN / Oracle Backup (Oracle 10 g. R 2)

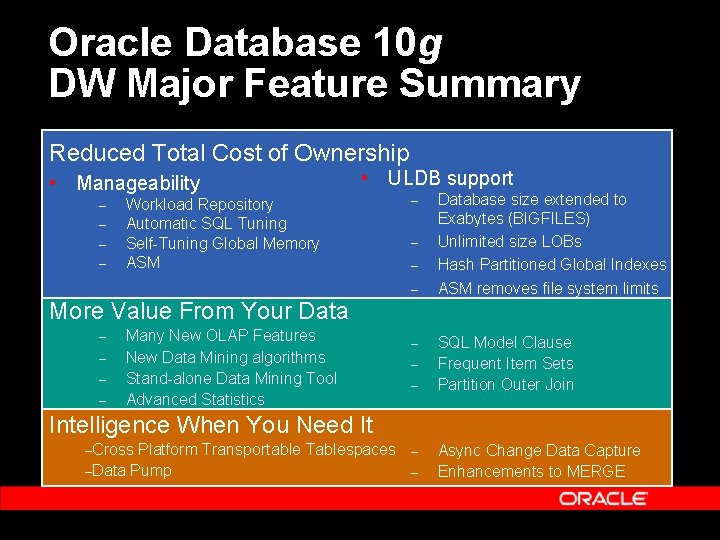

Oracle Database 10 g DW Major Feature Summary Reduced Total Cost of Ownership • Manageability – – • ULDB support Workload Repository Automatic SQL Tuning Self-Tuning Global Memory ASM – – Database size extended to Exabytes (BIGFILES) Unlimited size LOBs Hash Partitioned Global Indexes ASM removes file system limits More Value From Your Data – – Many New OLAP Features New Data Mining algorithms Stand-alone Data Mining Tool Advanced Statistics – – – SQL Model Clause Frequent Item Sets Partition Outer Join Intelligence When You Need It –Cross Platform Transportable Tablespaces –Data Pump – – Async Change Data Capture Enhancements to MERGE

Agenda Ÿ Oracle 10 g for data warehousing - short trip back in the history – Continuous innovation over decades Ÿ Adoption trends and drivers – What do we see in the market Ÿ Design and build a Data Warehouse – – Ensure a well-balanced system Optimize Oracle Ÿ Oracle Database 10 g. R 2 – sneak preview

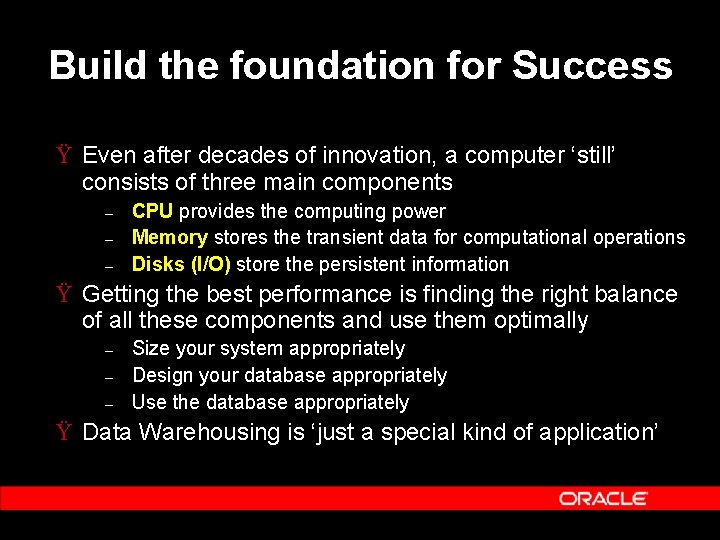

Build the foundation for Success Ÿ Even after decades of innovation, a computer ‘still’ consists of three main components – – – CPU provides the computing power Memory stores the transient data for computational operations Disks (I/O) store the persistent information Ÿ Getting the best performance is finding the right balance of all these components and use them optimally – – – Size your system appropriately Design your database appropriately Use the database appropriately Ÿ Data Warehousing is ‘just a special kind of application’

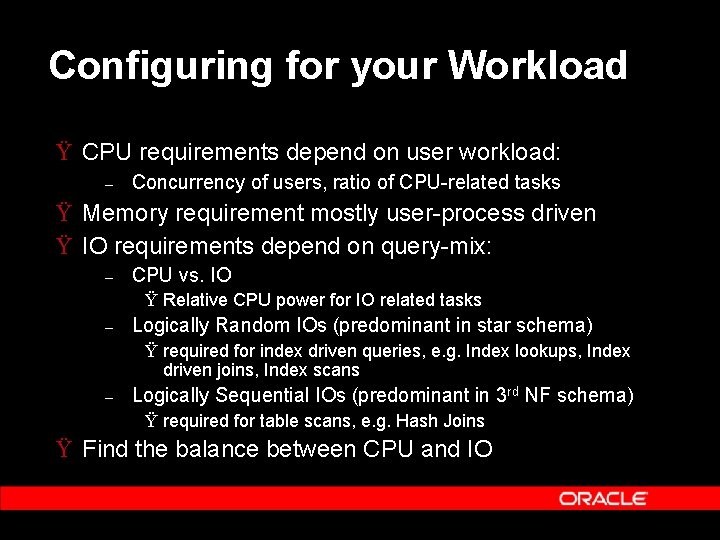

Configuring for your Workload Ÿ CPU requirements depend on user workload: – Concurrency of users, ratio of CPU-related tasks Ÿ Memory requirement mostly user-process driven Ÿ IO requirements depend on query-mix: – CPU vs. IO Ÿ Relative CPU power for IO related tasks – Logically Random IOs (predominant in star schema) Ÿ required for index driven queries, e. g. Index lookups, Index driven joins, Index scans – Logically Sequential IOs (predominant in 3 rd NF schema) Ÿ required for table scans, e. g. Hash Joins Ÿ Find the balance between CPU and IO

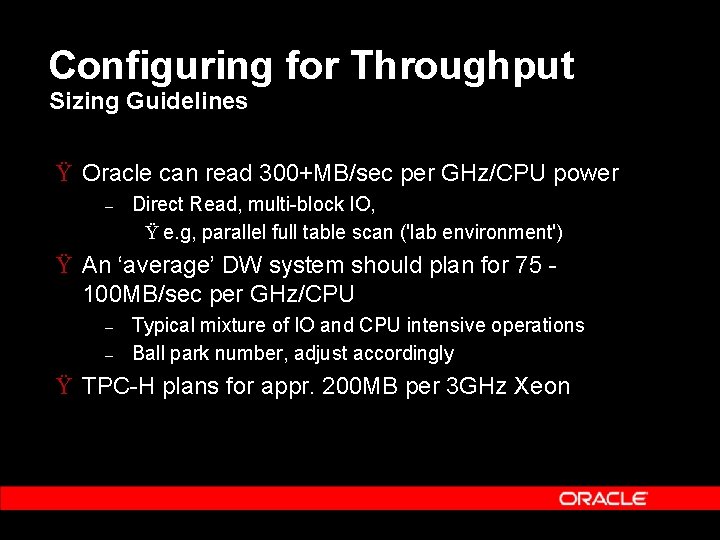

Configuring for Throughput Sizing Guidelines Ÿ Oracle can read 300+MB/sec per GHz/CPU power – Direct Read, multi-block IO, Ÿ e. g, parallel full table scan ('lab environment') Ÿ An ‘average’ DW system should plan for 75 100 MB/sec per GHz/CPU – – Typical mixture of IO and CPU intensive operations Ball park number, adjust accordingly Ÿ TPC-H plans for appr. 200 MB per 3 GHz Xeon

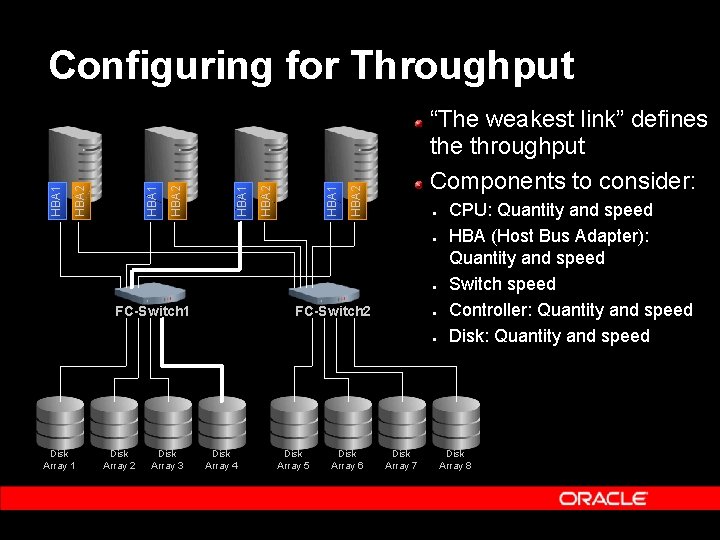

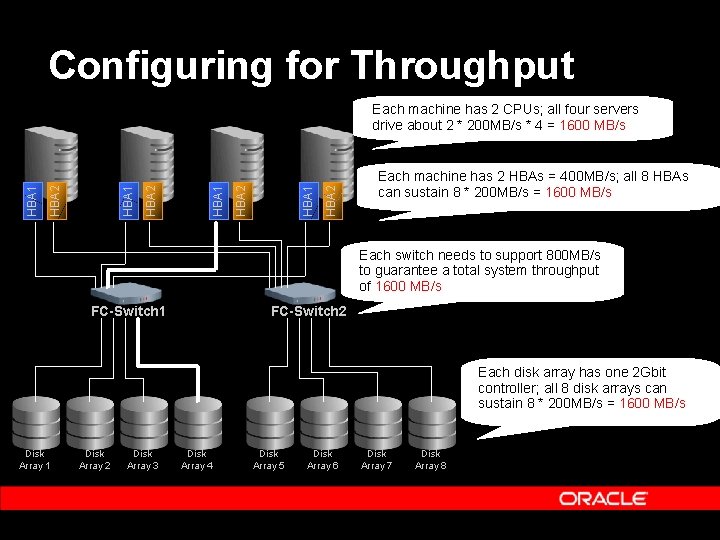

“The weakest link” defines the throughput Components to consider: HBA 2 HBA 1 Configuring for Throughput ● ● ● FC-Switch 1 FC-Switch 2 ● ● Disk Array 1 Disk Array 2 Disk Array 3 Disk Array 4 Disk Array 5 Disk Array 6 Disk Array 7 CPU: Quantity and speed HBA (Host Bus Adapter): Quantity and speed Switch speed Controller: Quantity and speed Disk Array 8

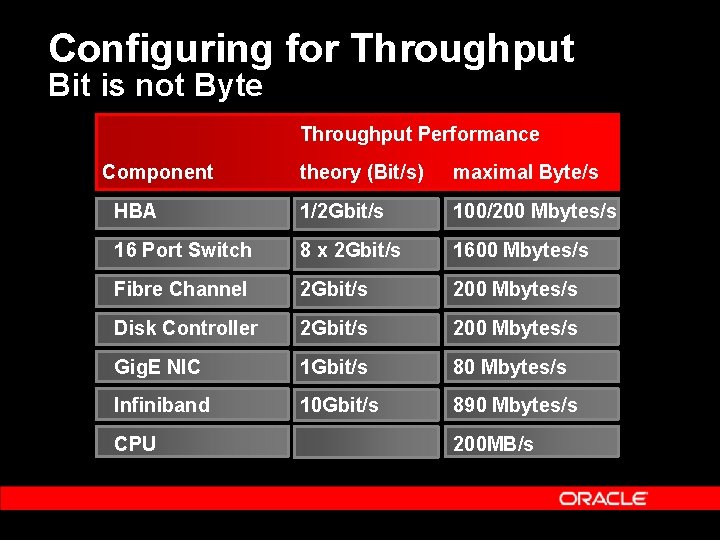

Configuring for Throughput Bit is not Byte Throughput Performance Component theory (Bit/s) maximal Byte/s HBA 1/2 Gbit/s 100/200 Mbytes/s 16 Port Switch 8 x 2 Gbit/s 1600 Mbytes/s Fibre Channel 2 Gbit/s 200 Mbytes/s Disk Controller 2 Gbit/s 200 Mbytes/s Gig. E NIC 1 Gbit/s 80 Mbytes/s Infiniband 10 Gbit/s 890 Mbytes/s CPU 200 MB/s

Configuring for Throughput HBA 2 HBA 1 Each machine has 2 CPUs; all four servers drive about 2 * 200 MB/s * 4 = 1600 MB/s Each machine has 2 HBAs = 400 MB/s; all 8 HBAs can sustain 8 * 200 MB/s = 1600 MB/s Each switch needs to support 800 MB/s to guarantee a total system throughput of 1600 MB/s FC-Switch 1 FC-Switch 2 Each disk array has one 2 Gbit controller; all 8 disk arrays can sustain 8 * 200 MB/s = 1600 MB/s Disk Array 1 Disk Array 2 Disk Array 3 Disk Array 4 Disk Array 5 Disk Array 6 Disk Array 7 Disk Array 8

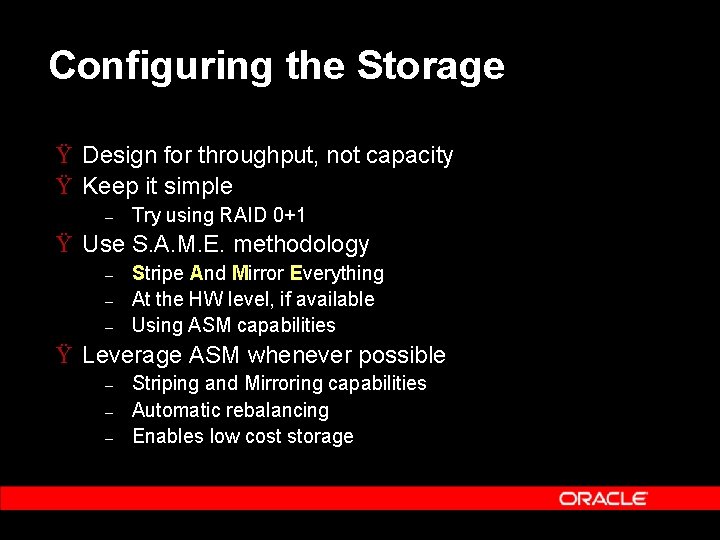

Configuring the Storage Ÿ Design for throughput, not capacity Ÿ Keep it simple – Try using RAID 0+1 Ÿ Use S. A. M. E. methodology – – – Stripe And Mirror Everything At the HW level, if available Using ASM capabilities Ÿ Leverage ASM whenever possible – – – Striping and Mirroring capabilities Automatic rebalancing Enables low cost storage

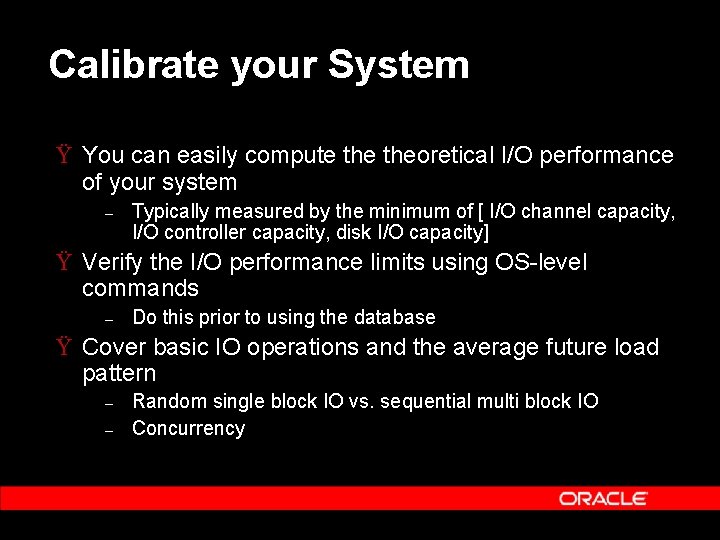

Calibrate your System Ÿ You can easily compute theoretical I/O performance of your system – Typically measured by the minimum of [ I/O channel capacity, I/O controller capacity, disk I/O capacity] Ÿ Verify the I/O performance limits using OS-level commands – Do this prior to using the database Ÿ Cover basic IO operations and the average future load pattern – – Random single block IO vs. sequential multi block IO Concurrency

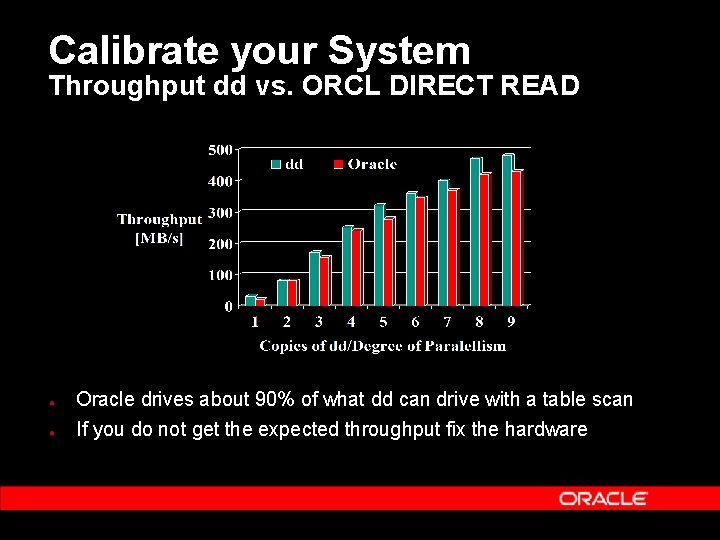

Calibrate your System Throughput dd vs. ORCL DIRECT READ ● Oracle drives about 90% of what dd can drive with a table scan ● If you do not get the expected throughput fix the hardware

Agenda Ÿ Oracle 10 g for data warehousing - short trip back in the history – Continuous innovation over decades Ÿ Adoption trends and drivers – What do we see in the market Ÿ Design and build a Data Warehouse – – Ensure a well-balanced system Optimize Oracle Ÿ Oracle Database 10 g. R 2 – sneak preview

Schema – which way to go? Ÿ Don’t get lost in theory and academia – – Philosophical discussions won’t help (“Star fights 3 NF”) None of the two extremes will work (Red. Brick? , Teradata? ) Ÿ Design according to your business needs – Reality shows that most of the customers are doing a mix and match Ÿ 3 NF more in an ODS layer Ÿ ‘Denormalized’ 3 NF in DW/Stage for general purposes Ÿ Dimensional model for subject areas, e. g. sales, marketing (remember shared dimensions!) Successful database has to support everything * OLAP will not be covered in this presentation

Schema – which way to go? Ÿ The chosen schema approach determines used Oracle functionality Ÿ The chosen schema approach determines IO pattern – Logically Random IOs (predominant in star schema) Ÿ required for index driven queries, e. g. Index lookups, Index driven joins, Index scans – Logically Sequential IOs (predominant in 3 rd NF schema) Ÿ required for table scans, e. g. Hash Join Ÿ Oracle has both functionality to – – Push the IO to the limit Optimize the IO requirements

Schema – which way to go? Star Schema Leading performance for dimensional schemas Ÿ Innovative usage of bitmap indexes and bitmap join indexes – – Index access instead of large table access Bitmap indexes 3 to 20 times smaller than btree indexes Ÿ Support for complex star schemas – – – Multiple fact tables Snowflake schemas Large number of dimensions Ÿ Fully integrated Ÿ Parallel execution Ÿ Partition Pruning

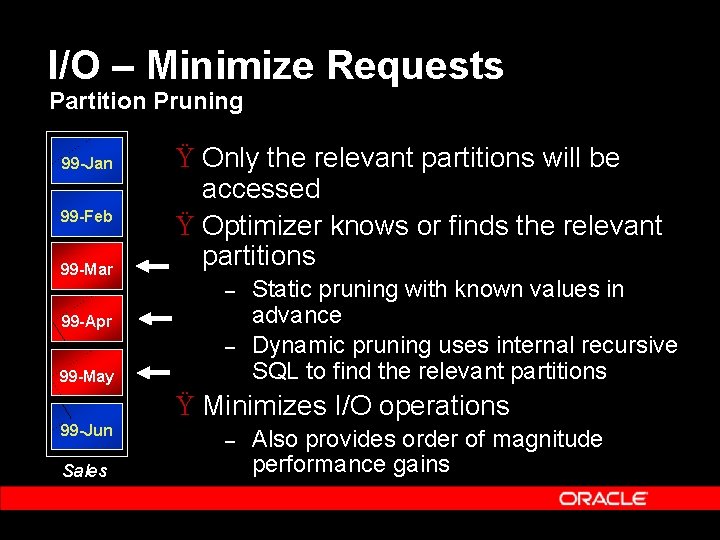

I/O – Minimize Requests Partition Pruning 99 -Jan 99 -Feb 99 -Mar Ÿ Only the relevant partitions will be accessed Ÿ Optimizer knows or finds the relevant partitions – 99 -Apr – 99 -May Static pruning with known values in advance Dynamic pruning uses internal recursive SQL to find the relevant partitions Ÿ Minimizes I/O operations 99 -Jun Sales – Also provides order of magnitude performance gains

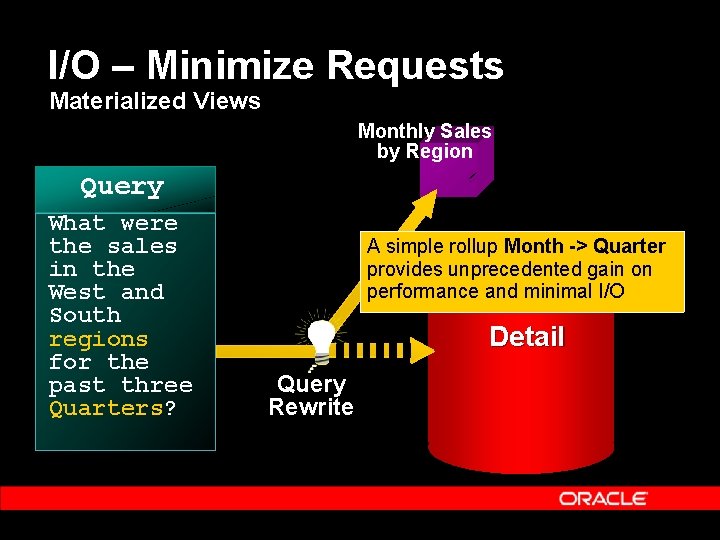

I/O – Minimize Requests Materialized Views Monthly Sales by Region Query What were the sales in the West and South regions for the past three Quarters? A simple rollup Month -> Quarter provides unprecedented gain on performance and minimal I/O Detail Query Rewrite

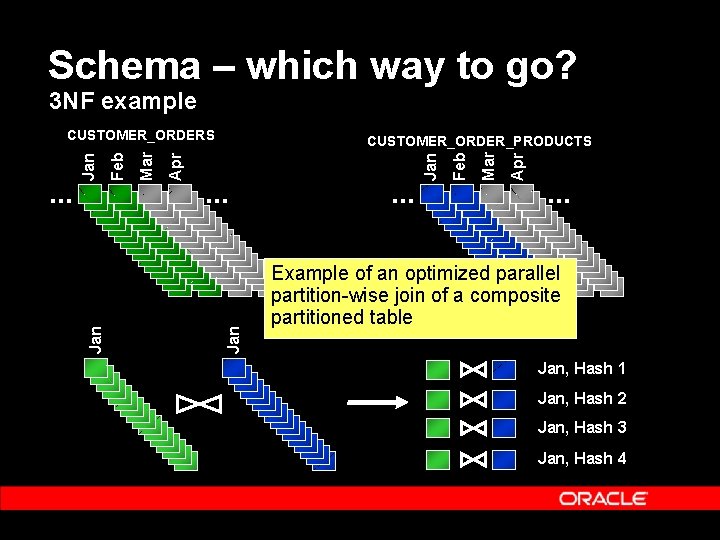

Schema – which way to go? 3 NF example Apr Mar . . . Feb . . . Jan Apr Mar Feb CUSTOMER_ORDER_PRODUCTS Jan . . . Jan CUSTOMER_ORDERS . . . Example of an optimized parallel partition-wise join of a composite partitioned table Jan, Hash 1 Jan, Hash 2 Jan, Hash 3 Jan, Hash 4

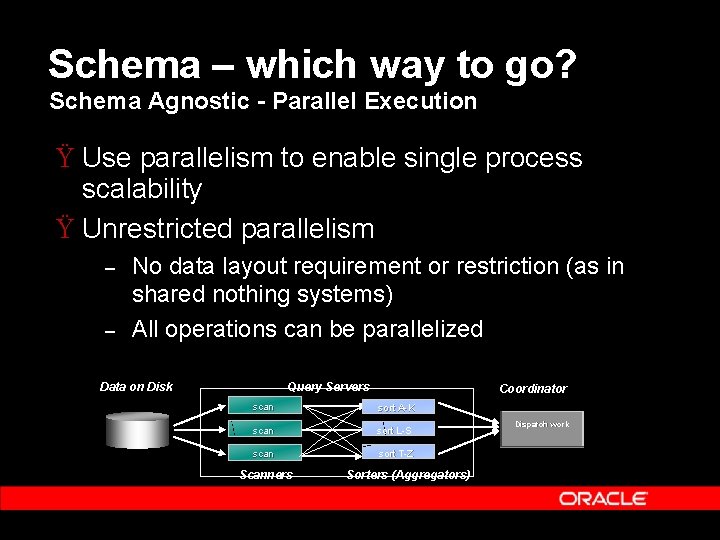

Schema – which way to go? Schema Agnostic - Parallel Execution Ÿ Use parallelism to enable single process scalability Ÿ Unrestricted parallelism – – No data layout requirement or restriction (as in shared nothing systems) All operations can be parallelized Data on Disk Query Servers Coordinator scan sort A-K scan sort L-S scan sort T-Z Scanners Sorters (Aggregators) Dispatch work

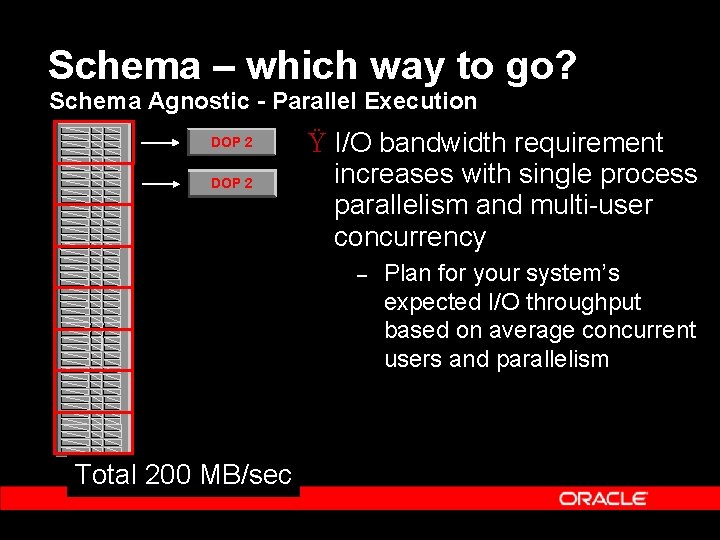

Schema – which way to go? Schema Agnostic - Parallel Execution DOP 2 Ÿ I/O bandwidth requirement increases with single process parallelism and multi-user concurrency – Total 200 MB/sec Plan for your system’s expected I/O throughput based on average concurrent users and parallelism

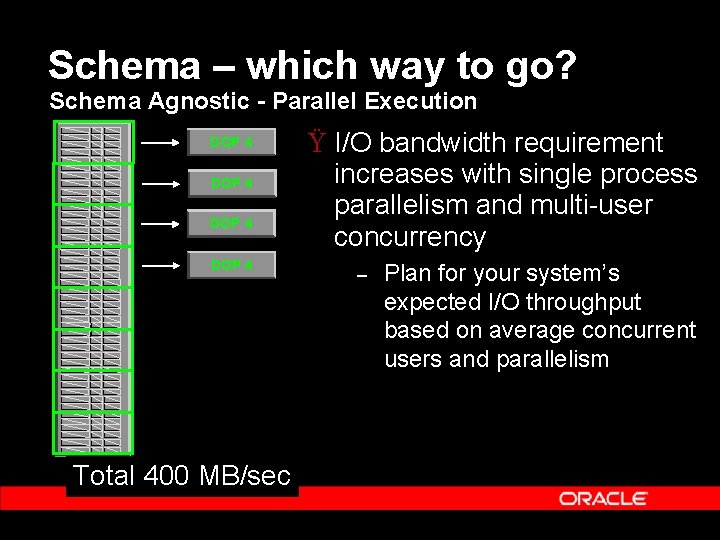

Schema – which way to go? Schema Agnostic - Parallel Execution DOP 4 Total 400 MB/sec Ÿ I/O bandwidth requirement increases with single process parallelism and multi-user concurrency – Plan for your system’s expected I/O throughput based on average concurrent users and parallelism

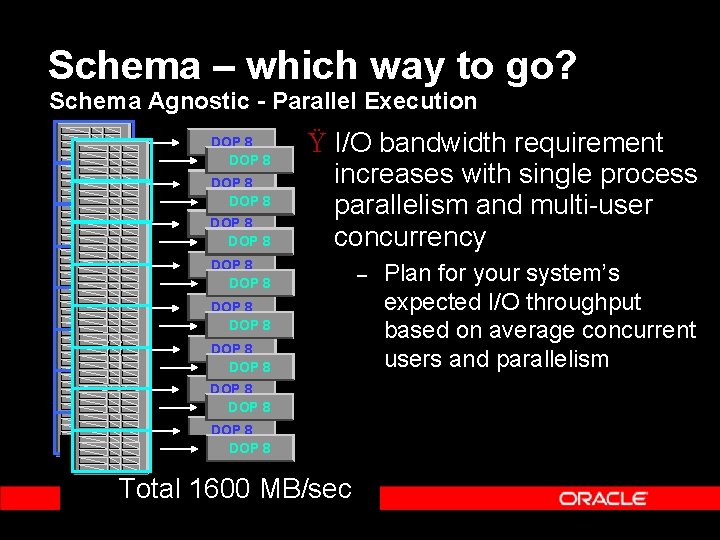

Schema – which way to go? Schema Agnostic - Parallel Execution DOP 8 DOP 8 Ÿ I/O bandwidth requirement increases with single process parallelism and multi-user concurrency DOP 8 DOP 8 DOP 8 Total 8001600 MB/sec Total MB/sec – Plan for your system’s expected I/O throughput based on average concurrent users and parallelism

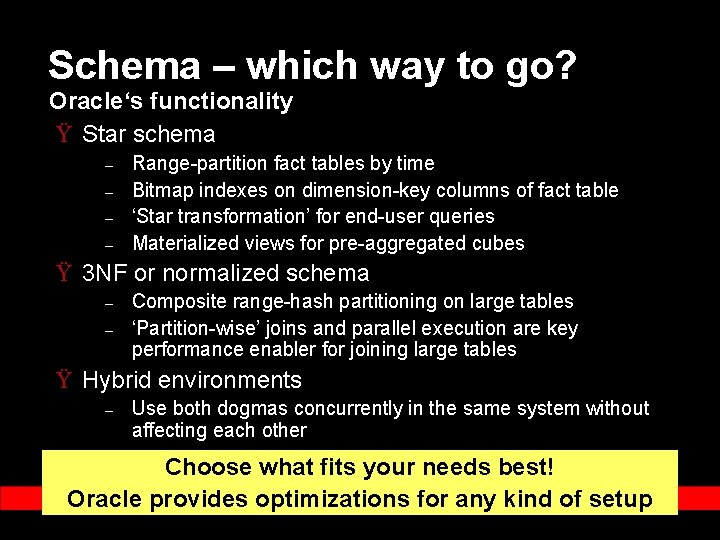

Schema – which way to go? Oracle‘s functionality Ÿ Star schema – – Range-partition fact tables by time Bitmap indexes on dimension-key columns of fact table ‘Star transformation’ for end-user queries Materialized views for pre-aggregated cubes Ÿ 3 NF or normalized schema – – Composite range-hash partitioning on large tables ‘Partition-wise’ joins and parallel execution are key performance enabler for joining large tables Ÿ Hybrid environments – Use both dogmas concurrently in the same system without affecting each other Choose what fits your needs best! Oracle provides optimizations for any kind of setup

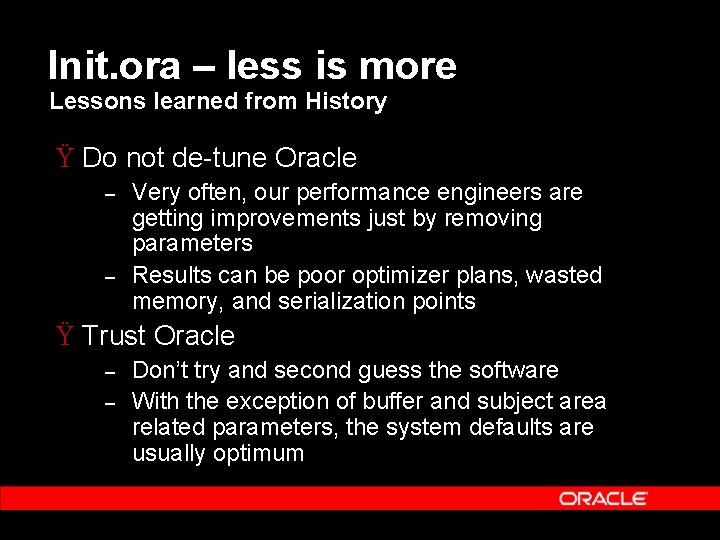

Init. ora – less is more Lessons learned from History Ÿ Do not de-tune Oracle – – Very often, our performance engineers are getting improvements just by removing parameters Results can be poor optimizer plans, wasted memory, and serialization points Ÿ Trust Oracle – – Don’t try and second guess the software With the exception of buffer and subject area related parameters, the system defaults are usually optimum

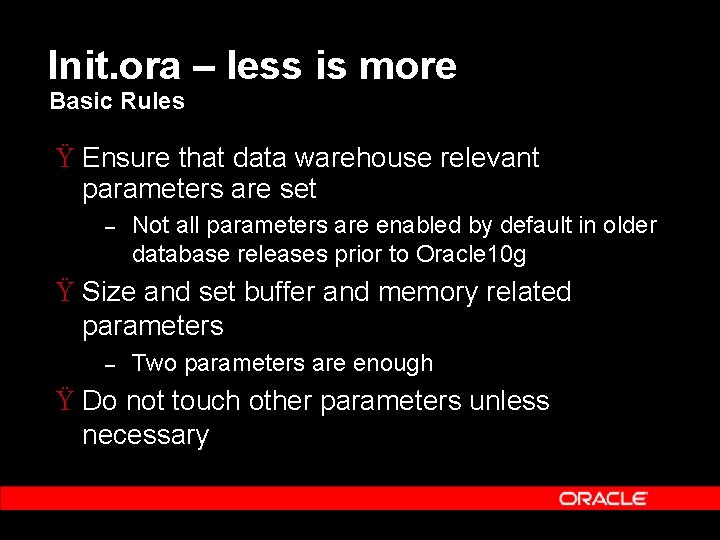

Init. ora – less is more Basic Rules Ÿ Ensure that data warehouse relevant parameters are set – Not all parameters are enabled by default in older database releases prior to Oracle 10 g Ÿ Size and set buffer and memory related parameters – Two parameters are enough Ÿ Do not touch other parameters unless necessary

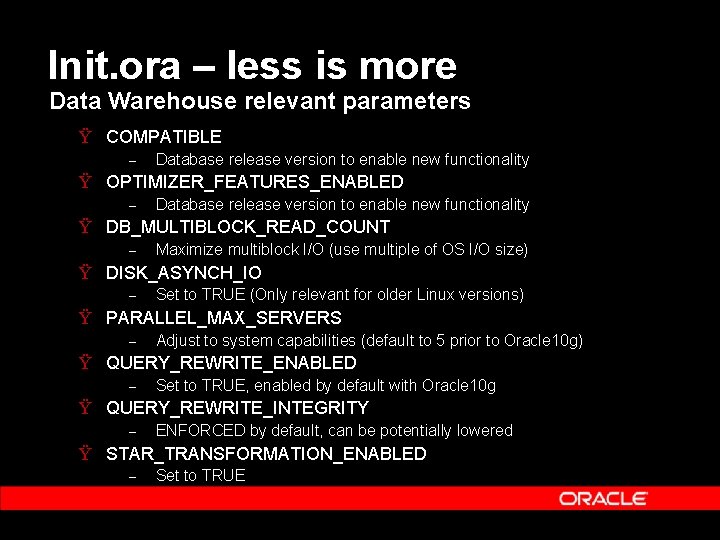

Init. ora – less is more Data Warehouse relevant parameters Ÿ COMPATIBLE – Database release version to enable new functionality Ÿ OPTIMIZER_FEATURES_ENABLED – Database release version to enable new functionality Ÿ DB_MULTIBLOCK_READ_COUNT – Maximize multiblock I/O (use multiple of OS I/O size) Ÿ DISK_ASYNCH_IO – Set to TRUE (Only relevant for older Linux versions) Ÿ PARALLEL_MAX_SERVERS – Adjust to system capabilities (default to 5 prior to Oracle 10 g) Ÿ QUERY_REWRITE_ENABLED – Set to TRUE, enabled by default with Oracle 10 g Ÿ QUERY_REWRITE_INTEGRITY – ENFORCED by default, can be potentially lowered Ÿ STAR_TRANSFORMATION_ENABLED – Set to TRUE

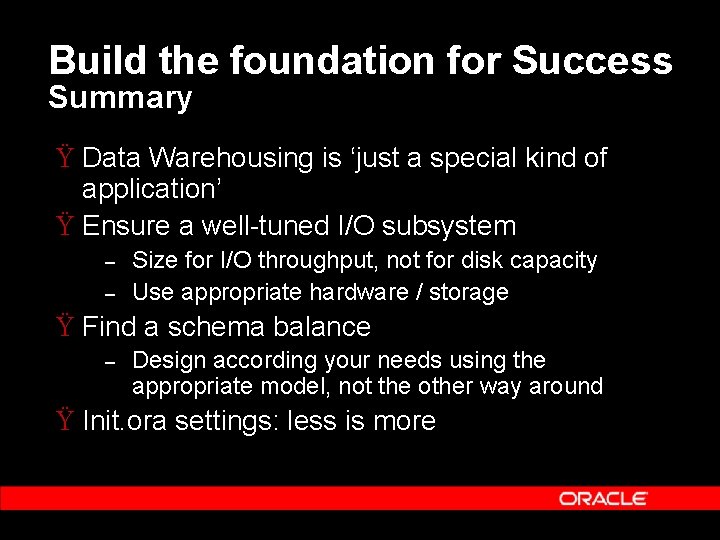

Build the foundation for Success Summary Ÿ Data Warehousing is ‘just a special kind of application’ Ÿ Ensure a well-tuned I/O subsystem – – Size for I/O throughput, not for disk capacity Use appropriate hardware / storage Ÿ Find a schema balance – Design according your needs using the appropriate model, not the other way around Ÿ Init. ora settings: less is more

Agenda Ÿ Oracle 10 g for data warehousing - short trip back in the history – Continuous innovation over decades Ÿ Adoption trends and drivers – What do we see in the market Ÿ Design and build a Data Warehouse – – Ensure a well-balanced system Optimize Oracle Ÿ Oracle Database 10 g. R 2 – sneak preview

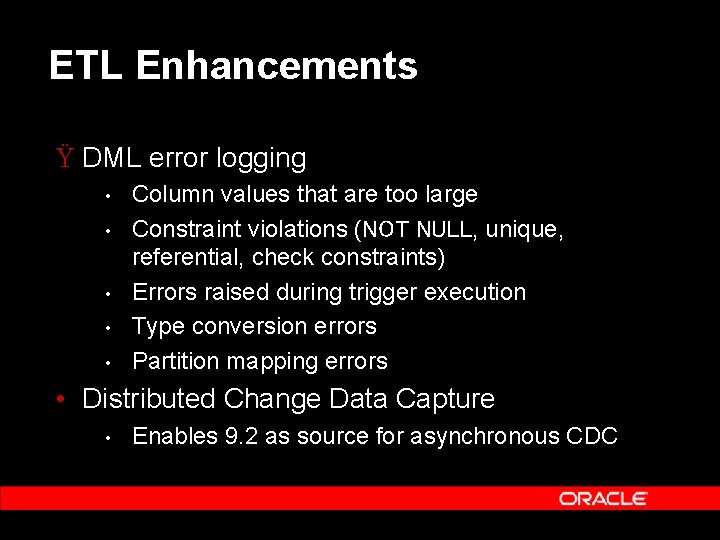

ETL Enhancements Ÿ DML error logging • • • Column values that are too large Constraint violations (NOT NULL, unique, referential, check constraints) Errors raised during trigger execution Type conversion errors Partition mapping errors • Distributed Change Data Capture • Enables 9. 2 as source for asynchronous CDC

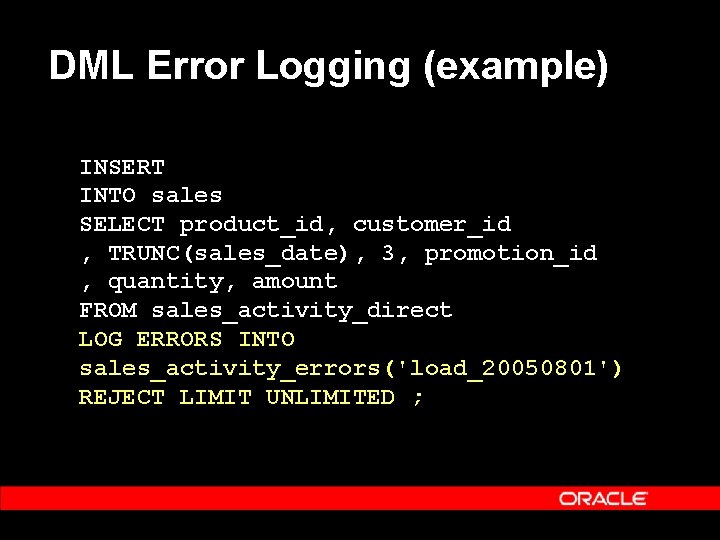

DML Error Logging (example) INSERT INTO sales SELECT product_id, customer_id , TRUNC(sales_date), 3, promotion_id , quantity, amount FROM sales_activity_direct LOG ERRORS INTO sales_activity_errors('load_20050801') REJECT LIMIT UNLIMITED ;

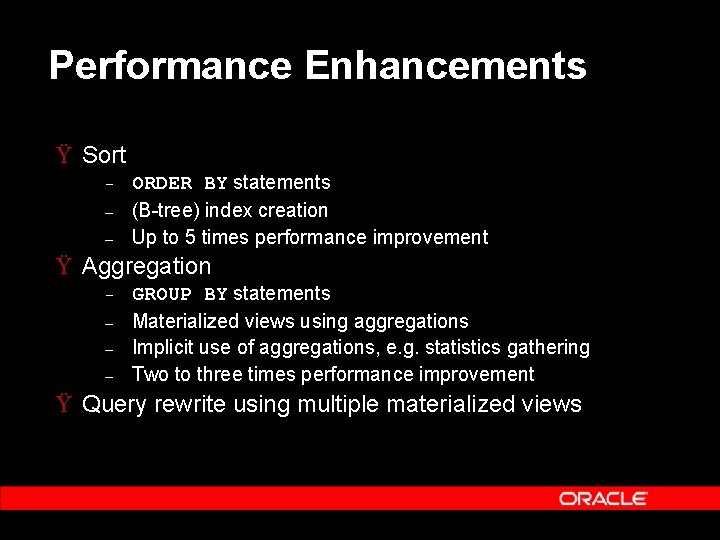

Performance Enhancements Ÿ Sort – – – ORDER BY statements (B-tree) index creation Up to 5 times performance improvement Ÿ Aggregation – – GROUP BY statements Materialized views using aggregations Implicit use of aggregations, e. g. statistics gathering Two to three times performance improvement Ÿ Query rewrite using multiple materialized views

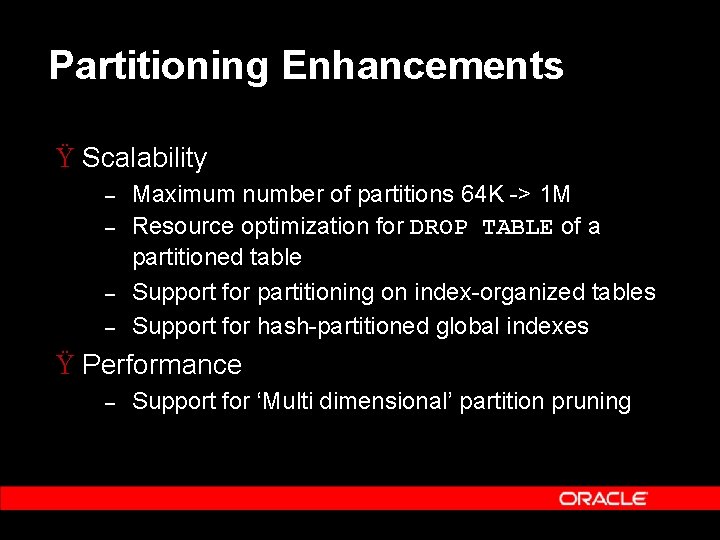

Partitioning Enhancements Ÿ Scalability – – Maximum number of partitions 64 K -> 1 M Resource optimization for DROP TABLE of a partitioned table Support for partitioning on index-organized tables Support for hash-partitioned global indexes Ÿ Performance – Support for ‘Multi dimensional’ partition pruning

Other Enhancements Ÿ Manageability – – SQL Access Advisor improvements Materialized view refresh improvements Ÿ Analytics – SQL model clause enhancements

Summary Ÿ Oracle 10 g for data warehousing - short trip back in the history – The most powerful and successful DW platform Ÿ Adoption trends and drivers – – Be visionary, though conservative Guarantee success and protect investments Ÿ Design and build a Data Warehouse – – Ensure a well-balanced system Optimize Oracle Ÿ Oracle Database 10 g. R 2 Beta – Interested?

Q& A QUESTIONS ANSWERS

- Slides: 48