Optimization driven by genetic algorithms for disruption prediction

Optimization driven by genetic algorithms for disruption prediction based on APODIS architecture
G. A. Rattá¹, J. Vega¹, A. Murari², S. Dormido-Canto³, R. Moreno¹ and JET Contributors*
EUROfusion Consortium, JET, Culham Science Centre, Abingdon, OX14 3DB, UK
¹ Laboratorio Nacional de Fusión, CIEMAT, Madrid, Spain
² Consorzio RFX, Padua, Italy
³ Departamento de Informática y Automática, UNED, Madrid, Spain
* See the Appendix of F. Romanelli et al., Proceedings of the 25th IAEA Fusion Energy Conference 2014, Saint Petersburg, Russia

Outline
• Introduction.
• Data-driven disruption predictors.
• The APODIS architecture.
• The general optimization.
• Genetic algorithms.
• Results.
• Summary and conclusions.

Introduction (1/2)
• Up to now, disruptions are unavoidable.
• Solving this problem is extremely important in view of ITER.
• Detecting disruptions in advance provides the opportunity to start mitigation/avoidance actions.
• The detection of precursors has to take into account the non-linear relationships among several plasma parameters.
• Data-driven techniques are used to predict them.
• At JET, the highest prediction rates have been achieved using the APODIS architecture.
• This configuration has been revised and upgraded (mainly regarding the selection of plasma signals and features to analyze).
• Yet a global optimization, covering the number of machine learning models, their width and the tuning of their internal parameters, has not been carried out.
• A fast optimization method may provide hints to uncover how and why disruptions evolve and occur.
• The changes in the global shape of the predictors and in the optimal set of signals to analyze at different campaigns could be extremely relevant.

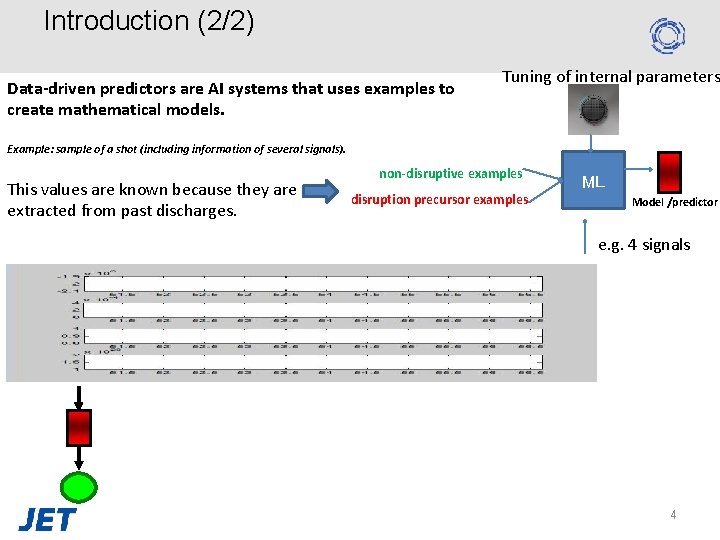

Introduction (2/2)
Data-driven predictors are AI systems that use examples to create mathematical models.
• Example: a sample of a shot (including information from several signals, e.g. 4 signals). These values are known because they are extracted from past discharges.
• Non-disruptive examples and disruption precursor examples are used to tune the internal parameters of the ML model (the predictor).

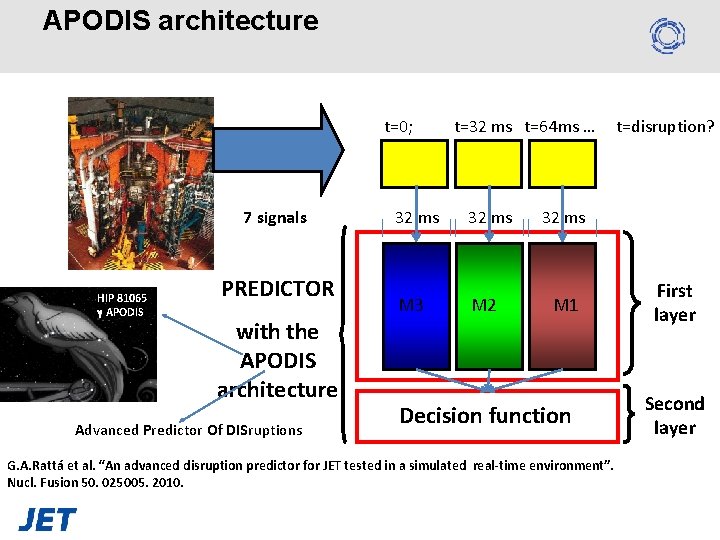

APODIS architecture
APODIS: Advanced Predictor Of DISruptions.
Predictor with the APODIS architecture (7 signals):
• First layer: three models (M1, M2, M3), each analyzing a 32 ms window of the signals (t=0; t=32 ms; t=64 ms; …).
• Second layer: a decision function that answers "t=disruption?".
G. A. Rattá et al. “An advanced disruption predictor for JET tested in a simulated real-time environment”. Nucl. Fusion 50, 025005 (2010).

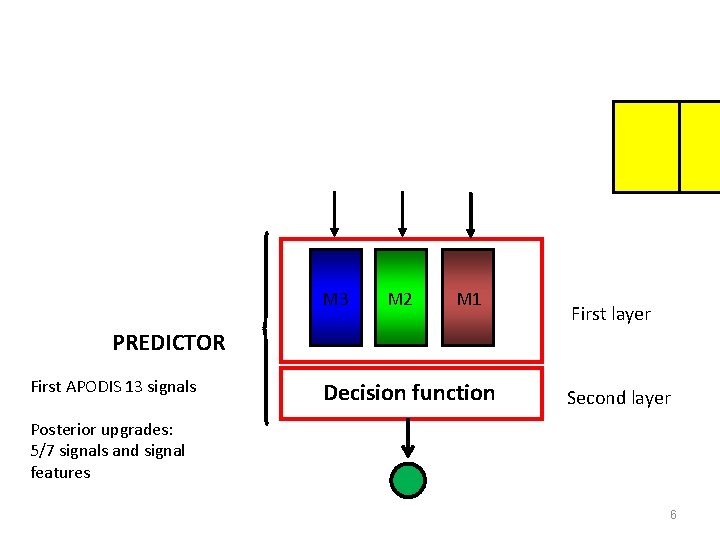

First APODIS: 13 signals.
• First layer: models M1, M2, M3.
• Second layer: decision function.
Later upgrades: 5/7 signals and signal features.

The optimization problem
But why 7 signals, and why 3 models of 32 ms in the first layer? Depending on the device and the training data (the set of examples used to create the predictor), the optimal structure may change.
Finding it requires evaluating the best combination of:
• The number of models in the first layer (3?).
• The width of the models (32 ms?).
• The internal parameters.
Required upgrade: including these variables in the main optimization code would make it possible to obtain a quasi-optimal (or even optimal) data-driven system.
Search space:
• Models in the first layer: 4 values {1; 2; 3; 4}.
• Window width: 4 values {8; 16; 32; 64} ms.
• Gamma values: 8 {0.001; 0.005; 0.01; 0.05; 0.1; 0.5; 1; 10}.
• C parameter values: 8 {0.01; 0.1; 1; 5; 10; 50; 100; 1000}.
• Signals and features: 18.
• Total = 42 (n = 42).
At ~3 s per train/test, an exhaustive global optimization would take 418380 years; genetic algorithms take 4 hours.
Signals:
1. Plasma current (PC).
2. Poloidal beta (PB).
3. Poloidal beta time derivative (PB_d).
4. Mode lock amplitude (ML).
5. Safety factor at 95% of minor radius (Q95).
6. Safety factor at 95% of minor radius time derivative (Q95_d).
7. Total input power (PTOT).
8. Plasma internal inductance (LI).
9. Plasma internal inductance time derivative (LI_d).
10. Plasma vertical centroid position (PVP).
11. Plasma density (n).
12. Stored diamagnetic energy time derivative (Wdia).
13. Net power (Pnet).
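The cost of an exhaustive search can be checked with a short calculation. This is only a sketch: it assumes the 42 elements behave as independent on/off choices, giving about 2^42 combinations, an assumption chosen because it reproduces the quoted 418380 years. The names `N_ELEMENTS`, `SECONDS_PER_EVAL` and `exhaustive_search_years` are illustrative, and a 365-day year is assumed.

```python
# Assumption: each of the 42 elements is an independent on/off choice,
# so an exhaustive search must evaluate about 2**42 configurations.
N_ELEMENTS = 4 + 4 + 8 + 8 + 18      # models + widths + gammas + Cs + signals/features
SECONDS_PER_EVAL = 3                 # "~3 secs per train/test"
SECONDS_PER_YEAR = 365 * 24 * 3600   # assumed 365-day year


def exhaustive_search_years(n_elements=N_ELEMENTS, seconds_per_eval=SECONDS_PER_EVAL):
    """Return the years needed to train/test every on/off combination."""
    n_combinations = 2 ** n_elements
    return n_combinations * seconds_per_eval / SECONDS_PER_YEAR


if __name__ == "__main__":
    print(N_ELEMENTS)
    print(round(exhaustive_search_years()))
```

Under these assumptions the result is of the order of 4 × 10^5 years, which is why a guided search such as a GA is needed.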

Genetic Algorithms (GA)
• Computational methods inspired by natural selection.
• In nature, better adapted individuals have higher chances to survive and reproduce.
• These individuals will transmit and combine their genetic material, creating children.
• The ones not adapted will have lower chances to transmit their DNA/RNA.
• GA emulates this behavior:
1 - Creation of a population of individuals (each individual represents a possible solution to a problem).
2 - Evaluation of each individual of the population according to the objectives of the problem. This requires a defined metric to test how good each individual is at solving the problem (i.e. the fitness function, FF).
3 - Selection of parents (a higher probability to be chosen as parent is assigned to those individuals with higher FF values).
4 - Creation of children as a combination of the parents’ genes (using genetic operators such as crossover, mutation and reproduction).
5 - Unless an ending condition is satisfied, iterate from step 2, where the new population (created in step 4) is evaluated.
• Instead of exhaustively exploring all the possible solutions, this method combines promising solutions to create newer ones that are likely to be improved versions of their predecessors.
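The five steps can be sketched as a short loop. This is a minimal illustration, not the code used for APODIS: the function name `run_ga`, the population size, the mutation rate, the single-point crossover and the toy fitness (counting ones) are all assumptions, and the fitness is assumed to be non-negative so it can be used as a selection weight.

```python
import random


def run_ga(fitness, n_genes, pop_size=20, generations=50, mutation_rate=0.02, seed=0):
    """Toy GA over bit strings; returns the best individual of all generations."""
    rng = random.Random(seed)
    # Step 1: random initial population (only the first generation is random).
    population = [[rng.randint(0, 1) for _ in range(n_genes)] for _ in range(pop_size)]
    best, best_score = None, float("-inf")
    # Step 5: the ending condition here is simply a fixed number of generations.
    for _ in range(generations):
        # Step 2: evaluate every individual with the fitness function (FF).
        scores = [fitness(ind) for ind in population]
        for ind, score in zip(population, scores):
            if score > best_score:
                best, best_score = ind[:], score
        # Step 3: parents are drawn with probability proportional to their FF.
        weights = [s + 1e-9 for s in scores]  # small offset avoids all-zero weights
        children = []
        while len(children) < pop_size:
            mother, father = rng.choices(population, weights=weights, k=2)
            # Step 4: single-point crossover followed by bit-flip mutation.
            cut = rng.randint(1, n_genes - 1)
            child = mother[:cut] + father[cut:]
            child = [1 - g if rng.random() < mutation_rate else g for g in child]
            children.append(child)
        population = children  # the children replace the previous population
    return best, best_score


if __name__ == "__main__":
    # Hypothetical toy problem: maximize the number of ones in a 16-bit string.
    print(run_ga(fitness=sum, n_genes=16))
```

In the real problem the call to `fitness` would train and test a predictor, which is where the ~3 s per evaluation is spent.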

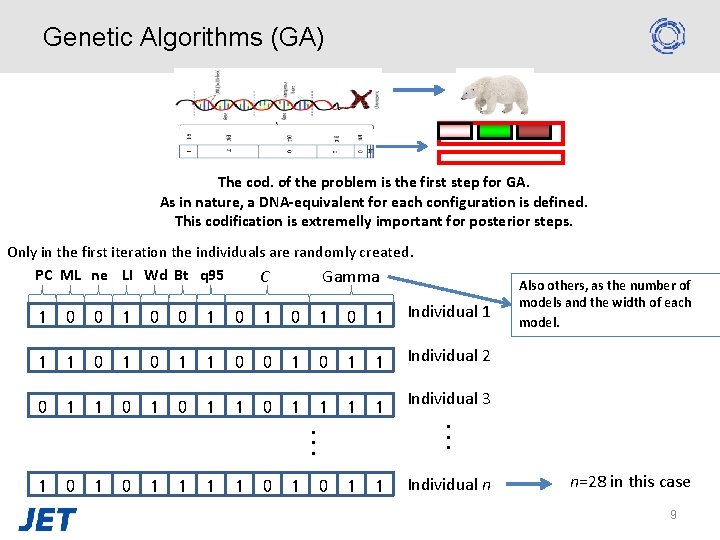

Genetic Algorithms (GA)
The codification of the problem is the first step for GA. As in nature, a DNA-equivalent is defined for each configuration. This codification is extremely important for the subsequent steps. Only in the first iteration are the individuals randomly created.
Each individual (Individual 1, Individual 2, Individual 3, …, Individual n; n=28 in this case) is a string of binary genes (e.g. 1 0 0 1 0 1 0 1) covering PC, ML, ne, LI, Wd, Bt, q95, C and Gamma, and also others, such as the number of models and the width of each model.

Genetic Algorithms (GA)
Genotype → Phenotype → Fitness
DNA is not an animal, but a digital code to create one. The same happens with the DNA-equivalent strings: a building program (BP) is required to read the instructions and create the phenotype (the disruption predictor candidate) from them.
Each individual (Individual 1, 2, 3, …, n) is passed to the building program, which creates the corresponding predictor (Predictor 1, 2, 3, …, n); the test program then evaluates each predictor and returns its fitness (e.g. 80, 12, 90).
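A building program of this kind can be sketched as a decoder from bit string to predictor configuration. The gene layout below is hypothetical (7 signal on/off bits, then 3 bits for C, 3 for Gamma, 2 for the number of models and 2 for the window width); only the value lists come from the optimization slide, with the seventh C value read as 100. The function `build_phenotype` returns a configuration only and does not train a predictor.

```python
# Value lists taken from the optimization slide; the gene layout is an assumption.
SIGNALS = ["PC", "ML", "ne", "LI", "Wd", "Bt", "q95"]
C_VALUES = [0.01, 0.1, 1, 5, 10, 50, 100, 1000]
GAMMA_VALUES = [0.001, 0.005, 0.01, 0.05, 0.1, 0.5, 1, 10]
N_MODELS = [1, 2, 3, 4]
WINDOW_MS = [8, 16, 32, 64]


def bits_to_index(bits):
    """Interpret a list of 0/1 genes as an unsigned binary number."""
    index = 0
    for bit in bits:
        index = index * 2 + bit
    return index


def build_phenotype(genotype):
    """Decode a genotype (hypothetical 17-bit layout) into a predictor configuration."""
    n_sig = len(SIGNALS)
    expected = n_sig + 3 + 3 + 2 + 2
    if len(genotype) != expected:
        raise ValueError(f"expected {expected} genes, got {len(genotype)}")
    # One on/off gene per candidate signal.
    signals = [name for name, bit in zip(SIGNALS, genotype) if bit]
    pos = n_sig
    c = C_VALUES[bits_to_index(genotype[pos:pos + 3])]
    pos += 3
    gamma = GAMMA_VALUES[bits_to_index(genotype[pos:pos + 3])]
    pos += 3
    n_models = N_MODELS[bits_to_index(genotype[pos:pos + 2])]
    pos += 2
    window_ms = WINDOW_MS[bits_to_index(genotype[pos:pos + 2])]
    return {"signals": signals, "C": c, "gamma": gamma,
            "n_models": n_models, "window_ms": window_ms}


if __name__ == "__main__":
    individual = [1, 1, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 0, 1, 0, 1, 0]
    print(build_phenotype(individual))
```

Because every bit pattern decodes to a valid configuration, crossover and mutation can never produce an individual that the building program cannot read.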

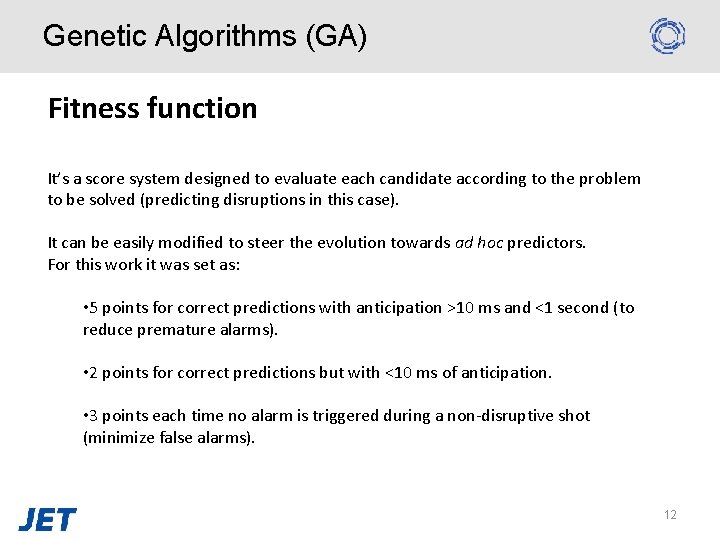

Genetic Algorithms (GA)
Fitness function
It is a scoring system designed to evaluate each candidate according to the problem to be solved (predicting disruptions in this case). It can easily be modified to steer the evolution towards ad hoc predictors. For this work it was set as:
• 5 points for each correct prediction with anticipation >10 ms and <1 second (to reduce premature alarms).
• 2 points for each correct prediction with <10 ms of anticipation.
• 3 points each time no alarm is triggered during a non-disruptive shot (to minimize false alarms).
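The scoring rules can be written down as a small function. This is a sketch under stated assumptions: the `ShotResult` record and its field names are hypothetical, missed disruptions, false alarms and alarms raised 1 s or more in advance are assumed to score 0, and an anticipation of exactly 10 ms is assumed to fall in the 2-point class.

```python
from typing import NamedTuple, Optional


class ShotResult(NamedTuple):
    """Outcome of one test shot (hypothetical record; field names are assumptions)."""
    disruptive: bool
    alarm: bool
    anticipation_s: Optional[float] = None  # alarm-to-disruption time, in seconds


def shot_points(result):
    """Score a single shot with the 5/2/3-point scheme."""
    if not result.disruptive:
        # 3 points when a non-disruptive shot finishes without any alarm.
        return 0 if result.alarm else 3
    if not result.alarm or result.anticipation_s is None:
        return 0  # missed disruption (assumed to score nothing)
    if 0.010 < result.anticipation_s < 1.0:
        return 5  # correct prediction, more than 10 ms and less than 1 s ahead
    if 0.0 <= result.anticipation_s <= 0.010:
        return 2  # correct but late prediction (10 ms or less, by assumption)
    return 0      # premature alarm, 1 s or more ahead (assumed to score nothing)


def fitness(results):
    """FF of a candidate predictor: sum of the points over all test shots."""
    return sum(shot_points(r) for r in results)


if __name__ == "__main__":
    shots = [
        ShotResult(disruptive=True, alarm=True, anticipation_s=0.2),
        ShotResult(disruptive=True, alarm=True, anticipation_s=0.005),
        ShotResult(disruptive=False, alarm=False),
    ]
    print(fitness(shots))
```

Changing the three weights is enough to steer the evolution, for example towards earlier alarms at the price of more false alarms.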

Genetic Algorithms (GA)
Lottery system: higher chances of being selected as parents are assigned to those individuals with higher FF values. To create children, the selected parents' genes are mixed using the crossover operator. The new population of children replaces the previous population.
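The lottery can be sketched as a roulette wheel in which each individual owns a slice proportional to its FF. The helper names below are illustrative, the single-point crossover is an assumed choice of operator, and the FF values are assumed to be non-negative.

```python
import random


def selection_probabilities(ff_values):
    """Chance of each individual being drawn as a parent, proportional to its FF."""
    total = sum(ff_values)
    if total <= 0:
        # Degenerate case: no individual scored, so fall back to a uniform draw.
        return [1.0 / len(ff_values)] * len(ff_values)
    return [ff / total for ff in ff_values]


def pick_parent(population, ff_values, rng):
    """Spin the wheel once and return the individual it lands on."""
    threshold = rng.random()
    cumulative = 0.0
    for individual, p in zip(population, selection_probabilities(ff_values)):
        cumulative += p
        if threshold < cumulative:
            return individual
    return population[-1]  # guard against floating-point rounding


def crossover(parent_a, parent_b, rng):
    """Single-point crossover: head of one parent, tail of the other."""
    cut = rng.randint(1, len(parent_a) - 1)
    return parent_a[:cut] + parent_b[cut:]


def next_generation(population, ff_values, rng):
    """Build a new population of children that replaces the previous one."""
    return [
        crossover(pick_parent(population, ff_values, rng),
                  pick_parent(population, ff_values, rng), rng)
        for _ in population
    ]


if __name__ == "__main__":
    rng = random.Random(0)
    population = [[0, 0, 0, 0], [1, 1, 1, 1], [1, 0, 1, 0]]
    print(selection_probabilities([80, 12, 90]))
    print(next_generation(population, [80, 12, 90], rng))
```

With FF values of 80, 12 and 90, the second individual can still become a parent, only rarely, which keeps some diversity in the population.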

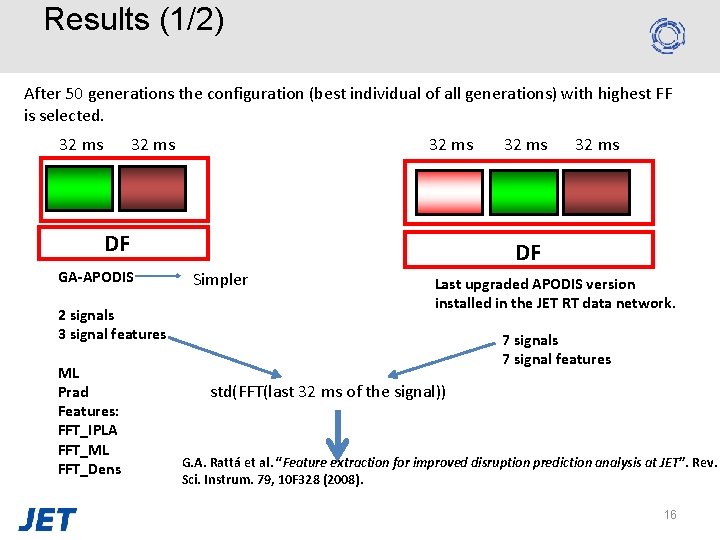

Results (1/2)
After 50 generations, the configuration with the highest FF (the best individual of all generations) is selected.
• GA-APODIS (simpler): 32 ms window and decision function (DF); 2 signals (ML, Prad); 3 signal features (FFT_IPLA, FFT_ML, FFT_Dens).
• Last upgraded APODIS version installed in the JET RT data network: 32 ms windows and decision function (DF); 7 signals; 7 signal features, each computed as std(FFT(last 32 ms of the signal)).
G. A. Rattá et al. “Feature extraction for improved disruption prediction analysis at JET”. Rev. Sci. Instrum. 79, 10F328 (2008).
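The feature std(FFT(last 32 ms of the signal)) can be illustrated with the standard library alone. This is a sketch under several assumptions that the slides do not state: a 1 kHz sampling rate (so 32 ms is 32 samples), the standard deviation taken over the magnitudes of all DFT bins, and a population (not sample) standard deviation. The function names are illustrative.

```python
import cmath
import statistics


def dft_magnitudes(samples):
    """Magnitude of each bin of a plain O(n^2) discrete Fourier transform."""
    n = len(samples)
    return [
        abs(sum(x * cmath.exp(-2j * cmath.pi * k * t / n) for t, x in enumerate(samples)))
        for k in range(n)
    ]


def std_fft_feature(signal, sample_rate_hz=1000.0, window_s=0.032):
    """std(|FFT|) over the last `window_s` seconds of `signal` (assumed definition)."""
    n_window = int(round(sample_rate_hz * window_s))
    if len(signal) < n_window:
        raise ValueError("signal is shorter than the analysis window")
    window = signal[-n_window:]  # keep only the most recent samples
    return statistics.pstdev(dft_magnitudes(window))


if __name__ == "__main__":
    # Hypothetical signal: flat at first, oscillating in the last 32 samples.
    flat = [1.0] * 32
    oscillating = [1.0 if i % 2 == 0 else -1.0 for i in range(32)]
    print(std_fft_feature(flat + oscillating))
```

A real-time implementation would use an FFT library rather than this quadratic DFT; the point here is only the shape of the computation.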

Results (2/2)
Train: database D1, 1178 shots (144 disruptive). Test: 467 shots (158 disruptive).

| Predictor | Ideal [%] | Late [%] | Total correct [%] | Premature [%] | False [%] |
|---|---|---|---|---|---|
| APODIS-Online (trained with database D1) | 73.41 | 12.65 | 86.076 | 6.96 | 1.31 |
| APODIS-GA (trained with database D1) | 89.24 | 2.53 | 91.77 | 3.16 | 3.55 |

Two further values appear on the slide without a recoverable label: 66.45 and 86.08.

Summary and conclusions
• Disruptions are a key problem in tokamaks, and their prediction makes mitigation actions possible.
• The best systems (in terms of prediction rates and times) have been achieved using data-driven systems.
• Up to now, a complete optimization algorithm able to consider all possible parameters (general structure, feature selection, kernel parameter tuning) had not been conceived to enhance APODIS results.
• Main achievements:
  • The prediction rates are remarkably higher using the same training/testing database (91.77% total correct predictions against 86.08%).
  • Simpler predictor: it requires fewer models in the first layer and needs to rely on only 4 signals.
  • Adjustable to circumstances: e.g. it can be programmed to trigger alarms with higher anticipation (even if that means triggering more false alarms), or the opposite.
• Not a solution for ITER yet (it still requires a considerably large database to be created), but…
  • The procedure can be performed over different sets of experimental campaigns.
  • The analysis of the changes in the shape and in the optimal set of signals selected in each period could provide hints to make better predictors…
  • …or even to shed some light on the physics of the phenomenon.

Thanks for your attention!

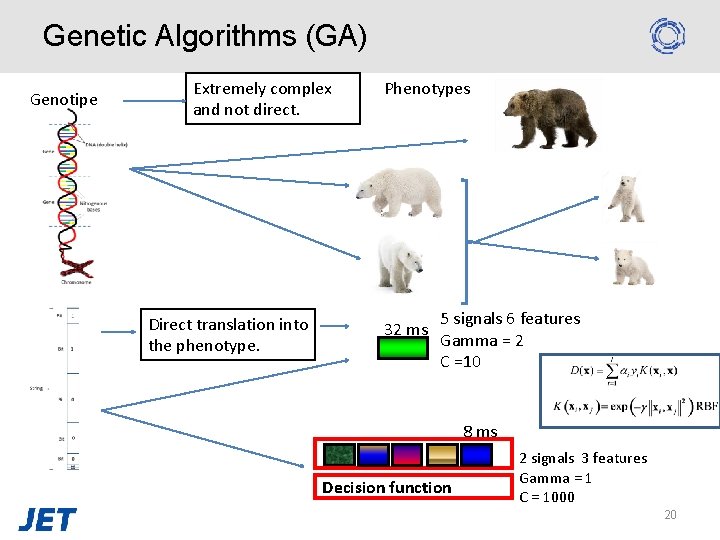

Genetic Algorithms (GA)
Genotype
• Extremely complex and not direct.
• Direct translation into the phenotype.
Phenotypes
• 32 ms; 5 signals; 6 features; Gamma = 2; C = 10.
• 8 ms; decision function; 2 signals; 3 features; Gamma = 1; C = 1000.
