
Optimal Learning
INFORMS TutORials, October 2008
Warren Powell, Peter Frazier
With research by Ilya Ryzhov
Princeton University
© 2008 Warren B. Powell

Outline
■ Introduction

Applications
■ Sports
  » Who should be in the batting lineup for a baseball team?
  » What is the best group of five basketball players out of a team of 12 to be your starting lineup?
  » Who are the best four people to man the four-person boat for crew racing?
  » Who will perform the best in competition for your gymnastics team?

Applications
■ Figuring out Manhattan:
  » Walking
  » Subway/walking
  » Taxi
  » Street bus
  » Driving

Applications
■ Biomedical research
  » How do we find the best drug to cure cancer?
  » There are millions of combinations, and laboratory budgets cannot test everything.
  » We need a method for sequencing experiments.

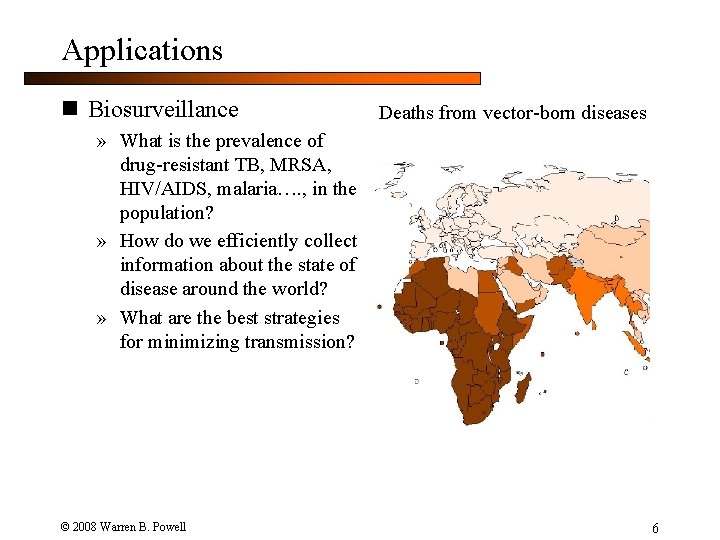

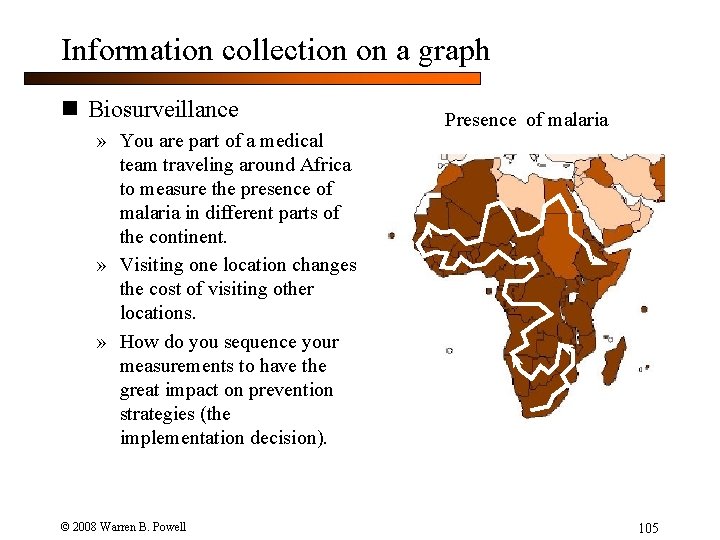

Applications
■ Biosurveillance
  » What is the prevalence of drug-resistant TB, MRSA, HIV/AIDS, malaria, ... in the population?
  » How do we efficiently collect information about the state of disease around the world?
  » What are the best strategies for minimizing transmission?
[Figure: deaths from vector-borne diseases]

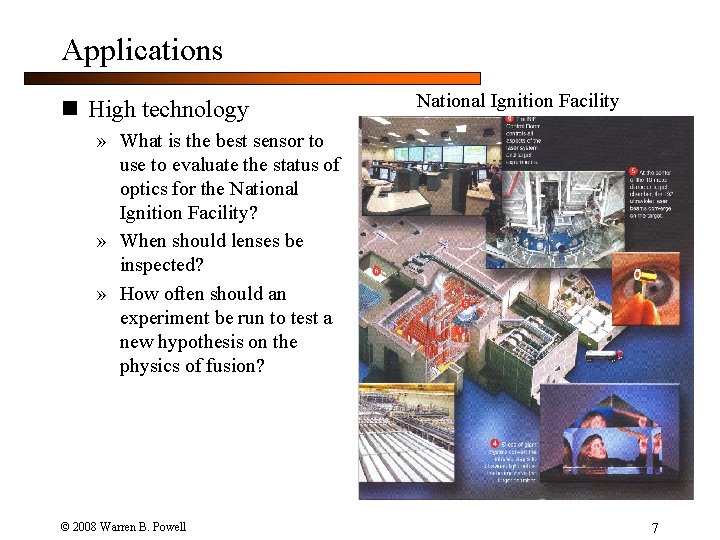

Applications
■ High technology
  » What is the best sensor to use to evaluate the status of optics for the National Ignition Facility?
  » When should lenses be inspected?
  » How often should an experiment be run to test a new hypothesis on the physics of fusion?
[Figure: National Ignition Facility]

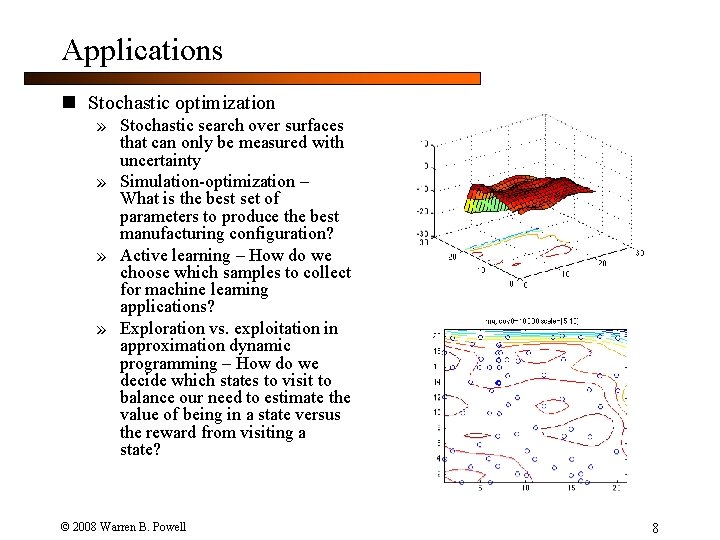

Applications
■ Stochastic optimization
  » Stochastic search over surfaces that can only be measured with uncertainty
  » Simulation-optimization: what set of parameters produces the best manufacturing configuration?
  » Active learning: how do we choose which samples to collect for machine learning applications?
  » Exploration vs. exploitation in approximate dynamic programming: how do we decide which states to visit, balancing our need to estimate the value of being in a state against the reward from visiting a state?

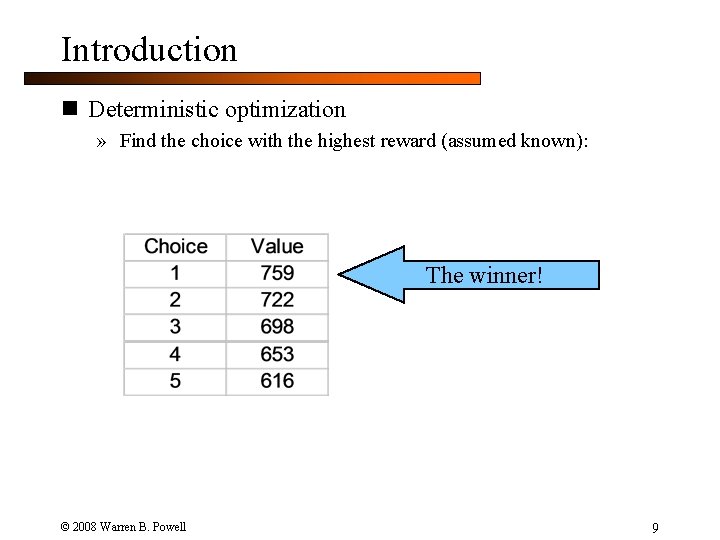

Introduction
■ Deterministic optimization
  » Find the choice with the highest reward (assumed known).
[Figure: rewards of the choices, with the highest marked "The winner!"]

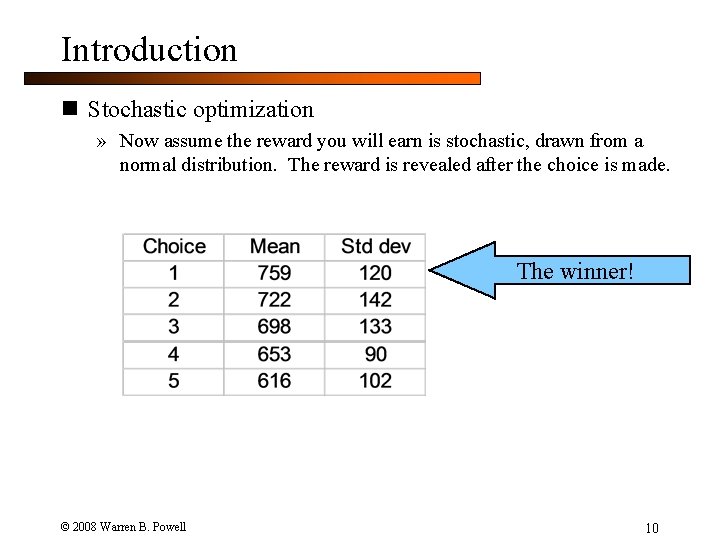

Introduction
■ Stochastic optimization
  » Now assume the reward you will earn is stochastic, drawn from a normal distribution. The reward is revealed after the choice is made.
[Figure: reward distributions of the choices, with the best marked "The winner!"]

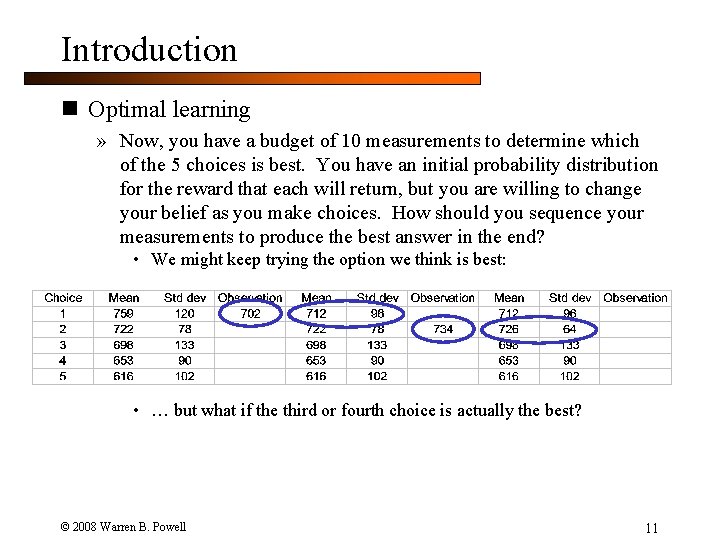

Introduction
■ Optimal learning
  » Now you have a budget of 10 measurements to determine which of the 5 choices is best. You have an initial probability distribution for the reward that each will return, but you are willing to change your belief as you make choices. How should you sequence your measurements to produce the best answer in the end?
    • We might keep trying the option we think is best ...
    • ... but what if the third or fourth choice is actually the best?

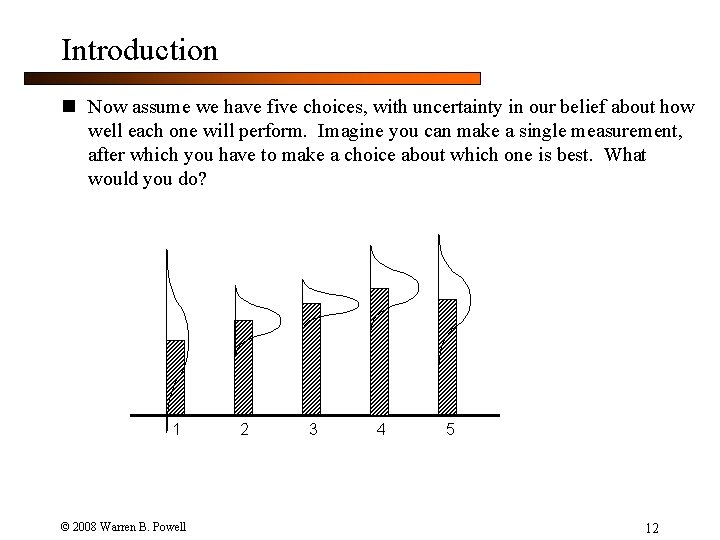

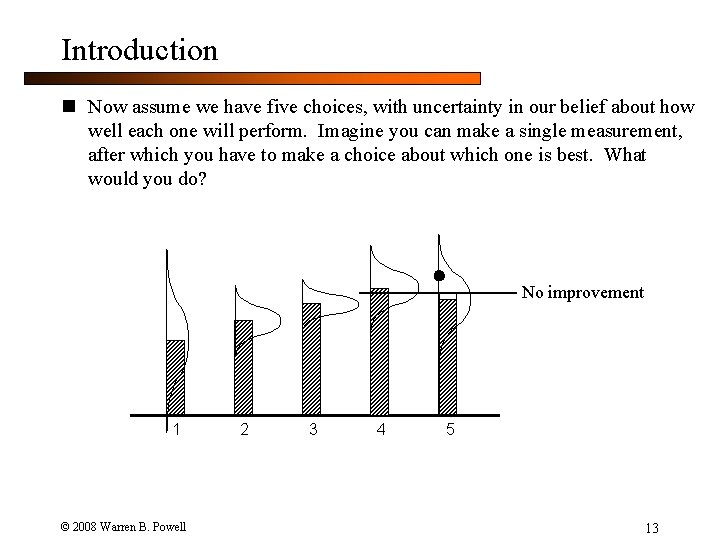

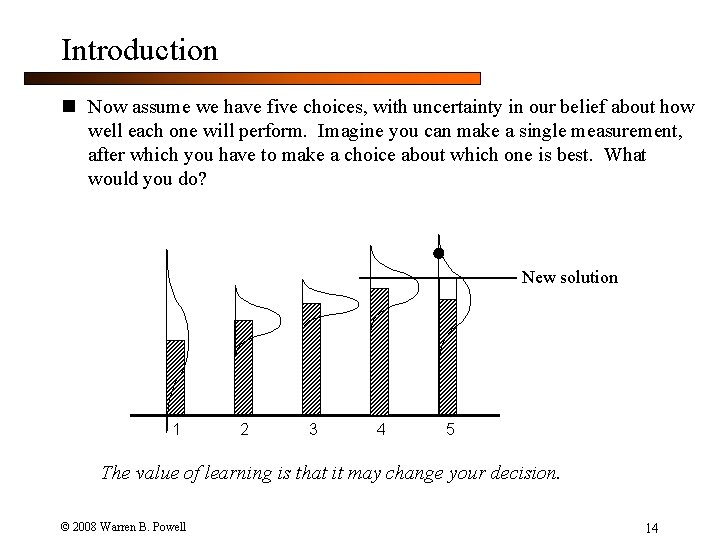

Introduction
■ Now assume we have five choices, with uncertainty in our belief about how well each one will perform. Imagine you can make a single measurement, after which you have to choose which one is best. What would you do?
  » If the measurement leaves the best-looking choice unchanged, there is no improvement.
  » If the measurement changes which choice looks best, we have a new solution.
■ The value of learning is that it may change your decision.
[Figure: beliefs about choices 1 to 5, before and after a single measurement]

Outline
■ Types of learning problems

Elements of a learning problem
■ Things we have to think about:
  » How do we make measurements? What is the nature of the measurement decision?
  » What is the effect of a measurement? How does it change our state of knowledge?
  » What do we do with what we learn from a measurement? What is the nature of the implementation decision?
  » How do we evaluate how well we have done with the results of our measurements?
  » Do we learn as we go, or are we able to make a series of measurements before solving a problem?

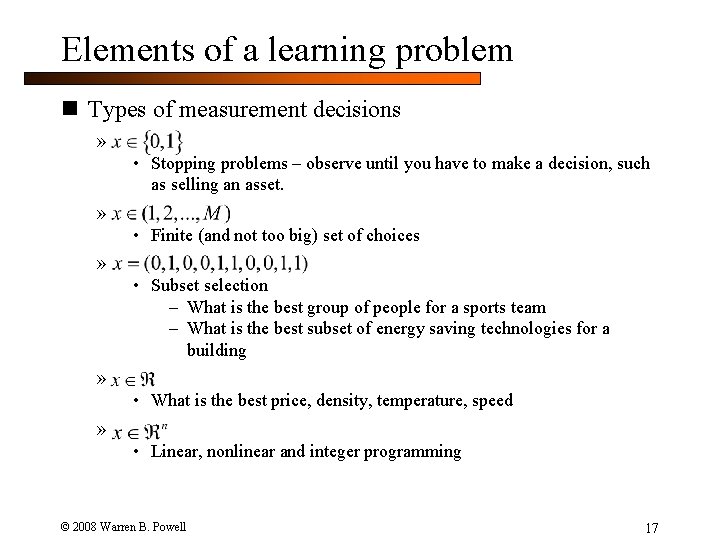

Elements of a learning problem
■ Types of measurement decisions
  » Stopping problems: observe until you have to make a decision, such as selling an asset.
  » Finite (and not too big) set of choices
  » Subset selection
    – What is the best group of people for a sports team?
    – What is the best subset of energy-saving technologies for a building?
  » What is the best price, density, temperature, speed?
  » Linear, nonlinear and integer programming

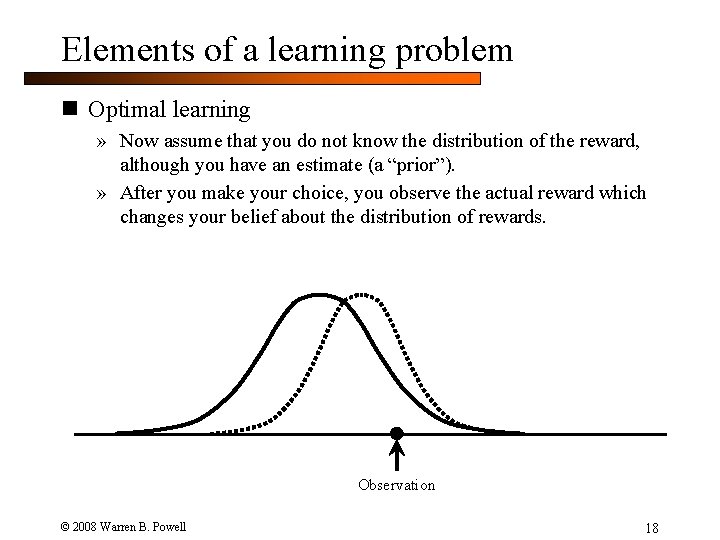

Elements of a learning problem
■ Optimal learning
  » Now assume that you do not know the distribution of the reward, although you have an estimate (a "prior").
  » After you make your choice, you observe the actual reward, which changes your belief about the distribution of rewards.
[Figure: belief distribution updated by an observation]

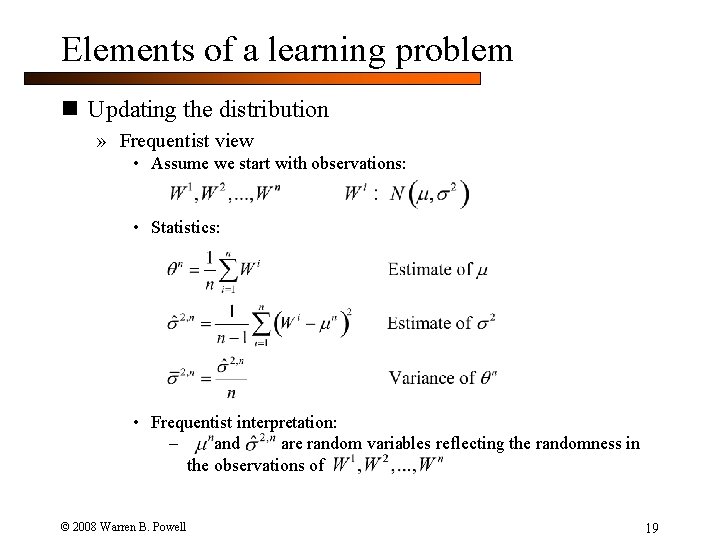

Elements of a learning problem
■ Updating the distribution
  » Frequentist view
    • Assume we start with a set of observations.
    • Statistics: the sample mean and sample variance of these observations.
    • Frequentist interpretation:
      – The sample mean and sample variance are random variables reflecting the randomness in the observations.

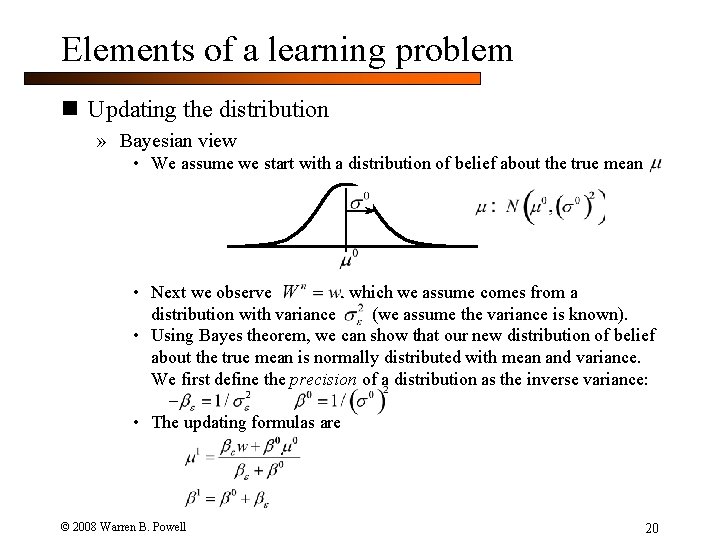

Elements of a learning problem
■ Updating the distribution
  » Bayesian view
    • We assume we start with a normal distribution of belief about the true mean, with mean $\theta^n$ and variance $\sigma^{2,n}$.
    • Next we observe $W^{n+1}$, which we assume comes from a distribution with variance $\sigma^2_W$ (we assume the variance is known).
    • Using Bayes' theorem, we can show that our new distribution of belief about the true mean is normally distributed with mean $\theta^{n+1}$ and variance $\sigma^{2,n+1}$. We first define the precision of a distribution as the inverse variance:
      – $\beta^n = 1/\sigma^{2,n}$ and $\beta^W = 1/\sigma^2_W$
    • The updating formulas are
      – $\theta^{n+1} = \dfrac{\beta^n \theta^n + \beta^W W^{n+1}}{\beta^n + \beta^W}$
      – $\beta^{n+1} = \beta^n + \beta^W$
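The Bayesian update for a normal belief with known measurement variance can be sketched in a few lines. This is a minimal illustration, not code from the talk: the function name `bayes_update` and the numbers in the usage lines are assumptions chosen only to make the arithmetic visible.

```python
def bayes_update(theta, beta, w, beta_w):
    """Update a normal belief (mean theta, precision beta) with observation w.

    beta_w is the precision (inverse variance) of the measurement noise,
    assumed known. Returns the posterior mean and posterior precision.
    """
    beta_new = beta + beta_w                           # precisions add
    theta_new = (beta * theta + beta_w * w) / beta_new  # precision-weighted mean
    return theta_new, beta_new


# Hypothetical numbers: prior mean 20 with standard deviation 10,
# one observation of 30 with measurement standard deviation 5.
theta, beta = bayes_update(20.0, 1 / 10.0**2, 30.0, 1 / 5.0**2)
posterior_variance = 1 / beta
```

Because the measurement is more precise than the prior in this example, the posterior mean moves most of the way toward the observation, and the posterior variance is smaller than both the prior variance and the measurement variance.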

Elements of a learning problem
■ Frequentist vs. Bayesian
  » For optimal learning applications, we are generally in the situation where we have some knowledge about our choices, and we have to decide which one to measure to improve our final decision.
  » The state of knowledge:
    • Frequentist view: the sample mean, sample variance and number of observations for each choice.
    • Bayesian view: the mean and variance (or precision) of our distribution of belief for each choice.
  » For the remainder of our talk, we will adopt a Bayesian view since it allows us to introduce prior knowledge, a common property of learning problems.

Elements of a learning problem
■ Relationships between beliefs and measurements
  » Beliefs
    • Uncorrelated: what we know about one choice tells us nothing about another choice.
    • Correlated: if our belief about one choice is high, our belief about another choice might be higher.
  » Measurement noise
    • Uncorrelated: if we were to make two measurements at the same time, the measurements are independent.
    • Correlated:
      – At a point in time: simultaneous measurements are correlated.
      – Over time: measurements of different choices may or may not be correlated, but measurements of the same choice at different points in time are correlated.

Elements of a learning problem
■ Types of learning problems
  » On-line learning
    • Learn as you earn
    • Example problems:
      – Finding the best path to work
      – What is the best set of energy-saving technologies to use for your building?
      – What is the best medication to control your diabetes?
  » Off-line learning
    • There is a phase of information collection with a finite (sometimes small) budget.
    • You are allowed to make a series of measurements, after which you make an implementation decision.
    • Examples:
      – Finding the best drug compound through laboratory experiments
      – Finding the best manufacturing configuration or engineering design, evaluated using an expensive simulation
      – What is the best combination of designs for hydrogen production, storage and conversion?

Elements of a learning problem
■ Measuring the benefits of knowledge:
  » Minimizing/maximizing a cost or reward
    • Minimizing expected cost/maximizing reward or utility
    • Minimizing expected opportunity cost (minimizing the gap from the best possible)
    • Collecting information to produce a better solution to an optimization problem
  » Making the right choice
    • Maximizing the probability of making the correct selection
    • Indifference zone selection: maximizing the probability of selecting a choice whose performance is within a specified tolerance $\delta$ of the optimal
  » Statistical measures
    • Minimizing a measure (square, absolute value) of the distance between observations and a predictive function (classical estimation)
    • Minimizing a metric (e.g. Kullback-Leibler divergence) measuring the distance between actual and predicted probability distributions
    • Minimizing entropy (or entropic loss)

Outline
■ Measurement policies

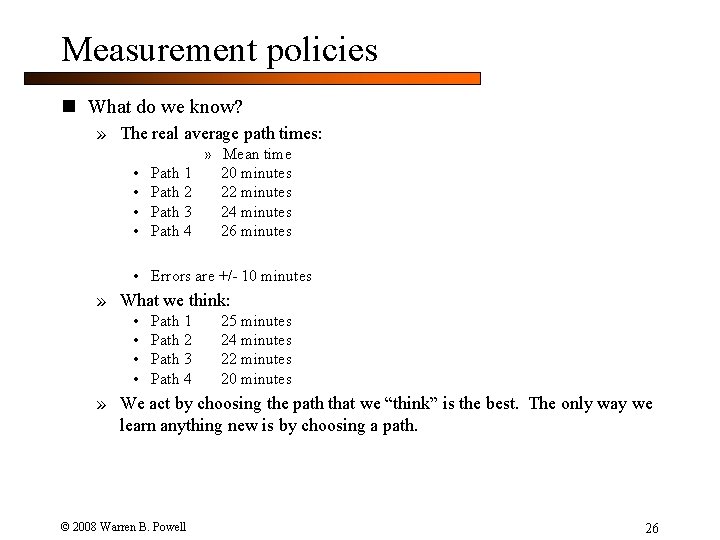

Measurement policies
■ What do we know?
  » The real average path times (mean time):
    • Path 1: 20 minutes
    • Path 2: 22 minutes
    • Path 3: 24 minutes
    • Path 4: 26 minutes
    • Errors are +/- 10 minutes
  » What we think:
    • Path 1: 25 minutes
    • Path 2: 24 minutes
    • Path 3: 22 minutes
    • Path 4: 20 minutes
  » We act by choosing the path that we "think" is the best. The only way we learn anything new is by choosing a path.

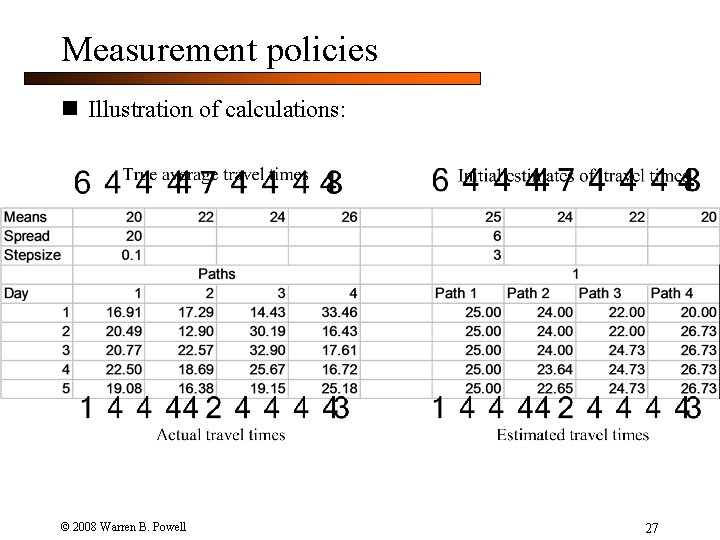

Measurement policies
■ Illustration of calculations:


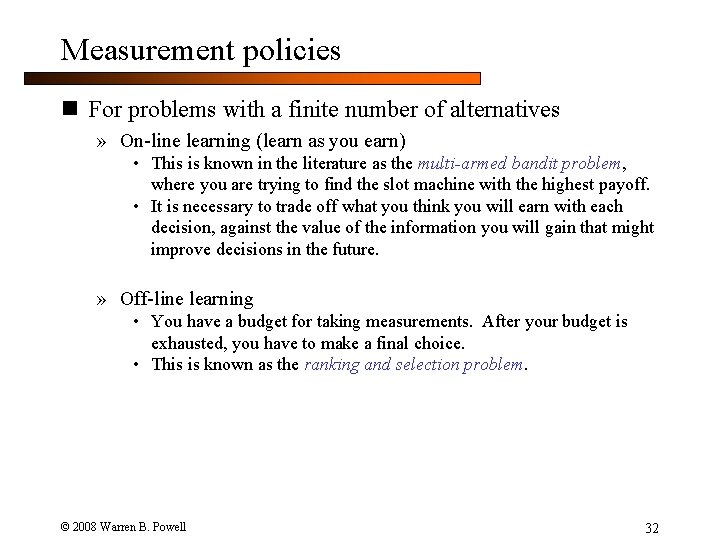

Measurement policies
■ For problems with a finite number of alternatives
  » On-line learning (learn as you earn)
    • This is known in the literature as the multi-armed bandit problem, where you are trying to find the slot machine with the highest payoff.
    • It is necessary to trade off what you think you will earn with each decision against the value of the information you will gain, which might improve decisions in the future.
  » Off-line learning
    • You have a budget for taking measurements. After your budget is exhausted, you have to make a final choice.
    • This is known as the ranking and selection problem.

Measurement policies
■ Elements of a measurement policy:
  » Deterministic or sequential
    • Deterministic policy: you decide what you are going to measure in advance.
    • Sequential policy: future measurements depend on past observations.
  » Designing a measurement policy
    • We have to strike a balance between the value of a good measurement policy and the cost of computing it.
    • If we are drilling oil exploration holes, we might be willing to spend a day on the computer deciding what to do next.
    • We may need a trivial calculation if we are guiding an algorithm that will perform thousands of iterations.
  » Evaluating a policy
    • The goal is to find a policy that gets us close enough to the truth that we make the optimal (or near-optimal) decisions.
    • To do this, we have to assume a truth, and then use a policy to try to guess at the truth.

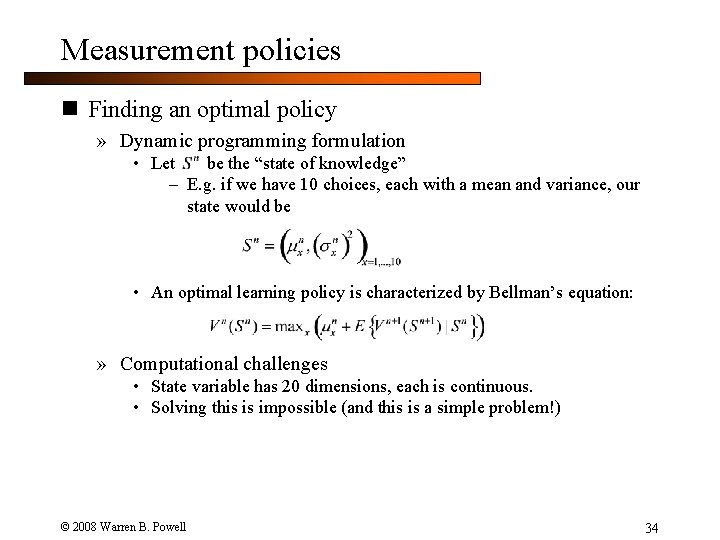

Measurement policies
■ Finding an optimal policy
  » Dynamic programming formulation
    • Let the state variable be the "state of knowledge".
      – E.g. if we have 10 choices, each with a mean and variance, our state would consist of the 10 means and 10 variances.
    • An optimal learning policy is characterized by Bellman's equation.
  » Computational challenges
    • The state variable has 20 dimensions, each of which is continuous.
    • Solving this is impossible (and this is a simple problem!)
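As a sketch of the recursion referred to above, Bellman's equation for the off-line problem might be written as follows. The notation is an assumption, since the slide's own symbols are not shown: $S^n$ is the knowledge state after $n$ measurements, $\theta^n_x$ and $\sigma^{2,n}_x$ are the mean and variance of our belief about choice $x$, and $N$ is the measurement budget.

```latex
S^n = \left(\theta^n_x, \sigma^{2,n}_x\right)_{x=1}^{10}, \qquad
V^N(S^N) = \max_x \theta^N_x, \qquad
V^n(S^n) = \max_x \mathbb{E}\left[ V^{n+1}(S^{n+1}) \mid S^n, x^n = x \right], \quad n < N.
```

The expectation is over the outcome of measuring $x$, which determines the next knowledge state. With 10 choices the value function has 20 continuous arguments, which is why direct solution is out of reach.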

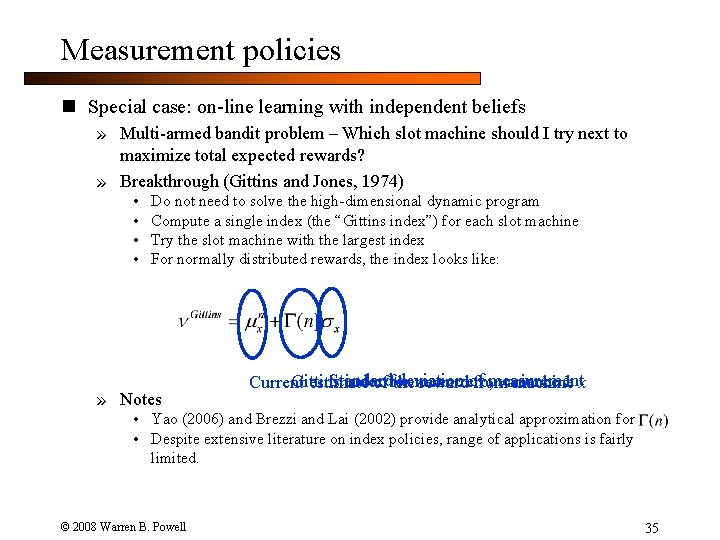

Measurement policies
■ Special case: on-line learning with independent beliefs
  » Multi-armed bandit problem: which slot machine should I try next to maximize total expected rewards?
  » Breakthrough (Gittins and Jones, 1974)
    • We do not need to solve the high-dimensional dynamic program.
    • Compute a single index (the "Gittins index") for each slot machine.
    • Try the slot machine with the largest index.
    • For normally distributed rewards, the index is the current estimate of the reward from machine x, plus the standard deviation of a measurement times the Gittins index for a problem with mean zero and variance 1.
  » Notes
    • Yao (2006) and Brezzi and Lai (2002) provide analytical approximations for the Gittins index.
    • Despite the extensive literature on index policies, the range of applications is fairly limited.

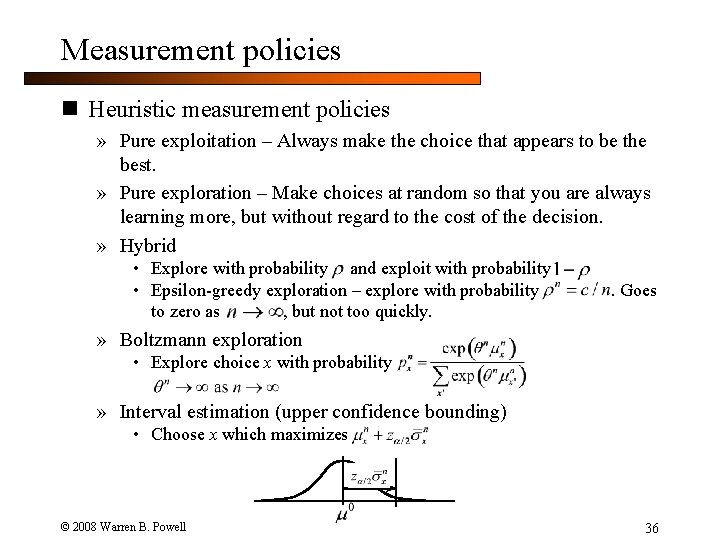

Measurement policies
■ Heuristic measurement policies
  » Pure exploitation: always make the choice that appears to be the best.
  » Pure exploration: make choices at random so that you are always learning more, but without regard to the cost of the decision.
  » Hybrid
    • Explore with probability $\rho$ and exploit with probability $1-\rho$.
    • Epsilon-greedy exploration: explore with a probability that goes to zero as the number of measurements grows, but not too quickly.
  » Boltzmann exploration
    • Explore choice x with probability proportional to the exponential of its (scaled) estimated value.
  » Interval estimation (upper confidence bounding)
    • Choose the x which maximizes the estimated mean plus a multiple of its standard deviation.
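The heuristic policies listed above can be sketched as short functions. This is an illustrative sketch only: the function names and the tuning parameters `epsilon`, `rho` and `z_alpha` are assumptions, and the exact functional forms used in the talk are not shown on the slide.

```python
import math
import random


def epsilon_greedy(theta, epsilon, rng):
    """Explore uniformly with probability epsilon, otherwise exploit."""
    if rng.random() < epsilon:
        return rng.randrange(len(theta))
    return max(range(len(theta)), key=lambda x: theta[x])


def boltzmann_probs(theta, rho):
    """Probability of trying each choice, proportional to exp(rho * theta[x])."""
    top = max(theta)  # subtract the max for numerical stability
    weights = [math.exp(rho * (t - top)) for t in theta]
    total = sum(weights)
    return [w / total for w in weights]


def interval_estimation(theta, sigma, z_alpha):
    """Choose the x with the largest upper bound theta[x] + z_alpha * sigma[x]."""
    return max(range(len(theta)), key=lambda x: theta[x] + z_alpha * sigma[x])


# Hypothetical beliefs about three choices.
theta = [1.0, 0.5, 0.8]
sigma = [0.1, 1.0, 0.2]
rng = random.Random(0)
choice_eg = epsilon_greedy(theta, 0.1, rng)
probs = boltzmann_probs(theta, rho=2.0)
choice_ie = interval_estimation(theta, sigma, z_alpha=2.0)
```

Setting `epsilon` to 0 gives pure exploitation and setting it to 1 gives pure exploration. Interval estimation favors the second choice here because its uncertainty is large, even though its estimated mean is the lowest.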

Measurement policies
■ Approximate policies for off-line learning
  » Optimal computing budget allocation, OCBA (Chen et al.)
    • Formulates the problem of allocating a set of observations as an optimization problem subject to a budget constraint.
  » LL(S): batch linear loss (Chick et al.)
  » Maximizing the expected value of a single measurement
    • (R1, …, R1) policy, Gupta and Miescke (1996)
    • EVI (Chick, Branke and Schmidt, under review)
    • "Knowledge gradient" (Frazier and Powell, 2008)

Measurement policies
■ Evaluating measurement policies
  » How do we compare one measurement policy to another?
  » One possibility: score a policy by the estimated value of the option it ends up choosing ... but we would be wrong!

Measurement policies
■ Illustration
  » Setup:
    • Option 1 is worth 15.
    • The remaining 999 options are worth 10.
    • The standard deviation of a measurement is 5.
  » Policy 1:
    • Measure each option 10 times.
  » Policy 2:
    • Measure the remaining 999 options once.
    • Measure the first option 9,001 times.
  » Which measurement policy produces the best result?

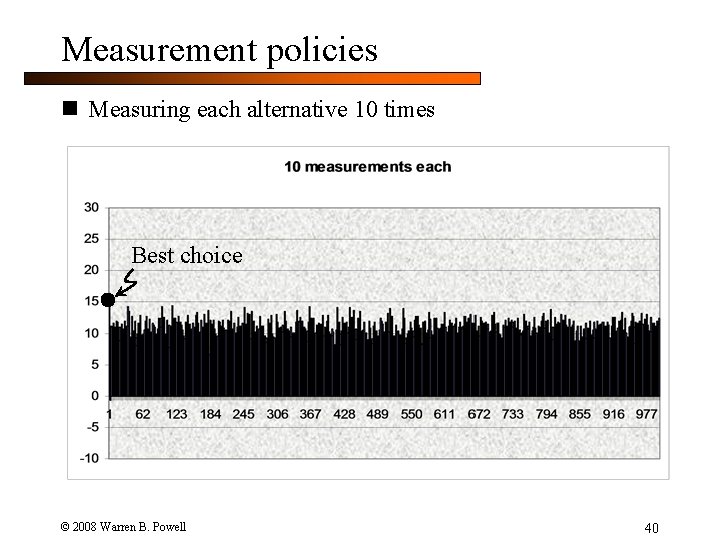

Measurement policies
■ Measuring each alternative 10 times
[Figure: estimates for each option, with the best choice marked]

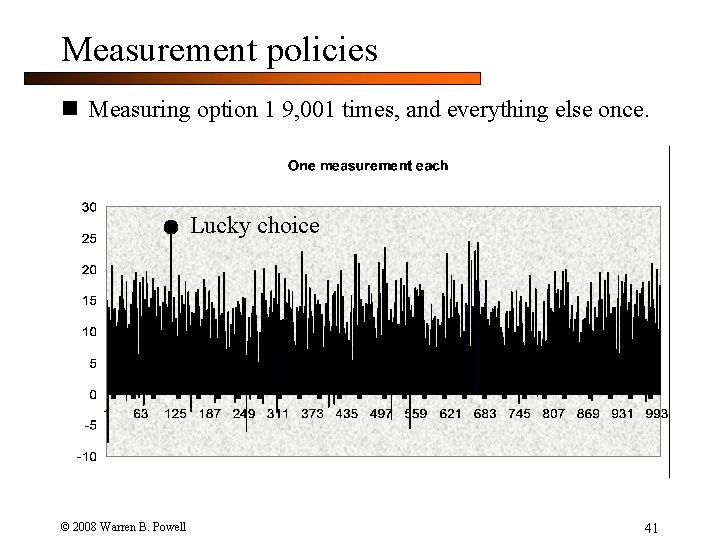

Measurement policies
■ Measuring option 1 9,001 times, and everything else once
[Figure: estimates for each option, with a "lucky choice" marked]

Measurement policies
■ What did we find?
  » Although option 1 is best, we will almost always identify some other option as being better, just through randomness. This method rewards collecting too little information.
■ A better way:
  » Assume a truth for each x. We do this by choosing a sample realization of a truth from a prior probability distribution for the mean.
  » Given this truth, apply the policy to produce statistical estimates for each x, and let the best solution be the x with the highest estimate. Repeat this n times and evaluate the policy using the average true value of the solutions it chooses.
  » Note: This must be done with realistic (but not real) data.
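The evaluation procedure described above (sample a truth from the prior, run the policy, score the final choice against the truth) can be sketched as a small simulation. This is a minimal sketch under stated assumptions: the function names, the policy signature and the use of simple sample means as estimates are illustrative choices, not the talk's implementation.

```python
import random


def evaluate_policy(policy, prior_mean, prior_std, noise_std, budget, n_reps, seed=0):
    """Average TRUE value of the option a policy selects, over sampled truths."""
    rng = random.Random(seed)
    m = len(prior_mean)
    total = 0.0
    for _ in range(n_reps):
        # Assume a truth: one sample realization from the prior for each option.
        truth = [rng.gauss(prior_mean[x], prior_std[x]) for x in range(m)]
        sums, counts = [0.0] * m, [0] * m
        for n in range(budget):
            x = policy(n, sums, counts, rng)
            sums[x] += rng.gauss(truth[x], noise_std)  # noisy measurement
            counts[x] += 1
        # Estimate: sample mean, falling back on the prior mean if unmeasured.
        est = [sums[x] / counts[x] if counts[x] else prior_mean[x] for x in range(m)]
        chosen = max(range(m), key=lambda x: est[x])
        total += truth[chosen]  # score with the truth, not the estimate
    return total / n_reps


def equal_allocation(n, sums, counts, rng):
    """Cycle through the options so each is measured equally often."""
    return n % len(counts)


def pure_exploitation(n, sums, counts, rng):
    """Measure the option with the best current sample mean (0 if unmeasured)."""
    return max(range(len(counts)),
               key=lambda x: sums[x] / counts[x] if counts[x] else 0.0)


value_equal = evaluate_policy(equal_allocation, [0.0] * 5, [1.0] * 5, 2.0, 20, 200)
value_exploit = evaluate_policy(pure_exploitation, [0.0] * 5, [1.0] * 5, 2.0, 20, 200)
```

Scoring with `truth[chosen]` rather than the estimate is the point of the exercise: a policy that measures little can produce inflated estimates, but it cannot inflate the true value of what it selects.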

Outline
■ The knowledge gradient policy

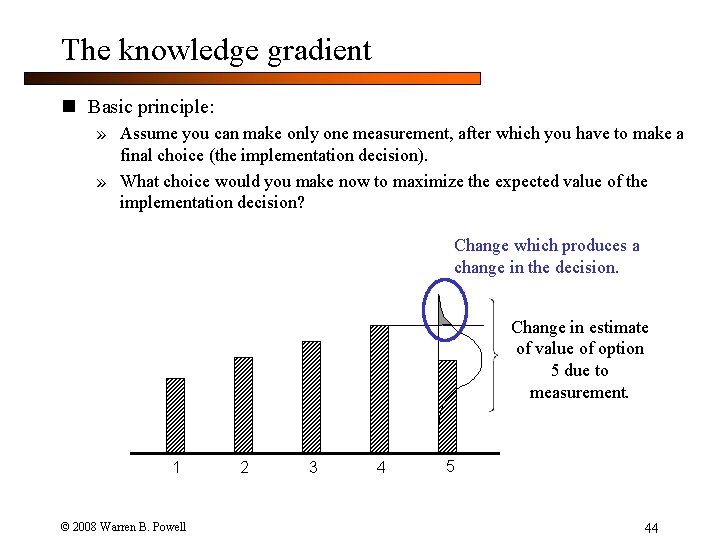

The knowledge gradient
■ Basic principle:
  » Assume you can make only one measurement, after which you have to make a final choice (the implementation decision).
  » What choice would you make now to maximize the expected value of the implementation decision?
[Figure: beliefs about choices 1 to 5, showing the change in the estimate of the value of option 5 due to a measurement, and the change which produces a change in the decision]

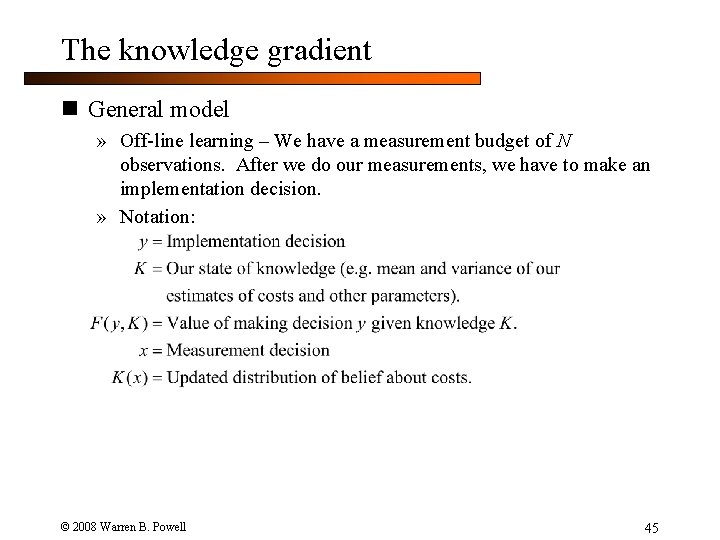

The knowledge gradient
■ General model
  » Off-line learning: we have a measurement budget of N observations. After we do our measurements, we have to make an implementation decision.
  » Notation:

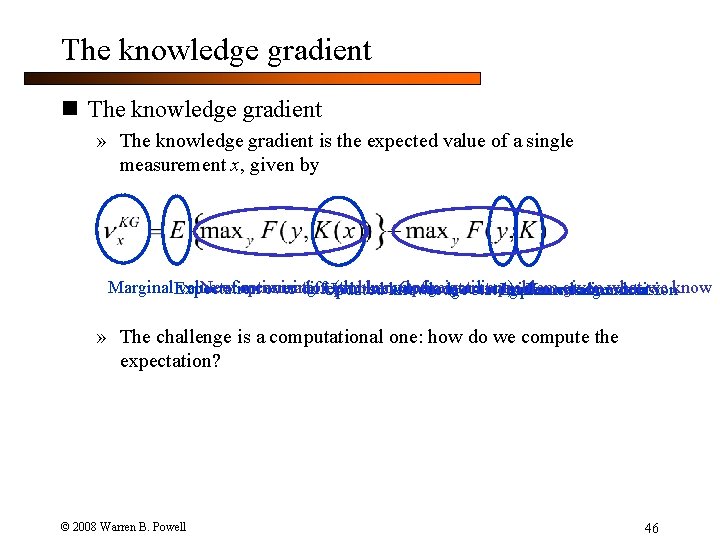

The knowledge gradient
■ The knowledge gradient
  » The knowledge gradient is the expected value of a single measurement x, given by
    $\nu^{KG}_x = \mathbb{E}\left[\max_y F(y, K(x))\right] - \max_y F(y, K)$
    • $\nu^{KG}_x$: marginal value of measuring x (the knowledge gradient)
    • $\mathbb{E}$: expectation over different measurement outcomes
    • $\max_y F(y, K(x))$: new optimization problem, where y is the implementation decision and K(x) is the updated knowledge state given measurement x
    • $\max_y F(y, K)$: optimization problem given what we know, where K is the current knowledge state
  » The challenge is a computational one: how do we compute the expectation?

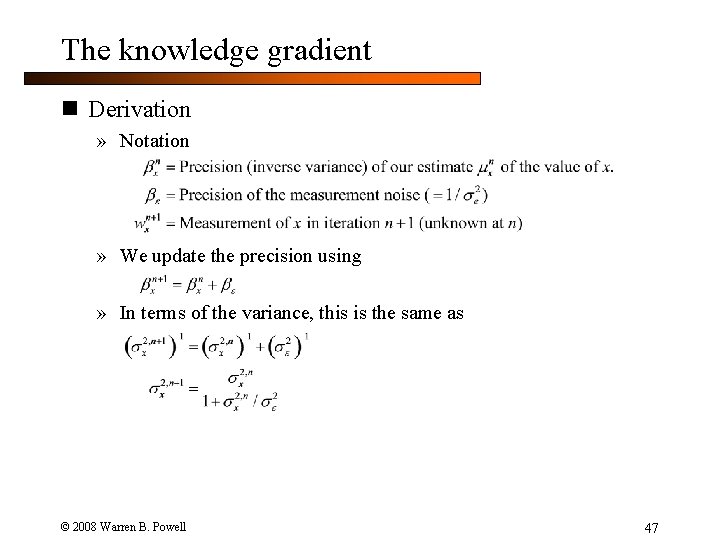

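The knowledge gradient
■ Derivation
  » Notation: $\sigma^{2,n}_x$ is the variance of our belief about choice x after n measurements, $\beta^n_x = 1/\sigma^{2,n}_x$ is its precision, and $\beta^W = 1/\sigma^2_W$ is the precision of a measurement.
  » We update the precision using
    $\beta^{n+1}_x = \beta^n_x + \beta^W$
  » In terms of the variance, this is the same as
    $\sigma^{2,n+1}_x = \left[ (\sigma^{2,n}_x)^{-1} + (\sigma^2_W)^{-1} \right]^{-1}$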

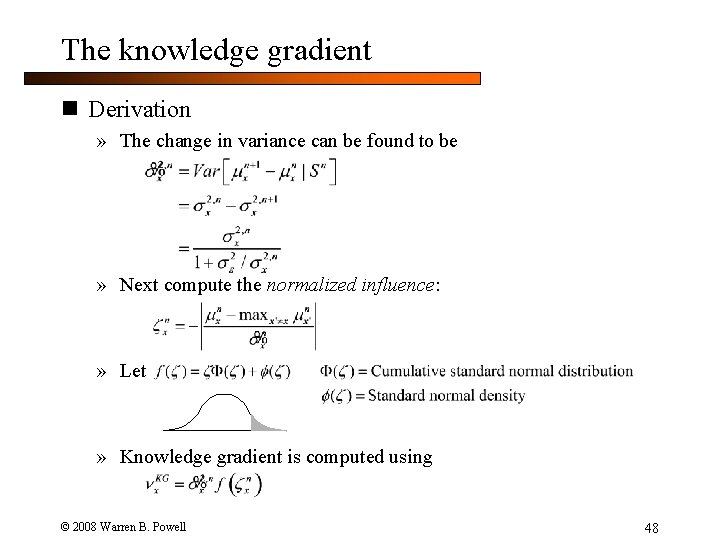

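The knowledge gradient
■ Derivation
  » The change in variance from measuring x can be found to be
    $\tilde{\sigma}^{2,n}_x = \sigma^{2,n}_x - \sigma^{2,n+1}_x$
  » Next compute the normalized influence, where $\theta^n_x$ is our current estimate of the value of x:
    $\zeta^n_x = -\left| \dfrac{\theta^n_x - \max_{x' \neq x} \theta^n_{x'}}{\tilde{\sigma}^n_x} \right|$
  » Let
    $f(\zeta) = \zeta \Phi(\zeta) + \varphi(\zeta)$, where $\Phi$ is the standard normal cdf and $\varphi$ is the standard normal pdf.
  » The knowledge gradient is computed using
    $\nu^{KG,n}_x = \tilde{\sigma}^n_x f(\zeta^n_x)$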
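The derivation above translates almost line for line into code. The sketch below assumes independent normal beliefs and a common, known measurement variance; the function and variable names are illustrative, not taken from the talk. It uses the standard form of the knowledge gradient for this case: the standard deviation of the change in the estimate, times $f(\zeta) = \zeta\Phi(\zeta) + \varphi(\zeta)$.

```python
import math


def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def knowledge_gradient(theta, sigma2, noise_var):
    """Knowledge gradient of each choice for independent normal beliefs.

    theta[x]  : current estimate of the value of choice x
    sigma2[x] : current variance of that belief (must be positive)
    noise_var : variance of a single measurement (assumed known)
    Requires at least two choices.
    """
    kg = []
    for x in range(len(theta)):
        # Variance after one more measurement of x (precisions add).
        sigma2_next = 1.0 / (1.0 / sigma2[x] + 1.0 / noise_var)
        # Standard deviation of the change in the estimate of x.
        sigma_tilde = math.sqrt(sigma2[x] - sigma2_next)
        best_other = max(t for i, t in enumerate(theta) if i != x)
        # Normalized influence: distance to the best competitor, in units of sigma_tilde.
        zeta = -abs(theta[x] - best_other) / sigma_tilde
        kg.append(sigma_tilde * (zeta * normal_cdf(zeta) + normal_pdf(zeta)))
    return kg


# Hypothetical beliefs about five choices.
theta = [20.0, 22.0, 24.0, 26.0, 25.0]
sigma2 = [16.0, 16.0, 4.0, 1.0, 9.0]
kg = knowledge_gradient(theta, sigma2, noise_var=25.0)
next_measurement = max(range(len(kg)), key=lambda x: kg[x])
```

The knowledge gradient policy measures the choice with the largest value in `kg`. A choice scores well when it is both uncertain and close to the current best, which is the trade-off the normalized influence captures.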

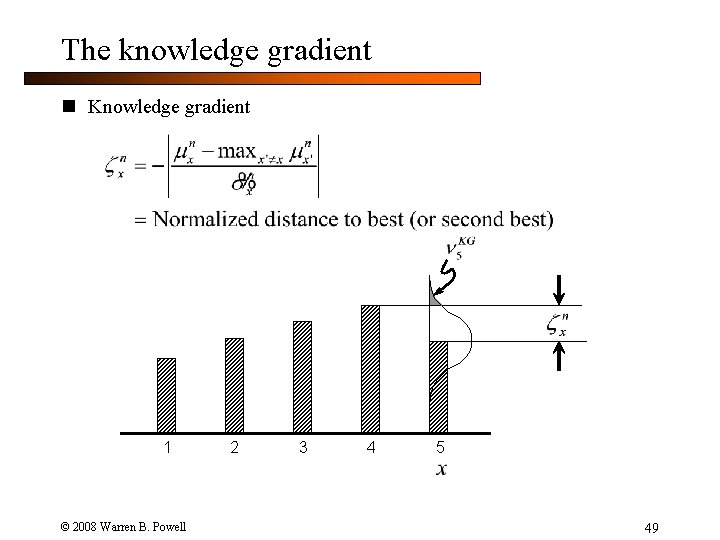

The knowledge gradient
[Figure: knowledge gradient for each of choices 1 to 5]

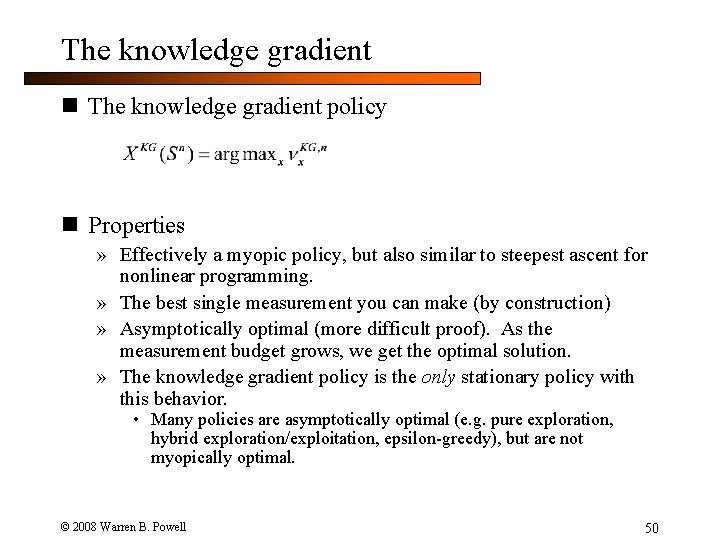

The knowledge gradient
■ The knowledge gradient policy
■ Properties
  » Effectively a myopic policy, but also similar to steepest ascent for nonlinear programming.
  » The best single measurement you can make (by construction).
  » Asymptotically optimal (more difficult proof). As the measurement budget grows, we get the optimal solution.
  » The knowledge gradient policy is the only stationary policy with this behavior.
    • Many policies are asymptotically optimal (e.g. pure exploration, hybrid exploration/exploitation, epsilon-greedy), but are not myopically optimal.

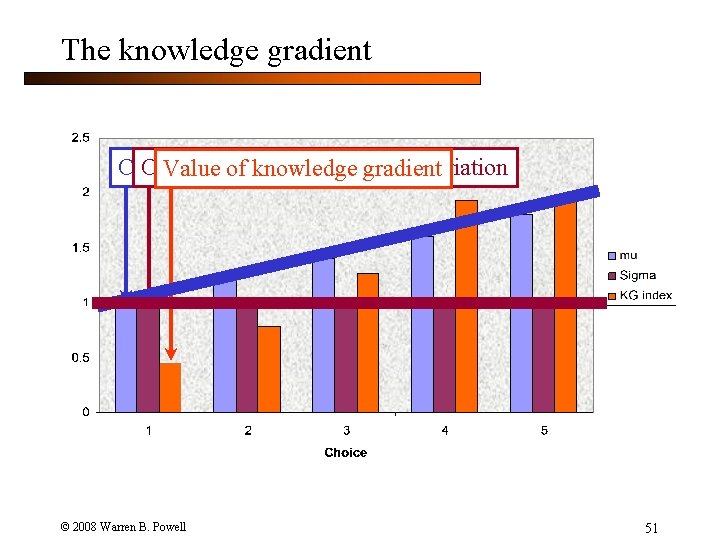

The knowledge gradient
[Figure: current estimate of the value of a decision, current estimate of the standard deviation, and the value of the knowledge gradient]

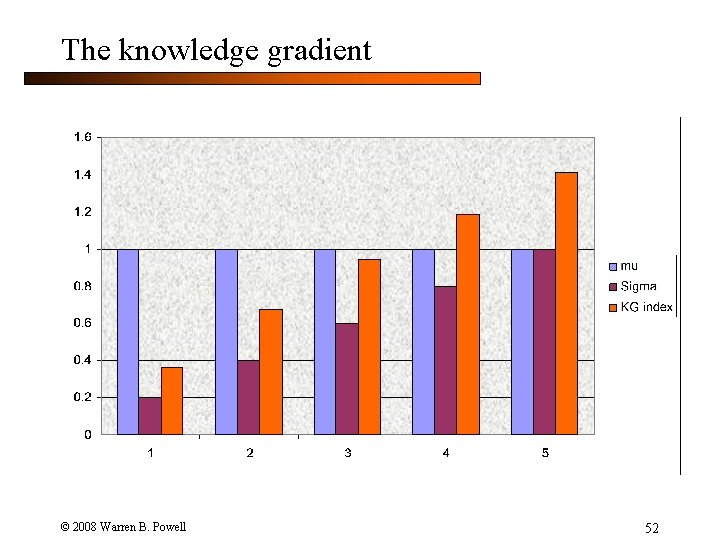


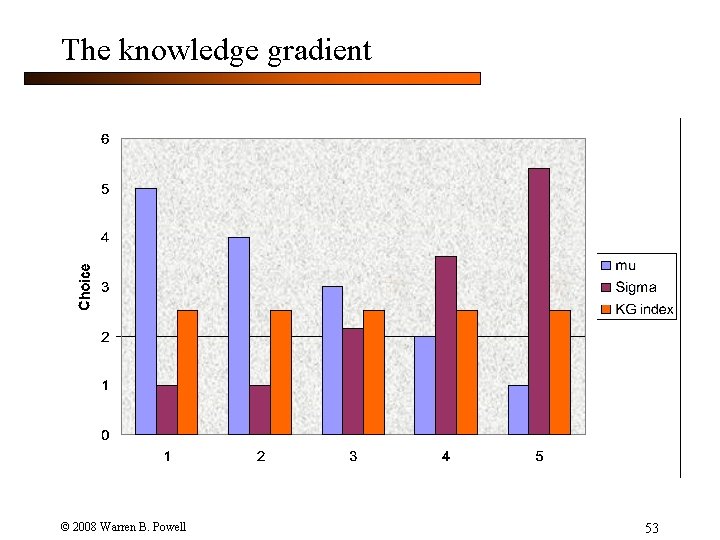


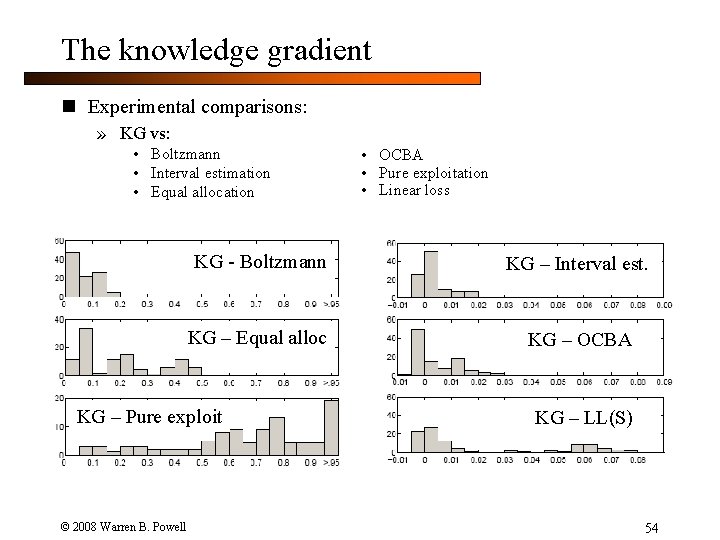

The knowledge gradient
■ Experimental comparisons:
  » KG vs.:
    • Boltzmann
    • Interval estimation
    • Equal allocation
    • OCBA
    • Pure exploitation
    • Linear loss, LL(S)
[Figure: six panels titled KG - Boltzmann, KG - Interval est., KG - Equal alloc, KG - OCBA, KG - Pure exploit, KG - LL(S)]

The knowledge gradient
■ Notes:
  » KG slightly outperforms Interval Estimation (IE), OCBA, and LL(S), and is easier to compute than OCBA and LL(S).
  » KG is fairly easy to compute for independent, normally distributed rewards.
  » But KG is a general concept which generalizes to other important problem classes:
    • On-line applications
    • Correlated beliefs
    • Correlated measurements (e.g. Common Random Numbers)
    • ... more general optimization problems

Outline
■ The knowledge gradient for on-line applications
  » Research by Ilya Ryzhov, Ph.D. candidate, Princeton University

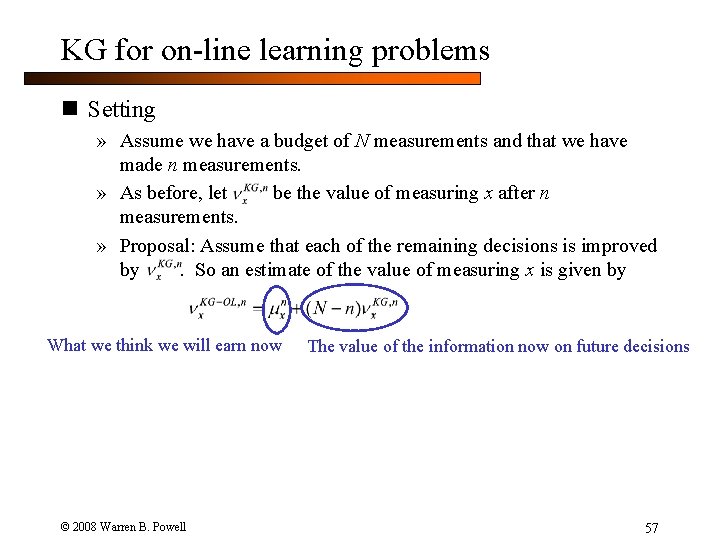

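KG for on-line learning problems
■ Setting
  » Assume we have a budget of N measurements and that we have made n measurements.
  » As before, let $\nu^{KG,n}_x$ be the value of measuring x after n measurements, and let $\theta^n_x$ be our current estimate of the reward from x.
  » Proposal: assume that each of the remaining decisions is improved by $\nu^{KG,n}_x$. So an estimate of the value of measuring x is given by
    $\theta^n_x + (N - n)\,\nu^{KG,n}_x$
    • $\theta^n_x$: what we think we will earn now
    • $(N - n)\,\nu^{KG,n}_x$: the value of the information now on future decisions
  » For infinite horizon problems with discount factor $\gamma$:
    $\theta^n_x + \dfrac{\gamma}{1 - \gamma}\,\nu^{KG,n}_x$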
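The on-line proposal can be sketched as a scoring rule that adds the immediate estimate to the knowledge gradient scaled by the number of decisions still to come. The names below, and the discounted form with factor `gamma` / (1 - `gamma`), are assumptions made for illustration; the knowledge gradient values are taken as given inputs.

```python
def online_kg_choice(theta, kg, n, horizon):
    """Pick x maximizing theta[x] + (horizon - n) * kg[x] (finite horizon)."""
    remaining = horizon - n
    return max(range(len(theta)), key=lambda x: theta[x] + remaining * kg[x])


def online_kg_choice_discounted(theta, kg, gamma):
    """Infinite-horizon variant: weight the knowledge gradient by gamma / (1 - gamma)."""
    weight = gamma / (1.0 - gamma)
    return max(range(len(theta)), key=lambda x: theta[x] + weight * kg[x])


# Hypothetical estimates and knowledge gradients for two choices.
theta = [1.0, 0.9]
kg = [0.0, 0.05]
early = online_kg_choice(theta, kg, n=0, horizon=10)  # many decisions left
late = online_kg_choice(theta, kg, n=9, horizon=10)   # one decision left
```

With many decisions remaining, the rule tries the second choice because the information pays off repeatedly. Near the end of the horizon it reverts to the choice with the best current estimate.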

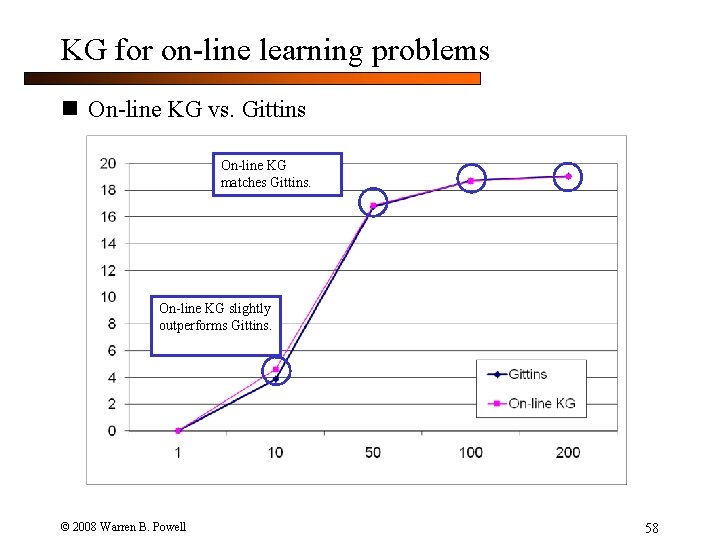

KG for on-line learning problems
■ On-line KG vs. Gittins
[Figure: two panels, one where on-line KG matches Gittins and one where on-line KG slightly outperforms Gittins]

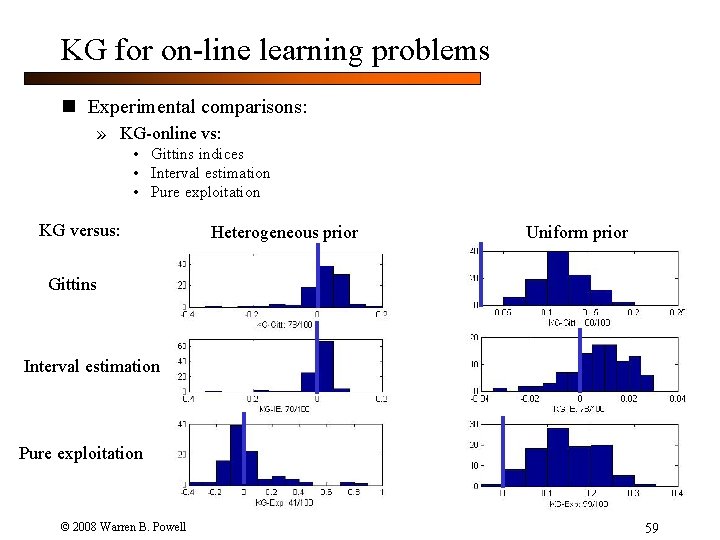

KG for on-line learning problems
■ Experimental comparisons:
  » KG-online vs.:
    • Gittins indices
    • Interval estimation
    • Pure exploitation
[Table: KG versus Gittins, interval estimation and pure exploitation, under a heterogeneous prior and a uniform prior]

Outline
■ The knowledge gradient with correlated beliefs
  » Presented by Peter Frazier, Ph.D. candidate, Princeton University

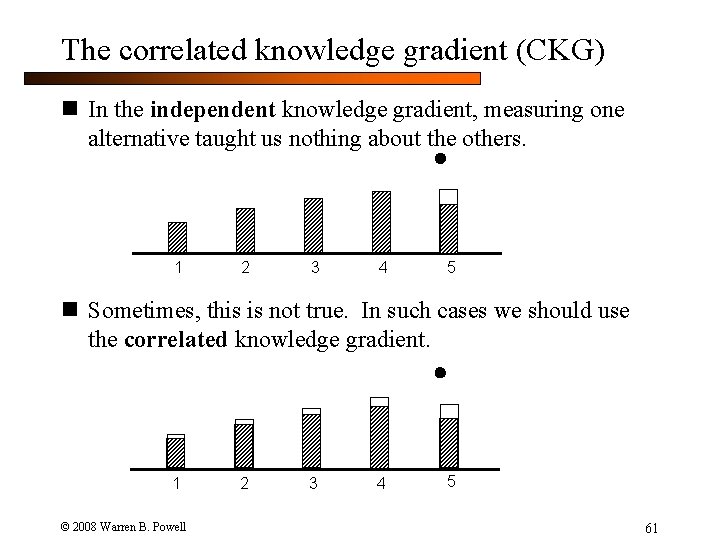

The correlated knowledge gradient (CKG)
■ In the independent knowledge gradient, measuring one alternative taught us nothing about the others.
■ Sometimes, this is not true. In such cases we should use the correlated knowledge gradient.
[Figure: beliefs about choices 1 to 5 under independent and correlated updating]

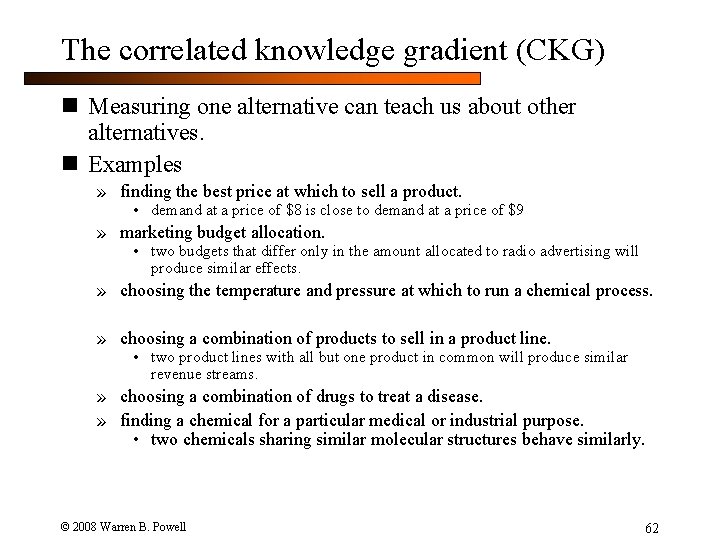

The correlated knowledge gradient (CKG)
■ Measuring one alternative can teach us about other alternatives.
■ Examples
  » Finding the best price at which to sell a product.
    • Demand at a price of $8 is close to demand at a price of $9.
  » Marketing budget allocation.
    • Two budgets that differ only in the amount allocated to radio advertising will produce similar effects.
  » Choosing the temperature and pressure at which to run a chemical process.
  » Choosing a combination of products to sell in a product line.
    • Two product lines with all but one product in common will produce similar revenue streams.
  » Choosing a combination of drugs to treat a disease.
  » Finding a chemical for a particular medical or industrial purpose.
    • Two chemicals sharing similar molecular structures behave similarly.

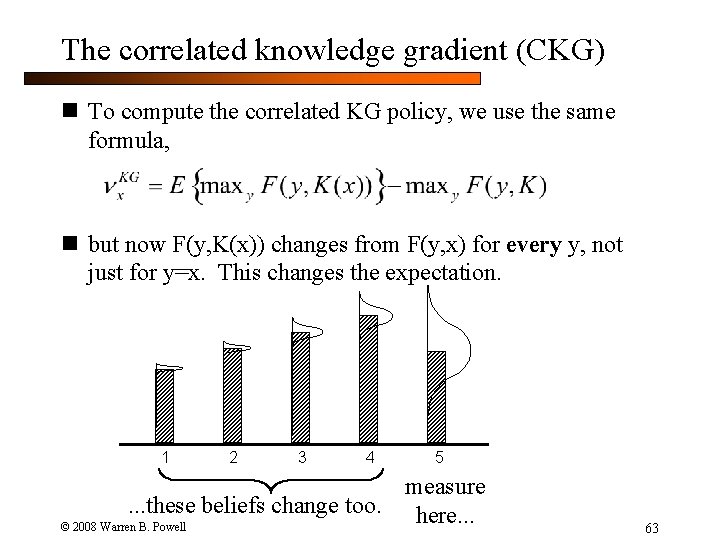

The correlated knowledge gradient (CKG)
■ To compute the correlated KG policy, we use the same formula,
■ but now F(y, K(x)) changes from F(y, K) for every y, not just for y = x. This changes the expectation.
[Figure: beliefs about choices 1 to 5; when we measure one choice, the beliefs about the other choices change too]

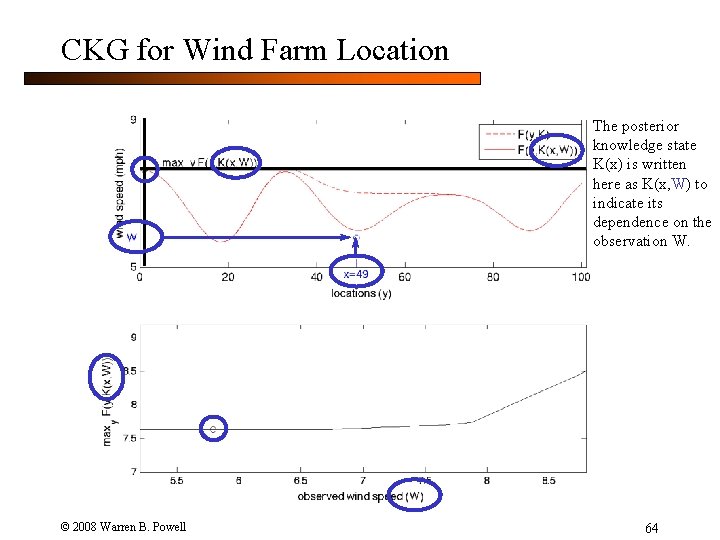

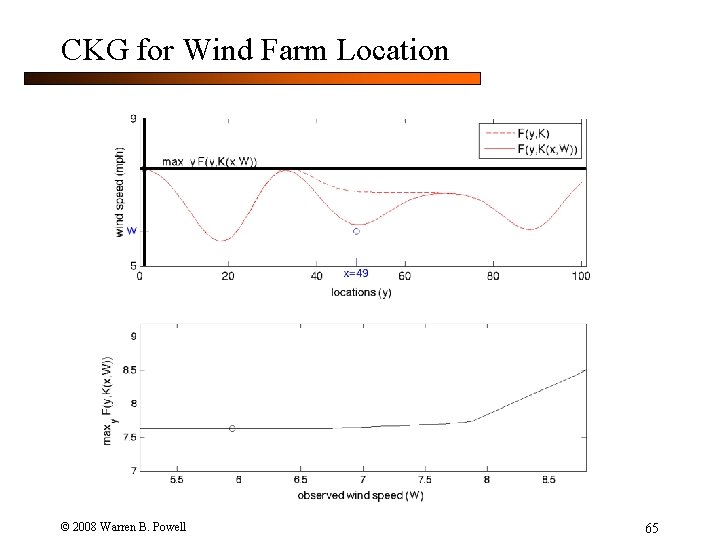

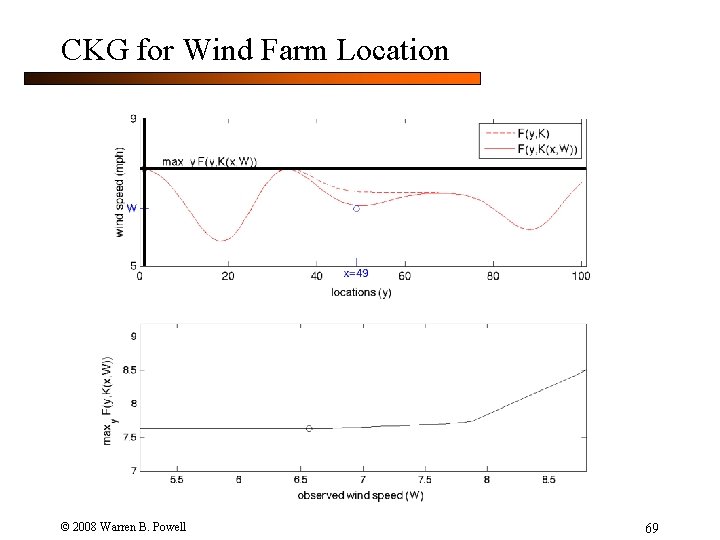

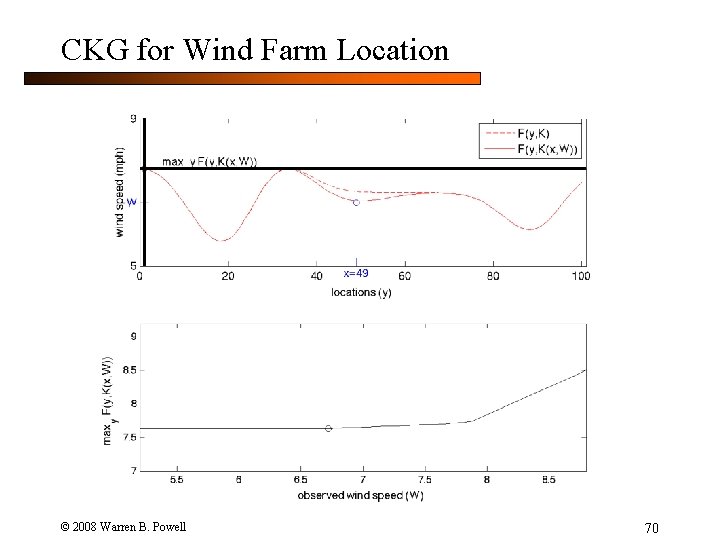

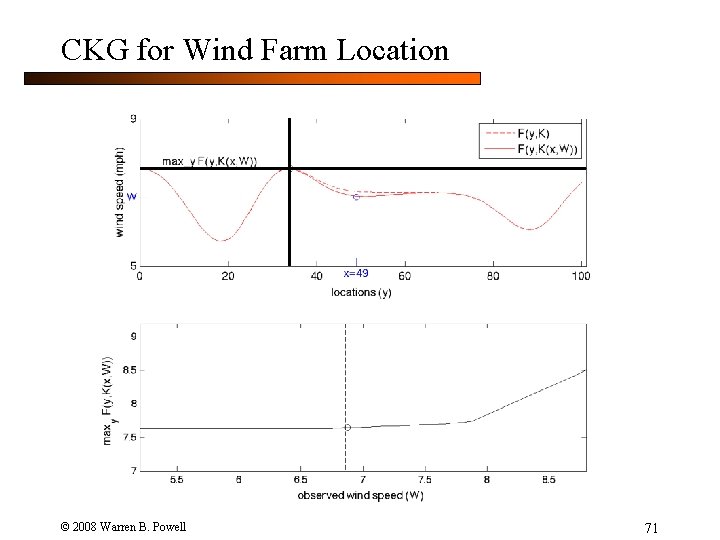

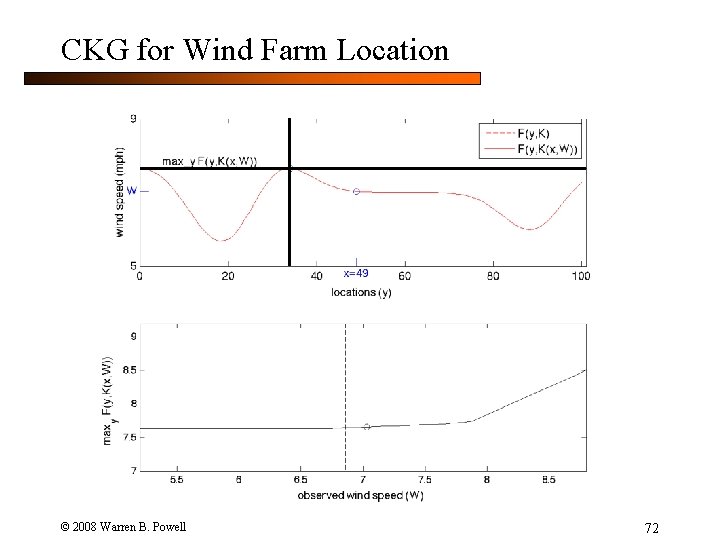

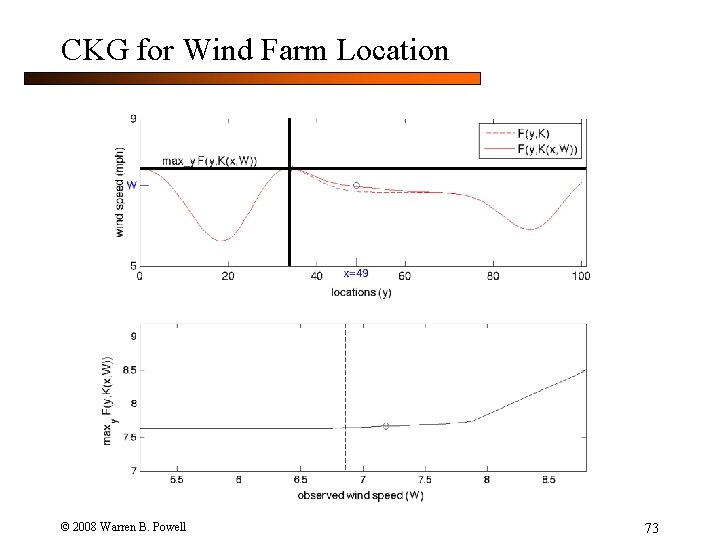

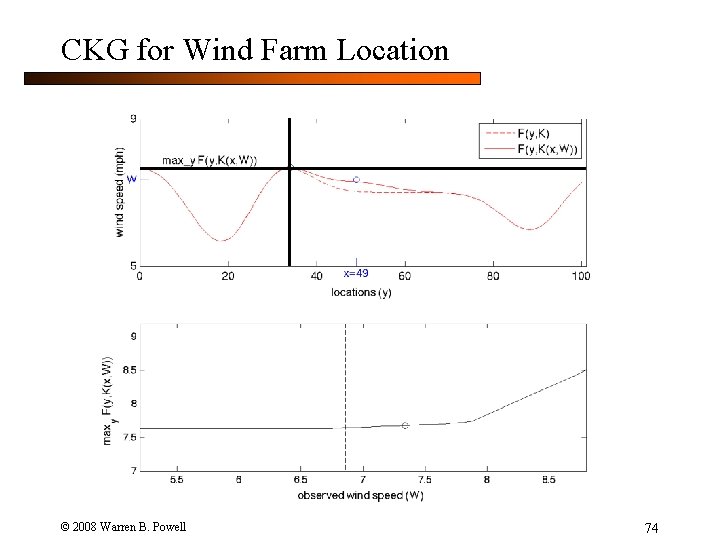

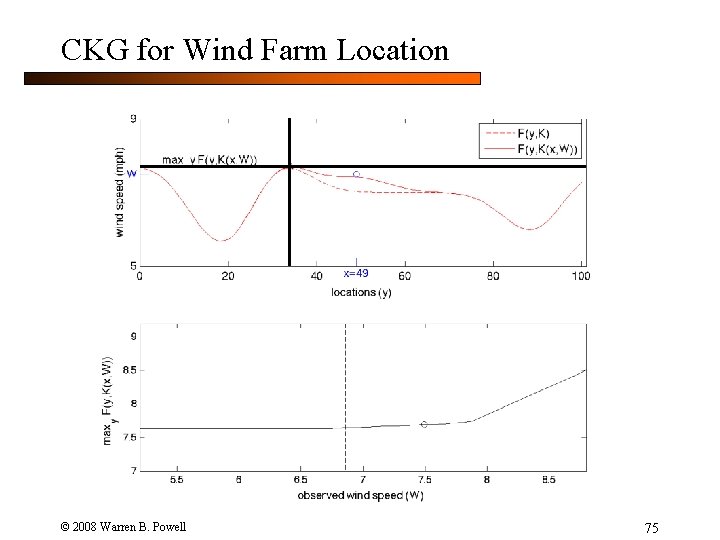

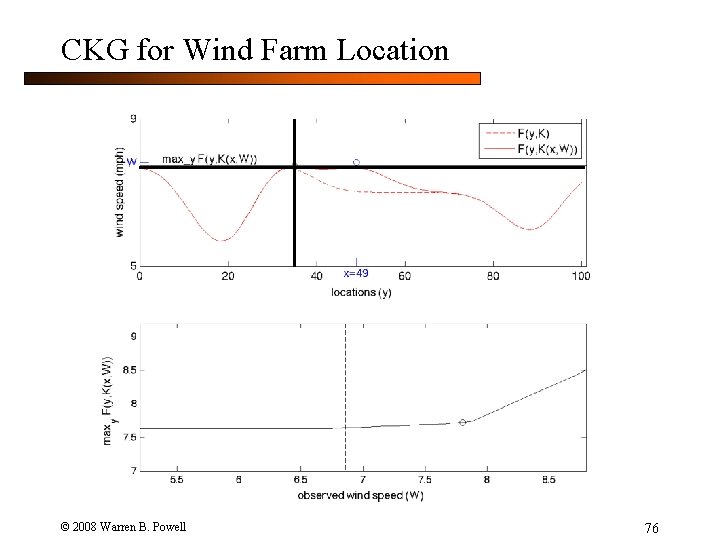

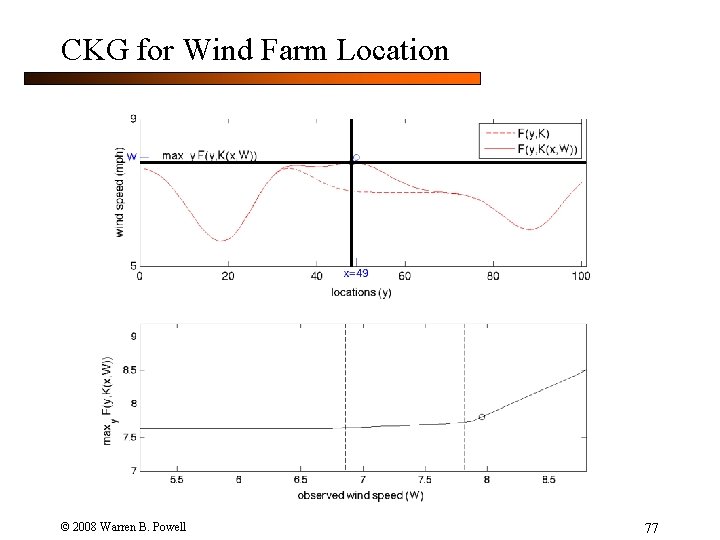

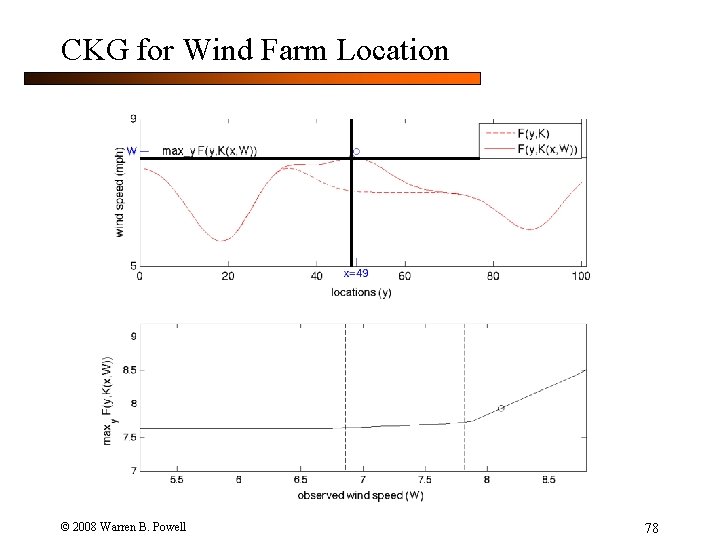

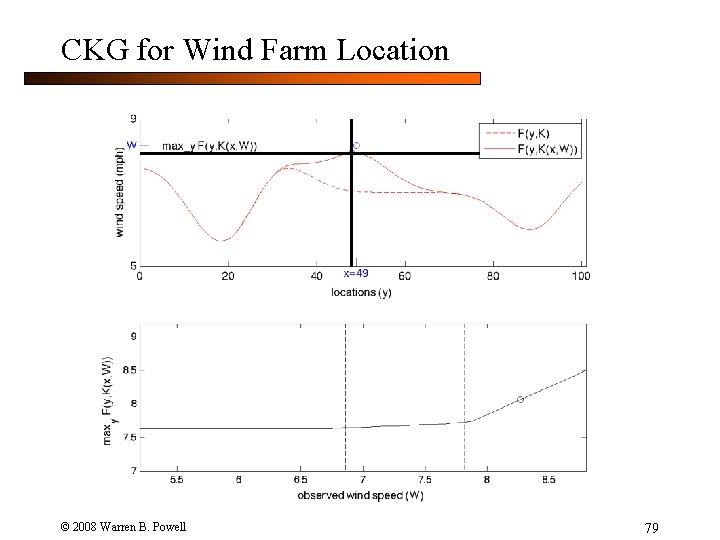

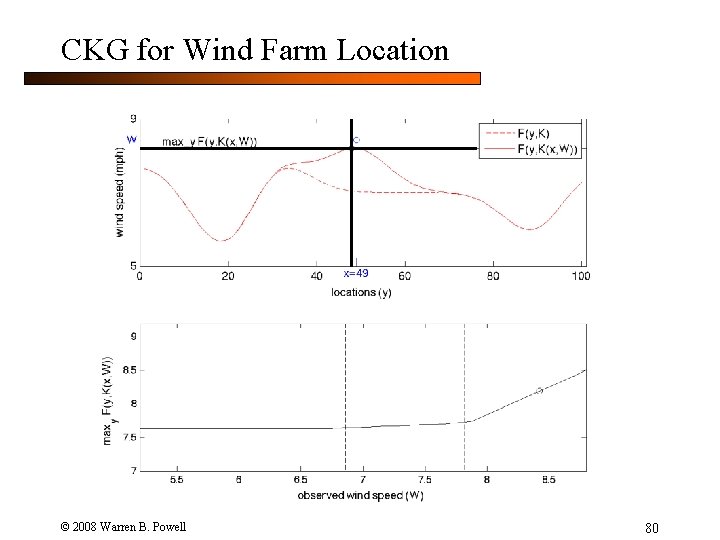

CKG for Wind Farm Location
The posterior knowledge state K(x) is written here as K(x, W) to indicate its dependence on the observation W.


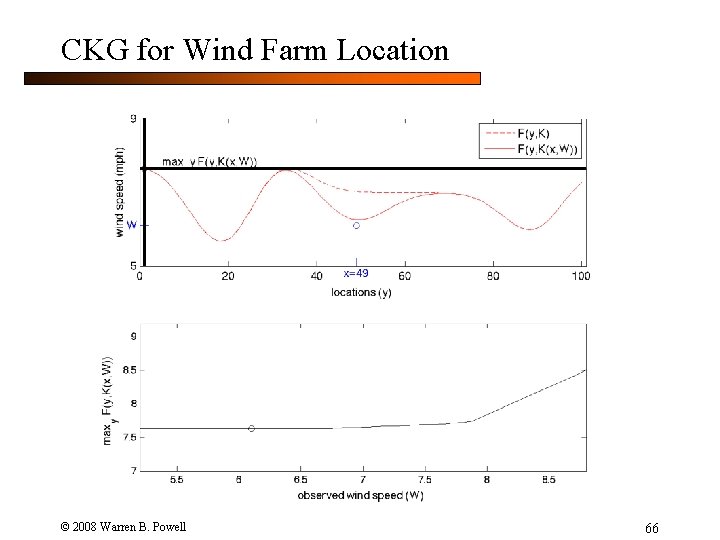


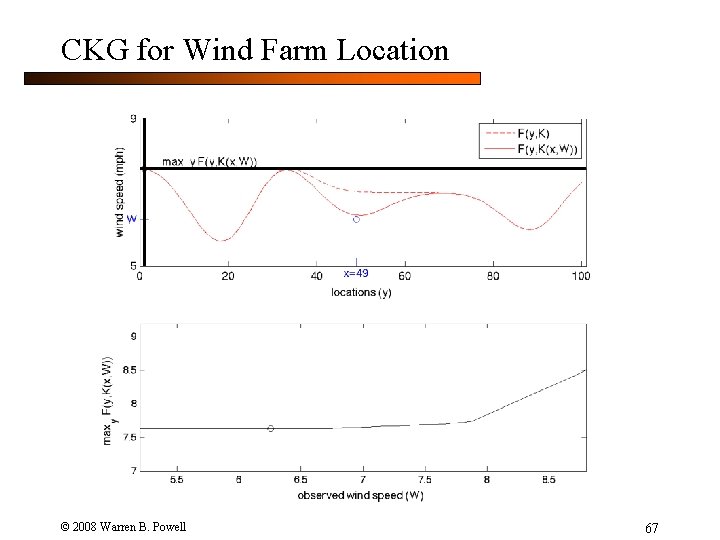


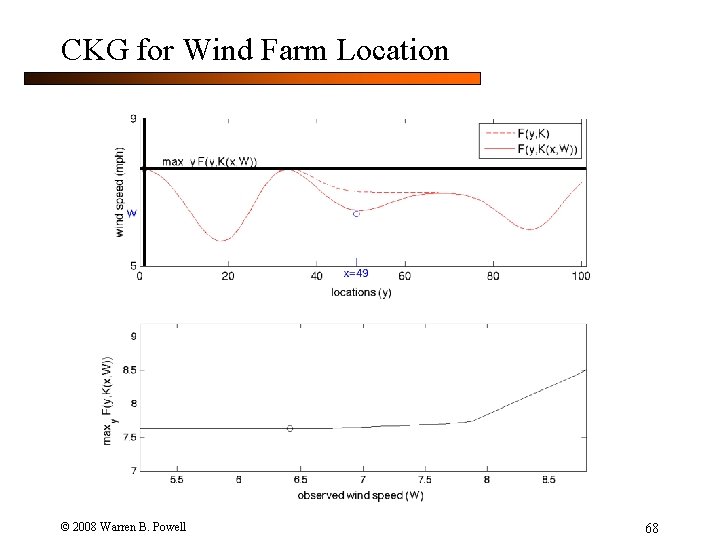


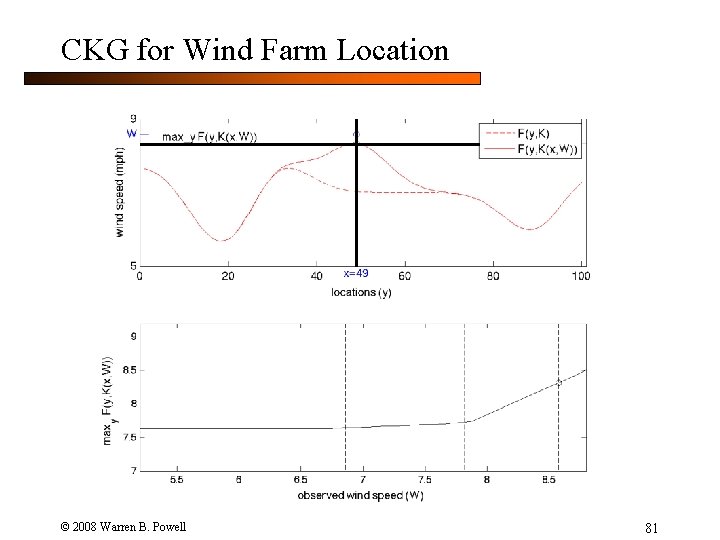


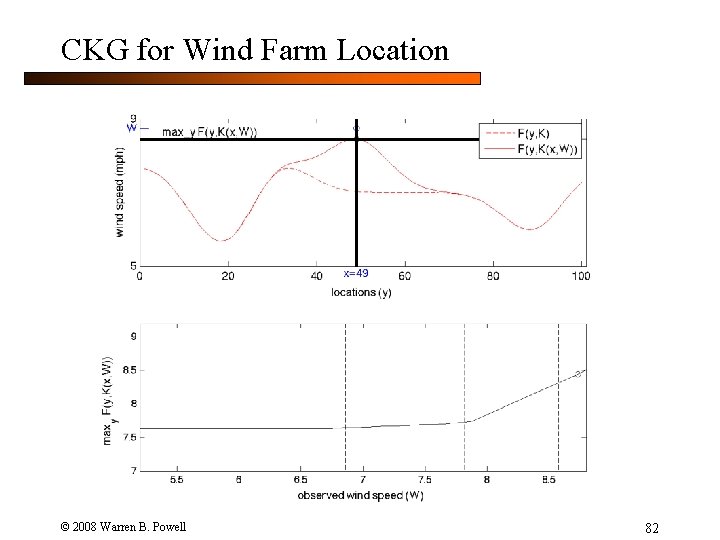


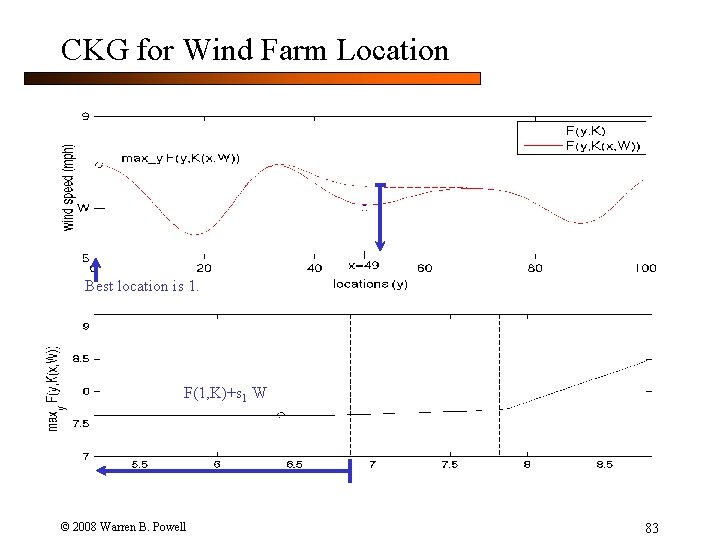

CKG for Wind Farm Location
Best location is 1, with value $F(1, K) + \sigma_1 W$.

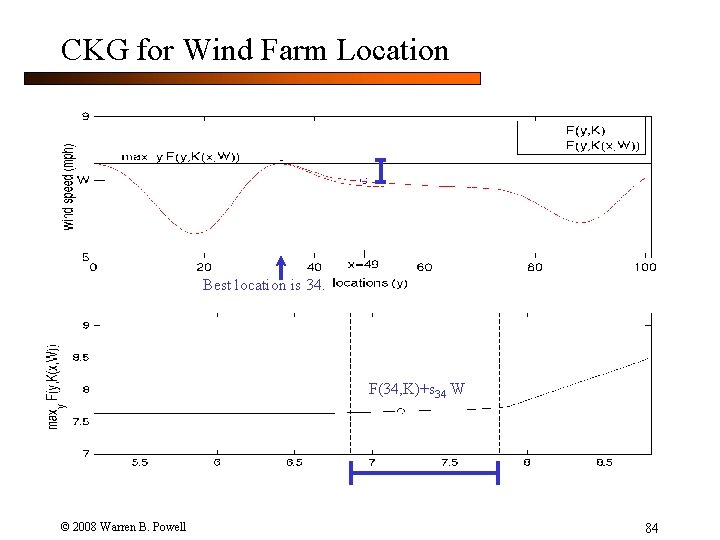

CKG for Wind Farm Location
Best location is 34, with value $F(34, K) + \sigma_{34} W$.

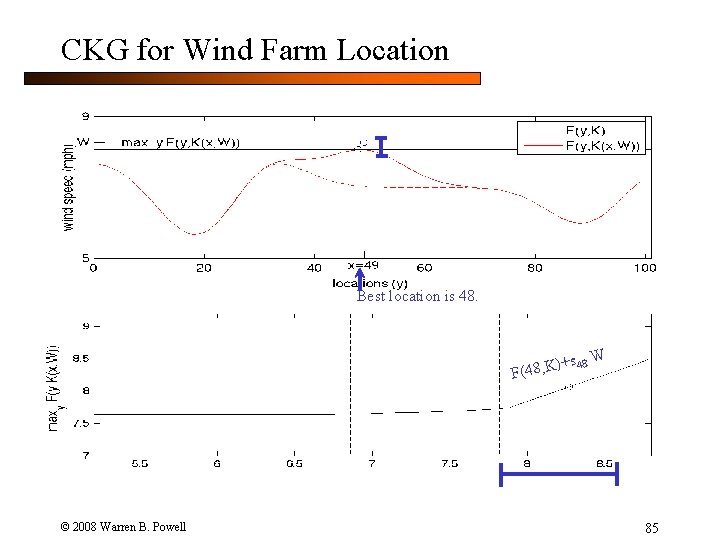

CKG for Wind Farm Location
Best location is 48, with value $F(48, K) + \sigma_{48} W$.

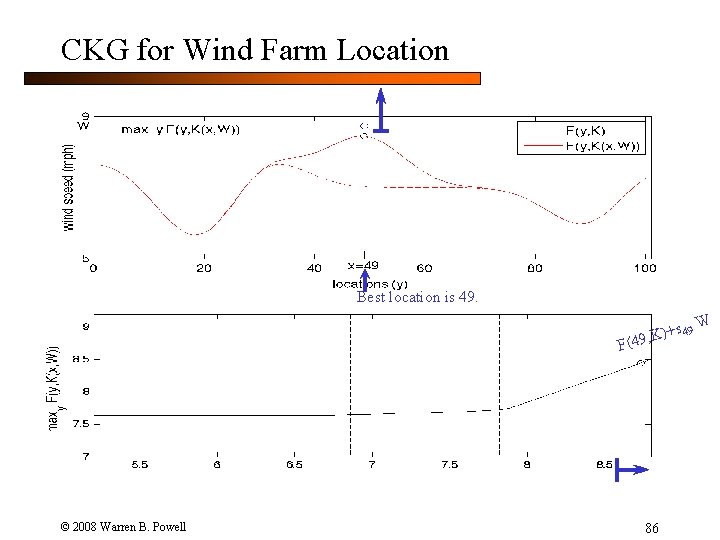

CKG for Wind Farm Location
Best location is 49, with value $F(49, K) + \sigma_{49} W$.

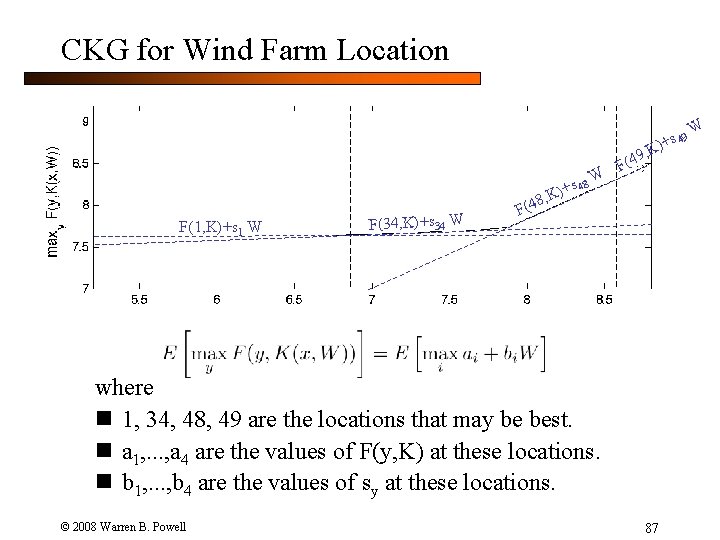

CKG for Wind Farm Location
[Figure: the lines $F(1, K) + \sigma_1 W$, $F(34, K) + \sigma_{34} W$, $F(48, K) + \sigma_{48} W$ and $F(49, K) + \sigma_{49} W$ plotted against W]
where
■ 1, 34, 48, 49 are the locations that may be best.
■ $a_1, \ldots, a_4$ are the values of F(y, K) at these locations.
■ $b_1, \ldots, b_4$ are the values of $\sigma_y$ at these locations.

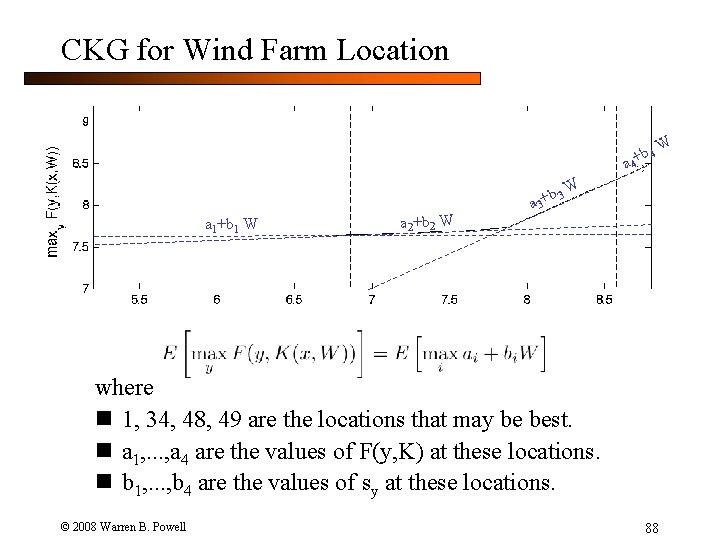

CKG for Wind Farm Location
[Figure: the lines $a_1 + b_1 W$, $a_2 + b_2 W$, $a_3 + b_3 W$ and $a_4 + b_4 W$ plotted against W]
where
■ 1, 34, 48, 49 are the locations that may be best.
■ $a_1, \ldots, a_4$ are the values of F(y, K) at these locations.
■ $b_1, \ldots, b_4$ are the values of $\sigma_y$ at these locations.

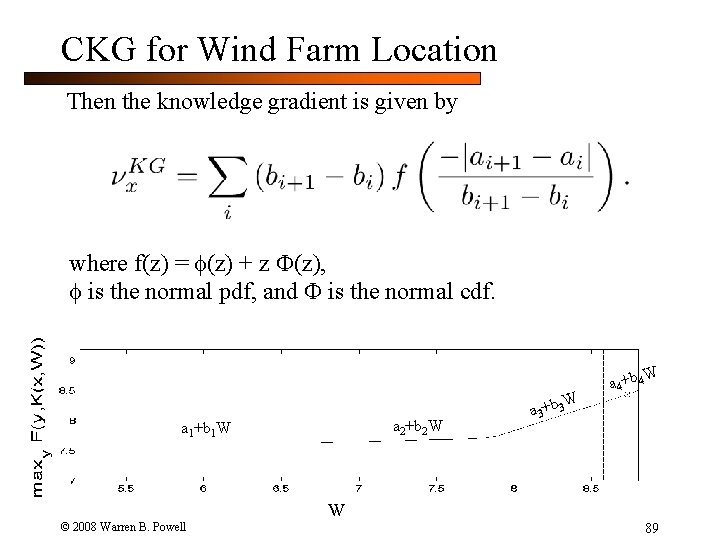

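CKG for Wind Farm Location
Then the knowledge gradient is given by a closed-form expression in the $a_i$ and $b_i$, where $f(z) = \varphi(z) + z\,\Phi(z)$, $\varphi$ is the normal pdf, and $\Phi$ is the normal cdf.
[Figure: the lines $a_1 + b_1 W$, $a_2 + b_2 W$, $a_3 + b_3 W$ and $a_4 + b_4 W$ plotted against W]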
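One way to evaluate this quantity is sketched below. It computes $\mathbb{E}[\max_i (a_i + b_i W)] - \max_i a_i$ for a standard normal W by finding the upper envelope of the lines and summing $(b_{i+1} - b_i)\, f(-|c_i|)$ over the breakpoints $c_i$ where adjacent envelope lines cross. This summation form follows the published correlated knowledge gradient work as best recalled and should be treated as an assumption, since the slide's own formula is not shown; the function names are illustrative.

```python
import math


def f(z):
    """f(z) = pdf(z) + z * cdf(z) for the standard normal."""
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return pdf + z * cdf


def expected_max_gain(a, b):
    """E[max_i (a[i] + b[i] * W)] - max_i a[i], with W standard normal."""
    # Sort by slope; for equal slopes keep only the largest intercept.
    lines = []
    for slope, intercept in sorted(zip(b, a)):
        if lines and lines[-1][0] == slope:
            lines[-1] = (slope, intercept)
        else:
            lines.append((slope, intercept))
    hull = []  # lines on the upper envelope, in increasing slope
    cuts = []  # cuts[i] is where hull[i] and hull[i + 1] intersect
    for slope, intercept in lines:
        while hull:
            s0, a0 = hull[-1]
            z = (a0 - intercept) / (slope - s0)
            if cuts and z <= cuts[-1]:
                hull.pop()  # the previous line is never the maximum
                cuts.pop()
            else:
                cuts.append(z)
                break
        hull.append((slope, intercept))
    return sum((hull[i + 1][0] - hull[i][0]) * f(-abs(cuts[i]))
               for i in range(len(cuts)))


# Hypothetical values for four candidate locations.
a = [10.0, 9.5, 9.0, 8.0]
b = [0.1, 0.5, 1.0, 2.0]
gain = expected_max_gain(a, b)
```

Lines that are never the maximum for any W drop out of the envelope and contribute nothing, so only the locations that may be best matter, as on the slide.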

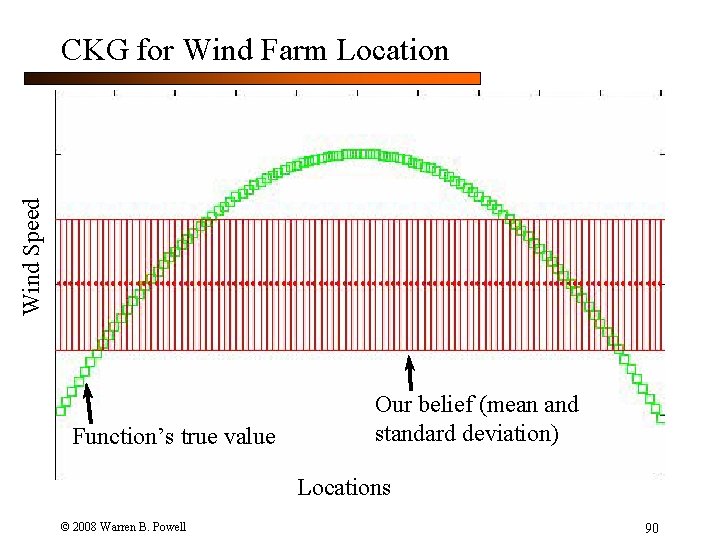

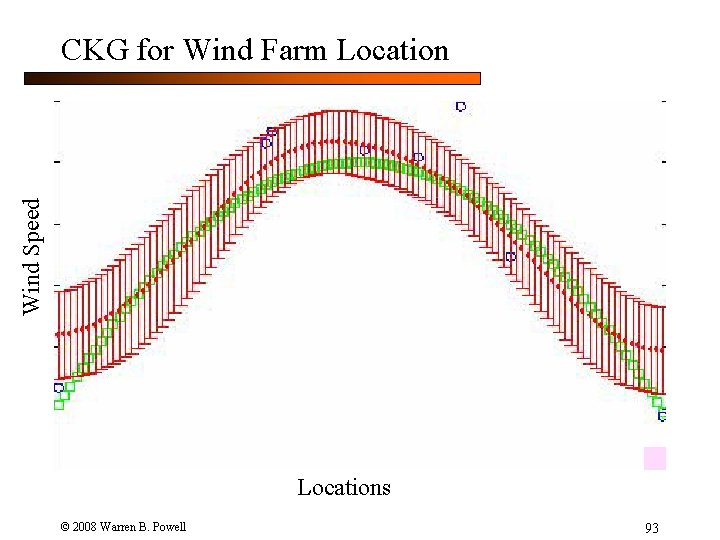

CKG for Wind Farm Location
[Figure: wind speed vs. location, showing the function's true value and our belief (mean and standard deviation)]

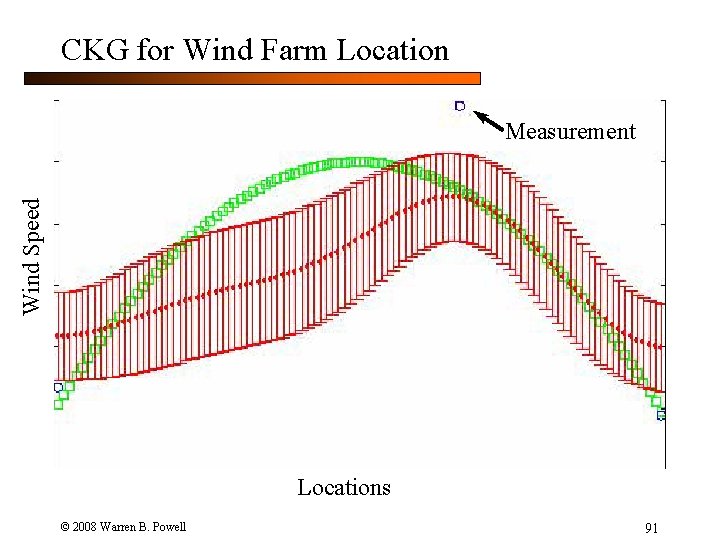

CKG for Wind Farm Location
[Figure: wind speed vs. location, with the measurement marked]

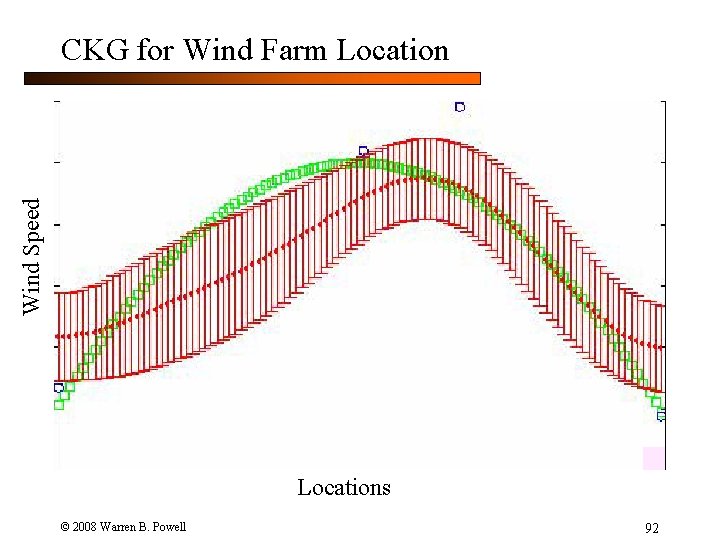


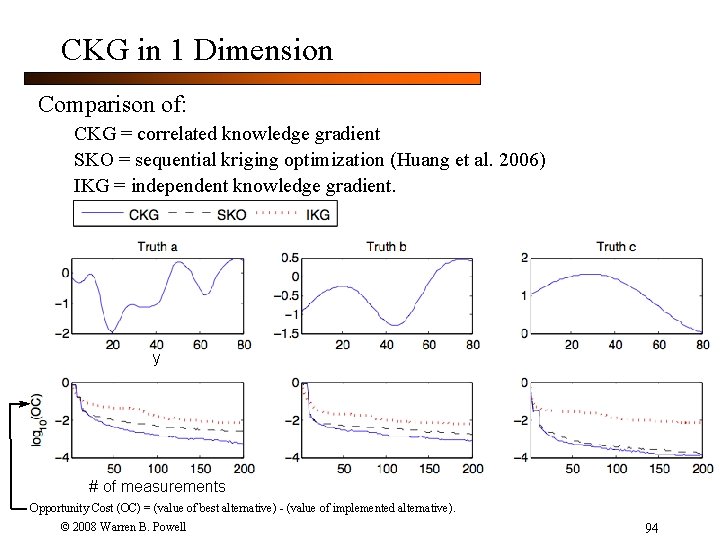

CKG in 1 Dimension
■ Comparison of:
  » CKG = correlated knowledge gradient
  » SKO = sequential kriging optimization (Huang et al. 2006)
  » IKG = independent knowledge gradient
■ Opportunity cost (OC) = (value of best alternative) - (value of implemented alternative).
[Figure: opportunity cost vs. number of measurements]

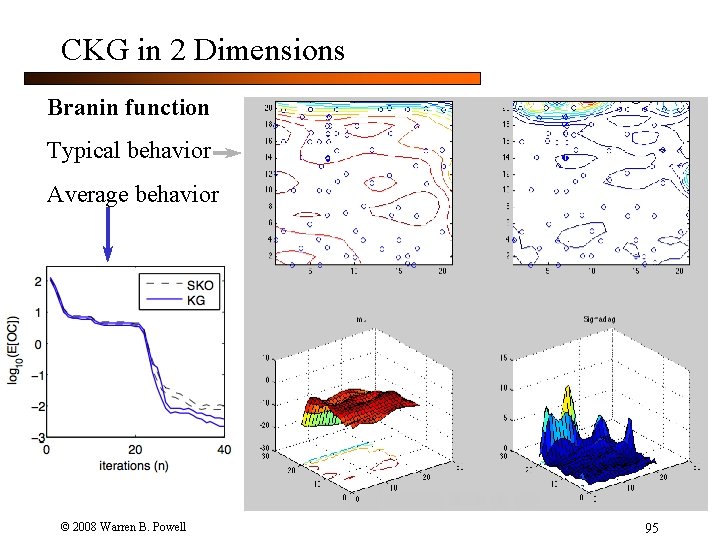

CKG in 2 Dimensions
■ Branin function
[Figure: two panels, typical behavior and average behavior]

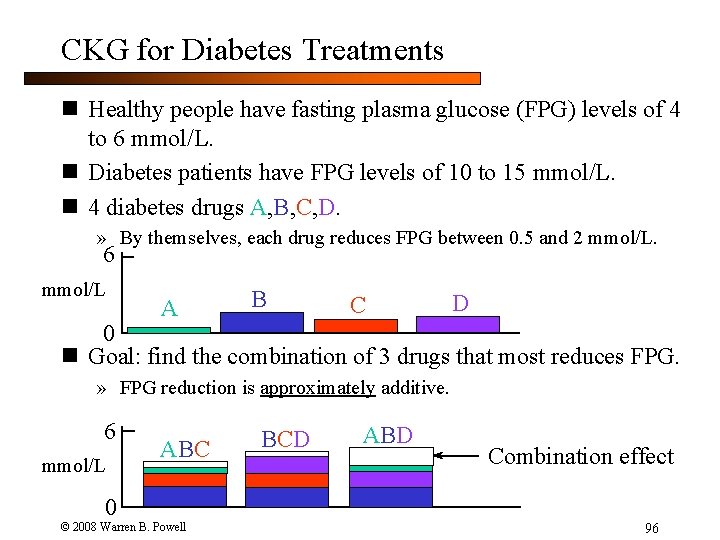

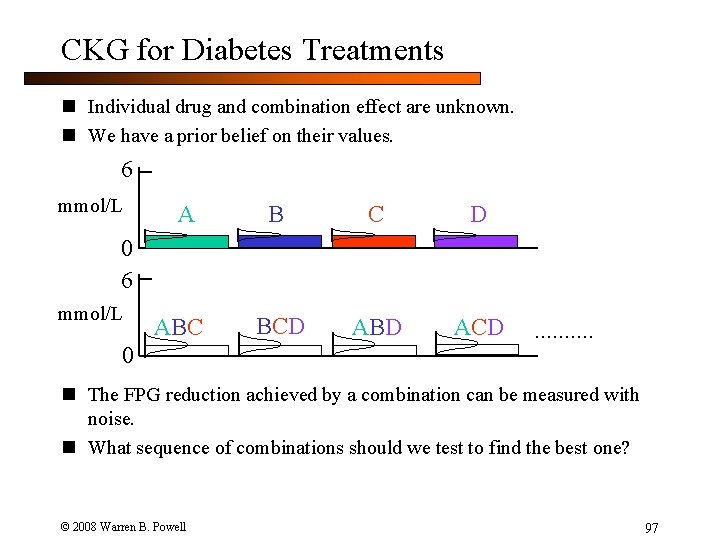

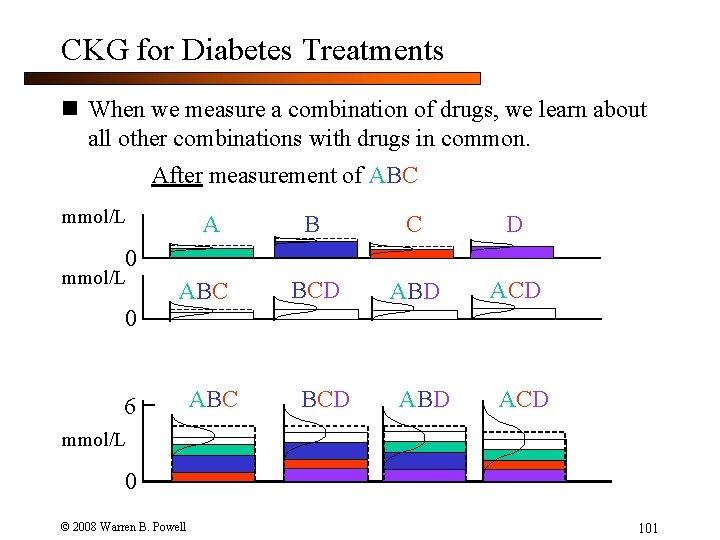

CKG for Diabetes Treatments
■ Healthy people have fasting plasma glucose (FPG) levels of 4 to 6 mmol/L.
■ Diabetes patients have FPG levels of 10 to 15 mmol/L.
■ There are 4 diabetes drugs: A, B, C, D.
  » By themselves, each drug reduces FPG by between 0.5 and 2 mmol/L.
■ Goal: find the combination of 3 drugs that most reduces FPG.
  » FPG reduction is approximately additive.
[Figure: FPG reduction (0 to 6 mmol/L) for each drug A, B, C, D, and the combination effect for ABC, BCD, ABD]

CKG for Diabetes Treatments
■ Individual drug and combination effects are unknown.
■ We have a prior belief on their values.
■ The FPG reduction achieved by a combination can be measured with noise.
■ What sequence of combinations should we test to find the best one?
[Figure: prior beliefs (0 to 6 mmol/L) for drugs A, B, C, D and for combinations ABC, BCD, ABD, ACD]
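The correlation between combinations follows from additivity: two combinations that share drugs share those drugs' uncertainty. The sketch below builds a prior mean and covariance over three-drug combinations. It assumes exactly additive effects and independent priors on the individual drugs, which is stronger than the slide's "approximately additive"; the drug means and variances are hypothetical numbers chosen within the stated 0.5 to 2 mmol/L range.

```python
from itertools import combinations


def combination_prior(drug_mean, drug_var, size):
    """Prior mean and covariance of combination effects under additivity.

    drug_mean, drug_var: dicts mapping drug name to the prior mean and
    variance of its individual effect (priors assumed independent).
    """
    combos = list(combinations(sorted(drug_mean), size))
    mean = {c: sum(drug_mean[d] for d in c) for c in combos}
    # Covariance of two combinations = total variance of the drugs they share.
    cov = {(c1, c2): sum(drug_var[d] for d in set(c1) & set(c2))
           for c1 in combos for c2 in combos}
    return combos, mean, cov


# Hypothetical priors on the FPG reduction (mmol/L) of each drug.
drug_mean = {"A": 1.0, "B": 1.5, "C": 0.5, "D": 2.0}
drug_var = {"A": 0.25, "B": 0.25, "C": 0.25, "D": 0.25}
combos, mean, cov = combination_prior(drug_mean, drug_var, size=3)
```

Any two of the four combinations share exactly two drugs, so every off-diagonal covariance is positive. That shared variance is what lets a measurement of one combination update the beliefs about the others.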

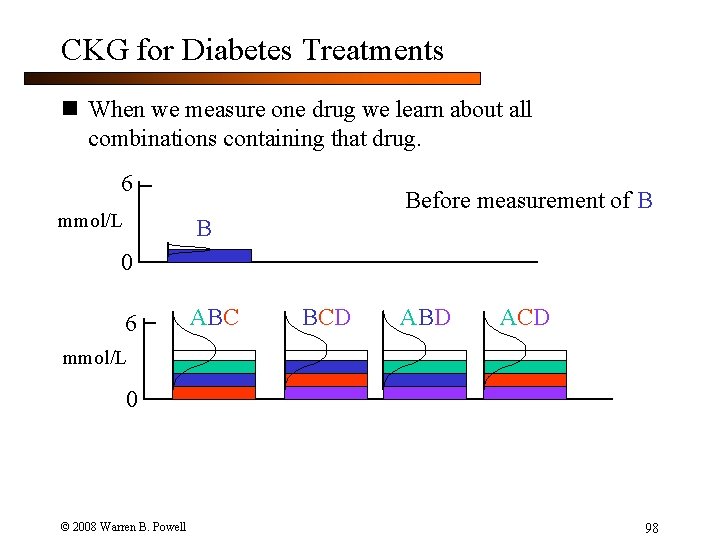

CKG for Diabetes Treatments
■ When we measure one drug we learn about all combinations containing that drug.
[Figure: before measurement of B: beliefs (0 to 6 mmol/L) for drug B and for combinations ABC, BCD, ABD, ACD]

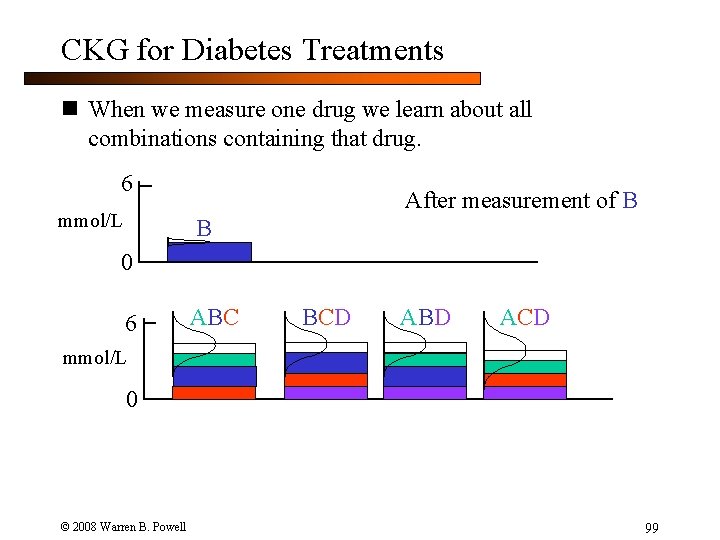

CKG for Diabetes Treatments
■ When we measure one drug we learn about all combinations containing that drug.
[Figure: after measurement of B: beliefs (0 to 6 mmol/L) for drug B and for combinations ABC, BCD, ABD, ACD]

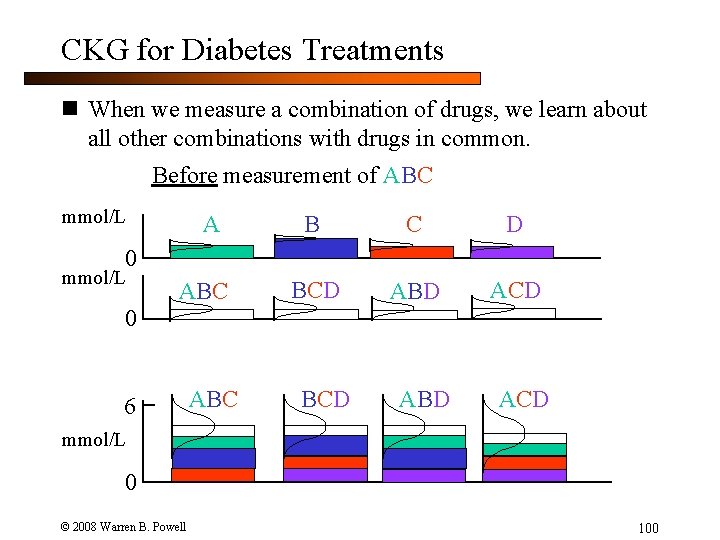

CKG for Diabetes Treatments
■ When we measure a combination of drugs, we learn about all other combinations with drugs in common.
[Figure: before measurement of ABC: beliefs (mmol/L) for drugs A, B, C, D and for combinations ABC, BCD, ABD, ACD]

CKG for Diabetes Treatments
■ When we measure a combination of drugs, we learn about all other combinations with drugs in common.
[Figure: after measurement of ABC: beliefs (mmol/L) for drugs A, B, C, D and for combinations ABC, BCD, ABD, ACD]

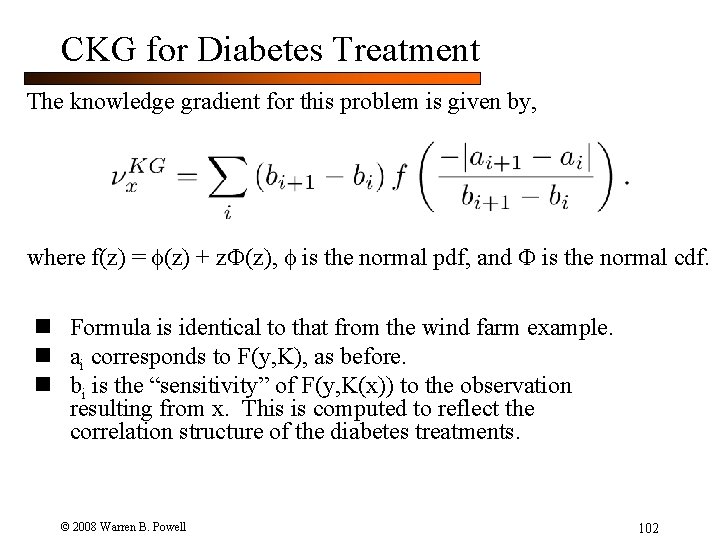

CKG for Diabetes Treatments
The knowledge gradient for this problem is given by a closed-form expression in the $a_i$ and $b_i$, where $f(z) = \varphi(z) + z\,\Phi(z)$, $\varphi$ is the normal pdf, and $\Phi$ is the normal cdf.
■ The formula is identical to that from the wind farm example.
■ $a_i$ corresponds to F(y, K), as before.
■ $b_i$ is the "sensitivity" of F(y, K(x)) to the observation resulting from x. This is computed to reflect the correlation structure of the diabetes treatments.

Outline
■ Optimal learning on a graph
  » Research by Ilya Ryzhov, Ph.D. candidate, Princeton University

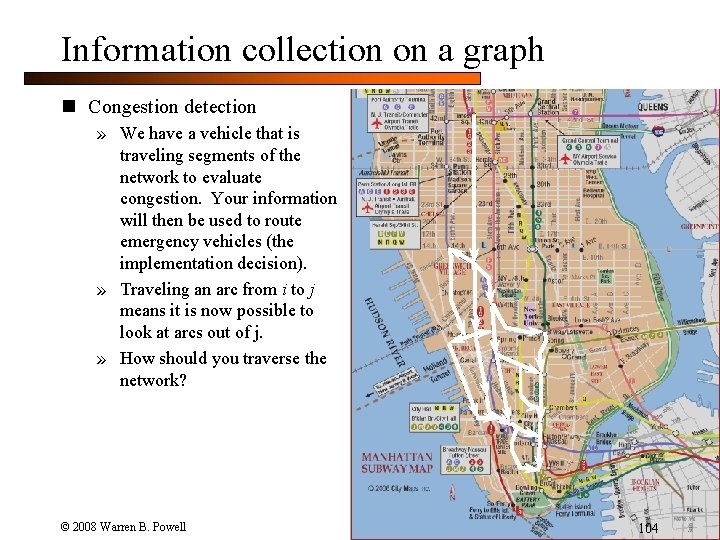

Information collection on a graph
■ Congestion detection
  » We have a vehicle that is traveling segments of the network to evaluate congestion. The information will then be used to route emergency vehicles (the implementation decision).
  » Traveling an arc from i to j means it is now possible to look at arcs out of j.
  » How should we traverse the network?

Information collection on a graph
■ Biosurveillance
  » You are part of a medical team traveling around Africa to measure the presence of malaria in different parts of the continent.
  » Visiting one location changes the cost of visiting other locations.
  » How do you sequence your measurements to have the greatest impact on prevention strategies (the implementation decision)?
[Figure: presence of malaria]

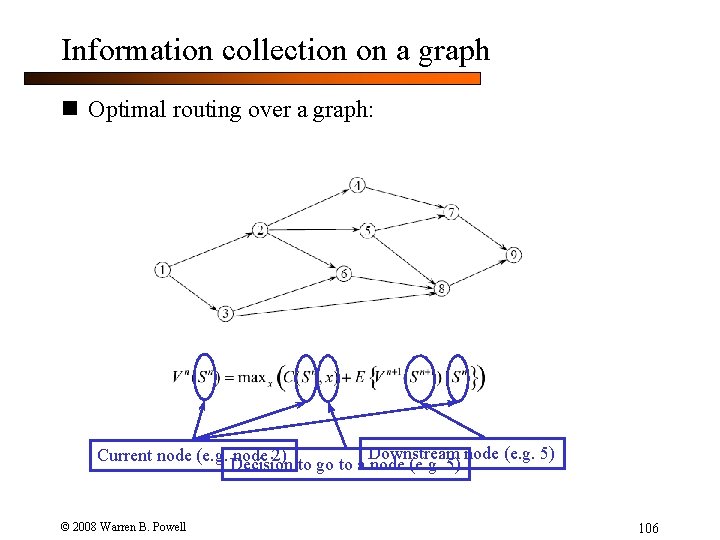

Information collection on a graph
■ Optimal routing over a graph:
[Figure: current node (e.g. node 2), decision to go to a downstream node, and downstream node (e.g. node 5)]

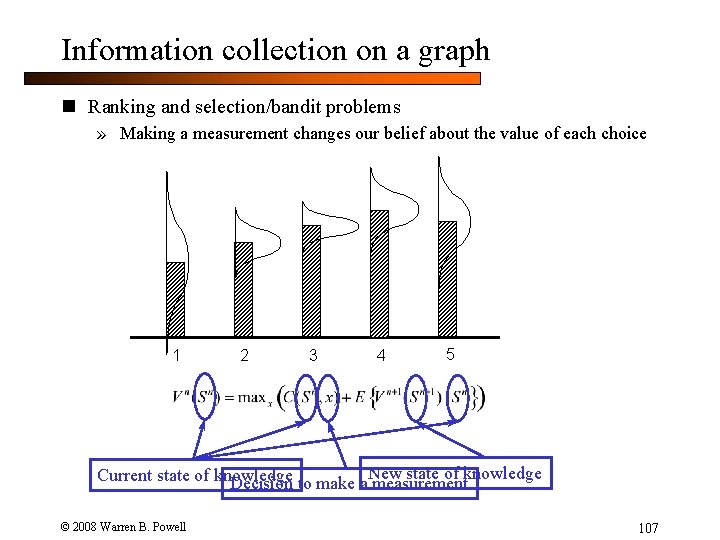

Information collection on a graph
■ Ranking and selection/bandit problems
  » Making a measurement changes our belief about the value of each choice.
[Figure: current state of knowledge about choices 1 to 5, decision to make a measurement, and new state of knowledge]

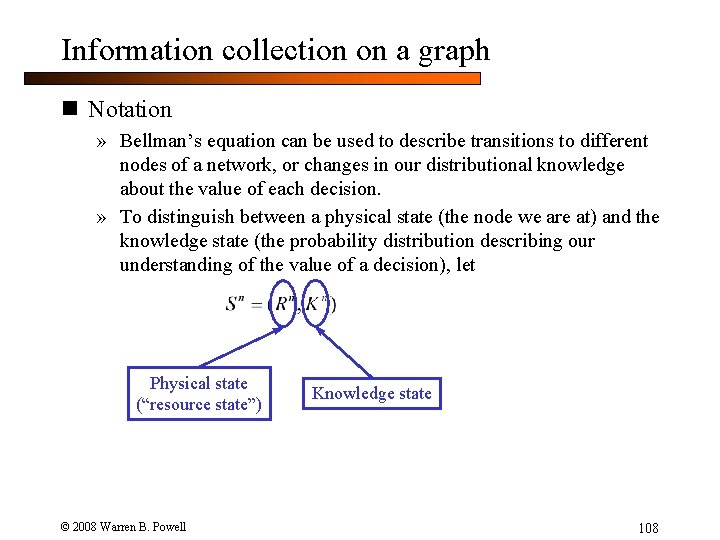

Information collection on a graph
■ Notation
  » Bellman's equation can be used to describe transitions to different nodes of a network, or changes in our distributional knowledge about the value of each decision.
  » We distinguish between:
    • the physical state ("resource state"): the node we are at
    • the knowledge state: the probability distribution describing our understanding of the value of a decision

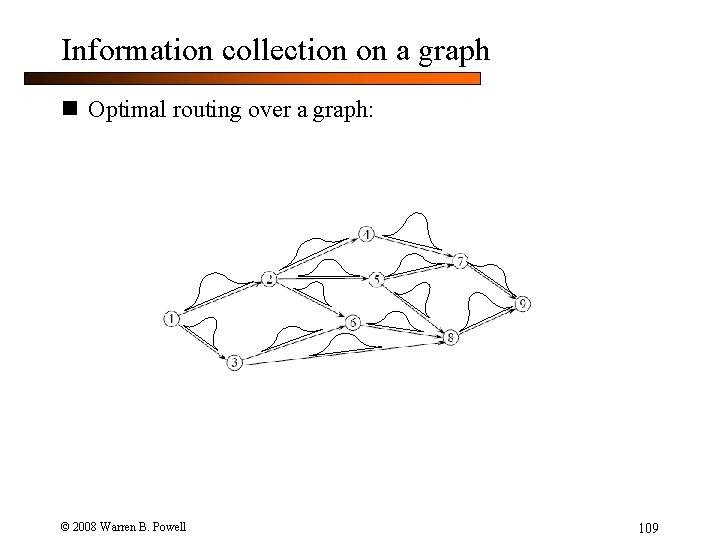

Information collection on a graph
■ Optimal routing over a graph

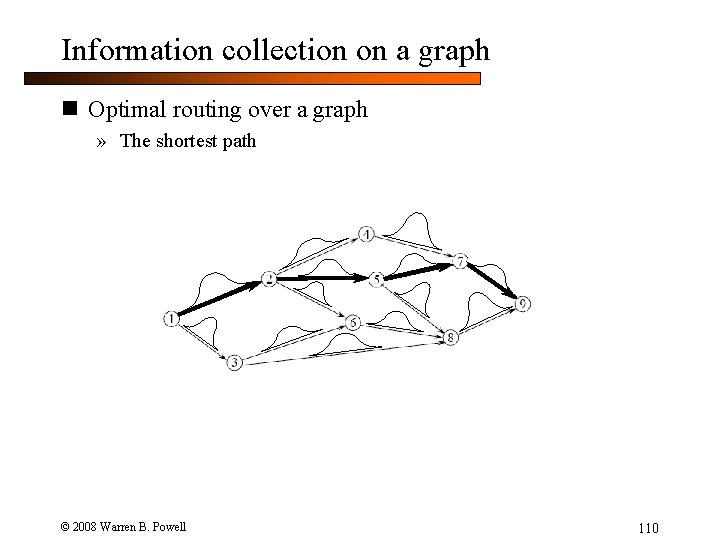

Information collection on a graph
■ Optimal routing over a graph
  » The shortest path

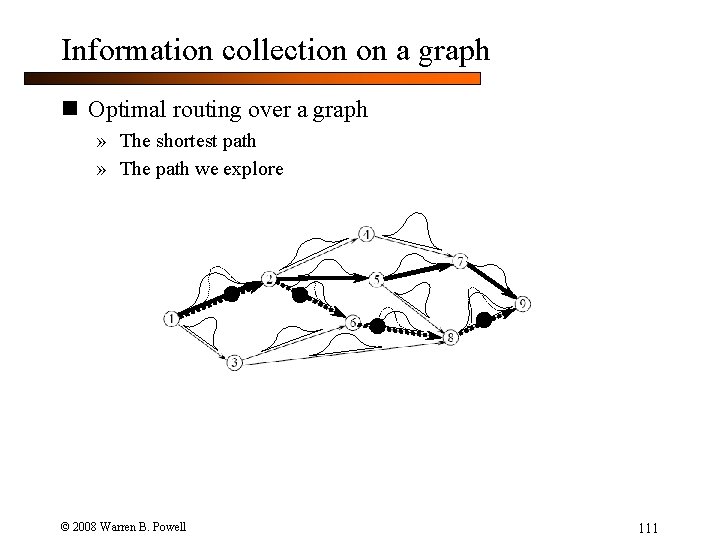

Information collection on a graph
■ Optimal routing over a graph
  » The shortest path
  » The path we explore

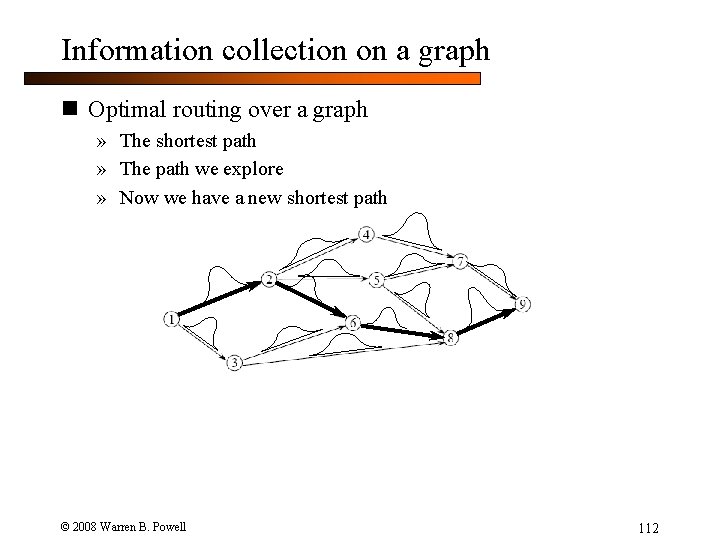

Information collection on a graph
■ Optimal routing over a graph
  » The shortest path
  » The path we explore
  » Now we have a new shortest path
  » How do we decide which links to measure?

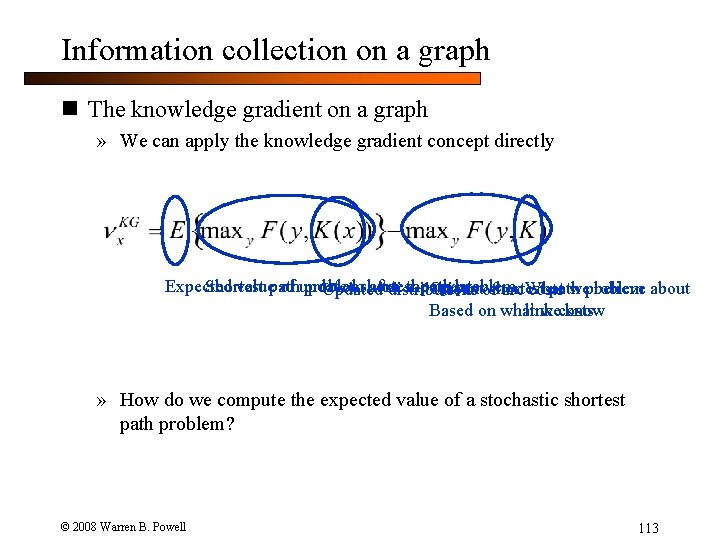

Information collection on a graph
■ The knowledge gradient on a graph
  » We can apply the knowledge gradient concept directly. The formula combines:
    • the expected value of the updated shortest path problem
    • the shortest path problem after the update, using the updated distributions of arc costs
    • the current shortest path problem, based on what we know (what we believe about link costs)
  » How do we compute the expected value of a stochastic shortest path problem?

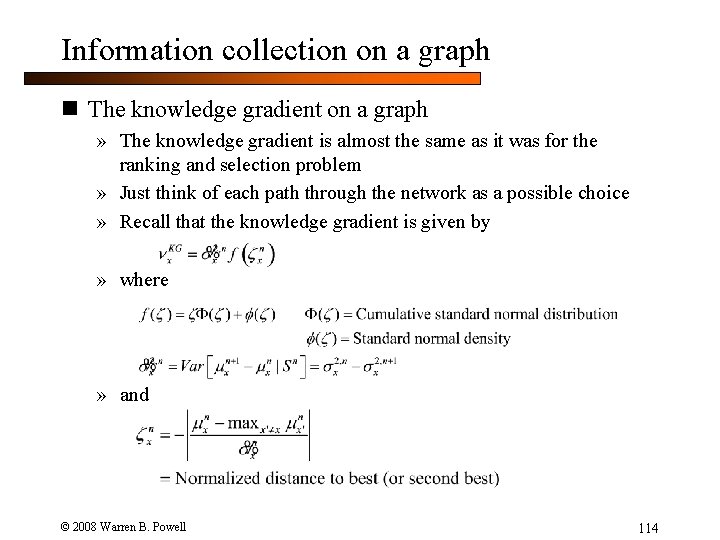

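Information collection on a graph
■ The knowledge gradient on a graph
  » The knowledge gradient is almost the same as it was for the ranking and selection problem.
  » Just think of each path through the network as a possible choice.
  » Recall that the knowledge gradient is given by
    $\nu^{KG,n}_x = \tilde{\sigma}^n_x f(\zeta^n_x)$
  » where
    $f(\zeta) = \zeta \Phi(\zeta) + \varphi(\zeta)$
  » and
    $\zeta^n_x = -\left| \dfrac{\theta^n_x - \max_{x' \neq x} \theta^n_{x'}}{\tilde{\sigma}^n_x} \right|$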

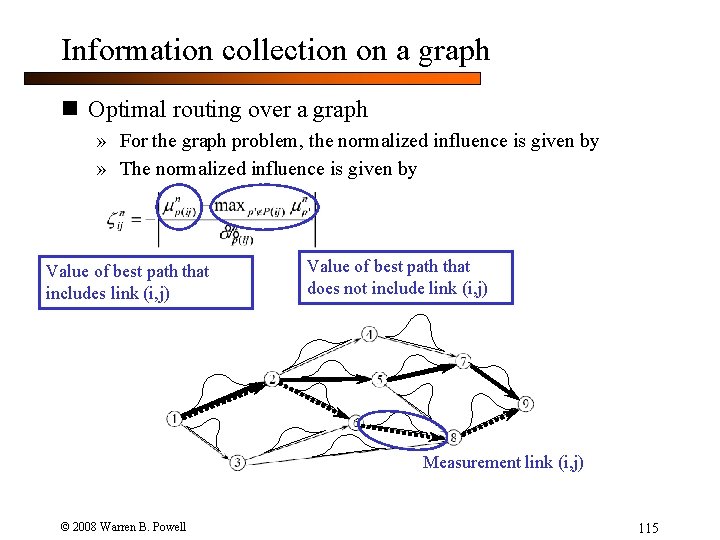

Information collection on a graph
■ Optimal routing over a graph
  » For the graph problem, the normalized influence of measuring link (i, j) compares the value of the best path that includes link (i, j) with the value of the best path that does not include link (i, j).
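Under the labels on this slide, the normalized influence for a link might be written as follows. The symbols are assumptions introduced for illustration: $V^n_{ij}$ is the value of the best path that includes link $(i, j)$, $\bar{V}^n_{ij}$ is the value of the best path that does not include it, and $\tilde{\sigma}^n_{ij}$ is the standard deviation of the change in our estimate of the cost of link $(i, j)$ from one measurement.

```latex
\zeta^n_{ij} = -\left| \frac{V^n_{ij} - \bar{V}^n_{ij}}{\tilde{\sigma}^n_{ij}} \right|,
\qquad
\nu^{KG,n}_{ij} = \tilde{\sigma}^n_{ij}\, f\!\left(\zeta^n_{ij}\right),
\qquad
f(\zeta) = \zeta\,\Phi(\zeta) + \varphi(\zeta).
```

Computing both path values requires only shortest path calculations on the current estimates, so a measurement is valuable when it could plausibly change which of the two paths is better.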
