Optimal Data Dependent Hashing for Approximate Near Neighbors

Alexandr Andoni (Simons Inst. / Columbia), Ilya Razenshteyn (MIT, now at IBM Almaden)

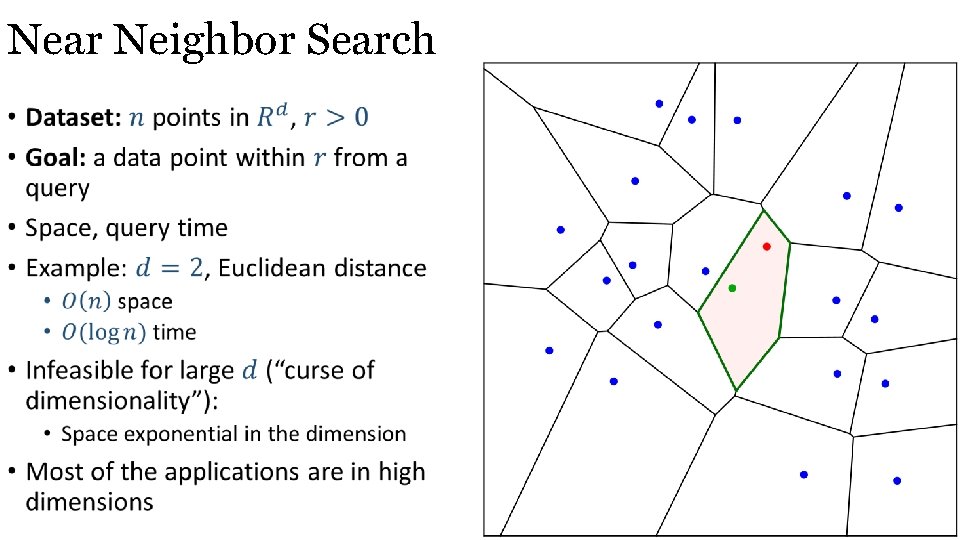

Near Neighbor Search

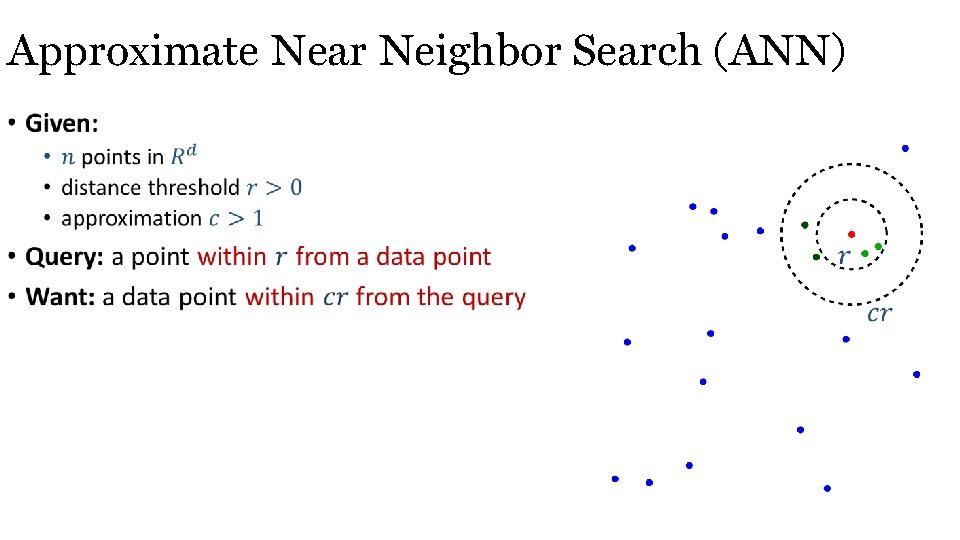

Approximate Near Neighbor Search (ANN)

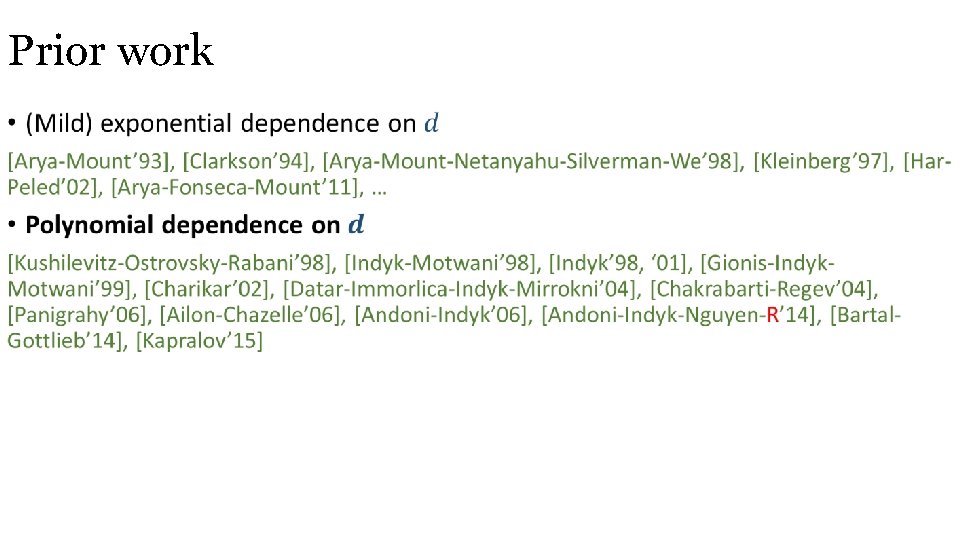

Prior work

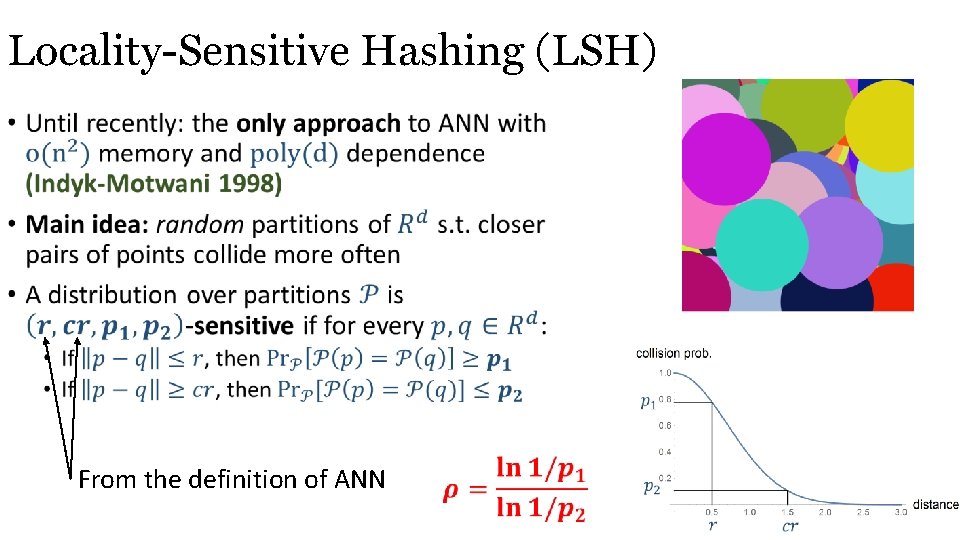

Locality Sensitive Hashing (LSH)

• From the definition of ANN

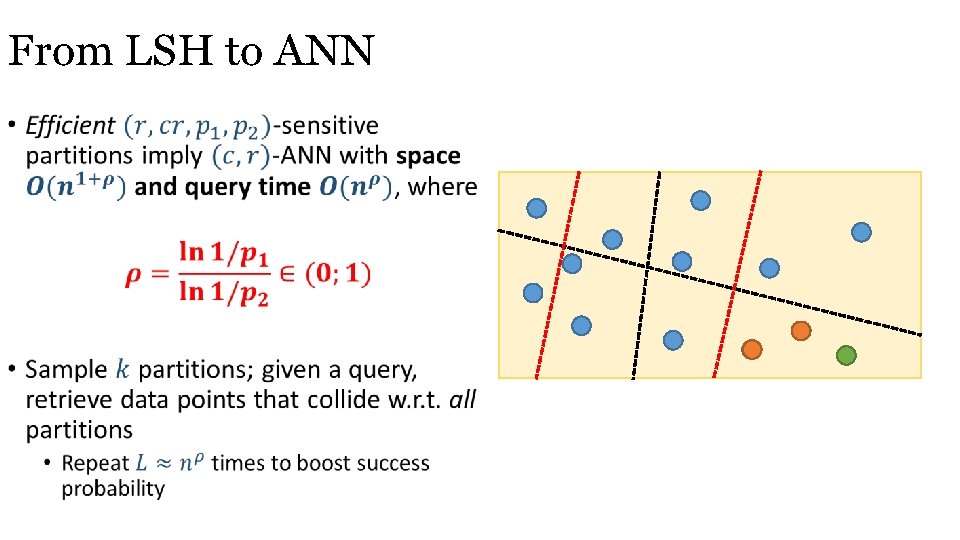

From LSH to ANN

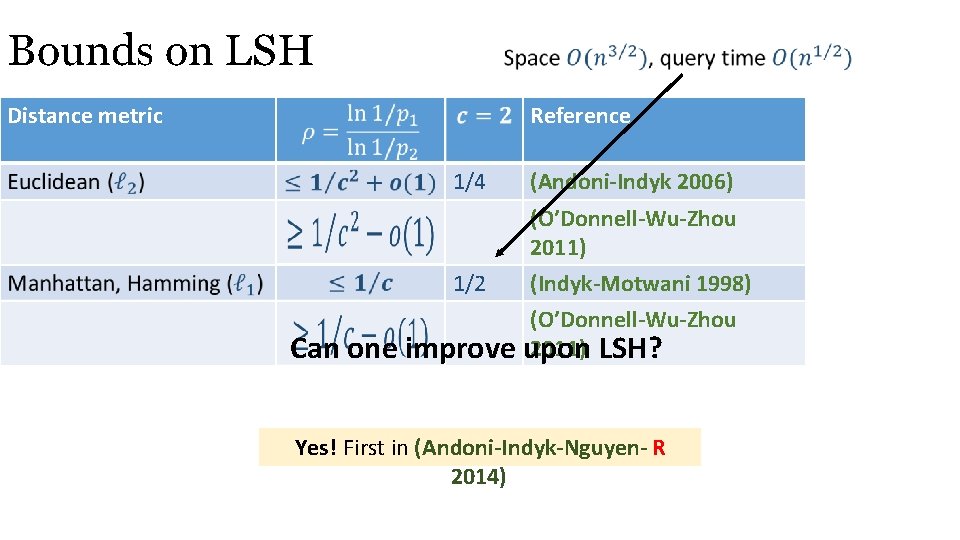

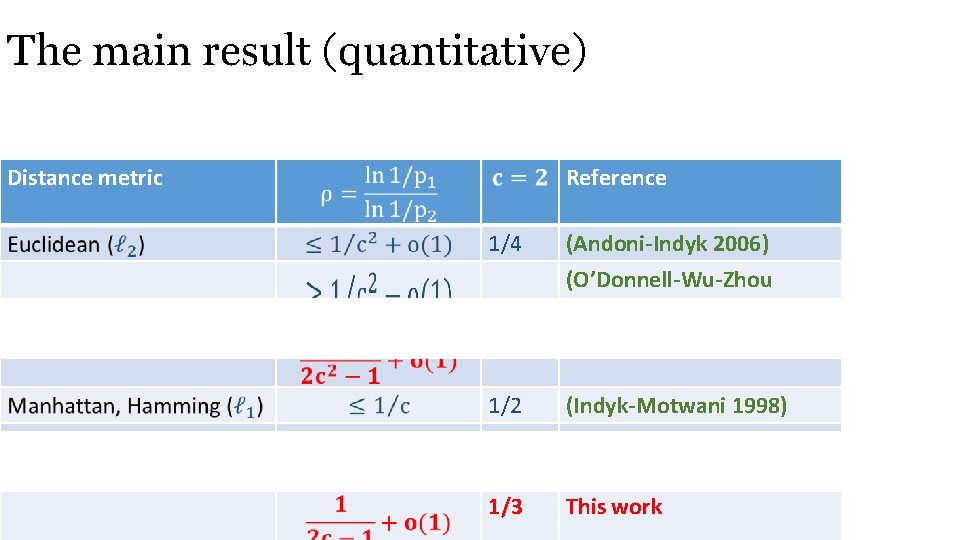

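Bounds on LSH

| Distance metric | Exponent ρ (c = 2) | Reference |
|---|---|---|
| Euclidean | 1/4 | (Andoni-Indyk 2006); lower bound (O’Donnell-Wu-Zhou 2011) |
| Manhattan/Hamming | 1/2 | (Indyk-Motwani 1998); lower bound (O’Donnell-Wu-Zhou 2011) |

Can one improve upon LSH? Yes! First in (Andoni-Indyk-Nguyen-R 2014)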

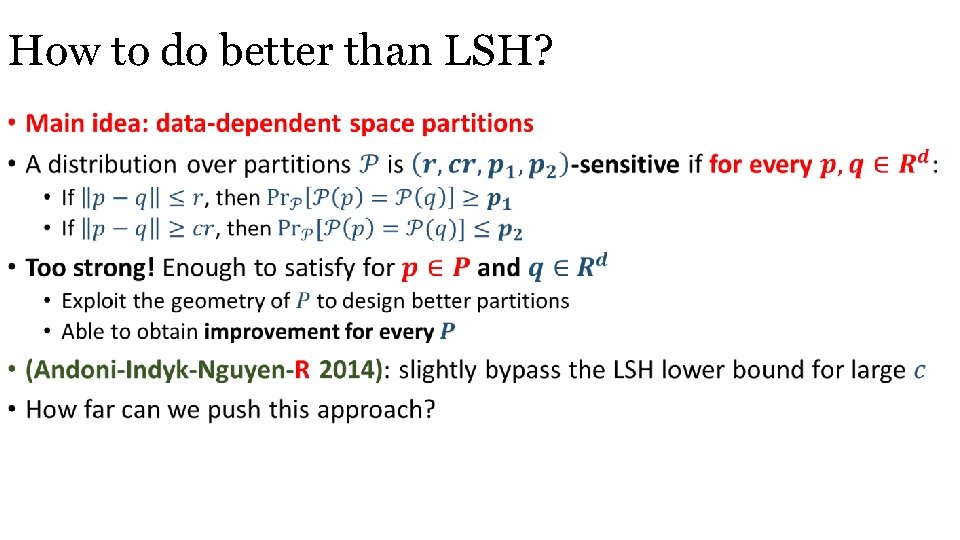

How to do better than LSH?

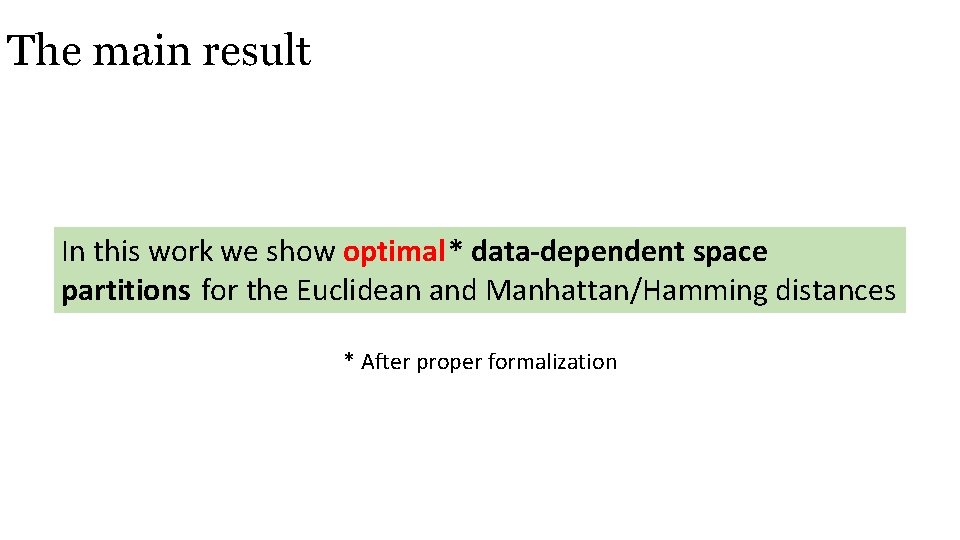

The main result

In this work we show optimal* data-dependent space partitions for the Euclidean and Manhattan/Hamming distances

\* After proper formalization

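The main result (quantitative)

| Distance metric | Exponent ρ (c = 2) | Reference |
|---|---|---|
| Euclidean | 1/4 | (Andoni-Indyk 2006); lower bound (O’Donnell-Wu-Zhou 2011) |
| Euclidean | 1/7 | This work |
| Manhattan/Hamming | 1/2 | (Indyk-Motwani 1998); lower bound (O’Donnell-Wu-Zhou 2011) |
| Manhattan/Hamming | 1/3 | This work |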
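The fractions 1/4, 1/7, 1/2 and 1/3 on this slide can be reproduced with a short calculation. The sketch below assumes the exponent formulas as stated in the accompanying paper, ignoring lower-order o(1) terms: 1/c² and 1/(2c² − 1) for the Euclidean distance, 1/c and 1/(2c − 1) for the Manhattan/Hamming distance. The formulas themselves do not appear in the extracted slide text, and the function names are illustrative only.

```python
from fractions import Fraction


def classical_exponent(c, metric):
    """Exponent of classical (data-independent) LSH, assumed formulas."""
    c = Fraction(c)
    if metric == "euclidean":
        return 1 / (c * c)          # assumed: 1/c^2
    if metric == "hamming":
        return 1 / c                # assumed: 1/c
    raise ValueError(f"unknown metric: {metric}")


def data_dependent_exponent(c, metric):
    """Exponent of the data-dependent scheme, assumed formulas."""
    c = Fraction(c)
    if metric == "euclidean":
        return 1 / (2 * c * c - 1)  # assumed: 1/(2c^2 - 1)
    if metric == "hamming":
        return 1 / (2 * c - 1)      # assumed: 1/(2c - 1)
    raise ValueError(f"unknown metric: {metric}")


if __name__ == "__main__":
    for metric in ("euclidean", "hamming"):
        print(metric,
              classical_exponent(2, metric),
              data_dependent_exponent(2, metric))
```

Under these assumed formulas, approximation factor c = 2 gives exactly the four values in the table.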

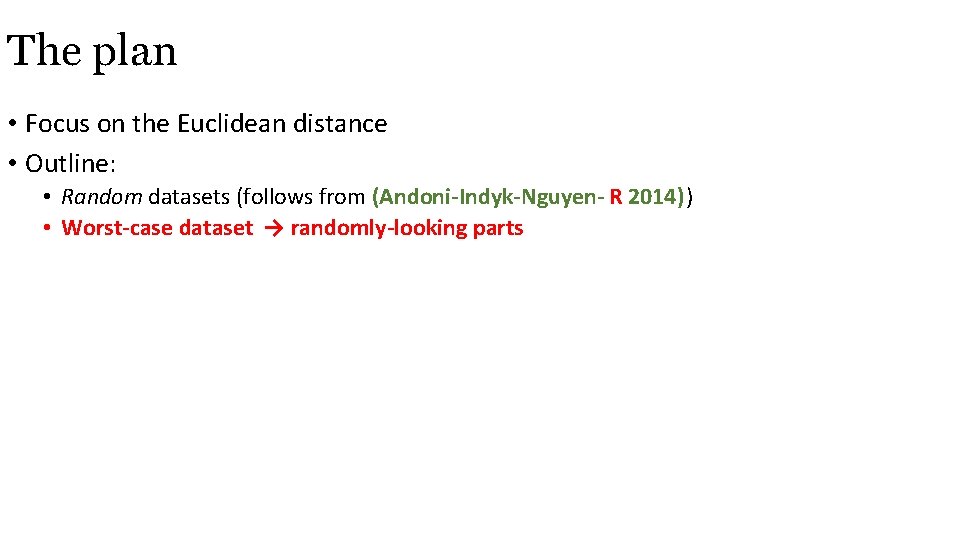

The plan

• Focus on the Euclidean distance
• Outline:
  • Random datasets (follows from (Andoni-Indyk-Nguyen-R 2014))
  • Worst-case dataset → randomly-looking parts

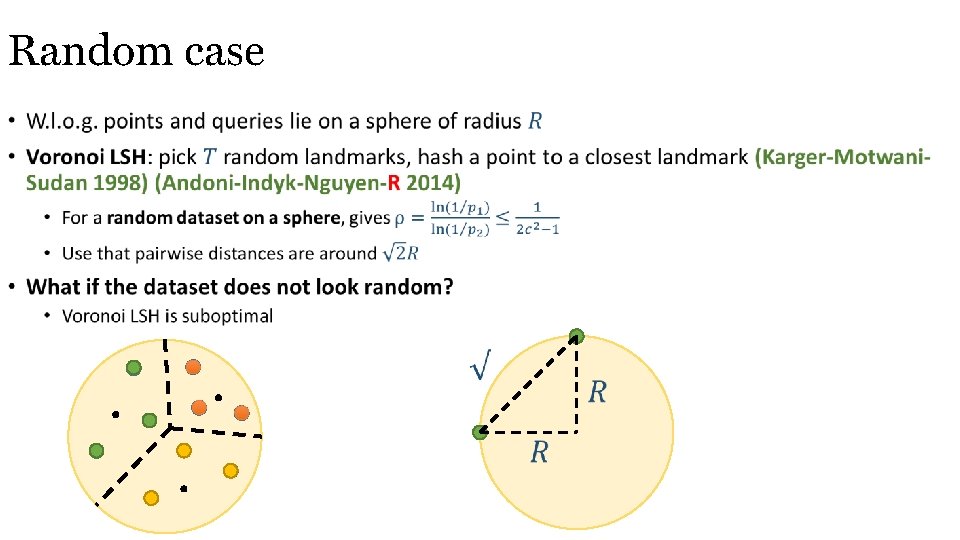

Random case

General case

Handling clusters

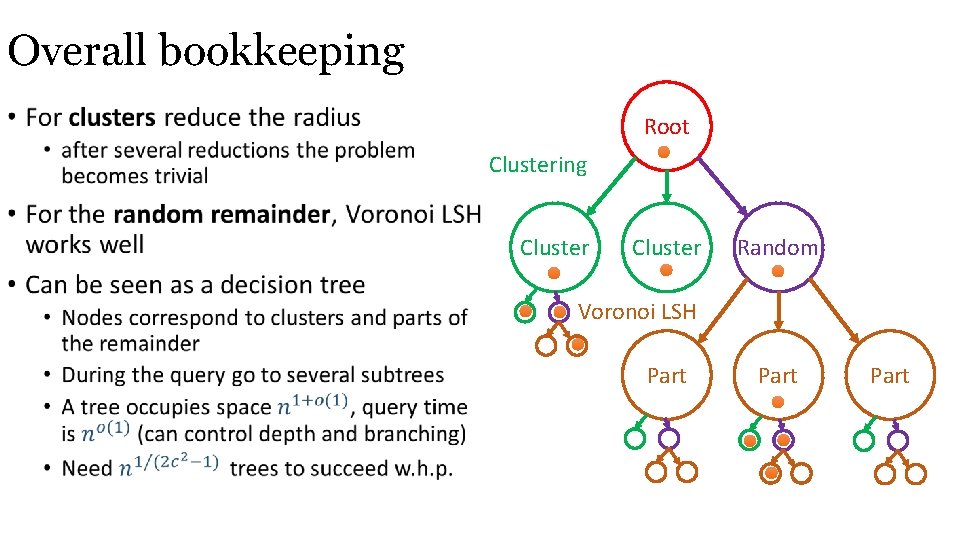

Overall bookkeeping

[Figure: tree diagram; labels in extraction order: Root Clustering Cluster Random Voronoi LSH Part]
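For readers unfamiliar with the Voronoi LSH named here, the following is a minimal sketch, not the authors' implementation. It assumes the common formulation in which a hash function is defined by a fixed set of random Gaussian vectors and a point is hashed to the index of the vector with the largest inner product. The helper name `make_voronoi_hash` and its parameters are hypothetical.

```python
import random


def make_voronoi_hash(dim, num_cells, seed=0):
    """Return a hash function mapping a point to one of `num_cells` cells.

    Assumed formulation: draw `num_cells` i.i.d. standard Gaussian vectors
    and send each point to the vector maximizing the inner product.
    """
    rng = random.Random(seed)
    gaussians = [[rng.gauss(0.0, 1.0) for _ in range(dim)]
                 for _ in range(num_cells)]

    def voronoi_hash(point):
        best_index, best_value = 0, float("-inf")
        for index, g in enumerate(gaussians):
            value = sum(a * b for a, b in zip(point, g))
            if value > best_value:
                best_index, best_value = index, value
        return best_index

    return voronoi_hash


if __name__ == "__main__":
    h = make_voronoi_hash(dim=8, num_cells=16, seed=1)
    p = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
    print(h(p))
```

Because only the argmax matters, the cell of a point depends on its direction and not on its norm, which suits points on a sphere. With two cells the rule reduces to the sign of the inner product with the difference of the two Gaussian vectors, that is, a random hyperplane.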

Fast preprocessing

Dynamic case

• Classical LSH allows insertions and deletions “for free”
• Insertions and deletions in ≈ query time by using dynamization techniques (Overmars-van Leeuwen 1981)
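As an illustration of what dynamization means, here is a minimal sketch of the logarithmic method for decomposable search problems, a standard technique in this family. It handles insertions only and is not claimed to be the exact construction from the cited work or the one used in the talk. `StaticIndex` is a hypothetical stand-in for any structure that is built once from a fixed point set; points are one-dimensional to keep the example short.

```python
class StaticIndex:
    """Hypothetical static structure: built once, never updated."""

    def __init__(self, points):
        self.points = list(points)

    def query(self, q, radius):
        # Brute force stands in for a real static near neighbor structure.
        return [p for p in self.points if abs(p - q) <= radius]


class LogarithmicIndex:
    """Insert-only dynamization: levels[i] is None or holds 2**i points."""

    def __init__(self):
        self.levels = []

    def insert(self, point):
        carry = [point]
        i = 0
        while True:
            if i == len(self.levels):
                self.levels.append(None)
            if self.levels[i] is None:
                # Rebuild a static structure from the merged points.
                self.levels[i] = StaticIndex(carry)
                return
            # Level is full: merge it into the carry, like binary addition.
            carry.extend(self.levels[i].points)
            self.levels[i] = None
            i += 1

    def query(self, q, radius):
        # The problem is decomposable: the answer is the union over levels.
        found = []
        for level in self.levels:
            if level is not None:
                found.extend(level.query(q, radius))
        return found


if __name__ == "__main__":
    index = LogarithmicIndex()
    for x in [0.0, 1.0, 5.0, 5.2, 9.0]:
        index.insert(x)
    print(sorted(index.query(5.1, 0.5)))
```

Each point takes part in at most logarithmically many rebuilds, and a query visits at most logarithmically many static structures, so updates and queries cost only a logarithmic factor more than in the static case (amortized for updates).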

Conclusions and open problems

• Quadratically improve upon the classical LSH (Indyk-Motwani 1998) and (Andoni-Indyk 2006)
• Optimal data-dependent space partitions
• Main idea: partition a worst-case dataset into pseudo-random pieces
• High-dimensional geometric regularity lemma?
• More applications of this approach?
• Practical version of Voronoi LSH for ANN on a sphere (Andoni-Indyk-Kapralov-Laarhoven-R-Schmidt 2015)
  • Can clustering be made practical too?

Any questions?

- Slides: 18