Operating System Abstractions for GPU Programming Chris Rossbach

Operating System Abstractions for GPU Programming Chris Rossbach, Microsoft Research Emmett Witchel, University of Texas at Austin September 23 2010

Motivation GPU application domains limited CUDA ◦ Rich APIs/abstractions ◦ Language integration familiar environment Composition/integration into systems ◦ Programmer-visible abstractions expressive ◦ Realization/implementations unsatisfactory Better OS-level abstractions are required ◦ (IP/Business concerns aside)

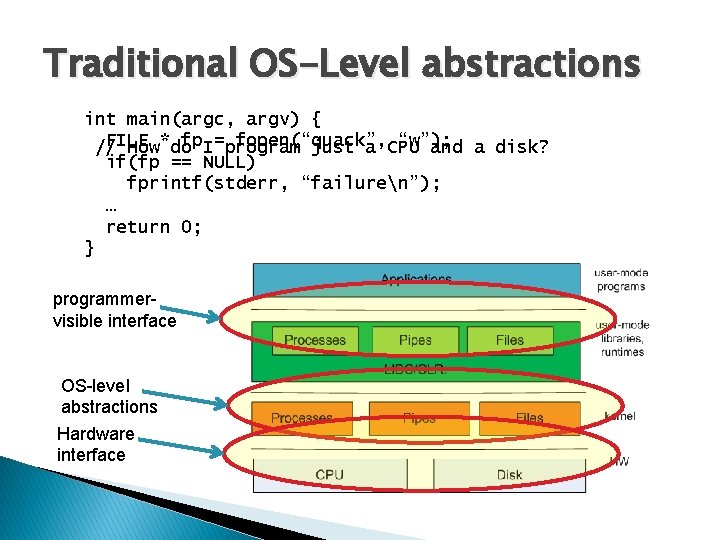

Traditional OS-Level abstractions int main(argc, argv) { FILE fp. I=program fopen(“quack”, “w”); // How*do just a CPU and a disk? if(fp == NULL) fprintf(stderr, “failuren”); … return 0; } programmervisible interface OS-level abstractions Hardware interface

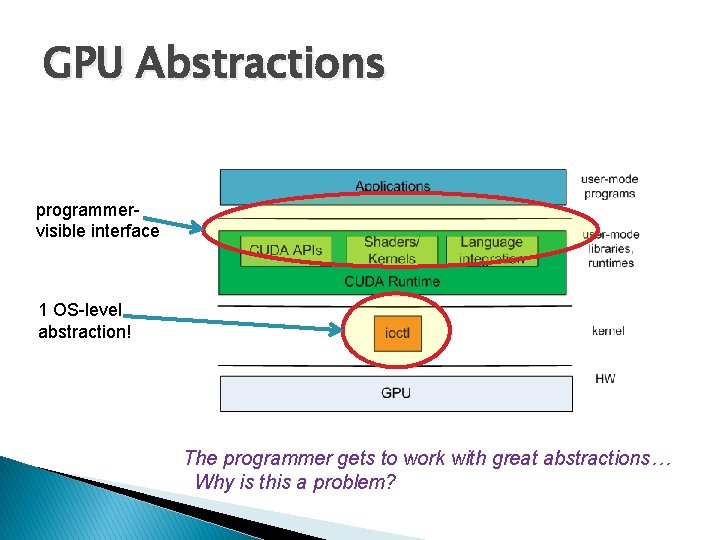

GPU Abstractions programmervisible interface 1 OS-level abstraction! The programmer gets to work with great abstractions… Why is this a problem?

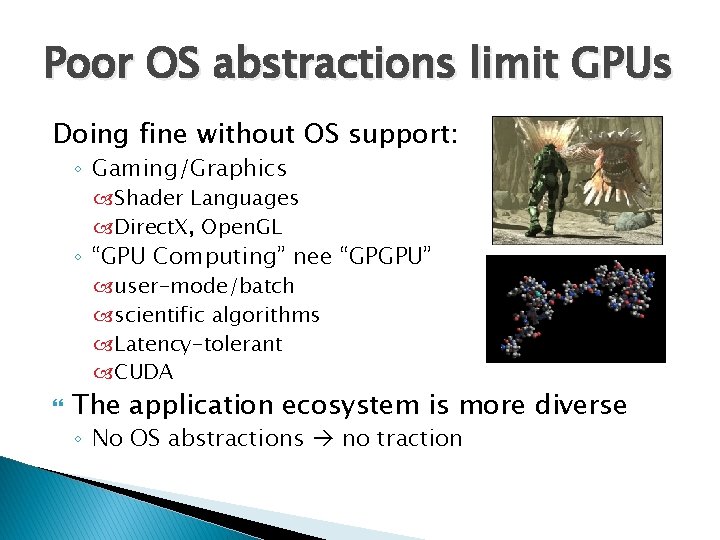

Poor OS abstractions limit GPUs Doing fine without OS support: ◦ Gaming/Graphics Shader Languages Direct. X, Open. GL ◦ “GPU Computing” nee “GPGPU” user-mode/batch scientific algorithms Latency-tolerant CUDA The application ecosystem is more diverse ◦ No OS abstractions no traction

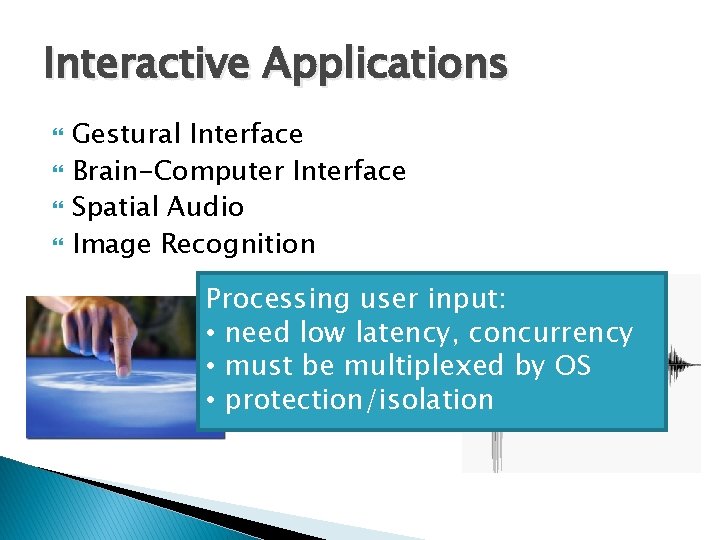

Interactive Applications Gestural Interface Brain-Computer Interface Spatial Audio Image Recognition Processing user input: • need low latency, concurrency • must be multiplexed by OS • protection/isolation

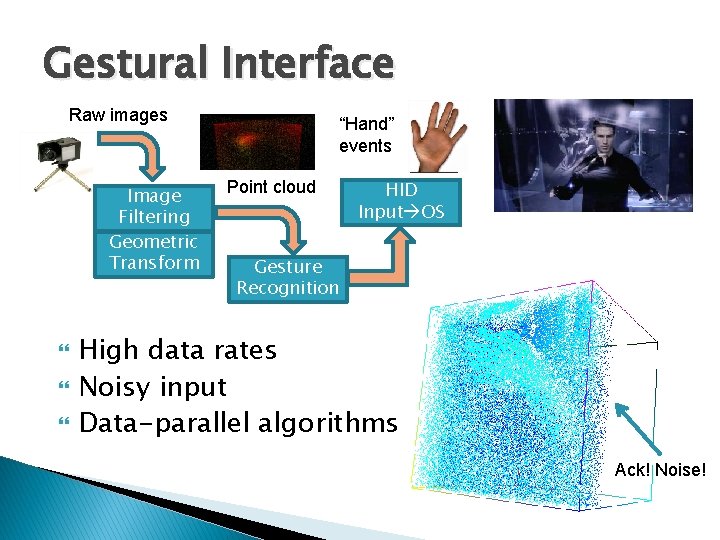

Gestural Interface Raw images Image Filtering Geometric Transform “Hand” events Point cloud HID Input OS Gesture Recognition High data rates Noisy input Data-parallel algorithms Ack! Noise!

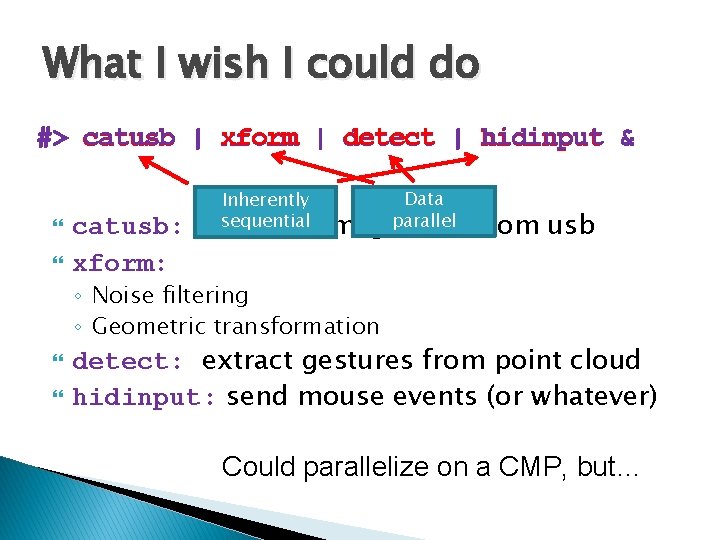

What I wish I could do #> catusb | xform | detect | hidinput & catusb: xform: Inherently sequential captures Data imageparallel data from usb ◦ Noise filtering ◦ Geometric transformation detect: extract gestures from point cloud hidinput: send mouse events (or whatever) Could parallelize on a CMP, but…

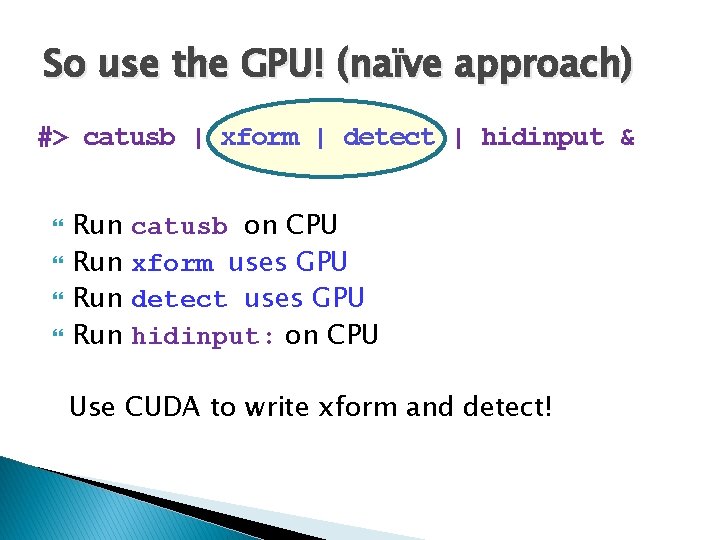

So use the GPU! (naïve approach) #> catusb | xform | detect | hidinput & Run Run catusb on CPU xform uses GPU detect uses GPU hidinput: on CPU Use CUDA to write xform and detect!

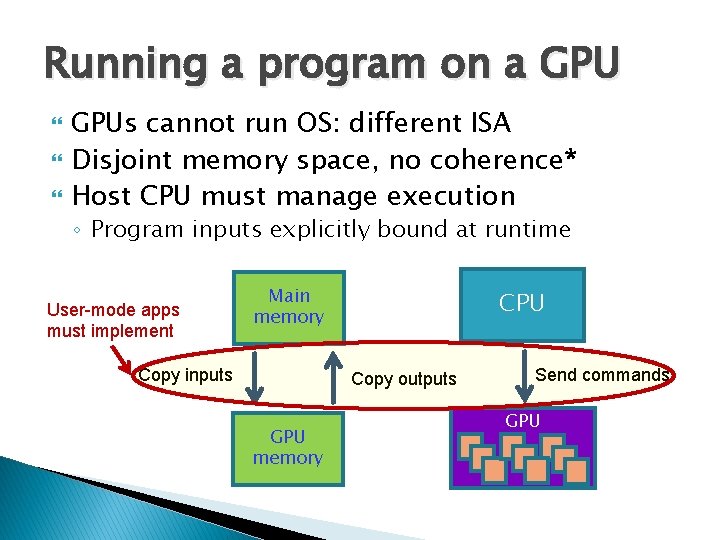

Running a program on a GPUs cannot run OS: different ISA Disjoint memory space, no coherence* Host CPU must manage execution ◦ Program inputs explicitly bound at runtime User-mode apps must implement Main memory Copy inputs CPU Copy outputs GPU memory Send commands GPU

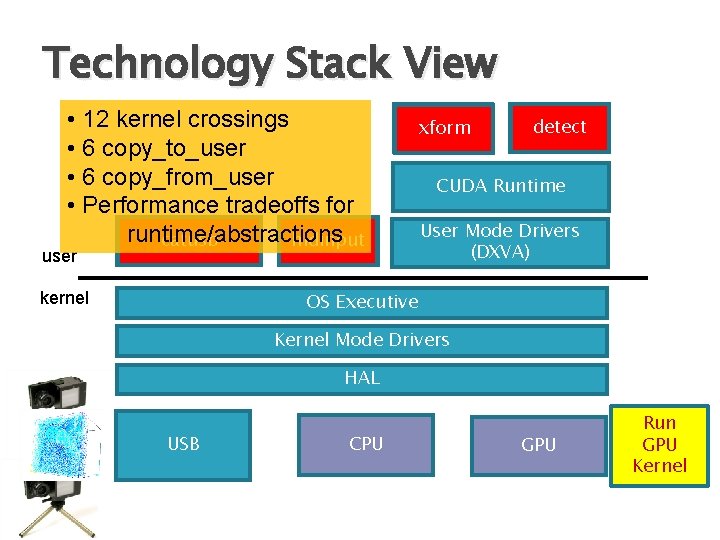

Technology Stack View • 12 kernel crossings • 6 copy_to_user • 6 copy_from_user • Performance tradeoffs for runtime/abstractions catusb hidinput xform user kernel detect CUDA Runtime User Mode Drivers (DXVA) OS Executive Kernel Mode Drivers HAL USB CPU GPU Run GPU Kernel

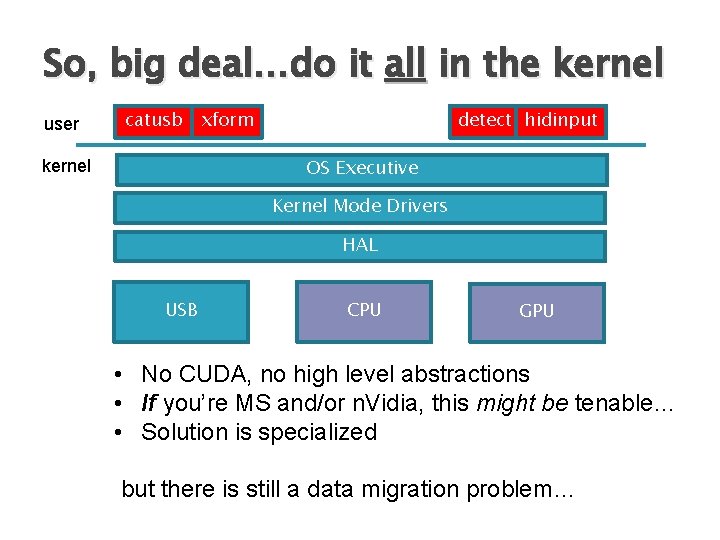

So, big deal…do it all in the kernel user catusb xform detect hidinput OS Executive kernel Kernel Mode Drivers HAL USB CPU GPU • No CUDA, no high level abstractions • If you’re MS and/or n. Vidia, this might be tenable… • Solution is specialized but there is still a data migration problem…

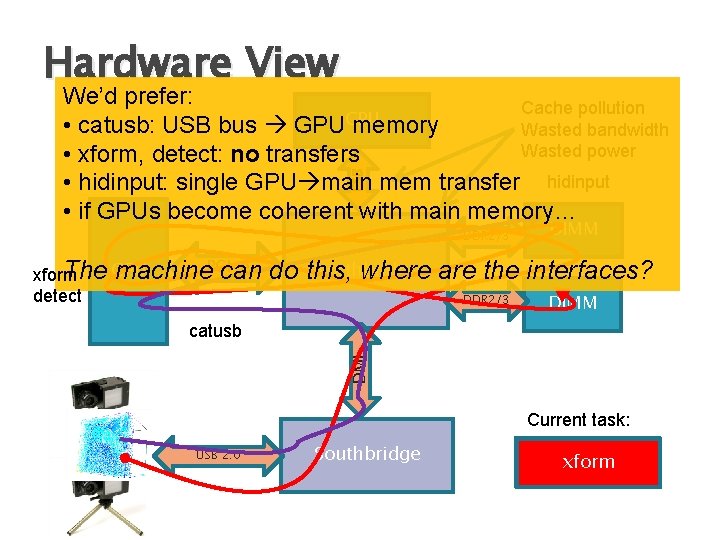

Hardware View FSB We’d prefer: Cache pollution CPU • catusb: USB bus GPU memory Wasted bandwidth Wasted power • xform, detect: no transfers • hidinput: single GPU main mem transfer hidinput • if GPUs become coherent with main memory… DDR 2/3 The GPU machine. PCI-e can xform detect DIMM Northbridge do this, where are the interfaces? DDR 2/3 DIMM DMI catusb Current task: USB 2. 0 Southbridge catusb xform

Outline Motivation Problems with lack of OS abstractions Can CUDA solve these problems? ◦ ◦ CUDA Streams Asynchrony GPUDirect™ OS guarantees New OS abstractions for GPUs Related Work Conclusion

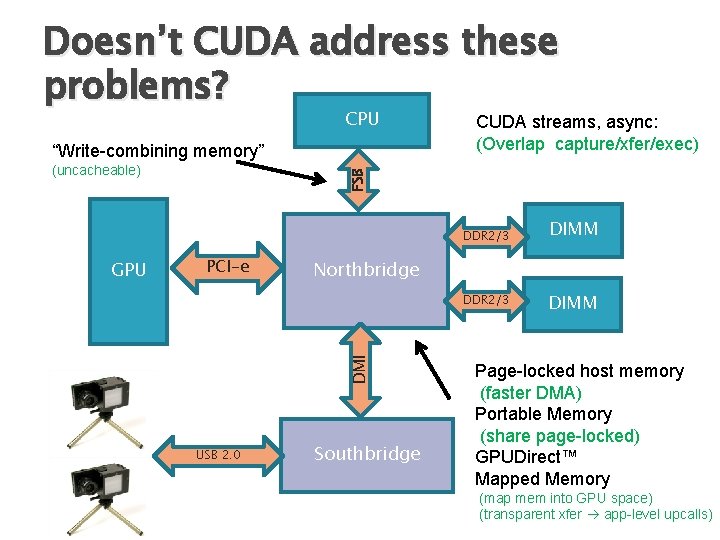

Doesn’t CUDA address these problems? CPU “Write-combining memory” FSB (uncacheable) CUDA streams, async: (Overlap capture/xfer/exec) DDR 2/3 PCI-e Northbridge DDR 2/3 DMI GPU USB 2. 0 DIMM Southbridge DIMM Page-locked host memory (faster DMA) Portable Memory (share page-locked) GPUDirect™ Mapped Memory (map mem into GPU space) (transparent xfer app-level upcalls)

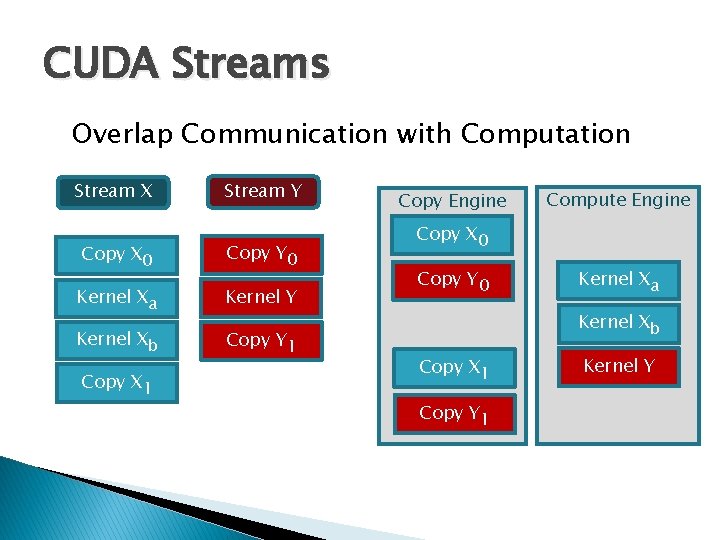

CUDA Streams Overlap Communication with Computation Stream X Stream Y Copy X 0 Copy Y 0 Kernel Xa Kernel Y Kernel Xb Copy Y 1 Copy X 1 Copy Engine Compute Engine Copy X 0 Copy Y 0 Kernel Xa Kernel Xb Copy X 1 Copy Y 1 Kernel Y

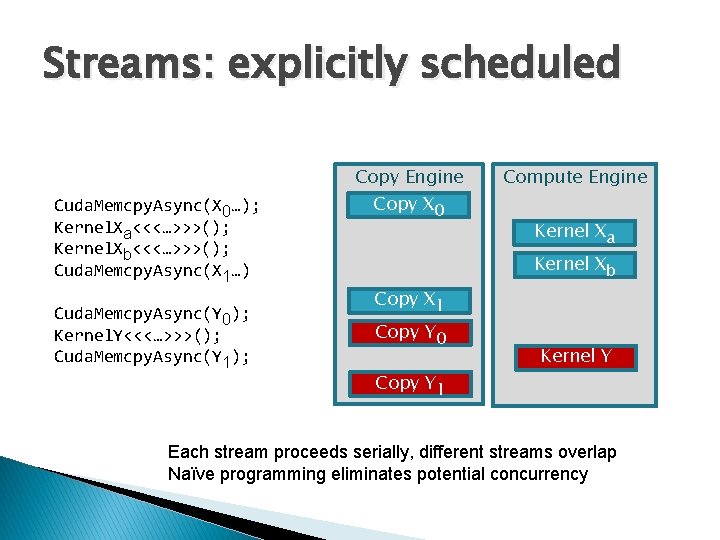

Streams: explicitly scheduled Cuda. Memcpy. Async(X 0…); Kernel. Xa<<<…>>>(); Kernel. Xb<<<…>>>(); Cuda. Memcpy. Async(X 1…) Cuda. Memcpy. Async(Y 0); Kernel. Y<<<…>>>(); Cuda. Memcpy. Async(Y 1); Copy Engine Copy X 0 Copy X 1 Copy Y 0 Copy Y 1 Compute Engine Kernel Xa Kernel Xb Kernel Y Each stream proceeds serially, different streams overlap Naïve programming eliminates potential concurrency

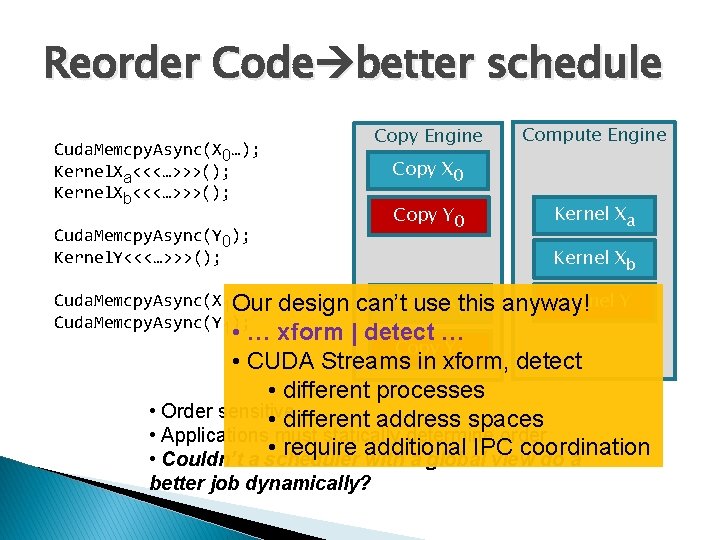

Reorder Code better schedule Cuda. Memcpy. Async(X 0…); Kernel. Xa<<<…>>>(); Kernel. Xb<<<…>>>(); Cuda. Memcpy. Async(Y 0); Kernel. Y<<<…>>>(); Copy Engine Compute Engine Copy X 0 Copy Y 0 Kernel Xa Kernel Xb Kernel Y design can’t. Copy use. X 1 this anyway! • … xform | detect … Copy Y 1 • CUDA Streams in xform, detect • different processes • Order sensitive • different address spaces • Applications must statically determine order • require additional IPC coordination • Couldn’t a scheduler with a global view do a Cuda. Memcpy. Async(X 1…) Our Cuda. Memcpy. Async(Y 1); better job dynamically?

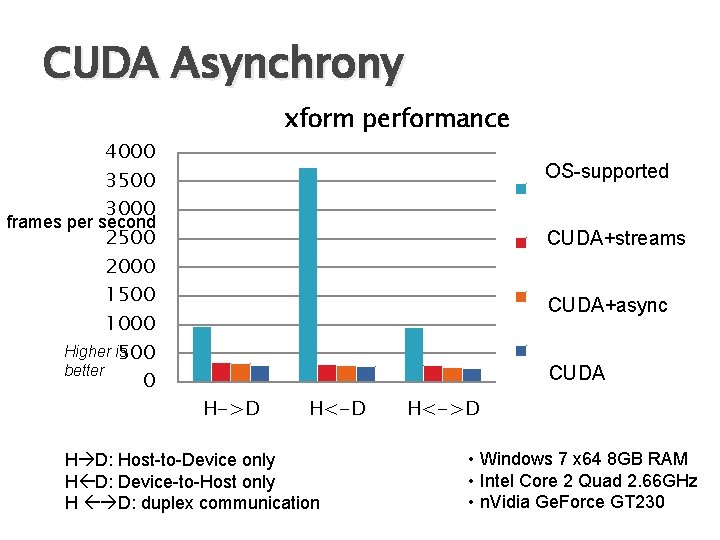

CUDA Asynchrony 4000 3500 3000 frames per second 2500 2000 1500 1000 Higher is 500 better 0 xform performance OS-supported ptask-analogue CUDA+streams CUDA-asyncping-pong CUDA-async CUDA+async CUDA H->D H<-D H D: Host-to-Device only H D: Device-to-Host only H D: duplex communication H<->D • Windows 7 x 64 8 GB RAM • Intel Core 2 Quad 2. 66 GHz • n. Vidia Ge. Force GT 230

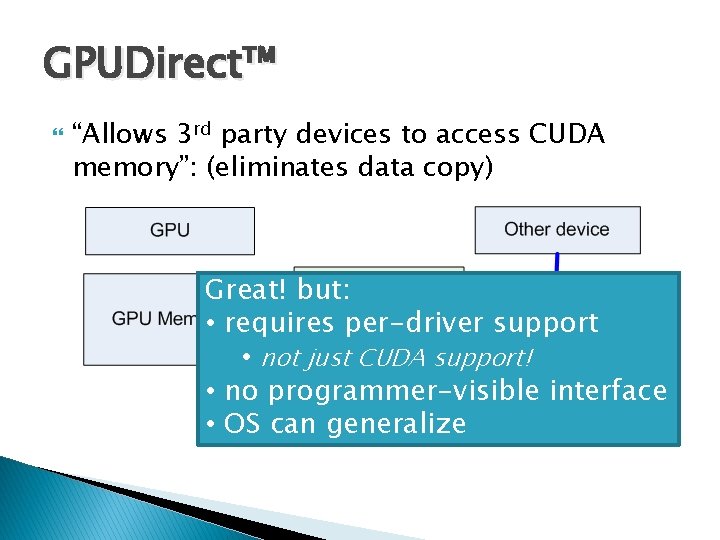

GPUDirect™ “Allows 3 rd party devices to access CUDA memory”: (eliminates data copy) Great! but: • requires per-driver support • not just CUDA support! • no programmer-visible interface • OS can generalize

The Elephant in the Room Traditional OS guarantees: Fairness Isolation No user-space runtime can provide these! Can support… Cannot guarantee

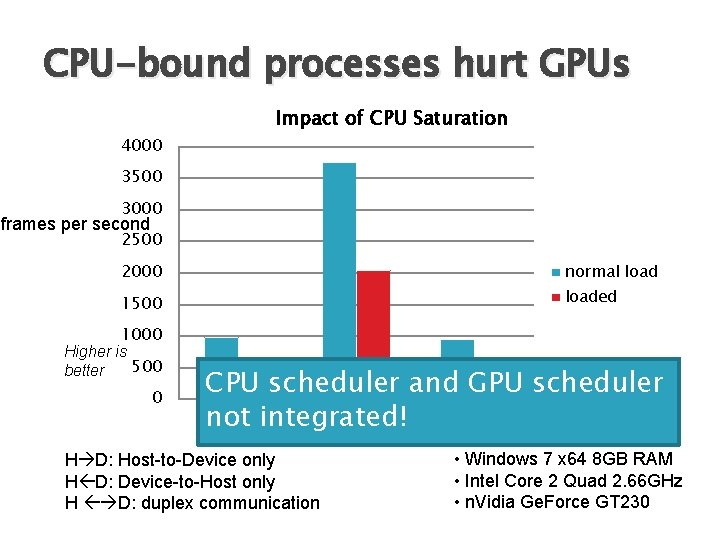

CPU-bound processes hurt GPUs Impact of CPU Saturation 4000 3500 3000 frames per second 2500 2000 normal load 1500 loaded 1000 Higher is 500 better 0 CPU scheduler and GPU scheduler H->Dintegrated! H<-D H<->D not H D: Host-to-Device only H D: Device-to-Host only H D: duplex communication • Windows 7 x 64 8 GB RAM • Intel Core 2 Quad 2. 66 GHz • n. Vidia Ge. Force GT 230

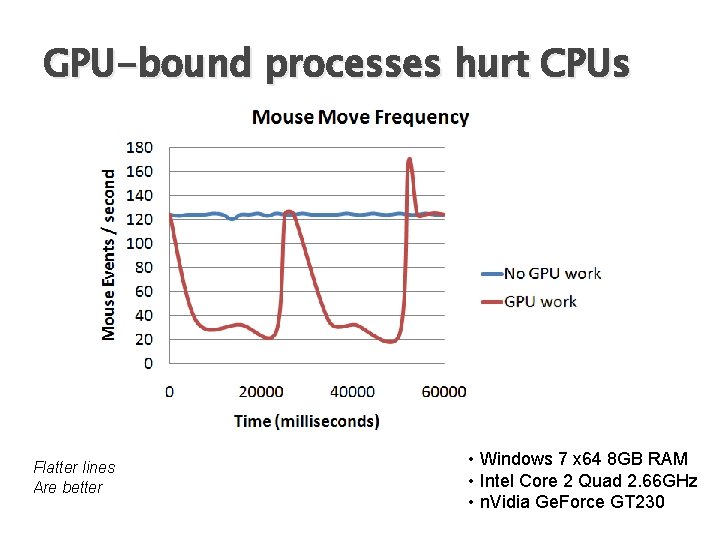

GPU-bound processes hurt CPUs Flatter lines Are better • Windows 7 x 64 8 GB RAM • Intel Core 2 Quad 2. 66 GHz • n. Vidia Ge. Force GT 230

Meaningful “GPU Computing” implies GPUs should be managed like CPUs Process API analogues IPC API analogues Scheduler hint analogues Must integrate with existing interfaces ◦ CUDA/DXGI/Direct. X ◦ DRI/DRM/Open. GL

Outline Motivation Problems with lack of OS abstractions Can CUDA solve these problems? New OS abstractions for GPUs Related Work Conclusion

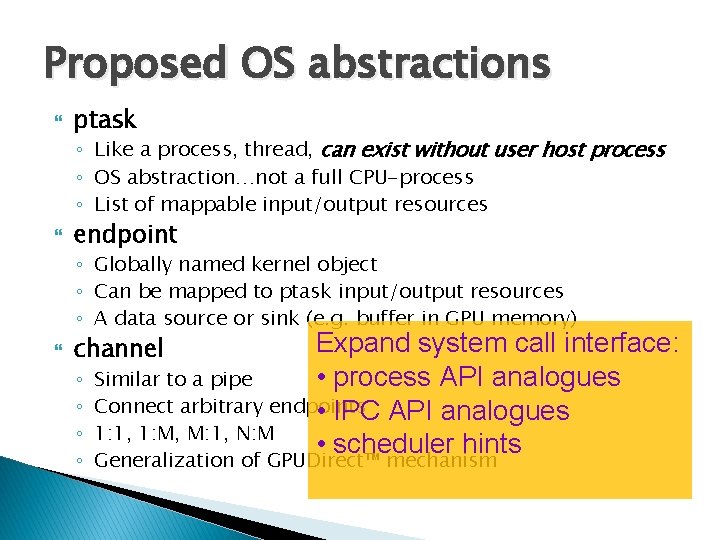

Proposed OS abstractions ptask ◦ Like a process, thread, can exist without user host process ◦ OS abstraction…not a full CPU-process ◦ List of mappable input/output resources endpoint ◦ Globally named kernel object ◦ Can be mapped to ptask input/output resources ◦ A data source or sink (e. g. buffer in GPU memory) Expand system call interface: Similar to a pipe • process API analogues Connect arbitrary endpoints • IPC API analogues 1: 1, 1: M, M: 1, N: M • scheduler hints channel ◦ ◦ Generalization of GPUDirect™ mechanism

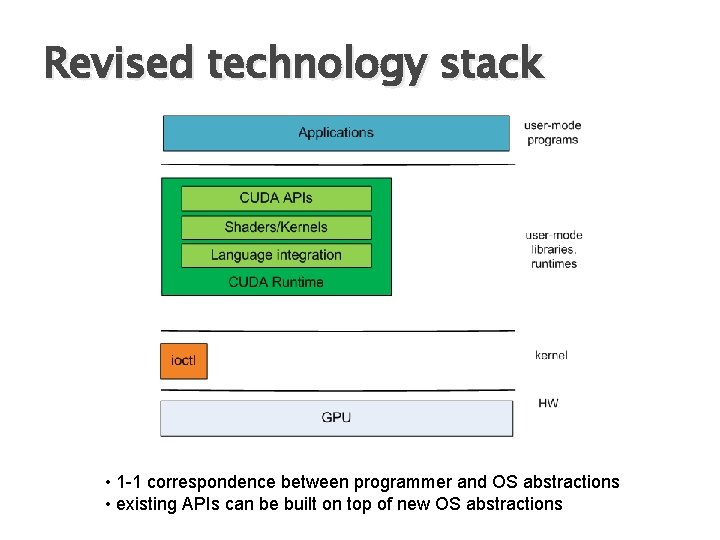

Revised technology stack • 1 -1 correspondence between programmer and OS abstractions • existing APIs can be built on top of new OS abstractions

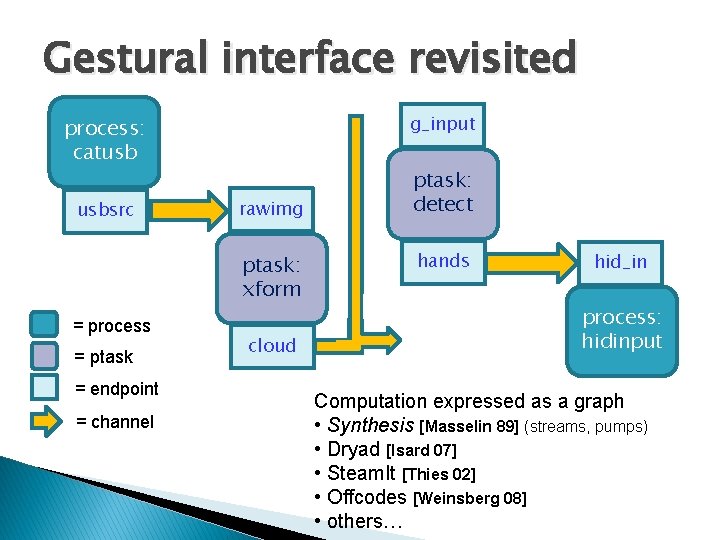

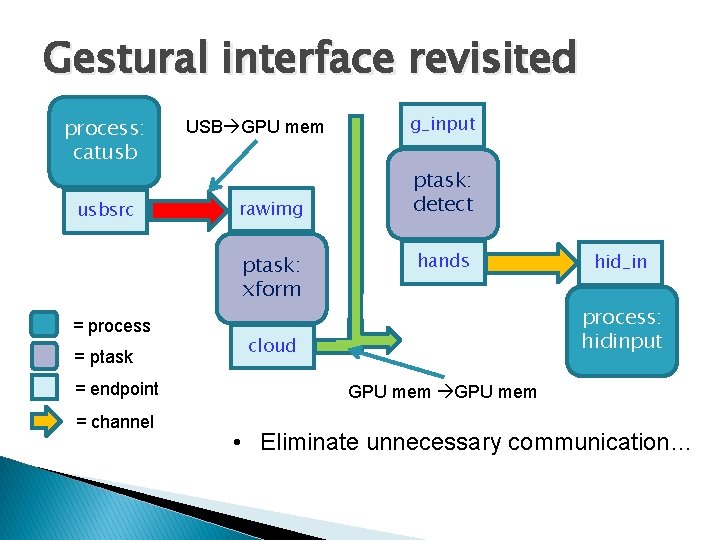

Gestural interface revisited g_input process: catusb usbsrc rawimg ptask: xform = process = ptask = endpoint = channel cloud ptask: detect hands hid_in process: hidinput Computation expressed as a graph • Synthesis [Masselin 89] (streams, pumps) • Dryad [Isard 07] • Steam. It [Thies 02] • Offcodes [Weinsberg 08] • others…

Gestural interface revisited process: catusb usbsrc USB GPU mem rawimg ptask: xform = process = ptask = endpoint = channel g_input ptask: detect hands hid_in process: hidinput cloud GPU mem • Eliminate unnecessary communication…

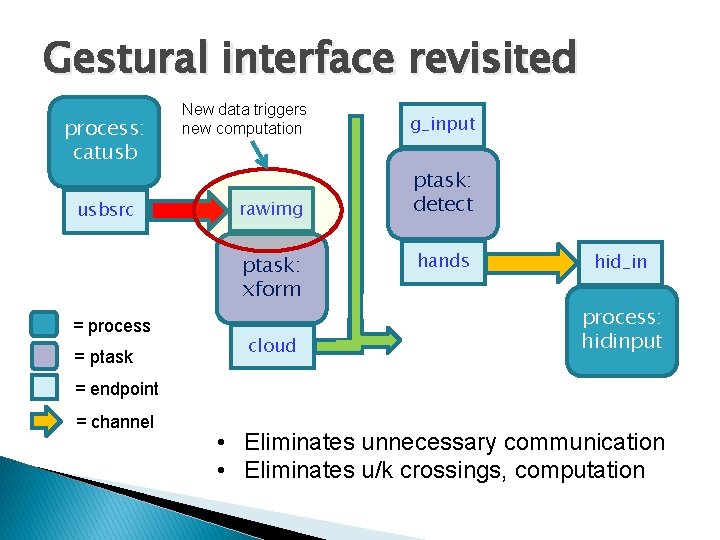

Gestural interface revisited process: catusb usbsrc New data triggers new computation g_input rawimg ptask: detect ptask: xform = process = ptask cloud hands hid_in process: hidinput = endpoint = channel • Eliminates unnecessary communication • Eliminates u/k crossings, computation

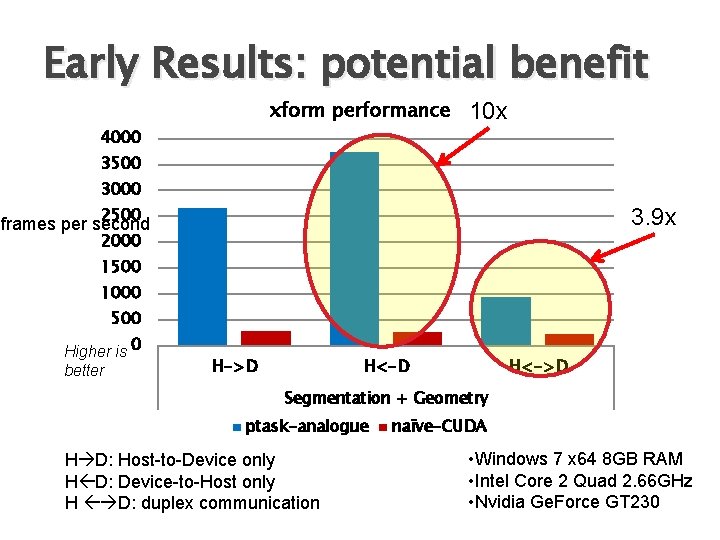

Early Results: potential benefit xform performance 10 x 4000 3500 3000 2500 3. 9 x frames per second 2000 1500 1000 500 Higher is 0 better H->D H<->D Segmentation + Geometry ptask-analogue H D: Host-to-Device only H D: Device-to-Host only H D: duplex communication naïve-CUDA • Windows 7 x 64 8 GB RAM • Intel Core 2 Quad 2. 66 GHz • Nvidia Ge. Force GT 230

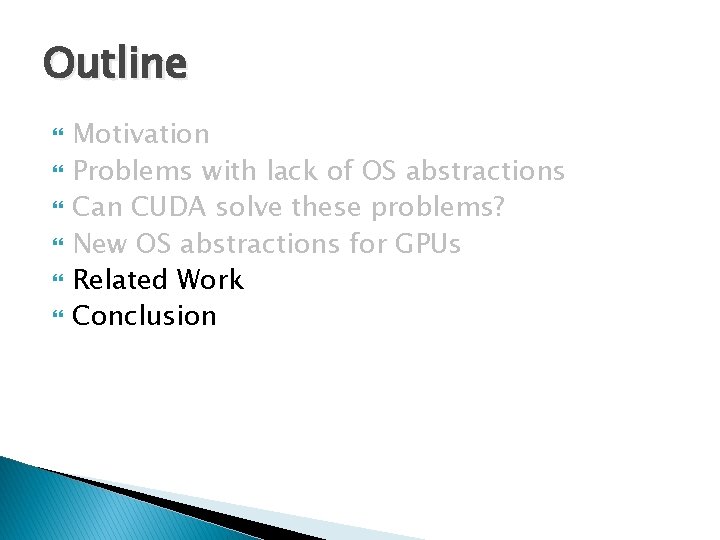

Outline Motivation Problems with lack of OS abstractions Can CUDA solve these problems? New OS abstractions for GPUs Related Work Conclusion

![Related Work OS support for Heterogeneous arch: ◦ Helios [Nightingale 09] ◦ Barrel. Fish Related Work OS support for Heterogeneous arch: ◦ Helios [Nightingale 09] ◦ Barrel. Fish](http://slidetodoc.com/presentation_image_h2/7b62549d62ad2d1c392ec9e0e50ecc7b/image-33.jpg)

Related Work OS support for Heterogeneous arch: ◦ Helios [Nightingale 09] ◦ Barrel. Fish [Baumann 09] ◦ Offcodes [Weinsberg 08] Graph-based programming models ◦ ◦ ◦ Synthesis [Masselin 89] Monsoon/Id [Arvind] Dryad [Isard 07] Stream. It [Thies 02] Direct. Show TCP Offload [Currid 04] GPU Computing ◦ CUDA, Open. CL

Conclusions CUDA: programming interface is right ◦ ◦ but OS must get involved Current interfaces waste data movement Current interfaces inhibit modularity/reuse Cannot guarantee fairness, isolation OS-level abstractions are required Questions?

- Slides: 34