Operant Conditioning

- Slides: 17

Operant Conditioning
The major theorists for the development of operant conditioning are:
• Edward Thorndike
• John Watson
• B. F. Skinner

Operant Conditioning
• Operant conditioning investigates the influence of consequences on subsequent behavior, as well as the learning of voluntary responses.
• It is often referred to as the ABCs of behavior:
  – A: the Antecedent, or what comes before the behavior.
  – B: the Behavior itself.
  – C: the Consequence of the behavior.

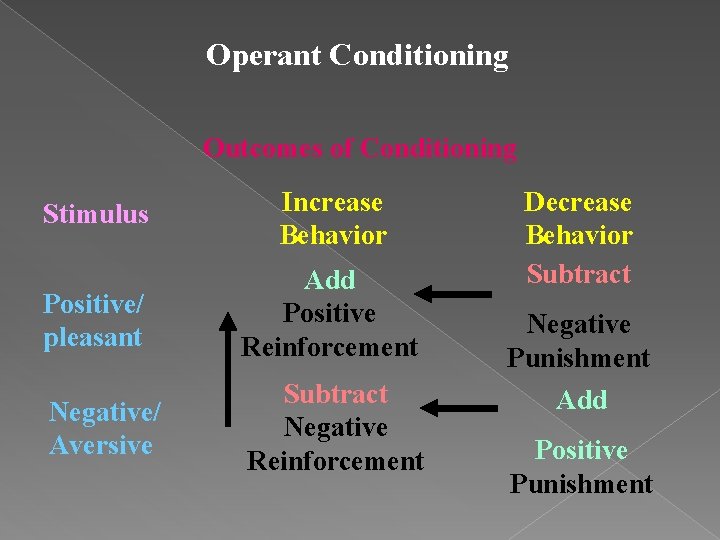

Operant Conditioning
• There are two types of consequences:
  – positive (sometimes called pleasant)
  – negative (sometimes called aversive or unpleasant)

Operant Conditioning
• Two actions can be taken with these stimuli:
  – they can be ADDED to the learner's environment.
  – they can be SUBTRACTED from the learner's environment.
• If adding or subtracting the stimulus changes the probability that the response will occur again, the stimulus is considered a CONSEQUENCE.
• Otherwise the stimulus is considered a NEUTRAL stimulus.

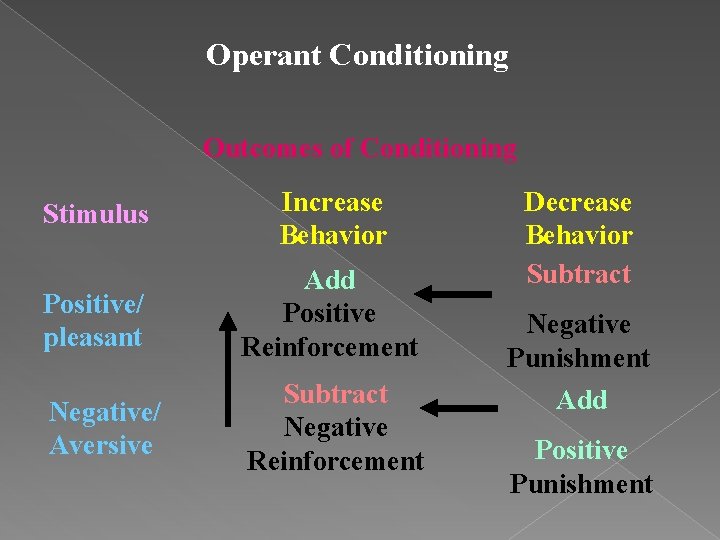

Operant Conditioning
• There are four major techniques or methods used in operant conditioning.
• They result from combining:
  – the two major purposes of operant conditioning (increasing or decreasing the probability that a specific behavior will occur in the future),
  – the types of stimuli used (positive/pleasant or negative/aversive), and
  – the action taken (adding or removing the stimulus).

Operant Conditioning
Outcomes of Conditioning

| Stimulus | Increase Behavior | Decrease Behavior |
|---|---|---|
| Positive/pleasant | Add: Positive Reinforcement | Subtract: Negative Punishment |
| Negative/aversive | Subtract: Negative Reinforcement | Add: Positive Punishment |
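The grid above can also be read as a lookup from stimulus type and action to technique. The following is a minimal Python sketch of that reading; the names `TECHNIQUES` and `technique_for` are illustrative assumptions and do not come from the slides.

```python
# Lookup mirroring the 2x2 grid:
# (stimulus type, action taken) -> (technique, effect on behavior).
# The dictionary and function names are hypothetical, chosen for illustration.
TECHNIQUES = {
    ("positive", "add"): ("positive reinforcement", "increase"),
    ("negative", "subtract"): ("negative reinforcement", "increase"),
    ("positive", "subtract"): ("negative punishment", "decrease"),
    ("negative", "add"): ("positive punishment", "decrease"),
}


def technique_for(stimulus: str, action: str) -> tuple[str, str]:
    """Return (technique, effect) for a stimulus type and an action."""
    return TECHNIQUES[(stimulus.lower(), action.lower())]


# Removing an aversive stimulus is negative reinforcement.
print(technique_for("negative", "subtract"))
```

Keying the table by stimulus and action keeps the two decisions a teacher or trainer actually makes separate from the effect on behavior that follows from them.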

Schedules of Reinforcement
Continuous reinforcement simply means that the behavior is followed by a consequence each time it occurs.
• It is excellent for getting a new behavior started.
• Behavior stops quickly when reinforcement stops.
• It is the schedule of choice for punishment and response cost.

Schedules of consequences
Combining the basis for delivering a consequence (elapsed time or number of responses) with whether that requirement is fixed or variable yields four classes of intermittent schedules.
Fixed Interval
• The first correct response after a set amount of time has passed is reinforced (i.e., a consequence is delivered).
• The time period required is always the same.
• Example: Spelling test every Friday.

Schedules of consequences
Variable Interval
• The first correct response after a set amount of time has passed is reinforced (i.e., a consequence is delivered).
• After the reinforcement, a new time period (shorter or longer) is set, with the periods averaging a specific value across all trials.
• Example: Pop quiz.
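The two interval schedules can be sketched as a small simulation. This is only an illustration under stated assumptions: the function name `interval_schedule`, the sample response times, and the choice to restart the clock at each reinforcement are not from the slides, and the variable schedule simply draws each wait from a short list whose mean is 5.

```python
import random


def interval_schedule(response_times, next_interval):
    """Return the response times that are reinforced.

    next_interval() supplies the wait required before the next
    response can be reinforced (assumed to restart after each
    reinforcement).
    """
    reinforced = []
    available_at = next_interval()  # earliest time a response can be reinforced
    for t in sorted(response_times):
        if t >= available_at:
            reinforced.append(t)  # first response after the wait has elapsed
            available_at = t + next_interval()  # set the next waiting period
    return reinforced


responses = [1, 3, 5, 6, 8, 11, 12, 15]  # hypothetical response times

# Fixed interval: the wait is always 5 time units.
fixed = interval_schedule(responses, lambda: 5)
print(fixed)  # [5, 11]

# Variable interval: the wait changes after each reinforcement
# (3, 5, or 7 units, averaging 5 in the long run).
rng = random.Random(0)
variable = interval_schedule(responses, lambda: rng.choice([3, 5, 7]))
print(variable)
```

Responses made before the wait has elapsed earn nothing, which is why only the first response after each period appears in the output.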

Schedules of consequences
Fixed Ratio
• A reinforcer is given after a specified number of correct responses. This schedule is best for learning a new behavior.
• The number of correct responses required for reinforcement remains the same.
• Example: Ten math problems for homework.

Schedules of consequences
Variable Ratio
• A reinforcer is given after a set number of correct responses.
• After reinforcement, the number of correct responses necessary for the next reinforcement changes. This schedule is best for maintaining behavior.
• Example: A student raises his hand to be called on.
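The two ratio schedules differ only in how the required number of responses is chosen, which a short simulation can make concrete. This is a hedged sketch: `ratio_schedule`, the total of 30 responses, and the range of 1 to 9 for the variable requirement are all assumptions made for illustration.

```python
import random


def ratio_schedule(n_responses, next_requirement):
    """Return the 1-based positions of the correct responses that earn a reinforcer.

    next_requirement() supplies how many correct responses are needed
    for the next reinforcer.
    """
    reinforced = []
    needed = next_requirement()
    count = 0  # correct responses since the last reinforcer
    for i in range(1, n_responses + 1):
        count += 1
        if count == needed:
            reinforced.append(i)
            count = 0
            needed = next_requirement()  # requirement for the next reinforcer
    return reinforced


# Fixed ratio: every 10th correct response is reinforced.
fixed = ratio_schedule(30, lambda: 10)
print(fixed)  # [10, 20, 30]

# Variable ratio: the requirement changes after each reinforcer
# (1 to 9 responses, averaging 5 in the long run).
rng = random.Random(0)
variable = ratio_schedule(30, lambda: rng.randint(1, 9))
print(variable)
```

Under the variable schedule the learner cannot predict which response will pay off, which fits the slide's point that this schedule is best for maintaining behavior.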

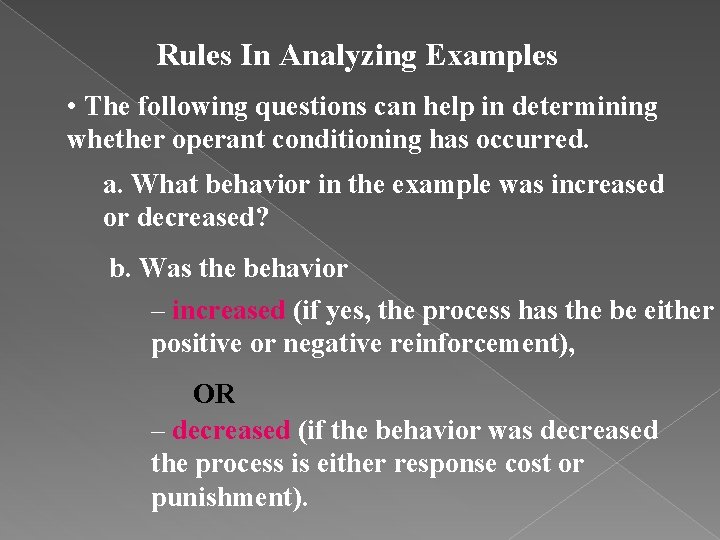

Rules In Analyzing Examples
• The following questions can help in determining whether operant conditioning has occurred.
  a. What behavior in the example was increased or decreased?
  b. Was the behavior
    – increased (if so, the process has to be either positive or negative reinforcement), OR
    – decreased (if so, the process is either response cost, also called negative punishment, or punishment, also called positive punishment)?

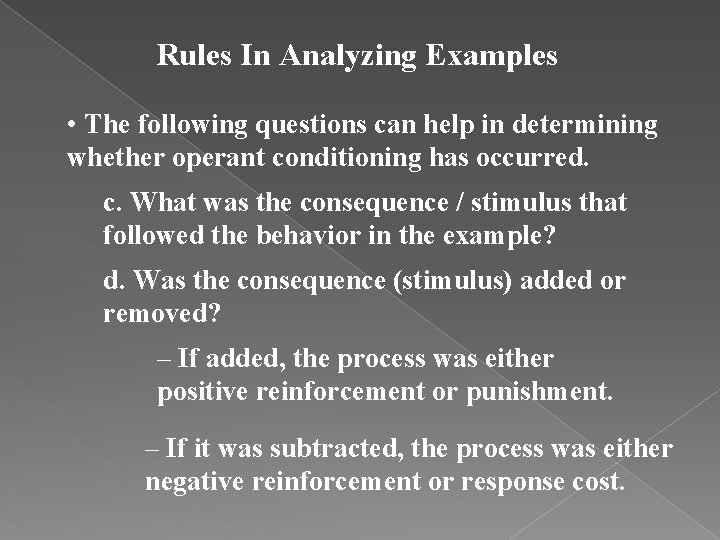

Rules In Analyzing Examples (continued)
  c. What was the consequence / stimulus that followed the behavior in the example?
  d. Was the consequence (stimulus) added or removed?
    – If it was added, the process was either positive reinforcement or punishment.
    – If it was subtracted, the process was either negative reinforcement or response cost.

Analyzing An Example
Billy likes to camp out in the backyard. He camped out every Friday during the month of June. The last time he camped out, some older kids snuck up to his tent while he was sleeping and threw a bucket of cold water on him. Billy has not camped out for three weeks.
a. What behavior was changed? Camping out.

Analyzing An Example
b. Was the behavior strengthened or weakened? Weakened (the behavior decreased). This eliminates positive and negative reinforcement.

Analyzing An Example
c. What was the consequence? Having water thrown on him.
d. Was the consequence added or subtracted? Added.

Analyzing An Example
Since a consequence was ADDED and the behavior was WEAKENED (REDUCED), the process was PUNISHMENT.
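The analysis of Billy's case comes down to two answers: whether the behavior increased or decreased, and whether the consequence was added or subtracted. The following Python sketch encodes that decision; the function name `identify_process` and its string arguments are illustrative assumptions, not part of the original material.

```python
def identify_process(behavior_change: str, consequence_action: str) -> str:
    """Name the operant process from the answers to questions (b) and (d).

    behavior_change: "increased" or "decreased"
    consequence_action: "added" or "subtracted"
    """
    processes = {
        ("increased", "added"): "positive reinforcement",
        ("increased", "subtracted"): "negative reinforcement",
        ("decreased", "added"): "punishment",
        ("decreased", "subtracted"): "response cost",
    }
    return processes[(behavior_change, consequence_action)]


# Billy: camping out decreased after cold water was added.
print(identify_process("decreased", "added"))  # punishment
```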