
Operant Conditioning DR DINESH RAMOO

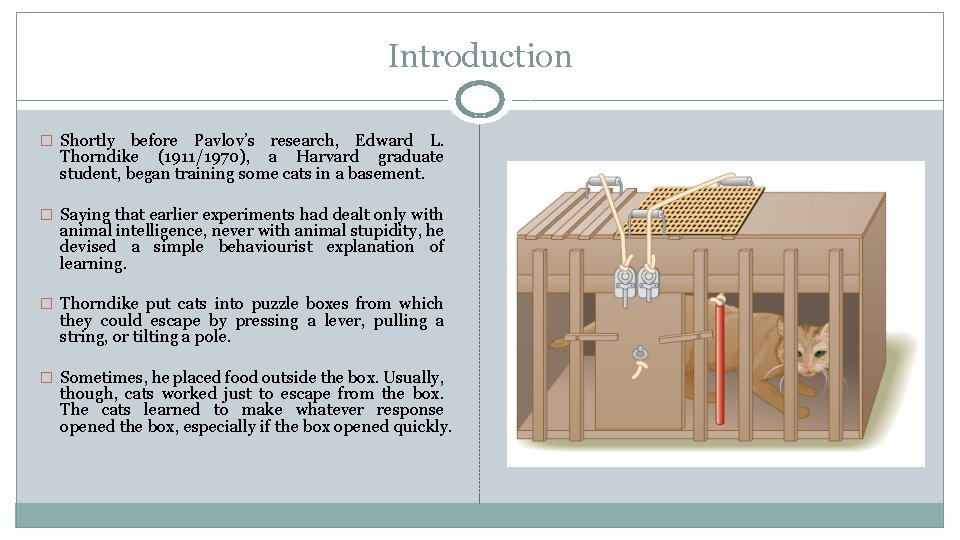

Introduction

- Shortly before Pavlov’s research, Edward L. Thorndike (1911/1970), a Harvard graduate student, began training some cats in a basement.
- Saying that earlier experiments had dealt only with animal intelligence, never with animal stupidity, he devised a simple behaviourist explanation of learning.
- Thorndike put cats into puzzle boxes from which they could escape by pressing a lever, pulling a string, or tilting a pole.
- Sometimes, he placed food outside the box. Usually, though, cats worked just to escape from the box. The cats learned to make whatever response opened the box, especially if the box opened quickly.

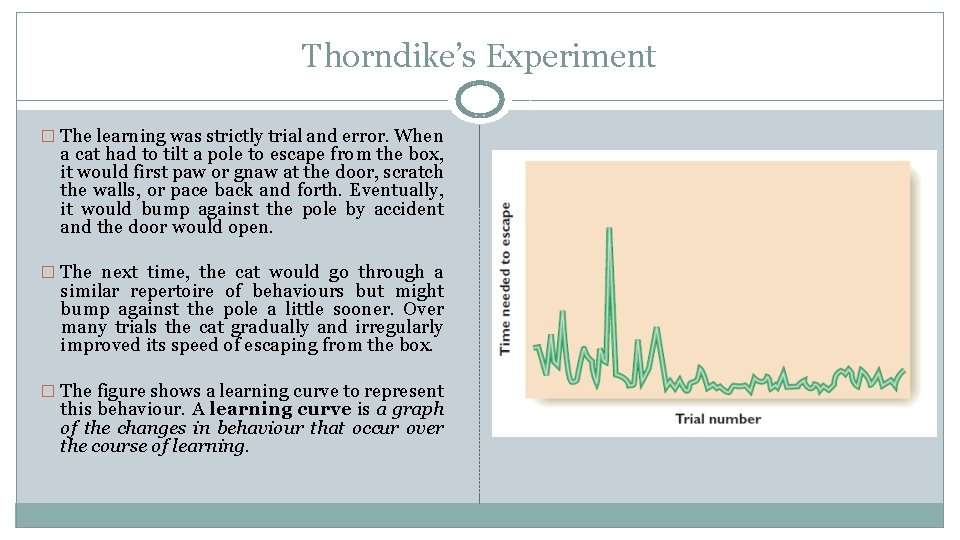

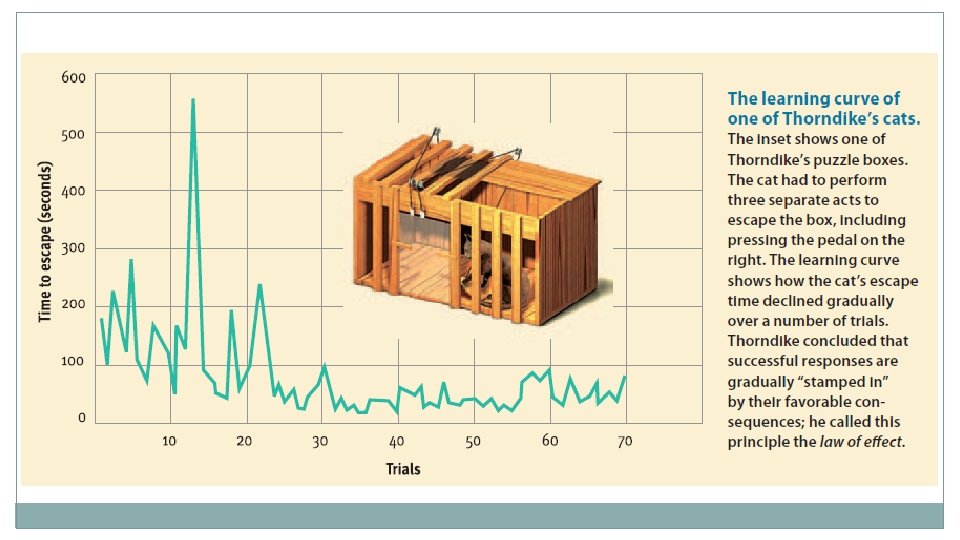

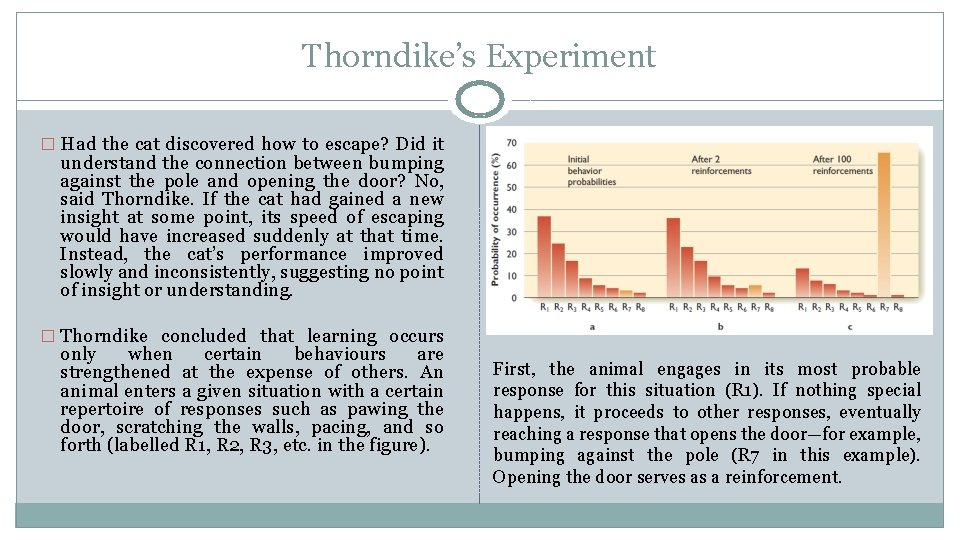

Thorndike’s Experiment

- The learning was strictly trial and error. When a cat had to tilt a pole to escape from the box, it would first paw or gnaw at the door, scratch the walls, or pace back and forth. Eventually, it would bump against the pole by accident and the door would open.
- The next time, the cat would go through a similar repertoire of behaviours but might bump against the pole a little sooner. Over many trials the cat gradually and irregularly improved its speed of escaping from the box.
- The figure shows a learning curve to represent this behaviour. A learning curve is a graph of the changes in behaviour that occur over the course of learning.

Thorndike’s Experiment

- Had the cat discovered how to escape? Did it understand the connection between bumping against the pole and opening the door? No, said Thorndike. If the cat had gained a new insight at some point, its speed of escaping would have increased suddenly at that time. Instead, the cat’s performance improved slowly and inconsistently, suggesting no point of insight or understanding.
- Thorndike concluded that learning occurs only when certain behaviours are strengthened at the expense of others. An animal enters a given situation with a certain repertoire of responses such as pawing the door, scratching the walls, pacing, and so forth (labelled R1, R2, R3, etc. in the figure). First, the animal engages in its most probable response for this situation (R1). If nothing special happens, it proceeds to other responses, eventually reaching a response that opens the door, for example, bumping against the pole (R7 in this example). Opening the door serves as a reinforcement.

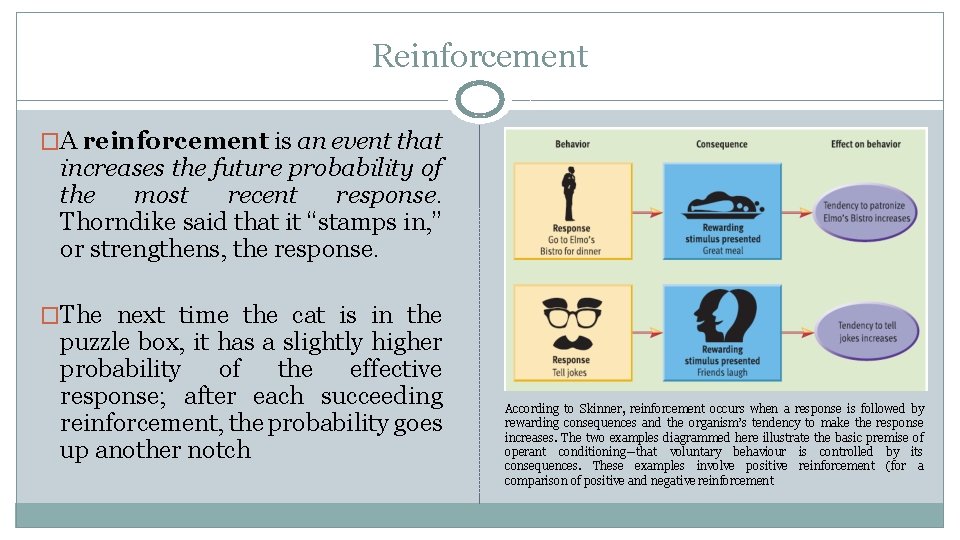

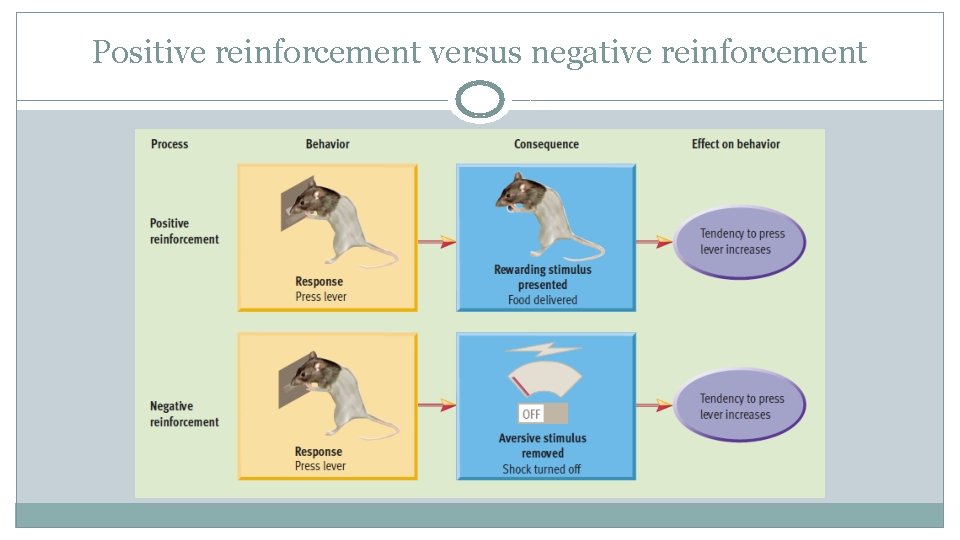

Reinforcement

- A reinforcement is an event that increases the future probability of the most recent response. Thorndike said that it “stamps in,” or strengthens, the response.
- The next time the cat is in the puzzle box, it has a slightly higher probability of the effective response; after each succeeding reinforcement, the probability goes up another notch.

According to Skinner, reinforcement occurs when a response is followed by rewarding consequences and the organism’s tendency to make the response increases. The two examples diagrammed here illustrate the basic premise of operant conditioning: that voluntary behaviour is controlled by its consequences. These examples involve positive reinforcement (for a comparison of positive and negative reinforcement

Law of Effect

- Thorndike summarized his views in the law of effect (Thorndike, 1911/1970, p. 244): “Of several responses made to the same situation, those which are accompanied or closely followed by satisfaction to the animal will, other things being equal, be more firmly connected with the situation, so that, when it recurs, they will be more likely to recur.”
- Hence, the animal becomes more likely to repeat the responses that led to favourable consequences even if it does not understand why.
- In fact it doesn’t need to “understand” anything at all. A fairly simple machine could produce responses at random and then repeat the ones that led to reinforcement.
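
The "fairly simple machine" idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Thorndike's own model: the function name `train_law_of_effect`, the seven equally likely starting responses, and the fixed `boost` added after each reinforcement are all hypothetical choices.

```python
import random

def train_law_of_effect(n_responses=7, effective=6, trials=200, boost=1.0, seed=0):
    """Simulate a machine that responds at random and strengthens reinforced responses.

    Returns (weights, attempts_per_trial). All parameters are illustrative assumptions.
    """
    rng = random.Random(seed)
    weights = [1.0] * n_responses          # assumed: every response starts equally strong
    attempts_per_trial = []
    for _ in range(trials):
        attempts = 0
        while True:
            attempts += 1
            # Pick a response with probability proportional to its current strength.
            response = rng.choices(range(n_responses), weights=weights)[0]
            if response == effective:      # the door opens: reinforcement
                weights[response] += boost # "stamps in" the effective response
                break
        attempts_per_trial.append(attempts)
    return weights, attempts_per_trial

weights, attempts = train_law_of_effect()
print("mean attempts, first 20 trials:", sum(attempts[:20]) / 20)
print("mean attempts, last 20 trials: ", sum(attempts[-20:]) / 20)
```

The list `attempts_per_trial` plays the role of a learning curve: no step in the loop involves insight, yet the number of attempts needed to escape tends to fall as the effective response gains strength.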

Operant Conditioning

- Thorndike revolutionized the study of animal learning, substituting experimentation for the collection of anecdotes.
- He also demonstrated the possibility of simple explanations for apparently complex behaviours (Dewsbury, 1998).
- On the negative side, his example of studying animals in contrived laboratory situations led researchers to ignore many interesting phenomena about animals’ natural way of life (Galef, 1998).
- The kind of learning that Thorndike studied is known as operant conditioning (because the subject operates on the environment to produce an outcome), or instrumental conditioning (because the subject’s behaviour is instrumental in producing the outcome).

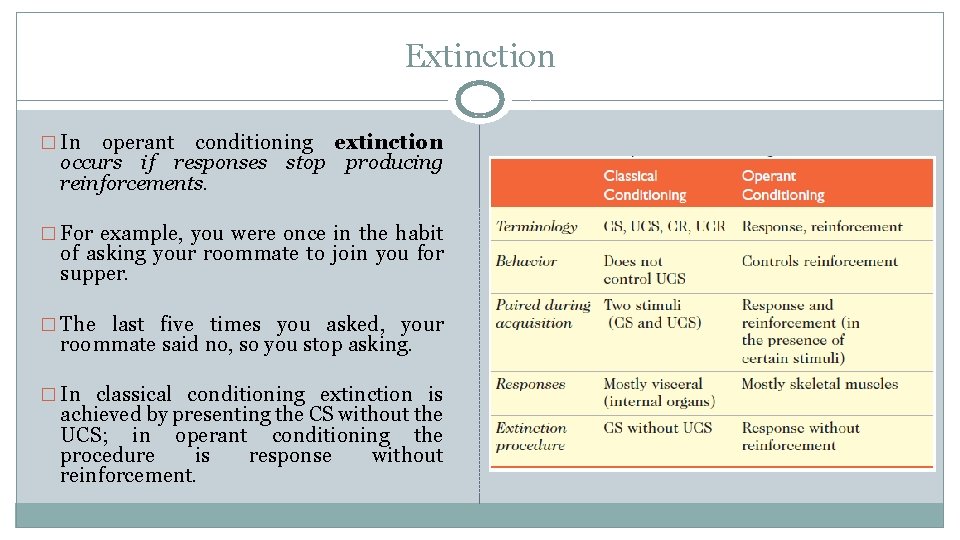

Classical and Operant Conditioning

- Operant or instrumental conditioning is the process of changing behaviour by providing a reinforcement after a response.
- The defining difference between operant conditioning and classical conditioning is the procedure: In operant conditioning the subject’s behaviour determines an outcome and the outcome affects future behaviour. In classical conditioning the subject’s behaviour has no effect on the outcome (the presentation of either the CS or the UCS).
- For example, in classical conditioning the experimenter (or the world) presents two stimuli at particular times, regardless of what the individual does or doesn’t do.
- In operant conditioning the individual has to make some response before it receives reinforcement.

Classical and Operant Conditioning

- In general the two kinds of conditioning also differ in the behaviours they affect.
- Classical conditioning applies primarily to visceral responses (i.e., responses of the internal organs), such as salivation and digestion, whereas operant conditioning applies primarily to skeletal responses (i.e., movements of leg muscles, arm muscles, etc.).
- However, this distinction sometimes breaks down. For example, if a tone consistently precedes an electric shock (a classical-conditioning procedure), the tone will make the animal freeze in position (a skeletal response) as well as increase its heart rate (a visceral response).

Reinforcement and Punishment

- What constitutes reinforcement? From a practical standpoint, a reinforcer is an event that follows a response and increases the later probability or frequency of that response.
- However, from a theoretical standpoint, we would like to have some way of predicting what would be a reinforcer and what would not. We might guess that reinforcers are biologically useful to the individual, but in fact many are not.
- For example, saccharin, a sweet but biologically useless chemical, can be a reinforcer.
- For many people alcohol and tobacco are stronger reinforcers than vitamin-rich vegetables. So biological usefulness doesn’t define reinforcement.
- In his law of effect, Thorndike described reinforcers as events that brought “satisfaction to the animal.” That definition won’t work either. How could you know what brings a rat or a cat satisfaction? Furthermore, people will work hard for a pay check, a decent grade in a course, and other outcomes that often don’t produce evidence of pleasure (Berridge & Robinson, 1995).

Premack principle

- David Premack (1965) proposed a simple rule, now known as the Premack principle: The opportunity to engage in frequent behaviour (e.g., eating) will reinforce any less frequent behaviour (e.g., lever pressing).
- A great strength of this idea is its recognition that a reinforcer for one individual may not be for another.
- For example, if you love reading and hate watching television, someone could increase your television watching by reinforcing you with a new book for every 10 hours of television you watch.
- For someone else who loves television and seldom reads, the opposite procedure might work.
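
The rule can be written as a one-line comparison of baseline frequencies. The sketch below is illustrative only: the function name `premack_can_reinforce` and the hours-per-week figures for the two hypothetical people are assumptions, not data from Premack (1965).

```python
def premack_can_reinforce(baseline, reinforcer, target):
    """Premack principle: the more frequent behaviour can reinforce the less frequent one."""
    return baseline[reinforcer] > baseline[target]

# Hypothetical baseline hours per week for two people.
reader = {"reading": 10.0, "television": 1.0}
viewer = {"reading": 1.0, "television": 10.0}

# For the reader, access to books can reinforce television watching ...
print(premack_can_reinforce(reader, "reading", "television"))
# ... but for the viewer the opposite procedure is needed.
print(premack_can_reinforce(viewer, "television", "reading"))
```

The same function gives different answers for different individuals, which is the strength of the principle noted above.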

Disequilibrium principle

- The limitation of the Premack principle is that opportunities for uncommon behaviours can also be reinforcing. What matters is not just how often you usually perform various behaviours but whether you have recently performed them as much as usual.
- For example, in an average week, you probably spend little or no time clipping your toenails. Still, if you have not had a chance to clip them for a long time, an opportunity to do so would be reinforcing.
- According to the disequilibrium principle of reinforcement, each of us has a normal, or “equilibrium,” state in which we spend a certain amount of time on each of various activities. If you have had a limited opportunity to engage in one of your behaviours, you are in disequilibrium, and an opportunity to increase that behaviour, to get back to equilibrium, will be reinforcing (Farmer-Dougan, 1998; Timberlake & Farmer-Dougan, 1991).
- For example, suppose that when you can do whatever you choose, you spend 30% of your day sleeping, 10% eating, 12% exercising, 11% reading, 9% talking with friends, 3% grooming, 3% playing the piano, and so forth. If you have been unable to spend this much time on one of those activities, then the opportunity to engage in that activity will be reinforcing.
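
A rough way to express the principle in code is to compare recent activity with the equilibrium profile. The equilibrium percentages below come from the example above; the function name `reinforcing_activities` and the "recent" figures are hypothetical, chosen only to illustrate the comparison.

```python
def reinforcing_activities(equilibrium, recent):
    """Map each activity performed less than usual to its shortfall (percentage points)."""
    shortfalls = {}
    for activity, usual in equilibrium.items():
        actual = recent.get(activity, 0)
        if actual < usual:                 # below equilibrium: access should be reinforcing
            shortfalls[activity] = usual - actual
    return shortfalls

# Equilibrium percentages of the day, taken from the example in the slide.
equilibrium = {
    "sleeping": 30, "eating": 10, "exercising": 12, "reading": 11,
    "talking with friends": 9, "grooming": 3, "playing the piano": 3,
}
# Hypothetical recent day: less exercise than usual and no piano at all.
recent = {**equilibrium, "exercising": 8, "playing the piano": 0}

print(reinforcing_activities(equilibrium, recent))
```

Playing the piano is one of the least frequent activities in this profile, so the Premack principle alone would not pick it out as a reinforcer, yet it appears here because it has been restricted.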

Primary and Secondary Reinforcers

- Psychologists distinguish between primary reinforcers (or unconditioned reinforcers), which are reinforcing because of their own properties, and secondary reinforcers (or conditioned reinforcers), which became reinforcing because of previous experiences.
- Food and water are primary reinforcers.
- Money (a secondary reinforcer) becomes reinforcing because it can be exchanged for food or other primary reinforcers.
- A student learns that good grades will win the approval of parents and teachers; an employee learns that increased sales will win the approval of an employer.
- In these cases secondary means “learned.” It does not mean weak or unimportant. We spend most of our time working for secondary reinforcers.

Punishment

- In contrast to a reinforcer, which increases the probability of a response, a punishment decreases the probability of a response.
- A reinforcer can be either the presentation of something (e.g., food) or the removal of something (e.g., pain).
- A punishment can be either the presentation of something (e.g., pain) or the removal of something (e.g., food).

Effectiveness of Punishment

- Punishments are not always effective.
- For example, if the threat of punishment were always effective, the crime rate would be zero. B. F. Skinner (1938) tested punishment in a famous laboratory study.
- He first trained food-deprived rats to press a bar to get food and then stopped reinforcing their presses.
- For the first 10 minutes, some rats not only failed to get food but also had the bar slap their paws every time they pressed it.
- The punished rats temporarily suppressed their pressing, but in the long run, they pressed as many times as did the unpunished rats.
- Skinner concluded that punishment temporarily suppresses behaviour but produces no long-term effects.

Effectiveness of Punishment

- That conclusion, however, is an overstatement (Staddon, 1993). A better conclusion would have been that punishment does not greatly weaken a response when no other response is available.
- Skinner’s food-deprived rats had no other way to seek food.
- Similarly, if someone punished you for breathing, you would continue breathing, but not because you were a slow learner.
- A review of the literature found that physical punishment had one clear benefit, which was immediate compliance. That is, if you want your child to stop doing something at once, a quick spank or slap on the hand will work (Gershoff, 2002).

Effectiveness of Punishment

- Punishment produces compliance especially if it is quick and predictable.
- If you might get punished a long time from now, the effects are weak and variable.
- The threat of punishment for crime is often ineffective because the punishment is uncertain and long delayed.
- On the negative side, however, children who are spanked tend to be more aggressive than other children, more prone to antisocial and criminal behaviour in both adolescence and later adulthood, and less healthy mentally.
- On the average they have a worse relationship with their parents, and they are more likely than others later to become abusive toward their own children or spouse (Gershoff, 2002).

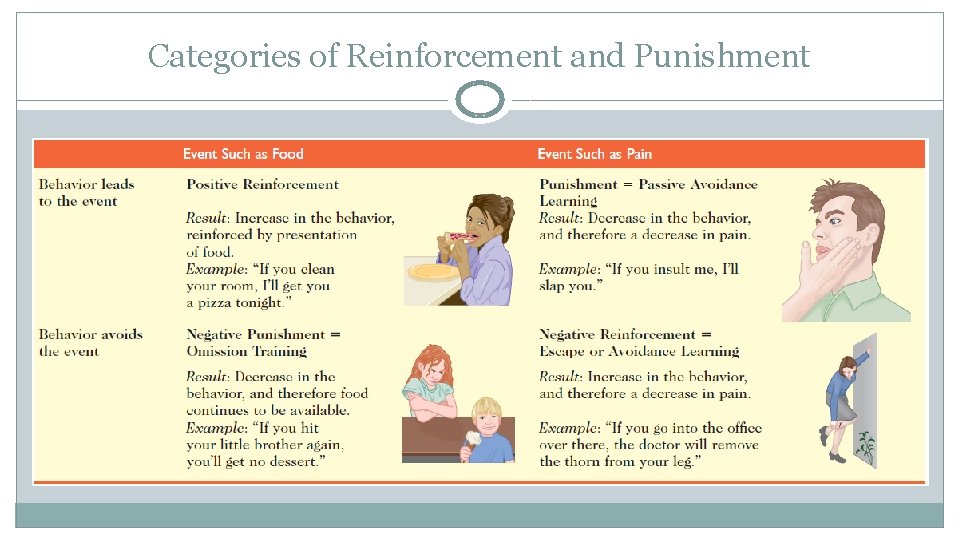

Categories of Reinforcement and Punishment

- As mentioned, a reinforcer can be either the onset of something like food or the removal of something like pain.
- Of course, what works as a reinforcer for one person at one time may not work for another person or at another time.
- A punishment also can be either the onset or offset of something.
- Psychologists use different terms to distinguish these possibilities.

Categories of Reinforcement and Punishment

Categories of Reinforcement and Punishment

- Note that the upper left and lower right of the table both show reinforcement.
- Either gaining food or preventing pain increases the behaviour.
- The items in the upper right and lower left are both punishment.
- Gaining pain or preventing food decreases the behaviour.
- Food and pain are, of course, just examples; many other events serve as reinforcers or punishers.

Positive reinforcement

- Positive reinforcement is the presentation of an event (e.g., food or money) that strengthens or increases the likelihood of a behaviour.
- Punishment occurs when a response is followed by an event such as pain.
- The frequency of the response then decreases.
- For example, you put your hand on a hot stove, burn yourself, and learn to stop doing that.
- Punishment is also called passive avoidance learning because the individual learns to avoid an outcome by being passive (e.g., by not putting your hand on the stove).

Negative reinforcement

- Try not to be confused by the term negative reinforcement. Negative reinforcement is a kind of reinforcement (not a punishment), and therefore, it increases the frequency of a behaviour.
- It is “negative” in the sense that the reinforcement is the absence of something.
- For example, you learn to apply sunscreen to avoid skin cancer, and you learn to brush your teeth to avoid tooth decay.
- With negative reinforcement the behaviour increases and its outcome therefore decreases.
- Negative reinforcement is also known as avoidance learning if the response prevents the outcome altogether or escape learning if it stops some outcome that has already begun.
- Most people find the terms “escape learning” and “avoidance learning” easier to understand than “negative reinforcement,” and the terms escape learning and avoidance learning show up in the titles of research articles four times more often than negative reinforcement does.

Omission training

- If reinforcement by avoiding something bad is negative reinforcement, then punishment by avoiding something good is negative punishment.
- If your parents punished you by taking away your allowance or privileges (“grounding you”), they were using negative punishment.
- Another example is a teacher punishing a child by a “time out” session away from classmates.
- Although this practice is common, the term negative punishment is not widely used.
- The practice is usually known simply as punishment or as omission training because the omission of the response leads to restoration of the usual privileges.
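
The four categories introduced so far reduce to two questions: does the behaviour increase or decrease, and is the event presented or removed (including prevented)? The lookup below is a minimal sketch of that two-by-two scheme; the function name `classify_procedure` and the argument labels are hypothetical, while the category names are the ones used in these slides.

```python
def classify_procedure(behaviour, event):
    """Classify a procedure.

    behaviour: 'increases' or 'decreases' (what happens to the response)
    event:     'presented' or 'removed'   (removed also covers prevented or omitted)
    """
    table = {
        ("increases", "presented"): "positive reinforcement",
        ("increases", "removed"): "negative reinforcement (escape or avoidance learning)",
        ("decreases", "presented"): "punishment (passive avoidance learning)",
        ("decreases", "removed"): "negative punishment (omission training)",
    }
    return table[(behaviour, event)]

# Examples from the slides:
print(classify_procedure("increases", "removed"))    # applying sunscreen to avoid skin cancer
print(classify_procedure("decreases", "presented"))  # touching a hot stove
print(classify_procedure("decreases", "removed"))    # losing an allowance ("grounding")
```

Anything that increases the behaviour lands in a reinforcement cell and anything that decreases it lands in a punishment cell, regardless of whether the event is presented or removed.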

Positive reinforcement versus negative reinforcement

Positive reinforcement versus negative reinforcement

- In positive reinforcement, a response leads to the presentation of a rewarding stimulus.
- In negative reinforcement, a response leads to the removal of an aversive stimulus.
- Both types of reinforcement involve favourable consequences and both have the same effect on behaviour: The organism’s tendency to emit the reinforced response is strengthened.

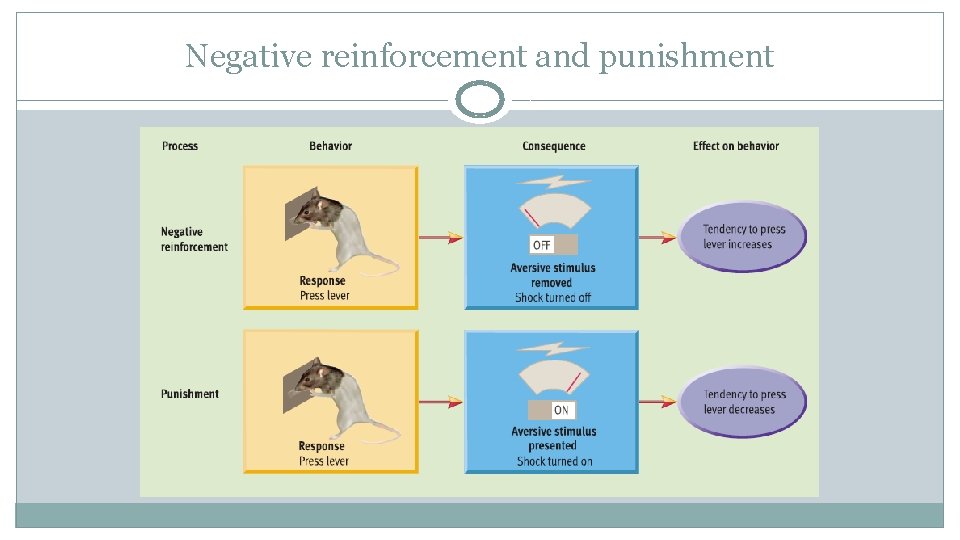

Negative reinforcement and punishment

Negative reinforcement and punishment

- Although punishment can occur when a response leads to the removal of a rewarding stimulus, it more typically involves the presentation of an aversive stimulus.
- Students often confuse punishment with negative reinforcement because they associate both with aversive stimuli.
- However, as the diagram shows, punishment and negative reinforcement represent opposite procedures that have opposite effects on behaviour.

Categories of Reinforcement and Punishment

- Classifying some procedure in one of four categories is often tricky.
- If you adjust the thermostat in a cold room to increase the heat, are you working for increased heat (positive reinforcement) or decreased cold (negative reinforcement)?
- Because of this ambiguity, several authorities have recommended abandoning the term negative reinforcement (Baron & Galizio, 2005; Kimble, 1993).
- The ambiguity can get even worse: If you are told you can be suspended from school for academic dishonesty, you can think of it as being honest in order to stay in school (positive reinforcement), being honest to avoid suspension (negative reinforcement or avoidance learning), decreasing dishonesty to avoid suspension (punishment or passive avoidance), or decreasing dishonesty to stay in school (negative punishment or omission training).
- We can often simplify matters by using just the terms reinforcement (to increase a behaviour) and punishment (to decrease it).
- Nevertheless, you should understand all of the terms, as they appear in many psychological publications and conversations. Attend to how something is worded: Are we talking about increasing or decreasing some behaviour and increasing or decreasing some outcome?

Operant Conditioning ADDITIONAL PHENOMENA

Extinction

- In operant conditioning extinction occurs if responses stop producing reinforcements.
- For example, you were once in the habit of asking your roommate to join you for supper.
- The last five times you asked, your roommate said no, so you stop asking.
- In classical conditioning extinction is achieved by presenting the CS without the UCS; in operant conditioning the procedure is response without reinforcement.

Generalization

- Someone who receives reinforcement for a response in the presence of one stimulus will probably make the same response in the presence of a similar stimulus.
- The more similar a new stimulus is to the original reinforced stimulus, the more likely the same response. This phenomenon is known as stimulus generalization.
- For example, you might reach for the turn signal of a rented car in the same place you would find it in your own car.

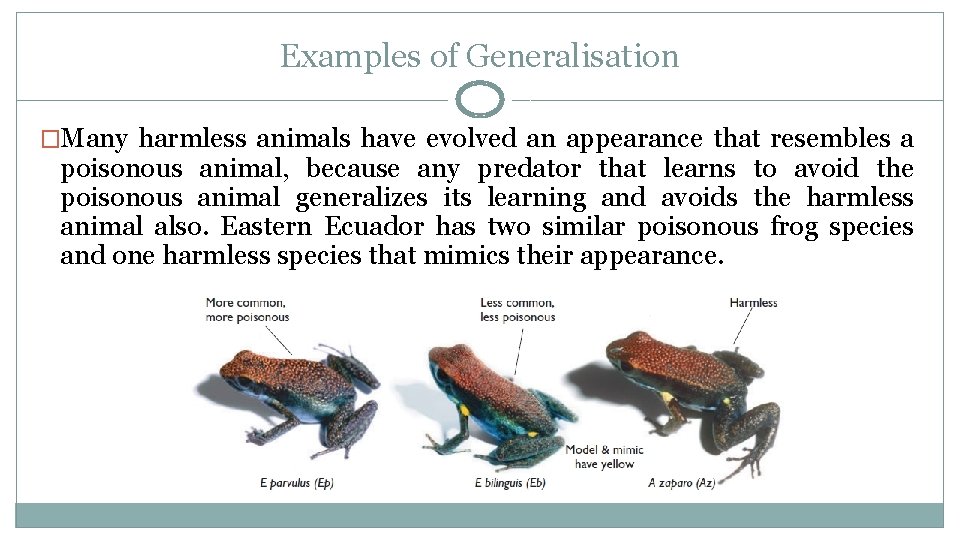

Examples of Generalization

- Many harmless animals have evolved an appearance that resembles a poisonous animal, because any predator that learns to avoid the poisonous animal generalizes its learning and avoids the harmless animal also.
- Eastern Ecuador has two similar poisonous frog species and one harmless species that mimics their appearance.

Discrimination

- If reinforcement occurs for responding to one stimulus and not another, the result is a discrimination between them, yielding a response to one stimulus and not the other.
- For example, you smile and greet someone you think you know, but then you realize it is someone else.
- After several such experiences, you learn to recognize the difference between the two people.

Discriminative Stimuli

- A stimulus that indicates which response is appropriate or inappropriate is called a discriminative stimulus.
- A great deal of our behaviour is governed by discriminative stimuli. For example, you learn ordinarily to be quiet in class but to talk when the professor encourages discussion.
- You learn to drive fast on some streets and slowly on others. Throughout your day one stimulus after another signals which behaviours will yield reinforcement, punishment, or neither.
- The ability of a stimulus to encourage some responses and discourage others is known as stimulus control.

Skinner’s Box

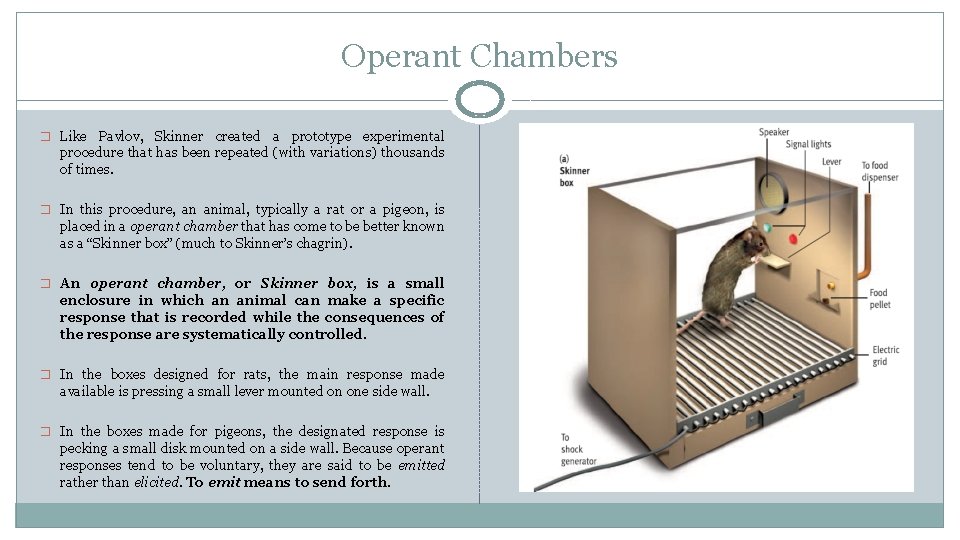

Operant Chambers

- Like Pavlov, Skinner created a prototype experimental procedure that has been repeated (with variations) thousands of times.
- In this procedure, an animal, typically a rat or a pigeon, is placed in an operant chamber that has come to be better known as a “Skinner box” (much to Skinner’s chagrin).
- An operant chamber, or Skinner box, is a small enclosure in which an animal can make a specific response that is recorded while the consequences of the response are systematically controlled.
- In the boxes designed for rats, the main response made available is pressing a small lever mounted on one side wall.
- In the boxes made for pigeons, the designated response is pecking a small disk mounted on a side wall. Because operant responses tend to be voluntary, they are said to be emitted rather than elicited. To emit means to send forth.

Skinner’s Box

- The Skinner box permits the experimenter to control the reinforcement contingencies that are in effect for the animal.
- Reinforcement contingencies are the circumstances or rules that determine whether responses lead to the presentation of reinforcers.
- Typically, the experimenter manipulates whether positive consequences occur when the animal makes the designated response.
- The main positive consequence is usually delivery of a small bit of food into a food cup mounted in the chamber.
- Because the animals are deprived of food for a while prior to the experimental session, their hunger virtually ensures that the food serves as a reinforcer.

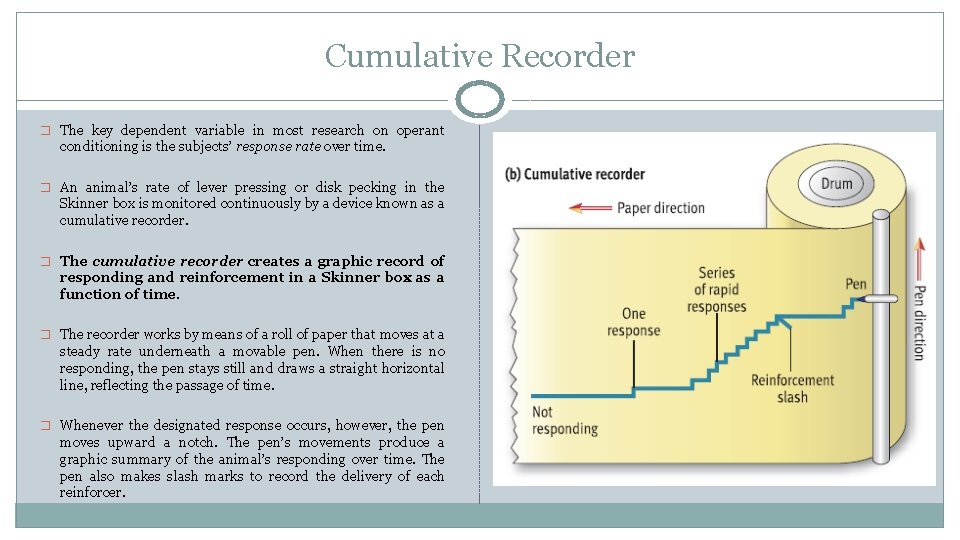

Cumulative Recorder

- The key dependent variable in most research on operant conditioning is the subjects’ response rate over time.
- An animal’s rate of lever pressing or disk pecking in the Skinner box is monitored continuously by a device known as a cumulative recorder.
- The cumulative recorder creates a graphic record of responding and reinforcement in a Skinner box as a function of time.
- The recorder works by means of a roll of paper that moves at a steady rate underneath a movable pen. When there is no responding, the pen stays still and draws a straight horizontal line, reflecting the passage of time.
- Whenever the designated response occurs, however, the pen moves upward a notch. The pen’s movements produce a graphic summary of the animal’s responding over time. The pen also makes slash marks to record the delivery of each reinforcer.

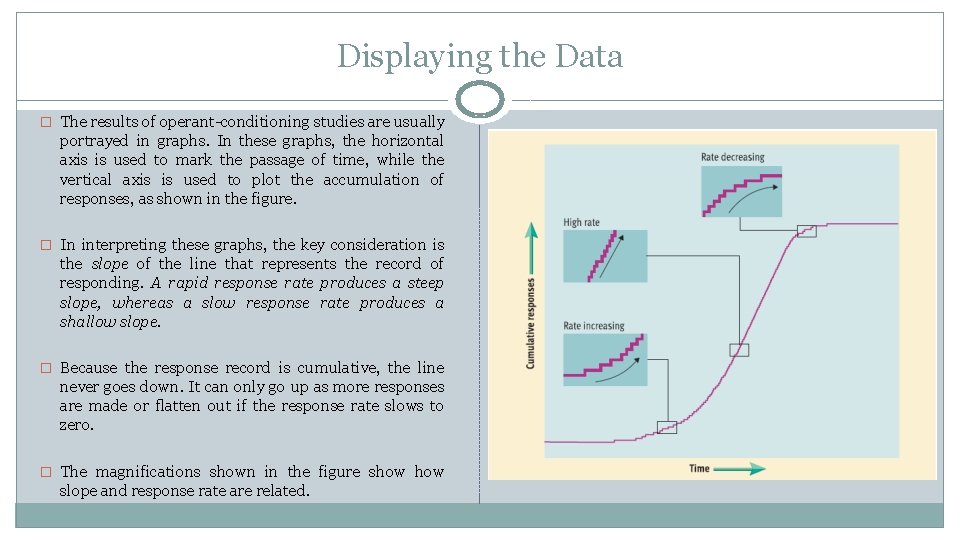

Displaying the Data

- The results of operant-conditioning studies are usually portrayed in graphs. In these graphs, the horizontal axis is used to mark the passage of time, while the vertical axis is used to plot the accumulation of responses, as shown in the figure.
- In interpreting these graphs, the key consideration is the slope of the line that represents the record of responding. A rapid response rate produces a steep slope, whereas a slow response rate produces a shallow slope.
- Because the response record is cumulative, the line never goes down. It can only go up as more responses are made or flatten out if the response rate slows to zero.
- The magnifications shown in the figure show how slope and response rate are related.
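
The relation between a cumulative record and response rate can be checked with a short calculation. The sketch below is illustrative: the function names `cumulative_record` and `slope` and the per-interval response counts are made up for the example, not taken from any study.

```python
from itertools import accumulate

def cumulative_record(responses_per_interval):
    """Running total of responses, one value per time interval (the pen's height)."""
    return list(accumulate(responses_per_interval))

def slope(record, start, end):
    """Average response rate (responses per interval) between two interval indices."""
    return (record[end] - record[start]) / (end - start)

# Hypothetical counts of lever presses in ten successive time intervals.
responses = [0, 0, 1, 1, 2, 3, 3, 0, 0, 0]
record = cumulative_record(responses)

print(record)                 # the cumulative line never goes down
print(slope(record, 0, 3))    # slow responding: shallow slope
print(slope(record, 3, 6))    # rapid responding: steep slope
print(slope(record, 6, 9))    # no responding: flat line
```

Because each value is a running total of non-negative counts, the record can only rise or stay level, and its slope over any stretch equals the average response rate in that stretch.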

Primary and Secondary Reinforcers

- Operant theorists make a distinction between unlearned, or primary, reinforcers as opposed to conditioned, or secondary, reinforcers.
- Primary reinforcers are events that are inherently reinforcing because they satisfy biological needs.
- A given species has a limited number of primary reinforcers because they are closely tied to physiological needs.
- In humans, primary reinforcers include food, water, warmth, sex, and perhaps affection expressed through hugging and close bodily contact.
- Secondary, or conditioned, reinforcers are events that acquire reinforcing qualities by being associated with primary reinforcers.
- The events that function as secondary reinforcers vary among members of a species because they depend on learning. Examples of common secondary reinforcers in humans include money, good grades, attention, flattery, praise, and applause. Similarly, people learn to find stylish clothes, sports cars, fine jewellery, exotic vacations, and state-of-the-art stereos reinforcing.

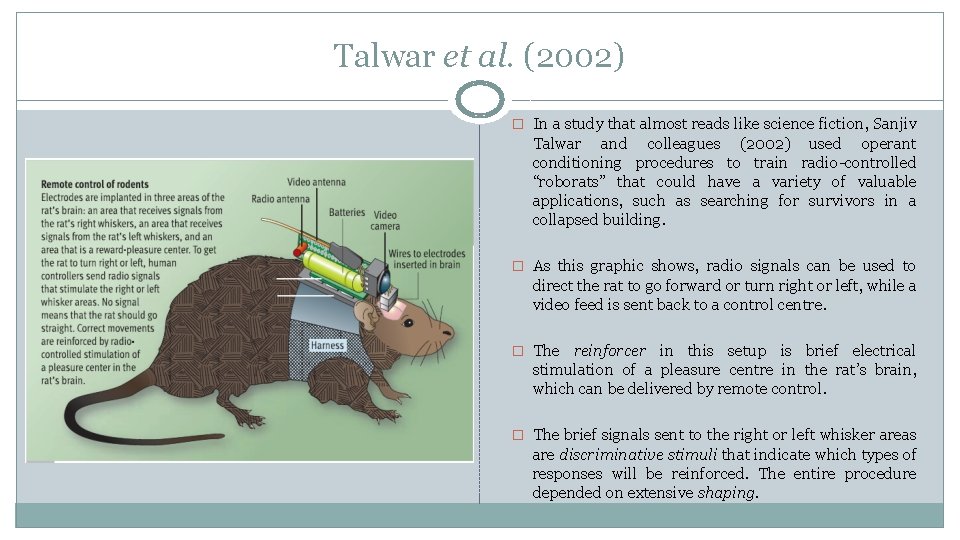

Talwar et al. (2002)

- In a study that almost reads like science fiction, Sanjiv Talwar and colleagues (2002) used operant conditioning procedures to train radio-controlled “roborats” that could have a variety of valuable applications, such as searching for survivors in a collapsed building.
- As this graphic shows, radio signals can be used to direct the rat to go forward or turn right or left, while a video feed is sent back to a control centre.
- The reinforcer in this setup is brief electrical stimulation of a pleasure centre in the rat’s brain, which can be delivered by remote control.
- The brief signals sent to the right or left whisker areas are discriminative stimuli that indicate which types of responses will be reinforced. The entire procedure depended on extensive shaping.

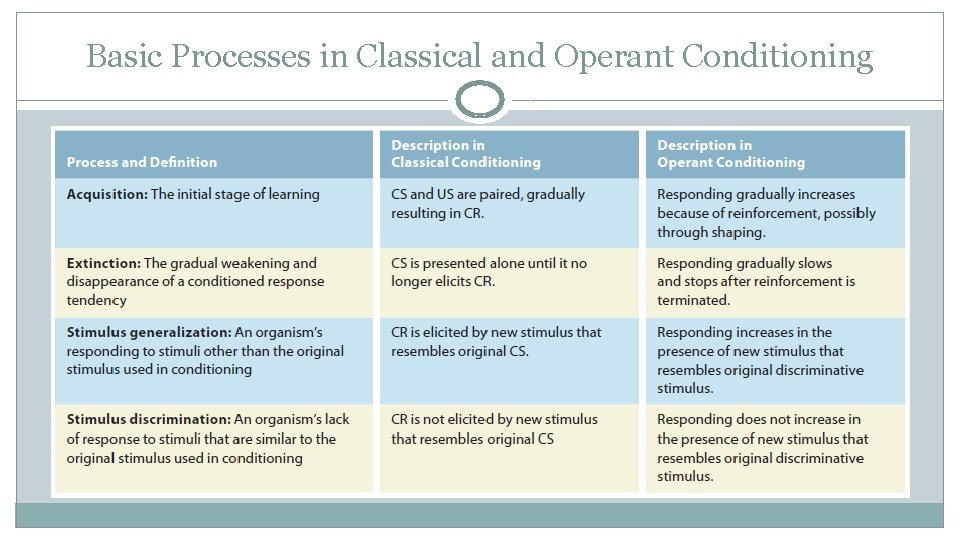

Basic Processes in Classical and Operant Conditioning

Questions?

- Slides: 45