OpenStack-based storage system from Fujitsu

András Pulai, architect
mailto:andras.pulai@ts.fujitsu.com
© 2014 Fujitsu

OpenStack

Why OpenStack? What is the problem?
Developers pointed applications directly to servers: new app = new server.
Result: huge, underutilized server farms (racking, networking, procurement).
Solution: add a hypervisor layer.

Virtualization helped, and caused another problem.
Developers pointed applications directly to VMs …
Complexity in managing the virtualization layer.
VMs are still managed like traditional physical servers.
No possibility for automation.

Traditional vs. "smart" applications
Today's IaaS/Cloud:
• vApps: new or legacy OS
• vCloud: manage cloud
• vCenter: manage VM
• Network: high availability
• Storage: high availability
OpenStack:
• Apps: new type of intelligent apps, cloud and web ready
• "Nova": Compute API services (start/stop/provision a node)
• "Neutron": Network API services
• "Swift": Storage API, object based

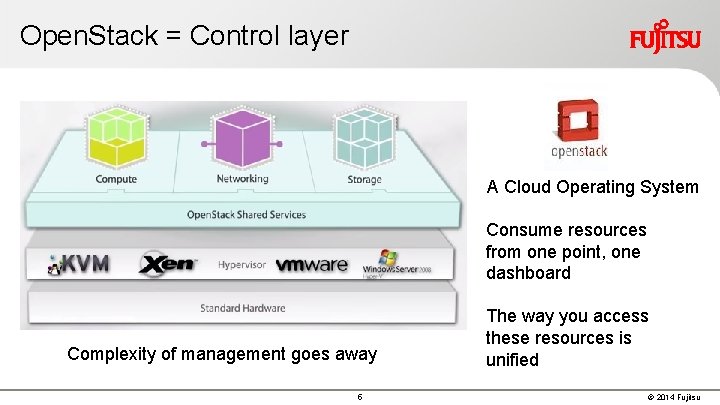

OpenStack = control layer
A cloud operating system.
Consume resources from one point, one dashboard.
Complexity of management goes away.
The way you access these resources is unified.

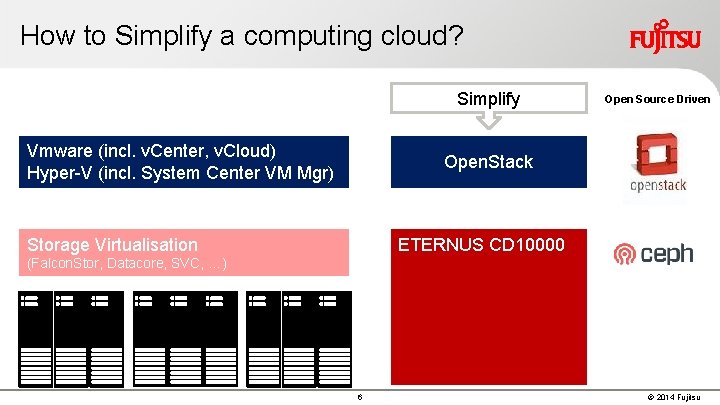

How to simplify a computing cloud?
Simplify:
• VMware (incl. vCenter, vCloud)
• Hyper-V (incl. System Center VM Mgr)
• Open-source-driven OpenStack
• ETERNUS CD10000
• Storage virtualisation (FalconStor, DataCore, SVC, …)

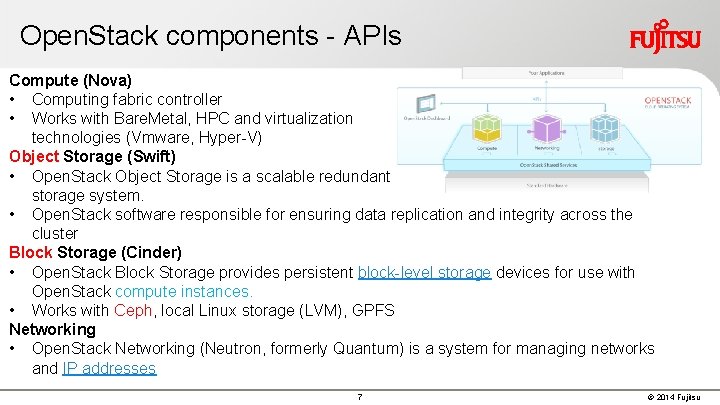

OpenStack components: APIs
Compute (Nova)
• Computing fabric controller
• Works with bare metal, HPC and virtualization technologies (VMware, Hyper-V)
Object Storage (Swift)
• OpenStack Object Storage is a scalable, redundant storage system.
• The OpenStack software is responsible for ensuring data replication and integrity across the cluster.
Block Storage (Cinder)
• OpenStack Block Storage provides persistent block-level storage devices for use with OpenStack compute instances.
• Works with Ceph, local Linux storage (LVM), GPFS
Networking (Neutron)
• OpenStack Networking (Neutron, formerly Quantum) is a system for managing networks and IP addresses.

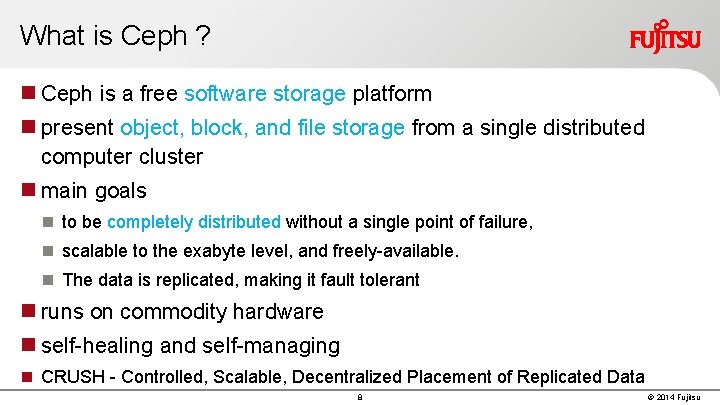

What is Ceph?
Ceph is a free software storage platform that presents object, block, and file storage from a single distributed computer cluster.
Main goals: to be completely distributed without a single point of failure, scalable to the exabyte level, and freely available.
• The data is replicated, making it fault tolerant
• Runs on commodity hardware
• Self-healing and self-managing
• CRUSH: Controlled, Scalable, Decentralized Placement of Replicated Data
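
The idea behind decentralized placement can be illustrated with a small sketch. This is not the CRUSH algorithm itself; it swaps in plain rendezvous (highest-random-weight) hashing as a simplified stand-in, and the node names and the replica count of 3 are assumptions made for the example. What it shows is that any client holding the same node list can compute where the copies of an object live, without asking a central lookup table.

```python
import hashlib


def placement(object_name, nodes, replicas=3):
    """Pick `replicas` distinct nodes for an object using hashes only.

    Simplified stand-in for CRUSH (rendezvous hashing), not Ceph's real algorithm.
    """
    def score(node):
        digest = hashlib.sha256(f"{node}:{object_name}".encode()).hexdigest()
        return int(digest, 16)

    # The highest-scoring nodes hold the copies; the result depends only on
    # the object name and the node list, so every client agrees on it.
    return sorted(nodes, key=score, reverse=True)[:replicas]


nodes = [f"node{i}" for i in range(1, 9)]  # hypothetical node names
print(placement("vm-image-1", nodes))
```

A useful property of this scheme is that removing a node that holds no copy of an object leaves that object's placement unchanged, so only a small share of the data has to move when the cluster changes.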

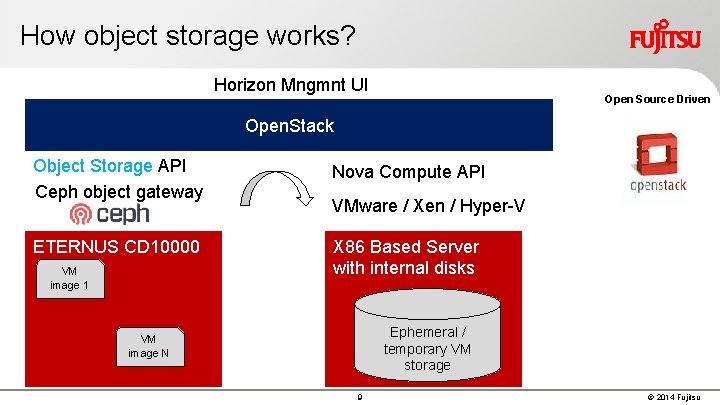

How does object storage work?
Diagram components:
• Horizon management UI
• Open-source-driven OpenStack: Object Storage API, Nova Compute API
• Ceph object gateway
• ETERNUS CD10000
• x86-based server with internal disks (VMware / Xen / Hyper-V): ephemeral / temporary VM storage
• VM image 1 … VM image N
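
As a rough illustration of what a client of the Object Storage API sends, the sketch below builds (but does not send) a Swift-style upload request. The `/v1/{account}/{container}/{object}` path and the `X-Auth-Token` header follow the OpenStack Swift API; the endpoint host, account, container, object name and token are hypothetical, and the URL prefix exposed by a Ceph object gateway depends on how it is deployed.

```python
import urllib.request
from urllib.parse import quote


def build_upload_request(endpoint, account, container, obj, data, token):
    """Build a Swift-style PUT request for one object (nothing is sent)."""
    url = f"{endpoint}/v1/{quote(account)}/{quote(container)}/{quote(obj)}"
    return urllib.request.Request(
        url,
        data=data,
        method="PUT",
        headers={"X-Auth-Token": token},  # token obtained from the identity service
    )


# All values below are placeholders for illustration only.
req = build_upload_request(
    "https://storage.example.com", "AUTH_demo", "images",
    "vm-image-1.qcow2", b"image bytes", "TOKEN",
)
print(req.get_method(), req.full_url)
```

Passing the request to `urllib.request.urlopen` would perform the upload against a real endpoint.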

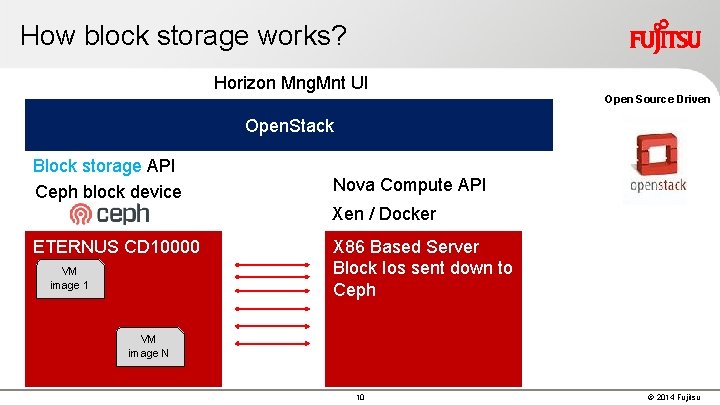

How does block storage work?
Diagram components:
• Horizon management UI
• Open-source-driven OpenStack: Block Storage API, Nova Compute API
• Ceph block device
• ETERNUS CD10000
• x86-based server (Xen / Docker): block I/Os sent down to Ceph
• VM image 1 … VM image N

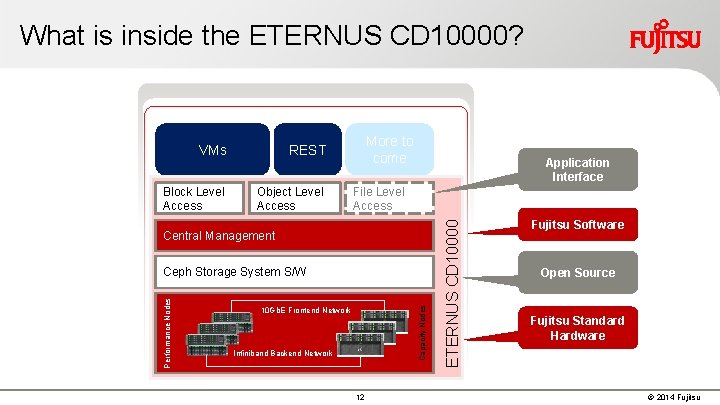

What is inside the ETERNUS CD10000?
• Application interface: block-level access, object-level access, file-level access, REST, more to come
• Central management
• Capacity nodes, performance nodes
• Ceph storage system software
• 10 GbE frontend network
• InfiniBand backend network
Legend: VMs, Fujitsu software, open source, Fujitsu standard hardware

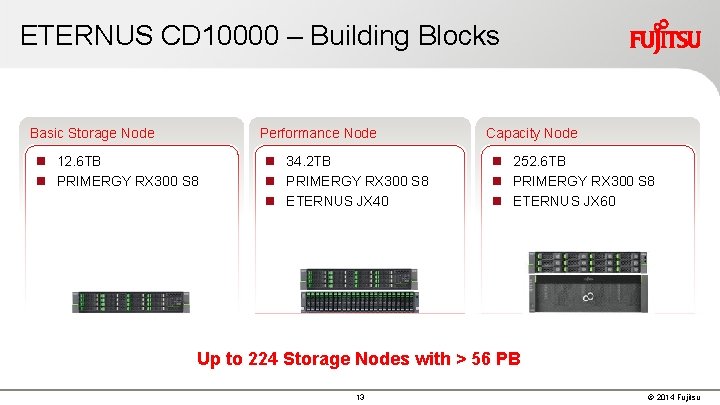

ETERNUS CD10000: Building Blocks
• Basic Storage Node: 12.6 TB, PRIMERGY RX300 S8
• Performance Node: 34.2 TB, PRIMERGY RX300 S8 + ETERNUS JX40
• Capacity Node: 252.6 TB, PRIMERGY RX300 S8 + ETERNUS JX60
Up to 224 storage nodes with > 56 PB
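
The "> 56 PB" figure can be checked from the per-node capacities above. The sketch below assumes decimal units (1 PB = 1000 TB) and counts raw capacity only; replication reduces the usable share. The function and constant names are made up for the example.

```python
# Per-node raw capacity in TB, as listed on the slide.
NODE_CAPACITY_TB = {"basic": 12.6, "performance": 34.2, "capacity": 252.6}
MAX_NODES = 224  # cluster limit stated on the slide


def raw_capacity_pb(node_counts):
    """Raw capacity in PB for a mix of node types (assumes 1 PB = 1000 TB)."""
    if sum(node_counts.values()) > MAX_NODES:
        raise ValueError(f"a cluster supports at most {MAX_NODES} storage nodes")
    total_tb = sum(NODE_CAPACITY_TB[kind] * n for kind, n in node_counts.items())
    return total_tb / 1000


# A cluster built only from capacity nodes: 224 x 252.6 TB = 56,582.4 TB.
print(f"{raw_capacity_pb({'capacity': MAX_NODES}):.1f} PB")
```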

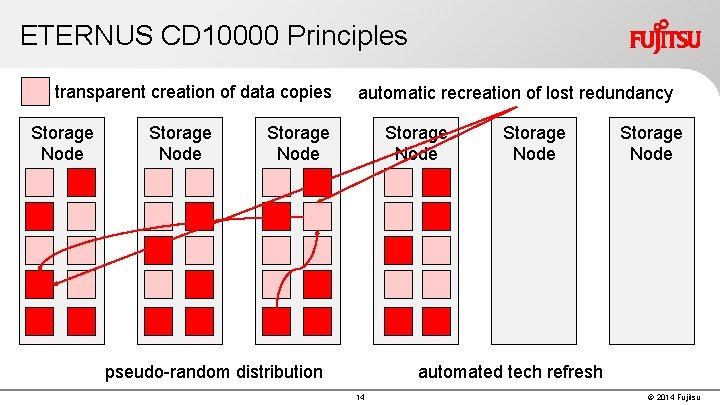

ETERNUS CD10000 Principles
Across the storage nodes:
• transparent creation of data copies
• automatic recreation of lost redundancy
• pseudo-random distribution
• automated tech refresh
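
"Automatic recreation of lost redundancy" can be pictured with a toy model. The sketch below is not how Ceph does it internally (Ceph recomputes placement with CRUSH); it assumes a plain map from object to the set of nodes holding a copy, a target of 3 copies, and a least-loaded choice for new copies. All names are hypothetical.

```python
from collections import Counter


def heal(replica_map, live_nodes, replicas=3):
    """Return a new map in which every object has `replicas` copies on live nodes."""
    live = set(live_nodes)
    # Forget copies that sat on failed nodes.
    healed = {obj: set(holders) & live for obj, holders in replica_map.items()}
    load = Counter({node: 0 for node in live})
    for holders in healed.values():
        load.update(holders)
    for obj in sorted(healed):
        holders = healed[obj]
        if not holders:
            raise RuntimeError(f"all copies of {obj} were lost")
        while len(holders) < replicas:
            # Prefer the least-loaded live node that has no copy yet.
            candidates = sorted(live - holders, key=lambda n: (load[n], n))
            if not candidates:
                raise RuntimeError("not enough live nodes to restore redundancy")
            holders.add(candidates[0])
            load[candidates[0]] += 1
    return healed


before = {"a": {"n1", "n2", "n3"}, "b": {"n2", "n3", "n4"}}
after = heal(before, live_nodes=["n1", "n2", "n4", "n5"])  # n3 has failed
print(after)
```

The same routine also covers a tech refresh in this toy model: removing an old node from `live_nodes` after adding a new one moves its copies onto the remaining nodes.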

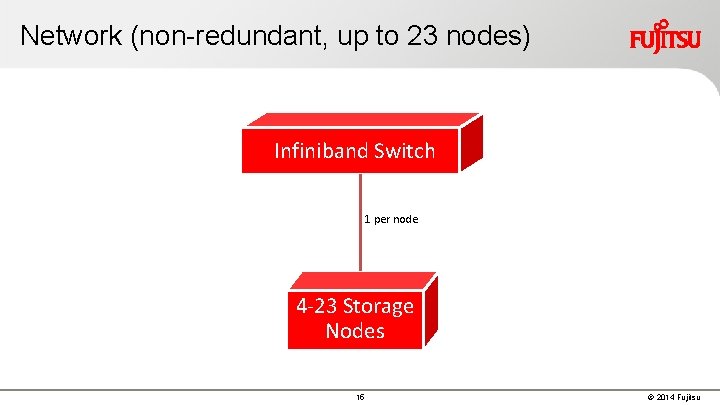

Network (non-redundant, up to 23 nodes)
• InfiniBand switch, 1 per node
• 4-23 storage nodes

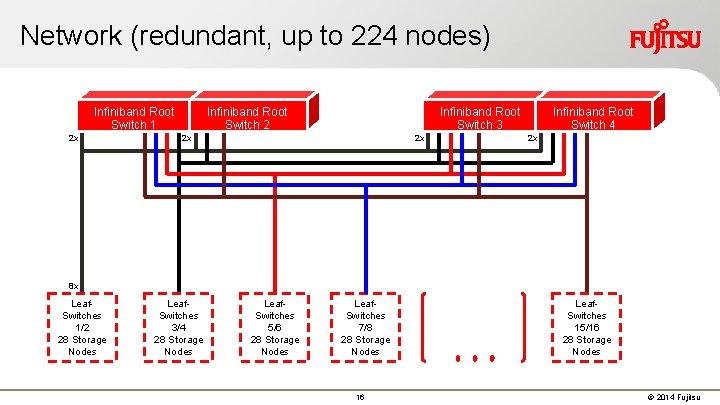

Network (redundant, up to 224 nodes)
• InfiniBand root switches 1 to 4 (links labelled 2 x and 8 x in the diagram)
• Leaf switches 1/2: 28 storage nodes
• Leaf switches 3/4: 28 storage nodes
• Leaf switches 5/6: 28 storage nodes
• Leaf switches 7/8: 28 storage nodes
• …
• Leaf switches 15/16: 28 storage nodes
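
The 224-node limit follows from the leaf layout: 16 leaf switches form 8 redundant pairs, each serving 28 storage nodes. A small sketch of that arithmetic is below; the sequential assignment of nodes to leaf pairs in `leaf_pair_for` is an assumption for illustration, not something the slide specifies.

```python
LEAF_SWITCHES = 16          # leaf switches 1 .. 16, used in pairs (1/2, 3/4, ...)
NODES_PER_LEAF_PAIR = 28    # storage nodes attached to each pair

leaf_pairs = [(i, i + 1) for i in range(1, LEAF_SWITCHES, 2)]
max_nodes = len(leaf_pairs) * NODES_PER_LEAF_PAIR


def leaf_pair_for(node_index):
    """Leaf pair serving a node, assuming nodes are filled pair by pair (0-based)."""
    if not 0 <= node_index < max_nodes:
        raise IndexError("node index outside the cluster limit")
    return leaf_pairs[node_index // NODES_PER_LEAF_PAIR]


print(len(leaf_pairs), "leaf pairs,", max_nodes, "storage nodes")
```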

ETERNUS CD10000 vs. Plain Ceph

ETERNUS CD10000 vs. Plain Ceph: Lifecycle Management

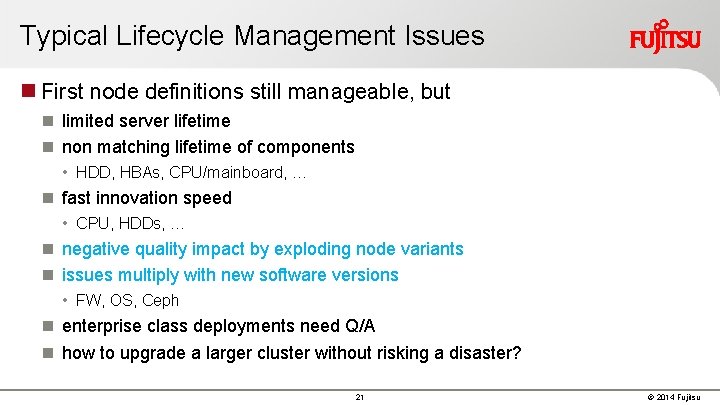

Typical Lifecycle Management Issues
First node definitions are still manageable, but:
• limited server lifetime
• non-matching lifetime of components (HDD, HBAs, CPU/mainboard, …)
• fast innovation speed (CPU, HDDs, …)
• negative quality impact from exploding node variants
• issues multiply with new software versions (FW, OS, Ceph)
• enterprise-class deployments need Q/A
• how to upgrade a larger cluster without risking a disaster?

ETERNUS CD10000 Lifecycle Management
Old components need to be phased out / replaced after a reasonable lifetime (max. 5 years) in order to
• receive continued solution maintenance
• benefit from future software functions
Within these boundaries Fujitsu offers
• a quality-assured combination of hardware and software
• a component-oriented and selective upgrade scheme: introduction of new nodes, phase-out of old ones, rolling software upgrades
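
A rolling software upgrade can be sketched as a loop that touches one node at a time and stops as soon as a node fails its health check, so a bad release never reaches the whole cluster. The `upgrade` and `is_healthy` callbacks are hypothetical placeholders; this is a minimal sketch of the general pattern, not Fujitsu's upgrade procedure.

```python
def rolling_upgrade(nodes, upgrade, is_healthy):
    """Upgrade nodes one by one; stop at the first node that is unhealthy afterwards.

    Returns (upgraded_nodes, failed_node), where failed_node is None on success.
    """
    done = []
    for node in nodes:
        upgrade(node)
        if not is_healthy(node):
            return done, node  # leave the remaining nodes on the old version
        done.append(node)
    return done, None


# Toy cluster state: node name -> software version (values are made up).
versions = {f"node{i}": "1.0" for i in range(1, 5)}


def upgrade_to_1_1(node):
    versions[node] = "1.1"


done, failed = rolling_upgrade(
    sorted(versions), upgrade_to_1_1, is_healthy=lambda n: n != "node3"
)
print(done, failed, versions)
```

In a replicated cluster the remaining nodes keep serving data while a single node is being upgraded, which is what makes the one-at-a-time order safe.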

ETERNUS CD10000 vs. Plain Ceph: Deployment & Administration

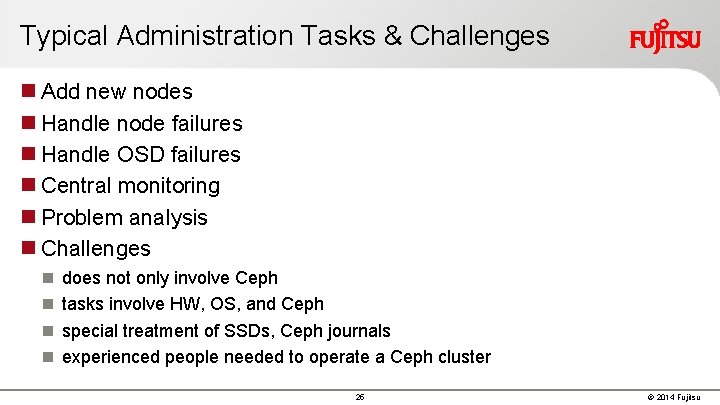

Typical Administration Tasks & Challenges
Tasks:
• Add new nodes
• Handle node failures
• Handle OSD failures
• Central monitoring
• Problem analysis
Challenges:
• administration does not only involve Ceph: tasks involve HW, OS, and Ceph
• special treatment of SSDs and Ceph journals
• experienced people are needed to operate a Ceph cluster

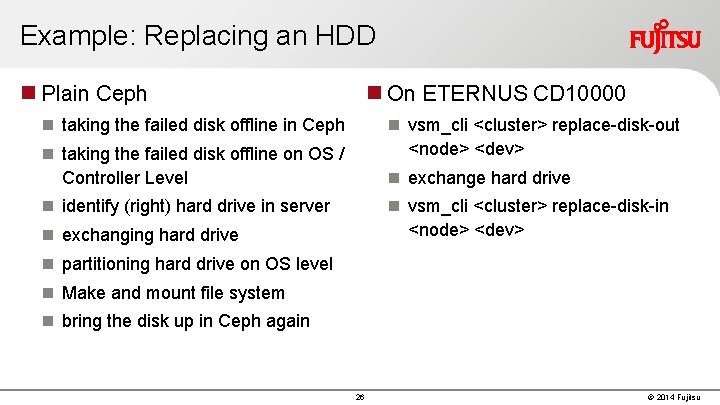

Example: Replacing an HDD
Plain Ceph:
• take the failed disk offline in Ceph
• take the failed disk offline on OS / controller level
• identify the (right) hard drive in the server
• exchange the hard drive
• partition the hard drive on OS level
• make and mount the file system
• bring the disk up in Ceph again
On ETERNUS CD10000:
• vsm_cli <cluster> replace-disk-out <node> <dev>
• exchange the hard drive
• vsm_cli <cluster> replace-disk-in <node> <dev>
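
The three-step replacement on the ETERNUS CD10000 can be turned into a small checklist generator. The `vsm_cli` command shapes are copied from the slide and have not been checked against product documentation; the cluster, node and device values are placeholders, and the helper name is made up for the example.

```python
import shlex


def replace_disk_steps(cluster, node, dev):
    """Ordered steps for swapping a failed disk, using the command forms on the slide."""
    args = " ".join(shlex.quote(a) for a in (node, dev))
    return [
        f"vsm_cli {shlex.quote(cluster)} replace-disk-out {args}",
        "exchange hard drive",  # manual step between the two commands
        f"vsm_cli {shlex.quote(cluster)} replace-disk-in {args}",
    ]


# Placeholder values for illustration only.
for step in replace_disk_steps("cd10000", "node017", "/dev/sdc"):
    print(step)
```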

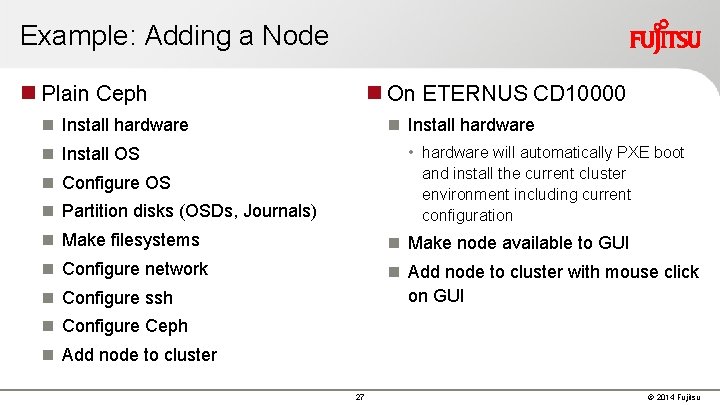

Example: Adding a Node
Plain Ceph:
• Install hardware
• Install OS
• Configure OS
• Partition disks (OSDs, journals)
• Make filesystems
• Configure network
• Configure ssh
• Configure Ceph
• Add node to cluster
On ETERNUS CD10000:
• Install hardware: it will automatically PXE boot and install the current cluster environment, including the current configuration
• Make node available to GUI
• Add node to cluster with a mouse click in the GUI

ETERNUS CD10000 Summary

In a Nutshell …
ETERNUS CD10000: a cloud storage solution based on industry-standard servers … (no RAID) … and a bunch of disks,
supporting up to 224 nodes with > 56 PB,
held together by hyperscale storage software.
E2E H/W-S/W integration, add-ons, & service by Fujitsu.

