
The OpenPOWER Concept and IBM's New HPC Strategy

Alexey Perevozchikov, IBM Server Solutions Product Manager, 82189117@ru.ibm.com

Moscow State University (MSU), 2 July 2015

© 2014 International Business Machines Corporation

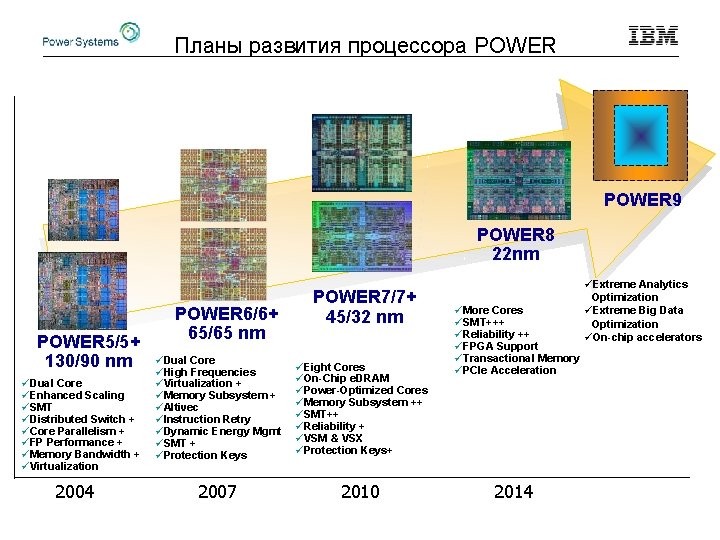

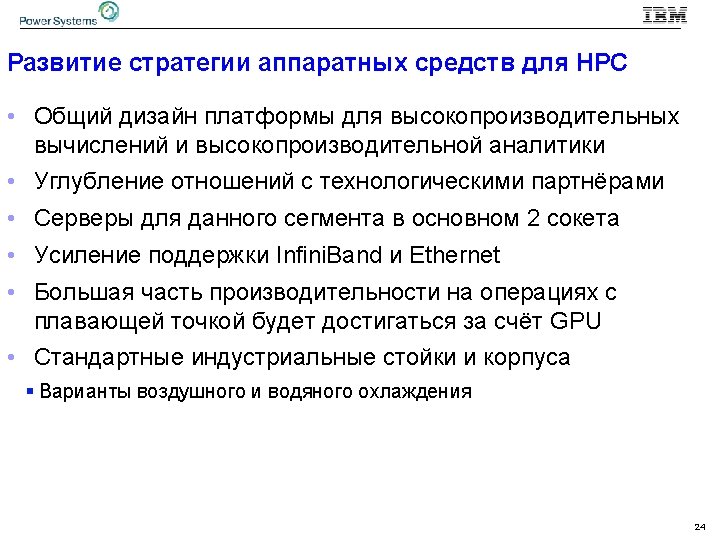

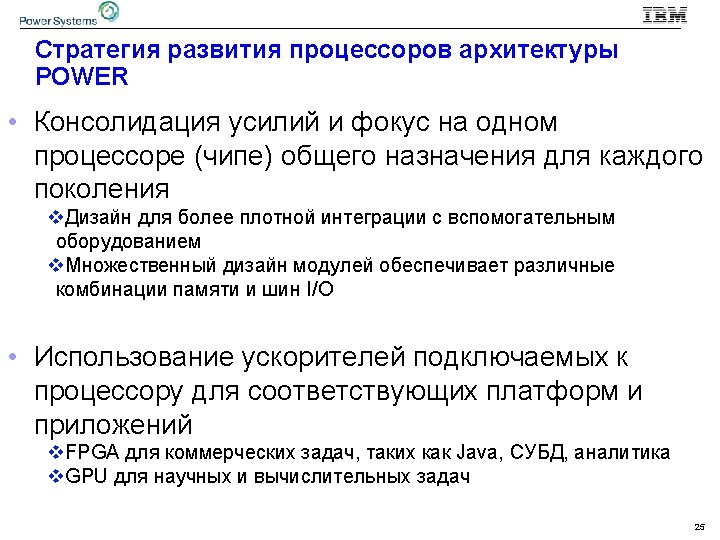

POWER processor roadmap
- POWER5/5+ (2004, 130/90 nm): Dual Core, Enhanced Scaling, SMT, Distributed Switch+, Core Parallelism+, FP Performance+, Memory Bandwidth+, Virtualization
- POWER6/6+ (2007, 65/65 nm): Dual Core, High Frequencies, Virtualization+, Memory Subsystem+, AltiVec, Instruction Retry, Dynamic Energy Management, SMT+, Protection Keys
- POWER7/7+ (2010, 45/32 nm): Eight Cores, On-Chip eDRAM, Power-Optimized Cores, Memory Subsystem++, SMT++, Reliability+, VSM & VSX, Protection Keys+
- POWER8 (2014, 22 nm): More Cores, SMT+++, Reliability++, FPGA Support, Transactional Memory, PCIe Acceleration
- POWER9: Extreme Analytics Optimization, Extreme Big Data Optimization, On-chip accelerators

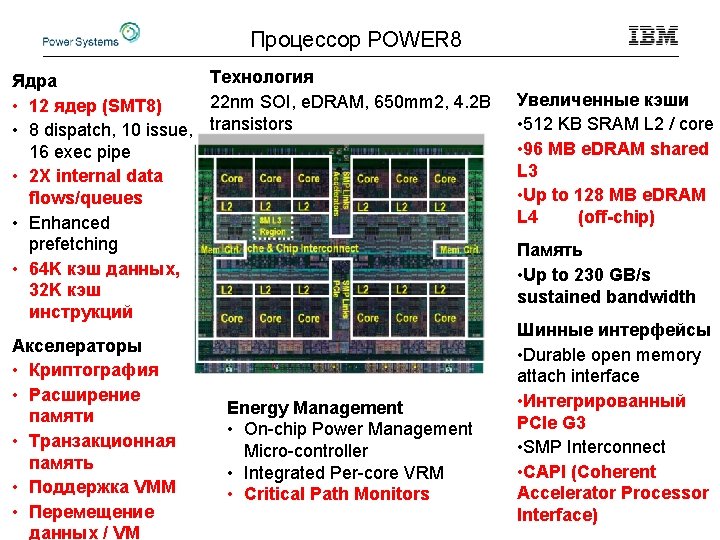

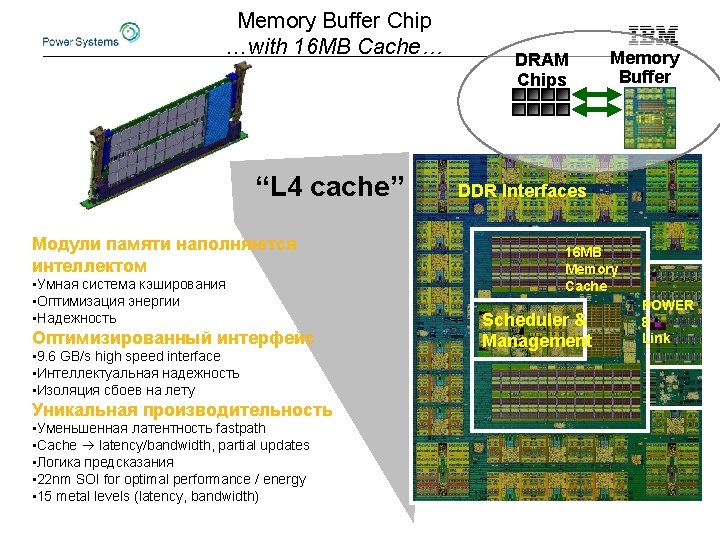

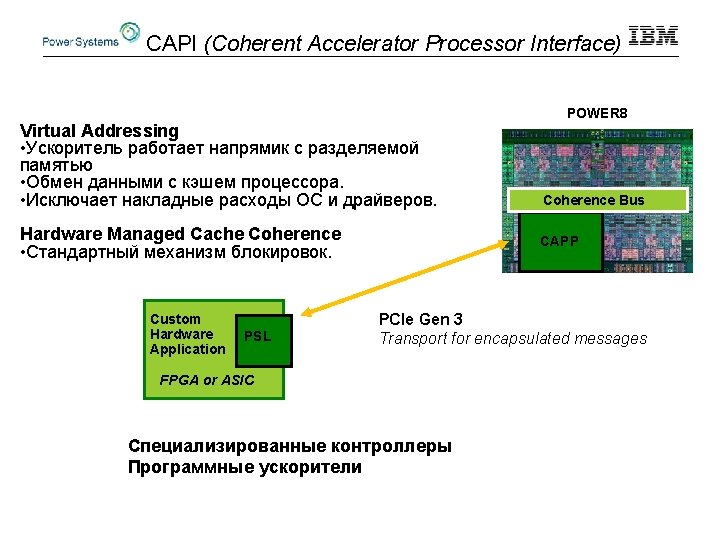

POWER8 processor
- Technology: 22 nm SOI, eDRAM, 650 mm², 4.2 B transistors
- Cores:
  - 12 cores (SMT8)
  - 8 dispatch, 10 issue, 16 execution pipes
  - 2× internal data flows/queues
  - Enhanced prefetching
  - 64 KB data cache, 32 KB instruction cache
- Accelerators:
  - Cryptography
  - Memory expansion
  - Transactional memory
  - VMM support
  - Data movement / VM
- Energy management:
  - On-chip power management micro-controller
  - Integrated per-core VRM
  - Critical path monitors
- Larger caches:
  - 512 KB SRAM L2 per core
  - 96 MB eDRAM shared L3
  - Up to 128 MB eDRAM L4 (off-chip)
- Memory:
  - Up to 230 GB/s sustained bandwidth
- Bus interfaces:
  - Durable open memory attach interface
  - Integrated PCIe Gen3
  - SMP interconnect
  - CAPI (Coherent Accelerator Processor Interface)
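The cache figures above can be combined into per-chip totals. The short sketch below does this for one 12-core chip. It takes 1 MB as 1024 KB. It also treats the shared L3 as an even per-core share, which is an assumption made for illustration and not how the hardware necessarily allocates it.

```python
# Cache arithmetic for one POWER8 chip, using the slide's figures.
CORES = 12
L2_PER_CORE_KB = 512   # private SRAM L2 per core
L3_SHARED_MB = 96      # shared eDRAM L3
L4_MAX_MB = 128        # off-chip eDRAM L4, maximum configuration


def cache_summary(cores: int = CORES) -> dict:
    """Return aggregate cache capacities in MB (1 MB = 1024 KB)."""
    l2_total_mb = cores * L2_PER_CORE_KB / 1024
    return {
        "l2_total_mb": l2_total_mb,
        # Nominal even share of the shared L3; real allocation may differ.
        "l3_per_core_mb": L3_SHARED_MB / cores,
        "l2_plus_l3_mb": l2_total_mb + L3_SHARED_MB,
        "l2_l3_l4_mb": l2_total_mb + L3_SHARED_MB + L4_MAX_MB,
    }


if __name__ == "__main__":
    for name, value in cache_summary().items():
        print(f"{name}: {value:g}")
```

With 12 cores this gives 6 MB of L2 in total and a nominal 8 MB of L3 per core.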

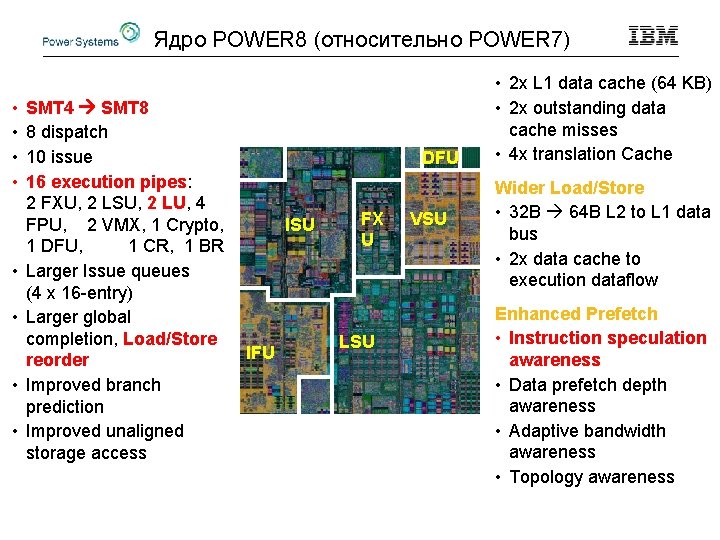

POWER8 core (compared with POWER7)
- SMT4 → SMT8
- 8 dispatch, 10 issue
- 16 execution pipes: 2 FXU, 2 LSU, 2 LU, 4 FPU, 2 VMX, 1 Crypto, 1 DFU, 1 CR, 1 BR
- Larger issue queues (4 × 16-entry)
- Larger global completion, load/store reorder
- Improved branch prediction
- Improved unaligned storage access
- 2× L1 data cache (64 KB)
- 2× outstanding data cache misses
- 4× translation cache
- Wider load/store:
  - 32 B → 64 B L2-to-L1 data bus
  - 2× data cache to execution dataflow
- Enhanced prefetch:
  - Instruction speculation awareness
  - Data prefetch depth awareness
  - Adaptive bandwidth awareness
  - Topology awareness
- Core units shown in the die diagram: DFU, ISU, IFU, FXU, LSU, VSU
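As a quick consistency check, the per-unit pipe counts listed above add up to the stated 16 execution pipes. The move from SMT4 to SMT8 doubles the hardware threads per core. The sketch below only restates the slide's numbers. The helper names are illustrative.

```python
# Execution pipes per POWER8 core, as listed on the slide.
PIPES = {
    "FXU": 2, "LSU": 2, "LU": 2, "FPU": 4, "VMX": 2,
    "Crypto": 1, "DFU": 1, "CR": 1, "BR": 1,
}


def total_pipes(pipes: dict) -> int:
    """Sum the per-unit pipe counts."""
    return sum(pipes.values())


def hw_threads(cores: int, smt_ways: int) -> int:
    """Hardware threads exposed to the OS: cores times SMT ways."""
    return cores * smt_ways


if __name__ == "__main__":
    print("execution pipes per core:", total_pipes(PIPES))          # 16
    print("threads per core, SMT4 -> SMT8:", hw_threads(1, 4), "->", hw_threads(1, 8))
    print("threads per 12-core chip at SMT8:", hw_threads(12, 8))   # 96
```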

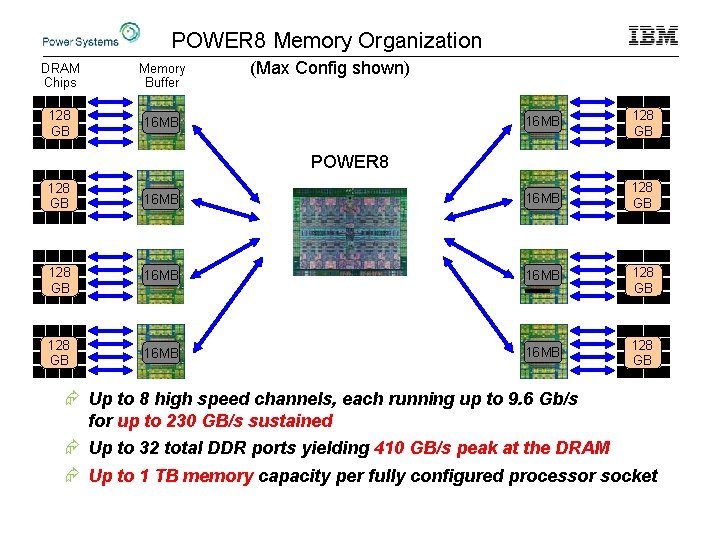

POWER8 memory organization

Diagram (maximum configuration shown): the POWER8 chip connects to memory buffer chips. Each buffer has 16 MB of cache and 128 GB of DRAM behind it.
- Up to 8 high-speed channels, each running at up to 9.6 Gb/s, for up to 230 GB/s sustained
- Up to 32 DDR ports in total, yielding 410 GB/s peak at the DRAM
- Up to 1 TB of memory capacity per fully configured processor socket
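The headline memory figures follow from the per-buffer numbers, under two labeled assumptions that the slide does not state. The first is that there is one memory buffer per channel. The second is that the DRAM is DDR3-1600 with an 8-byte data path per DDR port. With those assumptions, 32 ports give about 410 GB/s peak, and 8 buffers give 1 TB of DRAM and 128 MB of buffer cache, which matches the "up to 128 MB L4" on the processor slide.

```python
# Memory arithmetic for one fully configured POWER8 socket.
CHANNELS = 8                # high-speed channels, slide figure
DRAM_PER_BUFFER_GB = 128    # per memory buffer, slide figure
CACHE_PER_BUFFER_MB = 16    # per memory buffer, slide figure
DDR_PORTS = 32              # slide figure
SUSTAINED_GBS = 230         # slide figure
# Assumptions (not on the slide): one memory buffer per channel, and
# DDR3-1600 DRAM with an 8-byte data path per DDR port.
BUFFERS = CHANNELS
TRANSFERS_PER_S = 1600e6
BYTES_PER_TRANSFER = 8

capacity_gb = BUFFERS * DRAM_PER_BUFFER_GB             # 1024 GB, i.e. 1 TB
buffer_cache_mb = BUFFERS * CACHE_PER_BUFFER_MB        # 128 MB, the off-chip "L4"
port_peak_gbs = TRANSFERS_PER_S * BYTES_PER_TRANSFER / 1e9
dram_peak_gbs = DDR_PORTS * port_peak_gbs              # about 410 GB/s
sustained_per_channel_gbs = SUSTAINED_GBS / CHANNELS   # 28.75 GB/s

if __name__ == "__main__":
    print(f"capacity: {capacity_gb} GB, buffer cache: {buffer_cache_mb} MB")
    print(f"DRAM peak: {dram_peak_gbs:.1f} GB/s over {DDR_PORTS} ports")
    print(f"sustained per channel: {sustained_per_channel_gbs} GB/s")
```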

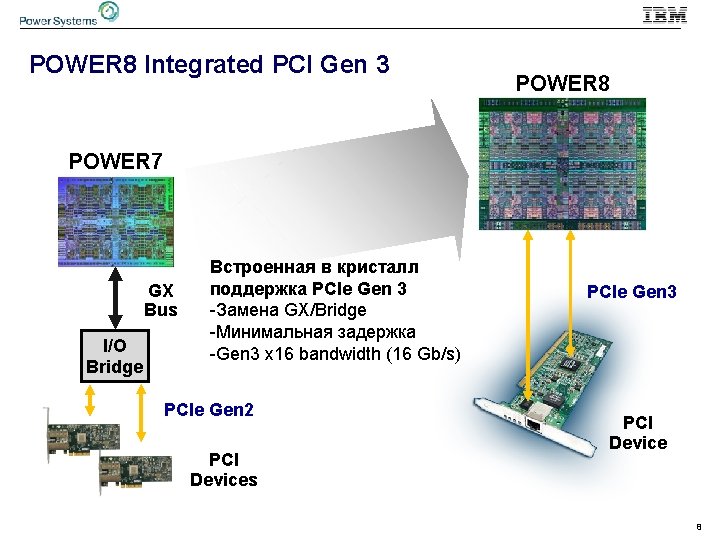

POWER8 integrated PCIe Gen3
- POWER7: GX bus → I/O bridge → PCIe Gen2 → PCI devices
- POWER8: on-chip PCIe Gen3 → PCI devices
- On-die PCIe Gen3 support:
  - Replaces the GX bus and I/O bridge
  - Minimal latency
  - Gen3 x16 bandwidth (about 16 GB/s per direction)
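The "about 16 GB/s" figure for a Gen3 x16 link can be reproduced from the Gen3 signaling rate of 8 GT/s per lane and its 128b/130b line encoding. The sketch below ignores packet and protocol overhead, so delivered throughput will be somewhat lower. The function name is illustrative.

```python
def pcie_gen3_gbytes_per_s(lanes: int) -> float:
    """Approximate one-direction PCIe Gen3 bandwidth in GB/s.

    Gen3 signals at 8 GT/s per lane with 128b/130b line encoding;
    TLP/DLLP protocol overhead is ignored here.
    """
    raw_bits_per_s = 8e9 * lanes
    payload_bits_per_s = raw_bits_per_s * 128 / 130
    return payload_bits_per_s / 8 / 1e9


if __name__ == "__main__":
    for width in (8, 16):
        print(f"Gen3 x{width}: {pcie_gen3_gbytes_per_s(width):.2f} GB/s per direction")
```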

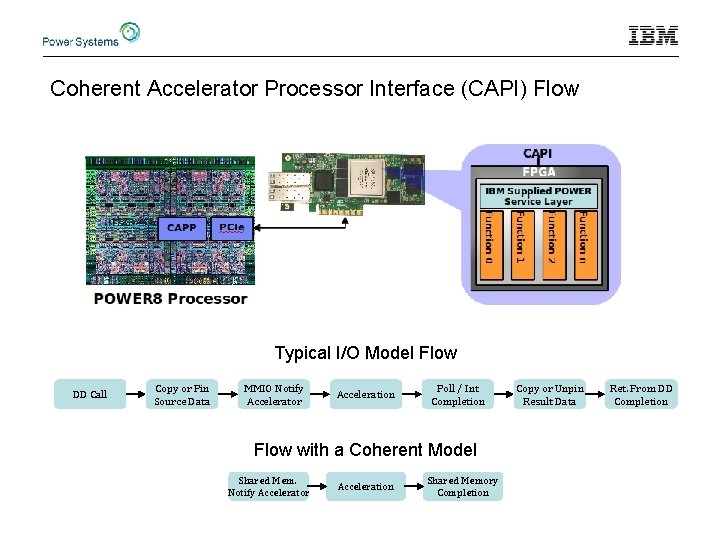

Coherent Accelerator Processor Interface (CAPI) flow
- Typical I/O model flow: device driver (DD) call → copy or pin source data → MMIO notify accelerator → acceleration → poll/interrupt completion → copy or unpin result data → return from DD → completion
- Flow with a coherent model: shared-memory notify accelerator → acceleration → shared-memory completion
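The two flows can be written down as plain step lists to show which work disappears when the accelerator shares coherent memory with the host. The sketch below only counts steps and says nothing about their individual cost. The list contents are transcribed from the slide, and the helper name is hypothetical.

```python
# Step sequences transcribed from the slide, one list per model.
TYPICAL_IO = [
    "device driver call",
    "copy or pin source data",
    "MMIO notify accelerator",
    "acceleration",
    "poll / interrupt completion",
    "copy or unpin result data",
    "return from device driver",
    "completion",
]
COHERENT = [
    "shared memory notify accelerator",
    "acceleration",
    "shared memory completion",
]


def non_compute_steps(flow: list) -> list:
    """Steps other than the accelerator's own work."""
    return [step for step in flow if step != "acceleration"]


if __name__ == "__main__":
    for name, flow in (("typical I/O", TYPICAL_IO), ("coherent", COHERENT)):
        print(f"{name}: {len(non_compute_steps(flow))} non-compute steps")
```

In the coherent model, the copy/pin, MMIO, polling and driver call/return steps are all absent. Only the shared-memory notification and completion remain around the acceleration itself.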

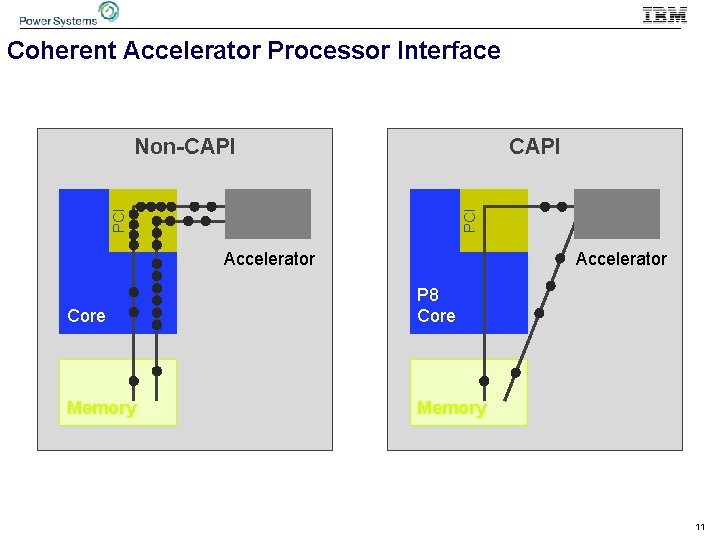

Coherent Accelerator Processor Interface

(Diagram: POWER8 cores and memory, with an accelerator attached over PCIe through CAPI, compared with a non-CAPI PCIe attachment.)

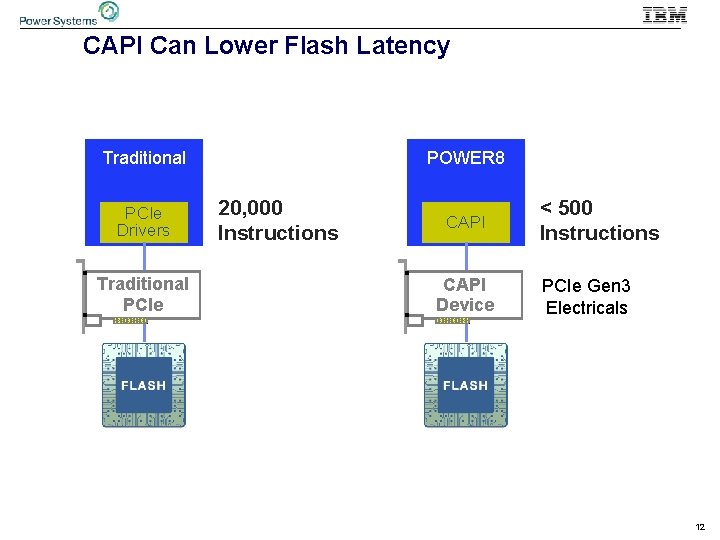

CAPI can lower flash latency
- Traditional PCIe drivers: about 20,000 instructions
- CAPI device: fewer than 500 instructions
- Both attach to POWER8 over PCIe Gen3 electricals
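The instruction counts translate into a software-path time once a clock rate and an instructions-per-cycle (IPC) value are assumed. The 3.5 GHz clock and IPC of 1 below are hypothetical round numbers, not slide data. The robust figure is the ratio: at least a 40× shorter instruction path, because the CAPI count is an upper bound.

```python
# Assumptions (not from the slide): a 3.5 GHz core retiring one
# instruction per cycle; real IPC on driver code varies widely.
CLOCK_HZ = 3.5e9
IPC = 1.0

TRADITIONAL_INSTRUCTIONS = 20_000
CAPI_INSTRUCTIONS = 500  # the slide says "< 500", so this is an upper bound


def path_time_us(instructions: int, clock_hz: float = CLOCK_HZ, ipc: float = IPC) -> float:
    """Software path time in microseconds for a given instruction count."""
    return instructions / (clock_hz * ipc) * 1e6


if __name__ == "__main__":
    ratio = TRADITIONAL_INSTRUCTIONS / CAPI_INSTRUCTIONS
    print(f"instruction-count reduction: at least {ratio:.0f}x")
    print(f"traditional: {path_time_us(TRADITIONAL_INSTRUCTIONS):.2f} us")
    print(f"CAPI: {path_time_us(CAPI_INSTRUCTIONS):.3f} us")
```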

OpenPOWER Foundation: what, how, and why. © 2015 IBM Corporation

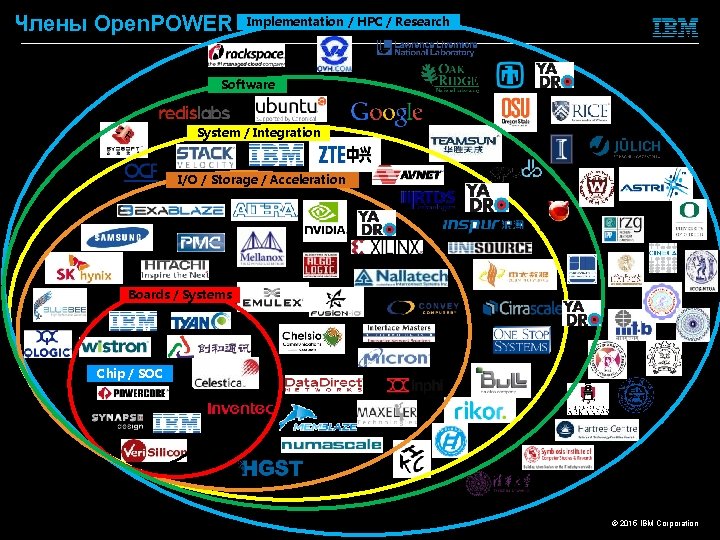

OpenPOWER members by category: Implementation / HPC / Research; Software; System / Integration; I/O / Storage / Acceleration; Boards / Systems; Chip / SoC


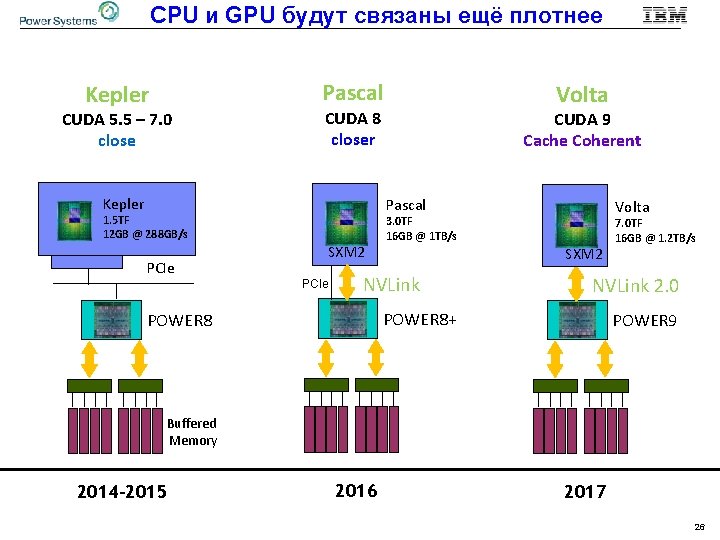

CPU and GPU will be coupled ever more tightly
- 2014–2015: POWER8 + Kepler over PCIe; CUDA 5.5–7.0 ("close"); Kepler: 1.5 TF, 12 GB @ 288 GB/s
- 2016: POWER8+ + Pascal (SXM2); CUDA 8 ("closer"); Pascal: 3.0 TF, 16 GB @ 1 TB/s
- 2017: POWER9 with buffered memory + Volta (SXM2, NVLink 2.0); CUDA 9 ("cache coherent"); Volta: 7.0 TF, 16 GB @ 1.2 TB/s
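One way to compare the three GPU generations is machine balance: memory bandwidth divided by peak compute, in bytes per flop. The sketch treats the slide's TF figures as double precision. The later 100+ PF slide states this explicitly for Volta, but for Kepler and Pascal it is an assumption.

```python
# name: (peak TFLOP/s, memory bandwidth in GB/s), figures from the slide;
# TF values are assumed to be double precision.
GPUS = {
    "Kepler": (1.5, 288),
    "Pascal": (3.0, 1000),
    "Volta": (7.0, 1200),
}


def bytes_per_flop(tflops: float, gbytes_per_s: float) -> float:
    """Machine balance: bytes of memory bandwidth per floating-point operation."""
    return (gbytes_per_s * 1e9) / (tflops * 1e12)


if __name__ == "__main__":
    for name, (tf, bw) in GPUS.items():
        print(f"{name}: {bytes_per_flop(tf, bw):.3f} bytes/flop")
```

On these numbers, Pascal has the highest balance of the three, about 0.33 bytes/flop. Volta's compute grows faster than its bandwidth, so its balance falls back to about 0.17 bytes/flop.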

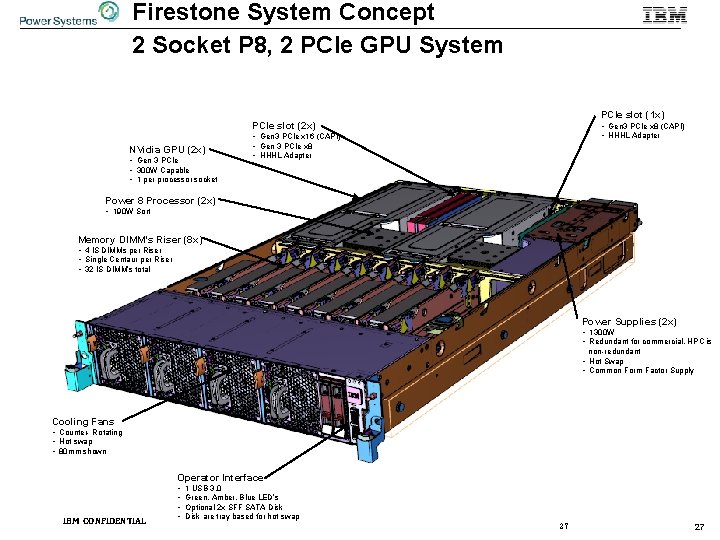

Firestone system concept: 2-socket P8, 2 PCIe GPUs
- NVIDIA GPU (2×): Gen3 PCIe; 300 W capable; 1 per processor socket
- PCIe slot (1×): Gen3 PCIe x8 (CAPI); HHHL adapter
- PCIe slots (2×): Gen3 PCIe x16 (CAPI); Gen3 PCIe x8; HHHL adapter
- POWER8 processor (2×): 190 W sort
- Memory DIMM risers (8×): 4 IS DIMMs per riser; single Centaur per riser; 32 IS DIMMs total
- Power supplies (2×): 1300 W; redundant for commercial use, non-redundant for HPC; hot swap; common form factor supply
- Cooling fans: counter-rotating; hot swap; 80 mm shown
- Operator interface: 1 USB 3.0; green, amber, and blue LEDs
- Optional 2× SFF SATA disks, tray-based for hot swap
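A rough power check for Firestone adds up only the component ratings given on the slide: two 190 W processors and two 300 W-capable GPUs. Memory risers, fans, disks and power-supply losses are deliberately left out. The result is therefore a lower bound, not a full system budget.

```python
FIRESTONE_SUPPLY_W = 1300  # per power supply, slide figure


def cpu_gpu_power_w(sockets: int, cpu_w: int, gpus: int, gpu_w: int) -> int:
    """CPU + GPU power only; memory, fans, disks and PSU losses are ignored."""
    return sockets * cpu_w + gpus * gpu_w


firestone_w = cpu_gpu_power_w(sockets=2, cpu_w=190, gpus=2, gpu_w=300)
headroom_w = FIRESTONE_SUPPLY_W - firestone_w

if __name__ == "__main__":
    print(f"CPU + GPU: {firestone_w} W; remaining on one supply: {headroom_w} W")
```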

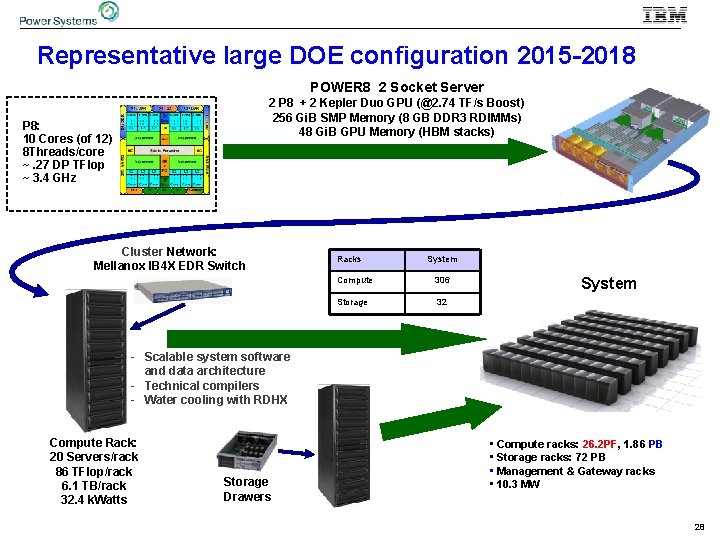

Representative large DOE configuration, 2015–2018
- POWER8 2-socket server:
  - 2 P8 + 2 Kepler Duo GPUs (@ 2.74 TF/s boost)
  - 256 GiB SMP memory (8 GB DDR3 RDIMMs)
  - 48 GiB GPU memory (HBM stacks)
  - P8: 10 cores (of 12), 8 threads/core, ~0.27 DP TFlop/s, ~3.4 GHz
- Cluster network: Mellanox IB 4X EDR switch
- Racks: 306 compute, 32 storage
- System:
  - Scalable system software and data architecture
  - Technical compilers
  - Water cooling with RDHX
- Compute rack: 20 servers/rack, 86 TFlop/rack, 6.1 TB/rack, 32.4 kW
- Storage drawers
- Compute racks: 26.2 PF, 1.86 PB
- Storage racks: 72 PB
- Management & gateway racks
- 10.3 MW
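Several of the system totals above can be reproduced from the per-node and per-rack figures. The sketch makes two assumptions that are not on the slide. The first is 8 double-precision flops per cycle per POWER8 core, chosen because it reproduces the slide's ~0.27 TFlop/s. The second is that GiB and GB are treated as interchangeable, as the slide's rounding appears to do. It does not derive the 86 TFlop/rack figure from GPU peaks, because that depends on which GPU clock the slide used.

```python
P8_CORES, P8_GHZ = 10, 3.4
DP_FLOPS_PER_CYCLE = 8        # assumption: reproduces the slide's ~0.27 TF/s
SERVERS_PER_RACK = 20
NODE_MEM_GIB = 256 + 48       # SMP memory + GPU memory per server
COMPUTE_RACKS = 306
RACK_TFLOPS = 86              # slide figure
RACK_KW = 32.4                # slide figure

p8_gflops = P8_CORES * P8_GHZ * DP_FLOPS_PER_CYCLE        # ~272 GFlop/s per socket
rack_mem_tb = SERVERS_PER_RACK * NODE_MEM_GIB / 1000      # ~6.1 TB (GiB taken as GB)
system_pf = COMPUTE_RACKS * RACK_TFLOPS / 1000            # ~26.3 PF
system_mem_pb = COMPUTE_RACKS * rack_mem_tb / 1000        # ~1.86 PB
compute_mw = COMPUTE_RACKS * RACK_KW / 1000               # ~9.9 MW of the 10.3 MW total

if __name__ == "__main__":
    print(f"P8 socket: {p8_gflops:.0f} GFlop/s")
    print(f"rack memory: {rack_mem_tb:.2f} TB")
    print(f"compute: {system_pf:.1f} PF, {system_mem_pb:.2f} PB, {compute_mw:.1f} MW")
```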

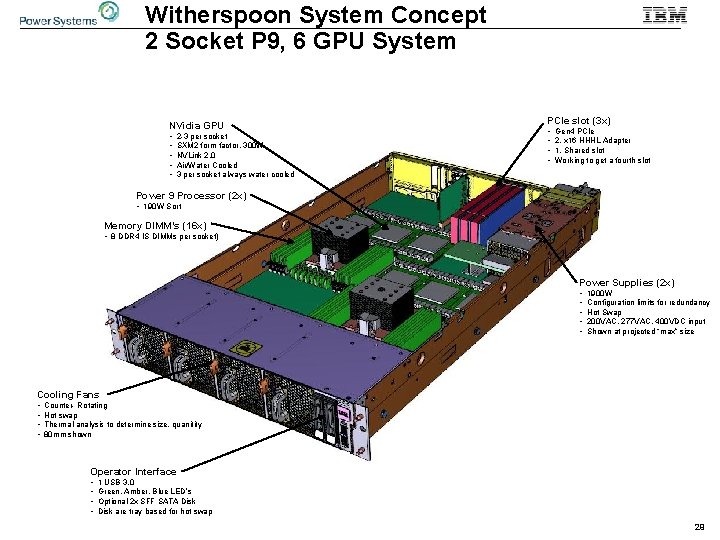

Witherspoon system concept: 2-socket P9, 6-GPU system
- NVIDIA GPU: 2–3 per socket; SXM2 form factor, 300 W; NVLink 2.0; air or water cooled (3 per socket always water cooled)
- PCIe slots (3×): Gen4 PCIe; 2 × x16 HHHL adapters; 1 shared slot; working to get a fourth slot
- POWER9 processor (2×): 190 W sort
- Memory DIMMs (16×): 8 DDR4 IS DIMMs per socket
- Power supplies (2×): 1900 W; configuration limits for redundancy; hot swap; 200 VAC, 277 VAC, 400 VDC input; shown at projected "max" size
- Cooling fans: counter-rotating; hot swap; thermal analysis to determine size and quantity; 80 mm shown
- Operator interface: 1 USB 3.0; green, amber, and blue LEDs
- Optional 2× SFF SATA disks, tray-based for hot swap
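Adding up only the CPU and GPU ratings from the Witherspoon slide suggests one plausible reading of "configuration limits for redundancy". This reading is our inference and is not stated on the slide. A 4-GPU build fits within one 1900 W supply, but a 6-GPU build does not, even before memory, fans and conversion losses are counted. The sketch ignores those other loads, so real margins are smaller.

```python
SUPPLY_W = 1900          # per power supply, slide figure
CPU_W, GPU_W = 190, 300  # slide figures


def cpu_gpu_power_w(gpus_per_socket: int, sockets: int = 2) -> int:
    """CPU + GPU power only; memory, fans, disks and PSU losses are ignored."""
    return sockets * CPU_W + sockets * gpus_per_socket * GPU_W


def fits_one_supply(gpus_per_socket: int) -> bool:
    """True if the CPU + GPU load alone fits on a single supply."""
    return cpu_gpu_power_w(gpus_per_socket) <= SUPPLY_W


if __name__ == "__main__":
    for per_socket in (2, 3):
        total = cpu_gpu_power_w(per_socket)
        print(f"{2 * per_socket} GPUs: {total} W, fits on one supply: {fits_one_supply(per_socket)}")
```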

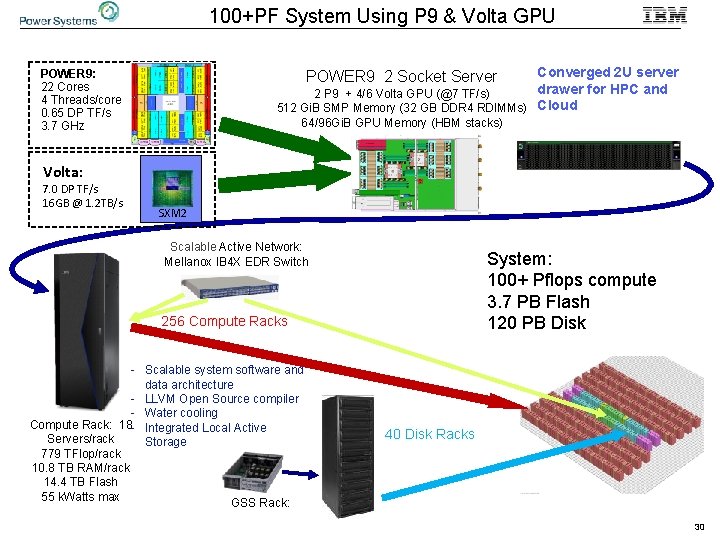

100+ PF system using P9 and Volta GPUs
- Converged 2U server drawer for HPC and cloud
- POWER9 2-socket server:
  - 2 P9 + 4/6 Volta GPUs (@ 7 TF/s)
  - 512 GiB SMP memory (32 GB DDR4 RDIMMs)
  - 64/96 GiB GPU memory (HBM stacks)
  - POWER9: 22 cores, 4 threads/core, 0.65 DP TF/s, 3.7 GHz
  - Volta: 7.0 DP TF/s, 16 GB @ 1.2 TB/s, SXM2
- Scalable active network: Mellanox IB 4X EDR switch
- System: 100+ PFlops compute, 3.7 PB flash, 120 PB disk, 256 compute racks
  - Scalable system software and data architecture
  - LLVM open-source compiler
  - Water cooling
- Compute rack: 18 servers/rack, 779 TFlop/rack, 10.8 TB RAM/rack, 14.4 TB flash, 55 kW max
  - Integrated local active storage
- GSS racks: 40 disk racks
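The rack and system figures are consistent with the 6-GPU node configuration. The sketch assumes 8 double-precision flops per cycle per POWER9 core, chosen because it reproduces the slide's 0.65 TF/s per socket. It then scales the per-node peak up to the rack and the 256-rack system. The resulting peak is roughly 200 PF, which fits the "100+ PF" label, and the flash total matches the slide's 3.7 PB.

```python
P9_CORES, P9_GHZ = 22, 3.7
DP_FLOPS_PER_CYCLE = 8           # assumption: reproduces the slide's 0.65 TF/s
VOLTA_TF = 7.0                   # DP TF/s per GPU, slide figure
SERVERS_PER_RACK, COMPUTE_RACKS = 18, 256
FLASH_TB_PER_RACK = 14.4


def node_tf(gpus: int, sockets: int = 2) -> float:
    """Peak DP TF/s of one server: CPU sockets plus Volta GPUs."""
    p9_tf = P9_CORES * P9_GHZ * DP_FLOPS_PER_CYCLE / 1000
    return sockets * p9_tf + gpus * VOLTA_TF


rack_tf = SERVERS_PER_RACK * node_tf(6)                      # ~779 TF/rack
system_pf = COMPUTE_RACKS * rack_tf / 1000                   # ~200 PF peak
system_flash_pb = COMPUTE_RACKS * FLASH_TB_PER_RACK / 1000   # ~3.7 PB

if __name__ == "__main__":
    print(f"node (6 GPUs): {node_tf(6):.1f} TF, (4 GPUs): {node_tf(4):.1f} TF")
    print(f"rack: {rack_tf:.0f} TF, system: {system_pf:.0f} PF, flash: {system_flash_pb:.2f} PB")
```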
