Open Fabrics Windows Development and Microsoft Windows CCS

OpenFabrics Windows Development and Microsoft Windows CCS 2003, Part 2

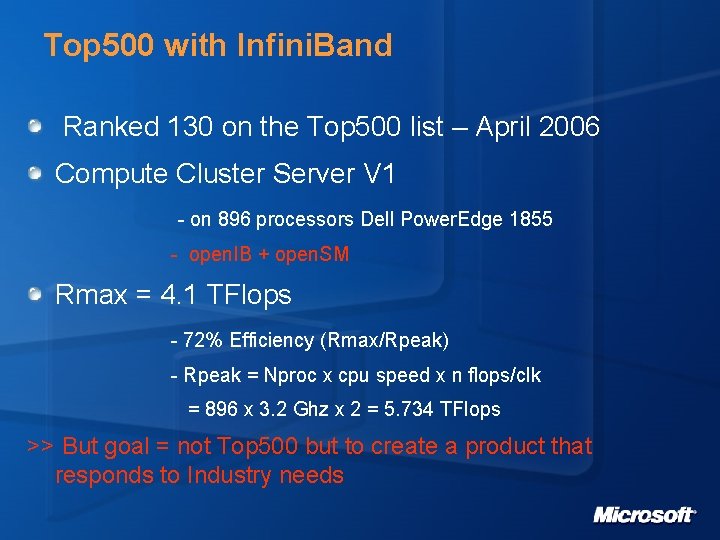

Top 500 with InfiniBand

- Ranked 130 on the Top 500 list, April 2006
- Compute Cluster Server V1 on 896 processors
- Dell PowerEdge 1855
- OpenIB + OpenSM
- Rmax = 4.1 TFlops
- 72% efficiency (Rmax/Rpeak)
- Rpeak = Nproc x CPU speed x n flops/clk = 896 x 3.2 GHz x 2 = 5.734 TFlops

The goal, however, is not a Top 500 ranking but a product that responds to industry needs.
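The Rpeak and efficiency arithmetic on this slide can be checked with a few lines of Python. This is only a sketch of the formula as stated on the slide; the function names are illustrative and not part of any CCS tool. With Rmax rounded to 4.1 TFlops the ratio comes out near 71.5%, close to the 72% quoted.

```python
def rpeak_tflops(n_proc: int, clock_ghz: float, flops_per_clock: int) -> float:
    """Theoretical peak: processors x clock speed (GHz) x flops per clock."""
    gflops = n_proc * clock_ghz * flops_per_clock
    return gflops / 1000.0  # convert GFlops to TFlops


def efficiency(rmax_tflops: float, rpeak: float) -> float:
    """Fraction of the theoretical peak actually achieved (Rmax/Rpeak)."""
    return rmax_tflops / rpeak


# Figures taken from the slide: 896 processors, 3.2 GHz, 2 flops per clock.
rpeak = rpeak_tflops(896, 3.2, 2)
eff = efficiency(4.1, rpeak)

print(f"Rpeak      = {rpeak:.3f} TFlops")
print(f"Efficiency = {eff:.1%}")
```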

Partners

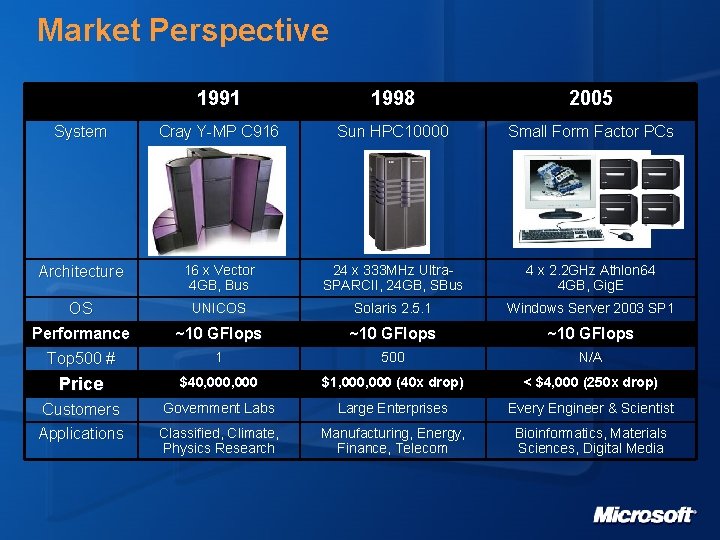

Market Perspective

| | 1991 | 1998 | 2005 |
|---|---|---|---|
| System | Cray Y-MP C916 | Sun HPC10000 | Small Form Factor PCs |
| Architecture | 16 x Vector, 4 GB, Bus | 24 x 333 MHz UltraSPARC II, 24 GB, SBus | 4 x 2.2 GHz Athlon 64, 4 GB, GigE |
| OS | UNICOS | Solaris 2.5.1 | Windows Server 2003 SP1 |
| Performance | ~10 GFlops | ~10 GFlops | ~10 GFlops |
| Top 500 # | 1 | 500 | N/A |
| Price | $40,000,000 | $1,000,000 (40x drop) | < $4,000 (250x drop) |
| Customers | Government Labs | Large Enterprises | Every Engineer & Scientist |
| Applications | Classified, Climate, Physics Research | Manufacturing, Energy, Finance, Telecom | Bioinformatics, Materials Sciences, Digital Media |

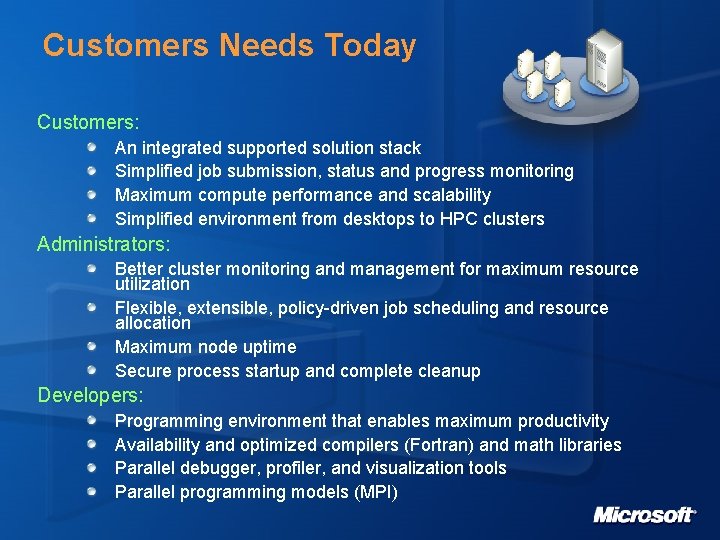

Customer Needs Today

Customers:
- An integrated, supported solution stack
- Simplified job submission, status and progress monitoring
- Maximum compute performance and scalability
- Simplified environment from desktops to HPC clusters

Administrators:
- Better cluster monitoring and management for maximum resource utilization
- Flexible, extensible, policy-driven job scheduling and resource allocation
- Maximum node uptime
- Secure process startup and complete cleanup

Developers:
- Programming environment that enables maximum productivity
- Availability of optimized compilers (Fortran) and math libraries
- Parallel debugger, profiler, and visualization tools
- Parallel programming models (MPI)

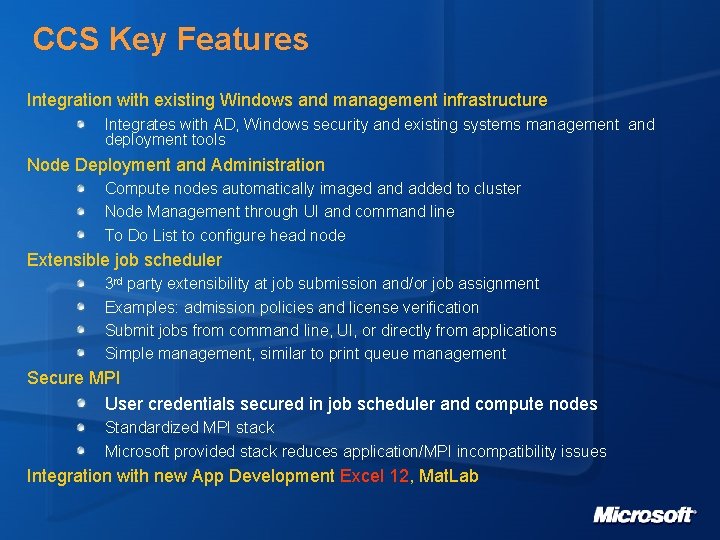

CCS Key Features

Integration with existing Windows and management infrastructure:
- Integrates with AD, Windows security, and existing systems management and deployment tools

Node deployment and administration:
- Compute nodes automatically imaged and added to the cluster
- Node management through UI and command line
- To Do List to configure the head node

Extensible job scheduler:
- 3rd-party extensibility at job submission and/or job assignment
- Examples: admission policies and license verification
- Submit jobs from the command line, the UI, or directly from applications
- Simple management, similar to print queue management

Secure MPI:
- User credentials secured in the job scheduler and compute nodes

Standardized MPI stack:
- Microsoft-provided stack reduces application/MPI incompatibility issues

Integration with new application development:
- Excel 12, MATLAB

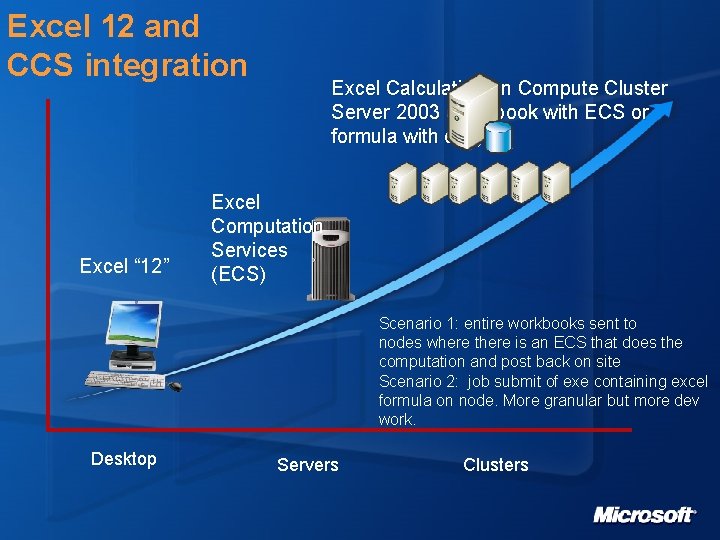

Excel 12 and CCS integration

Excel “12”: Excel calculation on Compute Cluster Server 2003 (workbook with ECS, or formula with exe), using Excel Computation Services (ECS).

- Scenario 1: entire workbooks are sent to nodes where an ECS does the computation and posts the results back on site.
- Scenario 2: job submission of an exe containing the Excel formula on a node. More granular, but more development work.

Diagram labels: Desktop, Servers, Clusters

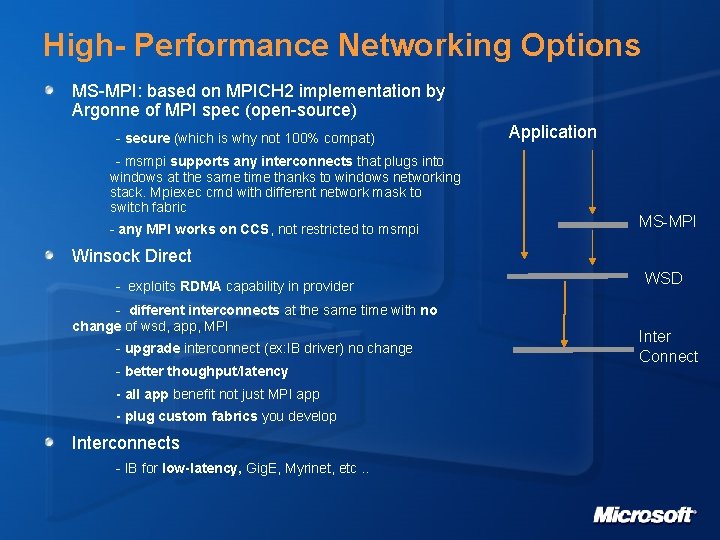

High-Performance Networking Options

MS-MPI:
- Based on Argonne's MPICH2 implementation of the MPI spec (open source)
- Secure (which is why it is not 100% compatible)
- Supports any interconnects that plug into Windows, at the same time, thanks to the Windows networking stack; the mpiexec command takes a different network mask to switch fabric
- Any MPI works on CCS; it is not restricted to MS-MPI

Winsock Direct:
- Exploits the RDMA capability in the provider
- Different interconnects at the same time with no change to WSD, the application, or MPI
- Upgrading an interconnect (e.g., the IB driver) requires no change
- Better throughput/latency
- All applications benefit, not just MPI applications
- Plug in custom fabrics you develop

Interconnects:
- IB for low latency, GigE, Myrinet, etc.

Diagram labels: Application, MS-MPI, WSD, Interconnect

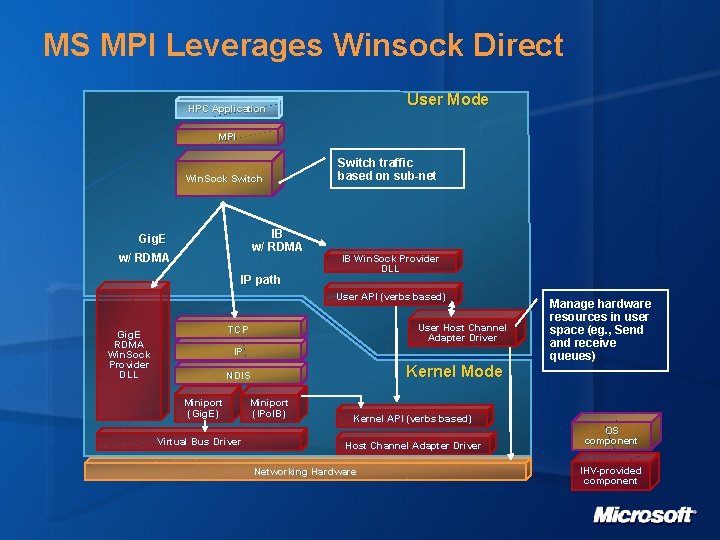

MS-MPI Leverages Winsock Direct

Diagram of the networking stack (legend: OS component, IHV-provided component):

- User mode: HPC Application, MPI, WinSock Switch (switches traffic based on subnet)
- IB w/ RDMA path: IB WinSock Provider DLL, User API (verbs based), User Host Channel Adapter Driver
- GigE w/ RDMA path: GigE RDMA WinSock Provider DLL
- IP path: TCP, IP, NDIS, Miniport (GigE), Miniport (IPoIB)
- Kernel mode: Virtual Bus Driver, Kernel API (verbs based), Host Channel Adapter Driver
- Networking hardware

Note on the diagram: manage hardware resources in user space (e.g., send and receive queues).
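The idea of switching traffic based on subnet can be illustrated with a small lookup. This is only a sketch of the concept, not how the WinSock Switch is implemented; the subnet addresses, the table, and the `select_path` function are hypothetical and chosen for illustration.

```python
import ipaddress

# Hypothetical subnet-to-fabric table; these addresses are made up.
FABRIC_BY_SUBNET = {
    ipaddress.ip_network("10.1.0.0/255.255.0.0"): "IB w/ RDMA",
    ipaddress.ip_network("10.2.0.0/255.255.0.0"): "GigE w/ RDMA",
}
DEFAULT_PATH = "IP path"  # fall back to the regular TCP/IP stack


def select_path(dest: str) -> str:
    """Return the fabric whose subnet contains the destination address."""
    addr = ipaddress.ip_address(dest)
    for network, fabric in FABRIC_BY_SUBNET.items():
        if addr in network:
            return fabric
    return DEFAULT_PATH


for host in ("10.1.4.20", "10.2.0.7", "192.168.1.5"):
    print(host, "->", select_path(host))
```

Because the choice depends only on the destination subnet, the application and MPI layers stay unchanged when a node gains or loses a fabric, which is the point the slide makes.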

Performance Tuning

Each application is different (parallel, small-message passing, large-message passing) and is sensitive to different critical factors. A unique set of mechanisms and parameters needs to be applied to each application for optimal performance.

Critical factors:
- Network bandwidth, network latency, physical RAM, CPU speed, number of CPUs per node, file system speed, job scheduler

Mechanisms:
- RDMA zero copy for better throughput (for long transfers)
- Offloads (TCP, checksum, ...)

Driver parameters:
- MPICH_SOCKET_SBUFFER_SIZE set to zero for better throughput
- IBWSD_POLL ~500 for low latency
- GigE: CPU interrupt modulation off for low CPU usage
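As a minimal sketch, the two named parameters could be collected into a per-application environment before a job is launched. This assumes they are read as environment variables, which the slide does not state; the `tuning_env` helper and its flags are hypothetical, and 500 stands in for the approximate value given above.

```python
import os
from typing import Dict, Optional


def tuning_env(base: Optional[Dict[str, str]] = None,
               high_throughput: bool = False,
               low_latency: bool = False) -> Dict[str, str]:
    """Return a copy of the environment with the slide's tuning values applied.

    Assumption: both parameters are environment variables; only their
    names and values come from the slide.
    """
    env = dict(os.environ if base is None else base)
    if high_throughput:
        # Zero send buffer size, listed on the slide for better throughput.
        env["MPICH_SOCKET_SBUFFER_SIZE"] = "0"
    if low_latency:
        # Polling value of roughly 500, listed on the slide for low latency.
        env["IBWSD_POLL"] = "500"
    return env


# Example: settings for an application dominated by small messages.
small_msg_env = tuning_env(base={}, low_latency=True)
print(small_msg_env)
```

The resulting dictionary can be passed as the `env` argument of `subprocess.run` when launching the job, so each application gets its own settings without changing the machine-wide environment.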

![InfiniBand in Microsoft’s Labs](http://slidetodoc.com/presentation_image/2c0b7079ee59169d5cf408a1edef7902/image-11.jpg)

InfiniBand in Microsoft’s Labs

HPC team:
- 6 clusters [6-20 nodes] with IB equipment for testing

Other teams with smaller-scale clusters:
- Windows Networking
- Windows Serviceability
- Windows Performance
- SQL Server

WHQL for InfiniBand

Background:
- WHQL = Windows Hardware Quality Labs
- Why WHQL? A high-quality set of drivers for Windows
- Driven by the Windows Networking team
- http://www.microsoft.com/whdc/whql/default.mspx

Details:
- A test suite for WSD providers and IP over IB miniport drivers
- Includes functional tests only (no code coverage)
- Signature covers networking only (no storage)

High Speed Networking: Next Steps

- Contribute back to the open-source project (MPICH2/Argonne National Laboratory) with MPI perf enhancements. HPC is the first team at Microsoft contributing to an open-source project. [0-6 months]
- Performance tuning whitepaper coming soon. [0-3 months]
- Windows Networking releases a QFE with perf enhancements. [0-3 months]
- More perf work in general for WSD, MS-MPI, OpenIB
- More network diagnosis tools

External Resources

- Microsoft HPC Web Site: http://www.microsoft.com/hpc
- Microsoft HPC Community Site: http://windowshpc.net/default.aspx
- Partner Information: http://www.microsoft.com/windowsserver2003/ccs/partnerlist.mspx
- Develop Turbocharged Apps for Windows Compute Cluster Server: http://msdn.microsoft.com/msdnmag/issues/06/04/ClusterComputing/default.aspx
- Argonne National Lab’s MPI Web Site: http://www-unix.mcs.anl.gov/mpi/
- Tuning MPI Programs for Peak Performance: http://mpc.uci.edu/wget/www-unix.mcs.anl.gov/mpi/tutorial/perf/mpiperf/index.htm
- Winsock Direct Stability Patch: http://support.microsoft.com/kb/910481 and http://www.microsoft.com/downloads/details.aspx?familyid=A747A23D-CF52-493C-943B-B95051F42D68&displaylang=en

Q&A

© 2005 Microsoft Corporation. All rights reserved. This presentation is for informational purposes only. Microsoft makes no warranties, express or implied, in this summary.

- Slides: 16