On the Potential of Automated Algorithm Configuration

- Slides: 1

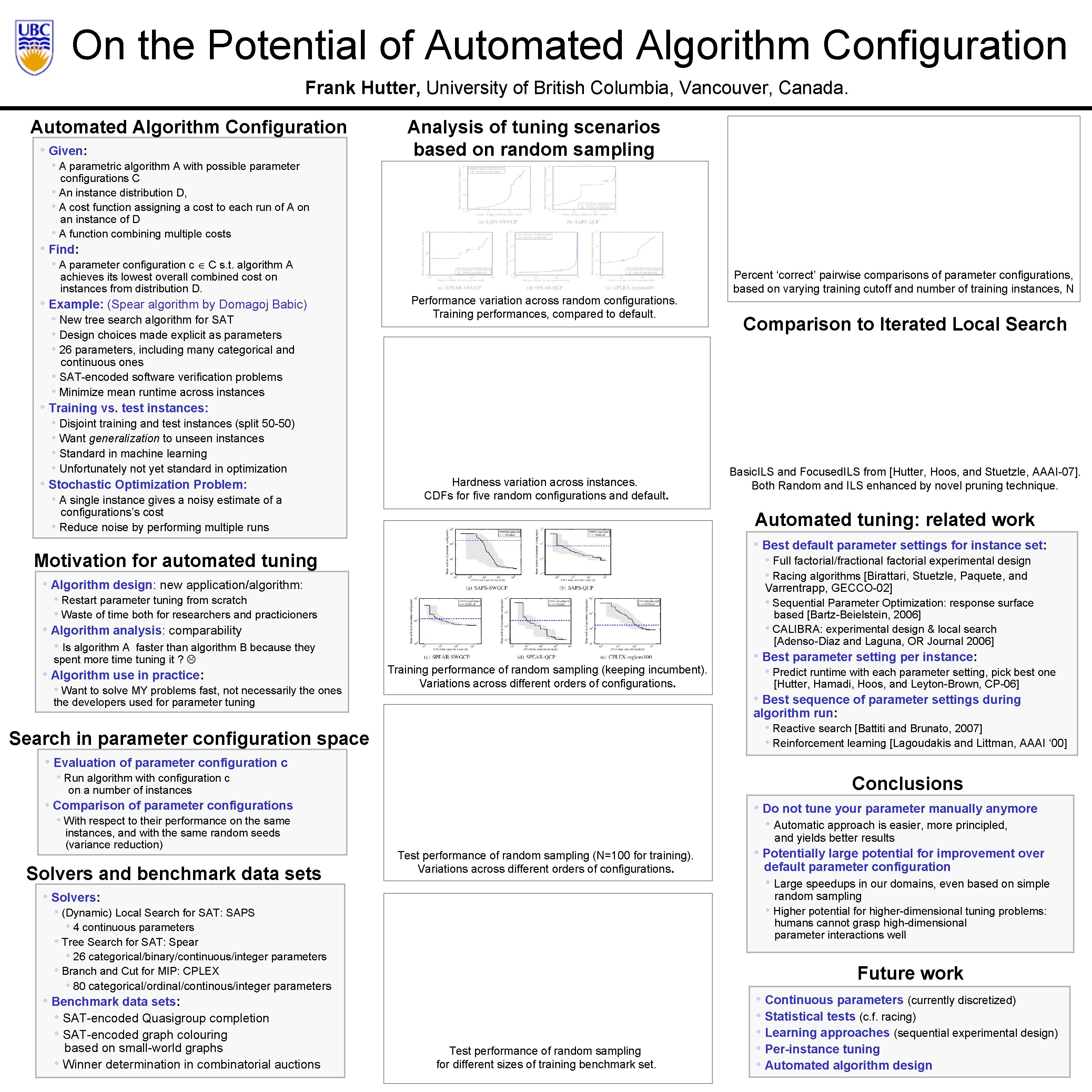

Frank Hutter, University of British Columbia, Vancouver, Canada.

Automated Algorithm Configuration

- Given:
  - A parametric algorithm A with possible parameter configurations C
  - An instance distribution D
  - A cost function assigning a cost to each run of A on an instance of D
  - A function combining multiple costs
- Find:
  - A parameter configuration c ∈ C such that algorithm A achieves its lowest overall combined cost on instances from distribution D.
- Example: (Spear algorithm by Domagoj Babic)
  - New tree search algorithm for SAT
  - Design choices made explicit as parameters
  - 26 parameters, including many categorical and continuous ones
  - SAT-encoded software verification problems
  - Minimize mean runtime across instances
- Training vs. test instances:
  - Disjoint training and test instances (split 50-50)
  - Want generalization to unseen instances
  - Standard in machine learning
  - Unfortunately not yet standard in optimization
- Stochastic Optimization Problem:
  - A single instance gives a noisy estimate of a configuration's cost
  - Reduce noise by performing multiple runs

Motivation for automated tuning

- Algorithm design: new application/algorithm:
  - Restart parameter tuning from scratch
  - Waste of time both for researchers and practitioners
- Algorithm analysis: comparability
  - Is algorithm A faster than algorithm B because they spent more time tuning it?
- Algorithm use in practice:
  - Want to solve MY problems fast, not necessarily the ones the developers used for parameter tuning

Automated tuning: related work

- Best default parameter settings for instance set:
  - Full factorial/fractional factorial experimental design
  - Racing algorithms [Birattari, Stuetzle, Paquete, and Varrentrapp, GECCO-02]
  - Sequential Parameter Optimization: response surface based [Bartz-Beielstein, 2006]
  - CALIBRA: experimental design & local search [Adenso-Diaz and Laguna, OR Journal 2006]
- Best parameter setting per instance:
  - Predict runtime with each parameter setting, pick best one [Hutter, Hamadi, Hoos, and Leyton-Brown, CP-06]
- Best sequence of parameter settings during algorithm run:
  - Reactive search [Battiti and Brunato, 2007]
  - Reinforcement learning [Lagoudakis and Littman, AAAI '00]

Search in parameter configuration space

- Evaluation of parameter configuration c
  - Run algorithm with configuration c on a number of instances
- Comparison of parameter configurations
  - With respect to their performance on the same instances, and with the same random seeds (variance reduction)

Solvers and benchmark data sets

- Solvers:
  - (Dynamic) Local Search for SAT: SAPS
    - 4 continuous parameters
  - Tree Search for SAT: Spear
    - 26 categorical/binary/continuous/integer parameters
  - Branch and Cut for MIP: CPLEX
    - 80 categorical/ordinal/continuous/integer parameters
- Benchmark data sets:
  - SAT-encoded Quasigroup completion
  - SAT-encoded graph colouring based on small-world graphs
  - Winner determination in combinatorial auctions
- Potentially large room for improvement over the default parameter configuration

Analysis of tuning scenarios based on random sampling

- Figure: Performance variation across random configurations. Training performances, compared to default.
- Figure: Hardness variation across instances. CDFs for five random configurations and default.
- Figure: Percent ‘correct’ pairwise comparisons of parameter configurations, based on varying training cutoff and number of training instances, N.
- Figure: Training performance of random sampling (keeping incumbent). Variations across different orders of configurations.
- Figure: Test performance of random sampling (N=100 for training). Variations across different orders of configurations.
- Figure: Test performance of random sampling for different sizes of training benchmark set.

Comparison to Iterated Local Search

- Experimental setup: BasicILS and FocusedILS from [Hutter, Hoos, and Stuetzle, AAAI-07]. Both Random and ILS enhanced by novel pruning technique.

Conclusions

- Do not tune your parameters manually anymore
  - Automatic approach is easier, more principled, and yields better results
- Large speedups in our domains, even based on simple random sampling
- Higher potential for higher-dimensional tuning problems: humans cannot grasp high-dimensional parameter interactions well

Future work

- Continuous parameters (currently discretized)
- Statistical tests (cf. racing)
- Learning approaches (sequential experimental design)
- Per-instance tuning
- Automated algorithm design
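
The random-sampling setup described on this poster (evaluate every configuration on the same training instances with the same random seeds, keep the incumbent, then check it on disjoint test instances) might be sketched as follows. This is a minimal illustration only, not the poster's implementation: `PARAM_SPACE`, `DEFAULT`, `HEURISTIC_FACTOR`, and the synthetic `run_algorithm` are hypothetical stand-ins for a real solver such as Spear, and the "runtime" is made up so that the example runs without any external solver.

```python
import random
from statistics import mean

# Hypothetical discretized parameter space (NOT Spear's real parameters).
PARAM_SPACE = {
    "heuristic": ["a", "b", "c"],
    "restart_factor": [1.1, 1.5, 2.0],
    "random_walk_prob": [0.0, 0.1, 0.2],
}
HEURISTIC_FACTOR = {"a": 1.0, "b": 0.6, "c": 1.8}  # assumed, for illustration
DEFAULT = {"heuristic": "c", "restart_factor": 2.0, "random_walk_prob": 0.2}


def run_algorithm(config, instance, seed):
    """Synthetic stand-in for one run of algorithm A; returns a fake runtime."""
    # Instance hardness depends only on the instance id.
    hardness = 1.0 + 10.0 * random.Random(instance).random()
    # Run-to-run noise depends only on (instance, seed), not on the config,
    # so all configurations see the same noise on the same (instance, seed).
    jitter = 0.5 + random.Random(instance * 1_000_003 + seed).random()
    quality = (
        HEURISTIC_FACTOR[config["heuristic"]]
        * config["restart_factor"]
        * (1.0 + config["random_walk_prob"])
    )
    return hardness * quality * jitter


def mean_cost(config, instances, seeds):
    """Combined cost: mean runtime over all (instance, seed) pairs."""
    return mean(run_algorithm(config, i, s) for i in instances for s in seeds)


def sample_config(rng):
    """Draw one configuration uniformly at random from the discretized space."""
    return {name: rng.choice(values) for name, values in PARAM_SPACE.items()}


def random_sampling(train, seeds, n_configs, rng):
    """Random sampling that keeps the incumbent (best configuration so far)."""
    incumbent, best_cost = DEFAULT, mean_cost(DEFAULT, train, seeds)
    for _ in range(n_configs):
        candidate = sample_config(rng)
        # Same instances and same seeds for every candidate (variance reduction).
        cost = mean_cost(candidate, train, seeds)
        if cost < best_cost:
            incumbent, best_cost = candidate, cost
    return incumbent, best_cost


rng = random.Random(0)
instances = list(range(1, 21))
rng.shuffle(instances)
train, test = instances[:10], instances[10:]  # disjoint 50-50 split
SEEDS = [1, 2, 3]

incumbent, train_cost = random_sampling(train, SEEDS, 50, rng)
print("incumbent:", incumbent)
print("train cost:", train_cost, "default:", mean_cost(DEFAULT, train, SEEDS))
print("test cost:", mean_cost(incumbent, test, SEEDS),
      "default:", mean_cost(DEFAULT, test, SEEDS))
```

Because the incumbent starts as the default and is only replaced by a strictly cheaper candidate, its training cost can never exceed the default's; whether that carries over to the test instances is exactly the generalization question the poster raises.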