On the Impossibility of Obfuscating Programs Boaz Barak

On the (Im)possibility of Obfuscating Programs. Boaz Barak, Oded Goldreich, Russell Impagliazzo, Steven Rudich, Amit Sahai, Salil Vadhan and Ke Yang. Presented by Shai Rubin, Security Seminar, Fall 2003


Theory/Practice “Gap”

- In theory [1]: there is no good algorithm for obfuscating programs.
- In practice: hackers successfully obfuscate viruses, researchers successfully obfuscate programs [2, 4], and companies sell obfuscation products [3].

Which side are you on?

Why Am I Giving This Talk?

- To understand the theory/practice gap.
- It is an example of a good paper.
- It is an example of interesting research:
  - it shows how to model a practical problem in terms of complexity theory;
  - it illustrates techniques used by theoreticians.
- I did not understand the paper, and I thought that explaining it to others would help me understand it.
- To hear your opinion (free consulting).
- To learn how to pronounce ‘obfuscation’.

Disclaimer

- This paper is mostly about complexity theory.
- I’m not a complexity theory expert.
- I present and discuss only the main result of the paper.
- The paper describes extensions to the main result, which I did not fully explore.
- Hence, some of my interpretations, conclusions, and suggestions may be wrong or incomplete.
- You are welcome to catch me.

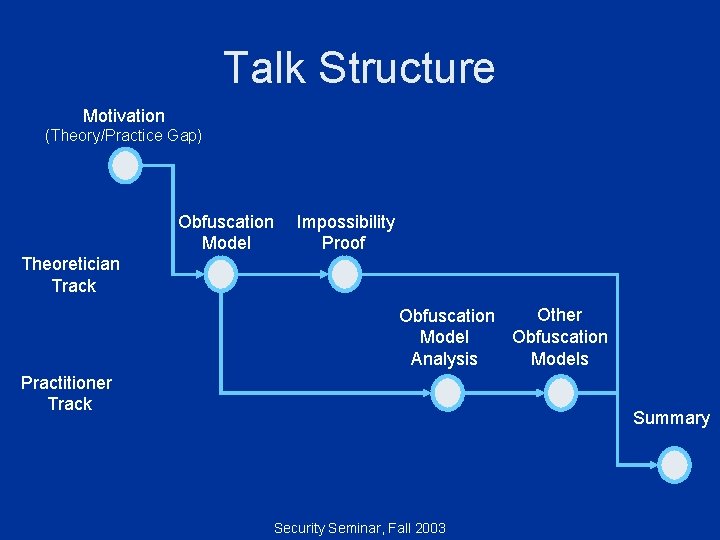

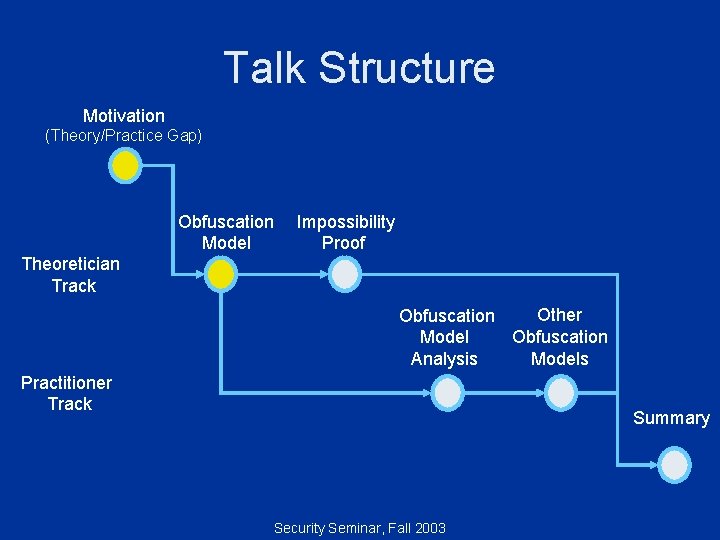

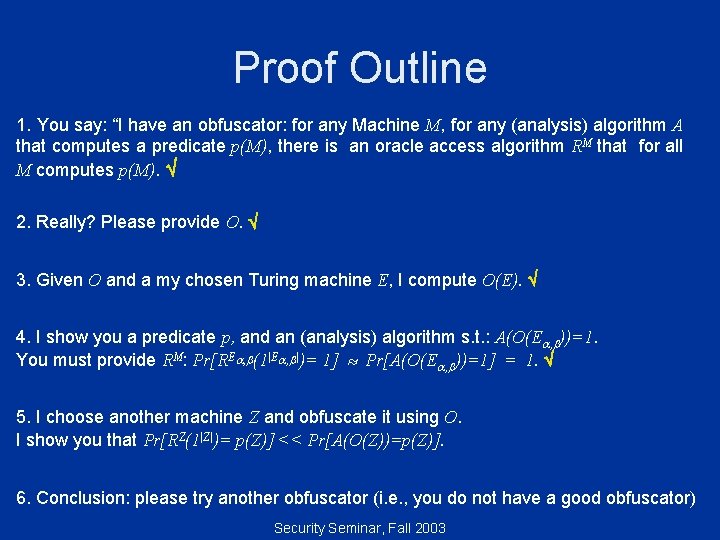

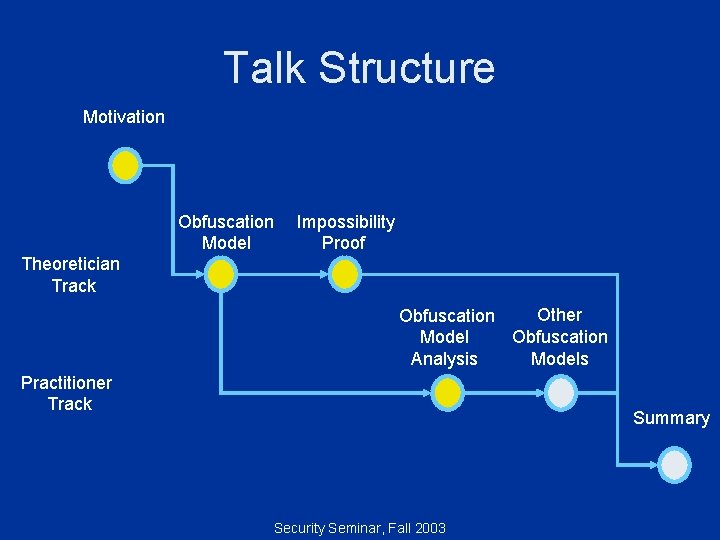

Talk Structure

- Motivation (Theory/Practice Gap)
- Obfuscation Model
- Impossibility Proof
- Theoretician Track
- Other Obfuscation Models
- Analysis
- Practitioner Track
- Summary

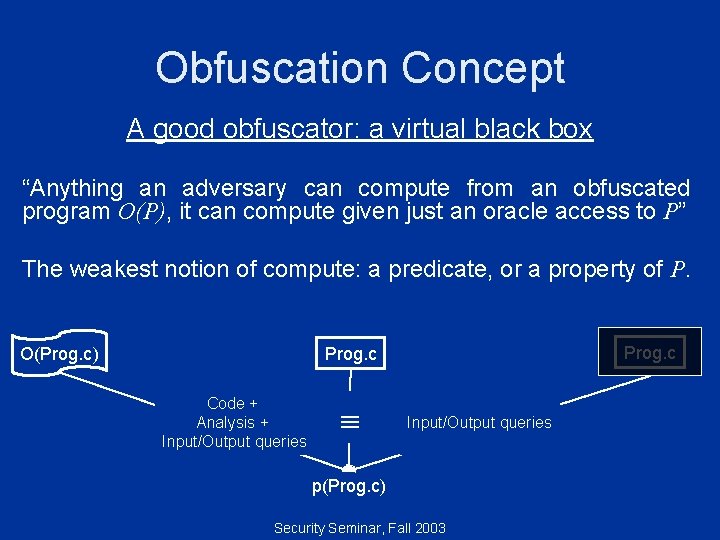

Obfuscation Concept

A good obfuscator is a virtual black box: “Anything an adversary can compute from an obfuscated program O(P), it can compute given just oracle access to P.”

The weakest notion of “compute” is computing a predicate, i.e., a property of P.

[Figure: Prog.c is obfuscated into O(Prog.c); from O(Prog.c), code + analysis + input/output queries yield p(Prog.c).]

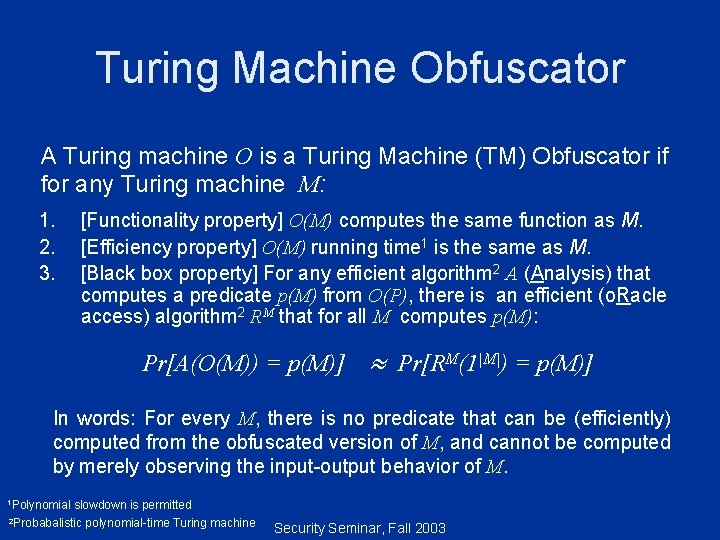

Turing Machine Obfuscator

A Turing machine O is a Turing machine (TM) obfuscator if for every Turing machine M:

1. [Functionality property] O(M) computes the same function as M.
2. [Efficiency property] The running time of O(M) is the same as that of M.¹
3. [Black-box property] For every efficient² (analysis) algorithm A that computes a predicate p(M) from O(M), there is an efficient² oracle-access algorithm R such that for all M, $R^M$ computes p(M):
   $$\Pr[A(O(M)) = p(M)] \approx \Pr[R^M(1^{|M|}) = p(M)]$$

In words: for every M, there is no predicate that can be efficiently computed from the obfuscated version of M but cannot be computed by merely observing the input-output behavior of M.

¹ Polynomial slowdown is permitted.
² Probabilistic polynomial-time Turing machine.


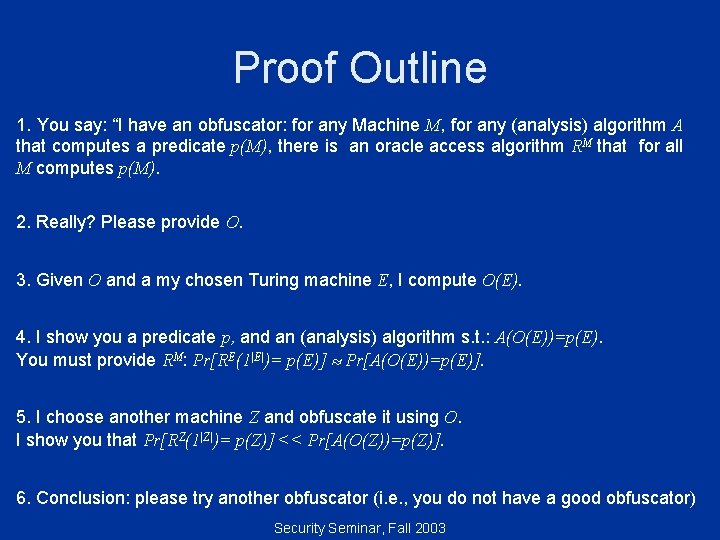

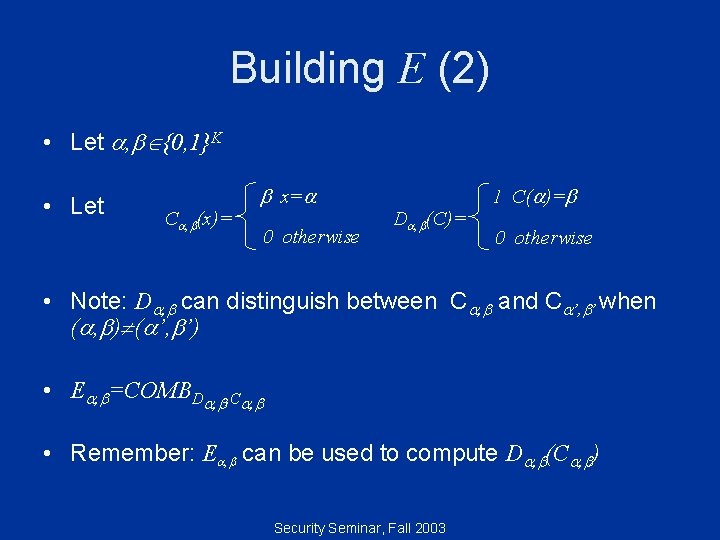

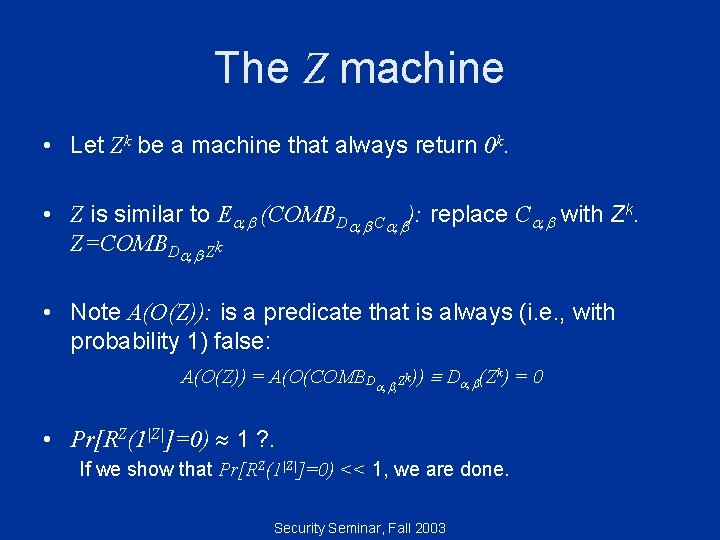

Proof Outline

1. You say: “I have an obfuscator O: for any machine M and any (analysis) algorithm A that computes a predicate p(M) from O(M), there is an oracle-access algorithm R such that $R^M$ computes p(M) for all M.”
2. Really? Please provide O.
3. Given O and a Turing machine E of my choice, I compute O(E).
4. I show you a predicate p and an (analysis) algorithm A such that A(O(E)) = p(E). You must provide R with $\Pr[R^E(1^{|E|}) = p(E)] \approx \Pr[A(O(E)) = p(E)]$.
5. I choose another machine Z and obfuscate it using O. I show you that $\Pr[R^Z(1^{|Z|}) = p(Z)] \ll \Pr[A(O(Z)) = p(Z)]$.
6. Conclusion: please try another obfuscator (i.e., you do not have a good obfuscator).

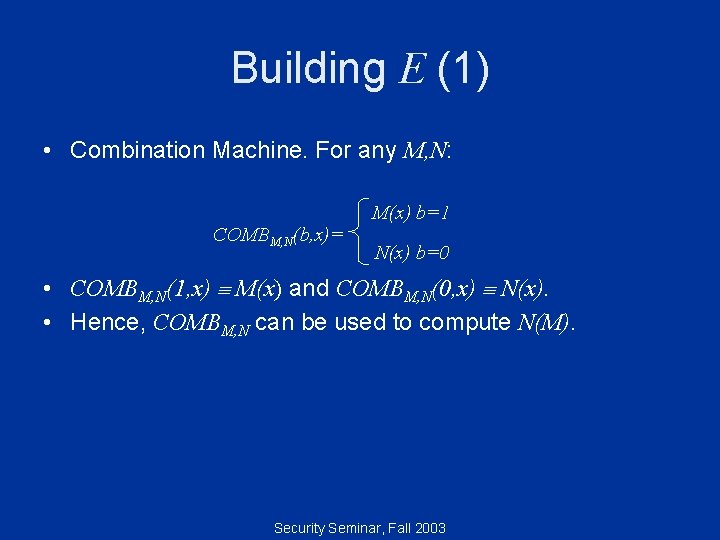

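Building E (1)

- Combination machine. For any M, N:
  $$\mathrm{COMB}_{M,N}(b, x) = \begin{cases} M(x) & b = 1 \\ N(x) & b = 0 \end{cases}$$
- $\mathrm{COMB}_{M,N}(1, x) = M(x)$ and $\mathrm{COMB}_{M,N}(0, x) = N(x)$.
- Hence, given the code of $\mathrm{COMB}_{M,N}$, we can fix b to obtain code for M and for N, and then compute M(N), i.e., run M on the code of N.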
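The combination machine can be sketched in Python under an assumption of ours, not the paper's: Python callables stand in for Turing machines, and “running M on the code of N” becomes passing N as a callable. The helper names `comb`, `decompose`, `square`, and `nine_at_three` are hypothetical.

```python
from typing import Any, Callable

Program = Callable[..., Any]  # assumption: a Python callable stands in for a Turing machine


def comb(m: Program, n: Program) -> Program:
    """COMB_{M,N}(b, x): run M on x when b == 1, and N on x when b == 0."""
    def combined(b: int, x: Any) -> Any:
        return m(x) if b == 1 else n(x)
    return combined


def decompose(c: Program) -> tuple:
    """Recover programs for M and N from COMB_{M,N} by hardwiring the bit b."""
    def m_part(x: Any) -> Any:
        return c(1, x)

    def n_part(x: Any) -> Any:
        return c(0, x)

    return m_part, n_part


def square(x: int) -> int:
    """Hypothetical N: squares its input."""
    return x * x


def nine_at_three(prog: Program) -> int:
    """Hypothetical M: takes a program and outputs 1 iff prog(3) == 9."""
    return 1 if prog(3) == 9 else 0


combined = comb(nine_at_three, square)
m_part, n_part = decompose(combined)
print(combined(0, 4))  # N(4) = 16
print(m_part(n_part))  # M(N) = 1
```

Fixing b is a transformation applied to the code of $\mathrm{COMB}_{M,N}$, so it needs the program itself, not just oracle access to it.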

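Building E (2)

- Let $\alpha, \beta \in \{0,1\}^k$.
- Let
  $$C_{\alpha,\beta}(x) = \begin{cases} \beta & x = \alpha \\ 0^k & \text{otherwise} \end{cases}$$
  $$D_{\alpha,\beta}(C) = \begin{cases} 1 & C(\alpha) = \beta \\ 0 & \text{otherwise} \end{cases}$$
- Note: $D_{\alpha,\beta}$ can distinguish between $C_{\alpha,\beta}$ and $C_{\alpha',\beta'}$ when $(\alpha,\beta) \neq (\alpha',\beta')$.
- $E_{\alpha,\beta} = \mathrm{COMB}_{D_{\alpha,\beta},\, C_{\alpha,\beta}}$.
- Remember: $E_{\alpha,\beta}$ can be used to compute $D_{\alpha,\beta}(C_{\alpha,\beta})$.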
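A minimal sketch of $C_{\alpha,\beta}$, $D_{\alpha,\beta}$, and $E_{\alpha,\beta}$, assuming k-bit strings are encoded as Python integers (so $0^k$ is the integer 0) and a hypothetical k = 16. β is forced to be non-zero so that $C_{\alpha,\beta}$ is not the all-zero function; the factory names `make_c`, `make_d`, and `make_e` are illustrative only.

```python
import secrets
from typing import Callable

K = 16  # hypothetical security parameter k: alpha and beta are k-bit strings


def make_c(alpha: int, beta: int) -> Callable[[int], int]:
    """C_{alpha,beta}(x): beta if x == alpha, otherwise 0^k (the integer 0)."""
    def c(x: int) -> int:
        return beta if x == alpha else 0
    return c


def make_d(alpha: int, beta: int) -> Callable[[Callable[[int], int]], int]:
    """D_{alpha,beta}(C): 1 if C(alpha) == beta, otherwise 0."""
    def d(prog: Callable[[int], int]) -> int:
        return 1 if prog(alpha) == beta else 0
    return d


def make_e(alpha: int, beta: int):
    """E_{alpha,beta} = COMB_{D,C}: b == 1 runs D_{alpha,beta}, b == 0 runs C_{alpha,beta}."""
    c, d = make_c(alpha, beta), make_d(alpha, beta)

    def e(b: int, x):
        return d(x) if b == 1 else c(x)
    return e


alpha = secrets.randbits(K)
beta = secrets.randbits(K) | 1  # force beta != 0^k so C differs from the all-zero function

c = make_c(alpha, beta)
d = make_d(alpha, beta)
e = make_e(alpha, beta)
print(d(c))                          # 1: D accepts its own C
print(d(make_c(alpha ^ 1, beta)))    # 0: a different alpha
print(d(make_c(alpha, beta ^ 2)))    # 0: a different beta
print(e(1, c), e(0, alpha) == beta)  # 1 True
```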

Proof Outline

1. You say: “I have an obfuscator O: for any machine M and any (analysis) algorithm A that computes a predicate p(M) from O(M), there is an oracle-access algorithm R such that $R^M$ computes p(M) for all M.”
2. Really? Please provide O.
3. Given O and a Turing machine $E_{\alpha,\beta}$ of my choice, I compute $O(E_{\alpha,\beta})$.
4. I show you a predicate p and an (analysis) algorithm A such that $A(O(E_{\alpha,\beta})) = p(E_{\alpha,\beta})$. You must provide R with $\Pr[R^{E_{\alpha,\beta}}(1^{|E_{\alpha,\beta}|}) = p(E_{\alpha,\beta})] \approx \Pr[A(O(E_{\alpha,\beta})) = p(E_{\alpha,\beta})]$.
5. I choose another machine Z and obfuscate it using O. I show you that $\Pr[R^Z(1^{|Z|}) = p(Z)] \ll \Pr[A(O(Z)) = p(Z)]$.
6. Conclusion: please try another obfuscator (i.e., you do not have a good obfuscator).

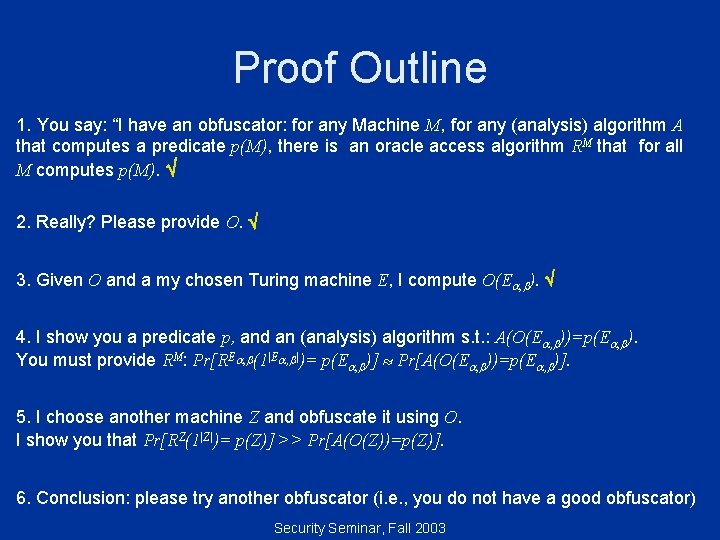

The Analysis Algorithm

Input: a combination machine $\mathrm{COMB}_{M,N}(b, x)$.

Algorithm:

1. Decompose $\mathrm{COMB}_{M,N}$ into M and N:
   a. $\mathrm{COMB}_{M,N}(1, x) = M(x)$
   b. $\mathrm{COMB}_{M,N}(0, x) = N(x)$
2. Return M(N).

Note: $A(O(E_{\alpha,\beta}))$ is a predicate that is always (i.e., with probability 1) true:
$$A(O(E_{\alpha,\beta})) = A(O(\mathrm{COMB}_{D_{\alpha,\beta},\, C_{\alpha,\beta}})) = D_{\alpha,\beta}(C_{\alpha,\beta}) = 1$$

You must provide an oracle-access algorithm R such that $\Pr[R^{E_{\alpha,\beta}}(1^{|E_{\alpha,\beta}|}) = 1] \approx 1$.
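The analysis algorithm can be sketched the same way, again with Python callables standing in for machine descriptions (an assumption). One caveat: closures blur the key distinction of the proof. In the paper’s model, an oracle algorithm can only hand $D_{\alpha,\beta}$ a description string, which cannot embed the oracle itself, whereas A actually holds a program for the C part. The names `make_e` and `analysis_a` are hypothetical.

```python
import secrets

K = 16  # hypothetical security parameter k


def make_e(alpha: int, beta: int):
    """E_{alpha,beta} = COMB_{D,C} with the point function C and the checker D."""
    def c(x: int) -> int:
        return beta if x == alpha else 0

    def d(prog) -> int:
        return 1 if prog(alpha) == beta else 0

    def e(b: int, x):
        return d(x) if b == 1 else c(x)
    return e


def analysis_a(combined) -> int:
    """Analysis algorithm A: split COMB_{M,N} by fixing b, then return M(N).

    A passes a *program* for the b == 0 part to the b == 1 part; holding
    that program is what code access gives A beyond oracle access.
    """
    def m_part(x):
        return combined(1, x)

    def n_part(x):
        return combined(0, x)

    return m_part(n_part)


alpha, beta = secrets.randbits(K), secrets.randbits(K) | 1  # beta != 0^k
print(analysis_a(make_e(alpha, beta)))  # 1, for every choice of alpha and beta
```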

Proof Outline

1. You say: “I have an obfuscator O: for any machine M and any (analysis) algorithm A that computes a predicate p(M) from O(M), there is an oracle-access algorithm R such that $R^M$ computes p(M) for all M.”
2. Really? Please provide O.
3. Given O and a Turing machine $E_{\alpha,\beta}$ of my choice, I compute $O(E_{\alpha,\beta})$.
4. I show you a predicate p and an (analysis) algorithm A such that $A(O(E_{\alpha,\beta})) = 1$. You must provide R with $\Pr[R^{E_{\alpha,\beta}}(1^{|E_{\alpha,\beta}|}) = 1] \approx \Pr[A(O(E_{\alpha,\beta})) = 1] = 1$.
5. I choose another machine Z and obfuscate it using O. I show you that $\Pr[R^Z(1^{|Z|}) = p(Z)] \ll \Pr[A(O(Z)) = p(Z)]$.
6. Conclusion: please try another obfuscator (i.e., you do not have a good obfuscator).

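The Z Machine

- Let $Z_k$ be a machine that always returns $0^k$.
- Z is similar to $E_{\alpha,\beta} = \mathrm{COMB}_{D_{\alpha,\beta},\, C_{\alpha,\beta}}$, but with $C_{\alpha,\beta}$ replaced by $Z_k$: $Z = \mathrm{COMB}_{D_{\alpha,\beta},\, Z_k}$.
- Note: A(O(Z)) is a predicate that is always (i.e., with probability 1) false, since $Z_k(\alpha) = 0^k \neq \beta$ (for $\beta \neq 0^k$):
  $$A(O(Z)) = A(O(\mathrm{COMB}_{D_{\alpha,\beta},\, Z_k})) = D_{\alpha,\beta}(Z_k) = 0$$
- Is $\Pr[R^Z(1^{|Z|}) = 0] \approx 1$? If we show that $\Pr[R^Z(1^{|Z|}) = 0] \ll 1$, we are done.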
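A sketch of Z next to $E_{\alpha,\beta}$, with a small hypothetical k = 8 so that every input of the b = 0 part can be enumerated. A answers 1 on E and 0 on Z, although on the b = 0 part the two machines disagree only at the single point α. As before, callables stand in for machines and all names are illustrative.

```python
import secrets

K = 8  # small hypothetical k so all 2^k inputs of the b == 0 part can be enumerated


def comb(m, n):
    """COMB_{M,N}(b, x): M(x) if b == 1, else N(x)."""
    def combined(b: int, x):
        return m(x) if b == 1 else n(x)
    return combined


def analysis_a(combined) -> int:
    """A: decompose COMB_{M,N} by fixing b and return M(N)."""
    def n_part(x):
        return combined(0, x)
    return combined(1, n_part)


alpha = secrets.randbits(K)
beta = secrets.randbits(K) | 1  # beta != 0^k, so Z_k(alpha) != beta


def c(x: int) -> int:  # C_{alpha,beta}
    return beta if x == alpha else 0


def z(x: int) -> int:  # Z_k: always returns 0^k
    return 0


def d(prog) -> int:  # D_{alpha,beta}
    return 1 if prog(alpha) == beta else 0


e_machine = comb(d, c)  # E_{alpha,beta} = COMB_{D,C}
z_machine = comb(d, z)  # Z = COMB_{D,Z_k}

print(analysis_a(e_machine), analysis_a(z_machine))  # 1 0
# On the b == 0 part, E and Z disagree only at the single point alpha.
differing = [x for x in range(2 ** K) if e_machine(0, x) != z_machine(0, x)]
print(differing == [alpha])  # True
```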


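Why Is $\Pr[R^Z(1^{|Z|}) = 0] \ll 1$?

Let us look at the execution of $R^{E_{\alpha,\beta}}$: it starts, queries its oracles $D_{\alpha,\beta}$ and $C_{\alpha,\beta}$, and ends with output 1.

When we replace the oracle to $C_{\alpha,\beta}$ with an oracle to $Z_k$, we get $R^Z$. What will change in the execution? $R^Z$ starts, queries $D_{\alpha,\beta}$ and $Z_k$, and ends with output out’. The two executions can differ only if R queries the point α, where $C_{\alpha,\beta}$ returns β but $Z_k$ returns $0^k$, so (roughly³):
$$\Pr[\text{out}' = 0] \le \Pr[\text{query} = \alpha] = \Pr[\text{a query to } C_{\alpha,\beta} \text{ returns non-zero}] = 2^{-k}$$

³ Inaccurate, see paper.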
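The $2^{-k}$ estimate can be checked numerically under a simplifying assumption that is not the paper’s: R makes q non-adaptive, uniformly random queries to the b = 0 part and learns nothing useful from $D_{\alpha,\beta}$. Then $R^Z$ can behave differently from $R^{E_{\alpha,\beta}}$ only if some query hits α, which happens with probability $1 - (1 - 2^{-k})^q \le q \cdot 2^{-k}$. The function names are hypothetical.

```python
import math
import random


def hit_probability(k: int, q: int) -> float:
    """Exact chance that q independent uniform k-bit queries include alpha.

    Computed as 1 - (1 - 2^-k)^q, using log1p/expm1 to stay accurate for large k.
    """
    return -math.expm1(q * math.log1p(-(2.0 ** -k)))


def hit_probability_empirical(k: int, q: int, trials: int, rng: random.Random) -> float:
    """Monte Carlo estimate: draw a fresh random alpha, then q random queries."""
    hits = 0
    for _ in range(trials):
        alpha = rng.getrandbits(k)
        if any(rng.getrandbits(k) == alpha for _ in range(q)):
            hits += 1
    return hits / trials


rng = random.Random(0)
for k in (6, 16, 64):
    q = 1000
    print(k, hit_probability(k, q), q * 2.0 ** -k)  # exact value vs. union bound q * 2^-k
print(hit_probability_empirical(6, 4, 20000, rng), hit_probability(6, 4))
```

For polynomially many queries the bound is negligible only when k is large: with k = 6 and q = 1000, random search finds α almost surely, while with k = 64 the chance is on the order of $10^{-17}$.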

Proof Outline

1. You say: “I have an obfuscator O: for any machine M and any (analysis) algorithm A that computes a predicate p(M) from O(M), there is an oracle-access algorithm R such that $R^M$ computes p(M) for all M.”
2. Really? Please provide O.
3. Given O and a Turing machine $E_{\alpha,\beta}$ of my choice, I compute $O(E_{\alpha,\beta})$.
4. I show you a predicate p and an (analysis) algorithm A such that $A(O(E_{\alpha,\beta})) = 1$. You must provide R with $\Pr[R^{E_{\alpha,\beta}}(1^{|E_{\alpha,\beta}|}) = 1] \approx \Pr[A(O(E_{\alpha,\beta})) = 1] = 1$.
5. I choose another machine Z and obfuscate it using O. I show you that $\Pr[R^Z(1^{|Z|}) = 0] \le 2^{-k} \ll \Pr[A(O(Z)) = 0] = 1$.
6. Conclusion: please try another obfuscator (i.e., you do not have a good obfuscator).


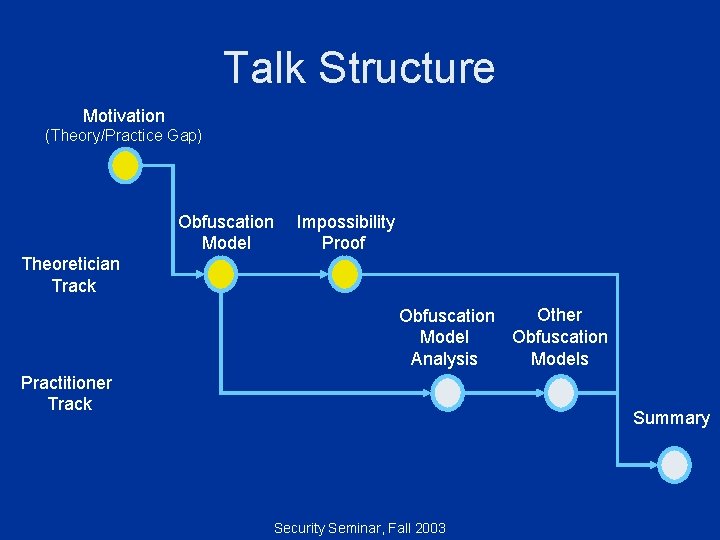

Modeling Obfuscation

A good obfuscator is a virtual black box: “Anything that an adversary can compute from an obfuscation O(P), it can also compute given just oracle access to P.”

[Figure: from O(Prog.c), code + analysis + input/output queries yield knowledge about Prog.c.]

- Barak shows that there are properties that cannot be efficiently learned from I/O queries but can be learned from the code.
- However, we already knew this informally: for example, whether a program is written in C or Pascal, or which data structure a program uses.
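The informal point can be made concrete with two hypothetical Python programs that compute the same function but differ in their code. A predicate on the source (here, a crude check for the `*` operator) separates them, while any oracle-access algorithm sees exactly the same answers for both. The program texts and helper names are illustrative assumptions.

```python
# Two hypothetical programs, given as source text, with identical I/O behaviour.
PROG_MUL = "def f(x):\n    return x * 2\n"
PROG_ADD = "def f(x):\n    return x + x\n"


def load(source: str):
    """Compile source text into a callable; holding only the callable is oracle access."""
    namespace: dict = {}
    exec(source, namespace)
    return namespace["f"]


def uses_multiplication(source: str) -> bool:
    """A (crude) predicate on the code itself: does it contain the '*' operator?"""
    return "*" in source


def oracle_view(oracle, queries):
    """All that an oracle-access algorithm observes: the answers to its queries."""
    return [oracle(q) for q in queries]


queries = range(-1000, 1000)
same_view = oracle_view(load(PROG_MUL), queries) == oracle_view(load(PROG_ADD), queries)
print(same_view)  # True; x * 2 == x + x for every integer, so no queries separate them
print(uses_multiplication(PROG_MUL), uses_multiplication(PROG_ADD))  # True False
```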

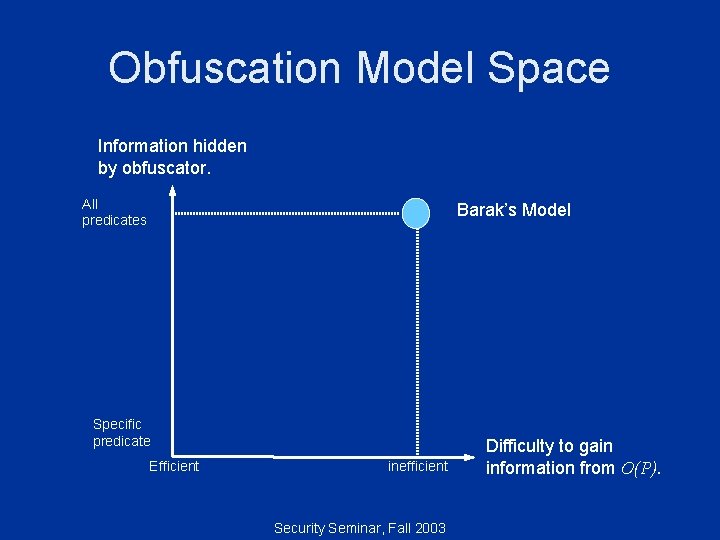

Obfuscation Model Space

[Figure: a two-axis space. Vertical axis: information hidden by the obfuscator, from a specific predicate to all predicates. Horizontal axis: difficulty of gaining information from O(P), from efficient to inefficient. Barak’s model is marked in this space.]

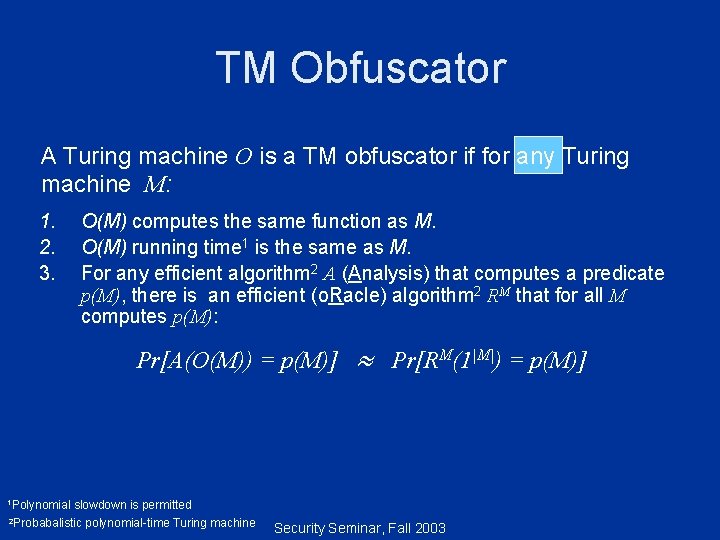

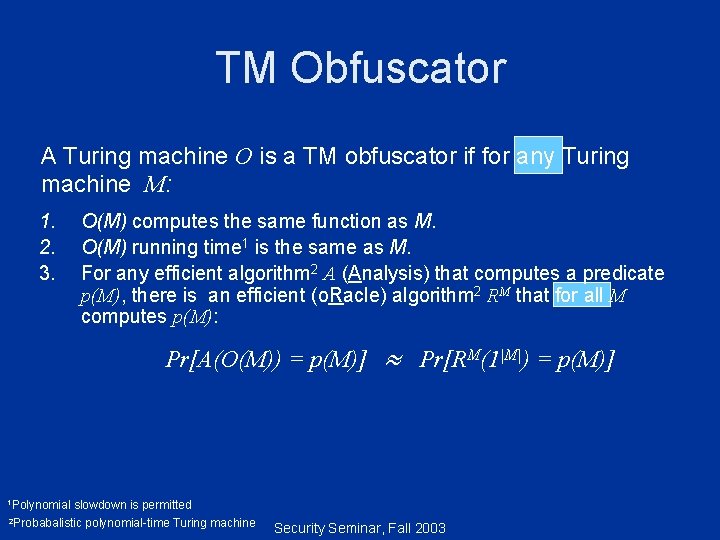

TM Obfuscator

A Turing machine O is a TM obfuscator if for every Turing machine M:

1. O(M) computes the same function as M.
2. The running time of O(M) is the same as that of M.¹
3. For every efficient² (analysis) algorithm A that computes a predicate p(M) from O(M), there is an efficient² oracle algorithm R such that for all M, $R^M$ computes p(M):
   $$\Pr[A(O(M)) = p(M)] \approx \Pr[R^M(1^{|M|}) = p(M)]$$

¹ Polynomial slowdown is permitted.
² Probabilistic polynomial-time Turing machine.

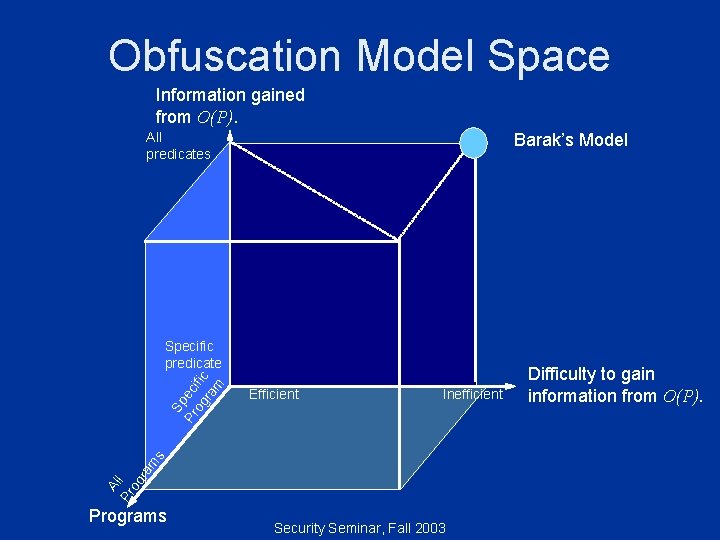

Obfuscation Model Space

[Figure: a three-axis space. Information gained from O(P), from a specific predicate to all predicates; difficulty of gaining information from O(P), from efficient to inefficient; and the programs the obfuscator must handle, from a specific program to all programs. Barak’s model is marked in this space.]




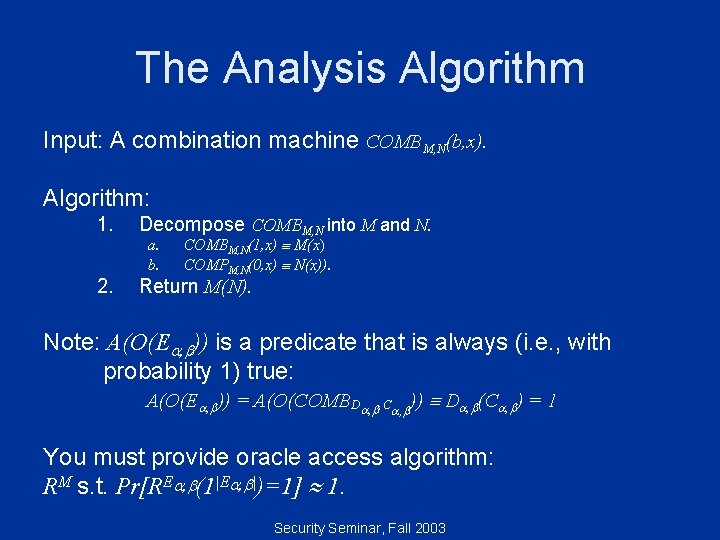

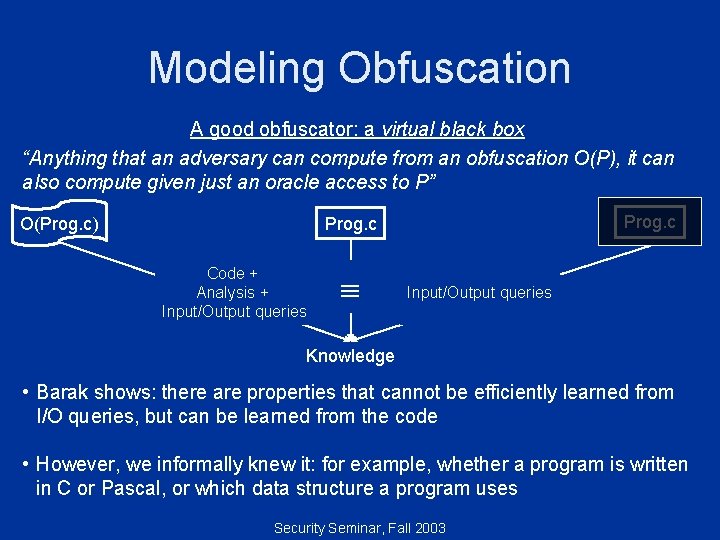

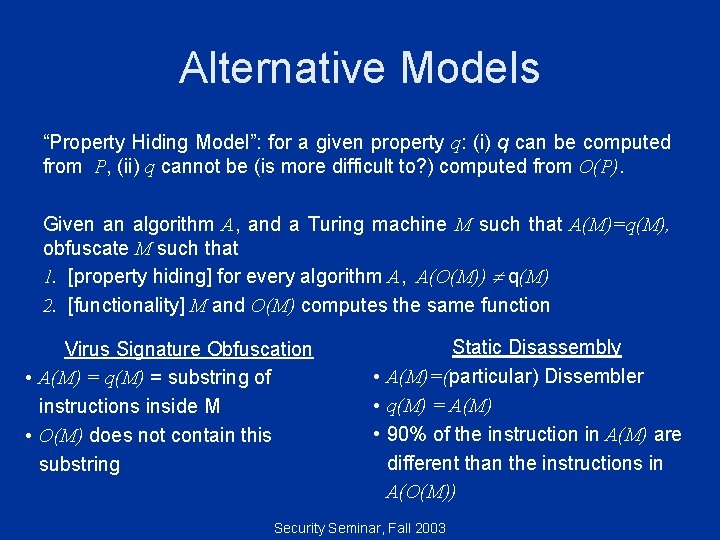

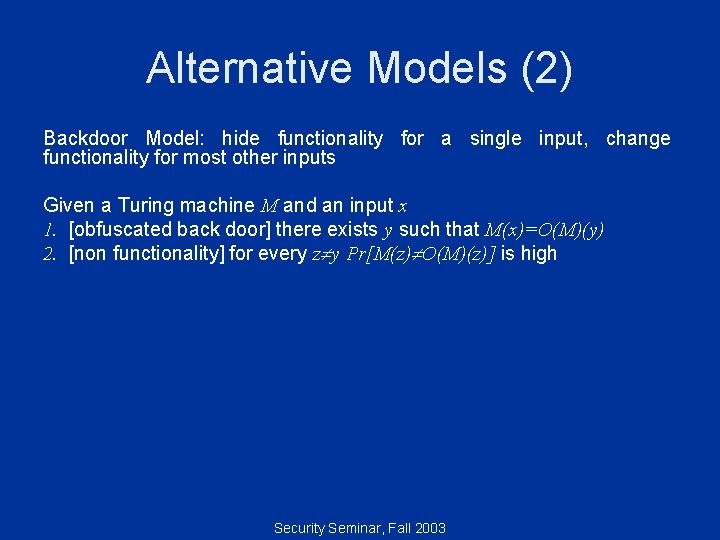

Other Obfuscation Models

[Figure: the obfuscation model space, with axes for information gained from O(P) (specific predicate to all predicates), difficulty of gaining information from O(P) (efficient to inefficient), and programs (specific program to all programs). Barak’s model and two practical models are placed in it.]

- Static disassembly [2]: (1) not all properties; (2) not difficult; (3) not a virtual black box?
- Signature obfuscation: (1) not all properties; (2) not a virtual black box?

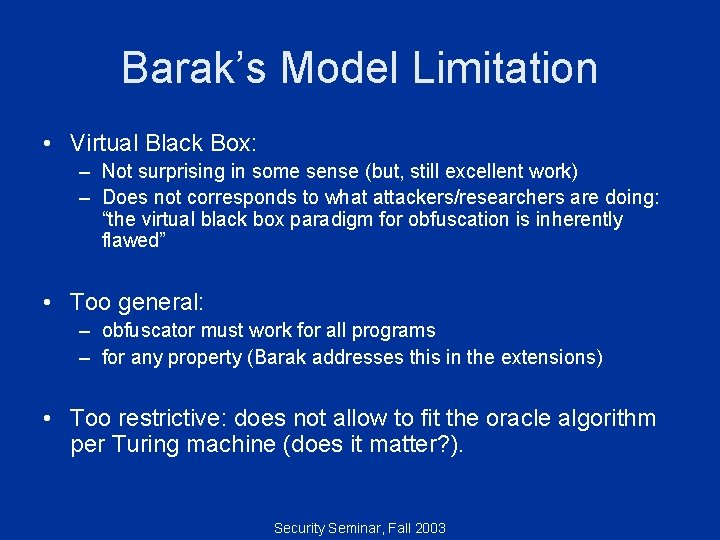

Barak’s Model Limitations

- Virtual black box:
  - Not surprising in some sense (but still excellent work).
  - Does not correspond to what attackers and researchers are doing: “the virtual black box paradigm for obfuscation is inherently flawed”.
- Too general:
  - the obfuscator must work for all programs,
  - and for any property (Barak addresses this in the extensions).
- Too restrictive: it does not allow the oracle algorithm to be tailored to each Turing machine (does it matter?).

Alternative Models

“Property Hiding Model”: for a given property q, (i) q can be computed from P, and (ii) q cannot be computed (or is more difficult to compute?) from O(P).

Given an algorithm A and a Turing machine M such that A(M) = q(M), obfuscate M such that:

1. [Property hiding] for every algorithm A, $A(O(M)) \neq q(M)$;
2. [Functionality] M and O(M) compute the same function.

Virus signature obfuscation:

- A(M) = q(M) = a substring of instructions inside M.
- O(M) does not contain this substring.

Static disassembly:

- A = a particular disassembler.
- q(M) = A(M).
- 90% of the instructions in A(M) are different from the instructions in A(O(M)).
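A toy instance of virus signature obfuscation, assuming a hypothetical four-opcode stack machine (not taken from the slides or from [2]): the obfuscator inserts a NOP after every instruction, which preserves functionality but breaks a contiguous-substring signature match. All names are illustrative.

```python
from typing import List, Tuple

Instr = Tuple[str, int]  # (opcode, operand); the operand is ignored except by PUSH


def run(program: List[Instr], x: int) -> int:
    """Interpret a toy stack program on input x and return the top of the stack."""
    stack = [x]
    for op, arg in program:
        if op == "PUSH":
            stack.append(arg)
        elif op == "ADD":
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif op == "MUL":
            b, a = stack.pop(), stack.pop()
            stack.append(a * b)
        elif op == "NOP":
            pass
        else:
            raise ValueError(f"unknown opcode {op}")
    return stack[-1]


def contains_signature(program: List[Instr], signature: List[Instr]) -> bool:
    """q(M): does the signature occur as a contiguous run of instructions?"""
    n = len(signature)
    return any(program[i:i + n] == signature for i in range(len(program) - n + 1))


def obfuscate(program: List[Instr]) -> List[Instr]:
    """O(M): insert a NOP after every instruction; the semantics are unchanged."""
    out: List[Instr] = []
    for instr in program:
        out.extend([instr, ("NOP", 0)])
    return out


program = [("PUSH", 3), ("MUL", 0), ("PUSH", 1), ("ADD", 0)]  # computes 3x + 1
signature = [("MUL", 0), ("PUSH", 1)]
obf = obfuscate(program)
print(contains_signature(program, signature), contains_signature(obf, signature))  # True False
print(all(run(program, x) == run(obf, x) for x in range(-50, 50)))  # True
```

This toy hides the signature only from the naive matcher: a detector that strips NOPs before matching finds it again, so it would not satisfy the “for every algorithm A” requirement of the property-hiding model.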

Alternative Models (2)

Backdoor model: hide the functionality for a single input and change the functionality for most other inputs. Given a Turing machine M and an input x:

1. [Obfuscated back door] there exists y such that $M(x) = O(M)(y)$;
2. [Non-functionality] for every $z \neq y$, $\Pr[M(z) \neq O(M)(z)]$ is high.
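A minimal sketch of the two backdoor-model conditions, assuming a hypothetical construction in which the back door y is a random 32-bit integer. It only illustrates what conditions 1 and 2 require; because y sits in plain view inside the closure, it makes no attempt to hide the back door from an analyst.

```python
import secrets
from typing import Callable, Tuple


def backdoor_version(m: Callable[[int], int], x: int, k: int = 32) -> Tuple[Callable[[int], int], int]:
    """Return (o_m, y) with o_m(y) == m(x) and o_m(z) != m(z) for every z != y.

    Hypothetical construction for illustration only: it shows the two
    conditions of the backdoor model, not how to hide y.
    """
    y = secrets.randbits(k)

    def o_m(z: int) -> int:
        if z == y:
            return m(x)  # [obfuscated back door]: y unlocks M's behaviour on x
        return m(z) + 1  # [non-functionality]: differs from m on every z != y
    return o_m, y


def square(v: int) -> int:
    """Hypothetical M."""
    return v * v


o_m, y = backdoor_version(square, 7)
print(o_m(y))  # 49 == square(7)
print(all(o_m(z) != square(z) for z in range(1000) if z != y))  # True
```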

Summary

What to take home:

- The gap is possible because:
  - the virtual black box paradigm is different from real-world obfuscation;
  - the obfuscation model space is large, and Barak’s model is only one point in it.
- Nice research: concept, formalism, properties.
- A lot remains to be done.

Bibliography

1. B. Barak, O. Goldreich, R. Impagliazzo, S. Rudich, A. Sahai, S. Vadhan and K. Yang, "On the (Im)possibility of Obfuscating Programs", CRYPTO, Aug. 2001, Santa Barbara, CA.
2. C. Linn and S. Debray, "Obfuscation of Executable Code to Improve Resistance to Static Disassembly", CCS, Oct. 2003, Washington, DC.
3. www.cloakware.com.
4. C. S. Collberg, C. D. Thomborson and D. Low, "Manufacturing Cheap, Resilient, and Stealthy Opaque Constructs", POPL, 1998.
