Offline Software for the ATLAS Combined Test Beam

Ada Farilla, I.N.F.N. Roma 3
On behalf of the ATLAS Combined Test Beam Offline Team: M. Biglietti, M. Costa, B. Di Girolamo, M. Gallas, R. Hawkings, R. McPherson, C. Padilla, R. Petti, S. Rosati, A. Solodkov
Computing in High Energy Physics, Interlaken, September 2004

ATLAS Combined Test Beam (CTB)

- A full slice of the ATLAS barrel detector has been installed on the H8 beam line of the SPS in the CERN North Area. Beams of e/π/μ/γ are delivered in the range 1-350 GeV.
- Data taking started on May 17th this year and will continue until mid-November 2004. ATLAS is one of the main users of the SPS beam in 2004.
- The setup spans more than 50 meters. It enables the ATLAS team to test the detector's characteristics long before the LHC starts.
- Among the most important goals for this project:
  1. Study the detector performance of an ATLAS barrel slice
  2. Calibrate the calorimeters over a wide range of energies
  3. Gain experience with the latest offline reconstruction and simulation packages
  4. Collect data for a detailed data/Monte Carlo comparison
  5. Gain experience with the latest Trigger and Data Acquisition packages
  6. Test the ATLAS Computing Model with real data
  7. Study commissioning aspects (e.g. integration of many sub-detectors, testing the online and offline software with real data, management of conditions data)

ATLAS CTB: Layout

- The setup includes elements of all the ATLAS sub-detectors:
  1. Inner Detector: Pixel, Semi-Conductor Tracker (SCT), Transition Radiation Tracker (TRT)
  2. Electromagnetic Liquid Argon Calorimeter
  3. Hadronic Tile Calorimeter
  4. Muon System: Monitored Drift Tubes (MDT), Cathode Strip Chambers (CSC), Resistive Plate Chambers (RPC), Thin Gap Chambers (TGC)
- The DAQ includes elements of the Muon and Calorimeter Trigger (Level 1 and Level 2 Triggers) as well as Level 3 farms.
- A dedicated internal Fast Ethernet network handles controls. A dedicated internal Gigabit Ethernet network handles the data, which are stored on the CERN Advanced Storage Manager (CASTOR).
- About 5000 runs (50 million events) have been collected so far, with more than 1 TB of data stored.
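The figures above allow a rough check of the average event size. This assumes decimal units (1 TB = 10^12 bytes) and takes the quoted 50 million events at face value. Because the stored volume is quoted as "more than 1 TB", the result is a lower bound on the average stored size per event:

$$\frac{1\ \mathrm{TB}}{5\times 10^{7}\ \text{events}} = \frac{10^{12}\ \mathrm{B}}{5\times 10^{7}} = 2\times 10^{4}\ \mathrm{B} \approx 20\ \mathrm{kB\ per\ event}$$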

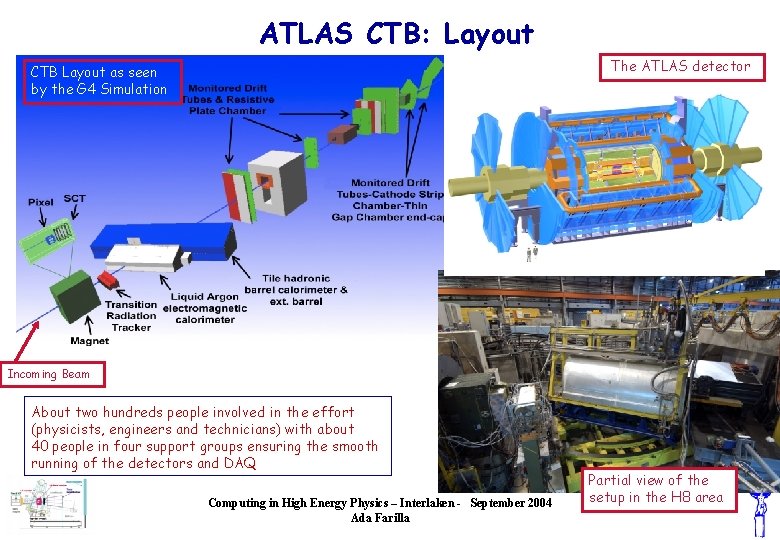

ATLAS CTB: Layout

[Figures: the ATLAS detector; the CTB layout as seen by the G4 simulation, with the incoming beam; partial view of the setup in the H8 area]

- About two hundred people are involved in the effort (physicists, engineers and technicians). About 40 of them work in four support groups that ensure the smooth running of the detectors and the DAQ.

ATLAS CTB: Offline Software

- The CTB offline software is based on the standard ATLAS software. It is adapted to the geometry of the CTB setup, which differs from the ATLAS geometry. Very little CTB-specific code was developed, mostly for monitoring and for integrating the sub-detectors' code.
- About 900 packages are now in the ATLAS offline software suite, residing in a single CVS repository at CERN. CMT manages package dependencies and builds the libraries and executables.
- The ATLAS software is based on the Athena/Gaudi framework, which separates data from algorithms:
  - Data are handled through an Event Store for event information and a Detector Store for conditions information.
  - Algorithms are driven through a flexible event loop.
  - Common utilities (e.g. the magnetic field map) are provided through services.
  - Python jobOptions scripts specify the parameters and sequencing of algorithms and services.
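The separation of data stores, algorithms, services and a configuration-driven event loop can be illustrated with a toy model. This is a minimal sketch under stated assumptions, not the Athena/Gaudi API. All class names (`Store`, `FieldService`, `MakeHits`, `SumEnergy`), keys and values are hypothetical. A plain dict plays the role of a jobOptions script.

```python
from typing import Any, Dict, List, Tuple


class Store:
    """Key-value store standing in for the Event Store or the Detector Store."""

    def __init__(self) -> None:
        self._data: Dict[str, Any] = {}

    def record(self, key: str, obj: Any) -> None:
        # Each key is recorded once; silently overwriting would hide producer errors.
        if key in self._data:
            raise KeyError(f"{key!r} already recorded")
        self._data[key] = obj

    def retrieve(self, key: str) -> Any:
        return self._data[key]

    def clear(self) -> None:
        self._data.clear()


class FieldService:
    """Hypothetical shared utility: a uniform magnetic field map."""

    def __init__(self, bz_tesla: float) -> None:
        self.bz_tesla = bz_tesla

    def bz(self, x: float, y: float, z: float) -> float:
        return self.bz_tesla


class Algorithm:
    """Algorithms keep no event data themselves: they read and write the stores."""

    def __init__(self, **props: Any) -> None:
        self.props = props

    def execute(self, evt: Store, det: Store, svcs: Dict[str, Any]) -> None:
        raise NotImplementedError


class MakeHits(Algorithm):
    def execute(self, evt, det, svcs):
        cut = self.props.get("Threshold", 0.0)
        evt.record("Hits", [a for a in evt.retrieve("RawData") if a > cut])


class SumEnergy(Algorithm):
    def execute(self, evt, det, svcs):
        scale = det.retrieve("CalibScale")  # conditions come from the detector store
        evt.record("Energy", scale * sum(evt.retrieve("Hits")))
        evt.record("Bz", svcs["Field"].bz(0.0, 0.0, 0.0))  # utility reached via a service


def run(job: Dict[str, Any], events: List[List[float]]) -> List[Tuple[float, float]]:
    det = Store()
    for key, value in job["Conditions"].items():
        det.record(key, value)
    svcs = {"Field": FieldService(job["FieldTesla"])}
    algs = [cls(**props) for cls, props in job["TopAlg"]]  # sequence taken from the options
    evt = Store()
    out = []
    for raw in events:  # the event loop: clear the event store, then run every algorithm
        evt.clear()
        evt.record("RawData", raw)
        for alg in algs:
            alg.execute(evt, det, svcs)
        out.append((evt.retrieve("Energy"), evt.retrieve("Bz")))
    return out


# Options-like configuration: parameters and sequencing live outside the algorithms.
job = {
    "FieldTesla": 1.4,
    "Conditions": {"CalibScale": 2.0},
    "TopAlg": [(MakeHits, {"Threshold": 1.0}), (SumEnergy, {})],
}
print(run(job, [[0.5, 1.5, 3.0], [2.0]]))  # [(9.0, 1.4), (4.0, 1.4)]
```

Changing a threshold or reordering `TopAlg` only touches the configuration, never the algorithm code. That is the point of keeping the sequencing in the options.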

ATLAS CTB: Software Release Strategy

- New ATLAS releases are built approximately every three weeks, following a predefined plan for software development.
- New CTB releases are built every week from a CVS branch of the standard release.

During test beam operation, a recurring concern has been finding a compromise between two conflicting needs: new functionality and stability. The strategy was discussed in daily and weekly meetings. Good communication between all the involved parties helped in solving problems and taking good decisions.

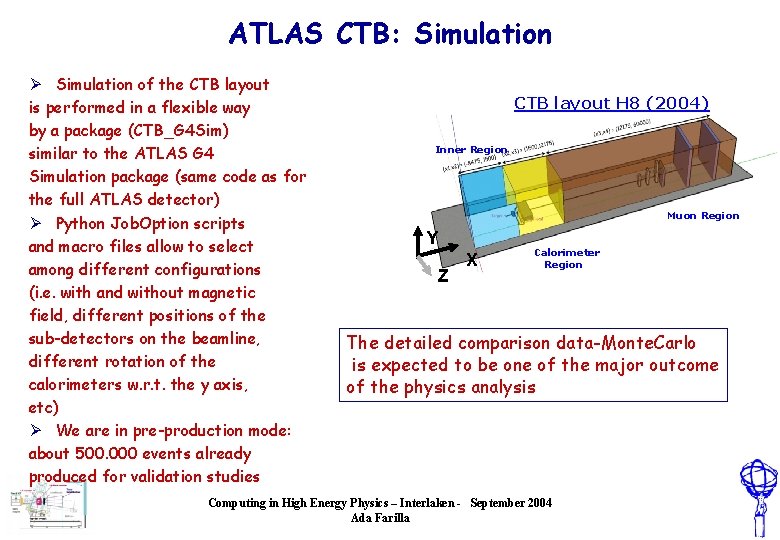

ATLAS CTB: Simulation

- The CTB layout is simulated flexibly by a package (CTB_G4Sim) similar to the ATLAS G4 simulation package. It uses the same code as for the full ATLAS detector.
- Python jobOptions scripts and macro files allow selection among different configurations, for example:
  - with and without magnetic field;
  - different positions of the sub-detectors along the beam line;
  - different rotations of the calorimeters about the y axis.
- We are in pre-production mode: about 500,000 events have already been produced for validation studies.

[Figure: CTB layout in H8 (2004), showing the inner, calorimeter and muon regions and the X, Y, Z axes]

The detailed data/Monte Carlo comparison is expected to be one of the major outcomes of the physics analysis.
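The configuration choices listed above can be sketched as an option set plus a placement transform. This is a hypothetical sketch, not CTB_G4Sim code. The field names are invented. The beam is assumed to run along the x axis. The calorimeter rotation uses the standard right-handed rotation matrix about y.

```python
import math
from dataclasses import dataclass, field
from typing import Dict, Tuple

Vec = Tuple[float, float, float]


@dataclass(frozen=True)
class CTBSimConfig:
    """Hypothetical option set mirroring the configuration choices above."""
    magnetic_field: bool = True
    calo_rotation_deg: float = 0.0  # rotation of the calorimeters about the y axis
    # shift of each sub-detector along the beam, assumed here to be the x axis (mm)
    offsets_mm: Dict[str, float] = field(default_factory=dict)


def rotate_about_y(p: Vec, angle_deg: float) -> Vec:
    """Right-handed rotation of point p about the y axis."""
    a = math.radians(angle_deg)
    x, y, z = p
    return (x * math.cos(a) + z * math.sin(a), y, -x * math.sin(a) + z * math.cos(a))


def calo_local_to_global(cfg: CTBSimConfig, p: Vec) -> Vec:
    """Rotate a calorimeter-local point, then shift it along the assumed beam axis."""
    x, y, z = rotate_about_y(p, cfg.calo_rotation_deg)
    return (x + cfg.offsets_mm.get("Calorimeter", 0.0), y, z)


nominal = CTBSimConfig()
rotated = CTBSimConfig(magnetic_field=False, calo_rotation_deg=90.0,
                       offsets_mm={"Calorimeter": 100.0})
print(calo_local_to_global(nominal, (1.0, 2.0, 3.0)))  # (1.0, 2.0, 3.0)
print(calo_local_to_global(rotated, (1.0, 0.0, 0.0)))  # approximately (100.0, 0.0, -1.0)
```

Keeping each configuration as an immutable value makes it easy to record exactly which layout a simulated sample was produced with.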

ATLAS CTB: Reconstruction

- Full reconstruction is performed in a package (RecExTB) that integrates all the sub-detectors' reconstruction algorithms through the following steps:
  1. Conversion of raw data from the online format to their object representation
  2. Access to conditions data
  3. Access to ancillary information (e.g. scintillators, beam chambers, trigger patterns)
  4. Execution of sub-detector reconstruction
  5. Combined reconstruction across sub-detectors
  6. Production of Event Summary Data (ESD) and ROOT ntuples
  7. Production of XML files for event visualization with the Atlantis event display
- From the full reconstruction of real data we are learning a lot about calibration and alignment procedures and about the performance of new reconstruction algorithms.
- We also expect to gain experience with the available Physics Analysis tools. The aim is their widespread use in a community much larger than the software developers, a non-negligible by-product from an educational point of view.
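The seven reconstruction steps can be pictured as an ordered pipeline acting on one event. This is an illustrative sketch, not RecExTB. The step functions, the constant pedestal standing in for a conditions lookup, and the XML snippet are hypothetical placeholders. The XML is not the real Atlantis format.

```python
from typing import Any, Callable, Dict, List

Event = Dict[str, Any]


def convert_raw(evt: Event) -> None:
    # 1. online byte stream -> object representation (here: a list of ADC counts)
    evt["digits"] = list(evt["raw_bytes"])


def load_conditions(evt: Event) -> None:
    # 2. conditions data; a hypothetical constant pedestal stands in for a DB lookup
    evt["pedestal"] = 2


def load_ancillary(evt: Event) -> None:
    # 3. ancillary information, e.g. whether the beam scintillators fired
    evt["beam_ok"] = evt.get("scint_hits", 0) > 0


def subdetector_reco(evt: Event) -> None:
    # 4. per-sub-detector reconstruction: pedestal-subtracted cells
    evt["cells"] = [d - evt["pedestal"] for d in evt["digits"]]


def combined_reco(evt: Event) -> None:
    # 5. combined step: sum of positive cells (placeholder for a real combination)
    evt["total"] = sum(c for c in evt["cells"] if c > 0)


def write_esd(evt: Event) -> None:
    # 6. summary output (stands in for ESD / ntuple writing)
    evt["esd"] = {"total": evt["total"], "beam_ok": evt["beam_ok"]}


def write_display_xml(evt: Event) -> None:
    # 7. XML for an event display (not the real Atlantis format)
    evt["xml"] = f'<Event total="{evt["total"]}"/>'


PIPELINE: List[Callable[[Event], None]] = [
    convert_raw, load_conditions, load_ancillary, subdetector_reco,
    combined_reco, write_esd, write_display_xml,
]


def reconstruct(evt: Event) -> Event:
    evt["trace"] = []
    for step in PIPELINE:  # steps run strictly in the listed order
        step(evt)
        evt["trace"].append(step.__name__)
    return evt


out = reconstruct({"raw_bytes": bytes([5, 1, 7]), "scint_hits": 1})
print(out["esd"], out["xml"])  # {'total': 8, 'beam_ok': True} <Event total="8"/>
```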

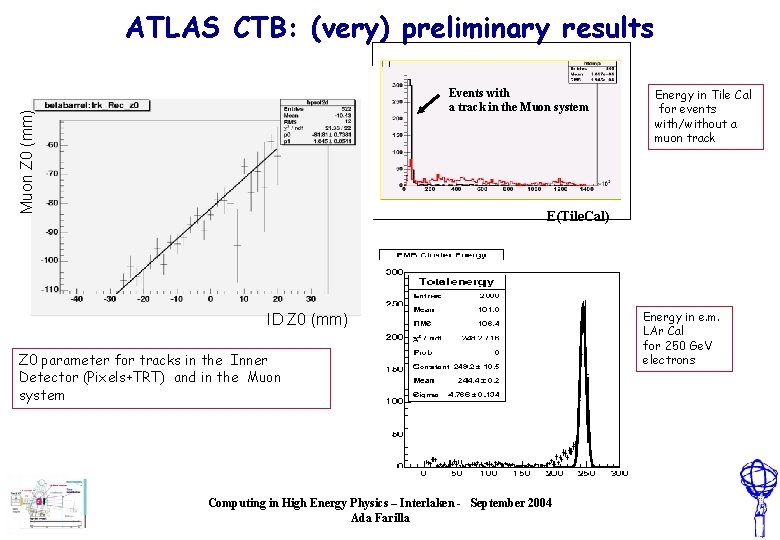

ATLAS CTB: (very) preliminary results

[Figures: Z0 parameter for tracks in the Inner Detector (Pixels+TRT) and in the Muon system (axes: ID Z0 (mm), Muon Z0 (mm)); energy in TileCal, E(TileCal), for events with and without a track in the Muon system; energy in the e.m. LAr calorimeter for 250 GeV electrons]

ATLAS CTB: Detector Description

- A detailed description of the detectors and of the experimental setup is a key issue for the CTB software. So is the ability to handle different experimental layouts coherently (versioning).
- The NOVA MySQL database is presently used to store detector description information. Within a few weeks this information will also be fully available in ORACLE.
- The new ATLAS detector description package (GeoModel) has also been used extensively for both simulation and reconstruction.
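One way to handle several layouts coherently is to let a single top-level tag select a consistent set of sub-detector description versions. Simulation and reconstruction then resolve the same tag. This is a hypothetical sketch; it is not how NOVA or GeoModel store versions, and all tag names are invented.

```python
from typing import Dict, Tuple

# Hypothetical tag hierarchy: one layout tag -> one version per sub-detector.
LAYOUT_TAGS: Dict[str, Dict[str, str]] = {
    "CTB-Layout-A": {"Pixel": "Pix-01", "SCT": "SCT-01", "TRT": "TRT-01",
                     "LAr": "LAr-01", "Tile": "Tile-01", "Muon": "Mu-01"},
    "CTB-Layout-B": {"Pixel": "Pix-01", "SCT": "SCT-01", "TRT": "TRT-02",
                     "LAr": "LAr-02", "Tile": "Tile-01", "Muon": "Mu-01"},
}

REQUIRED = ("Pixel", "SCT", "TRT", "LAr", "Tile", "Muon")


def resolve(layout_tag: str) -> Dict[str, str]:
    """Return the sub-detector versions for a layout, refusing partial layouts."""
    try:
        versions = LAYOUT_TAGS[layout_tag]
    except KeyError:
        raise KeyError(f"unknown layout tag {layout_tag!r}") from None
    missing = [d for d in REQUIRED if d not in versions]
    if missing:
        raise ValueError(f"{layout_tag} lacks versions for {missing}")
    return dict(versions)


def changed(old: str, new: str) -> Dict[str, Tuple[str, str]]:
    """Sub-detectors whose description version differs between two layouts."""
    a, b = resolve(old), resolve(new)
    return {d: (a[d], b[d]) for d in REQUIRED if a[d] != b[d]}


print(changed("CTB-Layout-A", "CTB-Layout-B"))
# {'TRT': ('TRT-01', 'TRT-02'), 'LAr': ('LAr-01', 'LAr-02')}
```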

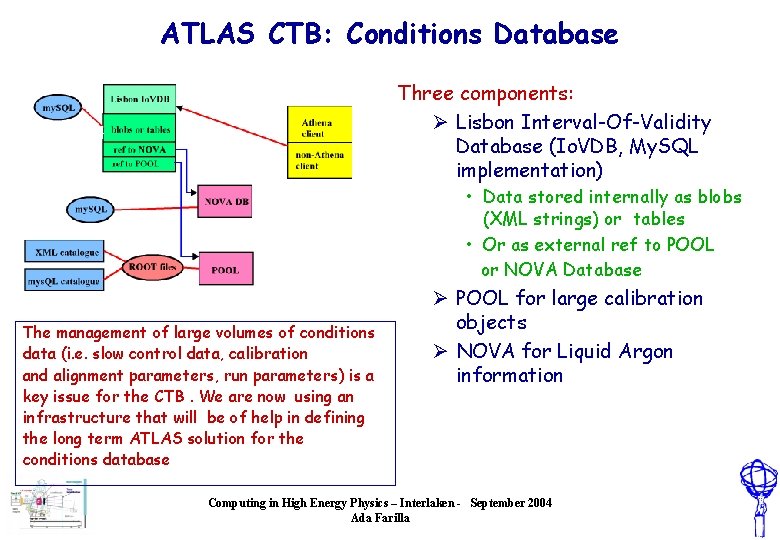

ATLAS CTB: Conditions Database

The management of large volumes of conditions data (e.g. slow control data, calibration and alignment parameters, run parameters) is a key issue for the CTB. We are now using an infrastructure that will help in defining the long-term ATLAS solution for the conditions database. It has three components:
- Lisbon Interval-Of-Validity Database (IoVDB, MySQL implementation)
  - Data stored internally as blobs (XML strings) or tables
  - Or as external references to POOL or to the NOVA database
- POOL for large calibration objects
- NOVA for Liquid Argon information
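The core idea of an interval-of-validity store is that each payload is valid for a half-open range of time or run numbers. A lookup returns whichever payload covers the requested point. The minimal sketch below is not the Lisbon IoVDB schema or API. `Folder`, `ExternalRef` and the token string are hypothetical. The payload is either an inline XML string or a reference to an external store, mirroring the options listed above.

```python
import bisect
from dataclasses import dataclass
from typing import List, Optional, Union


@dataclass(frozen=True)
class ExternalRef:
    technology: str  # e.g. "POOL" or "NOVA"
    token: str       # hypothetical opaque reference string


Payload = Union[str, ExternalRef]  # inline XML string, or a pointer elsewhere


@dataclass(frozen=True)
class IOV:
    since: int  # first valid time/run (inclusive)
    until: int  # end of validity (exclusive)
    payload: Payload


class Folder:
    """Non-overlapping intervals of validity, kept sorted by start."""

    def __init__(self) -> None:
        self._starts: List[int] = []
        self._iovs: List[IOV] = []

    def store(self, since: int, until: int, payload: Payload) -> None:
        if since >= until:
            raise ValueError("empty interval")
        i = bisect.bisect_left(self._starts, since)
        if i > 0 and self._iovs[i - 1].until > since:
            raise ValueError("overlaps previous interval")
        if i < len(self._iovs) and self._iovs[i].since < until:
            raise ValueError("overlaps next interval")
        self._starts.insert(i, since)
        self._iovs.insert(i, IOV(since, until, payload))

    def find(self, t: int) -> Optional[Payload]:
        i = bisect.bisect_right(self._starts, t) - 1  # last interval starting <= t
        if i >= 0 and t < self._iovs[i].until:
            return self._iovs[i].payload
        return None


hv = Folder()
hv.store(100, 200, "<hv volts='1500'/>")
hv.store(200, 300, ExternalRef("POOL", "hypothetical-token"))
print(hv.find(150), hv.find(250).technology, hv.find(50))
# <hv volts='1500'/> POOL None
```

Sorting by start and rejecting overlaps at insertion time makes every lookup a single binary search.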

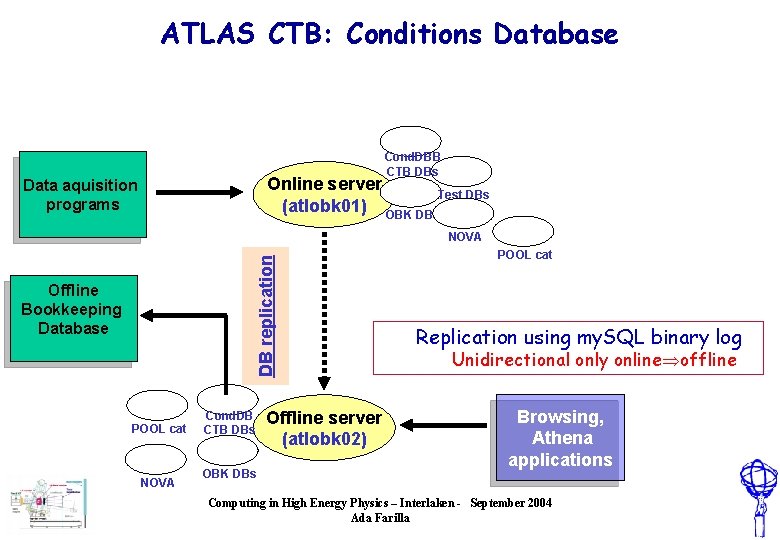

ATLAS CTB: Conditions Database

[Diagram: the online server (atlobk01) hosts CondDB, the CTB DBs, test DBs, the OBK DBs, NOVA and the POOL catalog, and is written by the data acquisition programs. It is replicated to the offline server (atlobk02), which hosts CondDB, the CTB DBs, the OBK DBs, NOVA and the POOL catalog, and serves browsing and Athena applications. Replication uses the MySQL binary log and is unidirectional only, online to offline. An Offline Bookkeeping Database is also shown.]
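The online-to-offline scheme can be pictured as a replica that replays an append-only log from the position it last reached. The replica never writes back. This toy model only illustrates the idea of one-way, log-based replication; it is not how MySQL implements binary-log replication. The class names, keys and values are made up.

```python
from typing import Dict, List, Tuple

LogEntry = Tuple[int, str, str]  # (sequence number, key, new value)


class OnlineServer:
    """Accepts writes (e.g. from DAQ programs) and appends each one to a log."""

    def __init__(self) -> None:
        self.tables: Dict[str, str] = {}
        self.binlog: List[LogEntry] = []

    def write(self, key: str, value: str) -> None:
        self.tables[key] = value
        self.binlog.append((len(self.binlog) + 1, key, value))


class OfflineReplica:
    """Read-only copy: it only replays the online log and never writes back."""

    def __init__(self) -> None:
        self._tables: Dict[str, str] = {}
        self.applied = 0  # sequence number of the last replayed entry

    def pull(self, source: OnlineServer) -> int:
        new = source.binlog[self.applied:]  # entries not yet replayed
        for seq, key, value in new:
            self._tables[key] = value
            self.applied = seq
        return len(new)

    def read(self, key: str) -> str:
        return self._tables[key]


online, offline = OnlineServer(), OfflineReplica()
online.write("run/1/beam_energy", "180")
offline.pull(online)
online.write("run/1/beam_energy", "250")  # a later correction
online.write("run/2/beam_energy", "100")
print(offline.pull(online), offline.read("run/1/beam_energy"))  # 2 250
```

Because the offline side has no write path, analysis jobs reading from it cannot disturb the data being written online.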

ATLAS CTB: Production Infrastructures

- Goal: we have to deal with a large data set (more than 5000 runs, 10^7 events) and be able to perform many reprocessings in the coming months. A production infrastructure is in place to do this quickly and in a centralized way.
- A subset of the information in the Conditions Database (run number, file name, beam energy, etc.) is copied to the bookkeeping database AMI (ATLAS Metadata Interface). Other run information is entered by the shifter.
- AMI (a Java application) provides a generic read-only web interface for searching. It is directly interfaced to AtCom (ATLAS Commander), a tool for automated job definition, submission and monitoring. AtCom was developed for the CERN LSF batch system and was widely used during ATLAS Data Challenge 1 in 2003.
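The flow from a metadata search to batch job definitions can be sketched in a few lines. This is not the AMI or AtCom interface. `RunRecord`, the queue name, the `reco.sh` script and the records themselves are made up for illustration. Only `bsub -q` (queue) and `-J` (job name) are real LSF options.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RunRecord:
    """Hypothetical bookkeeping row, with fields copied from the conditions DB."""
    run: int
    file: str
    beam_energy_gev: float
    particle: str


def search(records: List[RunRecord], particle: str, energy_gev: float) -> List[RunRecord]:
    """Read-only query, in the spirit of a metadata search interface."""
    return [r for r in records
            if r.particle == particle and r.beam_energy_gev == energy_gev]


def define_jobs(runs: List[RunRecord], queue: str, script: str) -> List[List[str]]:
    """One LSF submission command per run; queue and script are placeholders."""
    return [["bsub", "-q", queue, "-J", f"reco_{r.run}", script, r.file]
            for r in sorted(runs, key=lambda r: r.run)]


# Made-up records, for illustration only.
catalog = [
    RunRecord(3, "file3.data", 180.0, "e"),
    RunRecord(1, "file1.data", 180.0, "e"),
    RunRecord(2, "file2.data", 250.0, "e"),
]
for cmd in define_jobs(search(catalog, "e", 180.0), "hypothetical_queue", "reco.sh"):
    print(" ".join(cmd))
# bsub -q hypothetical_queue -J reco_1 reco.sh file1.data
# bsub -q hypothetical_queue -J reco_3 reco.sh file3.data
```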

ATLAS CTB: Production Infrastructures

- AMI, AtCom and a set of scripts form the "production infrastructure" presently used for two tasks: real data reconstruction, and the Monte Carlo pre-production done at CERN with the Data Challenge 1 tools.
- The bulk of the Monte Carlo production will be performed on the Grid ("continuous production"). It will use the infrastructure presently used for running ATLAS Data Challenge 2.

Both production infrastructures will be used in the coming months, operated by two different production groups.

ATLAS CTB: High Level Trigger (HLT)

- HLT = combination of the Level 2 and Level 3 triggers
- HLT at the CTB as a service:
  - It makes it possible to run "high-level monitoring" algorithms in the Athena processes running in the Level 3 farms.
  - It is the first place in the data flow where correlations can be established between objects reconstructed in separate sub-detectors.
- HLT at the CTB as a client:
  - Complex selection algorithms are under test. The aim is to have two full LVL1 -> LVL2 -> LVL3 slices, one for electrons and photons and one for muons.
  - The slices produce separate outputs in the Sub-Farm Output for the different selected particles.
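The two-slice selection can be sketched as one chain of increasingly tight levels per slice. An event accepted by a whole chain is written to that slice's output stream. This is purely illustrative. All three levels are modelled as software predicates (in reality LVL1 is hardware). The variables and thresholds are made up.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Event:
    id: int
    em_et_gev: float    # hypothetical e.m. cluster energy
    muon_pt_gev: float  # hypothetical muon transverse momentum


Cut = Callable[[Event], bool]

# One chain per slice: LVL1 -> LVL2 -> LVL3, each with a tighter (made-up) threshold.
SLICES: Dict[str, List[Cut]] = {
    "egamma": [lambda e: e.em_et_gev > 5, lambda e: e.em_et_gev > 10,
               lambda e: e.em_et_gev > 20],
    "muon": [lambda e: e.muon_pt_gev > 4, lambda e: e.muon_pt_gev > 6,
             lambda e: e.muon_pt_gev > 10],
}


def run_chains(events: List[Event]) -> Dict[str, List[int]]:
    """Route each event to the output stream of every slice whose chain accepts it."""
    streams: Dict[str, List[int]] = {name: [] for name in SLICES}
    for evt in events:
        for name, levels in SLICES.items():
            # all() stops at the first failing level, so later levels
            # only see events accepted by the earlier ones
            if all(level(evt) for level in levels):
                streams[name].append(evt.id)
    return streams


print(run_chains([Event(1, 25.0, 0.0), Event(2, 15.0, 12.0), Event(3, 30.0, 20.0)]))
# {'egamma': [1, 3], 'muon': [2, 3]}
```

An event passing both chains (event 3 here) appears in both streams, matching the idea of separate outputs per selected particle type.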

Conclusions

- The Combined Test Beam is the first opportunity to exercise the "semi-final" ATLAS software with real data in a complex setup.
- All the software, including the HLT, has been integrated and is running successfully. Centralized reconstruction has already started on limited data samples, and preliminary results of good quality are already available.
- Calibration, alignment and reconstruction algorithms will continue to improve. They will be tested with the largest data sample ever collected in a test beam environment (O(10^7) events).
- It has been a first, important step towards integrating different sub-detectors and people from different communities (software developers and sub-detector experts).
