
October Rotation Paper-Reviewer Matching Dina Elreedy Supervised by: Prof. Sanmay Das

Agenda
o Problem Definition and Motivation
o System Design
o Datasets
o Experiments and Results
o Extension

Problem Definition and Motivation
o Large conferences receive hundreds (or thousands) of papers and have hundreds of reviewers.
o Constraints
Ø Reviewer load
Ø Matching quality
o Satisfying both makes assignment a challenging task.
o Automatic paper-reviewer matching systems try to maximize reviewers' overall preferences across the assignment.

Problem Formulation
Given: a matrix A of paper-reviewer preferences.
Goal: find a matching Y that satisfies the constraints and maximizes total affinity.
Challenge: the input preference matrix A is very sparse.
• We follow the structure of the Toronto Paper Matching System [1], which is widely used at large AI conferences (NIPS, ICML, UAI, AISTATS, etc.).
[1] L. Charlin and R. S. Zemel, "The Toronto paper matching system: an automated paper-reviewer assignment system," in International Conference on Machine Learning (ICML), 2013.

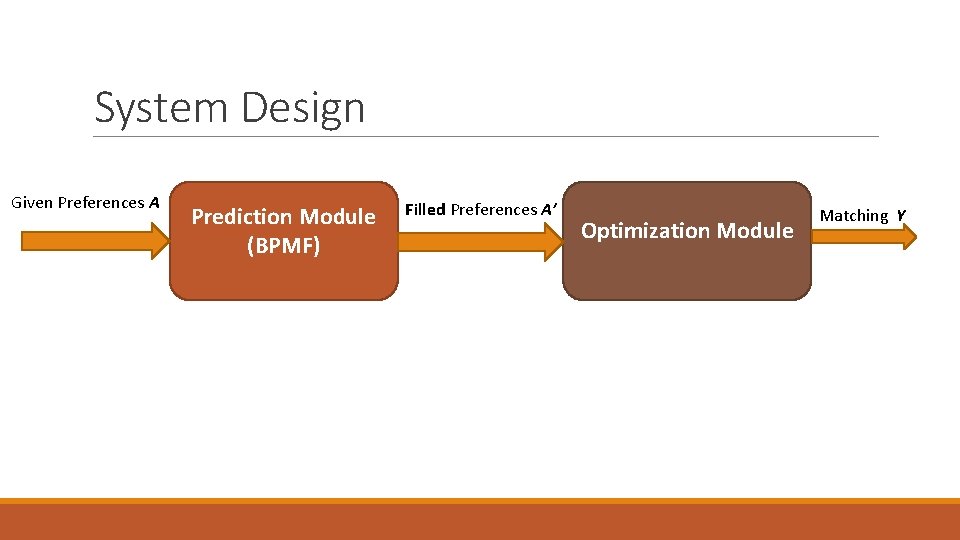

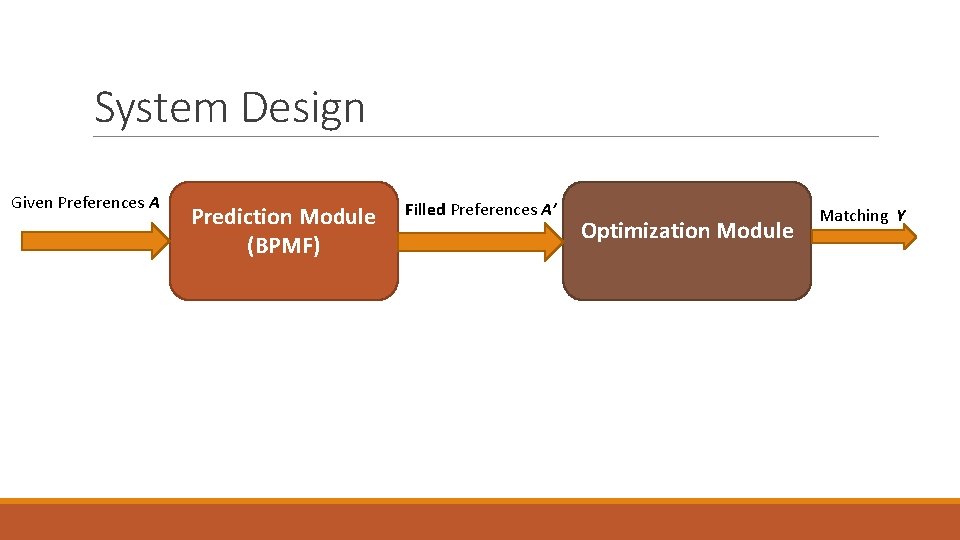

System Design
Given Preferences A → Prediction Module (BPMF) → Filled Preferences A' → Optimization Module → Matching Y

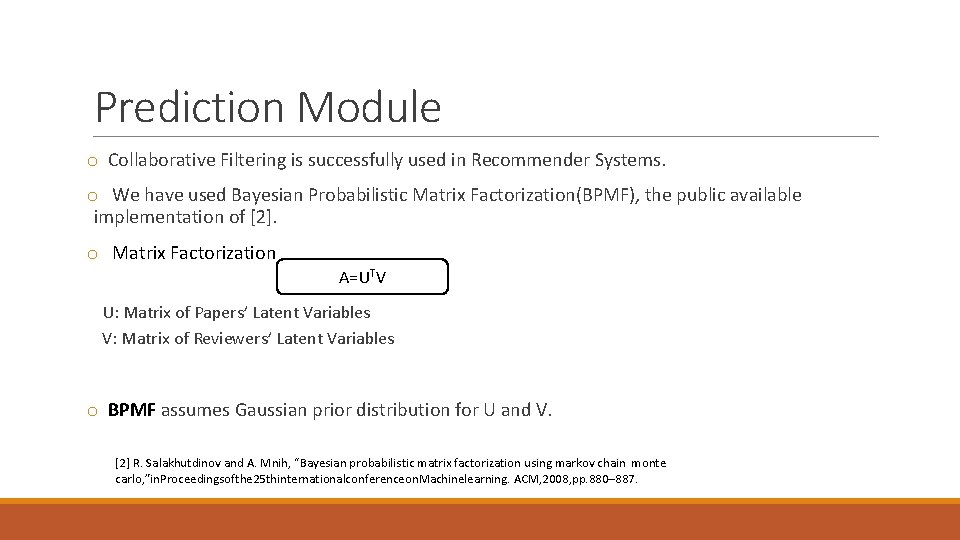

Prediction Module
o Collaborative filtering is used successfully in recommender systems.
o We use Bayesian Probabilistic Matrix Factorization (BPMF), with the publicly available implementation of [2].
o Matrix factorization: A = U^T V
Ø U: matrix of papers' latent variables
Ø V: matrix of reviewers' latent variables
o BPMF assumes Gaussian prior distributions for U and V.
[2] R. Salakhutdinov and A. Mnih, "Bayesian probabilistic matrix factorization using Markov chain Monte Carlo," in Proceedings of the 25th International Conference on Machine Learning. ACM, 2008, pp. 880–887.

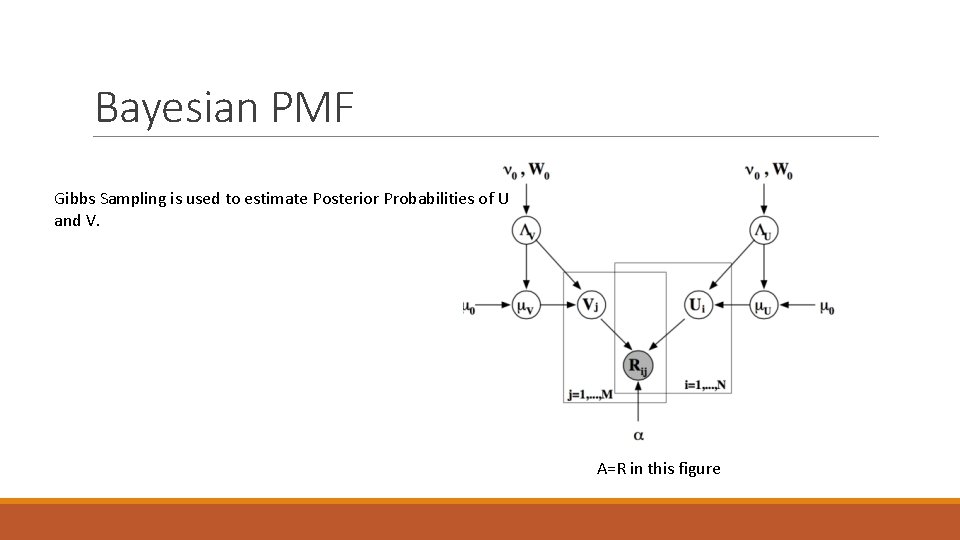

Bayesian PMF
Gibbs sampling is used to estimate the posterior distributions of U and V.
Note: the figure denotes the preference matrix A as R.
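To make the sampling step concrete, here is a minimal sketch of a Gibbs sampler for matrix factorization with Gaussian priors. It is a simplification, not the implementation of [2]: the full BPMF model also places hyperpriors on the prior parameters, whereas this sketch fixes them. The names `gibbs_pmf` and `sample_factors`, the rating precision `alpha`, and the prior precision `lam` are all assumptions made for illustration. Latent vectors are stored as rows, so the prediction is written `U @ V.T` rather than U^T V.

```python
import numpy as np

def sample_factors(ratings, mask, other, alpha, lam, rng):
    """Sample one latent vector per row from its Gaussian conditional posterior."""
    n_rows, rank = ratings.shape[0], other.shape[1]
    out = np.empty((n_rows, rank))
    for i in range(n_rows):
        obs = mask[i]                       # which entries of row i are known
        F = other[obs]                      # latent vectors of the observed partners
        precision = lam * np.eye(rank) + alpha * F.T @ F
        cov = np.linalg.inv(precision)
        cov = (cov + cov.T) / 2.0           # guard against tiny numerical asymmetry
        mean = cov @ (alpha * F.T @ ratings[i, obs])
        out[i] = rng.multivariate_normal(mean, cov)
    return out

def gibbs_pmf(ratings, mask, rank=2, alpha=2.0, lam=1.0,
              n_samples=200, burn_in=50, seed=0):
    """Average U @ V.T over Gibbs samples (hyperparameters fixed: an assumption)."""
    rng = np.random.default_rng(seed)
    n_papers, n_reviewers = ratings.shape
    U = rng.normal(scale=0.1, size=(n_papers, rank))
    V = rng.normal(scale=0.1, size=(n_reviewers, rank))
    pred_sum = np.zeros(ratings.shape)
    for t in range(burn_in + n_samples):
        # Alternate: papers given reviewers, then reviewers given papers.
        U = sample_factors(ratings, mask, V, alpha, lam, rng)
        V = sample_factors(ratings.T, mask.T, U, alpha, lam, rng)
        if t >= burn_in:
            pred_sum += U @ V.T
    return pred_sum / n_samples
```

The returned matrix plays the role of the filled preferences A': observed and unobserved entries alike receive a posterior-mean estimate. Only entries where `mask` is true influence the samples.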


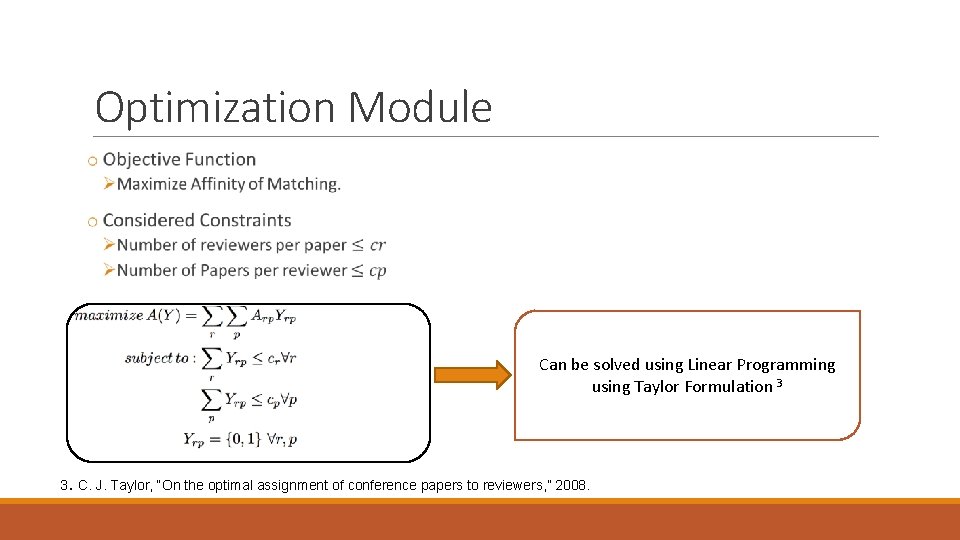

Optimization Module
The matching can be solved with linear programming, using Taylor's formulation [3].
[3] C. J. Taylor, "On the optimal assignment of conference papers to reviewers," 2008.

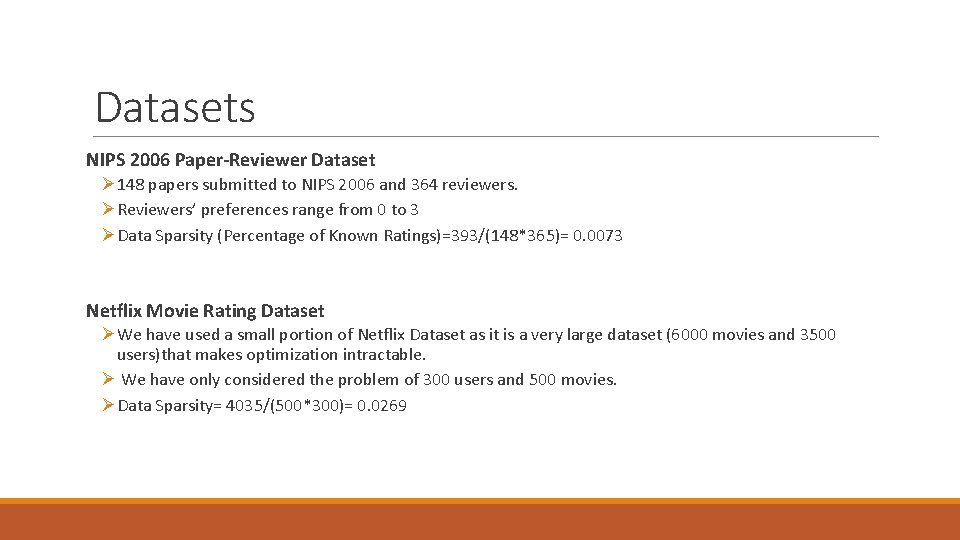
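The linear program can be sketched as follows. The slide text does not spell out the constraints, so this sketch assumes a common form: every paper receives exactly `reviewers_per_paper` reviewers, and no reviewer receives more than `max_load` papers. The function name `match_papers` and both parameter names are hypothetical. The solver call is `scipy.optimize.linprog`, which minimizes, so the affinities are negated.

```python
import numpy as np
from scipy.optimize import linprog

def match_papers(affinity, reviewers_per_paper, max_load):
    """Maximize total affinity subject to assumed paper and reviewer constraints."""
    affinity = np.asarray(affinity, dtype=float)
    n_papers, n_reviewers = affinity.shape

    # Variable y[i, j] is flattened to index i * n_reviewers + j.
    cost = -affinity.ravel()

    # Each paper i: sum_j y[i, j] == reviewers_per_paper.
    A_eq = np.kron(np.eye(n_papers), np.ones((1, n_reviewers)))
    b_eq = np.full(n_papers, reviewers_per_paper)

    # Each reviewer j: sum_i y[i, j] <= max_load.
    A_ub = np.kron(np.ones((1, n_papers)), np.eye(n_reviewers))
    b_ub = np.full(n_reviewers, max_load)

    result = linprog(cost, A_ub=A_ub, b_ub=b_ub, A_eq=A_eq, b_eq=b_eq,
                     bounds=(0, 1), method="highs")
    if not result.success:
        raise ValueError(result.message)

    # Round to absorb solver tolerance before casting to 0/1 integers.
    return np.rint(result.x).reshape(n_papers, n_reviewers).astype(int)
```

The relaxation to 0 <= y <= 1 is what keeps this a linear program rather than an integer program. For constraints of this assignment type the constraint matrix is totally unimodular, so a vertex solution is already integral and the rounding only removes floating-point noise.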

Datasets
NIPS 2006 Paper-Reviewer Dataset
Ø 148 papers submitted to NIPS 2006 and 364 reviewers.
Ø Reviewers' preferences range from 0 to 3.
Ø Data sparsity (fraction of known ratings) = 393/(148*365) = 0.0073
Netflix Movie Rating Dataset
Ø We use a small portion of the Netflix dataset, because it is a very large dataset (6000 movies and 3500 users), which makes the optimization intractable.
Ø We consider only a subproblem with 300 users and 500 movies.
Ø Data sparsity = 4035/(500*300) = 0.0269

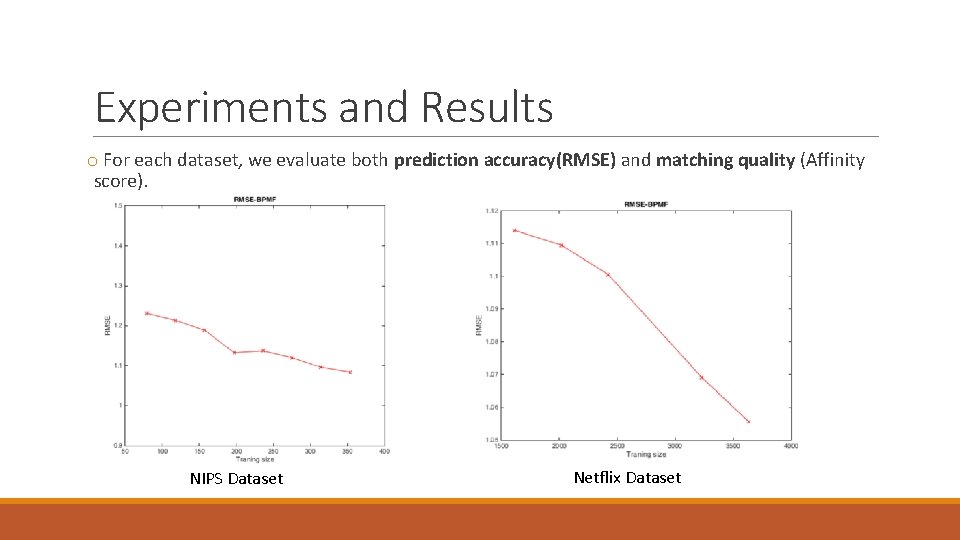
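The sparsity figures can be checked with a few lines of arithmetic. The helper name `known_fraction` is hypothetical, and the inputs are the numbers exactly as written in the formulas on the slide.

```python
def known_fraction(n_known, n_rows, n_cols):
    """Fraction of matrix entries that have a known rating."""
    return n_known / (n_rows * n_cols)

# Values as they appear in the slide's formulas.
nips = known_fraction(393, 148, 365)
netflix = known_fraction(4035, 500, 300)

print(f"NIPS:    {nips:.4f}")
print(f"Netflix: {netflix:.4f}")
```

In both datasets fewer than 3% of the entries are known, which is why the prediction module has to fill in the preference matrix before any matching is computed.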

Experiments and Results
o For each dataset, we evaluate both prediction accuracy (RMSE) and matching quality (affinity score).
Figure panels: NIPS Dataset, Netflix Dataset

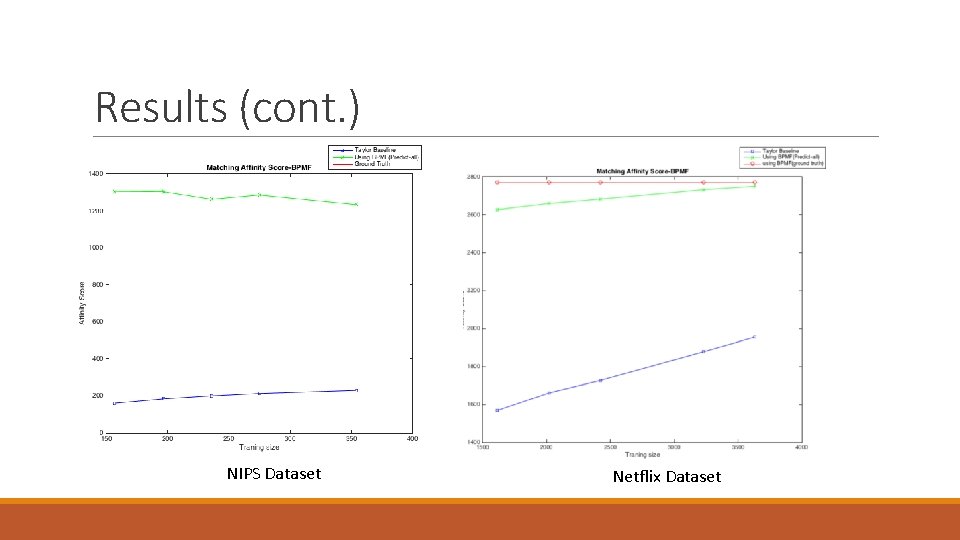
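The two evaluation measures can be sketched as below. RMSE is standard. The slides do not define the affinity score, so this sketch assumes it is the sum of affinities over the matched pairs. The function names and the `test_mask` argument (marking held-out entries) are hypothetical.

```python
import numpy as np

def rmse(predicted, actual, test_mask):
    """Root mean squared error over the entries selected by test_mask."""
    diff = (np.asarray(predicted, dtype=float) - np.asarray(actual, dtype=float))[test_mask]
    return float(np.sqrt(np.mean(diff ** 2)))

def affinity_score(affinity, matching):
    """Total affinity of the matched pairs (assumed definition)."""
    return float(np.sum(np.asarray(affinity, dtype=float) * np.asarray(matching)))

predicted = np.array([[3.0, 1.0], [0.0, 2.0]])
actual = np.array([[2.0, 1.0], [0.0, 4.0]])
held_out = np.array([[True, False], [False, True]])
matching = np.array([[1, 0], [0, 1]])

print(rmse(predicted, actual, held_out))   # error on held-out ratings only
print(affinity_score(actual, matching))    # matching quality against true ratings
```

Scoring a matching against the true ratings rather than the predicted ones is a design choice in this sketch: it measures how well a matching computed from A' serves the reviewers' actual preferences.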

Results (cont.)
Figure panels: NIPS Dataset, Netflix Dataset

Extension
Develop active learning strategies for rating elicitation to enhance matching quality. We aim to select the most useful pairs for the matching process (November Rotation).

Thank you
