OceanStore: An Architecture for Global-Scale Persistent Storage

OceanStore: An Architecture for Global-Scale Persistent Storage. John Kubiatowicz, et al. ASPLOS 2000

OceanStore
- 10^10 users, each with 10,000 files
- Global-scale information storage.
- Mobile access to information in a uniform and highly available way.
- Servers are untrusted.
- Caches data anywhere, anytime.
- Monitors usage patterns.

![OceanStore [Rhea et al. 2003]](http://slidetodoc.com/presentation_image_h2/9d4f92e474f944acf3f6d5a925107ba8/image-3.jpg)

OceanStore [Rhea et al. 2003]

Main Goals
- Untrusted infrastructure
- Nomadic data

Example applications
- Groupware and PIM
- Email
- Digital libraries
- Scientific data repository
- PIM: personal information management tools (calendars, emails, contact lists)

Example applications
- Groupware and PIM
- Email
- Digital libraries
- Scientific data repository
- Challenges: scaling, consistency, migration, network failures

Storage Organization
- OceanStore data object ~= file
- Ordered sequence of read-only versions
  - Every version of every object kept forever
  - Can be used as backup
- An object contains metadata and references to previous versions

Storage Organization
- A stream of objects identified by an AGUID
  - Active globally-unique identifier
  - Cryptographically secure hash of an application-specific name and the owner’s public key
  - Prevents namespace collisions
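
The AGUID construction above can be sketched in a few lines. This is only an illustration: the choice of SHA-256, the length-prefixed encoding, and the function name `aguid` are assumptions, and the keys are placeholder byte strings rather than real public keys.

```python
import hashlib

def aguid(app_name: str, owner_public_key: bytes) -> str:
    """Hash an application-specific name together with the owner's public key.

    SHA-256 and the encoding below are assumptions made for this sketch.
    """
    name = app_name.encode("utf-8")
    h = hashlib.sha256()
    # Length-prefix the name so that different (name, key) pairs can never
    # produce the same concatenated byte string.
    h.update(len(name).to_bytes(4, "big"))
    h.update(name)
    h.update(owner_public_key)
    return h.hexdigest()

# Placeholder keys for illustration only.
alice_key = b"alice-public-key-bytes"
bob_key = b"bob-public-key-bytes"

a = aguid("/home/notes.txt", alice_key)
b = aguid("/home/notes.txt", bob_key)
```

Two owners who pick the same application-level name still obtain different identifiers, which is the namespace-collision property the slide refers to.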

Storage Organization
- Each version of a data object is stored in a B-tree-like data structure
- Each block has a BGUID
  - Cryptographically secure hash of the block content
- Each version has a VGUID
- Two versions may share blocks
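
A minimal sketch of content-hashed blocks shows why two versions can share storage. Several simplifications are assumptions of this sketch: a single root block listing the data blocks stands in for the B-tree, the VGUID is taken to be the hash of that root block, and SHA-256 with a 4-byte block size is used purely for demonstration.

```python
import hashlib

BLOCK_SIZE = 4  # unrealistically small, for demonstration only

def guid(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

class BlockStore:
    def __init__(self):
        self.blocks = {}  # BGUID -> block content

    def put(self, content: bytes) -> str:
        bguid = guid(content)
        # Identical content always maps to the same BGUID, so it is stored once.
        self.blocks[bguid] = content
        return bguid

def write_version(store: BlockStore, data: bytes):
    """Store one read-only version; return (VGUID, list of data-block BGUIDs)."""
    bguids = [store.put(data[i:i + BLOCK_SIZE])
              for i in range(0, len(data), BLOCK_SIZE)]
    # Assumption: one root block naming the data blocks replaces the B-tree.
    root = "\n".join(bguids).encode("ascii")
    vguid = store.put(root)
    return vguid, bguids

store = BlockStore()
v1, blocks1 = write_version(store, b"aaaabbbbcccc")
v2, blocks2 = write_version(store, b"aaaabbbbdddd")
shared = set(blocks1) & set(blocks2)
```

Here the second version changes only its last block, so the store ends up with four data blocks and two root blocks instead of six data blocks.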

![Storage Organization [Rhea et al. 2003]](http://slidetodoc.com/presentation_image_h2/9d4f92e474f944acf3f6d5a925107ba8/image-10.jpg)

Storage Organization [Rhea et al. 2003]

Access Control
- Restricting readers:
  - Symmetric encryption key distributed to allowed readers.
- Restricting writers:
  - ACL.
  - Signed writes.
  - ACL for object chosen with signed certificate.

Location and Routing
- Attenuated Bloom filters
- Figure: example query, find 11010

Location and Routing
- Plaxton-like trees

Updating data
- All data is encrypted.
- A set of predicates is evaluated in order.
- The actions of the earliest true predicate are applied.
- The update is logged whether it commits or aborts.
- Predicates:
  - compare-version, compare-block, compare-size, search
- Actions:
  - replace-block, insert-block, delete-block, append

Application-Specific Consistency
- An update is the operation of adding a new version to the head of a version stream
- Updates are applied atomically
- Represented as an array of potential actions
  - Each guarded by a predicate

Application-Specific Consistency
- Example actions
  - Replacing some bytes
  - Appending new data to an object
  - Truncating an object
- Example predicates
  - Check for the latest version number
  - Compare bytes
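
The predicate-guarded update model can be sketched as follows. It is a simplified, single-process illustration: the names `Version`, `apply_update`, `compare_version`, `always`, `append`, and `replace_bytes` are hypothetical, and encryption, logging, and replication are left out.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

@dataclass(frozen=True)
class Version:          # read-only once created
    number: int
    data: bytes

Predicate = Callable[[Version], bool]
Action = Callable[[bytes], bytes]

def compare_version(expected: int) -> Predicate:
    return lambda v: v.number == expected

def always() -> Predicate:
    return lambda v: True

def append(extra: bytes) -> Action:
    return lambda data: data + extra

def replace_bytes(offset: int, new: bytes) -> Action:
    return lambda data: data[:offset] + new + data[offset + len(new):]

def apply_update(stream: List[Version],
                 update: List[Tuple[Predicate, List[Action]]]) -> bool:
    """Apply the actions of the earliest true predicate; return True on commit."""
    head = stream[-1]
    for predicate, actions in update:
        if predicate(head):
            data = head.data
            for action in actions:
                data = action(data)
            # Older versions are never modified; the result becomes the new head.
            stream.append(Version(head.number + 1, data))
            return True
    return False  # no predicate held, so the update aborts

stream = [Version(0, b"hello")]
ok1 = apply_update(stream, [(compare_version(0), [append(b" world")])])
ok2 = apply_update(stream, [(compare_version(0), [append(b"!")])])  # stale version
ok3 = apply_update(stream, [(always(), [replace_bytes(0, b"J")])])
```

The second update aborts because its predicate names a version that is no longer the head, which is how a compare-version guard gives optimistic concurrency without locks or leases.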

Application-Specific Consistency
- To implement ACID semantics
  - Check for readers
  - If none, update
- Append to a mailbox
  - No checking
- No explicit locks or leases

Application-Specific Consistency
- Predicates for reads
- Examples
  - Can’t read something older than 30 seconds
  - Can only read data from a specific time frame

Replication and Consistency
- A data object is a sequence of read-only versions, consisting of read-only blocks, named by BGUIDs
  - No issues for replication
- The mapping from AGUID to the latest VGUID may change
  - Use primary-copy replication

Serializing updates
- A small primary tier of replicas runs a Byzantine agreement protocol.
- A secondary tier of replicas optimistically propagates the update using an epidemic protocol.
- The ordering from the primary tier is multicast to the secondary replicas.

The Full Update Path

Deep Archival Storage
- Data is fragmented.
- Each fragment is an object.
- Erasure coding is used to increase reliability.

Introspection
- Figure labels: observation, optimization, computation
- Uses:
  - Cluster recognition
  - Replica management
  - Other uses

Software Architecture
- Java atop the Staged Event-Driven Architecture (SEDA)
  - Each subsystem is implemented as a stage
  - Each stage has its own state and thread pool
  - Stages communicate through events
- 50,000 semicolons by five graduate students and many undergrad interns
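
The staged structure can be illustrated with a small sketch. Python is used here only for brevity (the system itself is written in Java), and the `Stage` class below is a hypothetical stand-in, not the SEDA API: each stage owns an event queue and a few worker threads, and hands its results to the next stage as events.

```python
import queue
import threading

class Stage:
    """A stage with its own event queue and thread pool (hypothetical sketch)."""

    def __init__(self, name, handler, num_threads=2):
        self.name = name
        self.handler = handler
        self.events = queue.Queue()
        self.next_stage = None
        for _ in range(num_threads):
            threading.Thread(target=self._run, daemon=True).start()

    def enqueue(self, event):
        self.events.put(event)

    def _run(self):
        while True:
            event = self.events.get()
            result = self.handler(event)
            if self.next_stage is not None:
                self.next_stage.enqueue(result)  # stages talk only via events
            self.events.task_done()

results = []
upper = Stage("upper", str.upper)
sink = Stage("sink", results.append, num_threads=1)
upper.next_stage = sink

for word in ["a", "b", "c"]:
    upper.enqueue(word)

upper.events.join()  # every event has been handled and forwarded
sink.events.join()   # every forwarded event has been collected
```

Because the first stage runs two threads, the order in which results arrive at the sink is not fixed; only the set of results is.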

Software Architecture

Language Choice
- Java: speed of development
  - Strongly typed
  - Garbage collected
  - Reduced debugging time
  - Support for events
- Easy to port multithreaded code in Java
  - Ported to Windows 2000 in one week

Language Choice
- Problems with Java:
  - Unpredictability introduced by garbage collection
  - Every thread in the system is halted while the garbage collector runs
  - Any ongoing process stalls for ~100 milliseconds
  - May add several seconds to requests that travel across machines

Experimental Setup
- Two test beds
- Local cluster of 42 machines at Berkeley
  - Each with two 1.0 GHz Pentium III processors
  - 1.5 GB PC133 SDRAM
  - Two 36 GB hard drives, RAID 0
  - Gigabit Ethernet adaptor
  - Linux 2.4.18 SMP

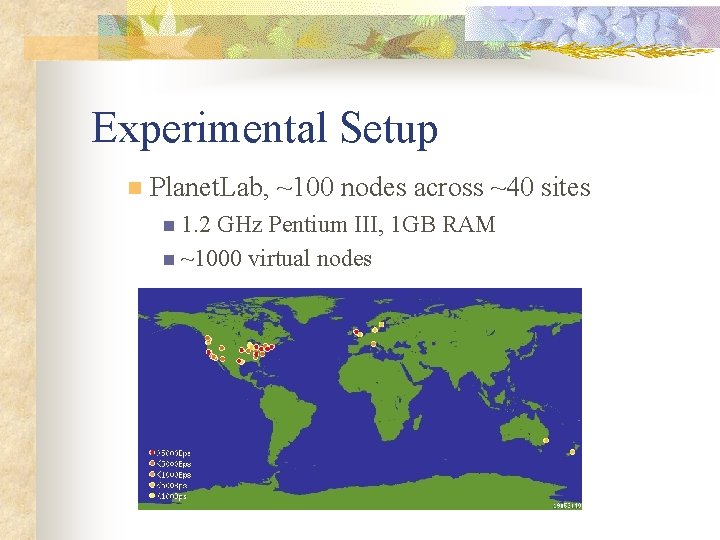

Experimental Setup
- PlanetLab, ~100 nodes across ~40 sites
  - 1.2 GHz Pentium III, 1 GB RAM
  - ~1000 virtual nodes

Storage Overhead
- For 32 choose 16 erasure encoding
  - 2.7x for data > 8 KB
- For 64 choose 16 erasure encoding
  - 4.8x for data > 8 KB
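
As a rough check on these figures, assume that "n choose m" means any m of the n fragments are enough to reconstruct the data. Under that assumption the raw expansion of the code alone is n/m:

$$
\frac{n}{m} = \frac{32}{16} = 2, \qquad \frac{n}{m} = \frac{64}{16} = 4 .
$$

The reported 2.7x and 4.8x are then 0.7 and 0.8 above the raw expansion. The slide does not break down where the additional storage goes.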

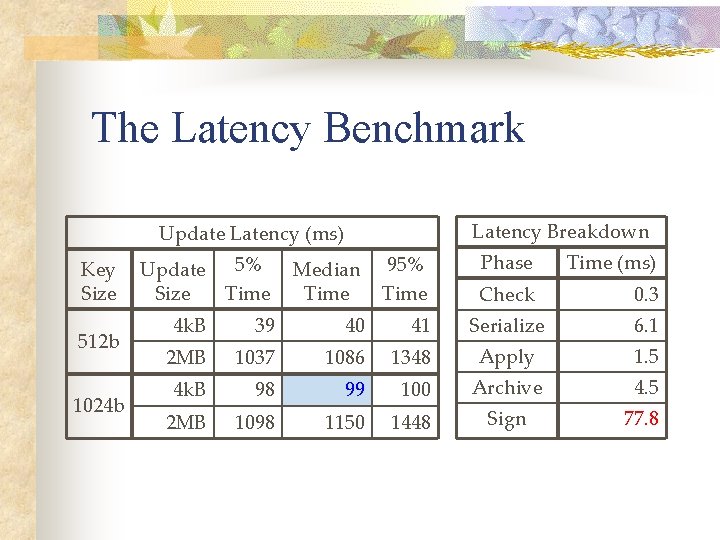

The Latency Benchmark
- A single client submits updates of various sizes to a four-node inner ring
- Metric: time from just before the request is signed until the signature over the result has been checked
- Update 40 MB of data over 1000 updates, with 100 ms between updates

The Latency Benchmark

Update Latency (ms)

| Key Size | Update Size | 5% Time | Median Time | 95% Time |
|---|---|---|---|---|
| 512 b | 4 kB | 39 | 40 | 41 |
| 512 b | 2 MB | 1037 | 1086 | 1348 |
| 1024 b | 4 kB | 98 | 99 | 100 |
| 1024 b | 2 MB | 1098 | 1150 | 1448 |

Latency Breakdown

| Phase | Time (ms) |
|---|---|
| Check | 0.3 |
| Serialize | 6.1 |
| Apply | 1.5 |
| Archive | 4.5 |
| Sign | 77.8 |

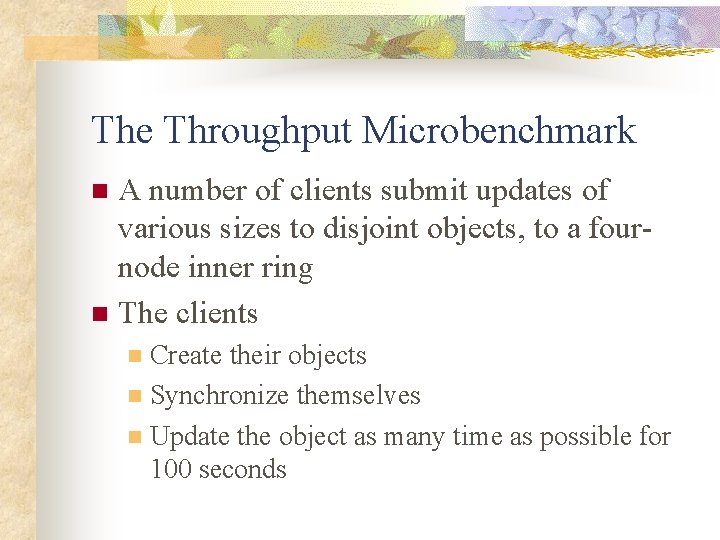

The Throughput Microbenchmark
- A number of clients submit updates of various sizes to disjoint objects, to a four-node inner ring
- The clients
  - Create their objects
  - Synchronize themselves
  - Update the object as many times as possible for 100 seconds

The Throughput Microbenchmark

Archive Retrieval Performance
- Populate the archive by submitting updates of various sizes to a four-node inner ring
- Delete all copies of the data in its reconstructed form
- A single client submits reads

Archive Retrieval Performance
- Throughput:
  - 1.19 MB/s (PlanetLab)
  - 2.59 MB/s (local cluster)
- Latency
  - ~30-70 milliseconds

The Stream Benchmark
- Ran 500 virtual nodes on PlanetLab
  - Inner ring in SF Bay Area
  - Replicas clustered in 7 largest PlanetLab sites
- Streams updates to all replicas
  - One writer (content creator) repeatedly appends to data object
  - Others read new versions as they arrive
  - Measure network resource consumption

The Stream Benchmark

The Tag Benchmark
- Measures the latency of token passing
- OceanStore 2.2 times slower than TCP/IP

The Andrew Benchmark
- File system benchmark
- 4.6x faster than NFS in read-intensive phases
- 7.3x slower in write-intensive phases

Bloom Filters [Koloniari and Pitoura]
- Compact data structures for a probabilistic representation of a set
- Appropriate to answer membership queries

Bloom Filters (cont’d)
- Query for b: check the bits at positions H1(b), H2(b), ..., H4(b).
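
The query rule above can be made concrete with a minimal Bloom filter. The bit-array size, the way the four hash functions are derived from SHA-256, and the class name are assumptions of this sketch.

```python
import hashlib

class BloomFilter:
    def __init__(self, num_bits: int = 64, num_hashes: int = 4):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = [False] * num_bits

    def _positions(self, item: str):
        # H1(item) .. Hk(item): one hash function salted with the index i.
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{item}".encode("utf-8")).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def add(self, item: str) -> None:
        for pos in self._positions(item):
            self.bits[pos] = True

    def might_contain(self, item: str) -> bool:
        # False means definitely absent; True means present or a false positive.
        return all(self.bits[pos] for pos in self._positions(item))

bf = BloomFilter()
for object_id in ["11010", "00111"]:
    bf.add(object_id)

present = bf.might_contain("11010")  # added items are always reported
```

A query can return a false positive but never a false negative, which is why the structure suits membership tests where an occasional wasted lookup is acceptable.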

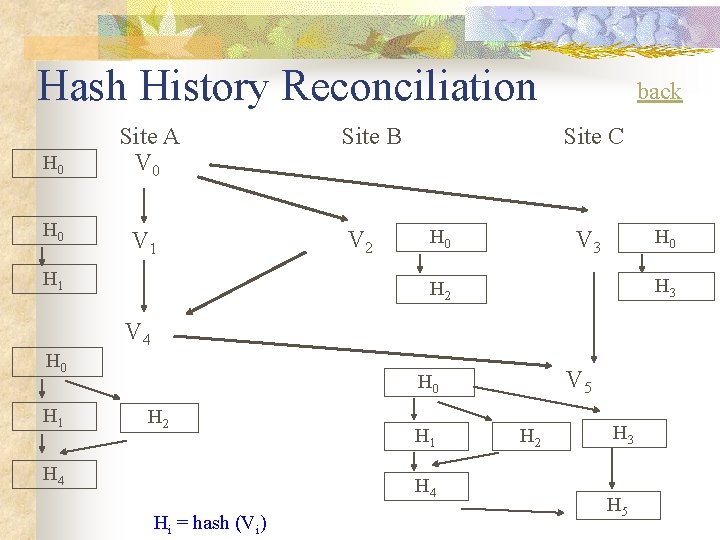

Pair-Wise Reconciliation [Kang et al. 2003]
- Site A: V0: (x0), write x, V1: (x1)
- Site B: V0: (x0), write x, V2: (x2)
- Site C: V0: (x0), write x, V3: (x3)
- Later versions in the figure: V4: (x4), V5: (x5)

Hash History Reconciliation
- Hi = hash(Vi)
- Figure: Sites A, B, and C hold versions V0 to V5; each version is annotated with its hash history, drawn from H0 to H5
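
One way to read the figure is that each site keeps the hashes of all versions its current version descends from, and two sites compare by checking whether one head hash appears in the other's history. The sketch below follows that reading. The `Replica`, `compare`, and `merge` names are hypothetical, SHA-256 is an assumed hash, and the case where a later write repeats earlier content is not handled.

```python
import hashlib

def h(content: bytes) -> str:
    return hashlib.sha256(content).hexdigest()

class Replica:
    def __init__(self, content: bytes):
        self.content = content
        self.head = h(content)
        self.history = {self.head: []}  # version hash -> parent hashes

    def write(self, content: bytes) -> None:
        new = h(content)
        self.history[new] = [self.head]
        self.content, self.head = content, new

def compare(a: Replica, b: Replica) -> str:
    if a.head == b.head:
        return "equal"
    if b.head in a.history:
        return "a dominates"
    if a.head in b.history:
        return "b dominates"
    return "conflict"

def merge(a: Replica, b: Replica, merged: bytes) -> None:
    """Reconcile two replicas: the merged version has both heads as parents."""
    new = h(merged)
    history = {**a.history, **b.history, new: [a.head, b.head]}
    for r in (a, b):
        r.history, r.content, r.head = dict(history), merged, new

site_a, site_b, site_c = Replica(b"x0"), Replica(b"x0"), Replica(b"x0")
site_a.write(b"x1")
site_b.write(b"x2")
site_c.write(b"x3")

before = compare(site_a, site_b)  # concurrent writes
merge(site_a, site_b, b"x4")      # V4: history H0, H1, H2, H4
merge(site_a, site_c, b"x5")      # V5: history H0 .. H5
after = compare(site_a, site_b)   # B still holds V4
```

After the two merges, site A's history contains all six hashes while site B, which was not part of the second merge, still holds the four-hash history of V4.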

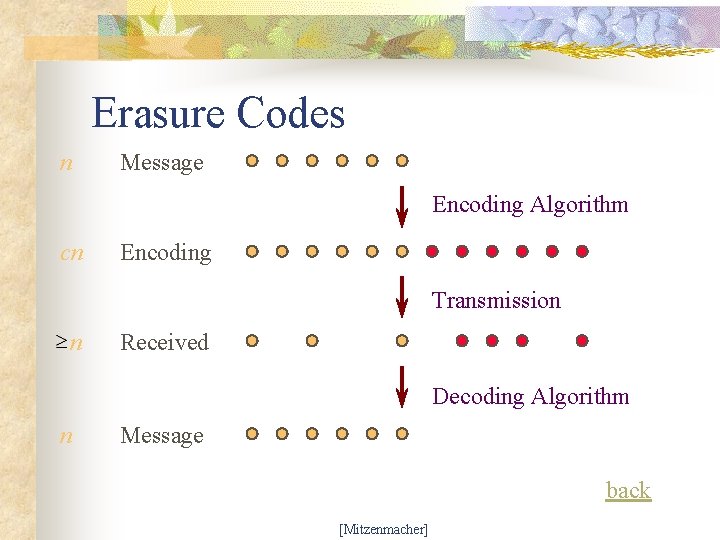

Erasure Codes [Mitzenmacher]
- Message: n blocks
- Encoding algorithm produces cn blocks
- Transmission: n blocks are received
- Decoding algorithm recovers the n-block message
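
The encode, lose, decode cycle can be demonstrated with a small Reed-Solomon-style polynomial code over the prime field GF(257). This particular code is chosen only for illustration; the slides do not say which erasure code is used. The function names are hypothetical, each "block" is a single byte, and the total number of fragments must not exceed 257.

```python
P = 257  # smallest prime above 255, so every byte value is a field element

def _interpolate(points, x):
    """Evaluate at x the unique polynomial of degree < len(points)
    passing through the given (xi, yi) points, with arithmetic mod P."""
    total = 0
    for j, (xj, yj) in enumerate(points):
        num, den = 1, 1
        for k, (xk, _) in enumerate(points):
            if k != j:
                num = num * (x - xk) % P
                den = den * (xj - xk) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+).
        total = (total + yj * num * pow(den, -1, P)) % P
    return total

def encode(message: bytes, total: int):
    """Turn n message symbols into `total` fragments (index, value).
    The first n fragments carry the message itself."""
    points = list(enumerate(message))
    return [(x, _interpolate(points, x)) for x in range(total)]

def decode(fragments, n: int) -> bytes:
    """Recover the n-symbol message from any n distinct fragments."""
    points = fragments[:n]
    return bytes(_interpolate(points, x) for x in range(n))

message = b"ocean"                    # n = 5
fragments = encode(message, 10)       # cn = 10, so c = 2
survivors = [fragments[i] for i in (1, 3, 6, 8, 9)]  # half are lost
recovered = decode(survivors, len(message))
```

Any five of the ten fragments determine the same degree-4 polynomial, so the message survives the loss of any five fragments.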
