
Observations for Model Intercomparisons (obs4MIPs) in support of the Coupled Model Intercomparison Project Phase 6 (CMIP6)
Tsengdar Lee, Ph.D.
With inputs from Duane Waliser, Karl Taylor, Veronika Eyring and Peter Gleckler
May 27, 2014
National Central University, Jhongli, Taiwan

Outline
Background, Motivation & Plans
1) obs4MIPs: Evolution, Status and Plans
2) obs4MIPs: Standards, Conventions and Infrastructure
3) CMIP Phase 6 Planning
4) Benchmarking Climate Model Performance
Ref: http://dx.doi.org/10.1175/BAMS-D-12-00204.1
http://www.earthsystemcog.org/projects/obs4mips

Initial Sentiments Behind obs4MIPs
Under-Exploited Observations for Model Evaluation
• How to bring as much observational scrutiny as possible to the CMIP/IPCC process?
• How to best utilize the wealth of satellite observations for the CMIP/IPCC process?
Courtesy of Duane Waliser

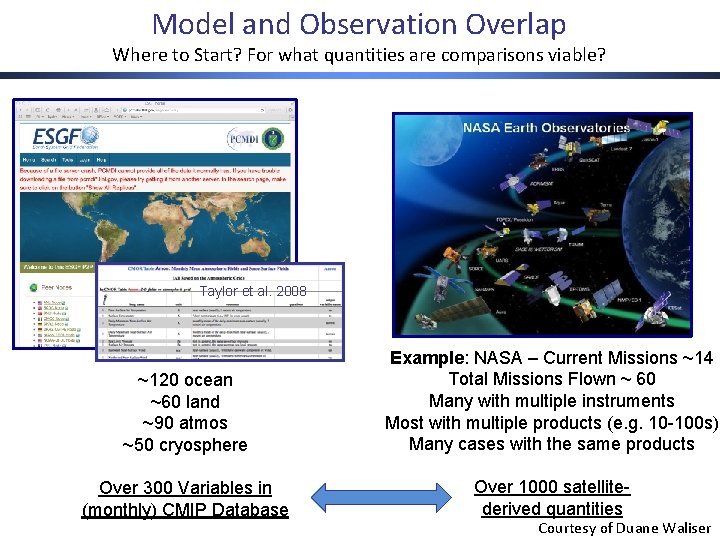

Model and Observation Overlap: Where to Start?
For what quantities are comparisons viable?
• Over 300 variables in the (monthly) CMIP database (Taylor et al. 2008): ~120 ocean, ~60 land, ~90 atmosphere, ~50 cryosphere
• Over 1000 satellite-derived quantities. Example, NASA:
  – ~14 current missions; ~60 total missions flown
  – Many with multiple instruments
  – Most with multiple products (e.g., 10-100s)
  – Many cases with the same products
Courtesy of Duane Waliser

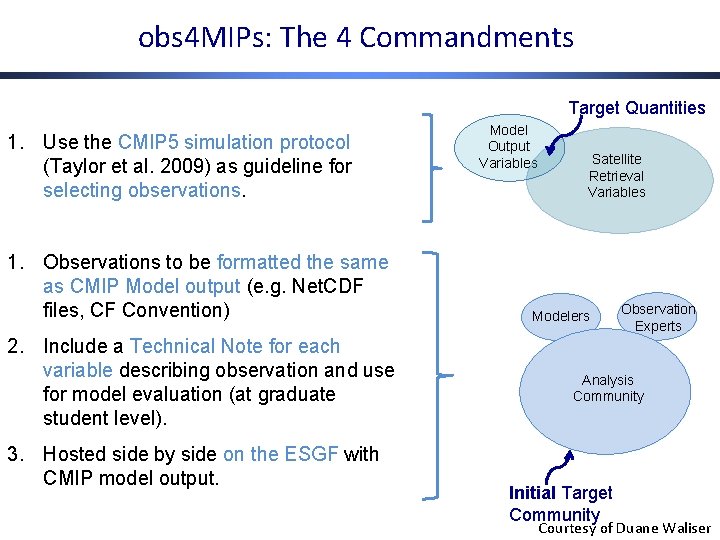

obs4MIPs: The 4 Commandments
1. Use the CMIP5 simulation protocol (Taylor et al. 2009) as a guideline for selecting observations.
2. Observations to be formatted the same as CMIP model output (e.g., NetCDF files, CF Conventions).
3. Include a Technical Note for each variable describing the observation and its use for model evaluation (at graduate-student level).
4. Host the observations side by side on the ESGF with CMIP model output.
Diagram labels: Target Quantities (Model Output Variables, Satellite Retrieval Variables); Initial Target Community (Modelers, Observation Experts, Analysis Community)
Courtesy of Duane Waliser
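To show what "formatted the same as CMIP model output" means at the file level, the sketch below emits a CDL header (the text form of a netCDF file used by the `ncdump` and `ncgen` tools) for a monthly (time, lat, lon) field carrying CF `standard_name` and `units` attributes. It uses only the Python standard library; the variable, grid size, time units and `Conventions` value are illustrative assumptions, not the obs4MIPs specification.

```python
def cdl_header(name, var, standard_name, units, nlat, nlon, global_attrs):
    """Return a CDL header (ncdump -h style) for a CF-style (time, lat, lon) variable.

    All argument values in the example below are illustrative assumptions.
    """
    lines = [
        f"netcdf {name} {{",
        "dimensions:",
        "\ttime = UNLIMITED ;",
        f"\tlat = {nlat} ;",
        f"\tlon = {nlon} ;",
        "variables:",
    ]
    # Coordinate variables carry CF standard names and units, as in CMIP model output.
    coords = [
        ("time", "time", "days since 1850-01-01"),  # assumed reference date
        ("lat", "latitude", "degrees_north"),
        ("lon", "longitude", "degrees_east"),
    ]
    for cname, cstd, cunits in coords:
        lines.append(f"\tdouble {cname}({cname}) ;")
        lines.append(f'\t\t{cname}:standard_name = "{cstd}" ;')
        lines.append(f'\t\t{cname}:units = "{cunits}" ;')
    # The data variable itself, dimensioned (time, lat, lon).
    lines.append(f"\tfloat {var}(time, lat, lon) ;")
    lines.append(f'\t\t{var}:standard_name = "{standard_name}" ;')
    lines.append(f'\t\t{var}:units = "{units}" ;')
    lines.append("")
    lines.append("// global attributes:")
    for key, value in global_attrs.items():
        lines.append(f'\t\t:{key} = "{value}" ;')
    lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(cdl_header("tas_obs_example", "tas", "air_temperature", "K", 2, 3,
                     {"Conventions": "CF-1.4", "institution": "hypothetical data center"}))
```

Saving the printed text as a `.cdl` file and running `ncgen -o out.nc file.cdl` would create an empty netCDF file with this structure; in practice CMIP data are written with CMOR, which sets these attributes itself.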

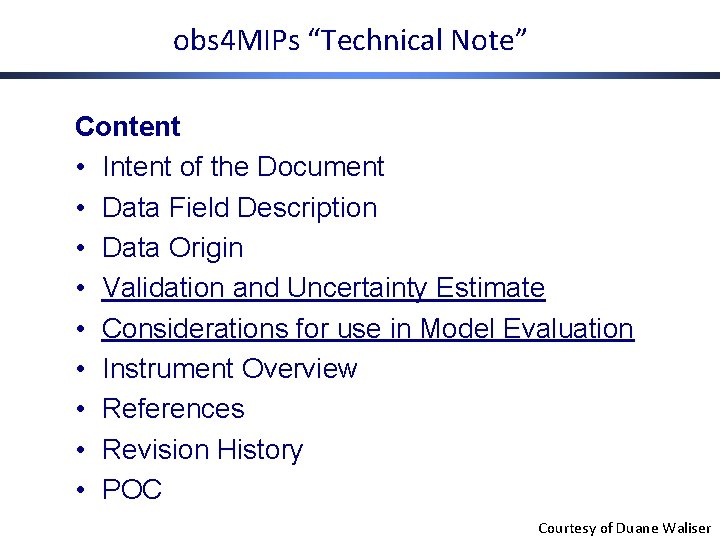

obs4MIPs “Technical Note” Content
• Intent of the Document
• Data Field Description
• Data Origin
• Validation and Uncertainty Estimate
• Considerations for use in Model Evaluation
• Instrument Overview
• References
• Revision History
• POC (point of contact)
Courtesy of Duane Waliser

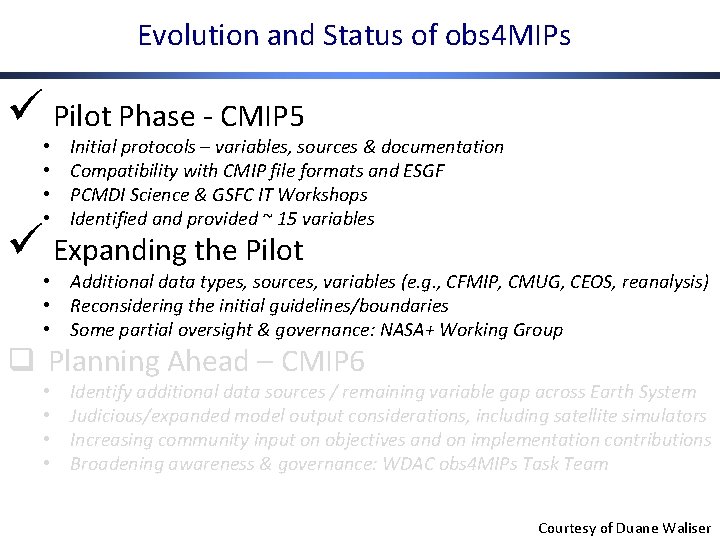

Evolution and Status of obs4MIPs
✓ Pilot Phase: CMIP5
  • Initial protocols: variables, sources & documentation
  • Compatibility with CMIP file formats and ESGF
  • PCMDI Science & GSFC IT Workshops
  • Identified and provided ~15 variables
✓ Expanding the Pilot
  • Additional data types, sources, variables (e.g., CFMIP, CMUG, CEOS, reanalysis)
  • Reconsidering the initial guidelines/boundaries
  • Some partial oversight & governance: NASA+ Working Group
☐ Planning Ahead: CMIP6
  • Identify additional data sources / remaining variable gaps across the Earth System
  • Judicious/expanded model output considerations, including satellite simulators
  • Increasing community input on objectives and on implementation contributions
  • Broadening awareness & governance: WDAC obs4MIPs Task Team
Courtesy of Duane Waliser

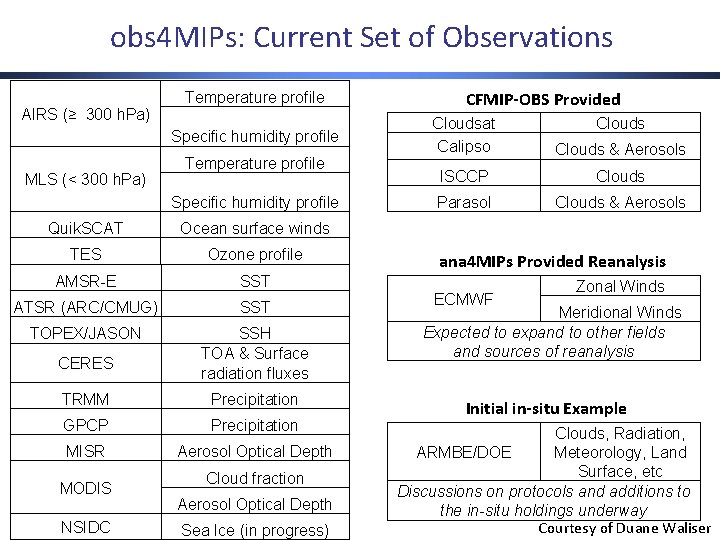

obs4MIPs: Current Set of Observations
• AIRS (≥ 300 hPa): temperature profile, specific humidity profile
• MLS (< 300 hPa): temperature profile, specific humidity profile
• QuikSCAT: ocean surface winds
• TES: ozone profile
• AMSR-E: SST
• ATSR (ARC/CMUG): SST
• TOPEX/JASON: SSH
• CERES: TOA & surface radiation fluxes
• TRMM: precipitation
• GPCP: precipitation
• MISR: aerosol optical depth
• MODIS: cloud fraction, aerosol optical depth
• NSIDC: sea ice (in progress)
CFMIP-OBS provided:
• Cloudsat, Calipso: clouds & aerosols
• ISCCP: clouds
• Parasol: clouds & aerosols
ana4MIPs provided (reanalysis):
• ECMWF: zonal winds, meridional winds
• Expected to expand to other fields and sources of reanalysis
Initial in-situ example:
• ARMBE/DOE: clouds, radiation, meteorology, land surface, etc.
• Discussions on protocols and additions to the in-situ holdings underway
Courtesy of Duane Waliser

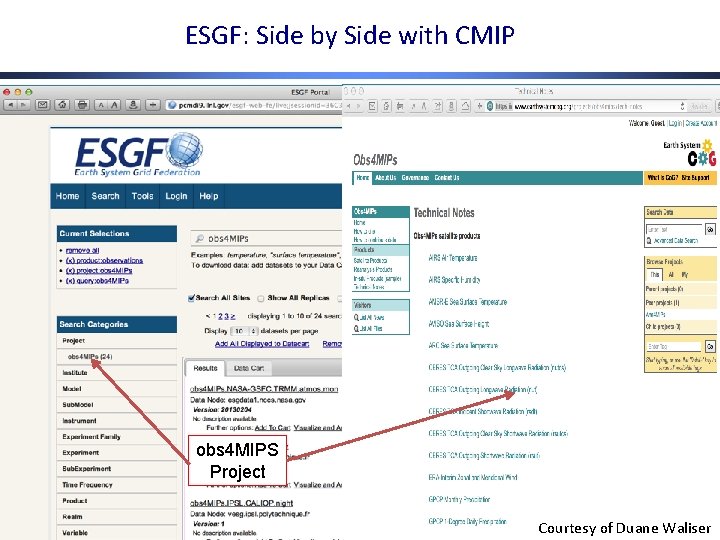

ESGF: Side by Side with CMIP
obs4MIPs Project
Courtesy of Duane Waliser

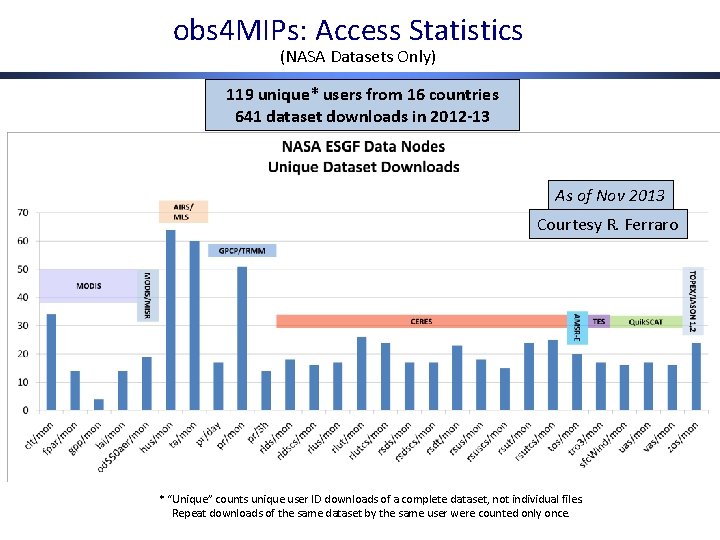

obs4MIPs: Access Statistics (NASA Datasets Only)
• 119 unique* users from 16 countries
• 641 dataset downloads in 2012-13
As of Nov 2013. Courtesy of R. Ferraro
* “Unique” counts unique user-ID downloads of a complete dataset, not of individual files. Repeat downloads of the same dataset by the same user were counted only once.
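The footnote's counting rule amounts to deduplicating over (user, dataset) pairs. A minimal sketch, assuming a hypothetical log in which each record is one completed download of a whole dataset (user ID, country, dataset ID); per-file records would first have to be collapsed into complete-dataset downloads:

```python
def access_stats(events):
    """Summarize download events under the "unique" counting rule.

    events: iterable of (user_id, country, dataset_id) tuples, one per completed
    download of a whole dataset (a hypothetical log format).
    """
    pairs = set()      # distinct (user, dataset) pairs; repeat downloads collapse here
    country_of = {}    # last country seen for each user
    for user, country, dataset in events:
        pairs.add((user, dataset))
        country_of[user] = country
    return {
        "unique_users": len(country_of),
        "countries": len(set(country_of.values())),
        "dataset_downloads": len(pairs),
    }


if __name__ == "__main__":
    log = [("u1", "US", "airs_ta"), ("u1", "US", "airs_ta"), ("u2", "TW", "airs_ta")]
    print(access_stats(log))  # {'unique_users': 2, 'countries': 2, 'dataset_downloads': 2}
```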

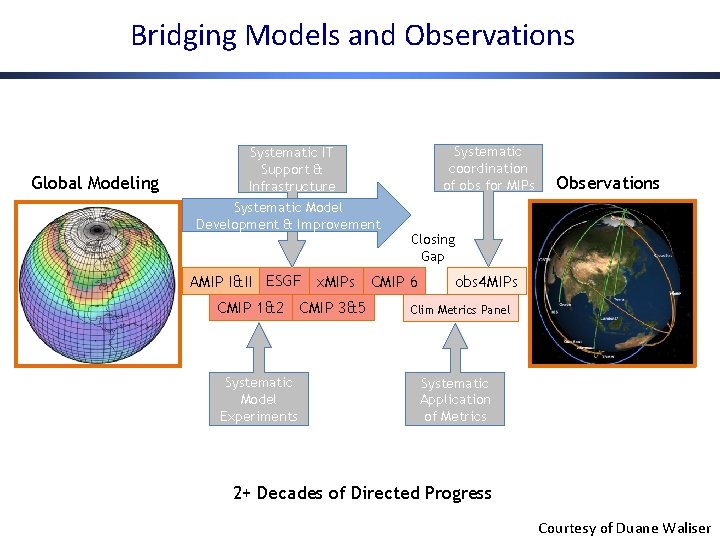

Bridging Models and Observations
Diagram labels: Global Modeling; Systematic Model Experiments (AMIP I&II, CMIP1&2, CMIP3&5, CMIP6, xMIPs); Systematic IT Support & Infrastructure (ESGF); Systematic Application of Metrics (Climate Metrics Panel); Systematic Coordination of Observations for MIPs (obs4MIPs); Observations; Closing Gap; Systematic Model Development & Improvement; 2+ Decades of Directed Progress
Courtesy of Duane Waliser

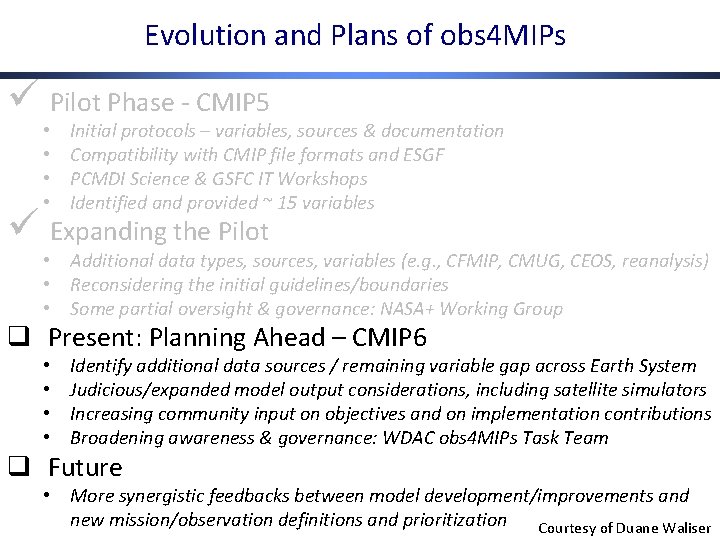

Evolution and Plans of obs4MIPs
✓ Pilot Phase: CMIP5
  • Initial protocols: variables, sources & documentation
  • Compatibility with CMIP file formats and ESGF
  • PCMDI Science & GSFC IT Workshops
  • Identified and provided ~15 variables
✓ Expanding the Pilot
  • Additional data types, sources, variables (e.g., CFMIP, CMUG, CEOS, reanalysis)
  • Reconsidering the initial guidelines/boundaries
  • Some partial oversight & governance: NASA+ Working Group
☐ Present: Planning Ahead for CMIP6
  • Identify additional data sources / remaining variable gaps across the Earth System
  • Judicious/expanded model output considerations, including satellite simulators
  • Increasing community input on objectives and on implementation contributions
  • Broadening awareness & governance: WDAC obs4MIPs Task Team
☐ Future
  • More synergistic feedbacks between model development/improvements and new mission/observation definitions and prioritization
Courtesy of Duane Waliser

2) obs4MIPs: Standards, Conventions and Infrastructure

Why data standards?
Standards facilitate discovery and use of data
• MIP standardization has increased steadily over more than 2 decades
• User community expanded from hundreds to 10,000
Standardization requires:
• Conventions and controlled vocabularies
• Tools enforcing or facilitating conformance
Standardization enables:
• ESG federated data archive
• Uniform methods of reading and interpreting data
• Automated methods and “smart” software to process data efficiently
Courtesy of Karl Taylor
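The need for "tools enforcing or facilitating conformance" can be made concrete with a toy checker. The sketch below tests a file's attributes, given as plain dictionaries, against a small required set. The required names are an illustrative assumption (CF lists `institution` and `source` among its file-description attributes, and the CMIP output requirements mandate many more), not the CMIP or obs4MIPs rule set.

```python
# Illustrative required attributes (an assumption, not the CMIP/obs4MIPs list).
REQUIRED_GLOBAL = ("Conventions", "institution", "source")
REQUIRED_PER_VARIABLE = ("standard_name", "units")


def check_conformance(global_attrs, variables):
    """Return a list of human-readable problems; an empty list means all checks passed.

    global_attrs: dict of file-level attributes.
    variables: dict mapping variable name -> dict of that variable's attributes.
    """
    problems = []
    for key in REQUIRED_GLOBAL:
        if key not in global_attrs:
            problems.append(f"missing global attribute '{key}'")
    # CF files declare the convention version, e.g. "CF-1.4".
    conventions = global_attrs.get("Conventions", "")
    if conventions and "CF-" not in conventions:
        problems.append(f"Conventions '{conventions}' does not name a CF version")
    for name, attrs in variables.items():
        for key in REQUIRED_PER_VARIABLE:
            if key not in attrs:
                problems.append(f"variable '{name}': missing attribute '{key}'")
    return problems
```

Dedicated CF compliance checkers go much further, for example verifying that units parse and that standard names appear in the CF standard-name table.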

What standards is obs4MIPs building on?
• netCDF – a set of software libraries and self-describing, machine-independent data formats for array-oriented scientific data; the netCDF-4 format stores data using HDF5 (www.unidata.ucar.edu/software/netcdf/)
• CF Conventions – providing a standardized description of the data contained in a file (cf-convention.github.io)
• Data Reference Syntax (DRS) – defining the vocabulary used to uniquely identify MIP datasets and to specify file and directory names (cmip-pcmdi.llnl.gov/cmip5/output_req.html)
• CMIP output requirements – specifying the data structure and metadata requirements for CMIP data (cmip-pcmdi.llnl.gov/cmip5/output_req.html)
• CMIP “standard output” list (cmip-pcmdi.llnl.gov/cmip5/output_req.html)
Courtesy of Karl Taylor
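Because the DRS fixes the order and vocabulary of file-name components, names can be built and parsed mechanically. The sketch below follows the CMIP5 model-output pattern `<variable>_<MIP table>_<model>_<experiment>_<ensemble member>[_<temporal subset>].nc`. obs4MIPs modifies this pattern, since fields such as model and experiment do not apply to observations, so treat it only as an illustration of the idea; the model name `MODEL-X` is hypothetical.

```python
import re

# CMIP5-style DRS file name:
# <variable>_<MIP table>_<model>_<experiment>_<ensemble member>[_<temporal subset>].nc
DRS_FILE = re.compile(
    r"^(?P<variable>[^_]+)_(?P<table>[^_]+)_(?P<model>[^_]+)_"
    r"(?P<experiment>[^_]+)_(?P<ensemble>r\d+i\d+p\d+)"
    r"(?:_(?P<period>\d+-\d+))?\.nc$"
)


def drs_filename(variable, table, model, experiment, ensemble, period=None):
    """Join DRS components with underscores and validate the result."""
    parts = [variable, table, model, experiment, ensemble]
    if period is not None:
        parts.append(period)
    name = "_".join(parts) + ".nc"
    if DRS_FILE.match(name) is None:
        raise ValueError(f"components do not form a valid DRS file name: {name}")
    return name


def parse_drs_filename(name):
    """Split a DRS file name back into its named components."""
    match = DRS_FILE.match(name)
    if match is None:
        raise ValueError(f"not a DRS file name: {name}")
    return match.groupdict()


if __name__ == "__main__":
    fname = drs_filename("tas", "Amon", "MODEL-X", "historical", "r1i1p1", "185001-200512")
    print(fname)  # tas_Amon_MODEL-X_historical_r1i1p1_185001-200512.nc
    print(parse_drs_filename(fname)["experiment"])  # historical
```

Underscores are reserved as separators, which is why a component containing one (for example a variable called `sea_ice`) is rejected.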

Standards governance and evolution
CF Conventions
• Governance board, standards committee, active grass-roots input
DRS
• PCMDI and international data centers (BADC, DKRZ)
• Extensions made by leaders of obs4MIPs, CORDEX, and SPECS
CMIP output requirements
• Specified by PCMDI (with community input)
CMIP standard output
• Coordinated by PCMDI, with community input and CMIP Panel oversight
Courtesy of Karl Taylor

The WCRP supports adoption and extension of the CMIP standards for all its projects/activities
The Working Group on Coupled Modelling (WGCM) mandates use of established infrastructure for all MIPs:
• Standards and conventions
• ESGF
The WGCM has established the WGCM Infrastructure Panel (WIP) to govern evolution of standards, including:
• CF metadata standards
• Specifications beyond CF guaranteeing fully self-describing and easy-to-use datasets (e.g., CMIP requirements for output)
• Catalog and software interface standards ensuring remote access to data, independent of local format (e.g., OPeNDAP, THREDDS)
• Node management and data publication protocols
• Defined dataset description schemes and controlled vocabularies (e.g., the DRS)
• Standards governing model and experiment documentation (e.g., CIM)
Courtesy of Karl Taylor

Immediate needs of obs4MIPs
• CMOR (Climate Model Output Rewriter) needs to be generalized to better handle observational data.
  – CMOR was developed to meet modeling needs
  – Some of the attributes written by CMOR don’t apply to observations (e.g., model name, experiment name)
• Modifications and extensions are needed for the DRS (to be proposed by the WDAC obs4MIPs Task Team to the WIP)
• A “recipe” for preparation of obs4MIPs datasets should be posted
• The CoG website needs to be modified to meet obs4MIPs requirements
Courtesy of Karl Taylor

obs4MIPs imperatives
• Align observational data with model output to facilitate analysis
  – Conform to data conventions followed in CMIP5
  – Serve data via ESGF
• Modify the DRS and the dataset global attributes
• Generalize CMOR software to provide an easy option for conforming data to obs4MIPs specifications
  – Also provide user-friendly examples
• Configure the CoG wiki to serve as the obs4MIPs website and portal to the data
• Review the CMIP standard output list for completeness
Courtesy of Karl Taylor

3) CMIP Phase 6 Planning

CMIP: Toward understanding past, present and future climate (organized by the WCRP Working Group on Coupled Modelling (WGCM))
Since 1995, the Coupled Model Intercomparison Project (CMIP) has coordinated climate model experiments involving multiple international modeling teams. CMIP has led to a better understanding of past, present and future climate change and variability. CMIP has developed in phases, and the simulations of the fifth phase, CMIP5, are now mostly completed. Although analyses of the CMIP5 data will continue for at least several more years, science gaps and outstanding science questions have prompted preparations for the sixth phase of the project (CMIP6). An initial proposal for the design of CMIP6 has been published to inform interested research communities and to encourage discussion and feedback for consideration in the evolving experiment design.
Meehl et al., Eos, 2014
Courtesy of Veronika Eyring

Preparing for Phase 6 of the Coupled Model Intercomparison Project (CMIP6)
• See updates on the CMIP Panel website: http://www.wcrp-climate.org/index.php/wgcm-cmip/about-cmip
• An initial proposal for the design of CMIP6 informs interested research communities and encourages discussion and feedback for consideration in the evolving experiment design. See details in Meehl, G. A., R. Moss, K. E. Taylor, V. Eyring, R. J. Stouffer, S. Bony and B. Stevens, Climate Model Intercomparisons: Preparing for the Next Phase, Eos Trans. AGU, 95(9), 77, 2014.
• Feedback on this initial CMIP6 proposal is being solicited over the year from modeling groups and model analysts. Please send comments to the CMIP Panel chair, Veronika Eyring (Veronika.Eyring@dlr.de), by September 2014.
• The WGCM and the CMIP Panel will then iterate on the proposed experiment design, with the intention of finalizing it at the WGCM meeting in October 2014.
• Based on the CMIP5 survey and the Aspen & WGCM/AIMES meetings.
Courtesy of Veronika Eyring

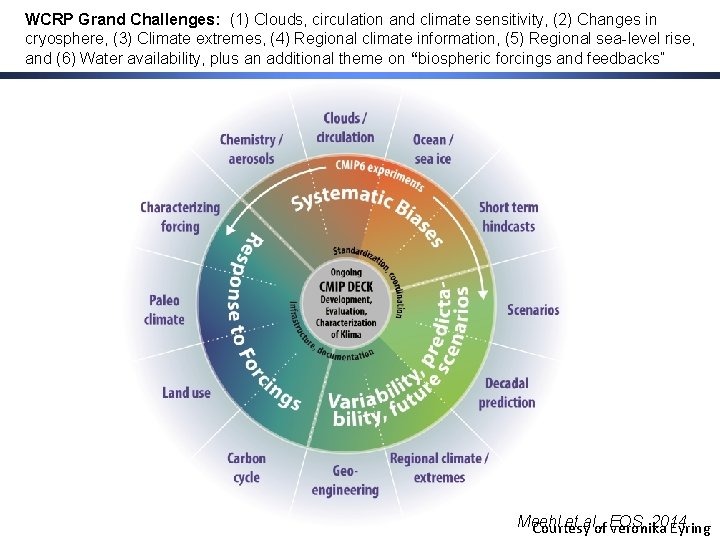

Initial CMIP6 Proposal: Scientific Focus
• The specific experimental design would be focused on three broad scientific questions:
  1. How does the Earth System respond to forcing?
  2. What are the origins and consequences of systematic model biases?
  3. How can we assess future climate changes given climate variability, predictability and uncertainties in scenarios?
  All relate to obs4MIPs.
• It is proposed to use as the scientific backdrop for CMIP6 the six WCRP Grand Challenges, and an additional theme encapsulating questions related to biospheric forcings and feedbacks:
  1. Clouds, Circulation and Climate Sensitivity
  2. Changes in Cryosphere
  3. Climate Extremes
  4. Regional Climate Information
  5. Regional Sea-level Rise
  6. Water Availability
  7. AIMES theme for collaboration: biospheric forcings and feedbacks
Meehl et al., Eos, 2014
Courtesy of Veronika Eyring

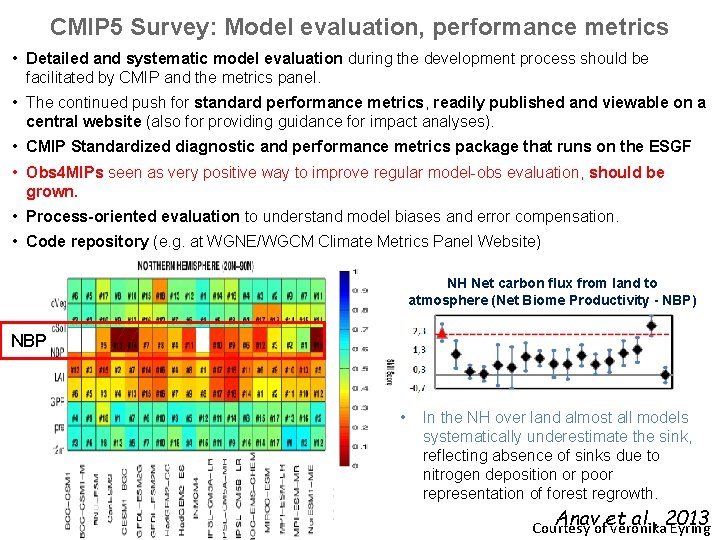

CMIP5 Survey: Model evaluation, performance metrics
• Detailed and systematic model evaluation during the development process should be facilitated by CMIP and the Metrics Panel.
• A continued push for standard performance metrics, readily published and viewable on a central website (also to provide guidance for impact analyses).
• A CMIP standardized diagnostic and performance metrics package that runs on the ESGF.
• obs4MIPs is seen as a very positive way to improve regular model-obs evaluation and should be grown.
• Process-oriented evaluation to understand model biases and error compensation.
• A code repository (e.g., at the WGNE/WGCM Climate Metrics Panel website).
Figure: NH net carbon flux from land to atmosphere (Net Biome Productivity, NBP)
• Over NH land, almost all models systematically underestimate the sink, reflecting the absence of sinks due to nitrogen deposition or poor representation of forest regrowth (Anav et al., 2013).
Courtesy of Veronika Eyring

Initial CMIP6 Proposal: A Distributed Organization under the Oversight of the CMIP Panel
CMIP would comprise two elements:
1. Ongoing CMIP Diagnostic, Evaluation and Characterization of Klima (DECK) experiments: a small set of standardized experiments that would be performed whenever a new model is developed. The DECK experiments are chosen to provide continuity across past and future phases of CMIP, to evolve only slowly with time, and to take advantage of what is already common practice in many modeling centers:
  i. an AMIP simulation (~1979-2010);
  ii. a multi-hundred-year pre-industrial control simulation;
  iii. a 1%/yr CO2 increase simulation to quadrupling, to derive the transient climate response;
  iv. an instantaneous 4xCO2 run to derive the equilibrium climate sensitivity;
  v. a simulation starting in the 19th century and running through the 21st century using an existing scenario (RCP8.5).
2. Standardization, coordination, infrastructure, and documentation functions that make the simulations performed under CMIP, and their main characteristics, available to the broader community.
Meehl et al., Eos, 2014
Courtesy of Veronika Eyring
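DECK items iii and iv rest on simple arithmetic worth making explicit. At 1%/yr, CO2 doubles after ln 2 / ln 1.01 ≈ 70 years (the transient climate response is conventionally read off around the time of doubling) and quadruples after about 140 years. For the abrupt 4xCO2 run, one widely used diagnostic, not specified in the proposal itself, is the regression of Gregory et al. (2004): regress the top-of-atmosphere imbalance N on the warming ΔT and extrapolate to N = 0. A minimal sketch, with the test inputs being synthetic:

```python
import math


def years_to_multiple(rate, factor):
    """Years of compound growth at `rate` per year needed to multiply CO2 by `factor`."""
    return math.log(factor) / math.log(1.0 + rate)


def gregory_ecs(delta_t, toa_imbalance):
    """Estimate ECS from an abrupt-4xCO2 run by Gregory-style regression.

    delta_t: global-mean surface warming (K) per year, relative to the control run.
    toa_imbalance: global-mean net downward TOA flux (W m-2) per year.
    Fits N = F + slope * dT by least squares, extrapolates to N = 0 to get the
    equilibrium 4xCO2 warming, then halves it (assuming forcing is logarithmic
    in CO2, so 4xCO2 is two doublings).
    """
    n = len(delta_t)
    mean_t = sum(delta_t) / n
    mean_n = sum(toa_imbalance) / n
    cov = sum((t - mean_t) * (f - mean_n) for t, f in zip(delta_t, toa_imbalance))
    var = sum((t - mean_t) ** 2 for t in delta_t)
    slope = cov / var                   # feedback parameter (negative for a stable climate)
    forcing = mean_n - slope * mean_t   # y-intercept: effective 4xCO2 forcing
    warming_4x = -forcing / slope       # x-intercept: equilibrium 4xCO2 warming
    return warming_4x / 2.0


if __name__ == "__main__":
    print(f"doubling at 1%/yr: {years_to_multiple(0.01, 2):.1f} yr")     # 69.7
    print(f"quadrupling at 1%/yr: {years_to_multiple(0.01, 4):.1f} yr")  # 139.3
```

Real analyses use annual global means from the full run and often treat the early years separately, because the N-ΔT relationship is not exactly linear.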

Initial CMIP6 Proposal: A Distributed Organization under the Oversight of the CMIP Panel
CMIP Phase 6 (CMIP6): CMIP6-Endorsed MIPs would propose additional experiments, and modeling groups could choose a subset of these to run according to their interest, computing and/or human resources, and funding constraints. The MIPs would also likely have additional experiments that would not be part of CMIP6 but would be of interest and relevant to their respective communities.
Participation
A scientist or group of scientists could send a ‘Request for a CMIP6-Endorsed MIP’ at any time to the CMIP Panel Chair (see template on the CMIP webpage). The main criteria for MIPs to be endorsed for CMIP6 are:
• The MIP addresses at least one of the key science questions of CMIP6;
• The MIP follows CMIP standards in terms of experimental design, data format and documentation;
• A sufficient number of modeling groups have agreed to participate in the MIP;
• The MIP builds on the shared CMIP DECK experiments;
• The MIP commits to contributing to the creation of the CMIP6 data request.
Meehl et al., Eos, 2014
Courtesy of Veronika Eyring

WCRP Grand Challenges: (1) Clouds, circulation and climate sensitivity, (2) Changes in cryosphere, (3) Climate extremes, (4) Regional climate information, (5) Regional sea-level rise, and (6) Water availability, plus an additional theme on “biospheric forcings and feedbacks”
Meehl et al., Eos, 2014
Courtesy of Veronika Eyring

Model Evaluation
• A CMIP benchmarking and evaluation software package (made available to everyone, for example through the WGNE/WGCM Metrics Panel wiki) would produce well-established analyses as soon as model results become available.
• The objective is to enable routine model evaluation and to aid the model development process by providing feedback on systematic errors in the individual models.
Communication
• The new distributed nature of CMIP6 requires the WGCM and the CMIP Panel to play a strong role in facilitating communication between MIPs, and between the MIPs and the modeling groups.
Next Steps and Time Line
• The overall data preparation will follow procedures developed in CMIP5.
• The historical emissions would be made available in spring 2015, and the emissions for the future climate scenarios by the end of 2015.
• Analyses of CMIP6 data would be ongoing, with the simulation phase of CMIP6 running for five years, from 2015 to 2020, followed by many more years of model analysis.
• The ScenarioMIP runs would probably occur near the end of the CMIP6 cycle, and thus likely begin in 2017 and continue into 2018.
• A possible IPCC AR6 that would likely assess CMIP6 simulations could take place from roughly 2017 to 2020, but when, or even whether, there will be an AR6 will not be known until 2015 at the earliest. Even without an AR6, CMIP6 will still operate, as previous phases of CMIP have, to provide a set of state-of-the-art global climate model simulations as a resource for the international climate science community.
Meehl et al., Eos, 2014

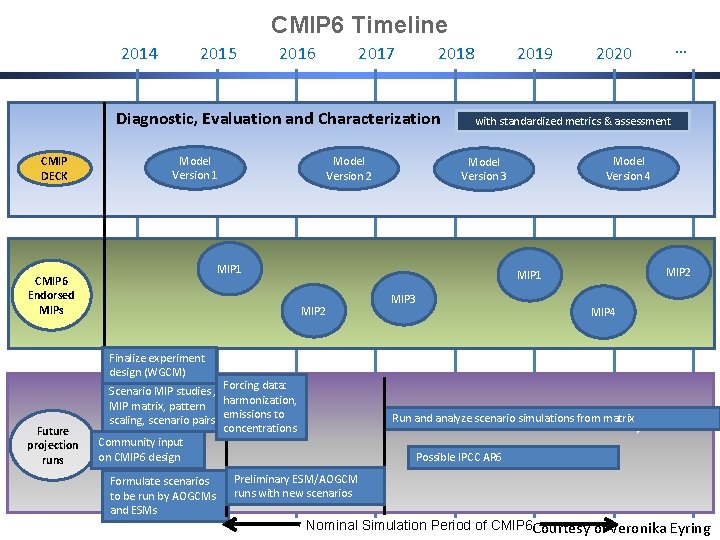

CMIP6 Timeline, 2014-2020 (figure). Rows: CMIP DECK (Diagnostic, Evaluation and Characterization of Klima) with standardized metrics & assessment, Model Versions 1-4; CMIP6-Endorsed MIPs (MIP 1, MIP 2, MIP 3, MIP 4, …); ScenarioMIP studies (forcing data: harmonization, emissions to concentrations; formulate scenarios to be run by AOGCMs and ESMs: MIP matrix, pattern scaling, scenario pairs; preliminary ESM/AOGCM runs with new scenarios; run and analyze scenario simulations from matrix; future projection runs). Milestones: community input on CMIP6 design; finalize experiment design (WGCM); possible IPCC AR6; nominal simulation period of CMIP6.
Courtesy of Veronika Eyring

4) Benchmarking Climate Model Performance

Documenting climate model performance
• Hundreds of model versions, many thousands of simulations
• Research papers focused on model evaluation are essential, but we need to do better at documenting and benchmarking the baseline performance of all climate models
• Doing this properly requires keeping track of which observational datasets are used, and their product versions
Courtesy of Peter Gleckler

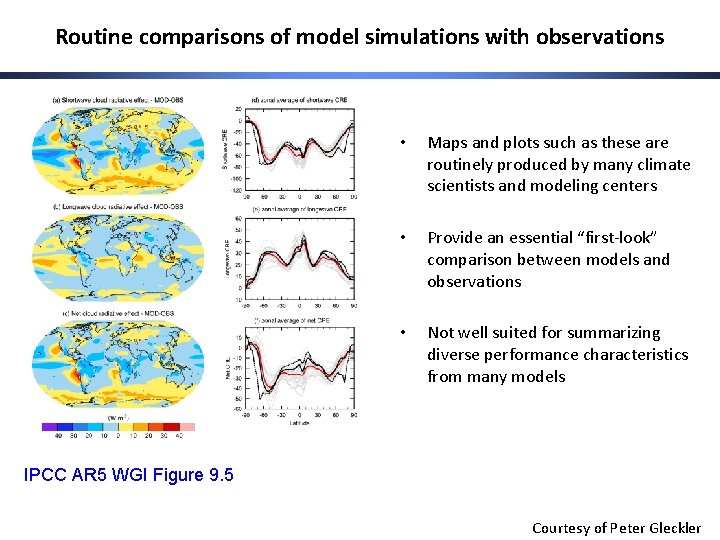

Routine comparisons of model simulations with observations
• Maps and plots such as these are routinely produced by many climate scientists and modeling centers
• Provide an essential “first-look” comparison between models and observations
• Not well suited for summarizing diverse performance characteristics from many models
IPCC AR5 WGI Figure 9.5
Courtesy of Peter Gleckler

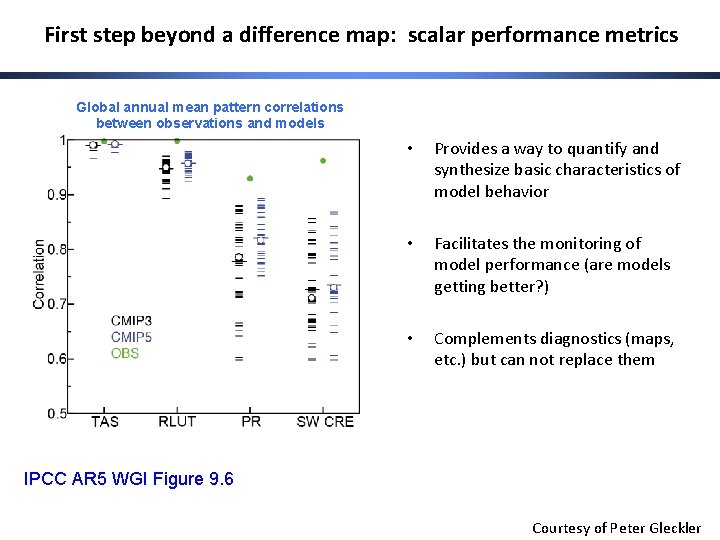

First step beyond a difference map: scalar performance metrics
Figure: global annual mean pattern correlations between observations and models (IPCC AR5 WGI Figure 9.6)
• Provides a way to quantify and synthesize basic characteristics of model behavior
• Facilitates the monitoring of model performance (are models getting better?)
• Complements diagnostics (maps, etc.) but cannot replace them
Courtesy of Peter Gleckler
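A pattern correlation compares spatial structure after each field's own area mean is removed. A minimal sketch of a centered, area-weighted version; representing a field as a flat list with one latitude per grid cell, and weighting by cos(latitude), are simplifying assumptions (metrics packages typically use exact cell areas and land/sea masks):

```python
import math


def pattern_correlation(model, obs, lats):
    """Centered, area-weighted pattern correlation between two fields on one grid.

    model, obs: flat sequences of grid-cell values (e.g. annual-mean climatologies);
    lats: latitude in degrees of each cell. cos(latitude) weights approximate
    cell area on a regular latitude-longitude grid.
    """
    w = [math.cos(math.radians(lat)) for lat in lats]
    wsum = sum(w)
    mean_m = sum(wi * x for wi, x in zip(w, model)) / wsum
    mean_o = sum(wi * y for wi, y in zip(w, obs)) / wsum
    # Weighted sums of anomaly products; the common 1/wsum factor cancels in the ratio.
    cov = sum(wi * (x - mean_m) * (y - mean_o) for wi, x, y in zip(w, model, obs))
    var_m = sum(wi * (x - mean_m) ** 2 for wi, x in zip(w, model))
    var_o = sum(wi * (y - mean_o) ** 2 for wi, y in zip(w, obs))
    return cov / math.sqrt(var_m * var_o)
```

Because the fields are centered, a model with a uniform offset from the observations still scores 1.0; that is why the baseline metrics pair correlation with a bias statistic.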

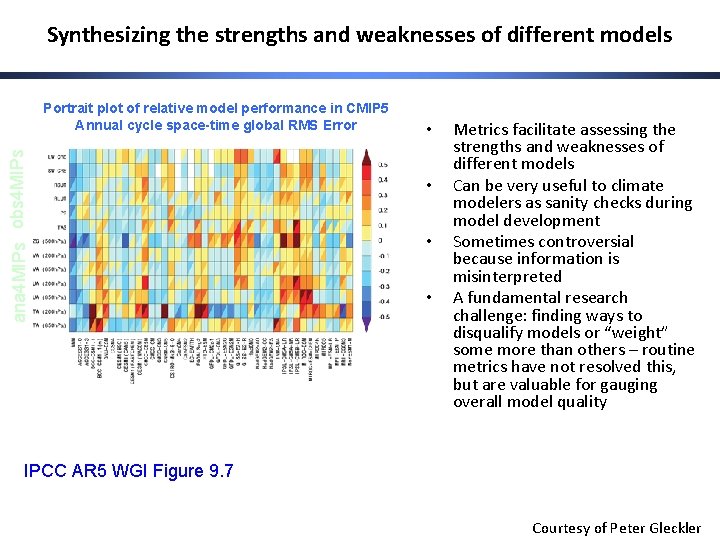

Synthesizing the strengths and weaknesses of different models
Figure: portrait plot of relative model performance in CMIP5, annual-cycle space-time global RMS error (reference datasets labeled ana4MIPs and obs4MIPs; IPCC AR5 WGI Figure 9.7)
• Metrics facilitate assessing the strengths and weaknesses of different models
• Can be very useful to climate modelers as sanity checks during model development
• Sometimes controversial because information is misinterpreted
• A fundamental research challenge: finding ways to disqualify models or “weight” some more than others; routine metrics have not resolved this, but are valuable for gauging overall model quality
Courtesy of Peter Gleckler
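A portrait plot colors each model by its error relative to the other models. The sketch below shows one common recipe, similar to that of Gleckler et al. (2008): compute a space-time RMS error over the climatological annual cycle, then express each model's error as a fraction of the multi-model median for that variable. The grid representation (flat lists, cos-latitude weights) is a simplifying assumption.

```python
import math
from statistics import median


def space_time_rmse(model, obs, lats):
    """Area-weighted RMS error over all months and grid cells.

    model, obs: sequences of monthly fields, each a flat list of grid-cell values;
    lats: latitude (degrees) of each grid cell, used for cos-latitude weights.
    """
    weights = [math.cos(math.radians(lat)) for lat in lats]
    num = 0.0
    den = 0.0
    for field_m, field_o in zip(model, obs):
        for w, x, y in zip(weights, field_m, field_o):
            num += w * (x - y) ** 2
            den += w
    return math.sqrt(num / den)


def relative_errors(rmse):
    """Normalize each model's error by the multi-model median, variable by variable.

    rmse: dict variable -> dict model -> RMS error. Negative results mean the
    model beats the median model for that variable; positive means it is worse.
    """
    out = {}
    for var, by_model in rmse.items():
        med = median(by_model.values())
        out[var] = {m: (e - med) / med for m, e in by_model.items()}
    return out
```

Normalizing by the median rather than the mean keeps one badly behaved model from shifting every other model's color.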

Establishing a mechanism for systematic benchmarking of climate model performance
WGNE/WGCM Climate Model Metrics Panel: tasked to identify and promote a limited set of frequently used performance metrics in an attempt to establish community benchmarks for climate models
Some desired characteristics of performance metrics for benchmarking:
• A useful quantification of model error (with respect to observations)
• Well established in the literature and widely used
• Relatively easy to compute, reproduce and interpret
• Fairly robust results (hence an initial emphasis on mean climate)
• Well suited for repeated use
Courtesy of Peter Gleckler

Making CMIP more relevant to model development
• The benefit to modeling groups contributing to CMIP has always been limited by the timing of the research phase: by the time results are presented/published, most groups have moved on to their next-generation model.
• Modeling groups would learn more if they could assess the relative strengths and weaknesses of their model during the development process.
• PCMDI has developed a framework that will enable modeling groups to “quick-look” compare their newer model versions with all available CMIP simulations.
Courtesy of Peter Gleckler

PCMDI’s metrics package
• Includes code, documentation, observations and a database of results for all available CMIP simulations
• Built on a lightweight version of PCMDI’s Python-based UV-CDAT. ESMF regridding is built in, so data on ocean grids can be interpolated for direct comparison with observations
• Alpha version under testing at three modeling centers; to be made available to all CMIP modeling groups this year
• Designed to enable integration into existing analysis systems
EMBRACE 2013 | Peter Gleckler
Courtesy of Peter Gleckler

Initial Set of Baseline Metrics (recommended by the WGNE/WGCM Metrics Panel)
• Statistics: bias, pattern correlation, RMS error, variance and mean absolute error
• Domains: global, tropical, and extra-tropical seasonal climatologies
• Field examples: air temperature and winds (200 and 850 hPa), geopotential height (500 hPa), surface air temperature, winds and humidity, TOA radiative fluxes, precipitation, precipitable water, SST and SSH
• Package includes updated climatologies from multiple sources, mostly near-global satellite data (obs4MIPs) and reanalysis (ana4MIPs)
• Planning to incrementally identify a more diverse set of performance tests
• Metrics Panel collaborating with other activities, and receiving recommendations for additional metrics: Global Monsoon Precipitation Index (CLIVAR AAMP), ENSO metrics (CLIVAR Pacific Panel), Working Group on Ocean Model Development, ILAMB (AIMES/IGBP), MJO Task Force …
obs4MIPs 2014 | Peter Gleckler
Courtesy of Peter Gleckler
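Once model and observations share a grid, most of these baseline statistics take only a few lines. The sketch below computes area-weighted bias, RMS error, mean absolute error and the variance of each field over a latitude band (pattern correlation uses the same weighting). The 20° tropical boundary, the domain names and the flat-list grid are assumptions for illustration, not the Metrics Panel's definitions.

```python
import math

# Latitude bands in degrees. The 20-degree tropical boundary is an assumption.
DOMAINS = {
    "global": (-90.0, 90.0),
    "tropics": (-20.0, 20.0),
    "nh_extratropics": (20.0, 90.0),
    "sh_extratropics": (-90.0, -20.0),
}


def baseline_stats(model, obs, lats, domain="global"):
    """Area-weighted bias, RMS error, mean absolute error and variances over a domain.

    model, obs: flat sequences of grid-cell values for the same field and season;
    lats: latitude (degrees) of each cell, used both to select the domain and
    for cos-latitude weights.
    """
    lo, hi = DOMAINS[domain]
    cells = [(math.cos(math.radians(lat)), x, y)
             for lat, x, y in zip(lats, model, obs) if lo <= lat <= hi]
    if not cells:
        raise ValueError(f"no grid cells in domain {domain!r}")
    wsum = sum(w for w, _, _ in cells)

    def wmean(values):
        # Weighted mean of one value per selected cell.
        return sum(w * v for (w, _, _), v in zip(cells, values)) / wsum

    diffs = [x - y for _, x, y in cells]
    mean_m = wmean([x for _, x, _ in cells])
    mean_o = wmean([y for _, _, y in cells])
    return {
        "bias": wmean(diffs),
        "rmse": math.sqrt(wmean([d * d for d in diffs])),
        "mae": wmean([abs(d) for d in diffs]),
        "var_model": wmean([(x - mean_m) ** 2 for _, x, _ in cells]),
        "var_obs": wmean([(y - mean_o) ** 2 for _, _, y in cells]),
    }
```

Applied to seasonal climatologies, one call per field, season and domain reproduces the shape of the baseline table: a handful of numbers per model that can be stored and compared over time.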

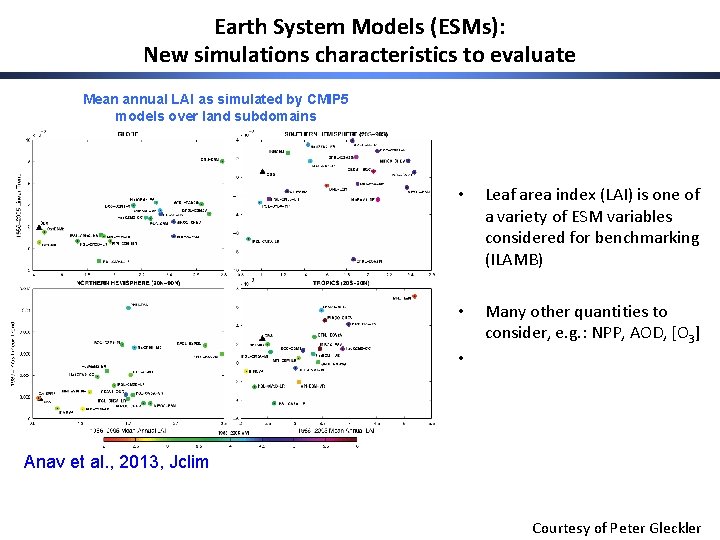

Earth System Models (ESMs): New simulation characteristics to evaluate
Figure: mean annual LAI as simulated by CMIP5 models over land subdomains (Anav et al., 2013, J. Climate)
• Leaf area index (LAI) is one of a variety of ESM variables considered for benchmarking (ILAMB)
• Many other quantities to consider, e.g., NPP, AOD, [O3]
Courtesy of Peter Gleckler

The critical value of obs4MIPs for benchmarking model performance
• Successful benchmarking capability must readily enable reproducibility:
  – Clarification of which datasets are used for performance metrics
  – Dataset version (updates) documented and clearly traceable
  – Observations “technically aligned” with CMIP data and directly accessible via the same mechanism (ESGF)
  – Ideally, data experts are involved in the process (i.e., not a 3rd party)
Courtesy of Peter Gleckler
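One lightweight way to make the observations behind a metric "clearly traceable" is to store, next to each result, the dataset identifier, its version and a checksum of the file actually read. The sketch below does this with `hashlib` and `json`; the record layout, the identifiers and the metric value in the example are hypothetical placeholders, not an ESGF or PCMDI format.

```python
import hashlib
import json


def provenance_entry(dataset_id, version, data):
    """Describe one observational input: identifier, version and SHA-256 of its bytes."""
    return {
        "dataset_id": dataset_id,
        "version": version,
        "sha256": hashlib.sha256(data).hexdigest(),
    }


def verify_entry(entry, data):
    """True if `data` is byte-for-byte the file the entry was made from."""
    return hashlib.sha256(data).hexdigest() == entry["sha256"]


def benchmark_record(model, metrics, inputs):
    """Serialize one benchmarking result together with the observations it used."""
    return json.dumps(
        {"model": model, "metrics": metrics, "observations": inputs},
        sort_keys=True,
        indent=2,
    )


if __name__ == "__main__":
    obs_bytes = b"placeholder bytes standing in for a hypothetical obs4MIPs file"
    entry = provenance_entry("obs4MIPs.hypothetical.pr", "v20140101", obs_bytes)
    # The metric value is a placeholder, not a real result.
    print(benchmark_record("MODEL-X", {"pr_rmse_global": 1.0}, [entry]))
    print(verify_entry(entry, obs_bytes))  # True
```

Hashing the bytes, rather than trusting a version string alone, also catches silent re-publication of a dataset under an unchanged version label.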

Prospects for advancing model benchmarking
This year and next:
• All CMIP modeling groups able to use a common analysis framework for baseline in-house benchmarking against selected obs4MIPs/ana4MIPs datasets.
• During model development, modelers able to assess the relative strengths and weaknesses of their model by comparing it to other state-of-the-art CMIP models. This can help modelers make more informed decisions on priorities.
Looking ahead:
• An increasingly diverse and targeted set of benchmarks
• An expectation develops that all new simulations are tested against baseline metrics, with results made widely available as soon as model data become public
• A benchmarking trail is established, documenting characteristics of all newer models and the observations (versions) used for benchmarking
Courtesy of Peter Gleckler

Beyond baseline benchmarking: community-based model diagnosis
• Much of what has been done to date has been ad hoc
• CMIP DECK experiments encourage more systematic evaluation
• Diagnostic packages that target the CMIP DECK (e.g., ESMValTool, under development in the EU EMBRACE project) will facilitate a community-based approach to more in-depth model evaluation
• Well-organized observations are essential for all of this!
Courtesy of Peter Gleckler
